## Text Document: Prompt Template for Error Reason Scoring

### Overview

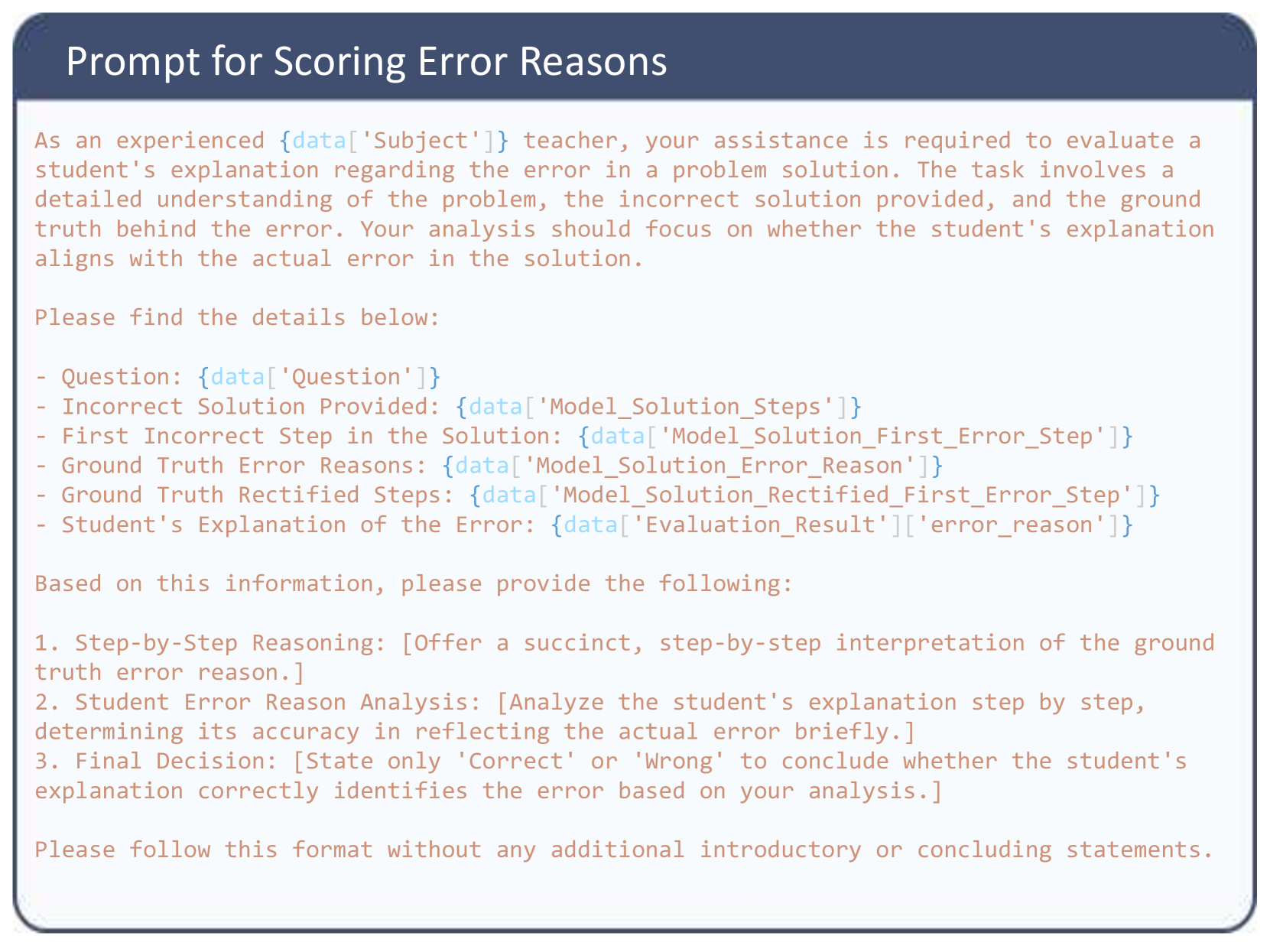

The image shows a text-based prompt template for evaluating a student's explanation of an error in a problem solution. It is written as a set of instructions to an evaluator, who is asked to role-play an experienced teacher, and it contains placeholder variables (e.g., `{data['Subject']}`) that would be filled with record-specific data in a live system. The template itself is purely textual, with no charts, diagrams, or embedded images.

### Components/Axes

The document is visually divided into two main regions:

1. **Header Region:** A dark blue, rounded rectangle containing the title text in white.

2. **Main Content Region:** A light-colored background containing the instructional text in a monospaced font. The text is left-aligned.

**Title (in Header):** `Prompt for Scoring Error Reasons`

### Detailed Analysis / Content Details

The following is a precise transcription of all text content within the main body of the document.

**Introductory Paragraph:**

```
As an experienced {data['Subject']} teacher, your assistance is required to evaluate a
student's explanation regarding the error in a problem solution. The task involves a
detailed understanding of the problem, the incorrect solution provided, and the ground
truth behind the error. Your analysis should focus on whether the student's explanation
aligns with the actual error in the solution.
```

**Instruction Line:**

```
Please find the details below:
```

**List of Data Placeholders:**

```
- Question: {data['Question']}
- Incorrect Solution Provided: {data['Model_Solution_Steps']}
- First Incorrect Step in the Solution: {data['Model_Solution_First_Error_Step']}
- Ground Truth Error Reasons: {data['Model_Solution_Error_Reason']}
- Ground Truth Rectified Steps: {data['Model_Solution_Rectified_First_Error_Step']}
- Student's Explanation of the Error: {data['Evaluation_Result']['error_reason']}
```

**Instruction for Output:**

```
Based on this information, please provide the following:
```

**Required Output Format (Numbered List):**

```
1. Step-by-Step Reasoning: [Offer a succinct, step-by-step interpretation of the ground
truth error reason.]
2. Student Error Reason Analysis: [Analyze the student's explanation step by step,
determining its accuracy in reflecting the actual error briefly.]
3. Final Decision: [State only 'Correct' or 'Wrong' to conclude whether the student's
explanation correctly identifies the error based on your analysis.]
```

**Final Instruction:**

```
Please follow this format without any additional introductory or concluding statements.
```
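The placeholders use Python's f-string syntax, which suggests (but does not confirm) that the template is filled by evaluating it as an f-string in a scope where `data` is a dict. A minimal sketch under that assumption follows. The function name `build_error_reason_prompt` and the sample record are hypothetical; only the key names and the prompt wording come from the transcription above, and the blank lines between sections are a guess at the original spacing.

```python
def build_error_reason_prompt(data: dict) -> str:
    """Fill the error-reason scoring template from one record (hypothetical helper).

    Only the key names and wording come from the template; the function is a sketch.
    """
    return (
        f"As an experienced {data['Subject']} teacher, your assistance is required to evaluate a "
        "student's explanation regarding the error in a problem solution. The task involves a "
        "detailed understanding of the problem, the incorrect solution provided, and the ground "
        "truth behind the error. Your analysis should focus on whether the student's explanation "
        "aligns with the actual error in the solution.\n\n"
        "Please find the details below:\n\n"
        f"- Question: {data['Question']}\n"
        f"- Incorrect Solution Provided: {data['Model_Solution_Steps']}\n"
        f"- First Incorrect Step in the Solution: {data['Model_Solution_First_Error_Step']}\n"
        f"- Ground Truth Error Reasons: {data['Model_Solution_Error_Reason']}\n"
        f"- Ground Truth Rectified Steps: {data['Model_Solution_Rectified_First_Error_Step']}\n"
        f"- Student's Explanation of the Error: {data['Evaluation_Result']['error_reason']}\n\n"
        "Based on this information, please provide the following:\n\n"
        "1. Step-by-Step Reasoning: [Offer a succinct, step-by-step interpretation of the ground "
        "truth error reason.]\n"
        "2. Student Error Reason Analysis: [Analyze the student's explanation step by step, "
        "determining its accuracy in reflecting the actual error briefly.]\n"
        "3. Final Decision: [State only 'Correct' or 'Wrong' to conclude whether the student's "
        "explanation correctly identifies the error based on your analysis.]\n\n"
        "Please follow this format without any additional introductory or concluding statements."
    )


# Made-up sample record; real records would come from the surrounding system.
sample = {
    "Subject": "Math",
    "Question": "What is 3 * (2 + 4)?",
    "Model_Solution_Steps": "Step 1: 3 * 2 = 6. Step 2: 6 + 4 = 10.",
    "Model_Solution_First_Error_Step": "Step 1",
    "Model_Solution_Error_Reason": "The parentheses must be evaluated before multiplying.",
    "Model_Solution_Rectified_First_Error_Step": "Step 1: 2 + 4 = 6.",
    "Evaluation_Result": {"error_reason": "The solution ignored the order of operations."},
}
print(build_error_reason_prompt(sample))
```

Rendering the whole prompt from one function keeps the fixed wording in a single place, so every record is scored against an identical instruction text.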

### Key Observations

1. **Template Nature:** The document is a template, not a filled-out example. All specific data points (the question, solutions, error reasons) are represented by placeholder variables in the format `{data['key']}`.

2. **Structured Evaluation:** It requests a strict, three-part output format (Reasoning, Analysis, Decision) so that evaluations are consistent and easy to compare.

3. **Role-Play Context:** The evaluator is instructed to adopt the persona of an "experienced {data['Subject']} teacher." Because the subject is itself a placeholder, the same template is presumably reused across academic subjects.

4. **Data Dependencies:** The template relies on a structured data object (`data`) with specific keys to function, indicating it is part of a larger automated or semi-automated system.
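Because the template indexes `data` directly, a missing key would only surface as a `KeyError` when the prompt is rendered. A pre-flight check such as the sketch below could list every absent key path up front, including the nested `Evaluation_Result` → `error_reason` lookup. The names `REQUIRED_PATHS` and `missing_keys`, and the tuple representation of nested paths, are assumptions of this sketch rather than anything shown in the template.

```python
from collections.abc import Mapping
from typing import Any

# Key paths read by the template; nested lookups are written as tuples (an assumption here).
REQUIRED_PATHS: tuple[tuple[str, ...], ...] = (
    ("Subject",),
    ("Question",),
    ("Model_Solution_Steps",),
    ("Model_Solution_First_Error_Step",),
    ("Model_Solution_Error_Reason",),
    ("Model_Solution_Rectified_First_Error_Step",),
    ("Evaluation_Result", "error_reason"),
)


def missing_keys(data: Mapping[str, Any]) -> list[str]:
    """Return the dotted key paths that cannot be resolved in `data` (hypothetical helper)."""
    missing = []
    for path in REQUIRED_PATHS:
        node: Any = data
        for key in path:
            # Stop at the first level that is not a mapping or lacks the key.
            if not isinstance(node, Mapping) or key not in node:
                missing.append(".".join(path))
                break
            node = node[key]
    return missing


record = {"Subject": "Math", "Question": "What is 3 * (2 + 4)?", "Evaluation_Result": {}}
print(missing_keys(record))
```

For the partial record above, the check reports the four missing `Model_Solution_*` keys plus `Evaluation_Result.error_reason`, which a caller could log and skip instead of failing mid-batch.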

### Interpretation

This document is a **prompt engineering template** for an AI or a human evaluator within an educational technology or AI training pipeline. Its purpose is to standardize the assessment of how well a student (or a model acting as a student) can diagnose errors in a provided solution.

The core investigative logic it promotes is:

1. **Ground Truth Establishment:** First, understand the *actual* error from authoritative sources (the "Ground Truth Error Reasons" and "Ground Truth Rectified Steps" fields).

2. **Comparative Analysis:** Then, compare the student's stated reason for the error against this ground truth.

3. **Binary Judgment:** Finally, make a definitive "Correct" or "Wrong" judgment on the student's diagnostic accuracy.
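Because each evaluation ends in a binary verdict, replies can be scored automatically. The sketch below assumes the evaluator reproduces the three numbered labels verbatim: `parse_evaluation` splits a reply into its sections and normalizes the verdict, and `error_reason_accuracy` turns a batch of verdicts into a single rate. Both names are hypothetical. Treating any verdict other than `Correct`/`Wrong` as unparseable, and counting it as not correct, is a design choice the template does not specify.

```python
import re
from typing import Iterable, Optional

LABELS = ("Step-by-Step Reasoning", "Student Error Reason Analysis", "Final Decision")
_LABEL_ALT = "|".join(re.escape(label) for label in LABELS)
# "N. Label: body"; the body runs until the next numbered label line or the end of the reply.
_SECTION = re.compile(
    rf"^\s*\d\.\s*({_LABEL_ALT}):\s*(.*?)(?=^\s*\d\.\s*(?:{_LABEL_ALT}):|\Z)",
    re.MULTILINE | re.DOTALL,
)


def parse_evaluation(reply: str) -> dict[str, Optional[str]]:
    """Split a reply into its three sections; 'decision' is None unless it is Correct/Wrong."""
    sections = {label: body.strip() for label, body in _SECTION.findall(reply)}
    # Tolerate case differences and a trailing period, nothing more.
    verdict = sections.get("Final Decision", "").rstrip(".").capitalize()
    return {
        "reasoning": sections.get("Step-by-Step Reasoning"),
        "analysis": sections.get("Student Error Reason Analysis"),
        "decision": verdict if verdict in ("Correct", "Wrong") else None,
    }


def error_reason_accuracy(decisions: Iterable[Optional[str]]) -> float:
    """Fraction of verdicts equal to 'Correct'; None (unparseable) counts as not correct."""
    decisions = list(decisions)
    if not decisions:
        raise ValueError("no decisions to score")
    return sum(d == "Correct" for d in decisions) / len(decisions)


reply = (
    "1. Step-by-Step Reasoning: Step 1 multiplies before evaluating the parentheses.\n"
    "2. Student Error Reason Analysis: The student names the same order-of-operations mistake.\n"
    "3. Final Decision: Correct"
)
result = parse_evaluation(reply)
print(result["decision"])  # Correct
print(error_reason_accuracy([result["decision"], "Wrong", None, "Correct"]))  # 0.5
```

Anchoring the split on the exact label text, rather than on any numbered line, keeps the evaluator's own numbered reasoning steps from being mistaken for section boundaries.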

The template's design aims to reduce variation between evaluators by supplying all necessary context and requiring a specific, concise output format. It could plausibly be used to generate training data for AI models, to assess student performance at scale, or to fine-tune an AI's ability to perform pedagogical reasoning. The nested placeholder `{data['Evaluation_Result']['error_reason']}` suggests a multi-stage pipeline in which one model's output (the student's explanation) is stored as the result of an earlier step and then fed into this evaluation prompt.
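An arrangement in which one model's output is fed into this evaluation prompt can be sketched as two model calls. A "student" model first produces an error explanation. That text is then stored under `Evaluation_Result` → `error_reason`, and a judge model grades the filled-in prompt. In the sketch below both models are plain callables, and no particular LLM API is implied. `run_two_stage`, the student prompt wording, and the stub models in the usage example are all hypothetical.

```python
from typing import Any, Callable


def run_two_stage(
    record: dict[str, Any],
    student_model: Callable[[str], str],
    judge_model: Callable[[str], str],
    render_judge_prompt: Callable[[dict[str, Any]], str],
) -> str:
    """Stage 1: a 'student' model explains the error. Stage 2: a judge grades that explanation."""
    # Hypothetical student prompt; the source does not show how explanations are elicited.
    student_prompt = (
        f"Question: {record['Question']}\n"
        f"Solution: {record['Model_Solution_Steps']}\n"
        "Explain the error in this solution."
    )
    explanation = student_model(student_prompt)
    # Copy the record and store the stage-1 output under the key the scoring template reads.
    graded = {**record, "Evaluation_Result": {"error_reason": explanation}}
    return judge_model(render_judge_prompt(graded))


# Stub models stand in for real LLM calls.
record = {"Question": "What is 3 * (2 + 4)?", "Model_Solution_Steps": "3 * 2 = 6; 6 + 4 = 10."}
reply = run_two_stage(
    record,
    student_model=lambda prompt: "Multiplication was applied before the parentheses.",
    judge_model=lambda prompt: (
        "3. Final Decision: Correct" if "parentheses" in prompt else "3. Final Decision: Wrong"
    ),
    render_judge_prompt=lambda d: (
        f"Student's Explanation of the Error: {d['Evaluation_Result']['error_reason']}"
    ),
)
print(reply)  # 3. Final Decision: Correct
```

Building a new dict with `{**record, ...}` leaves the input record unchanged, so the same ground-truth record can be reused to grade explanations from several student models.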