TECHNICAL ASSET FINGERPRINT

fa5ca4cf49ddc3ed55c7d019

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

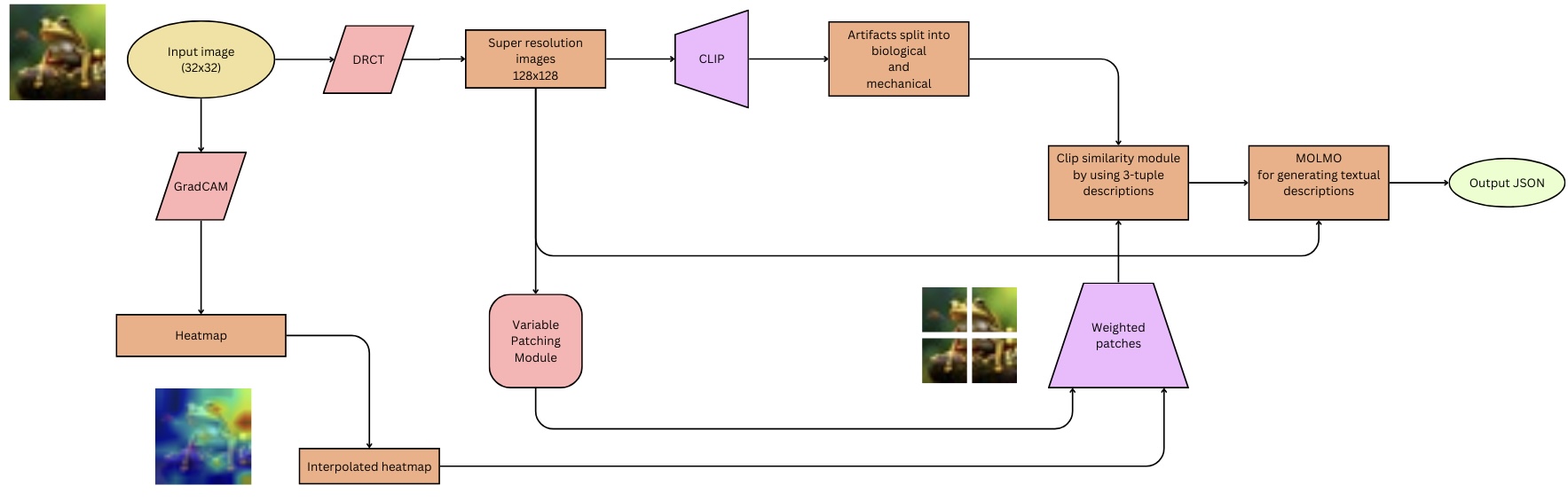

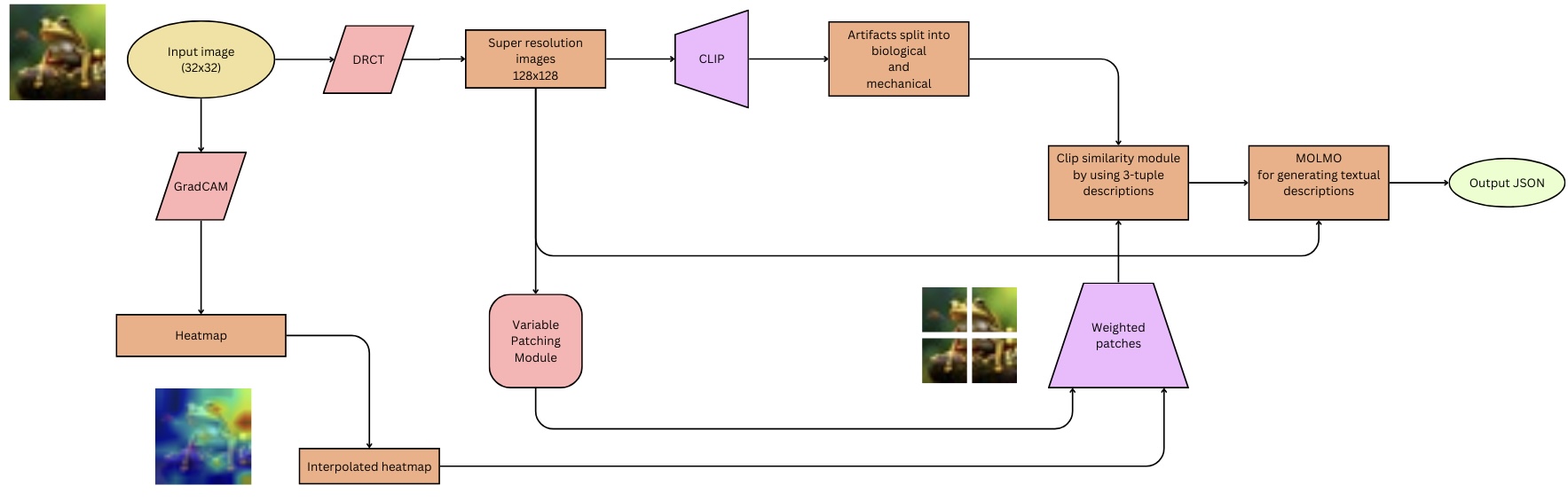

## Diagram: Image Processing Pipeline

### Overview

The image is a flowchart illustrating an image processing pipeline. It starts with an input image, processes it through several modules, and outputs a JSON file. The pipeline includes steps for super-resolution, artifact splitting, similarity analysis, and textual description generation.

### Components/Axes

The diagram consists of several modules represented by different shapes:

* **Oval:** Represents input and output.

* **Rectangle:** Represents processing modules.

* **Parallelogram:** Used for CLIP and for Weighted patches.

* **Rhombus:** Used for the DRCT and GradCAM stages.

* **Rounded Rectangle:** Used for the Variable Patching Module.

The flow of data is indicated by arrows connecting the modules.

### Detailed Analysis

1. **Input Image:**

* Shape: Oval

* Label: "Input image (32x32)"

* Position: Top-left

* Content: A small image of a frog.

2. **DRCT:**

* Shape: Rhombus

* Label: "DRCT"

* Position: Right of "Input image"

3. **Super resolution images:**

* Shape: Rectangle

* Label: "Super resolution images 128x128"

* Position: Right of "DRCT"

4. **CLIP:**

* Shape: Parallelogram

* Label: "CLIP"

* Position: Right of "Super resolution images"

5. **Artifacts split into biological and mechanical:**

* Shape: Rectangle

* Label: "Artifacts split into biological and mechanical"

* Position: Right of "CLIP"

6. **Clip similarity module by using 3-tuple descriptions:**

* Shape: Rectangle

* Label: "Clip similarity module by using 3-tuple descriptions"

* Position: Right and slightly below "Artifacts split into biological and mechanical"

7. **MOLMO for generating textual descriptions:**

* Shape: Rectangle

* Label: "MOLMO for generating textual descriptions"

* Position: Right of "Clip similarity module"

8. **Output JSON:**

* Shape: Oval

* Label: "Output JSON"

* Position: Right of "MOLMO"

9. **GradCAM:**

* Shape: Rhombus

* Label: "GradCAM"

* Position: Below "Input image"

10. **Heatmap:**

* Shape: Rectangle

* Label: "Heatmap"

* Position: Below "GradCAM"

11. **Interpolated heatmap:**

* Shape: Rectangle

* Label: "Interpolated heatmap"

* Position: Below "Heatmap"

* Content: A heatmap image highlighting regions of interest.

12. **Variable Patching Module:**

* Shape: Rounded Rectangle

* Label: "Variable Patching Module"

* Position: Below "Super resolution images"

13. **Weighted patches:**

* Shape: Parallelogram

* Label: "Weighted patches"

* Position: Below and left of "Clip similarity module"

* Content: An image divided into four patches.

### Key Observations

* The pipeline starts with a low-resolution image (32x32) and upscales it to 128x128 super-resolution images, a 4x increase along each side.

* The GradCAM module generates a heatmap, which is then interpolated.

* The Variable Patching Module and the Weighted patches feed into the CLIP similarity module.

* The final output is a JSON file containing textual descriptions generated by the MOLMO module.
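The heatmap-interpolation step can be illustrated as bilinear upsampling. This is a minimal sketch under stated assumptions: the GradCAM heatmap is taken to be a small 2-D grid of floats, "interpolated" is assumed to mean bilinear resizing to the 128x128 super-resolution size, and `bilinear_resize` is a hypothetical helper. The diagram does not name the interpolation method.

```python
def bilinear_resize(grid, out_h, out_w):
    """Resize a 2-D list of floats with bilinear interpolation.

    Corner pixels of the input map exactly onto corner pixels of the output
    (the "align corners" convention). Assumption: the pipeline's actual
    interpolation method is not stated; bilinear is used for illustration.
    """
    in_h, in_w = len(grid), len(grid[0])
    out = []
    for i in range(out_h):
        # Position of output row i in input coordinates.
        y = i * (in_h - 1) / (out_h - 1) if out_h > 1 else 0.0
        y0 = int(y)
        y1 = min(y0 + 1, in_h - 1)
        dy = y - y0
        row = []
        for j in range(out_w):
            x = j * (in_w - 1) / (out_w - 1) if out_w > 1 else 0.0
            x0 = int(x)
            x1 = min(x0 + 1, in_w - 1)
            dx = x - x0
            # Interpolate horizontally on the two neighbouring rows, then vertically.
            top = grid[y0][x0] * (1 - dx) + grid[y0][x1] * dx
            bottom = grid[y1][x0] * (1 - dx) + grid[y1][x1] * dx
            row.append(top * (1 - dy) + bottom * dy)
        out.append(row)
    return out


# Hypothetical coarse heatmap (e.g. from a small convolutional feature map).
coarse = [[0.0, 1.0], [1.0, 0.0]]
fine = bilinear_resize(coarse, 128, 128)
print(len(fine), len(fine[0]))  # 128 128
```

Bilinear resizing keeps the heatmap smooth, which matters if it is later averaged over patches; nearest-neighbour resizing would produce blocky region boundaries instead.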

### Interpretation

The diagram illustrates a multi-stage image processing pipeline designed to generate textual descriptions of images. The pipeline combines super-resolution (DRCT), gradient-based saliency mapping (GradCAM), and image-text similarity (CLIP) to extract relevant information from the image. The MOLMO module then uses this information to generate textual descriptions. The use of variable patching and weighted patches suggests that the pipeline focuses on specific regions of interest within the image. The JSON output format indicates that the textual descriptions are structured and can be easily processed by other applications.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Diagram: Artifact Analysis Pipeline

### Overview

This diagram illustrates a pipeline for analyzing artifacts, starting with an input image and culminating in a JSON output. The pipeline involves image processing, artifact categorization, and textual description generation, combining computer vision and natural language processing techniques. The word "artifacts" could suggest archaeological or historical objects, but it may equally refer to visual artifacts in the image; the diagram alone does not settle which.

### Components/Axes

The diagram consists of several processing blocks connected by arrows indicating the flow of data. Key components include:

* **Input image (32x32):** The initial input to the pipeline.

* **DRCT:** The first processing step applied to the input image; it produces the super-resolution images.

* **Super resolution images 128x128:** The upscaled output of DRCT.

* **CLIP:** Contrastive Language-Image Pre-training, a vision-language model that embeds images and text in a shared space.

* **Artifacts split into biological and mechanical:** A classification step that sorts artifacts into two categories.

* **Clip similarity module by using 3-tuple descriptions:** A module for comparing artifacts using CLIP embeddings.

* **MOLMO for generating textual descriptions:** A module for generating textual descriptions of the artifacts.

* **Output .JSON:** The final output of the pipeline.

* **GradCAM:** A visualization technique for understanding which parts of the image contribute to the model's decision.

* **Heatmap:** A visual representation of the GradCAM output.

* **Variable Patching Module:** A module for dividing the image into variable-sized patches.

* **Interpolated heatmap:** A processed heatmap.

* **Weighted patches:** Patches assigned weights based on the heatmap.
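GradCAM is listed only by name above. In its standard formulation (Gradient-weighted Class Activation Mapping), each feature map of a chosen convolutional layer is weighted by the spatial average of the gradient of the class score with respect to that map, the weighted maps are summed, and a ReLU keeps only positive evidence. The sketch below assumes the activations and gradients have already been extracted from the classifier (in practice through a deep-learning framework's autograd); which classifier and layer the pipeline uses is not stated, and `grad_cam` is a hypothetical helper.

```python
def grad_cam(activations, gradients):
    """Compute a Grad-CAM map from precomputed activations and gradients.

    activations, gradients: lists of K channel maps, each an H x W list of
    floats (gradients are d(class score)/d(activation) for the target class).
    """
    h, w = len(activations[0]), len(activations[0][0])
    # alpha_k: global-average-pooled gradient for channel k.
    alphas = [sum(sum(row) for row in g) / (h * w) for g in gradients]
    cam = [[0.0] * w for _ in range(h)]
    for alpha, a in zip(alphas, activations):
        for i in range(h):
            for j in range(w):
                cam[i][j] += alpha * a[i][j]
    # ReLU: keep only regions that increase the class score.
    cam = [[max(v, 0.0) for v in row] for row in cam]
    # Normalise to [0, 1] for display, unless the map is entirely zero.
    peak = max(max(row) for row in cam)
    if peak > 0:
        cam = [[v / peak for v in row] for row in cam]
    return cam


# Toy example: channel 0 fires top-left and supports the class;
# channel 1 fires bottom-right and counts against it.
acts = [[[1.0, 0.0], [0.0, 0.0]], [[0.0, 0.0], [0.0, 1.0]]]
grads = [[[1.0, 1.0], [1.0, 1.0]], [[-1.0, -1.0], [-1.0, -1.0]]]
print(grad_cam(acts, grads))  # [[1.0, 0.0], [0.0, 0.0]]
```

The resulting map has the spatial size of the chosen layer, which is usually smaller than the input; that is why a later interpolation step is needed before the heatmap can be aligned with 128x128 images.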

### Detailed Analysis

The pipeline flows as follows:

1. An **Input image (32x32)** is fed into the **DRCT** module.

2. **DRCT** upscales the image, producing the **Super resolution images 128x128**.

3. The upscaled image is then processed by **CLIP**.

4. **CLIP**'s output is used to split the artifacts into biological and mechanical categories (**Artifacts split into biological and mechanical**).

5. The categorized artifacts are then processed by a **Clip similarity module by using 3-tuple descriptions**.

6. The output of the similarity module is fed into **MOLMO for generating textual descriptions**.

7. Finally, **MOLMO** produces an **Output .JSON** file.
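Step 4 above, where CLIP's output splits artifacts into biological and mechanical categories, resembles CLIP-style zero-shot classification: the image embedding is compared with one text embedding per category by cosine similarity, the similarities are scaled, and a softmax turns them into probabilities. This is a sketch under assumptions: the 3-dimensional embeddings are made up (real CLIP embeddings come from its image and text encoders and have hundreds of dimensions), the scale of 100 matches the cap CLIP places on its learned logit scale, and `zero_shot_split` is a hypothetical helper, not the pipeline's code.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def zero_shot_split(image_emb, category_embs, logit_scale=100.0):
    """Return softmax probabilities over categories, CLIP zero-shot style."""
    logits = {name: logit_scale * cosine(image_emb, emb)
              for name, emb in category_embs.items()}
    top = max(logits.values())
    # Subtract the largest logit before exponentiating for numerical stability.
    exps = {name: math.exp(v - top) for name, v in logits.items()}
    total = sum(exps.values())
    return {name: e / total for name, e in exps.items()}


# Hypothetical embeddings standing in for CLIP text and image encodings.
categories = {
    "biological": [1.0, 0.2, 0.0],
    "mechanical": [0.0, 0.3, 1.0],
}
frog_image = [0.9, 0.1, 0.1]
probs = zero_shot_split(frog_image, categories)
print(max(probs, key=probs.get))  # biological
```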

A parallel branch starts with the **Input image (32x32)**:

1. It is processed by **GradCAM**.

2. **GradCAM** generates a **Heatmap**.

3. The **Heatmap** is upsampled into an **Interpolated heatmap**.

4. Separately, a **Variable Patching Module** divides the super-resolution images into patches.

5. The **Interpolated heatmap** is used to weight these patches, producing **Weighted patches**.

6. The **Weighted patches** are then fed into the **Clip similarity module by using 3-tuple descriptions**.

### Key Observations

The pipeline has a primary path for artifact analysis and a parallel path that generates heatmaps and weighted patches, which rejoins the main stream at the similarity module. The use of CLIP suggests a focus on semantic understanding of the artifacts. The final JSON output indicates a structured representation of the analysis results. The initial image resolution is very low (32x32), suggesting that the DRCT super-resolution step is crucial for improving the quality of the input data.
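The jump from 32x32 to 128x128 is a 4x upscale along each side (16x as many pixels). DRCT's weights and interface are not given here, so the sketch below substitutes plain nearest-neighbour upscaling as a baseline. It only illustrates the shape bookkeeping and adds no new detail, which is exactly the gap a learned super-resolution model is meant to fill. `upscale_nearest` is a hypothetical helper; pixels may be grey levels or RGB tuples.

```python
def upscale_nearest(img, factor=4):
    """Nearest-neighbour upscaling: every input pixel becomes a factor x factor block.

    A stand-in baseline only; it is NOT DRCT and cannot recover lost detail.
    """
    return [
        [row[j // factor] for j in range(len(row) * factor)]
        for row in img
        for _ in range(factor)  # repeat each upscaled row `factor` times
    ]


# A synthetic 32x32 grey-level image in place of the real input.
low_res = [[(7 * i + 3 * j) % 256 for j in range(32)] for i in range(32)]
high_res = upscale_nearest(low_res)
print(len(high_res), len(high_res[0]))  # 128 128
```

Comparing a learned model's output against such a baseline is a simple way to see how much detail the model actually synthesizes.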

### Interpretation

This diagram represents a sophisticated system for automated artifact analysis. The pipeline combines image processing techniques (DRCT super-resolution, GradCAM, variable patching) with semantic understanding (CLIP, MOLMO) to generate structured textual descriptions of artifacts. The separation of artifacts into "biological" and "mechanical" categories suggests the system is designed to handle a diverse range of artifact types. The integration of heatmap-based weighting indicates an attempt to focus the analysis on the most salient features of the artifacts. The final JSON output allows for easy integration of the analysis results into other systems or databases. The pipeline may be intended for automated archaeological or historical research, potentially enabling large-scale analysis of artifact collections, although the diagram itself does not name an application domain.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## System Architecture Diagram: Image-to-Text Description Pipeline

### Overview

The image displays a technical flowchart or system architecture diagram illustrating a multi-stage pipeline for processing a low-resolution input image to generate a structured textual description in JSON format. The diagram uses color-coded shapes (ovals, parallelograms, rectangles, trapezoids) connected by directional arrows to represent data flow and processing modules. The overall flow moves from left to right, with a parallel branch for heatmap generation.

### Components/Axes

The diagram is composed of the following labeled components, listed in approximate order of data flow:

1. **Input Image (32x32)**: A yellow oval at the top-left containing a small, low-resolution sample image of a frog-like creature on a branch.

2. **DRCT**: A pink parallelogram connected from the input image.

3. **Super resolution images 128x128**: An orange rectangle receiving input from DRCT.

4. **CLIP**: A purple trapezoid (wider at the bottom) connected from the super-resolution images.

5. **Artifacts split into biological and mechanical**: An orange rectangle following CLIP.

6. **Clip similarity module by using 3-tuple descriptions**: An orange rectangle receiving input from the "Artifacts split..." module and another input from "Weighted patches".

7. **MOLMO for generating textual descriptions**: An orange rectangle following the similarity module.

8. **Output JSON**: A light green oval at the far right, the final output of the pipeline.

9. **GradCAM**: A pink parallelogram branching down from the initial input image.

10. **Heatmap**: An orange rectangle receiving input from GradCAM. Below it is a small, colorful heatmap visualization.

11. **Interpolated heatmap**: An orange rectangle receiving input from the Heatmap.

12. **Variable Patching Module**: A pink rounded rectangle receiving input from the "Super resolution images 128x128".

13. **Weighted patches**: A purple trapezoid (wider at the top) receiving inputs from the "Variable Patching Module" and the "Interpolated heatmap". Above it is a 2x2 grid of image patches.

**Spatial Grounding & Flow:**

* The primary processing chain runs horizontally across the top: `Input Image -> DRCT -> Super resolution -> CLIP -> Artifacts split -> Similarity Module -> MOLMO -> Output JSON`.

* A secondary analysis branch runs vertically down from the input: `Input Image -> GradCAM -> Heatmap -> Interpolated heatmap`.

* The `Variable Patching Module` splits off from the super-resolution stage.

* The `Weighted patches` module acts as a convergence point, integrating data from the patching module and the interpolated heatmap before feeding into the `Clip similarity module`.
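The topology described above can be written as a small orchestration function. This sketch covers the data flow only: every stage is passed in as a callable, and the stage names (`drct`, `clip`, `gradcam`, and so on) are hypothetical keys chosen for illustration, not an API from the pipeline's authors. It makes concrete where the GradCAM branch rejoins the super-resolution path, namely at `weight_patches`.

```python
import json


def run_pipeline(image, stages):
    """Wire the stages together in the order the diagram shows.

    `stages` maps hypothetical stage names to callables that stand in for
    the real models (DRCT, CLIP, GradCAM, MOLMO, ...).
    """
    # Main chain across the top of the diagram.
    sr_images = stages["drct"](image)  # 32x32 -> 128x128
    categories = stages["artifact_split"](stages["clip"](sr_images))
    # Secondary branch down from the raw input image.
    heatmap = stages["interpolate"](stages["gradcam"](image))
    # The patching module branches off the super-resolution output.
    patches = stages["variable_patching"](sr_images)
    # Convergence point: the heatmap weights the patches.
    weighted = stages["weight_patches"](patches, heatmap)
    scores = stages["clip_similarity"](categories, weighted)
    description = stages["molmo"](scores)
    return json.dumps(description)  # "Output JSON"


# Usage with trivial stubs that only record the call order.
calls = []


def stub(name, result=None):
    def stage(*args):
        calls.append(name)
        return result if result is not None else name
    return stage


names = ["drct", "clip", "artifact_split", "gradcam", "interpolate",
         "variable_patching", "weight_patches", "clip_similarity"]
stages = {n: stub(n) for n in names}
stages["molmo"] = stub("molmo", {"description": "placeholder"})
print(run_pipeline("input_32x32", stages))  # {"description": "placeholder"}
```

Passing stages in as callables keeps the wiring testable without loading any model; each stub can later be swapped for a real model wrapper.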

### Detailed Analysis

**Text Transcription (All text is in English):**

* Input image (32x32)

* DRCT

* Super resolution images 128x128

* CLIP

* Artifacts split into biological and mechanical

* Clip similarity module by using 3-tuple descriptions

* MOLMO for generating textual descriptions

* Output JSON

* GradCAM

* Heatmap

* Interpolated heatmap

* Variable Patching Module

* Weighted patches

**Component Relationships & Data Flow:**

1. **Image Enhancement & Feature Extraction:** The pipeline begins with a 32x32 pixel input image. It is processed by "DRCT" (likely a super-resolution or restoration model) to produce 128x128 super-resolution images.

2. **High-Level Analysis:** The enhanced images are passed to "CLIP" (a vision-language model) for analysis. The output is then categorized, splitting detected "artifacts" into "biological" and "mechanical" classes.

3. **Parallel Attention Mapping:** Simultaneously, a "GradCAM" (Gradient-weighted Class Activation Mapping) process is applied to the original input image to generate a "Heatmap," which is then processed into an "Interpolated heatmap." This likely highlights regions of interest for the model.

4. **Patch-Based Processing:** The super-resolution images are also fed into a "Variable Patching Module," which presumably divides the image into regions of interest. These patches, combined with the spatial attention data from the interpolated heatmap, are used to create "Weighted patches."

5. **Similarity Matching & Description Generation:** The "Clip similarity module" uses "3-tuple descriptions" (likely structured subject-predicate-object triplets) and compares them against the "Weighted patches" and the high-level artifact categories. The results are passed to "MOLMO" (likely Molmo, the open vision-language model family from Ai2) to generate the final natural language descriptions.

6. **Output:** The final output is structured as "Output JSON."
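Step 4 above combines the Variable Patching Module's output with the interpolated heatmap to produce weighted patches. One plausible reading, sketched here, is to split the 128x128 frame into a grid of patches and weight each patch by its mean heatmap value, normalised so the weights sum to 1. The fixed `grid` parameter is an assumption: the diagram shows a 2x2 grid of patches but does not say how the module chooses variable patch sizes, and `patch_weights` is a hypothetical helper.

```python
def patch_weights(heatmap, grid=2):
    """Split an H x W heatmap into grid x grid patches and weight each patch
    by its mean saliency, normalised so the weights sum to 1.

    Rows/columns left over when H or W is not divisible by `grid` are ignored.
    """
    h, w = len(heatmap), len(heatmap[0])
    ph, pw = h // grid, w // grid
    means = {}
    for r in range(grid):
        for c in range(grid):
            vals = [heatmap[i][j]
                    for i in range(r * ph, (r + 1) * ph)
                    for j in range(c * pw, (c + 1) * pw)]
            means[(r, c)] = sum(vals) / len(vals)
    total = sum(means.values())
    if total == 0:
        # Flat (all-zero) heatmap: fall back to uniform weights.
        return {k: 1 / len(means) for k in means}
    return {k: v / total for k, v in means.items()}


# Hypothetical 128x128 heatmap whose saliency sits entirely in the top-left quadrant.
hm = [[1.0 if i < 64 and j < 64 else 0.0 for j in range(128)] for i in range(128)]
print(patch_weights(hm))  # {(0, 0): 1.0, (0, 1): 0.0, (1, 0): 0.0, (1, 1): 0.0}
```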

### Key Observations

* **Multi-Modal Integration:** The system integrates low-level image processing (super-resolution, patching), computer vision techniques (GradCAM, CLIP), and natural language generation (MOLMO).

* **Attention-Guided Processing:** The use of GradCAM and interpolated heatmaps suggests the system uses model attention to guide where it should focus when generating descriptions, especially when creating weighted patches.

* **Structured Intermediate Representations:** The pipeline creates several structured intermediate data types: super-resolved images, artifact categories, heatmaps, weighted patches, and 3-tuple descriptions, before final text generation.

* **Color-Coding:** Shapes are color-coded by function: yellow/light green for I/O, pink for model stages (DRCT, GradCAM, Variable Patching Module), orange for most intermediate stages and data states, and purple for CLIP and Weighted patches.

### Interpretation

This diagram represents a sophisticated, multi-stage AI pipeline designed to solve the complex task of generating detailed textual descriptions from very low-resolution images. The process is not a simple end-to-end model but a carefully orchestrated sequence.

The **core investigative logic** (Peircean) is abductive: the system starts with sparse, poor-quality data (a 32x32 image) and constructs the most plausible detailed description by:

1. **Enhancing the evidence** (super-resolution).

2. **Forming hypotheses about content** (CLIP analysis, artifact splitting).

3. **Gathering corroborating spatial evidence** (GradCAM heatmaps, patch weighting).

4. **Synthesizing a coherent narrative** (similarity matching with 3-tuples and final generation by MOLMO).

The separation of "biological" and "mechanical" artifacts indicates the system is designed for a domain where this distinction matters, for example a dataset that mixes animals with vehicles or other machines. The reliance on "3-tuple descriptions" for similarity matching suggests the system grounds its understanding in relational knowledge (e.g., "frog - has - spots") rather than just object detection.
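If the 3-tuples are indeed relational triples such as ("frog", "has", "spots"), the similarity module could score each triple against the weighted patches. The sketch below assumes the triples have already been rendered to text and embedded by CLIP's text encoder, that each patch has a CLIP image embedding, and that a triple's score is the patch-weight-averaged cosine similarity. All embeddings and helper names (`triple_to_text`, `rank_triples`) are hypothetical; the diagram does not specify the scoring rule.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def triple_to_text(triple):
    """Render a (subject, relation, object) triple as a short phrase."""
    subject, relation, obj = triple
    return f"{subject} {relation} {obj}"


def rank_triples(patch_embs, weights, triple_embs):
    """Rank triples by weight-averaged cosine similarity to the image patches."""
    scores = {
        triple_to_text(triple): sum(weights[p] * cosine(emb, t_emb)
                                    for p, emb in patch_embs.items())
        for triple, t_emb in triple_embs.items()
    }
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical 2-D embeddings: patch (0, 0) is salient and frog-like.
patch_embs = {(0, 0): [1.0, 0.0], (0, 1): [0.0, 1.0]}
weights = {(0, 0): 0.9, (0, 1): 0.1}
triple_embs = {
    ("frog", "has", "spots"): [1.0, 0.0],
    ("car", "has", "wheels"): [0.0, 1.0],
}
print(rank_triples(patch_embs, weights, triple_embs)[0][0])  # frog has spots
```

Weighting by patch saliency means a triple that matches only a low-weight background patch scores poorly, which is one way the heatmap branch could keep the descriptions spatially consistent with the input.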

The notable **anomaly** or challenge this architecture addresses is the extreme information deficit of the input. The entire pipeline can be seen as a sophisticated "guessing" engine that uses multiple AI models to progressively fill in plausible details, using attention mechanisms to ensure its guesses are spatially consistent with the original blurry input. The final JSON output implies the description is intended for downstream computational use, not just human reading.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Flowchart: Multimodal Image Analysis Pipeline

### Overview

The diagram illustrates a technical pipeline for analyzing input images through a combination of computer vision and natural language processing (NLP) techniques. The process begins with a 32x32 input image and culminates in a JSON output containing textual descriptions. Key components include GradCAM heatmaps, CLIP-based artifact classification, and MOLMO-driven text generation.

### Components/Axes

1. **Input Image**: 32x32 pixel resolution (top-left corner).

2. **GradCAM**: Generates a heatmap from the input image (left-center).

3. **Heatmap**: Visual representation of GradCAM output (bottom-left).

4. **Interpolated Heatmap**: Enhanced resolution version of the heatmap (bottom-center).

5. **Super-Resolution Images**: 128x128 upscaled images (center-left).

6. **CLIP**: Processes super-resolution images to split artifacts into biological and mechanical categories (center).

7. **Clip Similarity Module**: Matches image content against 3-tuple text descriptions using CLIP (center-right).

8. **MOLMO**: Generates textual descriptions (right-center).

9. **Output JSON**: Final structured output (top-right).

### Detailed Analysis

- **Input Image**: A 32x32 pixel image of a frog (top-left).

- **GradCAM**: Produces a heatmap highlighting the image regions that most influenced the model's prediction (left-center).

- **Heatmap**: Color-coded visualization (blue to red) of GradCAM output (bottom-left).

- **Interpolated Heatmap**: Smoother version of the heatmap (bottom-center).

- **Super-Resolution Images**: 128x128 images derived from the input (center-left).

- **CLIP**: Classifies artifacts into biological (e.g., the frog) and mechanical categories (center).

- **Clip Similarity Module**: Scores image content against 3-tuple descriptions using CLIP embeddings (center-right).

- **MOLMO**: Text generation module that produces the textual descriptions (right-center).

- **Output JSON**: Structured data containing descriptions and classifications (top-right).
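The Output JSON is described only as structured data holding descriptions and classifications. A sketch of what one such record might look like follows; the schema (field names such as `artifact_category` and `patch_weights`) and the helper `build_output` are entirely hypothetical, since the diagram does not show the file's contents.

```python
import json


def build_output(image_id, label, probability, description, patch_weights):
    """Serialise one image's results as a JSON string (hypothetical schema)."""
    record = {
        "image_id": image_id,
        "artifact_category": {"label": label, "probability": round(probability, 4)},
        "description": description,
        # JSON object keys must be strings, so (row, col) patch indices become "row,col".
        "patch_weights": {f"{r},{c}": w for (r, c), w in patch_weights.items()},
    }
    return json.dumps(record, indent=2)


out = build_output("img_0001", "biological", 0.98761,
                   "placeholder description from MOLMO", {(0, 0): 0.7, (1, 1): 0.3})
print(json.loads(out)["artifact_category"])  # {'label': 'biological', 'probability': 0.9876}
```

Tuple keys are the one detail that needs care: `json.dumps` rejects them, so they are flattened to strings before serialisation.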

### Key Observations

1. **Multimodal Integration**: The pipeline combines visual saliency (GradCAM, heatmaps) with vision-language models (CLIP, MOLMO).

2. **Resolution Scaling**: Input images are upscaled from 32x32 to 128x128 for detailed analysis.

3. **Artifact Differentiation**: CLIP explicitly separates biological and mechanical elements.

4. **Weighted Patches**: The interpolated heatmap is combined with the output of the variable patching module to produce weighted patches for refined analysis.

### Interpretation

This pipeline demonstrates a hybrid approach to image analysis, leveraging GradCAM for attention mapping and CLIP/MOLMO for semantic understanding. The variable patching module acts as a bridge between visual saliency and textual generation, enabling context-aware descriptions. The final JSON output likely serves applications requiring structured data, such as medical imaging analysis or automated object recognition. The use of 3-tuple descriptions in the clip similarity module suggests a focus on precise semantic relationships between visual and textual elements.
