## Bar Charts: Comparison of Training Methods for Final-round Accuracy

### Overview

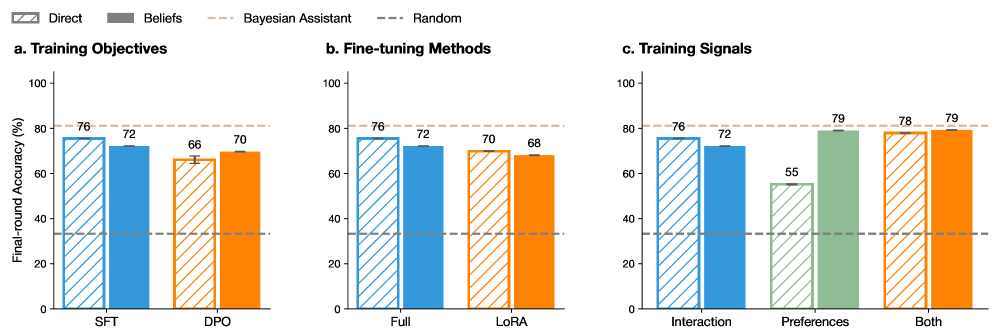

The image displays three grouped bar charts (labeled a, b, and c) comparing the performance of different machine learning training approaches. The primary metric is "Final-round Accuracy (%)" on the y-axis. Each chart explores a different dimension of the training process: objectives, fine-tuning methods, and training signals. A shared legend at the top defines the bar patterns and reference lines.

### Components/Axes

* **Shared Y-Axis:** "Final-round Accuracy (%)", scale from 0 to 100 in increments of 20.

* **Shared Legend (Top Center):**

  * **Bar Patterns:**

    * `Direct`: Diagonal striped pattern (blue, orange, green).

    * `Beliefs`: Solid fill (blue, orange, green).

  * **Reference Lines:**

    * `Bayesian Assistant`: Dashed light brown line.

    * `Random`: Dashed dark gray line.

* **Subplot Titles & X-Axis Categories:**

  * **a. Training Objectives:** Categories are "SFT" and "DPO".

  * **b. Fine-tuning Methods:** Categories are "Full" and "LoRA".

  * **c. Training Signals:** Categories are "Interaction", "Preferences", and "Both".

### Detailed Analysis

**Subplot a. Training Objectives**

* **SFT Category:**

  * `Direct` (Blue, striped): ~76%

  * `Beliefs` (Blue, solid): ~72%

* **DPO Category:**

  * `Direct` (Orange, striped): ~66% (with a small error bar)

  * `Beliefs` (Orange, solid): ~70%

* **Reference Lines:**

  * `Bayesian Assistant` (Dashed line): Positioned at approximately 80%.

  * `Random` (Dashed line): Positioned at approximately 30%.

**Subplot b. Fine-tuning Methods**

* **Full Category:**

  * `Direct` (Blue, striped): ~76%

  * `Beliefs` (Blue, solid): ~72%

* **LoRA Category:**

  * `Direct` (Orange, striped): ~70%

  * `Beliefs` (Orange, solid): ~68%

* **Reference Lines:** Same as in subplot a (Bayesian Assistant ~80%, Random ~30%).

**Subplot c. Training Signals**

* **Interaction Category:**

  * `Direct` (Blue, striped): ~76%

  * `Beliefs` (Blue, solid): ~72%

* **Preferences Category:**

  * `Direct` (Green, striped): ~55%

  * `Beliefs` (Green, solid): ~79% (with a small error bar)

* **Both Category:**

  * `Direct` (Orange, striped): ~78%

  * `Beliefs` (Orange, solid): ~79%

* **Reference Lines:** Same as in subplots a and b (Bayesian Assistant ~80%, Random ~30%).

### Key Observations

1. **Performance vs. Baselines:** All reported model performances (bars) are well above the `Random` baseline (~30%), and all fall below the `Bayesian Assistant` baseline (~80%), although the best bars (~79%) come within about one percentage point of it.

2. **Direct vs. Beliefs Trend:** The relationship between `Direct` and `Beliefs` methods is not consistent.

   * In **SFT, Full, and Interaction** settings, `Direct` outperforms `Beliefs` by about 4 percentage points (~76% vs. ~72%).

   * In the **LoRA** setting, the gap narrows to about 2 points, with `Direct` still ahead (~70% vs. ~68%). In the **DPO** setting, it reverses: `Beliefs` (~70%) leads `Direct` (~66%) by about 4 points.

   * In the **Preferences** signal setting, the reversal is far larger: `Beliefs` (~79%) outperforms `Direct` (~55%) by about 24 points.

3. **Highest Performers:** The highest accuracy values (~79%) are achieved by the `Beliefs` method using either the `Preferences` signal alone or the `Both` signal combination.

4. **Lowest Performer:** The `Direct` method with the `Preferences` signal is the clear outlier at ~55%, roughly 11 points below the next-lowest bar (`Direct` with DPO, ~66%).
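The gaps behind these observations can be recomputed from the approximate bar heights listed under Detailed Analysis. The following is a minimal sketch: the values are visual estimates read off the chart, not exact reported numbers, and the names `ACCURACY` and `direct_minus_beliefs` are hypothetical, introduced only for illustration.

```python
# Approximate bar heights (%) as estimated from the figure; these are
# visual readings, not exact reported values.
# Each entry maps a setting to (Direct, Beliefs).
ACCURACY = {
    "SFT": (76, 72),
    "DPO": (66, 70),
    "Full": (76, 72),
    "LoRA": (70, 68),
    "Interaction": (76, 72),
    "Preferences": (55, 79),
    "Both": (78, 79),
}


def direct_minus_beliefs(accuracy):
    """Return {setting: Direct - Beliefs} in percentage points."""
    return {name: direct - beliefs for name, (direct, beliefs) in accuracy.items()}


gaps = direct_minus_beliefs(ACCURACY)
for name, gap in gaps.items():
    # A positive gap means Direct is ahead; a negative gap means Beliefs is ahead.
    leader = "Direct" if gap > 0 else "Beliefs" if gap < 0 else "tie"
    print(f"{name:<12} {gap:+d} pts ({leader})")
```

Because the inputs are rounded estimates, differences of one or two points (for example, in the `Both` and LoRA settings) should be read as "roughly equal" rather than as a reliable ordering.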

### Interpretation

This data suggests that the optimal training strategy is highly dependent on the specific context (objective, fine-tuning method, and signal type). There is no universally superior approach between `Direct` and `Beliefs`.

* The `Direct` method is ahead by about 4 points under **SFT**, **Full** fine-tuning, and the **Interaction** signal (~76% vs. ~72% in each case), and by about 2 points under **LoRA**.

* The `Beliefs` method shows its largest advantage when the training signal is derived from **Preferences** (~79% vs. ~55%, a gap of about 24 points). This may indicate that modeling or incorporating "beliefs" is particularly beneficial for learning from preference-based data, potentially by better capturing the rationale behind the expressed preferences.

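* Combining signals (`Both`) yields high performance for both methods (~78% for `Direct`, ~79% for `Beliefs`). The benefit is concentrated in `Direct`, which rises far above its Preferences-only result (~55%) and slightly above its Interaction-only result (~76%). For `Beliefs`, `Both` matches Preferences alone (~79%), so any complementarity between interaction and preference data is visible mainly for `Direct`.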

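* Every bar falls below the `Bayesian Assistant` baseline (~80%), but the size of the gap varies widely: the best configurations (~78-79%) trail it by only 1 to 2 points, most others by 4 to 14 points, and `Direct` with Preferences by about 25 points. None of the training methods exceeds the reference level represented by that baseline. Every configuration also clears the `Random` baseline (~30%) by at least 25 points, indicating that all of them learn something well beyond chance.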
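The distance of each bar from the two reference lines can be checked with simple arithmetic. This is a sketch under the same caveat as the rest of this description: all numbers are approximate readings from the chart, and `BARS`, `headroom`, and the constant names are hypothetical, chosen only for this example.

```python
# Reference lines and bar heights (%) as estimated from the figure;
# approximate visual readings, not exact reported values.
BAYESIAN_ASSISTANT = 80
RANDOM = 30

BARS = {
    ("SFT", "Direct"): 76, ("SFT", "Beliefs"): 72,
    ("DPO", "Direct"): 66, ("DPO", "Beliefs"): 70,
    ("Full", "Direct"): 76, ("Full", "Beliefs"): 72,
    ("LoRA", "Direct"): 70, ("LoRA", "Beliefs"): 68,
    ("Interaction", "Direct"): 76, ("Interaction", "Beliefs"): 72,
    ("Preferences", "Direct"): 55, ("Preferences", "Beliefs"): 79,
    ("Both", "Direct"): 78, ("Both", "Beliefs"): 79,
}


def headroom(bars, upper=BAYESIAN_ASSISTANT):
    """Return {(setting, method): points below the upper reference line}."""
    return {key: upper - acc for key, acc in bars.items()}


room = headroom(BARS)
closest = min(room, key=room.get)    # a bar nearest the Bayesian Assistant line
farthest = max(room, key=room.get)   # the bar farthest below it
min_margin_over_random = min(BARS.values()) - RANDOM

print("closest:", closest, room[closest], "pts below")
print("farthest:", farthest, room[farthest], "pts below")
print("smallest margin over Random:", min_margin_over_random, "pts")
```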

**Language Declaration:** All text in the image is in English.