TECHNICAL ASSET FINGERPRINT

faeaa0a8cf4859c4f0ecdb98


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


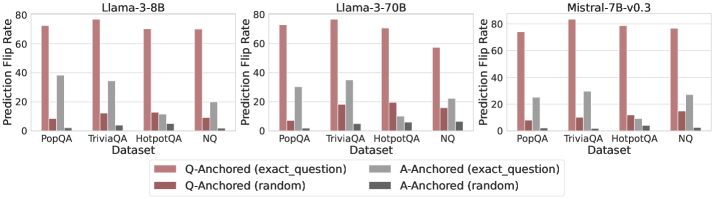

## Bar Chart: Prediction Flip Rate Comparison for Different Models

### Overview

The image presents three bar charts comparing the prediction flip rates of different language models (Llama-3-8B, Llama-3-70B, and Mistral-7B-v0.3) across four datasets (PopQA, TriviaQA, HotpotQA, and NQ). The charts show the prediction flip rates for question-anchored and answer-anchored methods, both with exact questions and random questions.

### Components/Axes

* **Title:** Each chart has a title indicating the language model being evaluated: "Llama-3-8B", "Llama-3-70B", and "Mistral-7B-v0.3".

* **Y-axis:** "Prediction Flip Rate" ranging from 0 to 80.

* **X-axis:** "Dataset" with four categories: "PopQA", "TriviaQA", "HotpotQA", and "NQ".

* **Legend:** Located at the bottom of the image.
  * Light Red: "Q-Anchored (exact\_question)"
  * Dark Red: "Q-Anchored (random)"
  * Light Gray: "A-Anchored (exact\_question)"
  * Dark Gray: "A-Anchored (random)"

### Detailed Analysis

**Llama-3-8B**

* **PopQA:**
  * Q-Anchored (exact\_question): ~73
  * Q-Anchored (random): ~8
  * A-Anchored (exact\_question): ~38
  * A-Anchored (random): ~23

* **TriviaQA:**
  * Q-Anchored (exact\_question): ~73
  * Q-Anchored (random): ~12
  * A-Anchored (exact\_question): ~34
  * A-Anchored (random): ~3

* **HotpotQA:**
  * Q-Anchored (exact\_question): ~72
  * Q-Anchored (random): ~12
  * A-Anchored (exact\_question): ~10
  * A-Anchored (random): ~3

* **NQ:**
  * Q-Anchored (exact\_question): ~72
  * Q-Anchored (random): ~12
  * A-Anchored (exact\_question): ~20
  * A-Anchored (random): ~3

**Llama-3-70B**

* **PopQA:**
  * Q-Anchored (exact\_question): ~75
  * Q-Anchored (random): ~8
  * A-Anchored (exact\_question): ~30
  * A-Anchored (random): ~8

* **TriviaQA:**
  * Q-Anchored (exact\_question): ~75
  * Q-Anchored (random): ~18
  * A-Anchored (exact\_question): ~48
  * A-Anchored (random): ~15

* **HotpotQA:**
  * Q-Anchored (exact\_question): ~80
  * Q-Anchored (random): ~25
  * A-Anchored (exact\_question): ~20
  * A-Anchored (random): ~5

* **NQ:**
  * Q-Anchored (exact\_question): ~72
  * Q-Anchored (random): ~18
  * A-Anchored (exact\_question): ~30
  * A-Anchored (random): ~15

**Mistral-7B-v0.3**

* **PopQA:**
  * Q-Anchored (exact\_question): ~75
  * Q-Anchored (random): ~10
  * A-Anchored (exact\_question): ~28
  * A-Anchored (random): ~10

* **TriviaQA:**
  * Q-Anchored (exact\_question): ~75
  * Q-Anchored (random): ~12
  * A-Anchored (exact\_question): ~30
  * A-Anchored (random): ~5

* **HotpotQA:**
  * Q-Anchored (exact\_question): ~73
  * Q-Anchored (random): ~10
  * A-Anchored (exact\_question): ~10
  * A-Anchored (random): ~2

* **NQ:**
  * Q-Anchored (exact\_question): ~73
  * Q-Anchored (random): ~10
  * A-Anchored (exact\_question): ~15
  * A-Anchored (random): ~2

### Key Observations

* **Q-Anchored (exact\_question)** consistently shows the highest prediction flip rate across all datasets and models.

* **Q-Anchored (random)** consistently shows a low prediction flip rate across all datasets and models.

* **A-Anchored (exact\_question)** and **A-Anchored (random)** show varying prediction flip rates depending on the dataset and model, generally lower than Q-Anchored (exact\_question).

* Llama-3-70B shows a higher A-Anchored (exact\_question) prediction flip rate for TriviaQA compared to the other models.

* The prediction flip rates for Q-Anchored (exact\_question) are relatively consistent across all datasets for each model.
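The observations above can be checked mechanically against the approximate values transcribed in this description. The sketch below is a hypothetical helper (`FLIP_RATES`, `q_exact_is_always_highest`, and `q_exact_spread` are illustrative names, not from the source); because the numbers are eyeballed bar heights, the checks only confirm that the observations are consistent with this transcription.

```python
# Approximate flip rates transcribed above (eyeball estimates, not source data).
# Tuple order: Q-exact, Q-random, A-exact, A-random.
FLIP_RATES = {
    "Llama-3-8B": {
        "PopQA": (73, 8, 38, 23),
        "TriviaQA": (73, 12, 34, 3),
        "HotpotQA": (72, 12, 10, 3),
        "NQ": (72, 12, 20, 3),
    },
    "Llama-3-70B": {
        "PopQA": (75, 8, 30, 8),
        "TriviaQA": (75, 18, 48, 15),
        "HotpotQA": (80, 25, 20, 5),
        "NQ": (72, 18, 30, 15),
    },
    "Mistral-7B-v0.3": {
        "PopQA": (75, 10, 28, 10),
        "TriviaQA": (75, 12, 30, 5),
        "HotpotQA": (73, 10, 10, 2),
        "NQ": (73, 10, 15, 2),
    },
}


def q_exact_is_always_highest(rates):
    """True if Q-Anchored (exact_question) is the tallest bar in every group."""
    return all(
        bars[0] == max(bars)
        for datasets in rates.values()
        for bars in datasets.values()
    )


def q_exact_spread(rates):
    """Max minus min of Q-Anchored (exact_question) across datasets, per model."""
    return {
        model: max(b[0] for b in datasets.values()) - min(b[0] for b in datasets.values())
        for model, datasets in rates.items()
    }


print(q_exact_is_always_highest(FLIP_RATES))  # True
print(q_exact_spread(FLIP_RATES))  # {'Llama-3-8B': 1, 'Llama-3-70B': 8, 'Mistral-7B-v0.3': 2}
# Model with the highest A-Anchored (exact_question) rate on TriviaQA.
print(max(FLIP_RATES, key=lambda m: FLIP_RATES[m]["TriviaQA"][2]))  # Llama-3-70B
```

By these estimates, the Q-Anchored (exact\_question) spread is 1 point for Llama-3-8B, 2 for Mistral-7B-v0.3, and 8 for Llama-3-70B, the last driven by HotpotQA at ~80.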

### Interpretation

The data suggests that using the exact question as an anchor (Q-Anchored (exact\_question), roughly 72 to 80) leads to a much higher prediction flip rate than using a random question (Q-Anchored (random), roughly 8 to 25). This indicates that the models' predictions are highly sensitive to whether the anchor matches the question being asked. The lower flip rates for the A-Anchored conditions suggest that the models are less sensitive to anchoring on the answer. Differences between models are smaller than differences between conditions; the most visible one is Llama-3-70B's higher A-Anchored (exact\_question) rate on TriviaQA. The consistency of Q-Anchored (exact\_question) across datasets suggests that this sensitivity holds regardless of the knowledge domain.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


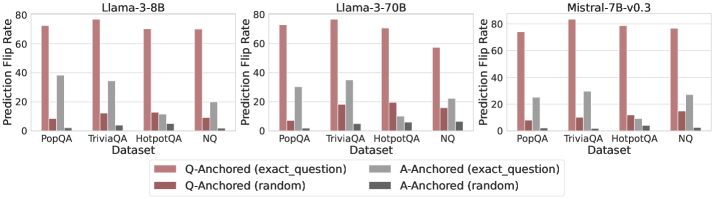

## Bar Chart: Prediction Flip Rate by Model and Dataset

### Overview

The image presents a comparative bar chart showing the "Prediction Flip Rate" across three different language models (Llama-3-8B, Llama-3-70B, and Mistral-7B-v0.3) and four datasets (PopQA, TriviaQA, HotpotQA, and NQ). The flip rate is measured for two anchoring methods, "Q-Anchored" (question-anchored) and "A-Anchored" (answer-anchored), each in an "exact question" and a "random" variant.

### Components/Axes

* **X-axis:** "Dataset" with categories: PopQA, TriviaQA, HotpotQA, NQ.

* **Y-axis:** "Prediction Flip Rate" ranging from 0 to 80 (approximate).

* **Models (Columns):** Three separate charts, one for each model: Llama-3-8B, Llama-3-70B, Mistral-7B-v0.3. Each model's chart has the same x-axis.

* **Legend (Bottom-Center):**
  * Q-Anchored (exact question) - Light Red
  * A-Anchored (exact question) - Light Gray
  * Q-Anchored (random) - Dark Red
  * A-Anchored (random) - Dark Gray

### Detailed Analysis or Content Details

**Llama-3-8B:**

* **PopQA:** Q-Anchored (exact question) is approximately 45, A-Anchored (exact question) is approximately 30, Q-Anchored (random) is approximately 10, A-Anchored (random) is approximately 5.

* **TriviaQA:** Q-Anchored (exact question) is approximately 80, A-Anchored (exact question) is approximately 40, Q-Anchored (random) is approximately 10, A-Anchored (random) is approximately 5.

* **HotpotQA:** Q-Anchored (exact question) is approximately 75, A-Anchored (exact question) is approximately 20, Q-Anchored (random) is approximately 10, A-Anchored (random) is approximately 5.

* **NQ:** Q-Anchored (exact question) is approximately 25, A-Anchored (exact question) is approximately 10, Q-Anchored (random) is approximately 5, A-Anchored (random) is approximately 5.

**Llama-3-70B:**

* **PopQA:** Q-Anchored (exact question) is approximately 50, A-Anchored (exact question) is approximately 35, Q-Anchored (random) is approximately 10, A-Anchored (random) is approximately 5.

* **TriviaQA:** Q-Anchored (exact question) is approximately 80, A-Anchored (exact question) is approximately 45, Q-Anchored (random) is approximately 10, A-Anchored (random) is approximately 5.

* **HotpotQA:** Q-Anchored (exact question) is approximately 75, A-Anchored (exact question) is approximately 25, Q-Anchored (random) is approximately 10, A-Anchored (random) is approximately 5.

* **NQ:** Q-Anchored (exact question) is approximately 25, A-Anchored (exact question) is approximately 10, Q-Anchored (random) is approximately 5, A-Anchored (random) is approximately 5.

**Mistral-7B-v0.3:**

* **PopQA:** Q-Anchored (exact question) is approximately 40, A-Anchored (exact question) is approximately 30, Q-Anchored (random) is approximately 10, A-Anchored (random) is approximately 5.

* **TriviaQA:** Q-Anchored (exact question) is approximately 80, A-Anchored (exact question) is approximately 40, Q-Anchored (random) is approximately 10, A-Anchored (random) is approximately 5.

* **HotpotQA:** Q-Anchored (exact question) is approximately 75, A-Anchored (exact question) is approximately 20, Q-Anchored (random) is approximately 10, A-Anchored (random) is approximately 5.

* **NQ:** Q-Anchored (exact question) is approximately 25, A-Anchored (exact question) is approximately 10, Q-Anchored (random) is approximately 5, A-Anchored (random) is approximately 5.

Across all models, the "Q-Anchored (exact question)" consistently shows the highest flip rate, followed by "A-Anchored (exact question)". The "random" anchoring methods have significantly lower flip rates.

### Key Observations

* The "TriviaQA" dataset has the highest Q-Anchored (exact question) and A-Anchored (exact question) flip rates for every model; the random conditions are nearly flat across datasets.

* The "NQ" dataset has the lowest flip rates for every model, tying with the other datasets only for A-Anchored (random).

* The difference between "exact question" and "random" anchoring is substantial, indicating that anchoring on the exact question significantly increases the likelihood of a prediction flip.

* Llama-3-70B generally shows slightly higher flip rates than Llama-3-8B, while Mistral-7B-v0.3 is generally similar to Llama-3-8B.
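The dataset rankings in these observations can be checked against the approximate values listed above. The sketch below is a hypothetical helper (`RATES`, `CONDITIONS`, and `extreme_datasets` are illustrative names); it returns every dataset tied for the highest (or lowest) value, so ties stay visible instead of being hidden by a single argmax.

```python
# Approximate values transcribed in this description (eyeball estimates).
# Tuple order: Q-exact, A-exact, Q-random, A-random (the order used above).
CONDITIONS = ("Q-exact", "A-exact", "Q-random", "A-random")
RATES = {
    "Llama-3-8B": {"PopQA": (45, 30, 10, 5), "TriviaQA": (80, 40, 10, 5),
                   "HotpotQA": (75, 20, 10, 5), "NQ": (25, 10, 5, 5)},
    "Llama-3-70B": {"PopQA": (50, 35, 10, 5), "TriviaQA": (80, 45, 10, 5),
                    "HotpotQA": (75, 25, 10, 5), "NQ": (25, 10, 5, 5)},
    "Mistral-7B-v0.3": {"PopQA": (40, 30, 10, 5), "TriviaQA": (80, 40, 10, 5),
                        "HotpotQA": (75, 20, 10, 5), "NQ": (25, 10, 5, 5)},
}


def extreme_datasets(rates, model, condition, pick=max):
    """Sorted list of datasets tied for the max (or min) rate of one condition."""
    i = CONDITIONS.index(condition)
    values = {ds: bars[i] for ds, bars in rates[model].items()}
    target = pick(values.values())
    return sorted(ds for ds, v in values.items() if v == target)


for model in RATES:
    for cond in CONDITIONS:
        print(model, cond, "max:", extreme_datasets(RATES, model, cond),
              "min:", extreme_datasets(RATES, model, cond, pick=min))
```

By these estimates, TriviaQA is the unique maximum only for the two exact-question conditions; for Q-Anchored (random) it ties with PopQA and HotpotQA, and A-Anchored (random) is the same for all four datasets.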

### Interpretation

The data suggests that the prediction flip rate depends strongly on the dataset and only weakly on the model. The high flip rates observed on TriviaQA may indicate that this dataset contains questions that are particularly sensitive to changes in input or context. The lower flip rates on NQ suggest that the models' predictions on this dataset are more stable, possibly because the models are more confident in them.

The significant difference between "exact question" and "random" anchoring highlights the importance of context in these models. Anchoring on the exact question provides a stronger signal, leading to a higher probability of a prediction flip. This could be due to the models being more sensitive to specific keywords or phrases in the question.

The relatively consistent behavior of the three models suggests that they share similar vulnerabilities and strengths in terms of prediction stability. The slightly higher flip rates of Llama-3-70B could be related to its larger size and capacity, although this chart alone cannot establish the cause.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Bar Chart Series: Prediction Flip Rates Across Models and Datasets

### Overview

The image displays three grouped bar charts arranged horizontally, comparing the "Prediction Flip Rate" of three different language models across four question-answering datasets. The charts share a common y-axis and x-axis structure, with a unified legend at the bottom.

### Components/Axes

* **Chart Titles (Top Center of each subplot):**
  * Left Chart: `Llama-3-8B`
  * Middle Chart: `Llama-3-70B`
  * Right Chart: `Mistral-7B-v0.3`

* **Y-Axis (Left side of each subplot):**
  * Label: `Prediction Flip Rate`
  * Scale: 0 to 80, with major tick marks at 0, 20, 40, 60, 80.

* **X-Axis (Bottom of each subplot):**
  * Label: `Dataset`
  * Categories (from left to right within each chart): `PopQA`, `TriviaQA`, `HotpotQA`, `NQ`.

* **Legend (Bottom Center, spanning all charts):**
  * Four categories, each represented by a colored bar:
    1. `Q-Anchored (exact_question)` - Light red/salmon color.
    2. `Q-Anchored (random)` - Dark red/maroon color.
    3. `A-Anchored (exact_question)` - Light gray color.
    4. `A-Anchored (random)` - Dark gray/charcoal color.

### Detailed Analysis

Data is presented as grouped bars for each dataset within each model chart. Values are approximate visual estimates from the bar heights.

**1. Llama-3-8B Chart (Left)**

* **PopQA:** Q-Anchored (exact) ~75, Q-Anchored (random) ~10, A-Anchored (exact) ~38, A-Anchored (random) ~2.

* **TriviaQA:** Q-Anchored (exact) ~78, Q-Anchored (random) ~12, A-Anchored (exact) ~35, A-Anchored (random) ~2.

* **HotpotQA:** Q-Anchored (exact) ~72, Q-Anchored (random) ~15, A-Anchored (exact) ~12, A-Anchored (random) ~5.

* **NQ:** Q-Anchored (exact) ~74, Q-Anchored (random) ~12, A-Anchored (exact) ~20, A-Anchored (random) ~2.

**2. Llama-3-70B Chart (Middle)**

* **PopQA:** Q-Anchored (exact) ~74, Q-Anchored (random) ~18, A-Anchored (exact) ~30, A-Anchored (random) ~2.

* **TriviaQA:** Q-Anchored (exact) ~78, Q-Anchored (random) ~20, A-Anchored (exact) ~35, A-Anchored (random) ~5.

* **HotpotQA:** Q-Anchored (exact) ~75, Q-Anchored (random) ~20, A-Anchored (exact) ~10, A-Anchored (random) ~8.

* **NQ:** Q-Anchored (exact) ~58, Q-Anchored (random) ~18, A-Anchored (exact) ~22, A-Anchored (random) ~8.

**3. Mistral-7B-v0.3 Chart (Right)**

* **PopQA:** Q-Anchored (exact) ~75, Q-Anchored (random) ~10, A-Anchored (exact) ~25, A-Anchored (random) ~2.

* **TriviaQA:** Q-Anchored (exact) ~80, Q-Anchored (random) ~12, A-Anchored (exact) ~30, A-Anchored (random) ~2.

* **HotpotQA:** Q-Anchored (exact) ~78, Q-Anchored (random) ~10, A-Anchored (exact) ~10, A-Anchored (random) ~8.

* **NQ:** Q-Anchored (exact) ~76, Q-Anchored (random) ~18, A-Anchored (exact) ~26, A-Anchored (random) ~2.

### Key Observations

1. **Dominant Series:** The `Q-Anchored (exact_question)` bar (light red) is consistently the tallest across all models and datasets, typically ranging between 70-80.

2. **Lowest Series:** The `A-Anchored (random)` bar (dark gray) is consistently the shortest, often near or below 5.

3. **Model Comparison:** The `Llama-3-70B` model shows a notably lower `Q-Anchored (exact_question)` rate for the `NQ` dataset (~58) compared to its performance on other datasets and compared to the other two models on `NQ`.

4. **Dataset Sensitivity:** The `HotpotQA` dataset generally shows lower flip rates for the `A-Anchored (exact_question)` condition (light gray) compared to `PopQA` and `TriviaQA` across all models.

5. **Anchoring Effect:** For a given anchoring type (Q or A), the "exact_question" variant consistently results in a higher flip rate than the "random" variant.
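The anchoring-effect observation can be quantified by subtracting each random bar from its exact-question counterpart. The sketch below uses the approximate values listed above; `RATES` and `anchoring_gaps` are hypothetical names, and the gaps inherit the imprecision of eyeballed bar heights.

```python
# Approximate values transcribed in this description (eyeball estimates).
# Tuple order: Q-exact, Q-random, A-exact, A-random.
RATES = {
    "Llama-3-8B": {"PopQA": (75, 10, 38, 2), "TriviaQA": (78, 12, 35, 2),
                   "HotpotQA": (72, 15, 12, 5), "NQ": (74, 12, 20, 2)},
    "Llama-3-70B": {"PopQA": (74, 18, 30, 2), "TriviaQA": (78, 20, 35, 5),
                    "HotpotQA": (75, 20, 10, 8), "NQ": (58, 18, 22, 8)},
    "Mistral-7B-v0.3": {"PopQA": (75, 10, 25, 2), "TriviaQA": (80, 12, 30, 2),
                        "HotpotQA": (78, 10, 10, 8), "NQ": (76, 18, 26, 2)},
}


def anchoring_gaps(rates):
    """Map (model, dataset) to (Q exact minus Q random, A exact minus A random)."""
    return {
        (model, ds): (qe - qr, ae - ar)
        for model, datasets in rates.items()
        for ds, (qe, qr, ae, ar) in datasets.items()
    }


gaps = anchoring_gaps(RATES)
print(all(q > 0 and a > 0 for q, a in gaps.values()))  # True
smallest = min(a for _, a in gaps.values())
print(smallest, sorted(k for k, (_, a) in gaps.items() if a == smallest))
# 2 [('Llama-3-70B', 'HotpotQA'), ('Mistral-7B-v0.3', 'HotpotQA')]
```

Every gap is positive, but the A-Anchored gap shrinks to about 2 points on HotpotQA for Llama-3-70B and Mistral-7B-v0.3, small enough that it may not survive a more precise reading of the bars.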

### Interpretation

This visualization investigates the sensitivity of language model predictions to different types of "anchoring" prompts. The "Prediction Flip Rate" likely measures how often a model changes its answer when presented with a subtly altered prompt.
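The chart does not define the metric, so the following is only a minimal sketch, assuming the flip rate is the percentage of examples whose predicted answer changes between an original run and a modified run. The function name and the exact-string comparison are assumptions made for illustration; a real evaluation would likely normalize answers first.

```python
from typing import Sequence


def prediction_flip_rate(before: Sequence[str], after: Sequence[str]) -> float:
    """Percentage of examples whose predicted answer differs between two runs.

    Assumed definition (not given in the chart): flips / total * 100,
    comparing answers position by position with exact string equality.
    """
    if len(before) != len(after):
        raise ValueError("before and after must have the same length")
    if not before:
        raise ValueError("need at least one example")
    flips = sum(b != a for b, a in zip(before, after))
    return 100.0 * flips / len(before)


original = ["Paris", "1969", "Einstein", "Tokyo"]
modified = ["Paris", "1970", "Bohr", "Tokyo"]
print(prediction_flip_rate(original, modified))  # 50.0
```

Scaling to a percentage matches the 0 to 80 range of the y-axis, but that reading is itself an assumption.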

* **Core Finding:** Models appear highly sensitive in the `Q-Anchored (exact_question)` condition, showing a high rate of answer changes. Flip rates are far lower in the random conditions and in the A-Anchored conditions, and lowest when both apply (`A-Anchored (random)`).

* **Model Scale:** The larger `Llama-3-70B` model does not show a uniform reduction in sensitivity. Its high sensitivity on most datasets, coupled with a distinct drop on `NQ`, suggests its behavior may be more dataset-dependent or that its training made it more robust to variations specific to the `NQ` format.

* **Dataset Nature:** The consistently lower flip rates for `A-Anchored (exact_question)` on `HotpotQA` might indicate that, for multi-hop reasoning questions (which `HotpotQA` contains), anchoring on the answer has less influence on the model's prediction.

* **Practical Implication:** The data underscores a potential fragility in model outputs. If the `Q-Anchored (exact_question)` condition corresponds to minor, semantically equivalent changes in user input, its high flip rate implies that such changes could lead to different model responses, which is a critical consideration for reliability and user experience in deployed applications.


EXPERT: nemotron-free VERSION 2

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Bar Chart: Prediction Flip Rate Comparison Across Models and Datasets

### Overview

The image presents three grouped bar charts comparing prediction flip rates for three language models (Llama-3-8B, Llama-3-70B, Mistral-7B-v0.3) across four datasets (PopQA, TriviaQA, HotpotQA, NQ). Each dataset group contains four bars representing different anchoring strategies: Q-Anchored (exact_question), Q-Anchored (random), A-Anchored (exact_question), and A-Anchored (random). The y-axis measures prediction flip rate (0-80%), and the x-axis lists datasets.

### Components/Axes

- **X-Axis**: Datasets (PopQA, TriviaQA, HotpotQA, NQ)

- **Y-Axis**: Prediction Flip Rate (%) with scale 0-80

- **Legend**: Located at the bottom, color-coded as:
  - Red: Q-Anchored (exact_question)
  - Maroon: Q-Anchored (random)
  - Gray: A-Anchored (exact_question)
  - Dark Gray: A-Anchored (random)

- **Models**: Separate charts for Llama-3-8B (left), Llama-3-70B (center), Mistral-7B-v0.3 (right)

### Detailed Analysis

#### Llama-3-8B

- **PopQA**:
  - Q-Anchored (exact): ~75%
  - A-Anchored (exact): ~35%
  - Q-Anchored (random): ~10%
  - A-Anchored (random): ~2%

- **TriviaQA**:
  - Q-Anchored (exact): ~70%
  - A-Anchored (exact): ~30%
  - Q-Anchored (random): ~12%
  - A-Anchored (random): ~3%

- **HotpotQA**:
  - Q-Anchored (exact): ~65%
  - A-Anchored (exact): ~10%
  - Q-Anchored (random): ~13%
  - A-Anchored (random): ~4%

- **NQ**:
  - Q-Anchored (exact): ~60%
  - A-Anchored (exact): ~15%
  - Q-Anchored (random): ~8%
  - A-Anchored (random): ~1%

#### Llama-3-70B

- **PopQA**:
  - Q-Anchored (exact): ~78%
  - A-Anchored (exact): ~32%
  - Q-Anchored (random): ~15%
  - A-Anchored (random): ~2%

- **TriviaQA**:
  - Q-Anchored (exact): ~75%
  - A-Anchored (exact): ~35%
  - Q-Anchored (random): ~18%
  - A-Anchored (random): ~3%

- **HotpotQA**:
  - Q-Anchored (exact): ~70%
  - A-Anchored (exact): ~20%
  - Q-Anchored (random): ~12%
  - A-Anchored (random): ~5%

- **NQ**:
  - Q-Anchored (exact): ~65%
  - A-Anchored (exact): ~25%
  - Q-Anchored (random): ~10%
  - A-Anchored (random): ~2%

#### Mistral-7B-v0.3

- **PopQA**:
  - Q-Anchored (exact): ~70%
  - A-Anchored (exact): ~25%
  - Q-Anchored (random): ~10%
  - A-Anchored (random): ~1%

- **TriviaQA**:
  - Q-Anchored (exact): ~72%
  - A-Anchored (exact): ~28%
  - Q-Anchored (random): ~12%
  - A-Anchored (random): ~2%

- **HotpotQA**:
  - Q-Anchored (exact): ~68%
  - A-Anchored (exact): ~22%
  - Q-Anchored (random): ~9%
  - A-Anchored (random): ~3%

- **NQ**:
  - Q-Anchored (exact): ~66%
  - A-Anchored (exact): ~27%
  - Q-Anchored (random): ~11%
  - A-Anchored (random): ~2%

### Key Observations

1. **Q-Anchored (exact_question)** consistently produces the highest prediction flip rates across all models and datasets, meaning it changes the models' predictions most often.

2. **A-Anchored (exact_question)** produces higher flip rates than either random condition but stays well below Q-Anchored (exact_question).

3. **Random anchoring** (both Q and A) results in the lowest flip rates, meaning these conditions rarely change the models' predictions.

4. **Model size correlation**: Llama-3-70B (the larger model) generally shows higher flip rates than Llama-3-8B and Mistral-7B-v0.3, though the difference is much smaller than the effect of the anchoring condition.

5. **Dataset variability**: NQ shows the lowest Q-Anchored (exact_question) rate for every model, while PopQA and TriviaQA show the highest.
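The claim that anchoring matters more than model size can be made concrete by averaging the approximate values above over datasets. In the sketch below, `RATES`, `CONDITIONS`, and `condition_means` are hypothetical names, and "effect of the model" and "effect of anchoring" are defined informally as the spreads printed at the end; other definitions are possible.

```python
# Approximate values transcribed in this description (eyeball estimates).
# Tuple order: Q-exact, A-exact, Q-random, A-random (the order used above).
CONDITIONS = ("Q-exact", "A-exact", "Q-random", "A-random")
RATES = {
    "Llama-3-8B": {"PopQA": (75, 35, 10, 2), "TriviaQA": (70, 30, 12, 3),
                   "HotpotQA": (65, 10, 13, 4), "NQ": (60, 15, 8, 1)},
    "Llama-3-70B": {"PopQA": (78, 32, 15, 2), "TriviaQA": (75, 35, 18, 3),
                    "HotpotQA": (70, 20, 12, 5), "NQ": (65, 25, 10, 2)},
    "Mistral-7B-v0.3": {"PopQA": (70, 25, 10, 1), "TriviaQA": (72, 28, 12, 2),
                        "HotpotQA": (68, 22, 9, 3), "NQ": (66, 27, 11, 2)},
}


def condition_means(rates):
    """Mean flip rate per (model, condition), averaged over the datasets."""
    return {
        (model, cond): sum(bars[i] for bars in datasets.values()) / len(datasets)
        for model, datasets in rates.items()
        for i, cond in enumerate(CONDITIONS)
    }


means = condition_means(RATES)
q_exact = {m: means[(m, "Q-exact")] for m in RATES}
# Spread of the Q-exact mean across models (informal "effect of the model").
model_spread = max(q_exact.values()) - min(q_exact.values())
# Smallest per-model gap between the highest and lowest condition means
# (informal "effect of anchoring").
anchoring_gap = min(means[(m, "Q-exact")] - means[(m, "A-random")] for m in RATES)
print(q_exact)  # {'Llama-3-8B': 67.5, 'Llama-3-70B': 72.0, 'Mistral-7B-v0.3': 69.0}
print(model_spread, anchoring_gap)  # 4.5 65.0
```

A 4.5-point spread between models against a gap of at least 65 points between conditions supports point 4, within the precision of the transcribed estimates.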

### Interpretation

The data demonstrates that **exact question anchoring** (both Q and A) changes the models' predictions far more often than random anchoring. Because the prediction flip rate measures how often a prediction changes, not whether it is correct, these results alone do not show an improvement in prediction accuracy. One reading is that anchoring on content matching the actual question or answer has a much stronger influence on the model than anchoring on unrelated content. Llama-3-70B shows somewhat higher flip rates than the smaller models, but the anchoring condition has a much larger effect than model size. NQ's lower Q-Anchored (exact_question) rates may reflect differences in the complexity or ambiguity of its questions, although the chart alone cannot confirm this. The consistent trend across models suggests that the anchoring condition is the main driver of how often predictions flip.
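Because the chart reports flip rates rather than accuracies, a taller bar does not by itself mean better predictions. The toy example below uses hypothetical answers and helper names, and assumes the flip rate is the percentage of changed predictions. It shows two interventions with the same 50% flip rate but opposite effects on accuracy.

```python
def flip_rate(before, after):
    """Percentage of positions where the prediction changed (assumed definition)."""
    return 100.0 * sum(b != a for b, a in zip(before, after)) / len(before)


def accuracy(preds, gold):
    """Percentage of positions where the prediction matches the gold answer."""
    return 100.0 * sum(p == g for p, g in zip(preds, gold)) / len(gold)


gold = ["Paris", "1969", "Einstein", "Tokyo"]
baseline = ["Paris", "1970", "Bohr", "Tokyo"]   # 2 of 4 correct
fixes = ["Paris", "1969", "Einstein", "Tokyo"]  # flips the 2 wrong answers
breaks = ["Lyon", "1970", "Bohr", "Kyoto"]      # flips the 2 right answers

for name, preds in [("fixes", fixes), ("breaks", breaks)]:
    print(name, flip_rate(baseline, preds), accuracy(preds, gold))
# fixes 50.0 100.0
# breaks 50.0 0.0
```

Whether a high flip rate is desirable therefore depends on what the anchoring intervention is meant to test, which the chart itself does not state.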
