## Bar Chart: GPT-2 xl Head Distribution by Layer

### Overview

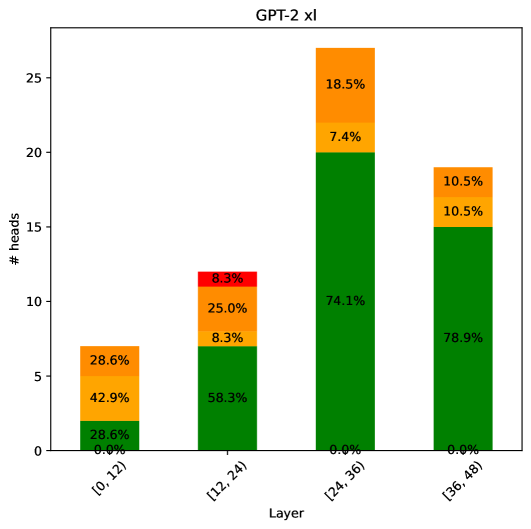

The chart shows how attention heads are distributed across four layer ranges of the GPT-2 xl model. Each bar represents one range of 12 consecutive layers, and its colored segments show how many heads fall into each functional category. The y-axis measures the number of heads; the x-axis groups the 48 layers into quartiles.

### Components/Axes

- **X-axis (Layer Ranges)**:
  - `[0,12)`: First quartile of layers
  - `[12,24)`: Second quartile
  - `[24,36)`: Third quartile
  - `[36,48)`: Fourth quartile
- **Y-axis (# heads)**: Scaled from 0 to 25, counting the attention heads in each layer range.
- **Legend**:
  - Green: Primary functional category
  - Yellow: Secondary functional category
  - Orange: Tertiary functional category
  - Red: Quaternary functional category (appears only in the `[12,24)` range)
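The half-open ranges on the x-axis can be reproduced by binning layer indices. The snippet below is a minimal sketch, assuming 48 layers split into four equal-width bins as the axis labels indicate; the names `layer_bin`, `N_LAYERS`, and `N_BINS` are hypothetical and do not come from the chart.

```python
N_LAYERS = 48  # upper bound of the last x-axis range, [36,48)
N_BINS = 4     # number of bars in the chart


def layer_bin(layer: int, n_layers: int = N_LAYERS, n_bins: int = N_BINS) -> str:
    """Return the half-open range label (e.g. '[12,24)') that contains `layer`."""
    if not 0 <= layer < n_layers:
        raise ValueError(f"layer must be in [0, {n_layers}), got {layer}")
    width = n_layers // n_bins            # 12 layers per bin
    start = (layer // width) * width      # left edge of the containing bin
    return f"[{start},{start + width})"


# One label per bar, in x-axis order.
labels = [layer_bin(i) for i in range(0, N_LAYERS, N_LAYERS // N_BINS)]
print(labels)
```

The left edge is inclusive and the right edge exclusive, so layer 12 belongs to `[12,24)`, not `[0,12)`.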

### Detailed Analysis

1. **Layers `[0,12)`**:
   - Green: 28.6% (2.86 heads)
   - Yellow: 42.9% (4.29 heads)
   - Orange: 28.6% (2.86 heads)
   - Red: 0.0% (0 heads)
   - *Total*: 100.1% (rounded)

2. **Layers `[12,24)`**:
   - Green: 58.3% (5.83 heads)
   - Yellow: 25.0% (2.5 heads)
   - Orange: 8.3% (0.83 heads)
   - Red: 8.3% (0.83 heads)
   - *Total*: 99.9% (rounded)

3. **Layers `[24,36)`**:
   - Green: 74.1% (7.41 heads)
   - Yellow: 7.4% (0.74 heads)
   - Orange: 18.5% (1.85 heads)
   - Red: 0.0% (0 heads)
   - *Total*: 100.0%

4. **Layers `[36,48)`**:
   - Green: 78.9% (7.89 heads)
   - Yellow: 10.5% (1.05 heads)
   - Orange: 10.5% (1.05 heads)
   - Red: 0.0% (0 heads)
   - *Total*: 99.9% (rounded)
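The parenthesized head counts above are fractional, and they equal each percentage divided by ten, so they are probably not counts read off the y-axis. As a sanity check, the sketch below searches for the smallest whole-number head total per bar that would reproduce the listed percentages after rounding to one decimal place. This is an inference from the rounded percentages, not a reading of the chart; the helper names `smallest_total` and `row_sum` are hypothetical.

```python
# Percentages as listed in the analysis above (green, yellow, orange, red).
shares = {
    "[0,12)":  [28.6, 42.9, 28.6, 0.0],
    "[12,24)": [58.3, 25.0, 8.3, 8.3],
    "[24,36)": [74.1, 7.4, 18.5, 0.0],
    "[36,48)": [78.9, 10.5, 10.5, 0.0],
}


def smallest_total(percentages, max_total=100, tol=0.05):
    """Find the smallest head total n whose integer split matches the percentages.

    Returns (n, counts), or None if no total up to max_total fits.
    tol is half the rounding step of a one-decimal percentage.
    """
    for n in range(1, max_total + 1):
        counts = [round(p * n / 100) for p in percentages]
        fits = all(abs(100 * k / n - p) <= tol for k, p in zip(counts, percentages))
        if sum(counts) == n and fits:
            return n, counts
    return None


def row_sum(percentages):
    """Sum of the listed shares for one bar, rounded to one decimal place."""
    return round(sum(percentages), 1)


totals = {rng: smallest_total(p) for rng, p in shares.items()}
for rng, result in totals.items():
    print(rng, result, row_sum(shares[rng]))
```

If the search returns a small integer total for every bar, the percentages are at least consistent with whole-head counts, though larger multiples of the same totals would fit equally well.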

### Key Observations

- **Green Dominance**: The primary functional category (green) grows monotonically across the four layer ranges (28.6%, 58.3%, 74.1%, 78.9%), suggesting increasing specialization in higher layers.

- **Yellow Decline**: Secondary functionality (yellow) falls from 42.9% to 25.0% to 7.4%, then recovers slightly to 10.5% in the last range. The overall drop may indicate reduced emphasis on broader processing in deeper layers, but the decline is not monotonic.

- **Orange Pattern**: Tertiary functionality (orange) peaks at 28.6% in the first range, drops to 8.3% in the second, rises to 18.5% in the third, and ends at 10.5% in the fourth.

- **Red Anomaly**: Quaternary functionality (red) appears only in the second range, `[12,24)`, at 8.3%, suggesting a unique role or transitional behavior in those layers.
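The trend claims above can be checked mechanically against the listed percentages. This is a minimal sketch; the function name `trend` and its three labels are hypothetical choices made for illustration.

```python
# Share of each category per layer range, in x-axis order:
# [0,12), [12,24), [24,36), [36,48)
series = {
    "green":  [28.6, 58.3, 74.1, 78.9],
    "yellow": [42.9, 25.0, 7.4, 10.5],
    "orange": [28.6, 8.3, 18.5, 10.5],
    "red":    [0.0, 8.3, 0.0, 0.0],
}


def trend(values):
    """Classify a sequence as strictly 'increasing', 'decreasing', or 'mixed'."""
    pairs = list(zip(values, values[1:]))
    if all(a < b for a, b in pairs):
        return "increasing"
    if all(a > b for a, b in pairs):
        return "decreasing"
    return "mixed"


for name, values in series.items():
    print(f"{name}: {trend(values)}")
```

Only green is strictly increasing; yellow ends higher than its third-range value, so it is classified as mixed despite its overall decline.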

### Interpretation

The data suggests a progression in how GPT-2 xl's attention heads are categorized as depth increases:

1. **Layer Specialization**: In higher layer ranges, a growing share of heads falls into the primary category (green), while the auxiliary categories (yellow and orange) shrink.

2. **Transitional Component**: The red component, found only in the second layer range (8.3%), may represent a transitional phase or error-correction mechanism absent from the other ranges.

3. **Efficiency Tradeoff**: The drop in yellow functionality (from 42.9% in the first range to 10.5% in the last) suggests a shift from general-purpose processing to more specialized, depth-specific operations.

This pattern is consistent with the common observation that deeper transformer layers handle more abstract, context-specific representations. Because the percentages are shares within each bar and the bars may contain different numbers of heads, comparisons across ranges should be read as changes in composition, not in absolute head counts. The red component's exclusive presence in the second range warrants further investigation into potential architectural quirks or specialized processing roles.