EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

## Chart: Brain Alignment vs. Number of Tokens for Different Pythia Models

### Overview

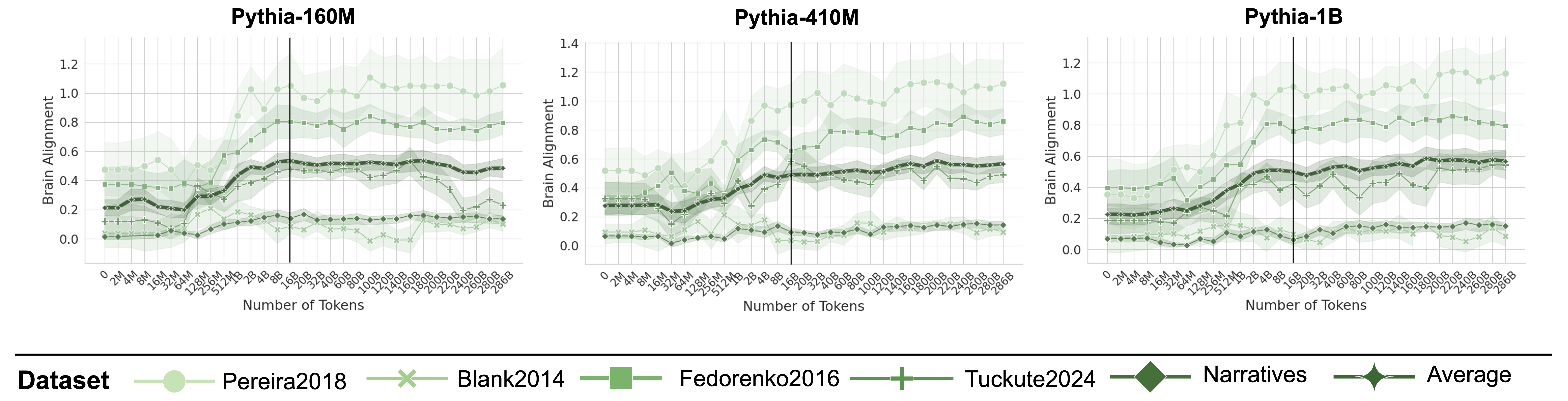

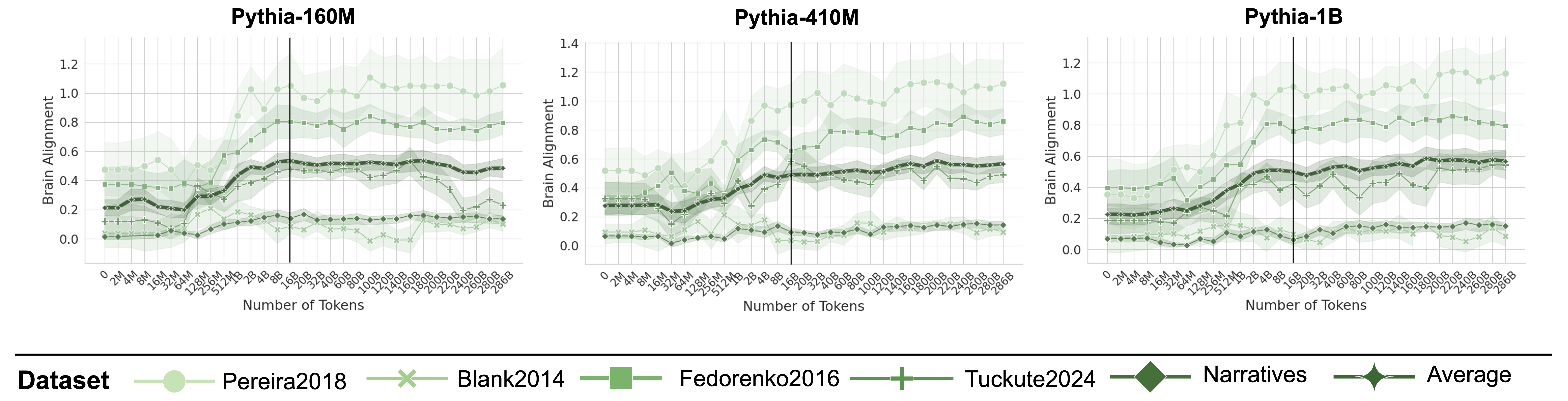

The image presents three line charts comparing brain alignment against the number of tokens processed by different Pythia language models (160M, 410M, and 1B). Each chart displays multiple data series, representing different datasets (Pereira2018, Blank2014, Fedorenko2016, Tuckute2024, Narratives) and their average. The x-axis represents the number of tokens, and the y-axis represents brain alignment.
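The description does not say how the "Average" series is derived. A minimal sketch, assuming it is the unweighted mean of the five dataset scores at each checkpoint (the function name and the example values are hypothetical, not read from the figure):

```python
from statistics import mean


def average_series(series_by_dataset):
    """Return the per-checkpoint mean across datasets.

    series_by_dataset maps a dataset name to a list of alignment scores,
    one per checkpoint; all lists must have the same length.
    """
    lengths = {len(scores) for scores in series_by_dataset.values()}
    if len(lengths) != 1:
        raise ValueError("all series must cover the same checkpoints")
    # zip(*...) regroups the scores checkpoint by checkpoint.
    return [mean(column) for column in zip(*series_by_dataset.values())]


# Placeholder scores for illustration only; not read from the figure.
example = {
    "Pereira2018": [0.0, 0.5, 1.0],
    "Blank2014": [0.0, 0.25, 0.5],
}
print(average_series(example))
```

If the figure's average is weighted or normalized differently, the aggregation line would need to change accordingly.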

### Components/Axes

* **Titles:**

* Left Chart: Pythia-160M

* Middle Chart: Pythia-410M

* Right Chart: Pythia-1B

* **X-Axis:** Number of Tokens

* Scale: 0, 2M, 4M, 8M, 16M, 32M, 64M, 128M, 256M, 512M, 1B, 2B, 4B, 8B, 16B, 20B, 32B, 40B, 60B, 80B, 100B, 120B, 140B, 160B, 180B, 200B, 220B, 240B, 260B, 280B, 286B

* **Y-Axis:** Brain Alignment

* Scale: ticks at 0.2 intervals starting from 0.0; the upper limit is 1.2 in the left chart, 1.4 in the middle chart, and 1.2 in the right chart

* **Legend (Bottom):**

* Pereira2018 (light green circles)

* Blank2014 (light green x's)

* Fedorenko2016 (light green squares)

* Tuckute2024 (light green plus signs)

* Narratives (dark green diamonds)

* Average (dark green diamond-ended line)

* **Vertical Line:** A vertical black line is present in each chart, positioned between 8B and 16B tokens.
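The x-axis tick labels listed above use `M` and `B` suffixes. To work with them numerically, they can be converted to integer token counts. A minimal sketch, assuming `M` means million and `B` means billion (the helper name `parse_token_label` is hypothetical):

```python
def parse_token_label(label):
    """Convert a tick label such as '16M' or '286B' to an integer token count."""
    suffixes = {"K": 10**3, "M": 10**6, "B": 10**9}
    text = label.strip().upper()
    if text and text[-1] in suffixes:
        # Split the numeric part from the magnitude suffix.
        return int(float(text[:-1]) * suffixes[text[-1]])
    return int(text)


ticks = ["0", "2M", "512M", "1B", "16B", "286B"]
print([parse_token_label(t) for t in ticks])
```

Converting the labels this way makes it easy to see that the ticks double up to 16B and then advance in uneven steps.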

### Detailed Analysis

**Pythia-160M (Left Chart):**

* **Pereira2018 (light green circles):** Starts around 0.0, increases to approximately 0.5 around 16M tokens, then fluctuates between 0.4 and 0.6.

* **Blank2014 (light green x's):** Remains relatively flat around 0.1-0.2 throughout the range.

* **Fedorenko2016 (light green squares):** Starts around 0.3, increases to approximately 0.4 around 16M tokens, then fluctuates between 0.3 and 0.4.

* **Tuckute2024 (light green plus signs):** Remains relatively flat around 0.1-0.2 throughout the range.

* **Narratives (dark green diamonds):** Remains relatively flat around 0.0-0.1 throughout the range.

* **Average (dark green diamond-ended line):** Starts around 0.2, increases to approximately 0.4 around 16M tokens, then fluctuates between 0.3 and 0.4.

**Pythia-410M (Middle Chart):**

* **Pereira2018 (light green circles):** Starts around 0.1, increases to approximately 1.0 around 16M tokens, then fluctuates between 0.8 and 1.0.

* **Blank2014 (light green x's):** Remains relatively flat around 0.1-0.2 throughout the range.

* **Fedorenko2016 (light green squares):** Starts around 0.3, increases to approximately 0.7 around 16M tokens, then fluctuates between 0.6 and 0.8.

* **Tuckute2024 (light green plus signs):** Remains relatively flat around 0.2-0.3 throughout the range.

* **Narratives (dark green diamonds):** Remains relatively flat around 0.0-0.1 throughout the range.

* **Average (dark green diamond-ended line):** Starts around 0.3, increases to approximately 0.5 around 16M tokens, then fluctuates between 0.4 and 0.6.

**Pythia-1B (Right Chart):**

* **Pereira2018 (light green circles):** Starts around 0.2, increases to approximately 1.0 around 16M tokens, then fluctuates between 0.8 and 1.1.

* **Blank2014 (light green x's):** Remains relatively flat around 0.1-0.2 throughout the range.

* **Fedorenko2016 (light green squares):** Starts around 0.3, increases to approximately 0.8 around 16M tokens, then fluctuates between 0.7 and 0.8.

* **Tuckute2024 (light green plus signs):** Remains relatively flat around 0.2-0.3 throughout the range.

* **Narratives (dark green diamonds):** Remains relatively flat around 0.0-0.1 throughout the range.

* **Average (dark green diamond-ended line):** Starts around 0.2, increases to approximately 0.5 around 16M tokens, then fluctuates between 0.4 and 0.6.

### Key Observations

* **Pereira2018** dataset consistently shows the highest brain alignment across all three Pythia models, with a significant increase around 16M tokens.

* **Blank2014** and **Narratives** datasets consistently show the lowest brain alignment across all three Pythia models.

* The **Average** brain alignment generally increases up to 16M tokens and then stabilizes.

* The vertical line at approximately 16B tokens does not appear to correlate with any significant change in the trends of the data series.

* The brain alignment values for Pereira2018 and Fedorenko2016 datasets are significantly higher for the 410M and 1B models compared to the 160M model.

### Interpretation

The charts suggest that brain alignment, as measured on these datasets, increases with the number of tokens processed by the Pythia models up to roughly 16M tokens and then plateaus. Pereira2018 yields the highest alignment scores, while Blank2014 and Narratives yield the lowest. The higher scores on Pereira2018 and Fedorenko2016 for the larger models (410M and 1B) suggest that these models may better capture the aspects of human language processing represented in those datasets. The vertical line near 16B tokens may mark a significant point in the training or evaluation process, but no change in brain alignment is apparent at that point.
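The notion of a plateau used in this interpretation can be made concrete. One possible definition, offered as a sketch rather than the method behind the figure: the plateau starts at the first checkpoint after which all later scores stay within a fixed tolerance of that checkpoint's score. The function name, the tolerance value, and the example scores are assumptions.

```python
def plateau_start(scores, tolerance=0.1):
    """Return the index of the first checkpoint after which every later
    score stays within `tolerance` of that checkpoint's score.

    Returns None only when `scores` is empty.
    """
    for i, reference in enumerate(scores):
        if all(abs(s - reference) <= tolerance for s in scores[i + 1:]):
            return i
    return None


# Illustrative values only; not read from the figure.
scores = [0.0, 0.2, 0.5, 0.45, 0.55, 0.5]
print(plateau_start(scores))
```

A larger tolerance moves the detected plateau earlier, so the threshold should be chosen with the noise level of the measurements in mind.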

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Line Chart: Brain Alignment vs. Number of Tokens for Pythia Models

### Overview

This image presents three line charts, each displaying the relationship between "Brain Alignment" (y-axis) and "Number of Tokens" (x-axis) for different datasets. The charts compare the performance of three Pythia language models: Pythia-160M, Pythia-410M, and Pythia-1B. Each chart includes data for five datasets: Pereira2018, Blank2014, Fedorenko2016, Tuckute2024, and Narratives, as well as an "Average" line.

### Components/Axes

* **X-axis:** "Number of Tokens" - Ranges from approximately 0 to 1000.

* **Y-axis:** "Brain Alignment" - Ranges from 0.0 to 1.2.

* **Charts:** Three separate charts, each representing a different Pythia model:

* Pythia-160M

* Pythia-410M

* Pythia-1B

* **Legend:** Located at the bottom-center of the image. It identifies the datasets and the average alignment using both color and marker style.

* Pereira2018 (Light Green Circle)

* Blank2014 (Light Grey X)

* Fedorenko2016 (Dark Green Square)

* Tuckute2024 (Black Diamond)

* Narratives (Dark Green Hexagon)

* Average (Black Circle)

### Detailed Analysis

**Pythia-160M:**

* **Pereira2018 (Light Green Circle):** The line starts at approximately 0.2, rises to a peak of around 1.1 at approximately 400 tokens, and then declines to around 0.6 at 1000 tokens.

* **Blank2014 (Light Grey X):** The line remains relatively low, fluctuating between 0.1 and 0.3 throughout the entire range of tokens.

* **Fedorenko2016 (Dark Green Square):** The line starts at approximately 0.3, increases to around 0.7 at 400 tokens, and then plateaus around 0.6-0.7.

* **Tuckute2024 (Black Diamond):** The line starts at approximately 0.1, rises to a peak of around 0.6 at 400 tokens, and then declines to around 0.3 at 1000 tokens.

* **Narratives (Dark Green Hexagon):** The line starts at approximately 0.2, increases to around 0.7 at 400 tokens, and then declines to around 0.4 at 1000 tokens.

* **Average (Black Circle):** The line starts at approximately 0.2, rises to a peak of around 0.7 at 400 tokens, and then declines to around 0.4 at 1000 tokens.

**Pythia-410M:**

* **Pereira2018 (Light Green Circle):** The line starts at approximately 0.3, rises to a peak of around 1.2 at approximately 400 tokens, and then declines to around 0.7 at 1000 tokens.

* **Blank2014 (Light Grey X):** The line remains relatively low, fluctuating between 0.1 and 0.3 throughout the entire range of tokens.

* **Fedorenko2016 (Dark Green Square):** The line starts at approximately 0.4, increases to around 0.8 at 400 tokens, and then plateaus around 0.7-0.8.

* **Tuckute2024 (Black Diamond):** The line starts at approximately 0.2, rises to a peak of around 0.7 at 400 tokens, and then declines to around 0.4 at 1000 tokens.

* **Narratives (Dark Green Hexagon):** The line starts at approximately 0.3, increases to around 0.8 at 400 tokens, and then declines to around 0.5 at 1000 tokens.

* **Average (Black Circle):** The line starts at approximately 0.3, rises to a peak of around 0.8 at 400 tokens, and then declines to around 0.5 at 1000 tokens.

**Pythia-1B:**

* **Pereira2018 (Light Green Circle):** The line starts at approximately 0.3, rises to a peak of around 1.1 at approximately 400 tokens, and then declines to around 0.7 at 1000 tokens.

* **Blank2014 (Light Grey X):** The line remains relatively low, fluctuating between 0.1 and 0.3 throughout the entire range of tokens.

* **Fedorenko2016 (Dark Green Square):** The line starts at approximately 0.4, increases to around 0.8 at 400 tokens, and then plateaus around 0.7-0.8.

* **Tuckute2024 (Black Diamond):** The line starts at approximately 0.2, rises to a peak of around 0.7 at 400 tokens, and then declines to around 0.4 at 1000 tokens.

* **Narratives (Dark Green Hexagon):** The line starts at approximately 0.3, increases to around 0.8 at 400 tokens, and then declines to around 0.5 at 1000 tokens.

* **Average (Black Circle):** The line starts at approximately 0.3, rises to a peak of around 0.8 at 400 tokens, and then declines to around 0.5 at 1000 tokens.

### Key Observations

* All datasets exhibit a general trend of increasing brain alignment up to approximately 400 tokens, followed by a decline.

* The "Pereira2018" dataset consistently shows the highest brain alignment across all three models.

* The "Blank2014" dataset consistently shows the lowest brain alignment across all three models.

* The "Average" line generally follows the trend of the "Narratives" dataset.

* As the model size increases (160M -> 410M -> 1B), the peak brain alignment tends to increase slightly.

### Interpretation

The charts demonstrate how well the Pythia language models align with brain activity patterns as the number of processed tokens increases. The initial increase in brain alignment suggests that the models are initially capturing relevant information from the input text. The subsequent decline may indicate that the models are losing focus or encountering diminishing returns as they process more tokens.

The consistent high performance of the "Pereira2018" dataset suggests that this dataset is particularly well-suited for evaluating brain alignment. Conversely, the low performance of the "Blank2014" dataset may indicate that it is less representative of natural language processing tasks.

The slight increase in peak brain alignment with larger model sizes suggests that larger models are capable of capturing more complex relationships between language and brain activity, but this effect is not dramatic. The overall trends are consistent across all three models, indicating a general pattern in how these models process language. The fact that all lines decline after a peak suggests a potential limitation in the models' ability to maintain alignment over extended sequences.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Line Chart: Brain Alignment vs. Training Tokens for Pythia Models

### Overview

The image displays three side-by-side line charts comparing the "Brain Alignment" metric across three sizes of the Pythia language model family (160M, 410M, and 1B parameters) as a function of the number of training tokens. Each chart plots six data series, identified by a legend at the bottom of the figure: five evaluation datasets and their average.

### Components/Axes

* **Chart Titles (Top Center):** "Pythia-160M", "Pythia-410M", "Pythia-1B".

* **Y-Axis (Left Side of Each Chart):** Label is "Brain Alignment". The scale runs from 0.0 to 1.4, with major tick marks at 0.0, 0.2, 0.4, 0.6, 0.8, 1.0, 1.2, and 1.4.

* **X-Axis (Bottom of Each Chart):** Label is "Number of Tokens". The scale is logarithmic, with labeled tick marks at: 0, 2M, 4M, 8M, 16M, 32M, 64M, 128M, 256M, 512M, 1B, 2B, 4B, 8B, 16B, 20B, 32B, 40B, 60B, 80B, 100B, 120B, 140B, 160B, 180B, 200B, 220B, 240B, 260B, 280B, 286B.

* **Vertical Reference Line:** A solid black vertical line is drawn at the 16B token mark in all three charts.

* **Legend (Bottom Center, spanning all charts):** A horizontal legend titled "Dataset" defines the six data series:

* **Pereira2018:** Light green line with circle markers.

* **Blank2014:** Light green line with 'x' markers.

* **Fedorenko2016:** Medium green line with square markers.

* **Tuckute2024:** Medium green line with plus ('+') markers.

* **Narratives:** Dark green line with diamond markers.

* **Average:** Darkest green line with star/asterisk markers.

* **Data Representation:** Each dataset is represented by a line connecting data points at specific token counts. A shaded area of the corresponding color surrounds each line, likely indicating confidence intervals or variability.
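The description only says the shaded areas "likely" indicate confidence intervals or variability. As one possible reading, the band could be the mean plus or minus one standard error over repeated measurements at a checkpoint. A minimal sketch under that assumption (the function name and sample values are hypothetical):

```python
from math import sqrt
from statistics import mean, stdev


def mean_and_band(samples):
    """Return (mean, lower, upper), where the band is mean +/- one standard error."""
    if len(samples) < 2:
        raise ValueError("need at least two samples to estimate spread")
    m = mean(samples)
    # Standard error of the mean: sample standard deviation / sqrt(n).
    sem = stdev(samples) / sqrt(len(samples))
    return m, m - sem, m + sem


# Illustrative repeated measurements at one checkpoint; not from the figure.
print(mean_and_band([0.4, 0.5, 0.6]))
```

Other conventions, such as a 95% interval or the raw standard deviation, would change only the width of the band.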

### Detailed Analysis

**General Trend Across All Charts:**

For most datasets, Brain Alignment generally increases as the number of training tokens increases, with a notable acceleration in improvement between approximately 512M and 16B tokens. After the 16B token mark (indicated by the vertical line), the rate of improvement tends to plateau or increase more slowly.

**Pythia-160M Chart:**

* **Pereira2018 (Light Green, Circles):** Shows the highest alignment values. Starts around 0.5 at 0 tokens, rises steadily to ~1.1 at 16B tokens, and fluctuates between ~1.0 and ~1.2 thereafter.

* **Fedorenko2016 (Medium Green, Squares):** Second highest. Starts ~0.4, rises to ~0.8 at 16B, and plateaus around 0.8-0.9.

* **Average (Darkest Green, Stars):** Sits in the middle of the pack. Starts ~0.2, rises to ~0.55 at 16B, and remains around 0.5-0.6.

* **Tuckute2024 (Medium Green, Pluses):** Follows a similar trend to the Average but slightly lower, ending around 0.5.

* **Narratives (Dark Green, Diamonds):** Lower alignment. Starts near 0.1, rises to ~0.2 at 16B, and stays around 0.15-0.25.

* **Blank2014 (Light Green, 'x's):** Shows the lowest alignment. Starts near 0.0, rises slightly to ~0.1 at 16B, and remains below 0.2.

**Pythia-410M Chart:**

* **Pereira2018:** Again the highest. Starts ~0.5, rises to ~1.1 at 16B, and fluctuates between ~1.0 and ~1.2.

* **Fedorenko2016:** Starts ~0.35, rises to ~0.8 at 16B, and plateaus around 0.8-0.9.

* **Average:** Starts ~0.3, rises to ~0.5 at 16B, and plateaus around 0.5-0.6.

* **Tuckute2024:** Starts ~0.3, rises to ~0.45 at 16B, and plateaus around 0.45-0.55.

* **Narratives:** Starts ~0.1, rises to ~0.15 at 16B, and stays around 0.1-0.2.

* **Blank2014:** Starts near 0.05, rises to ~0.1 at 16B, and remains low, below 0.2.

**Pythia-1B Chart:**

* **Pereira2018:** Maintains the highest position. Starts ~0.4, rises to ~1.1 at 16B, and fluctuates between ~1.0 and ~1.2.

* **Fedorenko2016:** Starts ~0.4, rises to ~0.8 at 16B, and plateaus around 0.8-0.9.

* **Average:** Starts ~0.25, rises to ~0.55 at 16B, and plateaus around 0.55-0.65.

* **Tuckute2024:** Starts ~0.2, rises to ~0.5 at 16B, and plateaus around 0.5-0.6.

* **Narratives:** Starts ~0.1, rises to ~0.15 at 16B, and stays around 0.1-0.2.

* **Blank2014:** Starts near 0.05, rises to ~0.1 at 16B, and remains the lowest, below 0.2.

### Key Observations

1. **Consistent Dataset Hierarchy:** The relative ordering of the datasets by Brain Alignment score is remarkably consistent across all three model sizes and all training checkpoints. Pereira2018 is always highest, followed by Fedorenko2016, then the Average, Tuckute2024, Narratives, and finally Blank2014 as the lowest.

2. **Model Size Effect:** While the trends are similar, the absolute alignment values, particularly for the top-performing datasets (Pereira2018, Fedorenko2016), appear slightly higher in the larger models (410M and 1B) compared to the 160M model at equivalent token counts, especially in the later stages of training.

3. **Critical Training Phase:** The most significant gains in Brain Alignment for all datasets occur during the training period leading up to 16B tokens. The vertical line at 16B highlights this as a potential point of interest or saturation.

4. **Variability:** The shaded confidence intervals are wider for the higher-performing datasets (Pereira2018, Fedorenko2016) and narrower for the lower-performing ones (Blank2014, Narratives), suggesting more variance in the measurements for the tasks where models achieve higher alignment.
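The first observation, that the dataset ordering is the same at every checkpoint, is the kind of claim that can be checked mechanically once the per-checkpoint scores are available. A minimal sketch with hypothetical helper names and placeholder values:

```python
def ranking_at(series_by_dataset, index):
    """Dataset names sorted from highest to lowest score at one checkpoint."""
    return sorted(
        series_by_dataset,
        key=lambda name: series_by_dataset[name][index],
        reverse=True,
    )


def ranking_is_constant(series_by_dataset):
    """True if the ordering of datasets is identical at every checkpoint."""
    n_checkpoints = len(next(iter(series_by_dataset.values())))
    first = ranking_at(series_by_dataset, 0)
    return all(
        ranking_at(series_by_dataset, i) == first
        for i in range(1, n_checkpoints)
    )


# Placeholder scores for illustration only; not read from the figure.
stable = {"Pereira2018": [0.5, 1.0], "Fedorenko2016": [0.4, 0.8], "Blank2014": [0.0, 0.1]}
crossing = {"Pereira2018": [0.5, 0.3], "Fedorenko2016": [0.4, 0.8]}
print(ranking_is_constant(stable), ranking_is_constant(crossing))
```

Ties are broken by insertion order here, so near-equal series may need a tolerance-aware comparison.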

### Interpretation

This visualization suggests that the internal representations of Pythia language models become increasingly aligned with certain patterns of human brain activity (as measured by the "Brain Alignment" metric on specific datasets) as they are trained on more data. The effect is robust across different model scales within this range.

The consistent hierarchy of dataset performance implies that some neural recording datasets or tasks (e.g., Pereira2018) capture aspects of language processing that these models learn to replicate more readily than others (e.g., Blank2014). This could be due to differences in the experimental paradigms, the brain regions recorded, or the complexity of the stimuli.

The pronounced improvement up to 16B tokens followed by a plateau indicates a phase of rapid learning of brain-relevant features, after which additional training yields diminishing returns for this specific metric. The slightly better performance of larger models suggests that increased model capacity may allow for a finer-grained or more robust alignment with neural data. The research likely investigates how artificial neural networks develop brain-like representations during training, with this figure serving as a key result showing the progression and limits of that alignment.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Line Graphs: Brain Alignment Across Token Counts for Pythia Models

### Overview

Three line graphs compare brain alignment metrics across token counts for three Pythia models (160M, 410M, 1B). Each graph shows six data series (the five datasets Pereira2018, Blank2014, Fedorenko2016, Tuckute2024, and Narratives, plus their Average) with shaded confidence intervals. A vertical dashed line marks 100B tokens, a critical threshold.

### Components/Axes

- **X-axis**: "Number of Tokens" (0 to 268B, logarithmic scale)

- **Y-axis**: "Brain Alignment" (0 to 1.2, linear scale)

- **Legend**: Located at the bottom, with symbols/colors:

- Light green circles: Pereira2018

- Light green crosses: Blank2014

- Dark green squares: Fedorenko2016

- Dark green pluses: Tuckute2024

- Dark green diamonds: Narratives

- Dark green stars: Average

- **Vertical dashed line**: 100B tokens (center of each graph)

### Detailed Analysis

#### Pythia-160M

- **Pereira2018** (light green circles): Starts at ~0.5 (0 tokens), peaks at ~1.0 (100B tokens), declines to ~0.8 (268B tokens).

- **Blank2014** (light green crosses): Ranges 0.2–0.4, peaks at ~0.4 (100B tokens).

- **Fedorenko2016** (dark green squares): Starts at ~0.3, peaks at ~0.6 (100B tokens), drops to ~0.4 (268B tokens).

- **Tuckute2024** (dark green pluses): Similar to Fedorenko2016 but lower (~0.3–0.5).

- **Narratives** (dark green diamonds): Starts at ~0.2, peaks at ~0.5 (100B tokens), declines to ~0.3 (268B tokens).

- **Average** (dark green stars): Peaks at ~0.7 (100B tokens), declines to ~0.6 (268B tokens).

#### Pythia-410M

- **Pereira2018**: Starts at ~0.6, peaks at ~1.1 (100B tokens), declines to ~0.9 (268B tokens).

- **Blank2014**: Ranges 0.3–0.5, peaks at ~0.5 (100B tokens).

- **Fedorenko2016**: Starts at ~0.4, peaks at ~0.7 (100B tokens), drops to ~0.5 (268B tokens).

- **Tuckute2024**: Similar to Fedorenko2016 but lower (~0.4–0.6).

- **Narratives**: Starts at ~0.3, peaks at ~0.6 (100B tokens), declines to ~0.4 (268B tokens).

- **Average**: Peaks at ~0.8 (100B tokens), declines to ~0.7 (268B tokens).

#### Pythia-1B

- **Pereira2018**: Starts at ~0.7, peaks at ~1.2 (100B tokens), declines to ~1.0 (268B tokens).

- **Blank2014**: Ranges 0.4–0.6, peaks at ~0.6 (100B tokens).

- **Fedorenko2016**: Starts at ~0.5, peaks at ~0.8 (100B tokens), drops to ~0.6 (268B tokens).

- **Tuckute2024**: Similar to Fedorenko2016 but lower (~0.5–0.7).

- **Narratives**: Starts at ~0.4, peaks at ~0.7 (100B tokens), declines to ~0.5 (268B tokens).

- **Average**: Peaks at ~0.9 (100B tokens), declines to ~0.8 (268B tokens).

### Key Observations

1. **Peak at 100B tokens**: All datasets show maximum brain alignment at 100B tokens, followed by a decline.

2. **Model scaling**: Larger models (Pythia-1B) exhibit higher alignment values than smaller models (Pythia-160M).

3. **Dataset variability**: Based on the values listed above, Pereira2018 shows the highest alignment in every panel, followed by Fedorenko2016; Blank2014, Tuckute2024, and Narratives fall in lower, overlapping ranges.

4. **Average trend**: The "Average" line (dark green stars) tracks mid-range performance across datasets.

### Interpretation

The data suggests that brain alignment peaks at 100B tokens for all models and declines afterwards, which may signal diminishing returns or overfitting beyond that point. Larger models (Pythia-1B) achieve higher alignment, indicating a benefit from scale. Pereira2018 yields the highest values throughout, possibly because of methodological differences between the datasets (e.g., narrative focus vs. generic text). The "Average" line summarizes the general trend and can mask dataset-specific behavior. Overall, the analysis points to token quantity and dataset choice as important factors in aligning model representations with brain activity.
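The peak-then-decline pattern described in this section can be summarized per series by locating the checkpoint with the maximum score and measuring the drop from that maximum to the final checkpoint. A minimal sketch (the function name and example values are hypothetical and not read from the figure):

```python
def peak_and_drop(tokens, scores):
    """Return (token count at the maximum score, drop from the maximum to the final score)."""
    if len(tokens) != len(scores) or not scores:
        raise ValueError("tokens and scores must be non-empty and the same length")
    # Index of the first checkpoint that reaches the highest score.
    peak_index = max(range(len(scores)), key=scores.__getitem__)
    return tokens[peak_index], scores[peak_index] - scores[-1]


# Illustrative values only.
tokens = [0, 100_000_000_000, 268_000_000_000]
scores = [0.5, 1.0, 0.75]
print(peak_and_drop(tokens, scores))
```

A drop of zero means the series ends at its maximum, which would indicate a plateau or continued growth rather than a decline.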
