## Image Series: Semantic Segmentation Comparison

### Overview

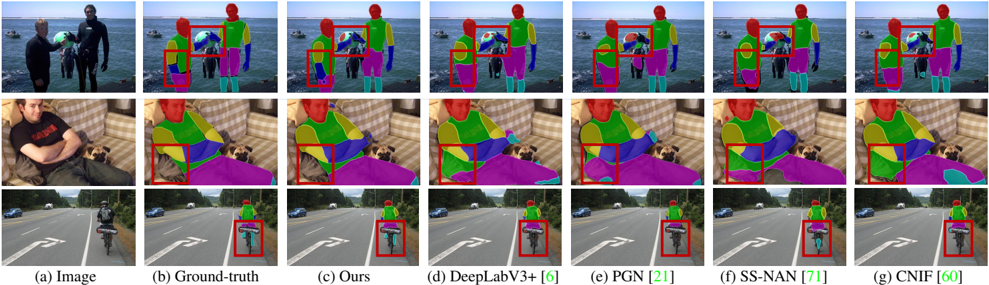

The image presents a series of comparisons of semantic segmentation results for three different scenes: people on a beach, a person with a dog, and a cyclist on a road. Each scene has an original image and six different segmentation outputs, labeled (b) through (f). The segmentation outputs are visually compared to a "Ground-truth" segmentation (b) and the proposed method "Ours" (c). The other methods are DeepLabV3+ [6], PGN [21], SS-NAN [71], and CNIF [60]. Each segmentation output highlights different objects with distinct colors, and a red bounding box is drawn around a specific region of interest in each image to facilitate comparison.

### Components/Axes

The image is organized into three rows, each representing a different scene. Each row contains seven columns:

1. **(a) Image:** The original input image.

2. **(b) Ground-truth:** The manually annotated, correct segmentation.

3. **(c) Ours:** The segmentation result of the proposed method.

4. **(d) DeepLabV3+ [6]:** Segmentation result of the DeepLabV3+ method.

5. **(e) PGN [21]:** Segmentation result of the PGN method.

6. **(f) SS-NAN [71]:** Segmentation result of the SS-NAN method.

7. **(g) CNIF [60]:** Segmentation result of the CNIF method.

There are no explicit axes or scales. The comparison is visual, based on the quality of the segmentation in each output.

### Detailed Analysis or Content Details

**Row 1: Beach Scene**

* **(a) Image:** Two people in wetsuits standing on a beach with boats in the background.

* **(b) Ground-truth:** Segmentation shows distinct regions for people (blue), wetsuits (various colors), water (green), sky (yellow), and boats (purple).

* **(c) Ours:** Segmentation is similar to ground truth, with good delineation of people and wetsuits.

* **(d) DeepLabV3+ [6]:** Segmentation is generally good, but some areas of the wetsuits are misclassified.

* **(e) PGN [21]:** Segmentation shows some blurring and misclassification in the wetsuit areas.

* **(f) SS-NAN [71]:** Segmentation is less accurate, with more misclassifications in the wetsuit and background.

* **(g) CNIF [60]:** Segmentation is similar to SS-NAN, with noticeable inaccuracies.

**Row 2: Person with Dog Scene**

* **(a) Image:** A person sitting on a couch with a dog.

* **(b) Ground-truth:** Segmentation shows distinct regions for the person (red), dog (yellow), couch (green), and background (purple).

* **(c) Ours:** Segmentation is accurate, closely matching the ground truth.

* **(d) DeepLabV3+ [6]:** Segmentation is good, but some areas of the dog are misclassified.

* **(e) PGN [21]:** Segmentation shows some blurring and misclassification in the dog and couch areas.

* **(f) SS-NAN [71]:** Segmentation is less accurate, with more misclassifications in the dog and couch.

* **(g) CNIF [60]:** Segmentation is similar to SS-NAN, with noticeable inaccuracies.

**Row 3: Cyclist Scene**

* **(a) Image:** A cyclist riding on a road.

* **(b) Ground-truth:** Segmentation shows distinct regions for the cyclist (blue), bicycle (green), road (gray), and background (purple).

* **(c) Ours:** Segmentation is accurate, closely matching the ground truth.

* **(d) DeepLabV3+ [6]:** Segmentation is good, but some areas of the bicycle are misclassified.

* **(e) PGN [21]:** Segmentation shows some blurring and misclassification in the bicycle and road areas.

* **(f) SS-NAN [71]:** Segmentation is less accurate, with more misclassifications in the bicycle and road.

* **(g) CNIF [60]:** Segmentation is similar to SS-NAN, with noticeable inaccuracies.

### Key Observations

* The proposed method "Ours" consistently produces segmentation results that are very close to the ground truth in all three scenes.

* DeepLabV3+ [6] generally performs well, but exhibits some misclassifications, particularly in complex regions like the wetsuits and the dog.

* PGN [21], SS-NAN [71], and CNIF [60] consistently show lower segmentation accuracy compared to "Ours" and DeepLabV3+ [6].

* The red bounding boxes highlight areas where the segmentation methods differ most from the ground truth, allowing for a focused comparison.

### Interpretation

The image demonstrates a comparative evaluation of different semantic segmentation methods. The "Ours" method appears to be the most accurate, consistently providing segmentation results that closely match the ground truth. This suggests that the proposed method is effective at accurately identifying and delineating objects in complex scenes. The other methods, while capable of producing reasonable segmentations, exhibit more errors and inaccuracies, particularly in areas with intricate details or challenging lighting conditions. The consistent performance difference suggests that the proposed method incorporates features or techniques that improve its ability to handle these challenges. The inclusion of the method names with bracketed numbers (e.g., [6], [21], [71], [60]) likely refers to citations or references to the original publications describing those methods. The visual comparison, facilitated by the red bounding boxes, allows for a quick and intuitive assessment of the strengths and weaknesses of each method.