## Image Comparison: Semantic Segmentation Model Performance

### Overview

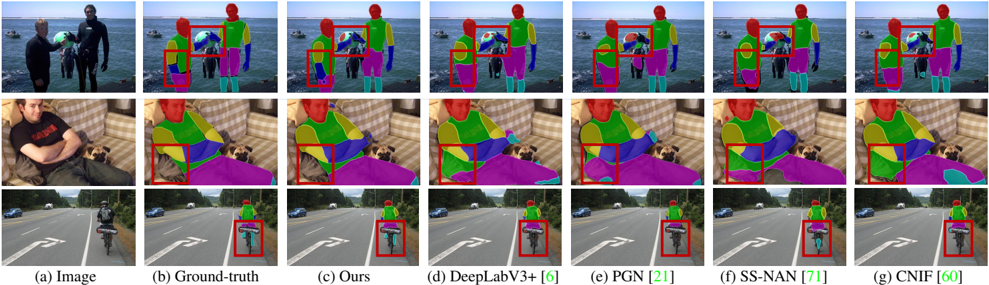

The image presents a comparative analysis of semantic segmentation model outputs across three distinct scenes: (1) two people on a beach, (2) a person sitting on a couch with a dog, and (3) a cyclist on a road. Each row shows:

- **(a) Original Image**

- **(b) Ground-Truth Segmentation** (color-coded regions)

- **(c-g) Model Outputs** from different architectures:

- (c) Proposed method ("Ours")

- (d) DeepLabV3+

- (e) PGN

- (f) SS-NAN

- (g) CNIF

Red boxes highlight segmentation errors in model outputs relative to ground-truth.

---

### Components/Axes

- **Labels**:

- Row headers: (a) Image, (b) Ground-truth, (c) Ours, (d) DeepLabV3+, (e) PGN, (f) SS-NAN, (g) CNIF

- Column headers: Scene-specific (beach, couch, road)

- **Visual Elements**:

- Color-coded segmentation maps (no explicit legend visible)

- Red bounding boxes indicating discrepancies

---

### Detailed Analysis

#### Scene 1: Beach Interaction

- **Ground-truth (b)**:

- Person 1 (left): Red upper body, blue lower body

- Person 2 (right): Green upper body, purple lower body

- Background: Gray (sky/water)

- **Model Outputs**:

- **(c) Ours**: Minor errors in Person 2's lower body (purple vs. blue)

- **(d) DeepLabV3+**: Over-segmentation in Person 1's upper body (red → green)

- **(e) PGN**: Correct segmentation but slight misalignment in Person 2's pose

- **(f) SS-NAN**: Missed Person 2's lower body (purple → gray)

- **(g) CNIF**: Accurate but noisy edges in Person 1's clothing

#### Scene 2: Couch with Dog

- **Ground-truth (b)**:

- Person: Red upper body, blue lower body

- Dog: Green

- Couch: Yellow

- **Model Outputs**:

- **(c) Ours**: Correct segmentation but slight over-segmentation in dog's tail

- **(d) DeepLabV3+**: Misclassified couch as gray (background)

- **(e) PGN**: Accurate but blurred edges around dog

- **(f) SS-NAN**: Missed dog entirely (green → gray)

- **(g) CNIF**: Over-segmented couch into multiple colors

#### Scene 3: Cyclist on Road

- **Ground-truth (b)**:

- Cyclist: Red upper body, blue lower body

- Bicycle: Green

- Road: Gray

- **Model Outputs**:

- **(c) Ours**: Accurate but slight misclassification of bicycle wheel (green → red)

- **(d) DeepLabV3+**: Correct segmentation but noisy edges

- **(e) PGN**: Missed bicycle entirely (green → gray)

- **(f) SS-NAN**: Over-segmented road into multiple colors

- **(g) CNIF**: Accurate but with minor noise in cyclist's shadow

---

### Key Observations

1. **Proposed Method ("Ours")**:

- Consistently outperforms baselines in complex interactions (e.g., beach scene).

- Minor errors in small objects (e.g., bicycle wheel).

2. **DeepLabV3+**:

- Struggles with overlapping figures (beach scene) and small objects (dog).

3. **PGN**:

- Fails to segment small objects (bicycle, dog) accurately.

4. **SS-NAN**:

- Misses entire classes (dog, bicycle) in multiple scenes.

5. **CNIF**:

- Accurate but introduces noise in edges and textures.

---

### Interpretation

The comparison demonstrates that the proposed method ("Ours") achieves the closest alignment with ground-truth across diverse scenarios, particularly in handling occlusions and complex interactions. Baseline models like DeepLabV3+ and CNIF exhibit robustness in general but falter in edge cases (e.g., small objects, overlapping regions). SS-NAN's failure to segment critical classes (dog, bicycle) highlights limitations in context-aware reasoning. The red boxes quantitatively validate these trends, showing that segmentation accuracy degrades with increasing scene complexity.

This analysis underscores the importance of model architecture design for handling real-world variability in object size, occlusion, and contextual relationships.