## Line Chart: Performance Comparison of Prompting Techniques

### Overview

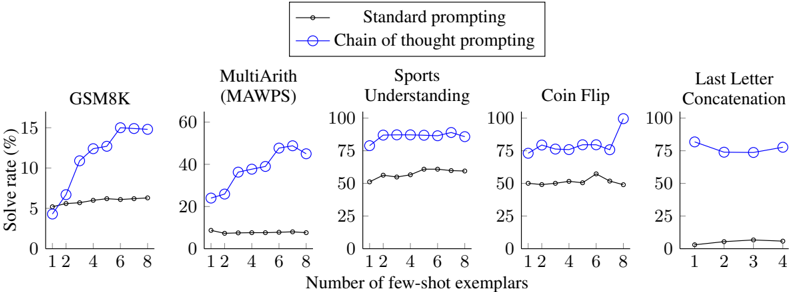

The image presents a series of five line charts, each comparing the performance of "Standard prompting" and "Chain of thought prompting" across different tasks: GSM8K, MultiArith (MAWPS), Sports Understanding, Coin Flip, and Last Letter Concatenation. The performance metric is "Solve rate (%)" plotted against the "Number of few-shot exemplars".
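To make the x-axis variable concrete, here is a minimal Python sketch of how a k-shot prompt might be assembled for each method. It assumes a simple `Q:`/`A:` layout and uses hypothetical names (`Exemplar`, `build_prompt`). The exact prompt format behind these charts is not given here. In this sketch, the only difference between the two methods is whether each exemplar shows intermediate reasoning before its final answer.

```python
from dataclasses import dataclass


@dataclass
class Exemplar:
    """One hypothetical few-shot example: a question, its worked reasoning, and the answer."""
    question: str
    rationale: str  # intermediate reasoning steps, shown only under chain of thought prompting
    answer: str


def build_prompt(exemplars: list[Exemplar], k: int, test_question: str,
                 chain_of_thought: bool) -> str:
    """Concatenate the first k exemplars, then append the unanswered test question."""
    if not 0 <= k <= len(exemplars):
        raise ValueError(f"k must be between 0 and {len(exemplars)}")
    blocks = []
    for ex in exemplars[:k]:
        if chain_of_thought:
            # Chain of thought prompting: the exemplar spells out its reasoning first.
            target = f"{ex.rationale} The answer is {ex.answer}."
        else:
            # Standard prompting: the exemplar gives only the final answer.
            target = f"The answer is {ex.answer}."
        blocks.append(f"Q: {ex.question}\nA: {target}")
    # The model is expected to continue after the final "A:".
    blocks.append(f"Q: {test_question}\nA:")
    return "\n\n".join(blocks)


if __name__ == "__main__":
    demo = [Exemplar("A box holds 3 pens. How many pens are in 4 boxes?",
                     "Each box holds 3 pens, and 4 * 3 = 12.", "12")]
    print(build_prompt(demo, k=1,
                       test_question="A crate holds 6 apples. How many apples are in 2 crates?",
                       chain_of_thought=True))
```

Under this sketch, varying `k` corresponds to moving along the x-axis, and toggling `chain_of_thought` switches between the black and blue lines.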

### Components/Axes

* **X-axis:** "Number of few-shot exemplars". Scale ranges from 0 to 12 for GSM8K, MultiArith, Sports Understanding, and Coin Flip, and from 0 to 4 for Last Letter Concatenation.

* **Y-axis:** "Solve rate (%)". Scale ranges from 0 to 100 for all charts.

* **Legend:** Located in the top-right corner.
  * Black line with circle markers: "Standard prompting"
  * Blue line with circle markers: "Chain of thought prompting"

* **Chart Titles:** Each chart is labeled with the name of the task it represents (GSM8K, MultiArith (MAWPS), Sports Understanding, Coin Flip, Last Letter Concatenation).
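The y-axis metric can be illustrated with a minimal sketch. It assumes that "solve rate" means the percentage of test problems whose final answer matches the reference. The function name `solve_rate` and the whitespace-stripped exact-match rule are assumptions for illustration. Real evaluations often normalize or extract answers more carefully.

```python
def solve_rate(predictions: list[str], references: list[str]) -> float:
    """Percentage of problems whose predicted final answer exactly matches the reference.

    Assumption: answers are compared as strings after stripping surrounding whitespace.
    """
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not references:
        raise ValueError("cannot compute a solve rate over zero problems")
    correct = sum(p.strip() == r.strip() for p, r in zip(predictions, references))
    return 100.0 * correct / len(references)


if __name__ == "__main__":
    # Three of four answers match, so the solve rate is 75.0 (%).
    print(solve_rate(["12", "7", "3", "9"], ["12", "8", "3", "9"]))
```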

### Detailed Analysis

**1. GSM8K:**

* **Standard prompting (Black):** Starts at approximately 2% solve rate at 1 exemplar, gradually increasing to around 8% at 8 exemplars. The line is relatively flat.

* **Chain of thought prompting (Blue):** Starts at approximately 5% solve rate at 1 exemplar and rises more steeply than standard prompting, reaching around 16% at 8 exemplars.

**2. MultiArith (MAWPS):**

* **Standard prompting (Black):** Starts at approximately 10% solve rate at 1 exemplar, increasing to around 22% at 8 exemplars.

* **Chain of thought prompting (Blue):** Starts at approximately 15% solve rate at 1 exemplar and rises steeply to around 60% at 8 exemplars.

**3. Sports Understanding:**

* **Standard prompting (Black):** Starts at approximately 30% solve rate at 1 exemplar, increasing to around 65% at 8 exemplars.

* **Chain of thought prompting (Blue):** Starts at approximately 60% solve rate at 1 exemplar, and increases to around 95% at 8 exemplars. The line is relatively flat at higher exemplar counts.

**4. Coin Flip:**

* **Standard prompting (Black):** Starts at approximately 50% solve rate at 1 exemplar and ends at around 55% at 8 exemplars, changing little overall.

* **Chain of thought prompting (Blue):** Starts at approximately 50% solve rate at 1 exemplar and rises steeply to around 95% at 8 exemplars.

**5. Last Letter Concatenation:**

* **Standard prompting (Black):** Remains relatively flat at approximately 10% solve rate across all exemplar counts (1-4).

* **Chain of thought prompting (Blue):** Remains relatively flat at approximately 70% solve rate across all exemplar counts (1-4).

### Key Observations

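* Chain of thought prompting outperforms standard prompting on every task at the largest exemplar count shown. The only tie in the values above is Coin Flip at 1 exemplar, where both methods are at about 50%.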

* The gap between the two methods widens as exemplars are added on GSM8K (from about 3 to about 8 points), MultiArith (from about 5 to about 38 points), and Coin Flip (from about 0 to about 40 points).

* On Sports Understanding and Last Letter Concatenation, the gap stays roughly constant (about 30 and 60 points, respectively). Chain of thought prompting starts ahead, and on Sports Understanding both methods improve by a similar amount as exemplars are added.

* Standard prompting shows minimal improvement with increasing exemplars for Last Letter Concatenation.

* On Coin Flip, standard prompting stays close to 50%, roughly chance level for a two-outcome question, and changes only slightly as exemplars are added.
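The differences between the two methods can be checked with a small Python sketch over the approximate endpoint readings from the Detailed Analysis. These numbers are eyeballed from the charts, not exact results. The 3-point `tolerance` for calling a gap "roughly constant" is an arbitrary assumption, and the name `gap_trend` is hypothetical.

```python
# Approximate (first, last) solve rates in percent, as read off the charts.
# First = 1 exemplar; last = 8 exemplars (4 for Last Letter Concatenation).
READINGS: dict[str, dict[str, tuple[int, int]]] = {
    "GSM8K": {"standard": (2, 8), "cot": (5, 16)},
    "MultiArith (MAWPS)": {"standard": (10, 22), "cot": (15, 60)},
    "Sports Understanding": {"standard": (30, 65), "cot": (60, 95)},
    "Coin Flip": {"standard": (50, 55), "cot": (50, 95)},
    "Last Letter Concatenation": {"standard": (10, 10), "cot": (70, 70)},
}


def gap_trend(readings: dict[str, dict[str, tuple[int, int]]],
              tolerance: int = 3) -> dict[str, tuple[int, int, str]]:
    """Return, per task, the chain-of-thought-minus-standard gap at the first and
    last exemplar count, plus whether that gap widens, narrows, or stays roughly
    constant (changes of at most `tolerance` points count as constant)."""
    result = {}
    for task, r in readings.items():
        first_gap = r["cot"][0] - r["standard"][0]
        last_gap = r["cot"][1] - r["standard"][1]
        if last_gap - first_gap > tolerance:
            trend = "widens"
        elif first_gap - last_gap > tolerance:
            trend = "narrows"
        else:
            trend = "roughly constant"
        result[task] = (first_gap, last_gap, trend)
    return result


if __name__ == "__main__":
    for task, (g0, g1, trend) in gap_trend(READINGS).items():
        print(f"{task}: {g0:+} -> {g1:+} points ({trend})")
```

With these readings, the gap widens for GSM8K, MultiArith, and Coin Flip and stays roughly constant for Sports Understanding and Last Letter Concatenation.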

### Interpretation

The data strongly suggest that chain of thought prompting is more effective than standard prompting at improving the solve rate on these tasks. On GSM8K, MultiArith, and Coin Flip, its advantage grows as exemplars are added. This suggests that additional worked reasoning chains keep helping in cases where additional answer-only exemplars help little. On Last Letter Concatenation, both curves are flat over the 1 to 4 exemplar range. The task therefore appears insensitive to the number of exemplars but highly sensitive to the prompting technique (about 10% versus about 70%). On Coin Flip, standard prompting hovers around 50%, close to chance for a two-outcome question, regardless of how many exemplars are given. Overall, the charts show that the choice of prompting strategy can substantially change how well a language model performs on the same task. The consistent advantage of chain of thought prompting highlights the value of demonstrating intermediate reasoning steps in the prompt.