## Line Graphs: Solve Rate Comparison Across Prompting Methods

### Overview

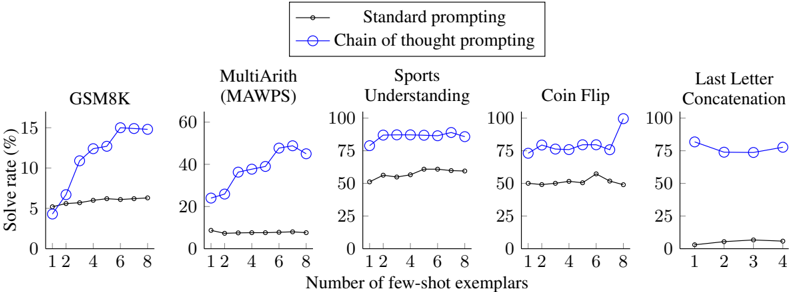

The image contains five line graphs comparing two prompting methods, **Standard prompting** (black line) and **Chain of thought prompting** (blue line), across five tasks: **GSM8K**, **MultiArith (MAWPS)**, **Sports Understanding**, **Coin Flip**, and **Last Letter Concatenation**. The x-axis shows the number of few-shot exemplars (0–8 for most tasks, 0–4 for Last Letter Concatenation), and the y-axis shows solve rate (%) from 0 to 100. The legend is positioned at the top-center of the image.

---

### Components/Axes

- **Legend**:

  - **Standard prompting**: Black line with circular markers.

  - **Chain of thought prompting**: Blue line with circular markers.

- **X-axis**:

  - Labeled "Number of few-shot exemplars" with values: 0, 1, 2, 3, 4, 6, 8 (Last Letter Concatenation ends at 4).

- **Y-axis**:

  - Labeled "Solve rate (%)" with values ranging from 0 to 100 (task-dependent scaling).

- **Graph Titles**:

  - Each graph is labeled with its respective task (e.g., "GSM8K", "MultiArith (MAWPS)").

---

### Detailed Analysis

#### 1. **GSM8K**

- **Chain of thought prompting** (blue):

  - Starts at ~5% (1 exemplar), rises to ~15% (8 exemplars).

- **Standard prompting** (black):

  - Remains flat at ~5% across all exemplars.

#### 2. **MultiArith (MAWPS)**

- **Chain of thought prompting** (blue):

  - Starts at ~20% (1 exemplar), peaks at ~45% (6 exemplars), drops to ~40% (8 exemplars).

- **Standard prompting** (black):

  - Flat at ~5% across all exemplars.

#### 3. **Sports Understanding**

- **Chain of thought prompting** (blue):

  - Starts at ~75% (1 exemplar), then fluctuates between 75% and 80% up to 8 exemplars.

- **Standard prompting** (black):

  - Starts at ~50% (1 exemplar), peaks at ~55% (6 exemplars), drops to ~50% (8 exemplars).

#### 4. **Coin Flip**

- **Chain of thought prompting** (blue):

  - Starts at ~75% (1 exemplar), then fluctuates between 70% and 80% up to 8 exemplars.

- **Standard prompting** (black):

  - Starts at ~50% (1 exemplar), peaks at ~55% (6 exemplars), drops to ~50% (8 exemplars).

#### 5. **Last Letter Concatenation**

- **Chain of thought prompting** (blue):

  - Starts at ~75% (1 exemplar), drops to ~70% (2 exemplars), rises to ~75% (4 exemplars).

- **Standard prompting** (black):

  - Starts at ~0% (1 exemplar), peaks at ~5% (3 exemplars), drops to ~2% (4 exemplars).

---

### Key Observations

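1. **Chain of thought prompting** matches or outperforms **Standard prompting** on **GSM8K** and **MultiArith**. The two methods start level on GSM8K (~5% at 1 exemplar), but by 8 exemplars Chain of thought prompting has gained ~10 points on GSM8K and ~20 points on MultiArith, while Standard prompting stays at ~5%.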

2. **Sports Understanding** and **Coin Flip** have high baseline solve rates for Chain of thought prompting (~75%), suggesting these tasks may involve simpler reasoning.

3. **Last Letter Concatenation** shows a dip in Chain of thought performance at 2 exemplars (~70%) that recovers by 4 exemplars (~75%). Standard prompting peaks at ~5% with 3 exemplars and slips to ~2% at 4 exemplars, staying near zero throughout.

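4. **Standard prompting** shows little or no improvement with more exemplars on any task. Its solve rate stays at or below ~5% on GSM8K, MultiArith, and Last Letter Concatenation, and hovers around 50–55% on Sports Understanding and Coin Flip.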
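
To make these comparisons concrete, the approximate readings listed under Detailed Analysis can be tabulated and the gap between the two methods computed per task. The following is a minimal sketch, not exact data: the `READINGS` dictionary and the helper names are hypothetical, the numbers are the rough values quoted in this description, and `None` marks endpoints that the description gives only as a range.

```python
# Approximate solve rates (%) quoted in the description above (not exact data).
# Each pair is (standard prompting, chain of thought prompting).
# "first" = 1 exemplar; "last" = 8 exemplars (4 for Last Letter Concatenation).
# None marks a value the description gives only as a range.
READINGS = {
    "GSM8K": {"first": (5, 5), "last": (5, 15)},
    "MultiArith (MAWPS)": {"first": (5, 20), "last": (5, 40)},
    "Sports Understanding": {"first": (50, 75), "last": (50, None)},
    "Coin Flip": {"first": (50, 75), "last": (50, None)},
    "Last Letter Concatenation": {"first": (0, 75), "last": (2, 75)},
}


def gap(pair):
    """Return chain of thought minus standard, or None if either is missing."""
    standard, cot = pair
    if standard is None or cot is None:
        return None
    return cot - standard


def gaps_by_task(readings):
    """Map each task to its gap (percentage points) at the first and last point."""
    return {
        task: {point: gap(pair) for point, pair in points.items()}
        for task, points in readings.items()
    }


if __name__ == "__main__":
    for task, gaps in gaps_by_task(READINGS).items():
        print(f"{task}: {gaps}")
```

Under these readings the gap on GSM8K grows from 0 to 10 points and on MultiArith from 15 to 35 points, while Last Letter Concatenation already shows a gap of about 75 points at a single exemplar.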

---

### Interpretation

- **Chain of thought prompting** enhances performance in complex reasoning tasks (e.g., GSM8K, MultiArith) by leveraging step-by-step reasoning. This is consistent with the overall upward trend as exemplars are added, although MultiArith dips slightly from ~45% at 6 exemplars to ~40% at 8.

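- Tasks like **Sports Understanding** and **Coin Flip** may require less explicit reasoning: Chain of thought prompting reaches ~75% from the first exemplar, and Standard prompting sits at a moderate ~50–55%, well above its ~5% on the arithmetic tasks but still below Chain of thought prompting.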

- The dip in **Last Letter Concatenation** for Chain of thought prompting at 2 exemplars could indicate task-specific sensitivity to the particular exemplars used, though a ~5-point change is small relative to the precision of these approximate readings.

- **Standard prompting**’s flat lines suggest it lacks the capacity to adapt to additional exemplars, highlighting the importance of structured reasoning in complex tasks.
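
One rough way to check the "flat line" reading is to compute the spread (maximum minus minimum) of the values quoted for each curve. This is only a sketch under stated assumptions: `STATED` and the helper names are hypothetical, the values are the approximate numbers from this description, and where a curve is described as fluctuating within a range, the range bounds stand in for the unlisted points.

```python
# Approximate solve rates (%) quoted for each curve in the description above.
# For "fluctuates between a and b", the bounds a and b are used as stand-ins.
STATED = {
    "GSM8K": {"standard": [5], "cot": [5, 15]},
    "MultiArith (MAWPS)": {"standard": [5], "cot": [20, 45, 40]},
    "Sports Understanding": {"standard": [50, 55, 50], "cot": [75, 80]},
    "Coin Flip": {"standard": [50, 55, 50], "cot": [75, 70, 80]},
    "Last Letter Concatenation": {"standard": [0, 5, 2], "cot": [75, 70, 75]},
}


def spread(values):
    """Difference between the largest and smallest quoted value."""
    return max(values) - min(values)


def spreads(stated):
    """Map each task to the spread of each method's quoted values."""
    return {
        task: {method: spread(values) for method, values in curves.items()}
        for task, curves in stated.items()
    }


if __name__ == "__main__":
    for task, result in spreads(STATED).items():
        print(f"{task}: {result}")
```

With these numbers, no Standard prompting curve varies by more than about 5 points, while Chain of thought prompting varies by about 10 points on GSM8K and about 25 points on MultiArith.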

This analysis underscores the effectiveness of Chain of thought prompting in improving solve rates for tasks requiring logical reasoning, while Standard prompting gains little from additional exemplars.