## Bar Chart: GSM8K Accuracy Comparison

### Overview

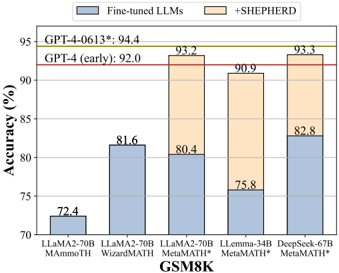

The image is a bar chart comparing the accuracy of different Large Language Models (LLMs) on the GSM8K dataset. The chart compares fine-tuned LLMs against versions enhanced with "+SHEPHERD". The y-axis represents accuracy in percentage, and the x-axis represents different LLM configurations.

### Components/Axes

* **Title:** GSM8K

* **Y-axis:** Accuracy (%)
  * Scale: 70 to 95, with tick marks at 70, 75, 80, 85, 90, and 95.

* **X-axis:** LLM configurations
  * Categories: LLaMA2-70B MAmmoTH, LLaMA2-70B WizardMATH, LLaMA2-70B MetaMATH, LLemma-34B MetaMATH\*, DeepSeek-67B MetaMATH\*

* **Legend:** Located at the top of the chart.
  * Blue: Fine-tuned LLMs
  * Orange: +SHEPHERD

* **Horizontal Lines:**
  * GPT-4-0613\*: 94.4 (Green line)
  * GPT-4 (early): 92.0 (Red line)

### Detailed Analysis

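Each bar shows the fine-tuned accuracy in blue. Where a +SHEPHERD result is reported, an orange segment is stacked on top, so the total bar height is the +SHEPHERD accuracy. No +SHEPHERD value is listed for LLaMA2-70B MAmmoTH or LLaMA2-70B WizardMATH.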

* **LLaMA2-70B MAmmoTH:**
  * Fine-tuned LLMs (Blue): 72.4%

* **LLaMA2-70B WizardMATH:**
  * Fine-tuned LLMs (Blue): 81.6%

* **LLaMA2-70B MetaMATH:**
  * Fine-tuned LLMs (Blue): 80.4%
  * +SHEPHERD (Orange): 93.2% (Total height of the bar)

* **LLemma-34B MetaMATH\*:**
  * Fine-tuned LLMs (Blue): 75.8%
  * +SHEPHERD (Orange): 90.9% (Total height of the bar)

* **DeepSeek-67B MetaMATH\*:**
  * Fine-tuned LLMs (Blue): 82.8%
  * +SHEPHERD (Orange): 93.3% (Total height of the bar)
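The gain from +SHEPHERD is simply the difference between the total bar height and the blue segment. A minimal sketch of that arithmetic, using only the values listed above; the dictionary and function names are illustrative and not part of the source:

```python
# Accuracy values (%) transcribed from the chart description.
# Only the three models with a reported +SHEPHERD bar are included.
fine_tuned = {
    "LLaMA2-70B MetaMATH": 80.4,
    "LLemma-34B MetaMATH*": 75.8,
    "DeepSeek-67B MetaMATH*": 82.8,
}
with_shepherd = {
    "LLaMA2-70B MetaMATH": 93.2,
    "LLemma-34B MetaMATH*": 90.9,
    "DeepSeek-67B MetaMATH*": 93.3,
}


def shepherd_gains(baseline, enhanced):
    """Return the absolute gain in percentage points for each model."""
    return {
        name: round(enhanced[name] - baseline[name], 1)
        for name in enhanced
    }


gains = shepherd_gains(fine_tuned, with_shepherd)
for name, delta in gains.items():
    print(f"{name}: +{delta} points")
```

The differences are absolute percentage points, not relative improvements.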

### Key Observations

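* For the three MetaMATH-tuned models where a +SHEPHERD value is reported, the enhancement raises accuracy in every case: by 12.8 points for LLaMA2-70B, 15.1 points for LLemma-34B, and 10.5 points for DeepSeek-67B.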

* DeepSeek-67B MetaMATH* with +SHEPHERD achieves the highest accuracy among the models tested (93.3%), closely followed by LLaMA2-70B MetaMATH with +SHEPHERD (93.2%).

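* The GPT-4 models (early and 0613*) serve as reference lines. LLaMA2-70B MetaMATH and DeepSeek-67B MetaMATH* with +SHEPHERD exceed GPT-4 (early) at 92.0%, while LLemma-34B MetaMATH* with +SHEPHERD (90.9%) falls just below it. All three remain below GPT-4-0613* at 94.4%.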
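To make the comparison against the two reference lines explicit, the following sketch classifies each +SHEPHERD score relative to them. The constant and function names are hypothetical; the numbers are the ones read off the chart:

```python
# Reference lines from the chart (accuracy in %).
GPT4_EARLY = 92.0
GPT4_0613 = 94.4

# +SHEPHERD accuracies (total bar heights) from the chart description.
shepherd_scores = {
    "LLaMA2-70B MetaMATH": 93.2,
    "LLemma-34B MetaMATH*": 90.9,
    "DeepSeek-67B MetaMATH*": 93.3,
}


def position(score):
    """Place a score relative to the two GPT-4 reference lines."""
    if score > GPT4_0613:
        return "above both GPT-4 lines"
    if score > GPT4_EARLY:
        return "between GPT-4 (early) and GPT-4-0613*"
    return "below both GPT-4 lines"


positions = {name: position(score) for name, score in shepherd_scores.items()}
for name, label in positions.items():
    print(f"{name}: {label}")
```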

### Interpretation

The data suggest that the "+SHEPHERD" enhancement improves accuracy on the GSM8K dataset for each of the three models where it is reported, with gains between 10.5 and 15.1 percentage points. Compared with the GPT-4 reference lines, the enhanced models are competitive: two of the three surpass GPT-4 (early), and none reaches GPT-4-0613*. The chart highlights the potential of combining fine-tuning with additional techniques like "+SHEPHERD" to achieve higher accuracy in mathematical reasoning tasks. The performance of DeepSeek-67B MetaMATH* and LLaMA2-70B MetaMATH with +SHEPHERD is particularly noteworthy, as both exceed 93%.