
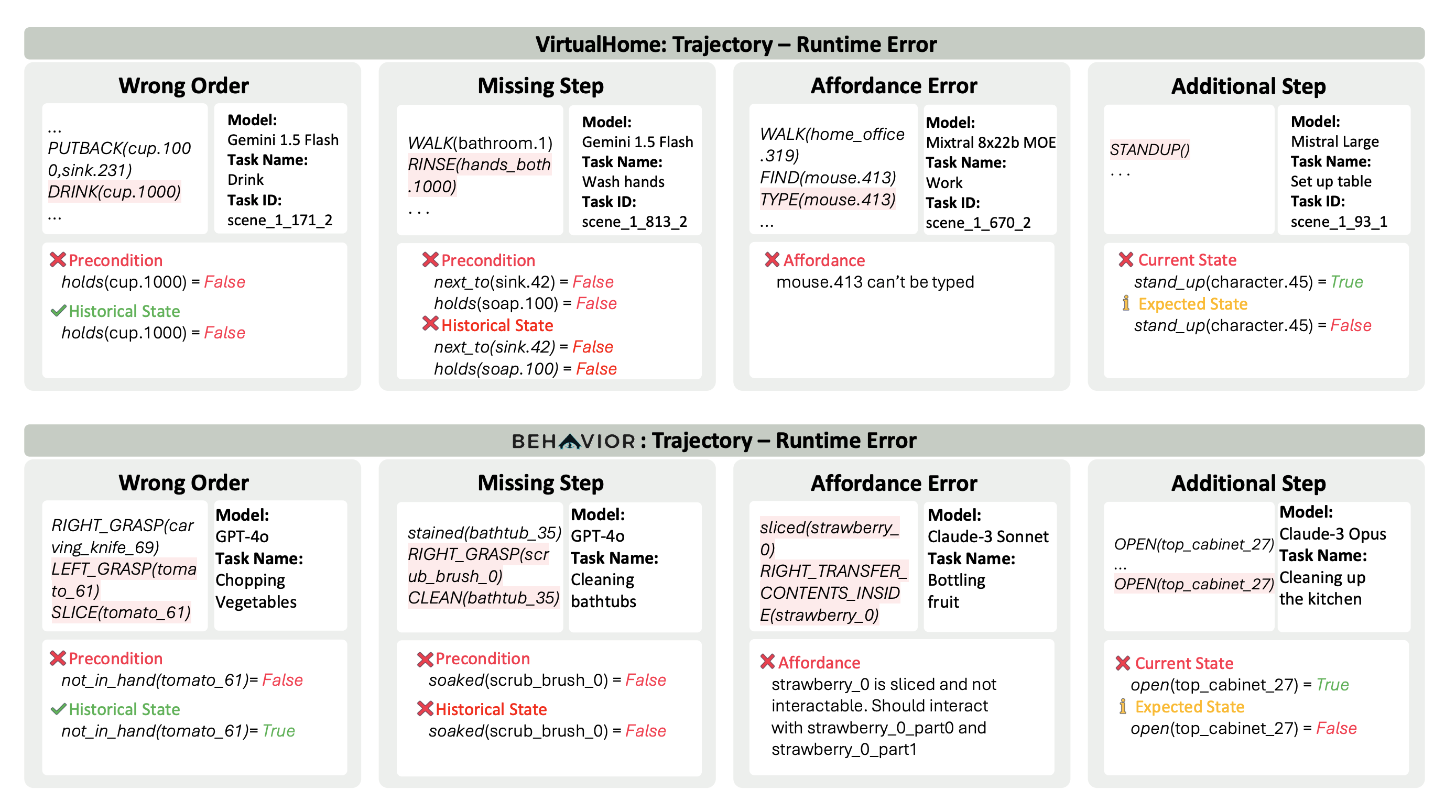

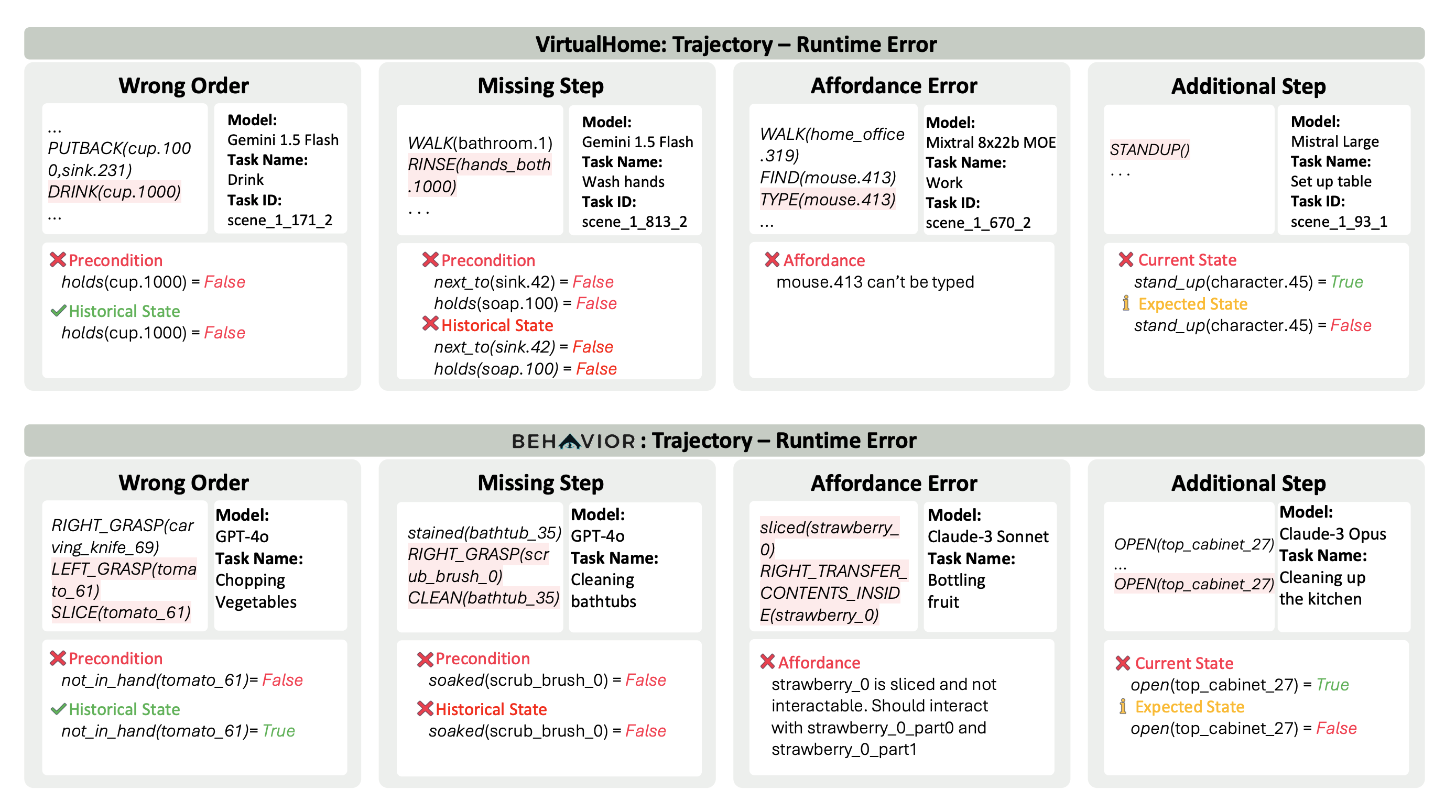

## [Technical Diagram]: Runtime Error Examples in AI Agent Trajectories

### Overview

The image is a structured comparison chart displaying eight specific examples of runtime errors encountered by AI agents when executing tasks in two simulated environments: **VirtualHome** and **BEHAVIOR**. The chart is divided into two primary horizontal sections, each containing four columns representing distinct error categories. Each example includes a snippet of the agent's action sequence, metadata about the AI model and task, and a diagnostic breakdown of the error condition.

### Components/Axes

The diagram is organized as follows:

1. **Top Section Header:** "VirtualHome: Trajectory – Runtime Error"

2. **Bottom Section Header:** "BEHAVIOR: Trajectory – Runtime Error"

3. **Four Error Categories (Columns per Section):**

    * **Wrong Order:** Actions performed in an illogical sequence.

    * **Missing Step:** A necessary action was omitted from the plan.

    * **Affordance Error:** An action was attempted on an object that does not support that interaction.

    * **Additional Step:** An unnecessary or redundant action was performed.

4. **Per-Example Structure (within each column):**

    * **Action Sequence:** A code-like snippet of the agent's planned or executed actions (e.g., `PUTBACK(cup.1000,sink.231)`).

    * **Metadata Box:** Contains "Model:", "Task Name:", and "Task ID:".

    * **Diagnostic Box:** Uses colored text and symbols (❌, ✅, ⚠️) to detail the error. Key labels include:

        * `❌ Precondition` / `❌ Affordance` / `❌ Current State`: The condition that failed.

        * `✅ Historical State`: Whether the relevant condition held at an earlier point in the trajectory.

        * `⚠️ Expected State`: The state that should have been true for the action to be valid.
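
The action snippets in the chart follow a `NAME(arg1,arg2)` shape. As a minimal sketch of how such a snippet might be split into structured actions, the Python below uses a regular expression. The `Action` type, the pattern, and `parse_actions` are illustrative assumptions and are not part of VirtualHome or BEHAVIOR.

```python
import re
from typing import List, NamedTuple, Tuple


class Action(NamedTuple):
    """One parsed step: an action name plus its object arguments."""
    name: str
    args: Tuple[str, ...]


# Assumed shape: letters/underscores, then a parenthesised, comma-separated list.
_ACTION_RE = re.compile(r"([A-Za-z_]+)\(([^()]*)\)")


def parse_actions(snippet: str) -> List[Action]:
    """Extract every NAME(args) token; elisions such as '...' are ignored."""
    actions = []
    for name, raw_args in _ACTION_RE.findall(snippet):
        args = tuple(a.strip() for a in raw_args.split(",") if a.strip())
        actions.append(Action(name, args))
    return actions


plan = parse_actions("... PUTBACK(cup.1000,sink.231) DRINK(cup.1000) ...")
print(plan[0].name, plan[0].args)  # PUTBACK ('cup.1000', 'sink.231')
print(plan[1].name, plan[1].args)  # DRINK ('cup.1000',)
```

Note that this pattern would also match lowercase state predicates such as `stained(bathtub_35)`, so a real parser would need to distinguish states from actions.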

### Detailed Analysis

#### **VirtualHome Section (Top)**

* **Wrong Order (Leftmost Column):**

    * **Model:** Gemini 1.5 Flash

    * **Task:** Drink (ID: scene_1_171_2)

    * **Action Snippet:** `... PUTBACK(cup.1000,sink.231) DRINK(cup.1000) ...`

    * **Error:** The agent calls `DRINK(cup.1000)` immediately after putting the cup back. `DRINK` requires the agent to be holding the cup, but at that point `holds(cup.1000) = False`. Since `PUTBACK` acts on a held object, the cup was in hand earlier in the trajectory, which is what the historical state records: the required actions are present but sequenced incorrectly.

* **Missing Step (Second Column):**

    * **Model:** Gemini 1.5 Flash

    * **Task:** Wash hands (ID: scene_1_813_2)

    * **Action Snippet:** `WALK(bathroom.1) RINSE(hands_both.1000) ...`

    * **Error:** The agent walks to the bathroom and immediately rinses its hands. `RINSE` requires the agent to be next to the sink and holding soap, but `next_to(sink.42) = False` and `holds(soap.100) = False`. The historical state shows that neither condition was ever true, so the plan is missing the steps that approach the sink and pick up the soap.

* **Affordance Error (Third Column):**

    * **Model:** Mixtral 8x22b MOE

    * **Task:** Work (ID: scene_1_670_2)

    * **Action Snippet:** `WALK(home_office.319) FIND(mouse.413) TYPE(mouse.413) ...`

    * **Error:** The agent attempts to `TYPE` on a computer mouse (`mouse.413`). The affordance check fails: "mouse.413 can't be typed".

* **Additional Step (Rightmost Column):**

    * **Model:** Mistral Large

    * **Task:** Set up table (ID: scene_1_93_1)

    * **Action Snippet:** `STANDUP() ...`

    * **Error:** The agent performs `STANDUP` while already standing. The current state is `stand_up(character.45) = True`, whereas the action only has an effect when `stand_up(character.45) = False`. The step is redundant.
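
The precondition and affordance checks described for the VirtualHome examples can be sketched as simple table lookups. This is a minimal illustration under stated assumptions: the `SUPPORTED_ACTIONS` and `PRECONDITIONS` tables are hand-written for these two examples (the `GRAB` entry for the mouse is hypothetical), and `check_action` is not a VirtualHome API.

```python
from typing import Dict, List, Set, Tuple

Fact = Tuple[str, str]  # (predicate, object id), e.g. ("holds", "soap.100")

# Assumed tables, hard-coded for illustration only.
SUPPORTED_ACTIONS: Dict[str, Set[str]] = {
    "mouse.413": {"GRAB"},          # hypothetical: a mouse can be grabbed, not typed on
    "hands_both.1000": {"RINSE"},
}
PRECONDITIONS: Dict[str, List[Fact]] = {
    "RINSE": [("next_to", "sink.42"), ("holds", "soap.100")],
}


def check_action(action: str, target: str, state: Set[Fact]) -> List[str]:
    """Return diagnostic messages for one action; an empty list means it is valid."""
    problems: List[str] = []
    if action not in SUPPORTED_ACTIONS.get(target, set()):
        problems.append(f"Affordance: {target} does not support {action}")
    for predicate, obj in PRECONDITIONS.get(action, []):
        if (predicate, obj) not in state:
            problems.append(f"Precondition: {predicate}({obj}) = False")
    return problems


# No relevant facts hold yet when the agent tries to rinse.
print(check_action("RINSE", "hands_both.1000", set()))
print(check_action("TYPE", "mouse.413", set()))
```

The first call reports both unmet preconditions from the "Wash hands" example; the second reports the affordance failure from the "Work" example.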

#### **BEHAVIOR Section (Bottom)**

* **Wrong Order (Leftmost Column):**

    * **Model:** GPT-4o

    * **Task:** Chopping Vegetables

    * **Action Snippet:** `RIGHT_GRASP(carving_knife_69) LEFT_GRASP(tomato_61) SLICE(tomato_61)`

    * **Error:** The agent grasps the tomato with `LEFT_GRASP` and then immediately tries to `SLICE` it. `SLICE` requires the tomato not to be in hand, but `not_in_hand(tomato_61) = False` at that point (the tomato *is* in hand). The historical state shows the tomato was not in hand initially, so the condition held before the grasp: either the grasp came too early or the slice should follow putting the tomato down.

* **Missing Step (Second Column):**

    * **Model:** GPT-4o

    * **Task:** Cleaning bathtubs

    * **Action Snippet:** `stained(bathtub_35) RIGHT_GRASP(scrub_brush_0) CLEAN(bathtub_35)`

    * **Error:** The snippet opens with the state `stained(bathtub_35)`; the agent then grasps a scrub brush and attempts to clean. `CLEAN` requires a soaked brush, but `soaked(scrub_brush_0) = False`. The historical state confirms the brush was never soaked, so the plan is missing a step that wets the brush.

* **Affordance Error (Third Column):**

    * **Model:** Claude-3 Sonnet

    * **Task:** Bottling fruit

    * **Action Snippet:** `sliced(strawberry_0) RIGHT_TRANSFER_CONTENTS_INSIDE(strawberry_0)`

    * **Error:** The agent tries to transfer the contents of a sliced strawberry (`strawberry_0`). The affordance check fails: "strawberry_0 is sliced and not interactable. Should interact with strawberry_0_part0 and strawberry_0_part1".

* **Additional Step (Rightmost Column):**

    * **Model:** Claude-3 Opus

    * **Task:** Cleaning up the kitchen

    * **Action Snippet:** `OPEN(top_cabinet_27) ... OPEN(top_cabinet_27)`

    * **Error:** The agent opens the same cabinet (`top_cabinet_27`) twice. The current state after the first action is `open(top_cabinet_27) = True`. The expected state for a second `OPEN` action to be valid is that the cabinet is closed (`open(top_cabinet_27) = False`). This is a redundant action.
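
The four categories can be read as a decision rule over the current state and the historical state. The sketch below is one plausible reading of the diagnostics described above, not the chart authors' implementation: the `classify` function, its parameters, and the assumed `sliced` effect of `SLICE` are all hypothetical.

```python
from typing import Set, Tuple

Fact = Tuple[str, str]  # (predicate, object id)


def classify(
    preconditions: Set[Fact],
    effects: Set[Fact],
    state: Set[Fact],
    history: Set[Fact],
    supported: bool = True,
) -> str:
    """Label one step. `history` holds every fact that was true at any earlier point."""
    if not supported:
        return "Affordance Error"
    unmet = preconditions - state
    if unmet:
        # Held before but not now: right steps, wrong sequence. Never held: a step is absent.
        return "Wrong Order" if unmet <= history else "Missing Step"
    if effects and effects <= state:
        return "Additional Step"  # the action's effects already hold
    return "OK"


# SLICE(tomato_61) after LEFT_GRASP: the tomato was not in hand earlier, but is now.
print(classify({("not_in_hand", "tomato_61")}, {("sliced", "tomato_61")},
               state=set(), history={("not_in_hand", "tomato_61")}))  # Wrong Order

# CLEAN(bathtub_35): the brush was never soaked.
print(classify({("soaked", "scrub_brush_0")}, set(),
               state={("stained", "bathtub_35")},
               history={("stained", "bathtub_35")}))  # Missing Step

# RIGHT_TRANSFER_CONTENTS_INSIDE(strawberry_0): sliced object is not interactable.
print(classify(set(), set(), set(), set(), supported=False))  # Affordance Error

# Second OPEN(top_cabinet_27): the cabinet is already open.
opened = {("open", "top_cabinet_27")}
print(classify(set(), opened, state=opened, history=opened))  # Additional Step
```

Treating negated conditions such as `not_in_hand` as ordinary facts keeps the sketch short; a fuller model would represent negation explicitly.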

### Key Observations

1. **Model Diversity:** The errors are demonstrated across a range of contemporary AI models (Gemini 1.5 Flash, Mixtral 8x22b, Mistral Large, GPT-4o, Claude-3 Sonnet/Opus), suggesting these are common challenges not specific to a single architecture.

2. **Error Categorization:** The four error types (Wrong Order, Missing Step, Affordance, Additional Step) provide a clear taxonomy for diagnosing failures in embodied AI planning.

3. **Diagnostic Clarity:** Each error is pinpointed by comparing preconditions, historical states, and expected states against the agent's actions, using a clear visual language of symbols and colors.

4. **Task Complexity:** The tasks range from simple ("Drink") to multi-step ("Cleaning bathtubs", "Bottling fruit"), and errors occur in both.

### Interpretation

This diagram serves as a diagnostic reference for understanding failure modes in AI agents operating within simulated physical environments (VirtualHome, BEHAVIOR). It moves beyond simply stating an action failed and instead provides a **root-cause analysis** for each error.

* **What it demonstrates:** The chart illustrates the gap between high-level task planning and low-level physical execution. An AI might understand the goal ("wash hands") but fail to sequence the necessary sub-actions correctly (approach the sink, pick up the soap) or misjudge the physical properties of objects (you can't type on a mouse).

* **Relationship between elements:** The "Action Sequence" shows the agent's flawed plan. The "Metadata" contextualizes which model and task produced the error. The "Diagnostic Box" is the core analytical output, revealing the specific logical or physical constraint that was violated. The two main sections (VirtualHome vs. BEHAVIOR) show that similar error patterns manifest across different simulation platforms.

* **Notable patterns:** "Wrong Order" and "Missing Step" errors highlight deficiencies in **temporal reasoning** and **procedural knowledge**. "Affordance Error" points to a lack of **common-sense physical understanding**. "Additional Step" errors suggest issues with **state tracking**: the agent fails to recognize the current state of the world and performs an action whose effect already holds, making it a no-op.

* **Why it matters:** For researchers and developers, this taxonomy is crucial for debugging and improving AI agents. It shows that robust performance requires not just goal-oriented planning, but also accurate world modeling, state awareness, and an understanding of object affordances. The errors are not random; they are systematic failures in specific cognitive dimensions.
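
To make the state-tracking point concrete, the sketch below replays a trajectory while maintaining the world state and flags steps that change nothing. It is a simplified illustration: the `Step` tuple, the add/remove effect sets, and `find_noops` are assumptions made for this example, and the elided middle of the cabinet trajectory is simply omitted.

```python
from typing import List, Set, Tuple

Fact = Tuple[str, str]                    # (predicate, object id)
Step = Tuple[str, Set[Fact], Set[Fact]]   # (label, facts added, facts removed)


def find_noops(initial: Set[Fact], steps: List[Step]) -> List[int]:
    """Return indices of steps that leave the tracked state unchanged."""
    state = set(initial)
    noops: List[int] = []
    for index, (_label, added, removed) in enumerate(steps):
        if added <= state and not (removed & state):
            noops.append(index)
        state = (state - removed) | added
    return noops


is_open = {("open", "top_cabinet_27")}
cabinet_steps: List[Step] = [
    ("OPEN(top_cabinet_27)", is_open, set()),
    # steps elided in the source ("...") are not reconstructed here
    ("OPEN(top_cabinet_27)", is_open, set()),
]
print(find_noops(set(), cabinet_steps))  # [1]

standing = {("stand_up", "character.45")}
print(find_noops(standing, [("STANDUP()", standing, set())]))  # [0]
```

An agent that maintained such a state internally could skip the second `OPEN` and the redundant `STANDUP` before emitting them.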
