## Heatmap: Average JS Divergence Across Layers and Components

### Overview

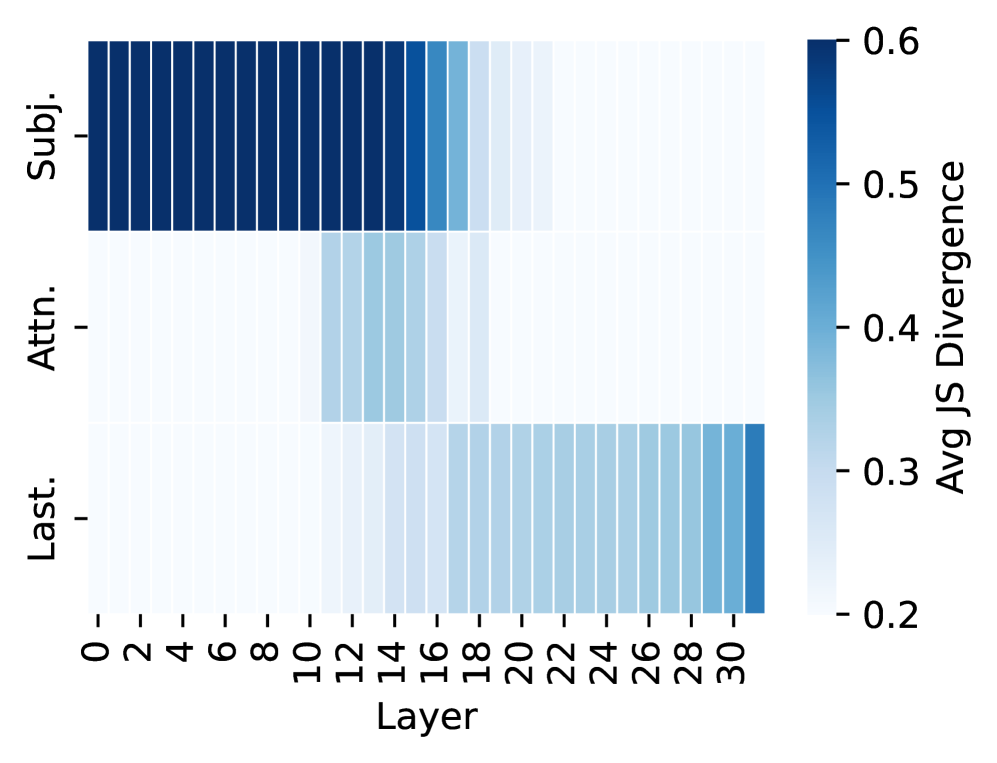

The image is a heatmap visualizing average JS divergence values across three components ("Subj.", "Attn.", "Last.") and 31 layers (0–30). The color intensity ranges from light blue (low divergence, ~0.2) to dark blue (high divergence, ~0.6), with a vertical colorbar on the right.

---

### Components/Axes

- **X-axis (Layer)**: Labeled "Layer," with integer values from 0 to 30.

- **Y-axis (Components)**: Three categories:

  - "Subj." (Subject)

  - "Attn." (Attention)

  - "Last." (Last)

- **Colorbar**: Vertical legend on the right, labeled "Avg JS Divergence," with values from 0.2 (light blue) to 0.6 (dark blue).
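For readers unfamiliar with the colorbar quantity, the following is a minimal sketch of how a Jensen-Shannon (JS) divergence between two discrete distributions might be computed and then averaged. The figure does not say which distributions were compared or which logarithm base was used, so the base-2 logarithm and the function names `kl_divergence`, `js_divergence`, and `average_js` are assumptions made for illustration only. With base 2 the value lies in [0, 1]; with the natural logarithm the upper bound is ln 2 ≈ 0.693.

```python
import math


def kl_divergence(p, q):
    """KL(p || q) in bits. Terms where p_i == 0 contribute nothing."""
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def js_divergence(p, q):
    """Jensen-Shannon divergence between two discrete distributions.

    Assumes p and q are sequences of probabilities over the same support,
    each summing to 1. Log base 2 is an assumption, not taken from the figure.
    """
    if len(p) != len(q):
        raise ValueError("p and q must have the same length")
    # Mixture distribution; m_i > 0 wherever p_i > 0 or q_i > 0,
    # so the KL terms below never divide by zero.
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * kl_divergence(p, m) + 0.5 * kl_divergence(q, m)


def average_js(pairs):
    """Mean JS divergence over an iterable of (p, q) pairs."""
    values = [js_divergence(p, q) for p, q in pairs]
    return sum(values) / len(values)
```

Under these assumptions, each cell of the heatmap would correspond to one call to `average_js` for a given component and layer.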

---

### Detailed Analysis

1. **Subj. (Subject)**:

   - Dark blue cells dominate layers 0–15, indicating high divergence (~0.5–0.6).

   - Gradual lightening from layer 16 onward, dropping to ~0.3–0.4 by layer 30.

2. **Attn. (Attention)**:

   - Light blue (low divergence, ~0.2–0.3) in layers 0–14.

   - Medium blue peak (~0.4–0.5) at layer 15.

   - Returns to light blue in layers 16–30.

3. **Last. (Last)**:

   - Light blue (low divergence, ~0.2–0.3) in layers 0–19.

   - Gradual darkening from layer 20 onward, reaching ~0.4–0.5 by layer 30.

---

### Key Observations

- **Highest divergence**: "Subj." in early layers (0–15) with values near 0.6.

- **Moderate divergence**: "Attn." at layer 15 (~0.4–0.5) and "Last." in later layers (20–30, ~0.4–0.5).

- **Low divergence**: The remaining cells are light blue (~0.2–0.3), namely "Attn." outside layer 15 and "Last." in layers 0–19.
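If the underlying values were available as a grid with one row per component and one column per layer, observations like these could be checked programmatically. The sketch below is only an illustration: `peak_per_component` and `layers_above` are hypothetical helpers, and the toy grid in the usage example is invented to show the call pattern. It is not data read from the figure.

```python
def peak_per_component(grid, labels):
    """Return {label: (layer_index, value)} for the largest value in each row.

    `grid` is a list of rows (one per component), each a list of per-layer
    values. If a row has ties, the earliest layer is reported.
    """
    peaks = {}
    for label, row in zip(labels, grid):
        layer = max(range(len(row)), key=row.__getitem__)
        peaks[label] = (layer, row[layer])
    return peaks


def layers_above(row, threshold):
    """Return the layer indices whose value is at least `threshold`."""
    return [i for i, value in enumerate(row) if value >= threshold]


if __name__ == "__main__":
    # Toy 3 x 4 grid for illustration only; NOT the values from the heatmap.
    labels = ["Subj.", "Attn.", "Last."]
    toy_grid = [
        [0.6, 0.5, 0.4, 0.3],
        [0.2, 0.5, 0.2, 0.2],
        [0.2, 0.2, 0.3, 0.5],
    ]
    print(peak_per_component(toy_grid, labels))
    print(layers_above(toy_grid[0], 0.5))
```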

---

### Interpretation

The heatmap suggests that:

1. **Early layers (0–15)** show the highest "Subj." (subject-related) divergence, possibly indicating strong subject-specific processing in the initial model layers.

2. **Layer 15** stands out for "Attn." divergence, hinting at a specialized attention mechanism at this depth.

3. **Later layers (20–30)** show increasing divergence for "Last.," suggesting cumulative or sequential processing in deeper layers.

4. The overall pattern implies that divergence is not uniformly distributed across layers or components; the distinct peaks may correspond to specific tasks or mechanisms, although the heatmap alone cannot confirm this.

This could reflect architectural design choices in a neural network, where certain layers are optimized for specific functions (e.g., subject identification, attention weighting, or final output processing).