# Technical Analysis of AI Model Performance Chart

## Chart Overview

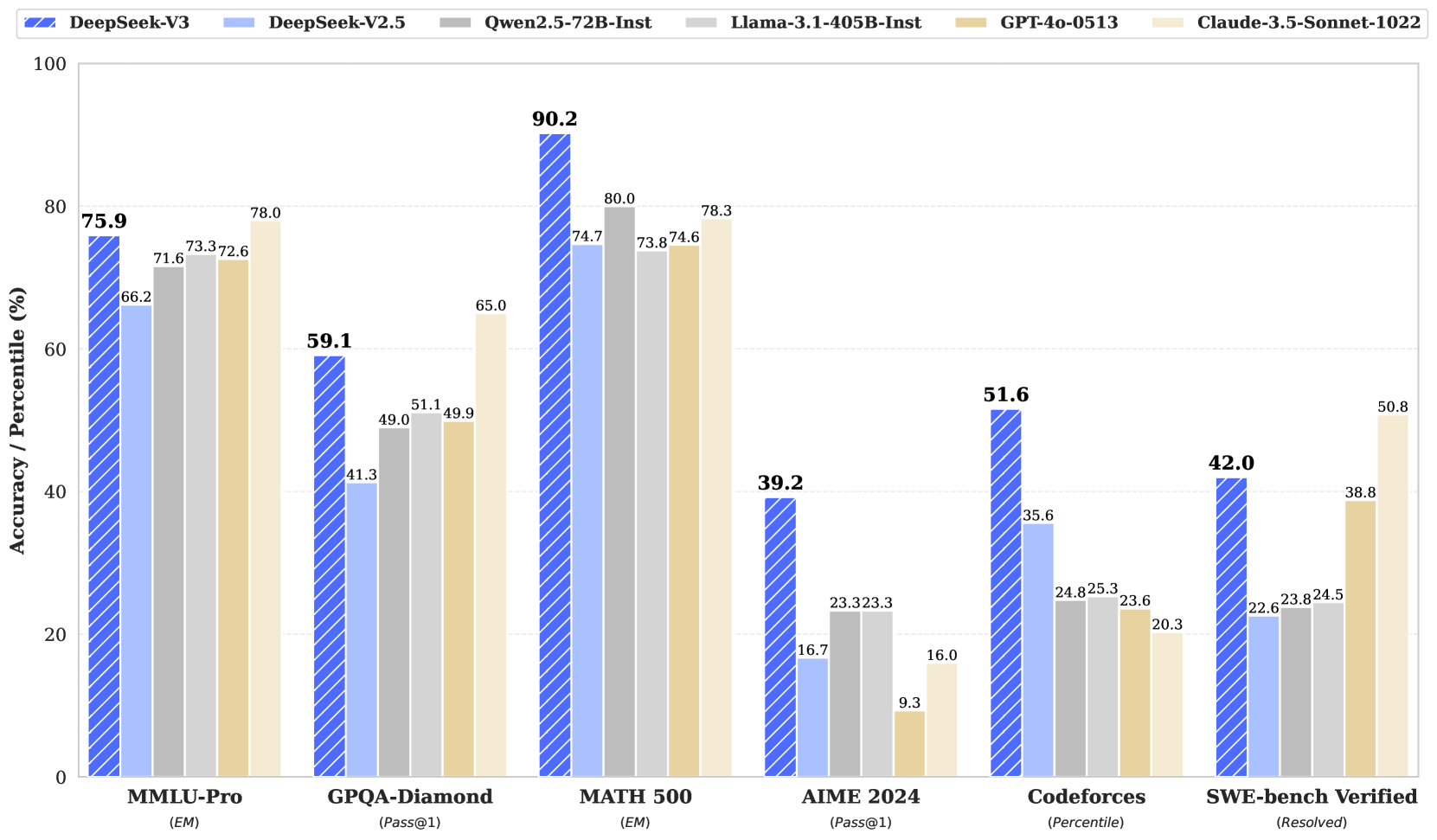

The image is a grouped bar chart comparing the accuracy/percentile performance of six AI models across six benchmarks. The chart uses distinct colors and patterns to differentiate models, with a legend positioned at the top.

### Legend Details

- **DeepSeek-V3**: Blue with diagonal stripes

- **DeepSeek-V2.5**: Light blue

- **Qwen2.5-72B-Instruct**: Gray

- **Llama-3.1-405B-Instruct**: Dark gray

- **GPT-4o-0513**: Tan

- **Claude-3.5-Sonnet-1022**: Light tan

### Axes

- **Y-axis**: "Accuracy / Percentile (%)" (0–100 scale)

- **X-axis**: Benchmarks (categorical labels)

## Benchmark Performance Analysis

### 1. MMLU-Pro (EM)

- **DeepSeek-V3**: 75.9% (highest)

- **DeepSeek-V2.5**: 66.2%

- **Qwen2.5-72B-Instruct**: 71.6%

- **Llama-3.1-405B-Instruct**: 73.3%

- **GPT-4o-0513**: 72.6%

- **Claude-3.5-Sonnet-1022**: 78.0% (highest)

### 2. GPQA-Diamond (Pass@1)

- **DeepSeek-V3**: 59.1%

- **DeepSeek-V2.5**: 41.3%

- **Qwen2.5-72B-Instruct**: 49.0%

- **Llama-3.1-405B-Instruct**: 51.1%

- **GPT-4o-0513**: 49.9%

- **Claude-3.5-Sonnet-1022**: 65.0% (highest)

### 3. MATH 500 (EM)

- **DeepSeek-V3**: 90.2% (highest)

- **DeepSeek-V2.5**: 74.7%

- **Qwen2.5-72B-Instruct**: 80.0%

- **Llama-3.1-405B-Instruct**: 73.8%

- **GPT-4o-0513**: 74.6%

- **Claude-3.5-Sonnet-1022**: 78.3% (highest)

### 4. AIME 2024 (Pass@1)

- **DeepSeek-V3**: 39.2%

- **DeepSeek-V2.5**: 16.7%

- **Qwen2.5-72B-Instruct**: 23.3%

- **Llama-3.1-405B-Instruct**: 23.3%

- **GPT-4o-0513**: 9.3% (lowest)

- **Claude-3.5-Sonnet-1022**: 16.0%

### 5. Codeforces (Percentile)

- **DeepSeek-V3**: 51.6%

- **DeepSeek-V2.5**: 35.6%

- **Qwen2.5-72B-Instruct**: 24.8%

- **Llama-3.1-405B-Instruct**: 25.3%

- **GPT-4o-0513**: 23.6%

- **Claude-3.5-Sonnet-1022**: 20.3% (lowest)

### 6. SWE-bench Verified (Resolved)

- **DeepSeek-V3**: 42.0%

- **DeepSeek-V2.5**: 22.6%

- **Qwen2.5-72B-Instruct**: 23.8%

- **Llama-3.1-405B-Instruct**: 24.5%

- **GPT-4o-0513**: 38.8%

- **Claude-3.5-Sonnet-1022**: 50.8% (highest)

## Key Trends

1. **DeepSeek-V3** consistently outperforms other models in most benchmarks, particularly in MATH 500 (90.2%) and MMLU-Pro (75.9%).

2. **Claude-3.5-Sonnet-1022** shows strong performance in MMLU-Pro (78.0%) and SWE-bench Verified (50.8%).

3. **GPT-4o-0513** underperforms in AIME 2024 (9.3%) but excels in SWE-bench Verified (38.8%).

4. **DeepSeek-V2.5** has the lowest scores in AIME 2024 (16.7%) and SWE-bench Verified (22.6%).

5. **Llama-3.1-405B-Instruct** maintains mid-range performance across all benchmarks.

## Spatial Grounding & Validation

- Legend colors/patterns match bar colors exactly (e.g., diagonal stripes for DeepSeek-V3).

- All data points align with legend labels (e.g., 90.2% for DeepSeek-V3 in MATH 500 corresponds to the blue-striped bar).

## Component Isolation

- **Header**: Legend with model identifiers

- **Main Chart**: Grouped bars for each benchmark

- **Footer**: No additional text or data

## Data Table Reconstruction

| Benchmark | DeepSeek-V3 | DeepSeek-V2.5 | Qwen2.5-72B-Instruct | Llama-3.1-405B-Instruct | GPT-4o-0513 | Claude-3.5-Sonnet-1022 |

|--------------------|-------------|---------------|----------------------|-------------------------|-------------|------------------------|

| MMLU-Pro (EM) | 75.9 | 66.2 | 71.6 | 73.3 | 72.6 | 78.0 |

| GPQA-Diamond (Pass@1) | 59.1 | 41.3 | 49.0 | 51.1 | 49.9 | 65.0 |

| MATH 500 (EM) | 90.2 | 74.7 | 80.0 | 73.8 | 74.6 | 78.3 |

| AIME 2024 (Pass@1) | 39.2 | 16.7 | 23.3 | 23.3 | 9.3 | 16.0 |

| Codeforces (Percentile) | 51.6 | 35.6 | 24.8 | 25.3 | 23.6 | 20.3 |

| SWE-bench Verified | 42.0 | 22.6 | 23.8 | 24.5 | 38.8 | 50.8 |

## Conclusion

The chart reveals significant performance disparities across models and benchmarks. DeepSeek-V3 dominates in technical reasoning (MATH 500), while Claude-3.5-Sonnet-1022 excels in general knowledge (MMLU-Pro) and software engineering tasks (SWE-bench). GPT-4o-0513 shows notable weaknesses in AIME 2024, suggesting potential limitations in mathematical problem-solving.