## Line Chart: Accuracy vs. Completion Tokens for Four Methods

### Overview

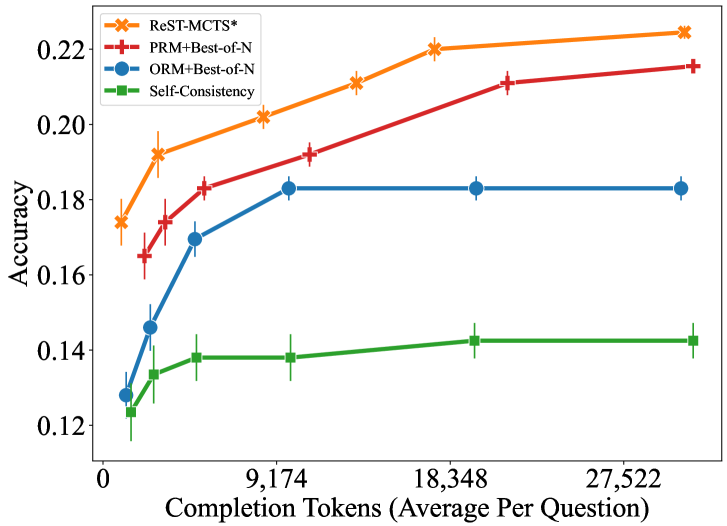

The image is a line chart comparing the performance of four methods. It plots "Accuracy" on the vertical axis against "Completion Tokens (Average Per Question)" on the horizontal axis, showing how each method's accuracy changes as the average number of tokens used per question increases. All four methods gain accuracy at first; two of them then level off at different accuracy values, while the other two keep improving across the plotted range.

### Components/Axes

* **Chart Type:** Line chart with error bars.

* **X-Axis (Horizontal):**

  * **Label:** `Completion Tokens (Average Per Question)`

  * **Scale:** Linear scale.

  * **Major Tick Marks:** 0, 9,174, 18,348, 27,522.

* **Y-Axis (Vertical):**

  * **Label:** `Accuracy`

  * **Scale:** Linear scale.

  * **Range:** Approximately 0.12 to 0.22.

  * **Major Tick Marks:** 0.12, 0.14, 0.16, 0.18, 0.20, 0.22.

* **Legend (Top-Left Corner):**

  * **Position:** Located in the upper-left quadrant of the plot area.

  * **Items (from top to bottom):**

    1. `ReST-MCTS*` - Orange line with star (`*`) markers.

    2. `PRM+Best-of-N` - Red line with plus (`+`) markers.

    3. `ORM+Best-of-N` - Blue line with circle (`o`) markers.

    4. `Self-Consistency` - Green line with square (`s`) markers.

### Detailed Analysis

**Trend Verification & Data Points (Approximate):**

1. **ReST-MCTS\* (Orange, Star Markers):**

   * **Trend:** Starts well above the other methods and achieves the highest overall accuracy. The curve keeps rising across the entire range of tokens, although the gains shrink at higher token counts.

   * **Approximate Points:**

     * At ~1,000 tokens: Accuracy ≈ 0.175

     * At ~4,500 tokens: Accuracy ≈ 0.192

     * At ~9,174 tokens: Accuracy ≈ 0.202

     * At ~13,000 tokens: Accuracy ≈ 0.210

     * At ~18,348 tokens: Accuracy ≈ 0.220

     * At ~27,522 tokens: Accuracy ≈ 0.225

2. **PRM+Best-of-N (Red, Plus Markers):**

   * **Trend:** Rises sharply initially, then continues to increase at a slower, steady rate. It is consistently the second-best performing method.

   * **Approximate Points:**

     * At ~2,000 tokens: Accuracy ≈ 0.165

     * At ~4,500 tokens: Accuracy ≈ 0.175

     * At ~7,000 tokens: Accuracy ≈ 0.183

     * At ~11,000 tokens: Accuracy ≈ 0.192

     * At ~18,348 tokens: Accuracy ≈ 0.210

     * At ~27,522 tokens: Accuracy ≈ 0.215

3. **ORM+Best-of-N (Blue, Circle Markers):**

   * **Trend:** Shows the steepest early rise of the four curves, then plateaus after approximately 9,000 tokens, with no visible further gain in accuracy from more tokens.

   * **Approximate Points:**

     * At ~1,000 tokens: Accuracy ≈ 0.128

     * At ~3,000 tokens: Accuracy ≈ 0.146

     * At ~6,000 tokens: Accuracy ≈ 0.170

     * At ~9,174 tokens: Accuracy ≈ 0.183

     * At ~18,348 tokens: Accuracy ≈ 0.183 (plateau)

     * At ~27,522 tokens: Accuracy ≈ 0.183 (plateau)

4. **Self-Consistency (Green, Square Markers):**

   * **Trend:** Shows the lowest accuracy overall. It has a modest initial increase and then largely levels off after roughly 6,000 tokens, with only a slight further gain (≈ 0.138 to ≈ 0.142). The pattern resembles ORM+Best-of-N but at a lower accuracy value.

   * **Approximate Points:**

     * At ~1,000 tokens: Accuracy ≈ 0.123

     * At ~3,000 tokens: Accuracy ≈ 0.133

     * At ~6,000 tokens: Accuracy ≈ 0.138

     * At ~9,174 tokens: Accuracy ≈ 0.138 (unchanged from ~6,000 tokens)

     * At ~18,348 tokens: Accuracy ≈ 0.142

     * At ~27,522 tokens: Accuracy ≈ 0.142 (plateau)

**Error Bars:** All data points include vertical error bars, indicating variability or confidence intervals in the accuracy measurements. The size of the error bars appears relatively consistent for each method across the x-axis.
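To make the trend descriptions easier to check, the approximate readings listed above can be collected into a small data structure. The following is only a sketch: the values are eyeballed from the chart, and the names `CURVES`, `segment_slopes`, and `late_gain` are hypothetical helpers introduced here for illustration.

```python
# Approximate (tokens, accuracy) readings transcribed from the lists above.
# These are eyeballed values from the chart, not exact measurements.
CURVES = {
    "ReST-MCTS*": [(1000, 0.175), (4500, 0.192), (9174, 0.202),
                   (13000, 0.210), (18348, 0.220), (27522, 0.225)],
    "PRM+Best-of-N": [(2000, 0.165), (4500, 0.175), (7000, 0.183),
                      (11000, 0.192), (18348, 0.210), (27522, 0.215)],
    "ORM+Best-of-N": [(1000, 0.128), (3000, 0.146), (6000, 0.170),
                      (9174, 0.183), (18348, 0.183), (27522, 0.183)],
    "Self-Consistency": [(1000, 0.123), (3000, 0.133), (6000, 0.138),
                         (9174, 0.138), (18348, 0.142), (27522, 0.142)],
}


def segment_slopes(points):
    """Accuracy gain per 1,000 tokens between consecutive readings."""
    return [
        (acc1 - acc0) / (tok1 - tok0) * 1000
        for (tok0, acc0), (tok1, acc1) in zip(points, points[1:])
    ]


def late_gain(points, start_tokens=9174):
    """Accuracy gained between the first reading at or after
    `start_tokens` and the last reading of the curve."""
    start_acc = next(acc for tok, acc in points if tok >= start_tokens)
    return points[-1][1] - start_acc


for name, points in CURVES.items():
    slopes = ", ".join(f"{s:+.4f}" for s in segment_slopes(points))
    print(f"{name:<17} late gain {late_gain(points):+.3f} | slopes: {slopes}")
```

With these readings, the late gain (from roughly 9,000 tokens onward) is clearly positive for `ReST-MCTS*` and `PRM+Best-of-N`, zero for `ORM+Best-of-N`, and very small for `Self-Consistency`. Because the inputs are approximate, the slopes should be read as rough indications only.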

### Key Observations

1. **Performance Hierarchy:** There is a clear and consistent performance ranking across the entire range of completion tokens: `ReST-MCTS*` > `PRM+Best-of-N` > `ORM+Best-of-N` > `Self-Consistency`.

2. **Scaling Behavior:** Two distinct scaling patterns are visible:

   * **Continuous Improvement:** `ReST-MCTS*` and `PRM+Best-of-N` continue to gain accuracy as more tokens are allocated per question.

   * **Early Plateau:** `ORM+Best-of-N` and `Self-Consistency` level off relatively early (by roughly 6,000 to 9,000 tokens) and gain little or nothing from additional tokens.

3. **Accuracy Gap:** At the highest token count (~27,522), the top method (`ReST-MCTS*`, ≈ 0.225) is approximately 0.083 higher in absolute accuracy than the lowest method (`Self-Consistency`, ≈ 0.142), which is about 58% higher in relative terms.
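The gap between the best and worst method at the highest token count can be reproduced with a few lines of arithmetic. This is a minimal sketch using the approximate readings at ~27,522 tokens; the dictionary name `final_accuracy` is introduced here purely for illustration.

```python
# Approximate accuracies at the highest token count (~27,522),
# as read off the chart (not exact measurements).
final_accuracy = {
    "ReST-MCTS*": 0.225,
    "PRM+Best-of-N": 0.215,
    "ORM+Best-of-N": 0.183,
    "Self-Consistency": 0.142,
}

best = max(final_accuracy, key=final_accuracy.get)
worst = min(final_accuracy, key=final_accuracy.get)

# Absolute gap in accuracy, and the same gap relative to the worst method.
absolute_gap = final_accuracy[best] - final_accuracy[worst]
relative_gap = absolute_gap / final_accuracy[worst]

# Methods ordered from highest to lowest final accuracy.
ranking = sorted(final_accuracy, key=final_accuracy.get, reverse=True)

print(f"{best} vs {worst}: +{absolute_gap:.3f} absolute, +{relative_gap:.0%} relative")
print(" > ".join(ranking))
```

The relative figure divides the absolute gap by the lower accuracy (0.083 / 0.142), so it expresses how much higher the top method is compared with the bottom one.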

### Interpretation

This chart likely comes from a research paper evaluating different reasoning or generation strategies for large language models (LLMs). The "Completion Tokens" axis represents the computational cost or verbosity of the model's output.

* **What the data suggests:** The `ReST-MCTS*` method is the most effective and scalable approach among those tested. Its name suggests it combines reinforced self-training (`ReST`) with Monte Carlo Tree Search (`MCTS`), a tree-based search and planning algorithm. This combination appears to let the model use additional computational budget (tokens) more effectively to improve its final answer accuracy.

* **Why it matters:** The plateauing of `ORM+Best-of-N` and `Self-Consistency` suggests that, under the tested conditions, these strategies gain little from simply generating more text or drawing more samples. In contrast, the continued rise of `ReST-MCTS*` implies that its underlying mechanism (likely iterative planning or refinement) can turn extra compute into better results, making it a more promising direction for scaling performance.

* **Notable Anomaly:** The `ORM+Best-of-N` line appears flat after ~9,174 tokens, within the precision of the readings. This is a strong visual signal that the method stops improving under the tested conditions, which is relevant to deciding how many tokens to spend per question in practical applications.
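For readers unfamiliar with the sampling-based baselines, the sketch below illustrates the two selection rules under their commonly used definitions: self-consistency takes a majority vote over sampled answers, and Best-of-N returns the sample that a reward model scores highest. This is an assumption about how the chart's baselines work, not a description of the source's actual implementation. The answers and scores are made-up toy values, and the function names are hypothetical. The step-level scoring of a PRM and the tree search of `ReST-MCTS*` are not covered.

```python
from collections import Counter
from typing import Callable, Sequence


def self_consistency(answers: Sequence[str]) -> str:
    """Return the most frequent final answer among the sampled completions."""
    return Counter(answers).most_common(1)[0][0]


def best_of_n(answers: Sequence[str], score: Callable[[str], float]) -> str:
    """Return the sampled answer that the scoring function rates highest."""
    return max(answers, key=score)


# Toy data: five sampled final answers and made-up reward scores.
samples = ["42", "41", "42", "40", "42"]
toy_scores = {"42": 0.61, "41": 0.74, "40": 0.20}

majority = self_consistency(samples)
top_scored = best_of_n(samples, lambda answer: toy_scores[answer])

print("Self-consistency picks:", majority)
print("Best-of-N picks:", top_scored)
```

In this toy case the two rules disagree: the majority answer differs from the answer with the highest score. Both rules can only select among the answers that were sampled, which is one plausible reason extra samples stop helping once the candidate pool no longer changes the selected answer.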