## Scatter Plot: Model Performance by Problem Size and Reasoning Tokens

### Overview

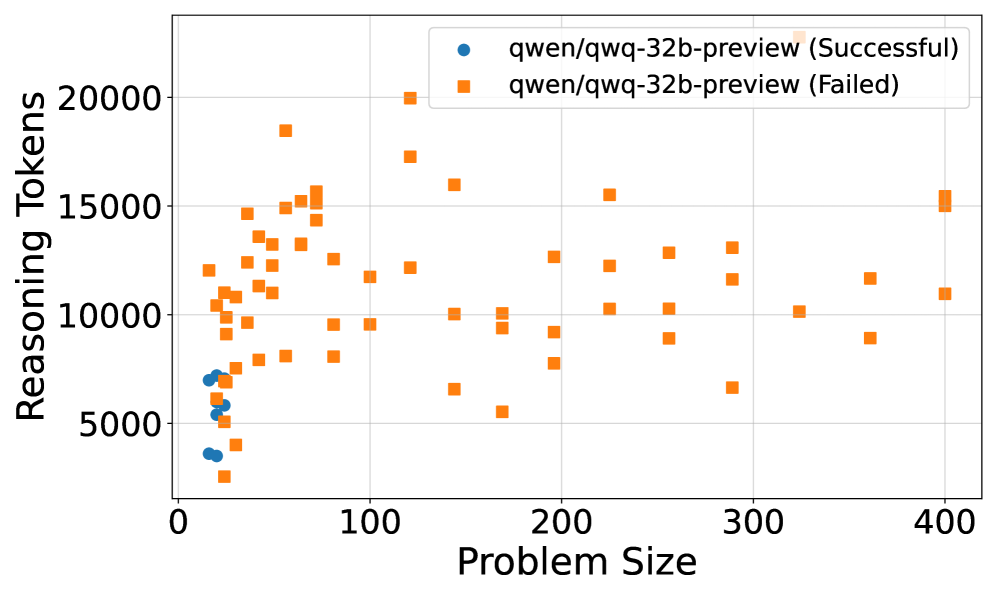

This image is a scatter plot of successful and failed attempts by a single AI model, `qwen/qwq-32b-preview`, positioned by "Problem Size" (x-axis) and "Reasoning Tokens" (y-axis). It relates the complexity of a task (problem size) to the computational effort expended (reasoning tokens), with each point marked by whether the model's attempt succeeded or failed.

### Components/Axes

* **X-Axis (Horizontal):** Labeled "Problem Size". The scale runs from 0 to 400, with major tick marks at 0, 100, 200, 300, and 400.

* **Y-Axis (Vertical):** Labeled "Reasoning Tokens". The scale runs from 0 to 20,000, with major tick marks at 0, 5000, 10000, 15000, and 20000.

* **Legend:** Located in the top-right corner of the plot area.

    * **Blue Circle (●):** `qwen/qwq-32b-preview (Successful)`

    * **Orange Square (■):** `qwen/qwq-32b-preview (Failed)`

* **Grid:** A light gray grid is present, aiding in the estimation of data point coordinates.

### Detailed Analysis

The data is segmented into two distinct series based on the legend.

**1. Successful Attempts (Blue Circles):**

* **Trend & Placement:** These points form a tight cluster exclusively in the lower-left quadrant of the chart.

* **Data Points:** All successful attempts are confined to a narrow range.

    * **Problem Size:** Approximately between 10 and 30.

    * **Reasoning Tokens:** Approximately between 3,500 and 7,500.

* **Observation:** There is a positive correlation within this small cluster; as problem size increases slightly, the reasoning tokens used also increase. No successful attempts are recorded for problem sizes greater than ~30.

**2. Failed Attempts (Orange Squares):**

* **Trend & Placement:** These points are widely dispersed across the entire chart area, showing no single tight cluster.

* **Data Points:** They span nearly the full range of both axes.

    * **Problem Size:** From as low as ~15 to the maximum shown, 400.

    * **Reasoning Tokens:** From as low as ~2,500 to the maximum shown, ~20,000.

* **Distribution:**

    * A dense concentration exists for lower problem sizes (0-100), where token counts vary dramatically from ~2,500 to ~18,000.

    * For larger problem sizes (100-400), the points are sparser but still show high variability in token usage, ranging from ~5,000 to over 15,000.

    * The highest token count (≈20,000) is associated with a failed attempt at a problem size of approximately 120.
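
The per-series ranges quoted above can be derived mechanically from the underlying run data. The following is a minimal sketch, assuming each attempt is available as a record of problem size, reasoning tokens, and outcome. The `Run` type, the `series_ranges` helper, and the sample values are hypothetical placeholders, not readings taken from the plot.

```python
from typing import NamedTuple


class Run(NamedTuple):
    """One evaluation attempt (hypothetical record layout)."""
    problem_size: int
    reasoning_tokens: int
    succeeded: bool


def series_ranges(runs):
    """Return, per outcome, the (min, max) of problem size and reasoning tokens."""
    ranges = {}
    for outcome in (True, False):
        subset = [r for r in runs if r.succeeded == outcome]
        if not subset:
            continue  # no runs with this outcome, so no range to report
        sizes = [r.problem_size for r in subset]
        tokens = [r.reasoning_tokens for r in subset]
        ranges[outcome] = {
            "size": (min(sizes), max(sizes)),
            "tokens": (min(tokens), max(tokens)),
        }
    return ranges


# Placeholder data for illustration only; not values read from the chart.
sample_runs = [
    Run(10, 3_600, True),
    Run(25, 7_200, True),
    Run(15, 2_500, False),
    Run(120, 20_000, False),
    Run(400, 9_000, False),
]

for outcome, r in series_ranges(sample_runs).items():
    label = "Successful" if outcome else "Failed"
    print(f"{label}: size {r['size']}, tokens {r['tokens']}")
```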

### Key Observations

1. **Clear Performance Boundary:** Successful outcomes are strictly limited to very small problem sizes (< ~30) and moderate token usage (< ~7,500). The separation is stark but not fully binary, because failures also occur at these small problem sizes.

2. **Failure Across All Scales:** Failures occur across the entire spectrum of problem sizes, from small to large.

3. **Inefficiency in Failure:** Many failed attempts, especially at lower problem sizes, consume significantly more reasoning tokens (e.g., 10,000-18,000) than any successful attempt. This suggests a pattern of "spinning wheels" or inefficient reasoning on tasks that are ultimately not solved.

4. **No Success at Scale:** The complete absence of blue circles beyond a problem size of ~30 indicates a potential hard limit or severe degradation in the model's capability to successfully solve larger problems within this evaluation.
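
Observations 1, 3, and 4 reduce to two numbers: the largest problem size with at least one success, and the share of failed attempts that consumed more tokens than the most expensive success. The sketch below shows one way to compute both, assuming runs are stored as `(problem_size, reasoning_tokens, succeeded)` tuples. That layout, both helper names, and the sample values are illustrative assumptions.

```python
def success_boundary(runs):
    """Largest problem size with at least one success, or None if nothing succeeded."""
    solved = [size for size, _tokens, ok in runs if ok]
    return max(solved) if solved else None


def costly_failure_share(runs):
    """Fraction of failed runs that used more tokens than every successful run."""
    success_tokens = [tokens for _size, tokens, ok in runs if ok]
    failed_tokens = [tokens for _size, tokens, ok in runs if not ok]
    if not success_tokens or not failed_tokens:
        return None  # undefined unless both outcomes are present
    ceiling = max(success_tokens)
    return sum(t > ceiling for t in failed_tokens) / len(failed_tokens)


# Placeholder tuples for illustration only; not values read from the chart.
runs = [
    (10, 3_600, True),
    (25, 7_200, True),
    (15, 2_500, False),
    (40, 12_000, False),
    (120, 20_000, False),
    (400, 9_000, False),
]

print("Largest solved problem size:", success_boundary(runs))
print("Share of failures costlier than any success:", costly_failure_share(runs))
```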

### Interpretation

This chart suggests a critical performance limitation for the `qwen/qwq-32b-preview` model on the evaluated task suite.

* **Capability Ceiling:** The model appears capable of solving only the simplest problems (small "Problem Size"). Its success is not just less likely but *non-existent* for more complex tasks in this dataset.

* **Resource Misallocation:** The high token counts for many failures suggest that the model often engages in extensive, yet unproductive, reasoning on attempts that ultimately fail. This is particularly notable for small problems where it fails: these attempts can use roughly 10,000-18,000 tokens, about 2-3 times the 3,500-7,500 tokens of a successful run.

* **Diagnostic Value:** The plot is useful as a diagnostic. It shows not just *that* the model fails on hard problems, but *how* it fails: often after expending considerable computational effort. This points to potential issues in the model's reasoning strategy, its ability to recognize dead ends, or a fundamental mismatch between its training and the nature of larger problems in this domain.

* **Actionable Insight:** To improve performance, focus should be on either: 1) Enhancing the model's core reasoning capability to handle larger problem sizes, or 2) Implementing better early-stopping or confidence-calibration mechanisms to prevent the wasteful expenditure of tokens on attempts that are unlikely to succeed.
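
The early-stopping option can be assessed offline before changing anything about the model: choose a token budget, then check how many tokens a hard cutoff would have saved and whether it would have truncated any successful run. The following is a minimal sketch under the same assumption of `(problem_size, reasoning_tokens, succeeded)` tuples. The `evaluate_budget` helper, the sample values, and the 8,000-token budget are hypothetical choices for illustration, not recommendations derived from the plot.

```python
def evaluate_budget(runs, budget):
    """Simulate a hard token cutoff on recorded runs.

    Returns (tokens_saved, successes_lost): the tokens that would not have
    been generated, and the number of successful runs that exceeded the
    budget and would therefore have been cut off.
    """
    tokens_saved = 0
    successes_lost = 0
    for _size, tokens, ok in runs:
        if tokens > budget:
            tokens_saved += tokens - budget
            if ok:
                successes_lost += 1
    return tokens_saved, successes_lost


# Placeholder tuples for illustration only; not values read from the chart.
runs = [
    (10, 3_600, True),
    (25, 7_200, True),
    (15, 2_500, False),
    (40, 12_000, False),
    (120, 20_000, False),
    (400, 9_000, False),
]

saved, lost = evaluate_budget(runs, budget=8_000)
print(f"Tokens saved: {saved}, successful runs cut off: {lost}")
```

In this plot, a budget set slightly above the highest token count of any success (about 7,500) would leave every observed success untouched while capping the most expensive failures. Whether that holds on other task suites is an open question.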