TECHNICAL ASSET FINGERPRINT

fda69372e351cfba2bdaa95e

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

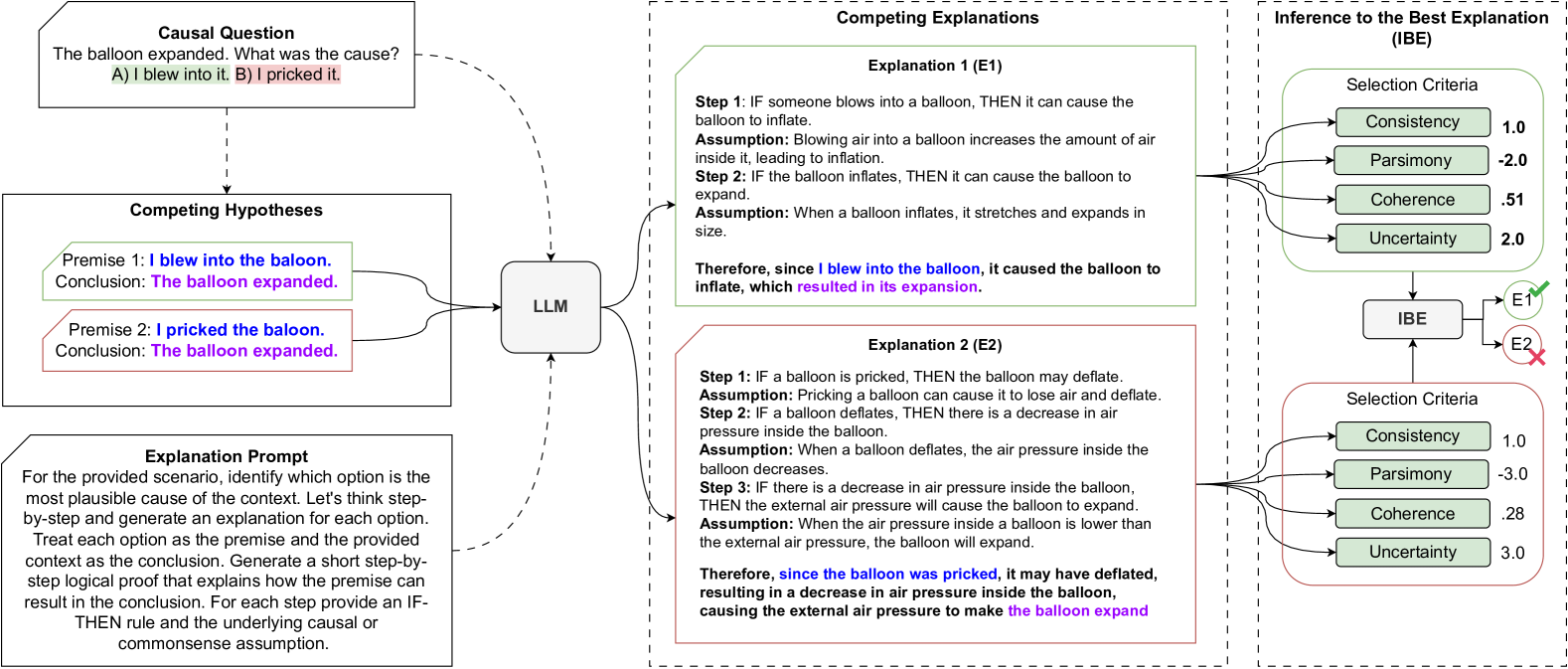

## Causal Reasoning Diagram: Balloon Expansion

### Overview

The image presents a diagram illustrating a causal reasoning process, specifically focusing on determining the cause of a balloon's expansion. It outlines competing hypotheses, explanations generated by a Large Language Model (LLM), and an Inference to the Best Explanation (IBE) framework to evaluate these explanations.

### Components/Axes

The diagram is divided into six components:

1. **Causal Question:** Poses the problem: "The balloon expanded. What was the cause? A) I blew into it. B) I pricked it."

2. **Competing Hypotheses:** Presents two premises:

* Premise 1: "I blew into the balloon. Conclusion: The balloon expanded." (highlighted in green)

* Premise 2: "I pricked the balloon. Conclusion: The balloon expanded." (highlighted in red)

3. **Explanation Prompt:** Provides instructions for generating explanations for each option, treating each option as a premise and generating a step-by-step logical proof.

4. **LLM:** A central node representing the Large Language Model that processes the hypotheses and generates explanations.

5. **Competing Explanations:** Presents two explanations generated by the LLM:

* Explanation 1 (E1): Based on blowing into the balloon.

* Explanation 2 (E2): Based on pricking the balloon.

6. **Inference to the Best Explanation (IBE):** Evaluates the explanations based on selection criteria.

### Detailed Analysis or Content Details

**1. Causal Question and Competing Hypotheses:**

* The causal question sets up the problem: "The balloon expanded. What was the cause?" with two possible answers: "A) I blew into it" and "B) I pricked it."

* The competing hypotheses frame these answers as premises leading to the conclusion that "The balloon expanded."
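The framing step described above can be sketched in a few lines of Python. This is only an illustration: the `Hypothesis` class and `frame_hypotheses` function are hypothetical names, not part of the diagram, and the sketch assumes each answer option has already been rewritten as a full sentence.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Hypothesis:
    premise: str      # candidate cause, stated as a sentence
    conclusion: str   # the observed effect shared by all hypotheses


def frame_hypotheses(observation: str, options: List[str]) -> List[Hypothesis]:
    """Pair every candidate cause with the same observed conclusion."""
    return [Hypothesis(premise=opt, conclusion=observation) for opt in options]


hypotheses = frame_hypotheses(
    "The balloon expanded.",
    ["I blew into the balloon.", "I pricked the balloon."],
)
for i, h in enumerate(hypotheses, start=1):
    print(f"Premise {i}: {h.premise} Conclusion: {h.conclusion}")
```

Every hypothesis shares one conclusion, which is why the later steps must compare the explanations rather than the outcomes.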

**2. Explanation Prompt:**

* The prompt instructs the system to identify the most plausible cause and generate step-by-step explanations for each option.

* It emphasizes the use of IF-THEN rules and commonsense assumptions.
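As a rough illustration, the prompt could be assembled as shown below. The instruction text follows the wording transcribed elsewhere in this document. The `build_prompt` helper, the `Context:` label, and the overall layout are assumptions made for this sketch; the diagram does not show how the question is attached to the instructions.

```python
from typing import List

# Instruction text as transcribed from the diagram's "Explanation Prompt" box.
EXPLANATION_PROMPT = (
    "For the scenario provided, identify which option is the most plausible "
    "cause of the context. Let's think step-by-step and generate an explanation "
    "for each option. Treat each option as the premise and the provided context "
    "as the conclusion. Generate a short step-by-step logical proof that explains "
    "how the premise can result in the conclusion. For each step provide an "
    "IF-THEN rule and the underlying causal or commonsense assumption."
)


def build_prompt(context: str, options: List[str]) -> str:
    """Hypothetical layout: instructions, then the context, then lettered options."""
    letters = "ABCDEFGHIJKLMNOPQRSTUVWXYZ"
    lines = [EXPLANATION_PROMPT, "", f"Context: {context}"]
    lines += [f"{letters[i]}) {opt}" for i, opt in enumerate(options)]
    return "\n".join(lines)


prompt = build_prompt("The balloon expanded.", ["I blew into it.", "I pricked it."])
print(prompt)
```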

**3. LLM:**

* The LLM is the central processing step: it takes the hypotheses and the prompt as input and generates the explanations.

**4. Competing Explanations:**

* **Explanation 1 (E1):**

* Step 1: "IF someone blows into a balloon, THEN it can cause the balloon to inflate."

* Assumption: "Blowing air into a balloon increases the amount of air inside it, leading to inflation."

* Step 2: "IF the balloon inflates, THEN it can cause the balloon to expand."

* Assumption: "When a balloon inflates, it stretches and expands in size."

* Conclusion: "Therefore, since I blew into the balloon, it caused the balloon to inflate, which resulted in its expansion."

* **Explanation 2 (E2):**

* Step 1: "IF a balloon is pricked, THEN the balloon may deflate."

* Assumption: "Pricking a balloon can cause it to lose air and deflate."

* Step 2: "IF a balloon deflates, THEN there is a decrease in air pressure inside the balloon."

* Assumption: "When a balloon deflates, there is a decrease in air pressure inside the balloon."

* Step 3: "IF there is a decrease in air pressure inside the balloon, THEN the external air pressure will cause the balloon to expand."

* Assumption: "When the air pressure inside a balloon is lower than the external air pressure, the balloon will expand."

* Conclusion: "Therefore, since the balloon was pricked, it may have deflated, resulting in a decrease in air pressure inside the balloon, causing the external air pressure to make the balloon expand."
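One way to hold these explanations in code is a list of steps, each pairing an IF-THEN rule with its assumption. The sketch below is hypothetical: `Step`, `Explanation`, and `parsimony` are names introduced here. The parsimony scores reported in the diagram's IBE panel (-2.0 for E1, -3.0 for E2) match the negated step counts, but treating that as the definition of parsimony is an assumption, not something the diagram states.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Step:
    rule: str        # the IF-THEN rule
    assumption: str  # the commonsense assumption behind the rule


@dataclass
class Explanation:
    name: str
    steps: List[Step] = field(default_factory=list)

    def parsimony(self) -> float:
        # Assumption: parsimony is the negated number of steps,
        # so shorter explanations score higher.
        return -float(len(self.steps))


e1 = Explanation("E1", [
    Step("IF someone blows into a balloon, THEN it can cause the balloon to inflate.",
         "Blowing air into a balloon increases the amount of air inside it, leading to inflation."),
    Step("IF the balloon inflates, THEN it can cause the balloon to expand.",
         "When a balloon inflates, it stretches and expands in size."),
])
e2 = Explanation("E2", [
    Step("IF a balloon is pricked, THEN the balloon may deflate.",
         "Pricking a balloon can cause it to lose air and deflate."),
    Step("IF a balloon deflates, THEN there is a decrease in air pressure inside the balloon.",
         "When a balloon deflates, there is a decrease in air pressure inside the balloon."),
    Step("IF there is a decrease in air pressure inside the balloon, "
         "THEN the external air pressure will cause the balloon to expand.",
         "When the air pressure inside a balloon is lower than the external air pressure, "
         "the balloon will expand."),
])

print(e1.parsimony(), e2.parsimony())  # -2.0 -3.0
```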

**5. Inference to the Best Explanation (IBE):**

* **Explanation 1 (E1) Evaluation:**

* Consistency: 1.0

* Parsimony: -2.0

* Coherence: 0.51

* Uncertainty: 2.0

* Result: E1 is accepted (green checkmark)

* **Explanation 2 (E2) Evaluation:**

* Consistency: 1.0

* Parsimony: -3.0

* Coherence: 0.28

* Uncertainty: 3.0

* Result: E2 is rejected (red X)

### Key Observations

* The diagram illustrates a structured approach to causal reasoning, using an LLM to generate explanations and an IBE framework to evaluate them.

* The selection criteria (Consistency, Parsimony, Coherence, Uncertainty) are used to quantify the quality of each explanation.

* Explanation 1 (blowing into the balloon) is favored over Explanation 2 (pricking the balloon) based on the IBE evaluation.

### Interpretation

The diagram demonstrates a process for automated causal reasoning. The LLM generates explanations based on provided premises, and the IBE framework provides a quantitative method for selecting the best explanation. The specific values assigned to the selection criteria (Consistency, Parsimony, Coherence, Uncertainty) reflect the relative strengths and weaknesses of each explanation. In this case, the "blowing into the balloon" explanation is deemed more plausible than the "pricking the balloon" explanation, suggesting that the LLM and IBE framework favor the former as the cause of the balloon's expansion. The negative parsimony values indicate a penalty for complexity in the explanations. The lower coherence and higher uncertainty for Explanation 2 contribute to its rejection.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

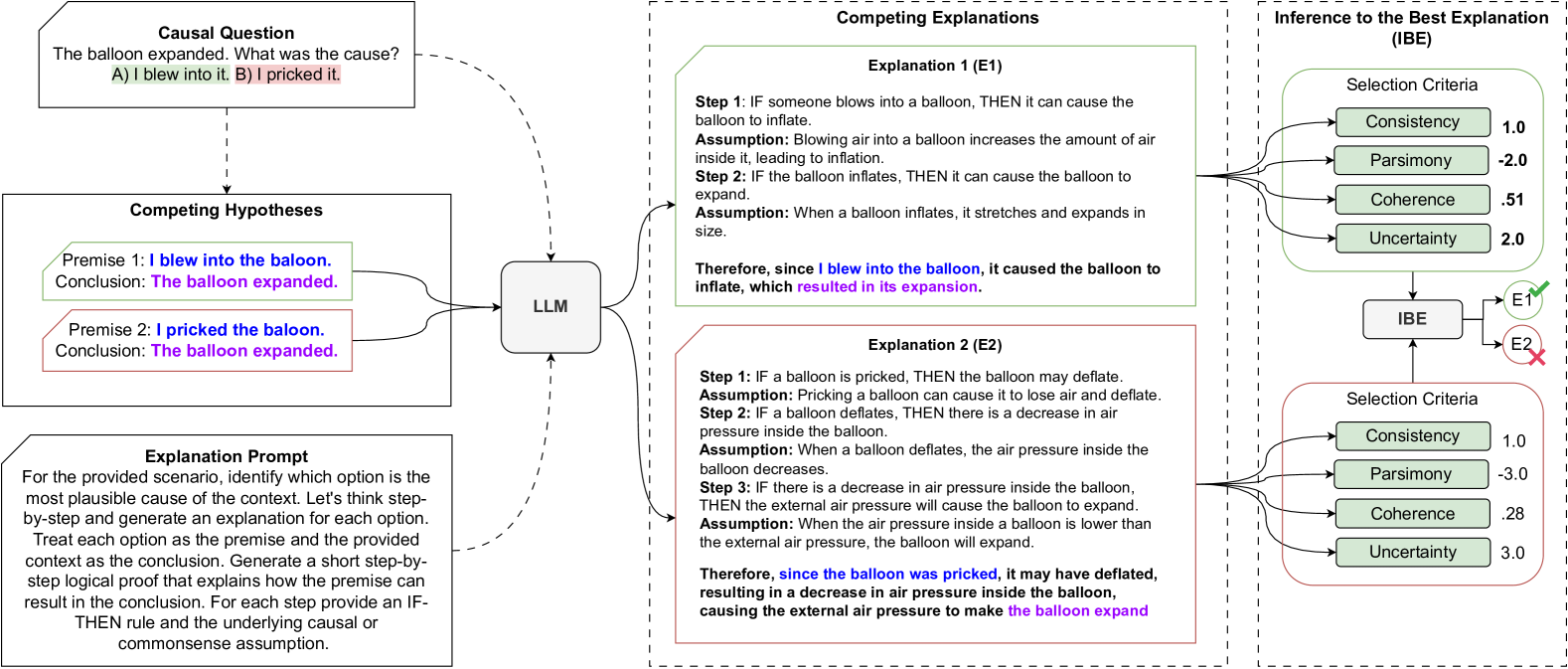

## Diagram: Causal Reasoning with Large Language Model (LLM)

### Overview

This diagram illustrates a process for causal reasoning, utilizing a Large Language Model (LLM) to evaluate competing hypotheses and explanations for a given causal question. The diagram outlines a causal question, competing hypotheses, an explanation prompt, competing explanations generated by the LLM, and an Inference to the Best Explanation (IBE) evaluation based on selection criteria.

### Components/Axes

The diagram is segmented into four main sections:

1. **Causal Question:** Presents the initial problem: "The balloon expanded. What was the cause? A) I blew into it. B) I pricked it."

2. **Competing Hypotheses:** States two premises and their conclusions:

* Premise 1: "I blew into the balloon. Conclusion: The balloon expanded."

* Premise 2: "I pricked the balloon. Conclusion: The balloon expanded."

3. **Explanation Prompt:** Describes the instructions given to the LLM: "For the scenario provided, identify which option is the most plausible cause of the context. Let's think step-by-step and generate an explanation for each option. Treat each option as the premise and the provided context as the conclusion. Generate a short step-by-step logical proof that explains how the premise can result in the conclusion. For each step provide an IF-THEN rule and the underlying causal or commonsense assumption."

4. **Competing Explanations & Inference to the Best Explanation (IBE):** This section is further divided into two explanation blocks (E1 and E2) and an IBE evaluation. Each explanation block consists of a step-by-step explanation with assumptions. The IBE section evaluates each explanation based on four criteria: Consistency, Parsimony, Coherence, and Uncertainty.

### Detailed Analysis or Content Details

**Causal Question:**

* Question: "The balloon expanded. What was the cause?"

* Options: A) I blew into it. B) I pricked it.

**Competing Hypotheses:**

* Hypothesis 1: Premise - "I blew into the balloon." Conclusion - "The balloon expanded."

* Hypothesis 2: Premise - "I pricked the balloon." Conclusion - "The balloon expanded."

**Explanation Prompt:** (Transcribed as is)

"For the scenario provided, identify which option is the most plausible cause of the context. Let's think step-by-step and generate an explanation for each option. Treat each option as the premise and the provided context as the conclusion. Generate a short step-by-step logical proof that explains how the premise can result in the conclusion. For each step provide an IF-THEN rule and the underlying causal or commonsense assumption."

**Explanation 1 (E1):**

* Step 1: "IF someone blows into a balloon, THEN it can cause the balloon to inflate." Assumption: "Blowing air into a balloon increases the amount of air inside it, leading to inflation."

* Step 2: "IF the balloon inflates, THEN it can cause the balloon to expand." Assumption: "When a balloon inflates, it stretches and expands in size."

* Conclusion: "Therefore, since I blew into the balloon, it caused the balloon to inflate, which resulted in its expansion."

**Explanation 2 (E2):**

* Step 1: "IF a balloon is pricked, THEN the balloon may deflate." Assumption: "Pricking a balloon can cause it to lose air and deflate."

* Step 2: "IF a balloon deflates, THEN there is a decrease in air pressure inside the balloon." Assumption: "When a balloon deflates, the air pressure inside the balloon decreases."

* Step 3: "IF there is a decrease in air pressure inside the balloon, THEN the external air pressure will cause the balloon to expand." Assumption: "When the air pressure inside a balloon is lower than the external air pressure, the balloon will expand."

* Conclusion: "Therefore, since the balloon was pricked, it may have deflated, resulting in a decrease in air pressure inside the balloon, causing the external air pressure to make the balloon expand."

**Inference to the Best Explanation (IBE):**

The IBE section presents evaluation scores for each explanation (E1 and E2) across four criteria. The values are as follows:

| Criteria | E1 | E2 |

|---|---|---|

| **Consistency** | 1.0 | 1.0 |

| **Parsimony** | -2.0 | -3.0 |

| **Coherence** | 0.51 | 0.28 |

| **Uncertainty** | 2.0 | 3.0 |

The IBE section visually indicates that E1 is favored over E2, with E1 positioned higher in the diagram.
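The table can be checked without choosing any weights by asking whether one explanation is at least as good on every criterion and strictly better on at least one. The sketch below assumes that higher is better for Consistency, Parsimony, and Coherence and that lower is better for Uncertainty. The `DIRECTION` mapping and the `dominates` function are illustrative names, and the diagram does not say that IBE is computed this way.

```python
from typing import Dict

# Scores copied from the table above.
scores = {
    "E1": {"consistency": 1.0, "parsimony": -2.0, "coherence": 0.51, "uncertainty": 2.0},
    "E2": {"consistency": 1.0, "parsimony": -3.0, "coherence": 0.28, "uncertainty": 3.0},
}

# Assumption: +1 means higher is better, -1 means lower is better.
DIRECTION = {"consistency": 1, "parsimony": 1, "coherence": 1, "uncertainty": -1}


def dominates(a: Dict[str, float], b: Dict[str, float]) -> bool:
    """True if a is no worse than b on every criterion and better on at least one."""
    diffs = [DIRECTION[c] * (a[c] - b[c]) for c in DIRECTION]
    return all(d >= 0 for d in diffs) and any(d > 0 for d in diffs)


print(dominates(scores["E1"], scores["E2"]))  # True
print(dominates(scores["E2"], scores["E1"]))  # False
```

Under these direction assumptions E1 dominates E2, so any combination rule that respects the same directions would also select E1.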

### Key Observations

* The LLM generates plausible explanations for both hypotheses.

* Explanation 1 (blowing into the balloon) receives higher scores for Parsimony and Coherence, suggesting it is a simpler and more coherent explanation.

* Explanation 2 (pricking the balloon) has higher Uncertainty, indicating a less certain causal link.

* The IBE process uses numerical scores to quantify the quality of each explanation based on defined criteria.

* The diagram uses dashed arrows to indicate the flow of information and reasoning.

### Interpretation

This diagram demonstrates a structured approach to causal reasoning, leveraging an LLM to generate and evaluate competing explanations. The IBE framework provides a quantitative method for comparing explanations based on key criteria. The results suggest that, in this scenario, the LLM favors the explanation that blowing into the balloon caused the expansion, as it is more parsimonious and coherent than the explanation involving pricking the balloon. The higher uncertainty associated with the pricking explanation likely stems from the counterintuitive nature of a pricked balloon *expanding* – it typically deflates. The diagram highlights the LLM's ability to not only generate explanations but also to assess their plausibility based on logical principles. The use of IF-THEN rules and underlying assumptions explicitly reveals the reasoning process, making it transparent and auditable. This approach could be valuable in various domains where causal inference is critical, such as scientific discovery, troubleshooting, and decision-making. The diagram is a visual representation of a cognitive process, showing how an LLM can be used to simulate and support human reasoning.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Causal Reasoning and Inference to the Best Explanation (IBE) Flowchart

### Overview

This image is a technical flowchart illustrating a process for causal reasoning using a Large Language Model (LLM) and the framework of Inference to the Best Explanation (IBE). The diagram is divided into three main vertical sections (left, middle, right) connected by arrows, showing the flow from a causal question to the selection of the most plausible explanation based on defined criteria.

### Components/Axes

The diagram is structured into three primary regions:

1. **Left Section (Input & Hypotheses):**

* **Top Box:** "Causal Question" containing the text: "The balloon expanded. What was the cause?" followed by two options: "A) I blew into it. B) I pricked it."

* **Middle Box:** "Competing Hypotheses" containing two premises and conclusions:

* "Premise 1: I blew into the balloon. Conclusion: The balloon expanded." (Text in blue and purple).

* "Premise 2: I pricked the balloon. Conclusion: The balloon expanded." (Text in red and purple).

* **Bottom Box:** "Explanation Prompt" containing a detailed instruction: "For the provided scenario, identify which option is the most plausible cause of the context. Let's think step-by-step and generate an explanation for each option. Treat each option as the premise and the provided context as the conclusion. Generate a short step-by-step logical proof that explains how the premise can result in the conclusion. For each step provide an IF-THEN rule and the underlying causal or commonsense assumption."

2. **Middle Section (Processing & Explanations):**

* A central box labeled "LLM" receives inputs from the left section via arrows.

* The LLM outputs two detailed explanations, each in a separate box:

* **Explanation 1 (E1):** A green-bordered box outlining a two-step causal chain for blowing into a balloon, concluding with "Therefore, since I blew into the balloon, it caused the balloon to inflate, which resulted in its expansion."

* **Explanation 2 (E2):** A red-bordered box outlining a three-step causal chain for pricking a balloon, concluding with "Therefore, since the balloon was pricked, it may have deflated, resulting in a decrease in air pressure inside the balloon, causing the external air pressure to make the balloon expand."

3. **Right Section (Inference & Selection):**

* **Top Header:** "Inference to the Best Explanation (IBE)".

* **IBE Process:** A central box labeled "IBE" receives inputs from both E1 and E2.

* **Selection Criteria:** Two tables with the same layout, each labeled "Selection Criteria", are positioned above and below the IBE box and are linked to E1 and E2 respectively. Each table lists four criteria with numerical scores:

**Selection Criteria for E1:**

| Criterion | Score |

|--------------|-------|

| Consistency | 1.0 |

| Parsimony | -2.0 |

| Coherence | 0.51 |

| Uncertainty | 2.0 |

**Selection Criteria for E2:**

| Criterion | Score |

|--------------|-------|

| Consistency | 1.0 |

| Parsimony | -3.0 |

| Coherence | 0.28 |

| Uncertainty | 3.0 |

* **Output:** The IBE box has two output arrows. One points to "E1" with a green checkmark (✓). The other points to "E2" with a red cross (✗), indicating E1 is selected as the best explanation.

### Detailed Analysis

* **Flow of Logic:** The diagram maps a complete reasoning pipeline:

1. A causal question is posed.

2. Competing hypotheses are formulated as logical premises.

3. An LLM is prompted to generate step-by-step causal explanations for each hypothesis.

4. Each explanation is evaluated against a set of four selection criteria (Consistency, Parsimony, Coherence, Uncertainty), resulting in numerical scores.

5. An Inference to the Best Explanation (IBE) mechanism compares the scored explanations and selects the one with the superior profile (E1 in this case).

* **Explanation Content:**

* **E1 (Blowing):** Follows a direct, additive causal path: Blow -> Inflate -> Expand. Assumptions are straightforward commonsense physics.

* **E2 (Pricking):** Follows a more complex, counter-intuitive path: Prick -> Deflate -> Decrease Internal Pressure -> External Pressure Causes Expansion. This chain relies on the less obvious claim that a drop in internal pressure lets the external air pressure expand the balloon.

* **Scoring:** The numerical scores quantify the evaluation. E1 scores better (higher) on Parsimony (-2.0 vs -3.0) and Coherence (0.51 vs 0.28), and has lower Uncertainty (2.0 vs 3.0). Both have identical Consistency (1.0).
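The five-step flow above can be sketched end to end with stand-ins for the parts the diagram leaves unspecified. In the sketch below, `stub_llm` returns canned text instead of calling a real model, and `negated_step_count` is a deliberately crude single score (counting `THEN` occurrences) used only so the example runs. Both are hypothetical placeholders, as is the `run_pipeline` function itself.

```python
from typing import Callable, Dict, List, Tuple


def run_pipeline(
    observation: str,
    premises: List[str],
    generate: Callable[[str, str], str],
    score: Callable[[str], float],
) -> Tuple[str, Dict[str, float]]:
    """Generate one explanation per premise, score each, and return the best premise."""
    explanations = {p: generate(p, observation) for p in premises}
    scored = {p: score(text) for p, text in explanations.items()}
    best = max(scored, key=lambda p: scored[p])
    return best, scored


# Canned rule chains taken from the diagram; a real system would query an LLM here.
CANNED = {
    "I blew into the balloon.": (
        "IF someone blows into a balloon, THEN it can cause the balloon to inflate. "
        "IF the balloon inflates, THEN it can cause the balloon to expand."
    ),
    "I pricked the balloon.": (
        "IF a balloon is pricked, THEN the balloon may deflate. "
        "IF a balloon deflates, THEN there is a decrease in air pressure inside the balloon. "
        "IF there is a decrease in air pressure inside the balloon, "
        "THEN the external air pressure will cause the balloon to expand."
    ),
}


def stub_llm(premise: str, observation: str) -> str:
    return CANNED[premise]


def negated_step_count(explanation: str) -> float:
    # Placeholder criterion: fewer IF-THEN steps gives a higher score.
    return -float(explanation.count("THEN"))


best, scored = run_pipeline(
    "The balloon expanded.", list(CANNED), stub_llm, negated_step_count
)
print(best)  # I blew into the balloon.
```

Passing `generate` and `score` as callables keeps the selection logic separate from the model call and from whichever criteria are actually used.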

### Key Observations

1. **Visual Coding:** The diagram uses color consistently: green for the selected hypothesis/explanation (E1) and red for the rejected one (E2). Blue and purple text highlight key logical statements in the hypotheses.

2. **Structural Separation:** The dashed vertical lines clearly demarcate the three phases of the process: Problem Formulation, Explanation Generation, and Explanation Selection.

3. **Complexity Contrast:** The explanation for the less intuitive cause (pricking leading to expansion via deflation) is notably more complex (3 steps with a counter-intuitive final step) than the explanation for the intuitive cause (blowing).

4. **Quantified Evaluation:** The application of specific numerical scores to abstract criteria like "Parsimony" and "Coherence" is a key feature, suggesting a formalized or computational approach to evaluating explanations.

### Interpretation

This diagram serves as a conceptual model for how an AI system, specifically an LLM, can be structured to perform causal reasoning in a transparent and evaluative manner. It moves beyond simple answer generation to a process that:

* **Generates Multiple Hypotheses:** Explicitly considers competing causes.

* **Articulates Causal Mechanisms:** Requires step-by-step logical proofs with underlying assumptions, making the reasoning traceable.

* **Applies Formal Evaluation:** Uses a defined set of epistemic criteria (Consistency, Parsimony, Coherence, Uncertainty) to judge explanations, introducing objectivity.

* **Makes a Justified Selection:** The IBE step synthesizes the evaluations to choose the "best" explanation, which in this case is the simpler, more coherent one (blowing).

The underlying message is that robust causal reasoning involves not just finding *an* explanation, but systematically generating, elaborating, and comparing multiple explanations against rational criteria to identify the most plausible one. The diagram illustrates a pipeline to achieve this, potentially for applications in AI explainability, scientific reasoning, or decision-support systems. The specific example of the balloon is a simple test case to demonstrate the framework's logic.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Flowchart: Causal Reasoning and Explanation Evaluation

### Overview

The image depicts a structured reasoning framework for evaluating causal explanations. It consists of four interconnected sections:

1. **Causal Question** (top-left)

2. **Competing Hypotheses** (bottom-left)

3. **Competing Explanations** (center-right)

4. **Inference to the Best Explanation (IBE)** (rightmost)

The flowchart uses color-coded boxes, dashed arrows, and textual annotations to represent logical relationships and evaluation criteria.

---

### Components/Axes

#### Causal Question Section

- **Text Box**:

- Label: "Causal Question"

- Content:

- Premise: "The balloon expanded."

- Question: "What was the cause?"

- Options:

- A) "I blew into it." (highlighted green)

- B) "I pricked it." (highlighted red)

#### Competing Hypotheses Section

- **Two Hypothesis Boxes**:

1. **Green Box (Premise 1)**:

- Premise: "I blew into the balloon."

- Conclusion: "The balloon expanded."

2. **Red Box (Premise 2)**:

- Premise: "I pricked the balloon."

- Conclusion: "The balloon expanded."

- **Explanation Prompt**:

- Text instructing step-by-step explanation generation for each hypothesis.

#### Competing Explanations Section

- **Two Explanation Boxes**:

1. **Green Box (E1)**:

- **Step 1**: "IF someone blows into a balloon, THEN it can cause the balloon to inflate."

- Assumption: Blowing air increases internal air volume.

- **Step 2**: "IF the balloon inflates, THEN it can cause the balloon to expand."

- Assumption: Inflation stretches the balloon.

- Final Statement: "Therefore, since I blew into the balloon, it caused the balloon to inflate, which resulted in its expansion."

2. **Red Box (E2)**:

- **Step 1**: "IF a balloon is pricked, THEN the balloon may deflate."

- Assumption: Pricking causes air loss.

- **Step 2**: "IF a balloon deflates, THEN there is a decrease in air pressure inside the balloon."

- Assumption: Deflation reduces internal pressure.

- **Step 3**: "IF there is a decrease in air pressure inside the balloon, THEN the external air pressure will cause the balloon to expand."

- Assumption: Lower internal pressure allows external pressure to expand the balloon.

- Final Statement: "Therefore, since the balloon was pricked, it may have deflated, resulting in a decrease in air pressure inside the balloon, causing the external air pressure to make the balloon expand."

#### Inference to the Best Explanation (IBE) Section

- **Selection Criteria**:

- **Consistency**: 1.0 (E1) vs. 1.0 (E2)

- **Parsimony**: -2.0 (E1) vs. -3.0 (E2)

- **Coherence**: 0.51 (E1) vs. 0.28 (E2)

- **Uncertainty**: 2.0 (E1) vs. 3.0 (E2)

- **IBE Output**:

- Selected Explanation: E1 (marked with a green checkmark).

---

### Detailed Analysis

#### Causal Question Section

- **Textual Content**:

- Premise: "The balloon expanded."

- Question: "What was the cause?"

- Options:

- A) "I blew into it." (highlighted green)

- B) "I pricked it." (highlighted red)

#### Competing Hypotheses Section

- **Premise 1 (Green Box)**:

- Logical Structure:

- Premise → Conclusion: "I blew into the balloon" → "The balloon expanded."

- **Premise 2 (Red Box)**:

- Logical Structure:

- Premise → Conclusion: "I pricked the balloon" → "The balloon expanded."

#### Competing Explanations Section

- **E1 (Green Box)**:

- **Step 1**:

- Conditional: "IF someone blows into a balloon, THEN it can cause the balloon to inflate."

- Assumption: Blowing air increases internal air volume.

- **Step 2**:

- Conditional: "IF the balloon inflates, THEN it can cause the balloon to expand."

- Assumption: Inflation stretches the balloon.

- **Final Statement**: Links Premise 1 to the conclusion via inflation.

- **E2 (Red Box)**:

- **Step 1**:

- Conditional: "IF a balloon is pricked, THEN the balloon may deflate."

- Assumption: Pricking causes air loss.

- **Step 2**:

- Conditional: "IF a balloon deflates, THEN there is a decrease in air pressure inside the balloon."

- Assumption: Deflation reduces internal pressure.

- **Step 3**:

- Conditional: "IF there is a decrease in air pressure inside the balloon, THEN the external air pressure will cause the balloon to expand."

- Assumption: Lower internal pressure allows external pressure to expand the balloon.

- **Final Statement**: Links Premise 2 to the conclusion via deflation and pressure dynamics.

#### Inference to the Best Explanation (IBE) Section

- **Selection Criteria**:

- **Consistency**: Both E1 and E2 score 1.0 (fully consistent with the conclusion).

- **Parsimony**: E1 (-2.0) is simpler than E2 (-3.0).

- **Coherence**: E1 (0.51) is more logically coherent than E2 (0.28).

- **Uncertainty**: E1 (2.0) has lower uncertainty than E2 (3.0).

- **IBE Output**:

- E1 is selected as the best explanation because it has higher parsimony and coherence and lower uncertainty.

---

### Key Observations

1. **Contradictory Hypotheses, Shared Conclusion**:

- Both hypotheses (blowing vs. pricking) lead to the same conclusion ("The balloon expanded"), requiring explanation evaluation.

2. **Explanation Complexity**:

- E1 uses two steps (inflation → expansion).

- E2 uses three steps (deflation → pressure drop → expansion), making it less parsimonious.

3. **Selection Criteria Comparison**:

- E1 scores better on parsimony and coherence and also has lower uncertainty than E2 (2.0 vs. 3.0).

- E2's higher uncertainty and lower coherence make it less favorable despite identical consistency.

4. **Logical Flow**:

- Dashed arrows connect hypotheses to explanations, which then feed into IBE.

---

### Interpretation

The flowchart illustrates a formalized process for causal reasoning:

1. **Hypothesis Generation**: Two competing causes (blowing vs. pricking) are proposed.

2. **Explanation Construction**: Each hypothesis is expanded into a step-by-step causal chain with explicit assumptions.

3. **Evaluation via IBE**: Explanations are scored on four criteria:

- **Consistency**: How well the explanation aligns with observed data.

- **Parsimony**: Simplicity of the explanation (fewer assumptions).

- **Coherence**: Logical consistency of the explanation's steps.

- **Uncertainty**: Degree of ambiguity in the explanation.
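The diagram does not state how the four criteria are combined into a single decision. One simple possibility, shown here purely as an illustration, is a weighted sum in which uncertainty counts against an explanation. The `WEIGHTS` values and the `combined` function are hypothetical and were chosen only to make the arithmetic easy to follow.

```python
from typing import Dict

# Hypothetical weights: a negative weight penalizes uncertainty.
WEIGHTS = {"consistency": 1.0, "parsimony": 1.0, "coherence": 1.0, "uncertainty": -1.0}

# Scores as reported in the diagram.
e1 = {"consistency": 1.0, "parsimony": -2.0, "coherence": 0.51, "uncertainty": 2.0}
e2 = {"consistency": 1.0, "parsimony": -3.0, "coherence": 0.28, "uncertainty": 3.0}


def combined(scores: Dict[str, float]) -> float:
    """Weighted sum of the four criterion scores."""
    return sum(WEIGHTS[c] * scores[c] for c in WEIGHTS)


# E1: 1.0 - 2.0 + 0.51 - 2.0, about -2.49
# E2: 1.0 - 3.0 + 0.28 - 3.0, about -4.72
selected = "E1" if combined(e1) > combined(e2) else "E2"
print(selected)  # E1
```

With unit weights E1 comes out ahead. Different weights change the margin, but E1 stays ahead whenever the weights keep the same signs, since E1 is no worse than E2 on every criterion.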

**Why E1 is Selected**:

- E1's explanation (blowing → inflation → expansion) is simpler (fewer steps) and more coherent than E2's (pricking → deflation → pressure drop → expansion).

- While E2 introduces a plausible physical mechanism (external pressure causing expansion after deflation), its added complexity and higher uncertainty make it less favorable.

**Notable Anomaly**:

- The conclusion "The balloon expanded" is counterintuitive for Premise 2 ("I pricked it"), as pricking typically causes deflation. E2 resolves this by invoking external pressure dynamics, but this introduces additional assumptions, increasing uncertainty.

This framework demonstrates how logical reasoning systems can evaluate explanations by balancing simplicity, coherence, and alignment with observed outcomes.
