## Causal Reasoning Diagram: Balloon Expansion

### Overview

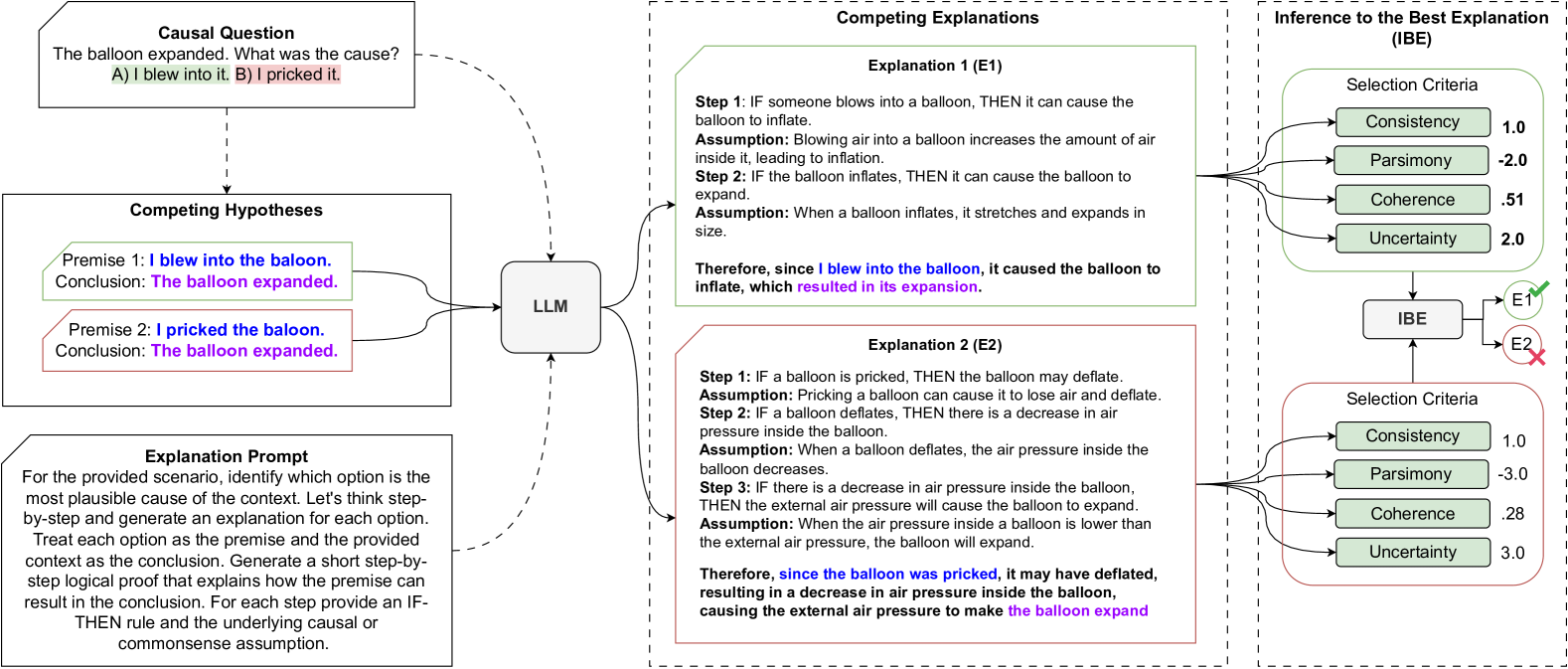

The figure shows a causal reasoning pipeline applied to a single question: what caused a balloon to expand? It sets out two competing hypotheses, the explanations a Large Language Model (LLM) generates for each, and an Inference to the Best Explanation (IBE) step that scores those explanations and selects one.

### Components/Axes

The diagram is divided into six main components:

1. **Causal Question:** Poses the problem: "The balloon expanded. What was the cause? A) I blew into it. B) I pricked it."

2. **Competing Hypotheses:** Recasts each answer option as a premise paired with the observed conclusion:

   * Premise 1: "I blew into the balloon. Conclusion: The balloon expanded." (highlighted in green)

   * Premise 2: "I pricked the balloon. Conclusion: The balloon expanded." (highlighted in red)

3. **Explanation Prompt:** Instructs the model to treat each option as a premise and write a step-by-step logical proof of the observed effect.

4. **LLM:** A central node representing the Large Language Model that processes the hypotheses and generates explanations.

5. **Competing Explanations:** Presents the two explanations generated by the LLM:

   * Explanation 1 (E1): Based on blowing into the balloon.

   * Explanation 2 (E2): Based on pricking the balloon.

6. **Inference to the Best Explanation (IBE):** Scores each explanation on four selection criteria (Consistency, Parsimony, Coherence, Uncertainty) and accepts or rejects it.

### Detailed Analysis

**1. Causal Question and Competing Hypotheses:**

* The causal question sets up the problem: "The balloon expanded. What was the cause?" with two possible answers: "A) I blew into it" and "B) I pricked it."

* The competing hypotheses frame these answers as premises leading to the conclusion that "The balloon expanded."
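The hypothesis set can be sketched as premise/conclusion pairs derived from the answer options. This is a minimal illustration; the `Hypothesis` class and the `hypotheses_from_question` helper are hypothetical names, not part of the framework shown in the figure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    """One competing hypothesis: a candidate cause paired with the observed effect."""
    premise: str
    conclusion: str


def hypotheses_from_question(effect: str, options: list[str]) -> list[Hypothesis]:
    # Hypothetical helper: each answer option becomes a premise,
    # and the observed effect is the shared conclusion.
    return [Hypothesis(premise=opt, conclusion=effect) for opt in options]


hyps = hypotheses_from_question(
    "The balloon expanded.",
    ["I blew into the balloon.", "I pricked the balloon."],
)
for i, h in enumerate(hyps, start=1):
    print(f"Premise {i}: {h.premise} Conclusion: {h.conclusion}")
```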

**2. Explanation Prompt:**

* The prompt instructs the system to identify the most plausible cause and generate step-by-step explanations for each option.

* It emphasizes the use of IF-THEN rules and commonsense assumptions.
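A prompt for one option might be assembled as below. The figure only summarizes the prompt's instructions, so the template wording and the `build_explanation_prompt` helper are hypothetical paraphrases, not the actual prompt used.

```python
def build_explanation_prompt(question: str, option: str, conclusion: str) -> str:
    """Assemble a prompt asking for a step-by-step IF-THEN proof of one option.

    The wording is a hypothetical paraphrase of the instructions described
    in the figure (treat the option as a premise, use IF-THEN rules, state
    commonsense assumptions), not the original prompt text.
    """
    return (
        f"Question: {question}\n"
        f"Premise: {option}\n"
        f"Conclusion: {conclusion}\n"
        "Treat the premise as true and write a step-by-step logical proof of the conclusion.\n"
        "Write each step as an IF-THEN rule and state the commonsense assumption it relies on.\n"
        "End with a one-sentence conclusion beginning with 'Therefore'."
    )


question = "The balloon expanded. What was the cause?"
# One prompt per answer option; each is sent to the LLM separately.
prompts = [
    build_explanation_prompt(question, opt, "The balloon expanded.")
    for opt in ["I blew into the balloon.", "I pricked the balloon."]
]
print(prompts[1])
```

Generating one prompt per option keeps the explanations independent, so neither is written with knowledge of the other.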

**3. LLM:**

* The LLM is the central node: it takes each hypothesis together with the prompt as input and generates one explanation per hypothesis.

**4. Competing Explanations:**

* **Explanation 1 (E1):**

  * Step 1: "IF someone blows into a balloon, THEN it can cause the balloon to inflate."

    * Assumption: "Blowing air into a balloon increases the amount of air inside it, leading to inflation."

  * Step 2: "IF the balloon inflates, THEN it can cause the balloon to expand."

    * Assumption: "When a balloon inflates, it stretches and expands in size."

  * Conclusion: "Therefore, since I blew into the balloon, it caused the balloon to inflate, which resulted in its expansion."

* **Explanation 2 (E2):**

  * Step 1: "IF a balloon is pricked, THEN the balloon may deflate."

    * Assumption: "Pricking a balloon can cause it to lose air and deflate."

  * Step 2: "IF a balloon deflates, THEN there is a decrease in air pressure inside the balloon."

    * Assumption: "When a balloon deflates, there is a decrease in air pressure inside the balloon."

  * Step 3: "IF there is a decrease in air pressure inside the balloon, THEN the external air pressure will cause the balloon to expand."

    * Assumption: "When the air pressure inside a balloon is lower than the external air pressure, the balloon will expand."

  * Conclusion: "Therefore, since the balloon was pricked, it may have deflated, resulting in a decrease in air pressure inside the balloon, causing the external air pressure to make the balloon expand."
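To make the IF-THEN structure concrete, each explanation can be stored as a list of steps and checked for whether its rules actually chain from premise to conclusion. The short proposition labels (`"inflates"`, `"deflates"`, and so on) are hypothetical paraphrases of the figure's rules, and `chain_is_connected` is an illustrative helper, not a criterion defined by the IBE framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    antecedent: str   # the IF part
    consequent: str   # the THEN part
    assumption: str   # commonsense assumption backing the rule


def chain_is_connected(premise: str, conclusion: str, steps: list[Step]) -> bool:
    """Return True if the IF-THEN steps lead from the premise to the conclusion.

    Each step's antecedent must equal the proposition reached so far,
    and the final consequent must equal the conclusion.
    """
    current = premise
    for step in steps:
        if step.antecedent != current:
            return False
        current = step.consequent
    return current == conclusion


# Hypothetical short labels paraphrasing the figure's rules.
e1 = [
    Step("blown into", "inflates", "Blowing adds air, leading to inflation."),
    Step("inflates", "expands", "An inflating balloon stretches and grows."),
]
e2 = [
    Step("pricked", "deflates", "Pricking lets air escape."),
    Step("deflates", "internal pressure drops", "Deflation lowers internal pressure."),
    Step("internal pressure drops", "expands", "Lower internal pressure makes it expand."),
]
print(chain_is_connected("blown into", "expands", e1))  # True
print(chain_is_connected("pricked", "expands", e2))     # True
```

Both chains are connected, even though E2's Step 3 assumption is physically wrong: a balloon whose internal pressure falls below the external pressure shrinks rather than expands. A structural check like this verifies only that the rules link up, not that they are true.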

**5. Inference to the Best Explanation (IBE):**

* **Explanation 1 (E1) Evaluation:**

  * Consistency: 1.0

  * Parsimony: -2.0

  * Coherence: 0.51

  * Uncertainty: 2.0

  * Result: E1 is accepted (green checkmark)

* **Explanation 2 (E2) Evaluation:**

  * Consistency: 1.0

  * Parsimony: -3.0

  * Coherence: 0.28

  * Uncertainty: 3.0

  * Result: E2 is rejected (red X)
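The figure does not say how the four criteria are combined into an accept/reject decision. One weight-free way to reproduce the outcome is Pareto dominance, sketched below under the assumption that higher is better for Consistency, Parsimony and Coherence, and lower is better for Uncertainty. This is a swapped-in selection rule for illustration, not the framework's own method; `dominates` and `select_best` are hypothetical helpers.

```python
# Criterion scores as read from the figure.
scores = {
    "E1": {"consistency": 1.0, "parsimony": -2.0, "coherence": 0.51, "uncertainty": 2.0},
    "E2": {"consistency": 1.0, "parsimony": -3.0, "coherence": 0.28, "uncertainty": 3.0},
}
# Assumed orientation of each criterion (not stated in the figure).
HIGHER_IS_BETTER = {"consistency": True, "parsimony": True, "coherence": True, "uncertainty": False}


def at_least_as_good(a: float, b: float, higher_is_better: bool) -> bool:
    return a >= b if higher_is_better else a <= b


def dominates(x: dict, y: dict) -> bool:
    """Pareto dominance: x is no worse than y on every criterion and differs on at least one."""
    no_worse = all(at_least_as_good(x[c], y[c], hib) for c, hib in HIGHER_IS_BETTER.items())
    differs = any(x[c] != y[c] for c in HIGHER_IS_BETTER)
    return no_worse and differs


def select_best(scores: dict) -> list[str]:
    # Keep every explanation that no other explanation dominates.
    return [
        name for name, s in scores.items()
        if not any(dominates(other, s) for o, other in scores.items() if o != name)
    ]


print(select_best(scores))  # ['E1']
```

E1 ties E2 on Consistency and is better on the other three criteria, so it dominates E2. Under the assumed orientation, any weighted sum with non-negative weights on the higher-is-better criteria and a non-positive weight on Uncertainty would therefore rank E1 no lower than E2.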

### Key Observations

* The diagram illustrates a structured approach to causal reasoning, using an LLM to generate explanations and an IBE framework to evaluate them.

* The selection criteria (Consistency, Parsimony, Coherence, Uncertainty) are used to quantify the quality of each explanation.

* Explanation 1 (blowing into the balloon) is favored over Explanation 2 (pricking the balloon) based on the IBE evaluation.

### Interpretation

The diagram demonstrates a pipeline for automated causal reasoning: the LLM generates one explanation per premise, and the IBE step provides a quantitative basis for choosing between them. Here the "blowing into the balloon" explanation (E1) is accepted and the "pricking the balloon" explanation (E2) is rejected, so the framework selects option A as the cause of the expansion. Both explanations score 1.0 on Consistency, so the decision rests on the remaining criteria, on each of which E1 scores better. The parsimony values are negative and match the number of IF-THEN steps (-2.0 for E1's two steps, -3.0 for E2's three), which suggests a penalty for longer explanations; E2 also has lower coherence (0.28 vs. 0.51) and higher uncertainty (3.0 vs. 2.0). The selection is also correct on physical grounds: E2's Step 3 inverts the physics, since a balloon whose internal pressure drops below the external pressure shrinks rather than expands, a flaw that the identical Consistency scores do not register.
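The parsimony values in the figure coincide with the negated number of IF-THEN steps in each explanation. A minimal sketch, assuming parsimony is simply minus the step count (the figure does not define it), reproduces both values; `parsimony` is a hypothetical helper.

```python
def parsimony(steps: list[str]) -> float:
    """Hypothetical parsimony score: minus the number of IF-THEN steps.

    Matches the figure's values (-2.0 for E1, -3.0 for E2), but the
    framework's actual definition is not given in the figure.
    """
    return -float(len(steps))


e1_steps = [
    "IF someone blows into a balloon, THEN it can cause the balloon to inflate.",
    "IF the balloon inflates, THEN it can cause the balloon to expand.",
]
e2_steps = [
    "IF a balloon is pricked, THEN the balloon may deflate.",
    "IF a balloon deflates, THEN there is a decrease in air pressure inside the balloon.",
    "IF there is a decrease in air pressure inside the balloon, "
    "THEN the external air pressure will cause the balloon to expand.",
]
print(parsimony(e1_steps), parsimony(e2_steps))  # -2.0 -3.0
```

A step-count penalty of this kind favors shorter causal chains regardless of their content, which is why it needs to be paired with criteria such as coherence and uncertainty.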