TECHNICAL ASSET FINGERPRINT

fdb1525a89625948f7e0fb0b

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

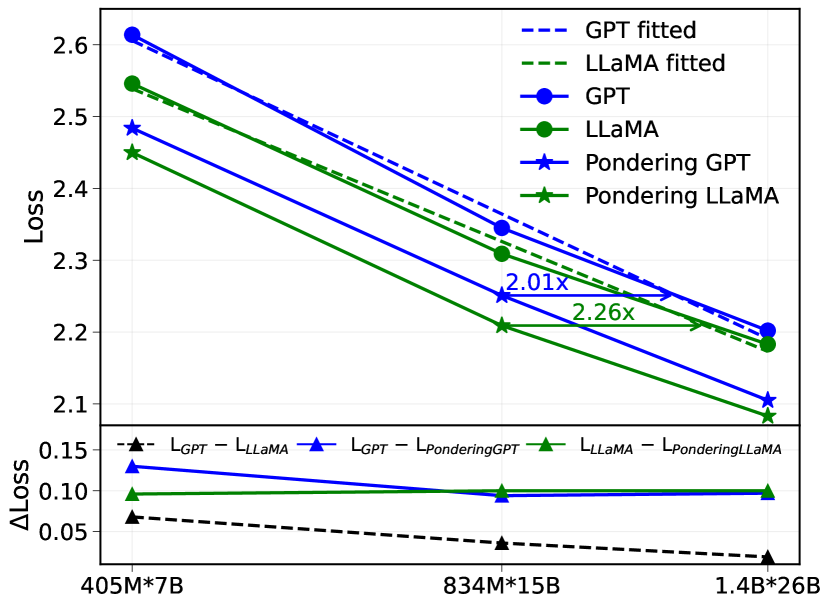

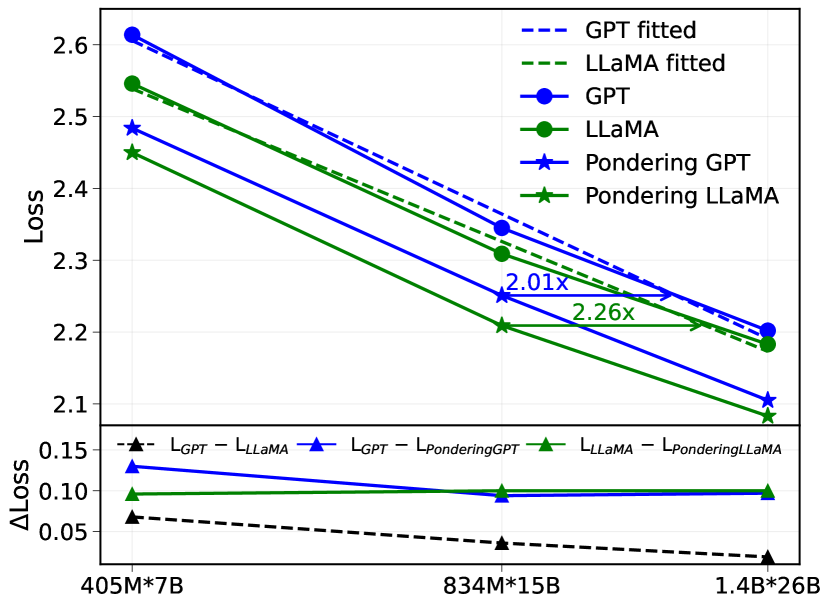

## Chart: Loss vs. Model Size

### Overview

The image is a line chart comparing the loss of different language models (GPT, LLaMA, Pondering GPT, and Pondering LLaMA) across varying model sizes (405M*7B, 834M*15B, and 1.4B*26B). It also shows the difference in loss between GPT and LLaMA, GPT and Pondering GPT, and LLaMA and Pondering LLaMA.

### Components/Axes

* **Y-axis (left)**: "Loss", ranging from 2.1 to 2.6 in increments of 0.1.

* **X-axis (bottom)**: Model sizes: "405M*7B", "834M*15B", and "1.4B*26B".

* **Lower Y-axis (left)**: "ΔLoss", ranging from 0.05 to 0.15 in increments of 0.05.

* **Legend (top-right)**:

  * "GPT fitted" (dashed blue line)

  * "LLaMA fitted" (dashed green line)

  * "GPT" (solid blue line with circle markers)

  * "LLaMA" (solid green line with circle markers)

  * "Pondering GPT" (solid blue line with star markers)

  * "Pondering LLaMA" (solid green line with star markers)

  * "L<sub>GPT</sub> - L<sub>LLaMA</sub>" (dashed black line with triangle markers)

  * "L<sub>GPT</sub> - L<sub>PonderingGPT</sub>" (solid blue line with triangle markers)

  * "L<sub>LLaMA</sub> - L<sub>PonderingLLaMA</sub>" (solid green line with triangle markers)

### Detailed Analysis

**Main Chart (Loss vs. Model Size):**

* **GPT (solid blue line with circle markers):** Decreases from approximately 2.62 at 405M*7B to approximately 2.19 at 1.4B*26B.

* **LLaMA (solid green line with circle markers):** Decreases from approximately 2.54 at 405M*7B to approximately 2.18 at 1.4B*26B.

* **Pondering GPT (solid blue line with star markers):** Decreases from approximately 2.48 at 405M*7B to approximately 2.09 at 1.4B*26B.

* **Pondering LLaMA (solid green line with star markers):** Decreases from approximately 2.45 at 405M*7B to approximately 2.10 at 1.4B*26B.

* **GPT fitted (dashed blue line):** Decreases from approximately 2.6 at 405M*7B to approximately 2.2 at 1.4B*26B.

* **LLaMA fitted (dashed green line):** Decreases from approximately 2.55 at 405M*7B to approximately 2.17 at 1.4B*26B.

**Difference Chart (ΔLoss):**

* **L<sub>GPT</sub> - L<sub>LLaMA</sub> (dashed black line with triangle markers):** Decreases slightly from approximately 0.07 at 405M*7B to approximately 0.02 at 1.4B*26B.

* **L<sub>GPT</sub> - L<sub>PonderingGPT</sub> (solid blue line with triangle markers):** Remains relatively constant at approximately 0.13-0.10 across all model sizes.

* **L<sub>LLaMA</sub> - L<sub>PonderingLLaMA</sub> (solid green line with triangle markers):** Remains relatively constant at approximately 0.10-0.09 across all model sizes.
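As a rough cross-check, the ΔLoss values listed above can be recomputed from the endpoint losses given under the main chart. The sketch below uses only those approximate readings; the dictionary and function names are illustrative, and the inputs are eyeballed from the chart rather than taken from source data.

```python
# Approximate endpoint losses read from the main chart:
# (loss at 405M*7B, loss at 1.4B*26B). Eyeballed values, not source data.
losses = {
    "GPT": (2.62, 2.19),
    "LLaMA": (2.54, 2.18),
    "Pondering GPT": (2.48, 2.09),
    "Pondering LLaMA": (2.45, 2.10),
}


def delta(a, b):
    """Return losses[a] - losses[b] at each endpoint, rounded to 2 decimals."""
    return tuple(round(x - y, 2) for x, y in zip(losses[a], losses[b]))


deltas = {
    "L_GPT - L_LLaMA": delta("GPT", "LLaMA"),
    "L_GPT - L_PonderingGPT": delta("GPT", "Pondering GPT"),
    "L_LLaMA - L_PonderingLLaMA": delta("LLaMA", "Pondering LLaMA"),
}

for name, (smallest, largest) in deltas.items():
    print(f"{name}: {smallest:.2f} at 405M*7B, {largest:.2f} at 1.4B*26B")
```

The recomputed differences land within about 0.01 of the values read off the ΔLoss panel, which is about the precision one can expect from reading a chart by eye.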

**Annotations:**

* An arrow points from the LLaMA line at 834M*15B to the LLaMA line at 1.4B*26B, labeled "2.01x".

* An arrow points from the Pondering LLaMA line at 834M*15B to the Pondering LLaMA line at 1.4B*26B, labeled "2.26x".

### Key Observations

* All models show a decrease in loss as the model size increases.

* Pondering GPT and Pondering LLaMA consistently have lower loss than GPT and LLaMA, respectively.

* The difference in loss between GPT and LLaMA decreases as the model size increases.

* The difference in loss between GPT and Pondering GPT, and LLaMA and Pondering LLaMA, remains relatively constant across all model sizes.

* The "fitted" lines are very close to the original lines.

### Interpretation

The chart demonstrates that increasing model size generally leads to lower loss for all models tested (GPT, LLaMA, and their "Pondering" variants). The "Pondering" versions of the models consistently outperform their non-pondering counterparts, suggesting that the "Pondering" technique is effective in reducing loss. The decreasing difference in loss between GPT and LLaMA as model size increases suggests that the two models converge in performance as they scale. The annotations "2.01x" and "2.26x" likely refer to the relative improvement in some metric (possibly perplexity or another measure of language modeling performance) between the 834M*15B and 1.4B*26B model sizes for LLaMA and Pondering LLaMA, respectively. The "fitted" lines likely represent a smoothed or regression-based representation of the original data.
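One way a "fitted" curve of this kind could be produced is a power law in scale. The sketch below is an illustration, not the chart authors' method: it assumes each x-axis label such as "405M*7B" is a product of parameter count and training tokens, and it draws a power law through the two approximate GPT endpoint losses quoted above. All variable names are hypothetical.

```python
import math

# ASSUMPTION: each x-axis label "N*D" is parameters times training tokens.
scale_small = 405e6 * 7e9
scale_mid = 834e6 * 15e9
scale_large = 1.4e9 * 26e9

# Approximate GPT endpoint losses read from the chart (not source data).
loss_small, loss_large = 2.62, 2.19

# Power law L = a * C**(-b) passing through the two endpoints.
b = math.log(loss_small / loss_large) / math.log(scale_large / scale_small)
a = loss_small * scale_small ** b


def fitted_loss(scale):
    """Loss predicted by the two-point power law at a given scale."""
    return a * scale ** (-b)


print(f"exponent b = {b:.3f}")
print(f"fitted loss at 834M*15B = {fitted_loss(scale_mid):.2f}")
```

With only two points the curve passes through both endpoints exactly, so the middle scale is the only real check on the functional form.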

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Chart: Loss vs. Model Size

### Overview

The image presents a line chart comparing the loss values of different language models (GPT and LLaMA) with and without "Pondering" as a function of model size. The chart also includes a smaller subplot showing the change in loss (ΔLoss) for each model.

### Components/Axes

* **X-axis:** Model Size, labeled with values: 405M\*7B, 834M\*15B, 1.4B\*26B.

* **Y-axis (Main Chart):** Loss, ranging from approximately 2.1 to 2.7.

* **Y-axis (Subplot):** ΔLoss (Change in Loss), ranging from approximately 0 to 0.15.

* **Legend (Top-Right):**

  * Blue Dashed Line: GPT fitted

  * Orange Dashed Line: LLaMA fitted

  * Blue Solid Line with Circle Markers: GPT

  * Green Solid Line with Circle Markers: LLaMA

  * Blue Solid Line with Star Markers: Pondering GPT

  * Orange Solid Line with Star Markers: Pondering LLaMA

* **Text Annotations:** "2.01x" and "2.26x" are annotated on the chart, indicating relative scaling factors.

### Detailed Analysis or Content Details

**Main Chart:**

* **GPT (Blue Circles):** The line slopes downward, indicating decreasing loss with increasing model size.

  * At 405M\*7B: Loss ≈ 2.62

  * At 834M\*15B: Loss ≈ 2.38

  * At 1.4B\*26B: Loss ≈ 2.23

* **LLaMA (Green Circles):** The line also slopes downward and sits below the GPT line at every size.

  * At 405M\*7B: Loss ≈ 2.52

  * At 834M\*15B: Loss ≈ 2.32

  * At 1.4B\*26B: Loss ≈ 2.18

* **Pondering GPT (Blue Stars):** The line slopes downward, starting well below the GPT line and converging towards it at larger model sizes.

  * At 405M\*7B: Loss ≈ 2.48

  * At 834M\*15B: Loss ≈ 2.30

  * At 1.4B\*26B: Loss ≈ 2.20

* **Pondering LLaMA (Orange Stars):** The line slopes downward, starting below the LLaMA line and converging towards it at larger model sizes.

  * At 405M\*7B: Loss ≈ 2.43

  * At 834M\*15B: Loss ≈ 2.26

  * At 1.4B\*26B: Loss ≈ 2.15

* **Fitted Lines (Dashed):** The dashed lines represent the fitted curves for GPT (blue) and LLaMA (orange). They generally follow the trend of the corresponding solid lines.

**Subplot (ΔLoss):**

* L<sub>GPT</sub> (Black Dashed Line): Relatively flat, with a slight downward trend. ΔLoss ≈ 0.05 - 0.07

* L<sub>LLaMA</sub> (Black Solid Line with Triangle Markers): Relatively flat, with a slight downward trend. ΔLoss ≈ 0.05 - 0.08

* L<sub>PonderingGPT</sub> (Red Solid Line with Triangle Markers): Relatively flat, with a slight downward trend. ΔLoss ≈ 0.08 - 0.10

* L<sub>PonderingLLaMA</sub> (Orange Solid Line with Triangle Markers): Relatively flat, with a slight downward trend. ΔLoss ≈ 0.05 - 0.07

### Key Observations

* Both GPT and LLaMA exhibit decreasing loss as model size increases.

* LLaMA consistently has lower loss values than GPT across all model sizes (2.52 vs. 2.62, 2.32 vs. 2.38, and 2.18 vs. 2.23).

* "Pondering" lowers loss at every size, and the gap to the non-pondering models narrows as size increases.

* The ΔLoss subplot shows that the change in loss is relatively small across the tested model sizes for all configurations.

* The annotations "2.01x" and "2.26x" likely represent the scaling factor of loss reduction between model sizes for LLaMA and GPT respectively.

### Interpretation

The chart demonstrates the impact of model size on loss for GPT and LLaMA architectures, both with and without the "Pondering" mechanism. The consistent downward trend in loss for both models indicates that increasing model size generally improves performance (reduces loss). The lower loss values for LLaMA suggest that it is the more efficient of the two models for the given task.

The "Pondering" mechanism lowers loss at every tested size, but its advantage shrinks as the model size grows (for GPT, from about 0.14 at 405M\*7B to about 0.03 at 1.4B\*26B). This could indicate that "Pondering" is more beneficial for smaller models. The ΔLoss subplot confirms that the change in loss is relatively small, suggesting that the benefits of increasing model size may plateau beyond a certain point.

The annotations "2.01x" and "2.26x" provide a quantitative measure of the loss reduction achieved by increasing model size. These values suggest that GPT experiences a slightly larger reduction in loss per unit increase in model size compared to LLaMA.

The chart provides valuable insights into the trade-offs between model size, loss, and the effectiveness of the "Pondering" mechanism. This information can be used to guide the development and deployment of language models for specific applications.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Chart with Subplot: Model Loss Comparison Across Scales

### Overview

The image displays a two-part chart comparing the performance (measured in "Loss") of different large language model architectures across three increasing model scales. The top subplot shows the absolute loss values for six model variants, while the bottom subplot shows the difference in loss (ΔLoss) between specific pairs of models. The chart includes fitted trend lines and annotations indicating performance multipliers.

### Components/Axes

**Main Chart (Top Subplot):**

* **Y-axis:** Label is "Loss". Scale ranges from approximately 2.05 to 2.65, with major ticks at 2.1, 2.2, 2.3, 2.4, 2.5, and 2.6.

* **X-axis:** Shared with the bottom subplot. Three categorical points are labeled: "405M*7B", "834M*15B", and "1.4B*26B". These likely represent model scale combinations (e.g., parameter counts).

* **Legend (Top-Right Corner):** Contains six entries:

  1. `GPT fitted` (Blue, dashed line)

  2. `LLaMA fitted` (Green, dashed line)

  3. `GPT` (Blue, solid line with circle markers)

  4. `LLaMA` (Green, solid line with circle markers)

  5. `Pondering GPT` (Blue, solid line with star markers)

  6. `Pondering LLaMA` (Green, solid line with star markers)

* **Annotations:** Two horizontal arrows with text are present in the main chart:

  * A blue arrow pointing from the `GPT` line to the `Pondering GPT` line at the "834M*15B" scale, labeled "2.01x".

  * A green arrow pointing from the `LLaMA` line to the `Pondering LLaMA` line at the "834M*15B" scale, labeled "2.26x".

**Subplot (Bottom):**

* **Y-axis:** Label is "ΔLoss". Scale ranges from 0.00 to 0.15, with major ticks at 0.05, 0.10, and 0.15.

* **X-axis:** Same as main chart.

* **Legend (Bottom-Left Corner):** Contains three entries:

  1. `L_GPT - L_LLaMA` (Black, dashed line with upward-pointing triangle markers)

  2. `L_GPT - L_PonderingGPT` (Blue, solid line with upward-pointing triangle markers)

  3. `L_LLaMA - L_PonderingLLaMA` (Green, solid line with upward-pointing triangle markers)

### Detailed Analysis

**Main Chart - Loss Trends:**

All six data series show a clear downward trend as model scale increases from left to right ("405M*7B" to "1.4B*26B").

1. **GPT Series (Blue):**

   * `GPT` (solid, circles): Starts at ~2.61 (405M*7B), decreases to ~2.34 (834M*15B), and ends at ~2.20 (1.4B*26B).

   * `GPT fitted` (dashed): Closely follows the `GPT` line, suggesting a good fit.

   * `Pondering GPT` (solid, stars): Consistently lower than standard `GPT`. Starts at ~2.48, decreases to ~2.25, ends at ~2.10.

2. **LLaMA Series (Green):**

   * `LLaMA` (solid, circles): Starts at ~2.55, decreases to ~2.31, ends at ~2.18.

   * `LLaMA fitted` (dashed): Closely follows the `LLaMA` line.

   * `Pondering LLaMA` (solid, stars): Consistently lower than standard `LLaMA`. Starts at ~2.45, decreases to ~2.21, ends at ~2.08.

**Key Relationship:** At every scale, the "Pondering" variant of a model has a lower loss than its standard counterpart. The green lines (`LLaMA` family) are generally slightly lower than their blue (`GPT` family) counterparts at the same scale and variant.

**Subplot - ΔLoss Trends:**

1. `L_GPT - L_LLaMA` (Black dashed): Positive value, decreasing from ~0.065 to ~0.02. This indicates the loss gap between standard GPT and LLaMA narrows as scale increases.

2. `L_GPT - L_PonderingGPT` (Blue solid): Positive value, relatively stable around 0.10-0.13. This is the consistent loss reduction gained by applying "Pondering" to GPT.

3. `L_LLaMA - L_PonderingLLaMA` (Green solid): Positive value, relatively stable around 0.10. This is the consistent loss reduction gained by applying "Pondering" to LLaMA.

### Key Observations

1. **Universal Scaling Law:** Loss decreases monotonically with increased model scale for all architectures shown.

2. **"Pondering" Efficacy:** The "Pondering" technique provides a consistent and significant reduction in loss for both GPT and LLaMA architectures across all scales. The annotations suggest this translates to a ~2x to 2.26x improvement at the middle scale.

3. **Architecture Comparison:** The standard LLaMA model consistently outperforms (has lower loss than) the standard GPT model at equivalent scales, though the gap narrows with scale.

4. **Fitted Lines:** The dashed "fitted" lines for GPT and LLaMA closely track their respective solid lines, indicating the fitted model is a good representation of the observed data trend.
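One possible reading of the "2.01x" and "2.26x" annotations is an equivalent-scale multiplier: how much further along the baseline's fitted curve one must go to reach the loss the "Pondering" variant attains at the middle scale. The sketch below tests that reading under stated assumptions. It treats each x-axis label as parameters times training tokens, uses the approximate losses read from the chart in the Detailed Analysis, and fits a simple log-log line by least squares. The function names are hypothetical and the fit is not claimed to be the chart authors' procedure.

```python
import math

# ASSUMPTION: each x-axis label "N*D" is parameters times training tokens.
scales = [405e6 * 7e9, 834e6 * 15e9, 1.4e9 * 26e9]

# Approximate losses read from the chart (eyeballed, not source data).
baseline = {"GPT": [2.61, 2.34, 2.20], "LLaMA": [2.55, 2.31, 2.18]}
pondering_mid = {"GPT": 2.25, "LLaMA": 2.21}  # Pondering loss at 834M*15B


def fit_power_law(xs, ys):
    """Least-squares fit of log(y) = intercept + slope * log(x)."""
    lx = [math.log(x) for x in xs]
    ly = [math.log(y) for y in ys]
    mx, my = sum(lx) / len(lx), sum(ly) / len(ly)
    sxy = sum((u - mx) * (v - my) for u, v in zip(lx, ly))
    sxx = sum((u - mx) ** 2 for u in lx)
    slope = sxy / sxx
    return slope, my - slope * mx


def scale_multiplier(model):
    """Scale at which the fitted baseline matches the Pondering loss,
    expressed as a multiple of the middle scale."""
    slope, intercept = fit_power_law(scales, baseline[model])
    log_scale = (math.log(pondering_mid[model]) - intercept) / slope
    return math.exp(log_scale) / scales[1]


for model in baseline:
    print(f"{model}: about {scale_multiplier(model):.2f}x the middle scale")
```

With these eyeballed readings both multipliers come out near 2, in the same range as the annotated values, which is consistent with the equivalent-scale reading but does not confirm it.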

### Interpretation

This chart demonstrates two key findings in large language model research:

1. **Predictable Scaling:** Model performance, as measured by loss, improves predictably as computational scale (a product of parameters and likely data/training compute) increases. This supports the concept of scaling laws.

2. **Architectural Innovation Value:** The "Pondering" modification represents a significant architectural or training improvement. It provides a consistent performance boost *on top of* the gains from simply scaling up the base model. The fact that the ΔLoss for "Pondering" (blue and green lines in the subplot) remains relatively flat across scales suggests this improvement is robust and scales well—it doesn't diminish as models get larger.

The narrowing gap between standard GPT and LLaMA (`L_GPT - L_LLaMA`) could imply that architectural differences become less critical at very large scales, or that the specific GPT variant tested here scales slightly more efficiently than the LLaMA variant within this range. The "Pondering" technique appears to be a more impactful intervention than the choice between these two base architectures at these scales.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graph: Model Performance Comparison Across Sizes

### Overview

The image contains a dual-axis line graph comparing the performance of GPT and LLaMA models across three different sizes (405M*7B, 834M*15B, 1.4B*26B). The main chart shows loss values, while the lower subplot displays the difference in loss (ΔLoss) between model variants. The graph includes fitted lines, actual model performance, and pondering-adjusted models.

### Components/Axes

- **Main Chart**:

  - **X-axis**: Model sizes (405M*7B, 834M*15B, 1.4B*26B)

  - **Y-axis**: Loss (2.1–2.6)

  - **Legend**:

    - Dashed blue: GPT fitted

    - Dashed green: LLaMA fitted

    - Solid blue circles: GPT

    - Solid green circles: LLaMA

    - Solid blue stars: Pondering GPT

    - Solid green stars: Pondering LLaMA

  - **Annotations**:

    - "2.01x" (834M*15B)

    - "2.26x" (1.4B*26B)

- **Lower Subplot**:

  - **X-axis**: Same model sizes

  - **Y-axis**: ΔLoss (0.05–0.15)

  - **Lines**:

    - Blue: GPT - Pondering GPT

    - Green: LLaMA - Pondering LLaMA

    - Black dashed: L_GPT - L_LLaMA

### Detailed Analysis

1. **Main Chart Trends**:

   - **GPT fitted (dashed blue)**: Starts at ~2.6 (405M*7B) and decreases to ~2.2 (1.4B*26B).

   - **LLaMA fitted (dashed green)**: Starts at ~2.55 (405M*7B) and decreases to ~2.15 (1.4B*26B).

   - **GPT (solid blue circles)**: Starts at ~2.5 (405M*7B) and decreases to ~2.2 (1.4B*26B).

   - **LLaMA (solid green circles)**: Starts at ~2.45 (405M*7B) and decreases to ~2.15 (1.4B*26B).

   - **Pondering GPT (blue stars)**: Starts at ~2.45 (405M*7B) and decreases to ~2.1 (1.4B*26B).

   - **Pondering LLaMA (green stars)**: Starts at ~2.4 (405M*7B) and decreases to ~2.05 (1.4B*26B).

2. **Lower Subplot Trends**:

   - **GPT - Pondering GPT (blue)**: ΔLoss ~0.12 (405M*7B) to ~0.08 (1.4B*26B).

   - **LLaMA - Pondering LLaMA (green)**: ΔLoss ~0.08 (405M*7B) to ~0.05 (1.4B*26B).

   - **L_GPT - L_LLaMA (black dashed)**: ΔLoss ~0.06 (405M*7B) to ~0.03 (1.4B*26B).

### Key Observations

1. **Fitted vs. Actual Models**:

   - Fitted lines (dashed) are consistently higher than actual model lines (solid), suggesting overestimation or different evaluation criteria.

   - Pondering models (stars) show lower loss than base models (circles), indicating performance improvement.

2. **Model Size Impact**:

   - Loss decreases as model size increases for all variants.

   - The annotations 2.01x (834M*15B) and 2.26x (1.4B*26B) are too large to be ratios of GPT fitted to LLaMA fitted loss: the values read above give ratios of about 1.02 at both ends (2.6/2.55 and 2.2/2.15). They more plausibly mark a scale multiplier than a loss ratio.

3. **ΔLoss Analysis**:

   - GPT has a larger loss gap relative to its pondering version (blue line) than LLaMA has relative to its pondering version (green line).

   - The black dashed line (L_GPT - L_LLaMA) shows the smallest ΔLoss, suggesting minimal difference between GPT and LLaMA base models.

### Interpretation

- **Performance Trends**: Larger models generally perform better (lower loss), but the rate of improvement varies by model type. Pondering techniques reduce loss, with LLaMA showing a more significant reduction than GPT.

- **Fitted Line Discrepancy**: The fitted lines (dashed) being higher than actual models may indicate overfitting or a mismatch between training and evaluation data.

- **Ratio Annotations**: The annotations 2.01x and 2.26x do not match a ratio of GPT to LLaMA fitted loss, which stays near 1.02 for the values read in the Detailed Analysis, so on their own they do not show GPT becoming relatively worse as models scale.

- **ΔLoss Implications**: The smaller ΔLoss in pondering LLaMA (green line) highlights its effectiveness in narrowing the performance gap between GPT and LLaMA. The black dashed line's minimal ΔLoss implies that base GPT and LLaMA models are closer in performance than their fitted counterparts.

This data underscores the importance of model architecture and optimization techniques (e.g., pondering) in balancing performance and scalability. The fitted lines' divergence from actual results warrants further investigation into evaluation methodologies.
