## Flowchart: LLM-Based System with Tool Integration

### Overview

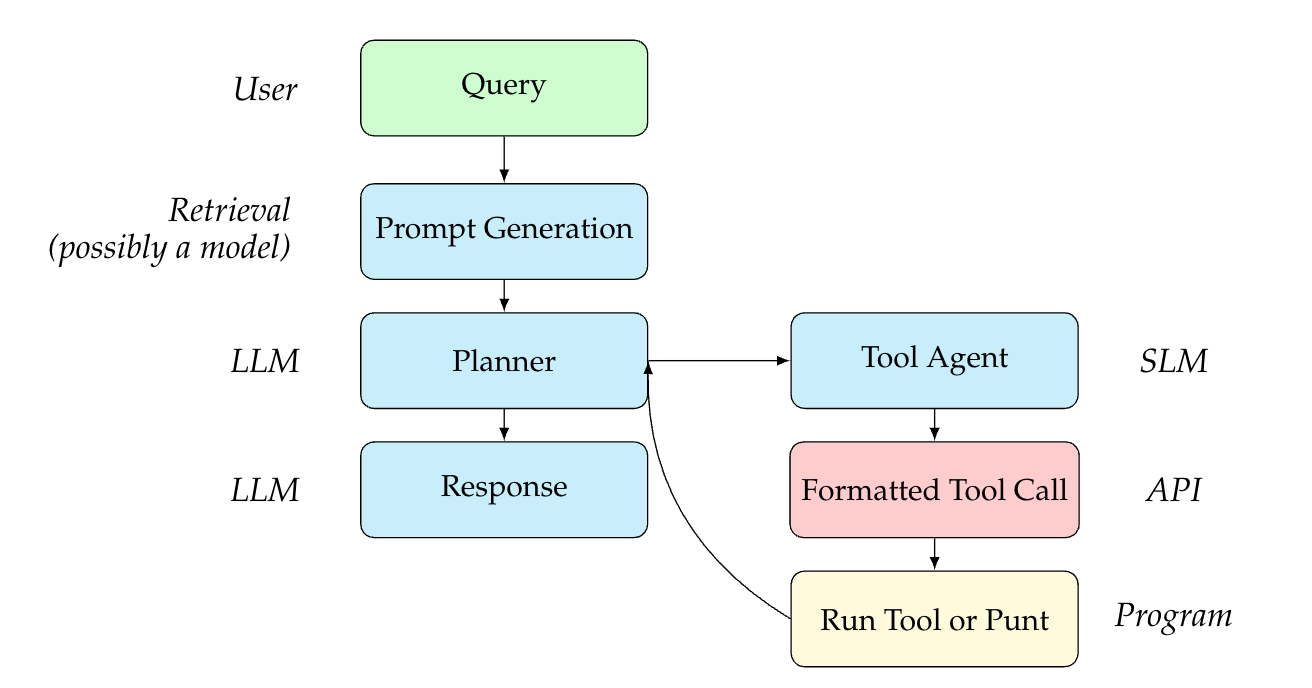

This flowchart illustrates a multi-stage process for handling user queries through a combination of Large Language Models (LLMs), Small Language Models (SLMs), and external tools. A Planner stage decides whether to answer the query directly or to route it through tool-assisted processing.

### Components/Axes

**Key Elements:**

1. **User** (Top-left, green box)

2. **Query** (Directly below User)

3. **Prompt Generation** (LLM component, blue box)

4. **Planner** (LLM component, blue box)

5. **Response** (LLM output, blue box)

6. **Tool Agent** (Blue box, right branch from Planner)

7. **Formatted Tool Call** (Red box, API interface)

8. **Run Tool or Punt** (Yellow box, program execution)

9. **API** (Pink box, rightmost)

10. **Program** (Pink box, rightmost)

11. **SLM** (Pink box, rightmost)

**Flow Direction:**

- Top-to-bottom vertical flow for initial processing

- Rightward horizontal flow for tool integration

- Circular feedback loop from "Run Tool or Punt" to "Planner"

### Detailed Analysis

**Color Coding:**

- Green: User/Query (input stage)

- Blue: Core LLM components (Prompt Generation, Planner, Response, Tool Agent)

- Red: API interface (Formatted Tool Call)

- Yellow: Program execution (Run Tool or Punt)

- Pink: API, Program, and SLM (external system components)

**Key Connections:**

1. User → Query → Prompt Generation → Planner

2. Planner branches to:

   - Direct Response (LLM output)

   - Tool Agent → Formatted Tool Call → API/Program

3. Program/Run Tool or Punt loops back to Planner

4. SLM receives output from API/Program
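
The connections listed above can be written down as a small directed graph, which makes the direct path and the feedback loop easy to check. This is a minimal sketch, not part of the original figure: the edges are transcribed from the list, each "A/B" entry is assumed to mean two separate nodes, and the helper names `reachable` and `on_cycle` are hypothetical.

```python
from collections import deque

# Edges transcribed from the "Key Connections" list.
# Assumption: "API/Program" and "Program/Run Tool or Punt" each name two nodes.
EDGES = {
    "User": ["Query"],
    "Query": ["Prompt Generation"],
    "Prompt Generation": ["Planner"],
    "Planner": ["Response", "Tool Agent"],
    "Tool Agent": ["Formatted Tool Call"],
    "Formatted Tool Call": ["API", "Program"],
    "API": ["SLM"],
    "Program": ["SLM", "Planner"],
    "Run Tool or Punt": ["Planner"],
}


def reachable(start, edges=EDGES):
    """Return every node reachable from start (breadth-first search)."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in edges.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def on_cycle(node, edges=EDGES):
    """True if node can get back to itself by following at least one edge."""
    return any(node in reachable(nxt, edges) for nxt in edges.get(node, []))


if __name__ == "__main__":
    print("Response" in reachable("User"))  # direct-response path exists
    print(on_cycle("Planner"))              # feedback loop passes through Planner
```

Under this reading, the list gives "Run Tool or Punt" an outgoing edge to the Planner but no incoming edge, so whichever box feeds it would have to be read off the figure itself.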

### Key Observations

1. **Conditional Logic:** The Planner determines whether to generate a direct response or invoke external tools.

2. **Tool Integration:** When tools are needed, the system uses an API to interact with external programs.

3. **Feedback Mechanism:** The "Run Tool or Punt" step creates a closed-loop system for iterative processing.

4. **Model Specialization:** The system distinguishes between the LLM (complex reasoning) and the SLM (specialized tasks).
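
The conditional logic and feedback mechanism described above could look roughly like the loop below. This is a toy sketch under stated assumptions: the `planner` rules, the `TOOLS` registry, the `ToolCall` fields, and the reading of "punt" as a fallback to a direct response are all illustrative and are not taken from the figure.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Union


@dataclass
class ToolCall:
    """Stand-in for the 'Formatted Tool Call' box (fields are hypothetical)."""
    tool: str
    argument: str


# Hypothetical registry; a real system would wrap an API, a program, or an SLM.
TOOLS: Dict[str, Callable[[str], str]] = {
    "word_count": lambda text: str(len(text.split())),
}


def planner(query: str, observations: List[str]) -> Union[str, ToolCall]:
    """Toy planner: return a final response (str) or request a tool."""
    if observations:
        return f"Result: {observations[-1]}"
    lowered = query.lower()
    if lowered.startswith("count words:"):
        return ToolCall("word_count", query.split(":", 1)[1].strip())
    if lowered.startswith("translate:"):
        return ToolCall("translate", query.split(":", 1)[1].strip())
    return f"Direct answer to: {query}"


def run_tool_or_punt(call: ToolCall) -> Optional[str]:
    """Run the tool if it is registered and succeeds; None signals a punt."""
    tool = TOOLS.get(call.tool)
    if tool is None:
        return None
    try:
        return tool(call.argument)
    except Exception:
        return None


def handle(query: str, max_steps: int = 3) -> str:
    """Planner loop: tool results are fed back until a response is produced."""
    observations: List[str] = []
    for _ in range(max_steps):
        decision = planner(query, observations)
        if isinstance(decision, str):
            return decision
        result = run_tool_or_punt(decision)
        if result is None:
            return f"Direct answer to: {query}"  # punt: fall back
        observations.append(result)
    return "Step limit reached"
```

Calling `handle("count words: a b c")` takes the tool branch once and then returns through the Planner, while `handle("translate: hola")` punts because no `translate` tool is registered in this sketch.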

### Interpretation

This architecture demonstrates a hybrid AI system that:

- Uses LLMs for initial query understanding and planning

- Leverages SLMs for specialized tasks through API integration

- Maintains flexibility through the "Punt" option (fallback to direct response)

- Enables iterative refinement via the feedback loop

The system appears designed for complex query handling where:

1. Direct LLM responses may be insufficient

2. External data/programs are required for accurate answers

3. Multiple processing stages are needed for optimal results

Notable design choices include:

- Separation of concerns between reasoning (LLM) and execution (SLM/Program)

- Explicit error handling through the "Punt" mechanism

- Modular architecture allowing independent component updates
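
One way to picture the modularity noted above is a shared interface that the planning side depends on, with interchangeable backends behind it. The sketch below is an assumption for illustration only: the `Backend` protocol, the `EchoProgram` and `StubSLM` classes, and the `execute` helper are hypothetical names, and the figure does not specify any such interface.

```python
from typing import Protocol


class Backend(Protocol):
    """Hypothetical common interface for the API, Program, and SLM boxes."""

    def run(self, payload: str) -> str: ...


class EchoProgram:
    """Stub program backend: upper-cases its input."""

    def run(self, payload: str) -> str:
        return payload.upper()


class StubSLM:
    """Stub small-model backend: wraps its input in a marker string."""

    def run(self, payload: str) -> str:
        return f"summary({payload})"


def execute(backend: Backend, payload: str) -> str:
    """The caller sees only the interface, so backends can be swapped freely."""
    return backend.run(payload)
```

Because `execute` relies only on the `run` method, replacing `EchoProgram` with `StubSLM` (or with a real API client) would not require changes on the planning side.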