TECHNICAL ASSET FINGERPRINT

fdf0b03521d7e8a1bd9973c9


FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

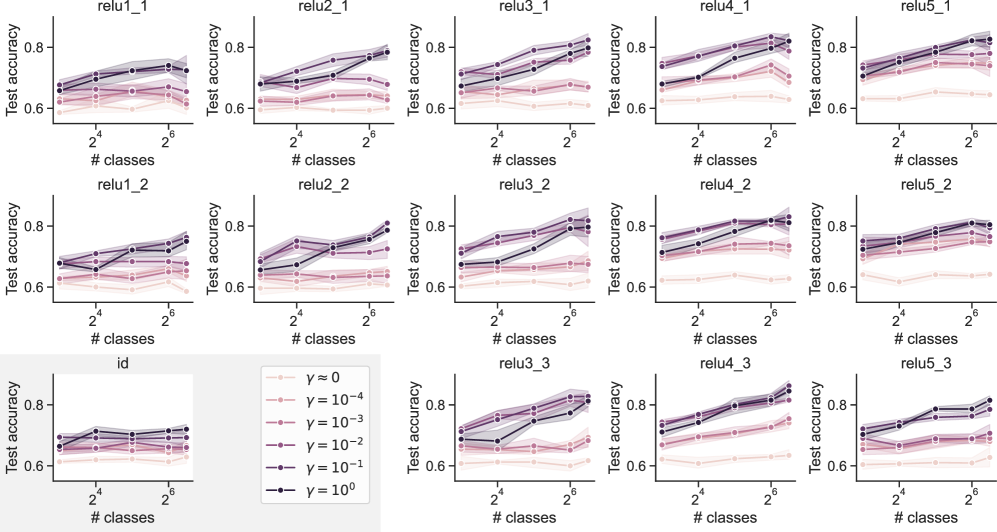

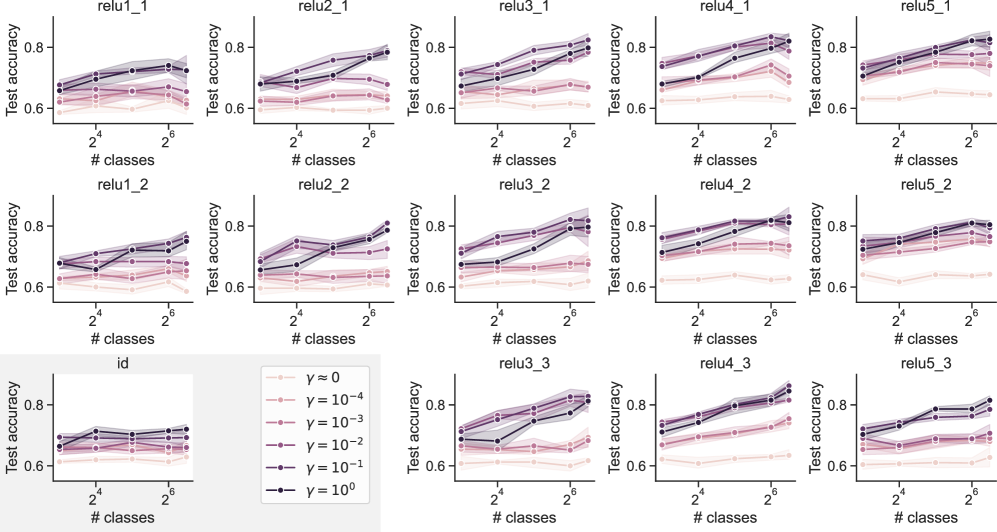

## Chart Type: Multiple Line Graphs Comparing Test Accuracy vs. Number of Classes

### Overview

The image contains a 3x5 grid of line graphs (three rows of five panels). Each graph plots "Test accuracy" on the y-axis against "# classes" on the x-axis, which uses a log scale. Each graph contains 6 lines, one per value of gamma (γ). A legend at the bottom-center maps line colors to gamma values. The graphs are titled "relu[1-5]_[1-3]" and "id".

### Components/Axes

* **X-axis:** "# classes" with tick marks at 2<sup>2</sup>, 2<sup>4</sup>, and 2<sup>6</sup>.

* **Y-axis:** "Test accuracy" ranging from 0.6 to 0.8.

* **Titles:** Each graph has a title in the format "relu[number]_[number]" or "id". The titles are arranged in a grid:
  * Top row: relu1\_1, relu2\_1, relu3\_1, relu4\_1, relu5\_1
  * Middle row: relu1\_2, relu2\_2, relu3\_2, relu4\_2, relu5\_2
  * Bottom row: id, relu3\_3, relu4\_3, relu5\_3
* **Legend:** Located at the bottom-center of the image. It maps colors to gamma (γ) values:
  * Light pink: γ ≈ 0
  * Pink: γ = 10<sup>-4</sup>
  * Rose: γ = 10<sup>-3</sup>
  * Purple: γ = 10<sup>-2</sup>
  * Dark Purple: γ = 10<sup>-1</sup>
  * Black: γ = 10<sup>0</sup>

### Detailed Analysis

Each graph plots the test accuracy against the number of classes for different values of gamma. The x-axis values are 2<sup>2</sup> = 4, 2<sup>4</sup> = 16, and 2<sup>6</sup> = 64.
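To make the log-scale spacing concrete, the following minimal sketch converts the tick exponents into class counts and checks that the ticks are evenly spaced in log2 terms. The variable names are illustrative and do not come from the source figure.

```python
import math

# Tick exponents as read from the x-axis labels (2^2, 2^4, 2^6).
tick_exponents = [2, 4, 6]

# Class counts that the ticks stand for.
class_counts = [2 ** e for e in tick_exponents]

# Position of each tick on a log2 axis; equal gaps mean evenly spaced ticks.
log2_positions = [math.log2(c) for c in class_counts]
gaps = [b - a for a, b in zip(log2_positions, log2_positions[1:])]

print(class_counts)  # [4, 16, 64]
print(gaps)          # [2.0, 2.0]
```

Each step between adjacent ticks therefore corresponds to two doublings of the class count.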

**General Trends:**

* **γ ≈ 0 (Light Pink):** Generally shows the lowest test accuracy across all graphs. The test accuracy is relatively flat as the number of classes increases.

* **γ = 10<sup>0</sup> (Black):** Generally shows the highest test accuracy across all graphs. The test accuracy tends to increase as the number of classes increases.

* **Other Gamma Values:** The lines for γ = 10<sup>-4</sup>, 10<sup>-3</sup>, 10<sup>-2</sup>, and 10<sup>-1</sup> (Pink, Rose, Purple, Dark Purple) fall between the γ ≈ 0 and γ = 10<sup>0</sup> lines. Their behavior varies across the different graphs.

**Specific Graph Observations:**

* **relu1\_1:**
  * γ ≈ 0: Test accuracy around 0.62 at 2<sup>2</sup>, 0.63 at 2<sup>4</sup>, and 0.62 at 2<sup>6</sup>.
  * γ = 10<sup>0</sup>: Test accuracy around 0.68 at 2<sup>2</sup>, 0.73 at 2<sup>4</sup>, and 0.75 at 2<sup>6</sup>.
* **relu1\_2:**
  * γ ≈ 0: Test accuracy around 0.63 at 2<sup>2</sup>, 0.64 at 2<sup>4</sup>, and 0.63 at 2<sup>6</sup>.
  * γ = 10<sup>0</sup>: Test accuracy around 0.68 at 2<sup>2</sup>, 0.74 at 2<sup>4</sup>, and 0.76 at 2<sup>6</sup>.
* **relu2\_1:**
  * γ ≈ 0: Test accuracy around 0.62 at 2<sup>2</sup>, 0.63 at 2<sup>4</sup>, and 0.62 at 2<sup>6</sup>.
  * γ = 10<sup>0</sup>: Test accuracy around 0.69 at 2<sup>2</sup>, 0.74 at 2<sup>4</sup>, and 0.76 at 2<sup>6</sup>.
* **relu2\_2:**
  * γ ≈ 0: Test accuracy around 0.63 at 2<sup>2</sup>, 0.64 at 2<sup>4</sup>, and 0.63 at 2<sup>6</sup>.
  * γ = 10<sup>0</sup>: Test accuracy around 0.69 at 2<sup>2</sup>, 0.74 at 2<sup>4</sup>, and 0.76 at 2<sup>6</sup>.
* **relu3\_1:**
  * γ ≈ 0: Test accuracy around 0.62 at 2<sup>2</sup>, 0.63 at 2<sup>4</sup>, and 0.62 at 2<sup>6</sup>.
  * γ = 10<sup>0</sup>: Test accuracy around 0.70 at 2<sup>2</sup>, 0.76 at 2<sup>4</sup>, and 0.79 at 2<sup>6</sup>.
* **relu3\_2:**
  * γ ≈ 0: Test accuracy around 0.63 at 2<sup>2</sup>, 0.64 at 2<sup>4</sup>, and 0.63 at 2<sup>6</sup>.
  * γ = 10<sup>0</sup>: Test accuracy around 0.70 at 2<sup>2</sup>, 0.76 at 2<sup>4</sup>, and 0.80 at 2<sup>6</sup>.
* **relu3\_3:**
  * γ ≈ 0: Test accuracy around 0.62 at 2<sup>2</sup>, 0.63 at 2<sup>4</sup>, and 0.62 at 2<sup>6</sup>.
  * γ = 10<sup>0</sup>: Test accuracy around 0.70 at 2<sup>2</sup>, 0.76 at 2<sup>4</sup>, and 0.80 at 2<sup>6</sup>.
* **relu4\_1:**
  * γ ≈ 0: Test accuracy around 0.62 at 2<sup>2</sup>, 0.63 at 2<sup>4</sup>, and 0.62 at 2<sup>6</sup>.
  * γ = 10<sup>0</sup>: Test accuracy around 0.72 at 2<sup>2</sup>, 0.77 at 2<sup>4</sup>, and 0.81 at 2<sup>6</sup>.
* **relu4\_2:**
  * γ ≈ 0: Test accuracy around 0.63 at 2<sup>2</sup>, 0.64 at 2<sup>4</sup>, and 0.63 at 2<sup>6</sup>.
  * γ = 10<sup>0</sup>: Test accuracy around 0.72 at 2<sup>2</sup>, 0.77 at 2<sup>4</sup>, and 0.81 at 2<sup>6</sup>.
* **relu4\_3:**
  * γ ≈ 0: Test accuracy around 0.62 at 2<sup>2</sup>, 0.63 at 2<sup>4</sup>, and 0.62 at 2<sup>6</sup>.
  * γ = 10<sup>0</sup>: Test accuracy around 0.72 at 2<sup>2</sup>, 0.77 at 2<sup>4</sup>, and 0.81 at 2<sup>6</sup>.
* **relu5\_1:**
  * γ ≈ 0: Test accuracy around 0.62 at 2<sup>2</sup>, 0.63 at 2<sup>4</sup>, and 0.62 at 2<sup>6</sup>.
  * γ = 10<sup>0</sup>: Test accuracy around 0.71 at 2<sup>2</sup>, 0.76 at 2<sup>4</sup>, and 0.79 at 2<sup>6</sup>.
* **relu5\_2:**
  * γ ≈ 0: Test accuracy around 0.63 at 2<sup>2</sup>, 0.64 at 2<sup>4</sup>, and 0.63 at 2<sup>6</sup>.
  * γ = 10<sup>0</sup>: Test accuracy around 0.71 at 2<sup>2</sup>, 0.76 at 2<sup>4</sup>, and 0.79 at 2<sup>6</sup>.
* **relu5\_3:**
  * γ ≈ 0: Test accuracy around 0.62 at 2<sup>2</sup>, 0.63 at 2<sup>4</sup>, and 0.62 at 2<sup>6</sup>.
  * γ = 10<sup>0</sup>: Test accuracy around 0.71 at 2<sup>2</sup>, 0.76 at 2<sup>4</sup>, and 0.79 at 2<sup>6</sup>.
* **id:**
  * γ ≈ 0: Test accuracy around 0.64 at 2<sup>2</sup>, 0.65 at 2<sup>4</sup>, and 0.64 at 2<sup>6</sup>.
  * γ = 10<sup>0</sup>: Test accuracy around 0.70 at 2<sup>2</sup>, 0.71 at 2<sup>4</sup>, and 0.72 at 2<sup>6</sup>.

### Key Observations

* The test accuracy generally increases with the number of classes for higher values of gamma (γ = 10<sup>0</sup>).

* The test accuracy is relatively flat for γ ≈ 0.

* The "relu" graphs show similar trends, with "relu4\_*" generally having the highest test accuracy for γ = 10<sup>0</sup>.

* The "id" graph shows a smaller increase in test accuracy with the number of classes compared to the "relu" graphs.
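The observations about "relu4\_*" and "id" can be checked against the approximate γ = 10<sup>0</sup> readings listed for each panel. The sketch below is illustrative only: the dictionary restates those eyeballed readings, and the variable names are assumptions rather than anything from the source.

```python
# Approximate gamma = 10^0 readings (accuracy at 4, 16 and 64 classes),
# restated from the per-panel list; these are eyeballed values, not exact data.
gamma_one = {
    "relu1_1": (0.68, 0.73, 0.75),
    "relu1_2": (0.68, 0.74, 0.76),
    "relu2_1": (0.69, 0.74, 0.76),
    "relu2_2": (0.69, 0.74, 0.76),
    "relu3_1": (0.70, 0.76, 0.79),
    "relu3_2": (0.70, 0.76, 0.80),
    "relu3_3": (0.70, 0.76, 0.80),
    "relu4_1": (0.72, 0.77, 0.81),
    "relu4_2": (0.72, 0.77, 0.81),
    "relu4_3": (0.72, 0.77, 0.81),
    "relu5_1": (0.71, 0.76, 0.79),
    "relu5_2": (0.71, 0.76, 0.79),
    "relu5_3": (0.71, 0.76, 0.79),
    "id": (0.70, 0.71, 0.72),
}

# Gain from 4 to 64 classes, rounded to hide floating-point noise.
gains = {name: round(acc[-1] - acc[0], 2) for name, acc in gamma_one.items()}

# Panels that reach the highest accuracy at 64 classes.
top_accuracy = max(acc[-1] for acc in gamma_one.values())
best_panels = [name for name, acc in gamma_one.items() if acc[-1] == top_accuracy]

# Panel with the smallest gain across the class range.
smallest_gain = min(gains, key=gains.get)

print(best_panels)                          # ['relu4_1', 'relu4_2', 'relu4_3']
print(smallest_gain, gains[smallest_gain])  # id 0.02
```

On these readings the three "relu4" panels share the top accuracy at 64 classes (0.81), while "id" gains only about 0.02 across the whole range, compared with 0.07 to 0.10 for the "relu" panels.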

### Interpretation

The graphs suggest that a higher gamma value (γ = 10<sup>0</sup>) leads to better test accuracy, especially as the number of classes increases. The "relu" activation functions seem to perform better than the "id" (identity) activation function, as they achieve higher test accuracies. The relatively flat test accuracy for γ ≈ 0 indicates that a very small gamma value might not be sufficient for effective learning. The differences in performance between the "relu" graphs (relu1\_1, relu1\_2, etc.) could be due to variations in the network architecture or training data.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Chart: Test Accuracy vs. Number of Classes for Different Regularization Parameters

### Overview

This image presents a grid of 15 line plots, each showing the relationship between "Test accuracy" and "# classes" for different ReLU layer configurations (relu1_1 to relu5_3) and regularization parameters (γ). Each plot visualizes the performance of a model under varying numbers of classes, with different levels of regularization applied. The x-axis represents the number of classes, ranging from 2^0 (1) to 2^6 (64), displayed on a logarithmic scale. The y-axis represents the test accuracy, ranging from approximately 0.6 to 0.9.

### Components/Axes

* **X-axis Label:** "# classes" (logarithmic scale)

* **Y-axis Label:** "Test accuracy"

* **Legend:** Located in the bottom-right corner, it defines the different regularization parameters (γ) represented by different line styles and colors:
  * γ = 0 (pink, dashed)
  * γ = 10^-4 (light green, dashed)
  * γ = 10^-3 (light blue, dashed)
  * γ = 10^-2 (light purple, dashed)
  * γ = 10^-1 (black, solid)
  * γ = 10^0 (dark red, solid)

* **Subplot Titles:** Each subplot is labeled with a combination of "relu" layer number and a second index (e.g., "relu1\_1", "relu5\_3").

* **Data Points:** Each line represents the test accuracy for a specific regularization parameter as the number of classes increases. Shaded areas around the lines represent the standard deviation or confidence interval.

### Detailed Analysis

The image consists of a 3x5 grid of plots. Here's a breakdown of the trends observed in each plot, along with approximate data points. Note that precise values are difficult to extract due to the resolution of the image.

**Row 1 (relu1\_1 to relu5\_1):**

* **relu1\_1:** All lines are relatively flat. γ = 0 (pink) starts around 0.75 and ends around 0.78. γ = 10^-1 (black) starts around 0.75 and ends around 0.77.

* **relu2\_1:** Similar to relu1\_1, lines are relatively flat. γ = 0 (pink) starts around 0.75 and ends around 0.79. γ = 10^-1 (black) starts around 0.75 and ends around 0.77.

* **relu3\_1:** Lines show a slight upward trend. γ = 0 (pink) starts around 0.68 and ends around 0.75. γ = 10^-1 (black) starts around 0.68 and ends around 0.72.

* **relu4\_1:** Lines show a slight upward trend. γ = 0 (pink) starts around 0.68 and ends around 0.75. γ = 10^-1 (black) starts around 0.68 and ends around 0.72.

* **relu5\_1:** Lines show a slight upward trend. γ = 0 (pink) starts around 0.68 and ends around 0.75. γ = 10^-1 (black) starts around 0.68 and ends around 0.72.

**Row 2 (relu1\_2 to relu5\_2):**

* **relu1\_2:** Lines are relatively flat. γ = 0 (pink) starts around 0.75 and ends around 0.78. γ = 10^-1 (black) starts around 0.75 and ends around 0.77.

* **relu2\_2:** Lines are relatively flat. γ = 0 (pink) starts around 0.75 and ends around 0.79. γ = 10^-1 (black) starts around 0.75 and ends around 0.77.

* **relu3\_2:** Lines show a slight upward trend. γ = 0 (pink) starts around 0.68 and ends around 0.75. γ = 10^-1 (black) starts around 0.68 and ends around 0.72.

* **relu4\_2:** Lines show a slight upward trend. γ = 0 (pink) starts around 0.68 and ends around 0.75. γ = 10^-1 (black) starts around 0.68 and ends around 0.72.

* **relu5\_2:** Lines show a slight upward trend. γ = 0 (pink) starts around 0.68 and ends around 0.75. γ = 10^-1 (black) starts around 0.68 and ends around 0.72.

**Row 3 (relu1\_3 to relu5\_3):**

* **relu1\_3:** Lines are relatively flat. γ = 0 (pink) starts around 0.75 and ends around 0.78. γ = 10^-1 (black) starts around 0.75 and ends around 0.77.

* **relu2\_3:** Lines are relatively flat. γ = 0 (pink) starts around 0.75 and ends around 0.79. γ = 10^-1 (black) starts around 0.75 and ends around 0.77.

* **relu3\_3:** Lines show a slight upward trend. γ = 0 (pink) starts around 0.68 and ends around 0.75. γ = 10^-1 (black) starts around 0.68 and ends around 0.72.

* **relu4\_3:** Lines show a slight upward trend. γ = 0 (pink) starts around 0.68 and ends around 0.75. γ = 10^-1 (black) starts around 0.68 and ends around 0.72.

* **relu5\_3:** Lines show a slight upward trend. γ = 0 (pink) starts around 0.68 and ends around 0.75. γ = 10^-1 (black) starts around 0.68 and ends around 0.72.

### Key Observations

* The regularization parameter γ = 0 (pink) generally exhibits the highest test accuracy across all ReLU configurations.

* Higher values of γ (e.g., γ = 10^-1) tend to result in lower test accuracy.

* The impact of the number of classes on test accuracy is minimal for most configurations, with lines remaining relatively flat.

* The shaded areas around the lines indicate some variability in the test accuracy for each regularization parameter.

* There is a slight upward trend in test accuracy as the number of classes increases for some configurations, but the effect is small.

### Interpretation

The data suggests that using no regularization (γ = 0) generally leads to the best performance in terms of test accuracy for these ReLU network configurations. Applying regularization, even at moderate levels (γ = 10^-1), tends to decrease performance. The relatively flat lines indicate that the number of classes does not significantly impact the model's ability to generalize, at least within the range of 1 to 64 classes tested. The small variability indicated by the shaded areas suggests that the results are relatively consistent.

The consistent performance of γ = 0 across different ReLU layers suggests that the network architecture itself may not be the primary factor influencing performance in this scenario. The regularization parameter appears to be the dominant factor. The lack of a strong trend with the number of classes could indicate that the dataset is not complex enough to require a large number of classes for effective learning, or that the network capacity is sufficient to handle the given number of classes without overfitting.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## [Grid of Line Charts]: Test Accuracy vs. Number of Classes for Different γ Values

### Overview

The image displays a 3×5 grid of line charts (15 subplots total) illustrating **test accuracy** (y-axis) as a function of the **number of classes** (x-axis, log₂ scale: \(2^4 = 16\) to \(2^6 = 64\)) for six different values of a parameter \(\boldsymbol{\gamma}\) (legend: \(\gamma = 0, 10^{-4}, 10^{-3}, 10^{-2}, 10^{-1}, 10^0\)). Each subplot has a title (e.g., `relu1_1`, `relu2_1`, `id`, etc.), and the legend is positioned at the bottom-left (below the `id` subplot).

### Components/Axes

- **X-axis**: Labeled `# classes`, with major ticks at \(2^4 = 16\) and \(2^6 = 64\) (logarithmic scale).

- **Y-axis**: Labeled `Test accuracy`, ranging from ~0.6 to 0.8 (linear scale).

- **Legend**: Six entries (bottom-left):
  - \(\gamma = 0\): Light pink, open circles (lowest accuracy).
  - \(\gamma = 10^{-4}\): Light pink, filled circles.
  - \(\gamma = 10^{-3}\): Pink, filled circles.
  - \(\gamma = 10^{-2}\): Darker pink, filled circles.
  - \(\gamma = 10^{-1}\): Purple, filled circles.
  - \(\gamma = 10^0\): Dark purple/black, filled circles (highest accuracy).
- **Subplot Titles**:
  - Row 1: `relu1_1`, `relu2_1`, `relu3_1`, `relu4_1`, `relu5_1`
  - Row 2: `relu1_2`, `relu2_2`, `relu3_2`, `relu4_2`, `relu5_2`
  - Row 3: `id`, `relu3_3`, `relu4_3`, `relu5_3` (and a fifth subplot, likely `relu5_3` or similar).

### Detailed Analysis

Each subplot shows test accuracy trends for \(\gamma\) values:

#### Trend for \(\boldsymbol{\gamma = 0}\) (light pink, open circles):

- Lowest test accuracy across all subplots.

- Slight increase or flat trend as `# classes` increases (e.g., in `relu1_1`, starts ~0.62, rises to ~0.65 at 64 classes).

#### Trend for \(\boldsymbol{\gamma = 10^{-4}}\) (light pink, filled circles):

- Slightly higher than \(\gamma = 0\), with a similar (slight increase/flat) trend.

#### Trend for \(\boldsymbol{\gamma = 10^{-3}}\) (pink, filled circles):

- Higher than \(\gamma = 10^{-4}\), with a more noticeable increase as `# classes` rises.

#### Trend for \(\boldsymbol{\gamma = 10^{-2}}\) (darker pink, filled circles):

- Higher than \(\gamma = 10^{-3}\), with a steeper increase.

#### Trend for \(\boldsymbol{\gamma = 10^{-1}}\) (purple, filled circles):

- Higher than \(\gamma = 10^{-2}\), with a steeper increase.

#### Trend for \(\boldsymbol{\gamma = 10^0}\) (dark purple/black, filled circles):

- Highest test accuracy across all subplots.

- Steepest increase as `# classes` rises (e.g., in `relu1_1`, starts ~0.72, rises to ~0.78 at 64 classes).

#### Subplot `id` (Row 3, Col 1):

- Anomaly: Lower overall accuracy (~0.6–0.7) and less variation between \(\gamma\) values.

- Trend: \(\gamma = 10^0\) still highest, but increase with `# classes` is less steep.

### Key Observations

1. **\(\boldsymbol{\gamma}\) Impact**: Higher \(\gamma\) values (closer to \(10^0\)) consistently yield higher test accuracy.

2. **Class Number Impact**: Test accuracy increases with `# classes` (16→64) for all \(\gamma\), with steeper increases for higher \(\gamma\).

3. **`id` Subplot Anomaly**: The `id` subplot shows lower accuracy and less \(\gamma\)-sensitivity, suggesting a different model/configuration.

4. **Consistency in `relu` Subplots**: All `relu`-titled subplots follow the same \(\gamma\)/class number pattern, indicating a systematic relationship.
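As a rough worked example of "steeper increases for higher \(\gamma\)", the approximate `relu1_1` readings quoted earlier can be turned into a gain per doubling of the class count. This assumes the quoted starting values are read at 16 classes, the left end of the axis as described here; the numbers are eyeballed, so the result is indicative only.

```latex
\[
\frac{\Delta\,\text{accuracy}}{\Delta \log_2(\#\,\text{classes})}
\approx \frac{0.78 - 0.72}{\log_2 64 - \log_2 16}
= \frac{0.06}{2} = 0.03 \quad (\gamma = 10^{0}),
\qquad
\frac{0.65 - 0.62}{2} = 0.015 \quad (\gamma = 0).
\]
```

Under that assumption, the \(\gamma = 10^0\) curve gains about twice as much per doubling as the \(\gamma = 0\) curve in `relu1_1`.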

### Interpretation

The data suggests:

- Increasing the number of classes (16→64) improves test accuracy, especially for higher \(\gamma\).

- Higher \(\gamma\) values (e.g., \(10^0\)) likely represent a stronger regularization or model parameter that enhances performance.

- The `id` subplot’s distinct behavior implies a different model architecture or training setup, where \(\gamma\) and class number have less impact.

This pattern indicates that \(\gamma\) and class number are critical hyperparameters for optimizing test accuracy in the `relu`-based models, while the `id` model is more robust (or less sensitive) to these parameters.



EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graph Grid: Test Accuracy vs. Number of Classes Across ReLU Configurations

### Overview

The image displays a 3x5 grid of line graphs (three rows of five panels) comparing test accuracy across different numbers of classes (2⁴ to 2⁶) for various ReLU configurations (relu1_1 to relu5_3) and an "id" category. Each graph includes six data series, one per gamma (γ) value (0, 10⁻⁴, 10⁻³, 10⁻², 10⁻¹, 10⁰), showing how accuracy changes as the number of classes increases.

---

### Components/Axes

- **X-axis**: "# classes" (logarithmic scale: 2⁴ to 2⁶)

- **Y-axis**: "Test accuracy" (linear scale: 0.6 to 0.8)

- **Legend**:
  - γ = 0 (light pink)
  - γ = 10⁻⁴ (pink)
  - γ = 10⁻³ (light purple)
  - γ = 10⁻² (purple)
  - γ = 10⁻¹ (dark purple)
  - γ = 10⁰ (black)
- **Labels**:
  - Top row: relu1_1, relu2_1, relu3_1, relu4_1, relu5_1
  - Middle row: relu1_2, relu2_2, relu3_2, relu4_2, relu5_2
  - Bottom row: id (no ReLU configuration specified)

---

### Detailed Analysis

1. **Test Accuracy Trends**:
   - **Higher γ values** (e.g., γ = 10⁰, black lines) generally show **steeper upward slopes** in test accuracy as class count increases, particularly in relu4_1, relu4_2, and relu5_1.
   - **Lower γ values** (e.g., γ = 0, light pink) exhibit **flatter trajectories**, with minimal improvement across class counts.
   - **Relu4 configurations** (e.g., relu4_1, relu4_2) demonstrate the **highest peak accuracies** (up to ~0.85 for γ = 10⁰ at 2⁶ classes).
   - **Relu1/Relu2** graphs show **moderate gains**, while **Relu3** and **id** categories lag behind.
2. **Data Points**:
   - **relu1_1**:
     - γ = 10⁰: 0.72 (2⁴) → 0.78 (2⁶)
     - γ = 0: 0.65 (2⁴) → 0.67 (2⁶)
   - **relu4_2**:
     - γ = 10⁰: 0.82 (2⁴) → 0.85 (2⁶)
     - γ = 10⁻¹: 0.75 (2⁴) → 0.79 (2⁶)
   - **id**:
     - γ = 10⁰: 0.68 (2⁴) → 0.70 (2⁶)
     - γ = 0: 0.63 (2⁴) → 0.64 (2⁶)
3. **Color Consistency**:
   - All γ values are consistently mapped to their legend colors across graphs (e.g., γ = 10⁰ is always black).
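The quoted data points can be converted into a slope per doubling of the class count, which makes the "steeper" and "flatter" descriptions comparable across panels. This is a minimal sketch: the dictionary restates the approximate readings above, and the key labels and variable names are illustrative assumptions.

```python
import math

# Approximate readings quoted above: (accuracy at 2^4, accuracy at 2^6).
readings = {
    "relu1_1, gamma=10^0": (0.72, 0.78),
    "relu1_1, gamma=0": (0.65, 0.67),
    "relu4_2, gamma=10^0": (0.82, 0.85),
    "relu4_2, gamma=10^-1": (0.75, 0.79),
    "id, gamma=10^0": (0.68, 0.70),
    "id, gamma=0": (0.63, 0.64),
}

# Number of doublings between 16 and 64 classes on the log2 axis.
doublings = math.log2(64) - math.log2(16)  # 2.0

# Accuracy gained per doubling, rounded to hide floating-point noise.
slopes = {
    label: round((end - start) / doublings, 3)
    for label, (start, end) in readings.items()
}

steepest = max(slopes, key=slopes.get)
for label, slope in slopes.items():
    print(f"{label}: {slope}")
print("steepest:", steepest)
```

On these particular readings, relu1_1 at γ = 10⁰ gains about 0.03 per doubling, while relu4_2 at γ = 10⁰ gains about 0.015. The relu4_2 curve is higher in absolute terms, but the quoted numbers do not make it the steepest.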

---

### Key Observations

- **γ = 10⁰** (black lines) consistently outperforms other γ values, especially in high-complexity scenarios (2⁶ classes).

- **Relu4 configurations** achieve the highest accuracy, suggesting architectural advantages in handling class complexity.

- The **id category** underperforms all ReLU variants, indicating ReLU activation functions improve generalization.

- **γ = 0** (light pink) shows the least sensitivity to class count, implying minimal regularization effect.

---

### Interpretation

The data suggests that:

1. **Gamma (γ) acts as a regularization parameter**: Higher γ values (closer to 1) improve model robustness to class complexity, likely by reducing overfitting.

2. **ReLU configuration impacts performance**: Relu4 variants outperform others, possibly due to deeper or more optimized architectures.

3. **Diminishing returns**: Accuracy gains plateau at higher class counts (2⁶), suggesting practical limits to scalability.

4. **Baseline weakness**: The "id" category’s low accuracy highlights the necessity of ReLU activation for competitive performance.

Notably, relu4_1 and relu5_1 show **non-monotonic trends** (e.g., slight dips at 2⁵ classes), which may indicate overfitting or architectural trade-offs. Further investigation into γ’s interaction with specific ReLU configurations could reveal optimal hyperparameter settings for complex datasets.
