
## Line Charts: Training Reward and KL Divergence Comparison

### Overview

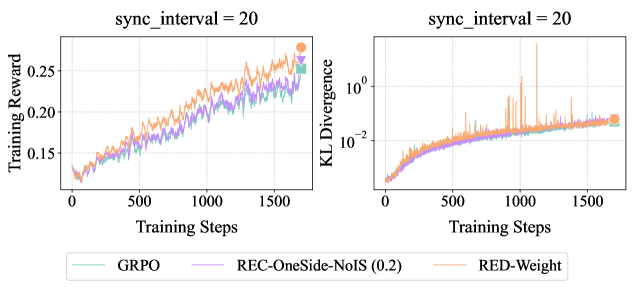

The image displays two side-by-side line charts comparing the performance of three different methods (GRPO, REC-OneSide-NoIS (0.2), RED-Weight) over the course of training. The left chart tracks "Training Reward," and the right chart tracks "KL Divergence." Both charts share the same x-axis ("Training Steps") and a legend located at the bottom center of the figure. The title "sync_interval = 20" appears above each chart.

### Components/Axes

* **Titles:**

    * Left Chart: "Training Reward"

    * Right Chart: "KL Divergence"

    * Above Both Charts: "sync_interval = 20"

* **X-Axis (Both Charts):**

    * Label: "Training Steps"

    * Scale: Linear, from 0 to 1500, with major ticks at 0, 500, 1000, and 1500.

* **Y-Axis (Left Chart - Training Reward):**

    * Label: "Training Reward"

    * Scale: Linear, with major ticks at 0.15, 0.20, and 0.25. The plotted range extends somewhat above the 0.25 tick, since the RED-Weight curve ends at roughly 0.27.

* **Y-Axis (Right Chart - KL Divergence):**

    * Label: "KL Divergence"

    * Scale: Logarithmic (base 10), spanning roughly 10⁻² to 10⁰ (0.01 to 1.0). The tallest RED-Weight spike rises above the 10⁰ level.

* **Legend (Bottom Center):**

    * **GRPO:** Teal line.

    * **REC-OneSide-NoIS (0.2):** Purple line.

    * **RED-Weight:** Orange line.

### Detailed Analysis

**Left Chart: Training Reward**

* **Trend Verification:** All three lines show a clear, consistent upward trend from step 0 to step 1500, indicating that the training reward increases for all methods as training progresses.

* **Data Series & Points:**

    * **RED-Weight (Orange):** Starts near 0.15 at step 0. Shows the steepest and most consistent increase. Ends at the highest point, approximately 0.27 at step 1500 (marked with an orange circle).

    * **GRPO (Teal):** Starts near 0.15 at step 0. Follows a similar upward trajectory, slightly below RED-Weight. Ends at approximately 0.25 at step 1500 (marked with a teal circle).

    * **REC-OneSide-NoIS (0.2) (Purple):** Starts near 0.15 at step 0. Increases at a slightly slower rate than the other two. Ends at approximately 0.23 at step 1500 (marked with a purple circle).

* **Spatial Grounding:** The lines are tightly clustered at the start (step 0) and gradually diverge, with RED-Weight consistently on top, GRPO in the middle, and REC-OneSide-NoIS (0.2) at the bottom from roughly step 500 onward.
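The endpoint readings above can be turned into absolute and relative reward gains with simple arithmetic. The values below are the approximate chart readings from this description (a start near 0.15 for every method), not exact logged data, so the results inherit that imprecision. The helper name `reward_gain` is hypothetical.

```python
# Approximate (start, end) Training Reward values read off the left chart.
# These are visual readings from the figure description, not logged data.
readings = {
    "RED-Weight": (0.15, 0.27),
    "GRPO": (0.15, 0.25),
    "REC-OneSide-NoIS (0.2)": (0.15, 0.23),
}


def reward_gain(start: float, end: float) -> tuple[float, float]:
    """Return (absolute gain, relative gain in percent of the start value)."""
    absolute = end - start
    relative = 100.0 * absolute / start
    return absolute, relative


for method, (start, end) in readings.items():
    abs_gain, rel_gain = reward_gain(start, end)
    print(f"{method}: +{abs_gain:.2f} ({rel_gain:.0f}% over the start value)")
```

With these readings, RED-Weight gains about 0.12 (roughly 80%), GRPO about 0.10 (roughly 67%), and REC-OneSide-NoIS (0.2) about 0.08 (roughly 53%).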

**Right Chart: KL Divergence**

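* **Trend Verification:** All three lines trend upward on the logarithmic axis, so KL Divergence grows over training, from about 10⁻² at step 0 to roughly 0.04 to 0.1 at step 1500. An upward trend on a log axis does not by itself imply exponential growth; only a roughly straight rising line would. The RED-Weight line exhibits significant volatility with sharp spikes.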

* **Data Series & Points:**

    * **RED-Weight (Orange):** Starts near 10⁻² (0.01) at step 0. Increases steadily but with very prominent, sharp upward spikes, particularly around steps 800, 1000, and 1100. The highest spike exceeds 10⁰ (1.0). Ends at approximately 0.1 at step 1500 (marked with an orange circle).

    * **GRPO (Teal):** Starts near 10⁻² (0.01) at step 0. Shows a smoother, more consistent increase compared to RED-Weight, with minor fluctuations. Ends at approximately 0.05 at step 1500 (marked with a teal circle).

    * **REC-OneSide-NoIS (0.2) (Purple):** Starts near 10⁻² (0.01) at step 0. Follows a path very similar to GRPO, slightly below it for most of the training. Ends at approximately 0.04 at step 1500 (marked with a purple circle).

* **Spatial Grounding:** The GRPO and REC-OneSide-NoIS (0.2) lines are closely intertwined throughout. The RED-Weight line is generally above them and is distinguished by its large, intermittent spikes that reach far above the other two series.
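Because the right chart uses a base-10 logarithmic axis, vertical distance on it measures a ratio rather than a difference. The sketch below converts the approximate start and end readings from this description into a fold change and the number of decades spanned (log10 of the ratio). The values are chart readings, not logged data, and the helper name `fold_change` is hypothetical.

```python
import math

# Approximate (start, end) KL Divergence readings from the right chart.
kl_readings = {
    "RED-Weight": (0.01, 0.1),
    "GRPO": (0.01, 0.05),
    "REC-OneSide-NoIS (0.2)": (0.01, 0.04),
}


def fold_change(start: float, end: float) -> tuple[float, float]:
    """Return (end / start ratio, number of base-10 decades spanned)."""
    ratio = end / start
    return ratio, math.log10(ratio)


for method, (start, end) in kl_readings.items():
    ratio, decades = fold_change(start, end)
    print(f"{method}: x{ratio:.1f}, {decades:.2f} decades")
```

By these readings, RED-Weight's KL Divergence grows about tenfold (one full decade), GRPO's about fivefold (about 0.70 decades), and REC-OneSide-NoIS (0.2)'s about fourfold (about 0.60 decades). A spike reaching 1.0 sits two full decades above the 0.01 starting level.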

### Key Observations

1. **Performance Trade-off:** The RED-Weight method achieves the highest final Training Reward but also exhibits the highest and most volatile KL Divergence.

2. **Stability vs. Aggressiveness:** GRPO and REC-OneSide-NoIS (0.2) show more stable and similar behavior in both metrics, with lower final rewards but also lower and smoother KL Divergence.

3. **Volatility Signature:** The KL Divergence chart for RED-Weight contains extreme, short-lived spikes not present in the other methods, suggesting periods of significant policy shift during its training.

4. **Convergence:** None of the methods has converged by the final step (1500): training reward is still rising and KL Divergence is still growing, with no clear plateau in either metric.
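Given a logged KL Divergence series, one simple way to locate short-lived spikes like those noted under Volatility Signature is to compare each value with the median of the values just before it. This is only one possible heuristic: the function name `find_spikes` and its `window` and `factor` defaults are hypothetical choices for illustration, not settings from the experiment, and the toy series is synthetic.

```python
from statistics import median


def find_spikes(values: list[float], window: int = 20, factor: float = 5.0) -> list[int]:
    """Return indices whose value exceeds `factor` times the median of the
    preceding `window` values (a trailing-median spike heuristic)."""
    spikes = []
    for i in range(window, len(values)):
        baseline = median(values[i - window:i])
        if baseline > 0 and values[i] > factor * baseline:
            spikes.append(i)
    return spikes


# Hypothetical toy series: a flat 0.05 baseline with one injected jump to 1.0.
series = [0.05] * 50
series[30] = 1.0
print(find_spikes(series))  # -> [30]
```

A trailing median is used rather than a mean so that a single spike does not inflate the baseline for the points that follow it; a smoothly rising series, like the GRPO and REC-OneSide-NoIS (0.2) curves are described to be, would produce no detections at these settings.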

### Interpretation

The data suggests a trade-off between reward optimization and policy stability for these training methods. **RED-Weight** appears to be the more aggressive optimization strategy: it pushes the policy further (higher KL Divergence) to achieve greater reward gains, but at the cost of training stability, as the dramatic spikes in divergence show. These spikes could indicate moments where the policy undergoes rapid, substantial changes.
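To make "moving the policy further" concrete: KL divergence measures how much one probability distribution differs from another, KL(P ‖ Q) = Σᵢ pᵢ log(pᵢ / qᵢ). The figure does not state which two distributions its KL Divergence compares (for example, the current policy against a reference or behavior policy), so the sketch below only illustrates the quantity itself on hypothetical next-token distributions over a three-token vocabulary.

```python
import math


def kl_divergence(p: list[float], q: list[float]) -> float:
    """KL(P || Q) = sum_i p_i * log(p_i / q_i), in nats.

    Terms with p_i == 0 contribute nothing; q_i must be > 0 wherever p_i > 0.
    """
    total = 0.0
    for p_i, q_i in zip(p, q):
        if p_i > 0:
            total += p_i * math.log(p_i / q_i)
    return total


# Hypothetical distributions: a reference, a slightly shifted one, and a heavily shifted one.
reference = [0.5, 0.3, 0.2]
small_shift = [0.55, 0.28, 0.17]
large_shift = [0.9, 0.05, 0.05]
print(kl_divergence(small_shift, reference))
print(kl_divergence(large_shift, reference))
```

The small shift gives roughly 0.005 nats and the large shift roughly 0.37 nats, nearly two orders of magnitude apart, which is why a logarithmic axis is a natural choice for plotting this quantity.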

In contrast, **GRPO** and **REC-OneSide-NoIS (0.2)** represent more conservative approaches. They yield more modest reward improvements but maintain a smoother, more controlled evolution of the policy (lower, more stable KL Divergence). The two track each other closely: their KL Divergence curves are nearly intertwined, and their final rewards differ by only about 0.02. This suggests that their underlying mechanisms may be similar, or that the 0.2 parameter in the latter effectively regularizes it toward GRPO-like behavior.

The "sync_interval = 20" label appears above both charts, indicating that this hyperparameter is held fixed across the comparison. Taken together, the charts suggest that method selection involves balancing the goal of maximizing reward against the risk of destabilizing the learned policy, with RED-Weight favoring the former and the other two methods favoring the latter.