
## Bar Chart: Communication Rounds for Federated Learning Algorithms

### Overview

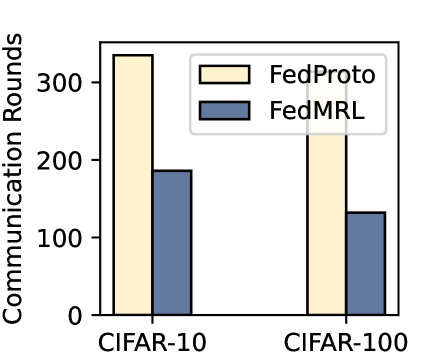

This bar chart compares the number of communication rounds required by two federated learning algorithms, FedProto and FedMRL, on two datasets: CIFAR-10 and CIFAR-100. The round count serves as a measure of convergence speed: fewer rounds means faster convergence.

### Components/Axes

* **X-axis:** Datasets - CIFAR-10 and CIFAR-100.

* **Y-axis:** Communication Rounds - Scale ranges from 0 to 350, with increments of 50.

* **Legend:**

  * FedProto (represented by a light yellow color)

  * FedMRL (represented by a dark blue color)

* **Chart Title:** Not explicitly present.

### Detailed Analysis

The chart consists of four bars, two for each dataset.

**CIFAR-10:**

* **FedProto:** The bar for FedProto on CIFAR-10 is approximately 350 communication rounds. The bar extends nearly to the top of the chart's y-axis.

* **FedMRL:** The bar for FedMRL on CIFAR-10 is approximately 180 communication rounds. It is significantly shorter than the FedProto bar.

**CIFAR-100:**

* **FedProto:** The bar for FedProto on CIFAR-100 is approximately 250 communication rounds.

* **FedMRL:** The bar for FedMRL on CIFAR-100 is approximately 130 communication rounds.

### Key Observations

* FedProto consistently requires more communication rounds than FedMRL for both datasets.

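* The absolute difference in communication rounds is larger on CIFAR-10 (about 170 rounds) than on CIFAR-100 (about 120 rounds), although the relative reduction is similar: FedMRL needs roughly half as many rounds as FedProto on both datasets.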

* Both algorithms require fewer communication rounds on CIFAR-100 compared to CIFAR-10.
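The observations above can be checked with a little arithmetic. The following is a minimal sketch that uses the approximate bar heights read off the chart; the values are visual estimates, not exact figures, and the `rounds` dictionary and `compare` helper are names introduced here purely for illustration.

```python
# Approximate bar heights read off the chart (communication rounds).
# These are visual estimates, not exact reported values.
rounds = {
    "CIFAR-10": {"FedProto": 350, "FedMRL": 180},
    "CIFAR-100": {"FedProto": 250, "FedMRL": 130},
}


def compare(rounds):
    """Return the absolute gap and relative reduction of FedMRL vs. FedProto."""
    summary = {}
    for dataset, by_algo in rounds.items():
        gap = by_algo["FedProto"] - by_algo["FedMRL"]
        reduction = gap / by_algo["FedProto"]  # fraction of rounds saved
        summary[dataset] = {"gap": gap, "reduction": round(reduction, 3)}
    return summary


summary = compare(rounds)
for dataset, stats in summary.items():
    print(f"{dataset}: gap={stats['gap']} rounds, reduction={stats['reduction']:.1%}")
```

With these estimates the absolute gap is larger on CIFAR-10 (170 versus 120 rounds), while the relative reduction is close to 48% on both datasets. Because the inputs are read by eye, small differences between the two percentages should not be over-interpreted.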

### Interpretation

The data suggests that FedMRL converges faster than FedProto on both CIFAR-10 and CIFAR-100, as indicated by the lower number of communication rounds required. The absolute gap is larger on CIFAR-10, but FedMRL needs roughly half as many rounds as FedProto on both datasets, so its relative advantage is about the same in each case. Both algorithms require fewer rounds on CIFAR-100 than on CIFAR-10. This cannot be attributed to CIFAR-100 being simpler: it has 100 classes against CIFAR-10's 10 and is generally the harder task, and the chart alone does not explain the difference. Overall, the chart indicates that FedMRL may be a preferable choice when minimizing communication costs is a priority.