## Grouped Bar Chart: Prediction Flip Rate Comparison for Mistral-7B Models

### Overview

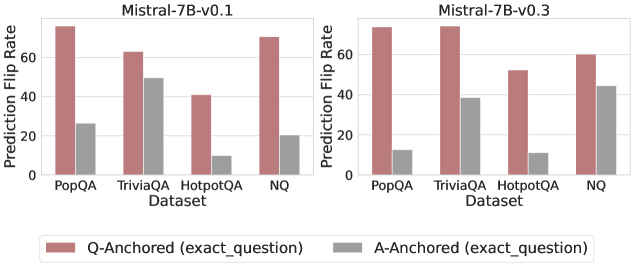

The image displays two side-by-side grouped bar charts comparing the "Prediction Flip Rate" of two versions of the Mistral-7B language model (v0.1 and v0.3) across four question-answering datasets. The charts evaluate model sensitivity to question phrasing by comparing two anchoring methods.

### Components/Axes

* **Chart Titles:** "Mistral-7B-v0.1" (left panel), "Mistral-7B-v0.3" (right panel).

* **Y-Axis (Both Panels):** Labeled "Prediction Flip Rate". The scale runs from 0 to 60 (v0.1) and 0 to 70 (v0.3), with major gridlines at intervals of 20.

* **X-Axis (Both Panels):** Labeled "Dataset". The four categories are: "PopQA", "TriviaQA", "HotpotQA", and "NQ".

* **Legend:** Positioned at the bottom center of the entire figure.

    * **Reddish-Brown Bar:** "Q-Anchored (exact_question)"

    * **Gray Bar:** "A-Anchored (exact_question)"

### Detailed Analysis

**Mistral-7B-v0.1 (Left Panel):**

* **PopQA:** Q-Anchored flip rate is the highest in this panel, approximately 75. A-Anchored is significantly lower, around 25.

* **TriviaQA:** Q-Anchored is approximately 65. A-Anchored is relatively high, around 50.

* **HotpotQA:** Q-Anchored is the lowest for this model version, approximately 40. A-Anchored is very low, around 10.

* **NQ:** Q-Anchored is high, approximately 70. A-Anchored is low, around 20.

**Mistral-7B-v0.3 (Right Panel):**

* **PopQA:** Q-Anchored remains very high, approximately 75. A-Anchored has decreased notably to around 12.

* **TriviaQA:** Q-Anchored has increased to approximately 75. A-Anchored has decreased to around 38.

* **HotpotQA:** Q-Anchored has increased to approximately 52. A-Anchored remains very low, around 10.

* **NQ:** Q-Anchored has decreased to approximately 60. A-Anchored has increased significantly to around 45.

**Trend Verification:**

* In both model versions, the **Q-Anchored (reddish-brown) bars are consistently taller** than the corresponding A-Anchored (gray) bars for every dataset.

* From v0.1 to v0.3, the Q-Anchored flip rate **increased** for TriviaQA and HotpotQA, **decreased** for NQ, and remained **stable** for PopQA.

* From v0.1 to v0.3, the A-Anchored flip rate **decreased** for PopQA and TriviaQA, **increased** for NQ, and remained **stable** for HotpotQA.
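The trend checks above can be reproduced with a few lines of arithmetic. The sketch below is illustrative only: the numbers are the approximate bar heights quoted in this description (not exact data from the figure), and the names `FLIP_RATES`, `q_always_above_a`, and `version_deltas` are hypothetical.

```python
# Approximate bar heights as read from the description above (not exact data).
# "Q" = Q-Anchored (exact_question), "A" = A-Anchored (exact_question).
FLIP_RATES = {
    "Mistral-7B-v0.1": {
        "PopQA": {"Q": 75, "A": 25},
        "TriviaQA": {"Q": 65, "A": 50},
        "HotpotQA": {"Q": 40, "A": 10},
        "NQ": {"Q": 70, "A": 20},
    },
    "Mistral-7B-v0.3": {
        "PopQA": {"Q": 75, "A": 12},
        "TriviaQA": {"Q": 75, "A": 38},
        "HotpotQA": {"Q": 52, "A": 10},
        "NQ": {"Q": 60, "A": 45},
    },
}


def q_always_above_a(rates):
    """Return True if Q-Anchored exceeds A-Anchored for every model and dataset."""
    return all(
        bars["Q"] > bars["A"]
        for per_dataset in rates.values()
        for bars in per_dataset.values()
    )


def version_deltas(rates, old="Mistral-7B-v0.1", new="Mistral-7B-v0.3"):
    """Return the change (new minus old) per dataset and anchoring method."""
    return {
        dataset: {
            method: rates[new][dataset][method] - rates[old][dataset][method]
            for method in ("Q", "A")
        }
        for dataset in rates[old]
    }


if __name__ == "__main__":
    print("Q above A everywhere:", q_always_above_a(FLIP_RATES))
    for dataset, delta in version_deltas(FLIP_RATES).items():
        print(f"{dataset}: Q {delta['Q']:+d}, A {delta['A']:+d}")
```

With these approximate values, a delta of 0 corresponds to "stable" (Q-Anchored on PopQA, A-Anchored on HotpotQA), a positive delta to "increased", and a negative delta to "decreased".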

### Key Observations

1. **Dominant Pattern:** The Q-Anchored method consistently results in a higher prediction flip rate than the A-Anchored method across all datasets and both model versions.

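2. **Largest Discrepancy:** The greatest difference between the two anchoring methods is observed in the **PopQA** dataset: roughly 63 points in v0.3 (75 vs. 12) and roughly 50 points in v0.1 (75 vs. 25), where NQ shows a gap of similar size (70 vs. 20).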

3. **Notable Change (v0.1 to v0.3):** The **NQ** dataset shows the largest shift. The Q-Anchored flip rate decreased (from about 70 to about 60), while the A-Anchored flip rate more than doubled (from about 20 to about 45), narrowing the gap between the two methods from roughly 50 points to roughly 15 in v0.3.

4. **Stable Low Point:** The **HotpotQA** dataset's A-Anchored flip rate remains consistently low (~10) across both model versions.
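The gap between the two anchoring methods can be checked directly from the approximate values. This is a minimal sketch, assuming the bar heights quoted in this description; `RATES`, `anchoring_gaps`, and `largest_gap_datasets` are hypothetical names introduced for illustration.

```python
# Approximate (Q-Anchored, A-Anchored) bar heights from the description above.
RATES = {
    "v0.1": {"PopQA": (75, 25), "TriviaQA": (65, 50), "HotpotQA": (40, 10), "NQ": (70, 20)},
    "v0.3": {"PopQA": (75, 12), "TriviaQA": (75, 38), "HotpotQA": (52, 10), "NQ": (60, 45)},
}


def anchoring_gaps(rates):
    """Return Q-Anchored minus A-Anchored for each model version and dataset."""
    return {
        version: {dataset: q - a for dataset, (q, a) in per_dataset.items()}
        for version, per_dataset in rates.items()
    }


def largest_gap_datasets(gaps_for_version):
    """Return every dataset that attains the maximum gap (ties included), sorted."""
    top = max(gaps_for_version.values())
    return sorted(ds for ds, gap in gaps_for_version.items() if gap == top)


if __name__ == "__main__":
    gaps = anchoring_gaps(RATES)
    for version, per_dataset in gaps.items():
        print(version, per_dataset, "largest:", largest_gap_datasets(per_dataset))
```

Because the inputs are visual estimates, small differences between gaps (a few points) should not be over-interpreted.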

### Interpretation

These data suggest that the model's predictions are generally more sensitive to variations in the question phrasing (Q-Anchored) than to variations in the answer phrasing (A-Anchored). A higher "Prediction Flip Rate" indicates lower robustness: the model changes its answer more frequently when the input is perturbed.
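The figure does not state how the metric is computed. As a rough sketch, assuming the flip rate is the percentage of examples whose prediction changes after a perturbation, it could be calculated as follows. The function name `prediction_flip_rate` and the sample answers are hypothetical and not taken from the source.

```python
def prediction_flip_rate(original_preds, perturbed_preds):
    """Percentage of examples whose prediction differs after perturbation.

    Assumed definition: both sequences are aligned by example, and a "flip"
    is any position where the two predictions are not equal.
    """
    if len(original_preds) != len(perturbed_preds):
        raise ValueError("prediction lists must have the same length")
    if not original_preds:
        raise ValueError("at least one example is required")
    flips = sum(o != p for o, p in zip(original_preds, perturbed_preds))
    return 100.0 * flips / len(original_preds)


if __name__ == "__main__":
    # Hypothetical answers before and after perturbing the input.
    before = ["Paris", "Rome", "Berlin", "Madrid"]
    after = ["Paris", "Milan", "Berlin", "Lisbon"]
    print(prediction_flip_rate(before, after))  # 2 of 4 changed -> 50.0
```

Under this assumed definition, a rate of 0 means the perturbation never changed a prediction, and a rate of 100 means it changed every prediction.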

The comparison between v0.1 and v0.3 suggests that model updates affect robustness differently on each dataset. On NQ, for example, the model became **more robust** to question perturbations (Q-Anchored flip rate fell from about 70 to about 60) but **less robust** to answer perturbations (A-Anchored flip rate rose from about 20 to about 45). The drop in A-Anchored flip rate for PopQA (about 25 to 12) and TriviaQA (about 50 to 38) in v0.3 suggests improved stability under the A-Anchored condition for those datasets. The consistently low A-Anchored flip rate for HotpotQA (about 10) might indicate that this dataset's answers are particularly deterministic or that the model's knowledge about these topics is very stable. Overall, the charts indicate that evaluating model robustness requires looking at multiple datasets and perturbation types, because improvements are not uniform.