
## Line Chart: Test Loss vs. Gradient Updates for Different Dimensionalities

### Overview

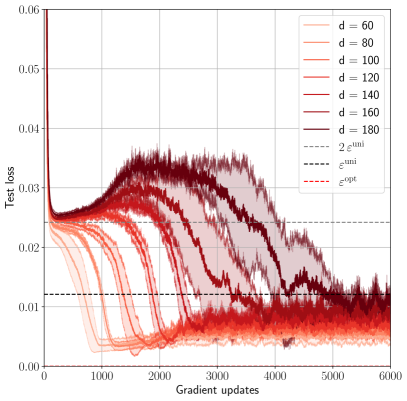

The figure is a line chart of test loss against the number of gradient updates, with one curve per value of the dimensionality parameter 'd'. Three horizontal reference lines mark theoretical loss levels: 'ε<sup>uni</sup>', 'ε<sup>opt</sup>', and '2ε<sup>uni</sup>'.

### Components/Axes

* **X-axis:** Gradient updates, ranging from approximately 0 to 6000, labeled as "Gradient updates".

* **Y-axis:** Test loss, ranging from approximately 0 to 0.06, labeled as "Test loss".

* **Legend:** Located in the top-right corner. The curves for 'd' are drawn in shades of red that darken as 'd' increases, and the reference lines use distinct dash styles:

    * d = 60 (lightest red)

    * d = 80

    * d = 100

    * d = 120

    * d = 140

    * d = 160

    * d = 180 (darkest red)

    * ε<sup>uni</sup> (black dashed line)

    * ε<sup>opt</sup> (red dashed line)

    * 2ε<sup>uni</sup> (red dotted line)

### Detailed Analysis

The values below are approximate readings of each curve, giving test loss as a function of gradient updates for each dimensionality (d).

* **d = 60:** Starts at approximately 0.055 and drops rapidly to around 0.02 within the first 1000 gradient updates. It then fluctuates mostly between 0.02 and 0.03, with occasional peaks near 0.04, before decreasing again and ending around 0.015.

* **d = 80:** Starts at approximately 0.05 and drops to around 0.02 within the first 1000 updates. It fluctuates in a similar way to d = 60, with peaks near 0.035, and ends around 0.015.

* **d = 100:** Starts at approximately 0.048, drops to around 0.02 within the first 1000 updates, fluctuates mostly between 0.02 and 0.035 with occasional peaks near 0.04, and ends around 0.015.

* **d = 120:** Starts at approximately 0.045, drops to around 0.02 within the first 1000 updates, fluctuates mostly between 0.02 and 0.035 with occasional peaks near 0.04, and ends around 0.015.

* **d = 140:** Starts at approximately 0.04, drops to around 0.02 within the first 1000 updates, fluctuates mostly between 0.02 and 0.035 with occasional peaks near 0.04, and ends around 0.015.

* **d = 160:** Starts at approximately 0.038, drops to around 0.02 within the first 1000 updates, fluctuates mostly between 0.02 and 0.035 with occasional peaks near 0.04, and ends around 0.015.

* **d = 180:** Starts at approximately 0.035, drops to around 0.02 within the first 1000 updates, fluctuates mostly between 0.02 and 0.035 with occasional peaks near 0.04, and ends around 0.015.

All curves follow the same pattern: a rapid initial decrease in test loss followed by fluctuations around a roughly stable level. As 'd' increases, the initial test loss decreases, from about 0.055 at d = 60 to about 0.035 at d = 180.

* **ε<sup>uni</sup>:** A horizontal dashed black line at approximately 0.028.

* **ε<sup>opt</sup>:** A horizontal dashed red line at approximately 0.022.

* **2ε<sup>uni</sup>:** A horizontal dotted red line at approximately 0.056.
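The approximate readings listed above can be cross-checked against the reference lines with a few lines of arithmetic. The following is a minimal sketch, assuming the values are rough readings from the chart rather than the underlying data; the variable names (`start_loss`, `EPS_UNI`, and so on) are illustrative and do not come from the source.

```python
# Approximate values read from the chart description (not the raw data).
# Keys are the dimensionality d; values are the test loss at the first update.
start_loss = {60: 0.055, 80: 0.050, 100: 0.048, 120: 0.045,
              140: 0.040, 160: 0.038, 180: 0.035}

EPS_UNI = 0.028      # black dashed reference line
EPS_OPT = 0.022      # red dashed reference line
TWO_EPS_UNI = 0.056  # red dotted reference line
END_LOSS = 0.015     # every curve is reported to end near this value


def is_strictly_decreasing(values):
    """Return True if each value is smaller than the one before it."""
    return all(a > b for a, b in zip(values, values[1:]))


starts_in_d_order = [start_loss[d] for d in sorted(start_loss)]

summary = {
    # The dotted line should sit at twice the height of the black dashed line.
    "two_eps_uni_consistent": abs(2 * EPS_UNI - TWO_EPS_UNI) < 1e-9,
    # Larger d should correspond to a lower starting loss.
    "start_decreases_with_d": is_strictly_decreasing(starts_in_d_order),
    # Every curve should start below the 2 * eps_uni line.
    "all_starts_below_two_eps_uni": all(v < TWO_EPS_UNI for v in start_loss.values()),
    # The reported end value should lie below the eps_opt line.
    "end_below_eps_opt": END_LOSS < EPS_OPT,
}

for name, ok in summary.items():
    print(f"{name}: {ok}")
```

Because the inputs are eyeballed readings, these checks only confirm that the description is internally consistent; they say nothing about the precision of the underlying experiment.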

### Key Observations

* Most of the decrease in test loss occurs within the first 1000 gradient updates; after that, the curves fluctuate around a plateau and decline only slightly toward the end.

* The fluctuations in test loss suggest the presence of noise or instability during the training process.

* Higher dimensionality (larger 'd') generally results in a lower initial test loss.

* The lines representing different 'd' values converge towards similar test loss levels as the number of gradient updates increases.

* The theoretical loss values (ε<sup>uni</sup>, ε<sup>opt</sup>, 2ε<sup>uni</sup>) provide benchmarks for evaluating the performance of the model. Most of the lines stay below 2ε<sup>uni</sup>.

### Interpretation

The chart demonstrates the impact of dimensionality ('d') on the test loss during gradient-based optimization. The initial decrease in test loss indicates that the model is learning and improving its performance. The subsequent fluctuations suggest that the optimization process is not perfectly smooth and may be affected by factors such as learning rate, batch size, or data noise.

The convergence of the curves for different 'd' values suggests that, given enough gradient updates, the model reaches similar performance regardless of dimensionality. Higher dimensionality is associated with a lower starting loss, but every curve reaches roughly 0.02 within the first 1000 updates, so the chart alone does not show that larger 'd' learns faster.

The theoretical loss values (ε<sup>uni</sup>, ε<sup>opt</sup>, 2ε<sup>uni</sup>) likely represent bounds or expected values for the test loss under certain assumptions. Comparing the observed test loss to these values indicates how close the optimization gets to them. The curves generally stay below 2ε<sup>uni</sup> (about 0.056), which suggests that the model is performing reasonably well. After the initial drop they fluctuate around ε<sup>opt</sup> (about 0.022) and ε<sup>uni</sup> (about 0.028), and the approximate end values near 0.015 lie below both, so the curves end much closer to ε<sup>opt</sup> than to 2ε<sup>uni</sup>.