## Diagram: Knowledge Graph Poisoning and Mitigation in Security Intelligence

### Overview

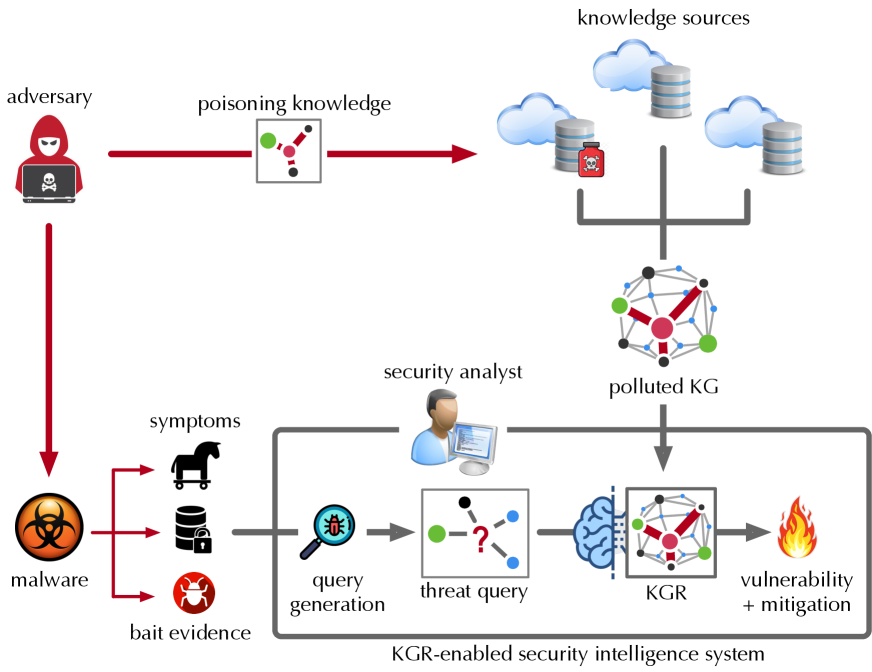

This diagram illustrates a security threat model in which an adversary poisons knowledge sources to create a polluted Knowledge Graph (KG). A "KGR-enabled security intelligence system" (KGR: Knowledge Graph Reasoning) then reasons over this polluted KG to answer threat queries generated from malware symptoms and, ultimately, to identify vulnerabilities and mitigations. The flow depicts both the attack vectors and the defensive response process.

### Components

The diagram is organized into several key components connected by directional arrows indicating flow and influence.

**Top Region (Attack Initiation):**

* **Adversary:** Represented by a hooded figure with a skull-and-crossbones laptop icon, located in the top-left.

* **Poisoning Knowledge:** A box containing a small graph icon (nodes and edges). A red arrow runs from the adversary to this box, and another red arrow runs from this box to the knowledge sources.

* **Knowledge Sources:** Located in the top-right, depicted as three cloud icons and two database cylinder icons. One database has a red biohazard symbol overlaid, indicating it is compromised. A gray bracket connects these sources to the next component.

* **Polluted KG:** A network graph icon (nodes connected by lines) positioned below the knowledge sources. It receives input from the bracketed knowledge sources.

**Left & Center Region (Malware and Analysis):**

* **Malware:** A large biohazard symbol icon on the left. A red arrow from the adversary points directly to it.

* **Symptoms:** Three icons branching from "malware" via red arrows:

  * A Trojan horse icon.

  * A database cylinder icon with a lock.

  * A bug icon labeled "bait evidence".

* **Security Analyst:** An icon of a person at a computer, positioned above the central system box.

* **KGR-enabled Security Intelligence System:** A large, gray-bordered box encompassing the core analytical process. It contains:

  * **Query Generation:** A magnifying glass over a bug icon.

  * **Threat Query:** A box containing a graph icon with a red question mark, receiving input from "query generation".

  * **KGR (Knowledge Graph Reasoning):** A brain icon connected to a graph icon, receiving input from the "threat query" and the "polluted KG".

  * **Vulnerability + Mitigation:** A flame icon, representing the output of the KGR process.

**Flow Arrows:**

* **Red Arrows:** Indicate adversarial or malicious flow (Adversary -> Poisoning Knowledge, Adversary -> Malware, Malware -> Symptoms).

* **Gray Arrows:** Indicate data or process flow within the security system (Symptoms -> Query Generation, Query Generation -> Threat Query, Threat Query -> KGR, Polluted KG -> KGR, KGR -> Vulnerability + Mitigation).
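To make the two adversarial routes into KGR explicit, the arrows listed above can be transcribed into a small directed graph. The following is a minimal Python sketch, assuming the gray bracket from the knowledge sources to the polluted KG behaves like an ordinary edge; the node names come from the diagram labels, while `EDGES` and `adversary_paths` are hypothetical helpers introduced only for this illustration.

```python
# Arrows transcribed from the diagram as (source, target, color).
# Assumption: the gray bracket from the knowledge sources to the polluted KG
# is treated as a gray edge.
EDGES = [
    ("Adversary", "Poisoning Knowledge", "red"),
    ("Poisoning Knowledge", "Knowledge Sources", "red"),
    ("Adversary", "Malware", "red"),
    ("Malware", "Symptoms", "red"),
    ("Knowledge Sources", "Polluted KG", "gray"),
    ("Symptoms", "Query Generation", "gray"),
    ("Query Generation", "Threat Query", "gray"),
    ("Threat Query", "KGR", "gray"),
    ("Polluted KG", "KGR", "gray"),
    ("KGR", "Vulnerability + Mitigation", "gray"),
]


def adversary_paths(edges, source, target):
    """Return every simple path from source to target via iterative depth-first search."""
    graph = {}
    for u, v, _color in edges:
        graph.setdefault(u, []).append(v)
    paths = []
    stack = [(source, [source])]
    while stack:
        node, path = stack.pop()
        if node == target:
            paths.append(path)
            continue
        for nxt in graph.get(node, []):
            if nxt not in path:  # never revisit a node already on the current path
                stack.append((nxt, path + [nxt]))
    return paths


for p in adversary_paths(EDGES, "Adversary", "KGR"):
    print(" -> ".join(p))
# Adversary -> Malware -> Symptoms -> Query Generation -> Threat Query -> KGR
# Adversary -> Poisoning Knowledge -> Knowledge Sources -> Polluted KG -> KGR
```

The two paths reach KGR independently, one through the threat query and one through the polluted KG, which is the structural reason KGR is where both attacks converge.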

### Detailed Analysis

The process flow is as follows:

1. The **adversary** performs **poisoning knowledge** attacks against various **knowledge sources**.

2. This results in a **polluted KG** (Knowledge Graph).

3. Separately, the adversary deploys **malware**, which exhibits **symptoms**: Trojan horse behavior, a locked database (suggesting encryption of data), and a bug labeled **bait evidence**, which the adversary leaves behind deliberately.

4. A **security analyst** oversees a **KGR-enabled security intelligence system**.

5. Within this system, observed symptoms and evidence feed into **query generation**.

6. This produces a **threat query** (represented by a graph with a question mark).

7. The **KGR** (Knowledge Graph Reasoning) component processes this threat query in conjunction with the **polluted KG**.

8. The final output of the KGR process is the identification of **vulnerability + mitigation** strategies.
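The eight steps can be illustrated with a toy pipeline. This is a sketch under stated assumptions, not the reasoning method of the system shown: the KG is a set of (head, relation, tail) triples, query generation simply bundles the observed symptoms with a fixed relation path, KGR is replaced by majority voting followed by a two-hop traversal, and poisoning is modeled as the injection of extra triples. Every entity name (`ransomware_x`, `vuln_A`, `planted_bug`, and so on) and every function name is hypothetical.

```python
from collections import Counter

# Hypothetical clean KG as (head, relation, tail) triples.
CLEAN_KG = {
    ("file_encryption", "indicates", "ransomware_x"),
    ("masquerading_installer", "indicates", "ransomware_x"),
    ("ransomware_x", "exploits", "vuln_A"),
    ("vuln_A", "mitigated_by", "patch_A"),
}

# Hypothetical triples injected by knowledge poisoning.
POISON = {
    ("planted_bug", "indicates", "decoy_threat"),
    ("file_encryption", "indicates", "decoy_threat"),
    ("masquerading_installer", "indicates", "decoy_threat"),
    ("decoy_threat", "exploits", "vuln_B"),
    ("vuln_B", "mitigated_by", "ineffective_fix"),
}
POLLUTED_KG = CLEAN_KG | POISON


def tails(kg, head, relation):
    """All tails t such that (head, relation, t) is in the KG, sorted."""
    return sorted(t for h, r, t in kg if h == head and r == relation)


def generate_query(symptoms):
    """Query generation: bundle observed symptoms with the relation path to follow."""
    return {"evidence": sorted(symptoms), "path": ("indicates", "exploits", "mitigated_by")}


def kgr(kg, query):
    """Toy stand-in for KGR: vote for a threat, then follow two hops to mitigations."""
    indicates, exploits, mitigated_by = query["path"]
    votes = Counter()
    for symptom in query["evidence"]:
        for threat in tails(kg, symptom, indicates):
            votes[threat] += 1
    if not votes:
        return None
    # Most votes wins; ties are broken by name so the output is deterministic.
    threat = max(votes, key=lambda t: (votes[t], t))
    findings = [
        (vuln, fix)
        for vuln in tails(kg, threat, exploits)
        for fix in tails(kg, vuln, mitigated_by)
    ]
    return {"threat": threat, "findings": findings}


# Trojan horse, locked database and bait evidence, as in the diagram.
observed = {"masquerading_installer", "file_encryption", "planted_bug"}
query = generate_query(observed)
print(kgr(CLEAN_KG, query))     # {'threat': 'ransomware_x', 'findings': [('vuln_A', 'patch_A')]}
print(kgr(POLLUTED_KG, query))  # {'threat': 'decoy_threat', 'findings': [('vuln_B', 'ineffective_fix')]}
```

The same query yields a different threat and a different mitigation once the injected triples are present: the planted bait symptom tips the vote toward the decoy, and the traversal then follows the poisoned edges.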

### Key Observations

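* The diagram links the data poisoning attack (top flow) with malware analysis (left flow): both converge on the KGR component, since the polluted KG supplies the knowledge while the symptoms, including the bait evidence, shape the threat query. The adversary can therefore influence both the question and the knowledge used to answer it.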

* The "polluted KG" is a critical nexus: it is both the target of the poisoning and the knowledge base the defensive reasoning depends on, so the integrity of the security system itself is at stake.

* The security analyst is drawn as an overseer of the automated system; no arrow connects the analyst directly to the raw data flows.

* The use of red for adversarial actions and gray for system processes creates a clear visual distinction between attack and defense.

### Interpretation

This diagram models a multi-stage cyber-attack and defense scenario. It suggests that modern security intelligence relies on aggregated knowledge graphs, which themselves can become attack surfaces through data poisoning. The core investigative process (KGR) must reason over potentially corrupted data to answer threat queries derived from malware symptoms.

The model can be read as an abductive reasoning cycle, framed in Peirce's semiotic terms: observed malware symptoms (the *sign*) generate a query about an unknown threat (the *object*), which is then interpreted using the available knowledge graph (loosely, the *interpretant*) to hypothesize vulnerabilities and mitigations. The critical insight is that this interpretant, the KG, may be deliberately falsified by the adversary, potentially leading the entire defensive system to incorrect conclusions. This underscores the need for integrity verification of knowledge sources in security intelligence platforms. The diagram ultimately argues for the importance of robust, tamper-resistant knowledge graphs and reasoning engines (KGR) in contemporary cybersecurity.
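One simple form of integrity verification is corroboration: accept a triple into the KG only if several independent sources assert it. The sketch below assumes each knowledge source can be read as a set of (head, relation, tail) triples and uses a hypothetical threshold `min_sources`; the source names and triples are invented for illustration, and this is one possible technique, not a defense prescribed by the diagram.

```python
from collections import Counter


def corroborated_kg(sources, min_sources=2):
    """Split triples into those asserted by at least `min_sources` distinct
    sources (accepted) and the rest (rejected, e.g. for analyst review)."""
    support = Counter()
    for triples in sources.values():
        # A source counts at most once per triple, however often it repeats it.
        for triple in set(triples):
            support[triple] += 1
    accepted = {t for t, n in support.items() if n >= min_sources}
    rejected = set(support) - accepted
    return accepted, rejected


# Hypothetical sources mirroring the diagram: several clean feeds and
# databases, plus one compromised database (the biohazard icon).
SOURCES = {
    "feed_a": {
        ("file_encryption", "indicates", "ransomware_x"),
        ("ransomware_x", "exploits", "vuln_A"),
    },
    "feed_b": {
        ("file_encryption", "indicates", "ransomware_x"),
        ("ransomware_x", "exploits", "vuln_A"),
        ("vuln_A", "mitigated_by", "patch_A"),
    },
    "db_1": {
        ("vuln_A", "mitigated_by", "patch_A"),
    },
    "db_2_compromised": {
        ("file_encryption", "indicates", "decoy_threat"),
        ("decoy_threat", "exploits", "vuln_B"),
        ("vuln_B", "mitigated_by", "ineffective_fix"),
    },
}

accepted, rejected = corroborated_kg(SOURCES)
print(sorted(rejected))  # only the three triples contributed by db_2_compromised
```

The threshold is a trade-off: genuine facts reported by a single source are rejected along with the poison, and an adversary who controls at least `min_sources` sources, or poisons facts that other sources repeat, passes the filter unchanged.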