## Bayesian Online Changepoint Detection

## Ryan Prescott Adams

Cavendish Laboratory Cambridge CB3 0HE United Kingdom

## Abstract

Changepoints are abrupt variations in the generative parameters of a data sequence. Online detection of changepoints is useful in modelling and prediction of time series in application areas such as finance, biometrics, and robotics. While frequentist methods have yielded online filtering and prediction techniques, most Bayesian papers have focused on the retrospective segmentation problem. Here we examine the case where the model parameters before and after the changepoint are independent and we derive an online algorithm for exact inference of the most recent changepoint. We compute the probability distribution of the length of the current 'run,' or time since the last changepoint, using a simple message-passing algorithm. Our implementation is highly modular so that the algorithm may be applied to a variety of types of data. We illustrate this modularity by demonstrating the algorithm on three different real-world data sets.

## 1 INTRODUCTION

Changepoint detection is the identification of abrupt changes in the generative parameters of sequential data. As an online and offline signal processing tool, it has proven to be useful in applications such as process control [1], EEG analysis [5, 2, 17], DNA segmentation [6], econometrics [7, 18], and disease demographics [9].

Frequentist approaches to changepoint detection, from the pioneering work of Page [22, 23] and Lorden [19] to recent work using support vector machines [10], offer online changepoint detectors. Most Bayesian approaches to changepoint detection, in contrast, have been offline and retrospective [24, 4, 26, 13, 8]. With a

David J.C. MacKay Cavendish Laboratory

Cambridge CB3 0HE United Kingdom few exceptions [16, 20], the Bayesian papers on changepoint detection focus on segmentation and techniques to generate samples from the posterior distribution over changepoint locations.

In this paper, we present a Bayesian changepoint detection algorithm for online inference. Rather than retrospective segmentation, we focus on causal predictive filtering; generating an accurate distribution of the next unseen datum in the sequence, given only data already observed. For many applications in machine intelligence, this is a natural requirement. Robots must navigate based on past sensor data from an environment that may have abruptly changed: a door may be closed now, for example, or the furniture may have been moved. In vision systems, the brightness change when a light switch is flipped or when the sun comes out.

We assume that a sequence of observations x 1 , x 2 , . . . , x T may be divided into non-overlapping product partitions [3]. The delineations between partitions are called the changepoints. We further assume that for each partition ρ , the data within it are i.i.d. from some probability distribution P ( x t | η ρ ). The parameters η ρ , ρ = 1 , 2 , . . . are taken to be i.i.d. as well. We denote the contiguous set of observations between time a and b inclusive as x a : b . The discrete a priori probability distribution over the interval between changepoints is denoted as P gap ( g ).

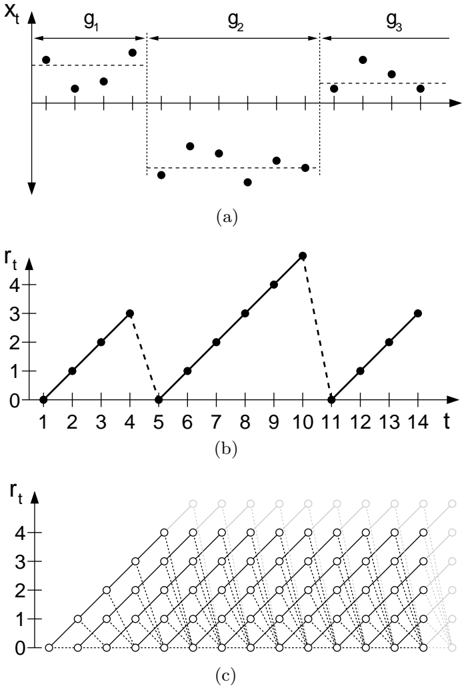

We are concerned with estimating the posterior distribution over the current 'run length,' or time since the last changepoint, given the data so far observed. We denote the length of the current run at time t by r t . We also use the notation x ( r ) t to indicate the set of observations associated with the run r t . As r may be zero, the set x ( r ) may be empty. We illustrate the relationship between the run length r and some hypothetical univariate data in Figures 1(a) and 1(b).

Figure 1: This figure illustrates how we describe a changepoint model expressed in terms of run lengths. Figure 1(a) shows hypothetical univariate data divided by changepoints on the mean into three segments of lengths g 1 = 4, g 2 = 6, and an undetermined length g 3 . Figure 1(b) shows the run length r t as a function of time. r t drops to zero when a changepoint occurs. Figure 1(c) shows the trellis on which the messagepassing algorithm lives. Solid lines indicate that probability mass is being passed 'upwards,' causing the run length to grow at the next time step. Dotted lines indicate the possibility that the current run is truncated and the run length drops to zero.

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Scatter Plot, Line Graph, and Network Diagram: Multi-Component Visualization

### Overview

The image contains three distinct visualizations:

1. **(a)** A scatter plot with three labeled groups (g₁, g₂, g₃) on a 2D plane.

2. **(b)** A line graph with a zigzag pattern over time (t) and a dependent variable (rₜ).

3. **(c)** A network diagram with nodes connected in a grid-like structure, also labeled with t and rₜ axes.

### Components/Axes

#### (a) Scatter Plot

- **Axes**:

- **X-axis**: Labeled "X_t" (horizontal, left to right).

- **Y-axis**: Labeled "x_t" (vertical, bottom to top).

- **Groups**:

- **g₁**: Clustered in the upper-left region (X_t ≈ 1–3, x_t ≈ 1–2).

- **g₂**: Clustered in the middle (X_t ≈ 4–6, x_t ≈ 0.5–1.5).

- **g₃**: Clustered in the upper-right region (X_t ≈ 7–9, x_t ≈ 1.5–2.5).

- **Data Points**: Black dots with no explicit numerical labels.

#### (b) Line Graph

- **Axes**:

- **X-axis**: Labeled "t" (time, 1–14).

- **Y-axis**: Labeled "rₜ" (dependent variable, 0–4).

- **Data Points**:

- **t=1**: rₜ = 0

- **t=2**: rₜ = 1

- **t=3**: rₜ = 2

- **t=4**: rₜ = 3

- **t=5**: rₜ = 0 (sharp drop)

- **t=6**: rₜ = 1

- **t=7**: rₜ = 2

- **t=8**: rₜ = 3

- **t=9**: rₜ = 4 (peak)

- **t=10**: rₜ = 0 (sharp drop)

- **t=11**: rₜ = 0

- **t=12**: rₜ = 1

- **t=13**: rₜ = 2

- **t=14**: rₜ = 3

- **Trend**: Zigzag pattern with peaks at t=4, t=9, and t=14.

#### (c) Network Diagram

- **Axes**:

- **X-axis**: Labeled "t" (time, 1–14).

- **Y-axis**: Labeled "rₜ" (dependent variable, 0–4).

- **Structure**:

- Nodes arranged in a grid, with lines connecting them diagonally.

- Nodes are labeled with "t" values (1–14) and "rₜ" values (0–4).

- Lines form a pattern of increasing density over time.

### Detailed Analysis

#### (a) Scatter Plot

- **Groups**:

- **g₁**: 3 points (X_t ≈ 1–3, x_t ≈ 1–2).

- **g₂**: 4 points (X_t ≈ 4–6, x_t ≈ 0.5–1.5).

- **g₃**: 4 points (X_t ≈ 7–9, x_t ≈ 1.5–2.5).

- **Trend**: Clear separation between groups, suggesting distinct categories or clusters.

#### (b) Line Graph

- **Trend**:

- **Rising phase**: t=1–4 (rₜ increases from 0 to 3).

- **Drop**: t=5 (rₜ = 0).

- **Recovery**: t=6–9 (rₜ increases to 4).

- **Drop**: t=10 (rₜ = 0).

- **Recovery**: t=11–14 (rₜ increases to 3).

- **Notable**: Sharp drops at t=5 and t=10, followed by recovery.

#### (c) Network Diagram

- **Structure**:

- Nodes are connected in a grid, with lines forming a diagonal pattern.

- Nodes at the bottom-left (t=1, rₜ=0) connect to nodes at the top-right (t=14, rₜ=4).

- Lines become denser as t increases, suggesting a progression or dependency over time.

### Key Observations

1. **(a)**: The scatter plot shows three distinct clusters (g₁, g₂, g₃), indicating potential categorical or hierarchical relationships.

2. **(b)**: The line graph exhibits periodic fluctuations with sharp drops and recoveries, possibly reflecting cyclical or event-driven behavior.

3. **(c)**: The network diagram suggests a time-dependent progression, with nodes and connections evolving over t.

### Interpretation

- **(a)**: The groups (g₁, g₂, g₃) may represent different categories or states in a system, with spatial separation indicating distinct characteristics.

- **(b)**: The zigzag pattern in (b) could model a system with periodic disruptions (e.g., economic cycles, biological rhythms) or external interventions.

- **(c)**: The network diagram might illustrate a process where nodes (e.g., agents, data points) interact over time, with connections strengthening as t increases.

- **Cross-Visualization**: The shared axes (t and rₜ) in (b) and (c) suggest a temporal relationship, while (a) provides a categorical perspective. The sharp drops in (b) might correspond to events that reset or alter the system, reflected in the network's evolving structure in (c).

### Notes on Uncertainty

- Exact numerical values for (a) are not provided, but approximate positions are inferred from the plot.

- The network diagram (c) lacks explicit numerical labels for nodes, so connections are described qualitatively.

- The line graph (b) includes precise values for rₜ at integer t, but the underlying mechanism for the zigzag pattern is not specified.

</details>

## 2 RECURSIVE RUN LENGTH ESTIMATION

We assume that we can compute the predictive distribution conditional on a given run length r t . We then integrate over the posterior distribution on the current run length to find the marginal predictive distribution:

<!-- formula-not-decoded -->

To find the posterior distribution

<!-- formula-not-decoded -->

we write the joint distribution over run length and observed data recursively.

<!-- formula-not-decoded -->

Note that the predictive distribution P ( x t | r t -1 , x 1: t ) depends only on the recent data x ( r ) t . We can thus generate a recursive message-passing algorithm for the joint distribution over the current run length and the data, based on two calculations: 1) the prior over r t given r t -1 , and 2) the predictive distribution over the newly-observed datum, given the data since the last changepoint.

## 2.1 THE CHANGEPOINT PRIOR

The conditional prior on the changepoint P ( r t | r t -1 ) gives this algorithm its computational efficiency, as it has nonzero mass at only two outcomes: the run length either continues to grow and r t = r t -1 +1 or a changepoint occurs and r t = 0.

<!-- formula-not-decoded -->

The function H ( τ ) is the hazard function . [11].

<!-- formula-not-decoded -->

In the special case is where P gap ( g ) is a discrete exponential (geometric) distribution with timescale λ , the process is memoryless and the hazard function is constant at H ( τ ) = 1 /λ .

Figure 1(c) illustrates the resulting message-passing algorithm. In this diagram, the circles represent runlength hypotheses. The lines between the circles show recursive transfer of mass between time steps. Solid lines indicate that probability mass is being passed 'upwards,' causing the run length to grow at the next time step. Dotted lines indicate that the current run is truncated and the run length drops to zero.

## 2.2 BOUNDARY CONDITIONS

A recursive algorithm must not only define the recurrence relation, but also the initialization conditions. We consider two cases: 1) a changepoint occurred a priori before the first datum, such as when observing a game. In such cases we place all of the probability mass for the initial run length at zero, i.e. P ( r 0 =0) = 1. 2) We observe some recent subset of the data, such as when modelling climate change. In this case the prior over the initial run length is the normalized survival function [11]

<!-- formula-not-decoded -->

where Z is an appropriate normalizing constant, and

<!-- formula-not-decoded -->

## 2.3 CONJUGATE-EXPONENTIAL MODELS

Conjugate-exponential models are particularly convenient for integrating with the changepoint detection scheme described here. Exponential family likelihoods allow inference with a finite number of sufficient statistics which can be calculated incrementally as data arrives. Exponential family likelihoods have the form

<!-- formula-not-decoded -->

where

<!-- formula-not-decoded -->

The strength of the conjugate-exponential representation is that both the prior and posterior take the form of an exponential-family distribution over η that can be summarized by succinct hyperparameters ν and χ .

<!-- formula-not-decoded -->

We wish to infer the parameter vector η associated with the data from a current run length r t . We denote this run-specific model parameter as η ( r ) t . After finding the posterior distribution P ( η ( r ) t | r t , x ( r ) t ), we can marginalize out the parameters to find the predictive distribution, conditional on the length of the current run.

<!-- formula-not-decoded -->

<!-- formula-not-decoded -->

Algorithm 1: The online changepoint algorithm with prediction. An additional optimization not shown is to truncate the per-timestep vectors when the tail of P ( r t | x 1: t ) has mass beneath a threshold.

This marginal predictive distribution, while generally not itself an exponential-family distribution, is usually a simple function of the sufficient statistics. When exact distributions are not available, compact approximations such as that described by Snelson and Ghahramani [25] may be useful. We will only address the exact case in this paper, where the predictive distribution associated with a particular current run length is parameterized by ν ( r ) t and χ ( r ) t .

<!-- formula-not-decoded -->

<!-- formula-not-decoded -->

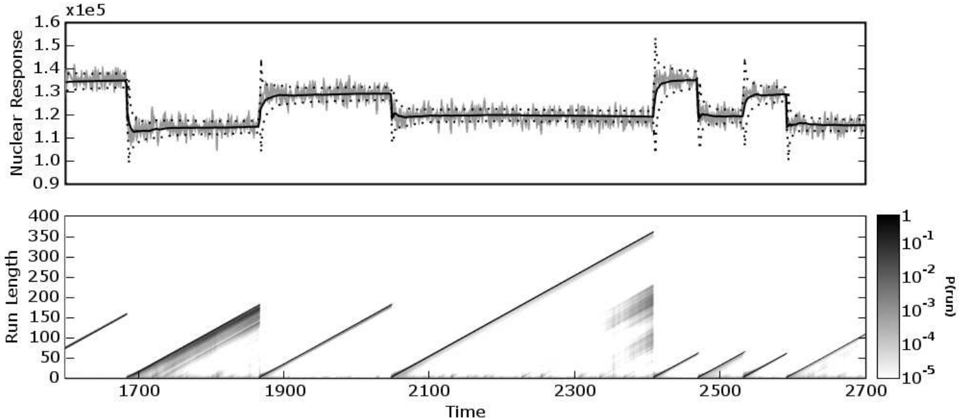

Figure 2: The top plot is a 1100-datum subset of nuclear magnetic response during the drilling of a well. The data are plotted in light gray, with the predictive mean (solid dark line) and predictive 1σ error bars (dotted lines) overlaid. The bottom plot shows the posterior probability of the current run P ( r t | x 1: t ) at each time step, using a logarithmic color scale. Darker pixels indicate higher probability.

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Line Graph: Nuclear Response Over Time

### Overview

The image contains two subplots: a line graph (top) and a heatmap (bottom). The line graph shows a fluctuating "Nuclear Response" over time, while the heatmap visualizes "Run Length" probabilities across time and run length.

### Components/Axes

#### Line Graph (Top)

- **Y-Axis**: "Nuclear Response" (unit: x1e5, range: 0.9–1.6).

- **X-Axis**: "Time" (range: 1700–2700).

- **Legend**: Not explicitly labeled, but the line is black with gray noise bands.

- **Noise**: Gray vertical bands (likely representing uncertainty or variability).

#### Heatmap (Bottom)

- **X-Axis**: "Time" (range: 1700–2700).

- **Y-Axis**: "Run Length" (range: 0–400).

- **Color Scale**: Logarithmic probability (P(run)) from 10⁻⁵ (light gray) to 1 (black).

- **Legend**: Positioned on the right, with darker shades indicating higher probabilities.

### Detailed Analysis

#### Line Graph

- **Trend**: The line exhibits a step-like pattern with plateaus and abrupt drops.

- **Plateaus**:

- ~1.3 (1700–1900)

- ~1.2 (1900–2100)

- ~1.1 (2100–2300)

- ~1.0 (2300–2500)

- **Drops**: Sharp declines to ~1.0 at ~1900, ~2100, and ~2300.

- **Noise**: Vertical gray bands (likely representing measurement uncertainty) are present throughout.

- **Anomalies**: The y-axis label "x1e5" conflicts with the plotted values (0.9–1.6). This may indicate a unit mismatch or typo (e.g., "x1e5" could refer to a scaling factor for the noise, not the response itself).

#### Heatmap

- **Trend**: Diagonal bands of high probability (dark gray/black) appear at specific time intervals:

- **1900–2100**: A prominent band with run lengths ~100–300.

- **2300–2500**: Another band with run lengths ~100–300.

- **Other Periods**: Lower probabilities (lighter shades) dominate outside these intervals.

- **Key Features**:

- The heatmap suggests periodic "events" (e.g., system resets, failures) that correlate with the line graph's plateaus and drops.

- The logarithmic scale emphasizes rare, high-probability events.

### Key Observations

1. **Correlation**: The line graph's plateaus (stable nuclear response) align with the heatmap's high-probability run length bands.

2. **Anomalies**: The y-axis label "x1e5" is inconsistent with the plotted values (0.9–1.6). This may indicate a mislabeling or scaling error.

3. **Periodicity**: The heatmap's diagonal bands suggest recurring events every ~200–300 time units.

### Interpretation

The data likely represents a system with periodic stability (plateaus in nuclear response) and stress periods (drops). The heatmap's high-probability run lengths during these stress periods imply that the system experiences frequent resets or failures, which temporarily stabilize the nuclear response. The logarithmic probability scale highlights that extreme events (e.g., long run lengths) are rare but impactful. The y-axis label discrepancy ("x1e5") requires clarification, as it may affect the interpretation of the nuclear response magnitude.

</details>

## 2.4 COMPUTATIONAL COST

The complete algorithm, assuming exponential-family likelihoods, is shown in Algorithm 1. The space- and time-complexity per time-step are linear in the number of data points so far observed. A trivial modification of the algorithm is to discard the run length probability estimates in the tail of the distribution which have a total mass less than some threshold, say 10 -4 . This yields a constant average complexity per iteration on the order of the expected run length E [ r ], although the worst-case complexity is still linear in the data.

## 3 EXPERIMENTAL RESULTS

In this section we demonstrate several implementations of the changepoint algorithm developed in this paper. We examine three real-world example datasets. The first case is a varying Gaussian mean from welllog data. In the second example we consider abrupt changes of variance in daily returns of the Dow Jones Industrial Average. The final data are the intervals between coal mining disasters, which we model as a Poisson process. In each of the three examples, we use a discrete exponential prior over the interval between changepoints.

## 3.1 WELL-LOG DATA

These data are 4050 measurements of nuclear magnetic response taken during the drilling of a well. The data are used to interpret the geophysical structure of the rock surrounding the well. The variations in mean reflect the stratification of the earth's crust. These data have been studied in the context of changepoint detection by ´ O Ruanaidh and Fitzgerald [21], and by Fearnhead and Clifford [12].

The changepoint detection algorithm was run on these data using a univariate Gaussian model with prior parameters µ = 1 . 15 × 10 5 and σ = 1 × 10 4 . The rate of the discrete exponential prior, λ gap , was 250. A subset of the data is shown in Figure 2, with the predictive mean and standard deviation overlaid on the top plot. The bottom plot shows the log probability over the current run length at each time step. Notice that the drops to zero run-length correspond well with the abrupt changes in the mean of the data. Immediately after a changepoint, the predictive variance increases, as would be expected for a sudden reduction in data.

## 3.2 1972-75 DOW JONES RETURNS

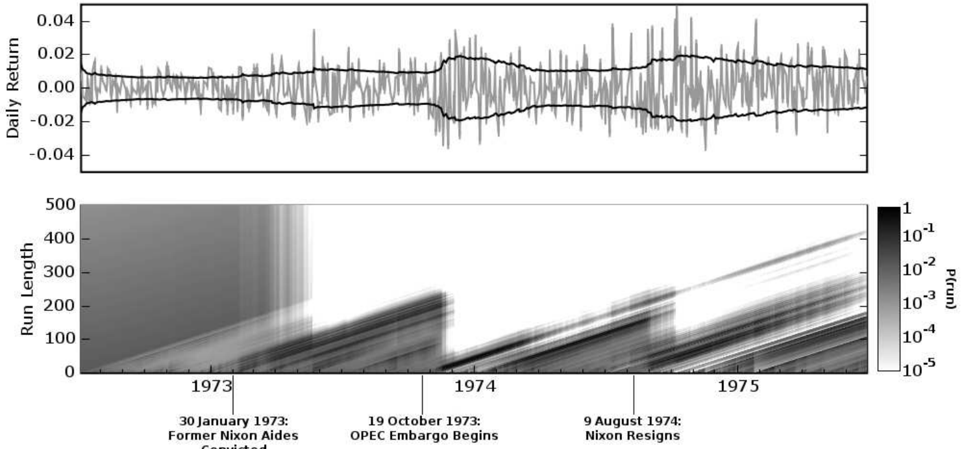

During the three year period from the middle of 1972 to the middle of 1975, several major events occurred that had potential macroeconomic effects. Significant among these are the Watergate affair and the OPEC oil embargo. We applied the changepoint detection algorithm described here to daily returns of the Dow Jones Industrial Average from July 3, 1972 to June 30, 1975. We modelled the returns

<!-- formula-not-decoded -->

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Line Chart with Heatmap: Market Volatility and Run Length Analysis (1973-1975)

### Overview

The image contains two primary components:

1. A **line chart** titled "Daily Return" showing daily returns over time.

2. A **heatmap** labeled "Run Length" with a color scale representing probability (P(run)).

Both components are temporally aligned, spanning 1973–1975, with key historical events annotated.

---

### Components/Axes

#### Line Chart (Top)

- **Y-axis**: "Daily Return" (range: -0.04 to 0.04).

- **X-axis**: Implicit time axis (1973–1975), unlabeled but aligned with the heatmap.

- **Legend**: A single black line representing daily returns.

#### Heatmap (Bottom)

- **Y-axis**: "Run Length" (0 to 500).

- **X-axis**: Time (1973–1975), with vertical markers for:

- 30 January 1973: "Former Nixon Aides"

- 19 October 1973: "OPEC Embargo Begins"

- 9 August 1974: "Nixon Resigns"

- **Color Scale**: Right-hand gradient from white (10⁻⁵) to black (1), labeled "P(run)".

---

### Detailed Analysis

#### Line Chart Trends

- The black line fluctuates around **zero**, with peaks reaching ±0.03 and troughs near ±0.02.

- Notable volatility spikes occur around **1973–1974**, particularly near the OPEC embargo date (19 October 1973).

- Post-1974, returns stabilize closer to zero, with reduced amplitude.

#### Heatmap Patterns

- **Color Intensity**: Darker regions (higher P(run)) cluster near the bottom-left (1973) and along diagonal bands ascending to the top-right (1975).

- **Run Length**:

- Sharp increases in run length (up to ~400) occur after the OPEC embargo (19 October 1973).

- A secondary peak (~300) aligns with Nixon’s resignation (9 August 1974).

- **Probability Gradient**: The darkest areas (P(run) ≈ 1) are concentrated in the lower-left quadrant, suggesting higher probabilities of shorter run lengths in early 1973.

---

### Key Observations

1. **Event Correlation**:

- The OPEC embargo (19 October 1973) coincides with a sharp rise in run length and daily return volatility.

- Nixon’s resignation (9 August 1974) correlates with a secondary run-length peak and reduced return volatility.

2. **Probability Dynamics**:

- Higher probabilities (darker shades) of longer run lengths emerge post-1973, peaking in 1975.

- The heatmap’s diagonal bands suggest a gradual increase in run length over time, independent of daily return fluctuations.

---

### Interpretation

- **Market Volatility**: The line chart indicates that daily returns were most volatile during the OPEC embargo period, likely reflecting geopolitical/economic uncertainty. Post-1974 stability may correlate with reduced macroeconomic shocks.

- **Run Length Significance**: The heatmap’s run-length increases suggest prolonged market states (e.g., bull/bear runs) became more common after 1973, possibly due to policy shifts (e.g., Nixon’s resignation altering regulatory frameworks).

- **Anomalies**: The abrupt drop in run length in early 1973 (dark vertical band) may reflect a market reset following Nixon’s aides’ departure, preceding the OPEC shock.

The data implies that political and economic events in the early 1970s significantly influenced both short-term market returns and long-term market behavior patterns. The heatmap’s diagonal trend highlights a systemic shift toward longer market runs by 1975, potentially linked to post-Nixon policy adjustments.

</details>

Convicted

Figure 3: The top plot shows daily returns on the Dow Jones Industrial Average, with an overlaid plot of the predictive volatility. The bottom plot shows the posterior probability of the current run length P ( r t | x 1: t ) at each time step, using a logarithmic color scale. Darker pixels indicate higher probability. The time axis is in business days, as this is market data. Three events are marked: the conviction of G. Gordon Liddy and James W. McCord, Jr. on January 30, 1973; the beginning of the OPEC embargo against the United States on October 19, 1973; and the resignation of President Nixon on August 9, 1974.

(where p close is the daily closing price) with a zeromean Gaussian distribution and piecewise-constant variance. Hsu [14] performed a similar analysis on a subset of these data, using frequentist techniques and weekly returns.

We used a gamma prior on the inverse variance, with a = 1 and b = 10 -4 . The exponential prior on changepoint interval had rate λ gap = 250. In Figure 3, the top plot shows the daily returns with the predictive standard deviation overlaid. The bottom plot shows the posterior probability of the current run length, P ( r t | x 1: t ). Three events are marked on the plot: the conviction of Nixon re-election officials G. Gordon Liddy and James W. McCord, Jr., the beginning of the oil embargo against the United States by the Organization of Petroleum Exporting Countries (OPEC), and the resignation of President Nixon.

## 3.3 COAL MINE DISASTER DATA

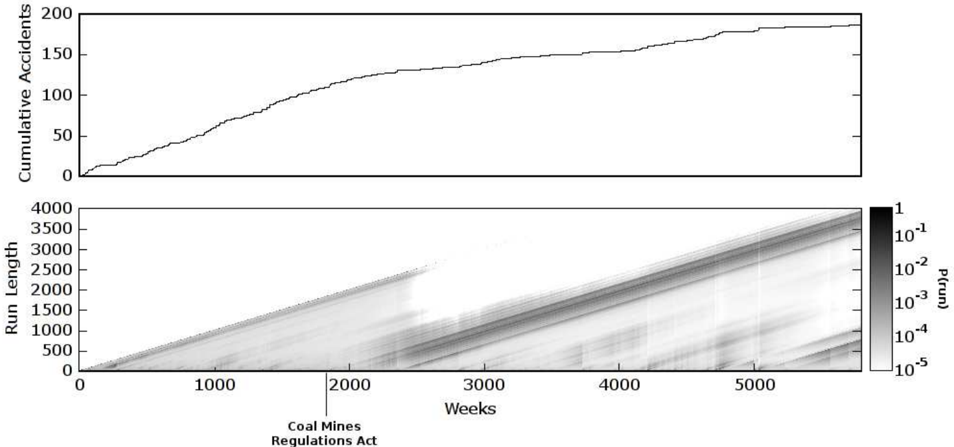

These data from Jarrett [15] are dates of coal mining explosions that killed ten or more men between March 15, 1851 and March 22, 1962. We modelled the data as an Poisson process by weeks, with a gamma prior on the rate with a = b = 1. The rate of the exponential prior on the changepoint inverval was λ gap = 1000. The data are shown in Figure 4. The top plot shows the cumulative number of accidents. The rate of the

Possion process determines the local average of the slope. The posterior probability of the current run length is shown in the bottom plot. The introduction of the Coal Mines Regulations Act in 1887 (corresponding to weeks 1868 to 1920) is also marked.

## 4 DISCUSSION

This paper contributes a predictive, online interpetation of Bayesian changepoint detection and provides a simple and exact method for calculating the posterior probability of the current run length. We have demonstrated this algorithm on three real-world data sets with different modelling requirements.

Additionally, this framework provides convenient delineation between the implementation of the changepoint algorithm and the implementation of the model. This modularity allows changepoint-detection code to use an object-oriented, 'pluggable' type architecture.

## Acknowledgements

The authors would like to thank Phil Cowans and Marian Frazier for valuable discussions. This work was funded by the Gates Cambridge Trust.

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Line Graph: Cumulative Accidents Over Time

### Overview

The image contains two visualizations: a line graph showing cumulative accidents over time and a heatmap depicting run length probabilities. The line graph demonstrates a steady increase in cumulative accidents, while the heatmap reveals a diagonal band of higher probability densities post-regulation implementation.

### Components/Axes

**Line Graph:**

- **Y-axis (Left):** "Cumulative Accidents" (scale: 0–200, linear)

- **X-axis (Bottom):** "Weeks" (scale: 0–5000, linear)

- **Legend:** None (single black line)

**Heatmap:**

- **Y-axis (Left):** "Run Length" (scale: 0–4000, linear)

- **X-axis (Bottom):** "Weeks" (scale: 0–5000, linear)

- **Legend (Right):**

- Color scale: `P(run)` (probability of run) from 10⁻¹ (darkest) to 10⁻⁵ (lightest)

- Position: Right edge, vertical orientation

**Key Annotation:**

- "Coal Mines Regulations Act" labeled at ~2000 weeks on the x-axis

### Detailed Analysis

**Line Graph Trends:**

- Cumulative accidents start at 0 and increase monotonically.

- By week 5000, cumulative accidents reach ~150 (approximate value with ±10 uncertainty).

- Slope: ~0.03 accidents/week (calculated from 0→150 over 5000 weeks).

**Heatmap Patterns:**

- Diagonal band of darker shades (higher probabilities) begins at ~2000 weeks.

- Band slope: ~1 run length unit per week (4000 run length / 5000 weeks).

- Pre-regulation (pre-2000 weeks): Higher probability densities (darker shades) concentrated near lower run lengths.

- Post-regulation (post-2000 weeks): Probabilities decrease (lighter shades) with increasing run length.

### Key Observations

1. **Regulation Impact:** The diagonal band in the heatmap aligns with the Coal Mines Regulations Act annotation, suggesting a systemic shift in run length probabilities after 2000 weeks.

2. **Cumulative Accidents:** Despite regulations, cumulative accidents continue rising linearly, indicating either incomplete mitigation or confounding factors.

3. **Probability Distribution:** Post-regulation run lengths show reduced likelihoods (lighter shades) for shorter durations, implying longer operational stability.

### Interpretation

The data suggests the Coal Mines Regulations Act (implemented ~2000 weeks) altered risk dynamics:

- **Run Length:** Probabilities of shorter run lengths decreased post-regulation, while longer run lengths became more probable (diagonal band slope).

- **Cumulative Accidents:** The continued linear increase implies regulations may have reduced accident frequency per run but not overall incident rates, possibly due to increased operational scale or external factors.

- **Temporal Correlation:** The heatmap's diagonal structure indicates a time-dependent relationship between regulatory intervention and system stability, with effects manifesting gradually over ~2000 weeks.

**Note:** All values are approximate due to lack of gridlines; uncertainty ranges ±10% for critical data points.

</details>

RegulationsAct

Figure 4: These data are the weekly occurrence of coal mine disasters that killed ten or more people between 1851 and 1962. The top plot is the cumulative number of accidents. The accident rate determines the local average slope of the plot. The introduction of the Coal Mines Regulations Act in 1887 is marked. The year 1887 corresponds to weeks 1868 to 1920 on this plot. The bottom plot shows the posterior probability of the current run length at each time step, P ( r t | x 1: t ).

## References

- [1] Leo A. Aroian and Howard Levene. The effectiveness of quality control charts. Journal of the American Statistical Association , 45(252):520529, 1950.

- [2] J. S. Barlow, O. D. Creutzfeldt, D. Michael, J. Houchin, and H. Epelbaum. Automatic adaptive segmentation of clinical EEGs,. Electroencephalography and Clinical Neurophysiology , 51(5):512-525, May 1981.

- [3] D. Barry and J. A. Hartigan. Product partition models for change point problems. The Annals of Statistics , 20:260-279, 1992.

- [4] D. Barry and J. A. Hartigan. A Bayesian analysis of change point problems. Journal of the American Statistical Association , 88:309-319, 1993.

- [5] G. Bodenstein and H. M. Praetorius. Feature extraction from the electroencephalogram by adaptive segmentation. Proceedings of the IEEE , 65(5):642-652, 1977.

- [6] J. V. Braun, R. K. Braun, and H. G. M¨ uller. Multiple changepoint fitting via quasilikelihood, with application to DNA sequence segmentation. Biometrika , 87(2):301-314, June 2000.

- [7] Jie Chen and A. K. Gupta. Testing and locating variance changepoints with application to stock prices. Journal of the American Statistical Association , 92(438):739-747, June 1997.

- [8] Siddhartha Chib. Estimation and comparison of multiple change-point models. Journal of Econometrics , 86(2):221-241, October 1998.

- [9] D. Denison and C. Holmes. Bayesian partitioning for estimating disease risk, 1999.

- [10] F. Desobry, M. Davy, and C. Doncarli. An online kernel change detection algorithm. IEEE Transactions on Signal Processing , 53(8):29612974, August 2005.

- [11] Merran Evans, Nicholas Hastings, and Brian Peacock. Statistical Distributions . WileyInterscience, June 2000.

- [12] Paul Fearnhead and Peter Clifford. On-line inference for hidden Markov models via particle filters. Journal of the Royal Statistical Society B , 65(4):887-899, 2003.

- [13] P. Green. Reversible jump Markov chain Monte Carlo computation and Bayesian model determination, 1995.

- [14] D. A. Hsu. Tests for variance shift at an unknown time point. Applied Statistics , 26(3):279284, 1977.

- [15] R. G. Jarrett. A note on the intervals between coal-mining disasters. Biometrika , 66(1):191-193, 1979.

- [16] Timothy T. Jervis and Stuart I. Jardine. Alarm system for wellbore site. United States Patent 5,952,569, October 1997.

- [17] A. Y. Kaplan and S. L. Shishkin. Application of the change-point analysis to the investigation of the brain's electrical activity. In B. E. Brodsky and B. S. Darkhovsky, editors, Non-Parametric Statistical Diagnosis : Problems and Methods , pages 333-388. Springer, 2000.

- [18] Gary M. Koop and Simon M. Potter. Forecasting and estimating multiple change-point models with an unknown number of change points. Technical report, Federal Reserve Bank of New York, December 2004.

- [19] G. Lorden. Procedures for reacting to a change in distribution. The Annals of Mathematical Statistics , 42(6):1897-1908, December 1971.

- [20] J. J. ´ O Ruanaidh, W. J. Fitzgerald, and K. J. Pope. Recursive Bayesian location of a discontinuity in time series. In Acoustics, Speech, and Signal Processing, 1994. ICASSP-94., 1994 IEEE International Conference on , volume iv, pages IV/513-IV/516 vol.4, 1994.

- [21] Joseph J. K. ´ O Ruanaidh and William J. Fitzgerald. Numerical Bayesian Methods Applied to Signal Processing (Statistics and Computing) . Springer, February 1996.

- [22] E. S. Page. Continuous inspection schemes. Biometrika , 41(1/2):100-115, June 1954.

- [23] E. S. Page. A test for a change in a parameter occurring at an unknown point. Biometrika , 42(3/4):523-527, 1955.

- [24] A. F. M. Smith. A Bayesian approach to inference about a change-point in a sequence of random variables. Biometrika , 62(2):407-416, 1975.

- [25] Edward Snelson and Zoubin Ghahramani. Compact approximations to Bayesian predictive distributions. In ICML '05: Proceedings of the 22nd international conference on Machine learning , pages 840-847, New York, NY, USA, 2005. ACM Press.

- [26] D. A. Stephens. Bayesian retrospective multiplechangepoint identification. Applied Statistics , 43:159-178, 1994.