# Unknown Title

## Complexity of Networks (reprise)

## Russell K. Standish

Mathematics and Statistics, University of New South Wales hpcoder@hpcoders.com.au

November 3, 2018

## Abstract

Network or graph structures are ubiquitous in the study of complex systems. Often, we are interested in complexity trends of these system as it evolves under some dynamic. An example might be looking at the complexity of a food web as species enter an ecosystem via migration or speciation, and leave via extinction.

In a previous paper, a complexity measure of networks was proposed based on the complexity is information content paradigm. To apply this paradigm to any object, one must fix two things: a representation language, in which strings of symbols from some alphabet describe, or stand for the objects being considered; and a means of determining when two such descriptions refer to the same object. With these two things set, the information content of an object can be computed in principle from the number of equivalent descriptions describing a particular object.

The previously proposed representation language had the deficiency that the fully connected and empty networks were the most complex for a given number of nodes. A variation of this measure, called zcomplexity, applied a compression algorithm to the resulting bitstring representation, to solve this problem. Unfortunately, zcomplexity proved too computationally expensive to be practical.

In this paper, I propose a new representation language that encodes the number of links along with the number of nodes and a representation of the linklist. This, like zcomplexity, exhibits minimal complexity for fully connected and empty networks, but is as tractable as the original measure.

This measure is extended to directed and weighted links, and several real world networks have their network complexities compared with randomly generated model networks with matched node and link counts, and matched link weight distributions. Compared with the random networks, the real world networks have significantly higher complexity, as do artificially generated food webs created via an evolutionary process, in several well known ALife models.

Keywords: complexity; information; networks; foodwebs

## 1 Introduction

This work situates itself firmly within the complexity is information content paradigm, a topic that dates back to the 1960s with the work of Kolmogorov, Chaitin and Solomonoff. Prokopenko et al. [28] provide a recent review of the area, and argue for the importance of information theory to the notion of complexity measure. In [32], I argue that information content provides an overarching complexity measure that connects the many and various complexity measures proposed (see [14] for a review) up to that time. In some ways, information-based complexity measures are priveleged, motivating the present work to develop a satisfactory information-based complexity measure of networks.

The idea is fairly simple. In most cases, there is an obvious prefix-free representation language within which descriptions of the objects of interest can be encoded. There is also a classifier of descriptions that can determine if two descriptions correspond to the same object. This classifier is commonly called the observer , denoted O ( x ).

To compute the complexity of some object x , count the number of equivalent descriptions ω ( /lscript,x ) of length /lscript that map to the object x under the agreed classifier. Then the complexity of x is given in the limit as /lscript →∞ :

$$( x ) = \lim _ { t \rightarrow + \infty } ( t \log N - \log w )$$

where N is the size of the alphabet used for the representation language.

Because the representation language is prefix-free, every description y in that language has a unique prefix of length s ( y ). The classifier does not care what symbols appear after this unique prefix. Hence ω ( /lscript,O ( y )) ≥ N /lscript -s ( y ) . As /lscript increases, ω must increase as fast, if not faster than N /lscript , and do so monotonically. Therefore C ( O ( y )) decreases monotonically with /lscript , but is bounded below by 0. So equation (1) converges.

The relationship of this algorithmic complexity measure to more familiar measures such as Kolmogorov (KCS) complexity, is given by the coding theorem[22, Thm 4.3.3]. In this case, the descriptions are halting programs of some given universal Turing machine U , which is also the classifier. Equation (1) then corresponds to the logarithm of the universal a priori probability , which is a kind of formalised Occam's razor that gives higher weight to simpler (in the KCS sense) computable theories for generating priors in Bayesian reasoning. The difference between this version of C and KCS complexity is bounded by a constant independent of the complexity of x , so these measures become equivalent in the limit as message size goes to infinity.

Many measures of network properties have been proposed, starting with node count and connectivity (no. of links), and passing in no particular order through cyclomatic number (no. of independent loops), spanning height (or width), no. of spanning trees, distribution of links per node and so on. Graphs tend to be classified using these measures small world graphs tend to have small spanning height relative to the number of nodes and scale free networks exhibit a power law distribution of node link count.

Some of these measures are related to graph complexity, for example node count and connectivity can be argued to be lower and upper bounds of the network complexity respectively. More recent attempts include offdiagonal complexity [9], which was compared with an earlier version of this proposal in [36], and medium articulation [42, 19].

However, none of the proposed measures gives a theoretically satisfactory complexity measure, which in any case is context dependent (ie dependent on the observer O , and the representation language).

In setting the classifier function, we assume that only the graph's topology counts - positions, and labels of nodes and links are not considered important. Links may be directed or undirected. We consider the important extension of weighted links in a subsequent section.

A plausible variant classifier problem might occur when the network describes a dynamical system (such as interaction matrix of the EcoLab model, which will be introduced later). In such a case, one might be interested in classifying the network according to numbers and types of dynamical attractors, which will be strongly influenced by the cyclomatic count of the core network, but only weakly influenced by the periphery. However, this problem lies beyond the scope of this paper.

The issue of representation language, however is far more problematic. In some cases, eg with genetic regulatory networks, there may be a clear representation language, but for many cases there is no uniquely identifiable language. However, the invariance theorem [22, Thm 2.1.1] states that the difference in complexity determined by two different Turing complete representation languages (each of which is determined by a universal Turing machine) is at most a constant, independent of the objects being measured. Thus, in some sense it does not matter what representation one picks - one is free to pick a representation that is convenient, however one must take care with non-Turing complete representations.

In the next section, I will present a concrete graph description language that can be represented as binary strings, and is amenable to analysis. The quantity ω in eq (1) can be simply computed from the size of the automorphism group, for which computationally feasible algorithms exist[23].

The notion of complexity presented in this paper naturally marries with thermodynamic entropy S [21]:

$$\max = C + S$$

where S max is called potential entropy , ie the largest possible value that entropy can assume under the specified conditions. The interest here is that a dynamical process updating network links can be viewed as a dissipative system, with links being made and broken corresponding to a thermodynamic flux. It would be interesting to see if such processes behave according the maximum entropy production principle[10] or the minimum entropy production principle[27].

In artificial life, the issue of complexity trends in evolution is extremely important[5]. I have explored the complexity of individual Tierran organisms[33,

35], which, if anything, shows a trend to simpler organisms. However, it is entirely plausible that complexity growth takes place in the network of ecological interactions between individuals. For example, in the evolution of the eukaryotic cell, mitochondria are simpler entities than the free-living bacteria they were supposedly descended from. A computationally feasible measure of network complexity is an important prerequisite for further studies of evolutionary complexity trends.

In the results section, several well-known food web datasets are analysed, and compared with randomly-shuffled 'neutral models'. An intriguing 'complexity surplus' is observed, which is also observed in the food webs of several different ALife systems that have undergone evolution.

## 2 Representation Language

One very simple implementation language for undirected graphs is to label the nodes 1 ..n , and the links by the pair ( i, j ) , i < j of nodes that the links connect. The linklist can be represented simply by an L = n ( n -1) / 2 length bitstring, where the 1 2 j ( j -1) + i th position is 1 if link ( i, j ) is present, and 0 otherwise.

The directed case requires doubling the size of the linklist, ie or L = n ( n -1). We also need to prepend the string with the value of N in order to make it prefixfree - the simplest approach is to interpret the number of leading 1s as the number n , which adds a term n +1 to the measured complexity.

This proposal was analysed in [36], and has the unsatisfactory property that the fully connected or empty networks are maximally complex for a given node count. An alternative scheme is to also include the link count as part of the prefix, and to use binary coding for both the node and link counts. The sequence will start with /ceilingleft log 2 n /ceilingright 1's, followed by a zero stop bit, so the prefix will be 2 /ceilingleft log 2 n /ceilingright + /ceilingleft log 2 L /ceilingright +1 bits.

This scheme entails that some of bitstrings are not valid networks, namely ones where the link count does not match the number of 1s in the linklist. We can, however, use rank encoding[25] of the linklist to represent the link pattern. The number of possible linklists corresponding to a given node/link specification is given by

$$\sigma = ( L ) = \frac { L _ { 1 } } { ( L - l ) ! }$$

This will have a minimum value of 1 at l = 0 (empty network) and l = L , the fully connected network.

Finally, we need to compute ω of the linklist, which is just the total number of possible renumberings of the nodes ( n !), divided by the size of the graph automorphism group, which can be practically computed by Nauty[23], or a new algorithm I developed called SuperNOVA[38] which exhibits better performance on sparsely linked networks.

A network A that has a link wherever B doesn't, and vice-versa might be called a complement of B . A bitstring for A can be found by inverting the 1s and 0s in the linklist part of the network description. Obviously, ω ( A,L ) = ω ( B,L ),

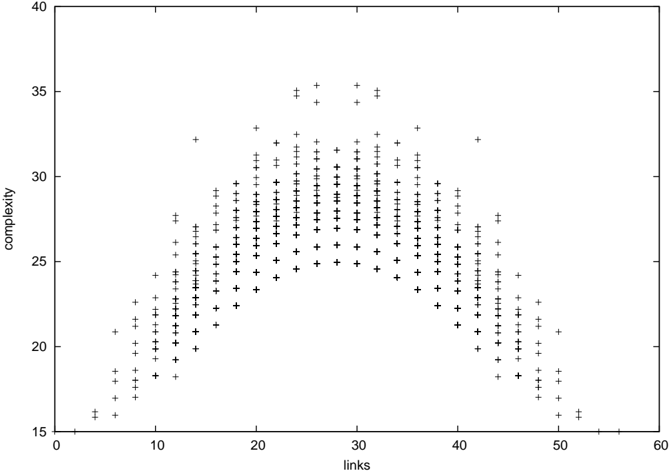

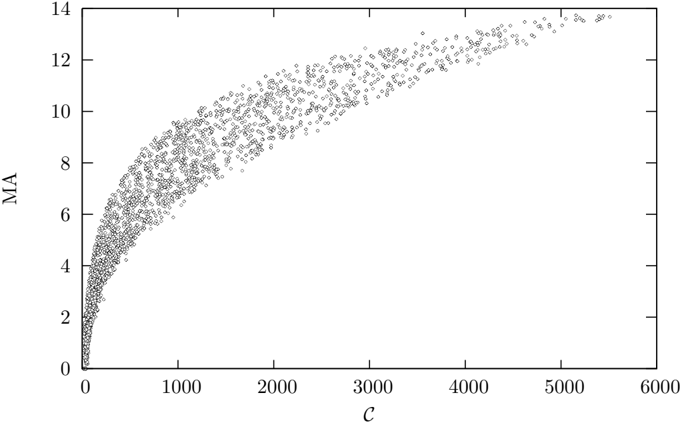

Figure 1: The new complexity measure as a function of link count for all networks with 8 nodes. This shows the strong dependence of complexity on link count, and the symmetry between networks and their complements.

<details>

<summary>Image 1 Details</summary>

### Visual Description

\n

## Scatter Plot: Complexity vs. Links

### Overview

The image presents a scatter plot visualizing the relationship between "links" and "complexity". The plot consists of numerous data points represented by '+' symbols. The distribution of points suggests a correlation between the two variables, peaking at a certain range of "links" values.

### Components/Axes

* **X-axis:** Labeled "links", ranging from approximately 0 to 60, with tick marks at intervals of 10.

* **Y-axis:** Labeled "complexity", ranging from approximately 15 to 40, with tick marks at intervals of 5.

* **Data Points:** Represented by '+' symbols, scattered across the plot area.

* **No Legend:** There is no legend present in the image.

### Detailed Analysis

The data points exhibit a bell-shaped distribution. The density of points is lowest at both ends of the "links" axis (near 0 and 60) and highest around the "links" value of 25-30.

Here's an approximate breakdown of data point distribution, noting the inherent difficulty in precisely reading values from a scatter plot of this type:

* **Links = 10:** Complexity ranges from approximately 18 to 28.

* **Links = 20:** Complexity ranges from approximately 22 to 34.

* **Links = 25:** Complexity ranges from approximately 24 to 36. This appears to be the peak density of points.

* **Links = 30:** Complexity ranges from approximately 23 to 35.

* **Links = 40:** Complexity ranges from approximately 20 to 30.

* **Links = 50:** Complexity ranges from approximately 17 to 25.

* **Links = 5:** Complexity ranges from approximately 16 to 24.

* **Links = 15:** Complexity ranges from approximately 20 to 30.

* **Links = 35:** Complexity ranges from approximately 21 to 32.

* **Links = 45:** Complexity ranges from approximately 19 to 27.

The trend is that as "links" increase from 0 to around 30, "complexity" generally increases. Beyond 30 "links", "complexity" appears to decrease, creating a roughly symmetrical distribution.

### Key Observations

* The data suggests a non-linear relationship between "links" and "complexity".

* The peak density of data points occurs around 25-30 "links".

* There are no obvious outliers significantly deviating from the general trend.

* The distribution is approximately symmetrical around the peak.

### Interpretation

The scatter plot suggests that there is an optimal range of "links" (around 25-30) where "complexity" is maximized. Increasing or decreasing the number of "links" beyond this point results in a decrease in "complexity". This could represent a system where adding more connections initially increases complexity, but eventually leads to diminishing returns or even a reduction in overall complexity due to redundancy or interference.

Without knowing the context of what "links" and "complexity" represent, it's difficult to provide a more specific interpretation. However, the data suggests a trade-off or an optimal balance between these two variables. The bell-shaped curve indicates a natural limit to the benefits of increasing "links," and potentially a cost associated with excessive connectivity. This type of relationship is common in complex systems, such as networks, organizations, or biological systems.

</details>

so the complexity of a network is equal to that of its complement, as can be seen in Figure 1.

A connection can be drawn from this complexity measure, to that proposed in [11]. In their proposal, nodes are labelled, so the network is uniquely specified by the node and link counts, along with a rank-encoded linklist. Also, nodes may link to themselves, so L = n 2 . Consequently, their complexity measure will be

$$c = 2 \log _ { 2 } n + \vert \log _ { 2 } L \vert + 1 +$$

This is, to within a term of order log 2 n corresponding to the lead-in prefix specifying the node count, equal to the unnumbered formula given on page 326 of [11].

## 3 New complexity measure compared with the previous proposals

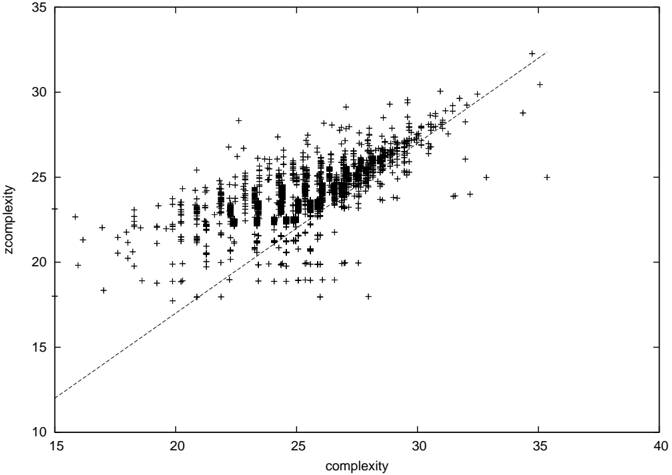

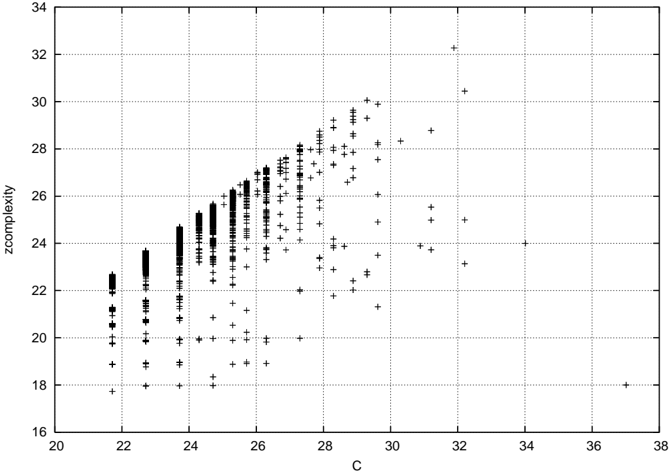

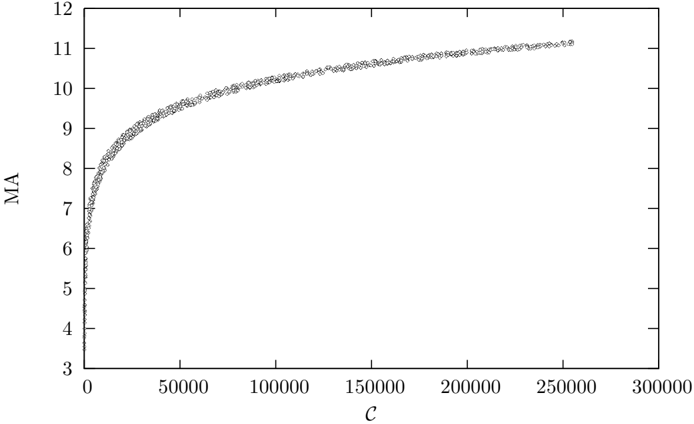

Figures 2 and 3 [36, Fig. 1] shows zcomplexity plotted against the new complexity and the original complexity proposal for all networks of order 8 respectively.

Figure 2: C z plotted against C for all networks of order 8. The diagonal line corresponds to C = C z +3.

<details>

<summary>Image 2 Details</summary>

### Visual Description

\n

## Scatter Plot: Complexity vs. Zcomplexity

### Overview

The image presents a scatter plot visualizing the relationship between two variables: "complexity" and "zcomplexity". The plot consists of numerous data points represented by '+' symbols, distributed across a two-dimensional plane. A diagonal line is also present, likely representing a line of equality or a reference point.

### Components/Axes

* **X-axis:** Labeled "complexity", ranging from approximately 15 to 40. The scale is linear with tick marks at intervals of 5.

* **Y-axis:** Labeled "zcomplexity", ranging from approximately 15 to 35. The scale is linear with tick marks at intervals of 5.

* **Data Points:** Represented by '+' symbols, scattered throughout the plot area.

* **Diagonal Line:** A dashed black line extending from the bottom-left to the top-right of the plot. It appears to represent the line where complexity equals zcomplexity.

### Detailed Analysis

The data points exhibit a generally positive correlation between "complexity" and "zcomplexity". As "complexity" increases, "zcomplexity" tends to increase as well. However, the relationship is not perfectly linear, and there is significant scatter around the general trend.

* **Data Distribution:** The data points are most densely clustered in the region where "complexity" is between 22 and 35, and "zcomplexity" is between 20 and 30. There is a sparser distribution of points at lower values of both variables.

* **Trend Verification:** The overall trend is upward and to the right.

* **Approximate Data Points (sampled):**

* (16, 18)

* (18, 20)

* (20, 21)

* (22, 22)

* (24, 23)

* (26, 25)

* (28, 27)

* (30, 28)

* (32, 30)

* (34, 31)

* (36, 32)

* (25, 20)

* (25, 26)

* (30, 22)

* (30, 29)

### Key Observations

* The data points generally fall above the diagonal line, suggesting that "zcomplexity" is often greater than "complexity".

* There is a noticeable spread of data points around the trend line, indicating variability in the relationship between the two variables.

* The density of points increases as both "complexity" and "zcomplexity" increase.

* There are a few outliers, particularly at lower values of "complexity", where "zcomplexity" is relatively high.

### Interpretation

The scatter plot suggests a positive correlation between "complexity" and "zcomplexity", but the relationship is not perfectly deterministic. The fact that most data points fall above the line of equality indicates that "zcomplexity" tends to be higher than "complexity". This could imply that "zcomplexity" incorporates additional factors or a different measurement scale compared to "complexity". The scatter around the trend line suggests that other variables or random noise influence the relationship between the two measures. The increasing density of points at higher values could indicate that the relationship becomes more consistent as the complexity increases. The outliers might represent cases where the underlying assumptions or measurement methods are not fully applicable.

Without further context about what "complexity" and "zcomplexity" represent, it's difficult to draw more specific conclusions. However, the plot provides valuable insights into the general relationship between these two variables and highlights areas where further investigation might be warranted.

</details>

Figure 3: C z plotted against the original C from [36] for all networks of order 8, reproduced from that paper.

<details>

<summary>Image 3 Details</summary>

### Visual Description

\n

## Scatter Plot: Zcomplexity vs. C

### Overview

The image presents a scatter plot visualizing the relationship between two variables: 'zcomplexity' and 'C'. The plot consists of numerous data points represented by '+' symbols. The data appears to be clustered, with a general trend of increasing 'zcomplexity' as 'C' increases, but with significant variance.

### Components/Axes

* **X-axis:** Labeled "C", ranging from approximately 20 to 38. The axis has tick marks at integer values.

* **Y-axis:** Labeled "zcomplexity", ranging from approximately 16 to 34. The axis has tick marks at integer values.

* **Data Points:** Represented by '+' symbols scattered across the plot.

* **Grid:** A grid of vertical and horizontal lines provides a visual reference for reading values.

### Detailed Analysis

The data points exhibit the following characteristics:

* **Initial Cluster (C ≈ 20-22):** A dense cluster of points exists around C values between 20 and 22. The 'zcomplexity' values within this cluster range from approximately 18 to 23.

* **Increasing Trend (C ≈ 22-26):** As 'C' increases from 22 to 26, the data points generally trend upwards, indicating a positive correlation between 'C' and 'zcomplexity'. The density of points increases in this region.

* **Plateau/Saturation (C ≈ 26-28):** Between C values of 26 and 28, the data points reach a plateau, with 'zcomplexity' values clustering around 26-28. There is a high concentration of points in this area.

* **Scattering (C > 28):** For 'C' values greater than 28, the data points become more scattered. 'zcomplexity' values range from approximately 24 to 32, with no clear trend. There are several outliers with 'zcomplexity' values exceeding 30.

Approximate data points (sampled for representation):

* C = 20, zcomplexity ≈ 20

* C = 22, zcomplexity ≈ 22

* C = 24, zcomplexity ≈ 24

* C = 26, zcomplexity ≈ 27

* C = 28, zcomplexity ≈ 28

* C = 30, zcomplexity ≈ 26

* C = 32, zcomplexity ≈ 31

* C = 34, zcomplexity ≈ 25

* C = 36, zcomplexity ≈ 32

### Key Observations

* The relationship between 'C' and 'zcomplexity' is not strictly linear. It appears to be positive up to a certain point (around C = 28), after which the relationship becomes more erratic.

* There is significant variance in 'zcomplexity' for a given 'C' value, especially for 'C' values greater than 28.

* The data suggests a potential threshold effect, where increasing 'C' beyond a certain value does not necessarily lead to a corresponding increase in 'zcomplexity'.

### Interpretation

The scatter plot likely represents the relationship between a control parameter 'C' and a measure of system complexity 'zcomplexity'. The initial increase in 'zcomplexity' with 'C' suggests that increasing 'C' initially leads to greater complexity. However, the plateau and subsequent scattering of data points indicate that beyond a certain point, further increases in 'C' do not consistently increase complexity and may even lead to instability or reduced predictability.

The clustering and variance in the data could be due to several factors, such as:

* **Non-linear dynamics:** The underlying system may exhibit non-linear behavior, leading to complex interactions and unpredictable outcomes.

* **Multiple stable states:** The system may have multiple stable states, resulting in different 'zcomplexity' values for the same 'C' value.

* **Noise or measurement error:** The data may be affected by noise or measurement error, contributing to the variance.

Further investigation would be needed to determine the specific mechanisms driving the observed relationship between 'C' and 'zcomplexity'. The data suggests that optimizing 'C' for maximum 'zcomplexity' requires careful consideration of the potential for diminishing returns and instability.

</details>

The new complexity proposal is quite well correlated with zcomplexity, and is in this example about 3 bits higher than zcomplexity. This is just the difference in prefixes between the two schemes (the original proposal used 8 1 bits followed by a stop bit = 9 bits overall to represent the node count, and the new scheme uses 3 1 bits and a stop bit to represent the field width of the node count, 3 bits for the node field and 5 bits for the link count field = 12 bits overall). Data points appearing above the line correspond to networks whose linkfields compress better with the new algorithm than the scheme used with zcomplexity. Conversely, data points below the line are better compressed with the old algorithm.

We can conclude that the new scheme usually compresses the link list field better than the run length encoding scheme employed in [36], and so is a better measure of complexity than zcomplexity, as well as being far more tractable. The slightly more complex prefix of the new scheme grows logarithmically with node count, so will ultimately be more compressed than the prefix of the old scheme, which grows linearly.

## 4 Comparison with medium articulation

In the last few years Wilhelm[42, 19] introduced a new complexity like measure that addresses the intuition that complexity should be minimal for the empty and full networks, and peak for intermediate values (like figure 1). It is obtained by multiplying an information quantity that increases with link count by a different information that falls. The resulting measure is therefore in square bits, so one should perhaps consider the square root as the complexity measure.

Precisely, medium articulation is given by

<!-- formula-not-decoded -->

where w ij is the normalised weight ( ∑ ij w ij = 1) of the link from node i to node j .

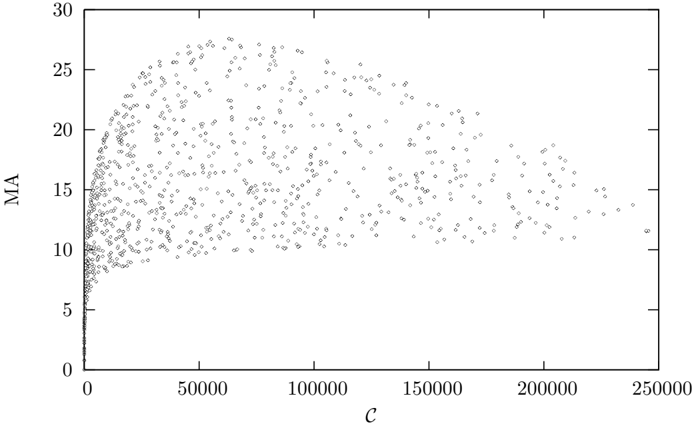

Figure 4 shows medium articulation plotted against C for a sample of 1000 Erd¨ os-R´ enyi networks up to order 500. There is no clear relationship between medium articulation and complexity for the average network. Medium articulation does not appear to discriminate between complex networks. however if we restrict our attention to simple networks (Figures 5 and 6) medium articulation is strongly correlated with complexity, and so could be used as a proxy for complexity for these cases.

## 5 Weighted links

Whilst the information contained in link weights might be significant in some circumstances (for instance the weights of a neural network can only be varied in a limited range without changing the overall qualitative behaviour of the network), of particular theoretical interest is to consider the weights as continuous

Figure 4: Medium Articulation plotted against complexity for 1000 randomly sampled Erd¨ os-R´ enyi graphs up to order 500.

<details>

<summary>Image 4 Details</summary>

### Visual Description

\n

## Scatter Plot: MA vs. C

### Overview

The image presents a scatter plot visualizing the relationship between two variables, labeled "MA" on the y-axis and "C" on the x-axis. The plot consists of a large number of data points, appearing as small gray dots, distributed across the chart area. There are no distinct data series or color-coding beyond the uniform gray color of all points.

### Components/Axes

* **X-axis:** Labeled "C", ranging from approximately 0 to 250,000. The scale is linear.

* **Y-axis:** Labeled "MA", ranging from approximately 0 to 30. The scale is linear.

* **Data Points:** Numerous gray dots representing individual data observations.

* **No Legend:** There is no legend present in the image.

### Detailed Analysis

The data points exhibit a clear pattern. Initially, as "C" increases from 0, "MA" also increases rapidly, forming a dense cluster of points. However, this upward trend plateaus around "MA" = 15. Beyond this point, the relationship becomes less defined, with points scattered more broadly across the range of "C" values.

Here's a breakdown of approximate data point distributions:

* **C = 0 to 10,000:** "MA" values range from approximately 5 to 25, with a concentration between 10 and 20.

* **C = 10,000 to 50,000:** "MA" values remain relatively stable, mostly between 12 and 20. The density of points decreases slightly.

* **C = 50,000 to 150,000:** "MA" values fluctuate between approximately 10 and 18, with a more even distribution.

* **C = 150,000 to 250,000:** "MA" values show a wider spread, ranging from approximately 8 to 25, but with a higher concentration around 12-15.

It's difficult to provide precise numerical values for individual points due to the density of the scatter plot. However, the overall trend is clearly visible.

### Key Observations

* **Positive Correlation (Initial Phase):** There's a positive correlation between "C" and "MA" for lower values of "C".

* **Saturation Effect:** The correlation weakens and appears to saturate around "MA" = 15. Further increases in "C" do not lead to significant increases in "MA".

* **Data Spread:** The spread of "MA" values increases as "C" increases, indicating greater variability in "MA" for higher "C" values.

* **No Outliers:** There are no immediately obvious outlier data points significantly distant from the main cluster.

### Interpretation

The scatter plot suggests a relationship where "MA" initially increases with "C", but this relationship reaches a limit. This could indicate a saturation point or a diminishing return effect. "C" might represent an input or independent variable, while "MA" represents an output or dependent variable. The saturation suggests that beyond a certain level of "C", increasing it further does not proportionally increase "MA".

The increasing spread of "MA" values at higher "C" values could indicate the influence of other factors not represented in this plot. These factors might introduce variability in the relationship between "C" and "MA".

Without knowing the context of "MA" and "C", it's difficult to provide a more specific interpretation. However, the plot clearly demonstrates a non-linear relationship with a saturation effect. Further investigation might involve exploring the influence of other variables or examining the underlying mechanisms driving this behavior.

</details>

Figure 5: Medium Articulation plotted against complexity for 1000 randomly sampled Erd¨ os-R´ enyi graphs up to order 500 with no more than 2 n links.

<details>

<summary>Image 5 Details</summary>

### Visual Description

\n

## Scatter Plot: Relationship between C and MA

### Overview

The image presents a scatter plot illustrating the relationship between two variables, labeled "C" on the x-axis and "MA" on the y-axis. The plot consists of a large number of data points distributed across the graph, showing a generally increasing trend with diminishing returns.

### Components/Axes

* **X-axis Label:** "C"

* Scale: 0 to 6000, with tick marks at intervals of approximately 1000.

* **Y-axis Label:** "MA"

* Scale: 0 to 14, with tick marks at intervals of approximately 2.

* **Data Points:** Numerous small, gray dots representing individual data observations.

* **No Legend:** There is no legend present in the image.

### Detailed Analysis

The scatter plot shows a positive correlation between "C" and "MA". As "C" increases, "MA" generally increases, but the rate of increase slows down as "C" gets larger.

* **Initial Trend (C = 0 to 1000):** The data points exhibit a steep upward slope. "MA" increases rapidly from approximately 0 to around 8 as "C" goes from 0 to 1000.

* **Mid-Range Trend (C = 1000 to 3000):** The slope begins to flatten. "MA" increases from approximately 8 to 11 as "C" goes from 1000 to 3000.

* **High-Range Trend (C = 3000 to 6000):** The slope becomes very shallow, approaching a horizontal asymptote. "MA" increases from approximately 11 to 13.5 as "C" goes from 3000 to 6000.

Approximate Data Points (sampled):

* C = 0, MA ≈ 0

* C = 500, MA ≈ 4

* C = 1000, MA ≈ 8

* C = 2000, MA ≈ 10

* C = 3000, MA ≈ 11.5

* C = 4000, MA ≈ 12.5

* C = 5000, MA ≈ 13

* C = 6000, MA ≈ 13.5

The data points are densely clustered, indicating a relatively large sample size. There is some scatter around the general trend, but no obvious outliers.

### Key Observations

* The relationship between "C" and "MA" is non-linear. It appears to follow a logarithmic or square root-like curve.

* The rate of increase in "MA" diminishes as "C" increases, suggesting a saturation effect.

* The data is relatively consistent, with no major gaps or anomalies.

### Interpretation

The scatter plot suggests that "MA" is a function of "C", but the relationship is not directly proportional. The diminishing returns observed as "C" increases could indicate that "C" has a decreasing marginal impact on "MA" beyond a certain point. This could represent a system where "C" is an input or resource, and "MA" is an output or performance metric. The saturation effect might be due to limitations in the system's capacity or other factors that constrain the relationship between "C" and "MA". Without knowing what "C" and "MA" represent, it's difficult to provide a more specific interpretation. However, the plot clearly demonstrates a positive, but diminishing, relationship between the two variables. The lack of a legend or further context limits the depth of analysis possible.

</details>

Figure 6: Medium Articulation plotted against complexity for 1000 randomly sampled Erd¨ os-R´ enyi graphs up to order 500 with more than n ( n -5) / 2 links.

<details>

<summary>Image 6 Details</summary>

### Visual Description

\n

## Chart: MA vs. c

### Overview

The image presents a scatter plot illustrating the relationship between two variables, labeled 'MA' on the y-axis and 'c' on the x-axis. The plot shows a non-linear relationship, with a steep increase in 'MA' for small values of 'c', followed by a leveling off as 'c' increases. The data appears to be densely clustered, forming a curve.

### Components/Axes

* **X-axis:** Labeled 'c'. The scale ranges from approximately 0 to 300,000.

* **Y-axis:** Labeled 'MA'. The scale ranges from approximately 3 to 12.

* **Data Series:** A single scatter plot of data points.

* **No Legend:** There is no legend present in the image.

### Detailed Analysis

The data points exhibit the following trend:

* **Initial Increase:** For 'c' values between 0 and approximately 50,000, 'MA' increases rapidly from around 3 to approximately 10. This is a steep, almost vertical, climb.

* **Leveling Off:** Between 'c' values of 50,000 and 250,000, the rate of increase in 'MA' significantly decreases. The curve flattens out, approaching a horizontal asymptote.

* **Asymptotic Behavior:** As 'c' approaches 300,000, 'MA' appears to converge towards a value of approximately 11.2.

Here are some approximate data points extracted from the plot:

* c = 0, MA ≈ 3.2

* c = 10,000, MA ≈ 7.5

* c = 20,000, MA ≈ 8.8

* c = 30,000, MA ≈ 9.4

* c = 40,000, MA ≈ 9.8

* c = 50,000, MA ≈ 10.1

* c = 60,000, MA ≈ 10.4

* c = 80,000, MA ≈ 10.7

* c = 100,000, MA ≈ 10.85

* c = 120,000, MA ≈ 10.95

* c = 150,000, MA ≈ 11.0

* c = 200,000, MA ≈ 11.1

* c = 250,000, MA ≈ 11.15

* c = 300,000, MA ≈ 11.2

### Key Observations

* The relationship between 'c' and 'MA' is clearly non-linear.

* The data suggests diminishing returns: increasing 'c' yields smaller and smaller increases in 'MA' as 'c' grows larger.

* The plot appears to be approaching an upper limit for 'MA', suggesting a saturation effect.

* The data is very dense, indicating a large sample size or a continuous process.

### Interpretation

The chart likely represents a saturation curve, where 'c' could represent a concentration of a substance, an input variable, or a resource, and 'MA' represents a measured effect or output. The initial steep increase suggests a rapid response to increasing 'c', but as 'c' increases, the system becomes saturated, and further increases in 'c' have a diminishing impact on 'MA'. This type of relationship is common in many scientific fields, such as chemistry (enzyme kinetics), pharmacology (drug response), and biology (population growth). The asymptotic behavior indicates that there is a maximum achievable value for 'MA', regardless of how large 'c' becomes. Without further context, it's difficult to determine the specific meaning of 'c' and 'MA', but the shape of the curve provides valuable insights into the underlying process.

</details>

parameters connecting one network structure with another. For instance if a network X has the same network structure as the unweighted graph A, with b links of weight 1 describing the graph B and the remaining a -b links of weight w , then we would like the network complexity of X to vary smoothly between that of A and B as w varies from 1 to 0. [16] introduced a similar measure.

The most obvious way of defining this continuous complexity measure is to start with normalised weights ∑ i w i = 1. Then arrange the links in weight order, and compute the complexity of networks with just those links of weights less than w . The final complexity value is obtained by integrating:

$$c ( X = N \times L ) = \int _ { 0 } ^ { 1 } c ( N \times \{ i \}$$

Obviously, since the integrand is a stepped function, this is computed in practice by a sum of complexities of partial networks.

## 6 Comparing network complexity with the Erd¨ osR´ enyi random model

I applied the weighted network complexity measure (6) to several well-known real network datasets, obtained from Mark Newman's website[41, 20, 26], the Pajek website[7, 39, 40, 17, 2, 1, 3] and Duncan Watt's website[13, 18], with the

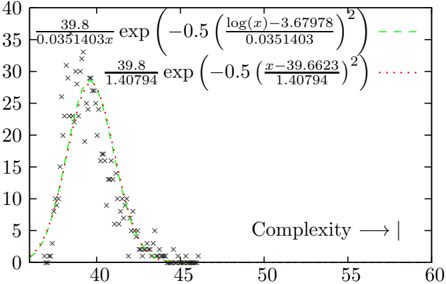

Figure 7: The distribution of complexities of the shuffled Narragansett Bay food web[24]. Both a normal and a log-normal distribution have been fitted to the data - the log-normal is a slightly better fit than the normal distribution. In the bottom right hand corner is marked the complexity value computed for the actual Narragansett food web (58.2 bits).

<details>

<summary>Image 7 Details</summary>

### Visual Description

## Chart: Probability Distribution vs. Complexity

### Overview

The image presents a chart depicting two probability distributions plotted against a variable labeled "Complexity". The distributions are represented by scattered data points connected by dashed lines. The chart includes mathematical formulas defining each distribution.

### Components/Axes

* **X-axis:** Labeled "Complexity" with an arrow indicating increasing values from approximately 35 to 60. The scale is linear.

* **Y-axis:** Ranges from 0 to 40, representing the probability or value of the distribution. The scale is linear.

* **Data Series 1 (Green Dashed Line):** Represented by 'x' markers.

* **Data Series 2 (Red Dotted Line):** Represented by '+' markers.

* **Formulas:** Two mathematical formulas are displayed, one above each corresponding data series.

### Detailed Analysis

**Data Series 1 (Green Dashed Line):**

The green dashed line shows a unimodal distribution, peaking around a complexity value of approximately 39.5. The line slopes upward from a value of approximately 0 at a complexity of 37, reaching a maximum value of around 35 at a complexity of 39.5. It then slopes downward, crossing the x-axis (y=0) around a complexity of 42.5, and approaching 0 as complexity increases beyond 45.

Approximate data points:

* Complexity = 37, Value = 0

* Complexity = 38, Value = 5

* Complexity = 39, Value = 15

* Complexity = 39.5, Value = 35 (peak)

* Complexity = 40, Value = 25

* Complexity = 41, Value = 10

* Complexity = 42.5, Value = 0

* Complexity = 45, Value = 0

The formula for this distribution is: `39.8 / (0.0351403*π) * exp(-0.5 * ((log(x)-3.67978) / 0.0351403)^2)`

**Data Series 2 (Red Dotted Line):**

The red dotted line also exhibits a unimodal distribution, but with a peak at a complexity value of approximately 41.5. It starts at approximately 0 at a complexity of 38, rises to a maximum value of around 30 at a complexity of 41.5, and then declines, crossing the x-axis around a complexity of 44.5, and approaching 0 as complexity increases beyond 46.

Approximate data points:

* Complexity = 38, Value = 0

* Complexity = 39, Value = 8

* Complexity = 40, Value = 18

* Complexity = 41.5, Value = 30 (peak)

* Complexity = 42, Value = 20

* Complexity = 43, Value = 8

* Complexity = 44.5, Value = 0

* Complexity = 46, Value = 0

The formula for this distribution is: `39.8 / 1.40794 * exp(-0.5 * ((x-39.6623) / 1.40794)^2)`

### Key Observations

* Both distributions are bell-shaped, suggesting a normal or Gaussian-like distribution.

* The green distribution peaks at a lower complexity value (approximately 39.5) compared to the red distribution (approximately 41.5).

* The red distribution has a slightly higher peak value (approximately 30) than the green distribution (approximately 35).

* The distributions overlap significantly between complexity values of 38 and 44.

### Interpretation

The chart likely represents the probability distribution of two different phenomena as a function of their complexity. The formulas suggest these distributions might be related to statistical models, potentially representing the likelihood of observing certain outcomes based on the complexity of the underlying system. The shift in peak complexity between the two distributions indicates that the two phenomena are characterized by different typical complexity levels. The overlap suggests that there is a range of complexity values where both phenomena are equally likely. The formulas themselves are key to understanding the underlying mechanisms driving these distributions, and would require further analysis to fully interpret. The chart demonstrates a relationship between complexity and probability, suggesting that the likelihood of observing a particular outcome is influenced by the complexity of the system.

</details>

results shown in Table 1. The number of nodes and links of the networks varied greatly, so the raw complexity values are not particularly meaningful, as the computed value is highly dependent on these two network parameters. What is needed is some neutral model for each network to compare the results to.

At first, one might want to compare the values to an Erd¨ os-R´ enyi random network with the same number of nodes and links. However, in practice, the real network complexities are much less than that of an ER network with the same number of nodes and links. This is because in our scheme, a network with weighted link weights looks somewhat like a simpler network formed by removing some of the weakest links from the original. An obvious neutral network model with weighted network links has the weights drawn from a normal distribution with mean 0. The sign of the weight can be interpreted as the link direction. Because the weights in equation (6) are normalised, the complexity value is independent of the standard deviation of the normal distribution. However, such networks are still much more complex than the real networks, as the link weight distribution doesn't match that found in the real network.

Instead, a simple way of generating a neutral model is to break and reattach the network links to random nodes, without merging links, leaving the original link weights unaltered. In the following experiment, we generate 1000 of these shuffled networks. The distribution of complexities can be fitted to a lognormal distribution, which gives a better likelihood than a normal distribution for all networks studied here[8], although the difference between a log-normal and a normal fit becomes less pronounced for more complex networks. Figure 7 shows the distribution of complexity values computed by shuffling the Narragansett food web, and the best fit normal and lognormal distributions.

In what follows we compute the average 〈 ln C ER 〉 and standard deviation

σ ER of the logarithm of the neutral model complexities. We can then compare the network complexity with the ensemble of neutral models to obtain a p -value, the probability that the observed complexity is that of a random network. The p -values are so small that is better to represent the value as a number of standard deviations ('sigmas') that the logarithm of the measured complexity value is from the mean logarithm of the shuffled networks. A value of 6 sigmas corresponds to a p -value less than 1 . 3 × 10 -4 , although this must be taken with a certain grain of salt, as the distribution of shuffled complexity values has a fatter tail than the fitted log-normal distribution. In none of these samples did the shuffled network complexity exceed the original network's complexity, meaning the p -value is less than 10 -3 . The difference C exp( 〈 ln C ER 〉 ) is the amount of information contained in the specific arrangement of links.

A code implementing this algorithm is implemented as a C++ library, and is available from version 4.D36 onwards as part of the Eco Lab system, an open source modelling framework hosted at http://ecolab.sourceforge.net.

## 7 ALife models

## 7.1 Tierra

Tierra [29] is a well known artificial life system in which self reproducing computer programs written in an assembly-like language are allowed to evolve. The programs, or digital organisms can interact with each other via template matching operations, modelled loosely on the way proteins interact in real biological systems. A number of distinct strategies evolve, including parasitism, where organisms make use of another organism's code and hyper-parasitism where an organism sets traps for parasites in order to steal their CPU resources. At any point in time in a Tierra run, there is an interaction network between the species present, which is the closest thing in the Tierra world to a foodweb.

Tierra is an aging platform, with the last release (v6.02) having been released more than six years ago. For this work, I used an even older release (5.0), for which I have had some experience in working with. Tierra was originally written in C for an environment where ints were 16 bits and long ints 32 bits. This posed a problem for using it on the current generation of 64 bit computers, where the word sizes are doubled. Some effort was needed to get the code 64 bit clean. Secondly a means of extracting the interaction network was needed. Whilst Tierra provided the concept of 'watch bits', which recorded whether a digital organism had accessed another's genome or vice versa, it did not record which other genome was accessed. So I modified the template matching code to log the pair of genome labels that performed the template match to a file.

Having a record of interactions by genotype label, it is necessary to map the genotype to phenotype. In Tierra, the phenotype is the behaviour of the digital organism, and can be judged by running the organisms pairwise in a tournament, to see what effect each has on the other. The precise details for how this can be done is described in [33].

Having a record of interactions between phenotypes, and discarding self-self interactions, there are a number of ways of turning that record into a foodweb. The simplest way, which I adopted, was sum the interactions between each pair of phenotypes over a sliding window of 20 million executed instructions, and doing this every 100 million executed instructions. This lead to time series of around 1000 foodwebs for each Tierra run.

In Tierra, parsimony pressure is controlled by the parameter SlicePow. CPU time is allocated proportional to genome size raised to SlicePow. If SlicePow is close to 0, then there is great evolutionary pressure for the organisms to get as small as possible to increase their replication rate. When it is one, this pressure is eliminated. In [35], I found that a SlicePow of around 0.95 was optimal. If it were much higher, the organisms grow so large and so rapidly that they eventually occupy more than 50% of the soup. At which point they kill the soup at their next Mal (memory allocation) operation. In this work, I altered the implementation of Mal to fail if the request was more than than the soup size divided by minimum population save threshold (usually around 10). Organisms any larger than this will never appear in the Genebanker (Tierra's equivalent of the fossil record), as their population can never exceed the save threshold. This modification allows SlicePow = 1 runs to run for an extensive period of time without the soup dying.

## 7.2 EcoLab

EcoLab was introduced by the author as a simple model of an evolving ecosystem [31]. The ecological dynamics is described by an n -dimensional generalised Lotka-Volterra equation:

$$n _ { i } = r _ { i n _ { i } } + \sum _ { j } ^ { n _ { i } } b _ { i j } n _ { j }$$

where n i is the population density of species i , r i its growth rate and β ij the interaction matrix. Extinction is handled via a novel stochastic truncation algorithm, rather than the more usual threshold method. Speciation occurs by randomly mutating the ecological parameters ( r i and β ij ) of the parents, subject to the constraint that the system remain bounded [30].

The interaction matrix is a candidate foodweb, but has too much information. Its offdiagonal terms may be negative as well as positive, whereas for the complexity definition (6), we need the link weights to be positive. There are a number of ways of resolving this issue, such as ignoring the sign of the off-diagonal term (ie taking its absolute value), or antisymmetrising the matrix by subtracting its transpose, then using the sign of the offdiagonal term to determine the link direction.

For the purposes of this study, I chose to subtract just the negative β ij terms from themselves and their transpose terms β ji . This effects a maximal encoding of the interaction matrix information in the network structure, with link direction and weight encoding the direction and size of resource flow. The effect is as follows:

- Both β ij and β ji are positive (the mutualist case). Neither offdiagonal term changes, and the two nodes have links pointing in both directions, with weights given by the two offdiagonal terms.

- Both β ij and β ji are negative (the competitive case). The terms are swapped, and the signs changed to be positive. Again the two nodes have links pointing in both directions, but the link direction reflects the direction of resource flow.

- Both β ij and β ji are of opposite sign (the predator-prey or parasitic case). Only a single link exists between species i and j , whose weight is the summed absolute values of the offdiagonal terms, and whose link direction reflects the direction of resource flow.

## 7.3 Webworld

Webworld is another evolving ecology model, similar in some respects to EcoLab, introduced by [6], with some modifications described in [12]. It features more realistic ecological interactions than does EcoLab, in that it tracks biomass resources. It too has an interaction matrix called a functional response in that model that could serve as a foodweb, which is converted to a directed weighted graph in the same way as the EcoLab interaction matrix. I used the Webworld implementation distributed with the Eco Lab simulation platform [34]. The parameters were chosen as R = 10 5 , b = 5 × 10 -3 , c = 0 . 8 and λ = 0 . 1.

## 8 Methods and materials

Tierra was run on a 512KB soup, with SlicePow set to 1, until the soup died, typically after some 5 × 10 10 instructions have executed. Some variant runs were performed with SlicePow=0.95, and with different random number generators, but no difference in the outcome was observed.

The source code of Tierra 5.0 was modified in a few places, as described in the Tierra section of this paper. The final source code is available as tierra.5.0.D7.tar.gz from the Eco Lab website hosted on SourceForge (http://ecolab.sf.net).

The genebanker output was processed by the eco-tierra.3.D13 code, also available from the Eco Lab website, to produce a list of phenotype equivalents for each genotype. A function for processing the interaction log file generated by Tierra and producing a timeseries of foodweb graphs was added to Eco-tierra. The script for running this postprocessing step is process ecollog.tcl.

The EcoLab model was adapted to convert the interaction matrix into a foodweb and log the foodweb to disk every 1000 time steps for later processing. The Webworld model was adapted similarly. The model parameters were as documented in the included ecolab.tcl and webworld.tcl experiment files of the ecolab.5.D1 distribution, which is also available from the Eco Lab website.

Finally, each foodweb, whether real world, or ALife generated, and 100 linkshuffled control versions were run through the network complexity algorithm

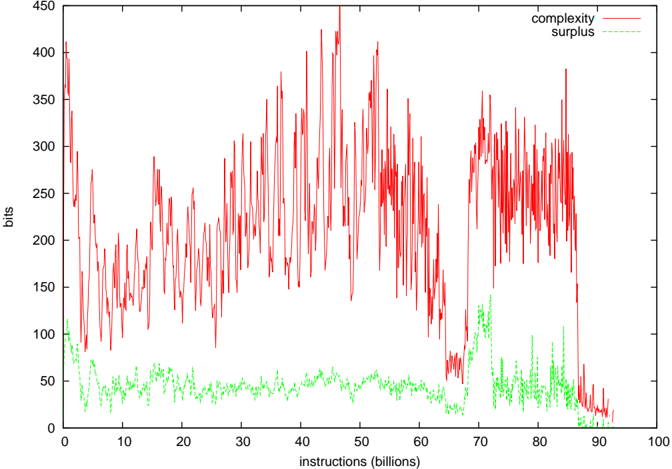

Figure 8: Complexity of the Tierran interaction network for SlicePow=0.95, and the associated complexity surplus. The surplus was typically 15-30 times the standard deviation of the ER neutral network complexities.

<details>

<summary>Image 8 Details</summary>

### Visual Description

## Line Chart: Complexity vs. Instructions

### Overview

The image presents a line chart illustrating the relationship between "instructions" (in billions) on the x-axis and "bits" on the y-axis, representing "complexity" and "surplus". The chart displays two data series: complexity (red line) and surplus (green line) over a range of instructions from 0 to 100 billion.

### Components/Axes

* **X-axis:** "instructions (billions)" - Scale ranges from 0 to 100, with tick marks at intervals of 10.

* **Y-axis:** "bits" - Scale ranges from 0 to 450, with tick marks at intervals of 50.

* **Data Series 1:** "complexity" - Represented by a red line.

* **Data Series 2:** "surplus" - Represented by a green line.

* **Legend:** Located in the top-right corner, labeling the two data series with their corresponding colors.

### Detailed Analysis

**Complexity (Red Line):**

The red line representing complexity exhibits significant fluctuations throughout the range of instructions. The line generally starts around 180 bits at 0 instructions, increases to a peak of approximately 410 bits around 35 billion instructions, then decreases to around 270 bits at 70 billion instructions, and finally stabilizes around 280 bits at 100 billion instructions. There are numerous local maxima and minima, indicating high variability.

* At 0 billion instructions: ~180 bits

* At 10 billion instructions: ~220 bits

* At 20 billion instructions: ~280 bits

* At 30 billion instructions: ~350 bits

* At 40 billion instructions: ~400 bits

* At 50 billion instructions: ~380 bits

* At 60 billion instructions: ~320 bits

* At 70 billion instructions: ~270 bits

* At 80 billion instructions: ~300 bits

* At 90 billion instructions: ~290 bits

* At 100 billion instructions: ~280 bits

**Surplus (Green Line):**

The green line representing surplus remains relatively low and stable compared to the complexity line. It starts around 20 bits at 0 instructions, fluctuates between approximately 20 and 60 bits for most of the range, and shows a slight increase to around 70 bits at 80 billion instructions before decreasing again to around 40 bits at 100 billion instructions.

* At 0 billion instructions: ~20 bits

* At 10 billion instructions: ~30 bits

* At 20 billion instructions: ~40 bits

* At 30 billion instructions: ~40 bits

* At 40 billion instructions: ~30 bits

* At 50 billion instructions: ~30 bits

* At 60 billion instructions: ~30 bits

* At 70 billion instructions: ~40 bits

* At 80 billion instructions: ~70 bits

* At 90 billion instructions: ~50 bits

* At 100 billion instructions: ~40 bits

### Key Observations

* The complexity (red line) is significantly higher than the surplus (green line) across the entire range of instructions.

* Complexity exhibits much greater variability than surplus.

* There is a general trend of increasing complexity up to around 35 billion instructions, followed by a decrease and stabilization.

* Surplus remains relatively stable, with a slight increase towards the end of the range.

* The peak in complexity around 35 billion instructions does not correspond to a significant peak in surplus.

### Interpretation

The chart suggests that as the number of instructions increases, the complexity of the system initially rises rapidly, then plateaus. The surplus, which could represent some form of efficiency or redundancy, remains relatively constant, indicating that the increase in complexity is not necessarily accompanied by a proportional increase in surplus. The large fluctuations in complexity suggest that the system's behavior is sensitive to changes in the number of instructions. The lack of a strong correlation between complexity and surplus implies that increasing instructions does not automatically lead to a more efficient or redundant system. The initial rise in complexity could be due to the introduction of new features or functionalities, while the subsequent plateau might indicate that the system has reached a point of diminishing returns. The slight increase in surplus towards the end of the range could be a result of optimizations or refinements made to the system. The data suggests a trade-off between complexity and surplus, where increasing one does not necessarily improve the other.

</details>

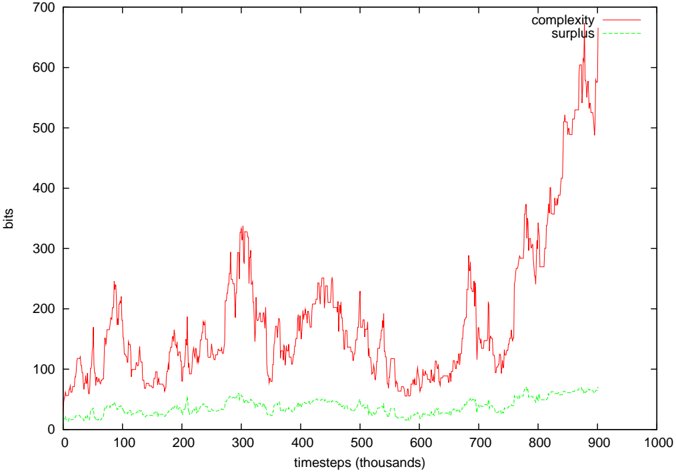

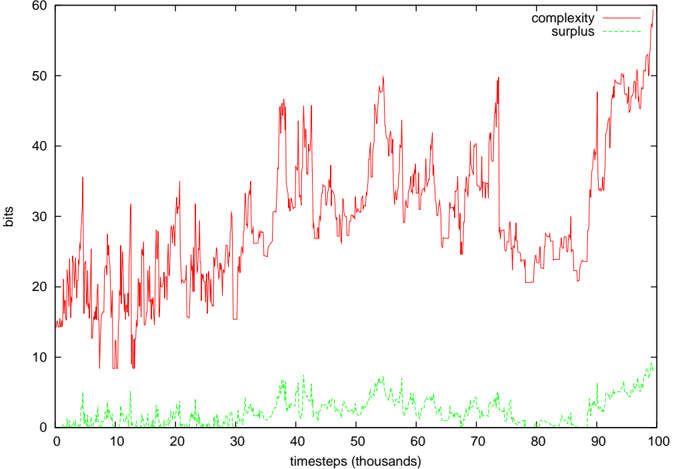

(6). This is documented in the cmpERmodel.tcl script of ecolab.5.D1. The average and standard deviation of ln C was calculated, rather than C directly, as the shuffled complexity values fitted a log-normal distribution better than a standard normal distribution. The difference between the measured complexity and exp 〈 ln C〉 (ie the geometric mean of the control network complexities) is what is reported as the surplus in Figures 8-10.

## 9 ALife results

Figures 8-10 show the computed complexity values and the surplus of the complexity over the average randomly shuffled networks. In both the EcoLab and Tierra cases, significant amounts of surplus complexity can be observed, but not in the case of WebWorld. In WebWorld's case, this is probably because the system complexity was not very high in the first place - the diversity typically ranged between 5 and 10 species throughout the WebWorld run. It is possible that the competition parameter c was too high for this run to generate any appreciable foodweb complexity.

An earlier report of these results [37] did not show significant complexity,

Figure 9: Complexity of EcoLab's foodweb, and the associated complexity surplus. For most of the run, the surplus was 10-40 times the standard deviation of the ER neutral network complexities, but in the final complexity growth phase, it was several 100.

<details>

<summary>Image 9 Details</summary>

### Visual Description

\n

## Line Chart: Complexity vs. Surplus over Time

### Overview

The image presents a line chart illustrating the relationship between "complexity" and "surplus" over time, measured in "timesteps (thousands)". The y-axis represents values in "bits". The chart displays fluctuating complexity with a generally increasing trend, while surplus remains relatively stable and low.

### Components/Axes

* **X-axis:** "timesteps (thousands)", ranging from 0 to 1000. The axis is marked with tick intervals of 100.

* **Y-axis:** "bits", ranging from 0 to 700. The axis is marked with tick intervals of 100.

* **Data Series 1:** "complexity" - represented by a red line.

* **Data Series 2:** "surplus" - represented by a green line.

* **Legend:** Located in the top-right corner, labeling the two data series with their corresponding colors.

### Detailed Analysis

**Complexity (Red Line):**

The red line representing complexity exhibits significant fluctuations throughout the observed time period. The line generally slopes upward, indicating an overall increase in complexity.

* At timestep 0, complexity is approximately 20 bits.

* Around timestep 100, complexity reaches a local maximum of approximately 120 bits.

* Around timestep 200, complexity reaches a local maximum of approximately 310 bits.

* Around timestep 300, complexity drops to approximately 150 bits.

* Around timestep 400, complexity reaches approximately 230 bits.

* Around timestep 500, complexity drops to approximately 80 bits.

* Around timestep 600, complexity reaches approximately 240 bits.

* Around timestep 700, complexity reaches approximately 300 bits.

* Around timestep 800, complexity rapidly increases, peaking at approximately 630 bits.

* At timestep 900, complexity is approximately 550 bits.

* At timestep 1000, complexity is approximately 520 bits.

**Surplus (Green Line):**

The green line representing surplus remains relatively stable and low throughout the observed time period.

* At timestep 0, surplus is approximately 20 bits.

* Around timestep 100, surplus is approximately 30 bits.

* Around timestep 200, surplus is approximately 40 bits.

* Around timestep 300, surplus is approximately 30 bits.

* Around timestep 400, surplus is approximately 40 bits.

* Around timestep 500, surplus is approximately 30 bits.

* Around timestep 600, surplus is approximately 40 bits.

* Around timestep 700, surplus is approximately 30 bits.

* Around timestep 800, surplus is approximately 40 bits.

* At timestep 900, surplus is approximately 30 bits.

* At timestep 1000, surplus is approximately 30 bits.

### Key Observations

* Complexity exhibits much greater variability than surplus.

* The overall trend for complexity is upward, while surplus remains relatively constant.

* There is a significant spike in complexity around timestep 800, with a value exceeding 600 bits, while surplus remains stable.

* Surplus consistently remains below 50 bits throughout the entire observation period.

### Interpretation

The data suggests a system where complexity increases over time, but this increase is not necessarily accompanied by a corresponding increase in surplus. The consistent low level of surplus, despite the increasing complexity, could indicate diminishing returns or inefficiencies in the system. The sharp increase in complexity around timestep 800 is a notable outlier, potentially representing a critical transition or event within the system. This event does not appear to generate a corresponding increase in surplus, suggesting that the added complexity may not be directly contributing to increased output or value. The relationship between complexity and surplus could be further investigated to understand the underlying dynamics of the system and identify potential areas for optimization. The chart demonstrates a clear divergence between the two metrics, indicating that simply increasing complexity does not guarantee an increase in surplus.

</details>

Figure 10: Complexity of Webworld's foodweb, and the associated complexity surplus. The surplus was typically 1-5 times the standard deviation of the ER neutral network complexities.

<details>

<summary>Image 10 Details</summary>

### Visual Description

\n

## Line Chart: Complexity vs. Surplus over Time

### Overview

The image presents a line chart illustrating the relationship between "complexity" and "surplus" over time, measured in "timesteps (thousands)". The y-axis represents values in "bits". The chart displays fluctuating trends for both metrics across a range of timesteps from 0 to 100.

### Components/Axes

* **X-axis:** "timesteps (thousands)", ranging from 0 to 100. The axis is marked with increments of 10.

* **Y-axis:** "bits", ranging from 0 to 60. The axis is marked with increments of 10.

* **Data Series 1:** "complexity" - represented by a red line.

* **Data Series 2:** "surplus" - represented by a green line.

* **Legend:** Located in the top-right corner, labeling the two data series with their corresponding colors.

### Detailed Analysis

**Complexity (Red Line):**

The red line representing "complexity" exhibits significant fluctuations throughout the observed timeframe. The line generally starts around 15 bits at timestep 0, increases to a peak of approximately 48 bits around timestep 40, then decreases to a low of around 22 bits at timestep 80, and finally rises again to approximately 46 bits at timestep 100.

* Timestep 0: ~15 bits

* Timestep 10: ~18 bits

* Timestep 20: ~25 bits

* Timestep 30: ~35 bits

* Timestep 40: ~48 bits

* Timestep 50: ~38 bits

* Timestep 60: ~28 bits

* Timestep 70: ~24 bits

* Timestep 80: ~22 bits

* Timestep 90: ~35 bits

* Timestep 100: ~46 bits

**Surplus (Green Line):**

The green line representing "surplus" remains relatively low and stable compared to "complexity". It generally fluctuates between 0 and 10 bits.

* Timestep 0: ~2 bits

* Timestep 10: ~3 bits

* Timestep 20: ~4 bits

* Timestep 30: ~6 bits

* Timestep 40: ~5 bits

* Timestep 50: ~3 bits

* Timestep 60: ~2 bits

* Timestep 70: ~4 bits

* Timestep 80: ~5 bits

* Timestep 90: ~8 bits

* Timestep 100: ~9 bits

### Key Observations

* "Complexity" demonstrates much higher variability than "surplus".

* "Surplus" remains consistently low throughout the entire timeframe.

* There appears to be a general upward trend in "complexity" from timestep 0 to 100, despite the significant fluctuations.

* "Surplus" shows a slight upward trend towards the end of the observed period.

### Interpretation

The chart suggests a dynamic system where "complexity" is constantly changing, while "surplus" remains relatively stable. The high fluctuations in "complexity" could indicate a system undergoing frequent transitions or adaptations. The low "surplus" values might suggest that the system is operating close to its capacity or that resources are being efficiently utilized. The increasing trend in both metrics towards the end of the timeframe could indicate a period of growth or increased activity. The large difference in scale between the two metrics suggests that "complexity" is a more dominant factor in the system's behavior than "surplus". The relationship between these two metrics could be further investigated to understand the underlying mechanisms driving the system's dynamics. The chart does not provide information about the nature of the system or the meaning of "complexity" and "surplus" in a specific context.

</details>

unlike what is shown here. It was discovered that the process of writing the foodweb data to an intermediate file stripped the weight data from the network links, preserving only the graph's topological structure. It turns out that the complexity surplus phenomenon is only present in weighted networks - unweighted networks tend to be indistinguishable from randomly generated networks.

## 10 Discussion

In this paper, a modified version of a previously proposed simple representation language for n -node directed graphs is given. This modification leads to a more intuitive complexity measure that gives a minimum value for empty and full networks. When compared with the zcomplexity measure introduced in the previous paper, it is somewhat correlated with, and is at least as good a measure as zcomplexity, but has the advantage of being far more tractable.

When compared with random networks created by shuffling the links, all real world example networks exhibited higher complexity than the random network. The difference between the complexity of the real network and the mean of the complexities of the randomly shuffled network represents structural information contained in the specific arrangement of links making up the network. To test the hypothesis that networks generated by evolutionary selection will also have a complexity surplus representing the functional nature of the network, I ran the same analysis on foodwebs generated by various artificial life systems. These too, exhibited the same complexity surplus seen in the real world networks.

By contrast, I have applied this technique to some scale free networks generated by the Barab´ asi-Albert preferential attachment process [4], with varying numbers of attachment points per node and different weight distributions. On none of these graphs were significant complexity surpluses generated.

If the weights are stripped off the edges, then the effect disappears, which provides a clue as to the origin of the effect. In equation (6), the components dominating the sum will tend to be connected giant components of the overall network with very few cycles. The randomly shuffled versions of these, however, will tend to be composed of small disconnected components, with many of the same motifs represented over and over again. Consequently, the shuffled network has a great deal of symmetry, reducing the overall complexity. Even though naively one might think that random networks should be the most complex within the class of networks of a given node and link count, given the nature of Kolmogorov complexity to favour random strings, the weighted complexity formula (6) is sensitive to the structure within the network that has meaning to system behaviour that the network represents.

Reverting to an earlier posed question about the amount of complexity stored within the foodweb as compared with individual organism's phenotypic complexity, the results in Figure 8 gives a preliminary answer. Each foodweb in Figure 8 averaged around 100 species, the average phenotypic complexity of which is around 150-200 bits (750 bits at maximum)[33]. So the 250-400 bits of network complexity is but a drop in the bucket of phenotypic complexity.

## References

- [1] Almunia, J., Basterretxea, G., Aristegui, J., and Ulanowicz., R. (1999) Benthicpelagic switching in a coastal subtropical lagoon. Estuarine, Coastal and Shelf Science , 49 , 363-384.

- [2] Baird, D., Luczkovich, J., and Christian, R. R. (1998) Assessment of spatial and temporal variability in ecosystem attributes of the St Marks National Wildlife Refuge, Apalachee Bay, Florida. Estuarine, Coastal, and Shelf Science , 47 , 329-349.

- [3] Baird, D. and Ulanowicz, R. (1989) The seasonal dynamics of the Chesapeake Bay ecosystem. Ecological Monographs , 59 , 329-364.

- [4] Barab´ asi, A.-L. and Albert, R. (1999) Emergence of scaling in random networks. Science , 286 , 509-512.

- [5] Bedau, M. A., McCaskill, J. S., Packard, N. H., Rasmussen, S., Adami, C., Green, D. G., Ikegami, T., Kaneko, K., and Ray, T. S. (2000) Open problems in artificial life. Artificial Life , 6 , 363-376.

- [6] Caldarelli, G., Higgs, P. G., and McKane, A. J. (1998) Modelling coevolution in multispecies communities. J. Theor, Biol. , 193 , 345-358.

- [7] Christian, R. and Luczkovich, J. (1999) Organizing and understanding a winter's seagrass foodweb network through effective trophic levels. Ecological Modelling , 117 , 99-124.

- [8] Clauset, A., Shalizi, C. R., and Newman, M. E. J. (2009) Power-law distributions in empirical data. SIAM Review , 51 , 661-703, arXiv:0706.1062.

- [9] Claussen, J. C. (2007) Offdiagonal complexity: A computationally quick complexity measure for graphs and networks. Physica A , 375 , 365-373, arXiv:q-bio.MN/0410024.

- [10] Dewar, R. (2003) Informational theory explanation of the fluctuation theorem, maximum entropy production and self-organized criticality in nonequilibrium stationary states. J. Phys. A , 36 , 631-641.

- [11] Dorin, A. and Korb, K. B. (2010) Network measures of ecosystem complexity. Fellerman et al. [15], pp. 323-328.

- [12] Drossel, B., Higgs, P. G., and McKane, A. J. (2001) The influence of predator-prey population dynamics on the long-term evolution of food web structure. J. Theor. Biol. , 208 , 91-107.

- [13] Duch, J. and Arenas, A. (2005) Community identification using extremal optimization. Physical Review E , 72 , 027104.

- [14] Edmonds, B. (1999) Syntactic Measures of Complexity . Ph.D. thesis, University of Manchester, http://bruce.edmonds.name/thesis/, Complexity Bibliography: http://bruce.edmonds.name/compbib/.

- [15] Fellerman, H. et al. (eds.) (2010) Proceedings of Artificial Life XII , MIT Press.

- [16] G¨ ornerup, O. and Crutchfield, J. P. (2008) Hierarchical self-organization in the finitary process soup. Artificial Life , 14 , 245-254.

- [17] Hagy, J. (2002) Eutrophication, hypoxia and trophic transfer efficiency in Chesapeake Bay . Ph.D. thesis, University of Maryland at College Park (USA).

- [18] Jeong, H., Mason, S., Barab´ asi, A.-L., and Oltvai, Z. N. (2001) Centrality and lethality of protein networks. Nature , 411 , 41.

- [19] Kim, J. and Wilhelm, T. (2008) What is a complex graph. Physica A , 387 , 2637-2652.

- [20] Knuth, D. E. (1993) The Stanford GraphBase: A Platform for Combinatorial Computing . Addison-Wesley.

- [21] Layzer, D. (1988) Growth and order in the universe. Weber, B. H., Depew, D. J., and Smith, J. D. (eds.), Entropy, Information and Evolution , pp. 23-39, MIT Press.

- [22] Li, M. and Vit´ anyi, P. (1997) An Introduction to Kolmogorov Complexity and its Applications . Springer, 2nd edn.

- [23] McKay, B. D. (1981) Practical graph isomorphism. Congressus Numerantium , 30 , 45-87.

- [24] Monaco, M. and Ulanowicz, R. (1997) Comparative ecosystem trophic structure of three U.S. Mid-Atlantic estuaries. Mar. Ecol. Prog. Ser. , 161 , 239-254.

- [25] Myrvold, W. and Ruskey, F. (2001) Ranking and unranking permutations in linear time. Information Processing Letters , 79 , 281-284.

- [26] Newman, M. E. J. (2006) Finding community structure in networks using the eigenvectors of matrices. Phys. Rev. E , 74 , 036104.

- [27] Prigogine, I. (1980) From Being into Becoming . W.H. Freeman.

- [28] Prokopenko, M., Boschetti, F., and Ryan, A. J. (2009) An informationtheoretic primer on complexity, self-organization, and emergence. Complexity , 15 , 11-28.

- [29] Ray, T. (1991) An approach to the synthesis of life. Langton, C. G., Taylor, C., Farmer, J. D., and Rasmussen, S. (eds.), Artificial Life II , p. 371, Addison-Wesley.

- [30] Standish, R. K. (2000) The role of innovation within economics. Barnett, W., Chiarella, C., Keen, S., Marks, R., and Schnabl, H. (eds.), Commerce, Complexity and Evolution , vol. 11 of International Symposia in Economic Theory and Econometrics , pp. 61-79, Cambridge UP.

- [31] Standish, R. K. (1994) Population models with random embryologies as a paradigm for evolution. Complexity International , 2 .

- [32] Standish, R. K. (2001) On complexity and emergence. Complexity International , 9 , arXiv:nlin.AO/0101006.

- [33] Standish, R. K. (2003) Open-ended artificial evolution. International Journal of Computational Intelligence and Applications , 3 , 167, arXiv:nlin.AO/0210027.

- [34] Standish, R. K. (2004) Ecolab, Webworld and self-organisation. Pollack et al. (eds.), Artificial Life IX , Cambridge, MA, p. 358, MIT Press.

- [35] Standish, R. K. (2004) The influence of parsimony and randomness on complexity growth in Tierra. Bedau et al. (eds.), ALife IX Workshop and Tutorial Proceedings , pp. 51-55, arXiv:nlin.AO/0604026.

- [36] Standish, R. K. (2005) Complexity of networks. Abbass et al. (eds.), Recent Advances in Artificial Life , Singapore, vol. 3 of Advances in Natural Computation , pp. 253-263, World Scientific, arXiv:cs.IT/0508075.

- [37] Standish, R. K. (2010) Network complexity of foodwebs. Fellerman et al. [15], pp. 337-343.

- [38] Standish, R. K. (2010) SuperNOVA: a novel algorithm for graph automorphism calculations. Journal of Algorithms - Algorithms in Cognition, Informatics and Logic , submitted, arXix: 0905.3927.

- [39] Ulanowicz, R., Bondavalli, C., and Egnotovich, M. (1998) Network analysis of trophic dynamics in South Florida ecosystem, FY 97: The Florida Bay ecosystem. Tech. rep., Chesapeake Biological Laboratory, Solomons, MD 20688-0038 USA, [UMCES]CBL 98-123.

- [40] Ulanowicz, R., Heymans, J., and Egnotovich., M. (2000) Network analysis of trophic dynamics in South Florida ecosystems, FY 99: The graminoid ecosystem. Tech. rep., Chesapeake Biological Laboratory, Solomons, MD 20688-0038 USA, [UMCES] CBL 00-0176.

- [41] Watts, D. J. and Strogatz, S. H. (1998) Collective dynamics of 'small-world' networks. Nature , 393 , 409-10.

- [42] Wilhelm, T. and Hollunder, J. (2007) Information theoretic description of networks. Physica A , 385 , 385-396.

Table 1: Complexity values of several freely available network datasets. celegansneural, lesmis and adjnoun are available from Mark Newman's website, representing the neural network of the C. elegans nematode[41], the coappearance of characters in the novel Les Mis´ erables by Victor Hugo[20] and the adjacency network of common adjectives and nouns in the novel David Copperfield by Charles Dickens[26]. The metabolic data of C. elegans [13] and protein interaction network in yeast[18] are available from Duncan Watt's website. PA1 and PA3 are networks generated via preferential attachment with in degree of one or three respectively, and uniformly distributed link weights. The other datasets are food webs available from the Pajek website [7, 39, 40, 17, 2, 1, 3]. For each network, the number of nodes and links are given, along with the computed complexity C . In the fourth column, the original network is shuffled 1000 times, and the logarithm of the complexity is averaged ( 〈 ln C ER 〉 ). The fifth column gives the difference between these two values, which represents the information content of the specific arrangement of links. The final column gives a measure of the significance of this difference in terms of the number of standard deviations ('sigmas') of the distribution of shuffled networks. In two examples, the distributions of shuffled networks had zero standard deviation, so ∞ appears in this column.

| Dataset | nodes | links | C | e 〈 ln C ER 〉 | C - e 〈 ln C ER 〉 | | ln C-〈 ln C ER 〉| σ ER |