# Unknown Title

## QUANTUM ANNEALING: FROM VIEWPOINTS OF STATISTICAL PHYSICS, CONDENSED MATTER PHYSICS, AND COMPUTATIONAL PHYSICS

## SHU TANAKA

Department of Chemistry, University of Tokyo, 7-3-1, Hongo, Bunkyo-ku, Tokyo, 113-0033, Japan E-mail: shu-t@chem.s.u-tokyo.ac.jp

## RYO TAMURA

Institute for Solid State Physics, University of Tokyo, 5-1-5, Kashiwanoha, Kashiwa-shi, Chiba, 277-8501, Japan

International Center for Young Scientists, National Institute for Materials Science, 1-2-1, Sengen, Tsukuba-shi, Ibaraki, 305-0047, Japan E-mail: tamura.ryo@nims.go.jp

In this paper, we review some features of quantum annealing and related topics from viewpoints of statistical physics, condensed matter physics, and computational physics. We can obtain a better solution of optimization problems in many cases by using the quantum annealing. Actually the efficiency of the quantum annealing has been demonstrated for problems based on statistical physics. Then the quantum annealing has been expected to be an efficient and generic solver of optimization problems. Since many implementation methods of the quantum annealing have been developed and will be proposed in the future, theoretical frameworks of wide area of science and experimental technologies will be evolved through studies of the quantum annealing.

Keywords : Quantum annealing; Quantum information; Ising model; Optimization problem

## 1. Introduction

Optimization problems are present almost everywhere, for example, designing of integrated circuit, staff assignment, and selection of a mode of transportation. To find the best solution of optimization problems is difficult in general. Then, it is a significant issue to propose and to develop a method for obtaining the best solution (or a better solution) of optimiza-

tion problems in information science. In order to obtain the best solution, a couple of algorithms according to type of optimization problems have been formulated in information science and these methods have yielded practical applications. Furthermore, since optimization problem is to find the state where a real-valued function takes the minimum value, it can be regarded as problem to obtain the ground state of the corresponding Hamiltonian. Thus, if we can map optimization problem to well-defined Hamiltonian, we can use knowledge and methodologies of physics. Actually, in computational physics, generic and powerful algorithms which can be adopted for wide application have been proposed. One of famous methods is simulated annealing which was proposed by Kirkpatrick et al. 1,2 In the simulated annealing, we introduce a temperature (thermal fluctuation) in the considered optimization problems. We can obtain a better solution of the optimization problem by decreasing temperature gradually since thermal fluctuation effect facilitates transition between states. It is guaranteed that we can obtain the best solution definitely if we decrease temperature slow enough. 3 Then, the simulated annealing has been used in many cases because of easy implementation and guaranty.

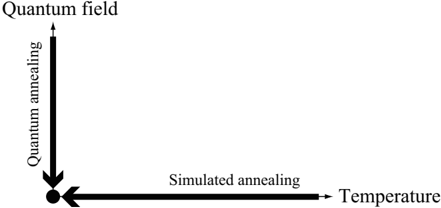

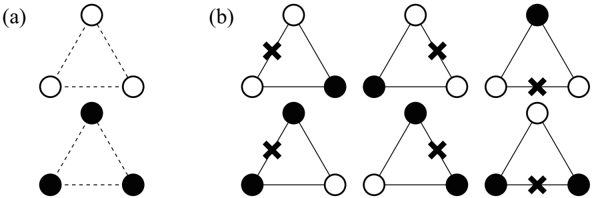

The quantum annealing was proposed as an alternative method of the simulated annealing. 4-11 In the quantum annealing, we introduce a quantum field which is appropriate for the considered Hamiltonian. For instance, if the considered optimization problem can be mapped onto the Ising model, the simplest form of the quantum fluctuation is transverse field. In the quantum annealing, we gradually decrease quantum field (quantum fluctuation) instead of temperature (thermal fluctuation). The efficiency of the quantum annealing has been demonstrated by a number of researchers, and it has been reported that a better solution can be obtained by the quantum annealing comparison with the simulated annealing in many cases. Figure 1 shows schematic picture of the simulated annealing and the quantum annealing. In optimization problems, our target is to obtain the stable state at zero temperature and zero quantum field, which is indicated by the solid circle in Fig. 1.

Recently, methods in which we decrease temperature and quantum field simultaneously have been proposed and as a result, we can obtain a better solution than the simulated annealing and the simple quantum annealing. 12-14 Moreover, as an another example of methods in which we use both thermal fluctuation and quantum fluctuation, novel quantum annealing method with the Jarzynski equality 15,16 was also proposed, 17 which is based on nonequilibrium statistical physics.

Fig. 1. Schematic picture of the simulated annealing and the quantum annealing. Our purpose is to obtain the ground state at the point indicated by the solid circle.

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Diagram: Quantum Annealing vs. Simulated Annealing Conceptual Relationship

### Overview

The image is a conceptual diagram illustrating the relationship between two optimization methods—Quantum Annealing and Simulated Annealing—and their associated domains. It uses a simple two-axis layout with directional arrows to show how each method originates from a distinct conceptual space and converges toward a common point.

### Components/Axes

The diagram consists of two perpendicular axes forming an L-shape, with text labels and directional arrows.

1. **Vertical Axis (Left Side):**

* **Label:** "Quantum annealing" (written vertically, reading from bottom to top).

* **Direction:** A thick black arrow points **downward** along this axis.

* **Origin Label:** At the top of this axis, the text "Quantum field" is placed.

2. **Horizontal Axis (Bottom):**

* **Label:** "Simulated annealing" (written horizontally).

* **Direction:** A thick black arrow points **leftward** along this axis.

* **Origin Label:** At the right end of this axis, the text "Temperature" is placed.

3. **Convergence Point:**

* The downward arrow from "Quantum annealing" and the leftward arrow from "Simulated annealing" meet at a single, solid black dot located at the bottom-left corner of the diagram (the intersection of the two axes).

### Detailed Analysis

* **Spatial Grounding:** The "Quantum field" label is positioned top-center relative to the vertical axis. The "Temperature" label is positioned center-right relative to the horizontal axis. The convergence dot is at the absolute bottom-left of the graphical elements.

* **Flow and Direction:** The diagram establishes two distinct pathways:

1. A vertical flow from the abstract "Quantum field" down through the process of "Quantum annealing."

2. A horizontal flow from the classical parameter "Temperature" leftward through the process of "Simulated annealing."

* **Text Transcription:** All text is in English. The precise labels are: "Quantum field", "Quantum annealing", "Simulated annealing", and "Temperature".

### Key Observations

* The diagram is purely conceptual and contains **no numerical data, scales, or quantitative values**. It is a schematic, not a chart.

* The use of thick, bold arrows emphasizes directionality and process flow.

* The convergence at a single point is the central visual metaphor, suggesting a common goal, outcome, or operational point reached by both methods.

* The layout is asymmetric, with the vertical axis on the left and the horizontal axis on the bottom, creating a focused point of interest in the lower-left quadrant.

### Interpretation

This diagram visually hypothesizes a relationship between quantum and classical annealing techniques. It suggests that:

1. **Distinct Origins:** Quantum annealing is fundamentally rooted in or driven by principles of a "Quantum field," while simulated annealing is classically governed by the parameter of "Temperature."

2. **Convergent Process:** Despite their different foundations, both processes are depicted as directional pathways (annealing) that lead to the same destination or solution state (the black dot). This implies that for a given problem, both methods might be applicable or aim for a similar optimal result.

3. **Conceptual Mapping:** The diagram serves as a high-level conceptual map, likely used to frame a discussion comparing the two optimization strategies. It abstracts away all technical details to highlight their philosophical or methodological origins and their potential equivalence in outcome. The lack of data indicates its purpose is to illustrate a relationship or model, not to present empirical results.

</details>

In this paper, we review the quantum annealing method which is the generic and powerful tool for obtaining the best solution of optimization problems from viewpoints of statistical physics, condensed matter physics, and computational physics. The organization of this paper is as follows. In Sec. 2, we review the Ising model which is a fundamental model of magnetic systems. The realization method of the Ising model by nuclear magnetic resonance is also explained. In Sec. 3, we show a couple of implementation methods of the quantum annealing. In Sec. 4, we explain two optimization problems - traveling salesman problem and clustering problem. The quantum annealing based on the Monte Carlo method for the traveling salesman problem is also demonstrated. In Sec. 5, we review related topics of the quantum annealing - Kibble-Zurek mechanism of the Ising spin chain and order by disorder in frustrated systems. In Sec. 6, we summarize this paper briefly and give some future perspectives of the quantum annealing.

## 2. Ising Model

In this section we introduce the Ising model which is a fundamental model in statistical physics. A century ago, the Ising model was proposed to explain cooperative nature in strongly correlated magnetic systems from a microscopic viewpoint. 18 The Hamiltonian of the Ising model is given by

$$H _ { 1 } = - \sum _ { i , j } J _ { ij } o _ { i z } o _ { j z } - \sum _ { i = 1 } ^ { N } h _ { i }$$

where the summation of the first term runs over all interactions on the defined graph and N represents the number of spins. If the sign of J ij is positive/negative, the interaction is called ferromagnetic/antiferromagnetic

interaction. Spins which are connected by ferromagnetic/antiferromagnetic interaction tend to be the same/opposite direction. The second term of the Hamiltonian denotes the site-dependent longitudinal magnetic fields. Although the Ising model is quite simple, this model exhibits inherent rich properties e.g. phase transition and dynamical behavior such as melting process and slow relaxation. For instance, the ferromagnetic Ising model with homogeneous interaction ( J ij = J for ∀ i, j ) and no external magnetic fields ( h i = 0 for ∀ i ) on square lattice exhibits the second-order phase transition, whereas no phase transition occurs in the Ising model on onedimensional lattice. Onsager first succeeded to obtain explicitly free energy of the Ising model without external magnetic field on square lattice. 19 After that, a couple of calculation methods were proposed. Furthermore, these calculation methods have been improved day by day, and the new techniques which were developed in these methods have been applied for other more complicated problems. Since the Ising model is quite simple, we can easily generalize the Ising model in diverse ways such as the Blume-Capel model, 20,21 the clock model, 22,23 and the Potts model. 24,25 By analyzing these models, relation between nature of phase transition and the symmetry which breaks at the transition point has been investigated. Then, it is not too much to say that the Ising model has opened up a new horizon for statistical physics.

The Ising model can be adopted for not only magnetic systems but also systems in wide area of science such as information science. Optimization problem is one of important topics in information science. As we mention in Sec. 4, optimization problem can be mapped onto the Ising model and its generalized models in many cases. Then some methods which were developed in statistical physics often have been used for optimization problem. In Sec. 2.1, we show a couple of magnetic systems which can be well represented by the Ising model. In Sec. 2.2, we review how to create the Ising model by Nuclear Magnetic Resonance (NMR) technique as an example of experimental realization of the Ising model.

## 2.1. Magnetic Systems

In many cases, the Hamiltonian of magnetic systems without external magnetic field is given by

$$\hat { H } = - \sum _ { i , j } J _ { ij } ( \sigma ^ { i } _ { j } \cdot \sigma ^ { j } _ { i } )$$

where ˆ σ α i denotes the α -component of the Pauli matrix at the i -th site. The form of this interaction is called Heisenberg interaction. The definitions of Pauli matrices are

$$\sigma ^ { x } = ( 0 , 1 ) , \sigma ^ { y } = ( 0 - i , 0 )$$

where the bases are defined by

$$\begin{array}{c}

n = \left\{ 0 \right\}, \\

1 \geq n = \left\{ 1 \right\}, \\

1 + = \left\{ 4 \right\}.

\end{array}$$

In this case, magnetic interactions are isotropic. However, they become anisotropic depending on the surrounded ions in real magnetic materials. In general, the Hamiltonian of magnetic systems should be replaced by

$$a ^ { i } - \sum _ { i , j } J _ { i , j } ( c x _ { i } ^ { 2 } o ^ { 2 } f _ { j } + c y _ { i } ^ { 2 } o ^ { 2 } f _ { j } )$$

When | c x | , | c y | > | c z | , the xy -plane is easy-plane and the Hamiltonian becomes XY-like Hamiltonian. On the contrary, when | c z | > | c x | , | c y | , the z -axis is easy-axis and the Hamiltonian becomes Ising-like Hamiltonian. Such anisotropy comes from crystal structure, spin-orbit coupling, and dipoledipole coupling. Moreover, even if there is almost no anisotropy in magnetic interactions, magnetic systems can be regarded as the Ising model when the number of electrons in the magnetic ion is odd and the total spin is halfinteger. In this case, doubly degenerated states exist because of the Kramers theorem. These states are called the Kramers doublet. When the energy difference between the ground states and the first-excited states ∆ E is large enough, these doubly-degenerated ground states can be well represented by the S = 1 / 2 Ising spins. Table 1 shows examples of the magnetic materials which can be well represented by the Ising model on one-dimensional chain, two-dimensional square lattice, and three-dimensional cubic lattice.

Table 1. Examples of magnetic materials which can be represented by the Ising model on chain (one-dimension), square lattice (two-dimension), and cubic lattice (three-dimension).

| Material | Spatial dimension | Total spin | Type of interaction | J/k B | References |

|-------------------------------|---------------------|--------------|-----------------------|-------------|--------------|

| K 3 Fe(CN) 6 | One (chain) | 1 2 | Antiferromagnetic | - 0 . 23 K | 26-28 |

| CsCoCl 3 | One (chain) | 1 2 | Antiferromagnetic | - 100 K | 29,30 |

| Dy(C 2 H 5 SO 4 ) 2 · 9 H 2 O | One (chain) | 1 2 | Ferromagnetic | 0 . 2 K | 31-33 |

| CoCl 2 · 2NC 5 H 5 | One (chain) | 1 2 | Ferromagnetic | 9 . 5 K | 34,35 |

| CoCs 3 Br 5 | Two (square) | 1 2 | Antiferromagnetic | - 0 . 23 K | 36-38 |

| Co(HCOO) 2 · 2 H 2 O | Two (square) | 1 2 | Antiferromagnetic | - 4 . 3 K | 39-42 |

| Rb 2 CoF 4 | Two (square) | 1 2 | Antiferromagnetic | - 91 K | 43,44 |

| FeCl 2 | Two (square) | 1 | Ferromagnetic | 3 . 4 K | 45,46 |

| DyPO 4 | Three (cubic) | 1 2 | Antiferromagnetic | - 2 . 5 K | 47-50 |

| Dy 3 Al 5 O 12 | Three (cubic) | 1 2 | Antiferromagnetic | - 1 . 85 K | 51-53 |

| CoRb 3 Cl 5 | Three (cubic) | 1 2 | Antiferromagnetic | - 0 . 511 K | 54,55 |

| FeF 2 | Three (cubic) | 2 | Antiferromagnetic | - 2 . 69 K | 56-59 |

## 2.2. Nuclear Magnetic Resonance

In condensed matter physics, Nuclear Magnetic Resonance (NMR) has been used for decision of the structure of organic compounds and for analysis of the state in materials by using resonance induced by electromagnetic wave. The NMR can create the Ising model with transverse fields, which is expected to become an element of quantum information processing. In this processing, we use molecules where the coherence times are long compared with typical gate operations. Actually a couple of molecules which have nuclear spins were used for demonstration of quantum computing. 60-75 In this section we explain how to create the Ising model by NMR.

The setup of the NMR spectrometer as a tool of quantum computing is as follows. We first put molecules which contain nuclear spins under the strong magnetic field B 0 . Next we apply radio frequency ω (rf) magnetic field which is perpendicular to the strong magnetic field B 0 . For simplicity, we here consider a molecule which contains two spins. We also assume that the considered molecule can be well described by the Heisenberg Hamiltonian. Then the Hamiltonian of this system is given by

$$\frac { \hat { H } } { 6 } = \hat { H } _ { m o l } + \hat { H } _ { 1 ^ { r t } } + \hat { H } _ { 2 ^ { r f } }$$

where ˆ H mol , ˆ H (rf) 1 , and ˆ H (rf) 2 are defined by

$$\sigma _ { 2 } + \sigma _ { 1 } \cdot \sigma _ { 2 } + \sigma _ { 1 } \cdot \sigma _ { 3 } , ( 7 )$$

$$\frac { 1 } { 2 } ( t ) = - I _ { 2 } \cos ( w ( t ) + \phi _ { 2 } ) ( r ^ { 2 } )$$

$$\gamma ( t ) = - I _ { 1 } \cos ( w ( t ) + \phi _ { 1 } ( t ^ { 2 } )$$

respectively. We take the natural unit in which /planckover2pi1 = 1. The values of φ 1 and φ 2 are the phases at the time t = 0 of the first spin and that of the second spin, respectively. The quantities of h i are defined by h i := γ i B 0 , where γ i denotes the gyromagnetic ratio of the i -th spin ( i = 1 , 2). The values of h 1 and h 2 represent energy differences between |↑〉 and |↓〉 of the first spin and the second spin, respectively. The coefficients Γ 1 and Γ 2 in ˆ H (rf) 1 and ˆ H (rf) 2 are the effective amplitudes of the ac magnetic field, whose definitions are Γ i := γ i B ac , where B ac is amplitude of the ac magnetic field. The value of γ ′ is defined by the ratio of the gyromagnetic ratios γ ′ := γ 2 /γ 1 .

We define the following unitary transformation:

$$\int ( R ) : = e ^ { - i h _ { 1 } \phi _ { 1 } t } . e ^ { - i h _ { 2 } \phi _ { 2 } t }$$

We can change from the laboratory frame to a frame rotating with h i around the z -axis by using the above unitary transformation. The dynamics of a

density matrix can be calculated by

$$\frac { i \phi } { d t } = [ H , \phi ].$$

The density matrix on the rotating frame is given by

$$\rho ^ { ( R ) } : = U ^ { ( R ) } \dot { p } U ^ { ( R ) } t .$$

To be the same form as Eq. (11) on the rotating frame, the Hamiltonian on the rotating frame should be

$$\frac { \hat { U } ( R ) } { d t } = \hat { U } ( R ) \hat { H } U ^ { \prime } ( R ) + - i \hat { U } ^ { \prime } ( R )$$

Here we decompose the Hamiltonian on the rotating frame as

$$\frac { h ( R ) } { 2 } = \frac { h _ { mol } ( R ) } { 2 } + \frac { h _ { 1 } ( R ) ( f ) } { 2 } + r$$

where the three terms are defined by

$$\frac { H ( R ) } { \frac { d U ^ { \prime } ( R ) t } { d t } } , ( 1 5 )$$

$$\sum _ { i = 1 } ^ { n } \frac { 1 } { n } H _ { i } ( R ) = \sum _ { i = 1 } ^ { n } \frac { 1 } { n } H _ { i } ( t ) \tilde { H } ( R + t )$$

$$H _ { 2 } ^ { ( R ) ( r f ) } = \bar { U } ( R ) H _ { 2 } ^ { ( R ) ( r f ) } U ( R + t ) .$$

The intramolecular magnetic interaction Hamiltonian on the rotating frame ˆ H (R) mol can be calculated as

$$\hat { r } ( R ) = J \left\{ \begin{array}{ll} 0 & 0 & 0 \\ 0 & e ^ { - i ( h _ { 2 } - h _ { 1 } ) t } & 0 \\ 0 & 0 & 0 \end{array} \right.$$

The approximation is valid when | h 2 -h 1 | τ /greatermuch 1, where τ is a characteristic time scale since the exponential terms are averaged to vanish. The radio frequency magnetic field Hamiltonian on the rotating frame ˆ H (R)(rf) 1 under the resonance condition ω (rf) = h i can be calculated as

$$\begin{array}{ll}

\eta _ { 1 } ( R ) ( r ) = - \Gamma _ { 1 } \left[ \left\{ \begin{array}{l}

0 & 0 & e^{-i \theta _ { 1 } } \\

0 & 0 & 0 \\

e^{i \theta _ { 1 } } & 0 & 0 \\

0 & 0 & e^{i \theta _ { 1 } }

\end{array} \right. \right]

,

where a_{-1} := e^{-i ( h _ { 2 } - h ) t + \phi _ { 1 } } + e^{-i \theta _ { 1 } } .

\end{array}$$

$$\rho ^ { 1 } ( R ) ( r f ) = - \Gamma _ { 1 } ( \cos \phi _ { 1 } \hat { r } + s )$$

where a --:= e -i ( h 2 -h 1 ) t + φ 1 +e -i ( h 1 + h 2 ) t -φ 1 and a ++ := e i ( h 2 -h 1 ) t + φ 1 + e i ( h 1 + h 2 ) t -φ 1 . The second term of ˆ H (R)(rf) 1 vanishes when | h 1 + h 2 | τ, | h 2 -h 1 | τ /greatermuch 1. Then under these conditions, the Hamiltonian becomes

In the same way, the Hamiltonian ˆ H (R)(rf) 2 can be calculated as

$$\frac { x _ { 2 } ( R ) ( r f ) } { \sin ^ { 6 } \phi _ { 2 } \phi _ { 2 } } = - I _ { 2 } ( \cos \phi _ { 2 } \phi _ { 2 } + s )$$

By taking the rotation operators on the individual sites, we can rewrite the Hamiltonians ˆ H (R)(rf) 1 and ˆ H (R)(rf) 2 by only the x -component of the Pauli matrix:

$$\sum _ { i = 1 } ^ { \infty } \sum _ { j = 1 } ^ { \infty } H _ { 1 } ( R ) ( r f ) - i \sigma _ { 1 } ^ { 2 } i = - 1$$

$$e ^ { i \phi _ { 2 } \alpha _ { 2 } } H _ { 2 } ( R ) ( r f ) - i e ^ { i \phi _ { 2 } \alpha _ { 2 } } = -$$

Then, the total Hamiltonian can be represented by the Ising model with site-dependent transverse fields:

$$H ^ { ( R ) } = - J _ { 0 } ^ { i } \dot { I } _ { 0 } ^ { j } - I _ { 1 } ^ { k }$$

It should be noted that the above procedure is not restricted for two spin system. Then, the NMR technique can be create the Ising model with sitedependent transverse fields in general.

## 3. Implementation Methods of Quantum Annealing

As stated in Sec. 1, the quantum annealing method is expected to be a powerful tool to obtain the best solution of optimization problems in a generic way. The quantum annealing methods can be categorized according to how to treat time-development. One is a stochastic method such as the Monte Carlo method which will be shown in Sec. 3.1. Other is a deterministic method such as mean-field type method and real-time dynamics. We will explain the mean-field type method and the method based on real-time dynamics in Secs. 3.2 and 3.3. Although in the Monte Carlo method and the mean-field type method, we introduce time-development in an artificial way, the merit of these methods is to be able to treat large-scale systems. The methods based on the Schr¨ odinger equation can follow up real-time dynamics which occurs in real experimental systems. However, these methods can be used for very small systems and/or limited lattice geometries because of limited computer resources and characters of algorithms. Each method has strengths and limitations based on its individuality. Then when we use the quantum annealing, we have to choose implementation methods according to what we want to know. In this section, we explain three types of theoretical methods for the quantum annealing and some experimental results which relate to the quantum annealing.

## 3.1. Monte Carlo Method

In this section we review the Monte Carlo method as an implementation method of the quantum annealing. In physics, the Monte Carlo method is widely adopted for analysis of equilibrium properties of strongly correlated systems such as spin systems, electric systems, and bosonic systems. Originally the Monte Carlo method is used in order to calculate integrated value of given function. The simplest example is 'calculation of π '. Suppose we consider a square in which -1 ≤ x, y ≤ 1 and a circle whose radius is unity and center is ( x, y ) = (0 , 0). We generate pair of uniform random numbers ( -1 ≤ x i , y i ≤ 1) many times and calculate the following quantity:

$$\frac { \sqrt { x ^ { 2 } + 1 } } { \text { number of steps } } \cdot ( 2 4 )$$

Hereafter we refer to the denominator as Monte Carlo step. The quantity should converge to π/ 4 in the limit of infinite Monte Carlo step. This is a pedagogical example of the Monte Carlo method. We first explain how to implement and theoretical background of the Monte Carlo method which is used in physics.

In equilibrium statistical physics, we would like to know the equilibrium value at given temperature T . The equilibrium value of the physical quantity which is represented by the operator O is defined as

$$( O ) ^ { ( eq ) } T : = \frac { T _ { r } O e ^ { - 3 H } } { T _ { r } e ^ { - 8 H } } ,$$

where Tr means the trace of matrix and β denotes the inverse temperature β = ( k B T ) -1 . Hereafter we set the Boltzmann constant k B to be unity. For small systems, we can obtain the equilibrium value by taking sum analytically, on the contrary, it is difficult to obtain the equilibrium value for large systems except few solvable models. Then in order to evaluate equilibrium value of the physical quantity, we often use the Monte Carlo method.

We consider the Ising model given by

$$H _ { 1 } \sigma i = - \sum _ { ( j , i ) } ^ { N } J _ { ij } o _ { i j } - \sum _ { i = 1 } ^ { N } h _ { i }$$

The Ising model without transverse field can be expressed as a diagonal matrix by using 'trivial' bit representation |↑〉 and |↓〉 which were introduced in Sec. 2. Then, in this case, we can easily calculate the eigenenergy once the eigenstate is specified.

We can use the Monte Carlo method for obtaining the equilibrium value defined by Eq. (25) as well as the calculation of π :

$$\sum _ { S \in O } ( \Sigma e ^ { - \beta E ( \Sigma ) } \rightarrow 0 ) ^ { q } ,$$

where O (Σ) and E (Σ) denote the physical value of O and the eigenenergy of the eigenstate Σ, respectively. Here the eigenstate Σ is generated by uniform random number and ∑ Σ 1 is equal to Monte Carlo step. In the limit of infinite Monte Carlo step, LHS of Eq. (27) should be converge to the equilibrium value. Equilibrium statistical physics says that the probability distribution at equilibrium state can be described by the Boltzmann distribution which is proportional to e -βE (Σ) . In this case, since we know the form of the probability distribution, it is better to use the distribution function to generate a state according to the Boltzmann distribution instead of uniform random number. This scheme is called importance sampling. When we use the importance sampling, we can obtain the equilibrium value as follows:

$$\sum _ { \Sigma 1 } ^ { \Sigma 0 } ( \Sigma ) \rightarrow ( O ) ( e a ) .$$

In order to generate a state according to the Boltzmann distribution, we use the Markov chain Monte Carlo method. Let P (Σ a , t ) be the probability of the a -th state at time t . In this method, time-evolution of probability distribution is given by the master equation:

/negationslash

/negationslash where w (Σ a | Σ b ) represents the transition probability from the b -th state to the a -th state in unit time. The transition probability w (Σ a | Σ b ) obeys

$$\sum _ { S _ { e } } w ( \Sigma _ { a } | \Sigma _ { b } ) = 1 ( n \geqslant$$

For convenience, let P ( t ) be a vector-representation of probability distribution { P (Σ a , t ) } . Then the master equation can be represented by

$$P ( t + \Delta t ) = C P ( t ) ,$$

where L is the transition matrix whose elements are defined as

$$\int _ { \sqrt { 2 } } ^ { \infty } w ( \sum b | z | ) d t ,$$

/negationslash

$$\sum _ { b \neq a } L _ { b a } = 1 - \sum _ { b \neq a } L _ { b a }$$

/negationslash

Here the matrix L is a non-negative matrix and does not depend on time. Then this time-evolution is the Markovian.

If the transition matrix L is prepared appropriately, which satisfies the detailed balance condition and the ergordicity, we can obtain the equilibrium probability distribution in the limit of infinite Monte Carlo step regardless of choice of the initial state because of the Perron-Frobenius theorem.

We can perform the Monte Carlo method easily as following process.

- Step 1 We prepare a initial state arbitrary.

- Step 2 We choose a spin randomly.

- Step 3 We calculate the molecular field at the chosen site in Step 2. The molecular field at the chosen site i is defined as

$$h _ { i } ^ { ( eff ) } = \sum _ { j } ^ { \prime } J _ { ij } o ^ { 2 } j + h _ { i }$$

where the summation takes over the nearest neighbor sites of the i -th site.

- Step 4 We flip the chosen spin in Step 2 according to a probability defined by some way.

- Step 5 We continue from Step 2 to Step 4 until physical quantities such as magnetization converge.

In this Monte Carlo method, we only update the chosen single spin, and thus we refer to this method as single-spin-flip method. There is an ambiguity how to define w (Σ a | Σ b ) in Step 4. Here we explain two famous choices of w (Σ a | Σ b ) as follows. Transition probability in the heat-bath method is given by

$$\frac { w H B ( \sigma _ { i } ^ { - } - \sigma _ { f } ^ { + } ) } { 2 \cos h ( \beta _ { i } ^ { 2 } ) } = \frac { e ^ { - 8 h _ { i } ^ { 2 } } } { 2 \cos h ( \beta _ { i } ^ { 2 } ) } .$$

Transition probability in the Metropolis method is given by

$$\omega _ { M P } ( \sigma ^ { z } _ { i } - \sigma ^ { z } _ { j } ) = \{ 1 e^{-2 \beta h _ { i } ( e t ) } ,$$

Since both two transition probabilities satisfy the detailed balance condition, the equilibrium state can be obtained definitely in the limit of infinite Monte Carlo step a . It is important to select how to choice the transition probability since it is known that a couple of methods can sample states in an efficient fashion. 76-83

So far we considered the Monte Carlo method for systems where there is no off-diagonal matrix element. To perform the Monte Carlo method, in a precise mathematical sense, we only have to know how to choice the basis or appropriate transformation so as to diagonalize the given Hamiltonian. However, it is difficult to obtain equilibrium values of physical quantities of quantum systems, since we have to calculate the exponential of the given Hamiltonian e -β ˆ H in general. If we know all eigenvalues and the corresponding eigenvectors of the given Hamiltonian, we can easily calculate e -β ˆ H by the unitary transformation which diagonalizes the Hamiltonian ˆ H . In contrast, if we do not know all eigenvalues and eigenvectors, we have to calculate any power of the Hamiltonian ˆ H m since the matrix exponential is given by

$$e ^ { A } = \sum _ { m = 0 } ^ { \infty } \frac { 1 } { m ! } A ^ { m }$$

It is difficult to calculate the matrix exponential in general. Then we have to consider the following procedure in order to use the framework of the Monte Carlo method for quantum systems.

In many cases, the Hamiltonian of quantum systems can be represented as

$$H = H _ { c } + H _ { q } .$$

Hereafter we refer to ˆ H c and ˆ H q as classical Hamiltonian and quantum Hamiltonian, respectively. The classical Hamiltonian ˆ H c is a diagonal matrix. Here we assume that ˆ H q can be easily diagonalized b . This is a key of the quantum Monte Carlo method as will be shown later. Since ˆ H c and ˆ H q cannot commute in general: [ ˆ H c , ˆ H q ] = 0, then e -β ˆ H = e -β ˆ H c e -β ˆ H q . We

/negationslash

/negationslash

a Recently, the algorithm which does not use the detailed balance condition was proposed. 76,77 It should be noted that the detailed balance condition is just a necessary condition. This novel algorithm is efficient for general spin systems.

b This fact does not seem to be general. However we can prepare the matrices which can be easily diagonalized by the decomposition as ˆ H q = ∑ /lscript ˆ H ( /lscript ) q in many cases.

decompose the matrix exponential by introducing large integer m ,

$$\exp ( - \frac { b } { m } H _ { c } ) = \exp [ - \frac { b } { m } ( H _ { c } + i \alpha ) ]$$

This is a concrete representation of the Trotter formula. 84 From now on, we refer to m as Trotter number. By using this relation, we can perform the Monte Carlo method for quantum systems. To illustrate it, we consider the Ising model with longitudinal and transverse magnetic fields. The considered Hamiltonian is given as

$$\sum _ { i = 1 } ^ { N } \sum _ { j = 1 } ^ { N } \sum _ { k = 1 } ^ { N } H _ { ij } o _ { i j } - \sum _ { i = 1 } ^ { N } H _ { i } o _ { i }$$

$$H _ { c } = - \sum _ { i = 1 } ^ { N } J _ { i j } o _ { i } o _ { j } - \sum _ { i = 1 } ^ { N } h _ { i j } o _ { i }$$

where optimization problems often can be expressed by this classical Hamiltonian ˆ H c . The partition function of the Hamiltonian at temperature T (= β -1 ) is given by

$$z = T _ { r } e ^ { - b ^ { 2 } h } = \sum _ { z } \{ e ^ { - b ( c ) } \} .$$

Using Eq. (39) we obtain

$$z = \lim _ { n \rightarrow \infty } \sum _ { k = 1 , n } ^ { n - 1 } \{ \Sigma _ { m } | e ^ { - \beta H _ { a } / m } | \Sigma _ { m } \} \{ \Sigma _ { k } | e ^ { - \beta H _ { a } / m } | \Sigma _ { k } \}$$

∣ ∣ ∣ ∣ where | Σ k 〉 represents the direct-product space of N spins:

$$\sum _ { k = 1 } ^ { \infty } | o _ { i , k } | \circ | o _ { 2 , k } | \circ \cdots$$

where the first and the second subscripts of | σ z i,k 〉 indicate coordinates of the real space and the Trotter axis, respectively. Here | σ z i,k 〉 = |↑〉 or |↓〉 . Equation (42) consists of two elements 〈 Σ k | e -β ˆ H c /m | Σ ′ k 〉 and

〈 Σ ′ k | e -β ˆ H q /m | Σ k +1 〉 . Since the classical Hamiltonian ˆ H c is a diagonal matrix, the former is easily calculated:

$$= \exp [ \frac { \beta } { m } ( \sum _ { i = 1 } ^ { N } j _ { i } \sigma _ { i } ^ { 2 } , \sigma _ { i } ^ { k } + \sum _ { i = 1 } ^ { N } h _ { i } \sigma _ { i } ^ { 2 } ) ]$$

$$\begin{aligned}

& = \frac { 1 } { 2 } \sinh ( \frac { 2 p T } { m } ) ^ { N / 2 } \exp [ - \frac { 1 } { 2 } ln ( \frac { 1 } { m } ) ] \\

& = \frac { 1 } { 2 } \sum _ { i = 1 } ^ { N } o _ { i , k } o _ { i , k + 1 } | . (46)$$

where σ z i,k = ± 1. On the other hand, the latter 〈 Σ ′ k ∣ ∣ ∣ e -β ˆ H q /m ∣ ∣ ∣ Σ k +1 〉 is calculated as

Then the partition function given by Eq. (43) can be represented as

$$Z = \lim _ { m \rightarrow \infty } A _ { ( r ^{i}, k ^{j}) } \sum _ { i=1}^m exp \left\{ \sum _ { k=1}^n \left( \beta J_{ij} \sigma ^{i,k}\sigma ^{j,k} \right) + \sum _ { m=1}^n \theta ^{i,k} \sigma ^{i,k} \right\}$$

where A is just a parameter which does not affect physical quantities. It should be noted that the partition function of the d -dimensional Ising model with transverse field ˆ H is equivalent to that of the ( d +1)-dimensional Ising model without transverse field H eff which is given by

$$\begin{aligned}

H_{eff} &= - \sum _ { i = 1 } ^ { N } \sum _ { j = 1 } ^ { m } J _ { i , j } o _ { i , k } o _ { j , k } - \\

&= - \frac { 1 } { B } \sum _ { i = 1 } ^ { N } \sum _ { j = 1 } ^ { m } - 1 in coth ( \frac { 3 T } { m } o _ { i , k } o _ { i + 1 , k + 1 } ) \\

&= (48)

\end{aligned}$$

The coefficient of the third term of RHS is always negative, and thus the interaction along the Trotter axis is always ferromagnetic. This ferromagnetic interaction becomes strong as the value of Γ decreases. This is called the Suzuki-Trotter decomposition. 84,85

So far we explained the Monte Carlo method as a tool for obtaining the equilibrium state. However we can also use this method to investigate stochastic dynamics of strongly correlated systems, since the Monte Carlo

method is originally based on the master equation. In terms of optimization problem, our purpose is to obtain the ground state of the given Hamiltonian. Then we decrease transverse field gradually and obtain a solution. There are many Monte Carlo studies in which the quantum annealing succeeds to obtain a better solution than that by the simulated annealing. 5,8-10,12,14,86

## 3.2. Deterministic Method Based on Mean-Field Approximation

In the previous section, we considered the Monte Carlo method in which time-evolution is treated as stochastic dynamics. In this section, on the other hand, we explain a deterministic method based on mean-field approximation according to Refs. [87,88]. Before we consider the quantum annealing based on the mean-field approximation, we treat the Ising model with random interactions and site-dependent longitudinal fields given by

$$H _ { l , i s i n g } = - \sum _ { ( i , j ) } ^ { N } J _ { i j } o ^ { z } i o ^ { z } - \sum _ { ( i = 1 } ^ { N } } h _ { i o ^ { z } }$$

When the transverse field is absent, the molecular field of the i -th spin is given by Eq. (34). Then an equation which determines expectation value of the i -th spin at temperature T (= β -1 ) is given by

$$m ^ { 2 } _ { i } = \frac { e ^ { - B h _ { i } ( e f ) } + e ^ { - B h _ { i } ( c e f ) } } { e ^ { B h _ { i } ( e f ) } + e ^ { B h _ { i } ( c e f ) } } = t a$$

In the mean-field level, we approximate that the state σ z j is equal to the expectation value m z j in Eq. (34), and we obtain

$$m _ { i } = \tanh [ \beta ( \sum _ { j } ^ { i } J _ { i , j } m _ { j } ) ] .$$

which is often called self-consistent equation.

We can obtain equilibrium value in the mean-field level by iterating the following equation until convergence:

$$\sum _ { j } ^ { i } ( t + 1 ) = \tan h ( B _ { H } ^ { i } ( e f ) ( t ) ) .$$

In order to judge the convergence, we introduce a distance which represents difference between the state at t -th step and that at ( t +1)-th step as follows:

$$d ( t ) = - \sum _ { i = 1 } ^ { N } [ m _ { i } ^ { z } ( t + 1 ) -$$

When the quantity d ( t ) is less than a given small value (typically ∼ 10 -8 or more smaller value), we judge that the calculation is converged. We summarize this method:

- Step 1 We prepare a initial state arbitrary.

- Step 2 We choose a spin randomly.

- Step 3 We calculate the molecular field given by Eq. (34) at the chosen site in Step 2.

- Step 4 We change the value of the chosen spin in Step 2 according to the obtained molecular field in Step 3.

- Step 5 We continue from Step 2 to Step 4 until the distance d ( t ) converges to small value.

The differences between the Monte Carlo method and this method are Step 4 and Step 5. We can perform the simulated annealing by decreasing temperature and using the state obtained in Step 5 as the initial state in Step 1 at the time changing temperature c .

Next we explain a quantum version of this method. Here we apply transverse field as a quantum field. We consider the Hamiltonian given by

$$\sum _ { i = 1 } ^ { N } \sum _ { j = 1 } ^ { N } \sum _ { k = 1 } ^ { N } h _ { i , j , k }$$

The density matrix of the equilibrium state is

$$\rho = \frac { e _ { n } ( - \beta ^ { H } ) Tr \exp ( - \beta ^ { H } ) } { \sum _ { n = 1 } ^ { N } e _ { n } ^ { 2 n } \sum _ { n = 1 } ^ { N } e _ { n } ^ { - 2 n } } ,$$

where /epsilon1 n and | λ n 〉 denote the n -th eigenenergy and the corresponding eigenvector. The density matrix satisfies the variational principle that minimizes free energy:

$$F = \min _ { p } \{ T r ( H + \beta ^ { - 1 } l n \hat { p } ) \} ,$$

where the logarithm of the matrix is defined by the series expansion as well as the definition of the matrix exponential (see Eq. (37)). Since it is difficult to obtain the density matrix, we have to consider alternative strategy as follows.

c If we want to decrease temperature rapidly, we choose not so small value for judgement of convergence.

A reduced density matrix is defined as

$$\rho _ { i } = T r ^ { \prime } \rho = \frac { 1 } { 2 } ( i + m _ { z } ^ { 2 } o ^ { 2 } +$$

where Tr ′ indicates trace over spin states except the i -th spin. The values m z i and m x i are calculated by

$$m _ { i } = T ( \sigma ^ { i } _ { j } \phi ) , m _ { i } = T$$

The reduced density matrix satisfies the following relations:

$$Tr ( \sigma ^ { i } \rho _ { j } ) = m _ { i } .$$

Here we assume that the density matrix can be represented by direct products of the reduced density matrices:

$$\rho = \sum _ { i = 1 } ^ { N } p _ { i }$$

which is mean-field approximation (in other words, decoupling approximation). Then, the free energy is expressed as

$$F \leq \min F ( \phi _ { i } ) ,$$

$$\begin{aligned}

F ( \rho _ { i } ) & = - \sum _ { i = 1 } ^ { N } J _ { i , m _ { i } } ^ { n _ { i } } - \sum _ { i = 1 } ^ { N } h _ { i , m _ { i } } ^ { n _ { i } } \\

& + b ^ { - 1 } \sum _ { i = 1 } ^ { N } T _ { r ( \rho _ { i } ) } ln \rho _ { i } .

\end{aligned}$$

From the variation of F ( { ˆ ρ i } ) under the normalization condition, we obtain the following relations:

$$\rho _ { i } = \frac { e x p ( - \beta ^ { 3 } H _ { i } ) } { T _ { r } [ e x p ( - \beta ^ { 3 } H _ { i } ) ] ^ { \prime } }$$

Then the reduced density matrix is represented by using the n -th ( n = 1 , 2) eigenvalues /epsilon1 ( i ) n and the corresponding eigenvectors | λ ( i ) n 〉 of ˆ H i :

$$\hat { h _ { i } } = ( - h _ { i } - \sum _ { j = 1 } ^ { n } J _ { ij } m _ { i j } ) + h _ { i }$$

$$\rho _ { i } = \frac { e p ( - \beta e ^ { i } ) | x _ { i } | + e ^ { i } } { e p ( - \beta e ^ { i } ) + e ^ { i } } .$$

We can also obtain the equilibrium values of physical quantities as well as the case for Γ = 0:

$$n _ { i } ( t + 1 ) = T _ { r } ( \sigma ^ { i } _ { p } ( t ) ) ,$$

$$\dot { \alpha } ( t ) = \frac { e ^ { - \beta H _ { i } ( t ) } } { T _ { r } e ^ { - \beta H _ { i } ( t ) } }$$

We continue the above self-consistent equation until the following distance converges:

$$h _ { i } ( t ) = ( - h _ { i } - \sum _ { j = 1 } ^ { n } J _ { ij } m _ { i j } ( t ) +$$

$$d ( t ) = \frac { 1 } { 2 N } \sum _ { i = 1 } ^ { N } ( | m _ { i } z _ { i } ( t + 1 ) - m _ { i } z _ { i } ( t ) | .$$

If the temperature is zero, the reduced density matrix should be

$$\rho _ { i } = \vert \lambda _ { 1 } ^ { ( 2 ) } \vert \langle \lambda _ { 1 } ^ { ( 2 ) } \vert ,$$

where we consider the case for /epsilon1 ( i ) 1 < /epsilon1 ( i ) 2 . Note that if and only if -h i -∑ ′ j J ij m z j = Γ = 0, /epsilon1 ( i ) 1 = /epsilon1 ( i ) 2 is satisfied. Then if we perform the quantum annealing at T = 0, we have to know only the ground state of the local Hamiltonian ˆ H i . The procedure is the same as the case for finite temperature. By using the method, we can obtain a better solution than that obtained by the simulated annealing for some optimization problems. Recently, other type of implementation method based on mean-field approximation was proposed. 13 The method is a quantum version of the variational Bayes inference. 89 We can also obtain a better solution than the conventional variational Bayes inference.

## 3.3. Real-Time Dynamics

In Sec. 3.1 and Sec. 3.2, we considered artificial time-development rules such as the Markov chain Monte Carlo method and mean-field dynamics. In this section, we explain real-time dynamics which is expressed by the time-dependent Schr¨ odinger equation:

where ˆ H ( t ) and | ψ ( t ) 〉 denote the time-dependent Hamiltonian and the wave function at time t , respectively. The solution of this equation is given

by

If we use the time-dependent Hamiltonian including time-dependent quantum field, we can perform the quantum annealing by decreasing the quantum field gradually. To obtain the solution, it is necessary to decide the initial state for Eq. (72). Since our purpose is to obtain the ground state of the given Hamiltonian which represents the optimization problem, we have no way to know the preferable initial state that leads to the ground state definitely in the adiabatic limit. However, in general, we often use a 'trivial state' as the initial state. Actually, it goes well in many cases. For instance, when we consider the Ising model with time-dependent transverse field which is given by

we set the ground state for large Γ as the initial state, hence the initial state is set as

where |→〉 denotes the eigenstate of ˆ σ x :

In real-time dynamics, in order to obtain the ground state by using given initial condition, it is important whether there is level crossing. If there is no level crossing, the system can necessarily reach the ground state by the quantum annealing in the adiabatic limit. To show this fact, we first consider a single spin system under time-dependent longitudinal magnetic field. The Hamiltonian is given by

Suppose we set | ψ (0) 〉 = |↓〉 as the initial state. For arbitrary sweeping schedules, the state at arbitrary positive t is obtained by

This is because the state |↓〉 is the eigenstate of the instantaneous Hamiltonian for arbitrary time t . In general, when there is a good quantum number

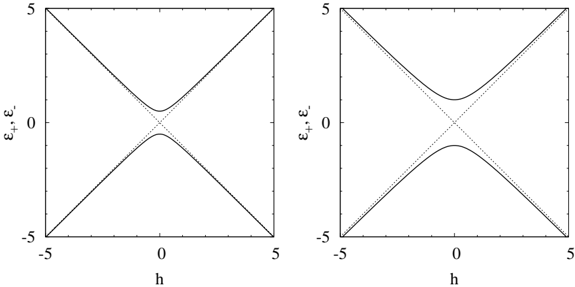

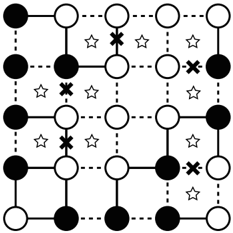

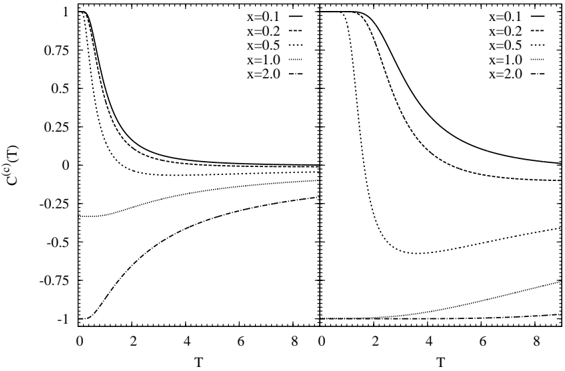

Fig. 2. Eigenenergies of the single spin system under longitudinal and transverse magnetic fields for Γ = 0 . 5 (left panel) and Γ = 1 (right panel). The dotted lines represent eigenenergies for Γ = 0.

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Mathematical Plot Pair: Energy Dispersion Relations

### Overview

The image displays two side-by-side, square-format mathematical plots. Each plot shows two curves (one solid, one dashed) on a 2D Cartesian coordinate system. The plots appear to represent energy dispersion relations or eigenvalue solutions as a function of a parameter `h`. The left plot exhibits an "avoided crossing" behavior, while the right plot shows a direct crossing of the two curves.

### Components/Axes

**Common to Both Plots:**

* **Y-axis:** Labeled with the Greek letters `ε₊` (epsilon plus) and `ε₋` (epsilon minus). The axis scale ranges from -5 to 5, with major tick marks at -5, 0, and 5.

* **X-axis:** Labeled with the letter `h`. The axis scale ranges from -5 to 5, with major tick marks at -5, 0, and 5.

* **Data Series:** Each plot contains two data series differentiated by line style:

1. A **solid black line**.

2. A **dashed black line**.

* **Plot Frame:** Each graph is enclosed in a square box with tick marks on all four sides.

**Spatial Layout:**

* The two plots are arranged horizontally, side-by-side.

* The y-axis labels (`ε₊, ε₋`) are positioned to the left of each plot's vertical axis.

* The x-axis label (`h`) is centered below each plot's horizontal axis.

### Detailed Analysis

**Left Plot Analysis:**

* **Trend Verification:** Both curves are symmetric about the vertical line `h=0`. As `|h|` increases (moving left or right from the center), both curves move away from the horizontal axis (`ε=0`) in opposite directions. The solid line trends upward, and the dashed line trends downward.

* **Key Feature:** The curves approach each other near `h=0` but do not intersect. They reach a point of closest approach (a minimum gap) at `h=0`. At this point, the solid line is at a positive `ε` value (approximately `ε ≈ 0.8`), and the dashed line is at a negative `ε` value (approximately `ε ≈ -0.8`).

* **Behavior at Extremes:** At `h = -5` and `h = 5`, the solid line reaches `ε ≈ 5`, and the dashed line reaches `ε ≈ -5`.

**Right Plot Analysis:**

* **Trend Verification:** Both curves are also symmetric about `h=0`. As `|h|` increases, both curves move away from `ε=0` in opposite directions, similar to the left plot.

* **Key Feature:** The two curves intersect directly at the origin point `(h=0, ε=0)`. This is a crossing point, not an avoided crossing.

* **Behavior at Extremes:** At `h = -5` and `h = 5`, the solid line reaches `ε ≈ 5`, and the dashed line reaches `ε ≈ -5`, identical to the left plot.

### Key Observations

1. **Symmetry:** Both plots exhibit perfect symmetry about the `h=0` axis.

2. **Line Style Consistency:** The solid line represents the upper branch (positive `ε`) for `h>0` and the lower branch (negative `ε`) for `h<0` in both plots. The dashed line does the opposite.

3. **Critical Difference:** The fundamental distinction between the two plots is the behavior at `h=0`. The left plot shows a **finite energy gap** (avoided crossing), while the right plot shows a **zero energy gap** (crossing).

4. **Identical Asymptotes:** Far from `h=0` (at `|h| = 5`), the numerical values of the curves are identical in both plots, suggesting the same underlying linear relationship dominates at large `|h|`.

### Interpretation

These plots are characteristic of a two-level quantum system or a coupled oscillator model, where `ε` represents energy or frequency and `h` represents a tuning parameter like a magnetic field or detuning.

* **Left Plot (Avoided Crossing):** This demonstrates the effect of a **coupling** or interaction between the two states. The interaction prevents the energy levels from crossing, creating a minimum gap at the resonance point (`h=0`). This is a signature of hybridization, where the original states mix to form new eigenstates with separated energies. The size of the gap at `h=0` is proportional to the coupling strength.

* **Right Plot (Crossing):** This represents the **uncoupled** or degenerate case. The two states are independent, and their energies cross linearly as the parameter `h` is varied. There is no interaction to lift the degeneracy at the crossing point.

* **Relationship:** The pair of plots likely illustrates a comparison between a system with interaction (left) and without interaction (right). The identical behavior at large `|h|` indicates that the coupling is a local effect near resonance (`h=0`), while the far-detuned behavior is governed by the bare, uncoupled energies.

* **Underlying Model:** The linear dependence of `ε` on `h` at large `|h|` suggests a Hamiltonian of the form `H ~ hσ_z` (where `σ_z` is a Pauli matrix), with an additional coupling term `~ σ_x` present only in the left plot. The avoided crossing gap is `2 * |coupling strength|`.

</details>

and the initial state is set to be the corresponding eigenstate, the good quantum number is conserved. Then when we perform the quantum annealing method based on the real-time dynamics, we should take care of the symmetries of the considered Hamiltonian. From this, we can obtain the ground state of the considered system in the adiabatic limit if there is no level crossing. In practice, however, since we change magnetic field with finite speed, a nonadiabatic transition is inevitable. To show this fact, we consider a single spin system under longitudinal and transverse magnetic fields. The Hamiltonian of this system is given by

Since the eigenenergies are /epsilon1 ± = ± √ h 2 +Γ 2 , the smallest value of the energy difference between the ground state and the excited state is 2Γ at h = 0 as shown in Fig. 2.

Suppose we consider the single spin system under time-dependent longitudinal magnetic field and fixed transverse magnetic field. The Hamiltonian is given by

where we adopt h ( t ) = vt as time-dependent longitudinal field. Here we set t = -∞ as the initial time. The initial state is set to be the ground

state of the Hamiltonian at the initial time | ψ ( t = -∞ ) 〉 = |↓〉 . The ground state at t = + ∞ in the adiabatic limit is | ψ (ad) ( t = + ∞ ) 〉 = |↑〉 . Then a characteristic value which represents the nature of this dynamics is a probability of staying in the ground state at t = + ∞ which is defined by

∣ ∣ The probability of staying in the ground state should depend on the sweeping speed v and the characteristic energy gap and can be obtained by the Landau-Zener-St¨ uckelberg formula: 90-92

where ∆ E and ∆ m represent the energy gap at the avoided level-crossing point and the difference of the magnetizations in the adiabatic limit, respectively. In this case ∆ E = 2Γ and ∆ m = 2.

In many cases, typical shape of energy structure can be approximated by simple systems such as the single spin system. Then the knowledge of the simple transitions such as the Landau-Zener-St¨ ukelberg transition and the Rosen-Zener transition 93 is useful to analyze the efficiency of the quantum annealing based on the real-time dynamics.

## 3.4. Experiments

Transverse field response of the Ising model has been also established in experimentally. 94-103 A dipolar-coupled disordered magnet LiHo x Y 1 -x F 4 has easy-axis anisotropy and can be represented by the Ising model. 104,105 If we apply the longitudinal magnetic field (in other words, the magnetic field is parallel to the easy-axis), phase transition does not take place. 106,107 However, when we apply the transverse magnetic field (in other words, the magnetic field is perpendicular to the easy-axis), phase transitions occur and interesting dynamical properties shown in Ref.[ 6] were observed. In the phase diagram of this material, there are three phases. The ferromagnetic phase appears at intermediate temperature and low transverse magnetic field, whereas at low temperature and low transverse magnetic field, the glassy critical phase 108 appears. The paramagnetic phase exists at the other region. The glassy critical phase exhibits slow relaxation in general. It found that the characteristic relaxation time obtained by ac field susceptibility for

quantum cooling in which we decrease transverse field after temperature is decreased is lower than that for temperature cooling case. 6 From this result, it has been expected that the effect of the quantum fluctuation helps us to obtain the best solution of the optimization problem.

## 4. Optimization Problems

Optimization problems are defined by composition elements of the considered problem and real-valued cost/gain function. They are problems to obtain the best solution such that the cost/gain function takes the minimum/maximum value. In general, the number of candidate solutions increases exponentially with the number of composition elements in optimization problems. Although we can obtain the best solution by a brute force in principle, it is difficult to obtain the best solution by such a naive method in practice. Then we have to invent an innovative method for obtaining the best solution in a practical time and limited computational resource. Optimization problems can be expressed by the Ising model in many cases. Once optimization problems are mapped onto the Ising model, we can use methods that have been considered in statistical physics and computational physics such as the quantum annealing.

In the anterior half of this section, we explain the correspondence between the Ising model and the traveling salesman problem which is one of famous optimization problems. We demonstrate the quantum annealing based on the quantum Monte Carlo simulation for this problem. In the posterior half, we explain the clustering problem as the example expressed by the Potts model which is a straightforward extension of the Ising model.

## 4.1. Traveling Salesman Problem

In this section, we consider the traveling salesman problem which is one of famous optimization problems. The setup of the traveling salesman problem is as follows:

- There are N cities.

- We move from the i -th city to the j -th city where the distance between them is /lscript i,j .

- We can pass through a city only once.

- We return the initial city after we pass through all the cities.

The traveling salesman problem is to find the minimum path under above conditions. The length of a path is given by

where c a denotes the city where we pass through at the a -th step. In the traveling salesman problem, the length of a path is a cost function. From the fourth condition, the following relation should be satisfied:

In terms of mathematics, the traveling salesman problem is to find { c a } N a =1 so as to minimize the path L under the above four conditions.

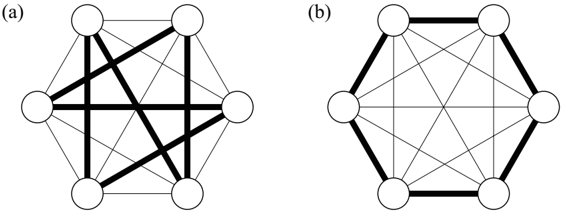

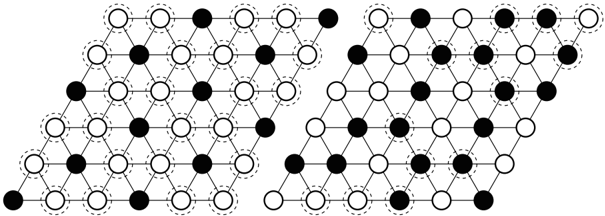

If the number of cities N is small, it is easy to obtain the shortest path by a brute force. We can easily find the best solution of the traveling salesman problem for N = 6 shown in Fig. 3. Figure 3 (a) and (b) represent a bad solution and the best solution where the length of the path L is minimum, respectively. As the number of cities increases, the traveling salesman problem becomes seriously difficult since the number of candidate solutions is ( N -1)! / 2. Then if we want to deal with the traveling salesman problem with large N , we have to adopt smart and easy practical methods such as the simulated annealing instead of a brute force. To use the simulated annealing, we map the traveling salesman problem onto the Ising model with a couple of constraints as follows.

We consider N × N two-dimensional lattice. Let n i,a be the microscopic state which represents the state at the i -th city at the a -th step. The value of n i,a can be taken either 0 or 1. If we pass through the i -th city at the

Fig. 3. Traveling salesman problem for N = 6. Thin lines and thick lines denote the permitted paths and selected paths, respectively. (a) Bad solution. (b) The best solution in which the length of the path is minimum.

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Network Diagram: Hexagonal Node Connectivity Patterns

### Overview

The image displays two side-by-side network diagrams, labeled (a) and (b), each depicting a hexagonal arrangement of six nodes (represented as white circles) connected by lines of two distinct thicknesses. The diagrams illustrate different patterns of connectivity within the same set of nodes.

### Components/Axes

* **Nodes:** Six identical white circles arranged at the vertices of a regular hexagon in both diagrams.

* **Connections (Edges):** Lines connecting the nodes. Two visual types are present:

* **Thick Black Lines:** Indicate a primary or emphasized connection.

* **Thin Gray Lines:** Indicate a secondary or background connection.

* **Labels:**

* `(a)`: Positioned in the top-left corner, labeling the left diagram.

* `(b)`: Positioned in the top-left corner, labeling the right diagram.

* **Legend:** No explicit legend is present. The distinction between thick and thin lines is implied visually.

### Detailed Analysis

The analysis isolates each diagram and describes the connections based on node position. For spatial reference, nodes are described as: Top-Left (TL), Top-Right (TR), Right (R), Bottom-Right (BR), Bottom-Left (BL), Left (L).

**Diagram (a):**

* **Thick Line Connections (6 total):**

* TL to R

* TL to BR

* TR to BL

* TR to L

* R to BL

* L to BR

* **Thin Line Connections:** All other possible connections between nodes not listed above (forming a complete graph, K₆, when combined with thick lines).

* **Visual Pattern:** The thick lines form a complex, intersecting web that connects each node to two non-adjacent nodes, creating a star-like pattern within the hexagon. No two thick lines share a common endpoint on the hexagon's perimeter.

**Diagram (b):**

* **Thick Line Connections (6 total):**

* TL to TR

* TR to R

* R to BR

* BR to BL

* BL to L

* L to TL

* **Thin Line Connections:** All other possible connections between nodes not listed above (again, forming a complete graph, K₆, when combined with thick lines).

* **Visual Pattern:** The thick lines exclusively connect adjacent nodes, perfectly outlining the perimeter of the hexagon. All internal, non-adjacent connections are thin.

### Key Observations

1. **Identical Node Set:** Both diagrams use the exact same six-node hexagonal layout.

2. **Complete Underlying Graph:** In both (a) and (b), every node is connected to every other node by either a thick or thin line. The diagrams do not show missing connections; they only vary the *emphasis* (line thickness) on specific subsets of edges.

3. **Contrasting Emphasis Patterns:**

* Diagram (a) emphasizes a set of **non-adjacent, cross-hexagon connections**.

* Diagram (b) emphasizes the set of **adjacent, perimeter connections**.

4. **Symmetry:** Both patterns exhibit rotational symmetry. Rotating either diagram by 60 degrees would result in an identical visual pattern.

### Interpretation

These diagrams are abstract representations likely used to illustrate concepts in **graph theory, network science, or combinatorial design**. They demonstrate how the same set of elements (nodes) can have different structural properties based on which relationships (edges) are prioritized.

* **Diagram (a)** represents a network where long-range, cross-cluster connections are highlighted. This could model a system where communication or interaction bypasses immediate neighbors, potentially indicating a **small-world network** property or a specific **matching** within the graph.

* **Diagram (b)** represents a network where local, nearest-neighbor connections are highlighted. This models a system with strong **local clustering** or a **ring lattice** topology, where interactions are primarily with adjacent units.

* **The Juxtaposition:** Placing them side-by-side invites comparison. It visually argues that network function and resilience depend critically on *which* connections are active or strong, not just on the existence of connections. The thick lines in (a) might represent shortcuts that reduce path length, while the thick lines in (b) represent the robust local backbone. The thin lines in both indicate that all other potential pathways exist but are weaker or latent.

**Language Declaration:** The only text present consists of the English labels "(a)" and "(b)". No other language is detected.

</details>

a -th step, n i,a is unity whereas n i,a = 0 if we do not pass through the i -th city at the a -th step. The third condition can be represented by

Furthermore, since it is obvious that we can pass through only one city at the a -th step, this constraint is expressed by

Then the length of the path L can be rewritten as

where the Ising spin variable σ z i,a = ± 1 is defined by

Here we used the following relation derived by Eqs. (84) and (85):

Then the length of the path can be represented by the Ising spin Hamiltonian on N × N two-dimensional lattice. In general, it is difficult to obtain the stable state of the Ising model with some constraints regarded as some kind of frustration which will be shown in Sec. 5.2.

## 4.1.1. Monte Carlo Method

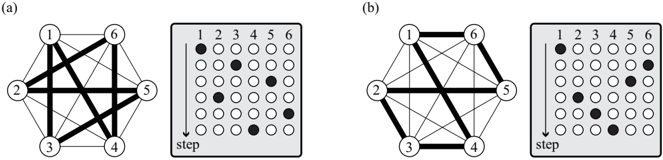

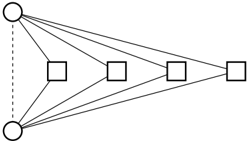

We explain how to implement the Monte Carlo method in the traveling salesman problem. We cannot use the single-spin-flip method which was explained in Sec. 3.1 because of existence of two constraints given by Eqs. (84) and (85). The simplest way of transition between states is realized by flipping four spins simultaneously as shown in Fig. 4.

Suppose we consider the case that we pass through at the i -th city at the a -th step and pass through at the j -th city at the a ′ -th step, which is described as

Fig. 4. The simplest way of flipping method in traveling salesman problem. Transition between the state depicted in (a) and that depicted in (b) occurs. In this case, i = 3, j = 6, a = 2, and a ′ = 5.

<details>

<summary>Image 4 Details</summary>

### Visual Description

## [Diagram/Graph Pair]: Network Graphs and Adjacency Matrices

### Overview

The image displays two side-by-side panels, labeled (a) and (b). Each panel contains a pair of related diagrams: a **network graph** on the left and a corresponding **adjacency matrix** (or step matrix) on the right. The diagrams appear to represent connectivity or relationships between six numbered nodes (1 through 6) in two different states or configurations.

### Components/Axes

**Panel (a):**

* **Left - Network Graph:**

* **Nodes:** Six circles numbered 1, 2, 3, 4, 5, 6 arranged in a hexagonal pattern.

* **Edges:** Lines connecting the nodes. The lines have two distinct thicknesses: **thick** and **thin**.

* **Spatial Layout:** Node 1 is at the top. Nodes 2 and 6 are at the upper left and right. Nodes 3 and 5 are at the lower left and right. Node 4 is at the bottom.

* **Right - Adjacency Matrix:**

* **Structure:** A 6x6 grid of circles.

* **Axes Labels:**

* **Top (Columns):** Numbers 1, 2, 3, 4, 5, 6.

* **Left (Rows):** The word "step" written vertically, with an arrow pointing downward. This implies the rows represent sequential steps or time.

* **Data Points:** Circles within the grid are either **filled (black)** or **empty (white outline)**. A filled circle indicates an active connection or relationship between the column node and the row step.

**Panel (b):**

* **Left - Network Graph:**

* **Nodes:** Same hexagonal arrangement of nodes 1-6.

* **Edges:** Lines connecting nodes, again with **thick** and **thin** lines. The pattern of thick lines is different from panel (a).

* **Right - Adjacency Matrix:**

* **Structure:** Identical 6x6 grid layout as in (a).

* **Axes Labels:** Identical to (a): Column numbers 1-6, and a vertical "step" label with a downward arrow on the left.

* **Data Points:** A different pattern of filled (black) and empty (white) circles compared to panel (a).

### Detailed Analysis

**Panel (a) Analysis:**

* **Graph Connectivity (Thick Lines):** The thick edges form a specific subgraph. They connect:

* Node 1 to Node 3

* Node 1 to Node 4

* Node 1 to Node 5

* Node 2 to Node 4

* Node 2 to Node 5

* Node 3 to Node 6

* Node 4 to Node 6

* Node 5 to Node 6

* **Matrix Data (Filled Circles):** The filled circles in the matrix correspond to the following (Row "step" / Column Node):

* Step 1: Node 1

* Step 2: Node 3

* Step 3: Node 2

* Step 4: Node 5

* Step 5: Node 4

* Step 6: Node 6

* **Mapping:** The matrix does not directly show the graph's adjacency. Instead, it seems to show a **sequence or activation order** of the nodes over 6 steps. The pattern of filled circles is a single filled circle per row, moving down the steps.

**Panel (b) Analysis:**

* **Graph Connectivity (Thick Lines):** The thick edges form a different subgraph. They connect:

* Node 1 to Node 6

* Node 2 to Node 5

* Node 3 to Node 4

* Node 3 to Node 6

* Node 4 to Node 5

* **Matrix Data (Filled Circles):** The filled circles show a different pattern:

* Step 1: Node 1

* Step 2: Node 2

* Step 3: Node 3

* Step 4: Node 4

* Step 5: Node 5

* Step 6: Node 6

* **Mapping:** Similar to (a), this matrix shows a node activation sequence. The sequence here is a simple diagonal: Node 1 at step 1, Node 2 at step 2, etc., through Node 6 at step 6.

### Key Observations

1. **Different Graph Topologies:** The two graphs have distinct sets of "thick" edges, indicating two different network structures or states.

2. **Different Activation Sequences:** The matrices show two different temporal patterns. Panel (a) shows a non-sequential, seemingly random order of node activation (1, 3, 2, 5, 4, 6). Panel (b) shows a perfectly sequential order (1, 2, 3, 4, 5, 6).

3. **Matrix Interpretation:** The matrices are not standard adjacency matrices (which would show all connections at once). The "step" axis and single filled circle per row strongly suggest they represent a **time series** or **process flow** where one node is active or selected at each discrete step.

4. **Potential Relationship:** The thick edges in the graph might represent the **available or strong connections** at the start, while the matrix shows the **actual path or activation sequence** taken through the network over time.

### Interpretation

This figure likely illustrates a concept in **network science, graph theory, or dynamic systems**. It contrasts two scenarios:

* **Scenario (a):** A network with a complex, highly interconnected structure (many thick edges) is traversed in a non-intuitive, non-sequential order. The path (1→3→2→5→4→6) jumps across the network, possibly following a rule like "activate the node with the strongest connection to the currently active node" or representing a stochastic process.

* **Scenario (b):** A network with a simpler, more linear or ring-like structure of thick edges (1-6, 2-5, 3-4, plus 3-6 and 4-5) is traversed in a perfectly sequential order (1→2→3→4→5→6). This suggests a more predictable, perhaps rule-based or spatially-ordered process.

The core message is the relationship between **network structure** (the graph) and **dynamic behavior** (the sequence in the matrix). The same set of nodes can exhibit vastly different temporal patterns depending on the underlying connectivity. The thick lines may represent "highways" or preferred pathways that constrain or guide the sequential activation shown in the matrices. The figure demonstrates how structural properties of a network influence its functional dynamics over time.

</details>

The trial state generated by flipping four spins is as follows:

The heat-bath method and the Metropolis method can be adopted for the transition probability between the present state and the trial state. In Fig. 4, i = 3, j = 6, a = 2, and a ′ = 5.

It should be noted that without loss of generality the initial condition can be set as

/negationslash and thus we can fix the states at the first step ( a = 1) during calculation. The number of interactions in which we try to flip all spins in each Monte Carlo step is ( N -1)( N -2) / 2.

## 4.1.2. Quantum Annealing

In order to perform the quantum annealing, we introduce the transverse field as the quantum fluctuation effect as shown in Sec. 3. The quantum Hamiltonian is given by

where the first-term corresponds to the length of path and the second-term denotes the transverse field. We can map this quantum Hamiltonian on N × N two-dimensional lattice onto N × N × m three-dimensional Ising model as well as the case which was considered in Sec. 3.1. The effective classical

Hamiltonian derived by the Suzuki-Trotter decomposition is written as

In the quantum annealing procedure, we have to take care of the constraints given by Eqs. (84) and (85) as stated before. Then the simplest way of changing state is to flip simultaneously four spins on the same layer ( m is fixed) along the Trotter axis.

## 4.1.3. Comparison with Simulated Annealing and Quantum Annealing

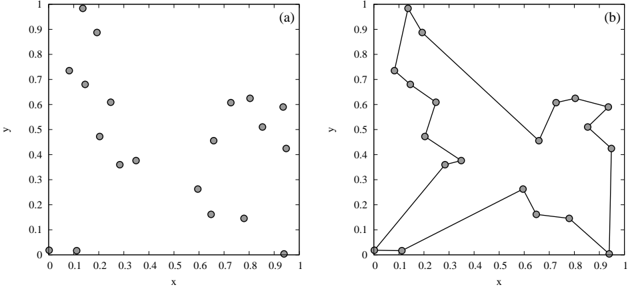

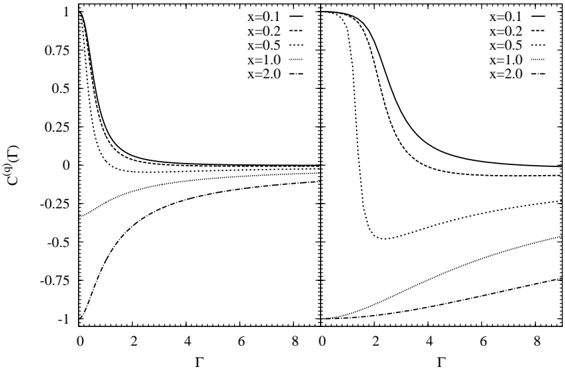

In order to demonstrate the comparison with the simulated annealing and the quantum annealing, we perform the Monte Carlo simulation for the traveling salesman problem. As an example, we consider N = 20 cities depicted in Fig. 5 (a). The positions of these cities were generated by pair of uniform random numbers (0 ≤ x i , y i ≤ 1). The time schedules of temperature T ( t ) for the simulated annealing and transverse field Γ( t ) for the

Fig. 5. Traveling salesman problem for N = 20. (a) Positions of cities. (b) The best solution in which the length of the path is minimum.

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Scatter Plot Comparison: Unstructured vs. Structured Data

### Overview

The image displays two side-by-side scatter plots, labeled (a) and (b), sharing identical axes. Plot (a) shows a set of discrete, unconnected data points. Plot (b) shows the same set of points, but with a subset of them connected by straight lines, forming a continuous, non-intersecting path or tour. The image appears to be a figure from a technical or scientific document, likely illustrating a concept in data analysis, optimization (e.g., Traveling Salesman Problem), or graph theory.

### Components/Axes

* **Chart Type:** Two-panel scatter plot with an overlay of connecting lines in panel (b).

* **Panel Labels:** "(a)" in the top-right corner of the left plot; "(b)" in the top-right corner of the right plot.

* **Axes (Both Panels):**

* **X-axis:** Labeled "x". Scale ranges from 0 to 1. Major tick marks and labels at 0, 0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.

* **Y-axis:** Labeled "y". Scale ranges from 0 to 1. Major tick marks and labels at 0, 0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.

* **Data Points:** Represented by gray-filled circles with black outlines. The same set of points appears in both panels.

* **Legend:** None present.

* **Spatial Layout:** The two plots are arranged horizontally. Plot (a) occupies the left half, Plot (b) the right half. The axes frames are identical in size and position within their respective halves.

### Detailed Analysis

**Panel (a): Unconnected Scatter Points**

* **Trend:** No inherent order or connection is shown. The points appear to be distributed in a somewhat clustered but irregular pattern across the unit square.

* **Data Point Extraction (Approximate Coordinates):**

* (0.00, 0.00)

* (0.10, 0.00)

* (0.10, 0.73)

* (0.15, 1.00)

* (0.18, 0.68)

* (0.20, 0.47)

* (0.25, 0.61)

* (0.30, 0.36)

* (0.35, 0.38)

* (0.60, 0.26)

* (0.62, 0.45)

* (0.65, 0.16)

* (0.75, 0.14)

* (0.75, 0.61)

* (0.80, 0.62)

* (0.85, 0.51)

* (0.90, 0.59)

* (0.95, 0.42)

* (0.95, 0.00)

**Panel (b): Connected Path**

* **Trend:** A single, continuous path is drawn connecting 18 of the 19 points from panel (a). The path does not intersect itself. It starts at (0.00, 0.00), traverses the points in a specific order, and ends at (0.95, 0.00). The point at (0.10, 0.00) is **not** included in the path.

* **Path Sequence (Approximate Order):** The visual path suggests the following connection order:

1. (0.00, 0.00) → (0.10, 0.73)

2. (0.10, 0.73) → (0.15, 1.00)

3. (0.15, 1.00) → (0.18, 0.68)

4. (0.18, 0.68) → (0.25, 0.61)

5. (0.25, 0.61) → (0.20, 0.47)

6. (0.20, 0.47) → (0.30, 0.36)

7. (0.30, 0.36) → (0.35, 0.38)

8. (0.35, 0.38) → (0.62, 0.45)

9. (0.62, 0.45) → (0.60, 0.26)

10. (0.60, 0.26) → (0.65, 0.16)

11. (0.65, 0.16) → (0.75, 0.14)

12. (0.75, 0.14) → (0.95, 0.00)

13. (0.95, 0.00) → (0.95, 0.42)

14. (0.95, 0.42) → (0.90, 0.59)

15. (0.90, 0.59) → (0.85, 0.51)

16. (0.85, 0.51) → (0.80, 0.62)

17. (0.80, 0.62) → (0.75, 0.61)

18. (0.75, 0.61) → (0.10, 0.73) *[This closes a loop back to the second point, but the path continues from here to the start? Re-examination shows the path from (0.75, 0.61) connects to (0.10, 0.73), and the initial segment from (0.00, 0.00) to (0.10, 0.73) is part of the same continuous line. The path is a single open tour, not a closed loop.]*

### Key Observations

1. **Point Consistency:** All points in plot (b) correspond exactly in position to points in plot (a).

2. **Excluded Point:** The point at (0.10, 0.00) in plot (a) is the only one not incorporated into the path in plot (b).

3. **Path Characteristics:** The path in (b) is a Hamiltonian path (visits each selected point exactly once) but not a cycle. It is non-self-intersecting. The longest straight-line segment connects (0.75, 0.14) to (0.95, 0.00).

4. **Spatial Distribution:** The points are not uniformly random; there are clusters in the top-left quadrant and the right-center region, with a notable gap in the center of the plot.

### Interpretation

This figure visually demonstrates the transformation of unstructured spatial data into a structured sequence. Panel (a) presents raw data—locations without relationship. Panel (b) imposes a specific order, creating a narrative or a solution.

* **What it Suggests:** The most likely interpretation is an illustration of a **path-finding or optimization algorithm**. It could represent:

* A solution (or a step) to a **Traveling Salesman Problem (TSP)**, where the goal is to find the shortest possible route visiting all points. The path shown is likely not the optimal solution but a feasible one.

* A **minimum spanning tree** or a similar graph structure, though the path is a single line, not a branching tree.

* The output of a **clustering or ordering algorithm** that sequences data points based on proximity.

* **Relationship Between Elements:** The core relationship is between the set of points (the problem space) and the connecting lines (the solution or structure). The axes provide the coordinate system that defines the "distance" between points, which is fundamental to any such algorithm.

* **Notable Anomalies:** The exclusion of the point at (0.10, 0.00) is significant. It implies that either the algorithm in (b) was not required to visit all points, or that point was considered an outlier and deliberately omitted from the structured set. The path's specific order, which creates a long "return" segment from the right side back to the top-left cluster, may indicate a non-greedy or globally optimized approach rather than a simple nearest-neighbor heuristic.

**In essence, the image contrasts chaos (a) with imposed order (b), serving as a pedagogical tool to show how algorithms can derive structure from scattered information.**

</details>

quantum annealing are defined as

where T 0 and Γ 0 are temperature and transverse field at the final time ( t = τ ), and T 0 + T 1 and Γ 0 +Γ 1 are temperature and transverse field at the initial time ( t = 0). The value of τ -1 indicates the annealing speed, and the annealing speed becomes slow as the value of τ increases. In our simulations, we adopt T 0 = Γ 0 = 0 . 01 and T 1 = Γ 1 = 5. Furthermore, we fix the transverse field as Γ = 0 during the simulation in the simulated annealing and the temperature as T = 0 . 01 during the simulation in the quantum annealing.