## QUANTUM ANNEALING: FROM VIEWPOINTS OF STATISTICAL PHYSICS, CONDENSED MATTER PHYSICS, AND COMPUTATIONAL PHYSICS

## SHU TANAKA

Department of Chemistry, University of Tokyo, 7-3-1, Hongo, Bunkyo-ku, Tokyo, 113-0033, Japan E-mail: shu-t@chem.s.u-tokyo.ac.jp

## RYO TAMURA

Institute for Solid State Physics, University of Tokyo, 5-1-5, Kashiwanoha, Kashiwa-shi, Chiba, 277-8501, Japan

International Center for Young Scientists, National Institute for Materials Science, 1-2-1, Sengen, Tsukuba-shi, Ibaraki, 305-0047, Japan E-mail: tamura.ryo@nims.go.jp

In this paper, we review some features of quantum annealing and related topics from the viewpoints of statistical physics, condensed matter physics, and computational physics. In many cases, we can obtain a better solution of optimization problems by using the quantum annealing. Indeed, the efficiency of the quantum annealing has been demonstrated for problems based on statistical physics. The quantum annealing is therefore expected to be an efficient and generic solver of optimization problems. Since many implementation methods of the quantum annealing have been developed and more will be proposed in the future, theoretical frameworks in a wide area of science and experimental technologies will evolve through studies of the quantum annealing.

Keywords : Quantum annealing; Quantum information; Ising model; Optimization problem

## 1. Introduction

Optimization problems are present almost everywhere, for example, in the design of integrated circuits, staff assignment, and the selection of a mode of transportation. Finding the best solution of an optimization problem is difficult in general. It is therefore a significant issue in information science to propose and develop methods for obtaining the best solution (or a better solution) of optimization problems. In order to obtain the best solution, a couple of algorithms tailored to the type of optimization problem have been formulated in information science, and these methods have yielded practical applications. Furthermore, since an optimization problem is to find the state where a real-valued function takes its minimum value, it can be regarded as the problem of obtaining the ground state of the corresponding Hamiltonian. Thus, if we can map an optimization problem to a well-defined Hamiltonian, we can use the knowledge and methodologies of physics. Indeed, in computational physics, generic and powerful algorithms which can be adopted for a wide range of applications have been proposed. One famous method is simulated annealing, which was proposed by Kirkpatrick et al. 1,2 In the simulated annealing, we introduce a temperature (thermal fluctuation) in the considered optimization problem. We can obtain a better solution of the optimization problem by decreasing the temperature gradually, since the thermal fluctuation facilitates transitions between states. It is guaranteed that we obtain the best solution if we decrease the temperature slowly enough. 3 The simulated annealing has thus been used in many cases because of its easy implementation and this guarantee.

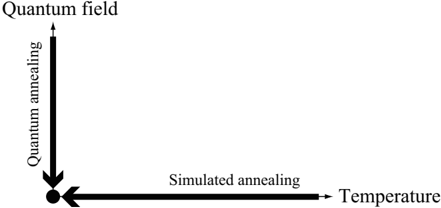

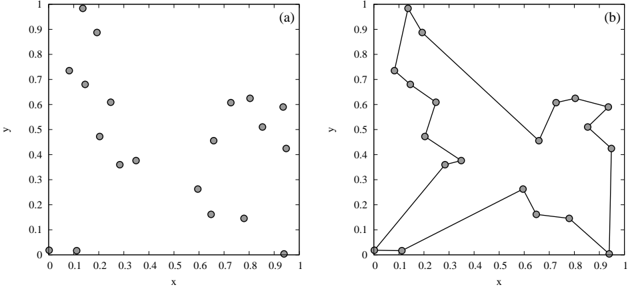

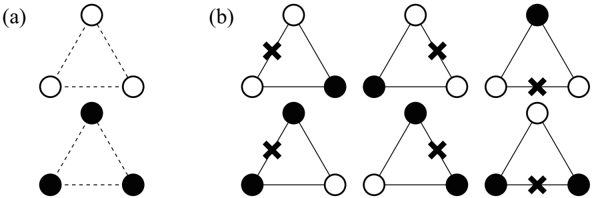

The quantum annealing was proposed as an alternative to the simulated annealing. 4-11 In the quantum annealing, we introduce a quantum field which is appropriate for the considered Hamiltonian. For instance, if the considered optimization problem can be mapped onto the Ising model, the simplest form of the quantum fluctuation is a transverse field. In the quantum annealing, we gradually decrease the quantum field (quantum fluctuation) instead of the temperature (thermal fluctuation). The efficiency of the quantum annealing has been demonstrated by a number of researchers, and it has been reported that in many cases a better solution can be obtained by the quantum annealing in comparison with the simulated annealing. Figure 1 shows a schematic picture of the simulated annealing and the quantum annealing. In optimization problems, our target is to obtain the stable state at zero temperature and zero quantum field, which is indicated by the solid circle in Fig. 1.

Recently, methods in which we decrease the temperature and the quantum field simultaneously have been proposed, and as a result we can obtain a better solution than with the simulated annealing and the simple quantum annealing. 12-14 Moreover, as another example of methods in which we use both thermal fluctuation and quantum fluctuation, a novel quantum annealing method with the Jarzynski equality 15,16 was also proposed, 17 which is based on nonequilibrium statistical physics.

Fig. 1. Schematic picture of the simulated annealing and the quantum annealing. Our purpose is to obtain the ground state at the point indicated by the solid circle.

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Diagram: Quantum vs. Simulated Annealing

### Components/Axes

* **Vertical Axis:** Labeled "Quantum field" with an arrow pointing upwards. The text "Quantum annealing" is written vertically alongside the arrow.

* **Horizontal Axis:** Labeled "Temperature" with an arrow pointing to the right. The text "Simulated annealing" is written horizontally alongside the arrow.

* **Origin:** A solid black circle marks the intersection of the two axes, that is, the point of zero temperature and zero quantum field.

### Interpretation

The diagram contrasts the two methods. The simulated annealing controls the temperature (thermal fluctuation), while the quantum annealing controls the quantum field (quantum fluctuation). In both methods the target is the ground state at the solid circle, where both the temperature and the quantum field vanish.

</details>

In this paper, we review the quantum annealing method, which is a generic and powerful tool for obtaining the best solution of optimization problems, from the viewpoints of statistical physics, condensed matter physics, and computational physics. The organization of this paper is as follows. In Sec. 2, we review the Ising model, which is a fundamental model of magnetic systems. The realization of the Ising model by nuclear magnetic resonance is also explained. In Sec. 3, we show a couple of implementation methods of the quantum annealing. In Sec. 4, we explain two optimization problems - the traveling salesman problem and the clustering problem. The quantum annealing based on the Monte Carlo method for the traveling salesman problem is also demonstrated. In Sec. 5, we review related topics of the quantum annealing - the Kibble-Zurek mechanism of the Ising spin chain and order by disorder in frustrated systems. In Sec. 6, we summarize this paper briefly and give some future perspectives of the quantum annealing.

## 2. Ising Model

In this section we introduce the Ising model which is a fundamental model in statistical physics. A century ago, the Ising model was proposed to explain cooperative nature in strongly correlated magnetic systems from a microscopic viewpoint. 18 The Hamiltonian of the Ising model is given by

$$\mathcal { H } _ { I s i n g } = - \sum _ { i , j } J _ { i j } \sigma _ { i } ^ { z } \sigma _ { j } ^ { z } - \sum _ { i = 1 } ^ { N } h _ { i } \sigma _ { i } ^ { z } , \quad \sigma _ { i } ^ { z } = \pm 1 ,$$

where the summation of the first term runs over all interactions on the defined graph and $N$ represents the number of spins. If the sign of $J_{ij}$ is positive/negative, the interaction is called a ferromagnetic/antiferromagnetic interaction. Spins which are connected by a ferromagnetic/antiferromagnetic interaction tend to point in the same/opposite direction. The second term of the Hamiltonian denotes the site-dependent longitudinal magnetic fields. Although the Ising model is quite simple, it exhibits inherently rich properties, e.g. phase transitions and dynamical behavior such as melting processes and slow relaxation. For instance, the ferromagnetic Ising model with homogeneous interaction ($J_{ij} = J$ for $\forall i, j$) and no external magnetic fields ($h_i = 0$ for $\forall i$) on the square lattice exhibits a second-order phase transition, whereas no phase transition occurs in the Ising model on the one-dimensional lattice. Onsager first succeeded in obtaining explicitly the free energy of the Ising model without external magnetic field on the square lattice. 19 After that, a couple of calculation methods were proposed. Furthermore, these calculation methods have been steadily improved, and the new techniques developed in these methods have been applied to other more complicated problems. Since the Ising model is quite simple, we can easily generalize it in diverse ways such as the Blume-Capel model, 20,21 the clock model, 22,23 and the Potts model. 24,25 By analyzing these models, the relation between the nature of the phase transition and the symmetry which breaks at the transition point has been investigated. It is thus not too much to say that the Ising model has opened up a new horizon for statistical physics.
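As a concrete reading of the Ising Hamiltonian defined at the start of this section, the following minimal sketch evaluates the energy of one spin configuration. The function name `ising_energy`, the dictionary layout of the couplings, and the 4-site ring are illustrative assumptions, not part of the original text.

```python
import numpy as np

def ising_energy(spins, couplings, fields):
    """Energy of a classical Ising configuration.

    spins     : sequence of +1/-1 values, one per site
    couplings : dict mapping a bond (i, j) to J_ij (each bond listed once)
    fields    : array of longitudinal fields h_i
    """
    spins = np.asarray(spins)
    interaction = -sum(J * spins[i] * spins[j] for (i, j), J in couplings.items())
    zeeman = -np.dot(fields, spins)
    return float(interaction + zeeman)

# Hypothetical example: a ferromagnetic 4-site ring with J = 1 and no field.
ring = {(0, 1): 1.0, (1, 2): 1.0, (2, 3): 1.0, (3, 0): 1.0}
h = np.zeros(4)
e_aligned = ising_energy([1, 1, 1, 1], ring, h)      # every bond satisfied
e_staggered = ising_energy([1, -1, 1, -1], ring, h)  # every bond violated
```

With ferromagnetic couplings the aligned configuration has the lower energy, in line with the statement that ferromagnetically coupled spins tend to point in the same direction.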

The Ising model can be adopted not only for magnetic systems but also for systems in a wide area of science such as information science. Optimization problems are one of the important topics in information science. As we mention in Sec. 4, optimization problems can be mapped onto the Ising model and its generalized models in many cases. Methods which were developed in statistical physics have therefore often been used for optimization problems. In Sec. 2.1, we show a couple of magnetic systems which can be well represented by the Ising model. In Sec. 2.2, we review how to create the Ising model by the Nuclear Magnetic Resonance (NMR) technique as an example of experimental realization of the Ising model.

## 2.1. Magnetic Systems

In many cases, the Hamiltonian of magnetic systems without external magnetic field is given by

$$\begin{array} { r l } { \hat { \mathcal { H } } = - \sum _ { i , j } J _ { i j } \hat { \sigma } _ { i } \cdot \hat { \sigma } _ { j } } \\ { = - \sum _ { i , j } J _ { i j } \left ( \hat { \sigma } _ { i } ^ { x } \cdot \hat { \sigma } _ { j } ^ { x } + \hat { \sigma } _ { i } ^ { y } \cdot \hat { \sigma } _ { j } ^ { y } + \hat { \sigma } _ { i } ^ { z } \cdot \hat { \sigma } _ { j } ^ { z } \right ) , } \end{array}$$

where $\hat{\sigma}_i^{\alpha}$ denotes the $\alpha$-component of the Pauli matrix at the $i$-th site. The form of this interaction is called the Heisenberg interaction. The definitions of the Pauli matrices are

$$\hat { \sigma } ^ { x } \colon = \begin{pmatrix} 0 & 1 \\ 1 & 0 \end{pmatrix} , \quad \hat { \sigma } ^ { y } \colon = \begin{pmatrix} 0 & - i \\ i & 0 \end{pmatrix} , \quad \hat { \sigma } ^ { z } \colon = \begin{pmatrix} 1 & 0 \\ 0 & - 1 \end{pmatrix} , \quad ( 3 )$$

where the bases are defined by

$$| \uparrow \rangle \colon = { 1 \choose 0 } \, , \quad | \downarrow \rangle \colon = { 0 \choose 1 } \, .$$

In this case, magnetic interactions are isotropic. However, they become anisotropic depending on the surrounding ions in real magnetic materials. In general, the Hamiltonian of magnetic systems should be replaced by

$$\hat { \mathcal { H } } = - \sum _ { i , j } J _ { i j } \left ( c _ { x } \hat { \sigma } _ { i } ^ { x } \hat { \sigma } _ { j } ^ { x } + c _ { y } \hat { \sigma } _ { i } ^ { y } \hat { \sigma } _ { j } ^ { y } + c _ { z } \hat { \sigma } _ { i } ^ { z } \hat { \sigma } _ { j } ^ { z } \right ) .$$

When $|c_x|, |c_y| > |c_z|$, the $xy$-plane is the easy plane and the Hamiltonian becomes an XY-like Hamiltonian. On the contrary, when $|c_z| > |c_x|, |c_y|$, the $z$-axis is the easy axis and the Hamiltonian becomes an Ising-like Hamiltonian. Such anisotropy comes from crystal structure, spin-orbit coupling, and dipole-dipole coupling. Moreover, even if there is almost no anisotropy in the magnetic interactions, magnetic systems can be regarded as the Ising model when the number of electrons in the magnetic ion is odd and the total spin is half-integer. In this case, doubly degenerate states exist because of the Kramers theorem. These states are called the Kramers doublet. When the energy difference $\Delta E$ between the ground states and the first-excited states is large enough, these doubly degenerate ground states can be well represented by $S = 1/2$ Ising spins. Table 1 shows examples of the magnetic materials which can be well represented by the Ising model on the one-dimensional chain, the two-dimensional square lattice, and the three-dimensional cubic lattice.

Table 1. Examples of magnetic materials which can be represented by the Ising model on chain (one-dimension), square lattice (two-dimension), and cubic lattice (three-dimension).

| Material | Spatial dimension | Total spin | Type of interaction | $J/k_{\mathrm{B}}$ | References |
|---|---|---|---|---|---|
| K$_3$Fe(CN)$_6$ | One (chain) | 1/2 | Antiferromagnetic | $-0.23$ K | 26-28 |
| CsCoCl$_3$ | One (chain) | 1/2 | Antiferromagnetic | $-100$ K | 29,30 |
| Dy(C$_2$H$_5$SO$_4$)$_2$ · 9H$_2$O | One (chain) | 1/2 | Ferromagnetic | $0.2$ K | 31-33 |
| CoCl$_2$ · 2NC$_5$H$_5$ | One (chain) | 1/2 | Ferromagnetic | $9.5$ K | 34,35 |
| CoCs$_3$Br$_5$ | Two (square) | 1/2 | Antiferromagnetic | $-0.23$ K | 36-38 |
| Co(HCOO)$_2$ · 2H$_2$O | Two (square) | 1/2 | Antiferromagnetic | $-4.3$ K | 39-42 |
| Rb$_2$CoF$_4$ | Two (square) | 1/2 | Antiferromagnetic | $-91$ K | 43,44 |
| FeCl$_2$ | Two (square) | 1 | Ferromagnetic | $3.4$ K | 45,46 |
| DyPO$_4$ | Three (cubic) | 1/2 | Antiferromagnetic | $-2.5$ K | 47-50 |
| Dy$_3$Al$_5$O$_{12}$ | Three (cubic) | 1/2 | Antiferromagnetic | $-1.85$ K | 51-53 |
| CoRb$_3$Cl$_5$ | Three (cubic) | 1/2 | Antiferromagnetic | $-0.511$ K | 54,55 |
| FeF$_2$ | Three (cubic) | 2 | Antiferromagnetic | $-2.69$ K | 56-59 |

## 2.2. Nuclear Magnetic Resonance

In condensed matter physics, Nuclear Magnetic Resonance (NMR) has been used for determining the structure of organic compounds and for analyzing the state of materials by using the resonance induced by an electromagnetic wave. NMR can create the Ising model with transverse fields, which is expected to become an element of quantum information processing. In this processing, we use molecules whose coherence times are long compared with typical gate operations. Indeed, a couple of molecules which have nuclear spins were used for the demonstration of quantum computing. 60-75 In this section we explain how to create the Ising model by NMR.

The setup of the NMR spectrometer as a tool of quantum computing is as follows. We first put molecules which contain nuclear spins under the strong magnetic field $B_0$. Next we apply a radio frequency (rf) magnetic field with frequency $\omega^{(rf)}$ which is perpendicular to the strong magnetic field $B_0$. For simplicity, we here consider a molecule which contains two spins. We also assume that the considered molecule can be well described by the Heisenberg Hamiltonian. Then the Hamiltonian of this system is given by

$$\begin{array} { r l } & { \hat { \mathcal { H } } = \hat { \mathcal { H } } _ { m o l } + \hat { \mathcal { H } } _ { 1 } ^ { ( r f ) } + \hat { \mathcal { H } } _ { 2 } ^ { ( r f ) } , } & { ( 6 ) } \end{array}$$

where $\hat{\mathcal{H}}_{mol}$, $\hat{\mathcal{H}}_1^{(rf)}$, and $\hat{\mathcal{H}}_2^{(rf)}$ are defined by

$$\hat{\mathcal{H}}_{mol} := - h_1 \hat{\sigma}_1^z - h_2 \hat{\sigma}_2^z - J ( \hat{\sigma}_1^x \cdot \hat{\sigma}_2^x + \hat{\sigma}_1^y \cdot \hat{\sigma}_2^y + \hat{\sigma}_1^z \cdot \hat{\sigma}_2^z ) , \quad (7)$$

$$\hat{\mathcal{H}}_1^{(rf)} := - \Gamma_1 \cos ( \omega^{(rf)} t - \phi_1 ) ( \hat{\sigma}_1^x + \gamma' \hat{\sigma}_2^x ) , \quad (8)$$

$$\hat{\mathcal{H}}_2^{(rf)} := - \Gamma_2 \cos ( \omega^{(rf)} t - \phi_2 ) ( \gamma'^{-1} \hat{\sigma}_1^x + \hat{\sigma}_2^x ) , \quad (9)$$

respectively. We take the natural unit in which $\hbar = 1$. The values of $\phi_1$ and $\phi_2$ are the phases at the time $t = 0$ of the first spin and that of the second spin, respectively. The quantities $h_i$ are defined by $h_i := \gamma_i B_0$, where $\gamma_i$ denotes the gyromagnetic ratio of the $i$-th spin ($i = 1, 2$). The values of $h_1$ and $h_2$ represent energy differences between $|\uparrow\rangle$ and $|\downarrow\rangle$ of the first spin and the second spin, respectively. The coefficients $\Gamma_1$ and $\Gamma_2$ in $\hat{\mathcal{H}}_1^{(rf)}$ and $\hat{\mathcal{H}}_2^{(rf)}$ are the effective amplitudes of the ac magnetic field, whose definitions are $\Gamma_i := \gamma_i B_{ac}$, where $B_{ac}$ is the amplitude of the ac magnetic field. The value of $\gamma'$ is defined by the ratio of the gyromagnetic ratios $\gamma' := \gamma_2 / \gamma_1$.

We define the following unitary transformation:

$$\hat{U}^{(R)} := e^{- i h_1 \hat{\sigma}_1^z t} \cdot e^{- i h_2 \hat{\sigma}_2^z t} . \quad (10)$$

We can change from the laboratory frame to a frame rotating with $h_i$ around the $z$-axis by using the above unitary transformation. The dynamics of a density matrix can be calculated by

$$i \frac{d \hat{\rho}}{d t} = [ \hat{\mathcal{H}} , \hat{\rho} ] . \quad (11)$$

The density matrix on the rotating frame is given by

$$\hat { \rho } ^ { ( R ) } \colon = \hat { U } ^ { ( R ) } \hat { \rho } \hat { U } ^ { ( R ) \dagger } . \quad ( 1 2 )$$

For the equation of motion on the rotating frame to take the same form as Eq. (11), the Hamiltonian on the rotating frame should be

$$\hat { \mathcal { H } } ^ { ( R ) } = \hat { U } ^ { ( R ) } \hat { \mathcal { H } } \hat { U } ^ { ( R ) \dagger } - i \hat { U } ^ { ( R ) } \frac { d \hat { U } ^ { ( R ) \dagger } } { d t } .$$
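The rotating-frame Hamiltonian above follows from a short calculation, sketched here as a reasoning step that the text leaves implicit. Differentiating Eq. (12) and inserting Eq. (11) gives

$$i \frac{d \hat{\rho}^{(R)}}{d t} = i \frac{d \hat{U}^{(R)}}{d t} \hat{U}^{(R)\dagger} \hat{\rho}^{(R)} + [ \hat{U}^{(R)} \hat{\mathcal{H}} \hat{U}^{(R)\dagger} , \hat{\rho}^{(R)} ] + i \hat{\rho}^{(R)} \hat{U}^{(R)} \frac{d \hat{U}^{(R)\dagger}}{d t} .$$

Since $\hat{U}^{(R)} \hat{U}^{(R)\dagger} = 1$, we have $\frac{d \hat{U}^{(R)}}{d t} \hat{U}^{(R)\dagger} = - \hat{U}^{(R)} \frac{d \hat{U}^{(R)\dagger}}{d t}$, so that the first and third terms combine into a commutator:

$$i \frac{d \hat{\rho}^{(R)}}{d t} = \left[ \hat{U}^{(R)} \hat{\mathcal{H}} \hat{U}^{(R)\dagger} - i \hat{U}^{(R)} \frac{d \hat{U}^{(R)\dagger}}{d t} , \hat{\rho}^{(R)} \right] = [ \hat{\mathcal{H}}^{(R)} , \hat{\rho}^{(R)} ] .$$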

Here we decompose the Hamiltonian on the rotating frame as

$$\begin{array} { r l } & { \hat { \mathcal { H } } ^ { ( R ) } = \hat { \mathcal { H } } _ { m o l } ^ { ( R ) } + \hat { \mathcal { H } } _ { 1 } ^ { ( R ) ( r f ) } + \hat { \mathcal { H } } _ { 2 } ^ { ( R ) ( r f ) } , } & { ( 1 4 ) } \end{array}$$

where the three terms are defined by

$$\hat{\mathcal{H}}_{mol}^{(R)} := \hat{U}^{(R)} \hat{\mathcal{H}}_{mol} \hat{U}^{(R)\dagger} - i \hat{U}^{(R)} \frac{d \hat{U}^{(R)\dagger}}{d t} , \quad (15)$$

$$\hat{\mathcal{H}}_1^{(R)(rf)} := \hat{U}^{(R)} \hat{\mathcal{H}}_1^{(rf)} \hat{U}^{(R)\dagger} , \quad (16)$$

$$\hat{\mathcal{H}}_2^{(R)(rf)} := \hat{U}^{(R)} \hat{\mathcal{H}}_2^{(rf)} \hat{U}^{(R)\dagger} . \quad (17)$$

The intramolecular magnetic interaction Hamiltonian on the rotating frame $\hat{\mathcal{H}}_{mol}^{(R)}$ can be calculated as

$$\hat { \mathcal { H } } _ { m o l } ^ { ( R ) } = J \begin{pmatrix} 0 & 0 & 0 & 0 \\ 0 & 0 & e ^ { i ( h _ { 2 } - h _ { 1 } ) t } & 0 \\ 0 & e ^ { - i ( h _ { 2 } - h _ { 1 } ) t } & 0 & 0 \\ 0 & 0 & 0 & 0 \end{pmatrix} - J \hat { \sigma } _ { 1 } ^ { z } \hat { \sigma } _ { 2 } ^ { z } \simeq - J \hat { \sigma } _ { 1 } ^ { z } \hat { \sigma } _ { 2 } ^ { z } .$$

The approximation is valid when $|h_2 - h_1| \tau \gg 1$, where $\tau$ is a characteristic time scale, since the exponential terms are averaged to vanish. The radio frequency magnetic field Hamiltonian on the rotating frame $\hat{\mathcal{H}}_1^{(R)(rf)}$ under the resonance condition $\omega^{(rf)} = h_i$ can be calculated as

$$\hat{\mathcal{H}}_1^{(R)(rf)} = - \Gamma_1 \left[ \begin{pmatrix} 0 & 0 & e^{-i\phi_1} & 0 \\ 0 & 0 & 0 & e^{-i\phi_1} \\ e^{i\phi_1} & 0 & 0 & 0 \\ 0 & e^{i\phi_1} & 0 & 0 \end{pmatrix} + \gamma' \begin{pmatrix} 0 & a_{--} & 0 & 0 \\ a_{++} & 0 & 0 & 0 \\ 0 & 0 & 0 & a_{--} \\ 0 & 0 & a_{++} & 0 \end{pmatrix} \right] ,$$

where $a_{--} := e^{-i[(h_2 - h_1)t + \phi_1]} + e^{-i[(h_1 + h_2)t - \phi_1]}$ and $a_{++} := e^{i[(h_2 - h_1)t + \phi_1]} + e^{i[(h_1 + h_2)t - \phi_1]}$. The second term of $\hat{\mathcal{H}}_1^{(R)(rf)}$ vanishes when $|h_1 + h_2| \tau, |h_2 - h_1| \tau \gg 1$. Then under these conditions, the Hamiltonian becomes

$$\hat{\mathcal{H}}_1^{(R)(rf)} = - \Gamma_1 ( \cos \phi_1 \hat{\sigma}_1^x + \sin \phi_1 \hat{\sigma}_1^y ) .$$

In the same way, the Hamiltonian $\hat{\mathcal{H}}_2^{(R)(rf)}$ can be calculated as

$$\begin{array} { r l } & { \hat { \mathcal { H } } _ { 2 } ^ { ( R ) ( r f ) } = - \Gamma _ { 2 } ( \cos \phi _ { 2 } \hat { \sigma } _ { 2 } ^ { x } + \sin \phi _ { 2 } \hat { \sigma } _ { 2 } ^ { y } ) . \quad ( 2 0 ) } \end{array}$$

By taking the rotation operators on the individual sites, we can rewrite the Hamiltonians $\hat{\mathcal{H}}_1^{(R)(rf)}$ and $\hat{\mathcal{H}}_2^{(R)(rf)}$ by only the $x$-component of the Pauli matrix:

$$e^{i \phi_1 \hat{\sigma}_1^z} \hat{\mathcal{H}}_1^{(R)(rf)} e^{- i \phi_1 \hat{\sigma}_1^z} = - \Gamma_1 \hat{\sigma}_1^x , \quad (21)$$

$$e^{i \phi_2 \hat{\sigma}_2^z} \hat{\mathcal{H}}_2^{(R)(rf)} e^{- i \phi_2 \hat{\sigma}_2^z} = - \Gamma_2 \hat{\sigma}_2^x . \quad (22)$$

Then, the total Hamiltonian can be represented by the Ising model with site-dependent transverse fields:

$$\hat{\mathcal{H}}^{(R)} = - J \hat{\sigma}_1^z \hat{\sigma}_2^z - \Gamma_1 \hat{\sigma}_1^x - \Gamma_2 \hat{\sigma}_2^x . \quad (23)$$

It should be noted that the above procedure is not restricted to two-spin systems. The NMR technique can thus create the Ising model with site-dependent transverse fields in general.
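To make the resulting two-spin Hamiltonian tangible, the sketch below builds the $4 \times 4$ matrix $-J \hat{\sigma}_1^z \hat{\sigma}_2^z - \Gamma_1 \hat{\sigma}_1^x - \Gamma_2 \hat{\sigma}_2^x$ with Kronecker products and diagonalizes it. The function name and the parameter values are illustrative assumptions and are not taken from any experiment.

```python
import numpy as np

# Pauli matrices in the basis |up> = (1, 0), |down> = (0, 1).
SX = np.array([[0.0, 1.0], [1.0, 0.0]])
SZ = np.array([[1.0, 0.0], [0.0, -1.0]])
ID = np.eye(2)

def two_spin_hamiltonian(J, gamma1, gamma2):
    """Return the 4x4 matrix -J sz1 sz2 - gamma1 sx1 - gamma2 sx2."""
    zz = np.kron(SZ, SZ)   # sigma^z on both sites
    x1 = np.kron(SX, ID)   # sigma^x acting on the first spin only
    x2 = np.kron(ID, SX)   # sigma^x acting on the second spin only
    return -J * zz - gamma1 * x1 - gamma2 * x2

# Illustrative values (assumptions): J = 1, Gamma_1 = 0.5, Gamma_2 = 0.3.
H = two_spin_hamiltonian(J=1.0, gamma1=0.5, gamma2=0.3)
energies = np.linalg.eigvalsh(H)            # eigenvalues in ascending order

# Without transverse fields the matrix is diagonal (classical Ising pair).
H_classical = two_spin_hamiltonian(J=1.0, gamma1=0.0, gamma2=0.0)
classical_energies = np.linalg.eigvalsh(H_classical)
```

In this small example the transverse fields lift the twofold degeneracy of the classical ground state and lower the ground-state energy below $-J$.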

## 3. Implementation Methods of Quantum Annealing

As stated in Sec. 1, the quantum annealing method is expected to be a powerful tool to obtain the best solution of optimization problems in a generic way. The quantum annealing methods can be categorized according to how they treat time-development. One category is stochastic methods such as the Monte Carlo method, which will be shown in Sec. 3.1. The other is deterministic methods such as the mean-field type method and real-time dynamics. We will explain the mean-field type method and the method based on real-time dynamics in Secs. 3.2 and 3.3. Although time-development is introduced in an artificial way in the Monte Carlo method and the mean-field type method, the merit of these methods is that they can treat large-scale systems. The methods based on the Schrödinger equation can follow the real-time dynamics which occurs in real experimental systems. However, these methods can be used only for very small systems and/or limited lattice geometries because of limited computer resources and the characteristics of the algorithms. Each method has its own strengths and limitations. When we use the quantum annealing, we therefore have to choose the implementation method according to what we want to know. In this section, we explain three types of theoretical methods for the quantum annealing and some experimental results which relate to the quantum annealing.

## 3.1. Monte Carlo Method

In this section we review the Monte Carlo method as an implementation method of the quantum annealing. In physics, the Monte Carlo method is widely adopted for the analysis of equilibrium properties of strongly correlated systems such as spin systems, electronic systems, and bosonic systems. Originally the Monte Carlo method was used in order to calculate the integral of a given function. The simplest example is the 'calculation of $\pi$'. Suppose we consider a square in which $-1 \leq x, y \leq 1$ and a circle whose radius is unity and whose center is $(x, y) = (0, 0)$. We generate a pair of uniform random numbers ($-1 \leq x_i, y_i \leq 1$) many times and calculate the following quantity:

$$\frac{\text{number of steps when } \sqrt{x_i^2 + y_i^2} \leq 1 \text{ is satisfied}}{\text{number of steps}} . \quad (24)$$

Hereafter we refer to the denominator as the Monte Carlo step. The quantity should converge to $\pi/4$ in the limit of infinite Monte Carlo step. This is a pedagogical example of the Monte Carlo method. We first explain the implementation and the theoretical background of the Monte Carlo method which is used in physics.
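The calculation of $\pi$ described above can be sketched in a few lines. The function name `estimate_pi`, the seed, and the number of Monte Carlo steps are arbitrary choices made for illustration.

```python
import numpy as np

def estimate_pi(n_steps, seed=0):
    """Estimate pi from the fraction of random points inside the unit circle."""
    rng = np.random.default_rng(seed)
    # Uniform random points in the square -1 <= x, y <= 1.
    x = rng.uniform(-1.0, 1.0, size=n_steps)
    y = rng.uniform(-1.0, 1.0, size=n_steps)
    # Count the steps for which sqrt(x^2 + y^2) <= 1 holds.
    inside = np.count_nonzero(x * x + y * y <= 1.0)
    # The ratio inside / n_steps approaches pi / 4.
    return 4.0 * inside / n_steps

pi_estimate = estimate_pi(200_000)
```

The statistical error of such an estimate decreases only as the inverse square root of the number of Monte Carlo steps, which is why a large number of steps is needed even for a few correct digits.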

In equilibrium statistical physics, we would like to know the equilibrium value at given temperature T . The equilibrium value of the physical quantity which is represented by the operator O is defined as

$$\langle O \rangle _ { T } ^ { ( e q ) } \colon = \frac { T r \, O e ^ { - \beta \mathcal { H } } } { T r \, e ^ { - \beta \mathcal { H } } } , \quad \quad ( 2 5 )$$

where Tr means the trace of a matrix and $\beta$ denotes the inverse temperature $\beta = (k_{\mathrm{B}} T)^{-1}$. Hereafter we set the Boltzmann constant $k_{\mathrm{B}}$ to be unity. For small systems, we can obtain the equilibrium value by taking the sum explicitly. In contrast, it is difficult to obtain the equilibrium value for large systems except for a few solvable models. In order to evaluate the equilibrium value of a physical quantity, we therefore often use the Monte Carlo method.

We consider the Ising model given by

$$\mathcal { H } _ { I s i n g } = - \sum _ { \langle i , j \rangle } J _ { i j } \sigma _ { i } ^ { z } \sigma _ { j } ^ { z } - \sum _ { i = 1 } ^ { N } h _ { i } \sigma _ { i } ^ { z } , \quad \sigma _ { i } ^ { z } = \pm 1 .$$

The Ising model without transverse field can be expressed as a diagonal matrix by using 'trivial' bit representation |↑〉 and |↓〉 which were introduced in Sec. 2. Then, in this case, we can easily calculate the eigenenergy once the eigenstate is specified.
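For small systems the equilibrium value of Eq. (25) can be evaluated directly, because the Hamiltonian is diagonal and the trace reduces to a sum over all $2^N$ spin configurations. The sketch below illustrates this under assumed names (`exact_average`, `pair_energy`, `pair_correlation`) for a hypothetical two-spin example.

```python
import itertools
import numpy as np

def exact_average(observable, energy, n_spins, beta):
    """Thermal average obtained by summing over all 2^N spin configurations."""
    numerator = 0.0
    partition = 0.0
    for config in itertools.product((1, -1), repeat=n_spins):
        weight = np.exp(-beta * energy(config))   # Boltzmann weight
        numerator += observable(config) * weight
        partition += weight
    return numerator / partition

# Hypothetical test case: two spins coupled by J = 1, no magnetic field.
def pair_energy(s):
    return -1.0 * s[0] * s[1]

def pair_correlation(s):
    return s[0] * s[1]

beta = 0.7
corr = exact_average(pair_correlation, pair_energy, n_spins=2, beta=beta)
```

For this two-spin case the sum can be done by hand and gives $\tanh(\beta J)$, which provides a simple check. The cost of the enumeration grows as $2^N$, which is the reason why sampling methods are needed for large systems.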

We can use the Monte Carlo method for obtaining the equilibrium value defined by Eq. (25) in the same way as in the calculation of $\pi$:

$$\frac{\sum_{\Sigma} O(\Sigma) e^{-\beta E(\Sigma)}}{\sum_{\Sigma} e^{-\beta E(\Sigma)}} \to \langle O \rangle_T^{(eq)} , \quad (27)$$

where $O(\Sigma)$ and $E(\Sigma)$ denote the physical value of $O$ and the eigenenergy of the eigenstate $\Sigma$, respectively. Here the eigenstate $\Sigma$ is generated by uniform random numbers and $\sum_{\Sigma} 1$ is equal to the Monte Carlo step. In the limit of infinite Monte Carlo step, the LHS of Eq. (27) should converge to the equilibrium value. Equilibrium statistical physics says that the probability distribution at the equilibrium state is described by the Boltzmann distribution, which is proportional to $e^{-\beta E(\Sigma)}$. In this case, since we know the form of the probability distribution, it is better to generate states according to the Boltzmann distribution instead of using uniform random numbers. This scheme is called importance sampling. When we use the importance sampling, we can obtain the equilibrium value as follows:

$$\frac{\sum_{\Sigma} O(\Sigma)}{\sum_{\Sigma} 1} \rightarrow \langle O \rangle_T^{(eq)} . \quad (28)$$

In order to generate a state according to the Boltzmann distribution, we use the Markov chain Monte Carlo method. Let $P(\Sigma_a, t)$ be the probability of the $a$-th state at time $t$. In this method, the time-evolution of the probability distribution is given by the master equation:

$$P ( \Sigma _ { a } , t + \Delta t ) = \left [ \sum _ { b \neq a } P ( \Sigma _ { b } , t ) w ( \Sigma _ { a } | \Sigma _ { b } ) + P ( \Sigma _ { a } , t ) w ( \Sigma _ { a } | \Sigma _ { a } ) \right ] \Delta t ,$$

where $w(\Sigma_a | \Sigma_b)$ represents the transition probability from the $b$-th state to the $a$-th state in unit time. The transition probability $w(\Sigma_a | \Sigma_b)$ obeys

$$\sum _ { \Sigma _ { a } } w ( \Sigma _ { a } | \Sigma _ { b } ) = 1 \quad ( \forall \, \Sigma _ { b } ) .$$

For convenience, let $P(t)$ be a vector-representation of the probability distribution $\{ P(\Sigma_a, t) \}$. Then the master equation can be represented by

$$P(t + \Delta t) = \mathcal{L} P(t) , \quad (31)$$

where $\mathcal{L}$ is the transition matrix whose elements are defined as

$$\mathcal{L}_{ba} := w(\Sigma_b | \Sigma_a) \Delta t \quad (b \neq a) , \quad (32)$$

$$\mathcal{L}_{aa} := 1 - \sum_{b \neq a} \mathcal{L}_{ba} = 1 - \sum_{b \neq a} w(\Sigma_b | \Sigma_a) \Delta t . \quad (33)$$

Here the matrix $\mathcal{L}$ is a non-negative matrix and does not depend on time. This time-evolution is therefore Markovian.

If the transition matrix $\mathcal{L}$ is prepared appropriately, that is, if it satisfies the detailed balance condition and ergodicity, we can obtain the equilibrium probability distribution in the limit of infinite Monte Carlo step regardless of the choice of the initial state because of the Perron-Frobenius theorem.
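The convergence to the equilibrium distribution can be checked numerically on a very small system. The sketch below builds the transition matrix for two Ising spins, applies $P(t + \Delta t) = \mathcal{L} P(t)$ repeatedly, and compares the result with the Boltzmann distribution. The single-spin-flip Metropolis acceptance rule, the parameter values, and all names are assumptions made for this illustration.

```python
import itertools
import numpy as np

def metropolis_transition_matrix(energies, states, beta):
    """Column-stochastic matrix with L[b, a] = probability of moving a -> b."""
    n_states = len(states)
    n_spins = len(states[0])
    index = {s: k for k, s in enumerate(states)}
    L = np.zeros((n_states, n_states))
    for a, s in enumerate(states):
        for i in range(n_spins):
            flipped = list(s)
            flipped[i] = -flipped[i]
            b = index[tuple(flipped)]
            accept = min(1.0, np.exp(-beta * (energies[b] - energies[a])))
            L[b, a] += accept / n_spins      # choose spin i, then accept the flip
        L[a, a] = 1.0 - L[:, a].sum()        # diagonal fixed by normalization
    return L

# Illustrative parameters (assumptions): two spins, J = 1, uniform field h = 0.3.
J, h, beta = 1.0, 0.3, 0.8
states = list(itertools.product((1, -1), repeat=2))
energies = np.array([-J * s[0] * s[1] - h * (s[0] + s[1]) for s in states])
L = metropolis_transition_matrix(energies, states, beta)

P = np.array([0.0, 1.0, 0.0, 0.0])           # arbitrary initial distribution
for _ in range(500):
    P = L @ P                                # one step of the master equation

boltzmann = np.exp(-beta * energies)
boltzmann /= boltzmann.sum()
```

After the iteration, `P` agrees with `boltzmann` to numerical precision, and starting from a different initial vector gives the same limit.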

We can perform the Monte Carlo method easily by the following procedure.

- Step 1 We prepare an arbitrary initial state.

- Step 2 We choose a spin randomly.

- Step 3 We calculate the molecular field at the chosen site in Step 2. The molecular field at the chosen site i is defined as

$$h _ { i } ^ { ( e f f ) } \colon = \sum _ { j } { ^ { \prime } J _ { i j } \sigma _ { j } ^ { z } + h _ { i } } ,$$

where the summation is taken over the nearest neighbor sites of the i -th site.

- Step 4 We flip the spin chosen in Step 2 with a probability given by the transition probability w , whose definition is discussed below.

- Step 5 We repeat Step 2 to Step 4 until physical quantities such as the magnetization converge.

In this Monte Carlo method, we only update the chosen single spin, and thus we refer to this method as the single-spin-flip method. There is freedom in how to define w (Σ a | Σ b ) in Step 4. Here we explain two well-known choices of w (Σ a | Σ b ). The transition probability in the heat-bath method is given by

$$w _ { H B } ( \sigma _ { i } ^ { z } \rightarrow - \sigma _ { i } ^ { z } ) = \frac { e ^ { - \beta h _ { i } ^ { ( e f f ) } \sigma _ { i } ^ { z } } } { 2 \cosh ( \beta h _ { i } ^ { ( e f f ) } ) } .$$

Transition probability in the Metropolis method is given by

$$w _ { \text {MP} } ( \sigma _ { i } ^ { z } \to - \sigma _ { i } ^ { z } ) = \begin{cases} 1 & ( h _ { i } ^ { ( e f f ) } \sigma _ { i } ^ { z } < 0 ) \\ e ^ { - 2 \beta h _ { i } ^ { ( e f f ) } \sigma _ { i } ^ { z } } & ( h _ { i } ^ { ( e f f ) } \sigma _ { i } ^ { z } \geq 0 ) \end{cases} .$$

Since both transition probabilities satisfy the detailed balance condition, the equilibrium state is obtained in the limit of infinitely many Monte Carlo steps (see footnote a). The choice of the transition probability matters in practice, since several methods are known to sample states more efficiently. 76-83
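The five steps and the two transition probabilities above can be sketched as follows. This is a minimal illustration, not a tuned implementation: the function names, the fixed number of updates used in place of a convergence check in Step 5, and the ferromagnetic ring used as a test system are assumptions of this example.

```python
import numpy as np

def flip_probability(h_eff, s, beta, rule="metropolis"):
    """Probability of flipping spin s (+1 or -1) in the molecular field h_eff."""
    x = h_eff * s
    if rule == "heat_bath":
        return np.exp(-beta * x) / (2.0 * np.cosh(beta * h_eff))
    # Metropolis rule
    return 1.0 if x < 0 else np.exp(-2.0 * beta * x)

def single_spin_flip_mc(J, h, beta, n_steps, rule="metropolis", seed=0):
    rng = np.random.default_rng(seed)
    n = len(h)
    s = rng.choice([-1, 1], size=n)            # Step 1: arbitrary initial state
    for _ in range(n_steps):                   # Step 5: fixed number of updates here
        i = rng.integers(n)                    # Step 2: choose a spin at random
        h_eff = J[i] @ s + h[i]                # Step 3: molecular field (J[i, i] = 0)
        if rng.random() < flip_probability(h_eff, s[i], beta, rule):
            s[i] = -s[i]                       # Step 4: flip with probability w
    return s

# Hypothetical example: ferromagnetic ring of 8 spins in a weak field
n = 8
J = np.zeros((n, n))
for i in range(n):
    J[i, (i + 1) % n] = J[(i + 1) % n, i] = 1.0
h = np.full(n, 0.1)
spins = single_spin_flip_mc(J, h, beta=1.0, n_steps=20_000)
print(spins, spins.mean())
```

For both rules the ratio of the flip probabilities of a spin and its reversed counterpart equals exp(-2β h_eff σ), which is the detailed balance condition for this single-spin update.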

So far we have considered the Monte Carlo method for systems with no off-diagonal matrix elements. In a precise mathematical sense, to perform the Monte Carlo method we only have to know how to choose the basis, or an appropriate transformation, that diagonalizes the given Hamiltonian. However, it is difficult to obtain equilibrium values of physical quantities of quantum systems, since in general we have to calculate the exponential of the given Hamiltonian, $e^{-\beta \hat{\mathcal{H}}}$. If we know all eigenvalues and the corresponding eigenvectors of the given Hamiltonian, we can easily calculate $e^{-\beta \hat{\mathcal{H}}}$ by the unitary transformation which diagonalizes the Hamiltonian $\hat{\mathcal{H}}$. In contrast, if we do not know all eigenvalues and eigenvectors, we have to calculate arbitrary powers $\hat{\mathcal{H}}^{m}$ of the Hamiltonian, since the matrix exponential is given by

$$e ^ { \hat { A } } = \sum _ { m = 0 } ^ { \infty } \frac { 1 } { m ! } \hat { A } ^ { m } .$$

It is difficult to calculate the matrix exponential in general. We therefore have to consider the following procedure in order to use the framework of the Monte Carlo method for quantum systems.

In many cases, the Hamiltonian of quantum systems can be represented as

$$\hat { \mathcal { H } } = \hat { \mathcal { H } } _ { c } + \hat { \mathcal { H } } _ { q } . \quad ( 3 8 )$$

a Recently, an algorithm which does not use the detailed balance condition was proposed. 76,77 It should be noted that the detailed balance condition is a sufficient condition, not a necessary one, for convergence to the equilibrium distribution. This novel algorithm is efficient for general spin systems.

b This assumption does not hold in general. However, in many cases we can prepare matrices which can be easily diagonalized by the decomposition $\hat{\mathcal{H}}_q = \sum_{\ell} \hat{\mathcal{H}}_q^{(\ell)}$.

Hereafter we refer to $\hat{\mathcal{H}}_c$ and $\hat{\mathcal{H}}_q$ as the classical Hamiltonian and the quantum Hamiltonian, respectively. The classical Hamiltonian $\hat{\mathcal{H}}_c$ is a diagonal matrix. Here we assume that $\hat{\mathcal{H}}_q$ can be easily diagonalized (see footnote b). This is a key of the quantum Monte Carlo method, as will be shown later. Since $\hat{\mathcal{H}}_c$ and $\hat{\mathcal{H}}_q$ do not commute in general, $[\hat{\mathcal{H}}_c, \hat{\mathcal{H}}_q] \neq 0$, we have $e^{-\beta \hat{\mathcal{H}}} \neq e^{-\beta \hat{\mathcal{H}}_c} e^{-\beta \hat{\mathcal{H}}_q}$. We therefore decompose the matrix exponential by introducing a large integer $m$,

$$\begin{array} { r l } { \exp \left ( - \frac { \beta } { m } \hat { \mathcal { H } } \right ) = \exp \left [ - \frac { \beta } { m } ( \hat { \mathcal { H } } _ { c } + \hat { \mathcal { H } } _ { q } ) \right ] } \\ { = \exp \left ( - \frac { \beta } { m } \hat { \mathcal { H } } _ { c } \right ) \exp \left ( - \frac { \beta } { m } \hat { \mathcal { H } } _ { q } \right ) + \mathcal { O } \left ( \left ( \frac { \beta } { m } \right ) ^ { 2 } \right ) . \, ( 3 9 ) } \end{array}$$

This is a concrete representation of the Trotter formula. 84 From now on, we refer to m as the Trotter number. By using this relation, we can perform the Monte Carlo method for quantum systems. To illustrate this, we consider the Ising model with longitudinal and transverse magnetic fields. The Hamiltonian is given by

$$\hat { \mathcal { H } } = - \sum _ { \langle i , j \rangle } J _ { i j } \hat { \sigma } _ { i } ^ { z } \hat { \sigma } _ { j } ^ { z } - \sum _ { i = 1 } ^ { N } h _ { i } ^ { z } \hat { \sigma } _ { i } ^ { z } - \Gamma \sum _ { i = 1 } ^ { N } \hat { \sigma } _ { i } ^ { x } = \hat { \mathcal { H } } _ { c } + \hat { \mathcal { H } } _ { q } ,$$

$$\hat { \mathcal { H } } _ { c } \colon = - \sum _ { ( i , j ) } J _ { i j } \hat { \sigma } _ { i } ^ { z } \hat { \sigma } _ { j } ^ { z } - \sum _ { i = 1 } ^ { N } h _ { i } ^ { z } \hat { \sigma } _ { i } ^ { z } , \quad \hat { \mathcal { H } } _ { q } \colon = - \Gamma \sum _ { i = 1 } ^ { N } \hat { \sigma } _ { i } ^ { x } , \quad ( 4 1 )$$

where optimization problems often can be expressed by this classical Hamiltonian ˆ H c . The partition function of the Hamiltonian at temperature T (= β -1 ) is given by

$$Z = \text {Tr} \, e ^ { - \beta \mathcal { H } } = \sum _ { \Sigma } \left \langle \Sigma \left | e ^ { - \beta ( \mathcal { H } _ { c } + \mathcal { H } _ { q } ) } \right | \Sigma \right \rangle .$$

Using Eq. (39) we obtain

$$\begin{aligned} Z = \lim _ { m \to \infty } \sum _ { \{ \Sigma _ { k } \} , \{ \Sigma ^ { \prime } _ { k } \} } & \left \langle \Sigma _ { 1 } \Big | e ^ { - \beta \mathcal { H } _ { c } / m } \Big | \Sigma ^ { \prime } _ { 1 } \right \rangle \left \langle \Sigma ^ { \prime } _ { 1 } \Big | e ^ { - \beta \mathcal { H } _ { q } / m } \Big | \Sigma _ { 2 } \right \rangle \\ & \times \left \langle \Sigma _ { 2 } \Big | e ^ { - \beta \mathcal { H } _ { c } / m } \Big | \Sigma ^ { \prime } _ { 2 } \right \rangle \left \langle \Sigma ^ { \prime } _ { 2 } \Big | e ^ { - \beta \mathcal { H } _ { q } / m } \Big | \Sigma _ { 3 } \right \rangle \\ & \times \cdots \\ & \times \left \langle \Sigma _ { m } \Big | e ^ { - \beta \mathcal { H } _ { c } / m } \Big | \Sigma ^ { \prime } _ { m } \right \rangle \left \langle \Sigma ^ { \prime } _ { m } \Big | e ^ { - \beta \mathcal { H } _ { q } / m } \Big | \Sigma _ { 1 } \right \rangle , \end{aligned}$$

where $| \Sigma_k \rangle$ represents the direct-product space of $N$ spins:

$$\left | \Sigma _ { k } \right \rangle \colon = \left | \sigma _ { 1 , k } ^ { z } \right \rangle \otimes \left | \sigma _ { 2 , k } ^ { z } \right \rangle \otimes \cdots \left | \sigma _ { N , k } ^ { z } \right \rangle ,$$

where the first and the second subscripts of $| \sigma^z_{i,k} \rangle$ indicate coordinates of the real space and the Trotter axis, respectively. Here $| \sigma^z_{i,k} \rangle = | \uparrow \rangle$ or $| \downarrow \rangle$. Equation (42) consists of two elements, $\langle \Sigma_k | e^{-\beta \hat{\mathcal{H}}_c / m} | \Sigma'_k \rangle$ and $\langle \Sigma'_k | e^{-\beta \hat{\mathcal{H}}_q / m} | \Sigma_{k+1} \rangle$. Since the classical Hamiltonian $\hat{\mathcal{H}}_c$ is a diagonal matrix, the former is easily calculated:

$$\begin{aligned} & \left \langle \Sigma _ { k } \Big | e ^ { - \beta \hat { \mathcal { H } } _ { c } / m } \Big | \Sigma ^ { \prime } _ { k } \right \rangle \\ & = \exp \left [ \frac { \beta } { m } \left ( \sum _ { \langle i , j \rangle } J _ { i j } \sigma _ { i , k } ^ { z } \sigma _ { j , k } ^ { z } + \sum _ { i = 1 } ^ { N } h _ { i } \sigma _ { i , k } ^ { z } \right ) \right ] \prod _ { i = 1 } ^ { N } \delta ( \sigma _ { i , k } ^ { z } , \sigma _ { i , k } ^ { \prime z } ) , \end{aligned}$$

where $\sigma^z_{i,k} = \pm 1$. On the other hand, the latter, $\langle \Sigma'_k | e^{-\beta \hat{\mathcal{H}}_q / m} | \Sigma_{k+1} \rangle$, is calculated as

$$\begin{aligned} & \left \langle \Sigma _ { k } ^ { \prime } \Big | e ^ { - \beta \hat { \mathcal { H } } _ { q } / m } \Big | \Sigma _ { k + 1 } \right \rangle \\ & = \left [ \frac { 1 } { 2 } \sinh \left ( \frac { 2 \beta \Gamma } { m } \right ) \right ] ^ { N / 2 } \exp \left [ \frac { 1 } { 2 } \ln \coth \left ( \frac { \beta \Gamma } { m } \right ) \sum _ { i = 1 } ^ { N } \sigma _ { i , k } ^ { \prime z } \sigma _ { i , k + 1 } ^ { z } \right ] . \end{aligned}$$

Then the partition function given by Eq. (43) can be represented as

$$Z = \lim _ { m \to \infty } A \sum _ { \{ \sigma _ { i , k } ^ { z } = \pm 1 \} } \exp \left \{ \sum _ { k = 1 } ^ { m } \left [ \sum _ { \langle i , j \rangle } \frac { \beta J _ { i j } } { m } \sigma _ { i , k } ^ { z } \sigma _ { j , k } ^ { z } + \sum _ { i = 1 } ^ { N } \frac { \beta h _ { i } } { m } \sigma _ { i , k } ^ { z } + \frac { 1 } { 2 } \ln \coth \left ( \frac { \beta \Gamma } { m } \right ) \sum _ { i = 1 } ^ { N } \sigma _ { i , k } ^ { z } \sigma _ { i , k + 1 } ^ { z } \right ] \right \} ,$$

where A is just a parameter which does not affect physical quantities. It should be noted that the partition function of the d -dimensional Ising model with transverse field ˆ H is equivalent to that of the ( d +1)-dimensional Ising model without transverse field H eff which is given by

$$\mathcal { H } _ { \text{eff} } = - \sum _ { \langle i , j \rangle } \sum _ { k = 1 } ^ { m } \frac { J _ { i j } } { m } \sigma _ { i , k } ^ { z } \sigma _ { j , k } ^ { z } - \sum _ { i = 1 } ^ { N } \sum _ { k = 1 } ^ { m } \frac { h _ { i } } { m } \sigma _ { i , k } ^ { z } - \frac { 1 } { 2 \beta } \ln \coth \left ( \frac { \beta \Gamma } { m } \right ) \sum _ { i = 1 } ^ { N } \sum _ { k = 1 } ^ { m } \sigma _ { i , k } ^ { z } \sigma _ { i , k + 1 } ^ { z } .$$

The coefficient of the third term of RHS is always negative, and thus the interaction along the Trotter axis is always ferromagnetic. This ferromagnetic interaction becomes strong as the value of Γ decreases. This is called the Suzuki-Trotter decomposition. 84,85
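The mapping can be checked numerically in the simplest case of a single spin (N = 1), for which the exact partition function is 2 cosh(β√(h² + Γ²)) and the classical counterpart is a ring of m Ising spins along the Trotter axis. The sketch below evaluates the classical partition function with a 2 × 2 transfer matrix; the parameter values and function names are assumptions chosen only for illustration.

```python
import numpy as np

def z_exact(h, gamma, beta):
    """Tr exp(-beta H) for H = -h sigma^z - gamma sigma^x."""
    return 2.0 * np.cosh(beta * np.hypot(h, gamma))

def z_trotter(h, gamma, beta, m):
    """Partition function of the equivalent classical ring of m spins."""
    a = beta * h / m                              # longitudinal field per Trotter slice
    g = beta * gamma / m
    K = 0.5 * np.log(1.0 / np.tanh(g))            # ferromagnetic coupling along the Trotter axis
    A = np.sqrt(0.5 * np.sinh(2.0 * g))           # prefactor per slice
    s = np.array([1.0, -1.0])
    # transfer matrix T[s, s'] = A exp(a s + K s s')
    T = A * np.exp(a * s[:, None] + K * np.outer(s, s))
    return np.trace(np.linalg.matrix_power(T, m))

h, gamma, beta = 0.3, 0.7, 2.0                    # hypothetical parameters
for m in (1, 4, 16, 64, 256):
    print(m, abs(z_trotter(h, gamma, beta, m) - z_exact(h, gamma, beta)))
```

The printed difference shrinks as the Trotter number m grows, in line with the limit m → ∞ in the partition function above.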

So far we have explained the Monte Carlo method as a tool for obtaining the equilibrium state. However, we can also use this method to investigate stochastic dynamics of strongly correlated systems, since the Monte Carlo method is originally based on the master equation. In terms of optimization problems, our purpose is to obtain the ground state of the given Hamiltonian. We therefore decrease the transverse field gradually and obtain a solution. There are many Monte Carlo studies in which the quantum annealing succeeds in obtaining a better solution than the simulated annealing. 5,8-10,12,14,86
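A quantum annealing run based on the quantum Monte Carlo method can be sketched by applying Metropolis updates to the (d+1)-dimensional effective model while the transverse field Γ is lowered. The following is a minimal sketch under several assumptions that are not taken from the text: a fixed Trotter number m, a linear schedule for Γ that stops at a small positive value, a fixed number of sweeps per field value, random couplings as a test problem, and the rule of returning the Trotter slice with the lowest classical energy.

```python
import itertools
import numpy as np

def classical_energy(s, J, h):
    """Energy of the classical Hamiltonian H_c for one spin configuration."""
    return -0.5 * s @ J @ s - h @ s

def simulated_quantum_annealing(J, h, beta, m, gammas, sweeps=20, seed=0):
    rng = np.random.default_rng(seed)
    n = len(h)
    s = rng.choice([-1.0, 1.0], size=(m, n))       # s[k, i]: site i on Trotter slice k
    for gamma in gammas:                           # transverse field is lowered step by step
        K = 0.5 * np.log(1.0 / np.tanh(beta * gamma / m))
        for _ in range(sweeps * m * n):
            k, i = rng.integers(m), rng.integers(n)
            # beta times the effective field acting on s[k, i]
            field = (beta / m) * (J[i] @ s[k] + h[i]) \
                + K * (s[(k - 1) % m, i] + s[(k + 1) % m, i])
            delta = 2.0 * field * s[k, i]          # change of beta * H_eff if the spin flips
            if delta <= 0.0 or rng.random() < np.exp(-delta):
                s[k, i] = -s[k, i]
    energies = np.array([classical_energy(s[k], J, h) for k in range(m)])
    best = int(np.argmin(energies))
    return s[best].copy(), energies[best]

# Hypothetical test problem: 6 spins with random couplings
n = 6
gen = np.random.default_rng(1)
upper = np.triu(gen.normal(size=(n, n)), 1)
J = upper + upper.T
h = gen.normal(scale=0.1, size=n)

config, e_qa = simulated_quantum_annealing(
    J, h, beta=5.0, m=8, gammas=np.linspace(3.0, 0.05, 30))
e_min = min(classical_energy(np.array(c, dtype=float), J, h)
            for c in itertools.product([-1, 1], repeat=n))
print("annealed energy:", e_qa, " exact ground-state energy:", e_min)
```

For such a small system the exact ground-state energy can be found by enumeration, which gives a reference for judging the annealed result; the sketch requires m ≥ 2 and Γ > 0.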

## 3.2. Deterministic Method Based on Mean-Field Approximation

In the previous section, we considered the Monte Carlo method in which time-evolution is treated as stochastic dynamics. In this section, on the other hand, we explain a deterministic method based on mean-field approximation according to Refs. [87,88]. Before we consider the quantum annealing based on the mean-field approximation, we treat the Ising model with random interactions and site-dependent longitudinal fields given by

$$\mathcal { H } _ { \text {Ising} } = - \sum _ { \langle i , j \rangle } J _ { i j } \sigma _ { i } ^ { z } \sigma _ { j } ^ { z } - \sum _ { i = 1 } ^ { N } h _ { i } \sigma _ { i } ^ { z } .$$

When the transverse field is absent, the molecular field of the i -th spin is given by Eq. (34). Then an equation which determines expectation value of the i -th spin at temperature T (= β -1 ) is given by

$$m _ { i } ^ { z } = \frac { e ^ { \beta h _ { i } ^ { ( e f f ) } } - e ^ { - \beta h _ { i } ^ { ( e f f ) } } } { e ^ { \beta h _ { i } ^ { ( e f f ) } } + e ^ { - \beta h _ { i } ^ { ( e f f ) } } } = \tanh ( \beta h _ { i } ^ { ( e f f ) } ) .$$

At the mean-field level, we approximate the state $\sigma^z_j$ in Eq. (34) by its expectation value $m^z_j$, and we obtain

$$m _ { i } ^ { z } = \tanh \left [ \beta \left ( \sum _ { j } ^ { \prime } J _ { i j } m _ { j } ^ { z } + h _ { i } \right ) \right ] ,$$

which is often called the self-consistent equation.

We can obtain the equilibrium values at the mean-field level by iterating the following equation until convergence:

$$m _ { i } ^ { z } ( t + 1 ) = \tanh ( \beta h _ { i } ^ { ( e f f ) } ( t ) ) , \quad h _ { i } ^ { ( e f f ) } ( t ) = \sum _ { j } { ^ { \prime } J _ { i j } m _ { j } ^ { z } ( t ) + h _ { i } . } \ \ ( 5 2 )$$

In order to judge the convergence, we introduce a distance which represents difference between the state at t -th step and that at ( t +1)-th step as follows:

$$d ( t ) \colon = \frac { 1 } { N } \sum _ { i = 1 } ^ { N } | m _ { i } ^ { z } ( t + 1 ) - m _ { i } ^ { z } ( t ) | \, .$$

When the quantity d ( t ) is less than a given small value (typically $\sim 10^{-8}$ or smaller), we judge that the calculation has converged. We summarize this method:

- Step 1 We prepare an arbitrary initial state.

- Step 2 We choose a spin randomly.

- Step 3 We calculate the molecular field given by Eq. (34) at the chosen site in Step 2.

- Step 4 We change the value of the chosen spin in Step 2 according to the obtained molecular field in Step 3.

- Step 5 We continue from Step 2 to Step 4 until the distance d ( t ) converges to small value.

The differences between the Monte Carlo method and this method are in Step 4 and Step 5. We can perform the simulated annealing by decreasing the temperature and, each time the temperature is changed, using the state obtained in Step 5 as the initial state in Step 1 (see footnote c).
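The mean-field iteration and its use for simulated annealing can be sketched as follows. This is an illustrative sketch under stated assumptions: sites are updated one at a time in a random order, the distance d is evaluated between successive sweeps rather than between single updates, and the ferromagnetic ring, the field, and the temperature schedule are hypothetical.

```python
import numpy as np

def mean_field(J, h, beta, m0, tol=1e-10, max_sweeps=10_000, seed=0):
    """Iterate m_i = tanh(beta * (sum_j J_ij m_j + h_i)) until d < tol."""
    rng = np.random.default_rng(seed)
    m = np.array(m0, dtype=float)                       # Step 1: initial state
    for _ in range(max_sweeps):
        m_old = m.copy()
        for i in rng.permutation(len(h)):               # Step 2: visit sites in random order
            h_eff = J[i] @ m + h[i]                     # Step 3: molecular field
            m[i] = np.tanh(beta * h_eff)                # Step 4: deterministic update
        if np.mean(np.abs(m - m_old)) < tol:            # Step 5: distance between sweeps
            break
    return m

# Hypothetical example: ferromagnetic ring of 6 spins in a weak field
n = 6
J = np.zeros((n, n))
for i in range(n):
    J[i, (i + 1) % n] = J[(i + 1) % n, i] = 1.0
h = np.full(n, 0.05)

m = np.random.default_rng(1).uniform(-1.0, 1.0, size=n)
for T in (3.0, 1.0, 0.3):                               # annealing: reuse the previous solution
    m = mean_field(J, h, 1.0 / T, m)
    print(T, m)
```

Passing the converged magnetizations from one temperature as the initial state at the next temperature is the annealing step described in the preceding paragraph.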

Next we explain a quantum version of this method. Here we apply transverse field as a quantum field. We consider the Hamiltonian given by

$$\hat { \mathcal { H } } = - \sum _ { \langle i , j \rangle } J _ { i j } \hat { \sigma } ^ { z } _ { i } \hat { \sigma } ^ { z } _ { j } - \sum _ { i = 1 } ^ { N } h _ { i } \hat { \sigma } ^ { z } _ { i } - \Gamma \sum _ { i = 1 } ^ { N } \hat { \sigma } ^ { x } _ { i } .$$

The density matrix of the equilibrium state is

$$\hat { \rho } = \frac { \exp ( - \beta \hat { H } ) } { T r \exp ( - \beta \hat { H } ) } = \frac { \sum _ { n = 1 } ^ { 2 ^ { N } } \exp ( - \beta \epsilon _ { n } ) \, | \lambda _ { n } \rangle \, \langle \lambda _ { n } | } { \sum _ { n = 1 } ^ { 2 ^ { N } } \exp ( - \beta \epsilon _ { n } ) } ,$$

where $\epsilon_n$ and $| \lambda_n \rangle$ denote the $n$-th eigenenergy and the corresponding eigenvector. The density matrix satisfies the variational principle that minimizes the free energy:

$$F = \min _ { \hat { \rho } } \left [ \text {Tr} \left ( \hat { H } + \beta ^ { - 1 } \ln \hat { \rho } \right ) \hat { \rho } \right ] , \quad ( 5 6 )$$

where the logarithm of the matrix is defined by the series expansion as well as the definition of the matrix exponential (see Eq. (37)). Since it is difficult to obtain the density matrix, we have to consider alternative strategy as follows.

c If we want to decrease the temperature rapidly, we choose a convergence threshold that is not too small.

A reduced density matrix is defined as

$$\hat { \rho } _ { i } \colon = T r ^ { \prime } \, \hat { \rho } = \frac { 1 } { 2 } \left ( \hat { I } + m _ { i } ^ { z } \hat { \sigma } ^ { z } + m _ { i } ^ { x } \hat { \sigma } ^ { x } \right ) ,$$

where Tr ′ indicates trace over spin states except the i -th spin. The values m z i and m x i are calculated by

$$m _ { i } ^ { z } = T r \left ( \hat { \sigma } _ { i } ^ { z } \hat { \rho } \right ) , \quad m _ { i } ^ { x } = T r \left ( \hat { \sigma } _ { i } ^ { x } \hat { \rho } \right ) . \quad \ \ ( 5 8 )$$

The reduced density matrix satisfies the following relations:

$$T r \left ( \hat { \rho } _ { i } \right ) = 1 , \quad T r \left ( \hat { \sigma } _ { i } ^ { z } \hat { \rho } _ { i } \right ) = m _ { i } ^ { z } , \quad T r \left ( \hat { \sigma } _ { i } ^ { x } \hat { \rho } _ { i } \right ) = m _ { i } ^ { x } .$$

Here we assume that the density matrix can be represented by direct products of the reduced density matrices:

$$\hat { \rho } \simeq \prod _ { i = 1 } ^ { N } \hat { \rho } _ { i } ,$$

which is mean-field approximation (in other words, decoupling approximation). Then, the free energy is expressed as

$$F \simeq \min _ { \{ \hat { \rho } _ { i } \} } \mathcal { F } ( \{ \hat { \rho } _ { i } \} ) , \quad \ \ ( 6 1 )$$

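$$\mathcal { F } ( \{ \hat { \rho } _ { i } \} ) = - \sum _ { \langle i , j \rangle } J _ { i j } m _ { i } ^ { z } m _ { j } ^ { z } - \sum _ { i = 1 } ^ { N } h _ { i } m _ { i } ^ { z } - \Gamma \sum _ { i = 1 } ^ { N } m _ { i } ^ { x } + \beta ^ { - 1 } \sum _ { i = 1 } ^ { N } \text {Tr} \left ( \hat { \rho } _ { i } \ln \hat { \rho } _ { i } \right ) .$$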

From the variation of F ( { ˆ ρ i } ) under the normalization condition, we obtain the following relations:

$$\hat { \rho } _ { i } = \frac { \exp ( - \beta \hat { \mathcal { H } } _ { i } ) } { \text {Tr} \left [ \exp ( - \beta \hat { \mathcal { H } } _ { i } ) \right ] } ,$$

$$\hat { \mathcal { H } } _ { i } = \begin{pmatrix} - h _ { i } - \sum _ { j } ^ { \prime } J _ { i j } m _ { j } ^ { z } & - \Gamma \\ - \Gamma & + h _ { i } + \sum _ { j } ^ { \prime } J _ { i j } m _ { j } ^ { z } \end{pmatrix} .$$

Then the reduced density matrix is represented by using the $n$-th ($n = 1, 2$) eigenvalues $\epsilon^{(i)}_n$ and the corresponding eigenvectors $| \lambda^{(i)}_n \rangle$ of $\hat{\mathcal{H}}_i$:

$$\hat { \rho } _ { i } = \frac { \exp ( - \beta \epsilon ^ { ( i ) } _ { 1 } ) \, | \lambda ^ { ( i ) } _ { 1 } \rangle \, \langle \lambda ^ { ( i ) } _ { 1 } | + \exp ( - \beta \epsilon ^ { ( i ) } _ { 2 } ) \, | \lambda ^ { ( i ) } _ { 2 } \rangle \, \langle \lambda ^ { ( i ) } _ { 2 } | } { \exp ( - \beta \epsilon ^ { ( i ) } _ { 1 } ) + \exp ( - \beta \epsilon ^ { ( i ) } _ { 2 } ) } .$$

As in the case of Γ = 0, we can obtain the equilibrium values of physical quantities by iteration:

$$m _ { i } ^ { z } ( t + 1 ) = T r ( \hat { \sigma } _ { i } ^ { z } \hat { \rho } _ { i } ( t ) ) , \quad m _ { i } ^ { x } ( t + 1 ) = T r ( \hat { \sigma } _ { i } ^ { x } \hat { \rho } _ { i } ( t ) ) , \quad ( 6 6 )$$

$$\hat { \rho } _ { i } ( t ) = \frac { \exp ( - \beta \hat { \mathcal { H } } _ { i } ( t ) ) } { \text {Tr} \exp ( - \beta \hat { \mathcal { H } } _ { i } ( t ) ) } ,$$

$$\hat { \mathcal { H } } _ { i } ( t ) = \begin{pmatrix} - h _ { i } - \sum _ { j } ^ { \prime } J _ { i j } m _ { j } ^ { z } ( t ) & - \Gamma \\ - \Gamma & + h _ { i } + \sum _ { j } ^ { \prime } J _ { i j } m _ { j } ^ { z } ( t ) \end{pmatrix} .$$

We continue the above self-consistent equation until the following distance converges:

$$d ( t ) \coloneqq \frac { 1 } { 2 N } \sum _ { i = 1 } ^ { N } \left ( | m _ { i } ^ { z } ( t + 1 ) - m _ { i } ^ { z } ( t ) | + | m _ { i } ^ { x } ( t + 1 ) - m _ { i } ^ { x } ( t ) | \right ) .$$

If the temperature is zero, the reduced density matrix should be

$$\hat { \rho } _ { i } = | \lambda ^ { ( i ) } _ { 1 } \rangle \, \langle \lambda ^ { ( i ) } _ { 1 } | \, ,$$

where we consider the case $\epsilon^{(i)}_1 < \epsilon^{(i)}_2$. Note that $\epsilon^{(i)}_1 = \epsilon^{(i)}_2$ is satisfied if and only if $-h_i - \sum_j{}^{\prime} J_{ij} m^z_j = \Gamma = 0$. Thus, if we perform the quantum annealing at $T = 0$, we only have to know the ground state of the local Hamiltonian $\hat{\mathcal{H}}_i$. The procedure is the same as in the case of finite temperature. By using this method, we can obtain a better solution than that obtained by the simulated annealing for some optimization problems. Recently, another type of implementation method based on the mean-field approximation was proposed. 13 The method is a quantum version of the variational Bayes inference. 89 It can also yield a better solution than the conventional variational Bayes inference.
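The quantum version of the mean-field iteration can be sketched by diagonalizing the 2 × 2 local Hamiltonian at each site. The sketch below is an illustration under assumptions not taken from the text: sites are updated in a random order, the distance d is evaluated between sweeps, and the ferromagnetic ring, the inverse temperature, and the schedule for Γ are hypothetical.

```python
import numpy as np

SZ = np.array([[1.0, 0.0], [0.0, -1.0]])
SX = np.array([[0.0, 1.0], [1.0, 0.0]])

def local_magnetization(h_eff, gamma, beta):
    """Return (m^z, m^x) from rho_i = exp(-beta H_i) / Tr exp(-beta H_i)."""
    H = -h_eff * SZ - gamma * SX                  # local 2x2 Hamiltonian
    eps, vec = np.linalg.eigh(H)
    w = np.exp(-beta * (eps - eps.min()))         # shifted for numerical stability
    w /= w.sum()
    rho = (vec * w) @ vec.T                       # sum_n w_n |lambda_n><lambda_n|
    return np.trace(SZ @ rho), np.trace(SX @ rho)

def quantum_mean_field(J, h, gamma, beta, mz0, tol=1e-10, max_sweeps=10_000, seed=0):
    rng = np.random.default_rng(seed)
    mz = np.array(mz0, dtype=float)
    mx = np.zeros_like(mz)
    for _ in range(max_sweeps):
        mz_old, mx_old = mz.copy(), mx.copy()
        for i in rng.permutation(len(h)):
            mz[i], mx[i] = local_magnetization(J[i] @ mz + h[i], gamma, beta)
        d = 0.5 * np.mean(np.abs(mz - mz_old) + np.abs(mx - mx_old))
        if d < tol:
            break
    return mz, mx

# Hypothetical example: ferromagnetic ring of 6 spins, Gamma lowered at fixed temperature
n = 6
J = np.zeros((n, n))
for i in range(n):
    J[i, (i + 1) % n] = J[(i + 1) % n, i] = 1.0
h = np.full(n, 0.05)

mz = np.full(n, 0.01)
for gamma in (3.0, 2.0, 1.0, 0.5, 0.01):
    mz, mx = quantum_mean_field(J, h, gamma, beta=5.0, mz0=mz)
    print(gamma, mz.mean(), mx.mean())
```

For this 2 × 2 problem the result can be cross-checked against the closed forms m^z = (h_eff/E) tanh(βE) and m^x = (Γ/E) tanh(βE) with E = √(h_eff² + Γ²), which follow from diagonalizing the local Hamiltonian.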

## 3.3. Real-Time Dynamics

In Sec. 3.1 and Sec. 3.2, we considered artificial time-development rules such as the Markov chain Monte Carlo method and mean-field dynamics. In this section, we explain real-time dynamics which is expressed by the time-dependent Schrödinger equation:

$$i \frac { \partial } { \partial t } \left | \psi ( t ) \right \rangle = \hat { \mathcal { H } } ( t ) \left | \psi ( t ) \right \rangle , \quad \, ( 7 1 )$$

where $\hat{\mathcal{H}}(t)$ and $| \psi(t) \rangle$ denote the time-dependent Hamiltonian and the wave function at time $t$, respectively. The solution of this equation is given by

$$| \psi ( t ) \rangle = \exp \left [ - i \int _ { 0 } ^ { t } \hat { \mathcal { H } } ( t ^ { \prime } ) d t ^ { \prime } \right ] | \psi ( t = 0 ) \rangle \, .$$

If we use a time-dependent Hamiltonian including a time-dependent quantum field, we can perform the quantum annealing by decreasing the quantum field gradually. To obtain the solution, it is necessary to decide the initial state for Eq. (72). Since our purpose is to obtain the ground state of the given Hamiltonian which represents the optimization problem, we have no way to know in advance the preferable initial state that definitely leads to the ground state in the adiabatic limit. However, in general, we often use a 'trivial state' as the initial state, and this works well in many cases. For instance, when we consider the Ising model with a time-dependent transverse field, which is given by

$$\hat { \mathcal { H } } ( t ) = - \sum _ { i , j } J _ { i j } \hat { \sigma } _ { i } ^ { z } \hat { \sigma } _ { j } ^ { z } - \Gamma ( t ) \sum _ { i = 1 } ^ { N } \hat { \sigma } _ { i } ^ { x } ,$$

we set the ground state for large Γ as the initial state, hence the initial state is set as

$$\left | \psi ( t = 0 ) \right \rangle = \left | \rightarrow \rightarrow \cdots \rightarrow \right \rangle ,$$

where |→〉 denotes the eigenstate of ˆ σ x :

$$| \rightarrow \rangle \colon = { \frac { 1 } { \sqrt { 2 } } } ( | \uparrow \rangle + | \downarrow \rangle ) .$$

In real-time dynamics, in order to obtain the ground state by using given initial condition, it is important whether there is level crossing. If there is no level crossing, the system can necessarily reach the ground state by the quantum annealing in the adiabatic limit. To show this fact, we first consider a single spin system under time-dependent longitudinal magnetic field. The Hamiltonian is given by

$$\hat { \mathcal { H } } _ { \text {single} } ( t ) = - h ( t ) \hat { \sigma } ^ { z } = \left ( \begin{array} { c c } - h ( t ) & 0 \\ 0 & h ( t ) \end{array} \right ) .$$

Suppose we set | ψ (0) 〉 = |↓〉 as the initial state. For arbitrary sweeping schedules, the state at arbitrary positive t is obtained by

$$| \psi ( t ) \rangle = \exp \left [ - i \int _ { 0 } ^ { t } \hat { \mathcal { H } } _ { \text {single} } ( t ^ { \prime } ) d t ^ { \prime } \right ] | \psi ( 0 ) \rangle = | \downarrow \rangle \, .$$

This is because the state |↓〉 is the eigenstate of the instantaneous Hamiltonian for arbitrary time t . In general, when there is a good quantum number

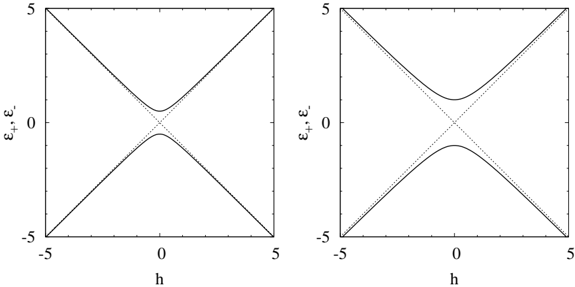

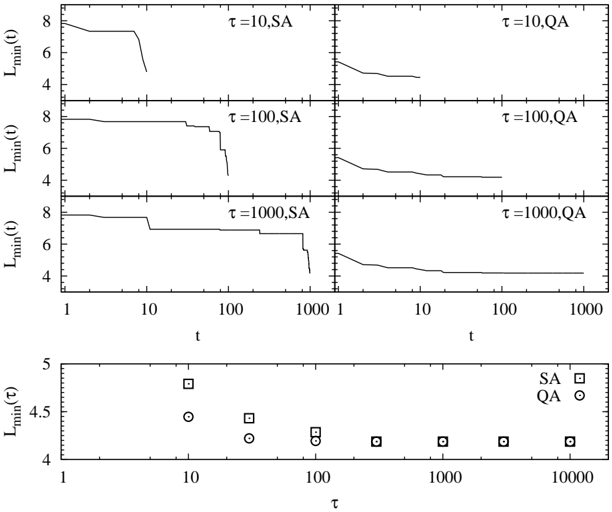

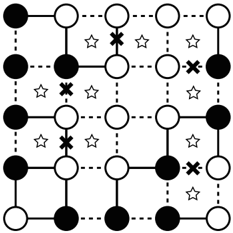

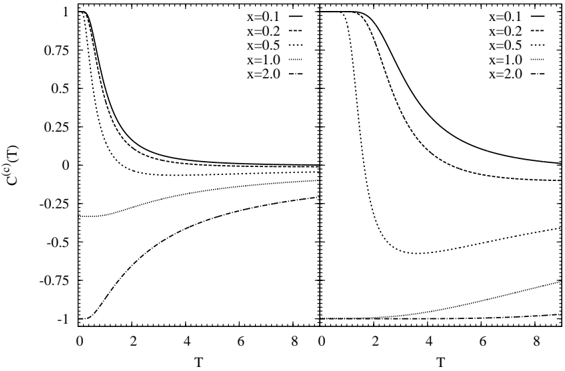

Fig. 2. Eigenenergies of the single spin system under longitudinal and transverse magnetic fields for Γ = 0 . 5 (left panel) and Γ = 1 (right panel). The dotted lines represent eigenenergies for Γ = 0.

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Chart Type: Line Graphs

### Overview

The image contains two line graphs, each displaying the relationship between a variable 'h' on the x-axis and 'ε+', 'ε-' on the y-axis. Both graphs show two solid lines and two dotted lines. The left graph shows the solid and dotted lines overlapping at h=0, while the right graph shows the solid lines diverging from the dotted lines near h=0.

### Components/Axes

* **X-axis (Horizontal):** 'h', with scale markers at -5, 0, and 5.

* **Y-axis (Vertical):** 'ε+', 'ε-', with scale markers at -5, 0, and 5.

* **Lines:** Each graph contains two solid lines and two dotted lines.

### Detailed Analysis

**Left Graph:**

* **Solid Lines:**

* One solid line slopes upwards from approximately (-5, -5) to (5, 5).

* The other solid line slopes downwards from approximately (-5, 5) to (5, -5).

* **Dotted Lines:**

* One dotted line slopes upwards from approximately (-5, -5) to (5, 5), overlapping with the solid line.

* The other dotted line slopes downwards from approximately (-5, 5) to (5, -5), overlapping with the solid line.

* The dotted lines intersect at (0,0).

**Right Graph:**

* **Solid Lines:**

* One solid line starts at approximately (-5, -5), curves upwards, flattens near (0, -2), then continues upwards to (5, 5).

* The other solid line starts at approximately (-5, 5), curves downwards, flattens near (0, 2), then continues downwards to (5, -5).

* **Dotted Lines:**

* One dotted line slopes upwards from approximately (-5, -5) to (5, 5).

* The other dotted line slopes downwards from approximately (-5, 5) to (5, -5).

* The dotted lines intersect at (0,0).

### Key Observations

* In the left graph, the solid and dotted lines are indistinguishable, suggesting they represent the same relationship under certain conditions.

* In the right graph, the solid lines deviate from the dotted lines near h=0, indicating a change in the relationship between 'h' and 'ε+', 'ε-' under different conditions.

* Both graphs show symmetry around the origin (0,0).

### Interpretation

The graphs likely represent energy levels (ε+, ε-) as a function of some parameter 'h'. The left graph could represent a simplified model where the relationship is linear. The right graph shows a more complex relationship where the energy levels exhibit a gap or splitting near h=0. This could be due to some interaction or perturbation that is not present in the simplified model. The dotted lines likely represent a theoretical or unperturbed state, while the solid lines represent the actual or perturbed state. The divergence of the solid lines from the dotted lines in the right graph indicates the effect of this perturbation.

</details>

and the initial state is set to be the corresponding eigenstate, the good quantum number is conserved. Then when we perform the quantum annealing method based on the real-time dynamics, we should take care of the symmetries of the considered Hamiltonian. From this, we can obtain the ground state of the considered system in the adiabatic limit if there is no level crossing. In practice, however, since we change magnetic field with finite speed, a nonadiabatic transition is inevitable. To show this fact, we consider a single spin system under longitudinal and transverse magnetic fields. The Hamiltonian of this system is given by

$$\begin{array} { r } { \hat { \mathcal { H } } _ { s i n g l e } = - h \hat { \sigma } ^ { z } - \Gamma \hat { \sigma } ^ { x } = \left ( \begin{matrix} - h & - \Gamma \\ - \Gamma & h \end{matrix} \right ) . } \end{array}$$

Since the eigenenergies are $\epsilon_{\pm} = \pm \sqrt{h^2 + \Gamma^2}$, the smallest value of the energy difference between the ground state and the excited state is $2\Gamma$ at $h = 0$, as shown in Fig. 2.

Suppose we consider the single spin system under time-dependent longitudinal magnetic field and fixed transverse magnetic field. The Hamiltonian is given by

$$\hat { \mathcal { H } } _ { \text {single} } ( t ) = - h ( t ) \hat { \sigma } ^ { z } - \Gamma \hat { \sigma } ^ { x } = \begin{pmatrix} - v t & - \Gamma \\ - \Gamma & v t \end{pmatrix} ,$$

where we adopt $h(t) = vt$ as the time-dependent longitudinal field. Here we set $t = -\infty$ as the initial time. The initial state is set to be the ground state of the Hamiltonian at the initial time, $| \psi(t = -\infty) \rangle = | \downarrow \rangle$. The ground state at $t = +\infty$ in the adiabatic limit is $| \psi^{(\mathrm{ad})}(t = +\infty) \rangle = | \uparrow \rangle$. Then a characteristic value which represents the nature of this dynamics is the probability of staying in the ground state at $t = +\infty$, which is defined by

$$\begin{aligned} P _ { \text {stay} } & = \left | \left \langle \psi ^ { ( \text {ad} ) } ( t = + \infty ) \right | \exp \left [ - i \int _ { - \infty } ^ { + \infty } \hat { \mathcal { H } } _ { \text {single} } ( t ^ { \prime } ) d t ^ { \prime } \right ] \left | \psi ( t = - \infty ) \right \rangle \right | ^ { 2 } \\ & = \left | \left \langle \uparrow \left | \exp \left [ - i \int _ { - \infty } ^ { + \infty } \hat { \mathcal { H } } _ { \text {single} } ( t ^ { \prime } ) d t ^ { \prime } \right ] \right | \downarrow \right \rangle \right | ^ { 2 } . \end{aligned}$$

The probability of staying in the ground state should depend on the sweeping speed $v$ and the characteristic energy gap, and can be obtained by the Landau-Zener-Stückelberg formula: 90-92

$$P _ { \text {stay} } = 1 - \exp \left [ - \frac { \pi ( \Delta E ) ^ { 2 } } { 4 v \Delta m } \right ] ,$$

where ∆ E and ∆ m represent the energy gap at the avoided level-crossing point and the difference of the magnetizations in the adiabatic limit, respectively. In this case ∆ E = 2Γ and ∆ m = 2.

In many cases, the typical shape of the energy structure can be approximated by simple systems such as the single spin system. Knowledge of simple transitions such as the Landau-Zener-Stückelberg transition and the Rosen-Zener transition 93 is therefore useful for analyzing the efficiency of the quantum annealing based on real-time dynamics.
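The dependence of the staying probability on the sweeping speed can be explored numerically for the single spin system. The following sketch integrates the Schrödinger equation as a time-ordered product of short-time propagators, each evaluated exactly for the 2 × 2 Hamiltonian at the midpoint of the step. The truncation of the sweep to a finite window |h(t)| ≤ h_max, the step size, the value of Γ, and the function name are assumptions of this example.

```python
import numpy as np

SZ = np.array([[1.0, 0.0], [0.0, -1.0]], dtype=complex)
SX = np.array([[0.0, 1.0], [1.0, 0.0]], dtype=complex)
IDENTITY = np.eye(2, dtype=complex)

def stay_probability(v, gamma, h_max=20.0, dt=0.01):
    """Sweep h(t) = v t from -h_max to +h_max, starting from |down>."""
    t_max = h_max / v
    n_steps = int(round(2.0 * t_max / dt))
    psi = np.array([0.0, 1.0], dtype=complex)      # |down>, ground state for h -> -infinity
    for k in range(n_steps):
        h = v * (-t_max + (k + 0.5) * dt)          # field at the midpoint of the step
        E = np.hypot(h, gamma)
        H = -h * SZ - gamma * SX
        # exact exponential exp(-i H dt), using H^2 = E^2 * identity
        U = np.cos(E * dt) * IDENTITY - 1j * np.sin(E * dt) / E * H
        psi = U @ psi
    return abs(psi[0]) ** 2                        # overlap with |up>, ground state for h -> +infinity

gamma = 0.5                                        # hypothetical transverse field
results = {v: stay_probability(v, gamma) for v in (2.0, 1.0, 0.5, 0.2)}
for v, p in results.items():
    print(f"v = {v:4.1f}   P_stay = {p:.4f}")
```

Slower sweeps give a staying probability closer to one, which is the adiabatic limit discussed above; the finite window introduces small oscillatory corrections that decrease as h_max grows.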

## 3.4. Experiments

The transverse field response of the Ising model has also been established experimentally. 94-103 A dipolar-coupled disordered magnet LiHo$_x$Y$_{1-x}$F$_4$ has easy-axis anisotropy and can be represented by the Ising model. 104,105 If we apply the longitudinal magnetic field (in other words, the magnetic field is parallel to the easy axis), a phase transition does not take place. 106,107 However, when we apply the transverse magnetic field (in other words, the magnetic field is perpendicular to the easy axis), phase transitions occur, and the interesting dynamical properties shown in Ref. [6] were observed. In the phase diagram of this material, there are three phases. The ferromagnetic phase appears at intermediate temperature and low transverse magnetic field, whereas at low temperature and low transverse magnetic field, the glassy critical phase 108 appears. The paramagnetic phase exists in the other region. The glassy critical phase exhibits slow relaxation in general. It was found that the characteristic relaxation time obtained from the ac field susceptibility for quantum cooling, in which we decrease the transverse field after the temperature is decreased, is shorter than that for temperature cooling. 6 From this result, it has been expected that the effect of quantum fluctuation helps us to obtain the best solution of the optimization problem.

## 4. Optimization Problems

Optimization problems are defined by composition elements of the considered problem and real-valued cost/gain function. They are problems to obtain the best solution such that the cost/gain function takes the minimum/maximum value. In general, the number of candidate solutions increases exponentially with the number of composition elements in optimization problems. Although we can obtain the best solution by a brute force in principle, it is difficult to obtain the best solution by such a naive method in practice. Then we have to invent an innovative method for obtaining the best solution in a practical time and limited computational resource. Optimization problems can be expressed by the Ising model in many cases. Once optimization problems are mapped onto the Ising model, we can use methods that have been considered in statistical physics and computational physics such as the quantum annealing.

In the first half of this section, we explain the correspondence between the Ising model and the traveling salesman problem, which is one of the best-known optimization problems. We demonstrate the quantum annealing based on the quantum Monte Carlo simulation for this problem. In the second half, we explain the clustering problem as an example expressed by the Potts model, which is a straightforward extension of the Ising model.

## 4.1. Traveling Salesman Problem

In this section, we consider the traveling salesman problem, which is one of the best-known optimization problems. The setup of the traveling salesman problem is as follows:

- There are N cities.

- We move from the i -th city to the j -th city, where the distance between them is ℓ i,j .

- We can pass through a city only once.

- We return to the initial city after we pass through all the cities.

The traveling salesman problem is to find the shortest path under the above conditions. The length of a path is given by

$$L \coloneqq \sum _ { a = 1 } ^ { N } \ell _ { c _ { a } , c _ { a + 1 } } ,$$

where c a denotes the city that we pass through at the a -th step. In the traveling salesman problem, the length of a path is the cost function. From the fourth condition, the following relation should be satisfied:

$$c _ { N + 1 } = c _ { 1 } . \quad ( 8 3 )$$

Mathematically, the traveling salesman problem is to find the sequence { c a } ( a = 1, ..., N ) that minimizes the path length L under the above four conditions.
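The definition above can be illustrated with a minimal brute-force sketch. The function names `tour_length` and `brute_force_tsp` and the 4-city distance matrix `DIST` are hypothetical and chosen only for illustration. The first city is fixed and a tour is identified with its reverse, so that ( N -1)! / 2 candidates are examined for N ≥ 3.

```python
from itertools import permutations
from math import factorial


def tour_length(dist, tour):
    """Length L of a closed tour; the index wraps around so that c_{N+1} = c_1."""
    n = len(tour)
    return sum(dist[tour[a]][tour[(a + 1) % n]] for a in range(n))


def brute_force_tsp(dist):
    """Enumerate all distinct closed tours; return (best_tour, best_length, n_candidates)."""
    n = len(dist)
    best_tour, best_length, n_candidates = None, float("inf"), 0
    for rest in permutations(range(1, n)):
        # City 0 is fixed as the starting point. A tour and its reverse have
        # the same length, so only one orientation is kept.
        if len(rest) > 1 and rest[0] > rest[-1]:
            continue
        n_candidates += 1
        tour = (0,) + rest
        length = tour_length(dist, tour)
        if length < best_length:
            best_tour, best_length = tour, length
    return best_tour, best_length, n_candidates


# Hypothetical symmetric distance matrix for N = 4 cities (illustration only).
DIST = [
    [0, 2, 9, 10],
    [2, 0, 6, 4],
    [9, 6, 0, 3],
    [10, 4, 3, 0],
]

if __name__ == "__main__":
    tour, length, count = brute_force_tsp(DIST)
    print(tour, length, count, factorial(len(DIST) - 1) // 2)
```

Because the loop visits every candidate, this sketch is only usable for small N ; its cost grows factorially with the number of cities.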

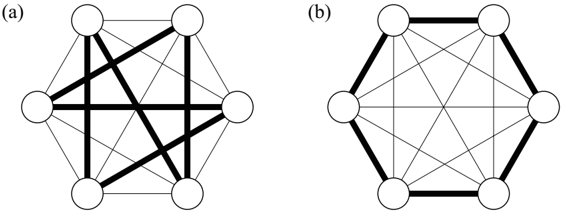

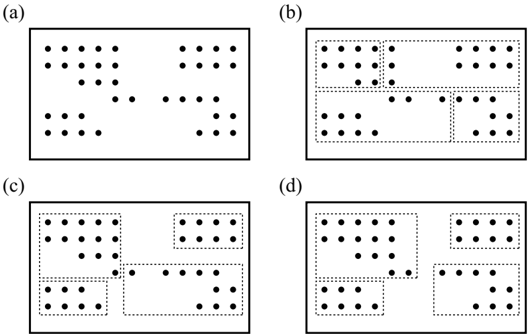

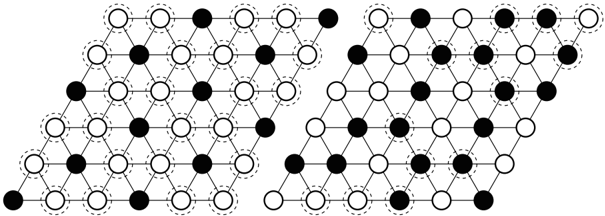

If the number of cities N is small, it is easy to obtain the shortest path by brute force. For example, we can easily find the best solution of the traveling salesman problem for N = 6 shown in Fig. 3. Figures 3(a) and 3(b) represent a bad solution and the best solution, in which the length of the path L is minimum, respectively. As the number of cities increases, the traveling salesman problem becomes seriously difficult since the number of candidate solutions is ( N -1)! / 2. Thus, to deal with the traveling salesman problem with large N , we have to adopt practical methods such as the simulated annealing instead of brute force. To use the simulated annealing, we map the traveling salesman problem onto the Ising model with a couple of constraints as follows.

We consider an N × N two-dimensional lattice. Let n i,a be the microscopic variable that represents the state of the i -th city at the a -th step. The value of n i,a is either 0 or 1. If we pass through the i -th city at the

Fig. 3. Traveling salesman problem for N = 6. Thin lines and thick lines denote the permitted paths and selected paths, respectively. (a) Bad solution. (b) The best solution in which the length of the path is minimum.

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Network Diagrams: Comparison of Two Network Configurations

### Overview

The image presents two network diagrams, labeled (a) and (b), each consisting of six nodes arranged in a hexagonal configuration. The diagrams illustrate different connection patterns between these nodes, with some connections emphasized by thicker lines.

### Components/Axes

* **Nodes:** Six nodes represented as white circles, arranged in a hexagonal shape in both diagrams.

* **Edges:** Lines connecting the nodes, representing relationships or connections. Some edges are thin, while others are thick, indicating a distinction in the type or strength of the connection.

* **Labels:**

* **(a):** Located in the top-left corner of the left diagram.

* **(b):** Located in the top-left corner of the right diagram.

### Detailed Analysis

**Diagram (a):**

* **Thick Edges:**

* A thick edge connects the top-left node to the bottom-left node.

* A thick edge connects the top-left node to the center node.

* A thick edge connects the top-right node to the bottom-right node.

* A thick edge connects the bottom-left node to the center node.

* A thick edge connects the bottom-right node to the center node.

* **Thin Edges:** All other possible connections between the nodes are represented by thin lines.

**Diagram (b):**

* **Thick Edges:**

* The six nodes are connected by thick edges, forming a hexagon.

* **Thin Edges:** All possible diagonal connections between the nodes are represented by thin lines.

### Key Observations

* Diagram (a) shows a more clustered connection pattern, with a central node having multiple thick connections.

* Diagram (b) shows a more uniform connection pattern, with all nodes having a thick connection to their immediate neighbors in the hexagon.

### Interpretation

The diagrams likely represent different network topologies or configurations. Diagram (a) could represent a network with a central hub, while diagram (b) could represent a more distributed or ring-like network. The thickness of the edges could indicate the strength or importance of the connection. The diagrams could be used to compare the properties of these different network configurations, such as their resilience to node failure or their efficiency in transmitting information.

</details>

a -th step, n i,a = 1, whereas n i,a = 0 if we do not pass through the i -th city at the a -th step. The third condition, that each city is passed through only once, can be represented by

$$\sum_{a=1}^{N} n_{i,a} = 1 \quad (\text{for } \forall i). \quad (84)$$

Furthermore, since we can obviously pass through only one city at the a -th step, this constraint is expressed by

$$\sum_{i=1}^{N} n_{i,a} = 1 \quad (\text{for } \forall a). \quad (85)$$

Then the length of the path L can be rewritten as

$$L = \sum_{a=1}^{N} \sum_{i,j} \ell_{i,j} n_{i,a} n_{j,a+1} = \frac{1}{4} \sum_{a=1}^{N} \sum_{i,j} \ell_{i,j} \sigma_{i,a}^{z} \sigma_{j,a+1}^{z} + \text{const.}, \quad (86)$$

where the Ising spin variable σ z i,a = ± 1 is defined by

$$\sigma_{i,a}^{z} \coloneqq 2 n_{i,a} - 1. \quad (87)$$

Here we used the following relation derived from Eqs. (84) and (85):

$$\sum _ { a = 1 } ^ { N } \sum _ { i , j } \ell _ { i , j } \sigma _ { i , a } ^ { z } = \text {const.}$$
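As a worked check of this rewriting (our own expansion, given here only as a sketch), we substitute the definition in Eq. (87) into the product of occupation variables:

$$n_{i,a} n_{j,a+1} = \frac{1}{4} \left( \sigma_{i,a}^{z} \sigma_{j,a+1}^{z} + \sigma_{i,a}^{z} + \sigma_{j,a+1}^{z} + 1 \right).$$

Since each city is passed through exactly once, the sum of the spins of a given city over all steps does not depend on the path:

$$\sum_{a=1}^{N} \sigma_{i,a}^{z} = \sum_{a=1}^{N} \left( 2 n_{i,a} - 1 \right) = 2 - N \quad (\text{for } \forall i).$$

The same value is obtained for the sum over a of σ z j,a+1 because the step index is periodic, c N+1 = c 1 . Collecting the two linear terms and the constant term, the path-independent constant in the expression for L is

$$\text{const.} = \frac{1}{4} \left[ 2 ( 2 - N ) + N \right] \sum_{i,j} \ell_{i,j} = \frac{4 - N}{4} \sum_{i,j} \ell_{i,j}.$$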

Then the length of the path can be represented by the Ising spin Hamiltonian on an N × N two-dimensional lattice. In general, it is difficult to obtain the stable state of an Ising model subject to such constraints, which can be regarded as a kind of frustration, as will be shown in Sec. 5.2.
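The mapping can be checked numerically with the following minimal sketch. All function names are hypothetical, cities and steps are indexed from 0, and the constant term (4 - N )/4 times the sum of all ℓ i,j is our own evaluation of the "const." above under the two constraints, so it should be read as an assumption of this example rather than a formula quoted from the text.

```python
def occupancy_from_tour(tour):
    """n[i][a] = 1 if city i is visited at step a, and 0 otherwise."""
    size = len(tour)
    return [[1 if tour[a] == i else 0 for a in range(size)] for i in range(size)]


def constraints_hold(n):
    """Each city is visited once (row sums) and each step holds one city (column sums)."""
    size = len(n)
    rows_ok = all(sum(n[i]) == 1 for i in range(size))
    cols_ok = all(sum(n[i][a] for i in range(size)) == 1 for a in range(size))
    return rows_ok and cols_ok


def length_from_occupancy(dist, n):
    """Path length written with the 0/1 variables; the step index is periodic."""
    size = len(n)
    return sum(dist[i][j] * n[i][a] * n[j][(a + 1) % size]
               for a in range(size) for i in range(size) for j in range(size))


def length_from_spins(dist, n):
    """Same length written with Ising spins sigma = 2 n - 1 plus a constant."""
    size = len(n)
    spin = [[2 * n[i][a] - 1 for a in range(size)] for i in range(size)]
    quadratic = sum(dist[i][j] * spin[i][a] * spin[j][(a + 1) % size]
                    for a in range(size) for i in range(size) for j in range(size))
    # Assumed constant term, valid only when both constraints hold.
    constant = (4 - size) * sum(map(sum, dist)) / 4
    return quadratic / 4 + constant
```

For any tour that satisfies both constraints, the two length functions are expected to agree, whereas a spin configuration that violates a constraint has no meaning as a path.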

## 4.1.1. Monte Carlo Method

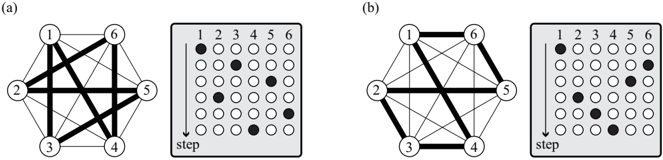

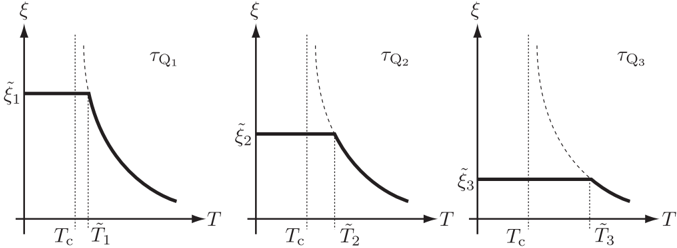

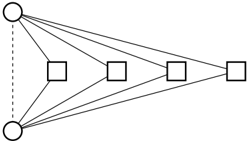

We explain how to implement the Monte Carlo method for the traveling salesman problem. We cannot use the single-spin-flip method explained in Sec. 3.1 because of the two constraints given by Eqs. (84) and (85). The simplest transition between states that preserves both constraints is realized by flipping four spins simultaneously, as shown in Fig. 4.

Suppose that we pass through the i -th city at the a -th step and through the j -th city at the a ′ -th step, which is described as

$$\sigma _ { i , a } ^ { z } = + 1 , \, \sigma _ { j , a } ^ { z } = - 1 , \, \sigma _ { i , a ^ { \prime } } ^ { z } = - 1 , \, \sigma _ { j , a ^ { \prime } } ^ { z } = + 1 .$$

Fig. 4. The simplest flipping method in the traveling salesman problem. A transition occurs between the state depicted in (a) and that depicted in (b). In this case, i = 3, j = 6, a = 2, and a ′ = 5.

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Diagram: Graph Representations and Adjacency Matrices

### Overview

The image presents two graph diagrams, labeled (a) and (b), each accompanied by an adjacency matrix representation. The graphs consist of six nodes (numbered 1 to 6) connected by edges of varying thickness, indicating different weights or strengths of connection. The adjacency matrices use filled circles to represent the presence of an edge between two nodes. The "step" label with a downward arrow suggests a sequential process or state transition.

### Components/Axes

* **Nodes:** Numbered 1 through 6 in each graph.

* **Edges:** Lines connecting the nodes, with varying thickness.

* **Adjacency Matrices:** 6x6 grids representing the connections between nodes. Rows and columns are labeled 1 through 6. Filled circles indicate the presence of an edge.

* **Step Indicator:** A downward arrow labeled "step" next to each matrix.

### Detailed Analysis

**Diagram (a):**

* **Graph:**

* Nodes 1-6 are arranged in a hexagon.

* Thick edges connect:

* 1 and 2

* 2 and 3

* 3 and 4

* 4 and 5

* 5 and 6

* 6 and 1

* 2 and 5

* 1 and 4

* Thin edges connect:

* 1 and 3

* 1 and 5

* 2 and 4

* 2 and 6

* 3 and 5

* 3 and 6

* 4 and 6

* **Adjacency Matrix:**

* Row 1: Filled circle in column 1, column 2, and column 4.

* Row 2: Filled circle in column 2, column 1, column 3, and column 5.

* Row 3: Filled circle in column 3, column 2, and column 4.

* Row 4: Filled circle in column 4, column 1, column 3, and column 5.

* Row 5: Filled circle in column 5, column 2, column 4, and column 6.

* Row 6: Filled circle in column 6, column 5.

**Diagram (b):**

* **Graph:**

* Nodes 1-6 are arranged in a hexagon.

* Thick edges connect:

* 1 and 6

* 2 and 5

* 3 and 4

* Thin edges connect:

* 1 and 2

* 1 and 3

* 1 and 4

* 1 and 5

* 2 and 3

* 2 and 4

* 2 and 6

* 3 and 5

* 3 and 6

* 4 and 5

* 4 and 6

* 5 and 6

* **Adjacency Matrix:**

* Row 1: Filled circle in column 1 and column 6.

* Row 2: Filled circle in column 2 and column 5.

* Row 3: Filled circle in column 3 and column 4.

* Row 4: Filled circle in column 4 and column 3.

* Row 5: Filled circle in column 5 and column 2, and column 6.

* Row 6: Filled circle in column 6, column 1, and column 5.

### Key Observations

* The thick edges in the graphs correspond to filled circles in the adjacency matrices.

* The "step" indicator suggests a transition from the graph in (a) to the graph in (b).

* The adjacency matrices are symmetric, indicating undirected graphs.

* The diagonal elements of the adjacency matrices are filled in row 1, row 2, row 3, row 4, and row 5 in diagram (a), and in row 1, row 2, row 3 in diagram (b), indicating self-loops.

### Interpretation

The image illustrates the relationship between graph representations and adjacency matrices. The graphs visually depict connections between nodes, while the adjacency matrices provide a numerical representation of these connections. The varying thickness of the edges could represent different weights or strengths of the connections. The "step" indicator suggests a transformation or evolution of the graph structure over time or through a process. The change from graph (a) to graph (b) shows a shift in the primary connections between nodes, potentially representing a change in the system being modeled. The adjacency matrices provide a way to quantify and analyze these changes. The self-loops in diagram (a) and diagram (b) indicate that some nodes are connected to themselves.

</details>

The trial state generated by flipping four spins is as follows:

$$\sigma _ { i , a } ^ { z } = - 1 , \, \sigma _ { j , a } ^ { z } = + 1 , \, \sigma _ { i , a ^ { \prime } } ^ { z } = + 1 , \, \sigma _ { j , a ^ { \prime } } ^ { z } = - 1 .$$

The heat-bath method and the Metropolis method can be adopted for the transition probability between the present state and the trial state. In Fig. 4, i = 3, j = 6, a = 2, and a ′ = 5.

It should be noted that without loss of generality the initial condition can be set as

$$\sigma_{1,1}^{z} = +1, \, \sigma_{i,1}^{z} = -1 \quad ( i \neq 1 ), \quad (91)$$

and thus we can fix the states at the first step ( a = 1) during the calculation. The number of four-spin-flip trials needed to attempt to flip all spins in each Monte Carlo step is ( N -1)( N -2) / 2, which equals the number of distinct pairs chosen from the N -1 unfixed steps.
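The procedure of this subsection can be sketched as follows, assuming the Metropolis rule and a linear temperature schedule. The function names, the schedule, and the default parameters are hypothetical choices for illustration and are not taken from the text. A path is stored as a list `tour` with `tour[a]` the city at step a (indexed from 0), so that exchanging two entries corresponds to the four-spin flip described above.

```python
import math
import random
from itertools import combinations


def tour_length(dist, tour):
    """Length of the closed tour; the step index is periodic."""
    n = len(tour)
    return sum(dist[tour[a]][tour[(a + 1) % n]] for a in range(n))


def metropolis_sweep(dist, tour, temperature, rng):
    """One Monte Carlo step: try the four-spin flip for every pair of steps (a, a')."""
    n = len(tour)
    current = tour_length(dist, tour)
    n_trials = 0
    # Step 0 is kept fixed, so a and a' run over the remaining N - 1 steps.
    for a, a_prime in combinations(range(1, n), 2):
        n_trials += 1
        # Exchanging the cities at steps a and a' flips exactly the four spins
        # (i, a), (j, a), (i, a'), (j, a') and preserves both constraints.
        tour[a], tour[a_prime] = tour[a_prime], tour[a]
        trial = tour_length(dist, tour)
        delta = trial - current
        if delta <= 0 or rng.random() < math.exp(-delta / temperature):
            current = trial  # accept the trial state
        else:
            tour[a], tour[a_prime] = tour[a_prime], tour[a]  # reject: undo
    return current, n_trials


def simulated_annealing(dist, t_start=5.0, t_end=0.05, n_sweeps=200, seed=0):
    """Decrease the temperature linearly and keep the best tour seen after each sweep."""
    rng = random.Random(seed)
    tour = list(range(len(dist)))
    best_tour, best_length = tour[:], tour_length(dist, tour)
    for sweep in range(n_sweeps):
        fraction = sweep / max(n_sweeps - 1, 1)
        temperature = t_start + (t_end - t_start) * fraction
        length, _ = metropolis_sweep(dist, tour, temperature, rng)
        if length < best_length:
            best_tour, best_length = tour[:], length
    return best_tour, best_length
```

Recomputing the full path length for every trial keeps the sketch short; in practice only the few terms of L that involve steps a and a ′ change, so the energy difference could be evaluated locally.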

## 4.1.2. Quantum Annealing

In order to perform the quantum annealing, we introduce the transverse field as the quantum fluctuation effect as shown in Sec. 3. The quantum Hamiltonian is given by

$$\hat { \mathcal { H } } = \frac { 1 } { 4 } \sum _ { a = 1 } ^ { N } \sum _ { i , j } \ell _ { i , j } \hat { \sigma } _ { i , a } ^ { z } \hat { \sigma } _ { j , a + 1 } ^ { z } - \Gamma \sum _ { a = 1 } ^ { N } \sum _ { i = 1 } ^ { N } \hat { \sigma } _ { i , a } ^ { x } ,$$

where the first term corresponds to the length of the path and the second term denotes the transverse field. We can map this quantum Hamiltonian on the N × N two-dimensional lattice onto an N × N × m three-dimensional Ising model, as in the case considered in Sec. 3.1. The effective classical Hamiltonian derived by the Suzuki-Trotter decomposition is written as

$$\begin{aligned} \mathcal{H}_{\text{eff}} &= \frac{1}{4m} \sum_{a=1}^{N} \sum_{i,j} \sum_{k=1}^{m} \ell_{i,j} \sigma_{i,a,k}^{z} \sigma_{j,a+1,k}^{z} \\ &\quad - \frac{1}{\beta} \sum_{a=1}^{N} \sum_{i=1}^{N} \sum_{k=1}^{m} \frac{1}{2} \ln \coth \left( \frac{\beta \Gamma}{m} \right) \sigma_{i,a,k}^{z} \sigma_{i,a,k+1}^{z}, \quad \sigma_{i,a,k}^{z} = \pm 1. \end{aligned} \quad (93)$$

In the quantum annealing procedure, we have to take care of the constraints given by Eqs. (84) and (85), as stated before. Then the simplest way of changing the state is to flip simultaneously four spins on the same layer along the Trotter axis (that is, with the Trotter index k fixed).
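The effective classical Hamiltonian above can be evaluated with the following minimal sketch. The function names are hypothetical, each Trotter layer k is stored as a tour (a list of cities indexed from 0), and the periodic boundary condition along the Trotter axis is an assumption of this example.

```python
import math


def trotter_coupling(beta, gamma, m):
    """Coupling (1 / (2 beta)) * ln coth(beta * gamma / m) between adjacent layers."""
    return math.log(1.0 / math.tanh(beta * gamma / m)) / (2.0 * beta)


def spins_from_tour(tour):
    """Spin layer with sigma[i][a] = +1 if city i is visited at step a, else -1."""
    n = len(tour)
    return [[1 if tour[a] == i else -1 for a in range(n)] for i in range(n)]


def effective_energy(dist, tours, beta, gamma):
    """Effective classical energy for m layers, one tour per Trotter layer."""
    m = len(tours)
    n = len(tours[0])
    layers = [spins_from_tour(t) for t in tours]
    # Intra-layer term: path-length interaction within each layer, scaled by 1 / (4 m).
    intra = sum(dist[i][j] * s[i][a] * s[j][(a + 1) % n]
                for s in layers
                for a in range(n) for i in range(n) for j in range(n)) / (4.0 * m)
    # Inter-layer term: layer k couples to layer k + 1 (periodic, assumed here).
    inter = sum(layers[k][i][a] * layers[(k + 1) % m][i][a]
                for k in range(m) for i in range(n) for a in range(n))
    return intra - trotter_coupling(beta, gamma, m) * inter
```

In this representation, a four-spin flip within one layer amounts to exchanging two entries of a single tour in `tours`, so the constraints hold in every layer by construction. The inter-layer coupling grows as Γ decreases, which favors identical tours on all layers toward the end of the annealing.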

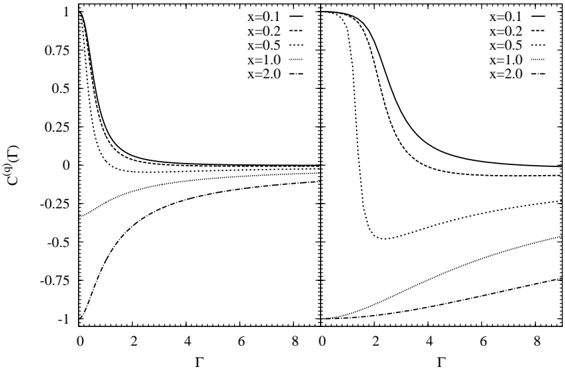

## 4.1.3. Comparison with Simulated Annealing and Quantum Annealing