# Cognitive Science in the era of Artificial Intelligence: A roadmap for reverse-engineering the infant language-learner

## Cognitive Science in the era of Artificial Intelligence: A roadmap for reverse-engineering the infant language-learner

Emmanuel Dupoux

EHESS, ENS, PSL Research University, LSCP, CNRS

emmanuel.dupoux@gmail.com, www.syntheticlearner.net

## Short Abstract

Advances in machine learning and wearable sensors make it possible to envision the construction of systems that would reproduce infant's early language acquisition based on the totality of their sensory input. We propose a series of methodological steps to be taken to make such a reverse engineering approach scientifically useful, and discuss its benefits and shortcomings in relation to experimental and theoretical work in this area.

## Long Abstract

During their first years of life, infants learn the language(s) of their environment at an amazing speed despite large cross cultural variations in amount and complexity of the available language input. Understanding this simple fact still escapes current cognitive and linguistic theories. Recently, spectacular progress in the engineering science, notably, machine learning and wearable technology, o er the promise of revolutionizing the study of cognitive development. Machine learning o ers powerful learning algorithms that can achieve human-like performance on many linguistic tasks. Wearable sensors can capture vast amounts of data, which enable the reconstruction of the sensory experience of infants in their natural environment. The project of 'reverse engineering' language development, i.e., of building an e ective system that mimics infant's achievements appears therefore to be within reach.

Here, we analyze the conditions under which such a project can contribute to our scientific understanding of early language development. We argue that instead of defining a sub-problem or simplifying the data, computational models should address the full complexity of the learning situation, and take as input the raw sensory signals available to infants. This implies that (1) accessible but privacypreserving repositories of home data be setup and widely shared, and (2) models be evaluated at di erent linguistic levels through a benchmark of psycholinguist tests that can be passed by machines and humans alike, (3) linguistically and psychologically plausible learning architectures be scaled up to real data using probabilistic / optimization principles from machine learning. We discuss the feasibility of this approach and present preliminary results.

## Keywords

artificial intelligence, big data, computational modeling, corpus analysis, early language acquisition, infant development, language bootstrapping, machine learning, phonetic learning

## 1 Introduction

In recent years, Artificial Intelligence (AI) has been hitting the headlines with impressive achievements at matching or even beating humans in cognitive tasks (playing go or video games: Mnih et al., 2015; Silver et al., 2016; processing natural language: Ferrucci, 2012; recognizing objects and faces: He, Zhang, Ren, & Sun, 2015; Lu & Tang, 2014) and promising a revolution in manufacturing processes and human society at large. These successes rest both on improvement in computer hardware and on statistical learning techniques, which enable to mimic cognitive functions through the training of machine learning algorithms on large amounts of data. Can AI also revolutionize cognitive science by bringing new insights to the scientific study of human cognition? Can machine learning techniques be used to also shed light on human learning? Within the area of human learning, language has always occupied a special place. It has been at the very core of heated debates and controversies related to the nature / nurture debate (rationalism vs. empiricism, biology vs. culture) and the structure of cognitive processes (connexions vs symbols). Three factors can explain why language is so important within cognitive science. First, the linguistic system is uniquely complex : mastering a language implies mastering a combinatorial sound system (phonetics and phonology), an open ended morphologically structured lexicon, and a compositional syntax and semantics (e.g., Jackendo , 1997). No other animal communication system uses such a complex multilayered organization. On this basis, it has been claimed that humans have evolved (or acquired through a mutation) a dedicated computational architecture to process language (see Chomsky, 1965; Hauser, Chomsky, & Fitch, 2002; Steedman, 2014). Second, the overt manifestations of this system are extremely variable across languages and cultures. Language can be expressed through the oral or manual modality. In the oral modality, some languages use only 3 vowels, other more than 20. Con-

sonants inventories vary from 6 to more than 100. Words can be mostly composed of a single syllable (as in Chinese) or long strings of stems and a xes (as in Turkish). Semantic roles can be identified through fixed positions within constituents, or be identified through functional morphemes, etc. (see Song, 2010, for a typology of language variation). Evidently, infants acquire the relevant variant through learning, not genetic transmission. Third, the human language capacity can be viewed as a finite computational system with the ability to generate a (virtual) infinity of utterances. This turns into a learnability problem for infants: on the basis of finite evidence, they have to induce the (virtual) infinity corresponding to their language. As has been repeatedly discussed since Aristotle, such induction problems do not have a generally valid solution. Therefore, language is simultaneously a human-specific biological trait and a highly variable cultural production, and it poses a di cult learnability problem. Here, we investigate the possibility of using machine learning techniques to shed some light on language acquisition. Specifically, we propose the following approach:

The reverse engineering approach to the study of infant language acquisition consists in constructing computational systems that can, when fed with the same input data, reproduce language acquisition as it is observed in infants.

The idea of using machine learning or AI techniques as a means to study child's language learning is not new (to name a few: Kelley, 1967; Anderson, 1975; Berwick, 1985; Rumelhart & McClelland, 1987; Langley & Carbonell, 1987) although relatively few studies have concentrated on the early phases of language learning (see Brent, 1996b, for a review). What is new, however, is that whereas previous AI approaches were limited to proofs of principle on toy or miniature languages, modern AI techniques have scaled up so much that end-to-end language processing systems working with real inputs are now deployed commercially. This paper examines whether and how such unprecedented change in scale could be put to use to address lingering scientific questions in the field of language development. The structure of the paper is as follows: In Section 2, we present two deep puzzles that modeling approaches should address in order to have a scientific impact: solving the bootstrapping problem, accounting for developmental trajectories. In Section 3, we review past theoretical or modeling work, showing that these puzzles have not, so far, received an adequate answer. In Section 4, we argue that to answer them with reverse engineering, three requirements have to be addressed: modeling should be done on real data, model performance should be compared with that of humans, modeling should be computationally e ective. In Section 5, we argue that within a simplifying framework, these requirements can be reached given current technology, although specific roadblocks need to be lifted. In Section 6 we show that even before these roadblocks are lifted, interesting results can be obtained. In Section 7 we show how the reverse engineering approach can be generalized beyond the simplifying framework presented in Section 5, and we conclude in Section 8.

## 2 Two puzzles of early language development

Most infants spontaneously learn their native(s) language(s) in a matter of a few years of immersion in a linguistic environment. The more we know about this simple fact, the more puzzling it appears. Specifically, we outline two central puzzles that a reverse engineering approach could, in principle help to solve: the bootstrapping problem and developmental trajectories.

## 2.1 The bootstrapping problem

As pointed out in the Introduction, language is a multilayered system comprising several components: phonetics, phonology, morphology, syntax, semantics, pragmatics. The di erent components of language appear interdependent from a learning point of view. For instance, the phoneme inventory of a language is defined through pairs of words that di er minimally in sounds (e.g., "light" vs "right"). This would suggest that to learn phonemes, infants need to first learn words. However, from a processing viewpoint, words are recognized through their phonological constituents (e.g., Cutler, 2012), suggesting that infants should learn phonemes before words. Similar paradoxical co-dependency issues have been noted between other linguistic levels (for instance, syntax and semantics: Pinker, 1987, prosody and syntax: Morgan & Demuth, 1996). In order to learn any one component of the language faculty, many others need to be learned first, creating what has been dubbed a bootstrapping problem. The bootstrapping problem is compounded by the fact that infants do not have to be taught formal linguistics language courses to learn their native language(s). As in other cases of animal communication, infants spontaneously acquire the language(s) of their community by merely being immersed in that community (Pinker, 1994). Experimental and observational studies have revealed that infants start acquiring elements of their language (phonetics, phonology, lexicon, syntax and semantics) even before they can talk (Jusczyk, 1997; Hollich et al., 2000; Werker & Curtin, 2005), and therefore before parents can give them much feedback about their progress into language learning. This suggests that language learning (at least the initial bootstrapping steps) occurs largely without supervisory feedback . 1 Areverse engineering approach has the potential of solving this puzzle by providing a system that can

1 Even in later acquisitions, the nature, universality and e ectiveness of corrective feedback of children's outputs has been debated (see Brown, 1973; Pinker, 1989; Marcus, 1993; Chouinard & Clark, 2003; Saxton, 1997; Clark & Lappin, 2011).

demonstrably bootstrap into language when fed with similar, supervisory poor, inputs.

## 2.2 Accounting for developmental trajectories

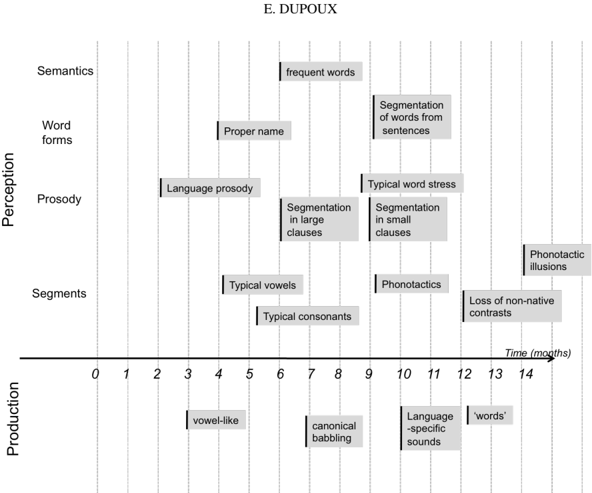

In the last forty years, a large body of empirical work has been collected regarding infant's language achievements during their first years of life. This work has only added more puzzlement. First, given the multi-layered structure of language, one could expect a stage-like developmental tableau where acquisition would proceed as a discrete succession of learning phases organized logically or hierarchically (e.g., building linguistic structure from the low level to the high levels). This is not what is observed (see Figure 1). For instance, infants start di erentiating native from foreign consonants and vowels at 6 months, but continue to fine tune their phonetic categories well after the first year of life (e.g., Sundara, Polka, & Genesee, 2006). However, they start learning about the sequential structure of phonemes (phonotactics, see Jusczyk, Friederici, Wessels, Svenkerud, & Jusczyk, 1993) way before they are done acquiring the phoneme inventory (Werker & Tees, 1984). Even before that, they start acquiring the meaning of a small set of common words (e.g. Bergelson & Swingley, 2012). In other words, instead of a stage-like developmental tableau, the evidence shows that acquisition takes places at all levels more or less simultaneously, in a gradual and largely overlapping fashion. Second, observational studies have revealed considerable variations in the amount of language input to infants across cultures (Shneidman & Goldin-Meadow, 2012) and across socio-economic strata (Hart & Risley, 1995), some of which can exceed an order of magnitude (Weisleder & Fernald, 2013, p. 2146). These variations do impact language achievement as measured by vocabulary size and syntactic complexity (Ho , 2003; Huttenlocher, Waterfall, Vasilyeva, Vevea, & Hedges, 2010; Pan, Rowe, Singer, & Snow, 2005; Rowe & Goldin-Meadow, 2009, among others), but at least for some markers of language achievement, the di erences in outcome are much less extreme than the variations in input. For canonical babbling, for instance, an order of magnitude would mean that some children start to babble at 6 months, and others at 5 years! The observed range is between 6 and 10 months, less than a 1 to 2 ratio. Similarly, reduced range of variations are found for the onset of word production and the onset of word combinations. This suggests a surprising level of resilience to language learning, i.e., some minimal amount of input is su cient to trigger certain landmarks. A reverse engineering approach has the potential of accounting for this otherwise perplexing developmental tableau, and provide quantitative predictions both across linguistic levels (gradual overlapping pattern), and cultural or individual variations in input (resilience).

## 3 Past work

Early language acquisition is primarily an empirical field of research. Much of what we know has been obtained thanks to the patient accumulation of data in two lines of work. The first one is devoted to the collection and manual transcription of parents and infants interactions. A large number of datasets across languages have been collected and organized into repositories that have proved immensely useful to the research community. One prominent example of this is the CHILDES repository (MacWhinney, 2000), which has enabled more than 5000 research papers (according to a google scholar search as of 2016). The other line consists in measuring the linguistic knowledge of infants of various ages across di erent languages through the administration of experimental tests (see Jusczyk, 1997; Bornstein & TamisLeMonda, 2010 for reviews). Besides this impressive activity in data gathering, the field of language development is also actively pursuing theoretical work. Here, we briefly review three major strands related to psycholinguistics, formal linguistics and machine learning, respectively, and argue that even though this work has provided important insights into the acquisition process, it still falls short of accounting for the two puzzles presented in Section 2.

## 3.1 Conceptual frameworks and learning mechanisms

Within developmental psycholinguistics, conceptual frameworks have been proposed to account for key aspects of the developmental trajectories (the competition model: Bates & MacWhinney, 1987; MacWhinney, 1987 ; WRAPSA: Jusczyk, 1997; the emergentist coalition model: Hollich et al., 2000; PRIMIR: Werker & Curtin, 2005; the usage-based theory: Tomasello, 2003; among others). These frameworks present overarching architectures or scenarios that integrate many empirical results. WRAPSA (Jusczyk, 1997) focuses on phonetic learning and lexical segmentation during the first year of life. PRIMIR (Werker & Curtin, 2005) extends WRAPSA by incorporating phonetic and speaker-related categories at an early stage, and meaning and phonemic categories at a later stage. The emergentist coalition model (Hollich et al., 2000) focuses on the attentional, social and linguistic factors that modulate the association between lexical forms and meanings at di erent ages. The competition model (Bates & MacWhinney, 1987; MacWhinney, 1987) and the usage-based theory (Tomasello, 2003) focus on grammar learning; the former is lexicon-based and focuses on mechanisms of competitive learning. The latter is construction-based and focuses on social and pragmatic learning mechanisms. While these conceptual framework are very useful in summarizing and organizing a vast amount of empirical results, and could serve as sources of inspiration for computational models, they are not specific enough to demonstrate that their core

Figure 1 . Sample studies illustrating infant's language development. The left edge of each box is aligned to the earliest age at which the result has been documented.

<details>

<summary>Image 1 Details</summary>

### Visual Description

\n

## Timeline Diagram: Stages of Language Development

### Overview

This diagram presents a timeline illustrating the development of language skills in infants, categorized by perception and production. The x-axis represents time in months (0-14), and the y-axis lists different aspects of language development: Semantics, Word Forms, Prosody, Segments, and Production. Each stage is represented by a grey rectangular bar indicating the approximate age range when that skill emerges.

### Components/Axes

* **X-axis:** Time (months), ranging from 0 to 14, with markings at intervals of 1 month.

* **Y-axis:** Language Development Aspects, including:

* Semantics

* Word Forms

* Prosody

* Segments

* Production

* **Title:** "E. DUPOUX" at the top center.

* **Bars:** Grey rectangular bars representing the approximate time frame for each language skill.

### Detailed Analysis or Content Details

The diagram shows the following stages and their approximate timing:

* **Production:**

* "vowel-like" sounds: approximately 0-4 months.

* "canonical babbling": approximately 6-8 months.

* "Language-specific sounds": approximately 10-12 months.

* "words": approximately 12-14 months.

* **Segments:**

* "Typical vowels": approximately 4-5 months.

* "Typical consonants": approximately 5-6 months.

* "Phonotactics": approximately 10-11 months.

* "Loss of non-native contrasts": approximately 12-14 months.

* "Phonotactic illusions": approximately 13-14 months.

* **Prosody:**

* "Language prosody": approximately 2-4 months.

* "Segmentation in large clauses": approximately 7-9 months.

* "Segmentation in small clauses": approximately 10-11 months.

* "Typical word stress": approximately 11-12 months.

* **Word Forms:**

* "Proper name": approximately 4-5 months.

* "Segmentation of words from sentences": approximately 11-12 months.

* **Semantics:**

* "frequent words": approximately 12-14 months.

### Key Observations

* The diagram suggests a hierarchical development of language skills, starting with basic production of sounds (vowel-like) and progressing to more complex skills like semantics and word segmentation.

* There is overlap between the stages, indicating that language development is not strictly linear.

* The diagram highlights the early emergence of prosodic features (language prosody around 2-4 months) before more complex segmental features (typical vowels around 4-5 months).

* The later stages (12-14 months) show a convergence of skills related to word recognition and meaning.

### Interpretation

This diagram provides a simplified model of language acquisition, likely intended for educational or research purposes. It illustrates the developmental sequence of language skills, emphasizing the interplay between perception and production. The timeline suggests that infants initially focus on producing basic sounds and perceiving prosodic features, gradually developing the ability to segment speech, recognize words, and understand their meanings. The diagram's author, E. Dupoux, likely used this visualization to communicate a specific theoretical framework regarding language development. The diagram does not provide specific data points or statistical analysis, but rather a qualitative overview of the typical stages and their approximate timing. The overlapping bars suggest that these stages are not discrete but rather represent a continuous process of development. The diagram is a useful tool for understanding the general trajectory of language acquisition, but it should be noted that individual children may develop at different rates.

</details>

principles can e ectively solve the language bootstrapping problem. Nor do they provide quantitative predictions about the observed resilience in developmental trajectories and their variations as a function of language input at the individual, linguistic or cultural level. Psycholinguists often supplement conceptual frameworks with propositions for specific learning mechanisms which are tested using an artificial language paradigm. As an example, a mechanism based on the tracking of statistical modes in phonetic space has been proposed to underpin phonetic category learning in infancy. It was tested in infants through the presentation of a simplified language (a continuum of syllables between / da / and / ta / ) where the statistical distribution of acoustic tokens was controlled (Maye, Werker, & Gerken, 2002). It was also modeled computationally using unsupervised clustering algorithms and tested using simplified corpora or synthetic data (Vallabha, McClelland, Pons, Werker, & Amano, 2007; McMurray, Aslin, & Toscano, 2009). A similar double-pronged approach (experimental and modeling evidence) has been conducted for other mechanisms: word segmentation based on transition probability (Sa ran, Aslin, & Newport, 1996; Daland & Pierrehumbert, 2011), word meaning learning based on cross situational statistics (Yu & Smith, 2007; K. Smith, Smith, & Blythe, 2011; Siskind, 1996), semantic role learning based on syntactic cues (Connor, Fisher, & Roth, 2013), etc. Although studies with artificial languages are useful to discover candidate learning algorithms which could be incorporated in a global architecture, the algorithms proposed have only been tested on toy or artificial languages; there is therefore no guarantee that they would actually work when faced with real corpora that are both very large and very noisy. In fact, as discussed in section 6.1, some of these algorithms do not scale up. In addition, it remains to be shown that taken collectively, such learning mechanisms (or scaled up versions) would work synergistically to solve the bootstrapping problem.

## 3.2 Formal linguistic models

Even though much of current theoretical linguistics is devoted to the study of the language competence as it pertains to the human adults, very interesting work has also be conducted in the area of formal models of grammar induction . These models propose algorithms that are provably powerful enough to learn a fragment of grammar given certain assumptions about the input. For instance, Tesar and Smolensky (1998) proposed an algorithm that provided pairs of surface and underlying word forms can learn the phonological grammar (see also Magri, 2015). Similar learnability assumptions and results have been obtained for stress systems (Dresher & Kaye, 1990; Tesar & Smolensky, 2000). For learnability results of syntax, see the review in Clark and Lappin (2011). These models establish important learnability results, and in particular, demonstrate that under certain hypotheses, a particular class of grammar is learnable. What they do not demonstrate however is that these hypotheses are met for infants. In particular, most grammar induction studies assume that infants have an error-free, adult-like symbolic representation of linguistic entities (e.g., phonemes, phonological features, grammatical categories, etc). Yet, perception is certainly not error-free, and it is not clear that infants have adult-like symbols, and if they do, how they acquired them.

In other words, even though these models are more advanced than psycholinguistic models in formally addressing the effectiveness of the proposed learning algorithms, it is not clear that they are solving the same bootstrapping problem than the one faced by infants. In addition, they typically lack a connection with empirical data on developmental trajectories. 2

## 3.3 Machine learning

The idea of using computational modeling to shed light on language acquisition is as old as the field of cognitive science itself, and a complete review would be beyond the scope of this paper. We mention some of the landmarks, separating three learning subproblems: syntax, lexicon, and speech. Computational models of syntax learning in infants can be roughly classified into two strands, one that learns from strings of words alone, and one that additionally uses a conceptual representation of the utterance meaning. The first strand is illustrated by Kelley (1967). The proposed computational model performed hypothesis testing and constructed more and more complex syntactic rules to account for the distribution of words in the input. The input itself was artificial (generated by a context free grammar) and part of speech tags (nouns, verbs, etc.) were provided as side information. Since then, manual tagging has been replaced by automatic tagging using a variety of approaches (see Christodoulopoulos, Goldwater, & Steedman, 2010 for a review), and artificial datasets have been replaced by naturalistic ones (see D'Ulizia, Ferri, & Grifoni, 2011, for a review). This strand views grammar induction as a problem of representing the input corpus with a grammar in the most compact fashion, using both a priori constraints on the shape and complexity of the grammars and a measure of fitness of the grammar to the data (see de Marcken, 1996 for a probabilistic view). The second strand can be traced back to Siklossy (1968), and makes the radically di erent hypothesis that language learning is essentially a translation problem: children are provided with a parallel corpus of speech in an unknown language, and a conceptual representation of the corresponding meaning. The Language Acquisition System (LAS) of Anderson (1975) is a good illustration of this approach. It learns context-free parsers when provided with pairs of representations of meaning (viewed as logical form trees) and sentences (viewed as a string of words, whose meaning are known). Since then, algorithms have been proposed to learn directly the meaning of words (e.g., cross-situational learning, see Siskind, 1996), context-free grammars have been replaced by more powerful ones (e.g. probabilistic Combinatorial Categorical Grammar), and sentence meaning has been replaced by sets of candidate meanings with noise (although still generated from linguistic annotations) (e.g., Kwiatkowski, Goldwater, Zettlemoyer, & Steedman, 2012). Note that all of these models take textual input, and therefore make the (incorrect) assumption that infants are able to represent their input in terms of an error-free segmented string of words.

The problem of word learning itself has been addressed using two main ideas. One main idea is to use distributional properties that distinguish within word and between word phoneme sequences (Harris, 1954; Elman, 1990; Christiansen, Conway, & Curtin, 2005). A second idea, is to simultaneously build a lexicon and segment sentences into words (Olivier, 1968; de Marcken, 1996; Goldwater, 2007). These ideas are now frequently combined (Brent, 1996a; M. Johnson, 2008). In addition, segmentation models have been augmented by jointly learning the lexicon and morphological decomposition (M. Johnson, 2008; Botha & Blunsom, 2013), or tackling phonological variation through the use of a noisy channel model (Elsner, Goldwater, & Eisenstein, 2012). Note that all of these studies assume that speech is represented as an error-free string of adult-like phonemes, an assumption which cannot apply to early language learners. Finally, some studies have addressed language learning from raw speech. These have either concerned the discovery of phoneme-sized units, the discovery of words, or both. Several ideas have been proposed to discover phonemes from the speech signal (self organizing maps: Kohonen, 1988; clustering: Pons, Anguera, & Binefa, 2013; auto-encoders: Badino, Canevari, Fadiga, & Metta, 2014; HMMs: Siu, Gish, Chan, Belfield, & Lowe, 2013; etc.). Regarding words, D. K. Roy and Pentland (2002) proposed a model that learn both to segment continuous speech into words and map them to visual categories (through cross situational learning). This was one of the first models to work from a real speech corpus (parents interacting with their infants in a semi-directed fashion), although the model used the output of a supervised phoneme recognizer. The ACORNS project (Boves, Ten Bosch, & Moore, 2007) used real speech as input to discover candidate words (Ten Bosch & Cranen, 2007, see also Park & Glass, 2008; Muscariello, Gravier, & Bimbot, 2009, etc.), or to learn word-meaning associations (see a review in Räsänen, 2012). Only a small number of papers combined learning of both phonemes and word units (Ying, 2005; Jansen, Thomas, &Hermansky, 2013; Lee, O'Donnell, & Glass, 2015; Thiollière, Dunbar, Synnaeve, Versteegh, & Dupoux, 2015). In sum, machine learning models represent the clearest attempt so far of addressing the full bootstrapping problem. Yet, although one can see a clear progression, from simple models and toy datasets, towards more integrative algorithms and more realistic datasets, there is no single proposition yet that handles the entire speech processing pipeline, i.e., from signal to semantics. In addition, the progression has been very discontinuous across studies, and in our view, hampered by

2 A particular di culty of formal models which lack of a processing component is the observed discrepancies between the developmental trajectories in perception (e.g. early phonotactic learning in 8-month-olds) and production (slow phonotactic learning in one to 3-year-olds).

the complete lack of cumulativity in algorithm, evaluation method and corpora, overall making it impossible to compare the merits of the di erent ideas and register progress. Finally, even though most of these studies mention infants as a source of inspiration of the models, almost none of them try to account for developmental trajectories.

## 3.4 Summing up

Each of the above reviewed approach is evidently valid, has brought a wealth of interesting results and will continue to do so. Our main point is that, in isolation, these approaches do not enable to answer the developmental puzzles outlined in Section 2. They need to be combined, and the proper way to achieve this combination is examined next.

## 4 Four requirements

Here, we examine four requirements about how to conduct reverse engineering in order to answer the two scientific puzzles. They are: using real data, comparing humans and machines, constructing e ective computational models, open-sourcing data, evaluation and models.

## 4.1 Using real data

One of the most serious limitations of past theoretical work is the tendency to focus either on a simplified learning situation, a small corpus, or both, thereby failing to address the language leaning problem in its full complexity. Of course, simplification is the hallmark of the scientific enterprise, but we claim that in the present case, simplifications often result in the learning problem itself being modified beyond recognition. We therefore argue that to address the bootstrapping problem, one has to use real data as input. Formal learning theory provides us with many examples where idealizing assumptions about the learning situation (regarding the input to the learner or the set of target languages to be learned) have extreme consequences on what can be learned or not. For instance, if the environment presents only positive instances of grammatical sentences presented in any possible order, then even simple classes of grammars (e.g., finite state or context free grammars, Gold, 1967) are unlearnable. In contrast, if the environment presents sentences according to processes that can be recursively enumerated (an apparently innocuous requirement), then even the most complex classes of grammars (recursive grammars) 3 become learnable. This result extends to a probabilistic scenario where the input sentences are sampled according to a statistical distribution, constraints about the shape of the distribution radically changes the di culty of the learning problem (see Angluin, 1988). In addition, the presence of side information can make a substantial di erence: providing the syntactic trees along with the phonological form can turn an unlearnable problem into a learnable one (Sakakibara, 1992). The scale of the dataset can also have drastic e ects, even when real data is used. This is illustrated by the history of automatic speech recognition systems. This field started to construct systems aimed at recognizing a small vocabulary for a single speaker (single digits) in the 50's, and nowadays handles multiple speakers with large vocabularies in continuous speech. By moving from small scale to big scale problems the field did not only use bigger models and more powerful machines, but had to build systems based on completely di erent principles (in order of appearance, formant based pattern matching, dynamic programming, statistical modeling, neural networks). Such heavy dependence on the scale and realism of the dataset is even more apparent in with models of learning. For instance, dramatically di erent performances are found when word segmentation algorithms (which attempt to recover word boundaries from continuous speech) are fed with a phoneme transcription or when they are fed with raw speech signals (Jansen, Dupoux, et al., 2013; Ludusan, Versteegh, et al., 2014). Addressing the data scalability problem can be done according to two approaches. The approach followed by formal learning theory consists in starting with simple assumptions and progressively making them more realistic. While perfectly valid, this approach has to face the fact that the class of formal grammars that characterizes human languages is still a matter of debate (e.g., Jäger & Rogers, 2012), and that the way inputs are made available to the children (the caretaker's speech and associated side information) is not formally characterized. As a result the researcher runs the risk of making wrong assumptions and solving a learning problem that is di erent from the one faced by infants in the real world. The second approach, which we promote as "reverse engineering" takes a radical step: instead of relying on formal descriptions of possible inputs, it uses actual, attested, raw data as input. We discuss three important consequences of this proposed solution: qualitative, quantitative, and cross-linguistic. On the qualitative side, the input has to be defined as the total sensory experience of the learner, not a predefined subset nor a pre-formatted linguistic 'channel'. The reason for this is that the linguistic signals emitted by the parents are typically mixed with a variety of non linguistic signals in a culture dependent way. In addition, the physical medium of linguistic signals also vary from culture to culture. In the audio channel for instance, speech sounds are heard by infants mixed with all manners of background noise, music, and non linguistic vocal sounds. Within vocal sounds, click noises are considered non linguistic in many languages, but some languages use them phonologically (Best, McRoberts, & Sithole, 1988). In the visual channel, some amount of linguistic / communicative signals (ges-

3 The problem of unrestricted presentations is that, for each learner, there always exists a 'nemesis', an evil environment that will trick the learner into converging on the wrong grammar (see Clark & Lappin, 2011 for a detailed explanation).

Table 1 Four studies used to estimate infant's speech input

| study | reference | mode of acquisition;age | population |

|---------|-----------------------------------------------------|----------------------------------------|-------------------------------------------------|

| H&R | Hart and Risley (1995) | observer, 1h every month; 12-36 months | urban high, mid & low SES, English |

| SALG | Shneidman, Arroyo, Levine, and Goldin-Meadow (2013) | observer, 1h every month; 12-36 months | urban high SES, English & ru- ral low SES, Maya |

| W&F | Weisleder and Fernald (2013) | daylong recording; 19 months | low SES, Spanish |

| VdW | van de Weijer (2002) | daylong recording; 6-9 months | high SES, Dutch |

Table 2 Estimates of yearly input, in total, and restricted to Child Directed Speech (CDS) , in number of hours and words (millions) per year in four studies (see the references in Table 1) as a function of sociolinguistic group (SES: Socio Economic Status). The numbers between brackets provide the range [min, max] of these numbers across families. t uses a wake time estimate of 9 hours per day. w uses a word duration estimate of 400ms. c uses SALG's estimate of

| | Yearly total | Yearly total | Yearly total | Yearly total | Yearly CDS | Yearly CDS | Yearly CDS | Yearly CDS |

|-----------------|-----------------|-----------------|-----------------|-----------------|-----------------|-----------------|-----------------|-----------------|

| | Hours | Hours | Words (M) | Words (M) | Hours | Hours | Words (M) | Words (M) |

| Urban, high SES | Urban, high SES | Urban, high SES | Urban, high SES | Urban, high SES | Urban, high SES | Urban, high SES | Urban, high SES | Urban, high SES |

| H&R (N = 13) t | 1221 w,c | [578,1987] | 11.0 c | [5.20, 17.9] | 786 w | [372, 1279] | 7.07 | [3.35, 11.5] |

| SALG (N = 6) t | 2023 w,m | [1243, 2858] | 18.2 m | [11.2, 25.7] | 1223 w,m | [853, 1574] | 11.0 m | [7.7, 14.2] |

| VdW (N = 1) | 931 | | 9.28 | | 140 | | 1.39 | |

| Urban, low SES | Urban, low SES | Urban, low SES | Urban, low SES | Urban, low SES | Urban, low SES | Urban, low SES | Urban, low SES | Urban, low SES |

| H&R (N = 6) t | 363 w,d | [136, 558] | 3.26 d | [1.22, 5.02] | 225 w | [84, 346] | 2.02 | [0.76., 3.11] |

| W&F(N = 29) t | 363 w | [52, 1049] | 3.27 | [0.46., 9.44] | 225 w | [32, 650] | 2.03 | [0.29, 5.85] |

| Rural, low SES | Rural, low SES | Rural, low SES | Rural, low SES | Rural, low SES | Rural, low SES | Rural, low SES | Rural, low SES | Rural, low SES |

| SALG (N = 6) t | 503 w,m | [365, 640] | 4.53 m | [3.28, 5.76] | 234 w,m | [132, 322] | 2.10 m | [1.19, 2.90] |

tures, mouth movements) is present in all cultures (Fowler & Dekle, 1991; Goldin-Meadow, 2005), but it becomes the dominant language channel in deaf communities using sign language (Poizner, Klima, & Bellugi, 1987). However, sign language can be used as native language even in hearing children, provided they are raised in mixed hearing / deaf communities (Van Cleve, 2004). Cross-cultural variation makes it impossible to innately specify a fixed way of unmixing these signals or selecting a language channel. It is therefore part of the language learning problem to separate the linguistic signals from the non-linguistic background. Using real inputs, instead of idealized ones, could be said to set up an impossibly di cult task for computational models. In the real world, the linguistic signal is often corrupted or partially masked by non-linguistic signals. Dysfluencies or speech errors at many levels (Fromkin, 1984), as well as individuallevel sources of variability, added to structural ambiguity at all linguistic levels (e.g., homophony: Ke, 2006) may make the learning problem orders of magnitude more di cult than in simplified situations. Yet, real inputs may also bring about potential benefits in the shape of side information. As an example, syntax learning could be helped through the detec- tion of prosodic information present in the signal. Prosodic boundaries may not always be coincidental with syntactic boundaries, but they could provide to the learner useful side information for the purpose of syntax and lexical acquisition (e.g. Christophe, Millotte, Bernal, & Lidz, 2008; Ludusan, Gravier, & Dupoux, 2014). Similarly, semantic information in the form of visually perceived objects or scenes and afferent social signals may help lexical learning (D. K. Roy &Pentland, 2002) and help bootstrap syntactic learning (the semantic bootstrapping hypothesis, see Pinker, 1984). On the quantitative side, it is important that the totality of the input is being considered for the following reasons. First, it sets up boundary conditions for the learning algorithms. Algorithms that require more input than is generally available to infants can be ruled out. As an example, current distributional semantic models use between 3 and 100 billion words to learn vector representations for the meaning of words or short phrases based on adjacent words (Mikolov, Sutskever, Chen, Corrado, & Dean, 2013; Word2vec Google Project Page , 2013). This is between 30 and 1000 times more data than infants are typically exposed to during their first 4 years of life, in fact, more than most people get in a lifetime, and

therefore not plausible as the sole mechanism for meaning learning. Vice versa, an algorithm that would require only 10 On the cross-linguistic side, a successful model of the learner should not demonstrate learning for only one input dataset, but it should learn for any input dataset in any possible human language in any modality (see the equipotentiality criterion in Pinker, 1987). Since, as we argued above, the class of all possible language inputs it still not formally characterized, one could take the approach of sampling from a finite but ever expanding set of existing linguistic communities. An adequate sampling procedure would insure that, statistically speaking, a given computational model is (or is not) able to learn from any possible input. Practically speaking, it may be interesting to sample typologies and sociolinguistic groups in a stratified fashion to avoid over-fitting the learning model to a restricted set of learning situations. To sum up, using real input is the only way to make sure that modelers are addressing the right learning problem. This has significant consequences regarding the size of the dataset that has to be collected : complete sensory coverage over the first 3 or 4 years of life, for a representative sample of children over a representative sample of languages. Before such a dataset is available, of course, it is still interesting to use as proxy a variety of smaller or simplified datasets, provided that one keeps in mind the important caveat that the conclusions may not scale up when put to test with real data.

## 4.2 Evaluating systems through human-machine comparison

For a modeling enterprise of any sort, it is important to specify a success criterion. A lingering limitation of past theoretical work is that too many distinct success criteria have been used. In fact, the diversity is so great that it is nearly impossible to compare the di erent propositions across research fields (and sometimes even within field), and to reach the same standards as cumulative science. For psycholinguistic conceptual frameworks, the primary success criterion is the ability to account for developmental trajectories. Note, however, that because of the verbal nature of these frameworks, it can only be checked at an intuitive and qualitative level. It can then be di cult to validate, refute or compare these frameworks. For linguistic formal learning models, the main focus is the learnability puzzle and is usually defined in terms of learnability in the limit (Gold, 1967): A learner is said to learn a target grammar in the limit, if after some amount of time, his own grammar becomes equivalent to the target grammar. This standard formulation has been criticized as too lax (K. Johnson, 2004). Since there is no time limit on convergence, a learner that needs a million year's worth of data to converge would still be deemed successful. We know that most children converge on an adult grammar in a fixed number of years, which is bounded by puberty. Therefore, our learnability criterion should be stronger and require the system to converge on a grammar after the same amount of input that it takes for children to converge. In addition the standard criterion assumes that one can determine when two grammars are equivalent, which is not always simple. 4 Finally, for the machine learning models that we reviewed, system evaluation was not their strong selling point. Many provided only qualitative evaluations, but for those that did provide a numeric one, they were typically defined in relation to a so called gold standard , i.e. human annotations (like phoneme transcriptions, part of speech annotations, parse trees, etc). The success of the learning algorithm is then measured as a distance between the machine annotation and the gold one. Of course, these evaluations are only valid to the extent that the gold standard reflects the state of the human language competence. This is not necessarily the case for adult-machine comparisons, as linguists may disagree on some of the annotations, and certainly not the case for children-machine comparisons, as the infant's grammar is probably di erent from that of the linguisticallytrained adult. We therefore claim that for the reverse engineering approach, none of these criteria, taken individually, are satisfactory. Prior advocates of the use of machine learning to model language acquisition have proposed a number of ways to combine these criteria. To quote a few, MacWhinney (1978) proposed 9 criteria, Berwick (1985), 9 criteria (di erent ones), Pinker (1987) 6 criteria, Yang (2002) 3 criteria, M. C. Frank, Goldwater, Gri ths, and Tenenbaum (2010) 2 criteria. These can be sorted into conditions about e ective modeling (being able to generate a prediction), about the input (being as realistic as possible), about the end product of learning (being adult-like) and about the plausibility of the computational mechanisms. In our proposed reverse engineering approach, we would like to integrate within a single operational criterion, the cognitive indistinguishability criterion , the insights of the psycholinguistic theories with the quantitative evaluations of the formal and algorithmic models:

A human and a machine are cognitively indistinguishable with respect to a given set of tests when they yield numerically similar results when ran on these tests.

The proposal, therefore, is that, a computational model of language learning is successful, when it yields a system that is cognitively indistinguishable from a human (adult or child) after having been fed with the same input data. Such a success criterion enables both to address the learnability puzzle and to account for developmental trajectories. Note,

4 Two grammars are said to be weakly equivalent if they generate the same utterances. In the case of context free grammars, this is an undecidable problem. More generally, for many learning algorithms (e.g., neural networks), it is not even clear what has been learned, and therefore the criterion cannot be verified.

however, that cognitive indistinguishability is not an absolute criterion but depends on a set of tests. Constructing an agreed upon set of such tests (a cognitive benchmark ) becomes therefore part of the reverse engineering project by integrating tests that linguists and psycholinguists agree upon as being relevant to characterizing grammatical competence in humans. This benchmark can of course be revised as new and more subtle experimental protocols for language competence are discovered and can set the human and machine apart. Here, we present three conditions that such tests must satisfy to achieve our scientific objectives: they should be administrable (to adults, children and computers alike), valid (measure the construct under study as opposed to something else), and reliable (with a good signal to noise ratio). The last two conditions are common in psychometrics and psychophysics (e.g., Gregory, 2004). Test validity refers to whether a test, both theoretically and empirically, is sensitive to the psychological construct (state or process) it is supposed to measure. For instance, in an influential paper, Turing (1950) proposed to test whether machines can 'think' using the so-called imitation game , where it had to persuade a human observer that it was a female human through an online keyboard conversation. The machine succeeds if it fools the observer as often as a human male participant would. This test is evidently not valid, as theoretically, 'thinking' is not a well defined psychological construct, but rather a polysemous folk psychology concept, and empirically, it is rather easy to fool human observers using rather simplistic text manipulation rules (see ELIZA, Weizenbaum, 1966). Fortunately, since the 50's, cognitive psychology has progressed tremendously and can o er a rich set of valid tests for the evaluation of language-related cognitive components (see Section 5.2). Test reliability refers to the signal to noise ratio of the measure. It can be evaluated by rerunning the same tests over the same or di erent participants for humans, or over di erent initial conditions for the machines. Typically, test reliability is not thought to be a real issue for machines, to the extent that many algorithms are deterministic or assumed to be quite stable. Yet, it is important to assess this reliability empirically, for instance, by running the same algorithm over di erent samples of a large corpus. As for humans, test reliability is a very important issue, and even more so, for children and infants. Evidently, we cannot ask that the match between humans and machines be larger that the match within population. Test administrability does not belong to standard psychometrics, but it is especially important in the case both of infants and machines. Human adults have metalinguistic abilities which allow the experimenter to explain to them how to perform a particular test, in simple words. Such a strategy is not directly applicable to human infants nor to machines. In infants, a testing apparatus has to be constructed, i.e., a rather artificial environment whereby everything is controlled so that the response to test stimuli arises naturally and is measured using spontaneous tendencies of the participants (preference methods, habituation methods, etc; see Ho , 2012, for a review). 5 In machines, there is also an issue of administrability. Typically, learning algorithms are not constructed to run linguistic tests, but to learn based on their input. Therefore, they need to be supplemented with particular task interfaces for each of the proposed tests in order to extract a response that would be equivalent to the response generated by humans. 6 In both cases, administering the task has to be made so as not to compromise the test's validity. Biases or knowledge of the desired response has to be removed from the testing apparatus (for the infants) and from the interface (for the machine). To sum up, to evaluate computational models, the reverse engineering approach proposes to build a revisable benchmark of valid and reliable tests measuring the various components of the human language faculty, and that can be administered to humans of various ages and machines alike. Models will be compared on their ability to mimic the results of these tests.

## 4.3 Constructing scalable computational models

As discussed in Section 3, past work in psycholinguistics and formal linguistics was not centered on the task of building e ective systems which would work with real data at scale. In contrast, speech and language engineering systems are typically constructed to perform complex functions like converting speech to text, or conducting a simple question / answer dialogue. Importantly, engineering systems do work impressively well with large scale noisy data. The main design feature of these systems is that even though they are often constructed using components that are similar to the ones envisioned by psycholinguistic and linguistic models (for instance with phonetic, phonological, lexical, and syntactico-semantic components), it is not assumed that each of these levels is errorless. On the contrary, the handling of errors and ambiguity is acknowledged from the ground up, through a statistical or parallel processing architecture. Within such an architecture, multiple interpretations are passed from one level to the next along with their probabilities, enabling the errors and ambiguities to be resolved in a holistic and optimal fashion (for a statistical framework in speech processing, see Jelinek, 1997). Where engineering systems fall short of the reverse engineering objectives, is that they do not care about mimicking the learning process that takes place in infants. Instead, they are constructed as

5 In animals, before tests can be run, an extensive period of training is often necessary, in order for the animal to comply with the protocol. Such procedures are not possible in human infants.

6 A task interface can be viewed as a function which takes as input the internal state of the algorithm generated by the stimuli and delivers a binary or real valued response.

full-blown adult systems, using a substantial amount of expert knowledge regarding the language and the tasks that the systems should perform. Early systems were heavily engineered, with each subcomponent crafted and tuned by hand using expert knowledge. Nowadays, experts only specify a general architecture, and all of the parameters are tuned automatically using numerical optimization techniques run on very large datasets of human annotated speech or text. For instance, a typical state-of-the-art speech recognition component is trained with hours of hand transcribed speech (10000 hours or more), with a large pronunciation dictionary, and a few billion words of text. A language understanding component is trained with a bank of sentences annotated with part of speech and parse trees. All of these expert language resources are unfortunately not available to infants learning their native language(s). In brief, on the one hand, the psycholinguistic and linguistic approaches propose plausible candidate learning mechanisms, which may not scale up. On the other hand, engineering approaches propose scalable processing systems, but even though they are based on statistical learning, they do not use cognitively plausible learning mechanisms. The idea of the reverse engineering approach is, therefore, to use as a source of inspiration the conceptual frameworks and the psychologically validated learning mechanisms, and incorporate them into scalable and noiseresistant processing architectures from speech and language technology, which have to be modified to rely only on the inputs available to infants (i.e. raw sensory data, but no expert annotation). Technically, learning mechanisms that only use raw signals (or sparse and errorful human 'labels') are called unsupervised (or weakly supervised). This class of machine learning problems is unfortunately less well studied and understood than the supervised learning ones (classification, regression, etc). Learning without external labels is obviously more di cult than learning with labels. Humans labels provide simultaneously a target representation that the machine has to compute given its input, and an error function (the difference between the human provided and machine computed labels) that can be optimized using numerical methods in order to reach this objective. With unsupervised problems, everything changes: the machine is only given inputs, and has to construct on its own (so-called latent) representations of the input. This can also be written as an optimization problem, but the error function is defined with respect to the input only (typically, the system's objective is to model its input, for instance, it has to be able to predict future inputs based on past ones). Therefore the problem is much more underdetermined, and it is not clear that the latent representations will succeed in capturing anything useful at all (for humans or for the rest of the system). Before closing, let us discuss briefly one issue which often comes up when engineering systems are used as models of human processing: the issue of biological plausibility . This term means that the computa- tions done in the models should be compatible with what we know about the biological systems that underlie these computations in human infants / adults. This constraint, while reasonable, may be tricky to apply because of the existence of space-time trade-o s, i.e., the possibility of rewriting algorithms that require a lot of compute time and little memory (hence biologically implausible) into algorithms that require less time and more memory (hence biologically plausible). In addition, the computational power of a human brain is currently unknown. Current supercomputers can simulate at a synapse level only a fraction of a brain and several orders of magnitude slower than real time (Kunkel et al., 2014). If this is so, all computational models run in 2016 are still massively underpowered compared to a child's brain. Still, biological plausibility can be invoked to discard at least some of the most unrealistic propositions, and this in two ways. One is through algorithmic complexity . A learning algorithms that requires an exponential amount of memory or compute time as a function of input size will quickly exhaust the computing resources of the universe and can be therefore discarded or replaced by less demanding ones. A second way relates to system complexity at the initial state , i.e., before any learning has taken place, which has to be bounded by what is encoded in the human genome regarding the language faculty. This allows to rule out, for instance, a 100 Apart from these extreme examples, the biological plausibility constraint may not a ect much the modeling approach, which therefore could result in models that some would judge are not neurologically plausible. One way to deal with such criticism is to claim that reverse engineering aims at characterizing the learner at the level of the information and computation that are needed in order to solve the learning problem (Marr & Poggio, 1976). Another way would be to enrich the cognitive benchmark with processing-related or neurologicallyrelated tests that have to be passed both by the models and the humans, as we defined above. To sum up, the best available option for constructing a scalable computational model of language learning comes from machine learning systems of speech and language processing, which needs to be refactored to work without expert supervision (no linguistic labels) in a weakly or unsupervised fashion.

## 4.4 Open sourcing data, benchmarks and models

As any scientific endeavor, the reverse engineering approach proposed adheres to standard in transparency of process and replicability. As was noted above, many of the earlier attempts to bring machine learning to bear to issues of language development were not set up in order to allow cumulative science to proceed. It is therefore central for this proposal to share language datasets, test benchmarks and reference systems in an open source format to enable comparison of di erent models and enable new players to try their own ideas. Open source benchmarks, datasets and models

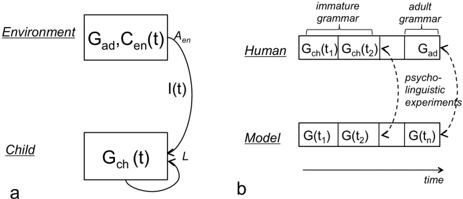

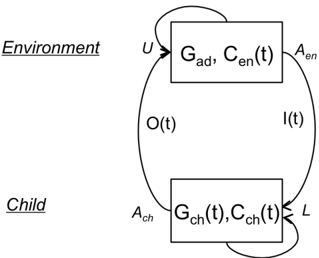

Figure 2 . a. The (simplified) learning situation: The Child's internal state is a grammar Gch ( t ) that can be updated through the learning function L based on input I ( t ). The environment's internal state is a constant adult grammar Gad and a variable context Cen , which produces the input to the child. b. Method to test the empirical adequacy of the model by comparing the outcome of psycholinguistic experiments with that of children and adults.

<details>

<summary>Image 2 Details</summary>

### Visual Description

\n

## Diagram: Language Acquisition Model

### Overview

The image presents two diagrams (labeled 'a' and 'b') illustrating a model of language acquisition. Diagram 'a' depicts the interaction between a child and their environment, while diagram 'b' shows a parallel model using a computational approach. Both diagrams represent a process evolving over time, with the goal of transitioning from an immature grammar to an adult grammar.

### Components/Axes

**Diagram a:**

* **Labels:** "Environment", "Child"

* **Variables within boxes:**

* Environment: `G_ad`, `C_en(t)`, `A_en`

* Child: `G_ch(t)`

* **Arrows & Labels:**

* Arrow from Environment to Child: `L` (likely representing learning)

* Arrow from Child to Environment: `I(t)` (likely representing input/output)

* Arrow from Environment to Child (curved): `A_en`

**Diagram b:**

* **Labels:** "Human", "Model"

* **Labels above Human:** "immature grammar", "adult grammar"

* **Labels above Model:** No labels

* **Variables within boxes:**

* Human: `G_ch(t_1)`, `G_ch(t_2)`, `G_ad`

* Model: `G(t_1)`, `G(t_2)`, `G(t_n)`

* **Arrows & Labels:**

* Arrow from `G_ch(t_2)` to `G_ad` (dashed): "psycho-linguistic experiments"

* Arrow from `G(t_2)` to `G(t_n)` (dashed): "psycho-linguistic experiments"

* **Horizontal Axis:** "time" (indicated by an arrow)

### Detailed Analysis / Content Details

**Diagram a:**

The diagram shows a child interacting with their environment. The environment is characterized by `G_ad` (adult grammar), `C_en(t)` (environmental constraints at time t), and `A_en` (environmental actions). The child possesses `G_ch(t)` (child's grammar at time t). The child receives input `I(t)` from the environment and provides output `L` (learning) back to the environment. The curved arrow `A_en` suggests a feedback loop from the environment influencing the child.

**Diagram b:**

This diagram parallels 'a' with a computational model. The "Human" row represents the development of grammar from an immature state (`G_ch(t_1)`, `G_ch(t_2)`) to an adult state (`G_ad`). The "Model" row represents a similar progression (`G(t_1)`, `G(t_2)`, `G(t_n)`). The dashed arrows labeled "psycho-linguistic experiments" indicate a connection between the human and model development, suggesting that experiments are used to inform or validate the model. The time axis indicates that the grammar evolves over time.

### Key Observations

* Both diagrams represent a similar process of grammatical development.

* The model in diagram 'b' is intended to mimic the human process in diagram 'a'.

* The use of time-dependent variables (`t`, `t_1`, `t_2`, `t_n`) emphasizes the dynamic nature of language acquisition.

* The dashed arrows in diagram 'b' suggest a validation or feedback loop between the model and human data.

* The variables are not defined numerically, so no quantitative analysis is possible.

### Interpretation

The diagrams illustrate a cognitive science approach to modeling language acquisition. Diagram 'a' presents a high-level conceptual framework of a child learning from their environment. Diagram 'b' proposes a computational model that attempts to replicate this process. The "psycho-linguistic experiments" link suggests that the model is grounded in empirical data. The use of variables like `G_ad` and `G_ch` implies that the model focuses on the structure of grammar itself. The diagrams suggest a cyclical process where the child's grammar evolves through interaction with the environment, and the model's grammar evolves through experimentation and validation. The diagrams do not provide specific data or numerical values, but rather a conceptual framework for understanding language acquisition. The use of mathematical notation (`G`, `C`, `I`, `L`) suggests a formal, potentially mathematical, representation of the model. The diagrams are a qualitative representation of a theoretical model.

</details>

are very common in many areas of machine learning, prominently in vision (e.g. the Imagenet dataset http: // www.imagenet.org). This is less the case in speech and language, as many speech resources are protected or proprietary, thereby slowing down progress. Yet, this is changing quickly as open source speech databases are being constructed (for instance, open SLR http: // www.openslr.org for datasets, and kaldi http: // kaldi-asr.org for speech tools).

## 5 Feasibility and Challenges

To address the feasibility of the reverse engineering approach as applied to early language acquisition, we first limit ourselves to the following simplifying framework: the total input available to a particular child provides enough information to acquire the grammar of the language present in the environment. This may seem an innocuous assumption, but it essentially puts us in the open loop situation described in Figure 2), where the environment delivers a fixed curriculum of inputs (utterances and their sensory contexts) and the learner recovers the grammar that generated the utterances. In this framework, the output of the child is not modeled, and the environment does not modify its inputs according to her behavior or inferred internal states. We come back to this simplifying assumption in Section 7. Within this framework, we discuss how the four requirements can be met using current technology. We also discuss possible roadblocks that arise in the process of deploying this technology. We address each of the three pillars of the reverse engineering approach (data collection, computational modeling and empirical validation) in turn.

## 5.1 Data Collection and Privacy

The requirement of using real data as input to the learner raises two issues, one technological and one ethical. At the technological level, it has become relatively easy to record virtually unlimited amounts of good quality audio and video data in children's environments. Perhaps the most ambitious data collection e ort so far has been done within the Speechome project (D. Roy, 2009), where video and audio equipment was installed in each room of an apartment, recording 3 years' worth of data around one infant. Wearable recorders (see for instance the LENA system, Xu et al., 2008) enable recording the infant's sound environment for a full day at a time, even outside the home. These can be supplemented with position sensors to categorize activities (Sangwan, Hansen, Irvin, Crutchfield, & Greenwood, 2015), or Life logging wearable devices to capture images every 30 seconds in order to reconstruct the context of speech interactions (Casillas, 2016). Of course, part of the technological challenge is not only to record raw data, but also to reconstruct the infant's sensory experience, from a first person point of view. In this context, head-mounted cameras can be useful to estimate the infant's head (and therefore average gaze) direction (L. B. Smith, Yu, Yoshida, & Fausey, 2015). Recent progress in 3D reconstruction, especially when using multi-view and / or depth sensors make it possible to go further in sensory reconstruction (e.g., Mustafa, Kim, Guillemaut, & Hilton, 2016) although this has not yet been done with infant data. Finally, even raw sensory data is di cult to use if it not supplemented with reliable linguistic / high level annotations. For instance, a large part of the Speechome corpus's audio track has been transcribed using semi-automatized means, enabling the search for linguistic characteristics of both the input to the child and its output (B. C. Roy, Frank, DeCamp, Miller, & Roy, 2015). Continuous progress in machine learning (speech recognition: Amodei et al., 2015; object recognition: Girshick, Donahue, Darrell, & Malik, 2016; action recognition: Rahmani, Mian, & Shah, 2016; emotion recognition: Kahou et al., 2015) will enable to lower the burden on high-level annotation of large amounts of data. The technological aspect of massive data collection, however, appears relatively simple when compared with the ethical challenges raised by the need to make this data accessible to the research community. There is a tension between the requirement of sharability and open scientific data (see Section 4.4), and the need of protecting individual privacy when it comes to personal and sensitive data. Up to now, the response of the scientific community has been dichotomous: either make everything public (as in the open access repositories like CHILDES, MacWhinney, 2000), or completely close o the corpora to anybody outside the institution that has recorded the data (as in the Riken corpus, Mazuka, Igarashi, & Nishikawa, 2006, or the Speechome corpus D. Roy, 2009). The first strategy sacrifices privacy and is impossible to scale up to dense recordings. The second strategy puts such an obstacle to the scientific use of the corpora that it almost defeats

the purpose of conducting the recording in the first place. A number of alternative strategies are being considered by the research community. The Homebank repository contains raw and transcribed audio, with a restricted case by case access to researchers (VanDam et al., 2016). Databrary has a similar system for video recordings (https: // nyu.databrary.org). Progress in cryptographic techniques would make it possible to envision preserving privacy while enabling more open exploitation of the data. For instance, the raw data could be locked on secure servers, thereby remaining accessible and revokable by the infants' families. Researchers' access would be restricted to anonymized meta-data or aggregate results extracted by automatic annotation algorithms. Di erential privacy techniques enable outside participants to make queries on databases while providing a level of guarantee on the amount of private information that can be extracted (Dwork, 2006). The specifics of such a new type of linguistic data repository would have to be worked out before dense speech and video home recordings can become a mainstream tool for infant research.

## 5.2 Cognitive Benchmarking and Experimental Reliability

Our second requirement, the construction of a cognitive benchmark for language processing, can be considered a done thing in the case of the human adult. The linguistic and psycholinguistic communities have indeed constructed relatively easy-to-administer, valid and reliable tests of the main components of linguistic competence in perception / comprehension (see Table 3). These tests are easy to administer because they are conceptually simple and can be administered to naive participants; most of them are of two kinds: goodness judgments (say whether a sequence of sound, a sentence, or a piece of discourse, is 'acceptable', or 'weird') and matching judgments (say whether two words mean the same thing or whether an utterance is true of a given situation, which can be described in language, picture or other means). The validity of linguistic tests often stems from the fact that they are used within a minimal set design . Such design selects examples where only one linguistic construct is manipulated while every other variable is kept constant (for instance: 'the dog eats the cat' and 'the eats dog the cat' only di er in word order). Regarding test reliability, as it turns out, many linguistic tests are quite reliable, as 97 Given the simplicity of these tasks, it is relatively straightforward to apply them to machines. Indeed, matching judgments between stimulus A and stimulus B can be derived by extracting from the machine the representations triggered by stimulus A and B, and compute a similarity score between these two representations. Goodness judgments are perhaps more tricky; they can easily be done by generative algorithms that assign a probability score , a reconstruction error , or a prediction error to individual stimuli. As seen in Table 3, some of these tests are already being used quite standardly in the evaluation of unsupervised learning systems, in particular, in the evaluation of phonetic and semantic levels) while for others they are less widespread. 7 Of course, in order to evaluate the ability of models to account for developmental trajectories (second puzzle) we must also compare machines with children. This is where the di cult challenge lies. The younger the child, the more di cult it is to construct reliable tests. The replicability crisis (see Ioannidis, 2012; Open Science Collaboration, 2015) has barely hit developmental psychology yet because there are so few replications in the first place (although, see the Many Babies project, M. Frank, 2015). Addressing this challenge would require improving substantially the reliability of the experimental techniques. Existing meta-analyses highlight large di erences in e ect sizes across experimental methods (community-augmented meta-analyses: Tsuji, Bergmann, & Cristia, 2014, metalab: http: // metalab.stanford.edu / ), which point to ways to improve the methods. If the method's signal-to-noise reach a plateau, there is the possibility to increase the number of participants through collaborative testing, as in genome-wide association studies, where low power requires a consortium to run very large number of participants (e.g., around 200,000 participants in Ehret, Munroe, Rice, & al., 2011) or increase the number of data points per child (perhaps using home-based experiments: L. Shultz, 2014, https: // lookit.mit.edu / , or V. Izard, 2016, https: // www.mybabylab.fr). In brief, some of the most fine grained predictions of reverse engineering models may have to wait for progress in experimental methods in infants.

## 5.3 Unsupervised learning of speech and language understanding

The third requirement comes down to 'desupervising' machine learning algorithms, i.e. to have them learn latent linguistic representations instead of force-feeding these representations through expert annotations. Two main, non exclusive, ideas are being explored to address this challenge. One idea could be referred to under the generic name of prior information . It is the idea that one can replace some of the missing labels (expert information) by innate knowledge about the structure of the problem. With strong prior knowledge, some logically impossible induction problems become solvable. 8 The reasoning here is that evolution might

7 Regarding the evaluation of word discovery systems, see the proposition by Ludusan, Versteegh, et al. (2014) but see Pearl and Phillips (2016) for a counter proposal and a discussion in (Dupoux, 2016).

8 One good illustration is the following: can you tell the colors of 1000 balls in an urn by just selecting one ball? The task is impossible without any prior knowledge about the distribution of colors in the urn, but very easy if you know that all the balls have the same color.

Table 3 Example of tasks that could be used for a Cognitive Benchmark.

| Task description in human adults | Linguistic level | Equivalent task in children | Equivalent task in machines |

|-----------------------------------------------------------------------------|--------------------------------------------------|------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------|-----------------------------------------------------------------------------------------------------------------------------------------------------|

| Well-formedness judgement does utterance S sound good? | phonetic, prosody, phonology, morphology, syntax | preferential looking (9-month-olds: Jusczyk, 1997), acceptability judgment (2-year-olds: de Villiers and de Villiers, 1972; Gleitman, Gleit- man, and Shipley, 1972) | reconstruction error (Allen & Seiden- berg, 1999), probability (Hayes & Wilson, 2008), mean or min log probability (Clark, Giorgolo, &Lappin, 2013) |

| Same-Di erent judgment is X the same sound / word / meaning as Y? | phonetic, phonology, semantics | habituation / deshabituation (newborns, 4-month- olds: Eimas, Siqueland, Jusczyk, & Vigorito, 1971; Bertoncini, Bijeljac-Babic, Blumstein, & Mehler, 1987), oddball (3-month-olds: Dehaene- Lambertz, Dehaene, et al., 1994) | AX / ABX discrimination (Carlin, Thomas, Jansen, & Hermansky, 2011; Schatz et al., 2013), cosine similarity (Landauer & Du- mais, 1997) |

| Part-Whole judgment is word X part of sentence S? | phonology, mor- phology | Word spotting (8-month-olds: Jusczyk, Houston, &Newsome, 1999) | spoken web search (Fiscus, Ajot, Garofolo, &Doddingtion, 2007) |

| Reference judgment does word X (in sent S) refer to meaning M? | semantics, prag- matics | intermodal preferential looking (16-month-olds: Golinko , Hirsh-Pasek, Cauley, & Gordon, 1987), picture-word matching (11-month-olds: Thomas, Campos, Shucard, Ramsay, & Shucard, 1981) | picture / video captioning (e.g., Devlin, Gupta, Girshick, Mitchell, & Zitnick, 2015), Winograd's schemas (Levesque, Davis, &Morgenstern, 2011) |

| Truth / Entailment judgment is sent S true (in context C)? | semantics | Truth Judgment Task (3-year-olds: Abrams, Chiarello, Cress, Green, & Ellett, 1978; Lidz & Musolino, 2002) | visual question answering (Antol et al., 2015) |

| Felicity judgement would people say S to meanM (in context C) ? | pragmatics | Ternary reward task (5-year-olds: Katsos & Bishop, 2011), Felicity judgment task (5 years olds: Foppolo, Guasti, &Chierchia, 2012). | ? |

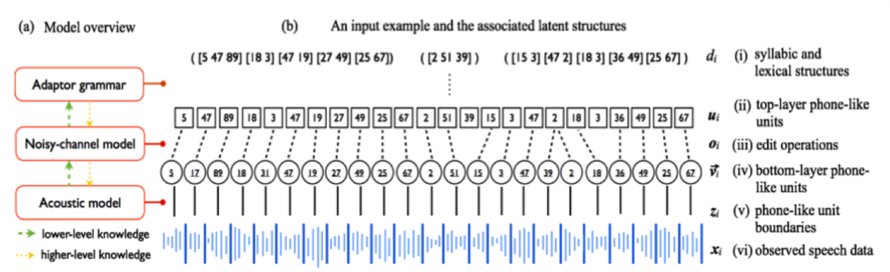

Figure 3 . Outline of a generative architecture learning jointly words and phonemes from raw speech (from Lee, O'Donnell & Glass, 2015).

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Diagram: Model Overview and Input Example with Latent Structures

### Overview

The image presents a diagram illustrating a model overview and an input example with associated latent structures. The diagram consists of two main parts: (a) a block diagram representing the model architecture, and (b) a visualization of an input example and its corresponding latent structures. The diagram uses arrows to indicate the flow of information and the levels of knowledge.

### Components/Axes

The diagram includes the following components:

* **Model Overview (a):**

* Adaptor grammar (rectangle)

* Noisy-channel model (rectangle)

* Acoustic model (rectangle)

* Arrows indicating lower-level knowledge (dashed green arrows)

* Arrows indicating higher-level knowledge (solid yellow arrows)

* **Input Example and Latent Structures (b):**

* Observed speech data (xₜ) - represented as a waveform

* Phone-like unit boundaries (zₜ) - a series of numbers above the waveform

* Lower-layer phone-like units (vₜ) - a series of numbers above zₜ

* Edit operations (θₜ) - a series of numbers above vₜ

* Top-layer phone-like units (μₜ) - a series of numbers above θₜ