## The Inflation Technique for Causal Inference with Latent Variables

Elie Wolfe, 1, ∗ Robert W. Spekkens, 1, † and Tobias Fritz 1, ‡

1 Perimeter Institute for Theoretical Physics, Waterloo, Ontario, Canada, N2L 2Y5

(Dated: July 24, 2019)

The problem of causal inference is to determine if a given probability distribution on observed variables is compatible with some causal structure. The difficult case is when the causal structure includes latent variables. We here introduce the inflation technique for tackling this problem. An inflation of a causal structure is a new causal structure that can contain multiple copies of each of the original variables, but where the ancestry of each copy mirrors that of the original. To every distribution of the observed variables that is compatible with the original causal structure, we assign a family of marginal distributions on certain subsets of the copies that are compatible with the inflated causal structure. It follows that compatibility constraints for the inflation can be translated into compatibility constraints for the original causal structure. Even if the constraints at the level of inflation are weak, such as observable statistical independences implied by disjoint causal ancestry, the translated constraints can be strong. We apply this method to derive new inequalities whose violation by a distribution witnesses that distribution's incompatibility with the causal structure (of which Bell inequalities and Pearl's instrumental inequality are prominent examples). We describe an algorithm for deriving all such inequalities for the original causal structure that follow from ancestral independences in the inflation. For three observed binary variables with pairwise common causes, it yields inequalities that are stronger in at least some aspects than those obtainable by existing methods. We also describe an algorithm that derives a weaker set of inequalities but is more efficient. Finally, we discuss which inflations are such that the inequalities one obtains from them remain valid even for quantum (and post-quantum) generalizations of the notion of a causal model.

∗ ewolfe@perimeterinstitute.ca

† rspekkens@perimeterinstitute.ca

‡ tfritz@perimeterinstitute.ca

## CONTENTS

| I. Introduction | 2 |

|--------------------------------------------------------------------------------------------------------|-----|

| II. Basic Definitions of Causal Models and Compatibility | 5 |

| III. The Inflation Technique for Causal Inference | 6 |

| A. Inflations of a Causal Model | 6 |

| B. Witnessing Incompatibility | 8 |

| C. Deriving Causal Compatibility Inequalities | 12 |

| IV. Systematically Witnessing Incompatibility and Deriving Inequalities | 15 |

| A. Identifying the AI-Expressible Sets | 17 |

| B. The Marginal Problem and its Solution | 19 |

| C. A List of Causal Compatibility Inequalities for the Triangle scenario | 20 |

| D. Causal Compatibility Inequalities via Hardy-type Inferences from Logical Tautologies | 21 |

| V. Further Prospects for the Inflation Technique | 24 |

| A. Appealing to d -Separation Relations in the Inflated Causal Structure beyond Ancestral Independance | 24 |

| B. Imposing Symmetries from Copy-Index-Equivalent Subgraphs of the Inflated Causal Structure | 25 |

| C. Incorporating Nonlinear Constraints | 25 |

| D. Implications of the Inflation Technique for Quantum Physics and Generalized Probabilistic Theories | 26 |

| VI. Conclusions | 29 |

| Acknowledgments | 30 |

| A. Algorithms for Solving the Marginal Constraint Problem | 31 |

| B. Explicit Marginal Description Matrix of the Cut Inflation with Binary Observed Variables | 32 |

| C. Constraints on Marginal Distributions from Copy-Index Equivalence Relations | 33 |

| D. Using the Inflation Technique to Certify a Causal Structure as 'Interesting' | 35 |

| 1. Certifying that Henson-Lal-Pusey's Causal Structure #16 is 'Interesting' | 35 |

| 2. Deriving a Causal Compatibility Inequality for HLP's Causal Structure #16 | 37 |

| 3. Certifying that Henson-Lal-Pusey's Causal Structures #15 and #20 are 'Interesting' | 38 |

| E. The Copy Lemma and Non-Shannon type Entropic Inequalities | 39 |

| F. Causal Compatibility Inequalities for the Triangle Scenario in Machine-Readable Format | 40 |

| G. Recovering the Bell Inequalities from the Inflation Technique | 41 |

## I. INTRODUCTION

Given a joint probability distribution of some observed variables, the problem of causal inference is to determine which hypotheses about the causal mechanism can explain the given distribution. Here, a causal mechanism may comprise both causal relations among the observed variables, as well as causal relations among these and a number of unobserved variables, and among unobserved variables only. Causal inference has applications in all areas of science that use statistical data and for which causal relations are important. Examples include determining the effectiveness of medical treatments, sussing out biological pathways, making data-based social policy decisions, and possibly even in developing strong machine learning algorithms [1-5]. A closely related type of problem is to determine, for a given set of causal relations, the set of all distributions on observed variables that can be generated from them. A special case of both problems is the following decision problem: given a probability distribution and a hypothesis about the causal relations, determine whether the two are compatible: could the given distribution have been generated by the

hypothesized causal relations? This is the problem that we focus on. We develop necessary conditions for a given distribution to be compatible with a given hypothesis about the causal relations.

In the simplest setting, the causal hypothesis consists of a directed acyclic graph (DAG) all of whose nodes correspond to observed variables. In this case, obtaining a verdict on the compatibility of a given distribution with the causal hypothesis is simple: the compatibility holds if and only if the distribution is Markov with respect to the DAG, which is to say that the distribution features all of the conditional independence relations that are implied by d -separation relations among variables in the DAG. The DAGs that are compatible with the given distribution can be determined algorithmically [1]. 1

A significantly more difficult case is when one considers a causal hypothesis which consists of a DAG some of whose nodes correspond to latent (i.e., unobserved) variables, so that the set of observed variables corresponds to a strict subset of the nodes of the DAG. This case occurs, e.g., in situations where one needs to deal with the possible presence of unobserved confounders, and thus is particularly relevant for experimental design in applications. With latent variables, the condition that all of the conditional independence relations among the observed variables that are implied by d -separation relations in the DAG is still a necessary condition for compatibility of a given such distribution with the DAG, but in general it is no longer sufficient, and this is what makes the problem difficult.

Whenever the observed variables in a DAG have finite cardinality 2 , one may also restrict the latent variables in the causal hypothesis to be of finite cardinality as well, without loss of generality [6]. As such, the mathematical problem which one must solve to infer the distributions that are compatible with the hypothesis is a quantifier elimination problem for some finite number of variables, as follows: The probability distributions of the observed variables can all be expressed as functions of the parameters specifying the conditional probabilities of each node given its parents, many of which involve latent variables. If one can eliminate these parameters, then one obtains constraints that refer exclusively to the probability distribution of the observed variables. This is a nonlinear quantifier elimination problem. The Tarski-Seidenberg theorem provides an in principle algorithm for an exact solution, but unfortunately the computational complexity of such quantifier elimination techniques is far too large to be practical, except in particularly simple scenarios [7, 8]. 3 Most uses of such techniques have been in the service of deriving compatibility conditions that are necessary but not sufficient, for both observational [10-13] and interventionist data [14-16].

Historically, the insufficiency of the conditional independence relations for causal inference in the presence of latent variables was first noted by Bell in the context of the hidden variable problem in quantum physics [17]. Bell considered an experiment for which considerations from relativity theory implied a very particular causal structure, and he derived an inequality that any distribution compatible with this structure, and compatible with certain constraints imposed by quantum theory, must satisfy. Bell also showed that this inequality was violated by distributions generated from entangled quantum states with particular choices of incompatible measurements. Later work, by Clauser, Horne, Shimony and Holt (CHSH) derived inequalities without assuming any facts about quantum correlations [18]; this derivation can retrospectively be understood as the first derivation of a constraint arising from the causal structure of the Bell scenario alone [19]. The CHSH inequality was the first example of a compatibility condition that appealed to the strength of the correlations rather than simply the conditional independence relations inherent therein. Since then, many generalizations of the CHSH inequality have been derived for the same sort of causal structure [20]. The idea that such work is best understood as a contribution to the field of causal inference has only recently been put forward [19, 21-23], as has the idea that techniques developed by researchers in the foundations of quantum theory may be usefully adapted to causal inference 4 .

Independently of Bell's work, Pearl later derived the instrumental inequality [31], which provides a necessary condition for the compatibility of a distribution with a causal structure known as the instrumental scenario . This causal structure comes up when considering, for instance, certain kinds of noncompliance in drug trials. More recently, Steudel and Ay [32] derived an inequality which must hold whenever a distribution on n variables is compatible with a causal structure where no set of more than c variables has a common ancestor, for arbitrary n, c ∈ N . More recent work has focused specifically on the simplest nontrivial case, with n = 3 and c = 2, a causal structure that has been called the Triangle scenario [21, 33] (Fig. 1).

Recently, Henson, Lal and Pusey [22] have investigated those causal structures for which merely confirming that a given distribution on observed variables satisfies all of the conditional independence relations implied by d -separation relations does not guarantee that this distribution is compatible with the causal structure. They coined the term interesting for causal structures that have this property. They presented a catalogue of all potentially interesting causal structures having six or fewer nodes in [22, App. E], of which all but three were shown to be indeed interesting. Evans has also sought to generate such a catalogue [34]. The Bell scenario, the Instrumental scenario, and the Triangle

1 As illustrated by the vast amount of literature on the subject, the problem can still be difficult in practice, for example due to a large number of variables in certain applications or due to finite statistics.

2 The cardinality of a variable is the number of possible values it can take.

3 Techniques for finding approximate solutions to nonlinear quantifier elimination may help [9].

4 The current article being another example of the phenomenon [9, 23-30].

scenario all appear in the catalogue, together with many others. Furthermore,they provided numerical evidence and an intuitive argument in favour of the hypothesis that the fraction of causal structures that are interesting increases as the total number of nodes increases. This highlights the need for moving beyond a case-by-case consideration of individual causal structures and for developing techniques for deriving constraints beyond conditional independence relations that can be applied to any interesting causal structure. Shannon-type entropic inequalities are an example of such constraints [21, 25, 32, 33, 35]. They can be derived for a given causal structure with relative ease, via exclusively linear quantifier elimination, since conditional independence relations are linear equations at the level of entropies. They also have the advantage that they apply for any finite cardinality of the observed variables. Recent work has also looked at non-Shannon type inequalities, potentially further strengthening the entropic constraints [26, 36]. However, entropic techniques are still wanting, since the resulting inequalities are often rather weak. For example, they are not sensitive enough to witness some known incompatibilities, in particular for distributions that only arise in quantum but not classical models with a given causal structure [21, 26] 5 .

In order to improve this state of affairs, we here introduce a new technique for deriving necessary conditions for the compatibility of a distribution of observed variables with a given causal structure, which we term the inflation technique . This technique is frequently capable of witnessing incompatibility when many other causal inference techniques fail. For example, in Example 2 of Sec. III B we prove that the tripartite 'W-type' distribution is incompatible with the Triangle scenario, despite the incompatibility being invisible to other causal inference tools such as conditional independence relations, Shannon-type [25, 33, 35] or non-Shanon-type entropic inequalities [26], or covariance matrices [27].

The inflation technique works roughly as follows. For a given causal structure under consideration, one can construct many new causal structures, termed inflations of this causal structure. An inflation duplicates one or more of the nodes of the original causal structure, while mirroring the form of the subgraph describing each node's ancestry. Furthermore, the causal parameters that one adds to the inflated causal structure mirror those of the original causal structure. We show that if marginal distributions on certain subsets of the observed variables in the original causal structure are compatible with the original causal structure, then the same marginal distributions on certain copies of those subsets in the inflated causal structure are compatible with the inflated causal structure (Lemma 4). Similarly, we show that any necessary condition for compatibility of such distributions with the inflated causal structure translates into a necessary condition for compatibility with the original causal structure (Corollary 6). Thus, applying standard techniques for deriving causal compatibility inequalities to the inflated causal structure typically results in new causal compatibility inequalities for the original causal structure. The reader interested in seeing an example of how our technique works may want to take a sneak peak at Sec. III B.

Concretely, we consider causal compatibility inequalities for the inflated causal structure that are obtained as follows. One begins by identifying inequalities for the marginal problem , which is the problem of determining when a given family of marginal distributions on some subsets of variables can arise as marginals of a global joint distribution. One then looks for sets of variables within the inflated causal structure which admit of nontrivial d-separation relations . (We mainly consider sets of variables with disjoint ancestries.) For each such set, one writes down the appropriate factorization of their joint distribution. These factorization conditions are finally substituted into the marginal problem inequalities to obtain causal compatibility inequalities for the inflated causal structure. Although these constraints are extremely weak, the inflation technique turns them into powerful necessary conditions for compatibility with the original causal structure.

We show how to identify all relevant factorization conditions from the structure of the inflated causal structure, and also how to obtain all marginal problem inequalities by enumerating all facets of the associated marginal polytope (Sec. IV B). Translating the resulting causal compatibility inequalities on the inflated causal structure back to the original causal structure, we obtain causal compatibility conditions in the form of nonlinear (polynomial) inequalities. As a concrete example of our technique, we present all the causal compatibility inequalities that can be derived in this manner from a particular inflation of the Triangle scenario (Sec. IV C). In general, we also show how to efficiently obtain a partial set of marginal problem inequalities by enumerating transversals of a certain hypergraph (Sec. IV D).

Besides the entropic techniques discussed above, our method is the first systematic tool for causal inference with latent variables that goes beyond observed conditional independence relations while not assuming any bounds on the cardinality of each latent variable. While our method can be used to systematically generate necessary conditions for compatibility with a given causal structure, we do not know whether the set of inequalities thus generated are also sufficient.

5 It should be noted that non-standard entropic inequalities can be obtained through a fine-graining of the causal scenario, namely by conditioning on the distinct finite possible outcomes of root variables ('settings'), and these types of inequalities have proven somewhat sensitive to quantum-classical separations [33, 37, 38]. Such inequalities are still limited, however, in that they are only applicable to those causal structures which feature observed root nodes. The potential utility of entropic analysis where fine-graining is generalized to non -root observed nodes is currently being explored by E.W. and Rafael Chaves. Jacques Pienaar has also alluded to similar considerations as a possible avenue for further research [36].

We present our technique primarily as a tool for standard causal inference, but we also briefly discuss applications to quantum causal models [22, 23, 39-43] and causal models within generalized probabilistic theories [22] (Sec. V D). In particular, we discuss when our inequalities are necessary conditions for a distribution of observed variables to be compatible with a given causal structure within any generalized probabilistic theory [44, 45] rather than simply within classical probability theory.

## II. BASIC DEFINITIONS OF CAUSAL MODELS AND COMPATIBILITY

A causal model consists of a pair of objects: a causal structure and a family of causal parameters . We define each in turn. First, recall that a directed acyclic graph (DAG) G consists of a finite set of nodes Nodes ( G ) and a set of directed edges Edges ( G ) ⊆ Nodes ( G ) × Nodes ( G ), meaning that an edge is an ordered pair of nodes, such that this directed graph is acylic , which means that there is no way to start and end at the same node by traversing edges forward. In the context of a causal model, each node X ∈ Nodes ( G ) will be equipped with a random variable that we denote by the same letter X . A directed edge X → Y corresponds to the possibility of a direct causal influence from the variable X to the variable Y . In this way, the edges represent causal relations.

Our terminology for the causal relations between the nodes in a DAG is the standard one. The parents of a node X in G are defined as those nodes from which an outgoing edge terminates at X , i.e. Pa G ( X ) = { Y | Y → X } . When the graph G is clear from the context, we omit the subscript. Similarly, the children of a node X are defined as those nodes at which edges originating at X terminate, i.e. Ch G ( X ) = { Y | X → Y } . If X is a set of nodes, then we put Pa G ( X ) := ⋃ X ∈ X Pa G ( X ) and Ch G ( X ) := ⋃ X ∈ X Ch G ( X ). The ancestors of a set of nodes X , denoted An G ( X ), are defined as those nodes which have a directed path to some node in X , including the nodes in X themselves 6 . Equivalently, An ( X ) := ⋃ n ∈ N Pa n ( X ), where Pa n ( X ) is defined inductively via Pa 0 ( X ) := X and Pa n +1 ( X ) := Pa ( Pa n ( X )).

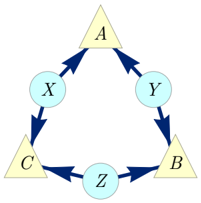

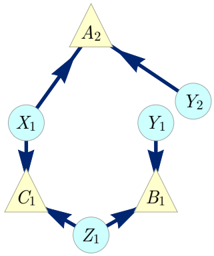

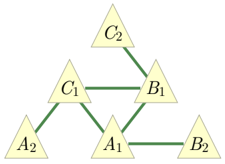

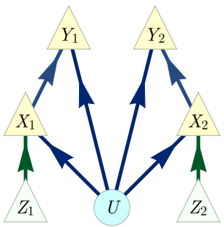

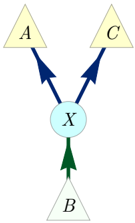

A causal structure is a DAG that incorporates a distinction between two types of nodes: the set of observed nodes, and the set of latent nodes 7 . Following [22], we will depict the observed nodes by triangles and the latent nodes by circles, as in Fig. 1 8 . Henceforth, we will use G to refer to the causal structure rather than just the DAG, so that G includes a specification of which variables are observed, denoted ObservedNodes ( G ), and which are latent, denoted LatentNodes ( G ). Frequently, we will also imagine the causal structure to include a specification of the cardinalities of the observed variables. While these are finite in all of our examples, the inflation technique may apply in the case of continuous variables as well. Although we will not do so in this work, the inflation technique can also be applied in the presence of other types of constraints, e.g. when all variables are assumed to be Gaussian.

The second component of a causal model is a family of causal parameters . The causal parameters specify, for each node X , the conditional probability distribution over the values of the random variable X , given the values of the variables in Pa ( X ). In the case of root nodes, we have Pa ( X ) = ∅ , and the conditional distribution is an unconditioned distribution. We write P Y | X for the conditional distribution of a variable Y given a variable X , while the particular conditional probability of the variable Y taking the value y given that the variable X takes the values x is denoted 9 P Y | X ( y | x ). Therefore, a family of causal parameters has the form

$$\{ P _ { X | \text {$\mathbb{ }P_{G}(X)$} } \colon X \in \text {Nodes} ( G ) \} .$$

Finally, a causal model M consists of a causal structure together with a family of causal parameters,

M

= (

G,

{

P

X

|

Pa

G

(

X

)

:

X

∈

Nodes

(

G

)

}

)

.

A causal model specifies a joint distribution of all variables in the causal structure via

$$P _ { \text {Nodes} ( G ) } = \prod _ { X \in \text {Nodes} ( G ) } P _ { X | \text {Pa} _ { G } ( X ) } ,$$

where ∏ denotes the usual product of functions, so that e.g. ( P Y | X × P Y )( x, y ) = P Y | X ( y | x ) P X ( x ). A distribution P Nodes ( G ) arises in this way if and only if it satisfies the Markov conditions associated to G [1, Sec. 1.2].

6 The inclusion of a node itself within the set of its ancestors is contrary to the colloquial use of the term 'ancestors'. One uses this definition so that any correlation between two variables can always be attributed to a common 'ancestor'. This includes, for instance, the case where one variable is a parent of the other.

7 Pearl [1, Def. 2.3.2] uses the term latent structure when referring to a DAG supplemented by a specification of latent nodes, whereas here that specification is implicit in our term causal structure .

8 Note that this convention differs from that of [39], where triangles represent classical variables and circles represent quantum systems.

9 Although our notation suggests that all variables are either discrete or described by densities, we do not make this assumption. All of our equations can be translated straightforwardly into proper measure-theoretic notation.

The joint distribution of the observed variables is obtained from the joint distribution of all variables by marginalization over the latent variables,

$$P _ { \text {ObservedNodes} ( G ) } = \sum _ { \{ U \colon U \in \text {LatentNodes} ( G ) \} } P _ { \text {Nodes} ( G ) } ,$$

where ∑ U denotes marginalization over the (latent) variable U , so that ( ∑ U P UV )( v ) := ∑ u P UV ( uv ).

Definition 1. A given distribution P ObservedNodes ( G ) is compatible with a given causal structure G if there is some choice of the causal parameters that yields P ObservedNodes ( G ) via Eqs. (2,3). A given family of distributions on a family of subsets of observed variables is compatible with a given causal structure if and only if there exists some P ObservedNodes ( G ) such that both

1. P ObservedNodes ( G ) is compatible with the causal structure, and

2. P ObservedNodes ( G ) yields the given family as marginals.

## III. THE INFLATION TECHNIQUE FOR CAUSAL INFERENCE

## A. Inflations of a Causal Model

We now introduce the notion of an inflation of a causal model . If a causal model specifies a causal structure G , then an inflation of this model specifies a new causal structure, G ′ , which we refer to as an inflation of G . For a given causal structure G , there are many causal structures G ′ constituting an inflation of G . We denote the set of such causal structures Inflations ( G ). The particular choice of G ′ ∈ Inflations ( G ) then determines how to map a causal model M on G into a causal model M ′ on G ′ , since the family of causal parameters of M ′ will be determined by a function M ′ = Inflation G → G ′ ( M ) that we define below. We begin by defining when a causal structure G ′ is an inflation of G , building on some preliminary definitions.

For any subset of nodes X ⊆ Nodes ( G ), we denote the induced subgraph on X by SubDAG G ( X ). It consists of the nodes X and those edges of G which have both endpoints in X . Of special importance to us is the ancestral subgraph AnSubDAG G ( X ), which is the subgraph induced by the ancestry of X , AnSubDAG G ( X ) := SubDAG G ( An G ( X )).

In an inflated causal structure G ′ , every node is also labelled by a node of G . That is, every node of the inflated causal structure G ′ is a copy of some node of the original causal structure G , and the copies of a node X of G in G ′ are denoted X 1 , . . . , X k . The subscript that indexes the copies is termed the copy-index . A copy is classified as observed or latent according to the classification of the original. Similarly, any constraints on cardinality or other types of constraints such as Gaussianity are also inherited from the original. When two objects (e.g. nodes, sets of nodes, causal structures, etc. . . ) are the same up to copy-indices, then we use ∼ to indicate this, as in X i ∼ X j ∼ X . In particular, X ∼ X ′ for sets of nodes X ⊆ Nodes ( G ) and X ′ ⊆ Nodes ( G ′ ) if and only if X ′ contains exactly one copy of every node in X . Similarly, SubDAG G ′ ( X ′ ) ∼ SubDAG G ( X ) means that in addition to X ∼ X ′ , an edge is present between two nodes in X ′ if and only if it is present between the two associated nodes in X .

In order to be an inflation, G ′ must locally mirror the causal structure of G :

Definition 2. The causal structure G ′ is said to be an inflation of G , that is, G ′ ∈ Inflations ( G ) , if and only if for every V i ∈ ObservedNodes ( G ′ ) , the ancestral subgraph of V i in G ′ is equivalent, under removal of the copy-index, to the ancestral subgraph of V in G ,

$$G ^ { \prime } \in \text {Inflations} ( G ) \quad \text {iff} \quad \forall V _ { i } \in \text {ObservedNodes} ( G ^ { \prime } ) \colon \, A n \text {SubDAG} _ { G ^ { \prime } } ( V _ { i } ) \sim \text {AnSubDAG} _ { G } ( V ) .$$

Equivalently, the condition can be restated wholly in terms of local causal relationships, i.e.

$$G ^ { \prime } \in \text {Inflations} ( G ) \quad \text {iff} \quad \forall X _ { i } \in \text {Nodes} ( G ^ { \prime } ) \colon \, \text {Pa} _ { G ^ { \prime } } ( X _ { i } ) \sim \text {Pa} _ { G } ( X ) .$$

In particular, this means that an inflation is a fibration of graphs [46], although there are fibrations that are not inflations.

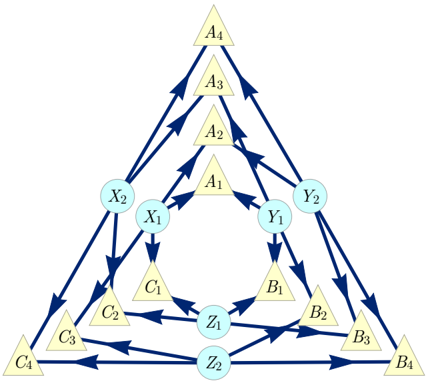

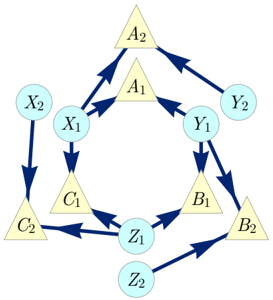

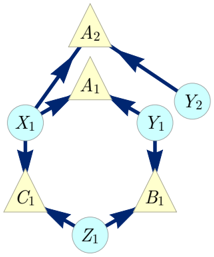

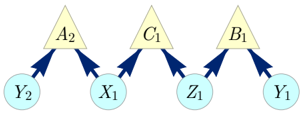

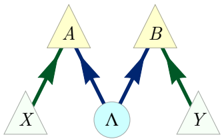

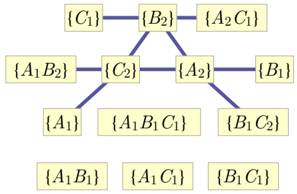

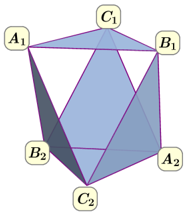

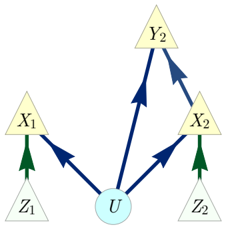

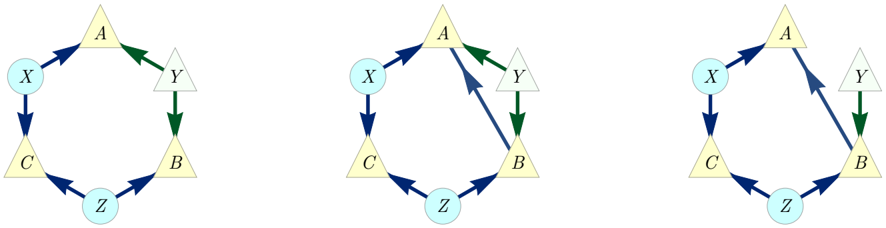

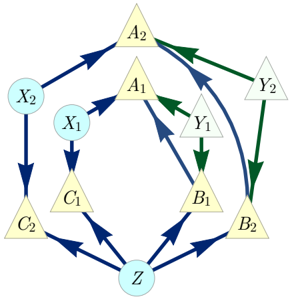

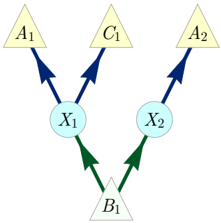

To illustrate the notion of inflation, we consider the causal structure of Fig. 1, which is called the Triangle scenario (for obvious reasons) and which has been studied recently by a number of authors [22 (Fig. E#8), 19 (Fig. 18b), 21 (Fig. 3), 33 (Fig. 6a), 40 (Fig. 1a), 47 (Fig. 8), 32 (Fig. 1b), 25 (Fig. 4b)]. Different inflations of the Triangle scenario are depicted in Figs. 2 to 6, which will be referred to as the Web , Spiral , Capped , and Cut inflation, respectively.

We now define the function Inflation G → G ′ , that is, we specify how causal parameters are defined for a given inflated causal structure in terms of causal parameters on the original causal structure.

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Diagram: Cyclic Relationship

### Overview

The image depicts a cyclic diagram showing relationships between six elements labeled A, B, C, X, Y, and Z. The diagram illustrates a circular flow or interaction between these elements, with directional arrows indicating the direction of the relationship.

### Components/Axes

* **Shapes:** The diagram uses two shapes: triangles and circles.

* Triangles are colored light yellow and labeled A, B, and C.

* Circles are colored light blue and labeled X, Y, and Z.

* **Arrows:** Dark blue arrows indicate the direction of the relationship between the elements.

* **Arrangement:** The elements are arranged in a circular pattern. The triangles (A, B, C) form the vertices of an equilateral triangle, and the circles (X, Y, Z) are positioned between the triangles.

### Detailed Analysis

* **Relationships:**

* X points to C.

* C points to Z.

* Z points to B.

* B points to Y.

* Y points to A.

* A points to X.

### Key Observations

The diagram shows a closed-loop relationship where each element influences the next in a specific sequence. The triangles and circles alternate in the cycle.

### Interpretation

The diagram illustrates a cyclic process or dependency between the elements A, B, C, X, Y, and Z. The arrows indicate the direction of influence or flow. This type of diagram is often used to represent feedback loops, circular dependencies, or cyclical processes in various systems. The alternating shapes (triangles and circles) might represent different types of entities or stages within the cycle.

</details>

FIG. 1. The Triangle scenario.

FIG. 2. The Web inflation of the Triangle scenario where each latent node has been duplicated and each observed node has been quadrupled. The four copies of each observed node correspond to the four possible choices of parentage given the pair of copies of each latent parent of the observed node.

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Diagram: Network Flow

### Overview

The image is a network diagram depicting relationships and flow between different nodes. The nodes are labeled with letters (A, B, C, X, Y, Z) and numbers (1-4). The connections between the nodes are represented by blue arrows, indicating the direction of flow. The diagram is arranged in a roughly triangular shape.

### Components/Axes

* **Nodes:** The nodes are represented by either triangles (pale yellow fill) or circles (light blue fill).

* Triangles: A1, A2, A3, A4, B1, B2, B3, B4, C1, C2, C3, C4

* Circles: X1, X2, Y1, Y2, Z1, Z2

* **Connections:** The connections are represented by blue arrows, indicating the direction of flow between the nodes.

* **Layout:** The diagram is arranged in a triangular shape, with the 'A' nodes at the top, 'B' nodes at the bottom-right, and 'C' nodes at the bottom-left. The 'X', 'Y', and 'Z' nodes are positioned within the triangle.

### Detailed Analysis or ### Content Details

* **Top (A Nodes):**

* A1 is connected to X1, Y1, A2.

* A2 is connected to X2, Y2, A3.

* A3 is connected to X2, Y2, A4.

* A4 is connected to X2, Y2.

* **Bottom-Left (C Nodes):**

* C1 is connected to X1, Z1.

* C2 is connected to X2, Z1.

* C3 is connected to X2, Z2.

* C4 is connected to X2, Z2.

* **Bottom-Right (B Nodes):**

* B1 is connected to Y1, Z1.

* B2 is connected to Y2, Z1.

* B3 is connected to Y2, Z2.

* B4 is connected to Y2, Z2.

* **Inner Nodes (X, Y, Z):**

* X1 is connected to C1, Z1.

* X2 is connected to C2, C3, C4, Z2.

* Y1 is connected to B1, Z1.

* Y2 is connected to B2, B3, B4, Z2.

* Z1 is connected to Z2.

### Key Observations

* The 'A' nodes form a hierarchy, with flow from A1 to A4.

* The 'X' and 'Y' nodes act as intermediaries between the 'A' nodes and the 'C' and 'B' nodes, respectively.

* The 'Z' nodes appear to be the final destination of the flow, with Z2 being the ultimate sink.

* There is a cyclical pattern in the center of the diagram, involving A1, X1, Y1, and Z1.

### Interpretation

The diagram represents a network flow model, where the nodes represent different entities or states, and the arrows represent the flow of information or resources between them. The hierarchical structure of the 'A' nodes suggests a process with multiple stages. The 'X', 'Y', and 'Z' nodes act as intermediaries or processing units. The cyclical pattern in the center suggests a feedback loop or iterative process. The overall structure of the diagram implies a complex system with interconnected components and a defined flow of information or resources.

</details>

FIG. 3. The Spiral inflation of the Triangle scenario. Notably, this causal structure is the ancestral subgraph of the set { A 1 A 2 B 1 B 2 C 1 C 2 } in the Web inflation (Fig. 2).

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Diagram: Directed Graph with Labeled Nodes

### Overview

The image depicts a directed graph consisting of triangular and circular nodes, interconnected by arrows indicating the direction of relationships. The nodes are labeled with letters and numerical subscripts.

### Components/Axes

* **Nodes:**

* Triangular nodes: A1, A2, B1, B2, C1, C2. These are colored light yellow.

* Circular nodes: X1, X2, Y1, Y2, Z1, Z2. These are colored light blue.

* **Edges:** Arrows indicate the direction of the relationship between nodes. The arrows are dark blue.

### Detailed Analysis

The graph shows the following connections:

* A1: Connected to X1, Y1, and A2.

* A2: Connected to Y2 and B2.

* B1: Connected to Y1 and Z1.

* B2: Connected to Z2.

* C1: Connected to X1 and Z1.

* C2: Connected to X2.

* X1: Connected to C1.

* X2: Connected to C2.

* Y1: Connected to B1.

* Y2: Connected to A1.

* Z1: Connected to C2 and B2.

* Z2: Connected to Z1.

### Key Observations

* The graph has a cyclical structure involving A1, X1, C1, Z1, B1, and Y1.

* Nodes A2, B2, C2, X2, Y2, and Z2 appear to be peripheral, with fewer connections.

* Triangular nodes are connected to both triangular and circular nodes, while circular nodes primarily connect to triangular nodes.

### Interpretation

The diagram represents a network of relationships between different entities, potentially representing a system where the triangular nodes (A, B, C) and circular nodes (X, Y, Z) have distinct roles. The arrows indicate the direction of influence or flow between these entities. The cyclical structure suggests a feedback loop or recurring process within the system. The peripheral nodes (A2, B2, C2, X2, Y2, Z2) might represent inputs or outputs to the core cyclical process. The diagram could be used to model various systems, such as social networks, biological pathways, or economic models.

</details>

Definition 3. Consider causal models M and M ′ where DAG ( M ) = G and DAG ( M ′ ) = G ′ , where G ′ is an inflation of G . Then M ′ is said to be the G → G ′ inflation of M , that is, M ′ = Inflation G → G ′ ( M ) , if and only if for every node X i in G ′ , the manner in which X i depends causally on its parents within G ′ is the same as the manner in which X depends causally on its parents within G . Noting that X i ∼ X and that Pa G ′ ( X i ) ∼ Pa G ( X ) by Eq. (5) , one can formalize this condition as:

$$\forall X _ { i } \in \text {Nodes} ( G ^ { \prime } ) \, \colon \, P _ { X _ { i } | \text {$p_{a}_{G^{\prime}}(X_{i})}$} = P _ { X | \text {$p_{a}_{G}(X)$} } .$$

For a given triple G , G ′ , and M , this definition specifies a unique inflation model M ′ , resulting in a well-defined function Inflation G → G ′ .

To sum up, the inflation of a causal model is a new causal model where (i) each variable in the original causal structure may have counterparts in the inflated causal structure with ancestral subgraphs mirroring those of the

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Diagram: Causal Diagram

### Overview

The image is a causal diagram showing relationships between variables. The diagram consists of nodes represented by triangles and circles, connected by directed edges (arrows) indicating causal influences.

### Components/Axes

* **Nodes:**

* Triangles: Represent variables A2, A1, C1, and B1.

* Circles: Represent variables X1, Y1, Y2, and Z1.

* **Edges:** Directed arrows indicating the direction of causal influence.

### Detailed Analysis or ### Content Details

The diagram shows the following relationships:

* A2 influences X1 and Y2.

* A2 influences A1.

* A1 influences X1 and Y1.

* X1 influences C1.

* Y1 influences B1.

* C1 influences Z1.

* B1 influences Z1.

### Key Observations

* The diagram forms a cyclical structure.

* A2 appears to be a root node, influencing multiple other nodes.

* Z1 is influenced by both C1 and B1.

### Interpretation

The diagram represents a causal model where the variables are interconnected. The arrows indicate the direction of influence, suggesting how changes in one variable might affect others. The cyclical structure implies feedback loops or complex interactions between the variables. The diagram could be used to analyze the potential effects of interventions or to understand the underlying mechanisms driving the system.

</details>

FIG. 4. The Capped inflation of the Triangle scenario; notably also the ancestral subgraph of the set { A 1 A 2 B 1 C 1 } in the Spiral inflation (Fig. 3).

FIG. 5. The Cut inflation of the Triangle scenario; notably also the ancestral subgraph of the set { A 2 B 1 C 1 } in the Capped inflation (Fig. 4). Unlike the other examples, this inflation does not contain the Triangle scenario as a subgraph.

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Diagram: Directed Graph

### Overview

The image presents a directed graph consisting of nodes represented by triangles and circles, connected by arrows indicating the direction of relationships between them. The nodes are labeled with letters and numerical subscripts.

### Components/Axes

* **Nodes:**

* Triangles: Labeled as A2, C1, and B1. These are colored light yellow.

* Circles: Labeled as X1, Y2, Y1, and Z1. These are colored light blue.

* **Edges:**

* Arrows: Dark blue arrows indicate the direction of the relationships between the nodes.

### Detailed Analysis

* **Node A2 (Triangle):** Located at the top of the diagram.

* Has an incoming edge from X1.

* Has an outgoing edge to Y2.

* **Node X1 (Circle):** Located on the left side of the diagram.

* Has an outgoing edge to C1.

* Has an incoming edge from A2.

* **Node C1 (Triangle):** Located at the bottom-left of the diagram.

* Has an outgoing edge to Z1.

* Has an incoming edge from X1.

* **Node Z1 (Circle):** Located at the bottom center of the diagram.

* Has incoming edges from C1 and B1.

* **Node B1 (Triangle):** Located at the bottom-right of the diagram.

* Has an outgoing edge to Z1.

* Has an incoming edge from Y1.

* **Node Y1 (Circle):** Located on the right side of the diagram.

* Has an outgoing edge to B1.

* **Node Y2 (Circle):** Located at the top-right of the diagram.

* Has an incoming edge from A2.

### Key Observations

* The graph contains a cycle involving nodes A2, X1, C1, Z1, B1, and Y1.

* Node Y2 is only connected to A2 and is not part of the main cycle.

### Interpretation

The diagram represents a system of relationships between different entities, where the arrows indicate the direction of influence or flow. The cycle suggests a feedback loop or interdependence among the nodes involved. Node Y2 appears to be an output or consequence of A2, without influencing the rest of the system directly. The use of triangles and circles might indicate different types of entities or states within the system. Without further context, the specific meaning of each node and the nature of the relationships remain abstract.

</details>

FIG. 6. A different depiction of the Cut inflation of Fig. 5.

<details>

<summary>Image 6 Details</summary>

### Visual Description

## Diagram: Directed Acyclic Graph

### Overview

The image depicts a directed acyclic graph (DAG) consisting of nodes represented by triangles and circles, connected by directed edges (arrows). The graph shows dependencies between variables, with arrows indicating the direction of influence.

### Components/Axes

* **Nodes:**

* Triangles: Labeled as A2, C1, and B1. These are positioned at the top of the diagram. The triangles are light yellow.

* Circles: Labeled as Y2, X1, Z1, and Y1. These are positioned at the bottom of the diagram. The circles are light blue.

* **Edges:**

* Directed arrows: Dark blue arrows indicate the direction of influence from the circular nodes to the triangular nodes.

### Detailed Analysis

* **Node A2:** Has incoming edges from nodes Y2 and X1.

* **Node C1:** Has incoming edges from nodes X1 and Z1.

* **Node B1:** Has incoming edges from nodes Z1 and Y1.

* **Node Y2:** Has outgoing edge to node A2.

* **Node X1:** Has outgoing edges to nodes A2 and C1.

* **Node Z1:** Has outgoing edges to nodes C1 and B1.

* **Node Y1:** Has outgoing edge to node B1.

### Key Observations

* The graph is acyclic, meaning there are no directed cycles.

* Each triangular node has two incoming edges from circular nodes.

* The circular nodes have only outgoing edges.

### Interpretation

The diagram represents a causal model where the circular nodes (Y2, X1, Z1, Y1) are independent variables or causes, and the triangular nodes (A2, C1, B1) are dependent variables or effects. The arrows indicate the direction of causality. For example, the value of A2 is influenced by the values of Y2 and X1. This type of graph is commonly used in Bayesian networks and other probabilistic graphical models to represent dependencies between variables.

</details>

originals, and (ii) the manner in which a variable depends causally on its parents in the inflated causal structure is given by the manner in which its counterpart in the original causal structure depends causally on its parents. The operation of modifying a DAG and equipping the modified version with conditional probability distributions that mirror those of the original also appears in the do calculus and twin networks of Pearl [1], and moreover bears some resemblance to the adhesivity technique used in deriving non-Shannon-type entropic inequalities (see also Appendix E).

We are now in a position to describe the key property of the inflation of a causal model, the one that makes it useful for causal inference. With notation as in Definition 3, let P X and P X ′ denote marginal distributions on some X ⊆ Nodes ( G ) and X ′ ⊆ Nodes ( G ′ ), respectively. Then

$$\text {if} \quad X ^ { \prime } \sim X \text { and AnSubDAG_{G^{\prime}}(X)^{2} \sim AnSubDAG_{G}(X)} , \quad \text {then} \quad P _ { X ^ { \prime } } = P _ { X } .$$

This follows from the fact that the distributions on X ′ and X depend only on their ancestral subgraphs and the parameters defined thereon, which by the definition of inflation are the same for X ′ and for X . It is useful to have a name for those sets of observed nodes in G ′ which satisfy the antecedent of Eq. (7), that is, for which one can find a copy-index-equivalent set in the original causal structure G with a copy-index-equivalent ancestral subgraph. We call such subsets of the observed nodes of G ′ injectable sets ,

$$V ^ { \prime } & \in \text {InjectableSets} ( G ^ { \prime } ) \\ \text {iff} & \quad \exists V \subseteq \text {ObservedNodes} ( G ) \ \colon \ V ^ { \prime } \sim V \text { and AnSubDAG$_{G}$} ^ { \prime } ( V ^ { \prime } ) \sim \text {AnSubDAG} _ { G } ( V ) .$$

Similarly, those sets of observed nodes in the original causal structure G which satisfy the antecedent of Eq. (7), that is, for which one can find a corresponding set in the inflated causal structure G ′ with a copy-index-equivalent ancestral subgraph, we describe as images of the injectable sets under the dropping of copy-indices,

$$V \in & \text {ImagesInjectableSets} ( G ) \\ \text {iff} \quad & \exists V ^ { \prime } \subseteq \text {ObservedNodes} ( G ^ { \prime } ) \ \colon \ V ^ { \prime } \sim V \text { and AnSubDAG$_{G}$} ^ { \prime } ( V ^ { \prime } ) \sim \text {AnSubDAG} _ { G } ( V ) .$$

Clearly, V ∈ ImagesInjectableSets ( G ) iff ∃ V ′ ⊆ InjectableSets ( G ′ ) such that V ∼ V ′ .

For example in the Spiral inflation of the Triangle scenario depicted in Fig. 3, the set { A 1 B 1 C 1 } is injectable because its ancestral subgraph is equivalent up to copy-indices to the ancestral subgraph of { ABC } in the original causal structure, and the set { A 2 C 1 } is injectable because its ancestral subgraph is equivalent to that of { AC } in the original causal structure.

A set of nodes in the inflated causal structure can only be injectable if it contains at most one copy of any node from the original causal structure. More strongly, it can only be injectable if its ancestral subgraph contains at most one copy of any observed or latent node from the original causal structure. Thus, in Fig. 3, { A 1 A 2 C 1 } is not injectable because it contains two copies of A , and { A 2 B 1 C 1 } is not injectable because its ancestral subgraph contains two copies of Y .

We can now express Eq. (7) in the language of injectable sets,

$$P _ { V ^ { \prime } } = P _ { V } \text {\quad if\, } V ^ { \prime } \sim V \text {\ and\ } V ^ { \prime } \in \text {InjectableSets} ( G ^ { \prime } ) .$$

In the example of Fig. 3, injectability of the sets { A 1 B 1 C 1 } and { A 2 C 1 } thus implies that the marginals on each of these are equal to the marginals on their counterparts, { ABC } and { AC } , in the original causal model, so that P A 1 B 1 C 1 = P ABC and P A 2 C 1 = P AC .

## B. Witnessing Incompatibility

Finally, we can explain why inflation is relevant for deciding whether a distribution is compatible with a causal structure. For a distribution P ObservedNodes ( G ) to be compatible with G , there must be a causal model M that yields it. Per Definition 1, given a P ObservedNodes ( G ) compatible with G , the family of marginals of P ObservedNodes ( G ) on the images of the injectable sets of observed variables in G , { P V : V ∈ ImagesInjectableSets ( G ) } , are also said to be compatible with G . Looking at the inflation model M ′ = Inflation G → G ′ ( M ), Eq. (10) implies that the family of distributions on the injectable sets given by { P V ′ : V ′ ∈ InjectableSets ( G ′ ) } - where P V ′ = P V for V ′ ∼ V - is compatible with G ′ .

The same considerations apply for any family of distributions such that each set of variables in the family corresponds to an injectable set (i.e., when the family of distributions is associated with an incomplete collection of injectable sets.) Formally,

Lemma 4. Let the causal structure G ′ be an inflation of G . Let S ′ ⊆ InjectableSets ( G ′ ) be a collection of injectable sets, and let S ⊆ ImagesInjectableSets ( G ) be the images of this collection under the dropping of copy-indices. If a distribution P ObservedNodes ( G ) is compatible with G , then the family of distributions { P V : V ∈ S } is compatible with G per Definition 1. Furthermore the corresponding family of distributions { P V ′ : V ′ ∈ S ′ } , defined via P V ′ = P V for V ′ ∼ V , must be compatible with G ′ .

We have thereby related a question about compatibility with the original causal structure to one about compatibility with the inflated causal structure. If one can show that the new compatibility question on G ′ is answered in the negative, then it follows that the original compatibility question on G is answered in the negative as well. Some simple examples serve to illustrate the idea.

## Example 1 Incompatibility of perfect three-way correlation with the Triangle scenario

Consider the following causal inference problem. We are given a joint distribution of three binary variables, P ABC , where the marginal on each variable is uniform and the three are perfectly correlated,

$$P _ { A B C } = \frac { [ 0 0 0 ] + [ 1 1 1 ] } { 2 } , \quad i . e . , \quad P _ { A B C } ( a b c ) = \begin{cases} \frac { 1 } { 2 } & \text {if $a=b=c$} , \\ 0 & \text {otherwise,} \end{cases}$$

and we would like to determine whether it is compatible with the Triangle scenario (Fig. 1). The notation [ abc ] in Eq. (11) is shorthand for the deterministic distribution where A , B , and C take the values a, b , and c respectively; in terms of the Kronecker delta, [ abc ] := δ A,a δ B,b δ C,c .

Since there are no conditional independence relations among the observed variables in the Triangle scenario, there is no opportunity for ruling out the distribution on the grounds that it fails to satisfy the required conditional independences.

To solve the causal inference problem, we consider the Cut inflation (Fig. 5). The injectable sets include { A 2 C 1 } and { B 1 C 1 } . Their images in the original causal structure are { AC } and { BC } , respectively.

We will show that the distribution of Eq. (11) is not compatible with the Triangle scenario by demonstrating that the contrary assumption of compatibility implies a contradiction. If the distribution of Eq. (11) were compatible with the Triangle scenario, then so too would its pair of marginals on { AC } and { BC } , which are given by:

$$P _ { A C } = P _ { B C } = \frac { [ 0 0 ] + [ 1 1 ] } { 2 } .$$

By Lemma 4, this compatibility assumption would entail that the marginals

$$P _ { A _ { 2 } C _ { 1 } } = P _ { B _ { 1 } C _ { 1 } } = \frac { [ 0 0 ] + [ 1 1 ] } { 2 } & & ( 1 2 )$$

are compatible with the Cut inflation of the Triangle scenario. We now show that the latter compatibility cannot hold, thereby obtaining our contradiction. It suffices to note that (i) the only joint distribution that exhibits perfect correlation between A 2 and C 1 and between B 1 and C 1 also exhibits perfect correlation between A 2 and B 1 , and (ii) A 2 and B 1 have no common ancestor in the Cut inflation and hence must be marginally independent in any distribution that is compatible with it.

We have therefore certified that the distribution P ABC of Eq. (11) is not compatible with the Triangle scenario, recovering a result originally proven by Steudel and Ay [32].

## Example 2 Incompatibility of the W-type distribution with the Triangle scenario

Consider another causal inference problem on the Triangle scenario, namely, that of determining whether the distribution

$$P _ { A B C } = \frac { [ 1 0 0 ] + [ 0 1 0 ] + [ 0 0 1 ] } { 3 } , \quad i . e . , \quad P _ { A B C } ( a b c ) = \begin{cases} \frac { 1 } { 3 } & \text {if $a+b+c=1$,} \\ 0 & \text {otherwise.} \end{cases}$$

is compatible with it. We call this the W-type distribution 10 . To settle this compatibility question, we consider the Spiral inflation of the Triangle scenario (Fig. 3). The injectable sets in this case include { A 1 B 1 C 1 } , { A 2 C 1 } , { B 2 A 1 } , { C 2 B 1 } , { A 2 } , { B 2 } and { C 2 } .

10 The name stems from the fact that this distribution is reminiscent of the famous quantum state appearing in [48], called the W state .

Therefore, we turn our attention to determining whether the marginals of the W-type distribution on the images of these injectable sets are compatible with the Triangle scenario. These marginals are:

$$P _ { A B C } = \frac { [ 1 0 0 ] + [ 0 1 0 ] + [ 0 0 1 ] } { 3 } ,$$

$$P _ { A C } = P _ { B A } = P _ { C B } = \frac { [ 1 0 ] + [ 0 1 ] + [ 0 0 ] } { 3 } ,$$

$$P _ { A } = P _ { B } = P _ { C } = \frac { 2 } { 3 } [ 0 ] + \frac { 1 } { 3 } [ 1 ] .$$

By Lemma 4, this compatibility holds only if the associated marginals for the injectable sets, namely,

$$P _ { A _ { 1 } B _ { 1 } C _ { 1 } } = \frac { [ 1 0 0 ] + [ 0 1 0 ] + [ 0 0 1 ] } { 3 } ,$$

$$P _ { A _ { 2 } C _ { 1 } } = P _ { B _ { 2 } A _ { 1 } } = P _ { C _ { 2 } B _ { 1 } } = \frac { [ 1 0 ] + [ 0 1 ] + [ 0 0 ] } { 3 } ,$$

$$P _ { A _ { 2 } } = P _ { B _ { 2 } } = P _ { C _ { 2 } } = \frac { 2 } { 3 } [ 0 ] + \frac { 1 } { 3 } [ 1 ] , & & ( 1 9 )$$

are compatible with the Spiral inflation (Fig. 3). Eq. (18) implies that C 1 =0 whenever A 2 =1. It similarly implies that A 1 =0 whenever B 2 =1, and that B 1 =0 whenever C 2 =1,

A

=1

=

⇒

C

=0

,

$$B _ { 2 } = 1 \implies A _ { 1 } = 0 ,$$

$$C _ { 2 } { = } 1 \implies B _ { 1 } { = } 0 .$$

The Spiral inflation is such that A 2 , B 2 and C 2 have no common ancestor and consequently are marginally independent in any distribution compatible with it. Together with the fact that each value of these variables has a nonzero probability of occurrence (by Eq. (19)), this implies that

$$\text {Sometimes } \ A _ { 2 } = & 1 \text { and } B _ { 2 } = & 1 \text { and } C _ { 2 } = & 1 .$$

Finally, Eq. (20) together with Eq. (21) entails

$$\text {Sometimes} \quad A _ { 1 } = & 0 \text { and } B _ { 1 } = & 0 \text { and } C _ { 1 } = & 0 .$$

This, however, contradicts Eq. (17). Consequently, the family of marginals described in Eqs. (17-19) is not compatible with the causal structure of Fig. 3. By Lemma 4, this implies that the family of marginals described in Eqs. (14-16)-and therefore the W-type distribution of which they are marginals-is not compatible with the Triangle scenario.

To our knowledge, this is a new result. In fact, the incompatibility of the W-type distribution with the Triangle scenario cannot be derived via any of the existing causal inference techniques. In particular:

1. Checking conditional independence relations is not relevant here, as there are no conditional independence relations between any observed variables in the Triangle scenario.

2. The relevant Shannon-type entropic inequalities for the Triangle scenario have been classified, and they do not witness the incompatibility [25, 33, 35].

3. Moreover, no entropic inequality can witness the W-type distribution as unrealizable. Weilenmann and Colbeck [26] have constructed an inner approximation to the entropic cone of the Triangle causal structure, and the entropies of the W-distribution form a point in this cone. In other words, a distribution with the same entropic profile as the W-type distribution can arise from the Triangle scenario.

4. The newly-developed method of covariance matrix causal inference due to Kela et al. [27], which gives tighter constraints than entropic inequalities for the Triangle scenario, also cannot detect the incompatibility.

Therefore, in this case at least, the inflation technique appears to be more powerful.

We have arrived at our incompatibility verdict by combining inflation with reasoning reminiscent of Hardy's version of Bell's theorem [49, 50]. Sec. IV D will present a generalization of this kind of argument and its applications to causal inference.

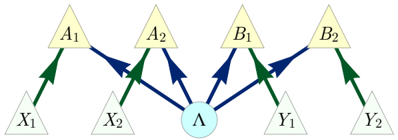

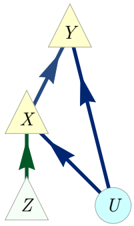

## Example 3 Incompatibility of PR-box correlations with the Bell scenario

Bell's theorem [17, 18, 20, 51] concerns the question of whether the distribution obtained in an experiment involving a pair of systems that are measured at space-like separation is compatible with a causal structure of the form of Fig. 7. Here, the observed variables are { A,B,X,Y } , and Λ is a latent variable acting as a common cause of A and B . We shall term this causal structure the Bell scenario . While the causal inference formulation of Bell's theorem is not the traditional one, several recent articles have introduced and advocated this perspective [19 (Fig. 19), 22 (Fig. E#2), 23 (Fig. 1), 33 (Fig. 1), 52 (Fig. 2b), 53 (Fig. 2)].

FIG. 7. The Bell scenario causal structure. The local outcomes, A and B , of a pair of measurements are assumed to each be a function of some latent common cause and their independent local experimental settings, X and Y .

<details>

<summary>Image 7 Details</summary>

### Visual Description

## Diagram: Simple Network Flow

### Overview

The image is a diagram illustrating a simple network flow with four nodes (X, Y, A, B) and a central node (Λ). The diagram shows the direction of flow between these nodes using arrows.

### Components/Axes

* **Nodes:**

* X: Triangle shape, light gray color, located on the bottom-left.

* Y: Triangle shape, light gray color, located on the bottom-right.

* A: Triangle shape, light yellow color, located on the top-left.

* B: Triangle shape, light yellow color, located on the top-right.

* Λ: Circle shape, light blue color, located in the center.

* **Arrows:**

* Green arrows: Indicate flow from X to A and from Y to B.

* Blue arrows: Indicate flow from A to Λ and from B to Λ.

### Detailed Analysis

* **Flow from X to A:** A green arrow originates from node X and points towards node A.

* **Flow from Y to B:** A green arrow originates from node Y and points towards node B.

* **Flow from A to Λ:** A blue arrow originates from node A and points towards node Λ.

* **Flow from B to Λ:** A blue arrow originates from node B and points towards node Λ.

### Key Observations

* Nodes X and Y act as sources, sending flow to nodes A and B, respectively.

* Nodes A and B act as intermediaries, forwarding flow to the central node Λ.

* Node Λ acts as a sink, receiving flow from both A and B.

### Interpretation

The diagram represents a simple network where two separate sources (X and Y) contribute to a central point (Λ) through intermediate nodes (A and B). This could represent various scenarios, such as data flow in a computer network, resource distribution in a supply chain, or information dissemination in a social network. The colors and shapes are used to visually distinguish the different components and their roles in the network.

</details>

FIG. 8. An inflation of the Bell scenario causal structure, where both local settings and outcome variables have been duplicated.

<details>

<summary>Image 8 Details</summary>

### Visual Description

## Diagram: Network Flow

### Overview

The image is a diagram illustrating a network flow between several nodes. The nodes are represented by triangles and a circle, and the flow is indicated by arrows. The diagram shows a central node receiving input from multiple sources and distributing output to other nodes.

### Components/Axes

* **Nodes:**

* Triangles labeled A1, A2, B1, B2 (top row, yellow fill)

* Triangles labeled X1, X2, Y1, Y2 (bottom row, light green fill)

* Circle labeled Λ (center, light blue fill)

* **Arrows:**

* Green arrows indicate flow from bottom row triangles to top row triangles.

* Blue arrows indicate flow from top row triangles to the central circle (Λ) and from the central circle to the top row triangles.

### Detailed Analysis

* **Node X1:** Has a green arrow pointing to node A1.

* **Node X2:** Has a green arrow pointing to node A2.

* **Node Y1:** Has a green arrow pointing to node B1.

* **Node Y2:** Has a green arrow pointing to node B2.

* **Node A1:** Has a blue arrow pointing to node Λ.

* **Node A2:** Has a blue arrow pointing to node Λ.

* **Node B1:** Has a blue arrow pointing to node Λ.

* **Node B2:** Has a blue arrow pointing to node Λ.

* **Node Λ:** Has blue arrows pointing to nodes A2 and B1.

### Key Observations

* Nodes X1, X2, Y1, and Y2 are input nodes, each feeding into one of the top row nodes (A1, A2, B1, B2).

* Nodes A1, A2, B1, and B2 act as intermediate nodes, receiving input from the bottom row and sending output to the central node (Λ).

* Node Λ is a central node, receiving input from A1, A2, B1, and B2, and distributing output to A2 and B1.

* The flow is not symmetrical. Node Λ sends output only to A2 and B1, not to A1 or B2.

### Interpretation

The diagram represents a network where data or resources flow from the X and Y nodes to the A and B nodes, then converge at the central node Λ. The central node then redistributes the flow back to a subset of the A and B nodes. This could represent a system where data is collected from multiple sources, processed centrally, and then selectively distributed to specific destinations. The asymmetry in the output from Λ suggests that certain nodes (A2 and B1) are prioritized or have different roles in the network.

</details>

We consider the distribution P ABXY = P AB | XY P X P Y , where P X and P Y are arbitrary full-support distributions on { 0 , 1 } 11 , and

$$P _ { A B | X Y } = \begin{cases} \frac { 1 } { 2 } ( [ 0 0 ] + [ 1 1 ] ) & \text {if $x=0,y=0$} \\ \frac { 1 } { 2 } ( [ 0 0 ] + [ 1 1 ] ) & \text {if $x=1,y=0$} \\ \frac { 1 } { 2 } ( [ 0 0 ] + [ 1 1 ] ) & \text {if $x=0,y=1$} \\ \frac { 1 } { 2 } ( [ 0 1 ] + [ 1 0 ] ) & \text {if $x=1,y=1$} \end{cases} , \quad i . e . , \ P _ { A B | X Y } ( a b | x y ) = \begin{cases} \frac { 1 } { 2 } & \text {if $a\oplus b=x\cdoty$,} \\ 0 & \text {otherwise.} \end{cases}$$

This conditional distribution was discovered by Tsirelson [54] and later independently by Popescu and Rohrlich [55, 56]. It has become known in the field of quantum foundations as the PR-box after the latter authors. 12

The Bell scenario implies nontrivial conditional independences 13 among the observed variables, namely, X ⊥ ⊥ Y , A ⊥ ⊥ Y | X , and B ⊥ ⊥ X | Y , as well as those that can be generated from these by the semi-graphoid axioms [19]. It is straightforward to check that these conditional independence relations are respected by the P ABXY resulting from Eq. (23). It is well-known that this distribution is nonetheless incompatible with the Bell scenario, since it violates the CHSH inequality. Here we present a proof of incompatibility in the style of Hardy's proof of Bell's theorem [49] in terms of the inflation technique, using the inflation of the Bell scenario depicted in Fig. 8.

We begin by noting that { A 1 B 1 X 1 Y 1 } , { A 2 B 1 X 2 Y 1 } , { A 1 B 2 X 1 Y 2 } , { A 2 B 2 X 2 Y 2 } , { X 1 } , { X 2 } , { Y 1 } , and { Y 2 } are all injectable sets. By Lemma 4, it follows that any causal model that recovers P ABXY inflates to a model that results in marginals

$$P _ { A _ { 1 } B _ { 1 } X _ { 1 } Y _ { 1 } } = P _ { A _ { 2 } B _ { 1 } X _ { 2 } Y _ { 1 } } = P _ { A _ { 1 } B _ { 2 } X _ { 1 } Y _ { 2 } } = P _ { A _ { 2 } B _ { 2 } X _ { 2 } Y _ { 2 } } = P _ { A B X Y } ,$$

$$P _ { X _ { 1 } } = P _ { X _ { 2 } } = P _ { X } , \quad P _ { Y _ { 1 } } = P _ { Y _ { 2 } } = P _ { Y } .$$

Using the definition of conditional probability, we infer that

$$P _ { A _ { 1 } B _ { 1 } | X _ { 1 } Y _ { 1 } } = P _ { A _ { 2 } B _ { 1 } | X _ { 2 } Y _ { 1 } } = P _ { A _ { 1 } B _ { 2 } | X _ { 1 } Y _ { 2 } } = P _ { A _ { 2 } B _ { 2 } | X _ { 2 } Y _ { 2 } } = P _ { A B | X Y } .$$

Because { X 1 } , { X 2 } , { Y 1 } , and { Y 2 } have no common ancestor in the inflated causal structure, these variables must be marginally independent in any distribution compatible with it, so that P X 1 X 2 Y 1 Y 2 = P X 1 P X 2 P Y 1 P Y 2 . Given the assumption that the distributions P X and P Y have full support, it follows from Eq. (25) that

Sometimes X = 0 and X = 1 and Y = 0 and Y = 1 .

$$\text {Sometimes} \quad X _ { 1 } = 0 \, \text { and } \, X _ { 2 } = 1 \, \text { and } \, Y _ { 1 } = 0 \, \text { and } \, Y _ { 2 } = 1 .$$

11 In the literature on the Bell scenario, the variables X and Y are termed 'settings'. Generally, we may think of observed root variables as settings, coloring them light green in the figures. They are natural candidates for variables to condition on.

12 The PR-box is of interest because it represents a manner in which experimental observations could deviate from the predictions of quantum theory while still being consistent with relativity.

13 Recall that variables X and Y are conditionally independent given Z if P XY | Z ( xy | z ) = P X | Z ( x | z ) P Y | Z ( y | z ) for all z with P Z ( z ) > 0. Such a conditional independence is denoted by X ⊥ ⊥ Y | Z .

On the other hand, from Eq. (26) together with the definition of PR-box, Eq. (23), we conclude that

$$\begin{array} { l c r } X _ { 1 } = & 0 , \, Y _ { 1 } = & 0 & \Longrightarrow & A _ { 1 } = B _ { 1 } , \\ X _ { 1 } = & 0 , \, Y _ { 2 } = & 1 & \Longrightarrow & A _ { 1 } = B _ { 2 } , \\ X _ { 2 } = & 1 , \, Y _ { 1 } = & 0 & \Longrightarrow & A _ { 2 } = B _ { 1 } , \\ X _ { 2 } = & 1 , \, Y _ { 2 } = & 1 & \Longrightarrow & A _ { 2 } \neq B _ { 2 } . \end{array}$$

Combining this with Eq. (27), we obtain

$$\text {Sometimes} \quad A _ { 1 } = B _ { 1 } \text { and } A _ { 1 } = B _ { 2 } \text { and } A _ { 2 } = B _ { 1 } \text { and } A _ { 2 } \neq B _ { 2 } .$$

No values of A 1 , A 2 , B 1 , and B 2 can jointly satisfy these conditions. So we have reached a contradiction, showing that our original assumption of compatibility of P ABXY with the Bell scenario must have been false.

The structure of this argument parallels that of standard proofs of the incompatibility of the PR-box with the Bell scenario. Standard proofs focus on a set of variables { A 0 A 1 B 0 B 1 } where A x is the value of A when X = x and B y is the value of B when Y = y . Note that the distribution ∑ Λ P A 0 | Λ P A 1 | Λ P B 0 | Λ P B 1 | Λ P Λ is a joint distribution of these

four variables for which the marginals on pairs { A 0 B 0 } , { A 0 B 1 } , { A 1 B 0 } and { A 1 B 1 } are those that can arise in the Bell scenario. The existence of such a joint distribution rules out the possibility of having A 1 = B 1 , A 1 = B 2 , A 2 = B 1 but A 2 = B 2 , and therefore shows that the PR-box distribution is incompatible with the Bell scenario [57, 58]. In light of our use of Eq. (27), the reasoning based on the inflation of Fig. 8 is really the same argument in disguise.

Appendix G shows that the inflation of the Bell scenario depicted in Fig. 8 is sufficient to witness the incompatibility of any distribution that is incompatible with the Bell scenario.

## C. Deriving Causal Compatibility Inequalities

The inflation technique can be used not only to witness the incompatibility of a given distribution with a given causal structure, but also to derive necessary conditions that a distribution must satisfy to be compatible with the given causal structure. These conditions can always be expressed as inequalities, and we will refer to them as causal compatibility inequalities 14 . Formally, we have:

Definition 5. Let G be a causal structure and let S be a family of subsets of the observed variables of G , S ⊆ 2 ObservedNodes ( G ) . Let I S denote an inequality that operates on the corresponding family of distributions, { P V : V ∈ S } . Then I S is a causal compatibility inequality for the causal structure G whenever it is satisfied by every family of distributions { P V : V ∈ S } that is compatible with G .

While violation of a causal compatibility inequality witnesses the incompatibility with the causal structure, satisfaction of the inequality does not guarantee compatibility. This is the sense in which it merely provides a necessary condition for compatibility.

The inflation technique is useful for deriving causal compatibility inequalities because of the following consequence of Lemma 4:

Corollary 6. Suppose that G ′ is an inflation of G . Let S ′ ⊆ InjectableSets ( G ′ ) be a family of injectable sets and S ⊆ ImagesInjectableSets ( G ) the images of members of S ′ under the dropping of copy-indices. Let I S ′ be a causal compatibility inequality for G ′ operating on families { P V ′ : V ′ ∈ S ′ } . Define an inequality I S as follows: in the functional form of I S ′ , replace every occurrence of a term P V ′ by P V for the unique V ∈ S with V ∼ V ′ . Then I S is a causal compatibility inequality for G operating on families { P V : V ∈ S } .

Proof. Suppose that the family { P V : V ∈ S } is compatible with G . By Lemma 4, it follows that the family { P V ′ : V ′ ∈ S ′ } where P V ′ := P V for V ′ ∼ V is compatible with G ′ . Since I S ′ is a causal compatibility inequality for G ′ , it follows that { P V ′ : V ′ ∈ S ′ } satisfies I S ′ . But by the definition of I S , its evaluation on { P V : V ∈ S } is equal to I S ′ evaluated on { P V ′ : V ′ ∈ S ′ } . It therefore follows that { P V : V ∈ S } satisfies I S . Since { P V : V ∈ S } was an arbitrary family compatible with G , we conclude that I S is a causal compatibility inequality for G .

14 Note that we can include equality constraints for causal compatibility within the framework of causal compatibility inequalities alone; it suffices to note that an equality constraint can always be expressed as a pair of inequalities, i.e. satisfying x = y is equivalent to satisfying both x ≤ y and x ≥ y . The requirement that a distribution must be Markov (or Nested Markov) relative to a DAG is usually formulated as a set of equality constraints.

We now present some simple examples of causal compatibility inequalities for the Triangle scenario that one can derive from the inflation technique via Corollary 6. Some terminology and notation will facilitate their description. We refer to a pair of nodes which do not share any common ancestor as being ancestrally independent . This is equivalent to being d -separated by the empty set [1-4]. Given that the conventional notation for X and Y being d -separated by Z in a DAG is X ⊥ d Y | Z , we denote X and Y being ancestrally independent within G as X ⊥ d Y . Generalizing to sets, X ⊥ d Y indicates that no node in X shares a common ancestor with any node in Y within the causal structure G ,

$$X \perp _ { d } Y \text { if } A n _ { G } ( X ) \cap A n _ { G } ( Y ) = \emptyset .$$

Ancestral independence is closed under union; that is, X ⊥ d Y and X ⊥ d Z implies X ⊥ d ( Y ∪ Z ) . Consequently, pairwise ancestral independence implies joint factorizability; i.e. ∀ i = j X i ⊥ d X j implies that P ∪ i X i = ∏ i P X i .

## Example 4 A causal compatibility inequality in terms of correlators

As in Example 1 of the previous subsection, consider the Cut inflation of the Triangle scenario (Fig. 4), where all observed variables are binary. For technical convenience, we assume that they take values in the set {-1 , +1 } , rather than taking values in { 0 , 1 } as was presumed in the last subsection.

The injectable sets that we make use of are { A 2 C 1 } , { B 1 C 1 } , { A 2 } , and { B 1 } . From Corollary 6, any causal compatibility inequality for the inflated causal structure that operates on the marginal distributions of { A 2 C 1 } , { B 1 C 1 } , { A 2 } , and { B 1 } will yield a causal compatibility inequality for the original causal structure that operates on the marginal distributions on { AC } , { BC } , { A } , and { B } . We begin by noting that for any distribution on three binary variables { A 2 B 1 C 1 } , that is, regardless of the causal structure in which they are embedded, the marginals on { A 2 C 1 } , { B 1 C 1 } and { A 2 B 1 } satisfy the following inequality for expectation values [59-63],

$$\mathbb { E } [ A _ { 2 } C _ { 1 } ] + \mathbb { E } [ B _ { 1 } C _ { 1 } ] \leq 1 + \mathbb { E } [ A _ { 2 } B _ { 1 } ] .$$

This is an example of a constraint on pairwise correlators that arises from the presumption that they are consistent with a joint distribution. (The problem of deriving such constraints is the marginal constraint problem , discussed in detail in Sec. IV.)

But in the Cut inflation of the Triangle scenario (Fig. 4), A 2 and B 1 have no common ancestor and consequently any distribution compatible with this inflated causal structure must make A 2 and B 1 marginally independent. In terms of correlators, this can be expressed as

$$A _ { 2 } \perp _ { d } B _ { 1 } \implies A _ { 2 } \perp B _ { 1 } \implies \mathbb { E } [ A _ { 2 } B _ { 1 } ] = \mathbb { E } [ A _ { 2 } ] \mathbb { E } [ B _ { 1 } ] .$$

Substituting this into Eq. (31), we have

$$\mathbb { E } [ A _ { 2 } C _ { 1 } ] + \mathbb { E } [ B _ { 1 } C _ { 1 } ] \leq 1 + \mathbb { E } [ A _ { 2 } ] \mathbb { E } [ B _ { 1 } ] .$$

This is an example of a simple but nontrivial causal compatibility inequality for the causal structure of Fig. 4. Finally, by Corollary 6, we infer that

$$\mathbb { E } [ A C ] + \mathbb { E } [ B C ] \leq 1 + \mathbb { E } [ A ] \mathbb { E } [ B ]$$

is a causal compatibility inequality for the Triangle scenario. This inequality expresses the fact that as long as A and B are not completely biased, there is a tradeoff between the strength of AC correlations and the strength of BC correlations.

Given the symmetry of the Triangle scenario under permutations and sign flips of A , B and C , it is clear that the image of inequality (34) under any such symmetry is also a valid causal compatibility inequality. Together, these inequalities constitute a type of monogamy 15 of correlations in the Triangle scenario with binary variables: if any two observed variables with unbiased marginals are perfectly correlated, then they are both independent of the third.

Moreover, since inequality (31) is valid even for continuous variables with values in the interval [ -1 , +1], it follows that the polynomial inequality (34) is valid in this case as well.

Note that inequality (31) serves as a robust witness certifying the incompatibility of 3-way perfect correlation (described in Eq. (11)) with the Triangle scenario. Inequality (31) is robust in the sense that it demonstrates the incompatibility of distributions close to 3-way perfect correlation.

15 We are here using the term 'monogamy' in the same sort of manner in which it is used in the context of entanglement theory [64].

One might be curious as to how close to perfect correlation one can get while still being compatible with the Triangle scenario. To partially answer this question, we used Eq. (31) to rule out many distributions close to perfect correlation and we also pursued explicit model-construction to rule in various distributions sufficiently far from perfect correlation. Explicitly, we found that distributions of the form

$$P _ { A B C } = \alpha \frac { [ 0 0 0 ] + [ 1 1 1 ] } { 2 } + ( 1 - \alpha ) \frac { [ e l s e ] } { 6 } , \quad i . e . , \quad P _ { A B C } ( a b c ) = \begin{cases} \frac { \alpha } { 2 } & \text {if $a=b=c$,} \\ \frac { 1 - \alpha } { 6 } & \text {otherwise,} \end{cases}$$

where [else] denotes any point distribution [ abc ] other than [000] or [111], are incompatible for the range 5 8 = 0 . 625 < α ≤ 1 as a consequence of Eq. (31). On the other hand, we found a family of explicit models allowing us to certify the compatibility of distributions for 0 ≤ α ≤ 1 2 .

The presence of this gap between our inner and outer constructions could reflect either the inadequacy of our limited model constructions or the inadequacy of relatively small inflations of the Triangle causal structure to generate suitably sensitive inequalities. We defer closing the gap to future work 16 .

## Example 5 A causal compatibility inequality in terms of entropic quantities

One way to derive constraints that are independent of the cardinality of the observed variables is to express these in terms of the mutual information between observed variables rather than in terms of correlators. The inflation technique can also be applied to achieve this. To see how this works in the case of the Triangle scenario, consider again the Cut inflation (Fig. 4).

One can follow the same logic as in the preceding example, but starting from a different constraint on marginals. For any distribution on three variables { A 2 B 1 C 1 } of arbitrary cardinality (again, regardless of the causal structure in which they are embedded), the marginals on { A 2 C 1 } , { B 1 C 1 } and { A 2 B 1 } satisfy the inequality [35, Eq. (29)]

$$I ( A _ { 2 } \colon C _ { 1 } ) + I ( C _ { 1 } \colon B _ { 1 } ) \leq H ( C _ { 1 } ) + I ( A _ { 2 } \colon B _ { 1 } ) ,$$

where H ( X ) denotes the Shannon entropy of the distribution of X , and I ( X : Y ) denotes the mutual information between X and Y with respect to the marginal joint distribution on the pair of variables X and Y . The fact that A 2 and B 1 have no common ancestor in the inflated causal structure implies that in any distribution that is compatible with it, A 2 and B 1 are marginally independent. This is expressed entropically as the vanishing of their mutual information,

$$A _ { 2 } \perp _ { d } B _ { 1 } \implies A _ { 2 } \perp B _ { 1 } \implies I ( A _ { 2 } \colon B _ { 1 } ) = 0 .$$

Substituting the latter equality into Eq. (36), we have

$$I ( A _ { 2 } \colon C _ { 1 } ) + I ( C _ { 1 } \colon B _ { 1 } ) \leq H ( C _ { 1 } ) .$$

This is another example of a nontrivial causal compatibility inequality for the causal structure of Fig. 4. By Corollary 6, it follows that

$$I ( A \colon C ) + I ( C \colon B ) \leq H ( C )$$