1701.01189

Model: nemotron-free

## GPU Multisplit: an extended study of a parallel algorithm

SAMAN ASHKIANI, University of California, Davis ANDREW DAVIDSON, University of California, Davis ULRICH MEYER, Goethe-Universität Frankfurt am Main JOHN D. OWENS, University of California, Davis

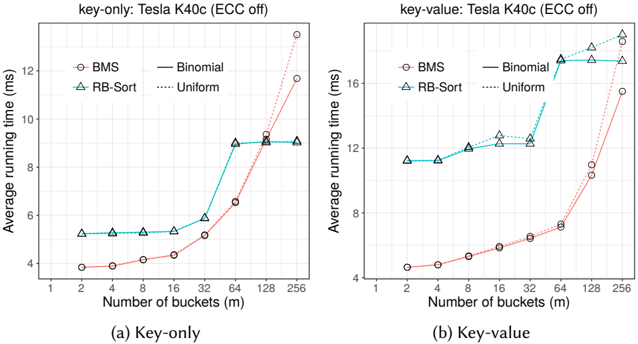

Multisplit is a broadly useful parallel primitive that permutes its input data into contiguous buckets or bins , where the function that categorizes an element into a bucket is provided by the programmer. Due to the lack of an efficient multisplit on GPUs, programmers often choose to implement multisplit with a sort. One way is to first generate an auxiliary array of bucket IDs and then sort input data based on it. In case smaller indexed buckets possess smaller valued keys, another way for multisplit is to directly sort input data. Both methods are inefficient and require more work than necessary: the former requires more expensive data movements while the latter spends unnecessary effort in sorting elements within each bucket. In this work, we provide a parallel model and multiple implementations for the multisplit problem. Our principal focus is multisplit for a small (up to 256) number of buckets. We use warp-synchronous programming models and emphasize warp-wide communications to avoid branch divergence and reduce memory usage. We also hierarchically reorder input elements to achieve better coalescing of global memory accesses. On a GeForce GTX 1080 GPU, we can reach a peak throughput of 18.93 Gkeys/s (or 11.68 Gpairs/s) for a key-only (or key-value) multisplit. Finally, we demonstrate how multisplit can be used as a building block for radix sort. In our multisplit-based sort implementation, we achieve comparable performance to the fastest GPU sort routines, sorting 32-bit keys (and key-value pairs) with a throughput of 3.0 G keys/s (and 2.1 Gpair/s).

CCS Concepts: · Computing methodologies ! Parallel algorithms; · Computer systems organization ! Single instruction, multiple data; · Theory of computation ! Shared memory algorithms;

Additional Key Words and Phrases: Graphics Processing Unit (GPU), multisplit, bucketing, warp-synchronous programming, radix sort, histogram, shuffle, ballot

## ACMReference format:

Saman Ashkiani, Andrew Davidson, Ulrich Meyer, and John D. Owens. 2017. GPU Multisplit: an extended study of a parallel algorithm. ACM Trans. Parallel Comput. 9, 4, Article 39 (September 2017), 44 pages. DOI: 0000001.0000001

## 1 INTRODUCTION

This paper studies the multisplit primitive for GPUs. 1 Multisplit divides a set of items (keys or key-value pairs) into contiguous buckets, where each bucket contains items whose keys satisfy a programmer-specified criterion (such as falling into a particular range). Multisplit is broadly useful in a wide range of applications, some of which we will cite later in this introduction. But we begin

1 This paper is an extended version of initial results published at PPoPP 2016 [3]. The source code is available at https: //github.com/owensgroup/GpuMultisplit.

Permission to make digital or hard copies of all or part of this work for personal or classroom use is granted without fee provided that copies are not made or distributed for profit or commercial advantage and that copies bear this notice and the full citation on the first page. Copyrights for components of this work owned by others than ACM must be honored. Abstracting with credit is permitted. To copy otherwise, or republish, to post on servers or to redistribute to lists, requires prior specific permission and/or a fee. Request permissions from permissions@acm.org.

© 2016 ACM.

1539-9087/2017/9-ART39 $15.00

our story by focusing on one particular example, the delta-stepping formulation of single-source shortest path (SSSP).

The traditional (and work-efficient) serial approach to SSSP is Dijkstra's algorithm [13], which considers one vertex per iteration-the vertex with the lowest weight. The traditional parallel approach (Bellman-Ford-Moore [4]) considers all vertices on each iteration, but as a result incurs more work than the serial approach. On the GPU, the recent SSSP work of Davidson et al. [8] instead built upon the delta-stepping work of Meyer and Sanders [23], which on each iteration classifies candidate vertices into buckets or bins by their weights and then processes the bucket that contains the vertices with the lowest weights. Items within a bucket are unordered and can be processed in any order.

Delta-stepping is a good fit for GPUs. It avoids the inherent serialization of Dijkstra's approach and the extra work of the fully parallel Bellman-Ford-Moore approach. At a high level, deltastepping divides up a large amount of work into multiple buckets and then processes all items within one bucket in parallel at the same time. How many buckets? Meyer and Sanders describe how to choose a bucket size that is 'large enough to allow for sufficient parallelism and small enough to keep the algorithm work-efficient' [23]. Davidson et al. found that 10 buckets was an appropriate bucket count across their range of datasets. More broadly, for modern parallel architectures, this design pattern is a powerful one: expose just enough parallelism to fill the machine with work, then choose the most efficient algorithm to process that work. (For instance, Hou et al. use this strategy in efficient GPU-based tree traversal [18].)

Once we've decided the bucket count, how do we efficiently classify vertices into buckets? Davidson et al. called the necessary primitive multisplit . Beyond SSSP, multisplit has significant utility across a range of GPU applications. Bucketing is a key primitive in one implementation of radix sort on GPUs [22], where elements are reordered iteratively based on a group of their bits in their binary representation; as the first step in building a GPU hash table [1]; in hash-join for relational databases to group low-bit keys [12]; in string sort for singleton compaction and elimination [11]; in suffix array construction to organize the lexicographical rank of characters [10]; in a graphics voxelization pipeline for splitting tiles based on their descriptor (dominant axis) [28]; in the shallow stages of k -d tree construction [32]; in Ashari et al.'s sparse-matrix dense-vector multiplication work, which bins rows by length [2]; and in probabilistic topk selection, whose core multisplit operation is three bins around two pivots [24]. And while multisplit is a crucial part of each of these and many other GPU applications, it has received little attention to date in the literature. The work we present here addresses this topic with a comprehensive look at efficiently implementing multisplit as a general-purpose parallel primitive.

The approach of Davidson et al. to implementing multisplit reveals the need for this focus. If the number of buckets is 2, then a scan-based 'split' primitive [16] is highly efficient on GPUs. Davidson et al. built both a 2-bucket ('Near-Far') and 10-bucket implementation. Because they lacked an efficient multisplit, they were forced to recommend their theoretically-less-efficient 2-bucket implementation:

The missing primitive on GPUs is a high-performance multisplit that separates primitives based on key value (bucket id); in our implementation, we instead use a sort; in the absence of a more efficient multisplit, we recommend utilizing our Near-Far work-saving strategy for most graphs. [8, Section 7]

Like Davidson et al., we could implement multisplit on GPUs with a sort. Recent GPU sorting implementations [22] deliver high throughput, but are overkill for the multisplit problem: unlike sort, multisplit has no need to order items within a bucket. In short, sort does more work than necessary. For Davidson et al., reorganizing items into buckets after each iteration with a sort is

too expensive: 'the overhead of this reorganization is significant: on average, with our bucketing implementation, the reorganizational overhead takes 82% of the runtime.' [8, Section 7]

In this paper we design, implement, and analyze numerous approaches to multisplit, and make the following contributions:

- On modern GPUs, 'global' operations (that require global communication across the whole GPU) are more expensive than 'local' operations that can exploit faster, local GPU communication mechanisms. Straightforward implementations of multisplit primarily use global operations. Instead, we propose a parallel model under which the multisplit problem can be factored into a sequence of local, global, and local operations better suited for the GPU's memory and computational hierarchies.

- We show that reducing the cost of global operations, even by significantly increasing the cost of local operations, is critical for achieving the best performance. We base our model on a hierarchical divide and conquer, where at the highest level each subproblem is small enough to be easily solved locally in parallel, and at the lowest level we have only a small number of operations to be performed globally.

- We locally reorder input elements before global operations, trading more work (the reordering) for better memory performance (greater coalescing) for an overall improvement in performance.

- We promote the warp-level privatization of local resources as opposed to the more traditional thread-level privatization. This decision can contribute to an efficient implementation of our local computations by using warp-synchronous schemes to avoid branch divergence, reduce shared memory usage, leverage warp-wide instructions, and minimize intra-warp communication.

- Wedesign a novel voting scheme using only binary ballots. We use this scheme to efficiently implement our warp-wide local computations (e.g., histogram computations).

- We use these contributions to implement a high-performance multisplit targeted to modern GPUs. We then use our multisplit as an effective building block to achieve the following:

- -We build an alternate radix sort competitive with CUB (the current fastest GPU sort library). Our implementation is particularly effective with key-value sorts (Section 7.1).

- -We demonstrate a significant performance improvement in the delta-stepping formulation of the SSSP algorithm (Section 7.2).

- -We build an alternate device-wide histogram procedure competitive with CUB. Our implementation is particularly suitable for a small number of bins (Section 7.3).

## 2 RELATED WORK AND BACKGROUND

## 2.1 The Graphics Processing Unit (GPU)

The GPU of today is a highly parallel, throughput-focused programmable processor. GPU programs ('kernels') launch over a grid of numerous blocks ; the GPU hardware maps blocks to available parallel cores. Each block typically consists of dozens to thousands of individual threads , which are arranged into 32-wide warps . Warps run under SIMD control on the GPU hardware. While blocks cannot directly communicate with each other within a kernel, threads within a block can, via a user-programmable 48 kB shared-memory , and threads within a warp additionally have access to numerous warp-wide instructions. The GPU's global memory (DRAM), accessible to all blocks during a computation, achieves its maximum bandwidth only when neighboring threads access neighboring locations in the memory; such accesses are termed coalesced . In this work, when we use the term ' global ', we mean an operation of device-wide scope. Our term ' local ' refers to an operation limited to smaller scope (e.g., within a thread, a warp, a block, etc.), which we will

specify accordingly. The major difference between the two is the cost of communication: global operations must communicate through global DRAM, whereas local operations can communicate through lower-latency, higher-bandwidth mechanisms like shared memory or warp-wide intrinsics. Lindholm et al. [20] and Nickolls et al. [25] provide more details on GPU hardware and the GPU programming model, respectively.

We use NVIDIA's CUDA as our programming language in this work [27]. CUDA provides several warp-wide voting and shuffling instructions for intra-warp communication of threads. All threads within a warp can see the result of a user-specified predicate in a bitmap variable returned by \_\_ballot(predicate) [27, Ch. B13]. Any set bit in this bitmap denotes the predicate being non-zero for the corresponding thread. Each thread can also access registers from other threads in the same warp with \_\_shfl(register\_name, source\_thread) [27, Ch. B14]. Other shuffling functions such as \_\_shfl\_up() or \_\_shfl\_xor() use relative addresses to specify the source thread. In CUDA, threads also have access to some efficient integer intrinsics, e.g., \_\_popc() for counting the number of set bits in a register.

## 2.2 Parallel primitive background

In this paper we leverage numerous standard parallel primitives, which we briefly describe here. A reduction inputs a vector of elements and applies a binary associative operator (such as addition) to reduce them to a single element; for instance, sum-reduction simply adds up its input vector. The scan operator takes a vector of input elements and an associative binary operator, and returns an output vector of the same size as the input vector. In exclusive (resp., inclusive) scan, output location i contains the reduction of input elements 0 to i 1 (resp., 0 to i ). Scan operations with binary addition as their operator are also known as prefix-sum [16]. Any reference to a multioperator (multi-reduction, multi-scan) refers to running multiple instances of that operator in parallel on separate inputs. Compaction is an operation that filters a subset of its input elements into a smaller output array while preserving the order.

## 2.3 Multisplit and Histograms

Many multisplit implementations, including ours, depend heavily on knowledge of the total number of elements within each bucket (bin), i.e., histogram computation. Previous competitive GPU histogram implementations share a common philosophy: divide the problem into several smaller sized subproblems and assign each subproblem to a thread, where each thread sequentially processes its subproblem and keeps track of its own privatized local histogram. Later, the local histograms are aggregated to produce a globally correct histogram. There are two common approaches to this aggregation: 1) using atomic operations to correctly add bin counts together (e.g., Shams and Kennedy [30]), 2) storing per-thread sequential histogram computations and combining them via a global reduction (e.g., Nugteren et al. [26]). The former is suitable when the number of buckets is large; otherwise atomic contention is the bottleneck. The latter avoids such conflicts by using more memory (assigning exclusive memory units per-bucket and per-thread), then performing device-wide reductions to compute the global histogram.

The hierarchical memory structure of NVIDIA GPUs, as well as NVIDIA's more recent addition of faster but local shared memory atomics (among all threads within a thread block), provides more design options to the programmer. With these features, the aggregation stage could be performed in multiple rounds from thread-level to block-level and then to device-level (global) results. Brown et al. [6] implemented both Shams's and Nugteren's aforementioned methods, as well as a variation of their own, focusing only on 8-bit data, considering careful optimizations that make the best use of the GPU, including loop unrolling, thread coarsening, and subword parallelism, as well as others.

Recently, NVIDIA's CUDA Unbound (CUB) [21] library has included an efficient and consistent histogram implementation that carefully uses a minimum number of shared-memory atomics to combine per-thread privatized histograms per thread-block, followed by aggregation via global atomics. CUB's histogram supports any data type (including multi-channel 8-bit inputs) with any number of bins.

Only a handful of papers have explored multisplit as a standalone primitive. He et al. [17] implemented multisplit by reading multiple elements with each thread, sequentially computing their histogram and local offsets (their order among all elements within the same bucket and processed by the same thread), then storing all results (histograms and local offsets) into memory. Next, they performed a device-wide scan operation over these histogram results and scattered each item into its final position. Their main bottlenecks were the limited size of shared memory, an expensive global scan operation, and random non-coalesced memory accesses. 2

Patidar [29] proposed two methods with a particular focus on a large number of buckets (more than 4k): one based on heavy usage of shared-memory atomic operations (to compute block level histogram and intra-bucket orders), and the other by iterative usage of basic binary split for each bucket (or groups of buckets). Patidar used a combination of these methods in a hierarchical way to get his best results. 3 Both of these multisplit papers focus only on key-only scenarios, while data movements and privatization of local memory become more challenging with key-value pairs.

## 3 MULTSIPLIT AND COMMON APPROACHES

In this section, we first formally define the multisplit as a primitive algorithm. Next, we describe some common approaches for performing the multisplit algorithm, which form a baseline for the comparison to our own methods, which we then describe in Section 4.

## 3.1 The multisplit primitive

We informally characterize multisplit as follows:

- Input: An unordered set of keys or key-value pairs. 'Values' that are larger than the size of a pointer use a pointer to the value in place of the actual value.

- Input: A function, specified by the programmer, that inputs a key and outputs the bucket corresponding to that key ( bucket identifier ). For example, this function might classify a key into a particular numerical range, or divide keys into prime or composite buckets.

- Output: Keys or key-value pairs separated into m buckets. Items within each output bucket must be contiguous but are otherwise unordered. Some applications may prefer output order within a bucket that preserves input order; we call these multisplit implementations 'stable'.

More formally, let u and v be vectors of n key and value elements, respectively. Altogether m buckets B 0 ; B 1 ; : : : ; B m 1 partition the entire key domain such that each key element uniquely belongs to one and only one bucket. Let f '' be an arbitrary bucket identifier that assigns a bucket ID to each input key (e.g., f ' u i ' = j if and only if u i 2 B j ). Throughout this paper, m always refers to the total number of buckets. For any input key vector, we define multisplit as a permutation of that input vector into an output vector. The output vector is densely packed and has two properties: (1) All output elements within the same bucket are stored contiguously in the output vector, and (2) All output elements are stored contiguously in a vector in ascending order by their bucket IDs.

2 On an NVIDIA 8800 GTX GPU, for 64 buckets, He et al. reported 134 Mkeys/sec. As a very rough comparison, our GeForce GTX 1080 GPU has 3.7x the memory bandwidth, and our best 64-bucket implementation runs 126 times faster.

3 On an NVIDIA GTX280 GPU, for 32 buckets, Patidar reported 762 Mkeys/sec. As a very rough comparison, our GeForce GTX 1080 GPU has 2.25x the memory bandwidth, and our best 32-bucket implementation runs 23.5 times faster.

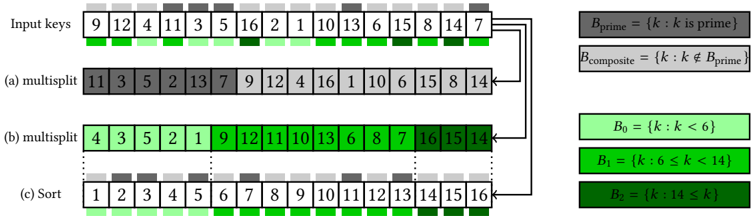

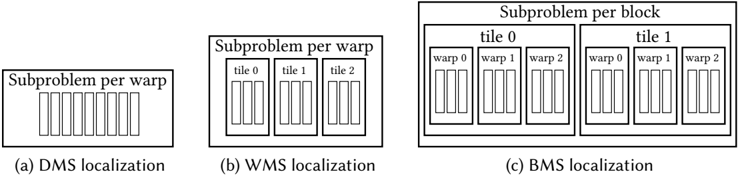

Fig. 1. Multisplit examples. (a) Stable multisplit over two buckets ( B prime and B composite ). (b) Stable multisplit over three range-based buckets ( B 0 ; B 1 ; B 2 ). (c) Sort can implement multisplit over ordered buckets (e.g., for B 0 ; B 1 ; B 3 ), but not for any general buckets (e.g., B prime and B composite ); note that this multisplit implementation is not stable (initial intra bucket orders are not preserved).

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Diagram: Data Processing and Sorting Algorithm

### Overview

The diagram illustrates a multi-step data processing workflow involving input keys, multisplit operations, and sorting. It uses color-coded categories to represent numerical properties (prime/composite, value ranges) and demonstrates how these properties influence data organization.

### Components/Axes

1. **Input Keys** (Top Row):

- Values: `9, 12, 4, 11, 3, 5, 10, 6, 2, 1, 13, 15, 8, 14, 7`

- Colors: Gray (primes), Green (composites), Dark Green (B2: k ≥14)

2. **Legend** (Right Side):

- **B_prime**: Gray (`k` is prime)

- **B_composite**: Green (`k` is composite)

- **B0**: Light Green (`k < 6`)

- **B1**: Medium Green (`6 ≤ k < 14`)

- **B2**: Dark Green (`k ≥ 14`)

3. **Sections**:

- **(a) Multisplit**: First partitioning step (primes vs. composites).

- **(b) Multisplit**: Second partitioning step (B0, B1, B2 ranges).

- **(c) Sort**: Final sorted output (1–16 in ascending order).

### Detailed Analysis

1. **Input Keys**:

- Primes (gray): `11, 3, 5, 2, 13, 7`

- Composites (green): `9, 12, 4, 10, 6, 15, 8, 14`

- Note: `1` is composite (green) despite being neither prime nor composite.

2. **Section (a) Multisplit**:

- Primes (gray): `11, 3, 5, 2, 13, 7`

- Composites (green): `9, 12, 4, 16, 1, 10, 6, 15, 8, 14`

- **Anomaly**: `14` (B2: k ≥14) is green instead of dark green.

3. **Section (b) Multisplit**:

- B0 (light green): `4, 3, 5, 2, 1`

- B1 (medium green): `9, 12, 11, 10, 13, 6, 8, 7`

- B2 (dark green): `16, 15, 14`

- **Consistency**: Colors align with B0/B1/B2 ranges.

4. **Section (c) Sort**:

- Output: `1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13, 14, 15, 16`

- Colors: All dark green (B2), conflicting with B0/B1/B2 ranges.

### Key Observations

- **Color Mismatch**: In section (a), `14` (B2) is green instead of dark green. In section (c), all values are dark green despite spanning B0/B1/B2 ranges.

- **Sorting Logic**: The final sorted list ignores prior color categorizations, suggesting a reset or reclassification step.

- **Prime/Composite Split**: Section (a) separates primes (gray) from composites (green), while section (b) further subdivides composites into B0/B1/B2.

### Interpretation

The diagram demonstrates a hierarchical data processing pipeline:

1. **Prime/Composite Separation**: Initial split isolates primes (gray) and composites (green).

2. **Range-Based Partitioning**: Composites are further divided into B0 (small values), B1 (mid-range), and B2 (large values).

3. **Sorting**: Final step orders all values numerically, overriding prior categorizations.

**Notable Anomalies**:

- `14` is misclassified in section (a) (green instead of dark green).

- Section (c) uses uniform dark green, conflicting with B0/B1/B2 definitions. This may indicate a visualization error or a conceptual shift in categorization during sorting.

**Significance**:

The workflow highlights how data properties (primality, magnitude) can guide partitioning strategies, though inconsistencies in color coding suggest potential ambiguities in the algorithm’s implementation or visualization.

</details>

Optionally, the beginning index of each bucket in the output vector can also be stored in an array of size m . Our main focus in this paper is on 32-bit keys and values (of any data type).

This multisplit definition allows for a variety of implementations. It places no restrictions on the order of elements within each bucket before and after the multisplit (intra-bucket orders); buckets with larger indices do not necessarily have larger elements. In fact, key elements may not even be comparable entities, e.g., keys can be strings of names with buckets assigned to male names, female names, etc. We do require that buckets are assigned to consecutive IDs and will produce buckets ordered in this way. Figure 1 illustrates some multisplit examples. Next, we consider some common approaches for dealing with non-trivial multisplit problems.

## 3.2 Iterative and Recursive scan-based splits

The first approach is based on binary split. Suppose we have two buckets. We identify buckets in a binary flag vector, and then compact keys (or key-value pairs) based on the flags. We also compact the complemented binary flags from right to left, and store the results. Compaction can be efficiently implemented by a scan operation, and in practice we can concurrently do both left-to-right and right-to-left compaction with a single scan operation.

With more buckets, we can take two approaches. One is to iteratively perform binary splits and reduce our buckets one by one. For example, we can first split based on B 0 and all remaining buckets ( [ m 1 j = 1 B j ). Then we can split the remaining elements based on B 1 and [ m 1 j = 2 B j . After m rounds the result will be equivalent to a multisplit operation. Another approach is that we can recursively perform binary splits; on each round we split key elements into two groups of buckets. We continue this process for at most d log m e rounds and in each round we perform twice number of multisplits and in the end we will have a stable multisplit. Both of these scan-based splits require multiple global operations (e.g., scan) over all elements, and may also have load-balancing issues if the distribution of keys is non-uniform. As we will later see in Section 6.1, on modern GPUs and with just two buckets this approach is not efficient enough.

## 3.3 Radix sort

It should be clear by now that sorting is not a general solution to a multisplit problem. However, it is possible to achieve a non-stable multisplit by directly sorting our input elements under the following condition: if buckets with larger IDs have larger elements (e.g., all elements in B 0 are less than all elements in B 1, and so on). Even in this case, this is not a work-efficient solution as it

unnecessarily sorts all elements within each bucket as well. On average, as the number of buckets ( m ) increases, this performance gap should decrease because there are fewer elements within each bucket and hence less extra effort to sort them. As a result, at some point we expect the multisplit problem to converge to a regular sort problem, when there are large enough number of buckets.

Among all sorting algorithms, there is a special connection between radix sort and multisplit. Radix sort iteratively sorts key elements based on selected groups of bits in keys. The process either starts from the least significant bits ('LSB sort') or from the most significant bits ('MSB sort'). In general MSB sort is more common because, compared to LSB sort, it requires less intermediate data movement when keys vary significantly in length (this is more of an issue for string sorting). MSB sort ensures data movements become increasingly localized for later iterations, because keys will not move between buckets ('bucket' here refers to the group of keys with the same set of considered bits from previous iterations). However, for equal width key types (such as 32-bit variables, which are our focus in this paper) and with a uniform distribution of keys in the key domain (i.e., an equivalently uniform distribution of bits across keys), there will be less difference between the two methods.

## 3.4 Reduced-bit sort

Because sorting is an efficient primitive on GPUs, we modify it to be specific to multisplit: here we introduce our reduced-bit sort method (RB-sort), which is based on sorting bucket IDs and permuting the original key-value pairs afterward. For multisplit, this method is superior to a full radix sort because we expect the number of significant bits across all bucket IDs is less than the number of significant bits across all keys. Current efficient GPU radix sorts (such as CUB) provide an option of sorting only a subset of bits in keys. This results in a significant performance improvement for RB-sort, because we only sort bucket IDs (with log m bits instead of 32-bit keys as in a full radix sort).

Key-only. In this scenario, we first make a label vector containing each key's bucket ID. Then we sort (label, key) pairs based on label values. Since labels are all less than m , we can limit the number of bits in the radix sort to be d log m e .

Key-value. In this scenario, we similarly make a label vector from key elements. Next, we would like to permute (key, value) pairs by sorting labels. One approach is to sort (label, (key, value)) pairs all together, based on label. To do so, we first pack our original key-value pairs into a single 64-bit variable and then do the sort. 4 In the end we unpack these elements to form the final results. Another way is to sort (label, index) pairs and then manually permute key-value pairs based on the permuted indices. We tried both approaches and the former seems to be more efficient. The latter requires non-coalesced global memory accesses and gets worse as m increases, while the former reorders for better coalescing internally and scales better with m .

The main problem with the reduced-bit sort method is its extra overhead (generating labels, packing original key-value pairs, unpacking the results), which makes the whole process less efficient. Another inefficiency with the reduced-bit sort method is that it requires more expensive data movements than an ideal solution. For example, to multisplit on keys only, RB-sort performs a radix sort on (label, key) pairs.

Today's fastest sort primitives do not currently provide APIs for user-specified computations (e.g., bucket identifications) to be integrated as functors directly into sort's kernels; while this is an

4 For data types that are larger than 32 bits, we need further modifications for the RB-sort method to work, because it may no longer be possible to pack each key-value pair into a single 64-bit variable and use the current already-efficient 64-bit GPU sorts for it. For such cases, we first sort the array of indexes, then manually permute the arbitrary sized key-value pairs.

intriguing area of future work for the designers of sort primitives, we believe that our reduced-bit sort appears to be the best solution today for multisplit using current sort primitives.

## 4 ALGORITHM OVERVIEW

In analyzing the performance of methods from the previous section, we make two observations:

- (1) Global computations (such as a global scan) are expensive, and approaches to multisplit that require many rounds, each with a global computation, are likely to be uncompetitive. Any reduction in the cost of global computation is desirable.

- (2) After we derive the permutation, the cost of permuting the elements with a global scatter (consecutive input elements going into arbitrarily distant final destinations) is also expensive. This is primarily because of the non-coalesced memory accesses associated with the scatter. Any increase in memory locality associated with the scatter is also desirable.

The key design insight in this paper is that we can reduce the cost of both global computation and global scatter at the cost of doing more local work, and that doing so is beneficial for overall performance. We begin by describing and analyzing a framework for the different approaches we study in this paper, then discuss the generic structure common to all our implementations.

## 4.1 Our parallel model

Multisplit cannot be solved by using only local operations; i.e., we cannot divide a multisplit problem into two independent subparts and solve each part locally without any communication between the two parts. We thus assume any viable implementation must include at least a single global operation to gather necessary global information from all elements (or group of elements). We generalize the approaches we study in this paper into a series of N rounds, where each round has 3 stages: a set of local operations (which run in parallel on independent subparts of the global problem); a global operation (across all subparts); and another set of local operations. In short: {local, global, local}, repeated N times; in this paper we refer to these three stages as {prescan, scan, postscan}.

The approaches from Section 3 all fit this model. Scan-based split starts by making a flag vector (where the local level is per-thread), performing a global scan operation on all flags, and then ordering the results into their final positions (thread-level local). The iterative (or recursive) scanbased split with m buckets repeats the above approach for m (or d log m e ) rounds. Radix sort also requires several rounds. Each round starts by identifying a bit (or a group of bits) from its keys (local), running a global scan operation, and then locally moving data such that all keys are now sorted based on the selected bit (or group of bits). In radix sort literature, these stages are mostly known as up-sweep, scan and down-sweep. Reduced-bit sort is derived from radix sort; the main differences are that in the first round, the label vector and the new packed values are generated locally (thread-level), and in the final round, the packed key-value pairs are locally unpacked (thread-level) to form the final results.

## 4.2 Multisplit requires a global computation

Let's explore the global and local components of stable multisplit, which together compute a unique permutation of key-value pairs into their final positions. Suppose we have m buckets B 0 ; B 1 ; : : : ; B m 1, each with h 0 ; h 1 ; : : : ; h m 1 elements respectively ( ˝ i h i = n , where n is the total number of elements). If u i 2 B j is the i th element in key vector u , then its final permuted position

p ' i ' should be (from u i 's perspective):

<!-- formula-not-decoded -->

where j j is the cardinality operator that denotes the number of elements within its set argument. The left term is the total number of key elements that belong to the preceding buckets, and the right term is the total number of preceding elements (with respect to u i ) in u i 's bucket, B j . Computing both of these terms in this form and for all elements (for all i ) requires global operations (e.g., computing a histogram of buckets).

## 4.3 Dividing multisplit into subproblems

Equation (1) clearly shows what we need in order to compute each permutation (i.e., final destinations for a stable multisplit solution): a histogram of buckets among all elements ( h k ) as well as local offsets for all elements within the same bucket (the second term). However, it lacks intuition about how we should compute each term. Both terms in equation (1), at their core, answer the following question: to which bucket does each key belong? If we answer this question for every key and for all buckets (hypothetically, for each bucket we store a binary bitmap variable of length n to show all elements that belong to that bucket), then each term can be computed intuitively as follows: 1) histograms are equal to counting all elements in each bucket (reduction of a specific bitmap); 2) local offsets are equivalent to counting all elements from the beginning to that specific index and within the same bucket (scan operation on a specific bitmap). This intuition is closely related to our definition of the scan-based split method in Section 3.2. However, it is practically not competitive because it requires storing huge bitmaps (total of mn binary variables) and then performing global operations on them.

Although the above solution seems impractical for a large number of keys, it seems more favorable for input problems that are small enough. As an extreme example, suppose we wish to perform multisplit on a single key. Each bitmap variable becomes just a single binary bit. Performing reduction and scan operations become as trivial as whether a single bit is set or not. Thus, a divide-and-conquer approach seems like an appealing solution to solve equation (1): we would like to divide our main problem into small enough subproblems such that solving each subproblem is 'easy' for us. By an easy computation we mean that it is either small enough so that we can afford to process it sequentially, or that instead we can use an efficient parallel hardware alternative (such as the GPU's ballot instruction). When we solve a problem directly in this way, we call it a direct solve . Next, we formalize our divide-and-conquer formulation.

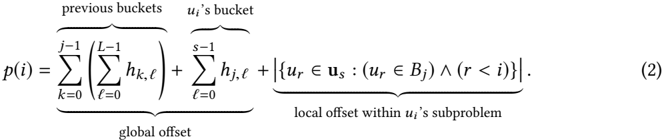

Let us divide our input key vector u into L contiguous subproblems: u = » u 0 ; u 1 ; : : : ; u L 1 … . Suppose each subvector u ' has h 0 ; ' ; h 1 ; ' ; : : : ; h m 1 ; ' elements in buckets B 0 ; B 1 ; : : : B m 1 respectively. For example, for arbitrary values of i , s , and j such that key item u i 2 u s and u i is in bucket B j , equation (1) can be rewritten as (from u i 's perspective):

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Mathematical Equation: Formula (2)

### Overview

The image contains a mathematical equation labeled as (2), which defines a function \( p(i) \). The equation is structured into three components:

1. **Global offset** (summations over previous buckets)

2. **u_i's bucket** (summation over its own bucket)

3. **Local offset within u_i's subproblem** (set-based condition).

### Components/Axes

- **Global Offset**:

- Outer summation: \( \sum_{k=0}^{j-1} \) (index \( k \), ranges from 0 to \( j-1 \))

- Inner summation: \( \sum_{\ell=0}^{L-1} h_{k,\ell} \) (index \( \ell \), ranges from 0 to \( L-1 \))

- Represents aggregated contributions from "previous buckets."

- **u_i's Bucket**:

- Summation: \( \sum_{\ell=0}^{s-1} h_{j,\ell} \) (index \( \ell \), ranges from 0 to \( s-1 \))

- Represents contributions from "u_i's bucket."

- **Local Offset**:

- Set notation: \( \left| \left\{ u_r \in \mathbf{u}_s : (u_r \in B_j) \land (r < i) \right\} \right| \)

- Counts elements \( u_r \) satisfying:

- \( u_r \in \mathbf{u}_s \) (element \( u_r \) belongs to set \( \mathbf{u}_s \))

- \( u_r \in B_j \) (element \( u_r \) is in bucket \( B_j \))

- \( r < i \) (index \( r \) is less than \( i \)).

### Detailed Analysis

- **Global Offset**:

- Double summation aggregates values \( h_{k,\ell} \) across all previous buckets (\( k < j \)) and their subcomponents (\( \ell < L \)).

- No numerical values provided; structure implies hierarchical aggregation.

- **u_i's Bucket**:

- Single summation over \( h_{j,\ell} \), where \( j \) corresponds to the current bucket and \( \ell < s \).

- Direct contribution from the current bucket's subcomponents.

- **Local Offset**:

- Set cardinality operator \( | \cdot | \) quantifies overlapping elements meeting all three conditions.

- Spatial/temporal constraint \( r < i \) suggests temporal or hierarchical ordering.

### Key Observations

1. **Hierarchical Structure**: The equation combines global, bucket-specific, and localized terms, suggesting a multi-level optimization or resource allocation problem.

2. **Set-Based Local Offset**: The use of set notation implies constraints on element inclusion, possibly for fairness or efficiency in subproblem allocation.

3. **No Numerical Data**: The equation is symbolic; no specific values or trends can be extracted.

### Interpretation

This equation likely models a system where:

- **Global offset** captures historical or cumulative effects (e.g., past resource usage).

- **u_i's bucket** represents current contributions.

- **Local offset** adjusts for overlapping or conflicting constraints within a subproblem (e.g., avoiding over-allocation).

The structure resembles algorithms in distributed systems, scheduling, or resource partitioning, where balancing global and local factors is critical. The absence of numerical data prevents quantitative analysis, but the symbolic form emphasizes modularity and constraint handling.

</details>

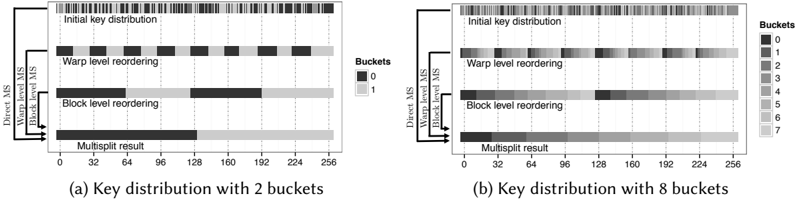

This formulation has two separate parts. The first and second terms require global computation (first: the element count of all preceding buckets across all subproblems, and second: the element count of the same bucket in all preceding subproblems). The third term can be computed locally within each subproblem. Note that equation (1) and (2)'s first terms are equivalent (total number of previous buckets), but the second term in (1) is broken into the second and third terms in (2).

The first and second terms can both be computed with a global histogram computed over L local histograms. A global histogram is generally implemented with global scan operations (here, exclusive prefix-sum). We can characterize this histogram as a scan over a 2-dimensional matrix H = » h i ; ' … m L , where the 'height' of the matrix is the bucket count m and the 'width' of the matrix is the number of subproblems L . The second term can be computed by a scan operation of size L on each row (total of m scans for all buckets). The first term will be a single scan operation of size m over the reduction of all rows (first reduce each row horizontally to compute global histograms and then scan the results vertically). Equivalently, both terms can be computed by a single scan operation of size mL over a row-vectorized H . Either way, the cost of our global operation is roughly proportional to both m and L . We see no realistic way to reduce m . Thus we concentrate on reducing L .

## 4.4 Hierarchical approach toward multisplit localization

We prefer to have small enough subproblems (¯ n ) so that our local computations are 'easy' for a direct solve. For any given subproblem size, we will have L = n ¯ n subproblems to be processed globally as described before. On the other hand, we want to minimize our global computations as well, because they require synchronization among all subproblems and involve (expensive) global memory accesses. So, with a fixed input size and a fixed number of buckets ( n and m ), we would like to both decrease our subproblem size and number of subproblems, which is indeed paradoxical.

Our solution is a hierarchical approach. We do an arbitrary number of levels of divide-andconquer, until at the last level, subproblems are small enough to be solved easily and directly (our preferred ¯ n ). These results are then appropriately combined together to eventually reach the first level of the hierarchy, where now we have a reasonable number of subproblems to be combined together using global computations (our preferred L ).

Another advantage of such an approach is that, in case our hardware provides a memory hierarchy with smaller but faster local memory storage (as GPUs do with register level and shared memory level hierarchies, as opposed to the global memory), we can potentially perform all computations related to all levels except the first one in our local memory hierarchies without any global memory interaction. Ideally, we would want to use all our available register and shared memory with our subproblems to solve them locally, and then combine the results using global operations. In practice, however, since our local memory storage options are very limited, such solution may still lead to a large number of subproblems to be combined with global operations (large L ). As a result, by adding more levels of hierarchy (than the available memory hierarchies in our device) we can systematically organize the way we fill our local memories, process them locally, store intermediate results, and then proceed to the next batch, which overall reduces our global operations. Next, we will theoretically consider such a hierarchical approach ( multi-level localization ) and explore the changes to equation (2).

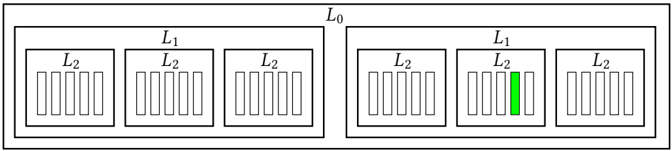

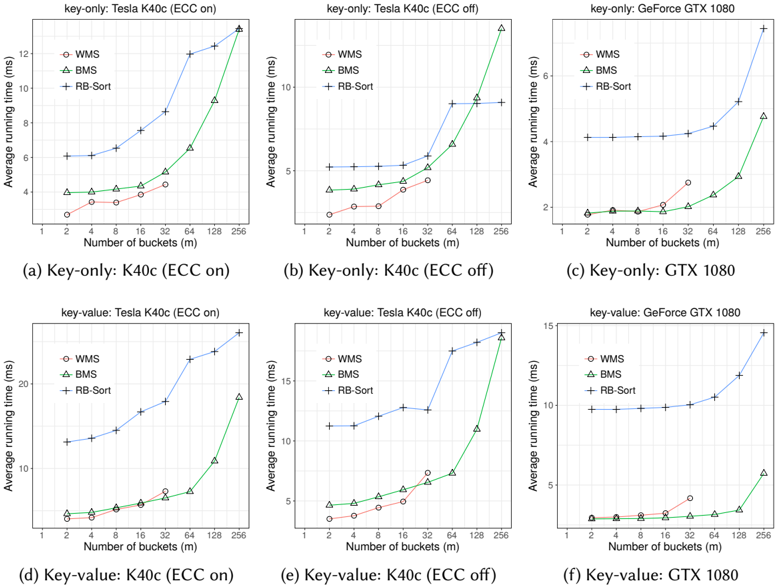

λ -level localization. For any given set of arbitrary non-zero integers f L 0 ; L 1 ; : : : ; L λ 1 g , we can perform λ levels of localizations as follows: Suppose we initially divide our problem into L 0 smaller parts. These divisions form our first level (i.e., the global level ). Next, each subproblem at the first level is divided into L 1 smaller subproblems to form the second level. We continue this process until the λ th level (with L λ 1 subproblems each). Figure 2 shows an example of our hierarchical

Fig. 2. An example for our localization terminology. Here we have 3 levels of localization with L 0 = 2 , L 1 = 3 and L 2 = 5 . For example, the marked subproblem (in green) can be addressed by a tuple ' 1 ; 1 ; 3 ' where each index respectively denotes its position in the hierarchical structure.

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Diagram: Hierarchical Layered Structure with Anomaly Highlight

### Overview

The diagram depicts a two-tiered hierarchical system labeled **L₀** (top-level container) divided into two primary sections: **L₁** (left) and **L₁** (right). Each **L₁** section contains three **L₂** subsections, represented as vertical bar groups. A single **L₂** bar in the right **L₁** section is highlighted in green, indicating a distinct value or status.

---

### Components/Axes

- **Main Container**: Labeled **L₀**, enclosing the entire structure.

- **Primary Sections**:

- **L₁ (Left)**: Contains three **L₂** subsections.

- **L₁ (Right)**: Contains three **L₂** subsections, with the middle **L₂** highlighted green.

- **Subsections**:

- **L₂**: Uniformly labeled across all instances, represented as vertical bars.

- **Color Coding**:

- **Green**: Highlights a single bar in the middle **L₂** of the right **L₁** section.

---

### Detailed Analysis

- **Left L₁ Section**:

- Three **L₂** subsections with identical bar heights (no visual variation).

- **Right L₁ Section**:

- Two **L₂** subsections with identical bar heights (matching the left **L₁** section).

- Middle **L₂** subsection contains a green-highlighted bar, taller than the others in its group.

- **Spatial Grounding**:

- **L₀**: Top-level container, centered.

- **L₁ (Left)**: Positioned left of center within **L₀**.

- **L₁ (Right)**: Positioned right of center within **L₀**.

- **L₂**: Subsections are evenly spaced horizontally within each **L₁** section.

---

### Key Observations

1. **Uniformity**: All **L₂** bars except the green one are identical in height, suggesting consistent values across most components.

2. **Anomaly**: The green-highlighted bar in the right **L₁**’s middle **L₂** is visually distinct, indicating an outlier or critical value.

3. **Hierarchical Symmetry**: The left and right **L₁** sections mirror each other in structure but differ in the presence of the green bar.

---

### Interpretation

This diagram likely represents a system with layered components (e.g., software modules, hardware layers, or data processing stages). The **L₀** container acts as the overarching framework, while **L₁** and **L₂** denote nested sub-systems or processes. The green bar’s placement in the right **L₁**’s middle **L₂** suggests:

- A **critical component** or **threshold** within that layer.

- A **deviation from normal operation** (e.g., error, peak performance, or resource allocation).

- A **central role** for the highlighted **L₂** in the right **L₁** section, potentially acting as a bridge or focal point between layers.

The absence of numerical labels or explicit legends leaves the exact meaning of the green bar open to context, but its prominence implies it is a key focus for analysis or intervention.

</details>

division of the multisplit problem. There are total of L total = L 0 L 1 : : : L λ 1 smaller problems and their results should be hierarchically added together to compute the final permutation p ' i ' . Let ' ' 0 ; ' 1 ; : : : ; ' λ 1 ' denote a subproblem's position in our hierarchical tree: ' 0th branch from the first level, ' 1th branch from the second level, and so forth until the last level. Among all elements within this subproblem, we count those that belong to bucket B i as h i ; ' ' 0 ; : : :; ' λ 1 ' . Similar to our previous permutation computation, for an arbitrary i , j , and ' s 0 ; : : : ; s λ 1 ' , suppose u i 2 u ' s 0 ; : : :; s λ 1 ' and u i 2 B j . We can write p ' i ' as follows (from u i 's perspective):

<!-- formula-not-decoded -->

There is an important resemblance between this equation and equation (2). The first and second terms (from top to bottom) are similar, with the only difference that each h k ; ' is now further broken into L 1 L λ 1 subproblems (previously it was just L = L 0 subproblems). The other terms of (3) can be seen as a hierarchical disintegration of the local offset in (2).

Similar to Section 4.3, we can form a matrix H = » h j ; ' 0 … m L 0 for global computations where

<!-- formula-not-decoded -->

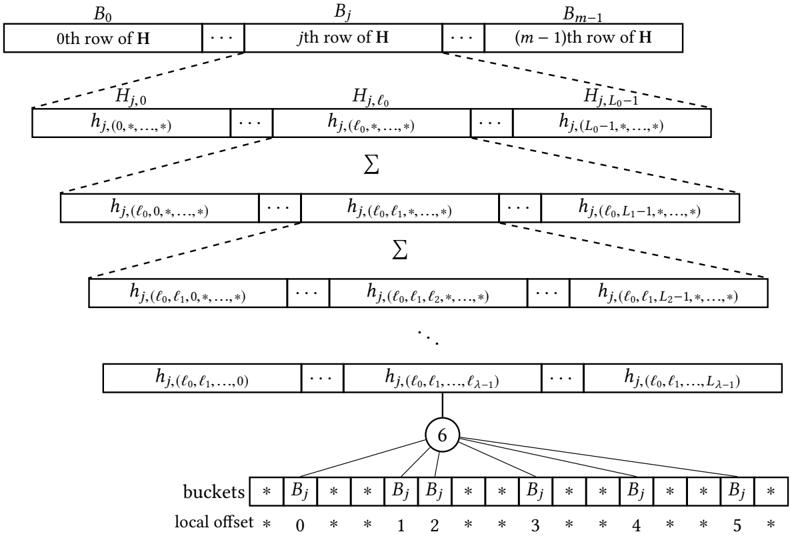

Figure 3 depicts an schematic example of multiple levels of localization. At the highest level, for any arbitrary subproblem ' ' 0 ; ' 1 : : : ; ' λ 1 ' , local offsets per key are computed as well as all bucket counts. Bucket counts are then summed and sent to a lower level to form the bucket count for more subproblems ( L λ 1 consecutive subproblems). This process is continued until reaching the

Fig. 3. Each row of H belongs to a different bucket. Results from different subproblems in different levels are added together to form a lower level bucket count. This process is continued until reaching the first level where H is completed.

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Diagram: Hierarchical Matrix Decomposition with Bucket Allocation

### Overview

The diagram illustrates a hierarchical decomposition of matrix elements into "buckets" with local offsets. It shows a multi-level structure where rows of a matrix **H** are broken down into progressively refined sub-elements, ultimately mapped to buckets labeled **B₀** to **B₅** with associated local offsets (0–5). Dotted lines indicate relationships between matrix elements and their bucket assignments.

---

### Components/Axes

1. **Matrix Rows (Top Section)**:

- **B₀** to **Bₘ₋₁**: Represent rows of matrix **H** (0th to (m−1)th row).

- Each row contains elements like **Hⱼ,₀**, **Hⱼ,ℓ₀**, **Hⱼ,L₀−1**, with indices varying across dimensions (e.g., **hⱼ(0,*,...,*)**, **hⱼ(ℓ₀,*,...,*)**).

2. **Summation Layers (Middle Section)**:

- **Σ Symbols**: Indicate aggregation operations across indices (e.g., **hⱼ(ℓ₀,ℓ₁,*,...,*)**).

- Elements are grouped by increasing specificity in their index patterns (e.g., **hⱼ(ℓ₀,ℓ₁,...,L₁−1)**).

3. **Buckets and Local Offsets (Bottom Section)**:

- **Buckets**: Labeled **B₀** to **B₅**, each marked with a star (*) symbol.

- **Local Offsets**: Numerical values (0–5) positioned below buckets, suggesting positional identifiers within buckets.

4. **Dotted Lines**:

- Connect matrix elements to buckets, implying a mapping or allocation logic (e.g., **hⱼ(ℓ₀,ℓ₁,...,L₁−1)** maps to **B₃** with offset 3).

---

### Detailed Analysis

- **Matrix Element Indices**:

- Elements are parameterized by indices like **ℓ₀, ℓ₁, ..., L₁−1**, which likely represent hierarchical or partitioned dimensions of **H**.

- The use of **\*** in indices (e.g., **hⱼ(0,*,...,*)**) suggests wildcard or variable dimensions, possibly indicating sparsity or aggregation.

- **Bucket Allocation**:

- Buckets **B₀–B₅** are evenly spaced at the bottom, with local offsets 0–5 directly below them.

- Dotted lines from elements to buckets imply a one-to-one or many-to-one mapping (e.g., **hⱼ(ℓ₀,ℓ₁,...,L₁−1)** maps to **B₃** with offset 3).

- **Hierarchical Structure**:

- The diagram progresses from coarse (entire rows **B₀–Bₘ₋₁**) to fine-grained (bucket-specific elements **hⱼ(ℓ₀,ℓ₁,...,L₁−1)**).

- Summation symbols (**Σ**) indicate iterative refinement or aggregation across dimensions.

---

### Key Observations

1. **No Numerical Data**: The diagram lacks explicit numerical values, focusing instead on symbolic relationships and structural patterns.

2. **Consistent Labeling**:

- All bucket labels (**B₀–B₅**) and offsets (0–5) are explicitly marked.

- Matrix elements follow a consistent indexing convention (e.g., **Hⱼ,ℓ₀**, **Hⱼ,ℓ₁**).

3. **Spatial Grounding**:

- **B₀** is top-left, **Bₘ₋₁** is top-right.

- Buckets are bottom-aligned, with offsets directly beneath them.

4. **Legend Ambiguity**:

- No explicit legend is present, but the star (*) symbol and offset numbering likely serve as implicit identifiers.

---

### Interpretation

This diagram represents a **hierarchical data structure** for organizing matrix elements into optimized storage or computation units (buckets). Key insights:

- **Efficient Access**: By decomposing **H** into buckets with local offsets, the structure may enable faster retrieval or parallel processing of sub-elements.

- **Aggregation Logic**: Summation symbols (**Σ**) suggest that certain elements are precomputed or grouped for efficiency (e.g., sparse matrix operations).

- **Indexing Strategy**: The use of **\*** in indices implies a flexible or adaptive partitioning scheme, possibly for handling variable-sized data or dynamic resizing.

The absence of numerical values limits quantitative analysis, but the structural patterns emphasize **modularity** and **scalability** in matrix operations. This could be relevant to fields like machine learning (e.g., tensor decomposition) or distributed computing (e.g., sharding data across nodes).

</details>

first level (the global level) where we have bucket counts for each L 1 L λ 1 consecutive subproblems. This is where H is completed, and we can proceed with our global computation. Next, we discuss the way we compute local offsets.

## 4.5 Direct solve: Local offset computation

At the very last level of our localization, each element must compute its own local offset, which represents the number of elements in its subproblem (with our preferred size ¯ n ) that both precede it and share its bucket. To compute local offsets of a subproblem of size ¯ n , we make a new binary matrix ¯ H m ¯ n , where each row represents a bucket and each column represents a key element. Each entry of this new matrix is one if the corresponding key element belongs to that bucket, and zero otherwise. Then by performing an exclusive scan on each row, we can compute local offsets for all elements belonging to that row (bucket). So each subproblem requires the following computations:

- (1) Mark all elements in each bucket (making local ¯ H )

- (2) m local reductions over the rows of ¯ H to compute local histograms (a column in H )

- (3) m local exclusive scans on rows of ¯ H (local offsets)

For clarity, we separate steps 2 and 3 above, but we can achieve both with a single local scan operation. Step 2 provides all histogram results that we need in equations (2) or (3) (i.e., all h k ; ' ' 0 ; : : :; ' 1 ' values) and step 3 provides the last term in either equation (Fig. 3).

λ

It is interesting to note that as an extreme case of localization, we can have L = n subproblems, where we divide our problem so much that in the end each subproblem is a single element. In such case, ¯ H is itself a binary value. Thus, step 2's result is either 0 or 1. The local offset (step 3) for such a singleton matrix is always a zero (there is no other element within that subproblem).

## 4.6 Our multisplit algorithm

Now that we've outlined the different computations required for the multisplit, we can present a high-level view of the algorithmic skeleton we use in this paper. We require three steps:

- (1) Local . For each subproblem at the highest level of our localization, for each bucket, count the number of items in the subproblem that fall into that bucket (direct solve for bucket counts). Results are then combined hierarchically and locally (based on equation (3)) until we have bucket counts per subproblem for the first level (global level).

- (2) Global . Scan the bucket counts for each bucket across all subproblems in the first level (global level), then scan the bucket totals. Each subproblem now knows both a) for each bucket, the total count for all its previous buckets across the whole input vector (term 1 in equation (3)) and b) for each bucket, the total count from the previous subproblems (term 2 in equation (3)).

- (3) Local . For each subproblem at the highest level of our localization, for each item, recompute bucket counts and compute the local offset for that item's bucket (direct solve). Local results for each level are then appropriately combined together with the global results from the previous levels (based on equation (3)) to compute final destinations. We can now write each item in parallel into its location in the output vector.

## 4.7 Reordering elements for better locality

After computing equation (3) for each key element, we can move key-value pairs to their final positions in global memory. However, in general, any two consecutive key elements in the original input do not belong to the same bucket, and thus their final destination might be far away from each other (i.e., a global scatter). Thus, when we write them back to memory, our memory writes are poorly coalesced, and our achieved memory bandwidth during this global scatter is similarly poor. This results in a huge performance bottleneck. How can we increase our coalescing and thus the memory bandwidth of our final global scatter? Our solution is to reorder our elements within a subproblem at the lowest level (or any other higher level) before they are scattered back to memory. Within a subproblem, we attempt to place elements from the same bucket next to each other, while still preserving order within a bucket (and thus the stable property of our multisplit implementation). We do this reordering at the same time we compute local offsets in equation (2). How do we group elements from the same bucket together? A local multisplit within the subproblem!

We have already computed histogram and local offsets for each element in each subproblem. We only need to perform another local exclusive scan on local histogram results to compute new positions for each element in its subproblem (computing equation (1) for each subproblem). We emphasize that performing this additional stable multisplit on each subproblem does not change its histogram and local offsets, and hence does not affect any of our computations described previously from a global perspective; the final multisplit result is identical. But, it has a significant positive impact on the locality of our final data writes to global memory.

It is theoretically better for us to perform reordering in our largest subproblems (first level) so that there are potentially more candidate elements that might have consecutive/nearby final destinations. However, in practice, we may prefer to reorder elements in higher levels, not because

they provide better locality but for purely practical limitations (such as limited available local memory to contain all elements within that subproblem).

## 5 IMPLEMENTATION DETAILS

So far we have discussed our high level ideas for implementing an efficient multisplit algorithm for GPUs. In this section we thoroughly describe our design choices and implementation details. We first discuss existing memory and computational hierarchies in GPUs, and conventional localization options available on such devices. Then we discuss traditional design choices for similar problems such as multisplit, histogram, and radix sort. We follow this by our own design choices and how they differ from previous work. Finally, we propose three implementation variations of multisplit, each with its own localization method and computational details.

## 5.1 GPU memory and computational hierarchies

As briefly discussed in Section 2, GPUs offer three main memory storage options: 1) registers dedicated to each thread, 2) shared memory dedicated to all threads within a thread-block, 3) global memory accessible by all threads in the device. 5 From a computational point of view there are two main computational units: 1) threads have direct access to arithmetic units and perform registerbased computations, 2) all threads within a warp can perform a limited but useful set of hardware based warp-wide intrinsics (e.g., ballots, shuffles, etc.). Although the latter is not physically a new computational unit, its inter-register communication among threads opens up new computational capabilities (such as parallel voting).

Based on memory and computational hierarchies discussed above, there are four primary ways of solving a problem on the GPU: 1) thread-level, 2) warp-level, 3) block-level, and 4) devicelevel (global). Traditionally, most efficient GPU programs for multisplit, histogram and radix sort [5, 17, 21] start from thread-level computations, where each thread processes a group of input elements and performs local computations (e.g., local histograms). These thread-level results are then usually combined to form a block-level solution, usually to benefit from the block's shared memory. Finally, block-level results are combined together to form a global solution. If implemented efficiently, these methods are capable of achieving high-quality performance from available GPU resources (e.g., CUB's high efficiency in histogram and radix sort).

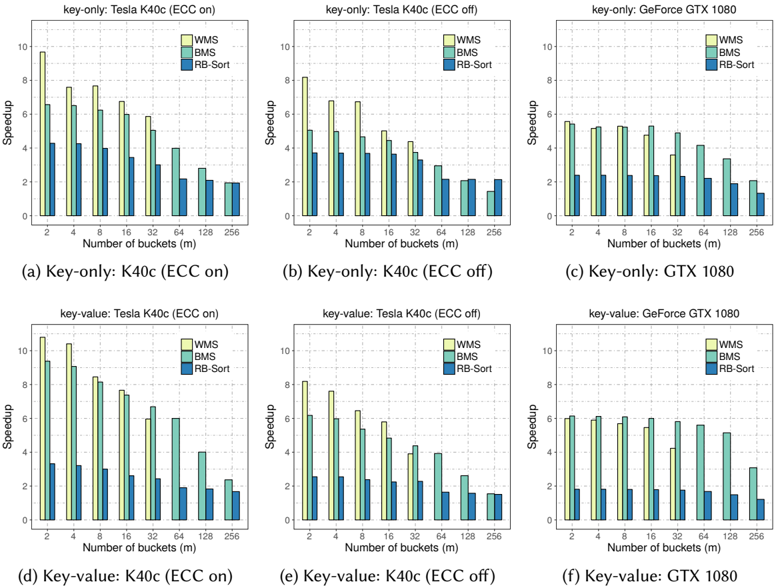

In contrast, we advocate another way of solving these problems, based on a warp granularity. We start from a warp-level solution and then proceed up the hierarchy to form a device-wide (global) solution (we may bypass the block-level solution as well). Consequently, we target two major implementation alternatives to solve our multisplit problem: 1) warp-level ! device-level, 2) warp-level ! block-level ! device-level (in Section 5.8, we discuss the costs and benefits of our approaches compared to a thread-level approach). Another algorithmic option that we outlined in Section 4.7 was to reorder elements to get better (coalesced) memory accesses. As a result of combining these two sets of alternatives, there will be four possible variations that we can explore. However, if we neglect reordering, our block-level solution will be identical to our warp-level solution, which leaves us with three main final options that all start with warp-level subproblem solutions and end up with a device-level global solution: 1) no reordering, 2) with reordering and bypassing a block-level solution, 3) with reordering and including a block-level solution. Next, we describe these three implementations and show how they fit into the multi-level localization model we described in Section 4.4.

5 There are other types of memory units in GPUs as well, such as local, constant, and texture memory. However, these are in general special-purpose memories and hence we have not targeted them in our design.

## 5.2 Our proposed multisplit algorithms

So far we have seen that we can reduce the size and cost of our global operation (size of H ) by doing more local work (based on our multi-level localization and hierarchical approach). This is a complex tradeoff, since we prefer a small number of subproblems in our first level (global operations), as well as small enough subproblem sizes in our last levels so that they can easily be solved within a warp. What remains is to choose the number and size of our localization levels and where to perform reordering. All these design choices should be made based on a set of complicated factors such as available shared memory and registers, achieved occupancy, required computational load, etc.

In this section we describe three novel and efficient multisplit implementations that explore different points in the design space, using the terminology that we introduced in Section 4.4 and Section 5.1.

- Direct Multisplit Rather than split the problem into subproblems across threads, as in traditional approaches [17], Direct Multisplit (DMS) splits the problem into subproblems across warps (warp-level approach), leveraging efficient warp-wide intrinsics to perform the local computation.

- Warp-level Multisplit Warp-level Multisplit (WMS) also uses a warp-level approach, but additionally reorders elements within each subproblem for better locality.

- Block-level Multisplit Block-level Multisplit (BMS) modifies WMS to process larger-sized subproblems with a block-level approach that includes reordering, offering a further reduction in the cost of the global step at the cost of considerably more complex local computations.

We now discuss the most interesting aspects of our implementations of these three approaches, separately describing how we divide the problem into smaller pieces (our localization strategies), compute histograms and local offsets for larger subproblem sizes, and reorder final results before writing them to global memory to increase coalescing.

## 5.3 Localization and structure of our multisplit

In Section 4 we described our parallel model in solving the multisplit problem. Theoretically, we would like to both minimize our global computations as well as maximize our hardware utilization. However, in practice designing an efficient GPU algorithm is more complicated. There are various factors that need to be considered, and sometimes even be smartly sacrificed in order to satisfy a more important goal: efficiency of the whole algorithm. For example, we may decide to recompute the same value multiple times in different kernel launches, just so that we do not need to store them in global memory for further reuse.

In our previous work [3], we implemented our multisplit algorithms with a straightforward localization strategy: Direct and Warp-level Multisplit divided problems into warp-sized subproblems (two levels of localization), and Block-level Multisplit used block-sized subproblems (three levels of localization) to extract more locality by performing more complicated computations needed for reordering. In order to have better utilization of available resources, we assigned multiple similar tasks to each launched warp/block (so each warp/block processed multiple independent subproblems). Though this approach was effective, we still faced relatively expensive global computations, and did not extract enough locality from our expensive reordering step. Both of these issues could be remedied by using larger subproblems within the same localization hierarchy. However, larger subproblems require more complicated computations and put more pressure on the limited available GPU resources (registers, shared memory, memory bandwidth, etc.). Instead, we redesigned our implementations to increase the number of levels of localization. This lets us

Table 1. Size of subproblems for each multisplit algorithm. Total size of our global computations will then be the size of H equal to mn ¯ n .

| Algorithm | subproblem size (¯ n ) |

|-------------|-------------------------------------------------------|

| DMS | N (window/warp) N thread |

| WMS | N (tile/warp) N (window/tile) N thread |

| BMS | N (tile/block) N (warp/tile) N (window/warp) N thread |

have larger subproblems, while systematically coordinating our computational units (warps/blocks) and available resources to achieve better results.

Direct Multisplit. Our DMS implementation has three levels of localizations: Each warp is assigned to a chunk of consecutive elements (first level). This chunk is then divided into a set of consecutive windows of warp-width ( N thread = 32) size (second level). For each window, we multisplit without any reordering (third level).

Warp-level Multisplit. WMS is similar to DMS, but it also performs reordering to get better locality. In order to get better resource utilization, we add another level of localization compared to DMS (total of four). Each warp performs reordering over only a number of processed windows (a tile ), and then continues to process the next tile. In general, each warp is in charge of a chunk of consecutive elements (first level). Each chunk is divided into several consecutive tiles (second level). Each tile is processed by a single warp and reordered by dividing it into several consecutive windows (third level). Each window is then directly processed by warp-wide methods (fourth level). The reason that we add another level of localization for each tile is simply because we do not have sufficient shared memory per warp to store the entire subproblem.

Block-level Multisplit. BMS has five levels of localization. Each thread-block is in charge of a chunk of consecutive elements (first level). Each chunk is divided into a consecutive number of tiles (second level). Each tile is processed by all warps within a block (third level) and reordering happens in this level. Each warp processes multiple consecutive windows of input data within the tile (fourth level). In the end each window is processed directly by using warp-wide methods (fifth level). Here, for a similar reason as in WMS (limited shared memory), we added another level of localization per tile.

Figure 4 shows a schematic example of our three methods next to each other. Note that we have flexibility to tune subproblem sizes in each implementation by changing the sizing parameters in Table 1.

Next we briefly highlight the general structure of our computations (based on our model in Section 4.1). We use DMS to illustrate:

Pre-scan (local). Each warp reads a window of key elements (of size N thread = 32), generates a local matrix ¯ H , and computes its histogram (reducing each row). Next, histogram results are stored locally in registers. Then, each warp continues to the next window and repeats the process, adding histogram results to the results from previous windows. In the end, each warp has computed a single column of H and stores its results into global memory.

Scan (global). We perform an exclusive scan operation over the row-vectorized H and store the result back into global memory (e.g., matrix G = » д i ; ' … m L 0 ).

Fig. 4. Different localizations for DMS, WMS and BMS are shown schematically. Assigned indices are just for illustration. Each small rectangle denotes a window of 32 consecutive elements. Reordering takes place per tile, but global offsets are computed per subproblem.

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Diagram: Subproblem Localization Structures

### Overview

The image presents three technical diagrams illustrating different subproblem localization strategies: (a) DMS localization, (b) WMS localization, and (c) BMS localization. Each diagram uses rectangular blocks to represent computational units (warps, tiles, blocks) and their hierarchical organization.

### Components/Axes

1. **DMS Localization (a)**

- Title: "Subproblem per warp"

- Structure: Single row of 10 identical vertical rectangles (warps)

- Labels: No axis markers or numerical values

2. **WMS Localization (b)**

- Title: "Subproblem per warp"

- Structure: 3 horizontal tiles (tile 0, tile 1, tile 2), each containing 3 vertical rectangles (warps)

- Labels: Tile identifiers (0-2) positioned above each tile group

3. **BMS Localization (c)**

- Title: "Subproblem per block"

- Structure: 2 horizontal blocks (tile 0, tile 1), each containing 3 warps (warp 0-2), with each warp divided into 3 vertical rectangles

- Labels:

- Block identifiers (tile 0/1) at top of each block

- Warp identifiers (0-2) above each warp group

- No numerical values or axis markers

### Detailed Analysis

- **DMS Localization**: Simplest structure with direct 1:1 mapping between subproblems and warps (10 warps total)

- **WMS Localization**: Introduces tile-level organization (3 tiles × 3 warps = 9 warps total)

- **BMS Localization**: Most granular with block-tile-warp hierarchy (2 blocks × 3 tiles × 3 warps = 18 warps total)

- All diagrams use uniform rectangle proportions, suggesting equal computational load per unit

- No color coding or numerical differentiation between elements

### Key Observations

1. Hierarchical complexity increases from DMS → WMS → BMS

2. BMS localization shows 2× more blocks than WMS tiles

3. Warp count remains consistent (3 warps per tile/block in WMS/BMS)

4. No explicit performance metrics or timing data present

### Interpretation

This diagram illustrates three computational paradigms for parallel processing:

1. **DMS** represents basic warp-level parallelism

2. **WMS** adds tile-level organization for better resource utilization

3. **BMS** demonstrates block-level management for complex workloads

The progression suggests increasing architectural sophistication for handling computational dependencies. The absence of numerical data implies this is a conceptual model rather than performance benchmark. The uniform rectangle sizing indicates equal resource allocation per subproblem unit across all architectures.

</details>

Post-scan (local). Each warp reads a window of key-value pairs, generates its local matrix ¯ H again, 6 and computes local offsets (with a local exclusive scan on each row). Similar to the pre-scan stage, we store histogram results into registers. We then compute final positions by using the global base addresses from G , warp-wide base addresses (warp-wide histogram results up until that window), and local offsets. Then we write key-value pairs directly to their storage locations in the output vector. For example, if key u 2 B i is read by warp ' and its local offset is equal to k , its final position will be д i ; ' + h i + k , where h i is the warp-wide histogram result up until that window (referring to equation (3) is helpful to visualize how we compute final addresses with a multi-level localization).

Algorithm 1 shows a simplified pseudo-code of the DMS method (with less than 32 buckets). Here, we can identify each key's bucket by using a bucket\_identifier() function. We compute warp histogram and local offsets with warp\_histogram() and warp\_offsets() procedures, which we describe in detail later in this section (Alg. 2 and 3).

## 5.4 Ballot-based voting

In this section, we momentarily change our direction into exploring a theoretical problem about voting. We then use this concept to design and implement our warp-wide histograms (Section 5.5). We have previously emphasized our design decision of a warp-wide granularity. This decision is enabled by the efficient warp-wide intrinsics of NVIDIA GPUs. In particular, NVIDIA GPUs support a warp-wide intrinsic \_\_ballot(predicate) , which performs binary voting across all threads in a warp. More specifically, each thread evaluates a local Boolean predicate, and depending on the result of that predicate (true or false), it toggles a specific bit corresponding to its position in the warp (i.e., lane ID from 0 to 31). With a 32-element warp, this ballot fits in a 32-bit register, so that the i th thread in a warp toggles the i th bit. After the ballot is computed, every participant thread can access the ballot result (as a bitmap) and see the voting result from all other threads in the same warp.

Now, the question we want to answer within our multisplit implementation is a generalization to the voting problem: Suppose there are m arbitrary agents (indexed from 0 to n 1), each evaluating a personalized non-binary predicate (a vote for a candidate) that can be any value from 0 to m 1. How is it possible to perform the voting so that any agent can know all the results (who voted for whom)?

6 Note that we compute ¯ H a second time rather than storing and reloading the results from the computation in the first step. This is deliberate. We find that the recomputation is cheaper than the cost of global store and load.

## ALGORITHM 1: The Direct Multisplit (DMS) algorithm

```

```

A naive way to solve this problem is to perform m separate binary votes. For each round 0 i < m , we just ask if anyone wants to vote for i . Each vote has a binary result (either voting for i or not). After m votes, any agent can look at the m n ballots and know which agent voted for which of the m candidates.

We note that each agent's vote (0 v i < n ) can be represented by log m binary digits. So a more efficient way to solve this problem requires just log m binary ballots per agent. Instead of directly asking for a vote per candidate ( m votes/bitmaps), we can ask for consecutive bits of each agent's vote (a bit at a time) and store them as a bitmap (for a total of log m bitmaps, each bitmap of size n ). For the j th bitmap (0 j < d log m e ), every i th bit is one if only the i th agent have voted to a

candidate whose j th bit in its binary representation was also one (e.g., the 0th bitmap includes all agents who voted for an odd-numbered candidate).

As a result, these d log m e bitmaps together contain all information to reconstruct every agent's vote. All we need to do is to imagine each bitmap as a row of a m n matrix. Each column represent the binary representation of the vote of that specific agent. Next, we use this scheme to perform some of our warp-wide computations, only using NVIDIA GPU's binary ballots.

## 5.5 Computing Histograms and Local Offsets

The previous subsections described why and how we create a hierarchy of localizations. Now we turn to the problem of computing a direct solve of histograms and local offsets on a warp-sized (DMS or WMS) or a block-sized (BMS) problem. In our implementation, we leverage the balloting primitives we described in Section 5.4. We assume throughout this section that the number of buckets does not exceed the warp width ( m N thread ). Later we extend our discussion to any number of buckets in Section 5.7.

- 5.5.1 Warp-level Histogram. Previously, we described our histogram computations in each subproblem as forming a binary matrix ¯ H and doing certain computations on each row (reduction and scan). Instead of explicitly forming the binary matrix ¯ H , each thread generates its own version of the rows of this matrix and stores it in its local registers as a binary bitmap. Then per-row reduction is equivalent to a population count operation ( \_\_popc ), and exclusive scan equates to first masking corresponding bits and then reducing the result. We now describe both in more detail.