## Model-Agnostic Meta-Learning for Fast Adaptation of Deep Networks

Chelsea Finn 1 Pieter Abbeel 1 2 Sergey Levine 1

## Abstract

We propose an algorithm for meta-learning that is model-agnostic, in the sense that it is compatible with any model trained with gradient descent and applicable to a variety of different learning problems, including classification, regression, and reinforcement learning. The goal of meta-learning is to train a model on a variety of learning tasks, such that it can solve new learning tasks using only a small number of training samples. In our approach, the parameters of the model are explicitly trained such that a small number of gradient steps with a small amount of training data from a new task will produce good generalization performance on that task. In effect, our method trains the model to be easy to fine-tune. We demonstrate that this approach leads to state-of-the-art performance on two fewshot image classification benchmarks, produces good results on few-shot regression, and accelerates fine-tuning for policy gradient reinforcement learning with neural network policies.

## 1. Introduction

Learning quickly is a hallmark of human intelligence, whether it involves recognizing objects from a few examples or quickly learning new skills after just minutes of experience. Our artificial agents should be able to do the same, learning and adapting quickly from only a few examples, and continuing to adapt as more data becomes available. This kind of fast and flexible learning is challenging, since the agent must integrate its prior experience with a small amount of new information, while avoiding overfitting to the new data. Furthermore, the form of prior experience and new data will depend on the task. As such, for the greatest applicability, the mechanism for learning to learn (or meta-learning) should be general to the task and

1 University of California, Berkeley 2 OpenAI. Correspondence to: Chelsea Finn < cbfinn@eecs.berkeley.edu > .

Proceedings of the 34 th International Conference on Machine Learning , Sydney, Australia, PMLR 70, 2017. Copyright 2017 by the author(s).

the form of computation required to complete the task.

In this work, we propose a meta-learning algorithm that is general and model-agnostic, in the sense that it can be directly applied to any learning problem and model that is trained with a gradient descent procedure. Our focus is on deep neural network models, but we illustrate how our approach can easily handle different architectures and different problem settings, including classification, regression, and policy gradient reinforcement learning, with minimal modification. In meta-learning, the goal of the trained model is to quickly learn a new task from a small amount of new data, and the model is trained by the meta-learner to be able to learn on a large number of different tasks. The key idea underlying our method is to train the model's initial parameters such that the model has maximal performance on a new task after the parameters have been updated through one or more gradient steps computed with a small amount of data from that new task. Unlike prior meta-learning methods that learn an update function or learning rule (Schmidhuber, 1987; Bengio et al., 1992; Andrychowicz et al., 2016; Ravi & Larochelle, 2017), our algorithm does not expand the number of learned parameters nor place constraints on the model architecture (e.g. by requiring a recurrent model (Santoro et al., 2016) or a Siamese network (Koch, 2015)), and it can be readily combined with fully connected, convolutional, or recurrent neural networks. It can also be used with a variety of loss functions, including differentiable supervised losses and nondifferentiable reinforcement learning objectives.

The process of training a model's parameters such that a few gradient steps, or even a single gradient step, can produce good results on a new task can be viewed from a feature learning standpoint as building an internal representation that is broadly suitable for many tasks. If the internal representation is suitable to many tasks, simply fine-tuning the parameters slightly (e.g. by primarily modifying the top layer weights in a feedforward model) can produce good results. In effect, our procedure optimizes for models that are easy and fast to fine-tune, allowing the adaptation to happen in the right space for fast learning. From a dynamical systems standpoint, our learning process can be viewed as maximizing the sensitivity of the loss functions of new tasks with respect to the parameters: when the sensitivity is high, small local changes to the parameters can lead to

large improvements in the task loss.

The primary contribution of this work is a simple modeland task-agnostic algorithm for meta-learning that trains a model's parameters such that a small number of gradient updates will lead to fast learning on a new task. We demonstrate the algorithm on different model types, including fully connected and convolutional networks, and in several distinct domains, including few-shot regression, image classification, and reinforcement learning. Our evaluation shows that our meta-learning algorithm compares favorably to state-of-the-art one-shot learning methods designed specifically for supervised classification, while using fewer parameters, but that it can also be readily applied to regression and can accelerate reinforcement learning in the presence of task variability, substantially outperforming direct pretraining as initialization.

## 2. Model-Agnostic Meta-Learning

We aim to train models that can achieve rapid adaptation, a problem setting that is often formalized as few-shot learning. In this section, we will define the problem setup and present the general form of our algorithm.

## 2.1. Meta-Learning Problem Set-Up

The goal of few-shot meta-learning is to train a model that can quickly adapt to a new task using only a few datapoints and training iterations. To accomplish this, the model or learner is trained during a meta-learning phase on a set of tasks, such that the trained model can quickly adapt to new tasks using only a small number of examples or trials. In effect, the meta-learning problem treats entire tasks as training examples. In this section, we formalize this metalearning problem setting in a general manner, including brief examples of different learning domains. We will discuss two different learning domains in detail in Section 3.

We consider a model, denoted f , that maps observations x to outputs a . During meta-learning, the model is trained to be able to adapt to a large or infinite number of tasks. Since we would like to apply our framework to a variety of learning problems, from classification to reinforcement learning, we introduce a generic notion of a learning task below. Formally, each task T = {L ( x 1 , a 1 , . . . , x H , a H ) , q ( x 1 ) , q ( x t +1 | x t , a t ) , H } consists of a loss function L , a distribution over initial observations q ( x 1 ) , a transition distribution q ( x t +1 | x t , a t ) , and an episode length H . In i.i.d. supervised learning problems, the length H =1 . The model may generate samples of length H by choosing an output a t at each time t . The loss L ( x 1 , a 1 , . . . , x H , a H ) → R , provides task-specific feedback, which might be in the form of a misclassification loss or a cost function in a Markov decision process.

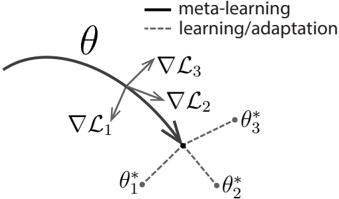

Figure 1. Diagram of our model-agnostic meta-learning algorithm (MAML), which optimizes for a representation θ that can quickly adapt to new tasks.

<details>

<summary>Image 1 Details</summary>

### Visual Description

\n

## Diagram: Meta-Learning Adaptation

### Overview

The image is a diagram illustrating the concept of meta-learning and adaptation in a parameter space. It depicts a curved trajectory representing the parameter space, with arrows indicating the direction of gradient descent during meta-learning and adaptation steps. The diagram focuses on how a model's parameters (θ) are updated through these processes.

### Components/Axes

* **θ:** Represents the model parameters. A curved line shows the trajectory of these parameters.

* **∇L₁ , ∇L₂ , ∇L₃:** Represent the gradients of the loss function (L) at different stages. These are vectors indicating the direction of steepest descent.

* **θ₁*, θ₂*, θ₃*:** Represent the optimized parameter values after adaptation or learning steps.

* **Legend:**

* Solid line: "meta-learning"

* Dashed line: "learning/adaptation"

### Detailed Analysis or Content Details

The diagram shows a curved trajectory in parameter space, labeled with 'θ'. Three gradient vectors are shown: ∇L₁, ∇L₂, and ∇L₃.

* **∇L₁:** Points downwards and slightly to the left. It originates from a point on the curved trajectory and leads towards θ₁*. The line connecting the end of the vector to θ₁* is dashed, indicating "learning/adaptation".

* **∇L₂:** Points downwards and slightly to the right. It originates from a point on the curved trajectory and leads towards θ₂*. The line connecting the end of the vector to θ₂* is dashed, indicating "learning/adaptation".

* **∇L₃:** Points downwards. It originates from a point on the curved trajectory and leads towards the central point where θ₁*, θ₂*, and θ₃* converge. The line connecting the end of the vector to θ₃* is dashed, indicating "learning/adaptation".

* The solid line connecting the starting points of the gradient vectors to the central point represents "meta-learning". This suggests that meta-learning guides the overall direction of adaptation.

* The three optimized parameter values (θ₁*, θ₂*, θ₃*) converge to a single point, indicating a common optimal solution.

### Key Observations

* The gradient vectors (∇L₁, ∇L₂, ∇L₃) are not aligned, suggesting that the loss landscape is complex and the optimization process is not straightforward.

* The convergence of θ₁*, θ₂*, and θ₃* to a single point indicates that the meta-learning process is successful in finding a good initialization or adaptation strategy.

* The distinction between solid (meta-learning) and dashed (learning/adaptation) lines clearly delineates the two levels of optimization.

### Interpretation

This diagram illustrates the core idea of meta-learning: learning *how* to learn. The curved trajectory represents the parameter space of a model. The "learning/adaptation" steps (dashed lines) represent the optimization of the model's parameters for a specific task, guided by the gradient of the loss function (∇L). The "meta-learning" step (solid line) represents the optimization of the learning process itself, guiding the adaptation steps towards a more efficient and effective solution.

The convergence of the optimized parameters (θ₁*, θ₂*, θ₃*) suggests that meta-learning has successfully identified a good initialization or adaptation strategy that works well across different tasks. The diagram highlights the hierarchical nature of meta-learning, where the meta-learner optimizes the learner's adaptation process. The non-alignment of the gradient vectors suggests that the optimization landscape is complex, and meta-learning is crucial for navigating it effectively. The diagram does not provide numerical data, but rather a conceptual representation of the process.

</details>

In our meta-learning scenario, we consider a distribution over tasks p ( T ) that we want our model to be able to adapt to. In the K -shot learning setting, the model is trained to learn a new task T i drawn from p ( T ) from only K samples drawn from q i and feedback L T i generated by T i . During meta-training, a task T i is sampled from p ( T ) , the model is trained with K samples and feedback from the corresponding loss L T i from T i , and then tested on new samples from T i . The model f is then improved by considering how the test error on new data from q i changes with respect to the parameters. In effect, the test error on sampled tasks T i serves as the training error of the meta-learning process. At the end of meta-training, new tasks are sampled from p ( T ) , and meta-performance is measured by the model's performance after learning from K samples. Generally, tasks used for meta-testing are held out during meta-training.

## 2.2. A Model-Agnostic Meta-Learning Algorithm

In contrast to prior work, which has sought to train recurrent neural networks that ingest entire datasets (Santoro et al., 2016; Duan et al., 2016b) or feature embeddings that can be combined with nonparametric methods at test time (Vinyals et al., 2016; Koch, 2015), we propose a method that can learn the parameters of any standard model via meta-learning in such a way as to prepare that model for fast adaptation. The intuition behind this approach is that some internal representations are more transferrable than others. For example, a neural network might learn internal features that are broadly applicable to all tasks in p ( T ) , rather than a single individual task. How can we encourage the emergence of such general-purpose representations? We take an explicit approach to this problem: since the model will be fine-tuned using a gradient-based learning rule on a new task, we will aim to learn a model in such a way that this gradient-based learning rule can make rapid progress on new tasks drawn from p ( T ) , without overfitting. In effect, we will aim to find model parameters that are sensitive to changes in the task, such that small changes in the parameters will produce large improvements on the loss function of any task drawn from p ( T ) , when altered in the direction of the gradient of that loss (see Figure 1). We

| Algorithm 1 Model-Agnostic Meta-Learning | Algorithm 1 Model-Agnostic Meta-Learning |

|--------------------------------------------|-------------------------------------------------------------------------------------|

| Require: p ( T ) : distribution over tasks | Require: p ( T ) : distribution over tasks |

| 1: | randomly initialize θ |

| 2: | while not done do |

| 3: | Sample batch of tasks T i ∼ p ( T ) |

| 4: | for all T i do |

| 5: | Evaluate ∇ θ L T i ( f θ ) with respect to K examples |

| 6: | Compute adapted parameters with gradient de- scent: θ ′ i = θ - α ∇ θ L T i ( f θ ) |

| 7: | end for |

| 8: | Update θ ← θ - β ∇ θ ∑ T i ∼ p ( T ) L T i ( f θ ′ i ) |

| 9: | end while |

make no assumption on the form of the model, other than to assume that it is parametrized by some parameter vector θ , and that the loss function is smooth enough in θ that we can use gradient-based learning techniques.

Formally, we consider a model represented by a parametrized function f θ with parameters θ . When adapting to a new task T i , the model's parameters θ become θ ′ i . In our method, the updated parameter vector θ ′ i is computed using one or more gradient descent updates on task T i . For example, when using one gradient update,

$$\theta _ { i } ^ { \prime } = \theta - \alpha \nabla _ { \theta } \mathcal { L } _ { \mathcal { T } _ { i } } ( f _ { \theta } ) .$$

The step size α may be fixed as a hyperparameter or metalearned. For simplicity of notation, we will consider one gradient update for the rest of this section, but using multiple gradient updates is a straightforward extension.

The model parameters are trained by optimizing for the performance of f θ ′ i with respect to θ across tasks sampled from p ( T ) . More concretely, the meta-objective is as follows:

$$\min _ { \theta } \sum _ { \mathcal { T } _ { i } \sim p ( \mathcal { T } ) } \mathcal { L } _ { \mathcal { T } _ { i } } ( f _ { \theta ^ { \prime } _ { i } } ) = \sum _ { \mathcal { T } _ { i } \sim p ( \mathcal { T } ) } \mathcal { L } _ { \mathcal { T } _ { i } } ( f _ { \theta - \alpha \nabla _ { \theta } \mathcal { L } _ { \mathcal { T } _ { i } } ( f _ { \theta } ) } )$$

Note that the meta-optimization is performed over the model parameters θ , whereas the objective is computed using the updated model parameters θ ′ . In effect, our proposed method aims to optimize the model parameters such that one or a small number of gradient steps on a new task will produce maximally effective behavior on that task.

The meta-optimization across tasks is performed via stochastic gradient descent (SGD), such that the model parameters θ are updated as follows:

$$\theta \leftarrow \theta - \beta \nabla _ { \theta } \sum _ { \mathcal { T } _ { i } \sim p ( \mathcal { T } ) } \mathcal { L } _ { \mathcal { T } _ { i } } ( f _ { \theta ^ { \prime } _ { i } } ) \quad & ( 1 ) \\$$

where β is the meta step size. The full algorithm, in the general case, is outlined in Algorithm 1.

The MAML meta-gradient update involves a gradient through a gradient. Computationally, this requires an additional backward pass through f to compute Hessian-vector products, which is supported by standard deep learning libraries such as TensorFlow (Abadi et al., 2016). In our experiments, we also include a comparison to dropping this backward pass and using a first-order approximation, which we discuss in Section 5.2.

## 3. Species of MAML

In this section, we discuss specific instantiations of our meta-learning algorithm for supervised learning and reinforcement learning. The domains differ in the form of loss function and in how data is generated by the task and presented to the model, but the same basic adaptation mechanism can be applied in both cases.

## 3.1. Supervised Regression and Classification

Few-shot learning is well-studied in the domain of supervised tasks, where the goal is to learn a new function from only a few input/output pairs for that task, using prior data from similar tasks for meta-learning. For example, the goal might be to classify images of a Segway after seeing only one or a few examples of a Segway, with a model that has previously seen many other types of objects. Likewise, in few-shot regression, the goal is to predict the outputs of a continuous-valued function from only a few datapoints sampled from that function, after training on many functions with similar statistical properties.

To formalize the supervised regression and classification problems in the context of the meta-learning definitions in Section 2.1, we can define the horizon H = 1 and drop the timestep subscript on x t , since the model accepts a single input and produces a single output, rather than a sequence of inputs and outputs. The task T i generates K i.i.d. observations x from q i , and the task loss is represented by the error between the model's output for x and the corresponding target values y for that observation and task.

Two common loss functions used for supervised classification and regression are cross-entropy and mean-squared error (MSE), which we will describe below; though, other supervised loss functions may be used as well. For regression tasks using mean-squared error, the loss takes the form:

$$\mathcal { L } _ { \mathcal { T } _ { i } } ( f _ { \phi } ) = \sum _ { x ^ { ( j ) } , y ^ { ( j ) } \sim \mathcal { T } _ { i } } \| f _ { \phi } ( x ^ { ( j ) } ) - y ^ { ( j ) } \| _ { 2 } ^ { 2 } , \quad ( 2 )$$

where x ( j ) , y ( j ) are an input/output pair sampled from task T i . In K -shot regression tasks, K input/output pairs are provided for learning for each task.

Similarly, for discrete classification tasks with a crossentropy loss, the loss takes the form:

$$\mathcal { L } _ { \mathcal { T } _ { i } } ( f _ { \phi } ) = \sum _ { x ^ { ( j ) } , y ^ { ( j ) } \sim \mathcal { T } _ { i } } y ^ { ( j ) } \log f _ { \phi } ( x ^ { ( j ) } ) \\

<text><loc_36><loc_0><loc_500><loc_499>or + (1 - y ^ { ( j ) } ) log(1 - f$_{φ}$ ( x ^ { ( j ) } )) (3) L$_{T}$$_{i}$ = ∑ y (j) log f$_{φ}$(x^{(j)} ) (3) x ^{(j)} ,y^{(j)}∼T$_{i}$ + (1 - y ^{(j)} ) log(1 - f$_{φ}$(x^{(j)})</text>$$

## Algorithm 2 MAMLfor Few-Shot Supervised Learning

Require:

p ( T ) : distribution over tasks

Require:

α , β : step size hyperparameters

- 1: randomly initialize θ

- 2: while not done do

- 3: Sample batch of tasks T i ∼ p ( T )

- 4: for all T i do

- 5: Sample K datapoints D = { x ( j ) , y ( j ) } from T i

- 6: Evaluate ∇ θ L T i ( f θ ) using D and L T i in Equation (2) or (3)

- 7: Compute adapted parameters with gradient descent: θ ′ i = θ - α ∇ θ L T i ( f θ )

- 8: Sample datapoints D ′ i = { x ( j ) , y ( j ) } from T i for the meta-update

- 9: end for

- 10: Update θ ← θ - β ∇ θ ∑ T i ∼ p ( T ) L T i ( f θ ′ i ) using each D ′ i and L T i in Equation 2 or 3

- 11: end while

According to the conventional terminology, K -shot classification tasks use K input/output pairs from each class, for a total of NK data points for N -way classification. Given a distribution over tasks p ( T i ) , these loss functions can be directly inserted into the equations in Section 2.2 to perform meta-learning, as detailed in Algorithm 2.

## 3.2. Reinforcement Learning

In reinforcement learning (RL), the goal of few-shot metalearning is to enable an agent to quickly acquire a policy for a new test task using only a small amount of experience in the test setting. A new task might involve achieving a new goal or succeeding on a previously trained goal in a new environment. For example, an agent might learn to quickly figure out how to navigate mazes so that, when faced with a new maze, it can determine how to reliably reach the exit with only a few samples. In this section, we will discuss how MAML can be applied to meta-learning for RL.

Each RL task T i contains an initial state distribution q i ( x 1 ) and a transition distribution q i ( x t +1 | x t , a t ) , and the loss L T i corresponds to the (negative) reward function R . The entire task is therefore a Markov decision process (MDP) with horizon H , where the learner is allowed to query a limited number of sample trajectories for few-shot learning. Any aspect of the MDP may change across tasks in p ( T ) . The model being learned, f θ , is a policy that maps from states x t to a distribution over actions a t at each timestep t ∈ { 1 , ..., H } . The loss for task T i and model f φ takes the form

$$\mathcal { L } _ { \mathcal { T } _ { i } } ( f _ { \phi } ) = - \mathbb { E } _ { x _ { t } , a _ { t } \sim f _ { \phi } , q _ { \mathcal { T } _ { i } } } \left [ \sum _ { t = 1 } ^ { H } R _ { i } ( x _ { t } , a _ { t } ) \right ] .

</doctag>$$

In K -shot reinforcement learning, K rollouts from f θ and task T i , ( x 1 , a 1 , ... x H ) , and the corresponding rewards R ( x t , a t ) , may be used for adaptation on a new task T i .

## Algorithm 3 MAMLfor Reinforcement Learning

Require:

p ( T ) : distribution over tasks

Require:

α , β : step size hyperparameters

- 1: randomly initialize θ

- 2: while not done do

- 3: Sample batch of tasks T i ∼ p ( T )

- 4: for all T i do

- 5: Sample K trajectories D = { ( x 1 , a 1 , ... x H ) } using f θ in T i

- 6: Evaluate ∇ θ L T i ( f θ ) using D and L T i in Equation 4

- 7: Compute adapted parameters with gradient descent: θ ′ i = θ -α ∇ θ L T i ( f θ )

- 8: Sample trajectories D ′ i = { ( x 1 , a 1 , ... x H ) } using f θ ′ i in T i

- 9: end for

- 10: Update θ ← θ -β ∇ θ ∑ T i ∼ p ( T ) L T i ( f θ ′ i ) using each D ′ i and L T i in Equation 4

- 11: end while

Since the expected reward is generally not differentiable due to unknown dynamics, we use policy gradient methods to estimate the gradient both for the model gradient update(s) and the meta-optimization. Since policy gradients are an on-policy algorithm, each additional gradient step during the adaptation of f θ requires new samples from the current policy f θ i ′ . We detail the algorithm in Algorithm 3. This algorithm has the same structure as Algorithm 2, with the principal difference being that steps 5 and 8 require sampling trajectories from the environment corresponding to task T i . Practical implementations of this method may also use a variety of improvements recently proposed for policy gradient algorithms, including state or action-dependent baselines and trust regions (Schulman et al., 2015).

## 4. Related Work

The method that we propose in this paper addresses the general problem of meta-learning (Thrun & Pratt, 1998; Schmidhuber, 1987; Naik & Mammone, 1992), which includes few-shot learning. A popular approach for metalearning is to train a meta-learner that learns how to update the parameters of the learner's model (Bengio et al., 1992; Schmidhuber, 1992; Bengio et al., 1990). This approach has been applied to learning to optimize deep networks (Hochreiter et al., 2001; Andrychowicz et al., 2016; Li & Malik, 2017), as well as for learning dynamically changing recurrent networks (Ha et al., 2017). One recent approach learns both the weight initialization and the optimizer, for few-shot image recognition (Ravi & Larochelle, 2017). Unlike these methods, the MAML learner's weights are updated using the gradient, rather than a learned update; our method does not introduce additional parameters for meta-learning nor require a particular learner architecture.

Few-shot learning methods have also been developed for

specific tasks such as generative modeling (Edwards & Storkey, 2017; Rezende et al., 2016) and image recognition (Vinyals et al., 2016). One successful approach for few-shot classification is to learn to compare new examples in a learned metric space using e.g. Siamese networks (Koch, 2015) or recurrence with attention mechanisms (Vinyals et al., 2016; Shyam et al., 2017; Snell et al., 2017). These approaches have generated some of the most successful results, but are difficult to directly extend to other problems, such as reinforcement learning. Our method, in contrast, is agnostic to the form of the model and to the particular learning task.

Another approach to meta-learning is to train memoryaugmented models on many tasks, where the recurrent learner is trained to adapt to new tasks as it is rolled out. Such networks have been applied to few-shot image recognition (Santoro et al., 2016; Munkhdalai & Yu, 2017) and learning 'fast' reinforcement learning agents (Duan et al., 2016b; Wang et al., 2016). Our experiments show that our method outperforms the recurrent approach on fewshot classification. Furthermore, unlike these methods, our approach simply provides a good weight initialization and uses the same gradient descent update for both the learner and meta-update. As a result, it is straightforward to finetune the learner for additional gradient steps.

Our approach is also related to methods for initialization of deep networks. In computer vision, models pretrained on large-scale image classification have been shown to learn effective features for a range of problems (Donahue et al., 2014). In contrast, our method explicitly optimizes the model for fast adaptability, allowing it to adapt to new tasks with only a few examples. Our method can also be viewed as explicitly maximizing sensitivity of new task losses to the model parameters. A number of prior works have explored sensitivity in deep networks, often in the context of initialization (Saxe et al., 2014; Kirkpatrick et al., 2016). Most of these works have considered good random initializations, though a number of papers have addressed datadependent initializers (Kr¨ ahenb¨ uhl et al., 2016; Salimans & Kingma, 2016), including learned initializations (Husken & Goerick, 2000; Maclaurin et al., 2015). In contrast, our method explicitly trains the parameters for sensitivity on a given task distribution, allowing for extremely efficient adaptation for problems such as K -shot learning and rapid reinforcement learning in only one or a few gradient steps.

## 5. Experimental Evaluation

The goal of our experimental evaluation is to answer the following questions: (1) Can MAML enable fast learning of new tasks? (2) Can MAML be used for meta-learning in multiple different domains, including supervised regression, classification, and reinforcement learning? (3) Can a model learned with MAML continue to improve with additional gradient updates and/or examples?

All of the meta-learning problems that we consider require some amount of adaptation to new tasks at test-time. When possible, we compare our results to an oracle that receives the identity of the task (which is a problem-dependent representation) as an additional input, as an upper bound on the performance of the model. All of the experiments were performed using TensorFlow (Abadi et al., 2016), which allows for automatic differentiation through the gradient update(s) during meta-learning. The code is available online 1 .

## 5.1. Regression

We start with a simple regression problem that illustrates the basic principles of MAML. Each task involves regressing from the input to the output of a sine wave, where the amplitude and phase of the sinusoid are varied between tasks. Thus, p ( T ) is continuous, where the amplitude varies within [0 . 1 , 5 . 0] and the phase varies within [0 , π ] , and the input and output both have a dimensionality of 1 . During training and testing, datapoints x are sampled uniformly from [ -5 . 0 , 5 . 0] . The loss is the mean-squared error between the prediction f ( x ) and true value. The regressor is a neural network model with 2 hidden layers of size 40 with ReLU nonlinearities. When training with MAML, we use one gradient update with K = 10 examples with a fixed step size α = 0 . 01 , and use Adam as the metaoptimizer (Kingma & Ba, 2015). The baselines are likewise trained with Adam. To evaluate performance, we finetune a single meta-learned model on varying numbers of K examples, and compare performance to two baselines: (a) pretraining on all of the tasks, which entails training a network to regress to random sinusoid functions and then, at test-time, fine-tuning with gradient descent on the K provided points, using an automatically tuned step size, and (b) an oracle which receives the true amplitude and phase as input. In Appendix C, we show comparisons to additional multi-task and adaptation methods.

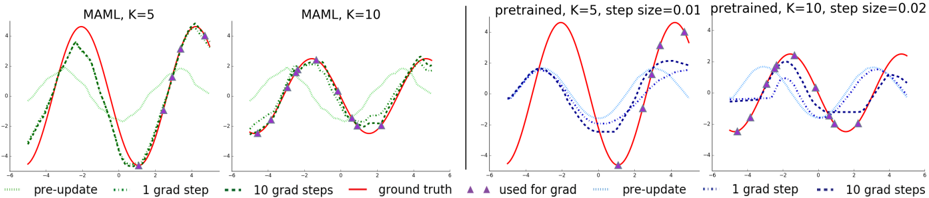

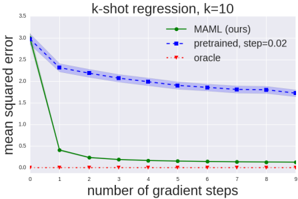

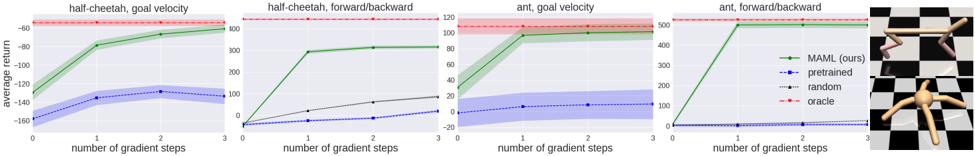

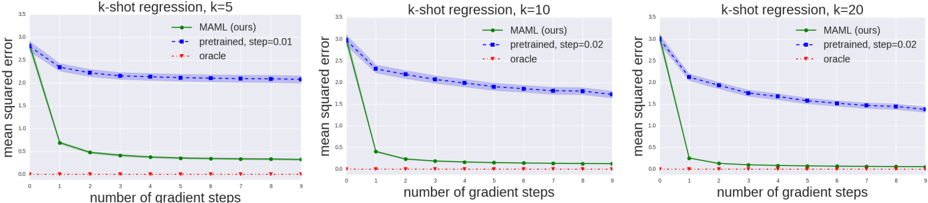

We evaluate performance by fine-tuning the model learned by MAML and the pretrained model on K = { 5 , 10 , 20 } datapoints. During fine-tuning, each gradient step is computed using the same K datapoints. The qualitative results, shown in Figure 2 and further expanded on in Appendix B show that the learned model is able to quickly adapt with only 5 datapoints, shown as purple triangles, whereas the model that is pretrained using standard supervised learning on all tasks is unable to adequately adapt with so few datapoints without catastrophic overfitting. Crucially, when the K datapoints are all in one half of the input range, the

1 Code for the regression and supervised experiments is at github.com/cbfinn/maml and code for the RL experiments is at github.com/cbfinn/maml\_rl

Figure 2. Few-shot adaptation for the simple regression task. Left: Note that MAML is able to estimate parts of the curve where there are no datapoints, indicating that the model has learned about the periodic structure of sine waves. Right: Fine-tuning of a model pretrained on the same distribution of tasks without MAML, with a tuned step size. Due to the often contradictory outputs on the pre-training tasks, this model is unable to recover a suitable representation and fails to extrapolate from the small number of test-time samples.

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Charts: Model Adaptation Learning (MAML) Performance

### Overview

The image presents four line charts comparing the performance of different model adaptation strategies. The charts visualize the model's output (y-axis, ranging approximately from -5 to 5) against an unspecified input variable (x-axis, ranging approximately from -3 to 3). Each chart represents a different configuration of the MAML algorithm or a pre-trained model, with varying numbers of gradient steps (K) and step sizes. The charts compare "pre-update" values, values after "1 grad step", and values after "10 grad steps" against a "ground truth" baseline.

### Components/Axes

* **X-axis:** Unlabeled, representing the input variable. Scale ranges approximately from -3 to 3.

* **Y-axis:** Unlabeled, representing the model output. Scale ranges approximately from -5 to 5.

* **Chart 1 Title:** "MAML, K=5"

* **Chart 2 Title:** "MAML, K=10"

* **Chart 3 Title:** "pretrained, K=5, step size=0.01"

* **Chart 4 Title:** "pretrained, K=10, step size=0.02"

* **Legend (shared across all charts, positioned at the bottom):**

* `--` (green): "pre-update"

* `..` (blue): "1 grad step"

* `...` (purple): "10 grad steps"

* `-` (red): "ground truth"

* `^` (dark red): "used for grad"

### Detailed Analysis or Content Details

**Chart 1: MAML, K=5**

* **Ground Truth (red solid line):** A sinusoidal wave with a period of approximately 2, peaking around x=0 and bottoming around x=-3 and x=3. Values range from approximately -4.5 to 4.5.

* **Pre-update (green dashed line):** Starts around y=-2 at x=-3, rises to approximately y=1 at x=0, and falls back to approximately y=-2 at x=3.

* **1 grad step (blue dotted line):** Starts around y=-1.5 at x=-3, rises to approximately y=3 at x=0, and falls back to approximately y=-1.5 at x=3.

* **10 grad steps (purple dotted line):** Starts around y=-2.5 at x=-3, rises to approximately y=3.5 at x=0, and falls back to approximately y=-2.5 at x=3.

**Chart 2: MAML, K=10**

* **Ground Truth (red solid line):** Identical to Chart 1.

* **Pre-update (green dashed line):** Similar to Chart 1, but with slightly more variation. Starts around y=-2.5 at x=-3, rises to approximately y=0.5 at x=0, and falls back to approximately y=-2.5 at x=3.

* **1 grad step (blue dotted line):** Starts around y=-2 at x=-3, rises to approximately y=2.5 at x=0, and falls back to approximately y=-2 at x=3.

* **10 grad steps (purple dotted line):** Starts around y=-3 at x=-3, rises to approximately y=4 at x=0, and falls back to approximately y=-3 at x=3.

**Chart 3: pretrained, K=5, step size=0.01**

* **Ground Truth (red solid line):** Identical to Chart 1.

* **Pre-update (green dashed line):** Starts around y=-3 at x=-3, rises to approximately y=4 at x=0, and falls back to approximately y=-3 at x=3.

* **1 grad step (blue dotted line):** Starts around y=-2 at x=-3, rises to approximately y=3 at x=0, and falls back to approximately y=-2 at x=3.

* **10 grad steps (purple dotted line):** Starts around y=-2.5 at x=-3, rises to approximately y=2.5 at x=0, and falls back to approximately y=-2.5 at x=3.

**Chart 4: pretrained, K=10, step size=0.02**

* **Ground Truth (red solid line):** Identical to Chart 1.

* **Pre-update (green dashed line):** Starts around y=-3.5 at x=-3, rises to approximately y=4.5 at x=0, and falls back to approximately y=-3.5 at x=3.

* **1 grad step (blue dotted line):** Starts around y=-2.5 at x=-3, rises to approximately y=3.5 at x=0, and falls back to approximately y=-2.5 at x=3.

* **10 grad steps (purple dotted line):** Starts around y=-3 at x=-3, rises to approximately y=3 at x=0, and falls back to approximately y=-3 at x=3.

### Key Observations

* In all charts, the "ground truth" (red line) provides a consistent baseline.

* The "pre-update" (green line) consistently deviates from the "ground truth," indicating an initial mismatch between the model's prediction and the target.

* Applying gradient steps (blue and purple lines) generally moves the model's prediction closer to the "ground truth," suggesting that the adaptation process is effective.

* Increasing the number of gradient steps (from blue to purple) often leads to further improvement, but can also result in overshooting or oscillations.

* The pre-trained models (Charts 3 & 4) start with a larger deviation from the ground truth than the MAML models (Charts 1 & 2).

* The step size parameter (0.01 vs 0.02) in the pre-trained models appears to influence the adaptation speed and stability.

### Interpretation

These charts demonstrate the effectiveness of MAML and pre-training for rapid adaptation to new tasks. The "ground truth" likely represents the desired output for a given input, and the other lines show how the model's prediction evolves as it learns. The MAML approach (Charts 1 & 2) appears to initialize the model closer to the optimal solution, requiring fewer gradient steps to achieve good performance. The pre-trained models (Charts 3 & 4) require more adaptation but can still converge to a reasonable solution. The choice of step size is crucial for pre-trained models, as a larger step size can lead to instability or overshooting. The "used for grad" markers (dark red triangles) are not consistently placed and their purpose is unclear without additional context, but they likely indicate the points used for calculating the gradient. The charts suggest that MAML is a more efficient adaptation strategy, particularly when the number of gradient steps is limited. The data suggests that the model is learning to approximate the underlying function represented by the "ground truth" signal.

</details>

Figure 3. Quantitative sinusoid regression results showing the learning curve at meta test-time. Note that MAML continues to improve with additional gradient steps without overfitting to the extremely small dataset during meta-testing, achieving a loss that is substantially lower than the baseline fine-tuning approach.

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Line Chart: k-shot regression, k=10

### Overview

This line chart visualizes the performance of three different methods (MAML, pretrained, and oracle) in a k-shot regression task with k=10. The chart plots the mean squared error against the number of gradient steps. The chart shows how the error decreases as the number of gradient steps increases for each method.

### Components/Axes

* **Title:** k-shot regression, k=10 (top-center)

* **X-axis:** number of gradient steps (bottom-center). Scale ranges from 0 to 9, with tick marks at each integer value.

* **Y-axis:** mean squared error (left-center). Scale ranges from 0 to 3.5, with tick marks at 0, 0.5, 1.0, 1.5, 2.0, 2.5, 3.0, and 3.5.

* **Legend:** Located in the top-right corner.

* MAML (ours) - Green line with diamond markers.

* pretrained, step=0.02 - Blue line with square markers.

* oracle - Red dotted line with circle markers.

### Detailed Analysis

* **MAML (ours):** The green line starts at approximately 3.1 mean squared error at 0 gradient steps and rapidly decreases to approximately 0.05 at 1 gradient step. It continues to decrease, but at a slower rate, reaching approximately 0.02 at 9 gradient steps.

* **pretrained, step=0.02:** The blue line begins at approximately 2.8 mean squared error at 0 gradient steps. It decreases steadily, but more slowly than MAML, to approximately 1.7 mean squared error at 9 gradient steps. A shaded region around the blue line indicates a confidence interval or standard deviation.

* **oracle:** The red dotted line remains consistently close to 0 mean squared error across all gradient steps, fluctuating between approximately 0.01 and 0.03.

Specific Data Points (approximate):

| Gradient Steps | MAML (ours) | pretrained, step=0.02 | oracle |

|---|---|---|---|

| 0 | 3.1 | 2.8 | 0.02 |

| 1 | 0.05 | 2.4 | 0.01 |

| 2 | 0.03 | 2.1 | 0.02 |

| 3 | 0.025 | 1.9 | 0.015 |

| 4 | 0.023 | 1.8 | 0.02 |

| 5 | 0.022 | 1.75 | 0.015 |

| 6 | 0.021 | 1.72 | 0.02 |

| 7 | 0.021 | 1.7 | 0.015 |

| 8 | 0.02 | 1.68 | 0.02 |

| 9 | 0.02 | 1.7 | 0.015 |

### Key Observations

* MAML (ours) significantly outperforms the pretrained method in terms of mean squared error, especially in the initial gradient steps.

* The oracle method achieves near-zero error consistently, representing an ideal performance baseline.

* The pretrained method shows a gradual decrease in error, but it does not converge to the same low error levels as MAML.

* The confidence interval around the pretrained method suggests some variability in its performance.

### Interpretation

The chart demonstrates the effectiveness of the MAML (Model-Agnostic Meta-Learning) approach for k-shot regression. MAML rapidly adapts to new tasks with a small number of gradient steps, achieving a much lower mean squared error compared to a pretrained model. The oracle method represents the theoretical lower bound on the error, indicating the potential for further improvement. The rapid convergence of MAML suggests that it is able to efficiently learn from limited data, making it well-suited for few-shot learning scenarios. The shaded region around the pretrained line indicates that the performance of the pretrained model is less consistent than MAML or the oracle. This could be due to factors such as the initialization of the pretrained model or the choice of learning rate. The chart highlights the benefits of meta-learning for rapid adaptation and improved performance in low-data regimes.

</details>

model trained with MAML can still infer the amplitude and phase in the other half of the range, demonstrating that the MAML trained model f has learned to model the periodic nature of the sine wave. Furthermore, we observe both in the qualitative and quantitative results (Figure 3 and Appendix B) that the model learned with MAML continues to improve with additional gradient steps, despite being trained for maximal performance after one gradient step. This improvement suggests that MAML optimizes the parameters such that they lie in a region that is amenable to fast adaptation and is sensitive to loss functions from p ( T ) , as discussed in Section 2.2, rather than overfitting to parameters θ that only improve after one step.

## 5.2. Classification

To evaluate MAML in comparison to prior meta-learning and few-shot learning algorithms, we applied our method to few-shot image recognition on the Omniglot (Lake et al., 2011) and MiniImagenet datasets. The Omniglot dataset consists of 20 instances of 1623 characters from 50 different alphabets. Each instance was drawn by a different person. The MiniImagenet dataset was proposed by Ravi & Larochelle (2017), and involves 64 training classes, 12 validation classes, and 24 test classes. The Omniglot and MiniImagenet image recognition tasks are the most common recently used few-shot learning benchmarks (Vinyals et al., 2016; Santoro et al., 2016; Ravi & Larochelle, 2017).

We follow the experimental protocol proposed by Vinyals et al. (2016), which involves fast learning of N -way classification with 1 or 5 shots. The problem of N -way classification is set up as follows: select N unseen classes, provide the model with K different instances of each of the N classes, and evaluate the model's ability to classify new instances within the N classes. For Omniglot, we randomly select 1200 characters for training, irrespective of alphabet, and use the remaining for testing. The Omniglot dataset is augmented with rotations by multiples of 90 degrees, as proposed by Santoro et al. (2016).

Our model follows the same architecture as the embedding function used by Vinyals et al. (2016), which has 4 modules with a 3 × 3 convolutions and 64 filters, followed by batch normalization (Ioffe & Szegedy, 2015), a ReLU nonlinearity, and 2 × 2 max-pooling. The Omniglot images are downsampled to 28 × 28 , so the dimensionality of the last hidden layer is 64 . As in the baseline classifier used by Vinyals et al. (2016), the last layer is fed into a softmax. For Omniglot, we used strided convolutions instead of max-pooling. For MiniImagenet, we used 32 filters per layer to reduce overfitting, as done by (Ravi & Larochelle, 2017). In order to also provide a fair comparison against memory-augmented neural networks (Santoro et al., 2016) and to test the flexibility of MAML, we also provide results for a non-convolutional network. For this, we use a network with 4 hidden layers with sizes 256 , 128 , 64 , 64 , each including batch normalization and ReLU nonlinearities, followed by a linear layer and softmax. For all models, the loss function is the cross-entropy error between the predicted and true class. Additional hyperparameter details are included in Appendix A.1.

We present the results in Table 1. The convolutional model learned by MAML compares well to the state-of-the-art results on this task, narrowly outperforming the prior methods. Some of these existing methods, such as matching networks, Siamese networks, and memory models are designed with few-shot classification in mind, and are not readily applicable to domains such as reinforcement learning. Additionally, the model learned with MAML uses

Table 1. Few-shot classification on held-out Omniglot characters (top) and the MiniImagenet test set (bottom). MAML achieves results that are comparable to or outperform state-of-the-art convolutional and recurrent models. Siamese nets, matching nets, and the memory module approaches are all specific to classification, and are not directly applicable to regression or RL scenarios. The ± shows 95% confidence intervals over tasks. Note that the Omniglot results may not be strictly comparable since the train/test splits used in the prior work were not available. The MiniImagenet evaluation of baseline methods and matching networks is from Ravi & Larochelle (2017).

| | 5-way Accuracy | 5-way Accuracy | 20-way Accuracy | 20-way Accuracy |

|----------------------------------------------|------------------|------------------|-------------------|-------------------|

| Omniglot (Lake et al., 2011) | 1-shot | 5-shot | 1-shot | 5-shot |

| MANN, no conv (Santoro et al., 2016) | 82 . 8% | 94 . 9% | - | - |

| MAML, no conv (ours) | 89 . 7 ± 1 . 1 % | 97 . 5 ± 0 . 6 % | - | - |

| Siamese nets (Koch, 2015) | 97 . 3% | 98 . 4% | 88 . 2% | 97 . 0% |

| matching nets (Vinyals et al., 2016) | 98 . 1% | 98 . 9% | 93 . 8% | 98 . 5% |

| neural statistician (Edwards &Storkey, 2017) | 98 . 1% | 99 . 5% | 93 . 2% | 98 . 1% |

| memory mod. (Kaiser et al., 2017) | 98 . 4% | 99 . 6% | 95 . 0% | 98 . 6% |

| MAML(ours) | 98 . 7 ± 0 . 4 % | 99 . 9 ± 0 . 1 % | 95 . 8 ± 0 . 3 % | 98 . 9 ± 0 . 2 % |

| | 5-way Accuracy | 5-way Accuracy |

|--------------------------------------------|--------------------|--------------------|

| MiniImagenet (Ravi &Larochelle, 2017) | 1-shot | 5-shot |

| fine-tuning baseline | 28 . 86 ± 0 . 54% | 49 . 79 ± 0 . 79% |

| nearest neighbor baseline | 41 . 08 ± 0 . 70% | 51 . 04 ± 0 . 65% |

| matching nets (Vinyals et al., 2016) | 43 . 56 ± 0 . 84% | 55 . 31 ± 0 . 73% |

| meta-learner LSTM (Ravi &Larochelle, 2017) | 43 . 44 ± 0 . 77% | 60 . 60 ± 0 . 71% |

| MAML, first order approx. (ours) | 48 . 07 ± 1 . 75 % | 63 . 15 ± 0 . 91 % |

| MAML(ours) | 48 . 70 ± 1 . 84 % | 63 . 11 ± 0 . 92 % |

fewer overall parameters compared to matching networks and the meta-learner LSTM, since the algorithm does not introduce any additional parameters beyond the weights of the classifier itself. Compared to these prior methods, memory-augmented neural networks (Santoro et al., 2016) specifically, and recurrent meta-learning models in general, represent a more broadly applicable class of methods that, like MAML, can be used for other tasks such as reinforcement learning (Duan et al., 2016b; Wang et al., 2016). However, as shown in the comparison, MAML significantly outperforms memory-augmented networks and the meta-learner LSTM on 5-way Omniglot and MiniImagenet classification, both in the 1 -shot and 5 -shot case.

A significant computational expense in MAML comes from the use of second derivatives when backpropagating the meta-gradient through the gradient operator in the meta-objective (see Equation (1)). On MiniImagenet, we show a comparison to a first-order approximation of MAML, where these second derivatives are omitted. Note that the resulting method still computes the meta-gradient at the post-update parameter values θ ′ i , which provides for effective meta-learning. Surprisingly however, the performance of this method is nearly the same as that obtained with full second derivatives, suggesting that most of the improvement in MAML comes from the gradients of the objective at the post-update parameter values, rather than the second order updates from differentiating through the gradient update. Past work has observed that ReLU neural networks are locally almost linear (Goodfellow et al., 2015), which suggests that second derivatives may be close to zero in most cases, partially explaining the good perfor-

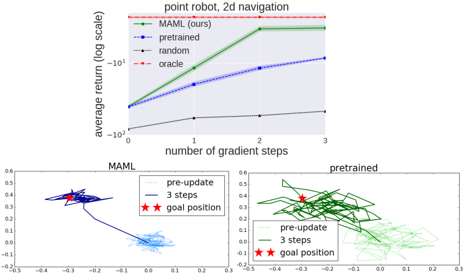

Figure 4. Top: quantitative results from 2D navigation task, Bottom: qualitative comparison between model learned with MAML and with fine-tuning from a pretrained network.

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Line Chart & Trajectory Plots: Point Robot 2D Navigation Performance

### Overview

The image presents a line chart illustrating the average return (on a logarithmic scale) versus the number of gradient steps for different learning algorithms in a 2D robot navigation task. Below the chart are two trajectory plots, one for the MAML algorithm and one for a pretrained model, showing robot paths before and after adaptation.

### Components/Axes

* **Title:** "point robot, 2d navigation" (top-center, red text)

* **Y-axis:** "average return (log scale)" (left side, vertical) - Scale ranges from approximately -10<sup>-1</sup> to -10<sup>-0</sup>.

* **X-axis:** "number of gradient steps" (bottom, horizontal) - Scale ranges from 0 to 3.

* **Legend:** Located in the top-right corner. Contains the following algorithms with corresponding colors:

* MAML (ours) - Green

* pretrained - Blue

* random - Gray

* oracle - Purple

* **Trajectory Plots:** Two subplots labeled "MAML" (left) and "pretrained" (right).

* Each plot has axes ranging from approximately -0.25 to 0.6.

* Each plot includes a legend:

* pre-update - Lighter shade

* 3 steps - Darker shade

* Both plots mark the "goal position" with a red star symbol.

### Detailed Analysis or Content Details

**Line Chart:**

* **MAML (Green):** The line starts at approximately -0.1 at 0 gradient steps and increases rapidly, reaching approximately 1.5 at 3 gradient steps. The trend is strongly upward.

* **Pretrained (Blue):** The line starts at approximately -0.1 at 0 gradient steps and increases steadily, reaching approximately 0.5 at 3 gradient steps. The trend is upward, but less steep than MAML.

* **Random (Gray):** The line remains relatively flat, starting at approximately -0.2 and ending at approximately -0.15 at 3 gradient steps. The trend is nearly horizontal.

* **Oracle (Purple):** The line starts at approximately -0.1 at 0 gradient steps and increases, reaching approximately 0.7 at 3 gradient steps. The trend is upward, but less steep than MAML.

**Trajectory Plots:**

* **MAML:** The "pre-update" trajectory (lighter blue) shows a scattered path, while the "3 steps" trajectory (darker blue) shows a more directed path towards the goal position (red star). The initial path is more erratic, and the adapted path is more focused.

* **Pretrained:** The "pre-update" trajectory (lighter green) is also scattered, but appears to have a slightly more directed initial movement than MAML's pre-update path. The "3 steps" trajectory (darker green) shows a more refined path towards the goal position, but still exhibits some meandering.

### Key Observations

* MAML significantly outperforms the other algorithms in terms of average return, especially as the number of gradient steps increases.

* The random algorithm performs poorly, indicating the importance of learning.

* The pretrained model shows improvement with gradient steps, but not to the same extent as MAML.

* The trajectory plots visually demonstrate the adaptation process, with both MAML and the pretrained model showing more directed paths after adaptation.

* MAML's initial pre-update trajectory is more scattered than the pretrained model's, suggesting it requires more adaptation.

### Interpretation

The data suggests that MAML is a highly effective algorithm for adapting a robot to a 2D navigation task. The rapid increase in average return with gradient steps indicates fast learning and efficient adaptation. The trajectory plots corroborate this, showing a clear improvement in path planning after adaptation. The pretrained model also demonstrates learning, but its performance is lower than MAML's, suggesting that MAML's meta-learning approach is more beneficial in this scenario. The poor performance of the random algorithm highlights the necessity of a learning-based approach. The "oracle" line suggests an upper bound on performance, which MAML is approaching.

The difference in pre-update trajectories between MAML and the pretrained model could indicate that MAML starts with a less informed initial policy, requiring more adaptation to achieve optimal performance. However, its ability to quickly adapt and surpass the pretrained model demonstrates its superior learning capabilities. The goal position being reached by both adapted models indicates successful navigation.

</details>

mance of the first-order approximation. This approximation removes the need for computing Hessian-vector products in an additional backward pass, which we found led to roughly 33% speed-up in network computation.

## 5.3. Reinforcement Learning

To evaluate MAML on reinforcement learning problems, we constructed several sets of tasks based off of the simulated continuous control environments in the rllab benchmark suite (Duan et al., 2016a). We discuss the individual domains below. In all of the domains, the model trained by MAML is a neural network policy with two hidden layers of size 100 , with ReLU nonlinearities. The gradient updates are computed using vanilla policy gradient (REINFORCE) (Williams, 1992), and we use trust-region policy optimization (TRPO) as the meta-optimizer (Schulman et al., 2015). In order to avoid computing third derivatives,

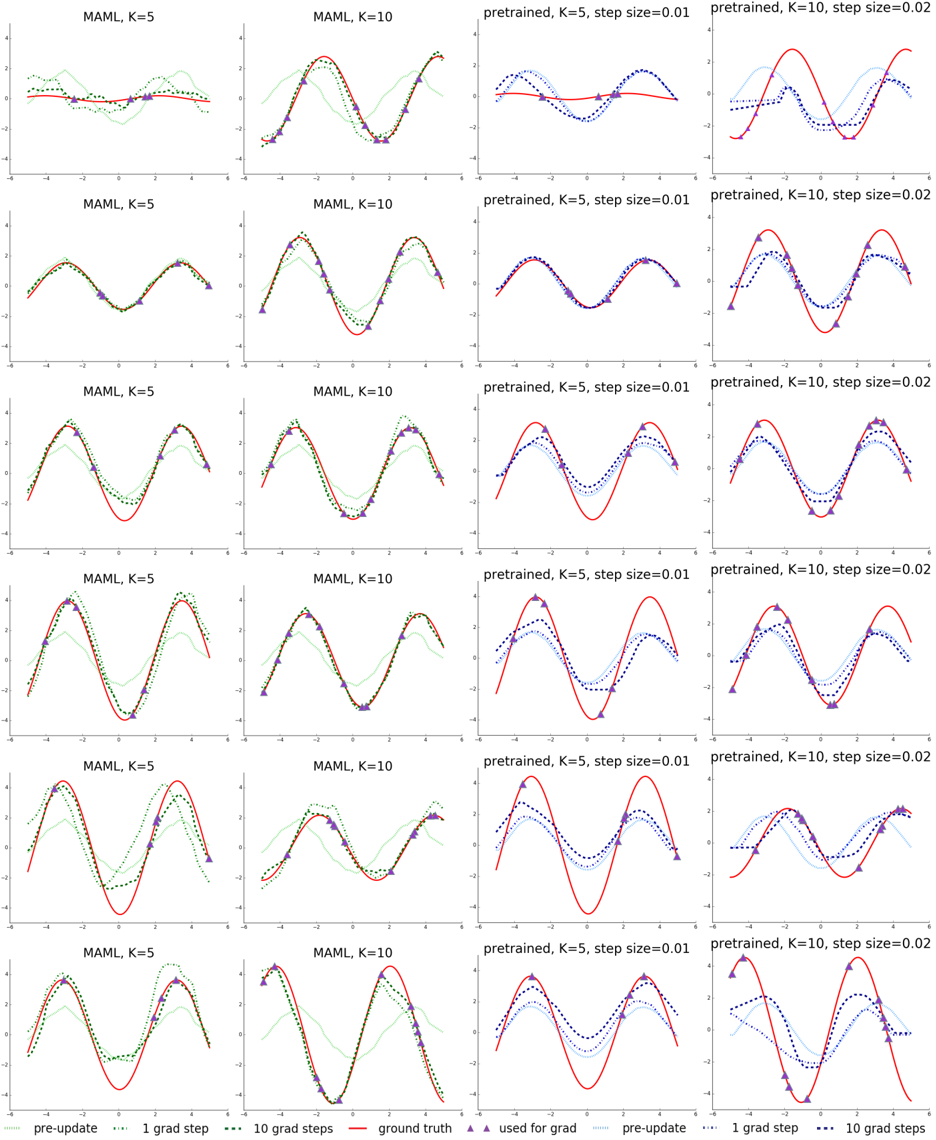

Figure 5. Reinforcement learning results for the half-cheetah and ant locomotion tasks, with the tasks shown on the far right. Each gradient step requires additional samples from the environment, unlike the supervised learning tasks. The results show that MAML can adapt to new goal velocities and directions substantially faster than conventional pretraining or random initialization, achieving good performs in just two or three gradient steps. We exclude the goal velocity, random baseline curves, since the returns are much worse ( < -200 for cheetah and < -25 for ant).

<details>

<summary>Image 5 Details</summary>

### Visual Description

\n

## Line Charts: Performance of Reinforcement Learning Algorithms

### Overview

The image presents four line charts, each depicting the average return of different reinforcement learning algorithms as a function of the number of gradient steps. The charts compare the performance of "MAML (ours)" against "pretrained", "random", and "oracle" algorithms across four different environments: half-cheetah goal velocity, half-cheetah toward/backward, ant goal velocity, and ant forward/backward. The final chart for "ant forward/backward" also includes a visual representation of the environment.

### Components/Axes

Each chart shares the following components:

* **X-axis:** "number of gradient steps" ranging from 0 to 3.

* **Y-axis:** "average return" with varying scales depending on the environment.

* half-cheetah goal velocity: approximately -200 to 50

* half-cheetah toward/backward: approximately -50 to 120

* ant goal velocity: approximately -20 to 120

* ant forward/backward: approximately -100 to 550

* **Legend:** Located in the top-right corner of each chart, identifying the data series:

* Green solid line: "MAML (ours)"

* Blue dashed line: "pretrained"

* Gray dashed-dotted line: "random"

* Red dashed line: "oracle"

Each chart also has a title indicating the environment being tested. The final chart includes an image of the ant robot in the environment.

### Detailed Analysis or Content Details

**1. half-cheetah goal velocity:**

* **MAML (ours):** Line slopes upward, starting at approximately 0 at 0 gradient steps, reaching approximately 40 at 3 gradient steps.

* **pretrained:** Line starts at approximately -150 at 0 gradient steps, increases to approximately 100 at 3 gradient steps.

* **random:** Line starts at approximately -180 at 0 gradient steps, increases to approximately 80 at 3 gradient steps.

* **oracle:** Horizontal dashed line at approximately 40 across all gradient steps.

**2. half-cheetah toward/backward:**

* **MAML (ours):** Line slopes upward, starting at approximately 0 at 0 gradient steps, reaching approximately 80 at 3 gradient steps.

* **pretrained:** Line starts at approximately -30 at 0 gradient steps, increases to approximately 60 at 3 gradient steps.

* **random:** Line starts at approximately -20 at 0 gradient steps, increases to approximately 40 at 3 gradient steps.

* **oracle:** Horizontal dashed line at approximately 100 across all gradient steps.

**3. ant goal velocity:**

* **MAML (ours):** Line slopes upward, starting at approximately 50 at 0 gradient steps, reaching approximately 100 at 3 gradient steps.

* **pretrained:** Line starts at approximately 0 at 0 gradient steps, increases to approximately 40 at 3 gradient steps.

* **random:** Line starts at approximately 0 at 0 gradient steps, increases to approximately 20 at 3 gradient steps.

* **oracle:** Horizontal dashed line at approximately 100 across all gradient steps.

**4. ant forward/backward:**

* **MAML (ours):** Line slopes upward sharply, starting at approximately -100 at 0 gradient steps, reaching approximately 500 at 3 gradient steps.

* **pretrained:** Line starts at approximately -100 at 0 gradient steps, increases to approximately 100 at 3 gradient steps.

* **random:** Line starts at approximately -100 at 0 gradient steps, increases to approximately 100 at 3 gradient steps.

* **oracle:** Horizontal dashed line at approximately 550 across all gradient steps.

### Key Observations

* "MAML (ours)" consistently outperforms "pretrained" and "random" in all environments, especially in the "ant forward/backward" environment.

* "oracle" provides the highest performance in all environments, serving as an upper bound.

* "pretrained" and "random" show similar performance across most environments.

* The scale of the Y-axis varies significantly between environments, indicating different reward structures.

### Interpretation

The data suggests that the "MAML (ours)" algorithm is effective at learning and adapting to different reinforcement learning environments with a relatively small number of gradient steps. The algorithm's performance approaches the "oracle" performance, indicating its potential for achieving optimal results. The consistent outperformance of "MAML (ours)" compared to "pretrained" and "random" suggests that the meta-learning approach employed by MAML is beneficial for rapid adaptation. The large difference in Y-axis scales highlights the varying difficulty of the different environments. The "ant forward/backward" environment appears to be the most challenging, as evidenced by the wider range of average returns and the higher "oracle" performance. The image of the ant robot in the "ant forward/backward" environment provides context for the task, showing a robot navigating a grid-like environment. The visual representation helps to understand the complexity of the environment and the challenges faced by the reinforcement learning algorithms.

</details>

we use finite differences to compute the Hessian-vector products for TRPO. For both learning and meta-learning updates, we use the standard linear feature baseline proposed by Duan et al. (2016a), which is fitted separately at each iteration for each sampled task in the batch. We compare to three baseline models: (a) pretraining one policy on all of the tasks and then fine-tuning, (b) training a policy from randomly initialized weights, and (c) an oracle policy which receives the parameters of the task as input, which for the tasks below corresponds to a goal position, goal direction, or goal velocity for the agent. The baseline models of (a) and (b) are fine-tuned with gradient descent with a manually tuned step size. Videos of the learned policies can be viewed at sites.google.com/view/maml

2D Navigation. In our first meta-RL experiment, we study a set of tasks where a point agent must move to different goal positions in 2D, randomly chosen for each task within a unit square. The observation is the current 2D position, and actions correspond to velocity commands clipped to be in the range [ -0 . 1 , 0 . 1] . The reward is the negative squared distance to the goal, and episodes terminate when the agent is within 0 . 01 of the goal or at the horizon of H = 100 . The policy was trained with MAML to maximize performance after 1 policy gradient update using 20 trajectories. Additional hyperparameter settings for this problem and the following RL problems are in Appendix A.2. In our evaluation, we compare adaptation to a new task with up to 4 gradient updates, each with 40 samples. The results in Figure 4 show the adaptation performance of models that are initialized with MAML, conventional pretraining on the same set of tasks, random initialization, and an oracle policy that receives the goal position as input. The results show that MAML can learn a model that adapts much more quickly in a single gradient update, and furthermore continues to improve with additional updates.

Locomotion. To study how well MAML can scale to more complex deep RL problems, we also study adaptation on high-dimensional locomotion tasks with the MuJoCo simulator (Todorov et al., 2012). The tasks require two simulated robots - a planar cheetah and a 3D quadruped (the 'ant') - to run in a particular direction or at a particular velocity. In the goal velocity experiments, the reward is the negative absolute value between the current velocity of the agent and a goal, which is chosen uniformly at random between 0 . 0 and 2 . 0 for the cheetah and between 0 . 0 and 3 . 0 for the ant. In the goal direction experiments, the reward is the magnitude of the velocity in either the forward or backward direction, chosen at random for each task in p ( T ) . The horizon is H = 200 , with 20 rollouts per gradient step for all problems except the ant forward/backward task, which used 40 rollouts per step. The results in Figure 5 show that MAML learns a model that can quickly adapt its velocity and direction with even just a single gradient update, and continues to improve with more gradient steps. The results also show that, on these challenging tasks, the MAML initialization substantially outperforms random initialization and pretraining. In fact, pretraining is in some cases worse than random initialization, a fact observed in prior RL work (Parisotto et al., 2016).

## 6. Discussion and Future Work

We introduced a meta-learning method based on learning easily adaptable model parameters through gradient descent. Our approach has a number of benefits. It is simple and does not introduce any learned parameters for metalearning. It can be combined with any model representation that is amenable to gradient-based training, and any differentiable objective, including classification, regression, and reinforcement learning. Lastly, since our method merely produces a weight initialization, adaptation can be performed with any amount of data and any number of gradient steps, though we demonstrate state-of-the-art results on classification with only one or five examples per class. We also show that our method can adapt an RL agent using policy gradients and a very modest amount of experience.

Reusing knowledge from past tasks may be a crucial ingredient in making high-capacity scalable models, such as deep neural networks, amenable to fast training with small datasets. We believe that this work is one step toward a simple and general-purpose meta-learning technique that can be applied to any problem and any model. Further research in this area can make multitask initialization a standard ingredient in deep learning and reinforcement learning.

## Acknowledgements

The authors would like to thank Xi Chen and Trevor Darrell for helpful discussions, Yan Duan and Alex Lee for technical advice, Nikhil Mishra, Haoran Tang, and Greg Kahn for feedback on an early draft of the paper, and the anonymous reviewers for their comments. This work was supported in part by an ONR PECASE award and an NSF GRFP award.

## References

- Abadi, Mart´ ın, Agarwal, Ashish, Barham, Paul, Brevdo, Eugene, Chen, Zhifeng, Citro, Craig, Corrado, Greg S, Davis, Andy, Dean, Jeffrey, Devin, Matthieu, et al. Tensorflow: Large-scale machine learning on heterogeneous distributed systems. arXiv preprint arXiv:1603.04467 , 2016.

- Andrychowicz, Marcin, Denil, Misha, Gomez, Sergio, Hoffman, Matthew W, Pfau, David, Schaul, Tom, and de Freitas, Nando. Learning to learn by gradient descent by gradient descent. In Neural Information Processing Systems (NIPS) , 2016.

- Bengio, Samy, Bengio, Yoshua, Cloutier, Jocelyn, and Gecsei, Jan. On the optimization of a synaptic learning rule. In Optimality in Artificial and Biological Neural Networks , pp. 6-8, 1992.

- Bengio, Yoshua, Bengio, Samy, and Cloutier, Jocelyn. Learning a synaptic learning rule . Universit´ e de Montr´ eal, D´ epartement d'informatique et de recherche op´ erationnelle, 1990.

- Donahue, Jeff, Jia, Yangqing, Vinyals, Oriol, Hoffman, Judy, Zhang, Ning, Tzeng, Eric, and Darrell, Trevor. Decaf: A deep convolutional activation feature for generic visual recognition. In International Conference on Machine Learning (ICML) , 2014.

- Duan, Yan, Chen, Xi, Houthooft, Rein, Schulman, John, and Abbeel, Pieter. Benchmarking deep reinforcement learning for continuous control. In International Conference on Machine Learning (ICML) , 2016a.

- Duan, Yan, Schulman, John, Chen, Xi, Bartlett, Peter L, Sutskever, Ilya, and Abbeel, Pieter. Rl2: Fast reinforcement learning via slow reinforcement learning. arXiv preprint arXiv:1611.02779 , 2016b.

- Edwards, Harrison and Storkey, Amos. Towards a neural statistician. International Conference on Learning Representations (ICLR) , 2017.

- Goodfellow, Ian J, Shlens, Jonathon, and Szegedy, Christian. Explaining and harnessing adversarial examples. International Conference on Learning Representations (ICLR) , 2015.

- Ha, David, Dai, Andrew, and Le, Quoc V. Hypernetworks. International Conference on Learning Representations (ICLR) , 2017.

- Hochreiter, Sepp, Younger, A Steven, and Conwell, Peter R. Learning to learn using gradient descent. In International Conference on Artificial Neural Networks . Springer, 2001.

- Husken, Michael and Goerick, Christian. Fast learning for problem classes using knowledge based network initialization. In Neural Networks, 2000. IJCNN 2000, Proceedings of the IEEE-INNS-ENNS International Joint Conference on , volume 6, pp. 619-624. IEEE, 2000.

- Ioffe, Sergey and Szegedy, Christian. Batch normalization: Accelerating deep network training by reducing internal covariate shift. International Conference on Machine Learning (ICML) , 2015.

- Kaiser, Lukasz, Nachum, Ofir, Roy, Aurko, and Bengio, Samy. Learning to remember rare events. International Conference on Learning Representations (ICLR) , 2017.

- Kingma, Diederik and Ba, Jimmy. Adam: A method for stochastic optimization. International Conference on Learning Representations (ICLR) , 2015.

- Kirkpatrick, James, Pascanu, Razvan, Rabinowitz, Neil, Veness, Joel, Desjardins, Guillaume, Rusu, Andrei A, Milan, Kieran, Quan, John, Ramalho, Tiago, GrabskaBarwinska, Agnieszka, et al. Overcoming catastrophic forgetting in neural networks. arXiv preprint arXiv:1612.00796 , 2016.

- Koch, Gregory. Siamese neural networks for one-shot image recognition. ICML Deep Learning Workshop , 2015.

- Kr¨ ahenb¨ uhl, Philipp, Doersch, Carl, Donahue, Jeff, and Darrell, Trevor. Data-dependent initializations of convolutional neural networks. International Conference on Learning Representations (ICLR) , 2016.

- Lake, Brenden M, Salakhutdinov, Ruslan, Gross, Jason, and Tenenbaum, Joshua B. One shot learning of simple visual concepts. In Conference of the Cognitive Science Society (CogSci) , 2011.

- Li, Ke and Malik, Jitendra. Learning to optimize. International Conference on Learning Representations (ICLR) , 2017.

- Maclaurin, Dougal, Duvenaud, David, and Adams, Ryan. Gradient-based hyperparameter optimization through reversible learning. In International Conference on Machine Learning (ICML) , 2015.

- Munkhdalai, Tsendsuren and Yu, Hong. Meta networks. International Conferecence on Machine Learning (ICML) , 2017.

- Naik, Devang K and Mammone, RJ. Meta-neural networks that learn by learning. In International Joint Conference on Neural Netowrks (IJCNN) , 1992.

- Parisotto, Emilio, Ba, Jimmy Lei, and Salakhutdinov, Ruslan. Actor-mimic: Deep multitask and transfer reinforcement learning. International Conference on Learning Representations (ICLR) , 2016.

- Ravi, Sachin and Larochelle, Hugo. Optimization as a model for few-shot learning. In International Conference on Learning Representations (ICLR) , 2017.

- Rei, Marek. Online representation learning in recurrent neural language models. arXiv preprint arXiv:1508.03854 , 2015.

- Rezende, Danilo Jimenez, Mohamed, Shakir, Danihelka, Ivo, Gregor, Karol, and Wierstra, Daan. One-shot generalization in deep generative models. International Conference on Machine Learning (ICML) , 2016.

- Salimans, Tim and Kingma, Diederik P. Weight normalization: A simple reparameterization to accelerate training of deep neural networks. In Neural Information Processing Systems (NIPS) , 2016.

- Santoro, Adam, Bartunov, Sergey, Botvinick, Matthew, Wierstra, Daan, and Lillicrap, Timothy. Meta-learning with memory-augmented neural networks. In International Conference on Machine Learning (ICML) , 2016.

- Saxe, Andrew, McClelland, James, and Ganguli, Surya. Exact solutions to the nonlinear dynamics of learning in deep linear neural networks. International Conference on Learning Representations (ICLR) , 2014.

- Schmidhuber, Jurgen. Evolutionary principles in selfreferential learning. On learning how to learn: The meta-meta-... hook.) Diploma thesis, Institut f. Informatik, Tech. Univ. Munich , 1987.

- Schmidhuber, J¨ urgen. Learning to control fast-weight memories: An alternative to dynamic recurrent networks. Neural Computation , 1992.

- Schulman, John, Levine, Sergey, Abbeel, Pieter, Jordan, Michael I, and Moritz, Philipp. Trust region policy optimization. In International Conference on Machine Learning (ICML) , 2015.

- Shyam, Pranav, Gupta, Shubham, and Dukkipati, Ambedkar. Attentive recurrent comparators. International Conferecence on Machine Learning (ICML) , 2017.

- Snell, Jake, Swersky, Kevin, and Zemel, Richard S. Prototypical networks for few-shot learning. arXiv preprint arXiv:1703.05175 , 2017.

- Thrun, Sebastian and Pratt, Lorien. Learning to learn . Springer Science & Business Media, 1998.

- Todorov, Emanuel, Erez, Tom, and Tassa, Yuval. Mujoco: A physics engine for model-based control. In International Conference on Intelligent Robots and Systems (IROS) , 2012.

- Vinyals, Oriol, Blundell, Charles, Lillicrap, Tim, Wierstra, Daan, et al. Matching networks for one shot learning. In Neural Information Processing Systems (NIPS) , 2016.

- Wang, Jane X, Kurth-Nelson, Zeb, Tirumala, Dhruva, Soyer, Hubert, Leibo, Joel Z, Munos, Remi, Blundell, Charles, Kumaran, Dharshan, and Botvinick, Matt. Learning to reinforcement learn. arXiv preprint arXiv:1611.05763 , 2016.

- Williams, Ronald J. Simple statistical gradient-following algorithms for connectionist reinforcement learning. Machine learning , 8(3-4):229-256, 1992.

## A. Additional Experiment Details

In this section, we provide additional details of the experimental set-up and hyperparameters.

## A.1. Classification

For N-way, K-shot classification, each gradient is computed using a batch size of NK examples. For Omniglot, the 5-way convolutional and non-convolutional MAML models were each trained with 1 gradient step with step size α = 0 . 4 and a meta batch-size of 32 tasks. The network was evaluated using 3 gradient steps with the same step size α = 0 . 4 . The 20-way convolutional MAML model was trained and evaluated with 5 gradient steps with step size α = 0 . 1 . During training, the meta batch-size was set to 16 tasks. For MiniImagenet, both models were trained using 5 gradient steps of size α = 0 . 01 , and evaluated using 10 gradient steps at test time. Following Ravi & Larochelle (2017), 15 examples per class were used for evaluating the post-update meta-gradient. We used a meta batch-size of 4 and 2 tasks for 1 -shot and 5 -shot training respectively. All models were trained for 60000 iterations on a single NVIDIA Pascal Titan X GPU.

## A.2. Reinforcement Learning

In all reinforcement learning experiments, the MAML policy was trained using a single gradient step with α = 0 . 1 . During evaluation, we found that halving the learning rate after the first gradient step produced superior performance. Thus, the step size during adaptation was set to α = 0 . 1 for the first step, and α = 0 . 05 for all future steps. The step sizes for the baseline methods were manually tuned for each domain. In the 2D navigation, we used a meta batch size of 20 ; in the locomotion problems, we used a meta batch size of 40 tasks. The MAML models were trained for up to 500 meta-iterations, and the model with the best average return during training was used for evaluation. For the ant goal velocity task, we added a positive reward bonus at each timestep to prevent the ant from ending the episode.

## B. Additional Sinusoid Results

In Figure 6, we show the full quantitative results of the MAML model trained on 10 -shot learning and evaluated on 5 -shot, 10 -shot, and 20 -shot. In Figure 7, we show the qualitative performance of MAML and the pretrained baseline on randomly sampled sinusoids.

## C. Additional Comparisons

In this section, we include more thorough evaluations of our approach, including additional multi-task baselines and a comparison representative of the approach of Rei (2015).

## C.1. Multi-task baselines

The pretraining baseline in the main text trained a single network on all tasks, which we referred to as 'pretraining on all tasks'. To evaluate the model, as with MAML, we fine-tuned this model on each test task using K examples. In the domains that we study, different tasks involve different output values for the same input. As a result, by pre-training on all tasks, the model would learn to output the average output for a particular input value. In some instances, this model may learn very little about the actual domain, and instead learn about the range of the output space.

We experimented with a multi-task method to provide a point of comparison, where instead of averaging in the output space, we averaged in the parameter space. To achieve averaging in parameter space, we sequentially trained 500 separate models on 500 tasks drawn from p ( T ) . Each model was initialized randomly and trained on a large amount of data from its assigned task. We then took the average parameter vector across models and fine-tuned on 5 datapoints with a tuned step size. All of our experiments for this method were on the sinusoid task because of computational requirements. The error of the individual regressors was low: less than 0.02 on their respective sine waves.

We tried three variants of this set-up. During training of the individual regressors, we tried using one of the following: no regularization, standard 2 weight decay, and 2 weight regularization to the mean parameter vector thus far of the trained regressors. The latter two variants encourage the individual models to find parsimonious solutions. When using regularization, we set the magnitude of the regularization to be as high as possible without significantly deterring performance. In our results, we refer to this approach as 'multi-task'. As seen in the results in Table 2, we find averaging in the parameter space (multi-task) performed worse than averaging in the output space (pretraining on all tasks). This suggests that it is difficult to find parsimonious solutions to multiple tasks when training on tasks separately, and that MAML is learning a solution that is more sophisticated than the mean optimal parameter vector.

## C.2. Context vector adaptation

Rei (2015) developed a method which learns a context vector that can be adapted online, with an application to recurrent language models. The parameters in this context vector are learned and adapted in the same way as the parameters in the MAML model. To provide a comparison to using such a context vector for meta-learning problems, we concatenated a set of free parameters z to the input x , and only allowed the gradient steps to modify z , rather than modifying the model parameters θ , as in MAML. For im-

## Model-Agnostic Meta-Learning for Fast Adaptation of Deep Networks

<details>

<summary>Image 6 Details</summary>

### Visual Description

## Line Charts: k-shot Regression Performance

### Overview

The image presents three line charts comparing the performance of different machine learning approaches (MAML, pre-trained, and oracle) in a k-shot regression task. Each chart corresponds to a different value of 'k' (k=5, k=10, k=20), representing the number of shots. The performance is measured by 'mean squared error' and plotted against the 'number of gradient steps'.

### Components/Axes

Each chart shares the following components: