# DAC-h3: A Proactive Robot Cognitive Architecture to Acquire and Express Knowledge About the World and the Self

## DAC-h3: A Proactive Robot Cognitive Architecture to Acquire and Express Knowledge About the World and the Self

Clément Moulin-Frier*, Tobias Fischer*, Maxime Petit, Grégoire Pointeau, Jordi-Ysard Puigbo, Ugo Pattacini, Sock Ching Low, Daniel Camilleri, Phuong Nguyen, Matej Hoffmann, Hyung Jin Chang, Martina Zambelli, Anne-Laure Mealier, Andreas Damianou, Giorgio Metta, Tony J. Prescott, Yiannis Demiris, Peter Ford Dominey, and Paul F. M. J. Verschure

Abstract -This paper introduces a cognitive architecture for a humanoid robot to engage in a proactive, mixed-initiative exploration and manipulation of its environment, where the initiative can originate from both the human and the robot. The framework, based on a biologically-grounded theory of the brain and mind, integrates a reactive interaction engine, a number of state-of-the art perceptual and motor learning algorithms, as well as planning abilities and an autobiographical memory. The architecture as a whole drives the robot behavior to solve the symbol grounding problem, acquire language capabilities, execute goal-oriented behavior, and express a verbal narrative of its own experience in the world. We validate our approach in humanrobot interaction experiments with the iCub humanoid robot, showing that the proposed cognitive architecture can be applied in real time within a realistic scenario and that it can be used with naive users.

Index Terms -Cognitive Robotics, Distributed Adaptive Control, Human-Robot Interaction, Symbol Grounding, Autobiographical Memory

Manuscript received December 31, 2016; revised July 25, 2017; accepted August 09, 2017. The research leading to these results has received funding from the European Research Council under the European Union's Seventh Framework Programme (FP/2007-2013) / ERC Grant Agreement n. FP7-ICT612139 (What You Say Is What You Did project), as well as the ERC's CDAC project: Role of Consciousness in Adaptive Behavior (ERC-2013-ADG 341196). M. Hoffmann was supported by the Czech Science Foundation under Project GA17-15697Y. P. Nguyen received funding from ERC's H2020 grant agreement No. 642667 (SECURE). T. Prescott and D. Camilleri received support from the EU Seventh Framework Programme as part of the Human Brain (HBP-SGA1, 720270) project.

C. Moulin-Frier, J.-Y. Puigbo, S. C. Low, and P. F. M. J. Verschure are with the Laboratory for Synthetic, Perceptive, Emotive and Cognitive Systems, Universitat Pompeu Fabra, 08002 Barcelona, Spain. P. F. M. J. Verschure is also with Institute for Bioengineering of Catalonia (IBEC), The Barcelona Institute of Science and Technology (BIST) and Institució Catalana de Recerca i Estudis Avançats (ICREA), Barcelona, Spain.

- T. Fischer, M. Petit, H. J. Chang, M. Zambelli, and Y. Demiris are with the Personal Robotics Laboratory, Department of Electrical and Electronic Engineering, Imperial College London, SW7 2AZ, U.K.

- G. Pointeau, A.-L. Mealier, and P. F. Dominey are with the Robot Cognition Laboratory of the INSERM U846 Stem Cell and Brain Research Institute, Bron 69675, France.

- U. Pattacini, P. Nguyen, M. Hoffmann, and G. Metta are with the Italian Institute of Technology, iCub Facility, Via Morego 30, Genova, Italy. M. Hoffmann is also with the Department of Cybernetics, Faculty of Electrical Engineering, Czech Technical University in Prague.

- D. Camilleri, A. Damianou, and T. J. Prescott are with the Department of Computer Science, University of Sheffield, U.K. A. Damianou is now at Amazon.com.

Digital Object Identifier 10.1109/TCDS.2017.2754143

*C. Moulin-Frier and T. Fischer contributed equally to this work.

## I. INTRODUCTION

T HE so-called Symbol Grounding Problem (SGP, [1], [2], [3]) refers to the way in which a cognitive agent forms an internal and unified representation of an external word referent from the continuous flow of low-level sensorimotor data generated by its interaction with the environment. In this paper, we focus on solving the SGP in the context of human-robot interaction (HRI), where a humanoid iCub robot [4] acquires and expresses knowledge about the world by interacting with a human partner. Solving the SGP is of particular relevance in HRI, where a repertoire of shared symbolic units forms the basis of an efficient linguistic communication channel between the robot and the human.

To solve the SGP, several questions should be addressed:

- How are unified symbolic representations of external referents acquired from the multimodal information collected by the agent (e.g., visual, tactile, motor)? This is referred to as the Physical SGP [5], [6].

- How to acquire a shared lexicon grounded in the sensorimotor interactions between two (or more) agents? This is referred to as the Social SGP [6], [7].

- How is this lexicon then used for communication and collective goal-oriented behavior? This refers to the functional role of physical and social symbol grounding.

This paper addresses these questions by proposing a complete cognitive architecture for HRI and demonstrating its abilities on an iCub robot. Our architecture, called DAC-h3 , builds upon our previous research projects in conceiving biologically grounded cognitive architectures for humanoid robots based on the Distributed Adaptive Control theory of mind and brain (DAC, presented in the next section). In [8] we proposed an integrated architecture for generating a socially competent humanoid robot, demonstrating that gaze, eye contact and utilitarian emotions play an essential role in the psychological validity or social salience of HRI (DAC-h1). In [9], we introduced a unified robot architecture, an innovative Synthetic Tutor Assistant (STA) embodied in a humanoid robot whose goal is to interactively guide learners in a science-based learning paradigm through rich multimodal interactions (DAC-h2).

DAC-h3 is based on a developmental bootstrapping process where the robot is endowed with an intrinsic motivation to act and relate to the world in interaction with social peers.

Levinson [10] refers to this process as the human interaction engine : a set of capabilities including looking at objects of interest and interaction partners, pointing to these entities [11], demonstrating curiosity as a desire to acquire knowledge [12] and showing, telling and sharing this knowledge with others [11], [13]. These are also coherent with the desiderata for developmental cognitive architectures proposed in [14] stating that a cognitive architecture's value system should manifest both exploratory and social motives, reflecting the psychology of development defended by Piaget [15] and Vygotsky [16].

This interaction engine drives the robot to proactively control its own acquisition and expression of knowledge, favoring the grounding of acquired symbols by learning multimodal representations of entities through interaction with a human partner. In DAC-h3 , an entity refers to an internal or external referent: it can be either an object, an agent, an action, or a body part. In turn, the acquired multimodal and linguistic representations of entities are recruited in goal-oriented behavior and form the basis of a persistent concept of self through the development of an autobiographical memory and the expression of a verbal narrative.

We validate the proposed architecture following a humanrobot interaction scenario where the robot has to learn concepts related to its own body and its vicinity in a proactive manner and express those concepts in goal-oriented behavior. We show a complete implementation running in real-time on the iCub humanoid robot. The interaction depends on the internal dynamics of the architecture, the properties of the environment and the behavior of the human. We analyze a typical interaction in detail and provide videos showing the robustness of our system in various environments (https://github.com/robotology/wysiwyd). Our results show that the architecture autonomously drives the iCub to acquire a number of concepts about the present entities (objects, humans, and body parts), whilst proactively maintaining the interaction with a human and recruiting those concepts to express more complex goal-oriented behavior. We also run experiments with naive subjects in order to test the effect of the robot's proactivity level on the interaction.

In Section II we position the current contribution with respect to related works in the field and rely on this analysis to emphasize the specificity of our approach. Our main contribution is described in Section III and consists in the proposal and implementation of an embodied and integrated cognitive architecture for the acquisition of multimodal information about external word referents, as well as a context-dependent lexicon shared with a human partner and used in goal-directed behavior and verbal narrative generation. The experimental validation of our approach on an iCub robot is provided in Section IV, followed by a discussion in Section V.

## II. RELATED WORKS AND PRINCIPLES OF THE PROPOSED ARCHITECTURE

Designing a cognitive robot that is able to solve the SGP requires a set of heterogeneous challenges to be addressed. First, the robot has to be driven by a cognitive architecture bridging the gap between low-level reactive control and symbolic knowledge processing. Second, it needs to interact with its environment, including social partners, in a way that facilitates the acquisition of symbolic knowledge. Third, it needs to actively maintain engagement with the social partners for a fluent interaction. Finally, the acquired symbols need to be used in high-level cognitive functions dealing with linguistic communication and autobiographical memory.

In this section, we review related works on each of these topics along with a brief overview of the solution adopted by the DAC-h3 architecture.

## A. Functionally-driven vs. biologically-inspired approaches in social robotics

The methods used to conceive socially interactive robots derive predominantly from two approaches [17]. Functionallydesigned approaches are based on reverse engineering methods, assuming that a deep understanding of how the mind operates is not a requirement for conceiving socially competent robots (e.g. [18], [19], [20]), whilst biologically-inspired robots are based on theories of natural and social sciences and expect two main advantages of constraining cognitive models by biological knowledge: to conceive robots that are more understandable to humans, as they reason using similar principles, and to provide an efficient experimental benchmark from which the underlying theories of learning can be confronted, tested and refined (e.g. [21], [22], [23]). One specific approach used by Demiris and colleagues for the mirror neuron system is decomposing computational models implemented on robots into brain operating principles which can then be linked and compared to neuroimaging and neurophysiological data [23].

The proposed DAC-h3 cognitive architecture takes advantage of both methods. It is based on an established biologicallygrounded cognitive architecture of the brain and the mind (the DAC theory, presented below) that is adapted for the HRI domain. However, while the global structure of the architecture is constrained by biology, the implementation of specific modules can be driven by their functionality, i.e. using stateof-the-art methods from machine learning that are powerful at implementing particular functions without being directly constrained by biological knowledge.

## B. Cognitive architectures and the SGP

Another distinction in approaches for conceiving social robots, which is of particular relevance for addressing the SGP, reflects a divergence from the more general field of cognitive architectures (or unified theories of cognition [24]). Historically, two opposing approaches have been proposed to formalize how cognitive functions arise in an individual agent from the interaction of interconnected information processing modules in a cognitive architecture. Top-down approaches rely on a symbolic representation of a task, which has to be decomposed recursively into simpler ones to be executed by the agent. These rely principally on methods from symbolic artificial intelligence (from the General Problem Solver [25] to Soar [26] or ACT-R [27]). Although relatively powerful at solving abstract symbolic problems, top-down architectures are not able to solve the SGP per se because they presuppose the existence of symbols. Thus they are not suitable for

addressing the problem of how these symbols can acquired from low-level sensorimotor signals. The alternative, bottomup approaches instead implement behavior without relying on complex knowledge representation and reasoning. This is typically the case in behavior-based robotics [28], emphasizing lower-level sensory-motor control loops as a starting point of behavioral complexity as in the Subsumption architecture [29]. These approaches are not suitable to solve the SGP either because they do not consider symbolic representation as a necessary component of cognition (referred as intelligence without representation in [28]).

Interestingly, this historical distinction between top-down representation based and bottom-up behavior based approaches still holds in the domain of social robotics [30], [31]. Representation based approaches rely on the modeling of psychological aspects of social cognition (e.g. [32]), whereas behavior based approaches emphasize the role of embodiment and reactive control to enable the dynamic coupling of agents [33]. Solving the SGP, both in its physical and social aspects, therefore requires the integration of bottom-up processes for acquiring and grounding symbols in the physical interaction with the (social) environment and top-down processes for taking advantage of the abstraction, reasoning and communication abilities provided by the acquired symbol system. This has been referred to as the micro-macro loop , i.e. a bilateral relationship between an emerged symbol system at the macro level and a physical system consisting of communicating and collaborating agents at the micro level [34].

Several contributions in social robotics rely on such hybrid architectures integrating bottom-up and top-down processes (e.g. [35], [36], [37], [38]). In [35], an architecture called embodied theory of mind was developed to link high-level cognitive skills to the low-level perceptual abilities of a humanoid and implementing joint attention and intentional state understanding. In [36], or [37], the architecture combines deliberative planning, reactive control, and motivational drives for controlling robots in interaction with humans.

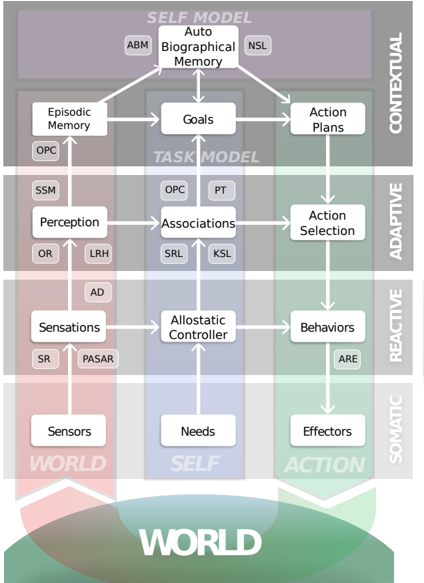

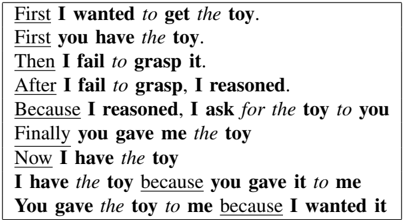

In this paper, we adopt the principles of the Distributed Adaptive Control theory of the mind and the brain (DAC, [39], [40]). DAC is a hybrid architecture which posits that cognition is based on the interaction of four interconnected control loops operating at different levels of abstraction (see Figure 1). The first level is called the somatic layer and corresponds to the embodiment of the agent within its environment, with its sensors and actuators as well as the physiological needs (e.g. for exploration or safety). Extending bottom-up approaches with drive reduction mechanisms, complex behavior is bootstrapped in DAC from the self-regulation of an agent's physiological needs when combined with reactive behaviors (the reactive layer ). This reactive interaction with the environment drives the dynamics of the whole architecture [41], bootstrapping learning processes for solving the physical SGP (the adaptive layer ) and the acquisition of higher-level cognitive representations such as abstract goal selection, memory and planning (the contextual layer ). These high-level representations in turn modulate the activity at the lower levels via top-down pathways shaped by behavioral feedback. The control flow in DAC is therefore distributed, both from bottom-up and top-down interactions between layers, as well as from lateral information processing within each layer.

## C. Representation learning for solving the SGP

As we have seen, a cognitive architecture solving the SGP needs to bridge the gap between low-level sensorimotor data and symbolic knowledge. Several methods have been proposed for compressing multimodal signals into symbols. A solution based on geometrical structures was offered by Gärdenfors with the notion of conceptual spaces (e.g., [42]), whereby similarity between concepts is derived from distances in this space. Lieto et al. [43] advocate the use of the conceptual spaces as the lingua franca for different levels of representation.

Another approach has been proposed in [44], [45], which involves a single class of mental representations called 'Semantic Pointers'. These representations are particularly suited in solving the SGP as they support binding operations of various modalities, which in turn result in a single representation. This representation (which might have been initially formed by an input of a single modality) can then trigger a corresponding concept, whose occurrence leads to simulated stimuli in the other modalities. Furthermore, while semantic pointers can be represented as vectors, the vector representation can be transformed in neural activity which makes the implementation biologically plausible and allows mapping to different brain areas.

Other approaches consider symbols as fundamentally sensorimotor units. For example, Object-Action Complexes (OACs) build symbolic representations of sensorimotor experience and behaviors through the learning of object affordances [46] (for a review of affordance-based approaches, see [47]). In [48], a framework founded on joint perceptuo-motor representations is proposed, integrating declarative episodic and procedural memory systems for combining experiential knowledge with skillful know-how.

In DAC-h3, visual, tactile, motor and linguistic information about the present entities is collected proactively through reactive control loops triggering sensorimotor exploration in interaction with a human partner. Abstract representations are learned on-line using state-of-the-art machine learning methods in each modality (see Section III). An entity is therefore represented internally in the robot's memory as the association between abstracted multimodal representations and linguistic labels.

## D. Interaction paradigms and autonomous exploration

Learning symbolic representations from sensorimotor signals requires an autonomous interaction of a robot with the physical and social world. Several interaction paradigms have been proposed for grounding a lexicon in the physical interaction of a robot with its environment. Since the pioneering paradigm of language games proposed in [49], a number of multiagent models have been proposed showing how particular properties of language can self-organize out of repeated dyadic interactions between agents of a population (e.g. [50], [51]).

In the domain of HRI, significant progress has been made in allowing robots to interact with humans, for example in

learning shared plans [52], [53], [54], learning to imitate actions [55], [56], [57], [58], and learning motor skills [59] that can be used for engaging in joint activities. Other contributions have focused on lexicon acquisition through the transfer of sensorimotor and linguistic information from the interaction between a teacher and a learner through imitation [60] or action [61], [62]. However, in most of these interactions, the human is in charge and the robot is following the human's lead: the choice of which concept to learn is left to the human and the robot must identify it. In this case, the robot must solve the referential indeterminacy problem described by Quine [63], where the robot language learner has to extract the external concept that was referred to by the human speaker. However, acquiring symbols by interacting with other agents is not only a unidirectional process of information transfer between a teacher and learner [10].

Autonomous exploration and proactive behavior solves this problem by allowing robots to take the initiative in exploring their environment [64] and interacting with people [65]. The benefit of these abilities for knowledge acquisition has been demonstrated in several developmental robotics experiments. In [66], it is shown how a combination of social guidance and intrinsic motivation improve the learning of object visual categories in HRI. A similar mechanism is adopted in [67] for learning complex sensorimotor mappings in the context of vocal development. In [68], planning conflicts due to the uncertainty of the detected human's intention are resolved by proactive execution of the corresponding task that optimally reduces the system's uncertainty. In [69], the task is to acquire human-understandable labels for novel objects and learning how to manipulate them. This is realized through a mixed-initiative interaction scenario and it is shown that proactivity improves the predictability and success of human-robot interaction.

A central aspect of the DAC-h3 architecture is the robot's ability to act proactively in a mixed-initiative scenario. This allows self-monitoring of the robot's own knowledge acquisition process, removing dependence on the human's initiative. Interestingly, proactivity in a sense reverses the referential indeterminacy problem mentioned above by shifting the responsibility of solving ambiguities to the agent who is endowed with the adequate prior knowledge to solve it, i.e., the human, in a HRI context. The robot is now in charge of the concepts it wants to learn, and can use joint attention behaviors to guide the human toward the knowledge it wants to acquire. In the proposed system, this is realized through a set of behavioral control loops, by self-regulating knowledge acquisition, and by proactively requesting missing information about entities from the human partner.

## E. Language learning, autobiographical memory and narrative expression

We have just described the main components that a robot requires to solve the SGP: a cognitive architecture able to process both low-level sensorimotor data and high-level symbolic representation, mechanisms for linking these two levels in abstract multimodal representations, as well autonomous behaviors for proactively interacting with the environment. The final challenge to address concerns the use of the acquired symbols for higher-level cognition, including language learning, autobiographical memory and narrative expression.

Several works address the ability of language learning in robotics. The cognitive architecture of iTalk [70] focuses on modeling the emergence of language by learning about the robot's embodiment, learning from others, as well as learning linguistic capability. Cangelosi et al. [71] propose that action, interaction and language should be considered together as they develop in parallel, and one influences the others. Antunes et al. [72] assume that language is already learned, and address the issue that linguistic input typically does not have a one-to-one mapping to actions. They propose to perform reasoning and planning on three different layers (low-level robot perception and action execution, mid-level goal formulation and plan execution, and high-level semantic memory) to interpret the human instructions. Similarly, [73] proposes a system to recognize novel objects using language capabilities in one shot. In these works, language is typically used to understand the human and perform actions, but not necessarily to talk about past events that the robot has experienced.

A number of works investigate the expression of past events by developing narratives based on acquired autobiographical memories [74], [75], [76]. In [75], a user study is presented which suggests that a robot's narrative allows humans to get an insight into long term human-robot interaction from the robot's perspective. The method in [76] takes user preferences into account when referring to past interactions. Similarly to our framework, it is based on the implementation and cooperation between both episodic and semantic memories with a dialog system. However, no learning capabilities (neither language nor knowledge) are introduced by the authors.

In the proposed DAC-h3 architecture, the acquired lexicon allows the robot to execute action plans for achieving goaloriented behavior from human speech requests. Relevant information throughout the interaction of the robot with humans is continuously stored in an autobiographical memory used for the generation of a narrative self, i.e., a verbal description of the own robot's history over the long term (able to store and verbally describe interactions from a long time ago, e.g. a period of several months).

In the next section, we describe how the above features are implemented in a coherent cognitive architecture made up of functional YARP [77] modules running in real-time on the iCub robot.

## III. THE DAC-H3 COGNITIVE ARCHITECTURE

This section presents the DAC-h3 architecture in detail, which is an instantiation of the DAC architecture for human-robot interaction. The proposed architecture provides a general framework for designing autonomous robots which act proactively for 1) maintaining social interaction with humans, 2) bootstrapping the association of multimodal knowledge with its environment that further enrich the interaction through goal-oriented action plans, and 3) express a verbal narrative. It allows a principled organization of various functional modules into a biologically grounded cognitive architecture.

## A. Layer and module overview

In DAC-h3 , the somatic layer consists of an iCub humanoid robot equipped with advanced motor and sensory abilities for interacting with humans and objects. The reactive layer ensures the autonomy of the robot through drive reduction mechanisms implementing proactive behaviors for acquiring and expressing knowledge about the current scene. This allows the bootstrapping of adaptive learning of multimodal representations about entities in the adaptive layer . More specifically, the adaptive layer learns high-level multimodal representations (visual, tactile, motor and linguistic) for the categorization of entities (objects, agents, actions and body parts) and associates them in unified representations. Those representations form the basis of an episodic memory for goaloriented behavior through planning in the contextual layer , which deals with goal representation and action planning. Within the contextual layer, an autobiographical memory of the robot is formed that can be expressed in the form of a verbal narrative.

The complete DAC-h3 architecture is shown in Figure 1. It is composed of structural modules reflecting the cognitive modules proposed by the DAC theory. Each structural module might rely on one or more functional modules implementing more specific functionalities (e.g. dealing with motor control, object perception, and scene representation). The complete system described in this section, therefore, integrates several state-of-the-art algorithms for cognitive robotics and integrates them into a structured cognitive architecture grounded in the principles of the DAC theory. In the remainder of this section, we describe each structural module layer by layer, as well as their interaction with the functional modules , and provide references which provide more detail for the individual modules.

## B. Somatic layer

The somatic layer corresponds to the physical embodiment of the system. We use the iCub robot, an open source humanoid platform developed for research in cognitive robotics [4]. The iCub is a 104 cm tall humanoid robot with 53 degrees of freedom (DOF). The robot is equipped with cameras in its articulated eyes allowing stereo vision, and tactile sensors in the fingertips, palms of the hand, arms and torso. The iCub is augmented with an external RGB-D camera above the robot head for agent detection and skeleton tracking. Finally, an external microphone and speakers are used for speech recognition and synthesis, respectively.

The somatic layer also contains the physiological needs of the robot that will drive its reactive behaviors, as described in the following section on the reactive layer .

## C. Reactive layer

Following DAC principles, the reactive layer oversees the self-regulation of the internal drives of a cognitive agent from the interaction of sensorimotor control loops. The drives aim at self-regulating internal state variables (the needs of the somatic layer ) within their respective homeostatic ranges. In biological

Figure 1. The DAC-h3 cognitive architecture (see Section III) is an implementation of the DAC theory of the brain and mind (see Section II-B) adapted for HRI applications. The architecture is organized as a layered control structure with tight coupling within and between layers: the somatic, reactive, adaptive and contextual layers. Across these layers, a columnar organization exists that deals with the processing of states of the world or exteroception (left, red), the self or interoception (middle, blue) and action (right, green). The role of each layer and their interaction is described in Section III. White boxes connected with arrows correspond to structural modules implementing the cognitive modules proposed in the DAC theory. Some of these structural modules rely on functional modules , indicated by acronyms in the boxes next to the structural modules. Acronyms refer to the following functional modules. SR: Speech Recognizer; PASAR: Prediction, Anticipation, Sensation, Attention and Response; AD: Agent Detector; ARE: Action Rendering Engine; OR: Object Recognition; LRH: Language Reservoir Handler; SSM: Synthetic Sensory Memory; PT: Perspective Taking; SRL: Sensorimotor Representation Learning; KSL: Kinematic Structure Learning; OPC: Object Property Collector; ABM: Autobiographical Memory; NSL: Narrative Structure Learning.

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Diagram: Cognitive Architecture Model

### Overview

The image displays a complex, multi-layered cognitive architecture diagram. It is structured as a matrix with four horizontal processing layers and three vertical functional columns, illustrating the flow of information and control from sensory input to high-level planning and action execution. The entire system interacts with an external "WORLD" represented at the base.

### Components/Axes

**Vertical Columns (Functional Domains):**

1. **WORLD** (Left column, pinkish background): Represents the interface with the external environment.

2. **SELF** (Center column, blue background): Represents the internal state and models of the agent itself.

3. **ACTION** (Right column, green background): Represents the output and behavioral systems.

**Horizontal Layers (Processing Levels):**

1. **CONTEXTUAL** (Top layer, dark grey background): High-level, memory-guided planning.

2. **ADAPTIVE** (Second layer, medium grey background): Learning and associative processing.

3. **REACTIVE** (Third layer, light grey background): Fast, homeostatic control loops.

4. **SOMATIC** (Bottom layer, lightest grey background): Basic bodily functions and interfaces.

**Detailed Component List (by Layer and Column):**

* **CONTEXTUAL Layer:**

* **Auto Biographical Memory** (Top-center, SELF column). Connected to smaller labels: **ABM** (left) and **NSL** (right).

* **Episodic Memory** (Left, WORLD column).

* **Goals** (Center, SELF column).

* **Action Plans** (Right, ACTION column).

* *Flow:* Arrows connect Auto Biographical Memory to Episodic Memory, Goals, and Action Plans. Episodic Memory feeds into Goals, which in turn feeds into Action Plans.

* **ADAPTIVE Layer:**

* **Perception** (Left, WORLD column). Associated labels: **SSM** (above), **OPC** and **LRH** (below).

* **Associations** (Center, SELF column). Associated labels: **OPC** and **PT** (above), **SRL** and **KSL** (below).

* **Action Selection** (Right, ACTION column).

* *Flow:* Perception feeds into Associations, which feeds into Action Selection. A vertical arrow connects Episodic Memory (above) to Perception.

* **REACTIVE Layer:**

* **Sensations** (Left, WORLD column). Associated labels: **SR** and **PASAR** (below).

* **Allostatic Controller** (Center, SELF column). Associated label: **AD** (above).

* **Behaviors** (Right, ACTION column). Associated label: **ARE** (below).

* *Flow:* Sensations feed into the Allostatic Controller, which feeds into Behaviors. A vertical arrow connects Perception (above) to Sensations.

* **SOMATIC Layer:**

* **Sensors** (Left, WORLD column).

* **Needs** (Center, SELF column).

* **Effectors** (Right, ACTION column).

* *Flow:* Sensors feed upward to Sensations. Needs feed upward to the Allostatic Controller. Effectors receive input from Behaviors.

**Base Element:**

* A large, gradient-filled (green to blue) semi-circle at the bottom labeled **WORLD** in bold, white text. This represents the external environment that the entire cognitive system interacts with.

### Detailed Analysis

The diagram is a directed graph showing hierarchical and lateral information flow.

* **Bottom-Up Flow (Perception):** Information originates in the **WORLD** via **Sensors**, becomes **Sensations**, is processed into **Perception**, and is integrated into **Episodic Memory** and the **Auto Biographical Memory**.

* **Top-Down Flow (Control):** High-level **Goals** and **Action Plans** (informed by memory) guide **Action Selection**, which influences **Behaviors** and ultimately drives **Effectors** acting upon the **WORLD**.

* **Internal Regulation:** The **SELF** column is central. The **Allostatic Controller** (reactive) and **Associations** (adaptive) mediate between perception and action, likely maintaining internal stability (homeostasis) and learning. **Needs** provide basic drives to the controller.

* **Acronyms:** Numerous two or three-letter acronyms (ABM, NSL, OPC, SSM, PT, LRH, SRL, KSL, SR, PASAR, AD, ARE) are placed adjacent to main components, likely denoting specific sub-modules, theories, or processes within the architecture. Their exact meanings are not defined in the image.

### Key Observations

1. **Symmetrical Structure:** The three-column (WORLD/SELF/ACTION) and four-layer layout creates a clear, symmetrical matrix for organizing cognitive functions.

2. **Central Role of Memory and Goals:** The **Auto Biographical Memory** and **Goals** are positioned at the top-center, indicating they are the pinnacle of the hierarchy, integrating past experience to direct future action.

3. **Allostatic Controller as a Hub:** In the REACTIVE layer, the **Allostatic Controller** is a central hub receiving input from Sensations (WORLD) and Needs (SELF) to generate Behaviors (ACTION), emphasizing real-time regulation.

4. **Color-Coded Columns:** The consistent pink (WORLD), blue (SELF), and green (ACTION) background shading for the columns provides immediate visual grouping of related functions.

5. **Directional Arrows:** All connections are one-way arrows, defining a strict causal or informational flow without feedback loops explicitly drawn (though feedback is implied by the system's purpose).

### Interpretation

This diagram represents a comprehensive, hybrid cognitive architecture designed for an autonomous agent (e.g., a robot or advanced AI). It integrates multiple paradigms:

* **Reactive Layer:** Embodies subsumption or behavior-based architecture, where **Sensations** and **Needs** trigger fast **Behaviors** via the **Allostatic Controller** for immediate survival and stability.

* **Adaptive Layer:** Incorporates learning and symbolic processing, where **Perception** is refined and linked via **Associations** to inform more flexible **Action Selection**.

* **Contextual Layer:** Adds a deliberative, model-based layer where **Episodic Memory** and an **Auto Biographical Memory** (a model of the self's history) enable long-term planning, goal management, and the generation of complex **Action Plans**.

The architecture suggests a system that balances reflexive reaction with learned adaptation and conscious-like planning. The "SELF" column is crucial, indicating the agent maintains models of its own state, history, and needs, which are central to its decision-making. The explicit connection from the high-level "Goals" down to "Action Selection" shows how abstract intentions are ultimately translated into concrete behaviors that affect the world. The numerous acronyms hint that this is a synthesis of specific, pre-existing computational theories or modules into a unified framework.

</details>

terms, such an internal state variable could, for example, reflect the current glucose level in an organism, with the associated homeostatic range defining the minimum and maximum values of that level. A drive for eating would then correspond to a self-regulation mechanism where the agent actively searches for food whenever its glucose level is below the homeostatic minimum and stops eating even if food is present whenever it is above the homeostatic maximum. A drive is therefore defined as the real-time control loop triggering appropriate behaviors whenever the associated internal state variable goes out of its homeostatic range, as a way to self-regulate its value in a dynamic and autonomous way.

In the social robotics context that is considered in this paper, the drives of the robot do not reflect biological needs as above but are rather related to knowledge acquisition and expression in social interaction. At the foundation of this developmental

bootstrapping process is the intrinsic motivation to interact and communicate. As described by Levinson [10] (see Introduction), a part of the human interaction engine is a set of capabilities that include the motivation to interact and communicate through universal (language independent) manners; including looking at objects of interest and at the interaction partner, as well as pointing to these objects. These reactive capabilities are built into the reactive layer of the architecture forming the core of the DAC-h3 interaction engine. These interaction primitives allow the DAC-h3 system and the human to share attention around specific entities (body parts, objects, or agents), and to bootstrap learning mechanisms in the adaptive layer that associate visual, tactile, motor and linguistic representations of entities as described in the next section.

Currently, the architecture implements the following two drives: one for knowledge acquisition and one for knowledge expression. However, DAC-h3 is designed in a way that facilitates the addition of new drives for further advancements (see Section V). First, a drive for knowledge acquisition provides the iCub with an intrinsic motivation to acquire new knowledge about the current scene. The internal variable associated with this drive is modulated by the number of entities in the current scene with missing information (e.g. unknown name, or missing property). The self-regulation of this drive is realized by proactively asking the human to provide missing information about entities, for instance, their name via speech, synchronized with gaze and pointing; or asking the human to touch the robot's skin associated with a specific body part.

Second, a drive for knowledge expression allows the iCub to proactively express its acquired knowledge by interacting with the human and objects. The internal variable associated with this drive is modulated by the number of entities in the current scene without missing information. The self-regulation is then realized by triggering actions toward the known entities, synchronized with verbal descriptions of those actions (e.g. pointing towards an object or moving a specific body part, while verbally referring to the considered entity).

The implementation of these drives is realized through the three structural modules described below, interacting with each other as well as with the surrounding layers: 1) sensations , 2) allostatic controller , and 3) behaviors (see Figure 1).

1) Sensations: The sensations module deals with low-level sensing to provide relevant information for meaning extraction in the adaptive layer . Specifically, the module detects presence and position of other agents and their body parts ( agent detector functional module; of interest are the head location for gazing at the partner and the location of the hands to detect pointing actions) based on the input of the RGB-D camera. Similarly, it detects objects based on a texture analysis and extracts their location using the stereo vision capabilities of the iCub [78]. The prediction, anticipation, sensation, attention and response functional module (PASAR; [79]) calculates the saliency of agents based on their motion (increased velocity leads to increased saliency), and similarly the saliency for objects is increased if they move or the partner points at them. Finally, the speech recognition functional module extracts text from human speech sensed by a microphone using the Microsoft TM Speech API. The functionalities of the sensations module can, therefore, be summarized as dimensionality reduction and saliency computation, and the resulting data are used for bootstrapping knowledge in higher layers of the architecture.

2) Allostatic Controller: In many situations, several drives which may conflict with each other can be activated at the same time (in the case of this paper, the drive for knowledge acquisition and the drive for knowledge exploration). Such possible conflicts can be solved through the concept of an allostatic controller [80], [81], defined as a set of simple homeostatic control loops and dealing with their scheduling to ensure an efficient global regulation of the internal state variables. The scheduling is decided according to the internal state of the robot and the output of the sensations module. The decision of which drive to follow depends on several factors: the distance of each drive level to their homeostatic boundaries, as well as predefined drive priorities (in DAC-h3 , knowledge acquisition has priority over knowledge expression, which results in a curious personality).

3) Behaviors: To regulate the aforementioned drives, the allostatic controller is connected to the behaviors module, and each drive is linked to corresponding behaviors which are supposed to bring it back into its homeostatic range whenever needed. The positive influence of such a drive regulation mechanism on the acceptance of the HRI by naive users has been demonstrated in previous papers [82], [83].

The drive for knowledge acquisition is regulated by requiring information about entities through coordinated behaviors. Those behaviors depend on the type of the considered entity:

- In the case of an object, the robot produces speech (e.g. 'What is this object?') while pointing and gazing at the unknown object.

- In the case of an agent, the robot produces speech (e.g. 'Who are you?') while looking at the unknown human.

- In the case of a body part, the robot either asks for the name (e.g. 'How do you call this part of my body?') while moving it or, if the name is already known from a previous interaction, asks the human to touch the body part while moving it (e.g., 'Can you touch my index while I move it, please?').

The multimodal information collected through these behaviors will be used to form unified representations of entities in the adaptive layer (see next section).

The drive for knowledge expression is regulated by executing actions towards known entities, synchronized with speech sentences parameterized by the entities' linguistic labels acquired in the adaptive layer (see next section). Motor actions are realized through the action rendering engine (ARE [84]) functional module which allows executing complex actions such as push, reach, take, look in a coordinated human-like fashion. Language production abilities are implemented in the form of predefined grammars (for example asking for the name of an object). Semantic words associated to entities are not present at the reactive level, but are provided from the learned association operating in the adaptive layer . The iSpeak module implements a bridge between the iCub and a voice synthesizer by synchronizing the produced utterance with the lip movements of the iCub to realize a more vivid interaction [85].

## D. Adaptive layer

The adaptive layer oversees the acquisition of a state space of the agent-environment interaction by binding visual, tactile, motor and linguistic representations of entities. It integrates functional modules for maintaining an internal representation of the current scene, visually categorizing entities, recognizing and sensing body parts, extracting linguistic labels from human speech, and learning associations between multimodal representations. They are grouped in three structural modules described below: perceptions , associations and action selection (see Figure 1).

1) Perceptions: The object recognition functional module [86] is used to learn the categorization of objects directly from the visual information given by the iCub eyes with resort to the most recent deep convolutional networks. The bounding boxes of the objects found in the Sensations module are fed to the learning module for the recognition stage. The output of the system consists of the 2D (in the image plane) and 3D (in the world frame) positions of the identified objects along with the corresponding classification scores as stored in the objects properties collector memory (explained below).

There are two functional modules related to language understanding and language production, both integrated within the language reservoir handler (LRH). The comprehension of narrative discourse module receives a sentence and produces the representation of the corresponding meaning, and can thus transform human speech into meaning. The module for narrative discourse production receives a representation of meaning and generates the corresponding sentence (meaning to speech). The meaning is represented in terms of PAOR: predicate(arguments) , where arguments correspond to thematic roles (agent,object,recipient) . Both models are implemented as recurrent neuronal networks based on reservoir computing [87], [88], [89].

The synthetic sensory memory (SSM) module is currently employed for face recognition and action recognition using a fusion of RGB-D data and object location data as provided from the sensations module. In terms of action recognition, SSM has been trained to automatically segment and recognize the following actions: push, pull, lift, drop, wave, and point, while also actively recognizing if the current action is known or unknown. More generally, it has been shown that SSM provides abilities for pattern learning, recall, pattern completion, imagination and association [90]. The SSM module is inspired by the role of the hippocampus by fusing multiple sensory input streams and representing them in a latent feature space [91]. During recall, SSM performs classification of incoming sensory data and returns a label along with an uncertainty measure corresponding to the returned label, which is a use case of the action and face recognition tasks within DAC-h3 . SSM is also capable of imagining novel inputs or reconstructing previously encountered inputs and sending the corresponding generated sensory data. This allows for the replay of memories as detailed in [92].

2) Associations: The associations structural module produces unified representations of entities by associating the multimodal categories formed in the perception module. Those unified representations are formed in the objects properties collector (OPC), a functional module storing all information associated with a particular entity at the present moment in a proto-language format as detailed in [82]. An entity is defined as a concept which can be manipulated and is thus the basis for emerging knowledge. In DAC-h3 , each entity has a name associated, which might be unknown if the entity has been discovered but not yet explored. More specifically, higher level entities such as objects, body parts and agents have additional intrinsic properties. For example, an object also has a location and dimensions associated with it. Furthermore, whether the object is currently present is encoded as well, and if so, its saliency value (as computed by the PASAR module described in Section III-C). On the other hand, a body part is an entity which contains a proprioceptive property (i.e. a specific joint), and a tactile information property (i.e. association with tactile sensors). Thus, the OPC allows integrating multiple modalities of one and the same entity to ground the knowledge about the self, other agents, and objects, as well as their relations. Relations can be used to link several instances in an ontological model (see Section III-E1: Episodic Memory ).

Learning the multimodal associations that form the internal representations of entities relies on the behavior generated by the knowledge acquisition drive operating at the reactive level (see previous section). Multimodal information about entities generated by those behaviors is bound together by registering the acquired information in the specific data format used by the OPC. For instance, the language reservoir handler module described above deals with speech analysis to extract entity labels from human replies (e.g. 'this is a cube'; {P:is, A:this, O:cube, R: ∅ }). The extracted labels are associated with the acquired multimodal information which depends on the entity type: visual representations generated by the object recognition module in case of an object or agent detector in case of an agent, as well as motor and touch information in case of a body part.

The associations of representations can also be applied to the developmental robot itself (instead of external entities as above), to acquire motor capabilities or to learn the links between motor joints and skin sensors of its body [93]. Learning self-related representations of the robot's own body schema is realized by the sensorimotor representation learning functional module dedicated to forward model learning [94]. The module receives sensory data collected from the robot's sensors (e.g. cameras, skin, joint encoders) and allows accurate prediction of the next state given the current state and an action. Importantly, the forward model is learned based on sensory experiences rather than based on known mechanical properties of the robot's body.

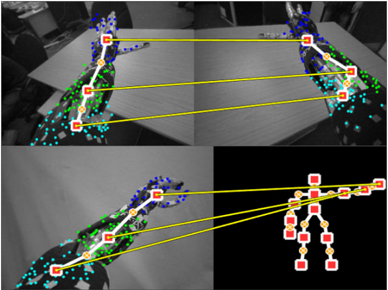

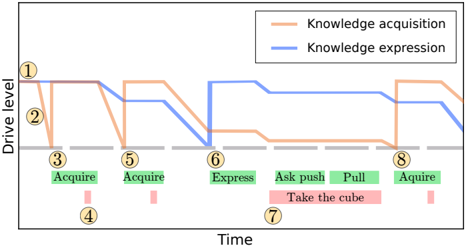

The kinematic structure learning functional module [95], [96] estimates an articulated kinematic structure of arbitrary objects (including the robot's body parts and humans) using visual input videos of the iCub eye cameras. This again is based on sensory experiences rather than known properties of the agents, which is important to autonomously identify the abilities of other agents. Based on the estimated articulated kinematic structures [95], we also allow the iCub to anchor two objects' kinematic structure joints by observing their movements [96] and formulating the problem of finding corresponding kinematic joint matches between two articulated kinematic structures. This

Figure 2. Examples of the kinematic structure correspondences which the iCub has found. The top figure shows the correspondences between the left and right arm of the iCub, which can be used to infer the body part names of one arm if the corresponding names of the other arm are known. Similarly, the bottom figure shows correspondences between the robot's body and the human's body.

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Diagram: Multi-View Human Pose Estimation and Keypoint Correspondence

### Overview

The image is a composite of four panels demonstrating a computer vision process for human pose estimation across multiple camera views. It visualizes the detection of body keypoints (joints) in 2D images and their correspondence to a unified 3D skeletal model. The primary visual elements are human figures overlaid with colored dots (keypoints) and yellow lines connecting specific points across different panels to illustrate matching.

### Components/Axes

The image contains no traditional chart axes, legends, or text labels. The informational components are entirely visual:

* **Panels:** Four distinct image panels arranged in a 2x2 grid.

* **Keypoints:** Small colored dots placed on anatomical locations of a human figure (e.g., shoulders, elbows, wrists, hips, knees, ankles).

* **Color Coding:** Keypoints are colored, likely representing different body parts or confidence levels. The dominant colors are blue, green, cyan, and red/yellow.

* **Correspondence Lines:** Solid yellow lines connect specific keypoints between panels, indicating that the system has identified them as the same physical point viewed from different angles.

* **Skeletal Model:** The bottom-right panel displays a simplified, abstract stick-figure representation of the human skeleton, derived from the keypoints.

### Detailed Analysis

**Panel-by-Panel Breakdown:**

1. **Top-Left Panel:**

* **Content:** A grayscale image of a person standing, viewed from a side angle. The person's right arm is extended forward.

* **Keypoints:** A dense cluster of blue dots covers the head and upper torso. Green dots are distributed along the spine, arms, and legs. Cyan dots appear on the lower legs and feet.

* **Connections:** Three yellow lines originate from keypoints in this panel. They connect to:

* A point on the head/neck area (blue cluster) in the Top-Right panel.

* A point on the right hip (green) in the Top-Right panel.

* A point on the right ankle (cyan) in the Top-Right panel.

2. **Top-Right Panel:**

* **Content:** A grayscale image of the same person from a different, more frontal camera angle.

* **Keypoints:** Similar distribution of blue (head/shoulders), green (torso/limbs), and cyan (lower legs/feet) dots.

* **Connections:** Receives the three yellow lines from the Top-Left panel. It also has three yellow lines originating from it, connecting to the Bottom-Left panel.

3. **Bottom-Left Panel:**

* **Content:** Another grayscale view of the person, similar to the top-left but possibly from a slightly different time or camera.

* **Keypoints:** Same color scheme (blue, green, cyan).

* **Connections:** Receives three yellow lines from the Top-Right panel. It also has three yellow lines originating from it, connecting to the skeletal model in the Bottom-Right panel.

4. **Bottom-Right Panel:**

* **Content:** A black background with a stylized, abstract human skeleton model.

* **Keypoints:** The joints are represented by larger red squares with yellow centers. The bones are thick yellow lines.

* **Connections:** Receives three yellow lines from the Bottom-Left panel, linking specific 2D image keypoints to their corresponding joints on the 3D model. The connected joints appear to be the right shoulder, right hip, and right ankle.

**Correspondence Flow:**

The yellow lines create a clear visual chain: **Top-Left Image → Top-Right Image → Bottom-Left Image → 3D Skeleton Model**. This demonstrates the process of matching the same anatomical point across multiple 2D views and finally mapping it to a canonical 3D pose representation.

### Key Observations

* **Multi-View Consistency:** The system successfully identifies and links the same body parts (head, hip, ankle) across three significantly different camera perspectives.

* **Keypoint Density:** The raw detection (blue/green/cyan dots) is very dense and noisy, covering large areas of the body. This suggests an initial, high-recall detection phase.

* **Model Abstraction:** The final skeletal model (bottom-right) is a clean, low-noise abstraction, indicating a processing step that filters and consolidates the noisy 2D detections into a coherent 3D structure.

* **Occlusion Handling:** The person's right arm is extended in the top views, which could cause self-occlusion. The consistent matching of the hip and ankle points suggests the system is robust to such challenges.

### Interpretation

This diagram illustrates a core pipeline in **3D human pose estimation from multi-view cameras**. The data suggests the following process:

1. **2D Keypoint Detection:** Each camera view independently detects a large set of candidate keypoints (the colored dots) on the human figure. The high density implies a model designed for high sensitivity, possibly at the cost of precision.

2. **Cross-View Correspondence:** The system then solves the correspondence problem, identifying which noisy 2D point in View A represents the same physical joint as a point in View B. The yellow lines are the visual proof of this matching.

3. **3D Lifting/Reconstruction:** Finally, the matched 2D points from all views are used to "lift" or reconstruct the pose into a unified 3D skeletal model. The clean skeleton in the bottom-right is the output—a simplified, actionable representation of the person's pose in space.

The **notable anomaly** is the stark contrast between the noisy, dense 2D detections and the clean, sparse 3D model. This highlights the critical role of the multi-view matching and 3D reconstruction algorithms in filtering noise and resolving ambiguity that is inherent in single-view 2D pose estimation. The diagram effectively argues for the power of multi-view systems to achieve robust and accurate 3D understanding.

</details>

allows the iCub to infer correspondences between its own body parts (its left arm and its right arm), as well as between its own body and the body of the human as retrieved by the agent detector [93] (see Figure 2).

Finally, based on these correspondences, the perspective taking functional module [97] enables the robot to reason about the state of the world from the partner's perspective. This is important in situations where the views of the robot and the human diverge, for example, due to objects which are hidden to the human but visible to the robot. More importantly, perspective taking is thought to be an essential element for successful cooperation and to ease communication, for example by resolving ambiguities [98]. By mentally aligning the selfperspective with that of the human partner, this module allows algorithms (concerned with the visuospatial perception of the world) to reason as if the input was acquired from an egocentric perspective; which allows to use learning algorithms trained on egocentric data to reason on data acquired from the human's perspective without the need of adapting them.

3) Action Selection: The action selection module uses the information from associations to provide context to the behaviors module at the reactive level. This context corresponds to entity names which are provided as parameters to the behaviors module, for instance pointing at a specific object or using the object linguistic label in the parameterized grammars defined at the reactive level. This module also deals with the scheduling of action plans from the contextual layer according to the current state of the system as explained in the following.

## E. Contextual layer

The contextual layer deals with higher-level cognitive functions that extend the time horizon of the cognitive agent, such as an episodic memory, goal representation, planning and the formation of a persistent autobiographical memory of the robot interaction with the environment. These functions rely on the unified representations of entities acquired at the adaptive level . The contextual layer consists of three functional modules that are described below: 1) episodic memory , 2) goals and action plans , and 3) autobiographical memory used to generate a narrative structure.

1) Episodic Memory: The episodic memory relies on advanced functions of the object property collector (OPC) to store and associate information about entities in a uniform format based on the interrogative words 'who' (is acting), 'what' (they are doing), 'where' (it happens), 'when' (it happens), 'why' (it is happening) and 'how' called an H5W data structure [82]. It is used for goal representation and as elements of the autobiographical memory . H5W have been argued to be the main questions any conscious being must answer to survive in the world [99], [100].

The concept of relations is the core of the H5W framework. It links up to five concepts and assigns them with semantic roles to form a solution to the H5W problem. We define a relation as a set of five edges connecting those nodes in a directed and labeled manner. The labels of those edges are chosen so that the relation models a typical sentence from the English grammar of the form: Relation → Subject Verb [Object] [Place] [Time]. The brackets indicate that the components are optional; the minimal relation is therefore composed of two entities representing a subject and a verb.

2) Goals and action plans: Goals can be provided to the iCub from human speech, and a meaning is extracted by the language reservoir handler , forming the representation of a goal in the goals module. Each goal consequently refers to the appropriately predefined action plan, defined as a state transition graph with states represented by nodes and actions represented by edges of the graph. The action plans module extracts sequences of actions from this graph, with each action being associated with a pre- and a post-condition state. Goals and action plans can be parameterized by the name of a considered entity. For example, if the human asks the iCub to take the cube, this loads an action plan for the goal 'Take an object' which consists of two actions: 'Ask the human to bring the object closer' and 'Pull the object'. In this case, each action is associated with a pre- and post-condition state in the form of a region in the space where the object is located. In the action selection module of the adaptive layer, the plan is instantiated toward a specific object according to the knowledge retrieved from the associations module (e.g. allowing to retrieve the current position of the cube). The minimal sequence of actions achieving the goal is then executed according to the perceived current state updated in real-time, repeating each action until its post-condition is met (or cease making the effort after a predefined timeout).

Although quite rigid in its current implementation, in the sense that action plans are predefined instead of being learned from the interaction, this planning ability allows closing the loop of the whole architecture, where drive regulation mechanisms at the reactive layer can now be bypassed through contextual goal-oriented behavior. Limitations of this system are discussed in Section V: Conclusions.

3) Autobiographical Memory: The autobiographical memory (ABM [101], [102], [103]) collects long term information (days, months, years) about interactions motivated by the human declarative long term memory situated in the medial temporal lobe, and the distinction between facts and events

[101]. It stores data (e.g. objects locations, human presence) from the beginning to the end of an episode by taking snapshots of the environmental information from the episodic memory containing the pre-conditions and effects of episodes. This allows the generation of high-level concepts extracted by knowledge-based reasoning. In addition, the ABM captures continuous information during an episode (e.g. images from the camera, joints values) [102], which can be used by reasoning modules focusing on the action itself, leading to the production of a procedural memory (e.g. through learning from motor babbling or imitation) [103].

The narrative structure learning module builds on the language processing and ABM capabilities. Narrative structure learning occurs in three phases: 1) First the iCub acquires experience in a given scenario, which generates the meaning representation in the ABM. 2) The iCub then formats each story in term of initial states, goal states, actions and results (IGARF graph [89]). 3) The human then provides a narration (that is understood using the reservoir system explained in Section III-D) for the scenario.

By mapping the events of the narration to the event of the story, the robot can extract the meaning of different discourse functions words (such as 'because'). It can thus automatically generate the corresponding form-meaning mapping that defines the individual grammatical constructions and their sequencing that defines the narrative construction of a new narrative.

## F. Summary on the DAC-h3 architecture

The DAC-h3 architecture, therefore, integrates several stateof-the-art algorithms for cognitive robotics and integrates them into a structured cognitive architecture grounded in the principles of the DAC theory. The drive reduction mechanisms in the reactive layer allow a complex control of the iCub robot which proactively interacts with humans. In turn, this allows the bootstrapping of adaptive learning of multimodal representations about entities in the adaptive layer . Those representations form the basis of an episodic memory for goaloriented behavior through planning in the contextual layer . The life-long interaction of the robot with humans continuously feed an autobiographical memory able to retrieve past experience from request and to express it verbally in a narrative. Altogether, this allows the iCub to interact with humans in complex scenarios, as described in the next section.

## IV. EXPERIMENTAL RESULTS

This section validates the cognitive architecture described in the previous section on a real demonstration with an iCub humanoid robot interacting with objects and a human. We first describe the experimental setup, followed by the behaviors provided to the robot (self-generated and human-requested). Finally, we analyze the DAC-h3 system in two ways: a complete version reporting the full complexity of the system through multiple video demonstrations and the detailed analysis of a particular interaction, as well as a simplified version showing the effect of the robot's proactivity level on naive users.

The code for reproducing these experiments on any iCub robot is available open-source at https://github.com/robotology/

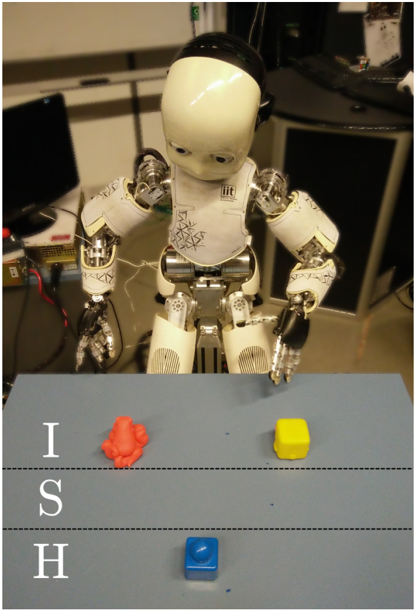

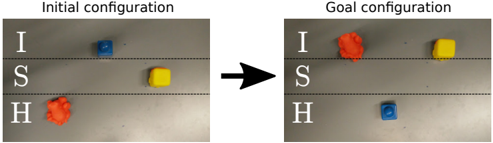

Figure 3. The setup consists of an iCub robot interacting with objects on the table and a human in front of it. The table is separated (indicated by horizontal lines) into three areas: I for the area only reachable by the iCub, S for the shared area, and H for the human-only area (compare with Section IV-A).

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Photograph: Humanoid Robot with Objects on Table

### Overview

The image is a photograph depicting a small, white humanoid robot standing behind a light blue table. On the table are three distinct objects. The scene appears to be set in a laboratory or workshop environment. A vertical text overlay is present on the left side of the image.

### Components & Spatial Layout

**1. Primary Subject (Robot):**

* **Position:** Centered in the upper half of the frame, behind the table.

* **Description:** A child-sized humanoid robot with a white plastic head and torso. The head has large, dark, circular eye sensors. The torso features visible mechanical joints, wiring, and some black markings or signatures on the chest plate. The arms are articulated with metallic, skeletal hands. The lower body consists of mechanical legs. The robot's posture is slightly hunched, with its head tilted downward, appearing to look at the objects on the table.

**2. Table and Objects:**

* **Table:** A flat, light blue surface occupying the lower half of the image. Two faint, horizontal dashed lines are visible across its width.

* **Object 1 (Top Left):** An irregularly shaped, orange object. Its form is organic and non-geometric, resembling a crumpled piece of material or a 3D-printed abstract shape.

* **Object 2 (Top Right):** A bright yellow cube. It appears to be a standard, solid block.

* **Object 3 (Bottom Center):** A bright blue cube, similar in size to the yellow one. It is positioned closer to the viewer, below the dashed lines.

**3. Text Overlay:**

* **Content:** The capital letters "I", "S", and "H" are stacked vertically.

* **Language:** English.

* **Position:** Located in the bottom-left quadrant of the image, superimposed over the blue table surface. The letters are white with a slight shadow or outline for contrast.

* **Transcription:** `I` (top), `S` (middle), `H` (bottom).

**4. Background Environment:**

* **Left Background:** A dark computer monitor (off or displaying black) on a desk. Various cables and a power strip are visible.

* **Right Background:** A dark cabinet or piece of equipment. On top of it sits a white box or container with some indistinct markings.

* **General Setting:** The background is slightly out of focus, suggesting a shallow depth of field focused on the robot and table. The lighting is artificial and functional.

### Detailed Analysis

* **Robot's Focus:** The robot's head orientation and eye sensors are directed downward toward the area of the table where the orange and yellow objects are placed.

* **Object Arrangement:** The objects are placed in a triangular formation. The orange and yellow objects are in the "workspace" area above the dashed lines, while the blue object is in a separate zone below the lines.

* **Color Palette:** The scene is dominated by the white of the robot, the light blue of the table, and the primary colors (orange, yellow, blue) of the objects, creating a high-contrast setup likely for visual recognition tasks.

### Key Observations

1. The robot is a physical, articulated machine, not a digital rendering.

2. The objects are simple, distinct in color and shape (one irregular, two cubic), which is typical for robotics manipulation or computer vision experiments.

3. The dashed lines on the table may demarcate specific zones for the experiment (e.g., a "target zone" and a "staging zone").

4. The vertical text "ISH" is an artificial overlay, not part of the physical scene. Its meaning is not explained within the image.

### Interpretation

This image captures a moment in a robotics research or development setting. The setup strongly suggests an experiment in **object recognition, manipulation, or task planning**. The robot's attentive posture implies it is either processing visual input from the scene or is in a paused state between actions.

The choice of objects—an irregular shape versus perfect cubes—is significant. It tests the robot's ability to handle both standardized and unstructured items. The color coding (orange, yellow, blue) is likely for easy visual segmentation by the robot's cameras.

The "ISH" overlay is ambiguous. It could be:

* An acronym for the project, lab, or a specific test (e.g., "Interactive Scene Handling").

* A label or identifier added for documentation purposes.

* A watermark or signature from the source of the image.

The overall scene conveys a controlled, technical environment focused on advancing human-robot interaction or autonomous capabilities. The primary information is visual and contextual; the image documents a specific experimental configuration rather than presenting quantitative data.

</details>

wysiwyd. It consists of all modules described in the last section implemented in either C++ or Python, and relies on the YARP middleware [77] for defining their connections and ensuring their parallel execution in real-time.

## A. Experimental setup

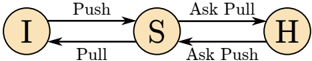

We consider an HRI scenario where the iCub and a human face each other with a table in the middle and objects placed on it. The surface of the table is divided into three distinct areas, as shown in Figure 3:

- 1) an area which is only reachable by the iCub ( I ),

- 2) an area which is only reachable by the human ( H ), and

- 3) an area which is reachable by both agents ( S for Shared ).

The behaviors available to the iCub are the following:

- 'Acquire missing information about an entity', which is described in more detail in Section IV-B1.

- 'Express the acquired knowledge', which is described in more detail in Section IV-B2.

- 'Move an object on the table', either by pushing it from region I to S or pulling it from region S to I ,

- 'Ask the human to move an object', either by asking to push the object from region H to S or by asking to pull it from region S to H .

- 'Show learned representations on screen' while explaining what is being shown, e.g. displaying the robot kinematic structure learned from a previous arm babbling phase.

- 'Interact verbally with the human' while looking at her/him. This is used for replying to some human requests as described in Section IV-C.

These behaviors are implemented in the behaviors module and can be triggered from two distinct pathways as shown in Figure 1. The behaviors for acquiring and expressing knowledge are triggered through the drive reduction mechanisms implemented in the allostatic controller (Section III-C) and are self-generated by the robot. The remaining behaviors are triggered from the action selection module (Section III-D), scheduling action sequences from the goals and action plans modules (Section III-E). In the context of the experiments described in this section, these behaviors are requested by the human partner. We describe these two pathways in the two following subsections.

## B. Self-generated behavior

Two drives for knowledge acquisition and knowledge expression implement the interaction engine of the robot (see Section III-C). They regulate the knowledge acquisition process of the iCub and proactively maintain the interaction with the human. The generated sensorimotor data feeds the adaptive layer of the cognitive architecture to acquire multimodal information about the present entities (see Section III-D). In the current experiment, the entities are objects on the table, body parts (fingers of the iCub), human partners, and actions. The acquired multimodal information depends on the considered entity. Object representations are based on visual categorization and stereo-vision based 3D localization performed by the object recognition functional module. Body part representations associate motor and touch events. Agents and actions representations are learned from visual input in the synthetic sensory memory module presented in Section III-D1. Each entity is also associated with a linguistic label learned by self-regulating the two drives detailed below.

1) Drive to acquire knowledge: This drive maintains a curiosity-driven exploration of the environment by proactively requesting the human to provide information about the present entities, e.g. naming an object or touching a body part. The drive level decays proportionally to the amount of missing information about the present entities (e.g. the unknown name of an entity). When below a given threshold, it triggers a behavior following a generic pattern of interaction, instantiated according to the nature of the knowledge to be acquired. It begins with a behavior to obtain a joint attention between the human and the robot toward the entity that the robot wants to learn about. After the attention has been attracted toward the desired entity, the iCub asks for the missing information (e.g. the name of an object or of the human, or in the case of a body part the name and touch information) and the human replies accordingly. In a third step, this information is passed to the adaptive layer and the knowledge of the robot is updated in consequence.

Each time the drive level reaches the threshold, an entity is chosen in a pseudo-random way within the set of perceived entities with missing information, with a priority to request the name of a detected unknown human partner. Once a new agent enters the scene, the iCub asks for her/his name, which is stored alongside representations of its face in the synthetic sensory memory module. Similarly, the robot stores all objects it has previously encountered in its episodic memory implemented by the object property collector module. When the chosen entity is an object, the robot asks the human to provide the name of interest while pointing at it. Then, the visual representation of the object computed by the object recognition module is mapped to the name. When the chosen entity is a body part (left-hand fingers), the iCub first raises its hand and moves a random finger to attract the attention of the human. Then it asks for the name of that body part. This provides a mapping between the robot's joint identifier and the joint's name. This mapping can be extended to include tactile information by asking the human to touch the body part which is being moved by the robot.

Once a behavior has been triggered, the drive is reset to its default value and decays again as explained above (the amount of the decay being reduced according to what has been acquired).

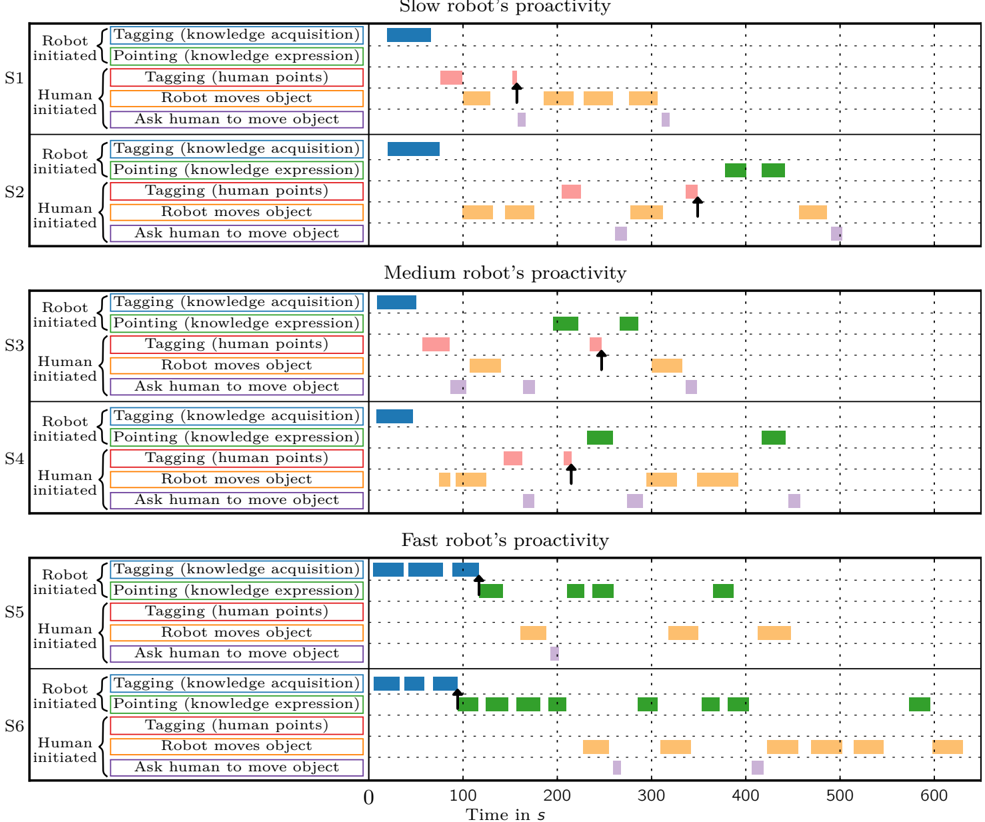

2) Drive to express knowledge: This drive regulates how the iCub expresses the acquired knowledge through synchronized speech, pointing and gaze. It aims at maintaining the interaction with the human by proactively informing her/him about its current state of knowledge. The drive level decays proportionally to the amount of already acquired information about the present entities. When below a given threshold (meaning that a significant amount of information has been acquired), it triggers a behavior alternating gazing toward the human and a known entity, synchronized with speech expressing the knowledge verbally, e.g. 'This is the octopus', or 'I know you, you are Daniel'. Once such a behavior has been triggered, the drive is reset to its default value and decays again as explained above (the amount of the decay changing according to what is learned by satisfying the drive for knowledge acquisition).