## A Tutorial on Thompson Sampling

Daniel J. Russo 1 , Benjamin Van Roy 2 , Abbas Kazerouni 2 , Ian Osband 3 and Zheng Wen 4

1 Columbia University

2 Stanford University

3 Google DeepMind

4 Adobe Research

## ABSTRACT

Thompson sampling is an algorithm for online decision problems where actions are taken sequentially in a manner that must balance between exploiting what is known to maximize immediate performance and investing to accumulate new information that may improve future performance. The algorithm addresses a broad range of problems in a computationally efficient manner and is therefore enjoying wide use. This tutorial covers the algorithm and its application, illustrating concepts through a range of examples, including Bernoulli bandit problems, shortest path problems, product recommendation, assortment, active learning with neural networks, and reinforcement learning in Markov decision processes. Most of these problems involve complex information structures, where information revealed by taking an action informs beliefs about other actions. We will also discuss when and why Thompson sampling is or is not effective and relations to alternative algorithms.

In memory of Arthur F. Veinott, Jr.

## 1 Introduction

The multi-armed bandit problem has been the subject of decades of intense study in statistics, operations research, electrical engineering, computer science, and economics. A 'one-armed bandit' is a somewhat antiquated term for a slot machine, which tends to 'rob' players of their money. The colorful name for our problem comes from a motivating story in which a gambler enters a casino and sits down at a slot machine with multiple levers, or arms, that can be pulled. When pulled, an arm produces a random payout drawn independently of the past. Because the distribution of payouts corresponding to each arm is not listed, the player can learn it only by experimenting. As the gambler learns about the arms' payouts, she faces a dilemma: in the immediate future she expects to earn more by exploiting arms that yielded high payouts in the past, but by continuing to explore alternative arms she may learn how to earn higher payouts in the future. Can she develop a sequential strategy for pulling arms that balances this tradeoff and maximizes the cumulative payout earned? The following Bernoulli bandit problem is a canonical example.

Example 1.1. ( Bernoulli Bandit ) Suppose there are K actions, and when played, any action yields either a success or a failure. Action

k ∈ { 1 , ..., K } produces a success with probability θ k ∈ [0 , 1]. The success probabilities ( θ 1 , .., θ K ) are unknown to the agent, but are fixed over time, and therefore can be learned by experimentation. The objective, roughly speaking, is to maximize the cumulative number of successes over T periods, where T is relatively large compared to the number of arms K .

The 'arms' in this problem might represent different banner ads that can be displayed on a website. Users arriving at the site are shown versions of the website with different banner ads. A success is associated either with a click on the ad, or with a conversion (a sale of the item being advertised). The parameters θ k represent either the click-throughrate or conversion-rate among the population of users who frequent the site. The website hopes to balance exploration and exploitation in order to maximize the total number of successes.

A naive approach to this problem involves allocating some fixed fraction of time periods to exploration and in each such period sampling an arm uniformly at random, while aiming to select successful actions in other time periods. We will observe that such an approach can be quite wasteful even for the simple Bernoulli bandit problem described above and can fail completely for more complicated problems.

Problems like the Bernoulli bandit described above have been studied in the decision sciences since the second world war, as they crystallize the fundamental trade-off between exploration and exploitation in sequential decision making. But the information revolution has created significant new opportunities and challenges, which have spurred a particularly intense interest in this problem in recent years. To understand this, let us contrast the Internet advertising example given above with the problem of choosing a banner ad to display on a highway. A physical banner ad might be changed only once every few months, and once posted will be seen by every individual who drives on the road. There is value to experimentation, but data is limited, and the cost of of trying a potentially ineffective ad is enormous. Online, a different banner ad can be shown to each individual out of a large pool of users, and data from each such interaction is stored. Small-scale experiments are now a core tool at most leading Internet companies.

Our interest in this problem is motivated by this broad phenomenon. Machine learning is increasingly used to make rapid data-driven decisions. While standard algorithms in supervised machine learning learn passively from historical data, these systems often drive the generation of their own training data through interacting with users. An online recommendation system, for example, uses historical data to optimize current recommendations, but the outcomes of these recommendations are then fed back into the system and used to improve future recommendations. As a result, there is enormous potential benefit in the design of algorithms that not only learn from past data, but also explore systemically to generate useful data that improves future performance. There are significant challenges in extending algorithms designed to address Example 1.1 to treat more realistic and complicated decision problems. To understand some of these challenges, consider the problem of learning by experimentation to solve a shortest path problem.

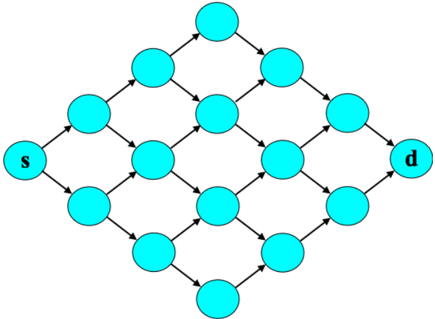

Example 1.2. (Online Shortest Path) An agent commutes from home to work every morning. She would like to commute along the path that requires the least average travel time, but she is uncertain of the travel time along different routes. How can she learn efficiently and minimize the total travel time over a large number of trips?

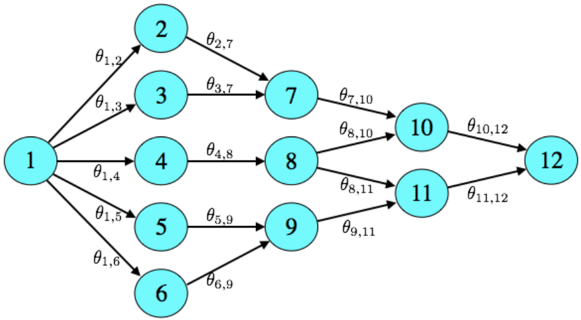

Figure 1.1: Shortest path problem.

<details>

<summary>Image 1 Details</summary>

### Visual Description

\n

## Diagram: Directed Acyclic Graph (DAG)

### Overview

The image depicts a directed acyclic graph (DAG) consisting of 12 nodes, numbered 1 through 12, connected by directed edges. Each edge is labeled with a theta value (θ), representing a parameter or weight associated with the connection. The graph appears to represent a sequence of dependencies or a probabilistic model.

### Components/Axes

The diagram consists of:

* **Nodes:** 12 circular nodes labeled 1 to 12.

* **Edges:** Directed arrows connecting the nodes.

* **Edge Labels:** Each edge is labeled with a theta value (θ) in the format θ<sub>i,j</sub>, where 'i' is the source node and 'j' is the destination node.

### Detailed Analysis or Content Details

The graph's structure and edge labels are as follows:

* Node 1 has outgoing edges to nodes 2, 3, 4, 5, and 6.

* Edge 1 -> 2: θ<sub>1,2</sub>

* Edge 1 -> 3: θ<sub>1,3</sub>

* Edge 1 -> 4: θ<sub>1,4</sub>

* Edge 1 -> 5: θ<sub>1,5</sub>

* Edge 1 -> 6: θ<sub>1,6</sub>

* Node 2 has an outgoing edge to node 7.

* Edge 2 -> 7: θ<sub>2,7</sub>

* Node 3 has an outgoing edge to node 7.

* Edge 3 -> 7: θ<sub>3,7</sub>

* Node 4 has an outgoing edge to node 8.

* Edge 4 -> 8: θ<sub>4,8</sub>

* Node 5 has an outgoing edge to node 9.

* Edge 5 -> 9: θ<sub>5,9</sub>

* Node 6 has an outgoing edge to node 9.

* Edge 6 -> 9: θ<sub>6,9</sub>

* Node 7 has an outgoing edge to node 10.

* Edge 7 -> 10: θ<sub>7,10</sub>

* Node 8 has outgoing edges to nodes 10 and 11.

* Edge 8 -> 10: θ<sub>8,10</sub>

* Edge 8 -> 11: θ<sub>8,11</sub>

* Node 9 has an outgoing edge to node 11.

* Edge 9 -> 11: θ<sub>9,11</sub>

* Node 10 has an outgoing edge to node 12.

* Edge 10 -> 12: θ<sub>10,12</sub>

* Node 11 has an outgoing edge to node 12.

* Edge 11 -> 12: θ<sub>11,12</sub>

### Key Observations

The graph is a DAG, meaning there are no cycles. Node 1 is the root node, and Node 12 is the terminal node. The graph represents a branching structure where information or probability flows from Node 1 to Node 12 through various paths. The theta values represent the strength or weight of each connection.

### Interpretation

This diagram likely represents a Bayesian network or a similar probabilistic graphical model. The nodes represent random variables, and the edges represent conditional dependencies between them. The theta values (θ<sub>i,j</sub>) could represent conditional probabilities or weights in a machine learning model. The structure suggests that the state of Node 1 influences the states of all other nodes, and the final state is represented by Node 12. The branching structure allows for multiple paths from Node 1 to Node 12, indicating that Node 12's state is influenced by multiple factors. Without knowing the specific context, it's difficult to determine the exact meaning of the nodes and edges, but the diagram provides a clear visual representation of a complex dependency structure. The diagram is a visual representation of a system where the value of a node is dependent on the values of its predecessors.

</details>

We can formalize this as a shortest path problem on a graph G = ( V, E ) with vertices V = { 1 , ..., N } and edges E . An example is illustrated in Figure 1.1. Vertex 1 is the source (home) and vertex N is the destination (work). Each vertex can be thought of as an intersection, and for two vertices i, j ∈ V , an edge ( i, j ) ∈ E is present if there is a direct road connecting the two intersections. Suppose that traveling along an edge e ∈ E requires time θ e on average. If these parameters were known, the agent would select a path ( e 1 , .., e n ), consisting of a sequence of adjacent edges connecting vertices 1 and N , such that the expected total time θ e 1 + ... + θ e n is minimized. Instead, she chooses paths in a sequence of periods. In period t , the realized time y t,e to traverse edge e is drawn independently from a distribution with mean θ e . The agent sequentially chooses a path x t , observes the realized travel time ( y t,e ) e ∈ x t along each edge in the path, and incurs cost c t = ∑ e ∈ x t y t,e equal to the total travel time. By exploring intelligently, she hopes to minimize cumulative travel time ∑ T t =1 c t over a large number of periods T .

This problem is conceptually similar to the Bernoulli bandit in Example 1.1, but here the number of actions is the number of paths in the graph, which generally scales exponentially in the number of edges. This raises substantial challenges. For moderate sized graphs, trying each possible path would require a prohibitive number of samples, and algorithms that require enumerating and searching through the set of all paths to reach a decision will be computationally intractable. An efficient approach therefore needs to leverage the statistical and computational structure of problem.

In this model, the agent observes the travel time along each edge traversed in a given period. Other feedback models are also natural: the agent might start a timer as she leaves home and checks it once she arrives, effectively only tracking the total travel time of the chosen path. This is closer to the Bernoulli bandit model, where only the realized reward (or cost) of the chosen arm was observed. We have also taken the random edge-delays y t,e to be independent, conditioned on θ e . A more realistic model might treat these as correlated random variables, reflecting that neighboring roads are likely to be congested at the same time. Rather than design a specialized algorithm for each possible statistical

model, we seek a general approach to exploration that accommodates flexible modeling and works for a broad array of problems. We will see that Thompson sampling accommodates such flexible modeling, and offers an elegant and efficient approach to exploration in a wide range of structured decision problems, including the shortest path problem described here.

Thompson sampling - also known as posterior sampling and probability matching - was first proposed in 1933 (Thompson, 1933; Thompson, 1935) for allocating experimental effort in two-armed bandit problems arising in clinical trials. The algorithm was largely ignored in the academic literature until recently, although it was independently rediscovered several times in the interim (Wyatt, 1997; Strens, 2000) as an effective heuristic. Now, more than eight decades after it was introduced, Thompson sampling has seen a surge of interest among industry practitioners and academics. This was spurred partly by two influential articles that displayed the algorithm's strong empirical performance (Chapelle and Li, 2011; Scott, 2010). In the subsequent five years, the literature on Thompson sampling has grown rapidly. Adaptations of Thompson sampling have now been successfully applied in a wide variety of domains, including revenue management (Ferreira et al. , 2015), marketing (Schwartz et al. , 2017), web site optimization (Hill et al. , 2017), Monte Carlo tree search (Bai et al. , 2013), A/B testing (Graepel et al. , 2010), Internet advertising (Graepel et al. , 2010; Agarwal, 2013; Agarwal et al. , 2014), recommendation systems (Kawale et al. , 2015), hyperparameter tuning (Kandasamy et al. , 2018), and arcade games (Osband et al. , 2016a); and have been used at several companies, including Adobe, Amazon (Hill et al. , 2017), Facebook, Google (Scott, 2010; Scott, 2015), LinkedIn (Agarwal, 2013; Agarwal et al. , 2014), Microsoft (Graepel et al. , 2010), Netflix, and Twitter.

The objective of this tutorial is to explain when, why, and how to apply Thompson sampling. A range of examples are used to demonstrate how the algorithm can be used to solve a variety of problems and provide clear insight into why it works and when it offers substantial benefit over naive alternatives. The tutorial also provides guidance on approximations to Thompson sampling that can simplify computation

as well as practical considerations like prior distribution specification, safety constraints and nonstationarity. Accompanying this tutorial we also release a Python package 1 that reproduces all experiments and figures presented. This resource is valuable not only for reproducible research, but also as a reference implementation that may help practioners build intuition for how to practically implement some of the ideas and algorithms we discuss in this tutorial. A concluding section discusses theoretical results that aim to develop an understanding of why Thompson sampling works, highlights settings where Thompson sampling performs poorly, and discusses alternative approaches studied in recent literature. As a baseline and backdrop for our discussion of Thompson sampling, we begin with an alternative approach that does not actively explore.

1 Python code and documentation is available at https://github.com/iosband/ ts\_tutorial.

## Greedy Decisions

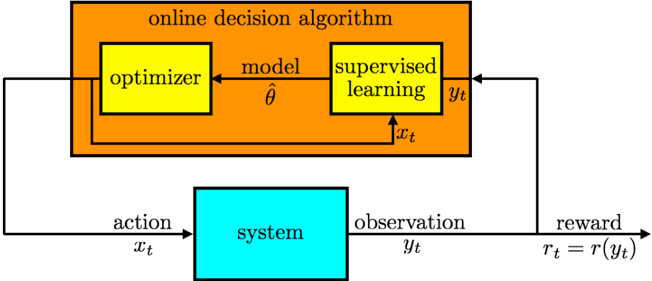

Greedy algorithms serve as perhaps the simplest and most common approach to online decision problems. The following two steps are taken to generate each action: (1) estimate a model from historical data and (2) select the action that is optimal for the estimated model, breaking ties in an arbitrary manner. Such an algorithm is greedy in the sense that an action is chosen solely to maximize immediate reward. Figure 2.1 illustrates such a scheme. At each time t , a supervised learning algorithm fits a model to historical data pairs H t -1 = (( x 1 , y 1 ) , . . . , ( x t -1 , y t -1 )), generating an estimate ˆ θ of model parameters. The resulting model can then be used to predict the reward r t = r ( y t ) from applying action x t . Here, y t is an observed outcome, while r is a known function that represents the agent's preferences. Given estimated model parameters ˆ θ , an optimization algorithm selects the action x t that maximizes expected reward, assuming that θ = ˆ θ . This action is then applied to the exogenous system and an outcome y t is observed.

A shortcoming of the greedy approach, which can severely curtail performance, is that it does not actively explore. To understand this issue, it is helpful to focus on the Bernoulli bandit setting of Example 1.1. In that context, the observations are rewards, so r t = r ( y t ) = y t .

Figure 2.1: Online decision algorithm.

<details>

<summary>Image 2 Details</summary>

### Visual Description

\n

## Diagram: Online Decision Algorithm System

### Overview

The image depicts a diagram illustrating an online decision algorithm interacting with a "system". The algorithm consists of an optimizer and a supervised learning model, and the interaction involves actions, observations, and rewards. The diagram shows the flow of information between the algorithm and the system.

### Components/Axes

The diagram consists of two main blocks:

1. **Online Decision Algorithm:** A large orange rectangle labeled "online decision algorithm". This block contains two smaller blocks: "optimizer" and "supervised learning".

2. **System:** A turquoise rectangle labeled "system".

The following labels are present, indicating data flow:

* **x<sub>t</sub>**: Action input to the system.

* **y<sub>t</sub>**: Observation output from the system.

* **r<sub>t</sub> = r(y<sub>t</sub>)**: Reward calculated from the observation.

* **â** : Model parameter estimate.

* **x<sub>t</sub>**: Action input to the supervised learning model.

### Detailed Analysis or Content Details

The diagram shows the following flow:

1. The "online decision algorithm" receives an observation (y<sub>t</sub>) from the "system".

2. The observation (y<sub>t</sub>) is fed into the "supervised learning" block.

3. The "supervised learning" block outputs a model parameter estimate (â) to the "optimizer".

4. The "optimizer" adjusts the model based on the observation and outputs an action (x<sub>t</sub>) to the "system".

5. The "system" receives the action (x<sub>t</sub>) and produces an observation (y<sub>t</sub>) and a reward (r<sub>t</sub>).

6. The reward (r<sub>t</sub>) is fed back into the "online decision algorithm" to influence future actions.

The reward is defined as r<sub>t</sub> = r(y<sub>t</sub>), indicating that the reward is a function of the observation.

### Key Observations

The diagram illustrates a closed-loop system where the algorithm learns from the environment (the "system") through trial and error. The "supervised learning" component suggests that the algorithm uses observed data to improve its model, while the "optimizer" component suggests that the algorithm adjusts its parameters to maximize the reward.

### Interpretation

This diagram represents a reinforcement learning framework. The "online decision algorithm" acts as an agent that interacts with an environment (the "system"). The agent takes actions (x<sub>t</sub>), receives observations (y<sub>t</sub>) and rewards (r<sub>t</sub>), and learns to optimize its actions to maximize the cumulative reward. The use of "supervised learning" within the algorithm suggests a model-based reinforcement learning approach, where the agent learns a model of the environment and uses this model to predict future outcomes. The feedback loop between the algorithm and the system is crucial for the learning process, as it allows the algorithm to adapt to the environment and improve its performance over time. The equation r<sub>t</sub> = r(y<sub>t</sub>) highlights that the reward is directly tied to the state of the environment as observed by the agent. This is a common setup in reinforcement learning where the reward function defines the goal of the agent.

</details>

At each time t , a greedy algorithm would generate an estimate ˆ θ k of the mean reward for each k th action, and select the action that attains the maximum among these estimates.

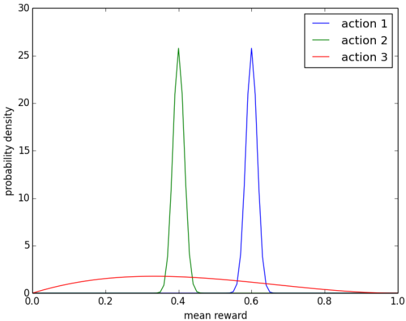

Suppose there are three actions with mean rewards θ ∈ R 3 . In particular, each time an action k is selected, a reward of 1 is generated with probability θ k . Otherwise, a reward of 0 is generated. The mean rewards are not known to the agent. Instead, the agent's beliefs in any given time period about these mean rewards can be expressed in terms of posterior distributions. Suppose that, conditioned on the observed history H t -1 , posterior distributions are represented by the probability density functions plotted in Figure 2.2. These distributions represent beliefs after the agent tries actions 1 and 2 one thousand times each, action 3 three times, receives cumulative rewards of 600, 400, and 1, respectively, and synthesizes these observations with uniform prior distributions over mean rewards of each action. They indicate that the agent is confident that mean rewards for actions 1 and 2 are close to their expectations of approximately 0 . 6 and 0 . 4. On the other hand, the agent is highly uncertain about the mean reward of action 3, though he expects 0 . 4.

The greedy algorithm would select action 1, since that offers the maximal expected mean reward. Since the uncertainty around this expected mean reward is small, observations are unlikely to change the expectation substantially, and therefore, action 1 is likely to be selected

Figure 2.2: Probability density functions over mean rewards.

<details>

<summary>Image 3 Details</summary>

### Visual Description

\n

## Line Chart: Probability Density of Mean Reward for Different Actions

### Overview

The image presents a line chart illustrating the probability density of the mean reward for three different actions. The x-axis represents the mean reward, ranging from 0.0 to 1.0, while the y-axis represents the probability density, ranging from 0.0 to 30.0. Three distinct lines, each representing an action, depict the distribution of mean rewards.

### Components/Axes

* **X-axis Title:** "mean reward"

* **Y-axis Title:** "probability density"

* **X-axis Range:** 0.0 to 1.0

* **Y-axis Range:** 0.0 to 30.0

* **Legend:** Located in the top-right corner.

* "action 1" - Blue line

* "action 2" - Red line

* "action 3" - Green line

### Detailed Analysis

* **Action 1 (Blue Line):** This line exhibits a unimodal distribution, peaking at approximately 0.65 with a probability density of around 26. The line slopes downward on both sides of the peak, approaching a probability density of 0 at the extremes of the x-axis.

* **Action 2 (Red Line):** This line shows a relatively flat distribution with a slight dip in the center. The probability density remains consistently low, fluctuating between approximately 2 and 8 across the entire range of mean rewards. It appears to have a very broad distribution.

* **Action 3 (Green Line):** This line also displays a unimodal distribution, peaking at approximately 0.4 with a probability density of around 25. Similar to Action 1, the line slopes downward on both sides of the peak, approaching a probability density of 0 at the extremes of the x-axis.

Here's a breakdown of approximate data points:

| Action | Mean Reward | Probability Density |

| :------- | :---------- | :------------------ |

| Action 1 | 0.2 | ~2 |

| Action 1 | 0.4 | ~10 |

| Action 1 | 0.6 | ~25 |

| Action 1 | 0.8 | ~10 |

| Action 2 | 0.2 | ~4 |

| Action 2 | 0.4 | ~6 |

| Action 2 | 0.6 | ~4 |

| Action 2 | 0.8 | ~6 |

| Action 3 | 0.2 | ~5 |

| Action 3 | 0.4 | ~25 |

| Action 3 | 0.6 | ~10 |

| Action 3 | 0.8 | ~5 |

### Key Observations

* Action 1 and Action 3 have similar peak probability densities, but their peaks are located at different mean reward values (0.65 and 0.4, respectively).

* Action 2 has a significantly lower probability density across all mean reward values compared to Action 1 and Action 3.

* The distributions for Action 1 and Action 3 are relatively narrow and concentrated around their respective peaks, indicating a higher certainty in the mean reward for those actions.

* Action 2's distribution is broad and flat, suggesting a high degree of uncertainty in the mean reward.

### Interpretation

The chart suggests that Actions 1 and 3 are more likely to yield higher mean rewards than Action 2. Action 1 is centered around a mean reward of 0.65, while Action 3 is centered around 0.4. Action 2, however, has a low probability density across the entire range, indicating it is less likely to provide a substantial reward. The narrow distributions of Actions 1 and 3 suggest that the rewards are more predictable, while the broad distribution of Action 2 indicates a higher level of risk or variability. This data could be used to inform a decision-making process, favoring Actions 1 and 3 over Action 2. The difference in peak locations between Action 1 and Action 3 suggests that different strategies or approaches may lead to different expected rewards.

</details>

ad infinitum . It seems reasonable to avoid action 2, since it is extremely unlikely that θ 2 > θ 1 . On the other hand, if the agent plans to operate over many time periods, it should try action 3. This is because there is some chance that θ 3 > θ 1 , and if this turns out to be the case, the agent will benefit from learning that and applying action 3. To learn whether θ 3 > θ 1 , the agent needs to try action 3, but the greedy algorithm will unlikely ever do that. The algorithm fails to account for uncertainty in the mean reward of action 3, which should entice the agent to explore and learn about that action.

Dithering is a common approach to exploration that operates through randomly perturbing actions that would be selected by a greedy algorithm. One version of dithering, called /epsilon1 -greedy exploration , applies the greedy action with probability 1 -/epsilon1 and otherwise selects an action uniformly at random. Though this form of exploration can improve behavior relative to a purely greedy approach, it wastes resources by failing to 'write off' actions regardless of how unlikely they are to be optimal. To understand why, consider again the posterior distributions of Figure 2.2. Action 2 has almost no chance of being optimal, and therefore, does not deserve experimental trials, while the uncertainty surrounding action 3 warrants exploration. However, /epsilon1 -greedy explo-

ration would allocate an equal number of experimental trials to each action. Though only half of the exploratory actions are wasted in this example, the issue is exacerbated as the number of possible actions increases. Thompson sampling, introduced more than eight decades ago (Thompson, 1933), provides an alternative to dithering that more intelligently allocates exploration effort.

## Thompson Sampling for the Bernoulli Bandit

To digest how Thompson sampling (TS) works, it is helpful to begin with a simple context that builds on the Bernoulli bandit of Example 1.1 and incorporates a Bayesian model to represent uncertainty.

Example 3.1. (Beta-Bernoulli Bandit) Recall the Bernoulli bandit of Example 1.1. There are K actions. When played, an action k produces a reward of one with probability θ k and a reward of zero with probability 1 -θ k . Each θ k can be interpreted as an action's success probability or mean reward. The mean rewards θ = ( θ 1 , ..., θ K ) are unknown, but fixed over time. In the first period, an action x 1 is applied, and a reward r 1 ∈ { 0 , 1 } is generated with success probability P ( r 1 = 1 | x 1 , θ ) = θ x 1 . After observing r 1 , the agent applies another action x 2 , observes a reward r 2 , and this process continues.

Let the agent begin with an independent prior belief over each θ k . Take these priors to be beta-distributed with parameters α = ( α 1 , . . . , α K ) and β ∈ ( β 1 , . . . , β K ). In particular, for each action k , the prior probability density function of θ k is

$$p ( \theta _ { k } ) = \frac { \Gamma ( \alpha _ { k } + \beta _ { k } ) } { \Gamma ( \alpha _ { k } ) \Gamma ( \beta _ { k } ) } \theta _ { k } ^ { \alpha _ { k } - 1 } ( 1 - \theta _ { k } ) ^ { \beta _ { k } - 1 } ,$$

where Γ denotes the gamma function. As observations are gathered, the distribution is updated according to Bayes' rule. It is particularly convenient to work with beta distributions because of their conjugacy properties. In particular, each action's posterior distribution is also beta with parameters that can be updated according to a simple rule:

/negationslash

$$( \alpha _ { k } , \beta _ { k } ) \leftarrow \begin{cases} ( \alpha _ { k } , \beta _ { k } ) & i f x _ { t } \neq k \\ ( \alpha _ { k } , \beta _ { k } ) + ( r _ { t } , 1 - r _ { t } ) & i f x _ { t } = k . \end{cases}$$

Note that for the special case of α k = β k = 1, the prior p ( θ k ) is uniform over [0 , 1]. Note that only the parameters of a selected action are updated. The parameters ( α k , β k ) are sometimes called pseudocounts, since α k or β k increases by one with each observed success or failure, respectively. A beta distribution with parameters ( α k , β k ) has mean α k / ( α k + β k ), and the distribution becomes more concentrated as α k + β k grows. Figure 2.2 plots probability density functions of beta distributions with parameters ( α 1 , β 1 ) = (601 , 401), ( α 2 , β 2 ) = (401 , 601), and ( α 3 , β 3 ) = (2 , 3).

Algorithm 3.1 presents a greedy algorithm for the beta-Bernoulli bandit. In each time period t , the algorithm generates an estimate ˆ θ k = α k / ( α k + β k ), equal to its current expectation of the success probability θ k . The action x t with the largest estimate ˆ θ k is then applied, after which a reward r t is observed and the distribution parameters α x t and β x t are updated.

TS, specialized to the case of a beta-Bernoulli bandit, proceeds similarly, as presented in Algorithm 3.2. The only difference is that the success probability estimate ˆ θ k is randomly sampled from the posterior distribution, which is a beta distribution with parameters α k and β k , rather than taken to be the expectation α k / ( α k + β k ). To avoid a common misconception, it is worth emphasizing TS does not sample ˆ θ k from the posterior distribution of the binary value y t that would be observed if action k is selected. In particular, ˆ θ k represents a statistically plausible success probability rather than a statistically plausible observation.

Algorithm 3.1 BernGreedy( K,α,β ) 1: for t = 1 , 2 , . . . do 2: #estimate model: 3: for k = 1 , . . . , K do 4: ˆ θ k ← α k / ( α k + β k ) 5: end for 6: 7: #select and apply action: 8: x t ← argmax k ˆ θ k 9: Apply x t and observe r t 10: 11: #update distribution: 12: ( α x t , β x t ) ← ( α x t + r t , β x t +1 -r t ) 13: end for

## Algorithm 3.2 BernTS( K,α,β )

```

Algorithm 3.2 BernTS(K, \alpha, \beta)

1: for t = 1, 2,... do

2: #sample model:

3: for k = 1, ..., K do

4: Sample $\hat{k}_k^{\theta_k} \quad$ end for

5: end for

7: #select and apply action:

8: x_t <- argmax_{\hat{k}_k}

9: Apply x_t and observe $r_t$

10:

11: end for

```

To understand how TS improves on greedy actions with or without dithering, recall the three armed Bernoulli bandit with posterior distributions illustrated in Figure 2.2. In this context, a greedy action would forgo the potentially valuable opportunity to learn about action 3. With dithering, equal chances would be assigned to probing actions 2 and 3, though probing action 2 is virtually futile since it is extremely unlikely to be optimal. TS, on the other hand would sample actions 1, 2, or 3, with probabilities approximately equal to 0 . 82, 0, and 0 . 18, respectively. In each case, this is the probability that the random estimate drawn for the action exceeds those drawn for other actions. Since these estimates are drawn from posterior distributions, each of these probabilities is also equal to the probability that the corresponding action is optimal, conditioned on observed history. As such, TS explores to resolve uncertainty where there is a chance that resolution will help the agent identify the optimal action, but avoids probing where feedback would not be helpful.

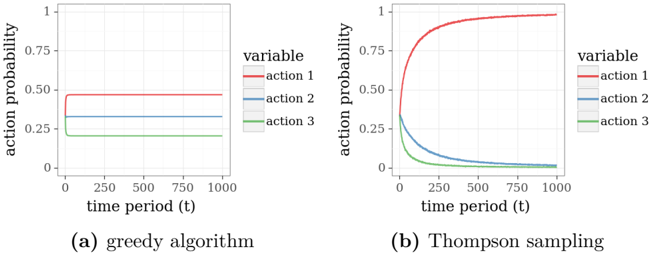

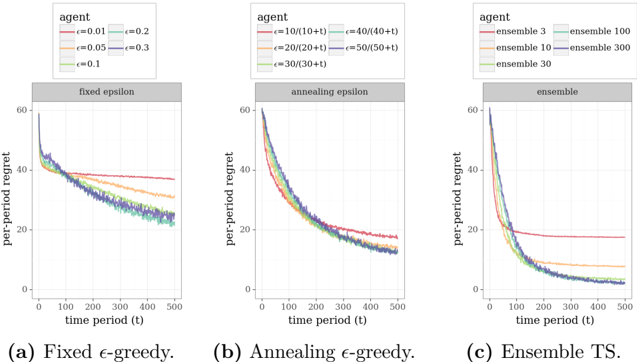

It is illuminating to compare simulated behavior of TS to that of a greedy algorithm. Consider a three-armed beta-Bernoulli bandit with mean rewards θ 1 = 0 . 9, θ 2 = 0 . 8, and θ 3 = 0 . 7. Let the prior distribution over each mean reward be uniform. Figure 3.1 plots results based on ten thousand independent simulations of each algorithm. Each simulation is over one thousand time periods. In each simulation, actions

are randomly rank-ordered for the purpose of tie-breaking so that the greedy algorithm is not biased toward selecting any particular action. Each data point represents the fraction of simulations for which a particular action is selected at a particular time.

Figure 3.1: Probability that the greedy algorithm and Thompson sampling selects an action.

<details>

<summary>Image 4 Details</summary>

### Visual Description

\n

## Line Charts: Action Probability vs. Time Period for Two Algorithms

### Overview

The image presents two line charts comparing the action probability of three actions (Action 1, Action 2, Action 3) over a time period of 1000 units. The left chart (a) represents the "greedy algorithm", while the right chart (b) represents "Thompson sampling". Both charts share the same axes: time period (t) on the x-axis and action probability on the y-axis, ranging from 0 to 1.

### Components/Axes

* **X-axis:** "time period (t)", ranging from 0 to 1000.

* **Y-axis:** "action probability", ranging from 0 to 1.

* **Legend (top-right of both charts):**

* "variable" label.

* "action 1" (represented by a red line).

* "action 2" (represented by a grey line).

* "action 3" (represented by a green line).

* **Chart (a) Title:** "(a) greedy algorithm" positioned below the chart.

* **Chart (b) Title:** "(b) Thompson sampling" positioned below the chart.

### Detailed Analysis or Content Details

**Chart (a): Greedy Algorithm**

* **Action 1 (Red Line):** The line is approximately horizontal, starting at a probability of ~0.52 and remaining relatively constant at ~0.51 throughout the entire time period (0-1000).

* **Action 2 (Grey Line):** The line is approximately horizontal, starting at a probability of ~0.32 and remaining relatively constant at ~0.31 throughout the entire time period (0-1000).

* **Action 3 (Green Line):** The line is approximately horizontal, starting at a probability of ~0.16 and remaining relatively constant at ~0.15 throughout the entire time period (0-1000).

**Chart (b): Thompson Sampling**

* **Action 1 (Red Line):** The line exhibits an upward trend, starting at a probability of ~0.05 at t=0, rapidly increasing to approximately ~0.85 by t=250, and then leveling off to a probability of ~0.82 by t=1000.

* **Action 2 (Grey Line):** The line exhibits a downward trend, starting at a probability of ~0.45 at t=0, decreasing to approximately ~0.15 by t=250, and then leveling off to a probability of ~0.12 by t=1000.

* **Action 3 (Green Line):** The line exhibits a downward trend, starting at a probability of ~0.5 at t=0, decreasing to approximately ~0.03 by t=250, and then leveling off to a probability of ~0.02 by t=1000.

### Key Observations

* The "greedy algorithm" maintains constant action probabilities throughout the time period, indicating a lack of adaptation or learning.

* "Thompson sampling" demonstrates dynamic action probabilities, with Action 1 increasing in probability while Actions 2 and 3 decrease. This suggests that Thompson sampling is learning to favor Action 1 over time.

* The initial probabilities in Thompson sampling are different from the greedy algorithm, showing an initial exploration phase.

### Interpretation

The charts illustrate the difference in behavior between a greedy algorithm and Thompson sampling in a decision-making process. The greedy algorithm consistently selects actions based on their initial probabilities, without adapting to new information. In contrast, Thompson sampling dynamically adjusts action probabilities based on observed outcomes, leading to a convergence towards the optimal action (Action 1 in this case). The initial exploration phase of Thompson sampling is evident in the changing probabilities at the beginning of the time period. The leveling off of the lines in Thompson sampling suggests that the algorithm has reached a stable state where it consistently favors Action 1. This demonstrates the ability of Thompson sampling to balance exploration and exploitation, ultimately leading to better performance than a static greedy approach.

</details>

From the plots, we see that the greedy algorithm does not always converge on action 1, which is the optimal action. This is because the algorithm can get stuck, repeatedly applying a poor action. For example, suppose the algorithm applies action 3 over the first couple time periods and receives a reward of 1 on both occasions. The algorithm would then continue to select action 3, since the expected mean reward of either alternative remains at 0 . 5. With repeated selection of action 3, the expected mean reward converges to the true value of 0 . 7, which reinforces the agent's commitment to action 3. TS, on the other hand, learns to select action 1 within the thousand periods. This is evident from the fact that, in an overwhelmingly large fraction of simulations, TS selects action 1 in the final period.

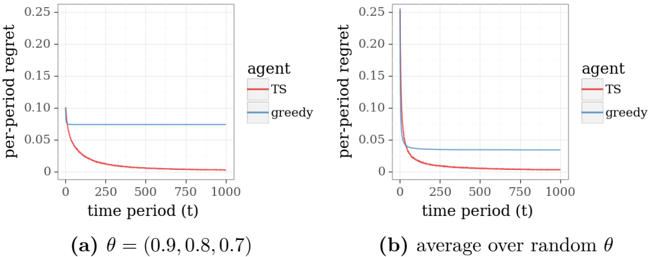

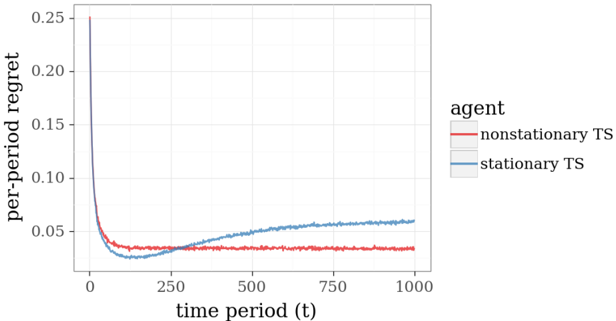

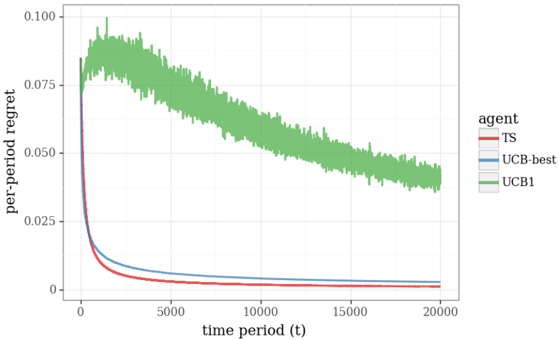

The performance of online decision algorithms is often studied and compared through plots of regret. The per-period regret of an algorithm over a time period t is the difference between the mean reward of an optimal action and the action selected by the algorithm. For the Bernoulli bandit problem, we can write this as regret t ( θ ) = max k θ k -θ x t . Figure 3.2a plots per-period regret realized by the greedy algorithm and TS, again averaged over ten thousand simulations. The average

per-period regret of TS vanishes as time progresses. That is not the case for the greedy algorithm.

Comparing algorithms with fixed mean rewards raises questions about the extent to which the results depend on the particular choice of θ . As such, it is often useful to also examine regret averaged over plausible values of θ . A natural approach to this involves sampling many instances of θ from the prior distributions and generating an independent simulation for each. Figure 3.2b plots averages over ten thousand such simulations, with each action reward sampled independently from a uniform prior for each simulation. Qualitative features of these plots are similar to those we inferred from Figure 3.2a, though regret in Figure 3.2a is generally smaller over early time periods and larger over later time periods, relative to Figure 3.2b. The smaller regret in early time periods is due to the fact that with θ = (0 . 9 , 0 . 8 , 0 . 7), mean rewards are closer than for a typical randomly sampled θ , and therefore the regret of randomly selected actions is smaller. The fact that per-period regret of TS is larger in Figure 3.2a than Figure 3.2b over later time periods, like period 1000, is also a consequence of proximity among rewards with θ = (0 . 9 , 0 . 8 , 0 . 7). In this case, the difference is due to the fact that it takes longer to differentiate actions than it would for a typical randomly sampled θ .

Figure 3.2: Regret from applying greedy and Thompson sampling algorithms to the three-armed Bernoulli bandit.

<details>

<summary>Image 5 Details</summary>

### Visual Description

\n

## Line Chart: Per-Period Regret vs. Time Period for Different Agents

### Overview

The image presents two line charts comparing the per-period regret of two agents, "TS" (Thompson Sampling) and "greedy", over time. The charts differ in the parameter setting used for the agents. The x-axis represents the time period (t), ranging from 0 to 1000. The y-axis represents the per-period regret, ranging from 0 to 0.25.

### Components/Axes

* **X-axis:** "time period (t)" - Scale from 0 to 1000, with markers at 0, 250, 500, 750, and 1000.

* **Y-axis:** "per-period regret" - Scale from 0 to 0.25, with markers at 0, 0.05, 0.10, 0.15, 0.20, and 0.25.

* **Legend:** Located in the top-right corner of each chart.

* "TS" - Represented by a red line.

* "greedy" - Represented by a blue line.

* **Chart (a):** Subtitle "(a) θ = (0.9, 0.8, 0.7)"

* **Chart (b):** Subtitle "(b) average over random θ"

### Detailed Analysis or Content Details

**Chart (a): θ = (0.9, 0.8, 0.7)**

* **TS (Red Line):** The line starts at approximately 0.08 and rapidly decreases to approximately 0.01 by time period 250. It continues to decrease slowly, reaching approximately 0.002 by time period 1000. The trend is strongly downward, indicating a rapid reduction in regret.

* **greedy (Blue Line):** The line starts at approximately 0.09 and remains relatively constant at around 0.08-0.09 throughout the entire time period (1000). The trend is flat, indicating consistent regret.

**Chart (b): average over random θ**

* **TS (Red Line):** The line starts at approximately 0.08 and rapidly decreases to approximately 0.01 by time period 250. It continues to decrease slowly, reaching approximately 0.003 by time period 1000. The trend is strongly downward, indicating a rapid reduction in regret.

* **greedy (Blue Line):** The line starts at approximately 0.09 and remains relatively constant at around 0.08-0.09 throughout the entire time period (1000). The trend is flat, indicating consistent regret.

### Key Observations

* In both charts, the "TS" agent consistently outperforms the "greedy" agent, exhibiting significantly lower per-period regret over time.

* The "greedy" agent's regret remains relatively constant, suggesting it does not adapt or learn from its actions.

* The initial regret for both agents is similar, but the "TS" agent quickly reduces its regret, while the "greedy" agent does not.

* The parameter setting (θ) does not appear to significantly impact the relative performance of the two agents, as the trends are similar in both charts.

### Interpretation

The data demonstrates the effectiveness of the Thompson Sampling (TS) agent in minimizing per-period regret compared to a greedy agent. The TS agent's ability to explore and learn from its actions allows it to adapt and improve its performance over time, leading to a substantial reduction in regret. The greedy agent, lacking this adaptive capability, maintains a consistently high level of regret.

The fact that the trends are similar in both charts (with specific θ values and averaged over random θ values) suggests that the superiority of TS over greedy is robust and not dependent on the specific parameter settings. This indicates that Thompson Sampling is a generally effective strategy for minimizing regret in this type of environment. The flat line for the greedy agent suggests it is stuck in a suboptimal strategy and unable to improve its performance. The rapid initial drop in regret for the TS agent indicates a quick learning phase, where it efficiently identifies better actions.

</details>

## General Thompson Sampling

TS can be applied fruitfully to a broad array of online decision problems beyond the Bernoulli bandit, and we now consider a more general setting. Suppose the agent applies a sequence of actions x 1 , x 2 , x 3 , . . . to a system, selecting each from a set X . This action set could be finite, as in the case of the Bernoulli bandit, or infinite. After applying action x t , the agent observes an outcome y t , which the system randomly generates according to a conditional probability measure q θ ( ·| x t ). The agent enjoys a reward r t = r ( y t ), where r is a known function. The agent is initially uncertain about the value of θ and represents his uncertainty using a prior distribution p .

Algorithms 4.1 and 4.2 present greedy and TS approaches in an abstract form that accommodates this very general problem. The two differ in the way they generate model parameters ˆ θ . The greedy algorithm takes ˆ θ to be the expectation of θ with respect to the distribution p , while TS draws a random sample from p . Both algorithms then apply actions that maximize expected reward for their respective models. Note that, if there are a finite set of possible observations y t , this expectation is given by

$$\mathbb { E } _ { q _ { \hat { \theta } } } [ r ( y _ { t } ) | x _ { t } = x ] = \sum _ { o } q _ { \hat { \theta } } ( o | x ) r ( o ) .$$

The distribution p is updated by conditioning on the realized observation ˆ y t . If θ is restricted to values from a finite set, this conditional distribution can be written by Bayes rule as

$$( 4 . 2 ) \quad \mathbb { P } _ { p , q } ( \theta = u | x _ { t } , y _ { t } ) = \frac { p ( u ) q _ { u } ( y _ { t } | x _ { t } ) } { \sum _ { v } p ( v ) q _ { v } ( y _ { t } | x _ { t } ) } .$$

## Algorithm 4.1 Greedy( X , p, q, r )

```

Algorithm 4.1 Greedy(X,p,q,r)

1: for t = 1,2,... do

2: #estimate model:

3: $\theta \leftarrow \mathbb{E}_{p}[\theta]$

4:

5: $#select and apply action:

6: x_{t \leftarrow argmax_{x\in\mathcal{X}\,q_0^{\theta}}[r(y_{t})|x_{t = x}]$

7:

8: Apply x_{t and observe y_{t}$

9:

10: #update distribution:

11: p \leftarrow \mathbb{P}_{p,q}(\theta \in \cdot | x_{t},y_{t})$

12: end for

```

## Algorithm

4.2

Thompson( X , p, q, r )

```

\frac{1: for t = 1,2,... do

2: #sample model:

3: Sample $\hat{\theta} \sim p

4: #select and apply action:

5: x_{t <- argmax_{x\in\mathbb{E}q_\hat{y}(r(y_t)|x_t = x)}

6: Apply $x_t$ and observe $y_t

7: }

8: #update distribution:

9: p <- \mathbb{P}_{p,q}(\theta \in \cdot|x_{t}, y_{t})

```

The Bernoulli bandit with a beta prior serves as a special case of this more general formulation. In this special case, the set of actions is X = { 1 , . . . , K } and only rewards are observed, so y t = r t . Observations and rewards are modeled by conditional probabilities q θ (1 | k ) = θ k and q θ (0 | k ) = 1 -θ k . The prior distribution is encoded by vectors α and β , with probability density function given by:

$$p ( \theta ) = \prod _ { k = 1 } ^ { K } \frac { \Gamma ( \alpha + \beta ) } { \Gamma ( \alpha _ { k } ) \Gamma ( \beta _ { k } ) } \theta _ { k } ^ { \alpha _ { k } - 1 } ( 1 - \theta _ { k } ) ^ { \beta _ { k } - 1 } ,$$

where Γ denotes the gamma function. In other words, under the prior distribution, components of θ are independent and beta-distributed, with parameters α and β .

For this problem, the greedy algorithm (Algorithm 4.1) and TS (Algorithm 4.2) begin each t th iteration with posterior parameters ( α k , β k ) for k ∈ { 1 , . . . , K } . The greedy algorithm sets ˆ θ k to the expected value E p [ θ k ] = α k / ( α k + β k ), whereas TS randomly draws ˆ θ k from a beta distribution with parameters ( α k , β k ). Each algorithm then selects the action x that maximizes E q ˆ θ [ r ( y t ) | x t = x ] = ˆ θ x . After applying the

selected action, a reward r t = y t is observed, and belief distribution parameters are updated according to

$$( \alpha , \beta ) \gets ( \alpha + r _ { t } 1 _ { x _ { t } } , \beta + ( 1 - r _ { t } ) 1 _ { x _ { t } } ) ,$$

where 1 x t is a vector with component x t equal to 1 and all other components equal to 0.

Algorithms 4.1 and 4.2 can also be applied to much more complex problems. As an example, let us consider a version of the shortest path problem presented in Example 1.2.

Example 4.1. (Independent Travel Times) Recall the shortest path problem of Example 1.2. The model is defined with respect to a directed graph G = ( V, E ), with vertices V = { 1 , . . . , N } , edges E , and mean travel times θ ∈ R N . Vertex 1 is the source and vertex N is the destination. An action is a sequence of distinct edges leading from source to destination. After applying action x t , for each traversed edge e ∈ x t , the agent observes a travel time y t,e that is independently sampled from a distribution with mean θ e . Further, the agent incurs a cost of ∑ e ∈ x t y t,e , which can be thought of as a reward r t = -∑ e ∈ x t y t,e .

Consider a prior for which each θ e is independent and log-Gaussiandistributed with parameters µ e and σ 2 e . That is, ln( θ e ) ∼ N ( µ e , σ 2 e ) is Gaussian-distributed. Hence, E [ θ e ] = e µ e + σ 2 e / 2 . Further, take y t,e | θ to be independent across edges e ∈ E and log-Gaussian-distributed with parameters ln( θ e ) -˜ σ 2 / 2 and ˜ σ 2 , so that E [ y t,e | θ e ] = θ e . Conjugacy properties accommodate a simple rule for updating the distribution of θ e upon observation of y t,e :

$$( 4 . 3 ) \quad ( \mu _ { e } , \sigma _ { e } ^ { 2 } ) \leftarrow \left ( \frac { \frac { 1 } { \sigma _ { e } ^ { 2 } } \mu _ { e } + \frac { 1 } { \tilde { \sigma } ^ { 2 } } \left ( \ln ( y _ { t , e } ) + \frac { \tilde { \sigma } ^ { 2 } } { 2 } \right ) } { \frac { 1 } { \sigma _ { e } ^ { 2 } } + \frac { 1 } { \tilde { \sigma } ^ { 2 } } } , \frac { 1 } { \frac { 1 } { \sigma _ { e } ^ { 2 } } + \frac { 1 } { \tilde { \sigma } ^ { 2 } } } \right ) .$$

To motivate this formulation, consider an agent who commutes from home to work every morning. Suppose possible paths are represented by a graph G = ( V, E ). Suppose the agent knows the travel distance d e associated with each edge e ∈ E but is uncertain about average travel times. It would be natural for her to construct a prior for which expectations are equal to travel distances. With the log-Gaussian prior, this can be accomplished by setting µ e = ln( d e ) -σ 2 e / 2. Note that the

parameters µ e and σ 2 e also express a degree of uncertainty; in particular, the prior variance of mean travel time along an edge is ( e σ 2 e -1) d 2 e .

The greedy algorithm (Algorithm 4.1) and TS (Algorithm 4.2) can be applied to Example 4.1 in a computationally efficient manner. Each algorithm begins each t th iteration with posterior parameters ( µ e , σ e ) for each e ∈ E . The greedy algorithm sets ˆ θ e to the expected value E p [ θ e ] = e µ e + σ 2 e / 2 , whereas TS randomly draws ˆ θ e from a log-Gaussian distribution with parameters µ e and σ 2 e . Each algorithm then selects its action x to maximize E q ˆ θ [ r ( y t ) | x t = x ] = -∑ e ∈ x t ˆ θ e . This can be cast as a deterministic shortest path problem, which can be solved efficiently, for example, via Dijkstra's algorithm. After applying the selected action, an outcome y t is observed, and belief distribution parameters ( µ e , σ 2 e ), for each e ∈ E , are updated according to (4.3).

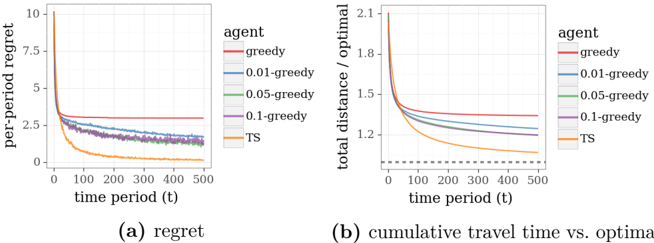

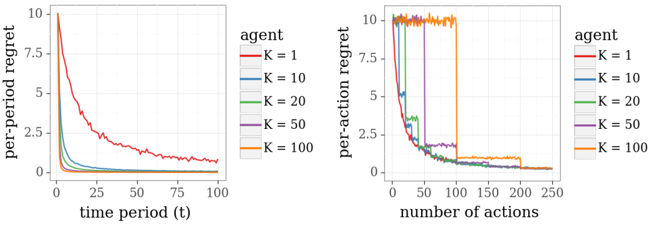

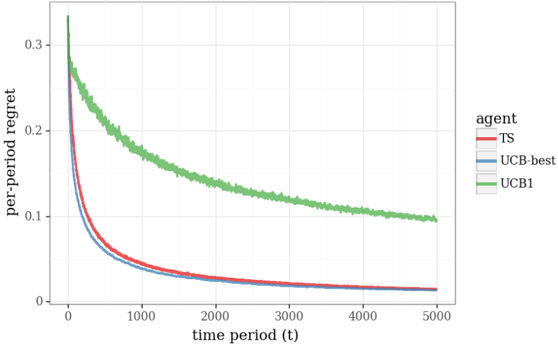

Figure 4.1 presents results from applying greedy and TS algorithms to Example 4.1, with the graph taking the form of a binomial bridge, as shown in Figure 4.2, except with twenty rather than six stages, so there are 184,756 paths from source to destination. Prior parameters are set to µ e = -1 2 and σ 2 e = 1 so that E [ θ e ] = 1, for each e ∈ E , and the conditional distribution parameter is ˜ σ 2 = 1. Each data point represents an average over ten thousand independent simulations.

The plots of regret demonstrate that the performance of TS converges quickly to optimal, while that is far from true for the greedy algorithm. We also plot results generated by /epsilon1 -greedy exploration, varying /epsilon1 . For each trip, with probability 1 -/epsilon1 , this algorithm traverses a path produced by a greedy algorithm. Otherwise, the algorithm samples a path randomly. Though this form of exploration can be helpful, the plots demonstrate that learning progresses at a far slower pace than with TS. This is because /epsilon1 -greedy exploration is not judicious in how it selects paths to explore. TS, on the other hand, orients exploration effort towards informative rather than entirely random paths.

Plots of cumulative travel time relative to optimal offer a sense for the fraction of driving time wasted due to lack of information. Each point plots an average of the ratio between the time incurred over some number of days and the minimal expected travel time given θ . With TS, this converges to one at a respectable rate. The same can not be said for /epsilon1 -greedy approaches.

Figure 4.1: Performance of Thompson sampling and /epsilon1 -greedy algorithms in the shortest path problem.

<details>

<summary>Image 6 Details</summary>

### Visual Description

## Charts: Regret and Cumulative Travel Time vs. Optimal

### Overview

The image presents two line charts, labeled (a) "regret" and (b) "cumulative travel time vs. optimal". Both charts compare the performance of several agents (greedy, 0.01-greedy, 0.05-greedy, 0.1-greedy, and TS) over time periods from 0 to 500. The x-axis represents time period (t), while the y-axis in (a) represents per-period regret, and in (b) represents total distance divided by the optimal distance.

### Components/Axes

* **Chart (a): Regret**

* X-axis: time period (t), ranging from 0 to 500.

* Y-axis: per-period regret, ranging from 0 to 10.

* Legend: "agent" with the following categories:

* greedy (red)

* 0.01-greedy (orange)

* 0.05-greedy (green)

* 0.1-greedy (purple)

* TS (gray)

* **Chart (b): Cumulative Travel Time vs. Optimal**

* X-axis: time period (t), ranging from 0 to 500.

* Y-axis: total distance / optimal, ranging from 1.1 to 2.1.

* Legend: "agent" with the following categories:

* greedy (red)

* 0.01-greedy (orange)

* 0.05-greedy (green)

* 0.1-greedy (purple)

* TS (gray)

* Dotted line at x=100, y=1.25

### Detailed Analysis or Content Details

**Chart (a): Regret**

* **greedy (red):** Starts at approximately 6.5 and remains relatively constant around 3.5-4.0 throughout the time period.

* **0.01-greedy (orange):** Starts at approximately 1.5 and decreases rapidly to around 0.2 by t=100, then continues to decrease slowly, reaching approximately 0.05 by t=500.

* **0.05-greedy (green):** Starts at approximately 2.0 and decreases rapidly to around 0.1 by t=100, then continues to decrease slowly, reaching approximately 0.05 by t=500.

* **0.1-greedy (purple):** Starts at approximately 2.5 and decreases rapidly to around 0.1 by t=100, then continues to decrease slowly, reaching approximately 0.05 by t=500.

* **TS (gray):** Starts at approximately 2.5 and decreases rapidly to around 0.1 by t=100, then continues to decrease slowly, reaching approximately 0.05 by t=500.

**Chart (b): Cumulative Travel Time vs. Optimal**

* **greedy (red):** Starts at approximately 1.9 and decreases rapidly to around 1.25 by t=100, then continues to decrease slowly, reaching approximately 1.15 by t=500.

* **0.01-greedy (orange):** Starts at approximately 1.8 and decreases rapidly to around 1.2 by t=100, then continues to decrease slowly, reaching approximately 1.15 by t=500.

* **0.05-greedy (green):** Starts at approximately 1.8 and decreases rapidly to around 1.2 by t=100, then continues to decrease slowly, reaching approximately 1.15 by t=500.

* **0.1-greedy (purple):** Starts at approximately 1.8 and decreases rapidly to around 1.2 by t=100, then continues to decrease slowly, reaching approximately 1.15 by t=500.

* **TS (gray):** Starts at approximately 1.8 and decreases rapidly to around 1.2 by t=100, then continues to decrease slowly, reaching approximately 1.15 by t=500.

### Key Observations

* In both charts, the "greedy" agent consistently performs worse than the other agents, exhibiting higher regret and a larger ratio of total distance to optimal distance.

* The agents "0.01-greedy", "0.05-greedy", "0.1-greedy", and "TS" exhibit very similar performance in both charts, converging to similar values as time increases.

* The initial drop in both charts is most pronounced between t=0 and t=100.

* The dotted line in chart (b) at x=100, y=1.25 may indicate a benchmark or threshold.

### Interpretation

The data suggests that the "greedy" agent, while simple, is suboptimal in this scenario, consistently incurring higher regret and longer travel times relative to the optimal solution. The other agents, which incorporate some degree of exploration or learning (indicated by the "greedy" coefficient and "TS" for Thompson Sampling), converge to a similar level of performance, suggesting that a small amount of exploration significantly improves results. The rapid initial improvement (between t=0 and t=100) indicates a quick learning phase where the agents adapt to the environment. The convergence of the non-greedy agents suggests diminishing returns from further exploration after a certain point. The dotted line in chart (b) could represent a target performance level, and the agents' convergence towards this level indicates successful adaptation. The charts demonstrate the trade-off between exploration and exploitation in reinforcement learning or decision-making processes.

</details>

Figure 4.2: A binomial bridge with six stages.

<details>

<summary>Image 7 Details</summary>

### Visual Description

\n

## Diagram: Grid-Based Directed Graph

### Overview

</details>

Algorithm 4.2 can be applied to problems with complex information structures, and there is often substantial value to careful modeling of such structures. As an example, we consider a more complex variation of the binomial bridge example.

Example 4.2. (Correlated Travel Times) As with Example 4.1, let each θ e be independent and log-Gaussian-distributed with parameters µ e and σ 2 e . Let the observation distribution be characterized by

$$y _ { t , e } = \zeta _ { t , e } \eta _ { t } \nu _ { t , \ell ( e ) } \theta _ { e } ,$$

where each ζ t,e represents an idiosyncratic factor associated with edge e , η t represents a factor that is common to all edges, /lscript ( e ) indicates whether edge e resides in the lower half of the binomial bridge, and ν t, 0 and ν t, 1 represent factors that bear a common influence on edges in the upper and lower halves, respectively. We take each ζ t,e , η t , ν t, 0 , and ν t, 1 to be independent log-Gaussian-distributed with parameters -˜ σ 2 / 6 and ˜ σ 2 / 3. The distributions of the shocks ζ t,e , η t , ν t, 0 and ν t, 1 are known, and only the parameters θ e corresponding to each individual edge must be learned through experimentation. Note that, given these parameters, the marginal distribution of y t,e | θ is identical to that of Example 4.1, though the joint distribution over y t | θ differs.

The common factors induce correlations among travel times in the binomial bridge: η t models the impact of random events that influence traffic conditions everywhere, like the day's weather, while ν t, 0 and ν t, 1 each reflect events that bear influence only on traffic conditions along edges in half of the binomial bridge. Though mean edge travel times are independent under the prior, correlated observations induce dependencies in posterior distributions.

Conjugacy properties again facilitate efficient updating of posterior parameters. Let φ, z t ∈ R N be defined by

$$\phi _ { e } = \ln ( \theta _ { e } ) \quad a n d \quad z _ { t , e } = \left \{ \begin{array} { l l } { \ln ( y _ { t , e } ) } & { i f e \in x _ { t } } \\ { 0 } & { o t h e r w i s e . } \end{array}$$

Note that it is with some abuse of notation that we index vectors and matrices using edge indices. Define a | x t | × | x t | covariance matrix ˜ Σ with elements

/negationslash

$$\tilde { \Sigma } _ { e , e ^ { \prime } } = \left \{ \begin{array} { l l } { \tilde { \sigma } ^ { 2 } } & { f o r e = e ^ { \prime } } \\ { 2 \tilde { \sigma } ^ { 2 } / 3 } & { f o r e \neq e ^ { \prime } , \ell ( e ) = \ell ( e ^ { \prime } ) } \\ { \tilde { \sigma } ^ { 2 } / 3 } & { o t h e r w i s e , } \end{array}$$

for e, e ′ ∈ x t , and a N × N concentration matrix

$$\tilde { C } _ { e , e ^ { \prime } } = \left \{ \begin{array} { l l } { \tilde { \Sigma } _ { e , e ^ { \prime } } ^ { - 1 } } & { i f e , e ^ { \prime } \in x _ { t } } \\ { 0 } & { o t h e r w i s e , } \end{array}$$

for e, e ′ ∈ E . Then, the posterior distribution of φ is Gaussian with a mean vector µ and covariance matrix Σ that can be updated according

to

$$\begin{array} { r l } { ( 4 . 4 ) } & \left ( \mu , \Sigma \right ) \leftarrow \left ( \left ( \Sigma ^ { - 1 } + \tilde { C } \right ) ^ { - 1 } \left ( \Sigma ^ { - 1 } \mu + \tilde { C } z _ { t } \right ) , \left ( \Sigma ^ { - 1 } + \tilde { C } \right ) ^ { - 1 } \right ) . } \end{array}$$

TS (Algorithm 4.2) can again be applied in a computationally efficient manner. Each t th iteration begins with posterior parameters µ ∈ R N and Σ ∈ R N × N . The sample ˆ θ can be drawn by first sampling a vector ˆ φ from a Gaussian distribution with mean µ and covariance matrix Σ, and then setting ˆ θ e = ˆ φ e for each e ∈ E . An action x is selected to maximize E q ˆ θ [ r ( y t ) | x t = x ] = -∑ e ∈ x t ˆ θ e , using Djikstra's algorithm or an alternative. After applying the selected action, an outcome y t is observed, and belief distribution parameters ( µ, Σ) are updated according to (4.4).

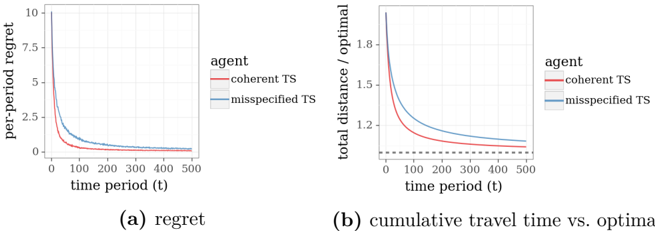

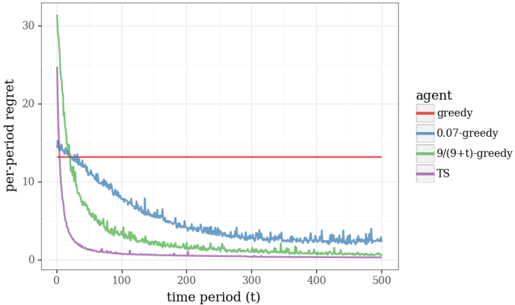

Figure 4.3: Performance of two versions of Thompson sampling in the shortest path problem with correlated travel times.

<details>

<summary>Image 8 Details</summary>

### Visual Description

\n

## Line Chart: Regret and Cumulative Travel Time vs. Optimal

### Overview

The image presents two line charts side-by-side. The left chart (a) displays "regret" on the y-axis against "time period (t)" on the x-axis. The right chart (b) shows "total distance / optimal" on the y-axis against "time period (t)" on the x-axis. Both charts compare two "agent" types: "coherent TS" and "misspecified TS".

### Components/Axes

* **Chart (a): Regret**

* X-axis: "time period (t)", ranging from 0 to 500.

* Y-axis: "per-period regret", ranging from 0 to 10.

* **Chart (b): Cumulative Travel Time vs. Optimal**

* X-axis: "time period (t)", ranging from 0 to 500.

* Y-axis: "total distance / optimal", ranging from 0 to 1.8.

* **Legend (Both Charts):**

* "coherent TS" - represented by a solid red line.

* "misspecified TS" - represented by a dashed grey line.

* The legend is positioned in the top-right corner of each chart.

### Detailed Analysis or Content Details

**Chart (a): Regret**

* **coherent TS (Red Line):** The line starts at approximately 9.5 at t=0, then rapidly decreases, becoming nearly flat around a value of 0.1 at t=200. It remains relatively stable between 0.1 and 0.2 for the rest of the time period.

* **misspecified TS (Grey Dashed Line):** The line begins at approximately 3.5 at t=0, and decreases more slowly than the red line. It reaches approximately 0.2 at t=200, and continues to decrease, leveling off around 0.15 at t=400.

**Chart (b): Cumulative Travel Time vs. Optimal**

* **coherent TS (Red Line):** The line starts at approximately 1.8 at t=0, and decreases rapidly, reaching approximately 1.1 at t=100. It continues to decrease, leveling off around 1.05 at t=400.

* **misspecified TS (Grey Dashed Line):** The line begins at approximately 1.1 at t=0, and decreases more slowly than the red line. It reaches approximately 1.05 at t=200, and continues to decrease, leveling off around 1.03 at t=400. A dotted horizontal line is present at y=1.0.

### Key Observations

* In both charts, the "coherent TS" agent consistently exhibits lower values than the "misspecified TS" agent, indicating better performance.

* The rate of decrease is faster for the "coherent TS" agent in both charts, especially in the initial time periods.

* The "misspecified TS" agent's performance plateaus at a higher level than the "coherent TS" agent.

* The dotted line in chart (b) at y=1.0 suggests a benchmark or optimal performance level.

### Interpretation

The data suggests that the "coherent TS" agent is more effective at minimizing regret and achieving a travel time closer to the optimal solution compared to the "misspecified TS" agent. The rapid initial decrease in both charts for the "coherent TS" agent indicates a faster learning or adaptation process. The leveling off of both lines suggests that the agents are approaching a stable state, but the "misspecified TS" agent remains at a suboptimal level. The dotted line in chart (b) provides a reference point, showing that while both agents improve over time, neither fully reaches the optimal travel time. The difference in performance between the two agents highlights the importance of accurate model specification ("coherent TS" vs. "misspecified TS") in achieving optimal results. The charts demonstrate a clear trade-off between regret and cumulative travel time, with the "coherent TS" agent consistently outperforming the "misspecified TS" agent in both metrics.

</details>

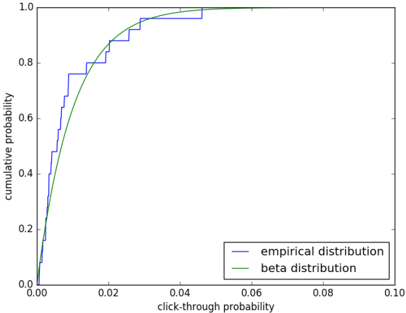

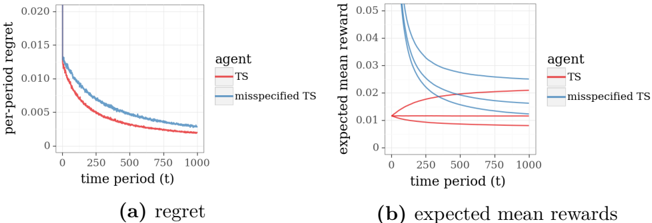

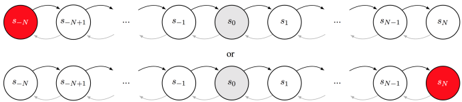

Figure 4.3 plots results from applying TS to Example 4.2, again with the binomial bridge, µ e = -1 2 , σ 2 e = 1, and ˜ σ 2 = 1. Each data point represents an average over ten thousand independent simulations. Despite model differences, an agent can pretend that observations made in this new context are generated by the model described in Example 4.1. In particular, the agent could maintain an independent log-Gaussian posterior for each θ e , updating parameters ( µ e , σ 2 e ) as though each y t,e | θ is independently drawn from a log-Gaussian distribution. As a baseline for comparison, Figure 4.3 additionally plots results from application of this approach, which we will refer to here as misspecified TS . The comparison demonstrates substantial improvement that results from

accounting for interdependencies among edge travel times, as is done by what we refer to here as coherent TS . Note that we have assumed here that the agent must select a path before initiating each trip. In particular, while the agent may be able to reduce travel times in contexts with correlated delays by adjusting the path during the trip based on delays experienced so far, our model does not allow this behavior.

## 5

## Approximations

Conjugacy properties in the Bernoulli bandit and shortest path examples that we have considered so far facilitated simple and computationally efficient Bayesian inference. Indeed, computational efficiency can be an important consideration when formulating a model. However, many practical contexts call for more complex models for which exact Bayesian inference is computationally intractable. Fortunately, there are reasonably efficient and accurate methods that can be used to approximately sample from posterior distributions.

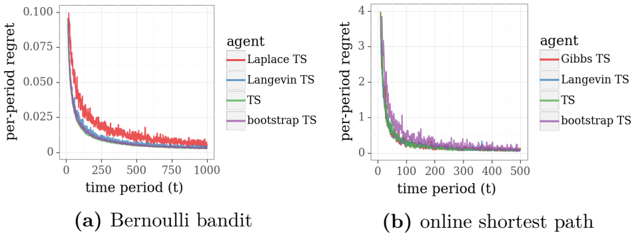

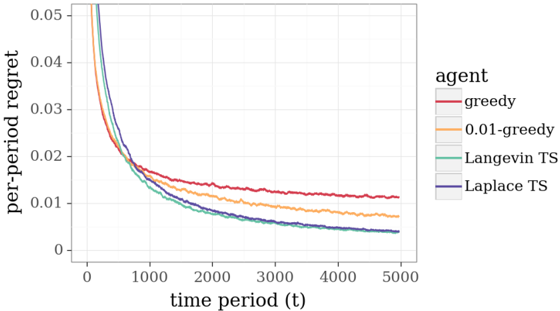

In this section we discuss four approaches to approximate posterior sampling: Gibbs sampling, Langevin Monte Carlo, sampling from a Laplace approximation, and the bootstrap. Such methods are called for when dealing with problems that are not amenable to efficient Bayesian inference. As an example, we consider a variation of the online shortest path problem.

Example 5.1. (Binary Feedback) Consider Example 4.2, except with deterministic travel times and noisy binary observations. Let the graph represent a binomial bridge with M stages. Let each θ e be independent and gamma-distributed with E [ θ e ] = 1, E [ θ 2 e ] = 1 . 5, and observations

be generated according to

$$y _ { t } | \theta \sim \left \{ \begin{array} { l l } { 1 } & { w i t h p r o b a b i l i t y \frac { 1 } { 1 + \exp \left ( \sum _ { e \in x _ { t } } \theta _ { e } - M \right ) } } \\ { 0 } & { o t h e r w i s e . } \end{array}$$

We take the reward to be the rating r t = y t . This information structure could be used to model, for example, an Internet route recommendation service. Each day, the system recommends a route x t and receives feedback y t from the driver, expressing whether the route was desirable. When the realized travel time ∑ e ∈ x t θ e falls short of the prior expectation M , the feedback tends to be positive, and vice versa.

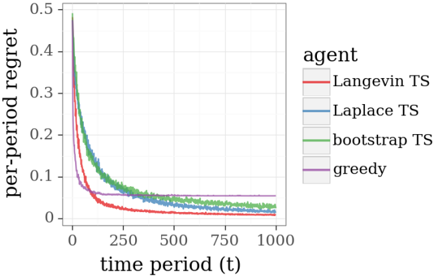

This new model does not enjoy conjugacy properties leveraged in Section 4 and is not amenable to efficient exact Bayesian inference. However, the problem may be addressed via approximation methods. To illustrate, Figure 5.1 plots results from application of three approximate versions of TS to an online shortest path problem on a twenty-stage binomial bridge with binary feedback. The algorithms leverage Langevin Monte Carlo, the Laplace approximation, and the bootstrap, three approaches we will discuss, and the results demonstrate effective learning, in the sense that regret vanishes over time. Also plotted as a baseline for comparison are results from application of the greedy algorithm.

In the remainder of this section, we will describe several approaches to approximate TS. It is worth mentioning that we do not cover an exhaustive list, and further, our descriptions do not serve as comprehensive or definitive treatments of each approach. Rather, our intent is to offer simple descriptions that convey key ideas that may be extended or combined to serve needs arising in any specific application.

Throughout this section, let f t -1 denote the posterior density of θ conditioned on the history H t -1 = (( x 1 , y 1 ) , . . . , ( x t -1 , y t -1 )) of observations. TS generates an action x t by sampling a parameter vector ˆ θ from f t -1 and solving for the optimal path under ˆ θ . The methods we describe generate a sample ˆ θ whose distribution approximates the posterior ˆ f t -1 , which enables approximate implementations of TS when exact posterior sampling is infeasible.

Figure 5.1: Regret experienced by approximation methods applied to the path recommendation problem with binary feedback.

<details>

<summary>Image 9 Details</summary>

### Visual Description

\n

## Line Chart: Per-Period Regret vs. Time Period

### Overview

The image presents a line chart illustrating the per-period regret of different agents over a time period of 1000 units. The chart compares the performance of four agents: Langevin TS, Laplace TS, bootstrap TS, and greedy. The y-axis represents the per-period regret, while the x-axis represents the time period (t).

### Components/Axes

* **X-axis:** "time period (t)", ranging from approximately 0 to 1000.

* **Y-axis:** "per-period regret", ranging from approximately 0 to 0.5.

* **Legend (top-right):**

* Langevin TS (Red)

* Laplace TS (Gray)

* bootstrap TS (Green)

* greedy (Purple)

### Detailed Analysis

The chart displays four distinct lines, each representing an agent's per-period regret over time.

* **Langevin TS (Red):** The line starts at approximately 0.45 at t=0 and rapidly decreases to around 0.07 by t=250. It continues to decrease slowly, reaching approximately 0.055 by t=1000.

* **Laplace TS (Gray):** The line begins at approximately 0.35 at t=0 and decreases more gradually than Langevin TS, reaching around 0.065 by t=250. It continues to decrease, leveling off around 0.05 by t=1000.

* **bootstrap TS (Green):** The line starts at approximately 0.3 at t=0 and decreases at a rate similar to Laplace TS, reaching around 0.06 by t=250. It continues to decrease, leveling off around 0.05 by t=1000.

* **greedy (Purple):** The line begins at approximately 0.25 at t=0 and decreases rapidly, reaching around 0.06 by t=250. It continues to decrease, leveling off around 0.05 by t=1000.

All lines exhibit a decreasing trend, indicating that the per-period regret decreases as the time period increases. The initial decrease is more pronounced for Langevin TS and greedy, while Laplace TS and bootstrap TS show a more gradual decline. All lines converge towards a similar level of per-period regret around t=1000.

### Key Observations

* Langevin TS initially exhibits the highest per-period regret but also the fastest initial decrease.

* The greedy agent starts with the lowest per-period regret but its decrease is not as rapid as Langevin TS.

* Laplace TS and bootstrap TS show similar performance throughout the time period.

* All agents converge to a similar per-period regret level around t=1000, suggesting they achieve comparable performance in the long run.

### Interpretation

The chart demonstrates the learning process of different agents in a sequential decision-making environment. The per-period regret represents the loss incurred by not choosing the optimal action at each time step. The decreasing trend indicates that the agents are learning from their experiences and improving their decision-making over time.

The initial differences in per-period regret likely reflect the exploration-exploitation trade-off of each agent. Langevin TS and greedy may prioritize exploration initially, leading to higher regret but faster learning. Laplace TS and bootstrap TS may prioritize exploitation, leading to lower initial regret but slower learning.

The convergence of the lines towards the end of the time period suggests that all agents eventually achieve a similar level of performance, indicating that they have effectively learned the optimal strategy. The fact that all agents converge to a non-zero regret level suggests that there may be inherent uncertainty or complexity in the environment that prevents them from achieving perfect performance.

</details>

## 5.1 Gibbs Sampling

Gibbs sampling is a general Markov chain Monte Carlo (MCMC) algorithm for drawing approximate samples from multivariate probability distributions. It produces a sequence of sampled parameters ( ˆ θ n : n = 0 , 1 , 2 , . . . ) forming a Markov chain with stationary distribution f t -1 . Under reasonable technical conditions, the limiting distribution of this Markov chain is its stationary distribution, and the distribution of ˆ θ n converges to f t -1 .

Gibbs sampling starts with an initial guess ˆ θ 0 . Iterating over sweeps n = 1 , . . . , N , for each n th sweep, the algorithm iterates over the components k = 1 , . . . , K , for each k generating a one-dimensional marginal distribution

$$f _ { t - 1 } ^ { n , k } ( \theta _ { k } ) \, \infty \, f _ { t - 1 } ( ( \hat { \theta } _ { 1 } ^ { n } , \dots , \hat { \theta } _ { k - 1 } ^ { n } , \theta _ { k } , \hat { \theta } _ { k + 1 } ^ { n - 1 } , \dots , \hat { \theta } _ { K } ^ { n - 1 } ) ) ,$$

and sampling the k th component according to ˆ θ n k ∼ f n,k t -1 . After N of sweeps, the prevailing vector ˆ θ N is taken to be the approximate posterior sample. We refer to (Casella and George, 1992) for a more thorough introduction to the algorithm.

Gibbs sampling applies to a broad range of problems, and is often computationally viable even when sampling from f t -1 is not. This is because sampling from a one-dimensional distribution is simpler. That

said, for complex problems, Gibbs sampling can still be computationally demanding. This is the case, for example, with our path recommendation problem with binary feedback. In this context, it is easy to implement a version of Gibbs sampling that generates a close approximation to a posterior sample within well under a minute. However, running thousands of simulations each over hundreds of time periods can be quite time-consuming. As such, we turn to more efficient approximation methods.

## 5.2 Laplace Approximation

We now discuss an approach that approximates a potentially complicated posterior distribution by a Gaussian distribution. Samples from this simpler Gaussian distribution can then serve as approximate samples from the posterior distribution of interest. Chapelle and Li (Chapelle and Li, 2011) proposed this method to approximate TS in a display advertising problem with a logistic regression model of ad-click-through rates.

Let g denote a probability density function over R K from which we wish to sample. If g is unimodal, and its log density ln( g ( φ )) is strictly concave around its mode φ , then g ( φ ) = e ln( g ( φ )) is sharply peaked around φ . It is therefore natural to consider approximating g locally around its mode. A second-order Taylor approximation to the log-density gives

$$\ln ( g ( \phi ) ) \approx \ln ( g ( \overline { \phi } ) ) - \frac { 1 } { 2 } ( \phi - \overline { \phi } ) ^ { \top } C ( \phi - \overline { \phi } ) ,$$

$$C = - \nabla ^ { 2 } \ln ( g ( \bar { \phi } ) ) .$$

As an approximation to the density g , we can then use

$$\tilde { g } ( \phi ) \varpropto e ^ { - \frac { 1 } { 2 } ( \phi - \overline { \phi } ) ^ { \top } C ( \phi - \overline { \phi } ) } .$$

This is proportional to the density of a Gaussian distribution with mean φ and covariance C -1 , and hence

$$\tilde { g } ( \phi ) = \sqrt { | C / 2 \pi | } e ^ { - \frac { 1 } { 2 } ( \phi - \overline { \phi } ) ^ { \top } C ( \phi - \overline { \phi } ) } .$$

where

We refer to this as the Laplace approximation of g . Since there are efficient algorithms for generating Gaussian-distributed samples, this offers a viable means to approximately sampling from g .

As an example, let us consider application of the Laplace approximation to Example 5.1. Bayes rule implies that the posterior density f t -1 of θ satisfies

$$f _ { t - 1 } ( \theta ) \varpropto f _ { 0 } ( \theta ) \prod _ { \tau = 1 } ^ { t - 1 } \left ( \frac { 1 } { 1 + \exp \left ( \sum _ { e \in x _ { \tau } } \theta _ { e } - M \right ) } \right ) ^ { y _ { \tau } } \left ( \frac { \exp \left ( \sum _ { e \in x _ { \tau } } \theta _ { e } - M \right ) } { 1 + \exp \left ( \sum _ { e \in x _ { \tau } } \theta _ { e } - M \right ) } \right ) ^ { 1 - y _ { \tau } } .$$

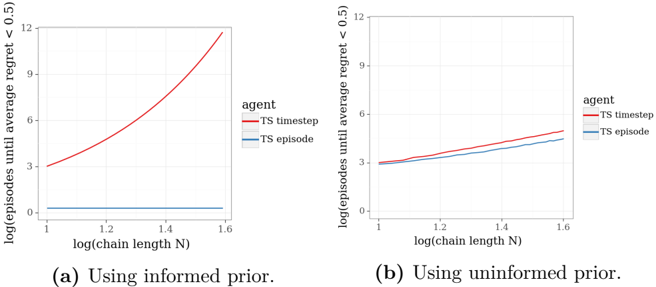

The mode θ can be efficiently computed via maximizing f t -1 , which is log-concave. An approximate posterior sample ˆ θ is then drawn from a Gaussian distribution with mean θ and covariance matrix ( -∇ 2 ln( f t -1 ( θ ))) -1 .