## A Unified View of Piecewise Linear Neural Network Verification

Rudy Bunel University of Oxford

## Ilker Turkaslan

rudy@robots.ox.ac.uk

University of Oxford ilker.turkaslan@lmh.ox.ac.uk

Pushmeet Kohli Deepmind pushmeet@google.com

Philip H.S. Torr University of Oxford philip.torr@eng.ox.ac.uk

M. Pawan Kumar University of Oxford Alan Turing Institute pawan@robots.ox.ac.uk

## Abstract

The success of Deep Learning and its potential use in many safety-critical applications has motivated research on formal verification of Neural Network (NN) models. Despite the reputation of learned NN models to behave as black boxes and the theoretical hardness of proving their properties, researchers have been successful in verifying some classes of models by exploiting their piecewise linear structure and taking insights from formal methods such as Satisifiability Modulo Theory. These methods are however still far from scaling to realistic neural networks. To facilitate progress on this crucial area, we make two key contributions. First, we present a unified framework that encompasses previous methods. This analysis results in the identification of new methods that combine the strengths of multiple existing approaches, accomplishing a speedup of two orders of magnitude compared to the previous state of the art. Second, we propose a new data set of benchmarks which includes a collection of previously released testcases. We use the benchmark to provide the first experimental comparison of existing algorithms and identify the factors impacting the hardness of verification problems.

## 1 Introduction

Despite their success in a wide variety of applications, Deep Neural Networks have seen limited adoption in safety-critical settings. The main explanation for this lies in their reputation for being black-boxes whose behaviour can not be predicted. Current approaches to evaluate trained models mostly rely on testing using held-out data sets. However, as Edsger W. Dijkstra said [3], 'testing shows the presence, not the absence of bugs'. If deep learning models are to be deployed to applications such as autonomous driving cars, we need to be able to verify safety-critical behaviours.

To this end, some researchers have tried to use formal methods. To the best of our knowledge, Zakrzewski [21] was the first to propose a method to verify simple, one hidden layer neural networks. However, only recently were researchers able to work with non-trivial models by taking advantage of the structure of ReLU-based networks [5, 11]. Even then, these works are not scalable to the large networks encountered in most real world problems.

This paper advances the field of NN verification by making the following key contributions:

1. We reframe state of the art verification methods as special cases of Branch-and-Bound optimization, which provides us with a unified framework to compare them.

2. We gather a data set of test cases based on the existing literature and extend it with new benchmarks. We provide the first experimental comparison of verification methods.

3. Based on this framework, we identify algorithmic improvements in the verification process, specifically in the way bounds are computed, the type of branching that are considered, as

Preprint. Work in progress.

well as the strategies guiding the branching. Compared to the previous state of the art, these improvements lead to speed-up of almost two orders of magnitudes .

Section 2 and 3 give the specification of the problem and formalise the verification process. Section 4 presents our unified framework, showing that previous methods are special cases and highlighting potential improvements. Section 5 presents our experimental setup and Section 6 analyses the results.

## 2 Problem specification

We now specify the problem of formal verification of neural networks. Given a network that implements a function ˆ x n = f ( x 0 ) , a bounded input domain C and a property P , we want to prove

$$x _ { 0 } \in \mathcal { C } , \quad \hat { x } _ { n } = f ( x _ { 0 } ) \implies P ( \hat { x } _ { n } ) . \quad ( 1 )$$

For example, the property of robustness to adversarial examples in L ∞ norm around a training sample a with label y a would be encoded by using C /defines { x 0 | ‖ x 0 -a ‖ ∞ ≤ /epsilon1 } and P ( ˆ x n ) = { ∀ y ˆ x n [ y a ] ≥ ˆ x n [ y ] } .

In this paper, we are going to focus on Piecewise-Linear Neural Networks (PL-NN), that is, networks for which we can decompose C into a set of polyhedra C i such that C = ∪ i C i , and the restriction of f to C i is a linear function for each i . While this prevents us from including networks that use activation functions such as sigmoid or tanh, PL-NNs allow the use of linear transformations such as fully-connected or convolutional layers, pooling units such as MaxPooling and activation functions such as ReLUs. In other words, PL-NNs represent the majority of networks used in practice. Operations such as Batch-Normalization or Dropout also preserve piecewise linearity at test-time.

The properties that we are going to consider are Boolean formulas over linear inequalities. In our robustness to adversarial example above, the property is a conjunction of linear inequalities, each of which constrains the output of the original label to be greater than the output of another label.

The scope of this paper does not include approaches relying on additional assumptions such as twice differentiability of the network [8, 21], limitation of the activation to binary values [5, 16] or restriction to a single linear domain [2]. Since they do not provide formal guarantees, we also don't include approximate approaches relying on a limited set of perturbation [10] or on over-approximation methods that potentially lead to undecidable properties [17, 20].

## 3 Verification Formalism

## 3.1 Verification as a Satisfiability problem

The methods we involve in our comparison all leverage the piecewise-linear structure of PL-NN to make the problem more tractable. They all follow the same general principle: given a property to prove, they attempt to discover a counterexample that would make the property false. This is accomplished by defining a set of variables corresponding to the inputs, hidden units and output of the network, and the set of constraints that a counterexample would satisfy.

To help design a unified framework, we reduce all instances of verification problems to a canonical representation. Specifically, the whole satisfiability problem will be transformed into a global optimization problem where the decision will be obtained by checking the sign of the minimum.

If the property to verify is a simple inequality P ( ˆ x n ) /defines c T ˆ x n ≥ b , it is sufficient to add to the network a final fully connected layer with one output, with weight of c and a bias of -b . If the global minimum of this network is positive, it indicates that for all ˆ x n the original network can output, we have c T ˆ x n -b ≥ 0 = ⇒ c T ˆ x n ≥ b , and as a consequence the property is True. On the other hand, if the global minimum is negative, then the minimizer provides a counter-example. The supplementary material shows that OR and AND clauses in the property can similarly be expressed as additional layers, using MaxPooling units.

We can formulate any Boolean formula over linear inequalities on the output of the network as a sequence of additional linear and max-pooling layers. The verification problem will be reduced to the problem of finding whether the scalar output of the potentially modified network can reach a negative value. Assuming the network only contains ReLU activations between each layer, the satisfiability problem to find a counterexample can be expressed as:

$$l _ { 0 } \leq x _ { 0 } \leq u _ { 0 } \quad ( 2 a ) \quad \hat { x } _ { i + 1 } = W _ { i + 1 } x _ { i } + b _ { i + 1 } \quad \forall i \in \{ 0 , \, n - 1 \} \quad ( 2 c )$$

x

ˆ

n

≤

$$\begin{array} { r l r } { \leq 0 } & ( 2 b ) } & x _ { i } = \max \left ( \hat { x } _ { i } , 0 \right ) } & \forall i \in \{ 1 , \, n - 1 \} . } & ( 2 d ) } \end{array}$$

Eq. (10a) represents the constraints on the input and Eq. (10h) on the neural network output. Eq. (10b) encodes the linear layers of the network and Eq. (2d) the ReLU activation functions. If an assignment to all the values can be found, this represents a counterexample. If this problem is unsatisfiable, no counterexample can exist, implying that the property is True. We emphasise that we are required to prove that no counter-examples can exist, and not simply that none could be found.

While for clarity of explanation, we have limited ourselves to the specific case where only ReLU activation functions are used, this is not restrictive. The supplementary material contains a section detailing how each method specifically handles MaxPooling units, as well as how to convert any MaxPooling operation into a combination of linear layers and ReLU activation functions.

The problem described in (2) is still a hard problem. The addition of the ReLU non-linearities (2d) transforms a problem that would have been solvable by simple Linear Programming into an NP-hard problem [11]. Converting a verification problem into this canonical representation does not make its resolution simpler but it does provide a formalism advantage. Specifically, it allows us to prove complex properties, containing several OR clauses, with a single procedure rather than having to decompose the desired property into separate queries as was done in previous work [11].

Operationally, a valid strategy is to impose the constraints (10a) to (2d) and minimise the value of ˆ x n . Finding the exact global minimum is not necessary for verification. However, it provides a measure of satisfiability or unsatisfiability. If the value of the global minimum is positive, it will correspond to the margin by which the property is satisfied.

## 3.2 Mixed Integer Programming formulation

A possible way to eliminate the non-linearities is to encode them with the help of binary variables, transforming the PL-NN verification problem (2) into a Mixed Integer Linear Program (MIP). This can be done with the use of 'big-M' encoding. The following encoding is from Tjeng & Tedrake [19]. Assuming we have access to lower and upper bounds on the values that can be taken by the coordinates of ˆ x i , which we denote l i and u i , we can replace the non-linearities:

$$\begin{array} { r l r } { x _ { i } = \max \left ( \hat { x } _ { i } , 0 \right ) } & { \Rightarrow } & { \delta _ { i } \in \{ 0 , 1 \} ^ { h _ { i } } , \, x _ { i } \geq 0 , \quad x _ { i } \leq u _ { i } \cdot \delta _ { i } \quad ( 3 a ) } \end{array}$$

$$x _ { i } \geq \hat { x } _ { i } , \quad x _ { i } \leq \hat { x } _ { i } - l _ { i } \cdot ( 1 - \delta _ { i } ) \quad ( 3 b )$$

It is easy to verify that δ i [ j ] = 0 ⇔ x i [ j ] = 0 (replacing δ i [ j ] in Eq. (21a)) and δ i [ j ] = 1 ⇔ x i [ j ] = ˆ x i [ j ] (replacing δ i [ j ] in Eq. (21b)).

By taking advantage of the feed-forward structure of the neural network, lower and upper bounds l i and u i can be obtained by applying interval arithmetic [9] to propagate the bounds on the inputs, one layer at a time.

Thanks to this specific feed-forward structure of the problem, the generic, non-linear, non-convex problem has been rewritten into an MIP. Optimization of MIP is well studied and highly efficient off-the-shelf solvers exist. As solving them is NP-hard, performance is going to be dependent on the quality of both the solver used and the encoding. We now ask the following question: how much efficiency can be gained by using a bespoke solver rather than a generic one? In order to answer this, we present specialised solvers for the PLNN verification task.

## 4 Branch-and-Bound for Verification

As described in Section 3.1, the verification problem can be rephrased as a global optimization problem. Algorithms such as Stochastic Gradient Descent are not appropriate as they have no way of guaranteeing whether or not a minima is global. In this section, we present an approach to estimate the global minimum, based on the Branch and Bound paradigm and show that several published methods, introduced as examples of Satisfiability Modulo Theories, fit this framework.

Algorithm 1 describes its generic form. The input domain is repeatedly split into sub-domains (line 7), over which lower and upper bounds of the minimum are computed (lines 9-10). The best upperbound found so far serves as a candidate for the global minimum. Any domain whose lower bound is greater than the current global upper bound can be pruned away as it cannot contain the global minimum (line 13, lines 15-17). By iteratively splitting the domains, it is possible to compute tighter lower bounds. We keep track of the global lower bound on the minimum by taking the minimum over the lower bounds of all sub-domains (line 19). When the global upper bound and the global lower bound differ by less than a small scalar /epsilon1 (line 5), we consider that we have converged.

Algorithm 1 shows how to optimise and obtain the global minimum. If all that we are interested in is the satisfiability problem, the procedure can be simplified by initialising the global upper bound with 0 (in line 2). Any subdomain with a lower bound greater than 0 (and therefore not eligible to

contain a counterexample) will be pruned out (by line 15). The computation of the lower bound can therefore be replaced by the feasibility problem (or its relaxation) imposing the constraint that the output is below zero without changing the algorithm. If it is feasible, there might still be a counterexample and further branching is necessary. If it is infeasible, the subdomain can be pruned out. In addition, if any upper bound improving on 0 is found on a subdomain (line 11), it is possible to stop the algorithm as this already indicates the presence of a counterexample.

## Algorithm 1 Branch and Bound

```

Algorithm 1 Branch and Bound

<section_header_level_1><loc_69><loc_45><loc_431><loc_75>1: function BAB(net, domain, ϵ)</section_header_level_1>

<text><loc_68><loc_76><loc_432><loc_105>2: global_ub ← inf</text>

<text><loc_68><loc_106><loc_433><loc_125>3: global_lb ← -inf</text>

<text><loc_69><loc_126><loc_431><loc_145>4: doms ← [(global_lb, domain)]</text>

<text><loc_69><loc_146><loc_432><loc_165>5: while global_ub - global_lb > ϵ do</text>

<text><loc_68><loc_166><loc_431><loc_185>6: (_, dom) <- pick_out(doms)</text>

<text><loc_69><loc_186><loc_432><loc_205>7: [subdom_1,..., subdom_s] ← split(dom)</text>

<text><loc_68><loc_206><loc_431><loc_225>8: for i = 1 ... s do</text>

<text><loc_69><loc_226><loc_432><loc_245>9: dom_ub <- compute_UB(net, subdom_i)</text>

<text><loc_68><loc_246><loc_431><loc_265>10: dom_lb <- compute_LB(net, subdom_i)</text>

<text><loc_69><loc_266><loc_432><loc_285>11: if dom_ub < global_ub then</text>

<text><loc_68><loc_286><loc_431><loc_305>12: global_ub ← dom_ub</text>

<text><loc_68><loc_306><loc_433><loc_325>13: prune_domains(doms, global_ub)</text>

<text><loc_69><loc_326><loc_432><loc_345>14: end if</text>

<text><loc_68><loc_346><loc_431><loc_365>15: if dom_lb < global_ub then</text>

<text><loc_68><loc_366><loc_433><loc_385>16: domains.append((dom_lb, subdom_i))</text>

<text><loc_69><loc_386><loc_432><loc_405>17: end if</text>

<text><loc_68><loc_406><loc_431><loc_425>18: end for</text>

<text><loc_68><loc_426><loc_433><loc_445>19: global_lb ← min{lb | (lb, dom) ∈ doms}</text>

<text><loc_69><loc_446><loc_432><loc_465>20: end while</text>

<text><loc_69><loc_466><loc_431><loc_485>21: return global_ub</text>

</doctag>

```

greatly impact the quality of the resulting bounds.

Bounding methods , defined by the compute \_ { UB , LB } functions. These procedures estimate respectively upper bounds and lower bounds over the minimum output that the network net can reach over a given input domain. We want the lower bound to be as high as possible, so that this whole domain can be pruned easily. This is usually done by introducing convex relaxations of the problem and minimising them. On the other hand, the computed upper bound should be as small as possible, so as to allow pruning out other regions of the space or discovering counterexamples. As any feasible point corresponds to an upper bound on the minimum, heuristic methods are sufficient.

We now demonstrate how some published work in the literature can be understood as special case of the branch-and-bound framework for verification.

## 4.1 Reluplex

Katz et al. [11] present a procedure named Reluplex to verify properties of Neural Network containing linear functions and ReLU activation unit, functioning as an SMT solver using the splitting-ondemand framework [1]. The principle of Reluplex is to always maintain an assignment to all of the variables, even if some of the constraints are violated.

Starting from an initial assignment, it attempts to fix some violated constraints at each step. It prioritises fixing linear constraints ((10a), (10b), (10h) and some relaxation of (2d)) using a simplex algorithm, even if it leads to violated ReLU constraints. If no solution to this relaxed problem containing only linear constraints exists, the counterexample search is unsatisfiable. Otherwise, either all ReLU are respected, which generates a counterexample, or Reluplex attempts to fix one of the violated ReLU; potentially leading to newly violated linear constraints. This process is not guaranteed to converge, so to make progress, non-linearities that get fixed too often are split into two cases. Two new problems are generated, each corresponding to one of the phases of the ReLU. In the worst setting, the problem will be split completely over all possible combinations of activation patterns, at which point the sub-problems will all be simple LPs.

This algorithm can be mapped to the special case of branch-and-bound for satisfiability. The search strategy is handled by the SMT core and to the best of our knowledge does not prioritise any domain. The branching rule is implemented by the ReLU-splitting procedure: when neither the upper bound search, nor the detection of infeasibility are successful, one non-linear constraint over the j -th neu-

The description of the verification problem as optimization and the pseudo-code of Algorithm 1 are generic and would apply to verification problems beyond the specific case of PLNN. To obtain a practical algorithm, it is necessary to specify several elements.

A search strategy , defined by the pick \_ out function, which chooses the next domain to branch on. Several heuristics are possible, for example those based on the results of previous bound computations. For satisfiable problems or optimization problems, this allows to discover good upper bounds, enabling early pruning.

/negationslash

A branching rule , defined by the split function, which takes a domain dom and return a partition in subdomain such that ⋃ i subdom \_ i = dom and that ( subdom \_ i ∩ subdom \_ j ) = ∅ , ∀ i = j . This will define the 'shape' of the domains, which impacts the hardness of computing bounds. In addition, choosing the right partition can

ron of the i -th layer x i [ j ] = max ( ˆ x i [ j ] , 0 ) is split out into two subdomains: { x i [ j ] = 0 , ˆ x i [ j ] ≤ 0 } and { x i [ j ] = ˆ x i [ j ] , ˆ x i [ j ] ≥ 0 } . This defines the type of subdomains produced. The prioritisation of ReLUs that have been frequently fixed is a heuristic to decide between possible partitions.

As Reluplex only deal with satisfiability, the analogue of the lower bound computation is an overapproximation of the satisfiability problem. The bounding method used is a convex relaxation, obtained by dropping some of the constraints. The following relaxation is applied to ReLU units for which the sign of the input is unknown ( l i [ j ] ≤ 0 and u i [ j ] ≥ 0 ).

$$x _ { i } = \max \left ( \hat { x } _ { i } , 0 \right ) \quad \Rightarrow \quad x _ { i } \geq \hat { x } _ { i } \quad ( 4 a ) \quad \ x _ { i } \geq 0 \quad ( 4 b ) \quad \ x _ { i } \leq u _ { i } . \quad ( 4 c )$$

If this relaxation is unsatisfiable, this indicates that the subdomain cannot contain any counterexample and can be pruned out. The search for an assignment satisfying all the ReLU constraints by iteratively attempting to correct the violated ReLUs is a heuristic that is equivalent to the search for an upper bound lower than 0: success implies the end of the procedure but no guarantees can be given.

## 4.2 Planet

Ehlers [6] also proposed an approach based on SMT. Unlike Reluplex, the proposed tool, named Planet, operates by explicitly attempting to find an assignment to the phase of the non-linearities. Reusing the notation of Section 3.2, it assigns a value of 0 or 1 to each δ i [ j ] variable, verifying at each step the feasibility of the partial assignment so as to prune infeasible partial assignment early.

As in Reluplex, the search strategy is not explicitly encoded and simply enumerates all the domains that have not yet been pruned. The branching rule is the same as for Reluplex, as fixing the decision variable δ i [ j ] = 0 is equivalent to choosing { x i [ j ] = 0 , ˆ x i [ j ] ≤ 0 } and fixing δ i [ j ] = 1 is equivalent to { x i [ j ] = ˆ x i [ j ] , ˆ x i [ j ] ≥ 0 } . Note however that Planet does not include any heuristic to prioritise which decision variables should be split over.

Planet does not include a mechanism for early termination based on a heuristic search of a feasible point. For satisfiable problems, only when a full complete assignment is identified is a solution returned. In order to detect incoherent assignments, Ehlers [6] introduces a global linear approximation to a neural network, which is used as a bounding method to over-approximate the set of values that each hidden unit can take. In addition to the existing linear constraints ((10a), (10b) and (10h)), the non-linear constraints are approximated by sets of linear constraints representing the non-linearities' convex hull. Specifically, ReLUs with input of unknown sign are replaced by the set of equations:

$$\begin{array} { r l } & { x _ { i } = \max ( \hat { x } _ { i } , 0 ) \, \Rightarrow \, x _ { i } \geq \hat { x } _ { i } \quad ( 5 a ) \quad x _ { i } \geq 0 \quad ( 5 b ) \quad x _ { i [ j ] } \leq u _ { i [ j ] } \frac { \hat { x } _ { i [ j ] } - l _ { i [ j ] } } { u _ { i [ j ] } - l _ { i [ j ] } } \quad ( 5 c ) } \\ & { w h o r a n d o p e r r o s p o n d e t h e v a l u o f t h e i t h o o r d i n a t e o f x _ { i [ j ] } . \quad A n i l l u r t r a c t i o n o f t h e f a g i b l e } \end{array}$$

where x i [ j ] corresponds to the value of the j -th coordinate of x i . An illustration of the feasible domain is provided in the supplementary material.

Compared with the relaxation of Reluplex (4), the Planet relaxation is tighter. Specifically, Eq. (4a) and (4b) are identical to Eq. (5a) and (5b) but Eq. (5c) implies Eq. (4c). Indeed, given that ˆ x i [ j ] is smaller than u i [ j ] , the fraction multiplying u i [ j ] is necessarily smaller than 1, implying that this provides a tighter bounds on x i [ j ] .

To use this approximation to compute better bounds than the ones given by simple interval arithmetic, it is possible to leverage the feed-forward structure of the neural networks and obtain bounds one layer at a time. Having included all the constraints up until the i -th layer, it is possible to optimize over the resulting linear program and obtain bounds for all the units of the i -th layer, which in turn will allow us to create the constraints (5) for the next layer.

In addition to the pruning obtained by the convex relaxation, both Planet and Reluplex make use of conflict analysis [15] to discover combinations of splits that cannot lead to satisfiable assignments, allowing them to perform further pruning of the domains.

## 4.3 Potential improvements

As can be seen, previous approaches to neural network verification have relied on methodologies developed in three communities: optimization, for the creation of upper and lower bounds; verification, especially SMT; and machine learning, especially the feed-forward nature of neural networks for the creation of relaxations. A natural question that arises is 'Can other existing literature from these domains be exploited to further improve neural network verification?' Our unified branch-andbound formulation makes it easy to answer this question. To illustrate its power, we now provide a non-exhaustive list of suggestions to speed-up verification algorithms.

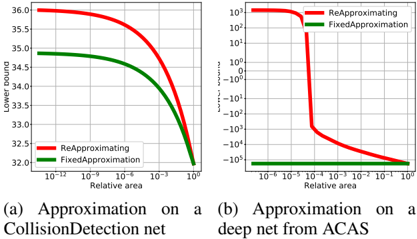

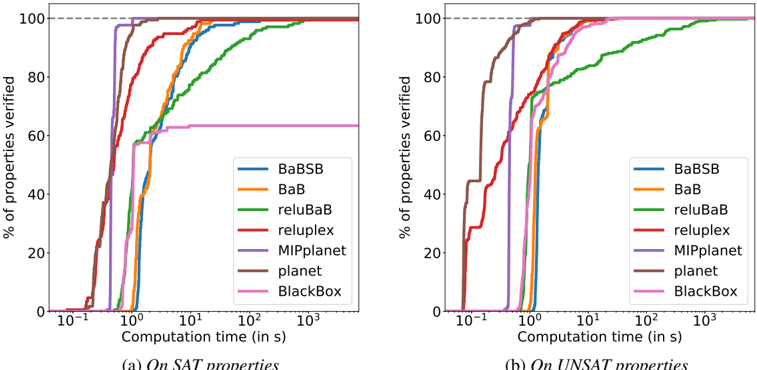

Better bounding While the relaxation proposed by Ehlers [6] is tighter than the one used by Reluplex, it can be improved further still. Specifically, after a splitting operation, on a smaller domain, we can refine all the l i , u i bounds, to obtain a tither relaxation. We show the importance of this in the experiments section with the BaB-relusplit method that performs splitting on the activation like Planet but updates its approximation completely at each step.

One other possible area of improvement lies in the tightness of the bounds used. Equation (5) is very closely related to the Mixed Integer Formulation of Equation (20). Indeed, it corresponds to level 0 of the Sherali-Adams hierarchy of relaxations [18]. The proof for this statement can be found in the supplementary material. Stronger relaxations could be obtained by exploring higher levels of the hierarchy. This would jointly constrain groups of ReLUs, rather than linearising them independently.

Better branching The decision to split on the activation of the ReLU non-linearities made by Planet and Reluplex is intuitive as it provides a clear set of decision variables to fix. However, it ignores another natural branching strategy, namely, splitting the input domain. Indeed, it could be argued that since the function encoded by the neural networks are piecewise linear in their input, this could result in the computation of highly useful upper and lower bounds. To demonstrate this, we propose the novel BaB-input algorithm: a branch-and-bound method that branches over the input features of the network. Based on a domain with input constrained by Eq. (10a), the split function would return two subdomains where bounds would be identical in all dimension except for the dimension with the largest length, denoted i /star . The bounds for each subdomain for dimension i /star are given by l 0[ i /star ] ≤ x 0[ i /star ] ≤ l 0[ i /star ] + u 0[ i /star ] 2 and l 0[ i /star ] + u 0[ i /star ] 2 ≤ x 0[ i /star ] ≤ u 0[ i /star ] . Based on these tighter input bounds, tighter bounds at all layers can be re-evaluated.

One of the main advantage of branching over the variables is that all subdomains generated by the BaB algorithm when splitting over the input variables end up only having simple bound constraints over the value that input variable can take. In order to exploit this property to the fullest, we use the highly efficient lower bound computation approach of Kolter & Wong [13]. This approach was initially proposed in the context of robust optimization. However, our unified framework opens the door for its use in verification. Specifically, Kolter & Wong [13] identified an efficient way of computing bounds for the type of problems we encounter, by generating a feasible solution to the dual of the LP generated by the Planet relaxation. While this bound is quite loose compared to the one obtained through actual optimization, they are very fast to evaluate. We propose a smart branching method BaBSB to replace the longest edge heuristic of BaB-input . For all possible splits, we compute fast bounds for each of the resulting subdomain, and execute the split resulting in the highest lower bound. The intuition is that despite their looseness, the fast bounds will still be useful in identifying the promising splits.

## 5 Experimental setup

The problem of PL-NN verification has been shown to be NP-complete [11]. Meaningful comparison between approaches therefore needs to be experimental.

## 5.1 Methods

The simplest baseline we refer to is BlackBox , a direct encoding of Eq. (2) into the Gurobi solver, which will perform its own relaxation, without taking advantage of the problem's structure.

For the SMT based methods, Reluplex and Planet , we use the publicly available versions [7, 12]. Both tools are implemented in C++ and relies on the GLPK library to solve their relaxation. We wrote some software to convert in both directions between the input format of both solvers.

We also evaluate the potential of using MIP solvers, based on the formulation of Eq. (20). Due to the lack of availability of open-sourced methods at the time of our experiments, we reimplemented the approach in Python, using the Gurobi MIP solver. We report results for a variant called MIPplanet , which uses bounds derived from Planet's convex relaxation rather than simple interval arithmetic. The MIP is not treated as a simple feasibility problem but is encoded to minimize the output ˆ x n of Equation (10h), with a callback interrupting the optimization as soon as a negative value is found. Additional discussions on encodings of the MIP problem can be found in supplementary materials.

In our benchmark, we include the methods derived from our Branch and Bound analysis. Our implementation follows faithfully Algorithm 1, is implemented in Python and uses Gurobi to solve LPs. The pick \_ out strategy consists in prioritising the domain that currently has the smallest lower bound. Upper bounds are generated by randomly sampling points on the considered domain, and we use the convex approximation of Ehlers [6] to obtain lower bounds. As opposed to the approach

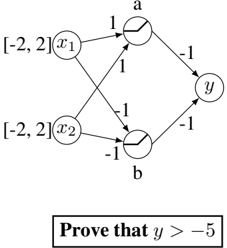

taken by Ehlers [6] of building a single approximation of the network, we rebuild the approximation and recompute all bounds for each sub-domain. This is motivated by the observation shown in Figure 1 which demonstrate the significant improvements it brings, especially for deeper networks. For split , BaB-input performs branching by splitting the input domain in half along its longest edge and BaBSB does it by splitting the input domain along the dimension improving the global lower bound the most according to the fast bounds of Kolter & Wong [13]. We also include results for the BaB-relusplit variant, where the split method is based on the phase of the non-linearities, similarly to Planet .

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Charts: Approximation Performance Comparison

### Overview

The image presents two charts comparing the performance of "ReApproximating" and "FixedApproximation" methods. The left chart (a) shows the relationship between "Relative area" and "Lower bound" for a "CollisionDetection net". The right chart (b) shows the relationship between "Relative area" and "Turns - errors" for a "deep net from ACAS". Both charts use a logarithmic scale for the x-axis ("Relative area").

### Components/Axes

* **Chart (a):**

* X-axis: "Relative area" (logarithmic scale, ranging from approximately 10^-12 to 10^0)

* Y-axis: "Lower bound" (linear scale, ranging from approximately 32.0 to 36.0)

* Legend:

* Red Line: "ReApproximating"

* Green Line: "FixedApproximation"

* **Chart (b):**

* X-axis: "Relative area" (logarithmic scale, ranging from approximately 10^-6 to 10^0)

* Y-axis: "Turns - errors" (logarithmic scale, ranging from approximately 10^-5 to 10^3)

* Legend:

* Red Line: "ReApproximating"

* Green Line: "FixedApproximation"

* **Labels:**

* (a) "Approximation on a CollisionDetection net"

* (b) "Approximation on a deep net from ACAS"

### Detailed Analysis or Content Details

**Chart (a):**

The red line ("ReApproximating") starts at approximately 35.8 at a relative area of 10^-12 and decreases gradually, reaching approximately 32.2 at a relative area of 10^0. The green line ("FixedApproximation") starts at approximately 34.5 at a relative area of 10^-12 and decreases more rapidly, reaching approximately 32.0 at a relative area of 10^0. Both lines converge towards the lower right of the chart.

**Chart (b):**

The red line ("ReApproximating") starts at approximately 10^3 at a relative area of 10^-6 and drops sharply to approximately 10^-1 at a relative area of 10^-2, then continues to decrease slowly to approximately 10^-5 at a relative area of 10^0. The green line ("FixedApproximation") starts at approximately 10^2 at a relative area of 10^-6 and drops sharply to approximately 10^-2 at a relative area of 10^-2, then continues to decrease slowly to approximately 10^-5 at a relative area of 10^0. Both lines exhibit a steep initial drop followed by a more gradual decline.

### Key Observations

* In Chart (a), "FixedApproximation" consistently provides a lower lower bound than "ReApproximating" across the entire range of relative areas.

* In Chart (b), both methods show a significant reduction in errors as the relative area increases, but "ReApproximating" initially has a much higher error rate than "FixedApproximation".

* Both charts demonstrate that increasing the relative area generally improves performance (lower bound in (a) and fewer errors in (b)).

* The logarithmic scale on the x-axis highlights the impact of small changes in relative area at lower values.

### Interpretation

These charts compare two approximation techniques ("ReApproximating" and "FixedApproximation") in the context of collision detection and ACAS (Airborne Collision Avoidance System) deep nets.

Chart (a) suggests that for the CollisionDetection net, "FixedApproximation" provides a more conservative (lower) lower bound, indicating potentially safer or more reliable results. The convergence of the lines suggests that at larger relative areas, the difference in lower bounds diminishes.

Chart (b) indicates that for the ACAS deep net, "ReApproximating" initially suffers from a much higher error rate, but both methods converge to similar error levels at larger relative areas. The steep drop in errors for both methods suggests that increasing the relative area significantly improves the accuracy of the approximation.

The difference in performance between the two methods across the two nets suggests that the optimal approximation technique may depend on the specific application and the characteristics of the underlying network. The use of a logarithmic scale for the x-axis emphasizes the importance of considering the impact of small changes in relative area, particularly at lower values. The data suggests a trade-off between initial accuracy and overall performance as relative area increases.

</details>

## 5.2 Evaluation Criteria

Figure 1: Quality of the linear approximation, depending on the size of the input domain. We plot the value of the lower bound as a function of the area on which it is computed (higher is better). The domains are centered around the global minimum and repeatedly shrunk. Rebuilding completely the linear approximation at each step allows to create tighter lower-bounds thanks to better l i and u i , as opposed to using the same constraints and only changing the bounds on input variables. This effect is even more significant on deeper networks.

For each of the data sets, we compare the different methods using the same protocol. We attempt to verify each property with a timeout of two hours, and a maximum allowed memory usage of 20GB, on a single core of a machine with an i7-5930K CPU. We measure the time taken by the solvers to either prove or disprove the property. If the property is false and the search problem is therefore satisfiable, we expect from the solver to exhibit a counterexample. If the returned input is not a valid counterexample, we don't count the property as successfully proven, even if the property is indeed satisfiable. All code and data necessary to replicate our analysis are released.

## 6 Analysis

We attempt to perform verification on three data sets of properties and report the comparison results. The dimensions of all the problems can be found in the supplementary material.

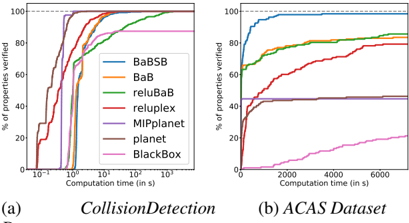

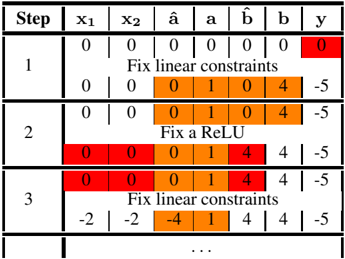

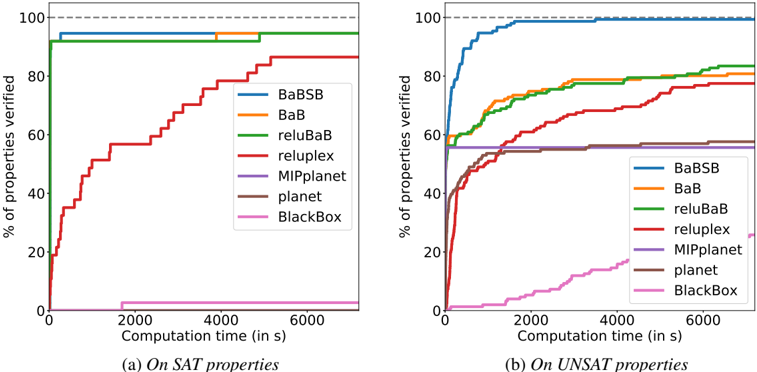

The CollisionDetection data set [6] attempts to predict whether two vehicles with parameterized trajectories are going to collide. 500 properties are extracted from problems arising from a binary search to identify the size of the region around training examples in which the prediction of the network does not change. The network used is relatively shallow but due to the process used to generate the properties, some lie extremely close between the decision boundary between SAT and UNSAT. Results presented in Figure 10 therefore highlight the accuracy of methods.

The Airborne Collision Avoidance System (ACAS) data set, as released by Katz et al. [11] is a neural network based advisory system recommending horizontal manoeuvres for an aircraft in order to avoid collisions, based on sensor measurements. Each of the five possible manoeuvres is assigned a score by the neural network and the action with the minimum score is chosen. The 188 properties to verify are based on some specification describing various scenarios. Due to the deeper network involved, this data set is useful in highlighting the scalability of the various algorithms.

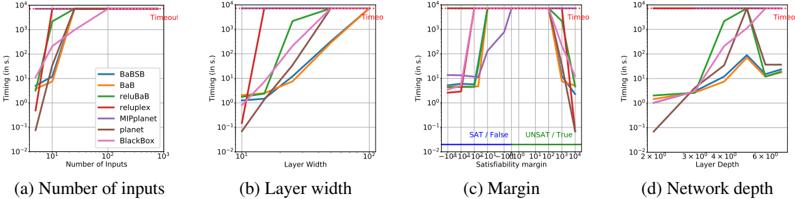

PCAMNIST is a novel data set that we introduce to get a better understanding of what factors influence the performance of various methods. It is generated in a way to give control over different architecture parameters. Details of the dataset construction are given in supplementary materials. We present plots in Figure 4 showing the evolution of runtimes depending on each of the architectural parameters of the networks.

Comparative evaluation of verification approaches In Figure 10, on the shallow networks of CollisionDetection , most solvers succeed against all properties in about 10s. In particular, the SMT inspired solvers Planet , Reluplex and the MIP solver are extremely fast. The BlackBox solver doesn't reach 100% accuracy due to producing incorrect counterexamples. Additional analysis can be found about the cause of those errors in the supplementary materials.

On the deeper networks of ACAS , in Figure 10b, no errors are observed but most methods timeout on the most challenging testcases. The best baseline is Reluplex , who reaches 79.26% success rate at the two hour timeout, while our best method, BaBSB , already achieves 98.40% with a budget of one hour. To reach Reluplex's success rate, the required runtime is two orders of magnitude smaller.

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Chart: Performance Comparison of Verification Tools

### Overview

The image presents two charts comparing the performance of several verification tools (BaBSB, BaB, reluBaB, reluplex, MIPplanet, planet, and BlackBox) on two datasets: CollisionDetection and ACAS. The charts plot the percentage of properties verified against computation time. Both charts use a logarithmic scale for the x-axis (Computation time).

### Components/Axes

* **X-axis (Both Charts):** Computation time in seconds (logarithmic scale). The CollisionDetection chart's x-axis ranges from approximately 10<sup>-6</sup> to 10<sup>2</sup> seconds. The ACAS Dataset chart's x-axis ranges from 0 to 6000 seconds.

* **Y-axis (Both Charts):** Percentage of properties verified, ranging from 0% to 100%.

* **Legend (Top-Left of each chart):**

* BaBSB (Blue)

* BaB (Orange)

* reluBaB (Green)

* reluplex (Red)

* MIPplanet (Purple)

* planet (Brown)

* BlackBox (Pink)

* **Chart Titles:**

* (a) CollisionDetection

* (b) ACAS Dataset

### Detailed Analysis or Content Details

**Chart (a) - CollisionDetection:**

* **BaBSB (Blue):** Starts at approximately 0% at 10<sup>-6</sup> seconds, rapidly increases to approximately 80% by 10<sup>-2</sup> seconds, and plateaus around 90-100% from 10<sup>-1</sup> seconds onwards.

* **BaB (Orange):** Starts at approximately 0% at 10<sup>-6</sup> seconds, increases to approximately 70% by 10<sup>-2</sup> seconds, and plateaus around 90-100% from 10<sup>-1</sup> seconds onwards.

* **reluBaB (Green):** Starts at approximately 0% at 10<sup>-6</sup> seconds, increases more slowly than BaBSB and BaB, reaching approximately 60% at 10<sup>-1</sup> seconds, and plateaus around 80-90%.

* **reluplex (Red):** Starts at approximately 0% at 10<sup>-6</sup> seconds, increases slowly, reaching approximately 40% at 1 second, and plateaus around 60-70%.

* **MIPplanet (Purple):** Starts at approximately 0% at 10<sup>-6</sup> seconds, increases slowly, reaching approximately 30% at 1 second, and plateaus around 50-60%.

* **planet (Brown):** Starts at approximately 0% at 10<sup>-6</sup> seconds, increases slowly, reaching approximately 20% at 1 second, and plateaus around 40-50%.

* **BlackBox (Pink):** Starts at approximately 0% at 10<sup>-6</sup> seconds, increases very slowly, reaching approximately 10% at 1 second, and plateaus around 20-30%.

**Chart (b) - ACAS Dataset:**

* **BaBSB (Blue):** Starts at approximately 0% at 0 seconds, rapidly increases to approximately 80% by 2000 seconds, and reaches 100% by 4000 seconds.

* **BaB (Orange):** Starts at approximately 0% at 0 seconds, increases to approximately 60% by 2000 seconds, and reaches 80% by 4000 seconds.

* **reluBaB (Green):** Starts at approximately 0% at 0 seconds, increases to approximately 70% by 4000 seconds.

* **reluplex (Red):** Starts at approximately 0% at 0 seconds, increases slowly, reaching approximately 40% by 6000 seconds.

* **MIPplanet (Purple):** Starts at approximately 0% at 0 seconds, increases slowly, reaching approximately 20% by 6000 seconds.

* **planet (Brown):** Starts at approximately 0% at 0 seconds, increases slowly, reaching approximately 20% by 6000 seconds.

* **BlackBox (Pink):** Starts at approximately 0% at 0 seconds, increases very slowly, reaching approximately 10% by 6000 seconds.

### Key Observations

* **CollisionDetection:** BaBSB and BaB consistently outperform other methods, achieving high verification rates quickly. BlackBox performs the worst.

* **ACAS Dataset:** BaBSB again demonstrates the best performance, reaching 100% verification. The performance gap between the tools is less pronounced than in the CollisionDetection dataset, but BaBSB, BaB, and reluBaB still outperform the others.

* The logarithmic scale on the x-axis of the CollisionDetection chart highlights the rapid initial gains in verification percentage for the better-performing tools.

### Interpretation

These charts compare the effectiveness of different verification tools in proving properties of systems, specifically in the context of collision detection and ACAS (Airborne Collision Avoidance System) datasets. The percentage of properties verified represents the tool's ability to guarantee certain safety or correctness characteristics.

The consistent superior performance of BaBSB and BaB across both datasets suggests they are more efficient and scalable for these types of verification tasks. The slower performance of reluplex, MIPplanet, planet, and BlackBox indicates they may struggle with the complexity of these problems or require significantly more computation time to achieve comparable results.

The difference in performance between the datasets could be due to the inherent complexity of the properties being verified or the characteristics of the datasets themselves. The ACAS dataset appears to require more computation time overall to achieve high verification rates, potentially due to the more complex nature of the ACAS system.

The charts provide valuable insights for selecting the appropriate verification tool for a given application, highlighting the trade-offs between performance, scalability, and computational cost.

</details>

Dataset

Table 1: Average time to explore a node for each method.

| Method | Average time per Node |

|-------------------|-------------------------|

| BaBSB BaB reluBaB | 1.81s 2.11s 1.69s |

| reluplex | 0.30s |

| MIPplanet | |

| | 0.017s |

| planet | 1.5e-3s |

Figure 2: Proportion of properties verifiable for varying time budgets depending on the methods employed. A higher curve means that for the same time budget, more properties will be solvable. All methods solve CollisionDetection quite quickly except reluBaB , which is much slower and BlackBox who produces several incorrect counterexamples.

<details>

<summary>Image 3 Details</summary>

### Visual Description

\n

## Chart: Property Verification vs. Nodes Visited

### Overview

The image presents a line chart illustrating the percentage of properties verified as a function of the number of nodes visited. The chart compares the performance of several different approaches or algorithms, each represented by a distinct colored line. A dashed horizontal line at 100% serves as a reference point for complete verification.

### Components/Axes

* **X-axis:** "Number of Nodes visited" - Logarithmic scale from 10⁰ to 10⁶.

* **Y-axis:** "% of properties verified" - Linear scale from 0 to 100.

* **Lines:** Five distinct colored lines representing different methods. The colors are (from top to bottom): Blue, Red, Orange, Green, and Purple.

* **Horizontal dashed line:** A grey dashed line at 100% representing complete property verification.

* **Legend:** No explicit legend is present in the image. The lines must be identified by their color and relative position.

### Detailed Analysis

The chart displays the following trends and approximate data points:

* **Blue Line:** This line shows the fastest verification rate. It starts at approximately 5% at 10⁰ nodes visited, quickly rises to approximately 80% at 10² nodes visited, reaches approximately 95% at 10³ nodes visited, and plateaus at nearly 100% from 10⁴ nodes visited onwards.

* **Red Line:** This line begins at approximately 0% at 10⁰ nodes visited, increases to approximately 40% at 10² nodes visited, reaches approximately 85% at 10⁵ nodes visited, and plateaus around 85-90% for higher node counts.

* **Orange Line:** This line starts at approximately 10% at 10⁰ nodes visited, rises to approximately 60% at 10² nodes visited, reaches approximately 80% at 10⁴ nodes visited, and plateaus around 80-85% for higher node counts.

* **Green Line:** This line shows a slower initial verification rate. It starts at approximately 0% at 10⁰ nodes visited, increases to approximately 40% at 10² nodes visited, reaches approximately 65% at 10³ nodes visited, and plateaus around 65-70% for higher node counts.

* **Purple Line:** This line exhibits the slowest verification rate. It starts at approximately 0% at 10⁰ nodes visited, increases to approximately 35% at 10³ nodes visited, reaches approximately 50% at 10⁴ nodes visited, and plateaus around 50% for higher node counts.

### Key Observations

* The blue line consistently outperforms all other methods, achieving near-complete verification with a relatively small number of nodes visited.

* The purple line demonstrates the poorest performance, requiring a significantly larger number of nodes visited to achieve even moderate verification rates.

* The red and orange lines show intermediate performance, with the red line eventually reaching a higher verification rate than the orange line.

* All lines exhibit a diminishing return in verification rate as the number of nodes visited increases.

### Interpretation

This chart likely compares the efficiency of different algorithms or strategies for verifying properties within a graph or knowledge base. The "Number of Nodes Visited" represents the computational effort required, while the "% of Properties Verified" represents the effectiveness of the approach.

The superior performance of the blue line suggests that it is a highly efficient algorithm for property verification. The significant difference in performance between the lines indicates that the choice of algorithm can have a substantial impact on the computational cost of verifying properties.

The plateauing of all lines suggests that there is a limit to the verification rate achievable with any given approach. This could be due to inherent limitations in the data or the complexity of the properties being verified. The dashed line at 100% serves as an ideal, but may not be practically achievable.

The chart highlights the trade-off between computational effort and verification accuracy. The optimal approach will depend on the specific application and the relative importance of these two factors. The data suggests that the blue line offers the best balance, achieving high verification rates with relatively low computational cost.

</details>

Number of Nodes visited

(a) Properties solved for a given number of nodes to explore (log scale).

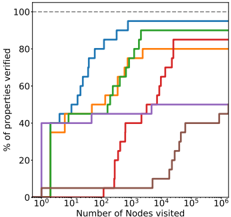

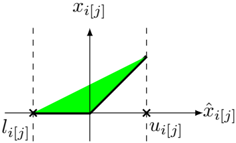

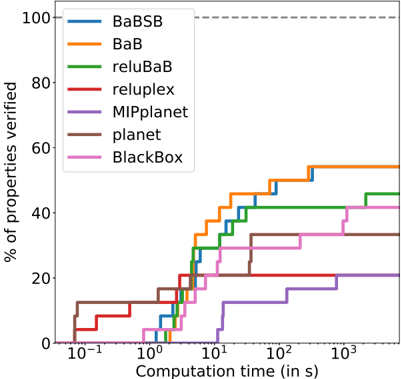

Figure 3: The trade-off taken by the various methods are different. Figure 3a shows how many subdomains needs to be explored before verifying properties while Table 1 shows the average time cost of exploring each subdomain. Our methods have a higher cost per node but they require significantly less branching, thanks to better bounding. Note also that between BaBSB and BaB , the smart branching reduces by an order of magnitude the number of nodes to visit.

Impact of each improvement To identify which changes allow our method to have good performance, we perform an ablation study and study the impact of removing some components of our methods. The only difference between BaBSB and BaB is the smart branching, which represents a significant part of the performance gap.

Branching over the inputs rather than over the activations does not contribute much, as shown by the small difference between BaB and reluBaB . Note however that we are able to use the fast methods of Kolter & Wong [13] for the smart branching because branching over the inputs makes the bounding problem similar to the one solved in robust optimization. Even if it doesn't improve performance by itself, the new type of split enables the use of smart branching.

The rest of the performance gap can be attributed to using a better bounds: reluBaB significantly outperforms planet while using the same branching strategy and the same convex relaxations. The improvement comes from the benefits of rebuilding the approximation at each step shown in Figure 1.

Figure 3 presents some additional analysis on a 20-property subset of the ACAS dataset, showing how the methods used impact the need for branching. Smart branching and the use of better lower bounds reduce heavily the number of subdomains to explore.

Timeout

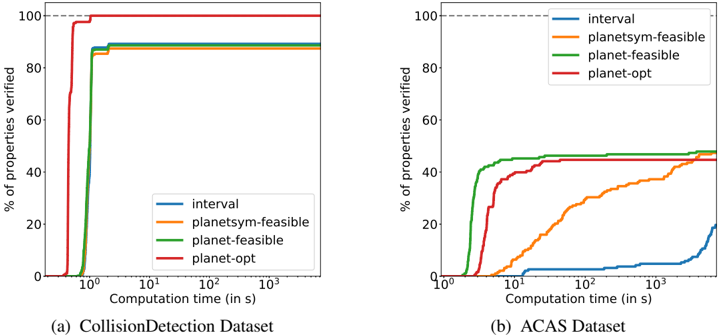

Figure 4: Impact of the various parameters over the runtimes of the different solvers. The base network has 10 inputs and 4 layers of 25 hidden units, and the property to prove is True with a margin of 1000. Each of the plot correspond to a variation of one of this parameters.

<details>

<summary>Image 4 Details</summary>

### Visual Description

\n

## Charts: Performance Timing vs. Various Parameters

### Overview

The image presents four charts, each depicting the timing (in seconds) of different solvers (BaBSB, BaB, reluBaB, relupiex, MIPplanet, planet, BlackBox) as a function of varying parameters: (a) Number of Inputs, (b) Layer Width, (c) Satisfiability Margin, and (d) Network Depth. The y-axis of all charts is on a logarithmic scale. The charts appear to be evaluating the scalability and performance of these solvers under different problem characteristics.

### Components/Axes

Each chart shares the following components:

* **X-axis:** Represents the independent variable being tested (Number of Inputs, Layer Width, Satisfiability Margin, Network Depth).

* **Y-axis:** Labeled "Timing (in s)" and is a logarithmic scale ranging from 10<sup>-2</sup> to 10<sup>1</sup>.

* **Legend:** Located in the top-left corner of each chart, identifying the different solvers by color. The solvers are:

* BaBSB (Blue)

* BaB (Orange)

* reluBaB (Green)

* relupiex (Red)

* MIPplanet (Purple)

* planet (Pink)

* BlackBox (Brown)

* **Title:** Each chart has a title indicating the parameter being varied.

* **Annotations:** The charts include annotations like "Timeout" and "Time" indicating where solvers exceeded a time limit.

### Detailed Analysis or Content Details

**Chart (a): Number of Inputs**

* **X-axis:** Number of Inputs, ranging from approximately 10<sup>0</sup> to 10<sup>4</sup>.

* **Trends:**

* BaBSB (Blue): Starts at approximately 0.01s and increases rapidly, reaching the timeout limit (10<sup>1</sup> s) around 10<sup>3</sup> inputs.

* BaB (Orange): Starts at approximately 0.01s and increases rapidly, reaching the timeout limit around 10<sup>3</sup> inputs.

* reluBaB (Green): Starts at approximately 0.01s and increases rapidly, reaching the timeout limit around 10<sup>3</sup> inputs.

* relupiex (Red): Starts at approximately 0.01s and increases rapidly, reaching the timeout limit around 10<sup>3</sup> inputs.

* MIPplanet (Purple): Starts at approximately 0.01s and increases rapidly, reaching the timeout limit around 10<sup>3</sup> inputs.

* planet (Pink): Starts at approximately 0.01s and increases rapidly, reaching the timeout limit around 10<sup>3</sup> inputs.

* BlackBox (Brown): Starts at approximately 0.01s and increases rapidly, reaching the timeout limit around 10<sup>3</sup> inputs.

**Chart (b): Layer Width**

* **X-axis:** Layer Width, ranging from approximately 10<sup>-2</sup> to 10<sup>1</sup>.

* **Trends:**

* BaBSB (Blue): Increases rapidly from approximately 0.01s to the timeout limit around a layer width of 10<sup>1</sup>.

* BaB (Orange): Increases rapidly from approximately 0.01s to the timeout limit around a layer width of 10<sup>1</sup>.

* reluBaB (Green): Increases rapidly from approximately 0.01s to the timeout limit around a layer width of 10<sup>1</sup>.

* relupiex (Red): Increases rapidly from approximately 0.01s to the timeout limit around a layer width of 10<sup>1</sup>.

* MIPplanet (Purple): Increases rapidly from approximately 0.01s to the timeout limit around a layer width of 10<sup>1</sup>.

* planet (Pink): Increases rapidly from approximately 0.01s to the timeout limit around a layer width of 10<sup>1</sup>.

* BlackBox (Brown): Increases rapidly from approximately 0.01s to the timeout limit around a layer width of 10<sup>1</sup>.

**Chart (c): Margin**

* **X-axis:** Satisfiability Margin, ranging from approximately 10<sup>-1</sup> to 10<sup>1</sup>.

* **Trends:**

* BaBSB (Blue): Remains relatively constant at approximately 0.01s until the margin reaches 10<sup>0</sup>, then increases to approximately 0.1s.

* BaB (Orange): Remains relatively constant at approximately 0.01s until the margin reaches 10<sup>0</sup>, then increases to approximately 0.1s.

* reluBaB (Green): Remains relatively constant at approximately 0.01s until the margin reaches 10<sup>0</sup>, then increases to approximately 0.1s.

* relupiex (Red): Remains relatively constant at approximately 0.01s until the margin reaches 10<sup>0</sup>, then increases to approximately 0.1s.

* MIPplanet (Purple): Remains relatively constant at approximately 0.01s until the margin reaches 10<sup>0</sup>, then increases to approximately 0.1s.

* planet (Pink): Remains relatively constant at approximately 0.01s until the margin reaches 10<sup>0</sup>, then increases to approximately 0.1s.

* BlackBox (Brown): Remains relatively constant at approximately 0.01s until the margin reaches 10<sup>0</sup>, then increases to approximately 0.1s.

**Chart (d): Network Depth**

* **X-axis:** Network Depth, ranging from approximately 2 x 10<sup>3</sup> to 6 x 10<sup>3</sup>.

* **Trends:**

* BaBSB (Blue): Increases from approximately 0.01s to approximately 0.1s, then fluctuates.

* BaB (Orange): Increases from approximately 0.01s to approximately 0.1s, then fluctuates.

* reluBaB (Green): Increases from approximately 0.01s to approximately 1s.

* relupiex (Red): Increases from approximately 0.01s to approximately 1s.

* MIPplanet (Purple): Increases from approximately 0.01s to approximately 0.1s, then fluctuates.

* planet (Pink): Increases from approximately 0.01s to approximately 1s.

* BlackBox (Brown): Increases from approximately 0.01s to approximately 0.1s, then fluctuates.

### Key Observations

* All solvers exhibit a rapid increase in timing as the Number of Inputs and Layer Width increase, quickly reaching the timeout limit.

* The Satisfiability Margin has a relatively small impact on timing until it reaches a certain threshold, after which timing increases.

* Network Depth shows more varied behavior, with some solvers (reluBaB, planet) exhibiting a significant increase in timing, while others (BaBSB, BaB, MIPplanet) show more moderate fluctuations.

* The logarithmic y-axis emphasizes the exponential growth in timing for many solvers.

### Interpretation

The data suggests that the performance of these solvers is highly sensitive to the size and complexity of the problem, as measured by the Number of Inputs and Layer Width. The rapid increase in timing indicates that these problems quickly become intractable for these solvers as these parameters grow. The Satisfiability Margin appears to have a threshold effect, where timing remains relatively constant until a certain margin is reached, after which performance degrades. The Network Depth results suggest that some solvers are more susceptible to the effects of increased network complexity than others.

The consistent "Timeout" annotations across most solvers for larger values of Number of Inputs and Layer Width indicate a fundamental limitation in the scalability of these approaches. The differences in timing between solvers, even before reaching the timeout limit, suggest that some solvers are more efficient than others for certain problem characteristics. The varying responses to Network Depth suggest that the choice of solver should be tailored to the specific structure of the problem being solved. The logarithmic scale is crucial for understanding the magnitude of the performance differences, highlighting the exponential growth in computation time.

</details>

In the graphs of Figure 4, the trend for all the methods are similar, which seems to indicate that hard properties are intrinsically hard and not just hard for a specific solver. Figure 4a shows an expected trend: the largest the number of inputs, the harder the problem is. Similarly, Figure 4b shows unsurprisingly that wider networks require more time to solve, which can be explained by the fact that they have more non-linearities. The impact of the margin, as shown in Figure 4c is

also clear. Properties that are True or False with large satisfiability margin are easy to prove, while properties that have small satisfiability margins are significantly harder.

## 7 Conclusion

The improvement of formal verification of Neural Networks represents an important challenge to be tackled before learned models can be used in safety critical applications. By providing both a unified framework to reason about methods and a set of empirical benchmarks to measure performance with, we hope to contribute to progress in this direction. Our analysis of published algorithms through the lens of Branch and Bound optimization has already resulted in significant improvements in runtime on our benchmarks. Its continued analysis should reveal even more efficient algorithms in the future.

## References

- [1] Barrett, Clark, Nieuwenhuis, Robert, Oliveras, Albert, and Tinelli, Cesare. Splitting on demand in sat modulo theories. International Conference on Logic for Programming Artificial Intelligence and Reasoning , 2006.

- [2] Bastani, Osbert, Ioannou, Yani, Lampropoulos, Leonidas, Vytiniotis, Dimitrios, Nori, Aditya, and Criminisi, Antonio. Measuring neural net robustness with constraints. NIPS , 2016.

- [3] Buxton, John N and Randell, Brian. Software Engineering Techniques: Report on a Conference Sponsored by the NATO Science Committee . NATO Science Committee, 1970.

- [4] Cheng, Chih-Hong, Nührenberg, Georg, and Ruess, Harald. Maximum resilience of artificial neural networks. Automated Technology for Verification and Analysis , 2017.

- [5] Cheng, Chih-Hong, Nührenberg, Georg, and Ruess, Harald. Verification of binarized neural networks. arXiv:1710.03107 , 2017.

- [6] Ehlers, Ruediger. Formal verification of piece-wise linear feed-forward neural networks. Automated Technology for Verification and Analysis , 2017.

- [7] Ehlers, Ruediger. Planet. https://github.com/progirep/planet , 2017.

- [8] Hein, Matthias and Andriushchenko, Maksym. Formal guarantees on the robustness of a classifier against adversarial manipulation. NIPS , 2017.

- [9] Hickey, Timothy, Ju, Qun, and Van Emden, Maarten H. Interval arithmetic: From principles to implementation. Journal of the ACM (JACM) , 2001.

- [10] Huang, Xiaowei, Kwiatkowska, Marta, Wang, Sen, and Wu, Min. Safety verification of deep neural networks. International Conference on Computer Aided Verification , 2017.

- [11] Katz, Guy, Barrett, Clark, Dill, David, Julian, Kyle, and Kochenderfer, Mykel. Reluplex: An efficient smt solver for verifying deep neural networks. CAV , 2017.

- [12] Katz, Guy, Barrett, Clark, Dill, David, Julian, Kyle, and Kochenderfer, Mykel. Reluplex. https://github.com/guykatzz/ReluplexCav2017 , 2017.

- [13] Kolter, Zico and Wong, Eric. Provable defenses against adversarial examples via the convex outer adversarial polytope. arXiv:1711.00851 , 2017.

- [14] Lomuscio, Alessio and Maganti, Lalit. An approach to reachability analysis for feed-forward relu neural networks. arXiv:1706.07351 , 2017.

- [15] Marques-Silva, João P and Sakallah, Karem A. Grasp: A search algorithm for propositional satisfiability. IEEE Transactions on Computers , 1999.

- [16] Narodytska, Nina, Kasiviswanathan, Shiva Prasad, Ryzhyk, Leonid, Sagiv, Mooly, and Walsh, Toby. Verifying properties of binarized deep neural networks. arXiv:1709.06662 , 2017.

- [17] Pulina, Luca and Tacchella, Armando. An abstraction-refinement approach to verification of artificial neural networks. CAV , 2010.

- [18] Sherali, Hanif D and Adams, Warren P. A hierarchy of relaxations and convex hull characterizations for mixed-integer zero-one programming problems. Discrete Applied Mathematics , 1994.

- [19] Tjeng, Vincent and Tedrake, Russ. Verifying neural networks with mixed integer programming. arXiv:1711.07356 , 2017.

- [20] Xiang, Weiming, Tran, Hoang-Dung, and Johnson, Taylor T. Output reachable set estimation and verification for multi-layer neural networks. arXiv:1708.03322 , 2017.

- [21] Zakrzewski, Radosiaw R. Verification of a trained neural network accuracy. IJCNN , 2001.

## A Canonical Form of the verification problem

If the property is a simple inequality P ( ˆ x n ) /defines c T ˆ x n ≥ b , it is sufficient to add to the network a final fully connected layer with one output, with weight of c and a bias of -b . If the global minimum of this network is positive, it indicates that for all ˆ x n the original network can output, we have c T ˆ x n -b ≥ 0 = ⇒ c T ˆ x n ≥ b , and as a consequence the property is True. On the other hand, if the global minimum is negative, then the minimizer provides a counter-example.

Clauses specified using OR (denoted by ∨ ) can be encoded by using a MaxPooling unit. If the property is P ( ˆ x n ) /defines ∨ i [ c T i ˆ x n ≥ b i ] , this is equivalent to max i ( c T i ˆ x n -b i ) ≥ 0 .

Clauses specified using AND (denoted by ∧ ) can be encoded similarly: the property P (ˆ x n ) = ∧ i [ c T i ˆ x n ≥ b i ] is equivalent to min i ( c T i ˆ x n -b i ) ≥ 0 ⇐⇒ -( max i ( -c T i ˆ x n + b i )) ≥ 0

## B Toy Problem example

We have specified the problem of formal verification of neural networks as follows: given a network that implements a function ˆ x n = f ( x 0 ) , a bounded input domain C and a property P , we want to prove that

$$x _ { 0 } \in \mathcal { C } , \quad \hat { x } _ { n } = f ( x _ { 0 } ) \implies P ( \hat { x } _ { n } ) .$$

A toy-example of the Neural Network verification problem is given in Figure 5. On the domain C = [ -2; 2] × [ -2; 2] , we want to prove that the output y of the one hidden-layer network always satisfy the property P ( y ) /defines [ y > -5] . We will use this as a running example to explain the methods used for comparison in our experiments.

a

Figure 5: Example Neural Network. We attempt to prove the property that the network output is always greater than -5

<details>

<summary>Image 5 Details</summary>

### Visual Description

\n

## Diagram: Network/Graph with Inequality Proof

### Overview

The image depicts a directed graph with numerical labels on edges and nodes. The graph has two input nodes (x1 and x2), two intermediate nodes (a and b), and one output node (y). Each input node has a range constraint associated with it. Below the graph is a text instruction to prove an inequality involving 'y'.

### Components/Axes

* **Nodes:** x1, x2, a, b, y

* **Edges:**

* x1 -> a: labeled "1"

* x2 -> a: labeled "1"

* x1 -> b: labeled "-1"

* x2 -> b: labeled "-1"

* a -> y: labeled "-1"

* b -> y: labeled "-1"

* **Node Constraints:**

* x1: [-2, 2]

* x2: [-2, 2]

* **Proof Statement:** "Prove that y > -5"

### Detailed Analysis or Content Details

The graph represents a network where the value of 'y' is determined by the values of 'x1' and 'x2' and the weights on the edges.

* **x1 and x2:** Each is constrained to be between -2 and 2, inclusive.

* **a:** The value of 'a' is determined by x1 and x2. Since x1 and x2 both contribute positively to 'a' with a weight of 1, the maximum value of 'a' is 2 + 2 = 4, and the minimum value is -2 + -2 = -4.

* **b:** The value of 'b' is determined by x1 and x2, but with negative weights (-1). The maximum value of 'b' is -(-2) + -(-2) = 2 + 2 = 4, and the minimum value is -2 + -2 = -4.

* **y:** The value of 'y' is determined by 'a' and 'b', both with negative weights (-1). To minimize 'y', we need to maximize 'a' and 'b'. Therefore, the minimum value of 'y' is -4 + -4 = -8. To maximize 'y', we need to minimize 'a' and 'b'. Therefore, the maximum value of 'y' is 4 + 4 = 8.

### Key Observations

* The graph is a simple network with a clear flow of information from inputs to output.

* The constraints on x1 and x2 directly influence the possible range of values for y.

* The negative edge weights from 'a' and 'b' to 'y' suggest a potential for 'y' to be negative.

* The proof statement asks to demonstrate that 'y' is greater than -5, which seems plausible given the constraints.

### Interpretation

The diagram represents a mathematical relationship between variables. The goal is to prove a lower bound for the output variable 'y' based on the constraints of the input variables 'x1' and 'x2' and the weights of the connections. The network structure and edge weights define a function that maps the input range to the output range. The proof statement suggests that the function is such that even under the most unfavorable conditions (minimum values of x1 and x2), 'y' remains greater than -5.

To prove y > -5:

y = -a - b

a = x1 + x2

b = -x1 - x2

y = -(x1 + x2) - (-x1 - x2) = -x1 - x2 + x1 + x2 = 0

This is incorrect. Let's re-examine the graph.

y = -a - b

a = x1 + x2

b = -x1 - x2

y = -(x1 + x2) - (-x1 - x2) = -x1 - x2 + x1 + x2 = 0

This is still incorrect. Let's re-examine the graph.

y = -a - b

a = x1 + x2

b = -x1 - x2

y = -(x1 + x2) - (-x1 - x2) = -x1 - x2 + x1 + x2 = 0

The error is in the edge weights. The edge from x1 to b is -1, and the edge from x2 to b is -1. Therefore:

b = -x1 - x2

y = -a - b = -(x1 + x2) - (-x1 - x2) = -x1 - x2 + x1 + x2 = 0

The graph is incorrect. The edge from x2 to a is labeled 1, and the edge from x2 to b is labeled -1.

y = -a - b

a = x1 + x2

b = -x1 - x2

y = -(x1 + x2) - (-x1 - x2) = -x1 - x2 + x1 + x2 = 0

The minimum value of y is when x1 = -2 and x2 = -2.

y = -(-2 + -2) - (-(-2) + -(-2)) = -(-4) - (2 + 2) = 4 - 4 = 0

Since y = 0, y > -5. The statement is true.

</details>

## B.1 Problem formulation

For the network of Figure 5, the variables would be { x 1 , x 2 , a in , a out , b in , b out , y } and the set of constraints would be:

$$- 2 \leq x _ { 1 } \leq 2 & & - 2 \leq x _ { 2 } \leq 2 & & ( 7 a )$$

$$\hat { a } = x _ { 1 } + x _ { 2 } \quad & \hat { b } = - x _ { 1 } - x _ { 2 }$$

$$y = - a - b & & ( 7 \text {b} )$$

$$a = \max ( \hat { a } , 0 ) & & b = \max ( \hat { b } , 0 ) & & ( 7 c )$$

$$y \leq - 5 & & ( 7 d )$$

Here, ˆ a is the input value to hidden unit, while a is the value after the ReLU. Any point satisfying all the above constraints would be a counterexample to the property, as it would imply that it is possible to drive the output to -5 or less.

## B.2 MIP formulation

In our example, the non-linearities of equation (7c) would be replaced by

$$\begin{matrix} a \geq 0 & a \geq \hat { a } \\ a \leq \hat { a } - l _ { a } ( 1 - \delta _ { a } ) & a \leq u _ { a } \delta _ { a } \\ \delta _ { a } \in \{ 0 , 1 \} \end{matrix}$$

Figure 6: Evolution of the Reluplex algorithm. Red cells corresponds to value violating Linear constraints, and orange cells corresponds to value violating ReLU constraints. Resolution of violation of linear constraints are prioritised.

<details>

<summary>Image 6 Details</summary>

### Visual Description

\n

## Table: Iterative Optimization Steps

### Overview

The image presents a table detailing iterative steps in an optimization process, likely related to neural network training or a similar machine learning context. The table tracks the values of variables (x1, x2, â, a, b̂, b) and the output (y) across each step. Color-coding highlights changes in variable values.

### Components/Axes

The table has the following columns:

* **Step:** Integer representing the iteration number (1, 2, 3, and ellipsis indicating continuation).

* **x1:** Numerical value.

* **x2:** Numerical value.

* **â:** Numerical value.

* **a:** Numerical value.

* **b̂:** Numerical value.

* **b:** Numerical value.

* **y:** Numerical value representing the output.

Each row represents a step in the iterative process. Text annotations describe the action taken in each step ("Fix linear constraints", "Fix a ReLU").

### Detailed Analysis or Content Details

Here's a breakdown of the data in each step:

**Step 1:**

* x1 = 0

* x2 = 0

* â = 0

* a = 0

* b̂ = 0

* b = 0

* y = 0 (highlighted in red)

* Action: "Fix linear constraints"

* x1 = 0

* x2 = 0

* â = 0

* a = 1

* b̂ = 0

* b = 4

* y = -5

**Step 2:**

* x1 = 0

* x2 = 0

* â = 0

* a = 1

* b̂ = 0

* b = 4

* y = -5

* Action: "Fix a ReLU"

* x1 = 0

* x2 = 0

* â = 0

* a = 1

* b̂ = 4

* b = 4

* y = -5

**Step 3:**

* x1 = 0

* x2 = 0

* â = 0

* a = 1

* b̂ = 4

* b = 4

* y = -5

* Action: "Fix linear constraints"

* x1 = -2

* x2 = -2

* â = -4

* a = 1

* b̂ = 4

* b = 4

* y = -5

The ellipsis (...) indicates that the process continues beyond step 3.

### Key Observations

* The values of x1, x2, and â become negative in step 3, while a, b̂, and b remain non-negative.

* The output 'y' remains constant at -5 throughout the observed steps.

* The color-coding clearly shows which variables are being modified in each step. Red indicates a change from the previous value within that step. Orange indicates a value that has been fixed in that step.

* The actions alternate between "Fix linear constraints" and "Fix a ReLU", suggesting an iterative optimization algorithm that alternates between enforcing linear constraints and applying a ReLU activation function.

### Interpretation

This table likely represents a simplified illustration of an optimization algorithm used in training a neural network with ReLU activation functions. The algorithm appears to be attempting to minimize some loss function (implied by the 'y' value) by iteratively adjusting the values of the variables x1, x2, â, a, b̂, and b.

The alternating steps of "Fix linear constraints" and "Fix a ReLU" suggest a coordinate descent or similar optimization strategy. The ReLU function introduces non-linearity, and the algorithm is likely trying to find values for the variables that satisfy both the linear constraints and the ReLU activation function while minimizing the output 'y'.

The constant value of 'y' (-5) throughout the observed steps could indicate that the algorithm is still in the early stages of optimization and has not yet converged to a minimum, or that the loss function is flat in the current region of the parameter space. The negative values appearing in step 3 suggest the algorithm is exploring regions of the parameter space where some variables may need to be negative to achieve a better solution. The table provides a visual representation of how the algorithm iteratively refines the variable values to approach an optimal solution.

</details>

where l a is a lower bound of the value that ˆ a can take (such as -4) and u a is an upper bound (such as 4). The binary variable δ a indicates which phase the ReLU is in: if δ a = 0 , the ReLU is blocked and a out = 0 , else the ReLU is passing and a out = a in . The problem remains difficult due to the integrality constraint on δ a .

## B.3 Running Reluplex

Table 6 shows the initial steps of a run of the Reluplex algorithm on the example of Figure 5. Starting from an initial assignment, it attempts to fix some violated constraints at each step. It prioritises fixing linear constraints ((7a), (7b) and (7d) in our illustrative example) using a simplex algorithm, even if it leads to violated ReLU constraints (7c). This can be seen in step 1 and 3 of the process.

If no solution to the problem containing only linear constraints exists, this shows that the counterexample search is unsatisfiable. Otherwise, all linear constraints are fixed and Reluplex attempts to fix one violated ReLU at a time, such as in step 2 of Table 6 (fixing the ReLU b ), potentially leading to newly violated linear constraints. In the case where no violated ReLU exists, this means that a satisfiable assignment has been found and that the search can be interrupted.

This process is not guaranteed to converge, so to guarantee progress, non-linearities getting fixed too often are split into two cases. This generates two new sub-problems, each involving an additional linear constraint instead of the linear one. The first one solves the problem where ˆ a ≤ 0 and a = 0 , the second one where ˆ a ≥ 0 and a = ˆ a . In the worst setting, the problem will be split completely over all possible combinations of activation patterns, at which point the sub-problems are simple LPs.

## C Planet approximation

The feasible set of the Mixed Integer Programming formulation is given by the following set of equations. We assume that all l i are negative and u i are positive. In case this isn't true, it is possible to just update the bounds such that they are.

$$l _ { 0 } \leq x _ { 0 } \leq u _ { 0 } & & ( 9 a )$$

$$\hat { x } _ { i + 1 } = W _ { i + i } x _ { i } + b _ { i + i } \quad \forall i \in \{ 0 , \, n - 1 \}$$

$$x _ { i } \geq 0 \quad \forall i \in \{ 1 , \, n - 1 \} \quad ( 9 c )$$

$$x _ { i } \geq \hat { x } _ { i } & & \forall i \in \{ 1 , \, n - 1 \} & & ( 9 d )$$

$$x _ { i } \leq \hat { x } _ { i } - l _ { i } \cdot ( 1 - \delta _ { i } ) & & \forall i \in \{ 1 , \, n - 1 \} & & ( 9 e )$$

$$x _ { i } \leq u _ { i } \cdot \delta _ { i } & & \forall i \in \{ 1 , \, n - 1 \} & ( 9 f )$$

$$\delta _ { i } \in \{ 0 , 1 \} ^ { h _ { i } } & & \forall i \in \{ 1 , \, n - 1 \} & & ( 9 g )$$

$$\hat { x } _ { n } \leq 0 & & ( 9 h )$$

The level 0 of the Sherali-Adams hierarchy of relaxation Sherali & Adams [18] doesn't include any additional constraints. Indeed, polynomials of degree 0 are simply constants and their multiplication with existing constraints followed by linearization therefore doesn't add any new constraints. As a

can be replaced by

$$y \geq x _ { i } & & \forall i \in \{ 1 \dots k \} & & ( 1 5 a )$$

$$y \leq x _ { i } + ( u _ { x _ { 1 \colon k } } - l _ { x _ { i } } ) ( 1 - \delta _ { i } ) & & \forall i \in \{ 1 \dots k \} & & ( 1 5 b )$$

$$\sum _ { i \in \{ 1 , \dots k \} } \delta _ { i } = 1 & & ( 1 5 c )$$

$$\delta _ { i } \in \{ 0 , 1 \} & & \forall i \in \{ 1 \dots k \} & & ( 1 5 d )$$

where u x 1: k is an upper-bound on all x i for i ∈ { 1 . . . k } and l x i is a lower bound on x i .

Figure 7: Feasible domain corresponding to the Planet relaxation for a single ReLU.

<details>

<summary>Image 7 Details</summary>

### Visual Description

\n

## Diagram: Representation of a Region in a 2D Space

### Overview

The image depicts a two-dimensional Cartesian coordinate system with a shaded triangular region. The axes are labeled, and points within the region are marked with specific notations. The diagram appears to represent a constraint or feasible region defined by the axes and the points.

### Components/Axes

* **Horizontal Axis:** Labeled as `x̂ᵢ[j]` (x-hat i j).

* **Vertical Axis:** Labeled as `xᵢ[j]` (x i j).

* **Points:**