## Non-monotonic Reasoning in Deductive Argumentation

Anthony Hunter

Department of Computer Science, University College London, London, UK

Abstract. Argumentation is a non-monotonic process. This reflects the fact that argumentation involves uncertain information, and so new information can cause a change in the conclusions drawn. However, the base logic does not need to be non-monotonic. Indeed, most proposals for structured argumentation use a monotonic base logic (e.g. some form of modus ponens with a rule-based language, or classical logic). Nonetheless, there are issues in capturing defeasible reasoning in argumentation including choice of base logic and modelling of defeasible knowledge. And there are insights and tools to be harnessed for research in non-monotonic logics. We consider some of these issues in this paper.

## 1 Introduction

Computational argumentation is emerging as an important part of AI research. This comes from the recognition that if we are to develop robust intelligent systems, then it is imperative that they can handle incomplete and inconsistent information in a way that somehow emulates the human ability to tackle such information. And one of the key ways that humans do this is to use argumentation, either internally, by evaluating arguments and counterarguments, or externally, by for instance entering into a discussion or debate where arguments are exchanged. Much research on computational argumentation focuses on one or more of the following layers: the structural layer (How are arguments constructed?); the relational layer (What are the relationships between arguments?); the dialogical layer (How can argumentation be undertaken in dialogues?); the assessment layer (How can a constellation of interacting arguments be evaluated and conclusions drawn?); and the rhetorical layer (How can argumentation be tailored for an audience so that it is convincing?). This has led to the development of a number of formalisms for aspects of argumentation (for reviews see [BH08,RS09,BGG18]), and some promising application areas [ABG+17].

Argumentation is related to non-monotonic reasoning. The latter is reasoning that allows for retraction of inferences in the light of new information. Interest in non-monotonic reasoning started with attempts to handle general rules, or defaults, of the form 'if α holds, then β normally holds', where α and β are propositions. It is noteworthy that human practical reasoning relies much more on exploiting general rules (not to be understood as universal laws) than on a myriad of individual facts. General rules tend to be less than 100% accurate, and so have exceptions. Nevertheless it is intuitive and advantageous to resort to such defaults and therefore allow the inference of useful conclusions, even if it does entail making some mistakes as not all exceptions to these defaults are necessarily known. Furthermore, it is often necessary to use general rules when we do not have sufficient information to allow us to specify or use universal laws. For a review of non-monotonic reasoning, see [Bre91].

In using defeasible (or default) knowledge, we might make an inference α on the basis of the information available, and then on the basis of further information, we may want to withdraw α . So with defeasible knowledge, the set of inferences does not increase monotonically with the set of assumptions.

Example 1. Consider the following general statements together with the fact "The match is struck". From these, we infer "The match lights". But if we also have the fact "The match is wet", then we retract "The match lights".

- A match lights if struck

- A match doesn't light if wet

The notion of defeasible knowledge covers a diverse variety of information, including heuristics, rules of conjecture, null values in databases, closed world assumptions for databases, and some qualitative abstractions of probabilistic information. Defeasible knowledge is a natural and very common form of information. There are also obvious advantages to applying the same default a number of times: There is an economy in stating (and dealing with) only a general rule instead of stating (and dealing with) many refined instances of such a general rule.

Even though argumentation and non-monotonic reasoning are widely acknowledged as closely related phenomena, there is a need to clarify the relationship. There is pioneering work such as by Simari et al. [SL92,GS04], Prakken et al. [Pra93,PS97,Pra10], Bondarenko [BDKT97], and Toni [Ton13,Ton14] that looks at aspects of this relationship, but it is potentially valuable to revisit the topic, and in particular investigate it from the point of view of deductive argumentation. So our primary aim in this paper is to investigate how non-monotonic reasoning arises in deductive argumentation, and how that differs from logics for non-monotonic reasoning. Our secondary aim is to investigate how logics for non-monotonic reasoning can be harnessed in deductive argumentation.

We proceed in the rest of the paper as follows: In Section 2, we review deductive argumentation as an example of a framework for structured argumentation; In Section 3, we consider how non-monotonic reasoning arises in structured argumentation; In Section 4, we review default logic, and consider how it can be harnessed in deductive argumentation; In Section 5, we review conditional logics, and consider how they can be harnessed in deductive argumentation; In Section 7, we consider how we can model defeasible knowledge as defeasible rules; And in Section 8, we draw conclusions and consider future work.

## 2 Deductive argumentation

Deductive argumentation is formalized in terms of deductive arguments and counterarguments, and there are various choices for defining this [BH08,BH14]. In the rest of this section, we will investigate some of the choices we have for defining arguments and counterarguments, and for how they can be used in modelling argumentation.

In order to define a specific system for deductive argumentation, we need to use a base logic. This is a logic that specifies the logical language for the knowledge, and the consequence (or entailment) relation for deriving inferences from the knowledge. In this section, we focus on two choices for base logic. These are simple logic (which has a language of literals and rules of the form $\alpha_1 \wedge \ldots \wedge \alpha_n \rightarrow \beta$ where $\alpha_1, \ldots, \alpha_n, \beta$ are literals, and modus ponens is the only proof rule) and classical logic (propositional and first-order classical logic).

A deductive argument is an ordered pair $\langle \Phi, \alpha \rangle$ where $\Phi \vdash_i \alpha$ holds for the base logic $\vdash_i$. $\Phi$ is the support, or premises, or assumptions of the argument, and $\alpha$ is the claim, or conclusion, of the argument. Different deductive argumentation systems can be obtained by imposing constraints (such as minimality or consistency) on the definition of an argument. For an argument $A = \langle \Phi, \alpha \rangle$, the function $\mathsf{Support}(A)$ returns $\Phi$ and the function $\mathsf{Claim}(A)$ returns $\alpha$.

A counterargument is an argument that attacks another argument. In deductive argumentation, we define the notion of counterargument in terms of logical contradiction between the claim of the counterargument and the premises or claim of the attacked argument. We will review some of the kinds of counterargument that can be specified for simple logic and classical logic.

## 2.1 Simple logic

Simple logic is based on a language of literals and simple rules where each simple rule is of the form $\alpha_1 \wedge \ldots \wedge \alpha_k \rightarrow \beta$ where $\alpha_1$ to $\alpha_k$ and $\beta$ are literals. A simple logic knowledgebase is a set of literals and a set of simple rules. The consequence relation is modus ponens (i.e. implication elimination).

Definition 1. The simple consequence relation, denoted $\vdash_s$, is the smallest relation satisfying the following condition, where $\Delta$ is a simple logic knowledgebase: $\Delta \vdash_s \beta$ iff there is an $\alpha_1 \wedge \cdots \wedge \alpha_n \rightarrow \beta \in \Delta$, and for each $\alpha_i \in \{\alpha_1, \ldots, \alpha_n\}$, either $\alpha_i \in \Delta$ or $\Delta \vdash_s \alpha_i$.

Example 2. Let $\Delta = \{a, b, a \wedge b \rightarrow c, c \rightarrow d\}$. Hence, $\Delta \vdash_s c$ and $\Delta \vdash_s d$. However, $\Delta \nvdash_s a$ and $\Delta \nvdash_s b$.
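Definition 1 can be read as a least fixpoint, which forward chaining computes. The following is a minimal Python sketch under that reading. The encoding (literals as strings, a simple rule as a pair of antecedent set and consequent) and the name `simple_consequences` are illustrative assumptions, not part of the formal definition.

```python
from typing import FrozenSet, Iterable, Set, Tuple

# Assumed encoding: a rule is (antecedent literals, consequent literal).
Rule = Tuple[FrozenSet[str], str]


def simple_consequences(literals: Iterable[str], rules: Iterable[Rule]) -> Set[str]:
    """Return every literal beta such that Delta |-s beta (Definition 1).

    A literal is derived only as the consequent of a rule whose antecedents
    are all either literals of Delta or already derived.
    """
    facts = set(literals)
    rule_list = list(rules)
    derived: Set[str] = set()
    changed = True
    while changed:  # iterate until no rule adds a new consequent
        changed = False
        for antecedents, consequent in rule_list:
            if consequent not in derived and antecedents <= (facts | derived):
                derived.add(consequent)
                changed = True
    return derived


# Example 2: Delta = {a, b, a ∧ b → c, c → d}
delta_literals = {"a", "b"}
delta_rules = [(frozenset({"a", "b"}), "c"), (frozenset({"c"}), "d")]
derived = simple_consequences(delta_literals, delta_rules)
print(sorted(derived))  # ['c', 'd']
```

As in Example 2, the literals `a` and `b` are not returned, because no rule has them as a consequent.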

Definition 2. Let $\Delta$ be a simple logic knowledgebase. For $\Phi \subseteq \Delta$ and a literal $\alpha$, $\langle \Phi, \alpha \rangle$ is a simple argument iff $\Phi \vdash_s \alpha$ and there is no proper subset $\Phi'$ of $\Phi$ such that $\Phi' \vdash_s \alpha$.

So each simple argument is minimal but not necessarily consistent (where consistency for a simple logic knowledgebase $\Delta$ means that for no atom $\alpha$ does $\Delta \vdash_s \alpha$ and $\Delta \vdash_s \neg\alpha$ hold). We do not impose the consistency constraint in the definition for simple arguments as simple logic is paraconsistent, and therefore can support a credulous view on the arguments that can be generated.

Example 3. Let p1, p2, and p3 be the following formulae. Note, we use p1, p2, and p3 as labels in order to make the presentation of the premises more concise. Then ⟨{p1, p2, p3}, goodEmployee(John)⟩ is a simple argument.

```
p1 = clever(John)
p2 = conscientious(John)
p3 = clever(John) ∧ conscientious(John) → goodEmployee(John)
```
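The minimality condition of Definition 2 can be checked by brute force on small knowledgebases. The sketch below is one way to do so in Python, assuming the same encoding as before (string literals, rules as pairs). The helper names `derives` and `simple_arguments`, and the extra literal `punctual(John)`, are hypothetical additions for illustration. The enumeration is exponential in the size of the knowledgebase.

```python
from itertools import combinations
from typing import FrozenSet, Iterable, List, Set, Tuple, Union

Rule = Tuple[FrozenSet[str], str]   # (antecedent literals, consequent literal)
Formula = Union[str, Rule]          # a literal or a simple rule


def derives(support: FrozenSet[Formula], claim: str) -> bool:
    """True iff support |-s claim, by forward chaining over the rules."""
    facts = {f for f in support if isinstance(f, str)}
    rules = [f for f in support if not isinstance(f, str)]
    derived: Set[str] = set()
    changed = True
    while changed:
        changed = False
        for antecedents, consequent in rules:
            if consequent not in derived and antecedents <= (facts | derived):
                derived.add(consequent)
                changed = True
    return claim in derived


def simple_arguments(kb: Iterable[Formula], claim: str) -> List[FrozenSet[Formula]]:
    """Return all minimal supports Phi ⊆ kb with Phi |-s claim."""
    items = list(kb)
    found: List[FrozenSet[Formula]] = []
    for size in range(1, len(items) + 1):      # smallest candidates first
        for subset in combinations(items, size):
            phi = frozenset(subset)
            if any(prev < phi for prev in found):
                continue                       # a proper subset already suffices
            if derives(phi, claim):
                found.append(phi)
    return found


# Example 3, plus one irrelevant (hypothetical) literal to exercise minimality.
p1 = "clever(John)"
p2 = "conscientious(John)"
p3 = (frozenset({p1, p2}), "goodEmployee(John)")
supports = simple_arguments([p1, p2, p3, "punctual(John)"], "goodEmployee(John)")
print(len(supports))  # 1
```

The only support found is {p1, p2, p3}; the candidate that also contains `punctual(John)` is rejected because it is not minimal.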

For simple logic, we consider two forms of counterargument. For this, recall that literal $\alpha$ is the complement of literal $\beta$ if and only if $\alpha$ is an atom and $\beta$ is $\neg\alpha$, or $\beta$ is an atom and $\alpha$ is $\neg\beta$.

Definition 3. For simple arguments $A$ and $B$, we consider the following types of simple attack:

- $A$ is a simple undercut of $B$ if there is a simple rule $\alpha_1 \wedge \cdots \wedge \alpha_n \rightarrow \beta$ in $\mathsf{Support}(B)$ and there is an $\alpha_i \in \{\alpha_1, \ldots, \alpha_n\}$ such that $\mathsf{Claim}(A)$ is the complement of $\alpha_i$.

- $A$ is a simple rebut of $B$ if $\mathsf{Claim}(A)$ is the complement of $\mathsf{Claim}(B)$.

Example 4. The first argument A1 captures the reasoning that the metro is an efficient form of transport, so one can use it. The second argument A2 captures the reasoning that there is a strike on the metro, and so the metro is not an efficient form of transport (at least on the day of the strike). A2 undercuts A1.

```
A1 = ⟨{efficientMetro, efficientMetro → useMetro}, useMetro⟩
A2 = ⟨{strikeMetro, strikeMetro → ¬efficientMetro}, ¬efficientMetro⟩
```

Example 5. The first argument A1 captures the reasoning that the government has a budget deficit, and so the government should cut spending. The second argument A2 captures the reasoning that the economy is weak, and so the government should not cut spending. The arguments rebut each other.

```
A1 = ⟨{govDeficit, govDeficit → cutGovSpending}, cutGovSpending⟩
A2 = ⟨{weakEconomy, weakEconomy → ¬cutGovSpending}, ¬cutGovSpending⟩
```

So in simple logic, a rebut attacks the claim of an argument, and an undercut attacks the premises of the argument: it contradicts a literal in the antecedent of one of the rules in the premises, where that literal is either itself one of the premises or the consequent of another rule in the premises.
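The two attack types of Definition 3 reduce to simple membership tests. The following Python sketch shows this on Examples 4 and 5, assuming literals are strings with a leading `¬` for negation and rules are (antecedents, consequent) pairs. The `Argument` type and the function names are illustrative, not taken from the paper.

```python
from typing import FrozenSet, NamedTuple, Tuple, Union

Rule = Tuple[FrozenSet[str], str]   # (antecedent literals, consequent literal)
Formula = Union[str, Rule]


class Argument(NamedTuple):
    support: FrozenSet[Formula]
    claim: str


def complement(literal: str) -> str:
    """Map an atom to its negation and a negated atom back to the atom."""
    return literal[1:] if literal.startswith("¬") else "¬" + literal


def is_simple_undercut(a: Argument, b: Argument) -> bool:
    """Claim(a) is the complement of an antecedent of some rule in Support(b)."""
    target = complement(a.claim)
    return any(not isinstance(f, str) and target in f[0] for f in b.support)


def is_simple_rebut(a: Argument, b: Argument) -> bool:
    """Claim(a) is the complement of Claim(b)."""
    return a.claim == complement(b.claim)


# Example 4: the strike argument undercuts the metro argument.
metro = Argument(
    frozenset({"efficientMetro", (frozenset({"efficientMetro"}), "useMetro")}),
    "useMetro",
)
strike = Argument(
    frozenset({"strikeMetro", (frozenset({"strikeMetro"}), "¬efficientMetro")}),
    "¬efficientMetro",
)

# Example 5: the two spending arguments rebut each other.
deficit = Argument(
    frozenset({"govDeficit", (frozenset({"govDeficit"}), "cutGovSpending")}),
    "cutGovSpending",
)
weak = Argument(
    frozenset({"weakEconomy", (frozenset({"weakEconomy"}), "¬cutGovSpending")}),
    "¬cutGovSpending",
)

print(is_simple_undercut(strike, metro), is_simple_undercut(metro, strike))
print(is_simple_rebut(deficit, weak), is_simple_rebut(weak, deficit))
```

Undercut is asymmetric in Example 4, whereas rebut is symmetric by construction, as Example 5 shows.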

## 2.2 Classical logic

Classical logic is appealing as the choice of base logic as it better reflects the richer deductive reasoning often seen in arguments arising in discussions and debates.

We assume the usual propositional and predicate (first-order) languages for classical logic, and the usual classical consequence relation, denoted $\vdash$. A classical knowledgebase is a set of classical propositional or predicate formulae.

Definition 4. For a classical knowledgebase $\Phi$ and a classical formula $\alpha$, $\langle \Phi, \alpha \rangle$ is a classical argument iff $\Phi \vdash \alpha$ and $\Phi \nvdash \bot$ and there is no proper subset $\Phi'$ of $\Phi$ such that $\Phi' \vdash \alpha$.

So a classical argument satisfies both minimality and consistency. We impose the consistency constraint because we want to avoid the useless inferences that come with inconsistency in classical logic (such as via ex falso quodlibet).

Example 6. The following classical argument uses a universally quantified formula in contrapositive reasoning to obtain the claim about number 77.

```
⟨{∀X. multipleOfTen(X) → even(X), ¬even(77)}, ¬multipleOfTen(77)⟩
```
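One way to spell out the contrapositive reasoning in Example 6 is the derivation below. It is only a sketch of one possible proof, with the step names chosen here for illustration.

$$\begin{array}{lll} 1. & \forall X.\ \mathit{multipleOfTen}(X) \rightarrow \mathit{even}(X) & \text{premise} \\ 2. & \neg\mathit{even}(77) & \text{premise} \\ 3. & \mathit{multipleOfTen}(77) \rightarrow \mathit{even}(77) & \text{universal instantiation of 1} \\ 4. & \neg\mathit{even}(77) \rightarrow \neg\mathit{multipleOfTen}(77) & \text{contraposition of 3} \\ 5. & \neg\mathit{multipleOfTen}(77) & \text{modus ponens on 2 and 4} \end{array}$$

Both premises are needed for step 5, and they are jointly satisfiable, so the support is minimal and consistent as Definition 4 requires.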

Given the expressivity of classical logic (in terms of language and inferences), there are a number of natural ways that we can define counterarguments. We give some options in the following definition.

Definition 5. Let A and B be two classical arguments. We define the following types of classical attack .

```
A is a classical undercut of B if ∃Ψ ⊆ Support(B) s.t. Claim(A) ≡ ¬⋀Ψ.
A is a classical direct undercut of B if ∃φ ∈ Support(B) s.t. Claim(A) ≡ ¬φ.
A is a classical rebuttal of B if Claim(A) ≡ ¬Claim(B).
```

Using simple logic, the definitions for counterarguments against the support of another argument are limited to attacking just one of the items in the support. In contrast, using classical logic, a counterargument can be against more than one item in the support. For example, in Example 7, the undercut is not attacking an individual premise but rather saying that two of the premises are incompatible (in this case that the premises lowCost and luxury are incompatible).

Example 7. Consider the following arguments. A1 is attacked by A2 as A2 is an undercut of A1 though it is not a direct undercut. Essentially, the attack is an integrity constraint.

```
A1 = ⟨{lowCost, luxury, lowCost ∧ luxury → goodFlight}, goodFlight⟩
A2 = ⟨{¬lowCost ∨ ¬luxury}, ¬lowCost ∨ ¬luxury⟩
```
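To see why A2 in Example 7 is an undercut in the sense of Definition 5, take $\Psi = \{\mathit{lowCost}, \mathit{luxury}\} \subseteq \mathsf{Support}(A_1)$. The equivalence then follows by De Morgan's law, as the following short derivation shows (the choice of $\Psi$ is made here for illustration).

$$\begin{array}{rcl} \neg \bigwedge \Psi & = & \neg(\mathit{lowCost} \wedge \mathit{luxury}) \\ & \equiv & \neg\mathit{lowCost} \vee \neg\mathit{luxury} \\ & = & \mathsf{Claim}(A_2) \end{array}$$

It is not a direct undercut, because $\mathsf{Claim}(A_2)$ is not equivalent to the negation of any single premise: it is weaker than both $\neg\mathit{lowCost}$ and $\neg\mathit{luxury}$, and $\neg(\mathit{lowCost} \wedge \mathit{luxury} \rightarrow \mathit{goodFlight})$ is equivalent to $\mathit{lowCost} \wedge \mathit{luxury} \wedge \neg\mathit{goodFlight}$.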

We give further examples of undercuts in Figure 2.

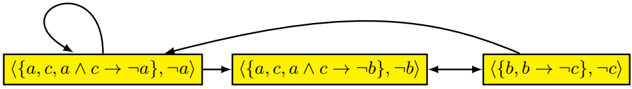

Fig. 1: A generative graph obtained from the simple logic knowledgebase where $\Delta = \{a, b, c, a \wedge c \rightarrow \neg a, b \rightarrow \neg c, a \wedge c \rightarrow \neg b\}$. Note that this exhaustive graph contains a self cycle and an odd length cycle.

<details>

<summary>Image 1 Details</summary>

Argument graph with three nodes, each a simple argument:

- ⟨{a, c, a ∧ c → ¬a}, ¬a⟩

- ⟨{a, c, a ∧ c → ¬b}, ¬b⟩

- ⟨{b, b → ¬c}, ¬c⟩

Arrows shown: from the first node to the second, from the second to the third, from the third back to the first, and a self-loop on the first node.

</details>

## 2.3 Instantiating argument graphs

For a specific deductive argumentation system, once we have a definition for arguments, and for counterarguments (i.e. for the attack relation), we can consider how to use them to instantiate argument graphs. For this, we need to specify which arguments and attacks are to appear in the instantiated argument graph. Two approaches to specifying this are descriptive graphs and generative graphs defined informally as follows.

- Descriptive graphs: Here we assume that the structure of the argument graph is given, and the task is to identify the premises and claim of each argument. Therefore the input is an abstract argument graph, and the output is an instantiated argument graph. This kind of task arises in many situations: For example, if we are listening to a debate, we hear the arguments exchanged, and we can construct the instantiated argument graph to reflect the debate.

- Generative graphs: Here we assume that we start with a knowledgebase (i.e. a set of logical formulae), and the task is to generate the arguments and counterarguments (and hence the attacks between arguments). Therefore, the input is a knowledgebase, and the output is an instantiated argument graph. This kind of task also arises in many situations: For example, if we are making a decision based on conflicting information, we have various items of information that we represent by formulae in the knowledgebase, and we construct an instantiated argument graph to reflect the arguments and counterarguments that follow from that information. Note, we do not need to include all the arguments and attacks that we can generate from the knowledgebase. Rather we can define a selection function to choose which arguments and attacks to include.

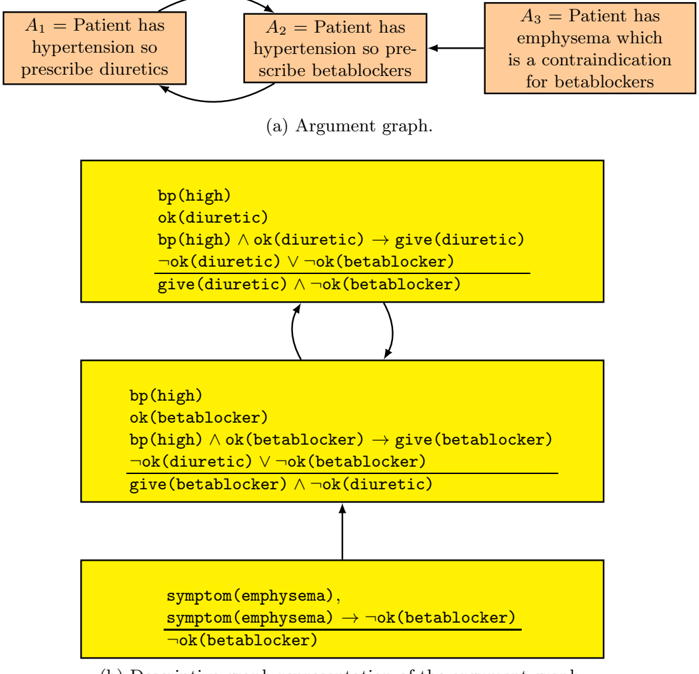

We give an example of a generative graph in Figure 1 and an example of a descriptive graph in Figure 2.

For constructing both descriptive graphs and generative graphs, there may be a dynamic aspect to the process. For instance, when constructing descriptive graphs, we may be unsure of the exact structure of the argument graph, and it is only by instantiating individual arguments that we are able to say whether it is

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Diagram: Argument Graph and Conditional Logic Flow

### Overview

The image combines an argument graph (a) with three code blocks representing conditional logic. The argument graph outlines a clinical decision-making process for hypertension treatment, while the code blocks translate these arguments into logical conditions using Python-like syntax. Arrows indicate causal relationships and decision flows.

### Components/Axes

1. **Argument Graph (a)**:

- **Nodes**:

- `A1`: "Patient has hypertension so prescribe diuretics"

- `A2`: "Patient has hypertension so pre-scribe beta blockers"

- `A3`: "Patient has emphysema which is a contraindication for beta blockers"

- **Edges**:

- `A1 → A2`: Direct causal link

- `A2 → A3`: Conditional relationship (A3 is a contraindication for A2's action)

2. **Code Blocks**:

- **Block 1**:

```python

bp(high)

ok(diuretic) → give(diuretic)

¬ok(diuretic) ∨ ¬ok(beta blocker)

```

- **Block 2**:

```python

bp(high) ∧ ok(beta blocker) → give(beta blocker)

¬ok(diuretic) ∨ ¬ok(beta blocker)

```

- **Block 3**:

```python

symptom(emphysema) → ¬ok(beta blocker)

¬ok(beta blocker)

```

### Detailed Analysis

- **Argument Graph**:

- The graph establishes a hierarchy: A1 (diuretics) → A2 (beta blockers) → A3 (emphysema contraindication).

- A3 acts as a terminal node, overriding A2's recommendation.

- **Code Blocks**:

- **Block 1**: Implements A1's logic. If `ok(diuretic)` is true, prescribe diuretics. Otherwise, check for beta blocker compatibility.

- **Block 2**: Implements A2's logic. If both `bp(high)` and `ok(beta blocker)` are true, prescribe beta blockers. Otherwise, fall back to diuretics.

- **Block 3**: Implements A3's logic. If `symptom(emphysema)` is true, explicitly block beta blockers.

### Key Observations

1. **Logical Contradictions**:

- Block 1 and Block 2 share overlapping conditions (`¬ok(diuretic) ∨ ¬ok(beta blocker)`), suggesting mutual exclusivity.

- Block 3 introduces a hard constraint (`¬ok(beta blocker)`) that overrides previous recommendations.

2. **Flow Direction**:

- The argument graph's top-down flow (`A1 → A2 → A3`) mirrors the code blocks' sequential evaluation.

- Emphysema (A3) acts as a circuit breaker in the decision tree.

3. **Syntax Patterns**:

- All blocks use `bp(high)` as a base condition.

- `ok(X)` functions appear to represent treatment eligibility checks.

- `give(X)` functions represent treatment prescriptions.

### Interpretation

This diagram models a clinical decision support system for hypertension management with safety constraints:

1. **Primary Pathway**:

- Start with diuretics (A1) unless contraindicated.

- If diuretics are unsuitable, proceed to beta blockers (A2) if no emphysema.

2. **Safety Mechanism**:

- Emphysema (A3) creates an absolute contraindication for beta blockers, overriding all prior recommendations.

- The code enforces this through explicit negation (`¬ok(beta blocker)`).

3. **Logical Structure**:

- The use of `∧` (and) and `∨` (or) operators creates a prioritized decision tree.

- The repeated `¬ok(beta blocker)` condition in Blocks 1-3 suggests a system-wide safety check.

4. **Clinical Implications**:

- The system prevents beta blocker prescription in emphysema patients, aligning with real-world medical guidelines.

- The fallback to diuretics in Block 2 ensures treatment continuity when beta blockers are contraindicated.

### Technical Notes

- The code uses Python-like syntax but appears to be pseudocode for a rule engine.

- The argument graph and code blocks are spatially aligned, with the graph providing the "why" and the code providing the "how".

- No numerical data or statistical trends are present; the focus is on logical relationships.

</details>

- (b) Descriptive graph representation of the argument graph.

Fig. 2: The abstract argument graph captures a decision making scenario where there are two alternatives for treating a patient, diuretics or betablockers. Since only one treatment should be given for the disorder, each argument attacks the other. There is also a reason to not give betablockers, as the patient has emphysema which is a contraindication for this treatment. The descriptive graph representation of the abstract argument graph is using classical logic. The atom bp ( high ) denotes that the patient has high blood pressure. The top two arguments rebut each other (i.e. the attack is classical defeating rebut). For this, each argument has an integrity constraint in the premises that says that it is not ok to give both betablocker and diuretic. So the top argument is attacked on the premise ok ( diuretic ) and the middle argument is attacked on the premise ok ( betablocker ).

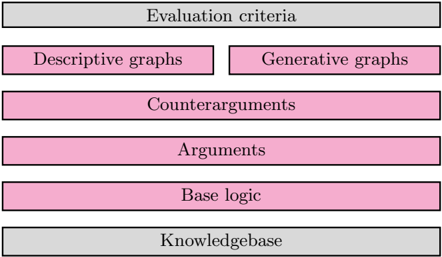

Fig. 3: Framework for constructing argument graphs with deductive arguments: For defining a specific argumentation system, there are four levels for the specification: (1) A base logic is required for defining the logical language and the consequence or entailment relation (i.e. what inferences follow from a set of formlulae); (2) A definition of an argument 〈 Φ, α 〉 specified using the base logic (e.g. Φ is consistent, and Φ entails α ); (3) A definition of counterargument specified using the base logic (i.e. a definition for when one argument attacks another); and (4) A definition of which arguments and counterarguments are composed into an argument graph (which is either a descriptive graph or some form of generative graph). Then to use a deductive argumentation system, a knowledgebase needs to be specified in the language of the base logic, and evaluation criteria such as dialectical semantics need to be selected.

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Flowchart: Evaluation Framework Structure

### Overview

The image depicts a hierarchical flowchart outlining an evaluation framework. It consists of six labeled sections arranged vertically, with two gray sections at the top and bottom acting as headers/footers, and four pink rectangles representing core components in between.

### Components/Axes

1. **Top Header (Gray)**: "Evaluation criteria" (centered)

2. **First Row (Pink)**:

- Left: "Descriptive graphs"

- Right: "Generative graphs"

3. **Second Row (Pink)**: "Counterarguments" (full width)

4. **Third Row (Pink)**: "Arguments" (full width)

5. **Fourth Row (Pink)**: "Base logic" (full width)

6. **Bottom Footer (Gray)**: "Knowledgebase" (centered)

### Detailed Analysis

- **Spatial Structure**:

- The flowchart uses a top-down vertical layout with equal-width sections.

- Gray sections ("Evaluation criteria" and "Knowledgebase") serve as bookends.

- Pink rectangles represent intermediate components, with the first row split into two parallel elements ("Descriptive graphs" and "Generative graphs").

- **Textual Content**:

- All labels are in English, using title case.

- No numerical values, scales, or legends present.

- No embedded diagrams or data tables.

### Key Observations

1. The framework begins with "Evaluation criteria" as the overarching goal.

2. The split between "Descriptive graphs" and "Generative graphs" suggests a bifurcation in methodology or data representation approaches.

3. "Counterarguments" and "Arguments" occupy equal visual weight, emphasizing balanced dialectical analysis.

4. "Base logic" precedes "Knowledgebase," implying foundational reasoning precedes data integration.

### Interpretation

This flowchart represents a structured evaluation process where:

1. **Methodological Choice**: The initial split between descriptive (static data visualization) and generative (dynamic model-based) graphs indicates a decision point in analytical approach.

2. **Dialectical Process**: The pairing of "Counterarguments" and "Arguments" suggests a rigorous debate structure before reaching conclusions.

3. **Knowledge Integration**: The bottom placement of "Knowledgebase" positions it as the foundational input rather than the output, contrasting with typical top-down knowledge application models.

4. **Logical Flow**: The progression from criteria → methodology → debate → logic → knowledgebase implies a bottom-up construction of evaluation rather than top-down imposition.

The absence of numerical data or directional arrows leaves the exact relationships between components open to interpretation, but the vertical stacking strongly suggests a sequential process. The equal width of all pink sections implies equal importance in the evaluation hierarchy.

</details>

attacked or attacks another argument. As another example, when constructing generative graphs, we may be involved in a dialogue, and so through the dialogue, we may obtain further information which allows us to generate further arguments that can be added to the argument graph.

## 2.4 Deductive argumentation as a framework

So in order to construct argument graphs with deductive arguments, we need to specify the base logic, the definition for arguments, the definition for counterarguments, and the definition for instantiating argument graphs. For the latter, we can either produce a descriptive graph or a generative graph. We summarize the framework for constructing argument graphs with deductive arguments in Figure 3.

Key benefits of deductive arguments include: (1) Explicit representation of the information used to support the claim of the argument; (2) Explicit representation of the claim of the argument; and (3) A simple and precise connection between the support and claim of the argument via the consequence relation. What a deductive argument does not provide is a specific proof of the claim from the premises. There may be more than one way of proving the claim from the premises, but the argument does not specify which is used. It is therefore indifferent to the proof used.

There are a number of proposals for deductive arguments using classical propositional logic [Cay95,BH01,AC02,GH11], classical predicate logic [BH05], description logic [BHP09,MWF10,ZZXL10,ZL13], temporal logic [MH08], simple (defeasible) logic [GMAB04,Hun10], conditional logic [BGR13], and probabilistic logic [Hae98,Hae01,Hun13]. These are monotonic logics, though non-monotonic logics can be used as base logics, as we will investigate in this paper.

There has also been progress in understanding the nature of classical logic in computational argumentation. Types of counterarguments include rebuttals [Pol87,Pol92], direct undercuts [EGKF93,EGH95,Cay95], and undercuts and canonical undercuts [BH01]. In most proposals for deductive argumentation, an argument A is a counterargument to an argument B when the claim of A is inconsistent with the support of B . It is possible to generalize this with alternative notions of counterargument. For instance, with some common description logics, there is not an explicit negation symbol. In the proposal for argumentation with description logics, [BHP09] used the description logic notion of incoherence to define the notion of counterargument: A set of formulae in a description logic is incoherent when there is no set of assertions (i.e. ground literals) that would be consistent with the formulae. Using this, an argument A is a counterargument to an argument B when the claim of A together with the support of B is incoherent.

## 3 Non-monotonic reasoning

For a logic with a consequence relation $\vdash_i$, an important property is the monotonicity property (below) which states that if $\alpha$ follows from a knowledgebase $\Delta$, it still follows from $\Delta$ augmented with any additional formula. This is a property that holds for many logics including classical logic, intuitionistic logic, and many modal and temporal logics.

$$\frac{\Delta \vdash_i \alpha}{\Delta \cup \{\beta\} \vdash_i \alpha} \quad \text{[Monotonicity]}$$

In the following example, we assume a consequence relation $\vdash_i$ for which the monotonicity property does not hold, and show how it reflects a form of defeasible reasoning.

Example 8. Consider the following set of formulae ∆ where ⇒ is some form of conditional implication symbol.

$$\begin{array}{l} \mathit{bird}(x) \Rightarrow \mathit{flyingThing}(x) \\ \mathit{ostrich}(x) \Rightarrow \neg \mathit{flyingThing}(x) \\ \mathit{ostrich}(x) \Rightarrow \mathit{bird}(x) \end{array}$$

Assuming we have an appropriate non-monotonic logic, we would get the following behaviour. Given the rules in ∆ and the premise bird(Tweety), we get flyingThing(Tweety), but when we add ostrich(Tweety) to the premises, we no longer get flyingThing(Tweety).

```
Δ ∪ {bird(Tweety)} ⊢_i flyingThing(Tweety)
Δ ∪ {bird(Tweety), ostrich(Tweety)} ⊬_i flyingThing(Tweety)
```

In the following sections, we will consider specific logics that give us such behaviour.

## 3.1 Non-monotonicity in deductive argumentation

We now focus on deductive argumentation, and investigate the monotonic and non-monotonic aspects of it.

- Argument construction is monotonic. In deductive reasoning, we start with some premises, and we derive a conclusion using one or more inference steps in a base logic. Within the context of an argument, if we regard the premises as credible, then we should regard the intermediate conclusion of each inference step as credible, and therefore we should regard the conclusion as credible. For example, if we regard the premise 'Philippe and Tony are having tea together in London' as credible, then we should regard 'Philippe is not in Toulouse' as credible (assuming the background knowledge that London and Toulouse are different places, and that nobody can be in different places at the same time). As another example, if we regard the statement 'Philippe and Tony are having an ice cream together in Toulouse' as credible, then we should regard the statement 'Tony is not in London' as credible. Note, however, that we do not need to know that the premises are true to apply deductive reasoning. Rather, deductive reasoning allows us to obtain conclusions that we can regard as credible contingent on the credibility of their premises. This means that we can form arguments monotonically from a knowledgebase: Adding formulae to the knowledgebase allows us to increase the set of arguments.

- Argument evaluation is non-monotonic. Given a knowledgebase, we use the base logic to construct the arguments and to identify the attack relationships that hold between arguments. We then apply the dialectical criteria to determine which arguments are in an extension according to a specific semantics. If we then add more formulae to the knowledgebase, we may obtain further arguments, but we do not lose any arguments. So when we add formulae to the knowledgebase, we may make additions to the instantiated argument graph but we do not make any deletions. If we then apply the dialectical criteria, we may then lose arguments from our extension. So in this sense, argumentation is non-monotonic.

In the following example, we illustrate how argument construction is monotonic but argument evaluation is non-monotonic.

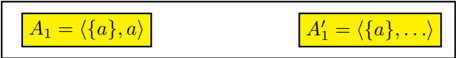

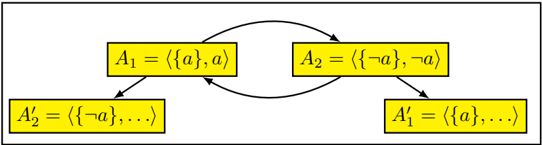

Example 9. Let ∆ = { a } , and so the following is a generative argument graph where A ′ 1 stands for all arguments with claim implied by a such as 〈{ a } , a ∨ b 〉 , 〈{ a } , a ∨ c 〉 , etc. Hence, { A 1 , A ′ 1 , . . . } is the grounded extension of the graph.

<details>

<summary>Image 4 Details</summary>

### Visual Description

Icon/Small Image (456x58)

</details>

If we add ¬ a to ∆ , we get the following generative argument graph where A ′ 2 stands for all arguments with claim implied by ¬ a such as 〈{¬ a } , ¬ a ∨ b 〉 , 〈{¬ a } , ¬ a ∨ c 〉 , etc. Hence, A 1 is no longer in the grounded extension.

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Diagram: State Transition Model with Negation and Expansion

### Overview

The diagram illustrates a cyclical state transition system involving four labeled components (A₁, A₂, A₁′, A₂′) connected by bidirectional arrows. Each component contains set notation with logical operators (¬ for negation) and ellipses indicating continuation. The structure suggests a feedback loop between two primary states (A₁ and A₂) that evolve into expanded versions (A₁′ and A₂′).

### Components/Axes

1. **Rectangular Nodes**:

- **A₁**: Top-left rectangle labeled `⟨{a}, a⟩` (set containing "a" and element "a").

- **A₂**: Top-right rectangle labeled `⟨{¬a}, ¬a⟩` (set containing negation of "a" and element "¬a").

- **A₁′**: Bottom-right rectangle labeled `⟨{a}, ...⟩` (set starting with "a" followed by unspecified elements).

- **A₂′**: Bottom-left rectangle labeled `⟨{¬a}, ...⟩` (set starting with "¬a" followed by unspecified elements).

2. **Arrows**:

- **A₁ → A₂**: Direct transition between negation states.

- **A₂ → A₁′**: Transition from negated state to expanded "a" state.

- **A₁′ → A₂′**: Transition between expanded states.

- **A₂′ → A₁**: Closing the loop back to the original negation state.

### Detailed Analysis

- **Notation**: The use of angle brackets (`⟨...⟩`) suggests ordered pairs or tuples, while set notation (`{...}`) implies membership or state composition.

- **Negation**: The presence of `¬a` in A₂ and A₂′ indicates logical inversion of the base element "a".

- **Ellipses**: The `...` in A₁′ and A₂′ imply additional, unspecified elements in the expanded states, suggesting growth or complexity beyond the initial states.

### Key Observations

1. **Cyclical Dependency**: The loop between A₁ and A₂ creates a binary toggle between "a" and "¬a" states.

2. **State Expansion**: Transitions to A₁′ and A₂′ introduce new elements, indicating a progression from simple to complex states.

3. **Symmetry**: The diagram balances negation (`¬a`) and affirmation (`a`) across the cycle, with mirrored structures in A₁/A₂ and A₁′/A₂′.

### Interpretation

This diagram likely represents a **state machine** or **logical process** where:

- **A₁ and A₂** are foundational states governed by mutual exclusion (one active, the other negated).

- **A₁′ and A₂′** represent evolved states where the original element ("a" or "¬a") is retained but expanded with additional components, possibly reflecting iterative processes or compounded conditions.

- The cyclical arrow from A₂′ back to A₁ suggests a reset or reinitialization mechanism, enabling repeated application of the state transitions.

The model could apply to systems requiring alternating conditions (e.g., binary logic gates, decision trees) or processes with feedback loops (e.g., iterative algorithms, reinforcement learning). The ellipses hint at scalability, where the system can accommodate additional rules or variables beyond the initial states.

</details>
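The effect described in Example 9 can be reproduced with a small Python sketch that computes the grounded extension as the least fixed point of the characteristic function. The node names follow the example, with `A1'` and `A2'` each standing for a single representative of the derived arguments. The function name `grounded_extension` is hypothetical, and the attack relation used for the second graph (A 1 and A 2 attack each other, and each attacks the other's derived arguments) is an assumption made for illustration rather than a transcription of the figure.

```python
def grounded_extension(arguments, attacks):
    """Return the grounded extension of an abstract argument graph.

    arguments: set of argument names
    attacks:   set of (attacker, target) pairs
    """
    attackers = {a: {x for (x, y) in attacks if y == a} for a in arguments}
    extension = set()
    while True:
        # An argument is defended if each of its attackers is itself
        # attacked by some argument already in the extension.
        defended = {
            a for a in arguments
            if all(any((d, b) in attacks for d in extension)
                   for b in attackers[a])
        }
        if defended == extension:
            return extension
        extension = defended


# Knowledgebase {a}: there are no attacks, so every argument is accepted.
before = grounded_extension({"A1", "A1'"}, set())

# Knowledgebase {a, ¬a}: assumed attack relation (see the lead-in).
after = grounded_extension(
    {"A1", "A1'", "A2", "A2'"},
    {("A1", "A2"), ("A1", "A2'"), ("A2", "A1"), ("A2", "A1'")},
)

print(sorted(before))  # ['A1', "A1'"]
print(sorted(after))   # []
```

The set of arguments only grows between the two calls, yet A 1 drops out of the grounded extension, which is the sense in which evaluation is non-monotonic.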

Simple arguments and counterarguments can be used to model defeasible reasoning. For this, we use simple rules that are normally correct but sometimes are incorrect. For instance, if Sid has the goal of going to work, Sid takes the metro. This is generally true, but sometimes Sid works at home, and so it is no longer true that Sid takes the metro, as we see in the next example.

Example 10. The first argument A 1 captures the general rule that if workDay holds, then useMetro ( Sid ) holds. The use of the simple rule in A 1 requires that the assumption normal holds. This is given as an assumption. The second argument A 2 undercuts the first argument by contradicting the assumption that normal holds.

$$\begin{array} { l } { A_1 = \langle \{ \mathit{workDay} , \mathit{normal} , \mathit{workDay} \land \mathit{normal} \to \mathit{useMetro}(\mathit{Sid}) \} , \mathit{useMetro}(\mathit{Sid}) \rangle } \\ { A_2 = \langle \{ \mathit{workAtHome}(\mathit{Sid}) , \mathit{workAtHome}(\mathit{Sid}) \to \neg \mathit{normal} \} , \neg \mathit{normal} \rangle } \end{array}$$

If we start with just argument A 1 , then A 1 is undefeated, and so useMetro ( Sid ) is an acceptable claim. However, if we add A 2 , then A 1 is a defeated argument and A 2 is an undefeated argument. Hence, if we have A 2 , we have to withdraw useMetro ( Sid ) as an acceptable claim.

So by having appropriate conditions in the antecedent of a simple rule we can disable the rule by generating a counterargument that attacks it. This in effect stops the usage of the simple rule. This means that we have a convention to attack an argument based on the inferences obtained by the simple logic (e.g. as in Example 4 and Example 5), or on the rules used (e.g. Example 10).

This way to disable rules by adding appropriate conditions (as in Example 10) is analogous to the use of abnormality predicates in formalisms such as circumscription (see for example [McC80]). We can use the same approach to capture defeasible reasoning in other logics such as classical logic (as for example, the use of the ok predicate in the arguments in Figure 2). Note, this does not mean that we turn the base logic into a nonmonotonic logic. Both simple logic and classical logic are monotonic logics. Hence, for a simple logic knowledgebase ∆ (and similarly for a classical logic knowledgebase ∆ ), the set of simple arguments (respectively classical arguments) obtained from ∆ is a subset of the set of simple arguments (respectively classical arguments) obtained from ∆ ∪ { α } where α is a formula not in ∆ . But at the level of evaluating arguments and counterarguments, we have non-monotonic defeasible behaviour as illustrated by Example 10.
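The subset property above, and the undercut of Example 10, can be checked with a minimal Python sketch. It assumes a simple argument is built from a single rule application, writes negation with a `~` prefix, and uses the hypothetical helper names `negate`, `simple_arguments` and `undercuts`; it is an illustration under those assumptions, not a full implementation of simple logic.

```python
def negate(literal):
    """Return the complement of a literal written with a '~' prefix."""
    return literal[1:] if literal.startswith("~") else "~" + literal


def simple_arguments(facts, rules):
    """Build one-step arguments (support, claim) by modus ponens.

    facts: set of literals
    rules: set of (body, head) pairs, where body is a tuple of literals
    """
    arguments = set()
    for body, head in rules:
        if set(body) <= facts:
            # The support contains the literals used and the rule itself.
            support = frozenset(body) | {(body, head)}
            arguments.add((support, head))
    return arguments


def undercuts(attacker, target):
    """True if the attacker's claim negates a literal premise of the target."""
    support, _ = target
    _, claim = attacker
    return negate(claim) in support


rule_metro = (("workDay", "normal"), "useMetro(Sid)")
rule_home = (("workAtHome(Sid)",), "~normal")

args_before = simple_arguments({"workDay", "normal"}, {rule_metro})
args_after = simple_arguments(
    {"workDay", "normal", "workAtHome(Sid)"}, {rule_metro, rule_home}
)

a1 = next(a for a in args_after if a[1] == "useMetro(Sid)")
a2 = next(a for a in args_after if a[1] == "~normal")

print(args_before <= args_after)  # True: no argument is lost
print(undercuts(a2, a1))          # True: A2 negates the assumption in A1
print(undercuts(a1, a2))          # False
```

Extending the knowledgebase only adds arguments. The change in status of useMetro ( Sid ) arises when the enlarged graph is evaluated.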

In this section, we have focused on using simple logic as a base logic. But it is very weak since it only has modus ponens as a proof rule. There is a range of logics between simple logic and classical logic called conditional logics. They are monotonic, and they capture interesting aspects of defeasible reasoning. We will briefly consider one type of conditional logic in Section 5.

## 3.2 Other approaches to structured argumentation

Other approaches to structured argumentation such as ASPIC+ [Pra10,MP14] and assumption-based argumentation (ABA) [Ton13,Ton14] also have an argument construction process that is monotonic but an argument evaluation process that is non-monotonic. These approaches apply rules to the formulae from the knowledge base, where the rules may be defeasible. These rules are defeasible in the sense they describe defeasible knowledge but the underlying logic is essentially modus ponens (i.e. analogous to simple logic as investigated for deductive argumentation) and so monotonic. Note, in these rule-based approaches, an argument is seen as a tree whose root is the claim or conclusion, whose leaves are the premises on which the argument is based, and whose structure corresponds to the application of the rules from the premises to the conclusion.

Through the dialectical semantics of argumentation, it is possible to formalize non-monotonic logics. Bondarenko et al. showed how assumption-based argumentation subsumes a range of key non-monotonic logics including Theorist, default logic, logic programming, autoepistemic logic, non-monotonic modal logics, and certain instances of circumscription as special cases [BDKT97]. The use of assumptions can be introduced to other approaches to structured argumentation (see for example, for ASPIC+ [Pra10]).

In defeasible logic programming (DeLP) [GS04,GS14], another approach to structured argumentation, strict and defeasible rules are used in a form of logic programming. The language includes a default negation (i.e. a form of negation-as-failure) which gives a form of non-monotonic behaviour. Essentially, negation-as-failure means that if an atom cannot be proved to be true, then assume it is false. For example, the following is a defeasible rule in DeLP where the negated atom ∼ crossRailwayTracks is the consequent, -< is the defeasible implication symbol, and not ∼ trainIsComing is the condition. Furthermore, ∼ trainIsComing is a negated atom, and not is the default negation operator.

∼ crossRailwayTracks -< not ∼ trainIsComing

For the above defeasible rule, if we cannot prove ∼ trainIsComing (i.e. we cannot show that the train is not coming), then not ∼ trainIsComing holds, and therefore we infer ∼ crossRailwayTracks (i.e. we shouldn't cross the tracks).

In DeLP, the negation-as-failure is with respect to all the strict knowledge (i.e. the subset of the knowledge that is assumed to be true), rather than just the premises of the argument, and this means that as formulae are added to the knowledgebase, it may be necessary to withdraw arguments. For instance, if there is no strict knowledge, then we can construct an argument that has the above defeasible rule as the premise, and ∼ crossRailwayTracks as the claim. But, if we then add ∼ trainIsComing to the knowledgebase as strict knowledge, then we have to withdraw this argument. So unlike deductive argumentation, and the other approaches to structured argumentation, DeLP is not monotonic in argument construction. However, like deductive argumentation, and the other approaches to structured argumentation, DeLP is non-monotonic in argument evaluation.

## 4 Default logic

As a basis of representing and reasoning with default knowledge, default logic, proposed by Reiter [Rei80], is one of the best known and most widely studied formalisations of default reasoning. Furthermore, it offers a very expressive and lucid language. In default logic, knowledge is represented as a default theory , which consists of a set of first-order formulae and a set of default rules for representing default information. Default rules are of the following form, where α , β and γ are classical formulae.

$$\frac { \alpha \colon \beta } { \gamma }$$

The inference rules are those of classical logic plus a special mechanism to deal with default rules: Basically, if α is inferred, and ¬ β cannot be inferred, then infer γ . For this, α is called the pre-condition, β is called the justification, and γ is called the consequent.

## 4.1 Inferencing in default logic

Default logic is an extension of classical logic. Hence, all classical inferences from the classical information in a default theory are derivable (if there is an extension). The default theory then augments these classical inferences by default inferences derivable using the default rules.

Definition 6. Let ( D,W ) be a default theory, where D is a set of default rules and W is a set of classical formulae. Let Cn be the function that for a set of formulae returns the set of classical consequences of those formulae. The operator Γ indicates what conclusions are to be associated with a given set E of formulae, where E is some set of classical formulae. For this, Γ ( E ) is the smallest set of classical formulae such that the following three conditions are satisfied.

1. W ⊆ Γ ( E )

2. Γ ( E ) = Cn ( Γ ( E ))

3. For each default in D , where α is the pre-condition, β is the justification, and γ is the consequent, the following holds:

$$\text{if } \alpha \in \Gamma ( E ) \text{ and } \neg \beta \notin E \text{, then } \gamma \in \Gamma ( E )$$

We refer to E as the satisfaction set, and Γ ( E ) the putative extension.

Once Γ ( E ) has been identified, E is an extension of ( D,W ) iff E = Γ ( E ). If E is an extension, then the first condition ensures that the set of classical formulae W is also in the extension, the second condition ensures the extension is closed under classical consequence, and the third condition ensures that for each default rule, if the pre-condition is in the extension, and the justification is consistent with the extension, then the consequent is in the extension.

We can view E as the set of formulae for which we are ensuring consistency with the justification of each default rule that we are attempting to apply. We can view Γ ( E ) as the set of putative conclusions of a default theory: It contains W , it is closed under classical consequence, and for each default that is applicable (i.e. the pre-condition is in Γ ( E ) and the justification is satisfiable with E ), the consequent is in Γ ( E ). We ask for the smallest Γ ( E ) to ensure that each default rule that is applied is grounded. This means that it is not the case that one or more default rules are self-supporting. For example, a single default rule is self-supporting if the pre-condition is satisfied using the consequent. The test E = Γ ( E ) ensures that the set of formulae for which the justifications are checked for consistency coincides with the set of putative conclusions of the default theory. If E ⊂ Γ ( E ), then not all applied rules had their justification checked with Γ ( E ). If Γ ( E ) ⊂ E , then the rules are checked with more than is necessary.

Example 11. Let D be the following set of defaults.

$$\frac { \mathit{bird}(X) \colon \neg \mathit{penguin}(X) \land \mathit{fly}(X) } { \mathit{fly}(X) } \quad \frac { \mathit{penguin}(X) \colon \mathit{bird}(X) } { \mathit{bird}(X) } \quad \frac { \mathit{penguin}(X) \colon \neg \mathit{fly}(X) } { \neg \mathit{fly}(X) }$$

For ( D,W ), where W is { bird ( Tweety ) } , we obtain one extension

$$Cn ( \{ \mathit{bird}(\mathit{Tweety}) , \mathit{fly}(\mathit{Tweety}) \} )$$

For ( D,W ), where W is { penguin ( Tweety ) } , we obtain one extension

$$Cn ( \{ \mathit{penguin}(\mathit{Tweety}) , \mathit{bird}(\mathit{Tweety}) , \neg \mathit{fly}(\mathit{Tweety}) \} )$$

Possible sets of conclusions from a default theory are given in terms of extensions of that theory. A default theory can possess multiple extensions because different ways of resolving conflicts among default rules lead to different alternative extensions. For query-answering this implies two options: in the credulous approach, we accept a query if it belongs to one of the extensions of a considered default theory, whereas in the skeptical approach, we accept a query if it belongs to all extensions of the default theory.

Fig. 4: A non-exhaustive number of attempts are made for determining an extension. In each attempt, a guess is made for E , and then Γ ( E ) is calculated.

| Attempt | E | Γ ( E ) | Extension? |
|---------|---|---------|------------|
| 1 | Cn ( { bird(Tweety) } ) | Cn ( { bird(Tweety) , fly(Tweety) } ) | E ⊂ Γ ( E ) |
| 2 | Cn ( { fly(Tweety) } ) | Cn ( { bird(Tweety) , fly(Tweety) } ) | E ⊂ Γ ( E ) |
| 3 | Cn ( { bird(Tweety) , fly(Tweety) } ) | Cn ( { bird(Tweety) , fly(Tweety) } ) | E = Γ ( E ) |
| 4 | Cn ( { bird(Tweety) , ¬ fly(Tweety) } ) | Cn ( { bird(Tweety) } ) | Γ ( E ) ⊂ E |
| 5 | Cn ( { ¬ bird(Tweety) , ¬ fly(Tweety) } ) | Cn ( { bird(Tweety) } ) | Γ ( E ) ⊈ E & E ⊈ Γ ( E ) |
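The guess-and-check procedure of Definition 6 and Fig. 4 can be sketched in Python under a strong simplifying assumption: every formula is a ground literal (negation written with a `~` prefix) and each justification is a conjunction of literals, so that closure under Cn can be replaced by plain set membership. The names `neg`, `gamma` and `is_extension` are hypothetical, and the defaults of Example 11 are instantiated for Tweety by hand.

```python
def neg(literal):
    """Return the complement of a literal written with a '~' prefix."""
    return literal[1:] if literal.startswith("~") else "~" + literal


def gamma(defaults, W, E):
    """Smallest literal set containing W and closed under applicable defaults.

    A default (pre, justifications, consequent) is applied when pre has
    already been derived and no justification literal is contradicted by E.
    """
    result = set(W)
    changed = True
    while changed:
        changed = False
        for pre, justifications, consequent in defaults:
            consistent = all(neg(j) not in E for j in justifications)
            if pre in result and consistent and consequent not in result:
                result.add(consequent)
                changed = True
    return result


def is_extension(defaults, W, E):
    """E is an extension iff it coincides with the putative extension."""
    return gamma(defaults, W, E) == set(E)


# Defaults of Example 11, grounded for Tweety.
D = [
    ("bird", ["~penguin", "fly"], "fly"),
    ("penguin", ["bird"], "bird"),
    ("penguin", ["~fly"], "~fly"),
]

# The five guesses for E, with W = {bird}.
W = {"bird"}
guesses = [{"bird"}, {"fly"}, {"bird", "fly"}, {"bird", "~fly"}, {"~bird", "~fly"}]
for E in guesses:
    print(sorted(E), sorted(gamma(D, W, E)), is_extension(D, W, E))

# With W = {penguin}, the single extension of Example 11 passes the test.
print(is_extension(D, {"penguin"}, {"penguin", "bird", "~fly"}))  # True
```

Only the third guess satisfies E = Γ ( E ). A full implementation would need a classical theorem prover for Cn and for the consistency check, which this sketch deliberately avoids.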

The notion of extension in default logic overlaps with the notion of extension in argumentation. However, there is a key difference between the two notions. In the former, the extensions are derived directly from reasoning with the knowledge, whereas in the latter, the extensions are derived from the instantiated argument graph, and that graph has been generated from the knowledge. As a consequence, default logic suppresses the inconsistency arising within the relevant knowledge whereas argumentation draws the inconsistency out in the form of arguments and counterarguments.

## 4.2 Using default logic in deductive argumentation

We can use default logic as a base logic. Normally, a base logic is monotonic. However, there is no reason to not use a non-monotonic logic such as default logic. Let ⊢_d be the consequence relation for default logic. For a default theory ∆ = ( D,W ), and a propositional formula α , ∆ ⊢_d α denotes that α is in a default logic extension of ( D,W ). So the consequence relation is credulous. We could use a skeptical version as an alternative.

Definition 7. For a default theory Φ = ( D,W ) , and a classical formula α , 〈 Φ, α 〉 is a default argument iff Φ ⊢_d α and there is no proper subset Φ ′ of Φ such that Φ ′ ⊢_d α .

For the above definition, Φ ′ = ( D ′ , W ′ ) is a proper subset of Φ = ( D,W ) iff D ′ ⊆ D and W ′ ⊂ W or D ′ ⊂ D and W ′ ⊆ W .

We now consider a definition for attack where the counterargument attacks an argument when it negates the justification of a default rule in the premises

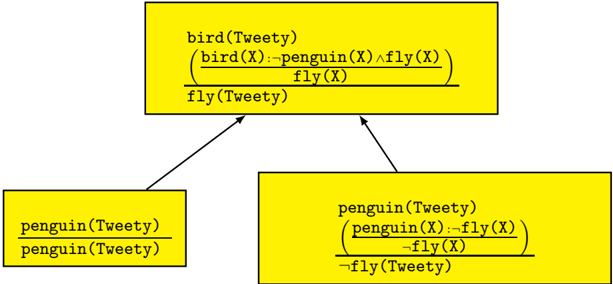

Fig. 5: Example of using default logic as a base logic in deductive argumentation. Here the top argument and the right arguments are default arguments, whereas the left argument is a classical argument. Each argument is presented with the premises above the line, and the claim below the line. Each default rule is given in brackets.

<details>

<summary>Image 6 Details</summary>

### Visual Description

## Flowchart Diagram: Logical Inheritance and Constraints for "Tweety"

### Overview

The diagram illustrates a hierarchical logical structure defining relationships and constraints between entities labeled "bird(Tweety)" and "penguin(Tweety)". Arrows indicate directional dependencies or inheritance, with logical formulas specifying rules governing these relationships.

### Components/Axes

1. **Top Rectangle**:

- Label: `bird(Tweety)`

- Formula:

```

(bird(X) := penguin(X) ∧ fly(X))

fly(X)

fly(Tweety)

```

- Position: Top-center, acting as the root node.

2. **Left Rectangle**:

- Label: `penguin(Tweety)`

- Formula:

```

penguin(X) := penguin(X) ∧ ¬fly(X)

¬fly(X)

¬fly(Tweety)

```

- Position: Bottom-left, connected to the root via an arrow.

3. **Right Rectangle**:

- Label: `penguin(Tweety)`

- Formula:

```

penguin(X) := penguin(X) ∧ ¬fly(X)

¬fly(X)

¬fly(Tweety)

```

- Position: Bottom-right, connected to the root via an arrow.

### Detailed Analysis

- **Logical Formulas**:

- The root node defines `bird(X)` as a penguin that can fly (`penguin(X) ∧ fly(X)`), with `fly(Tweety)` asserting Tweety flies.

- Both penguin nodes define `penguin(X)` as a penguin that cannot fly (`penguin(X) ∧ ¬fly(X)`), with `¬fly(Tweety)` asserting Tweety does not fly.

- Contradiction: The root claims `fly(Tweety)`, while the penguin nodes claim `¬fly(Tweety)`.

- **Arrows**:

- Two arrows from the root node point to the penguin nodes, suggesting inheritance or derivation of properties from the root to the penguin instances.

### Key Observations

1. **Contradiction**: The root node asserts `fly(Tweety)`, while the penguin nodes assert `¬fly(Tweety)`. This creates a logical inconsistency.

2. **Redundancy**: Both penguin nodes share identical labels and formulas, possibly indicating a duplication or emphasis on the penguin constraint.

3. **Variable Scope**: The use of `X` in formulas suggests generalization, but the specific instance `Tweety` is used in conclusions.

### Interpretation

This diagram appears to model a logical paradox or constraint violation in a type hierarchy:

- **Inheritance Conflict**: The root node (`bird(Tweety)`) inherits properties from `penguin(Tweety)` (via the arrow), but the penguin nodes explicitly negate the `fly` property. This creates a contradiction: Tweety is both a flying bird and a non-flying penguin.

- **Possible Use Case**: The diagram might represent a scenario in logic programming (e.g., Prolog) or type theory where conflicting constraints are being analyzed. The redundancy in penguin nodes could highlight a design flaw or a deliberate test case for resolving inconsistencies.

- **Implications**: The contradiction suggests an error in the logical model or a deliberate exploration of how systems handle conflicting rules. Resolving this would require redefining the hierarchy (e.g., separating "bird" and "penguin" as disjoint types) or relaxing constraints (e.g., allowing some penguins to fly).

This analysis underscores the importance of consistency in logical hierarchies and the challenges of modeling real-world entities with overlapping or conflicting attributes.

</details>

of the argument. For this we require a function DefaultRules ( A ) to return the default rules used in the premises of an default argument.

Definition 8. For arguments A and B , where A is a default argument, and B is either a default argument or a classical argument, B justification undercuts A if there is α : β/γ ∈ DefaultRules ( A ) s.t. Claim ( B ) ⊢ ¬ β .

We illustrate justification undercuts in Figure 5. In the figure, the left undercut involves a classical argument, and the right undercut involves a default argument.
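As a worked instance of Definition 8, take the default rule for flying birds from Example 11, instantiated for Tweety, and suppose (as an illustrative assumption) that the counterargument B has the claim penguin ( Tweety ). The justification of the rule is β = ¬ penguin ( Tweety ) ∧ fly ( Tweety ), and the condition Claim ( B ) ⊢ ¬ β holds because

$$\mathit{penguin}(\mathit{Tweety}) \vdash \mathit{penguin}(\mathit{Tweety}) \lor \neg \mathit{fly}(\mathit{Tweety}) \quad \text{and} \quad \mathit{penguin}(\mathit{Tweety}) \lor \neg \mathit{fly}(\mathit{Tweety}) \equiv \neg ( \neg \mathit{penguin}(\mathit{Tweety}) \land \mathit{fly}(\mathit{Tweety}) )$$

A counterargument with the claim ¬ fly ( Tweety ) would satisfy the condition in the same way, since ¬ fly ( Tweety ) also entails the disjunction on the left.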

Using default logic as a base logic in this way does not affect the argument construction being monotonic. Adding a formula to the knowledgebase would not cause an argument to be withdrawn. Rather it may allow further arguments to be constructed.

The advantage of using default logic in arguments is that it allows default inferences to be drawn. This means that we use a well-developed and well-understood formalism for representing and reasoning with the complexities of default knowledge. Hence, we can have a richer and more natural representation of defaults. It also means that inferences can be drawn in the absence of reasons to not draw them. For instance, in Figure 5, we can conclude fly ( Tweety ) from bird ( Tweety ) in the absence of knowing whether it is a penguin. In other words, we just need to know that it is consistent to believe that it is not a penguin and that it is consistent to believe that it can fly. Furthermore, we just need to do this consistency check within the premises of the argument. Note, this consistency check is different to the consistency check used for default negation in DeLP which involves checking consistency with all the strict knowledge (i.e. the subset of knowledge that is assumed to be correct) [GS04].

In this paper, we have only given an indication of how default logic can be used in deductive argumentation to capture aspects of non-monotonic reasoning (for a comprehensive review of default logic, see [Bes89]). There are various ways (e.g. by Santos and Pavão Martins [SM08]) that default logic could be harnessed in deductive argumentation to give richer behaviour. Also, possible definitions for counterarguments could be adapted from an approach to argumentation based on default logic by Prakken [Pra93].

## 5 KLM logics for non-monotonic reasoning

The KLM logics for non-monotonic reasoning are a family of conditional logics developed by Kraus, Lehmann and Magidor [KLM90] to capture aspects of non-monotonic reasoning, where each logic has a proof theory and a possible worlds semantics. We focus here on a member of this family called System P which has the language composed of formulae of the form α ⇒ β where α, β are propositional formulae, and it has the following set of proof rules.

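$$( REF ) \; A \Rightarrow A \quad ( LLE ) \, \frac { \models A \leftrightarrow B \quad A \Rightarrow C } { B \Rightarrow C } \quad ( RW ) \, \frac { \models A \rightarrow B \quad C \Rightarrow A } { C \Rightarrow B }$$

$$( CUT ) \, \frac { A \Rightarrow B \quad A \land B \Rightarrow C } { A \Rightarrow C } \quad ( CM ) \, \frac { A \Rightarrow B \quad A \Rightarrow C } { A \land B \Rightarrow C } \quad ( OR ) \, \frac { A \Rightarrow C \quad B \Rightarrow C } { A \lor B \Rightarrow C }$$

$$( LOOP ) \, \frac { A _ { 0 } \Rightarrow A _ { 1 } \quad A _ { 1 } \Rightarrow A _ { 2 } \quad \ldots \quad A _ { k - 1 } \Rightarrow A _ { k } \quad A _ { k } \Rightarrow A _ { 0 } } { A _ { 0 } \Rightarrow A _ { k } }$$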

We illustrate this proof system using the following examples of inferences. In the examples, we can see how the reasoning is monotonic in that no inferences (i.e. no formula of the form α ⇒ β ) are retracted. However, within these formulae, the defeasible reasoning is encoded. For instance, in Example 12, we have a formula that says 'birds fly', and we have the inference that says 'birds that are penguins do not fly'.

Example 12. From the following statements

$$\mathit{penguin} \Rightarrow \mathit{bird} \quad \mathit{penguin} \Rightarrow \neg \mathit{fly} \quad \mathit{bird} \Rightarrow \mathit{fly}$$

we get the following inferences

$$\begin{array} { l } \mathit{penguin} \land \mathit{bird} \Rightarrow \neg \mathit{fly} \\ \mathit{bird} \Rightarrow \neg \mathit{penguin} \\ \mathit{bird} \lor \mathit{penguin} \Rightarrow \neg \mathit{penguin} \end{array} \quad \begin{array} { l } \mathit{fly} \Rightarrow \neg \mathit{penguin} \\ \mathit{bird} \lor \mathit{penguin} \Rightarrow \mathit{fly} \\ \end{array}$$
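As a worked step, the inference penguin ∧ bird ⇒ ¬ fly follows from the given statements by a single application of the cautious monotonicity rule (CM) from the standard KLM presentation of System P (from A ⇒ B and A ⇒ C , infer A ∧ B ⇒ C ), taking A = penguin , B = bird and C = ¬ fly :

$$( CM ) \, \frac { \mathit{penguin} \Rightarrow \mathit{bird} \quad \mathit{penguin} \Rightarrow \neg \mathit{fly} } { \mathit{penguin} \land \mathit{bird} \Rightarrow \neg \mathit{fly} }$$

The statement bird ⇒ fly is not retracted by this step. It remains derivable alongside the more specific conclusion.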

We can use System P as a base logic in a deductive argumentation system. We start by giving the following definition of an argument.

Definition 9. (Conditional logic argument) For a set of conditional statements Φ and a set of propositional formulae Ψ , if Φ ⊢_P α ⇒ β and ∧ Ψ ≡ α , then a preferential argument is 〈 Φ ∪ Ψ, α ⇒ β 〉 .

Example 13. For premises Φ = { penguin ⇒ bird , penguin ⇒ ¬ fly } and Ψ = { penguin , bird } , the following is a preferential argument.

$$\langle \Phi \cup \Psi , \mathit{penguin} \land \mathit{bird} \Rightarrow \neg \mathit{fly} \rangle$$

Next, we can define a range of attack relations. The following definition is not exhaustive as there are further options that we could consider.

Definition 10. Some preferential attack relations: Let A 1 = 〈 Φ ∪ Ψ, α ⇒ β 〉 and A 2 = 〈 Φ ′ ∪ Ψ ′ , γ ⇒ δ 〉 .

- A 2 is a rebuttal of A 1 iff δ ⊢ ¬ β and γ ⊢ α
- A 2 is a direct rebuttal of A 1 iff δ ≡ ¬ β and γ ⊢ α
- A 2 is an undercut of A 1 iff δ ⊢ ¬ α
- A 2 is a canonical undercut of A 1 iff δ ≡ ¬ α
- A 2 is a direct undercut of A 1 iff there is σ ∈ Ψ such that δ ≡ ¬ σ

Example 14. For A 1 and A 2 below, A 2 is a direct rebuttal of A 1 , but not vice versa,

$$\begin{array} { l } { A _ { 1 } = \langle \Phi _ { 1 } \cup \Psi _ { 1 } , \mathit{bird} \Rightarrow \mathit{fly} \rangle } \\ { A _ { 2 } = \langle \Phi _ { 2 } \cup \Psi _ { 2 } , \mathit{penguin} \land \mathit{bird} \Rightarrow \neg \mathit{fly} \rangle } \end{array}$$

where Φ 1 = { bird ⇒ fly } , Ψ 1 = { bird } , Φ 2 = { penguin ⇒ bird , penguin ⇒ ¬ fly } , and Ψ 2 = { penguin , bird } .
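The rebuttal conditions of Definition 10 can be checked mechanically for Example 14. The following Python sketch models only the claim α ⇒ β of each argument as a pair of Boolean functions over truth assignments, and decides classical entailment by truth table. All names (`ATOMS`, `valuations`, `entails`, `equivalent`, `is_rebuttal`, `is_direct_rebuttal`) are hypothetical, and the premises Φ and Ψ are left out for brevity.

```python
from itertools import product

ATOMS = ("bird", "penguin", "fly")


def valuations():
    """Yield every truth assignment over ATOMS as a dict."""
    for values in product([False, True], repeat=len(ATOMS)):
        yield dict(zip(ATOMS, values))


def entails(f, g):
    """Classical entailment: g holds in every assignment where f holds."""
    return all(g(v) for v in valuations() if f(v))


def equivalent(f, g):
    return entails(f, g) and entails(g, f)


def is_rebuttal(a2, a1):
    """Each argument is given as (antecedent, consequent) of its claim."""
    (gamma, delta), (alpha, beta) = a2, a1
    return entails(delta, lambda v: not beta(v)) and entails(gamma, alpha)


def is_direct_rebuttal(a2, a1):
    (gamma, delta), (alpha, beta) = a2, a1
    return equivalent(delta, lambda v: not beta(v)) and entails(gamma, alpha)


# Claims of A1 and A2 from Example 14.
a1 = (lambda v: v["bird"], lambda v: v["fly"])
a2 = (lambda v: v["penguin"] and v["bird"], lambda v: not v["fly"])

print(is_direct_rebuttal(a2, a1))  # True
print(is_direct_rebuttal(a1, a2))  # False: bird does not entail penguin ∧ bird
```

The asymmetry comes from the condition γ ⊢ α , which lets the more specific antecedent attack the more general one but not the other way round.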

Example 15. For A 1 and A 2 below, A 2 is a direct rebuttal of A 1 , but not vice versa,

$$\begin{array} { l } { A _ { 1 } = \langle \Phi _ { 1 } \cup \Psi _ { 1 } , \mathit{matchIsStruck} \Rightarrow \mathit{matchLights} \rangle } \\ { A _ { 2 } = \langle \Phi _ { 2 } \cup \Psi _ { 2 } , \mathit{matchIsStruck} \land \mathit{matchIsWet} \Rightarrow \neg \mathit{matchLights} \rangle } \end{array}$$

Harnessing System P, and the other members of the KLM family, offers the ability to undertake intuitive reasoning with defeasible rules. This allows for plausible consequences from knowledge to be investigated. It also allows for more efficient representation of knowledge to be undertaken since fewer rules would be required when compared with using simple logic. Furthermore, this reasoning can be implemented using automated reasoning [GGOP09].

## 6 Further conditional logics

Conditional logics are a valuable alternative to classical logic for knowledge representation and reasoning. Whilst many conditional logics extend classical logic, the implication introduced is normally more restricted than the strict implication used in classical logic. This means that many knowledge modelling situations, such as for non-monotonic reasoning, can be better captured by conditional logics (such as [Del87,KLM90,ACS92]).

By using conditional logic as a base logic, we have a range of options for more effectively modelling complex real-world scenarios. For instance, conditional logics can be used to capture hypothetical statements of the form 'If α were true, then β would be true'. This is done by introducing an extra connective ⇒ to extend a classical logic language. Informally, α ⇒ β is valid when β is true in the possible worlds where α is true. Representing and reasoning with such knowledge in argumentation is valuable because useful arguments exist that refer to fictitious and hypothetical situations as shown by Besnard et al. [BGR13].

So we are proposing that we should move beyond simple logic (i.e. modus ponens) that is essentially the logic used for most structured argumentation systems. Even though formalisms such as ASPIC+ and ABA are general frameworks that accept a wide range of proof systems, most examples of using the frameworks involve simple rule-based systems (i.e. rules with modus ponens).

Once we accept that we can move beyond simple rule-based systems, we have a wide range of formalisms that we could consider, in particular conditional logics. This then raises the question of what proof theory do we want for reasoning with the defeasible rules. Central to such considerations is whether we want to have contrapositive reasoning.

In considering this issue for argumentation, Caminada recalls two simple examples where the inference of contrapositives is problematic [Cam08].

- Men usually do not have beards. But, this does not imply that if someone does have a beard, then that person is not a man.

- If I buy a lottery ticket, then I will normally not win a prize. But, this does not imply that if I do win a prize, then I did not buy a ticket.

To identify situations where contrapositive reasoning is potentially desirable, Caminada described the following two types of situation.

- Epistemic: Defeasible rules describe how certain facts hold in relation to each other in the world. So the world exists independently of the rules. Here, contrapositive reasoning may be appropriate.

- Constitutive: Defeasible rules (in part) describe how the world is constructed (e.g. regulations). So the world does not exist independently of the rules. Here, contrapositive reasoning is not appropriate.

The first example below illustrates the first kind of situation, and the second example illustrates the second kind of situation. Note that the knowledge in each example is syntactically identical.

Example 16. The following rules describe an epistemic situation. From these rules, it would be reasonable to infer ¬A from L.

- O ⇒ A: goods ordered three months ago will probably have arrived now.

- A ⇒ C: arrived goods will probably have a customs document.

- L ⇒ ¬C: goods listed as unfulfilled will probably lack a customs document.

Example 17. The following rules describe a constitutive situation. From these rules, it would not be reasonable to infer ¬M from P.

- S ⇒ M: snoring in the library is a form of misbehaviour.

- M ⇒ R: misbehaviour in the library can result in removal from the library.

- P ⇒ ¬R: professors cannot be removed from the library.
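Since the two examples are syntactically identical, a proof theory that contraposes rules treats them in the same way. The following sketch makes this visible with a naive forward-chaining procedure in which contraposition can be switched on or off. The encoding of literals as strings with a `-` prefix for negation, and the function names, are assumptions made here; the sketch also ignores defeasibility by applying every rule as if it were strict.

```python
def negate(literal):
    """Return the complement of a literal written as 'X' or '-X'."""
    return literal[1:] if literal.startswith("-") else "-" + literal

def closure(facts, rules, contrapose=False):
    """Forward-chain over single-premise rules (body, head);
    optionally also use the contrapositive of each rule."""
    active = list(rules)
    if contrapose:
        active += [(negate(head), negate(body)) for body, head in rules]
    derived = set(facts)
    changed = True
    while changed:
        changed = False
        for body, head in active:
            if body in derived and head not in derived:
                derived.add(head)
                changed = True
    return derived

example_16 = [("O", "A"), ("A", "C"), ("L", "-C")]
example_17 = [("S", "M"), ("M", "R"), ("P", "-R")]

print("-A" in closure({"L"}, example_16, contrapose=True))   # True
print("-M" in closure({"P"}, example_17, contrapose=True))   # True
print("-M" in closure({"P"}, example_17, contrapose=False))  # False
```

With contraposition enabled, the desirable inference of ¬A in Example 16 and the undesirable inference of ¬M in Example 17 are both obtained, so the choice cannot be made on syntactic grounds alone.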

A wide variety of conditional logics have been proposed to capture various aspects of conditionality including deontics, counterfactuals, relevance, and probability [Che75]. These give a range of proof theories and semantics for capturing different aspects of reasoning with conditions, and many of these do not support contrapositive reasoning.

## 7 Modelling defeasible knowledge

Another important aspect of non-monotonic reasoning is how we model defeasible knowledge in a meaningful way. A defeasible or default rule is a rule that is generally true but has exceptions and so is sometimes untrue. But this explanation still leaves some latitude as to what we really mean by a defeasible rule. To illustrate this issue, consider the following rule.

$$\text{birds fly} \quad (*)$$

If we take all the normal situations (or observations, or worlds or days), and in the majority of these birds fly, then (*) may be equal to the following

$$\text {birds normally fly}$$

Or if we take the set of all birds (or all the birds you have seen, or read about, or watched on TV) and the majority of this set fly, then (*) may be equal to the following

$$\text {most birds fly}$$

Or perhaps if we take the set of all birds, and we know that the majority have the capability to fly, then (*) could be equated with the following, though this in turn raises the question of what it is to know that something has a capability.

$$\text{most birds have the capability to fly}$$

Or if we take an idealized notion of a bird that we in a society are happy to agree upon, then we could equate (*) with the following

$$\text{a prototypical bird flies}$$

As another illustration of this issue, consider the following rule, which is in the same vein as the 'birds fly' example but introduces further complications.

$$\text{birds lay eggs} \quad (**)$$

We may take (**) as meaning the following, but on a given day (or in a given situation or observation) a given bird will probably not lay an egg.

$$\text{birds normally lay eggs}$$

Or we could say (**) means the following, but half the bird population is male and therefore does not lay eggs.

$$\text{most birds have the capability to lay eggs}$$

Or perhaps we could say (**) means the following.

$$\text{most species of bird reproduce by laying eggs}$$

However, the above involves a lot of missing information to be filled in, and in general it is challenging to know how to formalize a defeasible rule.

Obviously, different applications call for different ways to interpret defeasible rules. Specifying the underlying ontology by recourse to description logic may be useful, and in some situations formalization in a probabilistic logic (see for example, [Bac90]) may be appropriate.
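To make the contrast between the readings of (**) concrete, the following sketch computes three of them over a small, entirely hypothetical set of records. The records, the field layout, and the simplification that 'capable of laying eggs' coincides with 'female' are assumptions made for illustration only.

```python
# Hypothetical toy records: (species, sex, laid_an_egg_today).
birds = [
    ("robin", "female", True),
    ("robin", "female", False),
    ("robin", "male", False),
    ("robin", "male", False),
    ("penguin", "female", False),
    ("penguin", "male", False),
]

# Hypothetical species-level knowledge: does the species reproduce by laying eggs?
species_lays_eggs = {"robin": True, "penguin": True}

def fraction(items, predicate):
    """Fraction of the items that satisfy the predicate."""
    return sum(1 for item in items if predicate(item)) / len(items)

# Reading 1: on a given day, a given bird lays an egg.
laid_today = fraction(birds, lambda b: b[2])

# Reading 2: a given bird has the capability to lay eggs (assumed: female).
capable = fraction(birds, lambda b: b[1] == "female")

# Reading 3: a given species reproduces by laying eggs.
by_species = fraction(list(species_lays_eggs.values()), bool)

for name, value in [("laid today", laid_today),
                    ("capable", capable),
                    ("by species", by_species)]:
    print(f"{name}: {value:.2f}  most? {value > 0.5}")
```

In this toy data only the species-level reading exceeds one half, so whether 'most' is justified depends on which set is being quantified over.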

## 8 Conclusions

Distinguishing between the monotonic and non-monotonic aspects of a deductive argumentation system is important for better understanding the nature of deductive argumentation. Each deductive argumentation system is based on a base logic which can be monotonic or non-monotonic. In either case, the construction of arguments and counterarguments is monotonic in the sense that adding knowledge to the knowledgebase may increase the set of arguments and counterarguments but it cannot reduce the set of arguments or counterarguments. However, at the evaluation level, deductive argumentation is non-monotonic since adding arguments and counterarguments to the instantiated graph may cause arguments to be withdrawn from an extension, or even cause extensions to be withdrawn.
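The non-monotonicity at the evaluation level can be illustrated with a small abstract argument graph. The sketch below takes the grounded extension as one possible choice of semantics and computes it by iterating the characteristic function from the empty set. The argument names `A1`, `A2`, `A3` and the function name are hypothetical.

```python
def grounded_extension(arguments, attacks):
    """Least fixed point of the characteristic function of a finite
    abstract argument graph, given as a set of (attacker, target) pairs."""
    attackers = {a: {x for (x, y) in attacks if y == a} for a in arguments}
    extension = set()
    while True:
        # An argument is defended if every one of its attackers is
        # itself attacked by some member of the current extension.
        defended = {
            a for a in arguments
            if all(any((d, b) in attacks for d in extension)
                   for b in attackers[a])
        }
        if defended == extension:
            return extension
        extension = defended

# The graph only grows from one step to the next.
step_1 = grounded_extension({"A1"}, set())
step_2 = grounded_extension({"A1", "A2"}, {("A2", "A1")})
step_3 = grounded_extension({"A1", "A2", "A3"},
                            {("A2", "A1"), ("A3", "A2")})

print(sorted(step_1))  # ['A1']
print(sorted(step_2))  # ['A2']
print(sorted(step_3))  # ['A1', 'A3']
```

Although no argument is ever removed from the graph, `A1` is withdrawn from the extension when its counterargument `A2` is added, and it is reinstated when `A3` is added.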

Defeasible formulae (such as defeasible rules) are an important kind of knowledge in argumentation, as in non-monotonic reasoning. They are formulae that are often correct, but sometimes can be incorrect. Depending on what aspect of defeasible knowledge we want to model, there is a wide variety of base logics that we can use to represent and reason with it. This range includes simple logic and classical logic when we use appropriate conventions such as abnormality predicates, in which case we can overturn an argument based on defeasible knowledge by recourse to counterarguments that attack the assumption of normality. This range of base logics also includes default logic and conditional logics.
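The abnormality-predicate convention can be sketched as follows, assuming a propositional rule language with modus ponens. The literals `bird`, `penguin`, `ab` and `flies`, the `-` prefix for negation, and the restriction to one recorded support per claim are simplifications introduced for this illustration.

```python
def negate(literal):
    """Return the complement of a literal written as 'x' or '-x'."""
    return literal[1:] if literal.startswith("-") else "-" + literal

def arguments(facts, rules):
    """Build (support, claim) pairs by forward chaining, recording the
    facts that each derived claim relies on (first derivation only)."""
    found = {fact: frozenset([fact]) for fact in facts}
    changed = True
    while changed:
        changed = False
        for body, head in rules:
            if head not in found and all(b in found for b in body):
                found[head] = frozenset().union(*(found[b] for b in body))
                changed = True
    return [(support, claim) for claim, support in found.items()]

def attacks(attacker, target):
    """The attacker's claim contradicts a premise in the target's support."""
    return negate(attacker[1]) in target[0]

# bird and not abnormal => flies; penguin => abnormal.
rules = [(("bird", "-ab"), "flies"), (("penguin",), "ab")]

before = arguments({"bird", "-ab"}, rules)
after = arguments({"bird", "-ab", "penguin"}, rules)

flies_arg = next(a for a in after if a[1] == "flies")
ab_arg = next(a for a in after if a[1] == "ab")

print(any(attacks(a, flies_arg) for a in before))  # False
print(attacks(ab_arg, flies_arg))                  # True
```

The argument for `flies` is still constructed after `penguin` is added, but it now has a counterargument that attacks its normality assumption `-ab`.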

## References

- ABG + 17. K. Atkinson, P. Baroni, M. Giacomin, A. Hunter, H. Prakken, C. Reed, G. Simari, M. Thimm, and S. Villata. Towards artificial argumentation. AI Magazine , 38(3):25-36, 2017.

- AC02. L. Amgoud and C. Cayrol. A reasoning model based on the production of acceptable arguments. Annals of Mathematics and Artificial Intelligence , 34:197-215, 2002.

- ACS92. H. Arló-Costa and S. Shapiro. Maps between conditional logic and nonmonotonic logic. In Proceedings of the 3rd International Conference on Principles of Knowledge Representation and Reasoning (KR'92) , pages 553-565. Morgan Kaufmann, 1992.

- Bac90. F. Bacchus. Representing and Reasoning with Probabilistic Knowledge. A Logical Approach to Probabilities . MIT Press, 1990.

- BDKT97. A. Bondarenko, P. Dung, R. Kowalski, and F. Toni. An abstract, argumentation-theoretic approach to default reasoning. Artificial Intelligence , 93:63-101, 1997.

- Bes89. Ph. Besnard. An Introduction to Default Logic . Springer, 1989.

- BGG18. P. Baroni, D. Gabbay, and M. Giacomin, editors. Handbook of Formal Argumentation , volume 1. College Publications, 2018.

- BGR13. Ph. Besnard, E. Gregoire, and B. Raddaoui. A conditional logic-based argumentation framework. In Proceedings of the 7th International Conference on Scalable Uncertainty Management (SUM'13) , volume 7958 of Lecture Notes in Computer Science , pages 44-56. Springer, 2013.

- BH01. Ph. Besnard and A. Hunter. A logic-based theory of deductive arguments. Artificial Intelligence , 128:203-235, 2001.

- BH05. Ph. Besnard and A. Hunter. Practical first-order argumentation. In Proceedings of the 20th National Conference on Artificial Intelligence (AAAI'05) , pages 590-595. MIT Press, 2005.

- BH08. Ph. Besnard and A. Hunter. Elements of Argumentation . MIT Press, 2008.

- BH14. Ph. Besnard and A. Hunter. Constructing argument graphs with deductive arguments. Argument and Computation , 5(1):5-30, 2014.

- BHP09. E. Black, A. Hunter, and J. Pan. An argument-based approach to using multiple ontologies. In Proceedings of the 3rd International Conference on Scalable Uncertainty Management (SUM'09) , volume 5785 of Lecture Notes in Computer Science , pages 68-79. Springer, 2009.

- Bre91. G. Brewka. Nonmonotonic Reasoning: Logical Foundations of Commonsense . Cambridge University Press, 1991.

- Cam08. M. Caminada. On the issue of contraposition of defeasible rules. In Proceedings of the International Conference on Computational Models of Argument (COMMA'08) , pages 109-115, 2008.

- Cay95. C. Cayrol. On the relation between argumentation and non-monotonic coherence-based entailment. In Proceedings of the 14th International Joint Conference on Artificial Intelligence (IJCAI'95) , pages 1443-1448, 1995.

- Che75. B. Chellas. Basic conditional logic. Journal of Philosophical Logic , 4:133-153, 1975.

- Del87. J. Delgrande. A first-order logic for prototypical properties. Artificial Intelligence , 33:105-130, 1987.

- EGH95. M. Elvang-Gøransson and A. Hunter. Argumentative logics: Reasoning with classically inconsistent information. Data & Knowledge Engineering , 16(2):125-145, 1995.

- EGKF93. M. Elvang-Gøransson, P. Krause, and J. Fox. Acceptability of arguments as 'logical uncertainty'. In Proceedings of the 2nd European Conference on Symbolic and Quantitative Approaches to Reasoning and Uncertainty (ECSQARU'93) , volume 747 of Lecture Notes in Computer Science , pages 85-90. Springer, 1993.

- GGOP09. L. Giordano, V. Gliozzi, N. Olivetti, and G. Pozzato. Analytic tableaux calculi for KLM logics of nonmonotonic reasoning. ACM Transactions on Computational Logic , 10(3):1-18, 2009.

- GH11. N. Gorogiannis and A. Hunter. Instantiating abstract argumentation with classical logic arguments: Postulates and properties. Artificial Intelligence , 175(9-10):1479-1497, 2011.

- GMAB04. G. Governatori, M. Maher, G. Antoniou, and D. Billington. Argumentation semantics for defeasible logic. Journal of Logic and Computation , 14(5):675-702, 2004.

- GS04. A. García and G. Simari. Defeasible logic programming: An argumentative approach. Theory and Practice of Logic Programming , 4:95-138, 2004.

- GS14. A. García and G. Simari. Defeasible logic programming: DeLP-servers, contextual queries, and explanations for answers. Argument and Computation , 5(1):63-88, 2014.

- Hae98. R. Haenni. Modelling uncertainty with propositional assumptions-based systems. In Applications of Uncertainty Formalisms , volume 1455 of Lecture Notes in Computer Science , pages 446-470. Springer, 1998.

- Hae01. R. Haenni. Cost-bounded argumentation. International Journal of Approximate Reasoning , 26(2):101-127, 2001.

- Hun10. A. Hunter. Base logics in argumentation. In Proceedings of the 3rd International Conference on Computational Models of Argument (COMMA'10) , volume 216 of Frontiers in Artificial Intelligence and Applications , pages 275-286. IOS Press, 2010.

- Hun13. A. Hunter. A probabilistic approach to modelling uncertain logical arguments. International Journal of Approximate Reasoning , 54(1):47-81, 2013.

- KLM90. S. Kraus, D. Lehmann, and M. Magidor. Non-monotonic reasoning, preferential models and cumulative logics. Artificial Intelligence , 44:167-207, 1990.

- McC80. J. McCarthy. Circumscription: A form of non-monotonic reasoning. Artificial Intelligence , 13(1-2):23-79, 1980.

- MH08. N. Mann and A. Hunter. Argumentation using temporal knowledge. In Proceedings of the 2nd Conference on Computational Models of Argument (COMMA'08) , volume 172 of Frontiers in Artificial Intelligence and Applications , pages 204-215. IOS Press, 2008.

- MP14. S. Modgil and H. Prakken. The ASPIC+ framework for structured argumentation: a tutorial. Argument and Computation , 5(1):31-62, 2014.

- MWF10. M. Moguillansky, R. Wassermann, and M. Falappa. An argumentation machinery to reason over inconsistent ontologies. In Advances in Artificial Intelligence (IBERAMIA 2010) , volume 6433 of LNCS , pages 100-109. Springer, 2010.

- Pol87. J. Pollock. Defeasible reasoning. Cognitive Science , 11(4):481-518, 1987.

- Pol92. J. Pollock. How to reason defeasibly. Artificial Intelligence , 57(1):1-42, 1992.

- Pra93. H. Prakken. An argumentation framework in default logic. Annals of Mathematics and Artificial Intelligence , 9:93-132, 1993.

- Pra10. H. Prakken. An abstract framework for argumentation with structured arguments. Argument and Computation , 1:93-124, 2010.

- PS97. H. Prakken and G. Sartor. Argument-based extended logic programming with defeasible priorities. Journal of Applied Non-classical Logic , 7:25-75, 1997.

- Rei80. R. Reiter. A logic for default reasoning. Artificial Intelligence , 13:81-132, 1980.

- RS09. I. Rahwan and G. Simari, editors. Argumentation in Artificial Intelligence . Springer, 2009.

- SL92. G. Simari and R. Loui. A mathematical treatment of defeasible reasoning and its implementation. Artificial Intelligence , 53:125-157, 1992.

- SM08. E. Santos and J. Pavão Martins. A default logic based framework for argumentation. In Proceedings of the European Conference on Artificial Intelligence (ECAI'08) , pages 859-860, 2008.

- Ton13. F. Toni. A generalized framework for dispute derivation in assumption-based argumentation. Artificial Intelligence , 195:1-43, 2013.

- Ton14. F. Toni. A tutorial on assumption-based argumentation. Argument and Computation , 5(1):89-117, 2014.

- ZL13. X. Zhang and Z. Lin. An argumentation framework for description logic ontology reasoning and management. Journal of Intelligent Information Systems , 40(3):375-403, 2013.

- ZZXL10. X. Zhang, Z. Zhang, D. Xu, and Z. Lin. Argumentation-based reasoning with inconsistent knowledge bases. In Advances in Artificial Intelligence , volume 6085 of Lecture Notes in Computer Science , pages 87-99. Springer, 2010.