## p-Bits for Probabilistic Spin Logic

Kerem Y. Camsari, Brian M. Sutton, and Supriyo Datta

School of Electrical and Computer Engineering, Purdue University, West Lafayette, IN 47907, USA

(Dated: 13 March 2019)

We introduce the concept of a probabilistic or p-bit, intermediate between the standard bits of digital electronics and the emerging q-bits of quantum computing. We show that low barrier magnets or LBM's provide a natural physical representation for p-bits and can be built either from perpendicular magnets (PMA) designed to be close to the in-plane transition or from circular in-plane magnets (IMA). Magnetic tunnel junctions (MTJ) built using LBM's as free layers can be combined with standard NMOS transistors to provide three-terminal building blocks for large-scale probabilistic circuits that can be designed to perform useful functions. Interestingly, this three-terminal unit looks just like the 1T/MTJ device used in embedded MRAM technology, with only one difference: the use of an LBM for the MTJ free layer. We hope that the concept of p-bits and p-circuits will help open up new application spaces for this emerging technology. However, a p-bit need not involve an MTJ: any fluctuating resistor could be combined with a transistor to implement it, while completely digital implementations using conventional CMOS technology are also possible. The p-bit also provides a conceptual bridge between two active but disjoint fields of research, namely stochastic machine learning and quantum computing. First, there are the applications that are based on the similarity of a p-bit to the binary stochastic neuron (BSN), a well-known concept in machine learning. Three-terminal p-bits could provide an efficient hardware accelerator for the BSN. Second, there are the applications that are based on the p-bit being like a poor man's q-bit. Initial demonstrations based on full SPICE simulations show that several optimization problems, including quantum annealing, are amenable to p-bit implementations which can be scaled up at room temperature using existing technology.

## CONTENTS

| I. | Introduction |
|------|--------------------------------------------|
| | A. Between a bit and a q-bit |
| | B. Binary stochastic neuron (BSN) |
| II. | Hardware Implementation |
| | A. Three-terminal p-Bit |
| | B. Weighted p-bit |
| III. | Applications of p-circuits |
| | A. Applications: Machine learning inspired |
| | B. Applications: Quantum inspired |
| IV. | Conclusions |
| | Acknowledgments |
| V. | References |

## I. INTRODUCTION

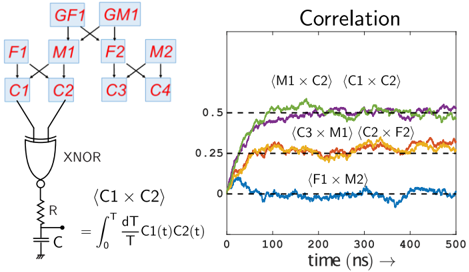

## A. Between a bit and a q-bit

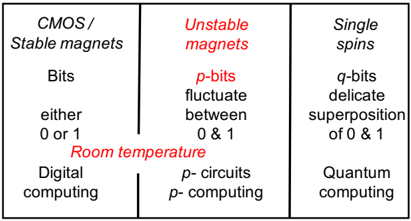

Modern digital circuits are based on binary bits that can take on one of two values, 0 and 1, and are stored using well-developed technologies at room temperature. At the other extreme are quantum circuits based on q-bits, which are delicate superpositions of 0 and 1 requiring the development of novel technologies typically working at cryogenic temperatures. This article is about what we call probabilistic bits or p-bits, which are classical entities fluctuating rapidly between 0 and 1. We will argue that we can use existing technology to build what we call p-circuits that should function robustly at room temperature while addressing some of the applications commonly associated with quantum circuits (Fig. 1).

How would we represent a p-bit physically? Let us first consider the two extremes, namely the bit and the q-bit. A q-bit is often represented by the spin of an electron, while a bit is often represented by binary voltage levels in digital elements like flip-flops and floating-gate transistors. However, bits can also be represented by magnets 1, which are basically collections of a very large number of spins. In a magnet, internal interactions make the energy a minimum when the spins all point either parallel or anti-parallel to a specific direction, called the easy axis. These two directions represent 0 and 1 and are separated by an energy barrier, $E_b$, that ensures their stability.

How large is the barrier? A nanomagnet flips back and forth between 0 and 1 on a time scale set by the energy barrier: $\tau \sim \tau_0 \exp(E_b / k_B T)$, where $\tau_0$ typically has a value between picoseconds and nanoseconds 2. Assuming a $\tau_0$ of a nanosecond, a barrier of $E_b \sim 40\, k_B T$, for example, would retain a 0 (or a 1) for $\sim 10$ years, making it suitable for long-term memory, while a smaller barrier of $E_b \sim 14\, k_B T$ would only ensure a short-term memory of $\sim 1$ ms 3.
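The retention estimate above can be checked numerically. The following is a minimal sketch, assuming the attempt time of one nanosecond used in the text; the function name `retention_time` is ours, chosen for illustration.

```python
import math

SECONDS_PER_YEAR = 365.25 * 24 * 3600


def retention_time(barrier_in_kT, tau0=1e-9):
    """Return tau = tau0 * exp(E_b / k_B T) in seconds.

    barrier_in_kT is the barrier E_b expressed in units of k_B T.
    tau0 = 1 ns is the value assumed in the text.
    """
    return tau0 * math.exp(barrier_in_kT)


for barrier in (40, 14, 1):
    tau = retention_time(barrier)
    print(f"E_b = {barrier:>2} k_B T: tau = {tau:.3g} s "
          f"= {tau / SECONDS_PER_YEAR:.3g} years")
```

With these assumptions a 40 $k_B T$ barrier gives a retention time of several years and a 14 $k_B T$ barrier gives roughly a millisecond, in line with the order-of-magnitude figures quoted in the text.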

It has been recognized that this stability problem also represents an opportunity. Unstable low barrier magnets (LBM) could be used to implement useful functions like random number generation (RNG) 4-6 by sensing the randomly fluctuating magnetization to provide a random time-varying voltage. With such applications in mind, we would want magnets to have as low a barrier as possible, so that many random numbers are generated in a given amount of time. Indeed, a 'zero' barrier magnet with $E_b \leq k_B T$ flipping back and forth in less than a nanosecond would be ideal.

How can we reduce the energy barrier? Since $E_b = H_K M_s \Omega / 2$, the basic approach is to reduce the total magnetic moment by reducing the volume $\Omega$ and/or to engineer a small anisotropy field $H_K$ 7. This can be done with perpendicular magnets (PMA) designed to be close to the in-plane transition. A less challenging approach seems to be to use circular in-plane magnets (IMA) 7-9. We will refer to all these possibilities collectively as LBM's, as opposed to, say, superparamagnets, which have more specific connotations in different contexts 3,10-15.
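To give a feel for the numbers, the sketch below evaluates $E_b = H_K M_s \Omega / 2$ for a disk-shaped magnet. It assumes CGS units (Oe, emu/cm³, cm³, erg), in which the expression holds as written. The diameter, thickness, saturation magnetization, and anisotropy fields are hypothetical values chosen only for illustration and are not taken from the paper.

```python
import math

K_B_ERG_PER_K = 1.380649e-16  # Boltzmann constant in erg/K (CGS)


def barrier_in_kT(h_k_oe, m_s_emu_per_cc, diameter_nm, thickness_nm,
                  temperature_k=300.0):
    """E_b = H_K * M_s * Omega / 2 for a disk, in units of k_B T.

    Inputs are in CGS units; the geometry is converted from nm to cm.
    """
    radius_cm = 0.5 * diameter_nm * 1e-7
    volume_cc = math.pi * radius_cm ** 2 * (thickness_nm * 1e-7)
    energy_erg = 0.5 * h_k_oe * m_s_emu_per_cc * volume_cc
    return energy_erg / (K_B_ERG_PER_K * temperature_k)


# Hypothetical 50 nm x 1 nm disk with M_s = 1000 emu/cc.
stable = barrier_in_kT(h_k_oe=1000.0, m_s_emu_per_cc=1000.0,
                       diameter_nm=50.0, thickness_nm=1.0)
low = barrier_in_kT(h_k_oe=40.0, m_s_emu_per_cc=1000.0,
                    diameter_nm=50.0, thickness_nm=1.0)
print(f"H_K = 1 kOe: E_b = {stable:.1f} k_B T")
print(f"H_K = 40 Oe: E_b = {low:.2f} k_B T")
```

Because the barrier is linear in both $H_K$ and $\Omega$, reducing either one by a given factor lowers $E_b$ by the same factor, which is the design lever described above.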

We could use LBM's to represent the probabilistic bits or p-bits that we alluded to. We have argued that if these p-bits can be incorporated into proper transistor-like structures with gain, then the resulting three-terminal p-bits could be interconnected to build p-circuits that perform useful functions 10,12,16, not unlike the way transistors are interconnected to build useful digital circuits. However, unlike digital circuits, these probabilistic p-circuits incorporate features reminiscent of quantum circuits.

This connection was nicely articulated by Feynman in a seminal paper 17, where he described a quantum computer that could provide an efficient simulation of quantum many-body problems. But to set the stage for quantum computers, he first described a probabilistic p-computer which could efficiently simulate classical many-body problems:

. . . 'the other way to simulate a probabilistic nature, which I'll call N . . . is by a computer C which itself is probabilistic, . . . in which the output is not a unique function of the input . . . it simulates nature in this sense: that C goes from some ...initial state . . . to some final state with the same probability that N goes from the corresponding initial state to the corresponding final state . . . If you repeat the same experiment in the computer a large number of times . . . it will give the frequency of a given final state proportional to the number of times, with approximately the same rate . . . as it happens in nature.'

There are many practical problems of great interest which involve large networks of probabilistic quantities. These problems should be simulated efficiently by p-computers of the type envisioned by Feynman. Our purpose here is to discuss appropriate hardware building blocks that can be used to build them 16 and possible applications they could be used for. In this context, let us note that although spins provide a nice unifying paradigm for illustrating the transition from bits to p-bits and q-bits, the physical realization of a p-bit need not involve spins or spintronics; non-spintronic implementations can be just as feasible.

FIG. 1. Between a bit and a q-bit: The p-bit. Digital computers use deterministic strings of 0's and 1's called bits to represent information in a binary code. The emerging field of quantum computing is based on q-bits representing a delicate superposition of 0 and 1 that typically requires cryogenic temperatures. We envision a class of probabilistic computers or p-computers operating robustly at room temperature with existing technology based on p-bits which are classical entities fluctuating rapidly between 0 and 1. Although spins provide a nice unifying paradigm for illustrating the transition from bits to p-bits and q-bits, it should be noted that the physical realization of a p-bit need not involve spins or spintronics; non-spintronic implementations can be just as feasible.

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Diagram: Computing Paradigms Based on Physical Systems

### Overview

The diagram categorizes computing paradigms into three vertical sections based on physical systems: **CMOS/Stable magnets**, **Unstable magnets**, and **Single spins**. Each section describes the computational units (bits, p-bits, q-bits), their behavior, and associated computing frameworks. The middle section ("Unstable magnets") is highlighted in red, emphasizing its transitional or intermediate role.

---

### Components/Axes

1. **Left Column (CMOS/Stable magnets)**:

- **Bits**: Defined as "either 0 or 1" (classical binary logic).

- **Digital computing**: Associated with stable physical systems (CMOS transistors, stable magnets).

- **Room temperature**: Indicates operational conditions.

2. **Middle Column (Unstable magnets)**:

- **p-bits**: Described as "fluctuate between 0 & 1" (probabilistic binary states).

- **p-computing**: Framework for probabilistic computing.

- **Room temperature**: Operational conditions (highlighted in red).

3. **Right Column (Single spins)**:

- **q-bits**: Defined as "delicate superposition of 0 & 1" (quantum states).

- **Quantum computing**: Framework for quantum computation.

- **Single spins**: Physical implementation (e.g., electron spins in quantum dots).

---

### Detailed Analysis

- **Left Column**:

- Classical digital computing relies on stable physical systems (CMOS, magnets) with deterministic bits (0 or 1).

- Operates at room temperature, aligning with conventional electronics.

- **Middle Column**:

- **p-bits** represent probabilistic states (fluctuating between 0 and 1), suggesting noise or uncertainty in physical systems (unstable magnets).

- **p-computing** bridges classical and quantum paradigms, leveraging probabilistic behavior.

- Red highlighting may indicate experimental or emerging research focus.

- **Right Column**:

- **q-bits** exploit quantum superposition, enabling parallel computation of 0 and 1 simultaneously.

- **Quantum computing** requires extreme isolation (e.g., cryogenic temperatures) to maintain coherence, contrasting with room-temperature operation in other paradigms.

---

### Key Observations

1. **Stability vs. Delicacy**:

- Classical systems (left) prioritize stability, while quantum systems (right) require fragile, isolated environments.

- The middle column ("Unstable magnets") represents a transitional state with intermediate stability.

2. **Red Highlighting**:

- The term "Room temperature" in the middle column is emphasized in red, possibly indicating its significance in enabling practical p-computing without cryogenic requirements.

3. **Physical Systems**:

- Each column maps a distinct physical system (magnets, spins) to a computing paradigm, illustrating the interplay between hardware and computational theory.

---

### Interpretation

The diagram illustrates the evolution of computing paradigms from classical (CMOS/stable magnets) to quantum (single spins). The middle column ("Unstable magnets") acts as a conceptual bridge, where probabilistic p-bits and p-computing explore the space between deterministic classical systems and quantum superposition. The red emphasis on "Room temperature" in the middle column suggests that p-computing may offer a pragmatic middle ground, avoiding the extreme conditions required for quantum computing while still leveraging probabilistic behavior. This aligns with emerging research into probabilistic hardware (e.g., stochastic computing) as a potential alternative to traditional binary systems. The diagram underscores the trade-offs between stability, computational power, and operational feasibility across paradigms.

</details>

| Bits | p-bits | q-bits |
|-------------------------------------------|----------------------------------------------------|------------------------------------------|
| Either 0 or 1 | Fluctuate between 0 & 1 | Delicate superposition of 0 & 1 |
| CMOS / stable magnets | Unstable magnets | Single spins |
| Room temperature | Room temperature | |
| Digital computing | p-circuits, p-computing | Quantum computing |

## B. Binary stochastic neuron (BSN)

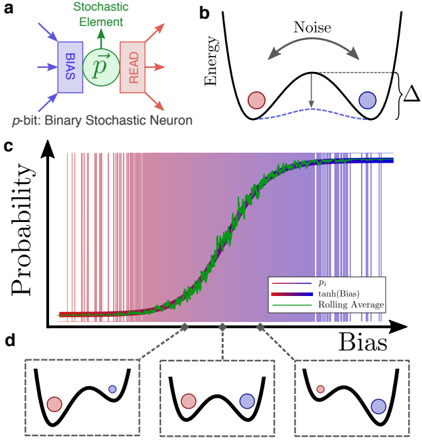

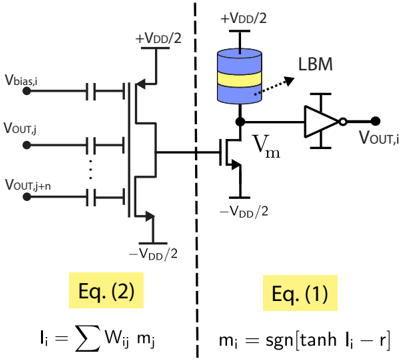

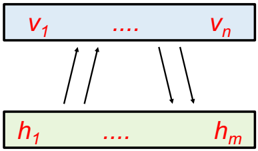

Interestingly, the concept of a p-bit connects naturally to another concept well known in the field of machine learning, namely that of a binary stochastic neuron (BSN) 18,19 whose response $m_i$ to an input $I_i$ can be described mathematically by

$$m_i = \mathrm{sgn}\left[\tanh(I_i) - r\right] \tag{1}$$

where $r$ is a random number uniformly distributed between $-1$ and $+1$ 20. Here we are using bipolar variables $m_i = \pm 1$ to represent the 0 and 1 states. If we use binary variables $m_i = 0, 1$ the corresponding equation would look different 21. When combined with a synaptic function described by

$$I_i = \sum_j W_{ij}\, m_j \tag{2}$$

we have a probabilistic network that can be designed to perform a wide variety of functions through a proper choice of the weights, $W_{ij}$. A separate bias term $h_i$ is often included in Eq. 2 but we will not write it explicitly, assuming that it is included as the weighted input from an extra p-bit that is always $+1$.
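The neuron and synapse equations can be illustrated with a short software sketch. It assumes the BSN takes the form $m_i = \mathrm{sgn}[\tanh(I_i) - r]$ with $r$ uniform in $[-1, +1]$ and the synapse takes the form $I_i = \sum_j W_{ij} m_j$. The function names `bsn`, `synapse`, and `average_output` are ours. With this form the time-averaged output of an isolated neuron should approach $\tanh(I_i)$.

```python
import math
import random


def bsn(I, rng=random):
    """Binary stochastic neuron: m = sgn[tanh(I) - r], r uniform in [-1, 1]."""
    r = rng.uniform(-1.0, 1.0)
    return 1 if math.tanh(I) - r >= 0 else -1


def synapse(W, m, i):
    """Synaptic input to neuron i: I_i = sum_j W[i][j] * m[j]."""
    return sum(W[i][j] * m[j] for j in range(len(m)))


def average_output(I, samples=20000, seed=0):
    """Sample an isolated neuron repeatedly and return its mean output."""
    rng = random.Random(seed)
    return sum(bsn(I, rng) for _ in range(samples)) / samples


for I in (-2.0, -0.5, 0.0, 0.5, 2.0):
    print(f"I = {I:+.1f}: <m> = {average_output(I):+.3f}, "
          f"tanh(I) = {math.tanh(I):+.3f}")
```

The input therefore acts as a bias: at $I_i = 0$ the output is an unbiased coin flip, and large positive or negative inputs pin the output to $+1$ or $-1$.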

Eqs. 1 and 2 are widely used in many modern algorithms, but they are commonly implemented in software. Much work has gone into developing suitable hardware accelerators for matrix multiplication of the type described by Eq. 2 (see, for example, Ref. 22). Three-terminal p-bits would provide a hardware accelerator for Eq. 1. Together they would function like a probabilistic computer.

Note that a hardware accelerator for Eq. (1) requires more than just an RNG. We need a tunable RNG whose output $m_i$ can be biased through the input terminal $I_i$ as shown in Fig. 2. Two distinct designs for a three-terminal p-bit have been described 12,13, both of which use a magnetic tunnel junction (MTJ), a popular 'spintronic' device used in magnetic random access memory (MRAM) 23. However, MRAM applications use stable MTJ's that can store information for many years, while a p-bit makes use of 'bad' MTJ's with low barriers.

The LBM-based implementation of the BSN described here is conceptually very different from a clocked approach using stable magnets where a stochastic output is obtained every time a clock pulse is applied 16,24-30 . All of these approaches work with stable magnets, although LBM's could be used to reduce the switching power that is needed.

In this paper we will focus on unclocked, asynchronous operation using LBM-based hardware accelerators for the BSN (Eq. (1)) 10-12. But can an asynchronous circuit provide the sequential updating of the BSN's described by Eq. (1) that is required for Gibbs sampling 31 and is commonly enforced in software through a for loop? The answer is 'yes', as shown both in SPICE simulations 10 and in Arduino-based emulations 32, provided the synaptic function in Eq. (2) has a delay that is less than or comparable to the response time of the BSN, Eq. (1).
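The software for loop mentioned above can be sketched as follows. This is a clocked, sequential emulation, not a model of the asynchronous hardware. It assumes the BSN form $m_i = \mathrm{sgn}[\tanh(I_i) - r]$ and the synapse $I_i = \sum_j W_{ij} m_j$. The two-p-bit network and the coupling strength `J` are hypothetical choices for illustration. For two p-bits with a symmetric coupling `J`, sequential updating samples a Boltzmann distribution for which the correlation $\langle m_1 m_2 \rangle$ equals $\tanh(J)$, which gives a simple check.

```python
import math
import random


def gibbs_sweeps(W, sweeps=20000, seed=1):
    """Sequentially update every p-bit once per sweep; return all samples."""
    rng = random.Random(seed)
    n = len(W)
    m = [rng.choice((-1, 1)) for _ in range(n)]
    samples = []
    for _ in range(sweeps):
        for i in range(n):  # one p-bit at a time, using the latest states
            I_i = sum(W[i][j] * m[j] for j in range(n))    # synapse
            r = rng.uniform(-1.0, 1.0)
            m[i] = 1 if math.tanh(I_i) - r >= 0 else -1    # neuron
        samples.append(tuple(m))
    return samples


J = 0.5  # hypothetical symmetric coupling between two p-bits
W = [[0.0, J], [J, 0.0]]
samples = gibbs_sweeps(W)
corr = sum(a * b for a, b in samples) / len(samples)
print(f"<m1 m2> = {corr:.3f}, tanh(J) = {math.tanh(J):.3f}")
```

The key point is that each update uses the most recent state of its neighbours. That is the ordering the asynchronous circuit has to reproduce by keeping synaptic delays short compared with the neuron response time.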

It should be noted that unclocked operation is a rarity in the digital world and most applications will probably use a clocked, sequential approach with dedicated sequencers that update connected p-bits sequentially. A fully digital implementation of p-circuits using such dedicated sequencers has been realized in Ref. 32 . Synchronous operation can be particularly useful if synaptic delays are large enough to interfere with natural asynchronous operation.

Here, we focus on unclocked operation in order to bring out the role of a p-bit in providing a conceptual bridge between two very active fields of research, namely stochastic machine learning and quantum computing. On the one hand, p-bits could provide a hardware accelerator for the BSN (Eq. (1)), thereby enabling applications inspired by machine learning (Section III A). On the other hand, p-bits are the classical analogs of q-bits: robust room temperature entities accessible with current technology that could enable at least some of the applications inspired by quantum computing (Section III B). But before we discuss applications, let us briefly discuss possible hardware approaches to implementing p-bits (Section II).

## II. HARDWARE IMPLEMENTATION

## A. Three-terminal p-Bit

RNG's represent an important component of modern electronics and have been implemented using many different approaches, including Johnson-Nyquist noise of

FIG. 2. Three-terminal p-bit: a. A hardware implementation of the BSN (Eq. (1)) requires a central stochastic element with input and output terminals that provide the ability to read and bias the element. b. The stochastic element can be visualized as going back and forth between two low energy states at a rate that depends exponentially on the barrier $E_b$ (labeled $\Delta$ in the figure) that separates them: $\tau = \tau_0 \exp(\Delta / k_B T)$. c. The bias terminal adjusts the relative energies of the two states, thereby controlling the probabilities of finding the element in the two states.

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Diagram: Binary Stochastic Neuron Model and Energy Landscape Analysis

### Overview

The image presents a technical diagram of a binary stochastic neuron model (a), its energy landscape (b), a probability curve (c), and bias-dependent energy state visualizations (d). The components illustrate how stochastic neurons process inputs, manage noise, and convert bias into probabilistic outputs.

### Components/Axes

**a. Binary Stochastic Neuron Diagram**

- **Components**:

- **p-bit**: Binary stochastic neuron (blue box)

- **Stochastic Element**: Green circle labeled "Stochastic Element"

- **READ**: Red box labeled "READ"

- **Flow**: Arrows indicate input (p-bit → Stochastic Element) and output (Stochastic Element → READ).

- **Labels**:

- "p-bit: Binary Stochastic Neuron" (top-left)

- "Stochastic Element" (green)

- "READ" (red)

**b. Energy Landscape**

- **Axes**: Energy (y-axis, implicit) vs. Position (x-axis, implicit).

- **Elements**:

- Parabolic energy well with two states:

- **Red dot**: High-energy state (unstable)

- **Blue dot**: Low-energy state (stable)

- **Noise**: Curved arrow labeled "Noise" between states.

- **Δ**: Energy difference between states (vertical arrow).

**c. Probability vs. Bias Graph**

- **Axes**:

- **y-axis**: Probability (labeled "Probability")

- **x-axis**: Bias (labeled "Bias")

- **Data Series**:

- **Pi (red line)**: Probability of firing (P_i)

- **tanh(Bias) (purple line)**: Sigmoid function of bias

- **Rolling Average (green line)**: Smoothed version of Pi

- **Legend**: Located at bottom-right, with color-coded labels.

**d. Bias-Dependent Energy States**

- **Insets**: Three energy landscapes (left to right) showing increasing bias.

- **Red dot**: High-energy state (unstable)

- **Blue dot**: Low-energy state (stable)

- **Δ**: Energy difference increases with bias (larger Δ in rightmost inset).

### Detailed Analysis

**c. Probability vs. Bias Graph Trends**

1. **Pi (red line)**:

- Starts near 0 at low bias, increases linearly with bias.

- Reaches ~0.8 probability at high bias.

2. **tanh(Bias) (purple line)**:

- Sigmoid curve: ~0.1 at low bias, ~0.9 at high bias.

3. **Rolling Average (green line)**:

- Smooths Pi's fluctuations, closely follows tanh(Bias) trend.

**d. Energy State Visualizations**

- As bias increases:

- Energy difference (Δ) grows (larger gap between red and blue dots).

- Red dot (high-energy state) becomes less probable, blue dot (low-energy) more probable.

### Key Observations

1. **Noise Impact**: Noise (b) causes energy state transitions, modeled as stochastic switching.

2. **Bias-Driven Probability**: Higher bias (c, d) increases the likelihood of the neuron firing (Pi → tanh(Bias)).

3. **Smoothing Effect**: Rolling Average (green) reduces noise in Pi's probability curve.

### Interpretation

The model demonstrates how stochastic neurons balance noise and bias to produce probabilistic outputs. The energy landscape (b, d) shows that bias shifts the system toward the low-energy (firing) state, while noise introduces randomness. The graph (c) quantifies this:

- **tanh(Bias)** acts as a threshold function, converting bias into a sigmoidal probability.

- **Pi** represents the raw stochastic output, smoothed by the Rolling Average to mimic real-world neural behavior.

- The energy difference (Δ) in d directly correlates with the neuron's sensitivity to bias, explaining how external inputs modulate firing probability.

This framework aligns with biophysical neuron models, where stochastic elements and energy landscapes explain decision-making under uncertainty.

</details>

resistors 33, phase noise of ring oscillators 34, process variations of SRAM cells 35 and other physical mechanisms. However, as noted earlier, we need what appears to be a completely new 3-terminal device whose input $I_i$ biases its stochastic output $m_i$ as shown in Fig. 2c.

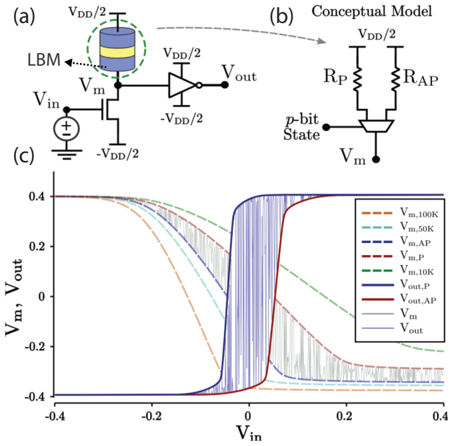

A recent paper 13 shows that such a 3-terminal tunable RNG can be built simply by combining a 2-terminal fluctuating resistance with a transistor (Fig. 3). This seems very attractive at least in the short run, since the basic structure (Fig. 3a) closely resembles the 1T/MTJ structure commonly used for MRAM applications. The first modification that is required is to replace the stable free layer of the MTJ with an LBM. The second modification is to add an inverter to the drain output that amplifies the fluctuations caused by the MTJ resistance.

An MTJ is a device with two magnetic contacts whose electrical resistance $R_{MTJ}$ takes on one of two values, $R_P$ and $R_{AP}$, depending on whether the magnets are parallel (P) or antiparallel (AP). MTJs are typically used as memory devices, though in recent years applications of MTJs for logic and novel types of computation have been discussed 36-42.

Standard MTJ devices go to great lengths to ensure that the magnets they use are stable and can store information for many years. The resistance of bad MTJ's, on the other hand, constantly fluctuates between $R_P$ and $R_{AP}$ 3. If we put it in series with a transistor which is

FIG. 3. Embedded MRAM p-bit: a. An NMOS pull-down transistor in series with a stochastic MTJ whose resistance fluctuates between $R_P$ and $R_{AP}$, as shown in b. c. Using a 14 nm HP-FinFET model 43, the mid-point voltage $V_m$ and the output voltage $V_{out}$ are simulated in SPICE as functions of the input voltage $V_{in}$. Several fixed resistances are shown to convey how $V_m$ would vary with modifications to the parallel and antiparallel resistances.

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Circuit Diagram and Voltage Transfer Characteristics

### Overview

The image presents a technical schematic of a p-bit circuit (a) and its conceptual model (b), accompanied by voltage transfer characteristics (c). The diagram illustrates a circuit built around a low barrier magnet (LBM) with voltage inputs/outputs, while the graph shows the relationship between input voltage (V_in) and mid-point/output voltages (V_m, V_out) under varying conditions.

### Components/Axes

**Diagram (a):**

- **LBM Circuit**: Contains a loop with a yellow band (likely a resistive element), connected to:

- **V_in**: Input voltage source

- **V_m**: Memory voltage node

- **V_out**: Output voltage node

- **V_dd/2**: Half of the supply voltage (reference point)

- **Conceptual Model (b)**:

- **p-bit State**: Simplified representation with:

- **R_P**: Parallel resistance

- **R_AP**: Anti-parallel resistance

- **V_m**: Memory voltage source

- **V_dd/2**: Supply voltage reference

**Graph (c):**

- **X-axis**: Input voltage (V_in) ranging from -0.4 to +0.4

- **Y-axis**: Memory voltage (V_m) and Output voltage (V_out), both ranging from -0.4 to +0.4

- **Legend**:

- Solid lines: V_m values (e.g., V_m100K, V_m50K, V_m10K, V_m1P)

- Dashed lines: V_out values (e.g., V_out_P, V_out_AP)

- Dotted lines: Reference voltages (V_m, V_out)

### Detailed Analysis

**Graph Trends**:

1. **V_m (Solid Lines)**:

- Sharp transition at V_in ≈ 0 (hysteresis-like behavior)

- Higher V_m values (e.g., V_m100K) show wider hysteresis loops

- Lower V_m values (e.g., V_m1P) exhibit narrower transitions

2. **V_out (Dashed Lines)**:

- Step-like transitions at V_in ≈ ±0.2

- V_out_P (blue dashed) and V_out_AP (red dashed) show complementary switching

3. **Reference Lines (Dotted)**:

- V_m (gray) and V_out (black) form baseline thresholds

**Key Observations**:

- **Hysteresis Effect**: V_m shows memory retention (non-linear response to V_in)

- **Bistable Behavior**: V_out_P and V_out_AP represent distinct states (parallel/anti-parallel)

- **Voltage Scaling**: Higher V_m values correlate with broader hysteresis ranges

- **Symmetry**: V_in = 0 acts as a critical threshold for state switching

### Interpretation

The circuit demonstrates a **p-bit** (probabilistic bit) functionality, where:

1. **LBM Circuit (a)**:

- The yellow band in the loop likely represents a resistive memory element (e.g., RRAM)

- V_m controls the memory state via voltage-dependent resistance

- V_out reflects the output state (parallel/anti-parallel configuration)

2. **Conceptual Model (b)**:

- R_P and R_AP represent the two stable resistance states of the memory element

- V_m acts as a control voltage to switch between states

- V_dd/2 provides a reference for bipolar switching

3. **Graph (c)**:

- The sharp transitions confirm **threshold-based switching** (typical of memristive devices)

- Hysteresis in V_m suggests **non-volatility** (retains state without power)

- Complementary V_out_P/V_out_AP indicates **bistable logic** (0/1 states)

**Technical Implications**:

- The system implements **analog-to-digital conversion** via voltage thresholds

- Hysteresis enables **error-resistant memory** (resists noise-induced state changes)

- The p-bit architecture supports **in-memory computing** (data processing within memory cells)

**Uncertainties**:

- Exact resistance values (R_P, R_AP) are not quantified

- Temperature dependence of V_m is implied but not explicitly modeled

- Non-ideal effects (e.g., leakage currents) are not shown in the schematic

</details>

a voltage controlled resistance $R_T(V_{in})$ then the voltage $V_m$ (Fig. 3) can be written as

$$V_m = \frac{V_{DD}}{2}\,\frac{R_T - R_{MTJ}}{R_T + R_{MTJ}}$$

The magnitude of this fluctuating voltage $V_m$ is largest when the transistor resistance $R_T \sim R_P$ or $R_{AP}$ but gets suppressed if $R_T \ll R_P$ or if $R_T \gg R_{AP}$. The input voltage controls $R_T$, thereby tuning the stochastic output $V_m$ as shown in Fig. 3c. It was shown that an additional inverter provides an output that is approximately described by an expression that looks just like the BSN (Eq. 1)

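$$\frac{V_{out,i}}{V_{DD}} \approx \frac{1}{2}\,\mathrm{sgn}\left[\tanh\left(\frac{V_{in,i}}{V_0}\right) - r\right] \tag{3}$$

but with dimensionless variables like $m_i$ and $I_i$ replaced by scaled circuit voltages $V_{out}$ and $V_{in}$, where $V_0$ denotes the voltage scale of the input.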
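The dependence of the mid-point fluctuation on the transistor resistance can be sketched with a simple voltage divider. This assumes the MTJ connects the mid-point to $+V_{DD}/2$ and the pull-down transistor connects it to $-V_{DD}/2$, so that $V_m = (V_{DD}/2)(R_T - R_{MTJ})/(R_T + R_{MTJ})$. The supply voltage and the resistance values below are hypothetical and chosen only for illustration.

```python
import math


def v_mid(r_t, r_mtj, v_dd=0.8):
    """Mid-point voltage of an MTJ (to +V_DD/2) in series with a
    transistor of resistance r_t (to -V_DD/2)."""
    return 0.5 * v_dd * (r_t - r_mtj) / (r_t + r_mtj)


def swing(r_t, r_p, r_ap, v_dd=0.8):
    """Size of the V_m fluctuation as the MTJ toggles between R_P and R_AP."""
    return abs(v_mid(r_t, r_p, v_dd) - v_mid(r_t, r_ap, v_dd))


R_P, R_AP = 10e3, 20e3  # hypothetical MTJ resistances in ohms
for r_t in (1e2, 1e3, math.sqrt(R_P * R_AP), 1e5, 2e6):
    print(f"R_T = {r_t:10.0f} ohm: swing = {swing(r_t, R_P, R_AP) * 1e3:7.2f} mV")
```

Under these assumptions the swing peaks when $R_T$ is comparable to the MTJ resistances and collapses when the transistor is either much more or much less resistive than the MTJ. That is how the input voltage tunes the randomness seen at the mid-point.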

The scheme in Fig. 3 provides tunability through the series transistor and does not involve the physics of the fluctuating resistor. Ideally, the magnet is unaffected by the change in the transistor resistance, though the drain current could, in principle, pin the magnet. In our simulations, which are based on Ref. 13, we take the pinning current into account through a spin-polarized current ($\vec{I}_s$) proportional to an effective fixed layer polarization ($P$) and the drain current ($I_D$), $\vec{I}_s = P\, I_D\, \hat{x}$, where $\hat{x}$ is the fixed layer direction. This spin current enters the stochastic Landau-Lifshitz-Gilbert (sLLG) equation that calculates an instantaneous magnetization, which in turn controls the MTJ resistance.

We note that any significant pinning around zero input voltage $V_{in,i}$ has to be minimized through proper design, especially for low barrier perpendicular magnets, which are relatively easy to pin. Unintentional pinning 44 should in general not be an issue for circular in-plane LBM's due to the strong demagnetizing field. The pinning behavior for the average (steady-state) magnetization can be qualitatively understood by numerical simulations of the sLLG equation. In the case of low-barrier perpendicular magnets the spin-torque pinning needs to overcome the thermal noise, and therefore the pinning current is of order $I_{PMA} \approx 2(q/\hbar)\,\alpha kT$, where $\alpha$ is the damping coefficient of the magnet. In the case of circular in-plane magnets, the pinning current is of order $I_{IMA} \approx 2(q/\hbar)\,\alpha H_D M_s \mathrm{Vol.}$, which is much larger than $I_{PMA}$ since, for typical parameters, $H_D M_s \mathrm{Vol.} \gg kT$.
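The order of magnitude of the pinning currents can be estimated numerically. The sketch below reads the prefactor as $2(q/\hbar)\alpha$ times an energy, which is the reading that yields units of current. The damping value and the demagnetization energy of 100 $kT$ are hypothetical placeholders, not values from the paper.

```python
Q = 1.602176634e-19      # elementary charge in C
HBAR = 1.054571817e-34   # reduced Planck constant in J*s
K_B = 1.380649e-23       # Boltzmann constant in J/K


def pinning_current_pma(alpha, temperature_k=300.0):
    """I_PMA ~ 2 (q / hbar) * alpha * kT, in amperes."""
    return 2.0 * (Q / HBAR) * alpha * K_B * temperature_k


def pinning_current_ima(alpha, demag_energy_joule):
    """I_IMA ~ 2 (q / hbar) * alpha * (H_D M_s Vol), energy given in joules."""
    return 2.0 * (Q / HBAR) * alpha * demag_energy_joule


alpha = 0.01                      # hypothetical damping coefficient
kT = K_B * 300.0
i_pma = pinning_current_pma(alpha)
i_ima = pinning_current_ima(alpha, 100.0 * kT)  # hypothetical 100 kT
print(f"I_PMA ~ {i_pma * 1e6:.3f} uA")
print(f"I_IMA ~ {i_ima * 1e6:.3f} uA (ratio {i_ima / i_pma:.0f})")
```

The ratio of the two currents is simply the demagnetization energy measured in units of $kT$, which is why circular in-plane magnets are much harder to pin unintentionally.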

Since the state of the magnet is not affected, if the input voltage $V_{in,i}$ in Eq. 3 is changed at $t = 0$, the statistics of the output voltage $V_{out,i}$ will respond within tens of picoseconds (typical transistor switching speeds) 45, irrespective of the fluctuation rates of the magnet. However, the magnet fluctuations will determine the correlation time of the random number $r$ in Eq. 3.

Alternatively one can envision structures where the input controls the statistics of the fluctuating resistor itself, through phenomena such as the spin-Hall effect 12 or the magneto-electric effect 46 based on a voltage control of magnetism (see for example 47,48 ). In that case, both the speed of response and the correlation time of the random number r will be determined by the specific phenomenon involved.

Non-spintronic implementations: Note that the structure in Fig. 3 could use any fluctuating resistor, including CMOS-based units, in place of the MTJ, showing that the physical realization of a p-bit need not involve spins 49. For example, a linear feedback shift register (LFSR) is often used to generate a pseudo-randomly fluctuating bit stream 50. We can apply this fluctuating voltage to the gate of a transistor to obtain a fluctuating resistor which can replace the MTJ in Fig. 3a. We note that the main appeal of the structure in Fig. 3 lies in its simplicity, since a 1T/1MTJ design coupled with two more transistors provides the tunable randomness in a compact transistor-like building block. Using completely digital p-circuit implementations 32 could offer short-term scalability and reliability, but they would consume a much larger area and power per p-bit.
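As an illustration of the LFSR mentioned above, here is a minimal sketch of a 16-bit Fibonacci LFSR. The text does not specify a particular register, so the width, the tap positions (bits 16, 14, 13, 11, a commonly quoted maximal-length choice), and the seed are assumptions made for this example.

```python
def lfsr16_step(state):
    """Advance a 16-bit Fibonacci LFSR (taps 16, 14, 13, 11) by one step."""
    bit = (state ^ (state >> 2) ^ (state >> 3) ^ (state >> 5)) & 1
    return (state >> 1) | (bit << 15)


def lfsr16_bits(seed=0xACE1, count=16):
    """Return `count` output bits (the least significant bit of each state)."""
    state = seed
    bits = []
    for _ in range(count):
        bits.append(state & 1)
        state = lfsr16_step(state)
    return bits


def lfsr16_period(seed=0xACE1):
    """Count the steps until the register returns to its seed state."""
    state = lfsr16_step(seed)
    steps = 1
    while state != seed:
        state = lfsr16_step(state)
        steps += 1
    return steps


print("first 16 bits:", lfsr16_bits())
print("period:", lfsr16_period())
```

The bit stream is deterministic and periodic, so it is only pseudo-random. In the scheme described above each bit would drive a transistor gate so that the channel toggles between a low and a high resistance, standing in for the two MTJ states.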

## B. Weighted p-bit

The structure in Fig. 3 gives us a 'neuron' that implements Eq. 1 in hardware. Such neurons have to be used in conjunction with a 'synapse' that implements

FIG. 4. Example of a weighted p-bit integrating relevant parts of the synapse onto the neurons: Leveraging floating-gate devices along the lines proposed in neuMOS 51 devices, a collection of synapse inputs (from 1 to n) can be summed to produce the bias voltage, $V_{IN,i}$, for a voltage-driven p-bit 52.

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Block Diagram: Signal Processing System with Neural Network Component

### Overview

The diagram illustrates a hybrid analog-digital signal processing system combining a neural network-like computation with analog voltage biasing and buffering. The system is divided into two primary functional blocks separated by a dashed line, with labeled equations governing the relationships between components.

### Components/Axes

1. **Left Block (Eq. 2)**:

- **Inputs**:

- `Vbias,i` (bias voltage input)

- `Vout,j` and `Vout,j+n` (sequential output voltages)

- **Equation**:

- `I_i = Σ W_ij * m_j` (weighted sum of inputs, representing neural network neuron activation)

- **Components**:

- Voltage sources: `+VDD/2` and `-VDD/2` (supply rails)

- Buffer/amplifier block labeled "LBM" (likely a linear buffer or operational amplifier)

2. **Right Block (Eq. 1)**:

- **Equation**:

- `m_i = sgn[tanh(I_i - r)]` (sigmoid-like activation function with threshold `r`)

- **Components**:

- Voltage source: `+VDD/2` (biasing input to tanh function)

- Output terminal: `Vout,i` (final processed signal)

### Detailed Analysis

- **Left Block**:

- The summation `I_i = Σ W_ij * m_j` suggests a neural network neuron computing a weighted sum of inputs `m_j` with weights `W_ij`.

- Voltage sources `+VDD/2` and `-VDD/2` provide differential biasing, likely to center the signal around 0V for analog processing.

- The "LBM" block acts as a linear buffer or amplifier, maintaining signal integrity before passing to the right block.

- **Right Block**:

- The activation function `m_i = sgn[tanh(I_i - r)]` introduces non-linearity:

- `tanh(I_i - r)` squashes the input `I_i` (centered at `r`) into a hyperbolic tangent range.

- `sgn()` converts the result to a binary output (`+1` or `-1`), acting as a hard limiter.

- The output `Vout,i` is directly connected to the LBM, indicating analog voltage-level representation of the binary output.

### Key Observations

1. **Signal Flow**:

- Inputs `Vblas,i`, `Vout,j`, and `Vout,j+n` are processed through the left block to compute `I_i`.

- `I_i` is then thresholded and non-linearly transformed in the right block to produce `m_i`, which feeds back into the left block via `Vout,i`.

2. **Biasing**:

- The `+VDD/2` and `-VDD/2` sources ensure symmetric voltage ranges for analog components, critical for accurate tanh function operation.

3. **Feedback Loop**:

- `Vout,i` (output of the right block) is fed back as `Vout,j`/`Vout,j+n` in the left block, suggesting iterative processing or recurrent network behavior.

### Interpretation

This diagram represents a **neuromorphic analog-digital hybrid system**, where:

- The left block implements a **linear neuron** (Eq. 2) with analog voltage inputs and outputs.

- The right block applies a **binary thresholding function** (Eq. 1) to introduce non-linearity, mimicking spiking neuron behavior.

- The **LBM** ensures signal fidelity during analog-to-digital conversion, while the `VDD/2` biasing maintains operational stability.

The system likely models a **spiking neural network (SNN)** or **event-based vision sensor** front-end, where analog voltages represent spike timings, and binary outputs trigger downstream processing. The feedback loop (`Vout,i` → `Vout,j`) implies recurrent connections, enabling temporal memory or adaptive weighting.

</details>

Eq. 2. Alternatively we could design a 'weighted p-bit' that integrates each element of Eq. 1 with the relevant part of Eq. 2. For example, we could use floating gate devices along the lines proposed in neuMOS 51 devices as shown in Fig. 4. From charge conservation we can write

$$\sum_j C_{ij}\,\left(V_{\mathrm{IN},i} - V_{\mathrm{OUT},j}\right) + C_0\,V_{\mathrm{IN},i} = 0$$

where C 0 is the input capacitance of the transistor. This can be rewritten as

$$V_{\mathrm{IN},i} = \frac{\sum_j C_{ij}\,V_{\mathrm{OUT},j}}{C_0 + \sum_j C_{ij}}$$

By scaling V in and V out (see Eq. 3) to play the roles of the dimensionless quantities I i and m i respectively, we can recast Eq. 4 in a form similar to Eq. 2:

$$I_i = \sum_j W_{ij}\, m_j, \qquad W_{ij} \propto \frac{C_{ij}}{C_0 + \sum_k C_{ik}}$$

The weights $W_{ij}$ can be adjusted by controlling the specific capacitors $C_{ij}$ that are connected. The range of allowed weights and connections is then limited by the routing topology and neuMOS device size. Note that the control of weights through $C_{ij}$ works best if $C_0 \gg \sum_j C_{ij}$ so that $W_{ij} \approx C_{ij}/C_0$; however, it is possible to design a weighted p-bit without this assumption (that is, when $C_0$ is not much larger than $\sum_j C_{ij}$), as discussed in detail in Ref. 52 .
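The dependence of the weights on the capacitor values can be sketched numerically. The snippet below assumes the capacitive-divider form W_ij = C_ij / (C_0 + Σ_j C_ij) that follows from the charge-conservation argument above, with the voltage scaling prefactor set to 1 for simplicity. The capacitance values and the function name are hypothetical.

```python
def capacitive_weights(c_row, c0):
    """Weights of one p-bit from its coupling capacitors C_ij and input capacitance C_0.

    Assumes W_ij = C_ij / (C_0 + sum_j C_ij); any voltage-scaling prefactor is taken as 1.
    """
    total = c0 + sum(c_row)
    return [c / total for c in c_row]


# Hypothetical values in arbitrary units, chosen so that C_0 >> sum_j C_ij.
c_row = [1.0, 2.0, 3.0]
c0 = 100.0

exact = capacitive_weights(c_row, c0)
approx = [c / c0 for c in c_row]    # the limit W_ij ~ C_ij / C_0
```

In this regime each weight is set almost independently by its own capacitor. When C_0 is comparable to the sum of the coupling capacitors, changing one C_ij rescales every weight in the row through the shared denominator.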

Similar control can also be achieved through a network of resistors. The weights are given by the same expression, but with capacitances $C_{ij}$ replaced by conductances $G_{ij}$ 22 . However, the input conductance $G_0$ of FETs is typically very low, so that an external conductance has to be added to make $G_0 \gg \sum_j G_{ij}$.

## III. APPLICATIONS OF P-CIRCUITS

As noted earlier, real applications involve p-bits interconnected by a synapse that can be implemented off-chip either in software or with a hardware matrix multiplier, but then it is necessary to transfer data back and forth between Eq. 1 and Eq. 2. Therefore, a low-level compact hardware implementation of a p-bit along with a local synapse as envisioned in Fig. 4 could be a hardware accelerator for many types of applications, some of which will be discussed in this section. In the capacitively weighted p-bit design of Fig. 4, the weights and connectivity of the p-bit could be dynamically adjusted based on the encoding of a given problem by leveraging a network of programmable switches 53 as would be encountered in FPGAs. Such a p-bit with local interconnections would look like a compact nanodevice implementation of highly scaled digital spiking neurons of neuromorphic chips such as TrueNorth 54 . Alternatively, the interconnection function could be performed off-chip using standard CMOS devices such as FPGAs or GPUs while p-bits are implemented in a standalone chip by modifying embedded MRAM technology. Note, however, that the off-chip implementation of the interconnection matrix would impose a timing constraint for an asynchronous mode of operation, which requires the weighted summation operation (Eq. 2) to operate much faster than the p-bit operation (Eq. 1) for proper convergence 10,55 . A full on-chip implementation of a reconfigurable p-bit could function as a low-power, efficient hardware accelerator for applications in Machine Learning and Quantum Computing, but in the near term a heterogeneous multi-chip synapse / p-bit combination could also prove to be useful.

Now that we have discussed some possible approaches to implementing Eqs. 1 and 2 in hardware, let us present a few illustrative p-bit networks that can implement useful functions and can be built using existing technology. Unless otherwise stated, these results are obtained from full SPICE simulations 56 that solve the stochastic Landau-Lifshitz-Gilbert equation coupled with the PTM-based transistor models in SPICE 43 to model the embedded MTJ based 3-terminal p-bit described in Fig. 3.

## A. Applications: Machine learning inspired

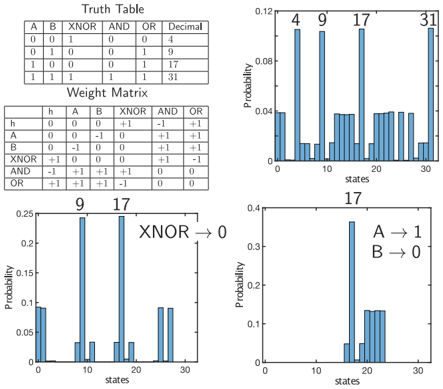

Bayesian inference: A natural application of stochastic circuits is in the simulation of networks whose nodes are stochastic in nature (see, for example, 16,57-59 ). An archetypal example is a genetic network, a small version of which is shown in Fig. 5. A well-known concept is that of genetic correlation or relatedness between different members of a family tree. For example, assuming that each of the children C 1 and C 2 gets half of its genes from each of the parents F 1 and M 1 , we can write their correlation as:

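$$\langle C_1 C_2 \rangle = \left\langle \left(\tfrac{1}{2} F_1 + \tfrac{1}{2} M_1\right)\left(\tfrac{1}{2} F_1 + \tfrac{1}{2} M_1\right) \right\rangle = \tfrac{1}{4}\langle F_1 F_1 \rangle + \tfrac{1}{2}\langle F_1 M_1 \rangle + \tfrac{1}{4}\langle M_1 M_1 \rangle = \tfrac{1}{2}$$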

assuming F 1 and M 1 are uncorrelated. Hence the well-known result that siblings have 50% relatedness. Similarly one can work out the relatedness of more distant relationships like that of an aunt M 1 and her nephew C 3 , which turns out to be 25%.
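The 50% and 25% figures can be checked with a small software Monte Carlo. This is only a sketch of the statistics, not of the p-circuit: it assumes bipolar (+1/-1) node values, uncorrelated founders, and a simplified inheritance rule in which a child copies one of its two parents with probability 1/2. The function names are hypothetical.

```python
import random


def child(father, mother, rng):
    """Simplified inheritance: copy the father's or the mother's value with probability 1/2."""
    return father if rng.random() < 0.5 else mother


def sample_family(rng):
    """Draw one bipolar sample of part of the family tree of Fig. 5."""
    gf1, gm1, f1, m2 = (rng.choice((-1, 1)) for _ in range(4))   # uncorrelated founders
    m1 = child(gf1, gm1, rng)        # M1 and F2 are siblings
    f2 = child(gf1, gm1, rng)
    c1 = child(f1, m1, rng)          # C1 and C2 are siblings
    c2 = child(f1, m1, rng)
    c3 = child(f2, m2, rng)          # C3 is the nephew of M1
    return {"M1": m1, "C1": c1, "C2": c2, "C3": c3}


def correlation(a, b, n=50_000, seed=0):
    """Estimate <a b> by averaging the product over n independent samples."""
    rng = random.Random(seed)
    total = 0
    for _ in range(n):
        s = sample_family(rng)
        total += s[a] * s[b]
    return total / n
```

Calling `correlation("C1", "C2")` should give a value close to 0.5 and `correlation("M1", "C3")` a value close to 0.25, up to sampling noise of order 1/sqrt(n).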

The point is that we could construct a p-circuit with each of the nodes represented by a hardware p-bit interconnected to reflect the genetic influences. The correlation between two nodes, say C 1 and C 2 , is given by

$$\langle C_1 C_2 \rangle = \int_0^T \frac{dt}{T}\; C_1(t)\, C_2(t)$$

If C 1 (t) and C 2 (t) are binary variables with allowed values of 1 and 0, then they can be multiplied in hardware with an AND gate. If the allowed values are bipolar, -1 and +1, then the multiplication can be implemented with an XNOR gate. In either case the average over time can be performed with a long time constant RC circuit. A few typical results from SPICE simulations are shown in Fig. 5. The numerical results in Fig. 5 are in good agreement with Bayes' theorem even though the circuit operates asynchronously without any sequencers. This is interesting since software simulations of Eqs. 1 and 2 with directed weights usually require the nodes to be updated from parent to child. Whether this behavior generalizes to larger directed networks is left for future work.
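The gate-level multiplication and the time averaging described above can be sketched as follows. The RC circuit is modeled here as a discrete-time first-order low-pass filter, which is an idealization chosen for illustration. The function names and the step size are assumptions.

```python
def and_binary(a, b):
    """AND gate on binary values 0/1; equals the arithmetic product a * b."""
    return a & b


def xnor_bipolar(a, b):
    """XNOR gate on bipolar values: +1 when the inputs agree, -1 otherwise; equals a * b."""
    return 1 if a == b else -1


def rc_average(samples, dt, tau):
    """Idealized RC low-pass filter, y += (dt / tau) * (x - y), with tau >> dt."""
    y = 0.0
    for x in samples:
        y += (dt / tau) * (x - y)
    return y
```

Feeding `rc_average` with the stream of `xnor_bipolar(c1, c2)` outputs yields a running estimate of the correlation, weighted toward the most recent interval of length `tau`.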

We use this genetic circuit as a simple illustration of the concept of nodal correlations, which appear in many other contexts in everyday life. Medical diagnosis 60 , for example, involves symptoms such as a high temperature, which can have multiple origins or parents, and one can construct Bayesian networks to determine different causal relationships of interest.

Accelerating learning algorithms: Networks of p-bits could be useful in implementing inference networks, where the network weights are trained offline by a learning algorithm in software and the hardware is used to repeatedly perform inference tasks efficiently 61,62 .

Another common example where correlations play an important role is in the learning algorithms used to train modern neural networks like the restricted Boltzmann machine (Fig. 6) 63 having a visible layer and a hidden layer, with connecting weights W ij linking nodes of one layer to those in the other, but not within a layer. A widely used algorithm based on 'contrastive

FIG. 5. Genetic circuit: C1 and C2 are siblings with parents F1, M1, while C3 and C4 are siblings with parents F2, M2. Two of the parents M1 and F2 are siblings with parents GF1, GM1. Genetic correlations between different members can be evaluated from the correlations of the nodal voltages in a p-circuit. An XNOR gate finds their product while a long time constant RC circuit provides the time average.

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Circuit Diagram and Correlation Graph: Component Interactions and Temporal Correlations

### Overview

The image contains two primary components:

1. A **circuit diagram** on the left depicting a logic gate (XNOR) connected to multiple components labeled with identifiers (e.g., GF1, GM1, F1, M1, etc.).

2. A **correlation graph** on the right showing time-dependent correlation values for pairwise interactions between components (e.g., ⟨M1 × C2⟩, ⟨C1 × C2⟩).

---

### Components/Axes

#### Circuit Diagram

- **Labels**:

- Top layer: `GF1`, `GM1` (green boxes).

- Middle layer: `F1`, `M1`, `F2`, `M2` (blue boxes).

- Bottom layer: `C1`, `C2`, `C3`, `C4` (red boxes).

- **Connections**:

- `GF1` and `GM1` feed into `M1` and `M2`.

- `F1` and `F2` feed into `C1` and `C2`.

- `C1` and `C2` connect to an XNOR gate via resistors (`R`) and capacitors (`C`).

- **Equation**:

- `⟨C1 × C2⟩ = ∫₀ᵀ (dT/T)C1(t)C2(t)` (integral of normalized product of C1 and C2 over time).

#### Correlation Graph

- **Axes**:

- **X-axis**: Time (ns), ranging from 0 to 500 ns.

- **Y-axis**: Correlation (unitless), ranging from 0 to 0.5.

- **Legend**:

- **Colors and Labels**:

- Green: `⟨M1 × C2⟩`

- Purple: `⟨C1 × C2⟩`

- Orange: `⟨C3 × M1⟩`

- Red: `⟨C2 × F2⟩`

- Blue: `⟨F1 × M2⟩`

- **Dashed Reference Lines**:

- Horizontal lines at 0.5, 0.25, and 0.

---

### Detailed Analysis

#### Correlation Graph Trends

1. **Green Line (`⟨M1 × C2⟩`)**:

- Peaks at ~0.55 at ~150 ns, then oscillates around 0.5.

- Crosses the 0.5 threshold twice.

2. **Purple Line (`⟨C1 × C2⟩`)**:

- Starts at 0, rises steadily to ~0.45 by 500 ns.

- Smooth, monotonic increase.

3. **Orange Line (`⟨C3 × M1⟩`)**:

- Fluctuates between 0.2 and 0.35.

- No clear trend; irregular oscillations.

4. **Red Line (`⟨C2 × F2⟩`)**:

- Peaks at ~0.3 at ~200 ns, then declines to ~0.15 by 500 ns.

5. **Blue Line (`⟨F1 × M2⟩`)**:

- Remains below 0.25 throughout.

- Sharp dip to ~0.1 at ~300 ns.

#### Circuit Diagram Observations

- The XNOR gate integrates signals from `C1` and `C2`, with the equation suggesting a time-averaged product correlation.

- Components are hierarchically organized: `GF1`/`GM1` (top) → `F1`/`F2`/`M1`/`M2` (middle) → `C1`/`C2`/`C3`/`C4` (bottom).

---

### Key Observations

1. **High Correlation**:

- `⟨M1 × C2⟩` and `⟨C1 × C2⟩` show the strongest correlations, suggesting critical interactions between memory (`M1`) and capacitor (`C2`) components.

2. **Decaying Correlations**:

- `⟨C2 × F2⟩` and `⟨F1 × M2⟩` exhibit lower or decaying values, indicating weaker or transient relationships.

3. **Stability**:

- `⟨C3 × M1⟩` remains stable but low, possibly reflecting a secondary interaction.

---

### Interpretation

1. **System Behavior**:

- The correlation graph reveals that interactions between memory (`M1`, `M2`) and capacitor (`C1`, `C2`) components dominate the system’s behavior. The XNOR gate’s output (`⟨C1 × C2⟩`) correlates with the product of `C1` and `C2`, aligning with the integral equation.

2. **Temporal Dynamics**:

- Peaks in `⟨M1 × C2⟩` at 150 ns suggest a transient event (e.g., signal propagation delay or feedback loop).

- The steady rise in `⟨C1 × C2⟩` implies a cumulative effect of capacitor interactions over time.

3. **Anomalies**:

- The blue line (`⟨F1 × M2⟩`) remains consistently low, potentially indicating a design limitation or unoptimized coupling between `F1` and `M2`.

---

### Conclusion

The data demonstrates that the system’s performance is heavily influenced by the interplay between memory and capacitor components. The XNOR gate’s role in integrating `C1` and `C2` signals is critical, as evidenced by the strong correlation trends. Further optimization of `F1`/`M2` coupling could enhance overall system efficiency.

</details>

divergence' 64 adjusts each weight W ij according to

$$\Delta W_{ij} \propto \langle v_i h_j \rangle_{\mathrm{data}} - \langle v_i h_j \rangle_{\mathrm{model}}$$

which requires the repeated evaluation of the correlations 〈 v i h j 〉 . Computing such correlations exactly becomes intractable because their complexity grows exponentially with the number of neurons. Contrastive divergence is therefore usually truncated to a fixed number of steps (CD-n) to limit the number of repeated evaluations of these correlations. This process could be accelerated through an efficient physical representation of the neuron and the synapse 65,66 .
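A single-sample CD-1 update for a small RBM of bipolar units can be sketched as below. It assumes the p-bit rule m = sgn(rand(-1, 1) + tanh(I)) as the form of Eq. 1 and the standard contrastive-divergence update with a learning rate `eps`. The function names, the absence of bias terms and the use of one sample per correlation are simplifying assumptions, not the procedure of Refs. 64-66.

```python
import math
import random


def pbit(I, rng):
    """Assumed form of Eq. 1: m = sgn(rand(-1, 1) + tanh(I))."""
    return 1 if rng.uniform(-1.0, 1.0) + math.tanh(I) > 0 else -1


def sample_hidden(v, W, rng):
    """Sample every hidden unit given the visible layer (input I_j = sum_i W_ij v_i)."""
    return [pbit(sum(W[i][j] * v[i] for i in range(len(v))), rng)
            for j in range(len(W[0]))]


def sample_visible(h, W, rng):
    """Sample every visible unit given the hidden layer (input I_i = sum_j W_ij h_j)."""
    return [pbit(sum(W[i][j] * h[j] for j in range(len(h))), rng)
            for i in range(len(W))]


def cd1_update(W, v_data, eps, rng):
    """One CD-1 step, in place: W_ij += eps * (v_i h_j on data - v_i h_j after one step)."""
    h0 = sample_hidden(v_data, W, rng)     # positive phase
    v1 = sample_visible(h0, W, rng)        # one-step reconstruction
    h1 = sample_hidden(v1, W, rng)         # negative phase
    for i in range(len(W)):
        for j in range(len(W[0])):
            W[i][j] += eps * (v_data[i] * h0[j] - v1[i] * h1[j])
    return W
```

In a hardware setting, the three sampling calls are the part that a p-circuit would perform, while the weight arithmetic would remain in the synapse or in software.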

## B. Applications: Quantum inspired

The functionality of neural networks is determined by the weight matrix W ij which determines the connectivity among the neurons. They can be classified broadly by the relation between W ij and W ji . In traditional feedforward networks, information flow is directed with neuron 'i' influencing neuron 'j' through a non-zero weight W ij but with no feedback from neuron 'j' , such that W ji = 0. At the other end of the spectrum is a network with all connections being reciprocal, W ij = W ji . In between these two extremes is the class of networks for which the weights between two nodes are asymmetric, but non-zero.

The class of networks with symmetric connections is particularly interesting since they have a close parallel with classical statistical physics where the natural connections between interacting particles is symmetric and the equilibrium probabilities are given by the celebrated Boltzmann law expressing the probability of a particular configuration α in terms of an energy E α associated with

FIG. 6. Restricted Boltzmann Machine (RBM): RBMs are a special class of stochastic neural networks that restrict connections within a hidden and a visible layer. Standard learning algorithms require repeated evaluations of correlations of the form 〈 v i h j 〉 .

<details>

<summary>Image 6 Details</summary>

### Visual Description

## Diagram: Variable Interaction System

### Overview

The image depicts a two-layered system with bidirectional interactions between variables. The top layer contains variables labeled `V₁` to `Vₙ`, while the bottom layer contains variables labeled `h₁` to `hₘ`. Arrows connect specific variables in the top layer to variables in the bottom layer, indicating mutual influence.

### Components/Axes

- **Top Layer**:

- Labeled `V₁` (leftmost) to `Vₙ` (rightmost), with ellipses (`...`) indicating intermediate variables.

- Background color: Light blue.

- **Bottom Layer**:

- Labeled `h₁` (leftmost) to `hₘ` (rightmost), with ellipses (`...`) indicating intermediate variables.

- Background color: Light green.

- **Arrows**:

- Two double-headed arrows connect `V₁` and `Vₙ` to `h₁` and `hₘ`, respectively.

- Arrows are black, with no explicit labels or legends.

### Detailed Analysis

- **Labels**:

- Top layer variables: `V₁`, `V₂`, ..., `Vₙ` (exact count unspecified, represented by `n`).

- Bottom layer variables: `h₁`, `h₂`, ..., `hₘ` (exact count unspecified, represented by `m`).

- **Spatial Grounding**:

- Top layer is positioned above the bottom layer.

- Arrows originate from `V₁` and `Vₙ` (top layer) and point to `h₁` and `hₘ` (bottom layer), with bidirectional flow.

- **Color Consistency**:

- No legend present; colors are used solely for layer distinction (light blue for `V`, light green for `h`).

### Key Observations

1. The system emphasizes **bidirectional relationships** between variables in the top and bottom layers.

2. The use of `n` and `m` suggests scalability, with the number of variables in each layer being variable.

3. Arrows connect only the first and last variables in each layer (`V₁` ↔ `h₁`, `Vₙ` ↔ `hₘ`), implying a focus on boundary interactions.

### Interpretation

This diagram likely represents a **feedback or coupling mechanism** between two sets of variables. The bidirectional arrows suggest that variables in the top layer (`V`) influence the bottom layer (`h`), and vice versa. This could model:

- **Neural network architectures** (e.g., encoder-decoder with feedback).

- **Control systems** where outputs affect inputs.

- **Dynamic systems** with mutual dependencies (e.g., economic models, ecological interactions).

The absence of numerical values or explicit scales indicates this is a **conceptual diagram** rather than a data-driven chart. The focus is on structural relationships rather than quantitative analysis.

</details>

that configuration.

$$P_\alpha = \frac{1}{Z} \exp\left(-E_\alpha\right)$$

$$E_\alpha = -\frac{1}{2}\, m_\alpha^{T}\, W\, m_\alpha$$

where T denotes transpose and the constant Z is chosen to ensure that all the $P_\alpha$'s add up to one. This energy principle is only available for reciprocal networks 67 , and can be very useful in determining the appropriate weights W ij for a particular problem.
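The connection between the p-bit update rule and the Boltzmann law can be checked numerically on a small reciprocal network. The sketch below assumes the symmetric energy E = -(1/2) m^T W m with zero diagonal, the p-bit rule m = sgn(rand(-1, 1) + tanh(I)) as the form of Eq. 1, and sequential updates. The two-p-bit weight matrix and the function names are illustrative choices.

```python
import itertools
import math
import random


def energy(m, W):
    """E = -(1/2) m^T W m for a symmetric W with zero diagonal."""
    n = len(m)
    return -0.5 * sum(W[i][j] * m[i] * m[j] for i in range(n) for j in range(n))


def boltzmann(W):
    """Exact probabilities P = exp(-E) / Z over all 2^n bipolar configurations."""
    states = list(itertools.product((-1, 1), repeat=len(W)))
    weights = [math.exp(-energy(s, W)) for s in states]
    z = sum(weights)
    return {s: w / z for s, w in zip(states, weights)}


def sample_pbits(W, sweeps=20_000, seed=1):
    """Update the p-bits one after another and histogram the state after each sweep."""
    rng = random.Random(seed)
    n = len(W)
    m = [rng.choice((-1, 1)) for _ in range(n)]
    counts = {}
    for _ in range(sweeps):
        for i in range(n):
            I = sum(W[i][j] * m[j] for j in range(n))
            m[i] = 1 if rng.uniform(-1.0, 1.0) + math.tanh(I) > 0 else -1
        counts[tuple(m)] = counts.get(tuple(m), 0) + 1
    return {s: c / sweeps for s, c in counts.items()}


W = [[0.0, 1.0],
     [1.0, 0.0]]          # two p-bits with a reciprocal coupling of +1
exact = boltzmann(W)
empirical = sample_pbits(W)
```

For this matrix the two aligned configurations each carry a probability of about 0.44, and the sampled histogram should approach the exact values as the number of sweeps grows.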

This class of networks connects naturally to the world of quantum computing which is governed by Hermitian Hamiltonians, and is also the subject of the emerging field of Ising computing 10,16,68-72 .

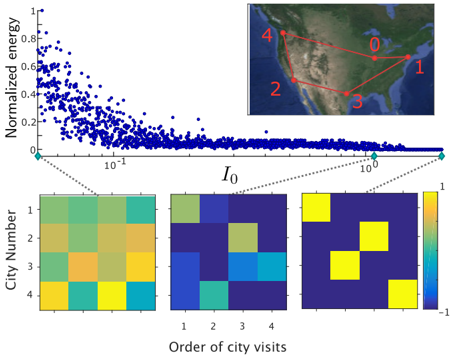

Invertible Boolean logic: Suppose, for example, we wish to design a Boolean gate which will provide three outputs reflecting the AND, OR and XNOR functions of the two inputs A and B. The truth table is shown in Fig. 7. Note that although we are using the binary notation 1 and 0, they actually stand for p-bit values of +1 and -1 respectively.

Since there are five p-bits, two representing the inputs and three representing the outputs, the system has $2^5 = 32$ possible states, which can be indexed by their corresponding decimal values. Each of these configurations has an associated energy, $E_n$, $n = 0, 1, \ldots, 31$. What we need is a weight matrix W ij such that the desired configurations 4, 9, 17 and 31 (in decimal notation) specified by the truth table have a low energy E α (Eq. (8)) compared to the rest, so that they are occupied with higher probability. This can be done either by using the principles of linear algebra 12 or by using machine learning algorithms 73 to obtain the weight matrix shown in Fig. 7. Note that an additional p-bit labeled 'h' has been introduced which is clamped to a value of +1 by applying a large bias.
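The decimal labels 4, 9, 17 and 31 can be reproduced by reading the five p-bits as a binary number. The bit order [A, B, XNOR, AND, OR], most significant bit first, is an assumption made here because it is the ordering that yields these four labels. The function names are hypothetical.

```python
def gate_state(a, b):
    """Bits [A, B, XNOR, AND, OR] for binary inputs a and b."""
    return [a, b, 1 - (a ^ b), a & b, a | b]


def to_decimal(bits):
    """Read a list of bits as a binary number, most significant bit first."""
    value = 0
    for bit in bits:
        value = 2 * value + bit
    return value


truth_table_states = sorted(
    to_decimal(gate_state(a, b)) for a in (0, 1) for b in (0, 1)
)
```

With this ordering, `truth_table_states` evaluates to `[4, 9, 17, 31]`, and the input pair A=1, B=0 maps to state 17.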

On the right of Fig. 7, a histogram shows the frequency of all 32 possible configurations obtained from a simulation of Eq. (1) and Eq. (2) using this weight matrix. Similar results are obtained from a SPICE simulation of a p-circuit of weighted p-bits. Note the peaks at the desired truth table values, with smaller peaks at some of the undesired values. The peaks closely follow

FIG. 7. Invertible Boolean logic: A multi-function Boolean gate with 6 p-bits is shown. Inputs A and B produce the output for a 2-input XNOR, AND and OR gate, respectively. The handle bit, 'h' is used to remove the complementary low-energy states that do not belong to the truth table shown. In the unclamped mode, the system shows the states corresponding to the lines of the truth table with high probability. A and B can be clamped to produce the correct output for the XNOR, AND and OR in the direct mode. In the inverse mode, any one of the outputs (XNOR is shown as an example) can be clamped to a given value, and the inputs fluctuate among possible input combinations corresponding to this output.

<details>

<summary>Image 7 Details</summary>

### Visual Description

## Truth Table and Weight Matrix with Probability Distributions

### Overview

The image contains four components:

1. A **truth table** for logical operations (XNOR, AND, OR) with decimal equivalents

2. A **weight matrix** showing numerical relationships between variables

3. Two **probability bar charts** visualizing state distributions

4. A **legend** explaining color mappings for the charts

---

### Components/Axes

#### Truth Table

- **Columns**: A, B, XNOR, AND, OR, Decimal

- **Rows**: All combinations of A (0/1) and B (0/1)

- **Decimal Column**: Binary-to-decimal conversion (e.g., 00 = 0, 01 = 1, 10 = 2, 11 = 3)

#### Weight Matrix

- **Rows**: h, A, XNOR, AND, OR

- **Columns**: h, A, B, XNOR, AND, OR

- **Values**: Numerical weights with signs (e.g., +1, -1, +0.5)

#### Probability Charts

- **X-Axis**: "states" (labeled 4, 9, 17, 31 for the first chart; 9, 17 for the second)

- **Y-Axis**: "Probability" (0.0 to 0.12 scale)

- **Legends**:

- First chart: "XNOR → 0" (blue bars)

- Second chart: "A → 1" and "B → 0" (blue bars)

---

### Detailed Analysis

#### Truth Table

| A | B | XNOR | AND | OR | Decimal |

|---|---|------|-----|----|---------|

| 0 | 0 | 1 | 0 | 0 | 0 |

| 0 | 1 | 0 | 0 | 1 | 1 |

| 1 | 0 | 0 | 0 | 1 | 2 |

| 1 | 1 | 1 | 1 | 1 | 3 |

#### Weight Matrix

| | h | A | B | XNOR | AND | OR |

|-------|---|----|----|------|-----|-----|

| **h** | 0 | 0 | 0 | +1 | -1 | +1 |

| **A** | 0 | 0 | -1 | +1 | +1 | +1 |

| **XNOR** | 0 | -1 | 0 | 0 | +1 | +1 |

| **AND** | 0 | +1 | +1 | +1 | 0 | 0 |

| **OR** | 0 | +1 | +1 | +1 | +1 | 0 |

#### Probability Charts

1. **First Chart (XNOR → 0)**:

- States: 4 (highest bar), 9, 17, 31

- Probabilities: ~0.12 (state 4), ~0.08 (state 9), ~0.06 (state 17), ~0.04 (state 31)

- Bars: Blue, aligned with legend "XNOR → 0"

2. **Second Chart (A → 1, B → 0)**:

- States: 9 (highest bar), 17

- Probabilities: ~0.15 (state 9), ~0.10 (state 17)

- Bars: Blue, aligned with legend "A → 1" and "B → 0"

---

### Key Observations

1. **Truth Table**:

- XNOR outputs 1 only when A and B match (rows 0 and 3).

- Decimal values confirm binary-to-decimal mapping (e.g., 11 = 3).

2. **Weight Matrix**:

- Negative weights for XNOR and AND in row "h" suggest inhibitory relationships.

- Positive weights for OR and A/B in row "h" indicate excitatory relationships.

3. **Probability Charts**:

- State 9 and 17 dominate both charts, suggesting these states are most probable.

- First chart shows a decay in probability from state 4 to 31.

- Second chart emphasizes state 9 (A=1, B=0) as the most likely outcome.

---

### Interpretation

- **Logical Relationships**: The truth table defines deterministic outputs for XNOR, AND, and OR gates.

- **Weight Matrix Dynamics**:

- Negative weights for XNOR and AND in row "h" may suppress their influence on the output.

- Positive weights for OR and A/B suggest these variables directly contribute to the output.

- **Probability Trends**:

- State 9 (A=1, B=0) has the highest probability in both charts, aligning with the weight matrix's emphasis on A and B.

- State 17 (A=1, B=1) appears in both charts but with lower probability, possibly due to inhibitory weights for AND/XNOR.

- **Anomalies**:

- State 4 (A=0, B=0) has the highest probability in the first chart despite A and B being 0, which conflicts with the weight matrix's positive weights for A/B. This may indicate a non-linear interaction or external factors not captured in the matrix.

The data suggests a system where logical operations (XNOR, AND, OR) are weighted to prioritize certain states (e.g., A=1, B=0), with inhibitory/excitatory relationships shaping the probability distribution. The discrepancy in state 4's high probability warrants further investigation into the model's assumptions or data quality.

</details>

the Boltzmann law, such that

$$\frac{P_\alpha}{P_\beta} = \exp\left[-\left(E_\alpha - E_\beta\right)\right]$$

Undesired peaks can be suppressed if we make the W-matrix larger, say by an overall multiplicative factor of 2. If all energies are increased by a factor of 2, the ratio of probabilities would be squared: a ratio of 10 would become a ratio of 100.
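The squaring of the probability ratio follows directly from the exponential form of the Boltzmann law, P proportional to exp(-E). A short numerical check, with a hypothetical helper name and an energy gap chosen to give a ratio of 10:

```python
import math


def probability_ratio(e_alpha, e_beta, scale=1.0):
    """P_alpha / P_beta = exp(-scale * (E_alpha - E_beta)) for Boltzmann statistics."""
    return math.exp(-scale * (e_alpha - e_beta))


gap = -math.log(10.0)                              # E_alpha - E_beta giving a ratio of 10
ratio = probability_ratio(gap, 0.0)                # original weights
ratio_scaled = probability_ratio(gap, 0.0, 2.0)    # all weights, hence energies, doubled
```

Here `ratio` is 10 and `ratio_scaled` is 100, up to floating-point rounding.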

It is also possible to operate the gate in a traditional feed-forward manner where inputs are specified and an output is obtained. This mode is shown in the middle panel on the right where the inputs A and B are clamped to 1 and 0 respectively. Only one of the four truth table peaks can be seen, namely the line corresponding to A=1, B=0, which is labeled 17.

What is more interesting is that the gates can be run in inverse mode as shown in the lower right panel. The XNOR output is clamped to 0, which corresponds to the truth table lines labeled 9 and 17. The inputs now fluctuate between the two possibilities, indicating that we can use these gates to provide us with all possible inputs consistent with a specified output, a mode of operation not possible with standard Boolean gates.
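Direct and inverse operation can be illustrated on a smaller gate by exact enumeration of the Boltzmann probabilities. The sketch below uses a three-p-bit AND gate (C = A AND B) with an illustrative set of couplings `J` and biases `H`. These values are an assumption chosen so that the four truth table lines share the lowest energy; they are not the weight matrix of Fig. 7, and the biases stand in for the clamped handle bit.

```python
import itertools
import math

# Illustrative parameters for p-bits (A, B, C) with C = A AND B; bipolar -1 means logic 0.
J = [[0, -1, 2],
     [-1, 0, 2],
     [2, 2, 0]]
H = [1, 1, -2]


def energy(m):
    """E = -(sum over pairs of J_ij m_i m_j + sum_i H_i m_i)."""
    pair = sum(J[i][j] * m[i] * m[j] for i in range(3) for j in range(i + 1, 3))
    bias = sum(H[i] * m[i] for i in range(3))
    return -(pair + bias)


def conditional(clamped, i0=1.0):
    """Boltzmann probabilities of the states consistent with the clamped p-bits."""
    states = [s for s in itertools.product((-1, 1), repeat=3)
              if all(s[k] == v for k, v in clamped.items())]
    weights = [math.exp(-i0 * energy(s)) for s in states]
    z = sum(weights)
    return {s: w / z for s, w in zip(states, weights)}


direct = conditional({0: 1, 1: -1})    # clamp A = 1, B = 0 and read the output C
inverse = conditional({2: -1})         # clamp C = 0 and read the inputs A, B
```

In direct mode the output settles at C = 0 with a probability of about 0.98. In inverse mode the three input pairs consistent with C = 0 are each visited with a probability close to 1/3, while the inconsistent pair A = B = 1 is strongly suppressed. Increasing `i0` sharpens both distributions.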

FIG. 8. Combinatorial Optimization: A 5-city Traveling Salesman Problem (TSP) implemented using a network of 16 p-bits (fixing city 0), each having two indices, the first denoting the order in which a city is visited and the second denoting the city. The interaction parameter $I_0$ scales all weights and acts as an inverse temperature and is slowly increased via a simple annealing schedule $I_0(t + t_{eq}) = (1/0.99)\, I_0(t)$ to guide the system into the lowest energy state, providing the shortest traveling distance (Map imagery data: Google, TerraMetrics).

<details>

<summary>Image 8 Details</summary>

### Visual Description

## Scatter Plot with Heatmaps and Network Diagram: Normalized Energy vs. I₀ and City Visit Orders

### Overview

The image combines three primary components:

1. A **scatter plot** showing normalized energy vs. I₀ (logarithmic scale).

2. Three **heatmaps** representing city visit order correlations.

3. A **network diagram** of U.S. cities labeled 0–4.

### Components/Axes

#### Scatter Plot

- **X-axis**: I₀ (logarithmic scale, 10⁻¹ to 10¹).

- **Y-axis**: Normalized energy (0 to 1).

- **Data points**: Blue dots with a power-law decay trend.

- **Inset**: Zoomed-in region highlighting I₀ ≈ 10⁰.

#### Heatmaps

- **X-axis**: Order of city visits (1–4).

- **Y-axis**: City Number (1–4).

- **Color scale**: Green (low) to yellow (high), with a legend ranging from -1 to 1.

- **Heatmaps**:

- **I₀ = 10⁻¹**: Mixed green/yellow (moderate values).

- **I₀ = 10⁰**: Blue/green dominance (lower values).

- **I₀ = 10¹**: Blue/yellow squares (localized high values).

#### Network Diagram

- **Nodes**: Labeled 0–4, connected by red lines.

- **Geographic context**: Map of the U.S. with cities positioned as nodes.

### Detailed Analysis

#### Scatter Plot

- **Trend**: Normalized energy decreases sharply for I₀ < 10⁰, then plateaus.

- **Key data points**:

- At I₀ = 10⁻¹: Energy ≈ 0.8–0.9.

- At I₀ = 10⁰: Energy ≈ 0.2–0.4.

- At I₀ = 10¹: Energy ≈ 0.0–0.1.

#### Heatmaps

- **I₀ = 10⁻¹**:

- City 1: High values (yellow) for visits 1 and 4.

- City 4: High values (yellow) for visits 1 and 3.

- **I₀ = 10⁰**:

- City 2: Moderate values (green) for visits 2 and 3.

- City 3: Low values (blue) for visits 1 and 4.

- **I₀ = 10¹**:

- City 1: High values (yellow) for visits 1 and 4.

- City 4: High values (yellow) for visits 1 and 3.

#### Network Diagram

- **Connections**:

- 0 ↔ 1 ↔ 2 ↔ 3 ↔ 4 (linear chain).

- Additional diagonal links: 0 ↔ 3 and 1 ↔ 4.

### Key Observations

1. **Power-law decay**: Normalized energy drops rapidly for I₀ < 10⁰, then stabilizes.

2. **Heatmap anomalies**:

- At I₀ = 10¹, yellow squares (high values) appear in specific city-visit pairs (e.g., City 1, Visit 1).

- These may indicate localized interactions or feedback loops.

3. **Network structure**: The U.S. city network forms a connected graph with redundant paths (e.g., 0–3 and 1–4 links).

### Interpretation

- **Energy-I₀ relationship**: The power-law decay suggests that energy dissipation is highly sensitive to I₀ at low values but becomes less dependent at higher I₀.

- **Heatmap correlations**: High-energy values (yellow) at I₀ = 10¹ imply that certain city visit orders (e.g., 1→4) amplify energy contributions, possibly due to network topology.

- **Network implications**: The redundant connections (e.g., 0–3 and 1–4) may facilitate energy redistribution, explaining the plateau in the scatter plot.

- **Anomalies**: The localized yellow squares in the I₀ = 10¹ heatmap suggest specific city pairs (e.g., City 1 and 4) have disproportionate influence, warranting further investigation into their physical or systemic properties.

### Spatial Grounding

- **Legend**: Right-aligned for heatmaps, matching color gradients to values.

- **Inset**: Positioned near the lower-left of the scatter plot, focusing on I₀ ≈ 10⁰.

- **Network diagram**: Top-right corner, spatially isolated from heatmaps but thematically linked via city labels.

### Conclusion

The data demonstrates a strong interplay between I₀, city visit order, and network structure in determining normalized energy. The anomalies in the heatmaps highlight the need to explore localized interactions within the network, potentially revealing hidden dependencies or feedback mechanisms.

</details>

This invertible mode is particularly interesting because there are many cases where the direct problem is relatively easy compared to the inverse problem. For example, we can find a suitable weight matrix to implement an adder that provides the sum S of numbers A, B and C. But the same network also solves the inverse problem where a sum S is provided and it finds combinations of k numbers that add up to S 32,52 . This inverse k-sum or subset sum problem is known to be NP-complete 74 and is clearly much more difficult than direct addition. Similarly we can design a weight matrix such that the network multiplies any two numbers. In inverse mode the same network can factorize a given number, which is a hard problem 75 . This ability to factorize has been shown with relatively small numbers 12,32 . How well p-circuits will scale to larger factorization problems remains to be explored.

It is worth mentioning that this method of solving integer factorization and the subset sum problem is similar to the deterministic 'memcomputing' framework where a 'self-organizing logic circuit' is set up to solve the direct problem and operated in reverse to solve the inverse problem (see, for example, Refs. 76,77 ).

Optimization by classical annealing: It has been shown that many optimization problems can be mapped onto a network of classical spins with an appropriate weight matrix, such that the optimal solution corresponds to the configuration with the lowest energy 78 . Indeed, even the problem of integer factorization discussed above in terms of inverse multiplication can alternatively be addressed in this framework by casting it as an optimization problem 79-81 .

A well-known example of an optimization problem is the classic N-city traveling salesman problem (TSP). It involves finding the shortest route by which a salesman can visit all cities once starting from a particular one. This problem has been mapped to a network of $(N-1)^2$ spins where each spin has two indices, the first denoting the order in which a city is visited and the second denoting the city.
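The two-index encoding can be made concrete with a small cost function. The sketch below uses binary variables x[k][c] in {0, 1} (related to bipolar p-bit values by m = 2x - 1), a closed tour that returns to city 0, and a quadratic penalty for violated constraints. The penalty form, the return leg, the four-city example and the function name are assumptions for illustration, not the exact mapping of Ref. 78.

```python
import math


def tsp_energy(x, dist, penalty):
    """Cost of a configuration x, where x[k][c] = 1 means city c+1 is visited at step k+1.

    City 0 is the fixed start and end, so x is an (N-1) by (N-1) array.
    """
    n = len(x)
    cost = 0.0
    for k in range(n):                       # each step must visit exactly one city
        cost += penalty * (sum(x[k]) - 1) ** 2
    for c in range(n):                       # each city must be visited exactly once
        cost += penalty * (sum(x[k][c] for k in range(n)) - 1) ** 2
    for c in range(n):                       # legs leaving from and returning to city 0
        cost += dist[0][c + 1] * (x[0][c] + x[n - 1][c])
    for k in range(n - 1):                   # legs between consecutive steps
        for a in range(n):
            for b in range(n):
                cost += dist[a + 1][b + 1] * x[k][a] * x[k + 1][b]
    return cost


# Hypothetical example: four cities on the corners of a unit square, so 3 x 3 = 9 variables.
points = [(0, 0), (1, 0), (1, 1), (0, 1)]
dist = [[math.dist(p, q) for q in points] for p in points]
around = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]      # tour 0-1-2-3-0 along the edges
crossing = [[0, 1, 0], [1, 0, 0], [0, 0, 1]]    # tour 0-2-1-3-0 using both diagonals
```

For valid tours the penalty terms vanish and the energy equals the tour length, so the lowest energy configuration encodes the shortest tour provided the penalty is large compared with the distances.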

Fig. 8 shows a 5-city TSP mapped to a 16 p-bit network and translated into a p-circuit that is simulated using SPICE. The overall W-matrix is slowly increased and with increasing interaction the network gradually settles from a random state into a low energy state. This process is often called simulated annealing 82 based on the similarity with the freezing of a liquid into a solid with a lowering of temperature in the physical world, which reduces the random thermal energy relative to a fixed interaction.
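The annealing schedule can be mimicked in software on a toy problem. The sketch below is not the SPICE simulation of Fig. 8: it assumes a ferromagnetic ring of 8 p-bits, a linear ramp of the interaction scale `i0`, and the update rule of note 20. All names and parameter values are hypothetical.

```python
import math
import random

def ring_couplings(n):
    """Ferromagnetic ring: J_ij = 1 between nearest neighbours, 0 otherwise."""
    J = [[0] * n for _ in range(n)]
    for i in range(n):
        J[i][(i + 1) % n] = J[(i + 1) % n][i] = 1
    return J

def energy(J, m):
    """E = -sum_{i<j} J_ij m_i m_j."""
    n = len(m)
    return -sum(J[i][j] * m[i] * m[j] for i in range(n) for j in range(i + 1, n))

def anneal(J, i0_max=3.0, sweeps=400, seed=1):
    """Ramp the overall interaction strength from near 0 up to i0_max.

    Returns the final state, its energy, and the lowest energy seen on the way.
    """
    rng = random.Random(seed)
    n = len(J)
    m = [rng.choice((-1, 1)) for _ in range(n)]
    best = energy(J, m)
    for s in range(sweeps):
        i0 = i0_max * (s + 1) / sweeps  # linear ramp, an assumed schedule
        for i in range(n):
            inp = i0 * sum(J[i][j] * m[j] for j in range(n))
            m[i] = 1 if math.tanh(inp) + rng.uniform(-1.0, 1.0) > 0 else -1
        best = min(best, energy(J, m))
    return m, energy(J, m), best
```

At small `i0` the p-bits are essentially random; as `i0` grows the network spends more and more time in low-energy states. Scaling `i0` up at fixed couplings plays the same role as lowering the temperature at fixed interaction.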

Note that at high values of interaction the p-bits settle to the correct solution, with the four highlighted p-bits corresponding to (1,1), (2,3), (3,2) and (4,4), showing that the cities should be visited in the order 1-3-2-4. Unfortunately, things may not work quite so smoothly as we scale up to problems with larger numbers of p-bits. The system tends to get stuck in metastable states, just as solids in the physical world develop defects that keep them from reaching the lowest energy state.

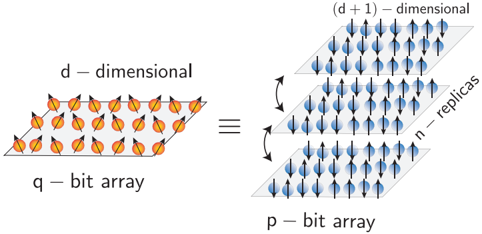

Optimization by quantum annealing: An approach that has been explored is the process of quantum annealing using a network of quantum spins implemented with superconducting q-bits 83,84 . However, it is known that for certain classes of quantum problems classified by 'stoquastic' Hamiltonians 85 , a network of q-bits can be approximated with a larger network of p-bits operating in hardware (Fig. 9) 86 . We have made use of this equivalence to design p-circuits whose SPICE simulations show correlations and averages comparable to those obtained with quantum annealers 86 .
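One common way to construct such a replicated network is the Suzuki-Trotter mapping for the transverse-field Ising model, which we assume here purely for illustration; the construction used in Ref. 86 may differ in detail. In this mapping each of the n replicas carries the original couplings scaled by β/n, and each spin is tied to its copies in the two neighbouring replicas with strength -(1/2) ln tanh(βΓ/n), where Γ is the transverse field and β the inverse temperature. The function name and argument names below are hypothetical.

```python
import math

def replica_weights(J, gamma, beta, n_replicas):
    """Build dimensionless weights for n_replicas coupled copies of an N-spin problem.

    J          : N x N symmetric couplings of the original problem (zero diagonal)
    gamma      : transverse field strength (> 0)
    beta       : inverse temperature
    n_replicas : number of replicas, at least 2

    The p-bit with index k * N + i is spin i in replica k. Replicas are
    connected periodically, so the last replica couples back to the first.
    """
    if n_replicas < 2:
        raise ValueError("need at least two replicas")
    n = len(J)
    w_perp = -0.5 * math.log(math.tanh(beta * gamma / n_replicas))
    size = n * n_replicas
    W = [[0.0] * size for _ in range(size)]
    for k in range(n_replicas):
        for i in range(n):
            a = k * n + i
            # couplings inside replica k, scaled by beta / n_replicas
            for j in range(n):
                if i != j:
                    W[a][k * n + j] = beta * J[i][j] / n_replicas
            # 'vertical' coupling of spin i to its copy in the next replica
            b = ((k + 1) % n_replicas) * n + i
            W[a][b] += w_perp
            W[b][a] += w_perp
    return W, w_perp
```

For a 3-spin problem with 4 replicas this yields a 12 x 12 weight matrix. The vertical coupling is positive and grows as Γ is reduced, which locks the replicas together toward the end of an anneal.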

FIG. 9. Mapping a q-bit network into a p-bit network: A special class of quantum many body Hamiltonians that are 'stoquastic' can be solved by mapping them to a classical network of p-bits consisting of a finite number of replicas of the original system that interact in the 'vertical' direction. This approach implemented in software is also known as the Path Integral Monte Carlo method. A hardware implementation would constitute a p-computer that is capable of performing quantum annealing 86 .

## IV. CONCLUSIONS

In summary, we have introduced the concept of a probabilistic or p-bit, intermediate between the standard bits of digital electronics and the emerging q-bits of quantum computing. Low barrier magnets or LBM's provide a natural physical representation for p-bits and can be built either from perpendicular magnets (PMA) designed to be close to the in-plane transition or from circular in-plane magnets (IMA). Magnetic tunnel junctions (MTJ) built using LBM's as free layers can be combined with standard NMOS transistors to provide three-terminal building blocks for large scale probabilistic circuits that can be designed to perform useful functions. Interestingly, this three-terminal unit looks just like the 1T/MTJ device used in embedded MRAM technology, with only one difference: the use of an LBM for the MTJ free layer. We hope that this concept will help open up new application spaces for this emerging technology. However, a p-bit need not involve an MTJ; any fluctuating resistor could be combined with a transistor to implement it. It may be interesting to look for resistors that can fluctuate faster based on entities like natural and synthetic antiferromagnets 87,88 , for example.

The p-bit also provides a conceptual bridge between two active but disjoint fields of research, namely stochastic machine learning and quantum computing. This viewpoint suggests two broad classes of applications for p-bit networks. First, there are the applications that are based on the similarity of a p-bit to the binary stochastic neuron (BSN), a well-known concept in machine learning. Three-terminal p-bits could provide an efficient hardware accelerator for the BSN. Second, there are the applications that are based on the p-bit being like a poor man's q-bit. We are encouraged by the initial demonstrations based on full SPICE simulations that several optimization problems including quantum annealing are amenable to p-bit implementations which can be scaled up at room temperature using existing technology.

## ACKNOWLEDGMENTS

S.D. is grateful to Dr. Behtash Behin-Aein for many stimulating discussions leading up to Ref. 16.

## V. REFERENCES

- 1 E. Chen, D. Apalkov, Z. Diao, A. Driskill-Smith, D. Druist, D. Lottis, V. Nikitin, X. Tang, S. Watts, S. Wang, S. Wolf, A. W. Ghosh, J. Lu, S. J. Poon, M. Stan, W. Butler, S. Gupta, C. K. A. Mewes, T. Mewes, and P. Visscher, 'Advances and Future Prospects of Spin-Transfer Torque Random Access Memory,' IEEE Transactions on Magnetics 46 , 1873-1878 (2010).

- 2 L. Lopez-Diaz, L. Torres, and E. Moro, 'Transition from ferromagnetism to superparamagnetism on the nanosecond time scale,' Physical Review B 65 , 224406 (2002).

- 3 N. Locatelli, A. Mizrahi, A. Accioly, R. Matsumoto, A. Fukushima, H. Kubota, S. Yuasa, V. Cros, L. G. Pereira, D. Querlioz, et al. , 'Noise-enhanced synchronization of stochastic magnetic oscillators,' Physical Review Applied 2 , 034009 (2014).

- 4 B. Parks, M. Bapna, J. Igbokwe, H. Almasi, W. Wang, and S. A. Majetich, 'Superparamagnetic perpendicular magnetic tunnel junctions for true random number generators,' AIP Advances 8 , 055903 (2018), https://doi.org/10.1063/1.5006422.

- 5 D. Vodenicarevic, N. Locatelli, A. Mizrahi, J. Friedman, A. Vincent, M. Romera, A. Fukushima, K. Yakushiji, H. Kubota, S. Yuasa, S. Tiwari, J. Grollier, and D. Querlioz, 'Low-Energy Truly Random Number Generation with Superparamagnetic Tunnel Junctions for Unconventional Computing,' Physical Review Applied 8 , 054045 (2017).

- 6 D. Vodenicarevic, N. Locatelli, A. Mizrahi, T. Hirtzlin, J. S. Friedman, J. Grollier, and D. Querlioz, 'Circuit-Level Evaluation of the Generation of Truly Random Bits with Superparamagnetic Tunnel Junctions,' in 2018 IEEE International Symposium on Circuits and Systems (ISCAS) (2018) pp. 1-4.

- 7 P. Debashis, R. Faria, K. Y. Camsari, and Z. Chen, 'Designing stochastic nanomagnets for probabilistic spin logic,' IEEE Magnetics Letters (2018).

- 8 R. P. Cowburn, D. K. Koltsov, A. O. Adeyeye, M. E. Welland, and D. M. Tricker, 'Single-domain circular nanomagnets,' Physical Review Letters 83 , 1042 (1999).

- 9 P. Debashis, R. Faria, K. Y. Camsari, J. Appenzeller, S. Datta, and Z. Chen, 'Experimental demonstration of nanomagnet networks as hardware for Ising computing,' in 2016 IEEE International Electron Devices Meeting (IEDM) (2016) pp. 34.3.1-34.3.4.

- 10 B. Sutton, K. Y. Camsari, B. Behin-Aein, and S. Datta, 'Intrinsic optimization using stochastic nanomagnets,' Scientific Reports 7 , 44370 (2017).

- 11 R. Faria, K. Y. Camsari, and S. Datta, 'Low-barrier nanomagnets as p-bits for spin logic,' IEEE Magnetics Letters 8 , 1-5 (2017).

- 12 K. Y. Camsari, R. Faria, B. M. Sutton, and S. Datta, 'Stochastic p-Bits for Invertible Logic,' Physical Review X 7 , 031014 (2017).

- 13 K. Y. Camsari, S. Salahuddin, and S. Datta, 'Implementing p-bits With Embedded MTJ,' IEEE Electron Device Letters 38 , 1767-1770 (2017).

- 14 A. Mizrahi, T. Hirtzlin, A. Fukushima, H. Kubota, S. Yuasa, J. Grollier, and D. Querlioz, 'Neural-like computing with populations of superparamagnetic basis functions,' Nature communications 9 , 1533 (2018).

- 15 M. Bapna and S. A. Majetich, 'Current control of time-averaged magnetization in superparamagnetic tunnel junctions,' Applied Physics Letters 111 , 243107 (2017).

- 16 B. Behin-Aein, V. Diep, and S. Datta, 'A building block for hardware belief networks,' Scientific Reports 6 , 29893 (2016).

- 17 R. P. Feynman, 'Simulating physics with computers,' International Journal of Theoretical Physics 21 , 467-488 (1982).

- 18 D. H. Ackley, G. E. Hinton, and T. J. Sejnowski, 'A Learning Algorithm for Boltzmann Machines,' Cognitive Science 9 , 147-169 (1985).

- 19 R. M. Neal, 'Connectionist learning of belief networks,' Artificial intelligence 56 , 71-113 (1992).

- 20 Eq. 1 can be equivalently written as $m_i = \mathrm{sgn}[\tanh I_i + r]$.

- 21 The signum function (sgn) would be replaced by the step function ($\Theta$) and the tanh function would be replaced by the sigmoid function ($\sigma$) such that $m_i = \Theta[\sigma(2 I_i) - r_0]$, where the random number $r_0$ is uniformly distributed between 0 and 1.

- 22 M. Hu, J. P. Strachan, Z. Li, E. M. Grafals, N. Davila, C. Graves, S. Lam, N. Ge, J. J. Yang, and R. S. Williams, 'Dot-product engine for neuromorphic computing: programming 1t1m crossbar to accelerate matrix-vector multiplication,' in Proceedings of the 53rd annual design automation conference (ACM, 2016) p. 19.

- 23 S. Bhatti, R. Sbiaa, A. Hirohata, H. Ohno, S. Fukami, and S. Piramanayagam, 'Spintronics based random access memory: A review,' Materials Today (2017).

- 24 B. Behin-Aein, A. Sarkar, and S. Datta, 'Modeling circuits with spins and magnets for all-spin logic,' in Solid-State Device Research Conference (ESSDERC), 2012 Proceedings of the European (IEEE, 2012) pp. 36-40.

- 25 B. Behin-Aein, 'Computing multi-magnet based devices and methods for solution of optimization problems,' (2014), US Patent 8,698,517.

- 26 W. H. Choi, Y. Lv, J. Kim, A. Deshpande, G. Kang, J.-P. Wang, and C. H. Kim, 'A magnetic tunnel junction based true random number generator with conditional perturb and real-time output probability tracking,' in Electron Devices Meeting (IEDM), 2014 IEEE International (IEEE, 2014) pp. 12-5.