## Gradual Type Theory(Extended Version)

MAX S. NEW, Northeastern University

DANIEL R. LICATA, Wesleyan University

AMAL AHMED, Northeastern University and Inria Paris

Gradually typed languages are designed to support both dynamically typed and statically typed programming styles while preserving the benefits of each. While existing gradual type soundness theorems for these languages aim to show that type-based reasoning is preserved when moving from the fully static setting to a gradual one, these theorems do not imply that correctness of type-based refactorings and optimizations is preserved. Establishing correctness of program transformations is technically difficult, because it requires reasoning about program equivalence, and is often neglected in the metatheory of gradual languages.

In this paper, we propose an axiomatic account of program equivalence in a gradual cast calculus, which we formalize in a logic we call gradual type theory (GTT). Based on Levy's call-by-push-value, GTT gives an axiomatic account of both call-by-value and call-by-name gradual languages. Based on our axiomatic account we prove many theorems that justify optimizations and refactorings in gradually typed languages. For example, uniqueness principles for gradual type connectives show that if the βη laws hold for a connective, then casts between that connective must be equivalent to the so-called 'lazy' cast semantics. Contrapositively, this shows that 'eager' cast semantics violates the extensionality of function types. As another example, we show that gradual upcasts are pure functions and, dually, gradual downcasts are strict functions. We show the consistency and applicability of our axiomatic theory by proving that a contract-based implementation using the lazy cast semantics gives a logical relations model of our type theory, where equivalence in GTT implies contextual equivalence of the programs. Since GTT also axiomatizes the dynamic gradual guarantee, our model also establishes this central theorem of gradual typing. The model is parametrized by the implementation of the dynamic types, and so gives a family of implementations that validate type-based optimization and the gradual guarantee.

CCS Concepts: · Theory of computation → Axiomatic semantics ; · Software and its engineering → Functional languages ;

Additional Key Words and Phrases: gradual typing, graduality, call-by-push-value

## 1 INTRODUCTION

Gradually typed languages are designed to support a mix of dynamically typed and statically typed programmingstyles and preserve the benefits of each. Dynamically typed code can be written without conforming to a syntactic type discipline, so the programmer can always run their program interactively with minimal work. On the other hand, statically typed code provides mathematically sound reasoning principles that justify type-based refactorings, enable compiler optimizations, and underlie formal software verification. The difficulty is accommodating both of these styles and their benefits simultaneously: allowing the dynamic and static code to interact without forcing the dynamic code to be statically checked or violating the correctness of type-based reasoning.

The linchpin to the design of a gradually typed language is the semantics of runtime type casts . These are runtime checks that ensure that typed reasoning principles are valid by checking types of dynamically typed code at the boundary between static and dynamic typing. For instance, when a statically typed function f : Num → Num is applied to a dynamically typed argument x : ?, the language runtime must check if x is a number, and otherwise raise a dynamic type error. A programmer familiar with dynamically typed programming might object that this is overly strong: for

Authors' addresses: Max S. New, Northeastern University, maxnew@ccs.neu.edu; Daniel R. Licata, Wesleyan University, dlicata@wesleyan.edu; Amal Ahmed, Northeastern University and Inria Paris, amal@ccs.neu.edu.

instance if f is just a constant function f = λx : Num . 0 then why bother checking if x is a number since the body of the program does not seem to depend on it? The reason the value is rejected is because the annotation x : Num should introduce an assumption that that the programmer, compiler and automated tools can rely on for behavioral reasoning in the body of the function. For instance, if the variable x is guaranteed to only be instantiated with numbers, then the programmer is free to replace 0 with x -x or vice-versa. However, if x can be instantiated with a closure, then x -x will raise a runtime type error while 0 will succeed, violating the programmers intuition about the correctness of refactorings. We can formalize such relationships by observational equivalence of programs: the two closures λx : Num . 0 and λx : Num . x -x are indistinguishable to any other program in the language. This is precisely the difference between gradual typing and so-called optional typing: in an optionally typed language (Hack, TypeScript, Flow), annotations are checked for consistency but are unreliable to the user, so provide no leverage for reasoning. In a gradually typed language, type annotations should relieve the programmer of the burden of reasoning about incorrect inputs, as long as we are willing to accept that the program as a whole may crash, which is already a possibility in many effectful statically typed languages.

However, the dichotomy between gradual and optional typing is not as firm as one might like. There have been many different proposed semantics of run-time type checking: 'transient' cast semantics [Vitousek et al. 2017] only checks the head connective of a type (number, function, list, . . . ), 'eager' cast semantics [Herman et al. 2010] checks run-time type information on closures, whereas 'lazy' cast semantics [Findler and Felleisen 2002] will always delay a type-check on a function until it is called (and there are other possibilities, see e.g. [Siek et al. 2009; Greenberg 2015]). The extent to which these different semantics have been shown to validate type-based reasoning has been limited to syntactic type soundness and blame soundness theorems. In their strongest form, these theorems say 'If t is a closed program of type A then it diverges, or reduces to a runtime error blaming dynamically typed code, or reduces to a value that satisfies A to a certain extent.' However, the theorem at this level of generality is quite weak, and justifies almost no program equivalences without more information. Saying that a resulting value satisfies type A might be a strong statement, but in transient semantics constrains only the head connective. The blame soundness theorem might also be quite strong, but depends on the definition of blame, which is part of the operational semantics of the language being defined. We argue that these type soundness theorems are only indirectly expressing the actual desired properties of the gradual language, which are program equivalences in the typed portion of the code that are not valid in the dynamically typed portion.

Such program equivalences typically include β -like principles, which arise from computation steps, as well as η equalities , which express the uniqueness or universality of certain constructions. The η law of the untyped λ -calculus, which states that any λ -term M ≡ λx . Mx , is restricted in a typed language to only hold for terms of function type M : A → B ( λ is the unique/universal way of making an element of the function type). This famously 'fails' to hold in call-by-value languages in the presence of effects: if M is a program that prints "hello" before returning a function, then M will print now , whereas λx . Mx will only print when given an argument. But this can be accommodated with one further modification: the η law is valid in simple call-by-value languages 1 (e.g. SML) if we have a 'value restriction' V ≡ λx . Vx . This illustrates that η /extensionality rules must be stated for each type connective, and be sensitive to the effects/evaluation order of the terms involved. For instance, the η principle for the boolean type Bool in call-by-value is that for any term M with a free variable x : Bool , M is equivalent to a term that performs an if statement on

1 This does not hold in languages with some intensional feature of functions such as reference equality. We discuss the applicability of our main results more generally in Section 7.

x : M ≡ if x ( M [ true / x ])( M [ false / x ]) . If we have an if form that is strongly typed (i.e., errors on non-booleans) then this tells us that it is safe to run an if statement on any input of boolean type (in CBN, by contrast an if statement forces a thunk and so is not necessarily safe). In addition, even if our if statement does some kind of coercion, this tells us that the term M only cares about whether x is 'truthy' or 'falsy' and so a client is free to change e.g. one truthy value to a different one without changing behavior. This η principle justifies a number of program optimizations, such as dead-code and common subexpression elimination, and hoisting an if statement outside of the body of a function if it is well-scoped ( λx . if y M N ≡ if y ( λx . M ) ( λx . N ) ). Any eager datatype, one whose elimination form is given by pattern matching such as 0 , + , 1 , × , list , has a similar η principle which enables similar reasoning, such as proofs by induction. The η principles for lazy types in call-by-name support dual behavioral reasoning about lazy functions, records, and streams.

An Axiomatic Approach to Gradual Typing. In this paper, we systematically study questions of program equivalence for a class of gradually typed languages by working in an axiomatic theory of gradual program equivalence, a language and logic we call gradual type theory (GTT). Gradual type theory is the combination of a language of terms and gradual types with a simple logic for proving program equivalence and error approximation (equivalence up to one program erroring whenthe other does not) results. The logic axiomatizes the equational properties gradual programs should satisfy, and offers a high-level syntax for proving theorems about many languages at once: if a language models gradual type theory, then it satisfies all provable equivalences/approximations. Due to its type-theoretic design, different axioms of program equivalence are easily added or removed. Gradual type theory can be used both to explore language design questions and to verify behavioral properties of specific programs, such as correctness of optimizations and refactorings.

To get off the ground, we take two properties of the gradual language for granted. First, we assume a compositionality property: that any cast from A to B can be factored through the dynamic type ?, i.e., the cast 〈 B ⇐ A 〉 t is equivalent to first casting up from A to ? and then down to B : 〈 B ⇐ ? 〉〈 ? ⇐ A 〉 t . These casts often have quite different performance characteristics, but should have the same extensional behavior: of the cast semantics presented in Siek et al. [2009], only the partially eager detection strategy violates this principle, and this strategy is not common. The second property we take for granted is that the language satisfies the dynamic gradual guarantee [Siek et al. 2015a] ('graduality')-a strong correctness theorem of gradual typing- which constrains how changing type annotations changes behavior. Graduality says that if we change the types in a program to be 'more precise'-e.g., by changing from the dynamic type to a more precise type such as integers or functions-the program will either produce the same behavior as the original or raise a dynamic type error. Conversely, if a program does not error and some types are made 'less precise' then behavior does not change.

Wethen study what program equivalences are provable in GTT under various assumptions. Our central application is to study when the β , η equalities are satisfied in a gradually typed language. We approach this problem by a surprising tack: rather than defining the behavior of dynamic type casts and then verifying or invalidating the β and η equalities, we assume the language satisfies β and η equality and then show that certain reductions of casts are in fact program equivalence theorems deducible from the axioms of GTT.

The cast reductions that we show satisfy all three constraints are those given by the 'lazy cast semantics' [Findler and Felleisen 2002; Siek et al. 2009]. As a contrapositive, any gradually typed language for which these reductions are not program equivalences is not a model of the axioms of gradual type theory. This mean the language violates either compositionality, the gradual guarantee, or one of the β , η axioms-and in practice, it is usually η .

For instance, a transient semantics, where only the top-level connectives are checked, violates η for strict pairs

<!-- formula-not-decoded -->

because the top-level connectives of A 1 and A 2 are only checked when the pattern match is introduced. As a concrete counterexample to contextual equivalence, let A 1 , A 2 all be String . Because only the top-level connective is checked, ( 0 , 1 ) is a valid value of type String × String , but pattern matching on the pair ensures that the two components are checked to be strings, so the left-hand side let ( x 1 , x 2 ) = ( 0 , 1 ) ; 0 ↦→ /Omegainv (raises a type error). On the right-hand side, with no pattern, match a value (0) is returned. This means simple program changes that are valid in a typed language, such as changing a function of two arguments to take a single pair of those arguments, are invalidated by the transient semantics. In summary, transient semantics is 'lazier' than the types dictate, catching errors only when the term is inspected.

As a subtler example, in call-by-value 'eager cast semantics' the βη principles for all of the eager datatypes (0 , + , 1 , × , lists, etc.) will be satisfied, but the η principle for the function type → is violated: there are values V : A → A ′ for which V /nequal λx : A . Vx . For instance, take an arbitrary function value V : A → String for some type A , and let V ′ = 〈 A → ? ⇐ A → String 〉 V be the result of casting it to have a dynamically typed output. Then in eager semantics, the following programs are not equivalent:

<!-- formula-not-decoded -->

We cannot observe any difference between these two programs by applying them to arguments, however, they are distinguished from each other by their behavior when cast . Specifically, if we cast both sides to A → Number , then 〈 A → Number ⇐ A → ? 〉( λx : A . V ′ x ) is a value, but 〈 A → Number ⇐ A → ? 〉 V ′ reduces to an error because Number is incompatible with String . However this type error might not correspond to any actual typing violation of the program involved. For one thing, the resulting function might never be executed. Furthermore, in the presence of effects, it may be that the original function V : A → String never returns a string (because it diverges, raises an exception or invokes a continuation), and so that same value casted to A → Number might be a perfectly valid inhabitant of that type. In summary the 'eager' cast semantics is in fact overly eager: in its effort to find bugs faster than 'lazy' semantics it disables the very type-based reasoning that gradual typing should provide.

While criticisms of transient semantics on the basis of type soundness have been made before [Greenman and Felleisen 2018], our development shows that the η principles of types are enough to uniquely determine a cast semantics, and helps clarify the trade-off between eager and lazy semantics of function casts.

Technical Overview of GTT. The gradual type theory developed in this paper unifies our previous work on operational (logical relations) reasoning for gradual typing in a call-by-value setting [New and Ahmed 2018] (which did not consider a proof theory), and on an axiomatic proof theory for gradual typing [New and Licata 2018] in a call-by-name setting (which considered only function and product types, and denotational but not operational models).

In this paper, we develop an axiomatic gradual type theory GTT for a unified language that includes both call-by-value/eager types and call-by-name/lazy types (Sections 2, 3), and show that it is sound for contextual equivalence via a logical relations model (Sections 4, 5, 6). Because the η principles for types play a key role in our approach, it is necessary to work in a setting where we can have η principles for both eager and lazy types. We use Levy's Call-by-Push-Value [Levy 2003] (CBPV), which fully and faithfully embeds both call-by-value and call-by-name evaluation with both eager and lazy datatypes, 2 and underlies much recent work on reasoning about effectful

2 The distinction between 'lazy' vs 'eager' casts above is different than lazy vs. eager datatypes.

programs [Bauer and Pretnar 2013; Lindley et al. 2017]. GTT can prove results in and about existing call-by-value gradually typed languages, and also suggests a design for call-by-name and full call-by-push-value gradually typed languages.

In the prior work [New and Licata 2018; New and Ahmed 2018], gradual type casts are decomposed into upcasts and downcasts, as suggested above. A type dynamism relation (corresponding to type precision [Siek et al. 2015a] and naïve subtyping [Wadler and Findler 2009]) controls which casts exist: a type dynamism A /subsetsqequal A ′ induces an upcast from A to A ′ and a downcast from A ′ to A . Then, a term dynamism judgement is used for equational/approximational reasoning about programs. Term dynamism relates two terms whose types are related by type dynamism, and the upcasts and downcasts are each specified by certain term dynamism judgements holding. This specification axiomatizes only the properties of casts needed to ensure the graduality theorem, and not their precise behavior, so cast reductions can be proved from it , rather than stipulated in advance. The specification defines the casts 'uniquely up to equivalence', which means that any two implementations satisfying it are behaviorally equivalent.

We generalize this axiomatic approach to call-by-push-value (Section 2), where there are both eager/value types and lazy/computation types. This is both a subtler question than it might at first seem, and has a surprisingly nice answer: we find that upcasts are naturally associated with eager/value types and downcasts with lazy/computation types, and that the modalities relating values and computations induce the downcasts for eager/value types and upcasts for lazy/computation types. Moreover, this analysis articulates an important behavioral property of casts that was proved operationally for call-by-value in [New and Ahmed 2018] but missed for call-by-name in [New and Licata 2018]: upcasts for eager types and downcasts for lazy types are both 'pure' in a suitable sense, which enables more refactorings and program optimizations. In particular, we show that these casts can be taken to be (and are essentially forced to be) 'complex values' and 'complex stacks' (respectively) in call-by-push-value, which corresponds to a behavioral property of thunkability and linearity [Munch-Maccagnoni 2014]. We argue in Section 7 that this property is related to blame soundness. Our gradual type theory naturally has two dynamic types, a dynamic eager/value type and a dynamic lazy/computation type, where the former can be thought of as a sum of all possible values, and the latter as a product of all possible behaviors. At the language design level, gradual type theory can be used to prove that, for a variety of eager/value and lazy/computation types, the 'lazy' semantics of casts is the unique implementation satisfying β , η and graduality (Section 3). These behavioral equivalences can then be used in reasoning about optimizations, refactorings, and correctness of specific programs.

Contract-Based Models. To show the consistency of GTT as a theory, and to give a concrete operational interpretation of its axioms and rules, we provide a concrete model based on an operational semantics. The model is a contract interpretation of GTT in that the 'built-in' casts of GTT are translated to ordinary functions in a CBPV language that perform the necessary checks.

To keep the proofs high-level, we break the proof into two steps. First (Sections 4, 5), we translate the axiomatic theory of GTT into an axiomatic theory of CBPV extended with recursive types and an uncatchable error, implementing casts by CBPV code that does contract checking. Then (Section 6) we give an operational semantics for the extended CBPV and define a step-indexed biorthogonal logical relation that interprets the ordering relation on terms as contextual error approximation, which underlies the definition of graduality as presented in [New and Ahmed 2018]. Combining these theorems gives an implementation of the term language of GTT in which β , η are observational equivalences and the dynamic gradual guarantee is satisfied.

Due to the uniqueness theorems of GTT, the only part of this translation that is not predetermined is the definition of the dynamic types themselves and the casts between 'ground' types and the dynamic types. We use CBPV to explore the design space of possible implementations

of the dynamic types, and give one that faithfully distinguishes all types of GTT, and another more Scheme-like implementation that implements sums and lazy pairs by tag bits. Both can be restricted to the CBV or CBN subsets of CBPV, but the unrestricted variant is actually more faithful to Scheme-like dynamically typed programming, because it accounts for variable-argument functions. Our modular proof architecture allows us to easily prove correctness of β , η and graduality for all of these interpretations.

Contributions. The main contributions of the paper are as follows.

- (1) We present Gradual Type Theory in Section 2, a simple axiomatic theory of gradual typing. The theory axiomatizes three simple assumptions about a gradual language: compositionality, graduality, and type-based reasoning in the form of η equivalences.

- (2) We prove many theorems in the formal logic of Gradual Type Theory in Section 3. These include the unique implementation theorems for casts, which show that for each type connective of GTT, the η principle for the type ensures that the casts must implement the lazy contract semantics. Furthermore, we show that upcasts are always pure functions and dually that downcasts are always strict functions, as long as the base type casts are pure/strict.

- (3) To substantiate that GTT is a reasonable axiomatic theory for gradual typing, we construct models of GTT in Sections 4, 5 and 6.3. This proceeds in two stages. First (Section 4), we use call-by-push-value as a typed metalanguage to construct several models of GTT using different recursive types to implement the dynamic types of GTT and interpret the casts as embedding-projection pairs. This extends standard translations of dynamic typing into static typing using type tags: the dynamic value type is constructed as a recursive sum of basic value types, but dually the dynamic computation type is constructed as a recursive product of basic computation types. This dynamic computation type naturally models stack-based implementations of variable-arity functions as used in the Scheme language.

- (4) We then give an operational model of the term dynamism ordering as contextual error approximation in Sections 5 and 6.3. To construct this model, we extend previous work on logical relations for error approximation from call-by-value to call-by-push-value [New and Ahmed 2018], simplifying the presentation in the process.

## 2 AXIOMATIC GRADUAL TYPE THEORY

In this section we introduce the syntax of Gradual Type Theory, an extension of Call-by-pushvalue [Levy 2003] to support the constructions of gradual typing. First we introduce call-by-pushvalue and then describe in turn the gradual typing features: dynamic types, casts, and the dynamism orderings on types and terms.

## 2.1 Background: Call-by-Push-Value

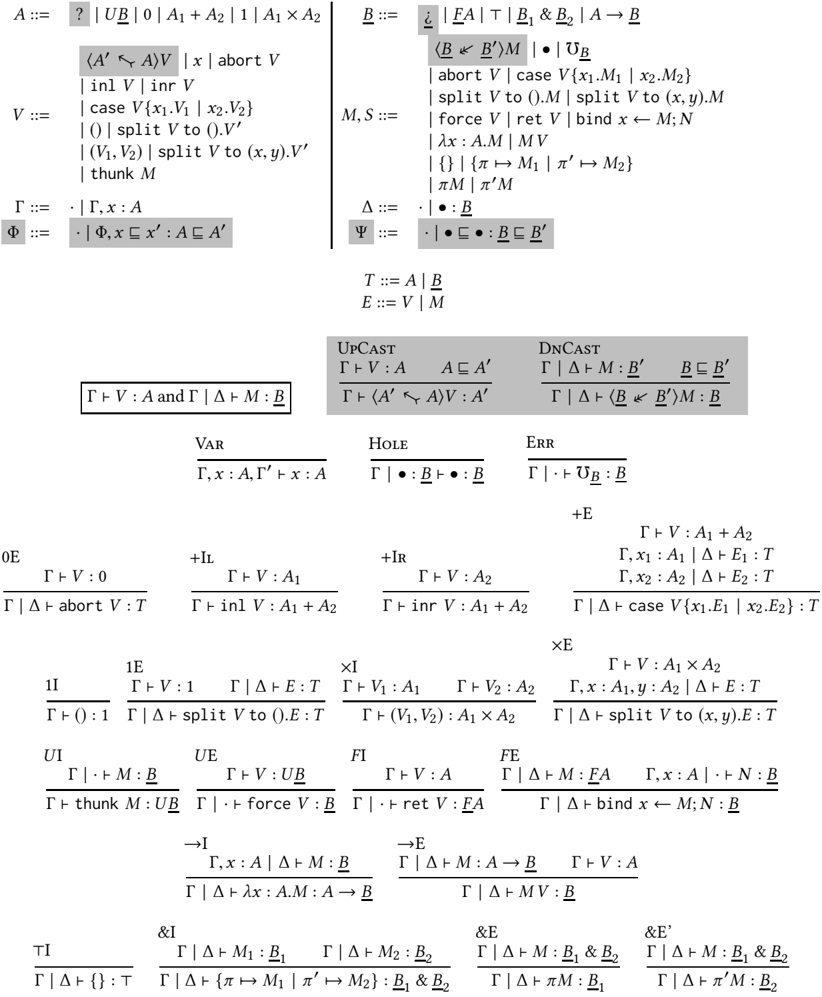

GTT is an extension of CBPV, so we first present CBPV as the unshaded rules in Figure 1. CBPV makes a distinction between value types A and computation types B , where value types classify values Γ /turnstileleft V : A and computation types classify computations Γ /turnstileleft M : B . Effects are computations: for example, we might have an error computation /Omegainv B : B of every computation type, or printing print V ; M : B if V : string and M : B , which prints V and then behaves as M .

Value types and complex values. The value types include eager products 1 and A 1 × A 2 and sums 0 and A 1 + A 2, which behave as in a call-by-value/eager language (e.g. a pair is only a value when its components are). The notion of value V is more permissive than one might expect, and expressions Γ /turnstileleft V : A are sometimes called complex values to emphasize this point: complex values include not only closed runtime values, but also open values that have free value variables (e.g. x : A 1 , x 2 : A 2 /turnstileleft ( x 1 , x 2 ) : A 1 × A 2), and expressions that pattern-match on values (e.g.

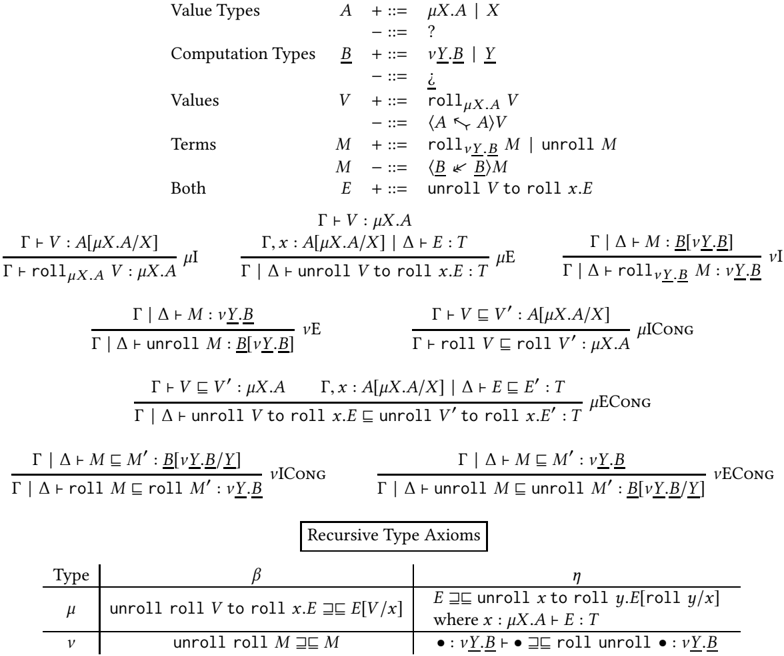

Fig. 1. GTT Syntax and Term Typing

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Type Theory Rules

### Overview

The image presents a collection of type theory rules, defining the syntax and semantics of a formal system. It includes definitions for types, values, expressions, and typing judgments, along with inference rules for various language constructs.

### Components/Axes

* **Top-Left**: Definitions for types `A`, values `V`.

* **Top-Right**: Definitions for types `B`, expressions `M, S`.

* **Middle-Left**: Definitions for contexts `Γ`, `Φ`.

* **Middle-Right**: Definitions for contexts `Δ`, `Ψ`.

* **Bottom**: Inference rules for type checking.

### Detailed Analysis or ### Content Details

**Definitions:**

* **Types (A)**:

* `A ::= ? | UB | 0 | A1 + A2 | 1 | A1 X A2`

* `?`: Unknown type.

* `UB`: Unit type.

* `0`: Empty type.

* `A1 + A2`: Sum type.

* `1`: Unit type.

* `A1 X A2`: Product type.

* **Values (V)**:

* `V ::= <A' <- A> V | x | abort V | inl V | inr V | case V{x1.V1 | x2.V2} | () | split V to ().V' | (V1, V2) | split V to (x, y).V' | thunk M`

* `<A' <- A> V`: Type cast.

* `x`: Variable.

* `abort V`: Abort.

* `inl V`: Left injection.

* `inr V`: Right injection.

* `case V{x1.V1 | x2.V2}`: Case expression.

* `()`: Unit value.

* `split V to ().V'`: Split operation.

* `(V1, V2)`: Pair.

* `split V to (x, y).V'`: Split operation.

* `thunk M`: Thunk.

* **Types (B)**:

* `B ::= ¿ | FA | T | B1 & B2 | A -> B`

* `¿`: Unknown type.

* `FA`: Type constructor.

* `T`: Type variable.

* `B1 & B2`: Product type.

* `A -> B`: Function type.

* **Expressions (M, S)**:

* `M, S ::= <B <- B'> M | • | UB | abort V | case V{x1.M1 | x2.M2} | split V to ().M | split V to (x, y).M | force V | ret V | bind x <- M; N | λx: A.M | MV | {} | {π -> M1 | π' -> M2} | πM | π'M`

* `<B <- B'> M`: Type cast.

* `•`: Hole.

* `UB`: Unit expression.

* `abort V`: Abort.

* `case V{x1.M1 | x2.M2}`: Case expression.

* `split V to ().M`: Split operation.

* `split V to (x, y).M`: Split operation.

* `force V`: Force.

* `ret V`: Return.

* `bind x <- M; N`: Bind.

* `λx: A.M`: Lambda abstraction.

* `MV`: Application.

* `{}`: Empty record.

* `{π -> M1 | π' -> M2}`: Record.

* `πM`: Projection.

* `π'M`: Projection.

* **Contexts (Γ)**:

* `Γ ::= · | Γ, x: A`

* `.`: Empty context.

* `Γ, x: A`: Context extension.

* **Contexts (Φ)**:

* `Φ ::= · | Φ, x <- x': A ⊆ A'`

* `.`: Empty context.

* `Φ, x <- x': A ⊆ A'`: Context extension with subtyping.

* **Contexts (Δ)**:

* `Δ ::= · | • ⊆ B`

* `.`: Empty context.

* `• ⊆ B`: Context extension with subtyping.

* **Contexts (Ψ)**:

* `Ψ ::= · | • ⊆ B ⊆ B'`

* `.`: Empty context.

* `• ⊆ B ⊆ B'`: Context extension with subtyping.

* **Other Definitions**:

* `T ::= A | B`

* `E ::= V | M`

**Typing Rules:**

* **Upcast**:

* Premises: `Γ |- V: A`, `A ⊆ A'`

* Conclusion: `Γ |- <A' <- A> V: A'`

* **Downcast**:

* Premises: `Γ |- Δ |- M: B'`, `B ⊆ B'`

* Conclusion: `Γ |- Δ |- <B <- B'> M: B`

* **Var**:

* Conclusion: `Γ, x: A, Γ' |- x: A`

* **Hole**:

* Conclusion: `Γ |- •: B`

* **Err**:

* Conclusion: `Γ |- UB: B`

* **0E**:

* Premise: `Γ |- V: 0`

* Conclusion: `Γ |- Δ |- abort V: T`

* **+IL**:

* Premise: `Γ |- V: A1`

* Conclusion: `Γ |- inl V: A1 + A2`

* **+IR**:

* Premise: `Γ |- V: A2`

* Conclusion: `Γ |- inr V: A1 + A2`

* **+E**:

* Premises: `Γ |- V: A1 + A2`, `Γ, x1: A1 |- Δ |- E1: T`, `Γ, x2: A2 |- Δ |- E2: T`

* Conclusion: `Γ |- Δ |- case V{x1.E1 | x2.E2}: T`

* **1I**:

* Conclusion: `Γ |- (): 1`

* **1E**:

* Premise: `Γ |- V: 1`

* Conclusion: `Γ |- Δ |- split V to ().E: T`

* **xI**:

* Premises: `Γ |- V1: A1`, `Γ |- V2: A2`

* Conclusion: `Γ |- (V1, V2): A1 X A2`

* **xE**:

* Premises: `Γ |- V: A1 X A2`, `Γ, x: A1, y: A2 |- Δ |- E: T`

* Conclusion: `Γ |- Δ |- split V to (x, y).E: T`

* **UI**:

* Premise: `Γ |- M: B`

* Conclusion: `Γ |- thunk M: UB`

* **UE**:

* Premise: `Γ |- V: UB`

* Conclusion: `Γ |- force V: B`

* **FI**:

* Premise: `Γ |- V: A`

* Conclusion: `Γ |- ret V: FA`

* **FE**:

* Premises: `Γ |- Δ |- M: FA`, `Γ, x: A |- Δ |- N: B`

* Conclusion: `Γ |- Δ |- bind x <- M; N: B`

* **->I**:

* Premise: `Γ, x: A |- Δ |- M: B`

* Conclusion: `Γ |- Δ |- λx: A.M: A -> B`

* **->E**:

* Premises: `Γ |- Δ |- M: A -> B`, `Γ |- V: A`

* Conclusion: `Γ |- Δ |- MV: B`

* **TI**:

* Conclusion: `Γ |- {}: T`

* **&I**:

* Premises: `Γ |- Δ |- M1: B1`, `Γ |- Δ |- M2: B2`

* Conclusion: `Γ |- Δ |- {π -> M1 | π' -> M2}: B1 & B2`

* **&E**:

* Premise: `Γ |- Δ |- M: B1 & B2`

* Conclusion: `Γ |- Δ |- πM: B1`

* **&E'**:

* Premise: `Γ |- Δ |- M: B1 & B2`

* Conclusion: `Γ |- Δ |- π'M: B2`

### Key Observations

* The system includes basic types like unit, empty, sum, and product.

* It supports type casting, function abstraction and application, and record manipulation.

* The typing rules define how to derive the type of an expression based on the types of its sub-expressions and the context.

### Interpretation

The image presents a formal type system, likely for a functional programming language. The definitions and typing rules provide a rigorous framework for ensuring type safety and preventing runtime errors. The system includes features like subtyping, type casting, and record types, which enhance its expressiveness and flexibility. The rules are designed to be compositional, allowing the type of a complex expression to be derived from the types of its constituent parts.

</details>

p : A 1 × A 2 /turnstileleft split p to ( x 1 , x 2 ) . ( x 2 , x 1 ) : A 2 × A 1). Thus, the complex values x : A /turnstileleft V : A ′ are a syntactic class of 'pure functions' from A to A ′ (though there is no pure function type internalizing this judgement), which can be treated like values by a compiler because they have no effects (e.g. they can be dead-code-eliminated, common-subexpression-eliminated, and so on). In focusing [Andreoli 1992] terminology, complex values consist of left inversion and right focus rules.For each pattern-matching construct (e.g. case analysis on a sum, splitting a pair), we have both an elimination rule whose branches are values (e.g. split p to ( x 1 , x 2 ) . V ) and one whose branches are computations (e.g. split p to ( x 1 , x 2 ) . M ). To abbreviate the typing rules for both in Figure 1, weuse the following convention: we write E :: = V | M for either a complex value or a computation, and T :: = A | B for either a value type A or a computation type B , and a judgement Γ | ∆ /turnstileleft E : T for either Γ /turnstileleft V : A or Γ | ∆ /turnstileleft M : B (this is a bit of an abuse of notation because ∆ is not present in the former). Complex values can be translated away without loss of expressiveness by moving all pattern-matching into computations (see Section 5), at the expense of using a behavioral condition of thunkability [Munch-Maccagnoni 2014] to capture the properties complex values have (for example, an analogue of let x = V ; let x ′ = V ′ ; M ≡ let x ′ = V ′ ; let x = V ; M - complex values can be reordered, while arbitrary computations cannot).

Shifts. A key notion in CBPV is the shift types FA and UB , which mediate between value and computation types: FA is the computation type of potentially effectful programs that return a value of type A , while UB is the value type of thunked computations of type B . The introduction rule for FA is returning a value of type A ( ret V), while the elimination rule is sequencing a computation M : FA with a computation x : A /turnstileleft N : B to produce a computation of a B ( bind x ← M ; N ). While any closed complex value V is equivalent to an actual value, a computation of type FA might perform effects (e.g. printing) before returning a value, or might error or non-terminate and not return a value at all. The introduction and elimination rules for U are written thunk M and force V , and say that computations of type B are bijective with values of type UB . As an example of the action of the shifts, 0 is the empty value type, so F 0 classifies effectful computations that never return, but may perform effects (and then, must e.g. non-terminate or error), while UF 0 is the value type where such computations are thunked/delayed and considered as values.1 is the trivial value type, so F 1 is the type of computations that can perform effects with the possibility of terminating successfully by returning () , and UF 1 is the value type where such computations are delayed values. UF is a monad on value types [Moggi 1991], while FU is a comonad on computation types.

Computation types. The computation type constructors in CBPV include lazy unit/products /latticetop and B 1 & B 2 , which behave as in a call-by-name/lazy language (e.g. a component of a lazy pair is evaluated only when it is projected). Functions A → B have a value type as input and a computation type as a result. The equational theory of effects in CBPV computations may be surprising to those familiar only with call-by-value, because at higher computation types effects have a callby-name-like equational theory. For example, at computation type A → B , we have an equality print c ; λx . M = λx . print c ; M . Intuitively, the reason is that A → B is not treated as an observable type (one where computations are run): the states of the operational semantics are only those computations of type FA for some value type A . Thus, 'running' a function computation means supplying it with an argument, and applying both of the above to an argument V is defined to result in print c ; M [ V / x ] . This does not imply that the corresponding equations holds for the call-by-value function type, which we discuss below. As another example, all computations are considered equal at type /latticetop , even computations that perform different effects ( print c vs. {} vs. /Omegainv ), because there is by definition no way to extract an observable of type FA from a computation of type /latticetop . Consequently, U /latticetop is isomorphic to 1.

Complex stacks. Just as the complex values V are a syntactic class terms that have no effects, CBPV includes a judgement for 'stacks' S , a syntactic class of terms that reflect all effects of their input. A stack Γ | · : B /turnstileleft S : B ′ can be thought of as a linear/strict function from B to B ′ , which must use its input hole · exactly once at the head redex position. Consequently, effects can be hoisted out of stacks, because we know the stack will run them exactly once and first. For example, there will be contextual equivalences S [ /Omegainv /·] = /Omegainv and S [ print V ; M ] = print V ; S [ M /·] . Just as complex values include pattern-matching, complex stacks include pattern-matching on values and introduction forms for the stack's output type. For example, · : B 1 & B 2 /turnstileleft { π ↦→ π ′ · | π ′ ↦→ π ·} : B 2 & B 1 is a complex stack, even though it mentions · more than once, because running it requires choosing a projection to get to an observable of type FA , so each time it is run it uses · exactly once. In focusing terms, complex stacks include both left and right inversion, and left focus rules.In the equational theory of CBPV, F and U are adjoint , in the sense that stacks · : FA /turnstileleft S : B are bijective with values x : A /turnstileleft V : UB , as both are bijective with computations x : A /turnstileleft M : B .

To compress the presentation in Figure 1, we use a typing judgement Γ | ∆ /turnstileleft M : B with a 'stoup', a typing context ∆ that is either empty or contains exactly one assumption · : B , so Γ | · /turnstileleft M : B is a computation, while Γ | · : B /turnstileleft M : B ′ is a stack. The typing rules for /latticetop and & treat the stoup additively (it is arbitrary in the conclusion and the same in all premises); for a function application to be a stack, the stack input must occur in the function position. The elimination form for UB , force V , is the prototypical non-stack computation ( ∆ is required to be empty), because forcing a thunk does not use the stack's input.

Embedding call-by-value and call-by-name. To translate call-by-value (CBV) into CBPV, a judgement x 1 : A 1 , . . . , x n : A n /turnstileleft e : A is interpreted as a computation x 1 : A v 1 , . . . , x n : A v n /turnstileleft e v : FA v , where call-by-value products and sums are interpreted as × and + , and the call-by-value function type A → A ′ as U ( A v → FA ′ v ) . Thus, a call-by-value term e : A → A ′ , which should mean an effectful computation of a function value, is translated to a computation e v : FU ( A v → FA ′ v ) . Here, the comonad FU offers an opportunity to perform effects before returning a function value-so under translation the CBV terms print c ; λx . e and λx . print c ; e will not be contextually equivalent. To translate call-by-name (CBN) to CBPV, a judgement x 1 : B 1 , . . . , x m : B m /turnstileleft e : B is translated to x 1 : UB 1 n , . . . , x m : UB m n /turnstileleft e n : B n , representing the fact that call-by-name terms are passed thunked arguments. Product types are translated to /latticetop and × , while a CBN function B → B ′ is translated to UB n → B ′ n with a thunked argument. Sums B 1 + B 2 are translated to F ( UB 1 n + UB 2 n ) , making the 'lifting' in lazy sums explicit. Call-by-push-value subsumes call-by-value and call-by-name in that these embeddings are full and faithful : two CBV or CBN programs are equivalent if and only if their embeddings into CBPV are equivalent, and every CBPV program with a CBV or CBN type can be back-translated.

Extensionality/ η Principles. The main advantage of CBPV for our purposes is that it accounts for the η /extensionality principles of both eager/value and lazy/computation types, because value types have η principles relating them to the value assumptions in the context Γ , while computation types have η principles relating them to the result type of a computation B . For example, the η principle for sums says that any complex value or computation x : A 1 + A 2 /turnstileleft E : T is equivalent to case x { x 1 . E [ inl x 1 / x ] | x 2 . E [ inr x 2 / x ]} , i.e. a case on a value can be moved to any point in a program (where all variables are in scope) in an optimization. Given this, the above translations of CBV and CBN into CBPV explain why η for sums holds in CBV but not CBN: in CBV, x : A 1 + A 2 /turnstileleft E : T is translated to a term with x : A 1 + A 2 free, but in CBN, x : B 1 + B 2 /turnstileleft E : T is translated to a term with x : UF ( UB 1 + UB 2 ) free, and the type UF ( UB 1 + UB 2 ) of monadic computations that return a sum does not satisfy the η principle for sums in CBPV. Dually, the η principle for functions in CBPV is that any computation M : A → B is equal to λx . Mx . ACBNterm e : B → B ′

is translated to a CBPV computation of type UB → B ′ , to which CBPV function extensionality applies, while a CBV term e : A → A ′ is translated to a computation of type FU ( A → FA ′ ) , which does not satisfy the η rule for functions. We discuss a formal statement of these η principles with term dynamism below.

## 2.2 The Dynamic Type(s)

Next, we discuss the additions that make CBPV into our gradual type theory GTT. A dynamic type plays a key role in gradual typing, and since GTT has two different kinds of types, we have a new question of whether the dynamic type should be a value type, or a computation type, or whether we should have both a dynamic value type and a dynamic computation type. Our modular, type-theoretic presentation of gradual typing allows us to easily explore these options, though we find that having both a dynamic value ? and a dynamic computation type ¿ gives the most natural implementation (see Section 4.2). Thus, we add both ? and ¿ to the grammar of types in Figure 1. We do not give introduction and elimination rules for the dynamic types, because we would like constructions in GTT to imply results for many different possible implementations of them. Instead, the terms for the dynamic types will arise from type dynamism and casts.

## 2.3 Type Dynamism

The type dynamism relation of gradual type theory is written A /subsetsqequal A ′ and read as ' A is less dynamic than A ′ '; intuitively, this means that A ′ supports more behaviors than A . Our previous work [New and Ahmed 2018; New and Licata 2018] analyzes this as the existence of an upcast from A to A ′ and a downcast from A ′ to A which form an embedding-projection pair ( ep pair ) for term error approximation (an ordering where runtime errors are minimal): the upcast followed by the downcast is a no-op, while the downcast followed by the upcast might error more than the original term, because it imposes a run-time type check. Syntactically, type dynamism is defined (1) to be reflexive and transitive (a preorder), (2) where every type constructor is monotone in all positions, and (3) where the dynamic type is greatest in the type dynamism ordering. This last condition, the dynamic type is the most dynamic type , implies the existence of an upcast 〈 ? /arrowtailleft A 〉 and a downcast 〈 A /dblarrowheadleft ? 〉 for every type A : any type can be embedded into it and projected from it. However, this by design does not characterize ? uniquely-instead, it is open-ended exactly which types exist (so that we can always add more), and some properties of the casts are undetermined; we exploit this freedom in Section 4.2.

This extends in a straightforward way to CBPV's distinction between value and computation types in Figure 2: there is a type dynamism relation for value types A /subsetsqequal A ′ and for computation types B /subsetsqequal B ′ , which (1) each are preorders (V TyRefl , VTyTrans , CTyRefl , CTyTrans ), (2) every type constructor is monotone ( + Mon , × Mon , & Mon , → Mon ) where the shifts F and U switch which relation is being considered ( U Mon , F Mon ), and (3) the dynamic types ? and ¿ are the most dynamic value and computation types respectively ( VTyTop , CTyTop ). For example, we have U ( A → FA ′ ) /subsetsqequal U ( ? → F ? ) , which is the analogue of A → A ′ /subsetsqequal ? → ? in call-byvalue: because → preserves embedding-retraction pairs, it is monotone, not contravariant, in the domain [New and Ahmed 2018; New and Licata 2018].

## 2.4 Casts

It is not immediately obvious how to add type casts to CPBV, because CBPV exposes finer judgemental distinctions than previous work considered. However, we can arrive at a first proposal by considering how previous work would be embedded into CBPV. In the previous work on both CBV and CBN [New and Ahmed 2018; New and Licata 2018] every type dynamism judgement A /subsetsqequal A ′

Fig. 2. GTT Type Dynamism and Dynamism Contexts

| A /subsetsqequal A ′ and B /subsetsqequal B ′ | VTyRefl A /subsetsqequal A | VTyTrans A /subsetsqequal A ′ A ′ /subsetsqequal A ′′ A /subsetsqequal A ′′ | CTyRefl B /subsetsqequal B ′ | CTyTrans B /subsetsqequal B ′ B ′ /subsetsqequal B ′′ B /subsetsqequal B ′′ |

|-------------------------------------------------|------------------------------|----------------------------------------------------------------------------------------------|--------------------------------|-------------------------------------------------------------------------------|

| VTyTop | U Mon B /subsetsqequal B ′ | + Mon A 1 /subsetsqequal A ′ 1 A 2 /subsetsqequal A ′ 2 | × Mon A 1 /subsetsqequal A ′ 1 | A 2 /subsetsqequal A ′ 2 |

| A /subsetsqequal ? | UB /subsetsqequal UB ′ | A 1 + A 2 /subsetsqequal A ′ 1 + A ′ 2 | A 1 × A 2 /subsetsqequal A | ′ 1 × A ′ 2 |

| CTyTop | F Mon A /subsetsqequal A ′ | & Mon B 1 /subsetsqequal B ′ 1 B 2 /subsetsqequal B ′ 2 | → Mon A /subsetsqequal A ′ | B /subsetsqequal B ′ |

| Dynamism contexts | · dyn - vctx | Φ dyn - vctx A /subsetsqequal A ′ Φ , x /subsetsqequal x ′ : A /subsetsqequal A ′ dyn - vctx | · dyn - cctx (• /subsetsqequal | B /subsetsqequal B • : B /subsetsqequal B ′ ) dyn - cctx |

| | | | | ′ |

induces both an upcast from A to A ′ and a downcast from A ′ to A . Because CBV types are associated to CBPV value types and CBN types are associated to CBPV computation types, this suggests that each value type dynamism A /subsetsqequal A ′ should induce an upcast and a downcast, and each computation type dynamism B /subsetsqequal B ′ should also induce an upcast and a downcast. In CBV, a cast from A to A ′ typically can be represented by a CBV function A → A ′ , whose analogue in CBPV is U ( A → FA ′ ) , and values of this type are bijective with computations x : A /turnstileleft M : FA ′ , and further with stacks · : FA /turnstileleft S : FA ′ . This suggests that a value type dynamism A /subsetsqequal A ′ should induce an embeddingprojection pair of stacks · : FA /turnstileleft S u : FA ′ and · : FA ′ /turnstileleft S d : FA , which allow both the upcast and downcast to a priori be effectful computations. Dually, a CBN cast typically can be represented by a CBN function of type B → B ′ , whose CBPV analogue is a computation of type UB → B ′ , which is equivalent with a computation x : UB /turnstileleft M : B ′ , and with a value x : UB /turnstileleft V : UB ′ . This suggests that a computation type dynamism B /subsetsqequal B ′ should induce an embedding-projection pair of values x : UB /turnstileleft V u : UB ′ and x : UB ′ /turnstileleft V d : UB , where both the upcast and the downcast again may a priori be (co)effectful, in the sense that they may not reflect all effects of their input.

However, this analysis ignores an important property of CBV casts in practice: upcasts always terminate without performing any effects, and in some systems upcasts are even defined to be values, while only the downcasts are effectful (introduce errors). For example, for many types A , the upcast from A to ? is an injection into a sum/recursive type, which is a value constructor. Our previous work on a logical relation for call-by-value gradual typing [New and Ahmed 2018] proved that all upcasts were pure in this sense as a consequence of the embedding-projection pair properties (but their proof depended on the only effects being divergence and type error). In GTT, we can make this property explicit in the syntax of the casts, by making the upcast 〈 A ′ /arrowtailleft A 〉 induced by a value type dynamism A /subsetsqequal A ′ itself a complex value, rather than computation. On the other hand, many downcasts between value types are implemented as a case-analysis looking for a specific tag and erroring otherwise, and so are not complex values.

We can also make a dual observation about CBN casts. The downcast arising from B /subsetsqequal B ′ has a stronger property than being a computation x : UB ′ /turnstileleft M : B as suggested above: it can be taken to be a stack · : B ′ /turnstileleft 〈 B /dblarrowheadleft B ′ 〉· : B , because a downcasted computation evaluates the computation it is 'wrapping' exactly once. One intuitive justification for this point of view, which we make precise in Section 4, is to think of the dynamic computation type ¿ as a recursive product of all possible behaviors that a computation might have, and the downcast as a recursive type unrolling

Fig. 3. GTT Term Dynamism (Structural Rules)

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Type Theory Rules

### Overview

The image presents a set of type theory rules, likely related to a dynamic semantics or type system. Each rule is formatted as an inference rule, with premises above a horizontal line and a conclusion below. The rules cover various aspects of type checking and evaluation, including reflexivity, variable lookup, transitivity, value substitution, hole filling, and stack substitution.

### Components/Axes

The image contains six distinct inference rules, each labeled with a name starting with "TmDyn". The rules involve various type environments (Γ, Δ, Φ, Ψ), terms (E, V, M), types (T, A, B), and variables (x). The symbol "⊑" represents a subtyping or containment relation.

### Detailed Analysis

**1. Top-Right Box:**

* Text: "Φ ⊢ V ⊑ V': A ⊑ A' and Φ | Ψ ⊢ M ⊑ M': B ⊑ B'"

* This appears to be a general condition or context applicable to some of the rules. It states that V is a subtype of V' with type A a subtype of A', and M is a subtype of M' with type B a subtype of B', all under contexts Φ and Ψ.

**2. TmDynRefl (Top-Left):**

* Rule:

```

Γ ⊑ Γ | Δ ⊑ Δ ⊢ E ⊑ E : T ⊑ T

```

* This rule states that if the type environment Γ is a subtype of itself, Δ is a subtype of itself, then E is a subtype of itself with type T a subtype of itself. This represents a reflexivity property.

**3. TmDynVar (Top-Right):**

* Rule:

```

Φ, x ⊑ x' : A ⊑ A', Φ' ⊢ x ⊑ x' : A ⊑ A'

```

* This rule states that if x is a subtype of x' with type A a subtype of A' in the context Φ, then x is a subtype of x' with type A a subtype of A' in the context Φ'. This represents variable lookup.

**4. TmDynTrans (Middle-Left):**

* Rule:

```

Γ ⊑ Γ' | Δ ⊑ Δ' ⊢ E ⊑ E' : T ⊑ T'

Γ' ⊑ Γ'' | Δ' ⊑ Δ'' ⊢ E' ⊑ E'' : T' ⊑ T''

--------------------------------------------------

Γ ⊑ Γ'' | Δ ⊑ Δ'' ⊢ E ⊑ E'' : T ⊑ T''

```

* This rule states that if Γ is a subtype of Γ', Δ is a subtype of Δ', and E is a subtype of E' with type T a subtype of T', and Γ' is a subtype of Γ'', Δ' is a subtype of Δ'', and E' is a subtype of E'' with type T' a subtype of T'', then Γ is a subtype of Γ'', Δ is a subtype of Δ'', and E is a subtype of E'' with type T a subtype of T''. This represents a transitivity property.

**5. TmDynValSubst (Middle-Right):**

* Rule:

```

Φ ⊢ V ⊑ V' : A ⊑ A'

Φ, x ⊑ x' : A ⊑ A', Φ' | Ψ ⊢ E ⊑ E' : T ⊑ T'

--------------------------------------------------

Φ | Ψ ⊢ E[V/x] ⊑ E'[V'/x'] : T ⊑ T'

```

* This rule states that if V is a subtype of V' with type A a subtype of A', and E is a subtype of E' with type T a subtype of T' in the context Φ, then E[V/x] is a subtype of E'[V'/x'] with type T a subtype of T' in the context Φ. This represents value substitution.

**6. TmDynHole (Bottom-Left):**

* Rule:

```

Φ | • ⊑ • : B ⊑ B' ⊢ • ⊑ • : B ⊑ B'

```

* This rule states that if the hole "•" is a subtype of itself with type B a subtype of B', then the hole "•" is a subtype of itself with type B a subtype of B'. This represents a hole filling.

**7. TmDynStkSubst (Bottom-Right):**

* Rule:

```

Φ | Ψ ⊢ M₁ ⊑ M₁' : B₁ ⊑ B₁'

Φ | • ⊑ • : B₁ ⊑ B₁' ⊢ M₂ ⊑ M₂' : B₂ ⊑ B₂'

--------------------------------------------------

Φ | Ψ ⊢ M₂[M₁/•] ⊑ M₂'[M₁'/•] : B₂ ⊑ B₂'

```

* This rule states that if M₁ is a subtype of M₁' with type B₁ a subtype of B₁', and M₂ is a subtype of M₂' with type B₂ a subtype of B₂', then M₂[M₁/•] is a subtype of M₂'[M₁'/•] with type B₂ a subtype of B₂'. This represents stack substitution.

### Key Observations

* The rules define a subtyping relation between terms and types.

* The rules are inference rules, meaning that the conclusion is valid if the premises are valid.

* The rules cover various aspects of type checking and evaluation, including reflexivity, variable lookup, transitivity, value substitution, and stack substitution.

### Interpretation

The rules likely define a dynamic semantics for a type system. The subtyping relation allows for flexibility in type checking, as a term of a subtype can be used where a term of a supertype is expected. The rules ensure that the subtyping relation is preserved during evaluation. The presence of rules for hole filling and stack substitution suggests that the type system may be used for program synthesis or interactive development. The rules are foundational for ensuring type safety and correctness in a programming language or system.

</details>

and product projection, which is a stack. From this point of view, an upcast can introduce errors, because the upcast of an object supporting some 'methods' to one with all possible methods will error dynamically on the unimplemented ones.

These observations are expressed in the (shaded) UpCast and DnCasts rules for casts in Figure 1: the upcast for a value type dynamism is a complex value, while the downcast for a computation type dynamism is a stack (if its argument is). Indeed, this description of casts is simpler than the intuition we began the section with: rather than putting in both upcasts and downcasts for all value and computation type dynamisms, it suffices to put in only upcasts for value type dynamisms and downcasts for computation type dynamisms, because of monotonicity of type dynamism for U / F types. The downcast for a value type dynamism A /subsetsqequal A ′ , as a stack · : FA ′ /turnstileleft 〈 FA /dblarrowheadleft FA ′ 〉· : FA as described above, is obtained from FA /subsetsqequal FA ′ as computation types. The upcast for a computation type dynamism B /subsetsqequal B ′ as a value x : UB /turnstileleft 〈 UB ′ /arrowtailleft UB 〉 x : UB ′ is obtained from UB /subsetsqequal UB ′ as value types. Moreover, we will show below that the value upcast 〈 A ′ /arrowtailleft A 〉 induces a stack · : FA /turnstileleft . . . : FA ′ that behaves like an upcast, and dually for the downcast, so this formulation

- implies the original formulation above.

We justify this design in two ways in the remainder of the paper. In Section 4, we show how to implement casts by a contract translation to CBPV where upcasts are complex values and downcasts are complex stacks. However, one goal of GTT is to be able to prove things about many gradually typed languages at once, by giving different models, so one might wonder whether this design rules out useful models of gradual typing where casts can have more general effects. In Theorem 3.26, we show instead that our design choice is forced for all casts, as long as the casts between ground types and the dynamic types are values/stacks.

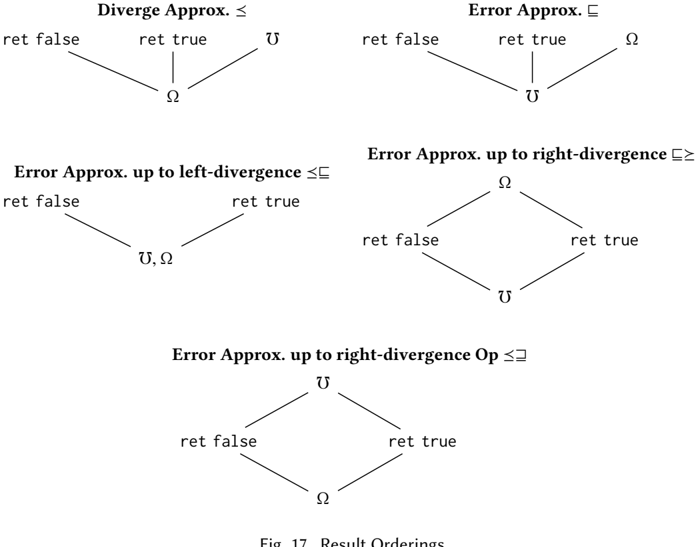

## 2.5 Term Dynamism: Judgements and Structural Rules

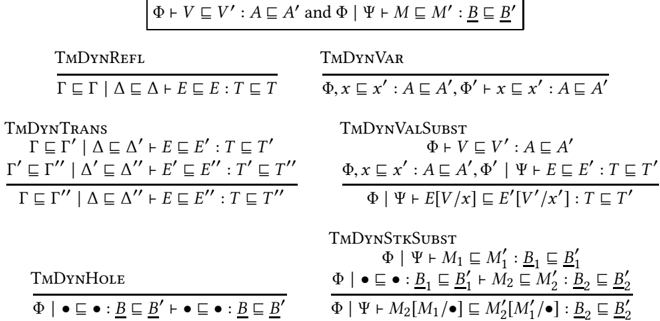

The final piece of GTT is the term dynamism relation, a syntactic judgement that is used for reasoning about the behavioral properties of terms in GTT. To a first approximation, term dynamism can be thought of as syntactic rules for reasoning about contextual approximation relative to errors (not divergence), where E /subsetsqequal E ′ means that either E errors or E and E ′ have the same result. However, a key idea in GTT is to consider a heterogeneous term dynamism judgement E /subsetsqequal E ′ : T /subsetsqequal T ′ between terms E : T and E ′ : T ′ where T /subsetsqequal T ′ -i.e. relating two terms at two different types, where the type on the right is more dynamic than the type on the right. This judgement structure

Fig. 4. GTT Term Dynamism (Congruence Rules)

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Type Theory Inference Rules

### Overview

The image presents a collection of inference rules, likely related to type theory or programming language semantics. Each rule defines a condition under which a certain judgment (statement) can be derived. The rules cover various constructs, including sums, products, functions, thunks, and records.

### Components/Axes

Each rule is structured as follows:

- **Name:** A short identifier for the rule (e.g., "+ILCONG", "XICONG").

- **Premises:** Conditions that must be true for the rule to apply. These are written above a horizontal line.

- **Conclusion:** The judgment that can be derived if the premises are true. This is written below the horizontal line.

- **Judgments:** These typically have the form "Φ ⊢ V ⊑ V': A ⊑ A'", where:

- Φ represents a typing context.

- V and V' are values or expressions.

- A and A' are types.

- ⊑ denotes a subtyping relation.

- ⊢ denotes derivability.

### Detailed Analysis or Content Details

Here's a breakdown of each rule, transcribing the text and providing a brief explanation:

**Row 1**

* **+ILCONG**

* Premise: Φ ⊢ V ⊑ V': A₁ ⊑ A₁'

* Conclusion: Φ ⊢ inl V ⊑ inl V': A₁ + A₂ ⊑ A₁' + A₂'

* Interpretation: If V is a subtype of V' with type A₁ a subtype of A₁', then inl V is a subtype of inl V' with type A₁ + A₂ a subtype of A₁' + A₂'. This rule handles the left injection of a sum type.

* **+IRCONG**

* Premise: Φ ⊢ V ⊑ V': A₂ ⊑ A₂'

* Conclusion: Φ ⊢ inr V ⊑ inr V': A₁ + A₂ ⊑ A₁' + A₂'

* Interpretation: If V is a subtype of V' with type A₂ a subtype of A₂', then inr V is a subtype of inr V' with type A₁ + A₂ a subtype of A₁' + A₂'. This rule handles the right injection of a sum type.

**Row 2**

* **+ECONG**

* Premises:

* Φ ⊢ V ⊑ V': A₁ + A₂ ⊑ A₁' + A₂'

* Φ, x₁ ⊑ x₁': A₁ ⊑ A₁' ⊢ E₁ ⊑ E₁': T ⊑ T'

* Φ, x₂ ⊑ x₂': A₂ ⊑ A₂' ⊢ E₂ ⊑ E₂': T ⊑ T'

* Conclusion: Φ ⊢ case V {x₁.E₁ | x₂.E₂} ⊑ case V' {x₁'.E₁' | x₂'.E₂'}: T'

* Interpretation: This rule handles the case expression for sum types. It states that if V is a subtype of V', and the branches E₁ and E₂ are subtypes of E₁' and E₂' respectively, then the case expression is well-typed.

* **0ECONG**

* Premise: Φ ⊢ V ⊑ V': 0 ⊑ 0

* Conclusion: Φ ⊢ abort V ⊑ abort V': T ⊑ T'

* Interpretation: This rule deals with the `abort` expression, which likely represents a non-returning computation.

**Row 3**

* **1ICONG**

* Conclusion: Φ ⊢ () ⊑ (): 1 ⊑ 1

* Interpretation: This rule states that the unit value () is a subtype of itself, with type 1 being a subtype of itself.

* **1ECONG**

* Premise: Φ ⊢ V ⊑ V': 1 ⊑ 1

* Premise: Φ ⊢ E ⊑ E': T ⊑ T'

* Conclusion: Φ ⊢ split V to ().E ⊑ split V' to ().E': T ⊑ T'

* Interpretation: This rule handles the `split` expression for the unit type.

**Row 4**

* **XICONG**

* Premises:

* Φ ⊢ V₁ ⊑ V₁': A₁ ⊑ A₁'

* Φ ⊢ V₂ ⊑ V₂': A₂ ⊑ A₂'

* Conclusion: Φ ⊢ (V₁, V₂) ⊑ (V₁', V₂'): A₁ × A₂ ⊑ A₁' × A₂'

* Interpretation: If V₁ is a subtype of V₁' and V₂ is a subtype of V₂', then the pair (V₁, V₂) is a subtype of (V₁', V₂'). This rule handles the introduction of product types.

* **→ICONG**

* Premise: Φ, x ⊑ x': A ⊑ A' ⊢ M ⊑ M': B ⊑ B'

* Conclusion: Φ ⊢ λx:A.M ⊑ λx':A'.M': A → B ⊑ A' → B'

* Interpretation: This rule handles lambda abstraction. If M is a subtype of M' under the assumption that x is a subtype of x', then the lambda abstraction λx:A.M is a subtype of λx':A'.M'.

**Row 5**

* **XECONG**

* Premises:

* Φ ⊢ V ⊑ V': A₁ × A₂ ⊑ A₁' × A₂'

* Φ, x ⊑ x': A₁ ⊑ A₁', y ⊑ y': A₂ ⊑ A₂' ⊢ E ⊑ E': T ⊑ T'

* Conclusion: Φ ⊢ split V to (x, y).E ⊑ split V' to (x', y').E': T ⊑ T'

* Interpretation: This rule handles the `split` expression for product types.

* **→ECONG**

* Premises:

* Φ ⊢ M ⊑ M': A → B ⊑ A' → B'

* Φ ⊢ V ⊑ V': A ⊑ A'

* Conclusion: Φ ⊢ M V ⊑ M' V': B ⊑ B'

* Interpretation: This rule handles function application. If M is a subtype of M' and V is a subtype of V', then M V is a subtype of M' V'.

**Row 6**

* **UICONG**

* Premise: Φ ⊢ M ⊑ M': B ⊑ B'

* Conclusion: Φ ⊢ thunk M ⊑ thunk M': UB ⊑ UB'

* Interpretation: This rule handles the introduction of thunks (delayed computations).

* **UECONG**

* Premise: Φ ⊢ V ⊑ V': UB ⊑ UB'

* Conclusion: Φ ⊢ force V ⊑ force V': B ⊑ B'

* Interpretation: This rule handles the `force` operation on thunks, evaluating the delayed computation.

**Row 7**

* **FICONG**

* Premise: Φ ⊢ V ⊑ V': A ⊑ A'

* Conclusion: Φ ⊢ ret V ⊑ ret V': FA ⊑ FA'

* Interpretation: This rule handles the `ret` operation, likely related to a monadic type (F).

* **FECONG**

* Premises:

* Φ ⊢ M ⊑ M': FA ⊑ FA'

* Φ, x ⊑ x': A ⊑ A' ⊢ N ⊑ N': B ⊑ B'

* Conclusion: Φ ⊢ bind x ← M; N ⊑ bind x' ← M'; N': B ⊑ B'

* Interpretation: This rule handles the `bind` operation, likely related to a monadic type (F).

**Row 8**

* **TICONG**

* Conclusion: Φ ⊢ {} ⊑ {}: T ⊑ T

* Interpretation: This rule states that the empty record {} is a subtype of itself, with type T being a subtype of itself.

* **&ICONG**

* Premises:

* Φ ⊢ M₁ ⊑ M₁': B₁ ⊑ B₁'

* Φ ⊢ M₂ ⊑ M₂': B₂ ⊑ B₂'

* Conclusion: Φ ⊢ {π → M₁ | π' → M₂} ⊑ {π → M₁' | π' → M₂'}: B₁ & B₂ ⊑ B₁' & B₂'

* Interpretation: This rule handles record extension.

**Row 9**

* **&ECONG**

* Premise: Φ ⊢ M ⊑ M': B₁ & B₂ ⊑ B₁' & B₂'

* Conclusion: Φ ⊢ πM ⊑ πM': B₁ ⊑ B₁'

* Interpretation: This rule handles record field access.

* **&E'CONG**

* Premise: Φ ⊢ M ⊑ M': B₁ & B₂ ⊑ B₁' & B₂'

* Conclusion: Φ ⊢ π'M ⊑ π'M': B₂ ⊑ B₂'

* Interpretation: This rule handles record field access.

### Key Observations

* The rules define a subtyping relation (⊑) between values and types.

* The rules cover common type theory constructs like sums, products, functions, and records.

* The rules appear to be part of a formal system for verifying the correctness of programs.

* The presence of "thunk" and "force" suggests a system that supports lazy evaluation.

* The presence of "ret" and "bind" suggests a system that incorporates monadic types.

### Interpretation

The inference rules define a type system that ensures type safety and allows for subtyping. The rules specify how to derive judgments about the relationships between values, expressions, and types. The system likely aims to prevent runtime errors by ensuring that programs adhere to the specified type constraints. The inclusion of features like thunks and monads suggests a sophisticated type system capable of handling advanced programming paradigms. The rules collectively define a formal system for reasoning about the types and behavior of programs.

</details>

allows simple axioms characterizing the behavior of casts [New and Licata 2018] and axiomatizes the graduality property [Siek et al. 2015a]. Here, we break this judgement up into value dynamism V /subsetsqequal V ′ : A /subsetsqequal A ′ and computation dynamism M /subsetsqequal M ′ : B /subsetsqequal B ′ . To support reasoning about open terms, the full form of the judgements are

- Γ /subsetsqequal Γ ′ /turnstileleft V /subsetsqequal V ′ : A /subsetsqequal A ′ where Γ /turnstileleft V : A and Γ ′ /turnstileleft V ′ : A ′ and Γ /subsetsqequal Γ ′ and A /subsetsqequal A ′ .

- Γ /subsetsqequal Γ ′ | ∆ /subsetsqequal ∆ ′ /turnstileleft M /subsetsqequal M ′ : B /subsetsqequal B ′ where Γ | ∆ /turnstileleft M : B and Γ ′ | ∆ ′ /turnstileleft M ′ : B ′ .

where Γ /subsetsqequal Γ ′ is the pointwise lifting of value type dynamism, and ∆ /subsetsqequal ∆ ′ is the optional lifting of computation type dynamism. We write Φ : Γ /subsetsqequal Γ ′ and Ψ : ∆ /subsetsqequal ∆ ′ as syntax for 'zipped' pairs of contexts that are pointwise related by type dynamism, x 1 /subsetsqequal x ′ 1 : A 1 /subsetsqequal A ′ 1 , . . . , x n /subsetsqequal x ′ n : A n /subsetsqequal A ′ n , which correctly suggests that one can substitute related terms for related variables. We will implicitly zip/unzip pairs of contexts, and sometimes write e.g. Γ /subsetsqequal Γ to mean x /subsetsqequal x : A /subsetsqequal A for all x : A in Γ .

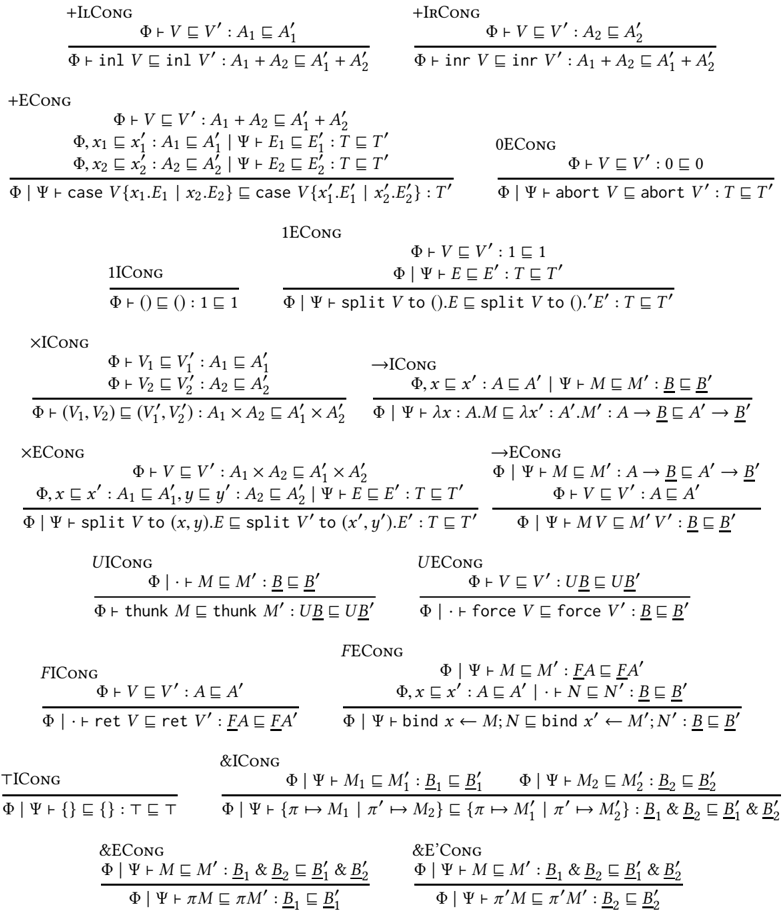

The main point of our rules for term dynamism is that there are no type-specific axioms in the definition beyond the βη -axioms that the type satisfies in a non-gradual language. Thus, adding a new type to gradual type theory does not require any a priori consideration of its gradual behavior in the language definition; instead, this is deduced as a theorem in the type theory. The basic structural rules of term dynamism in Figure 3 and Figure 4 say that it is reflexive and transitive ( TmDynRefl , TmDynTrans ), that assumptions can be used and substituted for ( TmDynVar , TmDynValSubst , TmDynHole , TmDynStkSubst ), and that every term constructor is monotone (the Cong rules). While we could add congruence rules for errors and casts, these follow from the axioms characterizing their behavior below.

We will often abbreviate a 'homogeneous' term dynamism (where the type or context dynamism is given by reflexivity) by writing e.g. Γ /turnstileleft V /subsetsqequal V ′ : A /subsetsqequal A ′ for Γ /subsetsqequal Γ /turnstileleft V /subsetsqequal V ′ : A /subsetsqequal A ′ , or Φ /turnstileleft V /subsetsqequal V ′ : A for Φ /turnstileleft V /subsetsqequal V ′ : A /subsetsqequal A , and similarly for computations. The entirely homogeneous judgements Γ /turnstileleft V /subsetsqequal V ′ : A and Γ | ∆ /turnstileleft M /subsetsqequal M ′ : B can be thought of as a syntax for contextual error approximation (as we prove below). We write V /supersetsqequal /subsetsqequal V ′ ('equidynamism') to mean term dynamism relations in both directions (which requires that the types are also equidynamic Γ /supersetsqequal /subsetsqequal Γ ′ and A /subsetsqequal A ′ ), which is a syntactic judgement for contextual equivalence.

## 2.6 Term Dynamism: Axioms

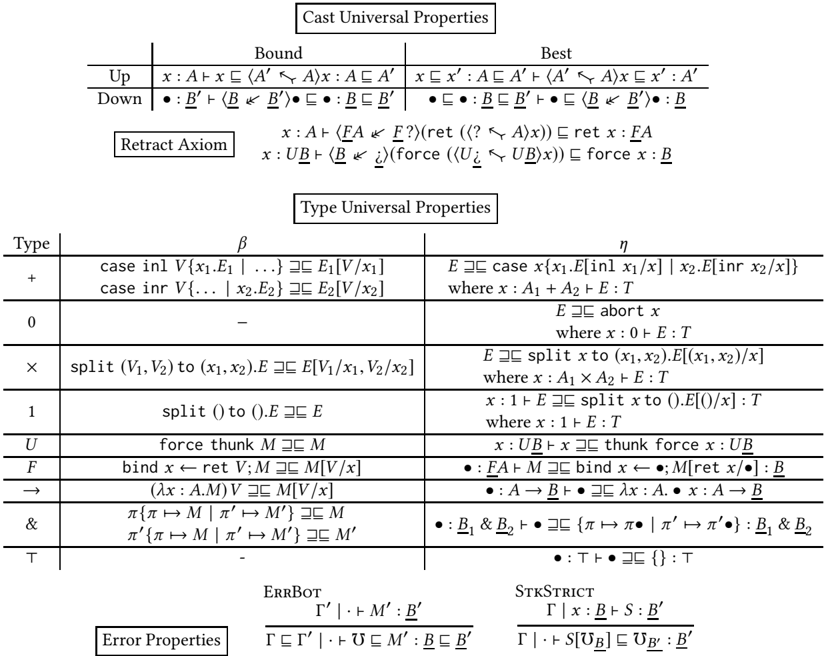

Finally, we assert some term dynamism axioms that describe the behavior of programs. The cast universal properties at the top of Figure 5, following New and Licata [2018], say that the defining property of an upcast from A to A ′ is that it is the least dynamic term of type A ′ that is more dynamic that x , a 'least upper bound'. That is, 〈 A ′ /arrowtailleft A 〉 x is a term of type A ′ that is more dynamic that x (the 'bound' rule), and for any other term x ′ of type A ′ that is more dynamic than x , 〈 A ′ /arrowtailleft A 〉 x is less dynamic than x ′ (the 'best' rule). Dually, the downcast 〈 B /dblarrowheadleft B ′ 〉· is the most dynamic term of type B that is less dynamic than · , a 'greatest lower bound'. These defining properties are entirely independent of the types involved in the casts, and do not change as we add or remove types from the system.

Wewill show that these defining properties already imply that the shift of the upcast 〈 A ′ /arrowtailleft A 〉 forms a Galois connection/adjunction with the downcast 〈 FA /dblarrowheadleft FA ′ 〉 , and dually for computation types (see Theorem 3.9). They do not automatically form a Galois insertion/coreflection/embeddingprojection pair, but we can add this by the retract axioms in Figure 5. Together with other theorems of GTT, these axioms imply that any upcast followed by its corresponding downcast is the identity (see Theorem 3.10). This specification of casts leaves some behavior undefined: for example, we cannot prove in the theory that 〈 F 1 + 1 /dblarrowheadleft F ? 〉〈 ? /arrowtailleft 1 〉 reduces to an error. We choose this design because there are valid models in which it is not an error, for instance if the unique value of 1 is represented as the boolean true . In Section 4.2, we show additional axioms that fully characterize the behavior of the dynamic type.

The type universal properties in the middle of the figure, which are taken directly from CBPV, assert the βη rules for each type as (homogeneous) term equidynamisms-these should be understood as having, as implicit premises, the typing conditions that make both sides type check, in equidynamic contexts.

Fig. 5. GTT Term Dynamism Axioms

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Logical Rules: Type Systems and Properties

### Overview

The image presents a collection of logical rules, likely related to type systems or program semantics. These rules are expressed using mathematical notation and logical symbols, defining relationships between types, expressions, and contexts. The rules are grouped into categories such as "Cast Universal Properties", "Type Universal Properties", and "Error Properties".

### Components/Axes

**1. Cast Universal Properties:**

- Located at the top of the image.

- Subdivided into "Bound" and "Best" categories.

- Contains rules involving type casting or subtyping, denoted by symbols like "⊢" and "⊏".

**2. Retract Axiom:**

- Located below "Cast Universal Properties".

- Presents axioms related to retracting or unwrapping types.

**3. Type Universal Properties:**

- Located in the center of the image.

- Organized in a table format with columns for "Type", "β" (beta reduction), and "η" (eta conversion).

- Lists rules for various types such as +, 0, ×, 1, U, F, →, &, and ⊤.

**4. Error Properties:**

- Located at the bottom of the image.

- Contains rules related to error handling or type checking in erroneous situations.

- Includes labels "ERRBOT" and "STKSTRICT".

### Detailed Analysis or ### Content Details

**Cast Universal Properties:**

* **Up (Bound):** x: A ⊢ x ⊏ (A' ⇦ A) x: A ⊏ A'

* **Up (Best):** x ⊏ x': A ⊏ A' ⊢ (A' ⇦ A) x ⊏ x': A'

* **Down (Bound):** •: B' ⊢ (B ⇦ B') • ⊏: B ⊏ B'

* **Down (Best):** • ⊏: B ⊏ B' ⊢ (B ⇦ B') •: B

**Retract Axiom:**

* x: A ⊢ (FA ⇐ F?) (ret (? ⇐ A) x)) ⊏ ret x: FA

* x: UB ⊢ (B ⇐) (force (U¿ ⊏ UB) x)) ⊏ force x: B

**Type Universal Properties:**

| Type | β AND THE LIST GOES ON!

</details>

The final axioms assert properties of the run-time error term /Omegainv : it is the least dynamic term (has the fewest behaviors) of every computation type, and all complex stacks are strict in errors, because stacks force their evaluation position. We state the first axiom in a heterogeneous way, which includes congruence Γ /subsetsqequal Γ ′ /turnstileleft /Omegainv B /subsetsqequal /Omegainv B ′ : B /subsetsqequal B ′ .

## 3 THEOREMS IN GRADUAL TYPE THEORY

In this section, we show that the axiomatics of gradual type theory determine most properties of casts, which shows that these behaviors of casts are forced in any implementation of gradual typing satisfying graduality and β , η .

## 3.1 Properties inherited from CBPV

Because the GTT term equidynamism relation /supersetsqequal /subsetsqequal includes the congruence and βη axioms of the CBPV equational theory, types inherit the universal properties they have there [Levy 2003]. We recall some relevant definitions and facts.

Definition 3.1 (Isomorphism).

- (1) We write A /simequal v A ′ for a value isomorphism between A and A ′ , which consists of two complex values x : A /turnstileleft V ′ : A ′ and x ′ : A ′ /turnstileleft V : A such that x : A /turnstileleft V [ V ′ / x ′ ] /supersetsqequal /subsetsqequal x : A and x ′ : A ′ /turnstileleft V ′ [ V / x ] /supersetsqequal /subsetsqequal x ′ : A ′ .

- (2) We write B /simequal c B ′ for a computation isomorphism between B and B ′ , which consists of two complex stacks · : B /turnstileleft S ′ : B ′ and · ′ : B ′ /turnstileleft S : B such that · : B /turnstileleft S [ S ′ / x ′ ] /supersetsqequal /subsetsqequal · : B and · ′ : B ′ /turnstileleft S ′ [ S /·] /supersetsqequal/subsetsqequal · ′ : B ′ .

Note that a value isomorphism is a strong condition, and an isomorphism in call-by-value between types A and A ′ corresponds to a computation isomorphism FA /simequal FA ′ , and dually [Levy 2017].

Lemma 3.2 (Initial objects).

- (1) For all (value or computation) types T , there exists a unique expression x : 0 /turnstileleft E : T .

- (2) For all B , there exists a unique stack · : F 0 /turnstileleft S : B .

- (3) 0 is strictly initial: Suppose there is a type A with a complex value x : A /turnstileleft V : 0 . Then V is an isomorphism A /simequal v 0 .

- (4) F 0 is not provably strictly initial among computation types. Proof.

- (1) Take E to be x : 0 /turnstileleft abort x : T . Given any E ′ , we have E /supersetsqequal /subsetsqequal E ′ by the η principle for 0.

- (2) Take S to be · : F 0 /turnstileleft bind x ← · ; abort x : B . Given another S ′ , by the η principle for F types, S ′ /supersetsqequal /subsetsqequal bind x ← · ; S ′ [ ret x ] . By congruence, to show S /supersetsqequal /subsetsqequal S ′ , it suffices to show x : 0 /turnstileleft abort x /supersetsqequal /subsetsqequal S [ ret x ] : B , which is an instance of the previous part.

- (3) We have y : 0 /turnstileleft abort y : A . The composite y : 0 /turnstileleft V [ abort y / x ] : 0 is equidynamic with y by the η principle for 0, which says that any two complex values with domain 0 are equal. The composite x : A /turnstileleft abort V : A is equidynamic with x , because

<!-- formula-not-decoded -->

where the first is by η with x : A , y : A , z : 0 /turnstileleft E [ z ] : = x : A and the second with x : 0 , y : 0 /turnstileleft E [ z ] : = y : A (this depends on the fact that 0 is 'distributive', i.e. Γ , x : 0 has the universal property of 0). Substituting abort V for y and V for z , we have abort V /supersetsqequal /subsetsqequal x .

- (4) F 0 is not strictly initial among computation types, though. Proof sketch: a domain model along the lines of [New and Licata 2018] with only non-termination and type errors shows this, because there F 0 and /latticetop are isomorphic (the same object is both initial and terminal), so if F 0 were strictly initial (any type B with a stack · : B /turnstileleft S : F 0 is isomorphic to F 0), then because every type B has a stack to /latticetop (terminal) and therefore F 0, every type would be isomorphic to /latticetop / F 0-i.e. the stack category would be trivial. But there are non-trivial computation types in this model.

/square

## Lemma 3.3 (Terminal objects).

- (1) For any computation type B , there exists a unique stack · : B /turnstileleft S : /latticetop .

- (2) (In any context Γ ,) there exists a unique complex value V : U /latticetop .

- (3) (In any context Γ ,) there exists a unique complex value V : 1 .

- (4) U /latticetop /simequal v 1

- (5) /latticetop is not a strict terminal object.

## Proof.

- (1) Take S = {} . The η rule for /latticetop , · : /latticetop /turnstileleft · /supersetsqequal/subsetsqequal {} : /latticetop , under the substitution of · : B /turnstileleft S : /latticetop , gives S /supersetsqequal /subsetsqequal {}[ S /·] = {} .

- (2) Take V = thunk {} . We have x : U /latticetop /turnstileleft x /supersetsqequal /subsetsqequal thunk force x /supersetsqequal /subsetsqequal thunk {} : U /latticetop by the η rules for U and /latticetop .

- (3) Take V = () . By η for 1 with x : 1 /turnstileleft E [ x ] : = () : 1, we have x : 1 /turnstileleft () /supersetsqequal/subsetsqequal unroll x to roll () . : 1. By η fro 1 with x : 1 /turnstileleft E [ x ] : = x : 1, we have x : 1 /turnstileleft x /supersetsqequal /subsetsqequal unroll x to roll () . . Therefore x : 1 /turnstileleft x /supersetsqequal /subsetsqequal () : 1.

- (4) We have maps x : U /latticetop /turnstileleft () : 1 and x : 1 /turnstileleft thunk {} : U /latticetop . The composite on 1 is the identity by the previous part. The composite on /latticetop is the identity by part (2).

- (5) Proof sketch: As above, there is a domain model with /latticetop /simequal F 0, so if /latticetop were a strict terminal object, then F 0 would be too. But F 0 is also initial, so it has a map to every type, and therefore every type would be isomorphic to F 0 and /latticetop . But there are non-trivial computation types in the model.

/square

## 3.2 Derived Cast Rules

As noted above, monotonicity of type dynamism for U and F means that we have the following as instances of the general cast rules:

Lemma 3.4 (Shifted Casts). The following are derivable:

<!-- formula-not-decoded -->

Proof. They are instances of the general upcast and downcast rules, using the fact that U and F are congruences for type dynamism, so in the first rule FA /subsetsqequal FA ′ , and in the second, UB /subsetsqequal UB ′ . /square

The cast universal properties in Figure 5 imply the following seemingly more general rules for reasoning about casts:

Lemma 3.5 (Upcast and downcast left and right rules). The following are derivable:

<!-- formula-not-decoded -->

In sequent calculus terminology, an upcast is left-invertible, while a downcast is right-invertible, in the sense that any time we have a conclusion with a upcast on the left/downcast on the right, we can without loss of generality apply these rules (this comes from upcasts and downcasts forming a Galois connection). We write the A /subsetsqequal A ′ and B ′ /subsetsqequal B ′′ premises on the non-invertible rules to emphasize that the premise is not necessarily well-formed given that the conclusion is.

Proof. For upcast left, substitute V ′ into the axiom x /subsetsqequal 〈 A ′′ /arrowtailleft A ′ 〉 x : A ′ /subsetsqequal A ′′ to get V ′ /subsetsqequal 〈 A ′′ /arrowtailleft A ′ 〉 V ′ , and then use transitivity with the premise.

For upcast right, by transitivity of

<!-- formula-not-decoded -->

we have