## Invariant Risk Minimization

Martin Arjovsky, Léon Bottou, Ishaan Gulrajani, David Lopez-Paz

## 1 Introduction

Machine learning suffers from a fundamental problem. While machines are able to learn complex prediction rules by minimizing their training error, data are often marred by selection biases, confounding factors, and other peculiarities [49, 48, 23]. As such, machines justifiably inherit these data biases. This limitation plays an essential role in the situations where machine learning fails to fulfill the promises of artificial intelligence. More specifically, minimizing training error leads machines into recklessly absorbing all the correlations found in training data. Understanding which patterns are useful has been previously studied as a correlation-versus-causation dilemma, since spurious correlations stemming from data biases are unrelated to the causal explanation of interest [31, 27, 35, 52]. Following this line, we leverage tools from causation to develop the mathematics of spurious and invariant correlations, in order to alleviate the excessive reliance of machine learning systems on data biases, allowing them to generalize to new test distributions.

As a thought experiment, consider the problem of classifying images of cows and camels [4]. To address this task, we label images of both types of animals. Due to a selection bias, most pictures of cows are taken in green pastures, while most pictures of camels happen to be in deserts. After training a convolutional neural network on this dataset, we observe that the model fails to classify easy examples of images of cows when they are taken on sandy beaches. Bewildered, we later realize that our neural network successfully minimized its training error using a simple cheat: classify green landscapes as cows, and beige landscapes as camels.

To solve the problem described above, we need to identify which properties of the training data describe spurious correlations (landscapes and contexts), and which properties represent the phenomenon of interest (animal shapes). Intuitively, a correlation is spurious when we do not expect it to hold in the future in the same manner as it held in the past. In other words, spurious correlations do not appear to be stable properties [54]. Unfortunately, most datasets are not provided in a form amenable to discovering stable properties. Because most machine learning algorithms depend on the assumption that training and testing data are sampled independently from the same distribution [51], it is common practice to shuffle at random the training and testing examples. For instance, whereas the original NIST handwritten data was collected from different writers under different conditions [19], the popular MNIST training and testing sets [8] were carefully shuffled to represent similar mixes of writers. Shuffling brings the training and testing distributions closer together, but discards what information is stable across writers. However, shuffling the data is something that we do, not something that Nature does for us. When shuffling, we destroy information about how the data distribution changes when one varies the data sources or collection specifics. Yet, this information is precisely what tells us whether a property of the data is spurious or stable.

Here we take a step back, and assume that the training data is collected into distinct, separate environments. These could represent different measuring circumstances, locations, times, experimental conditions, external interventions, contexts, and so forth. Then, we promote learning correlations that are stable across training environments, as these should (under conditions that we will study) also hold in novel testing environments.

Returning to our motivational example, we would like to label pictures of cows and camels under different environments. For instance, the pictures of cows taken in the first environment may be located in green pastures 80% of the time. In the second environment, this proportion could be slightly different, say 90% of the time (since pictures were taken in a different country). These two datasets reveal that 'cow' and 'green background' are linked by a strong, but varying (spurious) correlation, which should be discarded in order to generalize to new environments. Learning machines which pool the data from the two environments together may still rely on the background bias when addressing the prediction task. But, we believe that all cows exhibit features that allow us to recognize them as so, regardless of their context.

This suggests that invariant descriptions of objects relate to the causal explanation of the object itself (' Why is it a cow? ') [32]. As shown by [40, 22], there exists an intimate link between invariance and causation useful for generalization. However, [40] assumes a meaningful causal graph relating the observed variables, an awkward assumption when dealing with perceptual inputs such as pixels. Furthermore, [40] only applies to linear models, and scales exponentially with respect to the number of variables in the learning problem. As such, the seamless integration of causation tools [41] into machine learning pipelines remains cumbersome, disallowing what we believe to be a powerful synergy. Here, we work to address these concerns.

Contributions We propose Invariant Risk Minimization (IRM), a novel learning paradigm that estimates nonlinear, invariant, causal predictors from multiple training environments, to enable out-of-distribution (OOD) generalization. To this end, we first analyze in Section 2 how different learning techniques fail to generalize OOD. From this analysis, we derive our IRM principle in Section 3:

To learn invariances across environments, find a data representation such that the optimal classifier on top of that representation matches for all environments.

Section 4 examines the fundamental links between causation, invariance, and OOD generalization. Section 5 contains basic numerical simulations to validate our claims empirically. Section 6 concludes with a Socratic dialogue discussing directions for future research.

## 2 The many faces of generalization

Following [40], we consider datasets $D_e := \{(x_i^e, y_i^e)\}_{i=1}^{n_e}$ collected under multiple training environments $e \in \mathcal{E}_{tr}$. These environments describe the same pair of random variables measured under different conditions. The dataset $D_e$, from environment $e$, contains examples identically and independently distributed according to some probability distribution $P(X^e, Y^e)$.<sup>1</sup> Then, our goal is to use these multiple datasets to learn a predictor $Y \approx f(X)$, which performs well across a large set of unseen but related environments $\mathcal{E}_{all} \supset \mathcal{E}_{tr}$. Namely, we wish to minimize

$$R ^ { O O D } ( f ) = \max _ { e \in \mathcal { E } _ { a l l } } R ^ { e } ( f )$$

where $R^e(f) := \mathbb{E}_{X^e, Y^e}[\ell(f(X^e), Y^e)]$ is the risk under environment $e$, for a loss function $\ell$. Here, the set of all environments $\mathcal{E}_{all}$ contains all possible experimental conditions concerning our system of variables, both observable and hypothetical. This is in the spirit of modal realism and possible worlds [29], where we could consider, for instance, environments where we switch off the Sun. An example clarifies our intentions.

Example 1. Consider the structural equation model [55]:

$$\begin{aligned} X_1 &\leftarrow \mathrm{Gaussian}(0, \sigma^2), \\ Y &\leftarrow X_1 + \mathrm{Gaussian}(0, \sigma^2), \\ X_2 &\leftarrow Y + \mathrm{Gaussian}(0, 1). \end{aligned}$$

As we formalize in Section 4, the set of all environments $\mathcal{E}_{all}$ contains all modifications of the structural equations for $X_1$ and $X_2$, and those varying the noise of $Y$ within a finite range $[0, \sigma^2_{MAX}]$. For instance, $e \in \mathcal{E}_{all}$ may replace the equation of $X_2$ by $X_2^e \leftarrow 10^6$, or vary $\sigma^2$ within this finite range. To ease exposition consider:

$$\mathcal{E}_{tr} = \{\text{replace } \sigma^2 \text{ by } 10, \ \text{replace } \sigma^2 \text{ by } 20\}.$$

Then, to predict $Y$ from $(X_1, X_2)$ using a least-squares predictor $\hat{Y}^e = X_1^e \hat{\alpha}_1 + X_2^e \hat{\alpha}_2$ for environment $e$, we can:

- regress from $X_1^e$, to obtain $\hat{\alpha}_1 = 1$ and $\hat{\alpha}_2 = 0$,

- regress from $X_2^e$, to obtain $\hat{\alpha}_1 = 0$ and $\hat{\alpha}_2 = \sigma(e)^2 / (\sigma(e)^2 + \frac{1}{2})$,

- regress from $(X_1^e, X_2^e)$, to obtain $\hat{\alpha}_1 = 1 / (\sigma(e)^2 + 1)$ and $\hat{\alpha}_2 = \sigma(e)^2 / (\sigma(e)^2 + 1)$.

The regression using X 1 is our first example of an invariant correlation: this is the only regression whose coefficients do not depend on the environment e . Conversely, the second and third regressions exhibit coefficients that vary from environment to environment. These varying (spurious) correlations would not generalize well to novel test environments. Also, not all invariances are interesting: the regression from the empty set of features into Y is invariant, but of weak predictive power.
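The three sets of coefficients above can be checked from the second moments implied by the structural equations of Example 1. The following is a minimal Python sketch under that assumption; it works with population covariances rather than samples, and the helper names `solve_2x2` and `population_regressions` are hypothetical.

```python
def solve_2x2(a, b, c, d, r1, r2):
    """Solve [[a, b], [c, d]] @ [x, y] = [r1, r2] by Cramer's rule."""
    det = a * d - b * c
    return (r1 * d - b * r2) / det, (a * r2 - r1 * c) / det


def population_regressions(sigma2):
    """Least-squares coefficients (alpha1, alpha2) implied by Example 1."""
    # Second moments implied by the structural equations (all means are zero).
    var_x1 = sigma2
    var_x2 = 2.0 * sigma2 + 1.0  # Var(Y) + 1, with Var(Y) = 2 * sigma2
    cov_x1_x2 = sigma2
    cov_x1_y = sigma2
    cov_x2_y = 2.0 * sigma2

    from_x1 = (cov_x1_y / var_x1, 0.0)
    from_x2 = (0.0, cov_x2_y / var_x2)
    from_both = solve_2x2(var_x1, cov_x1_x2, cov_x1_x2, var_x2,
                          cov_x1_y, cov_x2_y)
    return from_x1, from_x2, from_both


# The two training environments of Example 1.
for sigma2 in (10.0, 20.0):
    print(sigma2, population_regressions(sigma2))
```

Only the first regression returns the same coefficients for both values of `sigma2`; the other two change with the environment.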

1 We omit the superscripts ' e ' when referring to a random variable regardless of the environment.


The invariant rule $\hat{Y} = 1 \cdot X_1 + 0 \cdot X_2$ is the only predictor with finite $R^{OOD}$ across $\mathcal{E}_{all}$ (to see this, let $X_2 \to \infty$). Furthermore, this predictor is the causal explanation about how the target variable takes values across environments. In other words, it provides the correct description about how the target variable reacts in response to interventions on each of the inputs. This is compelling, as invariance is a statistically testable quantity that we can measure to discover causation. We elaborate on the relationship between invariance and causation in Section 4. But first, how can we learn the invariant, causal regression? Let us review four techniques commonly discussed in prior work, as well as their limitations.

First, we could merge the data from all the training environments and learn a predictor that minimizes the training error across the pooled data, using all features. This is the ubiquitous Empirical Risk Minimization (ERM) principle [50]. In this example, ERM would grant a large positive coefficient to $X_2$ if the pooled training environments lead to large $\sigma^2(e)$ (as in our example), departing from invariance.

Second, we could minimize $R^{rob}(f) = \max_{e \in \mathcal{E}_{tr}} R^e(f) - r_e$, a robust learning objective where the constants $r_e$ serve as environment baselines [2, 6, 15, 46]. Setting these baselines to zero leads to minimizing the maximum error across environments. Selecting these baselines adequately prevents noisy environments from dominating optimization. For example, [37] selects $r_e = \mathbb{V}[Y^e]$ to maximize the minimal explained variance across environments. While promising, robust learning turns out to be equivalent to minimizing a weighted average of environment training errors:

Proposition 2. Given KKT differentiability and qualification conditions, $\exists \lambda_e \geq 0$ such that the minimizer of $R^{rob}$ is a first-order stationary point of $\sum_{e \in \mathcal{E}_{tr}} \lambda_e R^e(f)$.

This proposition shows that robust learning and ERM (a special case of robust learning with $\lambda_e = \frac{1}{|\mathcal{E}_{tr}|}$) would never discover the desired invariance, obtaining infinite $R^{OOD}$. This is because minimizing the risk of any mixture of environments associated to large $\sigma^2(e)$ yields a predictor with a large weight on $X_2$. Unfortunately, this correlation will vanish for testing environments associated to small $\sigma^2(e)$.
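To make this failure concrete, the sketch below computes the pooled least-squares solution for the two training environments of Example 1 and evaluates its risk in a test environment that replaces $X_2$ by a constant $k$. It is a hedged illustration only: it assumes equally weighted environments and population second moments, and the function names `pooled_erm` and `risk_when_x2_is_set` are hypothetical.

```python
def pooled_erm(sigma2_values):
    """Least-squares weights on (X1, X2) for equally weighted pooled environments."""
    s = sum(sigma2_values) / len(sigma2_values)  # average sigma^2 over environments
    # Pooled second moments have the same form as one environment with sigma^2 = s.
    return 1.0 / (s + 1.0), s / (s + 1.0)


def risk_when_x2_is_set(alpha, sigma2, k):
    """Squared-error risk of alpha in an environment that replaces X2 by the constant k."""
    a1, a2 = alpha
    # Y - a1*X1 - a2*k = (1 - a1)*X1 + noise - a2*k, with Var(X1) = Var(noise) = sigma2.
    return (1.0 - a1) ** 2 * sigma2 + sigma2 + (a2 * k) ** 2


erm = pooled_erm([10.0, 20.0])
invariant = (1.0, 0.0)
for k in (0.0, 1e3, 1e6):
    print(k, risk_when_x2_is_set(erm, 10.0, k), risk_when_x2_is_set(invariant, 10.0, k))
```

Under these assumptions the risk of the pooled solution grows with $k^2$, while the risk of the invariant rule stays at $\sigma^2$ for every $k$.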

Third, we could adopt a domain adaptation strategy, and estimate a data representation Φ( X 1 , X 2 ) that follows the same distribution for all environments [16, 33]. This would fail to find the true invariance in Example 1, since the distribution of the true causal feature X 1 (and the one of the target Y ) can change across environments. This illustrates why techniques matching feature distributions sometimes attempt to enforce the wrong type of invariance, as discussed in Appendix C.

Fourth, we could follow invariant causal prediction techniques [40]. These search for the subset of variables that, when used to estimate individual regressions for each environment, produce regression residuals with equal distribution across all environments. Matching residual distributions is unsuited for our example, since the noise variance in Y may change across environments.

In sum, finding invariant predictors even on simple problems such as Example 1 is surprisingly difficult. To address this issue, we propose Invariant Risk Minimization (IRM), a learning paradigm to extract nonlinear invariant predictors across multiple environments, enabling OOD generalization.

## 3 Algorithms for invariant risk minimization

In statistical parlance, our goal is to learn correlations invariant across training environments. For prediction problems, this means finding a data representation such that the optimal classifier,<sup>2</sup> on top of that data representation, is the same for all environments. More formally:

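Definition 3. We say that a data representation $\Phi : \mathcal{X} \to \mathcal{H}$ elicits an invariant predictor $w \circ \Phi$ across environments $\mathcal{E}$ if there is a classifier $w : \mathcal{H} \to \mathcal{Y}$ simultaneously optimal for all environments, that is, $w \in \arg\min_{\bar{w} : \mathcal{H} \to \mathcal{Y}} R^e(\bar{w} \circ \Phi)$ for all $e \in \mathcal{E}$.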

Why is Definition 3 equivalent to learning features whose correlations with the target variable are stable? For loss functions such as the mean squared error and the cross-entropy, optimal classifiers can be written as conditional expectations. In these cases, a data representation function $\Phi$ elicits an invariant predictor across environments $\mathcal{E}$ if and only if for all $h$ in the intersection of the supports of $\Phi(X^e)$ we have $\mathbb{E}[Y^e \mid \Phi(X^e) = h] = \mathbb{E}[Y^{e'} \mid \Phi(X^{e'}) = h]$, for all $e, e' \in \mathcal{E}$.

We believe that this concept of invariance clarifies common induction methods in science. Indeed, some scientific discoveries can be traced to the realization that distinct but potentially related phenomena, once described with the correct variables, appear to obey the same exact physical laws. The precise conservation of these laws suggests that they remain valid on a far broader range of conditions. If both Newton's apple and the planets obey the same equations, chances are that gravitation is a thing.

To discover these invariances from empirical data, we introduce Invariant Risk Minimization (IRM), a learning paradigm to estimate data representations eliciting invariant predictors w ◦ Φ across multiple environments. To this end, recall that we have two goals in mind for the data representation Φ: we want it to be useful to predict well, and elicit an invariant predictor across E tr . Mathematically, we phrase these goals as the constrained optimization problem:

$$\begin{aligned} \min_{\substack{\Phi \colon \mathcal{X} \to \mathcal{H} \\ w \colon \mathcal{H} \to \mathcal{Y}}} \quad & \sum_{e \in \mathcal{E}_{tr}} R^e(w \circ \Phi) \\ \text{subject to} \quad & w \in \arg\min_{\bar{w} \colon \mathcal{H} \to \mathcal{Y}} R^e(\bar{w} \circ \Phi), \ \text{for all } e \in \mathcal{E}_{tr}. \end{aligned} \quad \text{(IRM)}$$

This is a challenging, bi-level optimization problem, since each constraint calls an inner optimization routine. So, we instantiate (IRM) into the practical version:

$$\min _ { \Phi \colon \mathcal { X } \rightarrow \mathcal { Y } } \sum _ { e \in \mathcal { E } _ { t r } } R ^ { e } ( \Phi ) + \lambda \cdot \| \nabla _ { w | w = 1 . 0 } R ^ { e } ( w \cdot \Phi ) \| ^ { 2 } , \quad \text { (IRMv1)}$$

where $\Phi$ becomes the entire invariant predictor, $w = 1.0$ is a scalar and fixed 'dummy' classifier, the gradient norm penalty is used to measure the optimality of the dummy classifier at each environment $e$, and $\lambda \in [0, \infty)$ is a regularizer balancing between predictive power (an ERM term), and the invariance of the predictor $1 \cdot \Phi(x)$.
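As an illustration of the penalty term in (IRMv1), the sketch below evaluates it in closed form for Example 1, assuming the squared loss and a linear predictor $\Phi(x) = v_1 x_1 + v_2 x_2$. The function name `irmv1_penalty` and the use of population second moments are assumptions made for this example.

```python
def irmv1_penalty(v, sigma2):
    """Squared gradient, at w = 1.0, of the risk of w * (v1*X1 + v2*X2) in Example 1."""
    v1, v2 = v
    # E[Phi(X)^2] and E[Phi(X) * Y] from the second moments of Example 1.
    e_phi_sq = (v1 * v1 * sigma2
                + 2.0 * v1 * v2 * sigma2
                + v2 * v2 * (2.0 * sigma2 + 1.0))
    e_phi_y = v1 * sigma2 + v2 * 2.0 * sigma2
    grad = 2.0 * (e_phi_sq - e_phi_y)  # d/dw E[(w * Phi - Y)^2] at w = 1.0
    return grad ** 2


invariant = (1.0, 0.0)
pooled_least_squares = (1.0 / 16.0, 15.0 / 16.0)  # pooled solution for sigma^2 in {10, 20}
for sigma2 in (10.0, 20.0):
    print(sigma2, irmv1_penalty(invariant, sigma2),
          irmv1_penalty(pooled_least_squares, sigma2))
```

In this setting the invariant rule has zero penalty in both environments, whereas the pooled least-squares weights do not. The all-zero predictor also has zero penalty, which is why the objective keeps the ERM term.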

2 We will also use the term 'classifier' to denote the last layer w for regression problems.

## 3.1 From (IRM) to (IRMv1)

This section is a voyage circumventing the subtle optimization issues lurking behind the idealistic objective (IRM), to arrive at the efficient proposal (IRMv1).

## 3.1.1 Phrasing the constraints as a penalty

We translate the hard constraints in (IRM) into the penalized loss

$$L_{IRM}(\Phi, w) = \sum_{e \in \mathcal{E}_{tr}} R^e(w \circ \Phi) + \lambda \cdot \mathbb{D}(w, \Phi, e) \quad (1)$$

where $\Phi : \mathcal{X} \to \mathcal{H}$, the function $\mathbb{D}(w, \Phi, e)$ measures how close $w$ is to minimizing $R^e(w \circ \Phi)$, and $\lambda \in [0, \infty)$ is a hyper-parameter balancing predictive power and invariance. In practice, we would like $\mathbb{D}(w, \Phi, e)$ to be differentiable with respect to $\Phi$ and $w$. Next, we consider linear classifiers $w$ to propose one alternative.

## 3.1.2 Choosing a penalty D for linear classifiers w

Consider learning an invariant predictor $w \circ \Phi$, where $w$ is a linear least-squares regression, and $\Phi$ is a nonlinear data representation. In the sequel, all vectors $v \in \mathbb{R}^d$ are by default in column form, and we denote by $v^\top \in \mathbb{R}^{1 \times d}$ the row form. By the normal equations, and given a fixed data representation $\Phi$, we can write $w_\Phi^e \in \arg\min_{\bar{w}} R^e(\bar{w} \circ \Phi)$ as:

$$w _ { \Phi } ^ { e } = \mathbb { E } _ { X ^ { e } } \left [ \Phi ( X ^ { e } ) \Phi ( X ^ { e } ) ^ { \top } \right ] ^ { - 1 } \mathbb { E } _ { X ^ { e } , Y ^ { e } } \left [ \Phi ( X ^ { e } ) Y ^ { e } \right ] , \quad ( 2 )$$

where we assumed invertibility. This analytic expression would suggest a simple discrepancy between two linear least-squares classifiers:

$$\mathbb{D}_{dist}(w, \Phi, e) = \| w - w_\Phi^e \|^2. \quad (3)$$

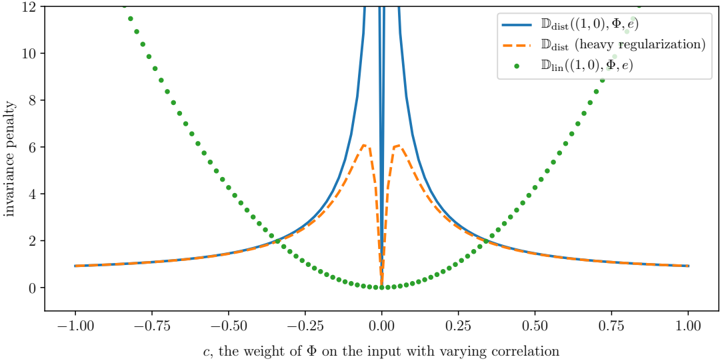

Figure 1 uses Example 1 to show why $\mathbb{D}_{dist}$ is a poor discrepancy. The blue curve shows (3) as we vary the coefficient $c$ for a linear data representation $\Phi(x) = x \cdot \mathrm{Diag}([1, c])$, and $w = (1, 0)$. The coefficient $c$ controls how much the representation depends on the variable $X_2$, responsible for the spurious correlations in Example 1. We observe that (3) is discontinuous at $c = 0$, the value eliciting the invariant predictor. This happens because when $c$ approaches zero without being exactly zero, the least-squares rule (2) compensates this change by creating vectors $w_\Phi^e$ whose second coefficient grows to infinity. This causes a second problem, the penalty approaching zero as $\|c\| \to \infty$. The orange curve shows that adding severe regularization to the least-squares regression does not fix these numerical problems.

To circumvent these issues, we can undo the matrix inversion in (2) to construct:

$$\mathbb{D}_{lin}(w, \Phi, e) = \left\| \mathbb{E}_{X^e}\left[ \Phi(X^e) \Phi(X^e)^\top \right] w - \mathbb{E}_{X^e, Y^e}\left[ \Phi(X^e) Y^e \right] \right\|^2, \quad (4)$$

which measures how much the classifier $w$ violates the normal equations. The green curve in Figure 1 shows $\mathbb{D}_{lin}$ as we vary $c$, when setting $w = (1, 0)$. The penalty $\mathbb{D}_{lin}$ is smooth (it is a polynomial on both $\Phi$ and $w$), and achieves an easy-to-reach minimum at $c = 0$, the data representation eliciting the invariant predictor. Furthermore, $\mathbb{D}_{lin}(w, \Phi, e) = 0$ if and only if $w \in \arg\min_{\bar{w}} R^e(\bar{w} \circ \Phi)$. As a word of caution, we note that the penalty $\mathbb{D}_{lin}$ is non-convex for general $\Phi$.

Figure 1: Different measures of invariance lead to different optimization landscapes in our Example 1. The naïve approach of measuring the distance between optimal classifiers $\mathbb{D}_{dist}$ leads to a discontinuous penalty (solid blue unregularized, dashed orange regularized). In contrast, the penalty $\mathbb{D}_{lin}$ does not exhibit these problems.

<details>

<summary>Image 1 Details</summary>

Line chart of the invariance penalty (y-axis, 0 to 12) against $c$, the weight of $\Phi$ on the input with varying correlation (x-axis, -1.00 to 1.00). Legend: solid blue, `D_dist((1, 0), Φ, e)`; dashed orange, `D_dist (heavy regularization)`; dotted green, `D_lin((1, 0), Φ, e)`. The blue and orange curves peak at `c = 0.00`; the green curve is U-shaped with its minimum at `c = 0.00`.

</details>
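The contrast between the two penalties can be reproduced in closed form for Example 1 with $\Phi(x) = x \cdot \mathrm{Diag}([1, c])$ and $w = (1, 0)$. The sketch below is a hedged illustration: it assumes population second moments for a single environment, the function names are hypothetical, and the settings behind Figure 1 are not stated here, so the numbers need not match the plot.

```python
def d_lin(c, sigma2):
    """D_lin for w = (1, 0) and Phi(x) = (x1, c * x2) in Example 1."""
    # E[Phi Phi^T] w - E[Phi Y] = (sigma2, c*sigma2) - (sigma2, 2*c*sigma2)
    residual = (0.0, -c * sigma2)
    return residual[0] ** 2 + residual[1] ** 2


def d_dist(c, sigma2):
    """D_dist for w = (1, 0); c must be nonzero so that E[Phi Phi^T] is invertible."""
    # Least-squares weights on (x1, c * x2): the second one is rescaled by 1 / c.
    w1 = 1.0 / (sigma2 + 1.0)
    w2 = sigma2 / (c * (sigma2 + 1.0))
    return (1.0 - w1) ** 2 + w2 ** 2


for c in (1.0, 1e-1, 1e-2, 1e-3):
    print(c, d_dist(c, 10.0), d_lin(c, 10.0))
```

As `c` shrinks towards zero, `d_dist` grows without bound because the second least-squares coefficient scales like `1 / c`, while `d_lin` decreases smoothly to zero.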

## 3.1.3 Fixing the linear classifier w

Even when minimizing (1) over $(\Phi, w)$ using $\mathbb{D}_{lin}$, we encounter one issue. When considering a pair $(\gamma \Phi, \frac{1}{\gamma} w)$, it is possible to let $\mathbb{D}_{lin}$ tend to zero without impacting the ERM term, by letting $\gamma$ tend to zero. This problem arises because (1) is severely over-parametrized. In particular, for any invertible mapping $\Psi$, we can re-write our invariant predictor as

$$w \circ \Phi = \underbrace { \left ( w \circ \Psi ^ { - 1 } \right ) } _ { \tilde { w } } \circ \underbrace { \left ( \Psi \circ \Phi \right ) } _ { \tilde { \Phi } } .$$

This means that we can re-parametrize our invariant predictor so as to give $w$ any non-zero value $\tilde{w}$ of our choosing. Thus, we may restrict our search to the data representations for which all the environment optimal classifiers are equal to the same fixed vector $\tilde{w}$. In words, we are relaxing our recipe for invariance into finding a data representation such that the optimal classifier, on top of that data representation, is '$\tilde{w}$' for all environments. This turns (1) into a relaxed version of IRM, where optimization only happens over $\Phi$:

$$L_{IRM, w = \tilde{w}}(\Phi) = \sum_{e \in \mathcal{E}_{tr}} R^e(\tilde{w} \circ \Phi) + \lambda \cdot \mathbb{D}_{lin}(\tilde{w}, \Phi, e). \quad (5)$$

As $\lambda \to \infty$, solutions $(\Phi_\lambda^*, \tilde{w})$ of (5) tend to solutions $(\Phi^*, \tilde{w})$ of (IRM) for linear $\tilde{w}$.
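The rescaling issue that motivates fixing the classifier can be verified in one line. The following is a short worked derivation, assuming a linear classifier $w$ and the definition of $\mathbb{D}_{lin}$ given earlier. For any $\gamma \neq 0$,

$$\mathbb{D}_{lin}\left(\tfrac{1}{\gamma} w, \gamma \Phi, e\right) = \left\| \gamma^2 \, \mathbb{E}_{X^e}\left[ \Phi(X^e) \Phi(X^e)^\top \right] \tfrac{1}{\gamma} w - \gamma \, \mathbb{E}_{X^e, Y^e}\left[ \Phi(X^e) Y^e \right] \right\|^2 = \gamma^2 \, \mathbb{D}_{lin}(w, \Phi, e),$$

while the predictor itself is unchanged, since $\left(\tfrac{1}{\gamma} w\right) \circ (\gamma \Phi) = w \circ \Phi$. The ERM term therefore stays the same and the penalty shrinks like $\gamma^2$ as $\gamma \to 0$, which is why the penalty alone cannot certify invariance when both $\Phi$ and $w$ are free.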

## 3.1.4 Scalar fixed classifiers ˜ w are sufficient to monitor invariance

Perhaps surprisingly, the previous section suggests that $\tilde{w} = (1, 0, \ldots, 0)$ would be a valid choice for our fixed classifier. In this case, only the first component of the data representation would matter! We illustrate this apparent paradox by providing a complete characterization for the case of linear invariant predictors. In the following theorem, matrix $\Phi \in \mathbb{R}^{p \times d}$ parametrizes the data representation function, vector $w \in \mathbb{R}^p$ the simultaneously optimal classifier, and $v = \Phi^\top w$ the predictor $w \circ \Phi$.

Theorem 4. For all $e \in \mathcal{E}$, let $R^e : \mathbb{R}^d \to \mathbb{R}$ be convex differentiable cost functions. A vector $v \in \mathbb{R}^d$ can be written $v = \Phi^\top w$, where $\Phi \in \mathbb{R}^{p \times d}$, and where $w \in \mathbb{R}^p$ simultaneously minimize $R^e(w \circ \Phi)$ for all $e \in \mathcal{E}$, if and only if $v^\top \nabla R^e(v) = 0$ for all $e \in \mathcal{E}$. Furthermore, the matrices $\Phi$ for which such a decomposition exists are the matrices whose nullspace $\mathrm{Ker}(\Phi)$ is orthogonal to $v$ and contains all the $\nabla R^e(v)$.

So, any linear invariant predictor can be decomposed as linear data representations of different ranks. In particular, we can restrict our search to matrices $\Phi \in \mathbb{R}^{1 \times d}$ and let $\tilde{w} \in \mathbb{R}^1$ be the fixed scalar $1.0$. This translates (5) into:

$$L_{IRM, w = 1.0}(\Phi^\top) = \sum_{e \in \mathcal{E}_{tr}} R^e(\Phi^\top) + \lambda \cdot \mathbb{D}_{lin}(1.0, \Phi^\top, e). \quad (6)$$

Section 4 shows that the existence of decompositions with high-rank data representation matrices $\Phi$ is key to out-of-distribution generalization, regardless of whether we restrict IRM to search for rank-1 $\Phi$.

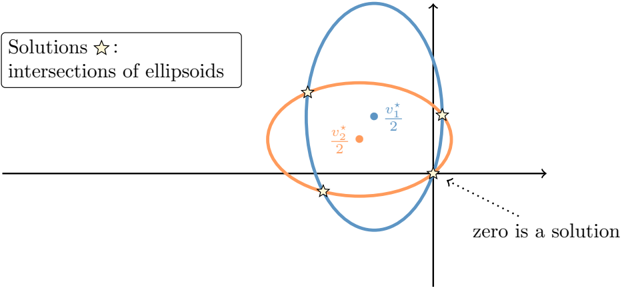

Geometrically, each orthogonality condition $v^\top \nabla R^e(v) = 0$ in Theorem 4 defines a $(d-1)$-dimensional manifold in $\mathbb{R}^d$. Their intersection is itself a manifold of dimension greater than $d - m$, where $m$ is the number of environments. When using the squared loss, each condition is a quadratic equation whose solutions form an ellipsoid in $\mathbb{R}^d$. Figure 2 shows how their intersection is composed of multiple connected components, one of which contains the trivial solution $v = 0$. This shows that (6) remains nonconvex, and therefore sensitive to initialization.
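The ellipsoid claim for the squared loss can be made explicit with a short derivation. The shorthand $\Sigma^e := \mathbb{E}[X^e X^{e\top}]$, $\rho^e := \mathbb{E}[X^e Y^e]$ and $v_*^e := (\Sigma^e)^{-1} \rho^e$ is introduced here for illustration only, and $\Sigma^e$ is assumed invertible. With $R^e(v) = \mathbb{E}[(v^\top X^e - Y^e)^2]$ we have $\nabla R^e(v) = 2(\Sigma^e v - \rho^e)$, so

$$v^\top \nabla R^e(v) = 0 \iff v^\top \Sigma^e v - v^\top \rho^e = 0 \iff \left( v - \tfrac{1}{2} v_*^e \right)^\top \Sigma^e \left( v - \tfrac{1}{2} v_*^e \right) = \tfrac{1}{4} \, v_*^{e\top} \Sigma^e v_*^e.$$

Under these assumptions each condition describes an ellipsoid centered at $\tfrac{1}{2} v_*^e$ that passes through both $v = 0$ and the environment's least-squares solution $v = v_*^e$, which is why the trivial solution always lies in the intersection.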

## 3.1.5 Extending to general losses and multivariate outputs

Continuing from (6), we obtain our final algorithm (IRMv1) by realizing that the invariance penalty (4), introduced for the least-squares case, can be written as a general function of the risk, namely $\mathbb{D}(1.0, \Phi, e) = \|\nabla_{w|w=1.0} R^e(w \cdot \Phi)\|^2$, where $\Phi$ is again a possibly nonlinear data representation. This expression measures the optimality of the fixed scalar classifier $w = 1.0$ for any convex loss, such as the cross-entropy. If the target space $\mathcal{Y}$ returned by $\Phi$ has multiple outputs, we multiply all of them by the fixed scalar classifier $w = 1.0$.

Figure 2: The solutions of the invariant linear predictors $v = \Phi^\top w$ coincide with the intersection of the ellipsoids representing the orthogonality condition $v^\top \nabla R^e(v) = 0$.

<details>

<summary>Image 2 Details</summary>

Diagram of two intersecting ellipsoids, one blue and one orange, with no numerical axis scale. Star symbols mark their intersections, with the legend "Solutions: intersections of ellipsoids". A dotted arrow carries the annotation "zero is a solution". Each ellipsoid contains a labeled point: $v_1^*$ (blue, with the value $\frac{1}{2}$) and $v_2^*$ (orange, with the value $-\frac{1}{2}$).

</details>

## 3.2 Implementation details

When estimating the objective (IRMv1) using mini-batches for stochastic gradient descent, one can obtain an unbiased estimate of the squared gradient norm as

$$\sum _ { k = 1 } ^ { b } \left [ \nabla _ { w | w = 1 . 0 } \ell ( w \cdot \Phi ( X _ { k } ^ { e , i } ) , Y _ { k } ^ { e , i } ) \cdot \nabla _ { w | w = 1 . 0 } \ell ( w \cdot \Phi ( X _ { k } ^ { e , j } ) , Y _ { k } ^ { e , j } ) \right ] ,$$

where $(X^{e,i}, Y^{e,i})$ and $(X^{e,j}, Y^{e,j})$ are two random mini-batches of size $b$ from environment $e$, and $\ell$ is a loss function. We offer a PyTorch example in Appendix D.
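The reason for using two mini-batches is that the expectation of a product of independent gradient estimates equals the squared expected gradient, whereas squaring a single estimate adds a variance term. The sketch below illustrates this idea in plain Python for the squared loss and a scalar output. It is not the Appendix D code: it uses the product of the two batch-mean gradients, a normalization chosen here as an assumption, and the names `grad_w` and `penalty_estimate` are hypothetical.

```python
def grad_w(phi, y):
    """d/dw of the squared loss (w * phi - y)**2, evaluated at w = 1.0."""
    return 2.0 * (phi - y) * phi


def penalty_estimate(batch_i, batch_j):
    """Product of the mean gradients of two independent mini-batches."""
    mean_i = sum(grad_w(phi, y) for phi, y in batch_i) / len(batch_i)
    mean_j = sum(grad_w(phi, y) for phi, y in batch_j) / len(batch_j)
    return mean_i * mean_j


# Each pair is (Phi(x), y) for one example; the values are made up for illustration.
batch_i = [(1.0, 0.0), (2.0, 1.0)]
batch_j = [(1.0, 2.0), (3.0, 3.0)]
print(penalty_estimate(batch_i, batch_j))
```

A single estimate of this kind can be negative, as it is for these made-up batches; only its expectation over independent batches is guaranteed to be a nonnegative squared gradient.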

## 3.3 About nonlinear invariances w

How restrictive is it to assume that the invariant optimal classifier $w$ is linear? One may argue that given a sufficiently flexible data representation $\Phi$, it is possible to write any invariant predictor as $1.0 \cdot \Phi$. However, enforcing a linear invariance may grant non-invariant predictors a penalty $\mathbb{D}_{lin}$ equal to zero. For instance, the null data representation $\Phi_0(X^e) = 0$ admits any $w$ as optimal amongst all the linear classifiers for all environments. But, the elicited predictor $w \circ \Phi_0$ is not invariant in cases where $\mathbb{E}[Y^e] \neq 0$. Such a null predictor would be discarded by the ERM term in the IRM objective. In general, minimizing the ERM term $R^e(\tilde{w} \circ \Phi)$ will drive $\Phi$ so that $\tilde{w}$ is optimal amongst all predictors, even if $\tilde{w}$ is linear.

We leave for future work several questions related to this issue. Are there noninvariant predictors that would not be discarded by either the ERM or the invariance term in IRM? What are the benefits of enforcing non-linear invariances w belonging to larger hypothesis classes W ? How can we construct invariance penalties D for non-linear invariances?

## 4 Invariance, causality and generalization

The newly introduced IRM principle promotes low error and invariance across training environments $\mathcal{E}_{tr}$. When do these conditions imply invariance across all environments $\mathcal{E}_{all}$? More importantly, when do these conditions lead to low error across $\mathcal{E}_{all}$, and consequently out-of-distribution generalization? And at a more fundamental level, how do statistical invariance and out-of-distribution generalization relate to concepts from the theory of causation?

So far, we have omitted how different environments should relate to enable out-of-distribution generalization. The answer to this question is rooted in the theory of causation. We begin by assuming that the data from all the environments share the same underlying Structural Equation Model, or SEM [55, 39]:

Definition 5. A Structural Equation Model (SEM) $\mathcal{C} := (\mathcal{S}, N)$ governing the random vector $X = (X_1, \ldots, X_d)$ is a set of structural equations:

$$\mathcal { S } _ { i } \colon X _ { i } \gets f _ { i } ( P a ( X _ { i } ) , N _ { i } ) ,$$

where $\mathrm{Pa}(X_i) \subseteq \{X_1, \ldots, X_d\} \setminus \{X_i\}$ are called the parents of $X_i$, and the $N_i$ are independent noise random variables. We say that '$X_i$ causes $X_j$' if $X_i \in \mathrm{Pa}(X_j)$. The causal graph of $X$ is the graph obtained by drawing (i) one node for each $X_i$, and (ii) one edge from $X_i$ to $X_j$ if $X_i \in \mathrm{Pa}(X_j)$. We assume acyclic causal graphs.

By running the structural equations of a SEM $\mathcal{C}$ according to the topological ordering of its causal graph, we can draw samples from the observational distribution $P(X)$. In addition, we can manipulate (intervene on) a unique SEM in different ways, indexed by $e$, to obtain different but related SEMs $\mathcal{C}^e$.

Definition 6. Consider a SEM $\mathcal{C} = (\mathcal{S}, N)$. An intervention $e$ on $\mathcal{C}$ consists of replacing one or several of its structural equations to obtain an intervened SEM $\mathcal{C}^e = (\mathcal{S}^e, N^e)$, with structural equations:

$$S _ { i } ^ { e } \colon X _ { i } ^ { e } \leftarrow f _ { i } ^ { e } ( P a ^ { e } ( X _ { i } ^ { e } ) , N _ { i } ^ { e } ) ,$$

The variable $X_i^e$ is intervened if $\mathcal{S}_i \neq \mathcal{S}_i^e$ or $N_i \neq N_i^e$.

Similarly, by running the structural equations of the intervened SEM $\mathcal{C}^e$, we can draw samples from the interventional distribution $P(X^e)$. For instance, we may consider Example 1 and intervene on $X_2$, by holding it constant to zero, thus replacing the structural equation of $X_2$ by $X_2^e \leftarrow 0$. Admitting a slight abuse of notation, each intervention $e$ generates a new environment $e$ with interventional distribution $P(X^e, Y^e)$. Valid interventions $e$, those that do not destroy too much information about the target variable $Y$, form the set of all environments $\mathcal{E}_{all}$.
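As a rough illustration of sampling from a SEM in topological order, and of the intervention on $X_2$ just described, consider the sketch below. It assumes that Example 1 uses the structural equations written in the comments; the function name and the choice $\sigma = 2$ are hypothetical.

```python
import numpy as np

def sample_example1(n, sigma, intervene_x2=False, seed=0):
    """Run the structural equations in topological order: X1, then Y, then X2."""
    rng = np.random.default_rng(seed)
    x1 = rng.normal(0.0, sigma, size=n)          # X1 <- N(0, sigma^2)
    y = x1 + rng.normal(0.0, sigma, size=n)      # Y  <- X1 + N(0, sigma^2)
    if intervene_x2:
        x2 = np.zeros(n)                         # intervened equation: X2 <- 0
    else:
        x2 = y + rng.normal(0.0, 1.0, size=n)    # X2 <- Y + N(0, 1)
    return x1, x2, y

# Observational samples and samples from the intervened SEM.
x1_obs, x2_obs, y_obs = sample_example1(10_000, sigma=2.0)
x1_int, x2_int, y_int = sample_example1(10_000, sigma=2.0, intervene_x2=True)
```

The intervention leaves the structural equation of $Y$ untouched, so the samples of $Y$ are identical in both runs, while $X_2$ no longer carries any information about $Y$.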

Prior work [40] considered valid interventions as those that do not change the structural equation of Y , since arbitrary interventions on this equation render prediction impossible. In this work, we also allow changes in the noise variance of Y , since varying noise levels appear in real problems, and these do not affect the optimal prediction rule. We formalize this as follows.

Definition 7. Consider a SEM $\mathcal{C}$ governing the random vector $(X_1, \ldots, X_d, Y)$, and the learning goal of predicting $Y$ from $X$. Then, the set of all environments $\mathcal{E}_{all}(\mathcal{C})$ indexes all the interventional distributions $P(X^e, Y^e)$ obtainable by valid interventions $e$. An intervention $e \in \mathcal{E}_{all}(\mathcal{C})$ is valid as long as (i) the causal graph remains acyclic, (ii) $\mathbb{E}[Y^e \mid \mathrm{Pa}(Y)] = \mathbb{E}[Y \mid \mathrm{Pa}(Y)]$, and (iii) $\mathbb{V}[Y^e \mid \mathrm{Pa}(Y)]$ remains within a finite range.

Condition (iii) can be waived if one takes environment-specific baselines into account in the definition of $R^{\text{OOD}}$, similar to those appearing in the robust learning objective $R^{\text{rob}}$. We leave additional quantifications of out-of-distribution generalization for future work.

The previous definitions establish fundamental links between causation and invariance. Moreover, one can show that a predictor $v : \mathcal{X} \to \mathcal{Y}$ is invariant across $\mathcal{E}_{all}(\mathcal{C})$ if and only if it attains optimal $R^{\text{OOD}}$, and if and only if it uses only the direct causal parents of $Y$ to predict, that is, $v(x) = \mathbb{E}_{N_Y}[f_Y(\mathrm{Pa}(Y), N_Y)]$. The rest of this section follows on these ideas to showcase how invariance across training environments can enable out-of-distribution generalization across all environments.

## 4.1 Generalization theory for IRM

The goal of IRM is to build predictors that generalize out-of-distribution, that is, achieving low error across $\mathcal{E}_{all}$. To this end, IRM enforces low error and invariance across $\mathcal{E}_{tr}$. The bridge from low error and invariance across $\mathcal{E}_{tr}$ to low error across $\mathcal{E}_{all}$ can be traversed in two steps.

First, one can show that low error across $\mathcal{E}_{tr}$ and invariance across $\mathcal{E}_{all}$ lead to low error across $\mathcal{E}_{all}$. This is because, once the data representation $\Phi$ eliciting an invariant predictor $w \circ \Phi$ across $\mathcal{E}_{all}$ is estimated, the generalization error of $w \circ \Phi$ respects standard error bounds. Second, we examine the remaining condition towards low error across $\mathcal{E}_{all}$: namely, under which conditions does invariance across training environments $\mathcal{E}_{tr}$ imply invariance across all environments $\mathcal{E}_{all}$?

For linear IRM, our starting point to answer this question is the theory of Invariant Causal Prediction (ICP) [40, Theorem 2]. There, the authors prove that ICP recovers the target invariance as long as the data (i) is Gaussian, (ii) satisfies a linear SEM, and (iii) is obtained by certain types of interventions. Theorem 9 shows that IRM learns such invariances even when these three assumptions fail to hold. In particular, we allow for non-Gaussian data, deal with observations produced as a linear transformation of the variables with stable and spurious correlations, and do not require specific types of interventions or the existence of a causal graph.

The setting of the theorem is as follows. $Y^e$ has an invariant correlation with an unobserved latent variable $Z_1^e$ through a linear relationship $Y^e = Z_1^e \cdot \gamma + \epsilon^e$, with $\epsilon^e$ independent of $Z_1^e$. What we observe is $X^e$, which is a scrambled combination of $Z_1^e$ and another variable $Z_2^e$ that can be arbitrarily correlated with $Z_1^e$ and $\epsilon^e$. Simply regressing using all of $X^e$ will then recklessly exploit $Z_2^e$ (since it gives extra, but spurious, information on $\epsilon^e$ and thus $Y^e$). A particular instance of this setting is when $Z_1^e$ is the cause of $Y^e$, $Z_2^e$ is an effect, and $X^e$ contains both causes and effects. To generalize out of distribution, the representation has to discard $Z_2^e$ and keep $Z_1^e$.
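A small simulation makes the last point concrete. The sketch below is an assumption-laden toy, not one of the paper's experiments: $Z_1$ and $Z_2$ are one-dimensional, $\gamma = 1$, $Z_2$ is an effect of $Y$ with unit noise, and the scrambling matrix `S` is a hypothetical orthogonal matrix.

```python
import numpy as np

rng = np.random.default_rng(0)
n, gamma = 50_000, 1.0

z1 = rng.normal(size=n)            # latent cause of Y
eps = rng.normal(size=n)           # noise of Y, independent of z1
y = gamma * z1 + eps
z2 = y + rng.normal(size=n)        # effect of Y, hence correlated with eps

# Hypothetical orthogonal scrambling of the latent variables.
S = np.array([[1.0, 1.0], [1.0, -1.0]]) / np.sqrt(2.0)
X = np.column_stack([z1, z2]) @ S.T

# Least-squares regression of Y on all observed features.
coef_x, *_ = np.linalg.lstsq(X, y, rcond=None)
coef_z = S.T @ coef_x              # the same weights expressed on (z1, z2)
```

Under these assumptions the population least-squares weights on $(Z_1, Z_2)$ are $(0.5, 0.5)$ rather than $(\gamma, 0)$: the regression trades part of the invariant weight for the spurious one.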

Before showing Theorem 9, we need to make our assumptions precise. To learn useful invariances, one must require some degree of diversity across training environments. On the one hand, extracting two random subsets of examples from a large dataset does not lead to diverse environments, as both subsets would follow the same distribution. On the other hand, splitting a large dataset by conditioning on arbitrary variables can generate diverse environments, but may introduce spurious correlations and destroy the invariance of interest [40, Section 3.3]. Therefore, we will require sets of training environments containing sufficient diversity and satisfying an underlying invariance. We formalize the diversity requirement as needing environments to lie in linear general position.

Assumption 8. A set of training environments $\mathcal{E}_{tr}$ lie in linear general position of degree $r$ if $|\mathcal{E}_{tr}| > d - r + \frac{d}{r}$ for some $r \in \mathbb{N}$, and for all non-zero $x \in \mathbb{R}^d$:

$$\dim \left ( { s p a n } \left ( \left \{ { \mathbb { E } _ { X ^ { e } } \left [ X ^ { e } X ^ { e \top } \right ] x - { \mathbb { E } _ { X ^ { e } , \epsilon ^ { e } } \left [ X ^ { e } \epsilon ^ { e } \right ] } \right \} } _ { e \in { \mathcal { E } _ { t r } } } \right ) \right ) > d - r .$$

Intuitively, the assumption of linear general position limits the extent to which the training environments are co-linear. Each new environment lying in linear general position will remove one degree of freedom in the space of invariant solutions. Fortunately, Theorem 10 shows that the set of cross-products $\mathbb{E}_{X^e}[X^e X^{e\top}]$ not satisfying a linear general position has measure zero. Using the assumption of linear general position, we can show that the invariances that IRM learns across training environments transfer to all environments.
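One way to build intuition for Assumption 8 is to test it numerically on known moments. The sketch below is a spot check on randomly drawn directions $x$, not a proof that the condition holds for all non-zero $x$. The helper names are hypothetical, and the cross-moments $\mathbb{E}[X^e \epsilon^e]$ are assumed to be zero for simplicity.

```python
import numpy as np

def span_dimension(second_moments, cross_moments, x):
    """dim span{ E[X X^T] x - E[X eps] : e in E_tr } for one direction x."""
    vectors = np.stack([M @ x - c for M, c in zip(second_moments, cross_moments)])
    return int(np.linalg.matrix_rank(vectors))

def spot_check_general_position(second_moments, cross_moments, r,
                                n_directions=100, seed=0):
    """Check |E_tr| > d - r + d / r and dim > d - r on random directions."""
    d = second_moments[0].shape[0]
    if not len(second_moments) > d - r + d / r:
        return False
    rng = np.random.default_rng(seed)
    return all(
        span_dimension(second_moments, cross_moments, rng.normal(size=d)) > d - r
        for _ in range(n_directions)
    )

rng = np.random.default_rng(0)
d, n_envs = 3, 6
factors = [rng.normal(size=(d, d)) for _ in range(n_envs)]
diverse = [A @ A.T for A in factors]      # a different second moment per environment
identical = [diverse[0]] * n_envs         # the same environment repeated six times
zero_cross = [np.zeros(d)] * n_envs       # assumption: E[X eps] = 0

diverse_ok = spot_check_general_position(diverse, zero_cross, r=1)
identical_ok = spot_check_general_position(identical, zero_cross, r=1)
```

Repeating one environment gives co-linear vectors (span of dimension one), so the check fails, whereas generic second moments pass it.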

In words, the next theorem states the following. If one finds a representation $\Phi$ of rank $r$ eliciting an invariant predictor $w \circ \Phi$ across $\mathcal{E}_{tr}$, and $\mathcal{E}_{tr}$ lie in linear general position of degree $r$, then $w \circ \Phi$ is invariant across $\mathcal{E}_{all}$.

Theorem 9. Assume that

$$\begin{array} { r l } & { Y ^ { e } = Z _ { 1 } ^ { e } \cdot \gamma + \epsilon ^ { e } , \quad Z _ { 1 } ^ { e } \perp \epsilon ^ { e } , \quad \mathbb { E } [ \epsilon ^ { e } ] = 0 , } \\ & { X ^ { e } = S ( Z _ { 1 } ^ { e } , Z _ { 2 } ^ { e } ) . } \end{array}$$

Here, $\gamma \in \mathbb{R}^c$, $Z_1^e$ takes values in $\mathbb{R}^c$, $Z_2^e$ takes values in $\mathbb{R}^q$, and $S \in \mathbb{R}^{d \times (c+q)}$. Assume that the $Z_1$ component of $S$ is invertible: that there exists $\tilde{S} \in \mathbb{R}^{c \times d}$ such that $\tilde{S}(S(z_1, z_2)) = z_1$, for all $z_1 \in \mathbb{R}^c$, $z_2 \in \mathbb{R}^q$. Let $\Phi \in \mathbb{R}^{d \times d}$ have rank $r > 0$. Then, if at least $d - r + \frac{d}{r}$ training environments $\mathcal{E}_{tr} \subseteq \mathcal{E}_{all}$ lie in linear general position of degree $r$, we have that

$$\Phi \, \mathbb { E } _ { X ^ { e } } \left [ X ^ { e } X ^ { e ^ { \top } } \right ] \Phi ^ { \top } w = \Phi \, \mathbb { E } _ { X ^ { e } , Y ^ { e } } \left [ X ^ { e } Y ^ { e } \right ] \quad ( 7 )$$

holds for all $e \in \mathcal{E}_{tr}$ iff $\Phi$ elicits the invariant predictor $\Phi^\top w$ for all $e \in \mathcal{E}_{all}$.
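Condition (7) is easy to evaluate when the population moments are known. The sketch below assumes the structural equations of Example 1 ($X_1 \leftarrow \mathcal{N}(0, \sigma^2)$, $Y \leftarrow X_1 + \mathcal{N}(0, \sigma^2)$, $X_2 \leftarrow Y + \mathcal{N}(0, 1)$) and derives the moments from them; the function names and the two values of $\sigma$ are illustrative choices.

```python
import numpy as np

def moments(sigma):
    """Population E[X X^T] and E[X Y] for the assumed Example 1 equations."""
    s2 = sigma ** 2
    second = np.array([[s2, s2], [s2, 2.0 * s2 + 1.0]])
    cross = np.array([s2, 2.0 * s2])
    return second, cross

def residual(Phi, w, second, cross):
    """Norm of Phi E[X X^T] Phi^T w - Phi E[X Y], the two sides of (7)."""
    return float(np.linalg.norm(Phi @ second @ Phi.T @ w - Phi @ cross))

sigmas = (1.0, 2.0)

# Rank-one representation that keeps only the cause X1.
Phi_causal, w_causal = np.diag([1.0, 0.0]), np.array([1.0, 0.0])
res_causal = [residual(Phi_causal, w_causal, *moments(s)) for s in sigmas]

# Identity representation with the least-squares solution of the first environment.
Phi_id = np.eye(2)
w_env1 = np.linalg.solve(*moments(sigmas[0]))
res_id = [residual(Phi_id, w_env1, *moments(s)) for s in sigmas]
```

The causal representation satisfies (7) in both environments with a single $w$, while the identity representation satisfies it only in the environment its $w$ was fitted on.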

The assumptions about linearity, centered noise, and independence between the noise $\epsilon^e$ and the causal variables $Z_1$ from Theorem 9 also appear in ICP [40, Assumption 1], implying the invariance $\mathbb{E}[Y^e \mid Z_1^e = z_1] = z_1 \cdot \gamma$. As in ICP, we allow correlations between $\epsilon^e$ and the non-causal variables $Z_2^e$, which leads ERM into absorbing spurious correlations (as in our Example 1, where $S = I$ and $Z_2^e = X_2^e$).

In addition, our result contains several novelties. First, we do not assume that the data is Gaussian, the existence of a causal graph, or that the training environments arise from specific types of interventions. Second, the result extends to 'scrambled setups' where $S \neq I$. These are situations where the causal relations are not defined on the observable features $X$, but on a latent variable $(Z_1, Z_2)$ that IRM needs to recover and filter. Third, we show that representations $\Phi$ with higher rank need fewer training environments to generalize. This is encouraging, as representations with higher rank destroy less information about the learning problem at hand.
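The dependence of the environment count on the rank can be tabulated directly from the expression $d - r + d/r$ in Theorem 9. The snippet below is a simple worked calculation; the dimension $d = 20$ and the function name are arbitrary choices for illustration.

```python
def environment_bound(d, r):
    """Value of d - r + d / r from Theorem 9 for a rank-r representation."""
    if not 1 <= r <= d:
        raise ValueError("the rank r must satisfy 1 <= r <= d")
    return d - r + d / r

# Bound for a 20-dimensional input and several ranks.
bounds = {r: environment_bound(20, r) for r in (1, 2, 4, 5, 10, 20)}
```

For $d = 20$ the bound falls from 39 at $r = 1$ to 1 at $r = 20$, since both $-r$ and $d/r$ decrease as $r$ grows.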

We close this section with two important observations. First, while robust learning generalizes across interpolations of training environments (recall Proposition 2), learning invariances with IRM buys extrapolation powers. We can observe this in Example 1 where, using two training environments, robust learning yields predictors that work well for σ ∈ [10 , 20], while IRM yields predictors that work well for all σ . Finally, IRM is a differentiable function with respect to the covariances of the training environments. Therefore, in cases when the data follows an approximately invariant model, IRM should return an approximately invariant solution, being robust to mild model misspecification. This is in contrast to common causal discovery methods based on thresholding statistical hypothesis tests.

## 4.2 On the nonlinear case and the number of environments

In the same vein as the linear case, we could attempt to provide IRM with guarantees for the nonlinear regime. Namely, we could assume that each constraint $\|\nabla_{w \mid w=1.0} R^e(w \cdot \Phi)\| = 0$ removes one degree of freedom from the possible set of solutions $\Phi$. Then, for a sufficiently large number of diverse training environments, we would elicit the invariant predictor. Unfortunately, we were unable to phrase such a 'nonlinear general position' assumption and prove that it holds almost everywhere, as we did in Theorem 10 for the linear case. We leave this effort for future work.

While general, Theorem 9 is pessimistic, since it requires the number of training environments to scale linearly with the number of parameters in the representation matrix $\Phi$. Fortunately, as we will observe in our experiments from Section 5, it is often the case that two environments are sufficient to recover invariances. We believe that these are problems where $\mathbb{E}[Y^e \mid \Phi(X^e)]$ cannot match for two different environments $e \neq e'$ unless $\Phi$ extracts the causal invariance. The discussion from Section 3.3 gains relevance here, since enforcing $\mathcal{W}$-invariance for larger families $\mathcal{W}$ should allow discarding more non-invariant predictors with fewer training environments. All in all, studying what problems allow the discovery of invariances from few environments is a promising line of work towards a learning theory of invariance.

## 4.3 Causation as invariance

We promote invariance as the main feature of causation. Unsurprisingly, we are not pioneers in doing so. To predict the outcome of an intervention, we rely on (i) the properties of our intervention and (ii) the properties assumed invariant after the intervention. Pearl's do-calculus [39] on causal graphs is a framework that tells which conditionals remain invariant after an intervention. Rubin's ignorability [44] plays the same role. What's often described as autonomy of causal mechanisms [20, 1] is a specification of invariance under intervention. A large body of philosophical work [47, 42, 38, 12, 54, 13] studies the close link between invariance and causation. Some works in machine learning [45, 18, 21, 26, 36, 43, 34, 7] pursue similar questions.

The invariance view of causation transcends some of the difficulties of working with causal graphs. For instance, the ideal gas law $PV = nRT$ or Newton's universal gravitation $F = G \frac{m_1 m_2}{r^2}$ are difficult to describe using structural equation models (What causes what?), but are prominent examples of laws that are invariant across experimental conditions. When collecting data about gases or celestial bodies, the universality of these laws will manifest as invariant correlations, which will sponsor valid predictions across environments, as well as the conception of scientific theories.

Another motivation supporting the invariance view of causation is the kind of problem studied in machine learning. For instance, consider the task of image classification. Here, the observed variables are hundreds of thousands of correlated pixels. What is the causal graph governing them? It is reasonable to assume that causation does not happen between pixels, but between the real-world concepts captured by the camera. In these cases, invariant correlations in images are a proxy into the causation at play in the real world. To find those invariant correlations, we need methods, such as IRM, that can disentangle the observed pixels into latent variables closer to the realm of causation. Only on rare occasions are we truly interested in the entire causal graph governing all the variables in our learning problem. Rather, our focus is often on the causal invariances improving generalization across novel distributions of examples.

## 5 Experiments

We perform two experiments to assess the generalization abilities of IRM across multiple environments. The source code is available at https://github.com/facebookresearch/InvariantRiskMinimization.

## 5.1 Synthetic data

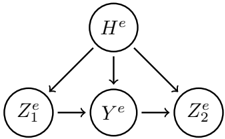

As a first experiment, we extend our motivating Example 1. First, we increase the dimensionality of each of the two input features in $X = (X_1, X_2)$ to 10 dimensions. Second, as a form of model misspecification, we allow the existence of a 10-dimensional hidden confounder variable $H$. Third, in some cases the features $Z$ will not be directly observed, but only a scrambled version $X = S(Z)$. Figure 3 summarizes the SEM generating the data $(X^e, Y^e)$ for all environments $e$ in these experiments. More specifically, for environment $e \in \mathbb{R}$, we consider the following variations:

- Scrambled (S) observations, where $S$ is an orthogonal matrix, or unscrambled (U) observations, where $S = I$.

- Fully-observed (F) graphs, where $W_{h \to 1} = W_{h \to y} = W_{h \to 2} = 0$, or partially-observed (P) graphs, where $(W_{h \to 1}, W_{h \to y}, W_{h \to 2})$ are Gaussian.

- Homoskedastic (O) $Y$-noise, where $\sigma_y^2 = e^2$ and $\sigma_2^2 = 1$, or heteroskedastic (E) $Y$-noise, where $\sigma_y^2 = 1$ and $\sigma_2^2 = e^2$.

<details>

<summary>Image 3 Details</summary>

Causal graph with four nodes, `H^e` (top), `Z₁^e` (left), `Y^e` (center), and `Z₂^e` (right), and directed edges `H^e` → `Z₁^e`, `H^e` → `Z₂^e`, `Z₁^e` → `Y^e`, and `Y^e` → `Z₂^e`. No axes, legends, or numerical scales are present.

</details>

$$\begin{aligned} H^e &\leftarrow \mathcal{N}(0, e^2) \\ Z_1^e &\leftarrow \mathcal{N}(0, e^2) + W_{h \to 1} H^e \\ Y^e &\leftarrow Z_1^e \cdot W_{1 \to y} + \mathcal{N}(0, \sigma_y^2) + W_{h \to y} H^e \\ Z_2^e &\leftarrow W_{y \to 2} Y^e + \mathcal{N}(0, \sigma_2^2) + W_{h \to 2} H^e \end{aligned}$$

Figure 3: In our synthetic experiments, the task is to predict $Y^e$ from $X^e = S(Z_1^e, Z_2^e)$.

These variations lead to eight setups referred to by their initials. For instance, the setup 'FOS' considers fully-observed (F), homoskedastic $Y$-noise (O), and scrambled observations (S). For all variants, $(W_{1 \to y}, W_{y \to 2})$ have Gaussian entries. Each experiment draws 1000 samples from the three training environments $\mathcal{E}_{tr} = \{0.2, 2, 5\}$. IRM follows the variant (IRMv1), and uses the environment $e = 5$ to cross-validate the invariance regularizer $\lambda$. We compare to ERM and ICP [40].
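The data-generating process of Figure 3 can be sketched as follows. This is not the released source code: it assumes a scalar target $Y$, standard normal entries for every Gaussian weight matrix, and an orthogonal $S$ obtained from a QR decomposition. The helper names `make_sem` and `sample_environment` are hypothetical.

```python
import numpy as np

def make_sem(dim=10, fully_observed=True, scrambled=False, seed=0):
    """Draw the parameters shared by all environments of one setup."""
    rng = np.random.default_rng(seed)
    sem = {
        "w_1y": rng.normal(size=(dim, 1)),   # Z1 -> Y
        "w_y2": rng.normal(size=(1, dim)),   # Y -> Z2
    }
    # Confounder weights: zero when fully observed (F), Gaussian otherwise (P).
    for name, shape in (("w_h1", (dim, dim)), ("w_hy", (dim, 1)), ("w_h2", (dim, dim))):
        sem[name] = np.zeros(shape) if fully_observed else rng.normal(size=shape)
    if scrambled:
        q, _ = np.linalg.qr(rng.normal(size=(2 * dim, 2 * dim)))
        sem["S"] = q                          # orthogonal scrambling (S)
    else:
        sem["S"] = np.eye(2 * dim)            # unscrambled (U)
    return sem

def sample_environment(sem, e, n=1000, heteroskedastic=False, seed=0):
    """Sample (X^e, Y^e) by running the structural equations in order."""
    rng = np.random.default_rng(seed)
    dim = sem["w_1y"].shape[0]
    # Standard deviations: (O) sigma_y = e, sigma_2 = 1; (E) sigma_y = 1, sigma_2 = e.
    sigma_y, sigma_2 = (1.0, e) if heteroskedastic else (e, 1.0)
    h = rng.normal(0.0, e, size=(n, dim))
    z1 = rng.normal(0.0, e, size=(n, dim)) + h @ sem["w_h1"]
    y = z1 @ sem["w_1y"] + rng.normal(0.0, sigma_y, size=(n, 1)) + h @ sem["w_hy"]
    z2 = y @ sem["w_y2"] + rng.normal(0.0, sigma_2, size=(n, dim)) + h @ sem["w_h2"]
    x = np.hstack([z1, z2]) @ sem["S"].T
    return x, y

# The 'FOS' setup with the three training environments.
sem = make_sem(fully_observed=True, scrambled=True)
environments = {e: sample_environment(sem, e, seed=i)
                for i, e in enumerate((0.2, 2.0, 5.0))}
```

The weights are drawn once and reused for every environment, so only the noise scales change with $e$.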

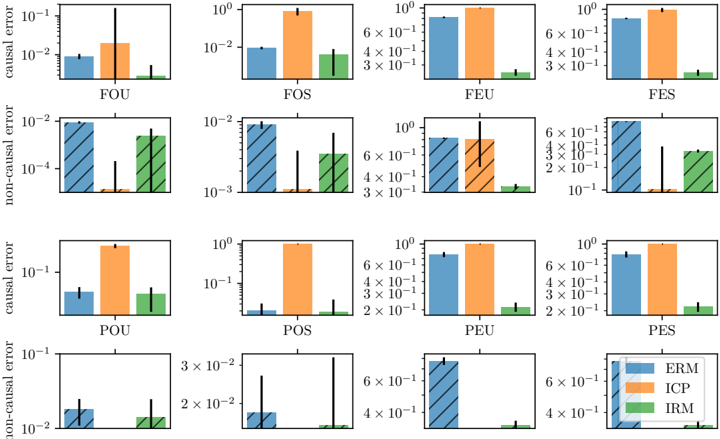

Figure 4 summarizes the results of our experiments. We show two metrics for each estimated prediction rule $\hat{Y} = X_1 \cdot \hat{W}_{1 \to y} + X_2 \cdot \hat{W}_{y \to 2}$. To this end, we consider a descrambled version of the estimated coefficients $(\hat{M}_{1 \to y}, \hat{M}_{y \to 2}) = (\hat{W}_{1 \to y}, \hat{W}_{y \to 2}) S$. First, the plain bars show the average squared error between $\hat{M}_{1 \to y}$ and $W_{1 \to y}$. This measures how well a predictor recovers the weights associated with the causal variables. Second, each striped bar shows the norm of the estimated weights $\hat{M}_{y \to 2}$ associated with the non-causal variable. We would like this norm to be zero, as the desired invariant causal predictor is $\hat{Y}^e = (W_{1 \to y}, 0) S (X_1^e, X_2^e)$. In summary, IRM is able to estimate the most accurate causal and non-causal weights across all experimental conditions. In most cases, IRM is orders of magnitude more accurate than ERM (our $y$-axes are in log-scale). IRM also outperforms ICP, the previous state-of-the-art method, by a large margin. Our experiments also show the conservative behaviour of ICP (preferring to reject most covariates as direct causes), leading to large errors on causal weights and small errors on non-causal weights.

Figure 4: Average errors on causal (plain bars) and non-causal (striped bars) weights for our synthetic experiments. The $y$-axes are in log-scale. See main text for details.

<details>

<summary>Image 4 Details</summary>

Two sets of grouped bar charts, causal error (top) and non-causal error (bottom), across the eight setups (FOU, FOS, FEU, FES, POU, POS, PEU, PES) for three methods: ERM (blue), ICP (orange), and IRM (green). The y-axes use a logarithmic scale, and error bars indicate variability.

</details>

## 5.2 Colored MNIST

We validate IRM at learning nonlinear invariant predictors with a synthetic binary classification task derived from MNIST. The goal is to predict a binary label assigned to each image based on the digit. Whereas MNIST images are grayscale, we color each image either red or green in a way that correlates strongly (but spuriously) with the class label. By construction, the label is more strongly correlated with the color than with the digit, so any algorithm purely minimizing training error will tend to exploit the color. Such algorithms will fail at test time because the direction of the correlation is reversed in the test environment. By observing that the strength of the correlation between color and label varies between the two training environments, we can hope to eliminate color as a predictive feature, resulting in better generalization.

We define three environments (two training, one test) from MNIST, transforming each example as follows: first, assign a preliminary binary label $\tilde{y}$ to the image based on the digit: $\tilde{y} = 0$ for digits 0-4 and $\tilde{y} = 1$ for 5-9. Second, obtain the final label $y$ by flipping $\tilde{y}$ with probability 0.25. Third, sample the color id $z$ by flipping $y$ with probability $p^e$, where $p^e$ is 0.2 in the first environment, 0.1 in the second, and 0.9 in the test one. Finally, color the image red if $z = 1$ or green if $z = 0$.
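The four steps above can be sketched without loading MNIST. The snippet uses random digit labels as a stand-in for the real dataset, and the two-channel (red, green) image layout in `colorize` is an assumption of this sketch rather than a detail stated in the text; all function names are hypothetical.

```python
import numpy as np

def make_environment(digits, p_e, rng, label_noise=0.25):
    """Return (preliminary label, final label, color id) for one environment."""
    n = digits.shape[0]
    y_tilde = (digits >= 5).astype(int)               # 0 for digits 0-4, 1 for 5-9
    flip_label = rng.random(n) < label_noise
    y = np.where(flip_label, 1 - y_tilde, y_tilde)    # flip with probability 0.25
    flip_color = rng.random(n) < p_e
    z = np.where(flip_color, 1 - y, y)                # flip y with probability p_e
    return y_tilde, y, z

def colorize(images, z):
    """Place each (n, H, W) grayscale image in channel 0 (red) if z = 1,
    or in channel 1 (green) if z = 0."""
    colored = np.zeros((images.shape[0], 2) + images.shape[1:], dtype=images.dtype)
    red = z == 1
    colored[red, 0] = images[red]
    colored[~red, 1] = images[~red]
    return colored

rng = np.random.default_rng(0)
digits = rng.integers(0, 10, size=20_000)   # stand-in for the MNIST digit labels
flip_probabilities = {"train1": 0.2, "train2": 0.1, "test": 0.9}
envs = {name: make_environment(digits, p, rng)
        for name, p in flip_probabilities.items()}

# Fraction of examples whose color id agrees with the final label.
color_agreement = {name: float(np.mean(z == y)) for name, (_, y, z) in envs.items()}
```

The color agrees with the label about 80% and 90% of the time in the training environments but only about 10% of the time in the test environment, whereas the digit-based label $\tilde{y}$ agrees with $y$ about 75% of the time everywhere.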

We train MLPs on the colored MNIST training environments using different objectives and report results in Table 1. For each result we report the mean and standard deviation across ten runs. Training with ERM returns a model with high accuracy in the training environments but below-chance accuracy in the test environment, since the ERM model classifies mainly based on color. Training with IRM results in a model that performs worse on the training environments, but relies less on the color and hence generalizes better to the test environment. An oracle that ignores color information by construction outperforms IRM only slightly.

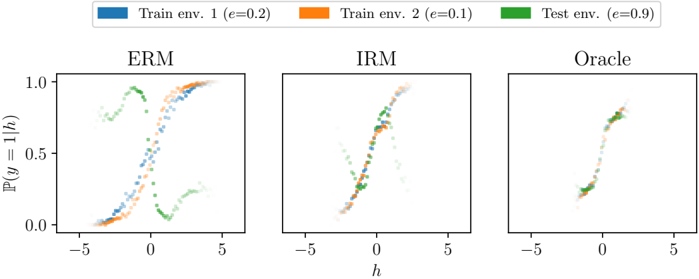

To better understand the behavior of these models, we take advantage of the fact that h = Φ( x ) (the logit) is one-dimensional and y is binary, and plot P ( y = 1 | h, e ) as a function of h for each environment and each model in Figure 5. We show each algorithm in a separate plot, and each environment in a separate color. The figure shows that, whether considering only the two training environments or all three

| Algorithm | Acc. train envs. | Acc. test env. |

|----------------------------------------|--------------------|------------------|

| ERM | 87 . 4 ± 0 . 2 | 17 . 1 ± 0 . 6 |

| IRM (ours) | 70 . 8 ± 0 . 9 | 66 . 9 ± 2 . 5 |

| Random guessing (hypothetical) | 50 | 50 |

| Optimal invariant model (hypothetical) | 75 | 75 |

| ERM, grayscale model (oracle) | 73 . 5 0 . 2 | 73 . 0 0 . 4 |

±

±

Table 1: Accuracy (%) of different algorithms on the Colored MNIST synthetic task. ERM fails in the test environment because it relies on spurious color correlations to classify digits. IRM detects that the color has a spurious correlation with the label and thus uses only the digit to predict, obtaining better generalization to the new unseen test environment.

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Line Chart: Probability Distribution Across Training and Test Environments

### Overview

The image displays three line charts comparing probability distributions (P(y=1|h)) across three environments: ERM (Empirical Risk Minimization), IRM (Integrated Risk Minimization), and Oracle. Each chart shows three data series representing training environments with different error rates (e=0.2, e=0.1) and a test environment (e=0.9). The x-axis represents a parameter "h" ranging from -5 to 5, while the y-axis shows probability values between 0 and 1.

### Components/Axes

- **X-axis**: Parameter "h" (range: -5 to 5)

- **Y-axis**: Probability P(y=1|h) (range: 0 to 1)

- **Legend**:

- Blue: Train env. 1 (e=0.2)

- Orange: Train env. 2 (e=0.1)

- Green: Test env. (e=0.9)

- **Panels**:

- Left: ERM

- Center: IRM

- Right: Oracle

### Detailed Analysis

#### ERM Panel

- **Blue (Train env. 1)**: Starts near 0 at h=-5, rises sharply to ~0.8 at h=0.5, then dips to ~0.3 at h=5.

- **Orange (Train env. 2)**: Begins at ~0.2 at h=-5, peaks at ~0.9 at h=0, then drops to ~0.1 at h=5.

- **Green (Test env.)**: Starts at ~0.1 at h=-5, rises to ~0.7 at h=1.5, dips to ~0.2 at h=3, then rises again to ~0.6 at h=5.

#### IRM Panel

- **Blue (Train env. 1)**: Smooth curve peaking at ~0.7 at h=0.5, then declines to ~0.3 at h=5.

- **Orange (Train env. 2)**: Starts at ~0.1 at h=-5, peaks at ~0.6 at h=0.5, then drops to ~0.2 at h=5.

- **Green (Test env.)**: Begins at ~0.05 at h=-5, peaks at ~0.5 at h=0.5, then dips to ~0.1 at h=5.

#### Oracle Panel

- All three lines (blue, orange, green) overlap closely, peaking at ~0.8 at h=0.5 and declining to ~0.3 at h=5. Data points are densely clustered, indicating minimal variance.

### Key Observations

1. **ERM Variance**: The ERM panel shows significant divergence between training and test environments, particularly in the green (test) line's W-shaped pattern.

2. **IRM Smoothing**: IRM curves are smoother and more tightly grouped than ERM, suggesting better generalization.

3. **Oracle Consistency**: The Oracle panel demonstrates near-identical performance across all environments, with lines overlapping almost perfectly.

4. **Test Environment Sensitivity**: The green (test) line in ERM exhibits a pronounced dip at h=3, absent in other panels, indicating potential overfitting or data distribution shifts.

### Interpretation

The charts illustrate how different risk minimization strategies (ERM vs. IRM) affect model performance across environments with varying error rates. ERM's pronounced divergence between training and test environments (especially in the green line) suggests it may overfit to specific training conditions. IRM's smoother curves imply improved robustness, while the Oracle panel represents an idealized scenario where all environments align perfectly. The test environment's higher error rate (e=0.9) in ERM highlights challenges in generalizing to more error-prone conditions. These patterns underscore the importance of risk-aware training strategies in handling distributional shifts.

</details>

Figure 5: P ( y = 1 | h ) as a function of h for different models trained on Colored MNIST: (left) an ERM-trained model, (center) an IRM-trained model, and (right) an ERM-trained model which only sees grayscale images and therefore is perfectly invariant by construction. IRM learns approximate invariance from data alone and generalizes well to the test environment.

environments, the IRM model is closer to achieving invariance than the ERM model. Notably, the IRM model does not achieve perfect invariance, particularly at the tails of the P ( h ). We suspect this is due to finite sample issues: given the small sample size at the tails, estimating (and hence minimizing) the small differences in P ( y | h, e ) between training environments can be quite difficult, regardless of the method.
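Curves such as those in Figure 5 can be estimated by binning the logits. The sketch below is one possible estimator, not necessarily the one used to produce the figure; the bin edges, the toy data, and the function names are illustrative assumptions.

```python
import numpy as np

def conditional_curve(h, y, bins):
    """Estimate P(y = 1 | h) as the mean label within each bin of h.
    Bins without samples are reported as NaN."""
    idx = np.digitize(h, bins) - 1          # bin b covers bins[b] <= h < bins[b + 1]
    curve = np.full(len(bins) - 1, np.nan)
    for b in range(len(bins) - 1):
        mask = idx == b
        if mask.any():
            curve[b] = y[mask].mean()
    return curve

def invariance_gap(curve_a, curve_b):
    """Largest absolute difference over bins populated in both environments."""
    both = ~np.isnan(curve_a) & ~np.isnan(curve_b)
    return float(np.max(np.abs(curve_a[both] - curve_b[both])))

bins = np.array([-2.0, -1.0, 0.0, 1.0])

# Toy logits and labels for two environments.
h_env1, y_env1 = np.array([-1.5, -0.5, 0.5, 0.6]), np.array([0.0, 0.0, 1.0, 0.0])
h_env2, y_env2 = np.array([-1.5, 0.5]), np.array([1.0, 1.0])

curve_env1 = conditional_curve(h_env1, y_env1, bins)
curve_env2 = conditional_curve(h_env2, y_env2, bins)
gap = invariance_gap(curve_env1, curve_env2)
```

Sparsely populated bins give noisy estimates, which is consistent with the finite-sample explanation offered above for the behavior at the tails.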

We note that conditional domain adaptation techniques which match $P(h \mid y, e)$ across environments could in principle solve this task as well as IRM, which matches $P(y \mid h, e)$. This is because the distribution of the causal features (the digit shapes) and $P(y \mid e)$ both happen to be identical across environments. However, unlike IRM, conditional domain adaptation will fail if, for example, the distribution of the digits changes across environments. We discuss this further in Appendix C.

Finally, Figure 5 shows that P ( y = 1 | h ) cannot always be expressed with a linear classifier w . Enforcing nonlinear invariances (Section 3.3) could prove useful here.

## 6 Looking forward: a concluding dialogue

[ Eric and Irma are two graduate students studying the Invariant Risk Minimization (IRM) manuscript. Over a cup of coffee at a café in Palais-Royal, they discuss the advantages and caveats that invariance brings to Empirical Risk Minimization (ERM). ]

- Irma: I have observed that predictors trained with ERM sometimes absorb biases and spurious correlations from data. This leads to undesirable behaviours when predicting about examples that do not follow the distribution of the training data.

- Eric: I have observed that too, and I wonder what are the reasons behind such phenomena. After all, ERM is an optimal principle to learn predictors from empirical data!

- Irma: It is, indeed. But even when your hypothesis class allows you to find the empirical risk minimizer efficiently, there are some assumptions at play. First, ERM assumes that training and testing data are identically and independently distributed according to the same distribution. Second, generalization bounds require that the ratio between the capacity of our hypothesis class and the number of training examples $n$ tends to zero, as $n \to \infty$. Third, ERM achieves zero test error only in the realizable case, that is, when there exists a function in our hypothesis class able to achieve zero error. I suspect that violating these assumptions leads ERM into absorbing spurious correlations, and that this is where invariance may prove useful.

- Eric: Interesting. Should we study the three possibilities in turn?

- Irma: Sure thing! But first, let's grab another cup of coffee.

[ We also encourage the reader to grab a cup of coffee. ]

∼

- Irma: First and foremost, we have the 'identically and independently distributed' (iid) assumption. I once heard Professor Ghahramani refer to this assumption as 'the big lie in machine learning'. This is to say that all training and testing examples are drawn from the same distribution P ( X,Y ) = P ( Y | X ) P ( X ).

- Eric: I see. This is obviously not the case when learning from multiple environments, as in IRM. Given this factorization, I guess two things are subject to change: either the marginal distribution P ( X ) of my inputs, or the conditional distribution P ( Y | X ) mapping those inputs into my targets.

- Irma: That's correct. Let's focus first on the case where P ( X e ) changes across environments e . Some researchers from the field of domain adaptation call this covariate shift. This situation is challenging when the supports of P ( X e ) are disjoint across environments. Actually, without a priori knowledge, there is no reason to believe that our predictor will generalize outside the union of the supports of the training environments.

- Eric: A daunting challenge, indeed. How could invariance help here?

- Irma: Two things come to mind. On the one hand, we could try to transform our inputs into some features Φ( X e ), so as to match the support of all the training environments. Then, we could learn an invariant classifier w (Φ( X e )) on top of the transformed inputs. [ Appendix D studies the shortcomings of this idea. ] On the other hand, we could assume that the invariant predictor w has a simple structure that we can estimate given limited supports. The authors of IRM follow this route, by assuming linear classifiers on top of representations.

- Eric: I see! Even though the supports of P ( X e ) may be disjoint, if there is a simple invariance satisfied for all training environments separately, it may also hold in unobserved regions of the space. I wonder if we could go further by assuming some sort of compositional structure in w ; the linear assumption of IRM is just the simplest kind. I say this since compositional assumptions often enable learning in one part of the input space, and evaluating on another.

- Irma: It sounds reasonable! What about the case where P ( Y e | X e ) changes? Does this happen in normal supervised learning? I remember attending a lecture by Professor Schölkopf [45, 25] where he mentioned that P ( Y e | X e ) is often invariant across environments when X e is a cause of Y e , and that it often varies when X e is an effect of Y e . For instance, he explains that MNIST classification is anticausal: the observed pixels are an effect of the mental concept that led the writer to draw the digit in the first place. IRM insists on this relation between invariance and causation. What do you think?

- Eric: I saw that lecture too. Contrary to Professor Schölkopf, I believe that most supervised learning problems, such as image classification, are causal. In these problems we predict human annotations Y e from pixels X e , hoping that the machine imitates this cognitive process. Furthermore, the annotation process often involves multiple humans in the interest of making P ( Y e | X e ) deterministic. If the annotation process is close to deterministic and shared across environments, predicting annotations is a causal problem, with an invariant conditional expectation.

- Irma: Oh! This means that in supervised learning problems about predicting annotations, P ( Y e | X e ) is often stable across environments, so ERM has great chances of succeeding. This is good news: it explains why ERM is so good at supervised learning, and leaves less to worry about.

- Eric: However, if any of the other problems appear (disjoint P ( X e ), not enough data, not enough capacity), ERM could get in trouble, right?

- Irma: Indeed! Furthermore, in some supervised learning problems, the label is not necessarily created from the input. For instance, the input could be an X-ray image, and the target could be the result of a tumor biopsy on the same patient. Also, there are problems where we predict parts of the input from other parts of the input, like in self-supervised learning [14]. In some other cases, we don't even have labels! This could include the unsupervised learning of the causal factors of variation behind X e , which involves inverting the causal generative process of the data. In all of these cases, we could be dealing with anticausal problems, where the conditional distribution is subject to change across environments. Then, I expect searching for invariance may help by focusing on invariant predictors that generalize out-of-distribution.

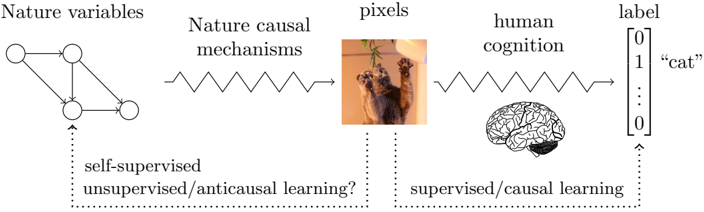

- Eric: That is an interesting divide between supervised and unsupervised learning! [ Figure 6 illustrates the main elements of this discussion. ]

Figure 6: All learning problems use empirical observations, here referred to as 'pixels'. Following a causal and cognitive process, humans produce labels. Supervised learning problems predicting annotations from observations are therefore causal, and P ( label | pixel ) is often invariant. Conversely, types of unsupervised and self-supervised learning trying to disentangle the underlying causal factors of variation of the data (Nature variables) should to some extent reverse the process generating observations (Nature mechanisms). This leads to anticausal learning problems, possibly with varying conditional distributions; an opportunity to leverage invariance. Cat picture by www.flickr.com/photos/pustovit .

∼

- Eric: Secondly, what about the ratio between the capacity of our classifier and the number of training examples n ? Neural networks often have a number of parameters on the same order of magnitude as, or even greater than, the number of training examples [56]. In these cases, this ratio will not tend to zero as n → ∞. So, ERM may be in trouble.

- Irma: That is correct. Neural networks are often over-parametrized, and over-parametrization carries subtle consequences. For instance, consider that we are using the pseudo-inverse to solve an over-parametrized linear least-squares problem, or using SGD to train an over-parametrized neural network. Amongst all the zero training error solutions, these procedures will prefer the solution with the smallest capacity [53, 3]. Unfortunately, spurious correlations and biases are often simpler to detect than the true phenomenon of interest [17, 9, 10, 11]. Therefore, low capacity solutions prefer exploiting those simple but spurious correlations. For instance, think about relying on large green textures to declare the presence of a cow on an image.
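The pseudo-inverse part of this remark can be checked on a toy problem. The sketch below assumes that "smallest capacity" is read as smallest Euclidean norm of the weight vector, which is the quantity the pseudo-inverse minimizes among interpolating solutions; the sizes `n` and `d` and the random data are illustrative choices, not taken from the paper.

```python
import numpy as np

rng = np.random.default_rng(0)
n, d = 20, 50                          # fewer samples than parameters (illustrative sizes)
X = rng.standard_normal((n, d))
y = rng.standard_normal(n)

# Interpolating solution returned by the pseudo-inverse.
X_pinv = np.linalg.pinv(X)
w_min = X_pinv @ y

# Adding any null-space direction of X keeps the training fit unchanged.
z = rng.standard_normal(d)
v = z - X_pinv @ (X @ z)               # component of z lying in the null space of X
w_other = w_min + v

print("both interpolate:", np.allclose(X @ w_min, y), np.allclose(X @ w_other, y))
print("norms:", np.linalg.norm(w_min), np.linalg.norm(w_other))
```

Both weight vectors reach zero training error, but the pseudo-inverse solution is orthogonal to the null-space component and therefore has the smaller norm.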

- Eric: The cows again!

- Irma: Always. Although I can give you a more concrete example. Consider predicting Y e from X e = ( X e 1 , X e 2 ), where:

$$\begin{aligned} Y^{e} &\leftarrow 10^{6} \cdot X_{1}^{e} \alpha_{1}, \\ X_{2}^{e} &\leftarrow 10^{6} \cdot Y^{e} \alpha_{2}^{\top} \cdot e, \end{aligned}$$

the coefficients satisfy ‖ α 1 ‖ = ‖ α 2 ‖ = 1, the training environments are e = { 1 , 10 } , and we have n samples for the 2 n -dimensional input X . In this over-parametrized problem, the invariant regression from the cause X 1 requires large capacity, while the spurious regression from the effect X 2 requires low capacity.

- Eric: Oh! Then, the inductive bias of SGD would prefer to exploit the spurious correlation for prediction. In a nutshell, a deficit of training examples forces us into regularization, and regularization comes with the danger of absorbing easy spurious correlations. But, methods based on invariance should realize that, after removing the nuisance variable X 2 , the regression from X 1 is invariant, and thus interesting for out-of-distribution generalization. This means that invariance could sometimes help fight the issues of small data and over-parametrization. Neat!
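The capacity gap in Irma's example can be made concrete with a small numerical sketch. It rests on several assumptions not stated in the dialogue: capacity is measured by the Euclidean norm of the weight vector, X 1 is drawn from a standard Gaussian, each block has dimension `n`, and `n` samples are drawn per environment. The helper `sample_env` and all variable names are hypothetical.

```python
import numpy as np

def sample_env(e, n, alpha1, alpha2, rng):
    """Draw n samples from environment e of the two-block example."""
    x1 = rng.standard_normal((n, n))        # cause X1 (Gaussian is an assumption)
    y = 1e6 * (x1 @ alpha1)                 # Y  <- 1e6 * X1 alpha1
    x2 = 1e6 * e * np.outer(y, alpha2)      # X2 <- 1e6 * Y alpha2^T * e
    return np.hstack([x1, x2]), y

rng = np.random.default_rng(0)
n = 8
alpha1 = rng.standard_normal(n)
alpha1 /= np.linalg.norm(alpha1)            # ||alpha1|| = 1
alpha2 = rng.standard_normal(n)
alpha2 /= np.linalg.norm(alpha2)            # ||alpha2|| = 1

X_1, y_1 = sample_env(1.0, n, alpha1, alpha2, rng)
X_10, y_10 = sample_env(10.0, n, alpha1, alpha2, rng)

# Invariant rule: regress on the cause X1 only. It needs weights of norm 1e6.
w_inv = np.concatenate([1e6 * alpha1, np.zeros(n)])
# Spurious rule tuned to environment e = 1: regress on the effect X2 only.
# It needs weights of norm 1e-6.
w_spur_1 = np.concatenate([np.zeros(n), alpha2 / 1e6])

print("norm of invariant weights:", np.linalg.norm(w_inv))
print("norm of spurious weights: ", np.linalg.norm(w_spur_1))
print("invariant rule fits e=1 and e=10:",
      np.allclose(X_1 @ w_inv, y_1), np.allclose(X_10 @ w_inv, y_10))
print("spurious rule fits e=1:", np.allclose(X_1 @ w_spur_1, y_1))
print("spurious rule fits e=10:", np.allclose(X_10 @ w_spur_1, y_10))
```

Under these assumptions the rule built on X 2 fits environment e = 1 with weights twelve orders of magnitude smaller than the invariant rule, yet in environment e = 10 its predictions are off by a factor of ten, whereas the rule built on X 1 fits both environments.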

∼

- Irma: As a final obstacle to ERM, we have the case where the capacity of our hypothesis class is insufficient to solve the learning problem at hand.

- Eric: This sounds related to the previous point, in the sense that a model with low capacity will stick to spurious correlations, if these are easier to capture.

- Irma: That is correct, although I can see an additional problem arising from insufficient capacity. For instance, the only linear invariant prediction rule to estimate the quadratic Y e = ( X e )², where X e ∼ Gaussian(0 , e ), is the null predictor Y = 0 · X . Even though X is the only, causal, and invariance-eliciting covariate!
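A short least-squares computation shows why the null predictor appears. This is a sketch under two assumptions that the dialogue does not state explicitly: e denotes the variance of X e , and optimality is measured with the squared loss. For a linear rule with coefficient w , the risk in environment e is

$$\begin{aligned} R^{e}(w) &= \mathbb{E}\big[(Y^{e} - w X^{e})^{2}\big] = \mathbb{E}\big[(X^{e})^{4}\big] - 2w\,\mathbb{E}\big[(X^{e})^{3}\big] + w^{2}\,\mathbb{E}\big[(X^{e})^{2}\big] \\ &= 3e^{2} + w^{2} e, \\ \arg\min_{w} R^{e}(w) &= 0 \quad \text{for every } e > 0. \end{aligned}$$

The third moment of a centered Gaussian vanishes, so the cross term disappears and the optimal linear coefficient is zero in every environment. The coefficient w = 0 is therefore the only one that is simultaneously optimal across environments, even though its risk 3 e ² still changes with e .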