## A continual learning survey: Defying forgetting in classification tasks

Matthias De Lange, Rahaf Aljundi, Marc Masana, Sarah Parisot, Xu Jia, Aleˇ s Leonardis, Gregory Slabaugh, Tinne Tuytelaars

Abstract -Artificial neural networks thrive in solving the classification problem for a particular rigid task, acquiring knowledge through generalized learning behaviour from a distinct training phase. The resulting network resembles a static entity of knowledge, with endeavours to extend this knowledge without targeting the original task resulting in a catastrophic forgetting. Continual learning shifts this paradigm towards networks that can continually accumulate knowledge over different tasks without the need to retrain from scratch. We focus on task incremental classification, where tasks arrive sequentially and are delineated by clear boundaries. Our main contributions concern (1) a taxonomy and extensive overview of the state-of-the-art; (2) a novel framework to continually determine the stability-plasticity trade-off of the continual learner; (3) a comprehensive experimental comparison of 11 state-of-the-art continual learning methods and 4 baselines. We empirically scrutinize method strengths and weaknesses on three benchmarks, considering Tiny Imagenet and large-scale unbalanced iNaturalist and a sequence of recognition datasets. We study the influence of model capacity, weight decay and dropout regularization, and the order in which the tasks are presented, and qualitatively compare methods in terms of required memory, computation time and storage.

Index Terms -Continual Learning, lifelong learning, task incremental learning, catastrophic forgetting, classification, neural networks

✦

## 1 INTRODUCTION

In recent years, machine learning models have been reported to exhibit or even surpass human level performance on individual tasks, such as Atari games [1] or object recognition [2]. While these results are impressive, they are obtained with static models incapable of adapting their behavior over time. As such, this requires restarting the training process each time new data becomes available. In our dynamic world, this practice quickly becomes intractable for data streams or may only be available temporarily due to storage constraints or privacy issues. This calls for systems that adapt continually and keep on learning over time.

Human cognition exemplifies such systems, with a tendency to learn concepts sequentially. Revisiting old concepts by observing examples may occur, but is not essential to preserve this knowledge, and while humans may gradually forget old information, a complete loss of previous knowledge is rarely attested [3].

By contrast, artificial neural networks cannot learn in this manner: they suffer from catastrophic forgetting of old concepts as new ones are learned [3]. To circumvent this problem, research on artificial neural networks has focused mostly on static tasks, with usually shuffled data to ensure i.i.d. conditions, and vast performance increase by revisiting training data over multiple epochs.

Continual Learning studies the problem of learning from an infinite stream of data, with the goal of gradually extending acquired knowledge and using it for future learning [4].

- M. De Lange, R. Aljundi and T. Tuytelaars are with the Center for Processing Speech and Images, Department Electrical Engineering, KU Leuven. Correspondence to matthias.delange@kuleuven.be

- M. Masana is with Computer Vision Center, UAB.

- S. Parisot, X. Jia, A. Leonardis, G. Slabaugh are with Huawei.

Manuscript received 6 Sep. 2019; revised 28 Jul. 2020. Log number TPAMI-2020-02-0259.

Data can stem from changing input domains (e.g. varying imaging conditions) or can be associated with different tasks (e.g. fine-grained classification problems). Continual learning is also referred to as lifelong learning [4], [5], [6], [7], [8], [9], sequential learning [10], [11], [12] or incremental learning [13], [14], [15], [16], [17], [18], [19]. The main criterion is the sequential nature of the learning process, with only a small portion of input data from one or few tasks available at once. The major challenge is to learn without catastrophic forgetting: performance on a previously learned task or domain should not significantly degrade over time as new tasks or domains are added. This is a direct result of a more general problem in neural networks, namely the stability-plasticity dilemma [20], with plasticity referring to the ability of integrating new knowledge, and stability retaining previous knowledge while encoding it. Albeit a challenging problem, progress in continual learning has led to real-world applications starting to emerge [21], [22], [23].

Scope. To keep focus, we limit the scope of our study in two ways. First, we only consider the task incremental setting, where data arrives sequentially in batches and one batch corresponds to one task, such as a new set of categories to be learned. In other words, we assume for a given task, all data becomes available simultaneously for offline training. This enables learning for multiple epochs over all its training data, repeatedly shuffled to ensure i.i.d. conditions. Importantly, data from previous or future tasks cannot be accessed. Optimizing for a new task in this setting will result in catastrophic forgetting, with significant drops in performance for old tasks, unless special measures are taken. The efficacy of those measures, under different circumstances, is exactly what this paper is about. Furthermore, task incremental learning confines the scope to a multi-head con-

figuration, with an exclusive output layer or head for each task. This is in contrast to the even more challenging class incremental setup with all tasks sharing a single head. This introduces additional interference in learning and increases the number of output nodes to choose from. Instead, we assume it is known which task a given sample belongs to.

Second, we focus on classification problems only, as classification is arguably one of the most established tasks for artificial neural networks, with good performance using relatively simple, standard and well understood network architectures. The setup is described in more detail in Section 2, with Section 7 discussing open issues towards tackling a more general setting.

Motivation. There is limited consensus in the literature on experimental setups and datasets to be used. Although papers provide evidence for at least one specific setting of model architecture, combination of tasks and hyperparameters under which the proposed method reduces forgetting and outperforms alternative approaches, there is no comprehensive experimental comparison performed to date.

Findings. To establish a fair comparison, the following question needs to be considered: 'How can the trade-off between stability and plasticity be set in a consistent manner, using only data from the current task?' For this purpose, we propose a generalizing framework that dynamically determines stability and plasticity for continual learning methods. Within this principled framework, we scrutinize 11 representative approaches in the spectrum of continual learning methods, and find method-specific preferences for configurations regarding model capacity and combinations with typical regularization schemes such as weight decay or dropout. All representative methods generalize well to the nicely balanced small-scale Tiny Imagenet setup. However, this no longer holds when transferring to large-scale realworld datasets, such as the profoundly unbalanced iNaturalist, and especially in the unevenly distributed RecogSeq sequence with highly dissimilar recognition tasks. Nevertheless, rigorous methods based on isolation of parameters seem to withstand generalizing to these challenging settings. Additionally, we find the ordering in which tasks are presented to have insignificant impact on performance. A summary of our main findings can be found in Section 6.8.

Related work. Continual learning has been the subject of several recent surveys [7], [24], [25], [26]. Parisi et al. [7] describe a wide range of methods and approaches, yet without an empirical evaluation or comparison. In the same vein, Lesort et al. [24] descriptively survey and formalize continual learning, but with an emphasis on dynamic environments for robotics. Pf¨ ulb and Gepperth [25] perform an empirical study on catastrophic forgetting and develop a protocol for setting hyperparameters and method evaluation. However, they only consider two methods, namely Elastic Weight Consolidation (EWC) [27] and Incremental Moment Matching (IMM) [28]. Also, their evaluation is limited to small datasets. Similarly, Kemker et al. [29] compare only three methods, EWC [27], PathNet [30] and GeppNet [15]. The experiments are performed on a simple fully connected network, including only 3 datasets: MNIST, CUB200 and AudioSet. Farquhar and Gal [26] survey continual learning evaluation strategies and highlight shortcomings of the common practice to use one dataset with different pixel permutations (typically permuted MNIST [31]). In addition, they stress that task incremental learning under multi-head settings hides the true difficulty of the problem. While we agree with this statement, we still opted for a multi-head based survey, as it allows comparing existing methods without major modifications. They also propose a couple of desiderata for evaluating continual learning methods. However, their study is limited to the fairly easy MNIST and Fashion-MNIST datasets with far from realistic data encountered in practical applications. None of these works systematically addresses the questions raised above.

Paper overview. First, we describe in Section 2 the widely adopted task incremental setting for this paper. Next, Section 3 surveys different approaches towards continual learning, structuring them into three main groups: replay, regularization-based and parameter isolation methods. An important issue when aiming for a fair method comparison is the selection of hyperparameters, with in particular the learning rate and the stability-plasticity trade-off. Hence, Section 4 introduces a novel framework to tackle this problem without requiring access to data from previous or future tasks. We provide details on the methods selected for our experimental evaluation in Section 5, and describe the actual experiments, main findings, and qualitative comparison in Section 6. We look further ahead in Section 7, highlighting additional challenges in the field, moving beyond the task incremental setting towards true continual learning. Then, we emphasize the relation with other fields in Section 8, and conclude in Section 9. Finally, implementation details and further experimental results are provided in supplemental material. Code is publicly available for reproducibility 1 .

## 2 THE TASK INCREMENTAL LEARNING SETTING

Due to the general difficulty and variety of challenges in continual learning, many methods relax the general setting to an easier task incremental one. The task incremental setting considers a sequence of tasks, receiving training data of just one task at a time to perform training until convergence. Data ( X ( t ) , Y ( t ) ) is randomly drawn from distribution D ( t ) , with X ( t ) a set of data samples for task t , and Y ( t ) the corresponding ground truth labels. The goal is to control the statistical risk of all seen tasks given limited or no access to data ( X ( t ) , Y ( t ) ) from previous tasks t < T :

<!-- formula-not-decoded -->

with loss function /lscript , parameters θ , T the number of tasks seen so far, and f t representing the network function for task t . The definition can be extended to zero-shot learning for t > T , but mainly remains future work in the field as discussed in Section 7. For the current task T , the statistical risk can be approximated by the empirical risk

<!-- formula-not-decoded -->

1. Code available at: HTTPS://GITHUB.COM/MATTDL/CLSURVEY

The main research focus in task incremental learning aims to determine optimal parameters θ ∗ by optimizing (1). However, data is no longer available for old tasks, impeding evaluation of statistical risk for the new parameter values.

/negationslash

The concept 'task' refers to an isolated training phase with a new batch of data, belonging to a new group of classes, a new domain, or a different output space. Similar to [32], a finer subdivision into three categories emerges based on the marginal output and input distributions P ( Y ( t ) ) and P ( X ( t ) ) of a task t , with P ( X ( t ) ) = P ( X ( t +1) ) . First, class incremental learning defines an output space for all observed class labels {Y ( t ) } ⊂ {Y ( t +1) } with P ( Y ( t ) ) = P ( Y ( t +1) ) . Task incremental learning defines {Y ( t ) } /negationslash = {Y ( t +1) } , which additionally requires task label t to indicate the isolated output nodes Y ( t ) for current task t . Incremental domain learning defines {Y ( t ) } = {Y ( t +1) } with P ( Y ( t ) ) = P ( Y ( t +1) ) . Additionally, data incremental learning [33] defines a more general continual learning setting for any data stream without notion of task, class or domain. Our experiments focus on the task incremental learning setting for a sequence of classification tasks.

/negationslash

## 3 CONTINUAL LEARNING APPROACHES

Early works observed the catastrophic interference problem when sequentially learning examples of different input patterns. Several directions have been explored, such as reducing representation overlap [34], [35], [36], [37], [38], replaying samples or virtual samples from the past [8], [39] or introducing dual architectures [40], [41], [42]. However, due to resource restrictions at the time, these works mainly considered few examples (in the order of tens) and were based on specific shallow architectures.

With the recent increased interest in neural networks, continual learning and catastrophic forgetting also received more attention. The influence of dropout [43] and different activation functions on forgetting have been studied empirically for two sequential tasks [31] , and several works studied task incremental learning from a theoretical perspective [44], [45], [46], [47].

More recent works have addressed continual learning with longer task sequences and a larger number of examples. In the following, we will review the most important works. We distinguish three families, based on how task specific information is stored and used throughout the sequential learning process:

- Replay methods

- Regularization-based methods

- Parameter isolation methods

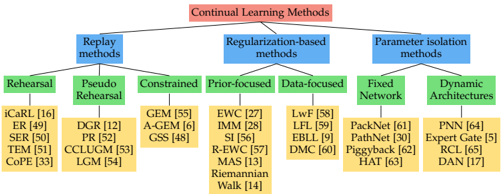

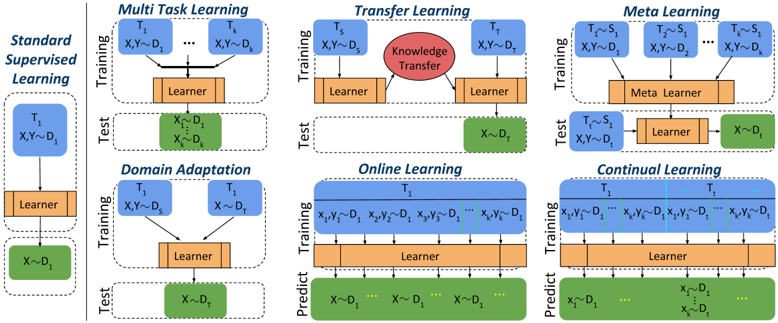

Note that our categorization overlaps to some extent with that introduced in previous work [26], [48]. However, we believe it offers a more general overview and covers most existing works. A summary can be found in Figure 1.

## 3.1 Replay methods

This line of work stores samples in raw format or generates pseudo-samples with a generative model. These previous task samples are replayed while learning a new task to alleviate forgetting. They are either reused as model inputs

Fig. 1: A tree diagram illustrating the different continual learning families of methods and the different branches within each family. The leaves enlist example methods.

<details>

<summary>Image 1 Details</summary>

### Visual Description

\n

## Diagram: Continual Learning Methods

### Overview

The image is a hierarchical diagram illustrating the categorization of Continual Learning Methods. The diagram is structured as a tree, branching out from a central title into three main categories, which are further subdivided into more specific methods. The diagram uses color-coding to distinguish between different levels of the hierarchy.

### Components/Axes

The diagram consists of the following components:

* **Root Node:** "Continual Learning Methods" (in a red rectangular box)

* **Level 1 Branches:** Three main categories:

* "Replay methods" (in a blue rectangular box)

* "Regularization-based methods" (in a blue rectangular box)

* "Parameter isolation methods" (in a blue rectangular box)

* **Level 2 Branches (under Replay methods):**

* "Rehearsal" (in a green rectangular box)

* "Pseudo Rehearsal" (in a green rectangular box)

* "Constrained" (in a green rectangular box)

* **Level 2 Branches (under Regularization-based methods):**

* "Prior-focused" (in a green rectangular box)

* "Data-focused" (in a green rectangular box)

* **Level 2 Branches (under Parameter isolation methods):**

* "Fixed Network" (in a green rectangular box)

* "Dynamic Architectures" (in a green rectangular box)

* **Level 3 Branches (Specific Methods):** Listed under each Level 2 branch, in yellow rectangular boxes, with associated numerical identifiers (e.g., "[16]", "[49]").

### Detailed Analysis or Content Details

Here's a breakdown of the specific methods listed under each category:

**Replay methods:**

* iCaRL [16]

* ER [49]

* SER [50]

* TEM [51]

* CoPe [33]

* DGR [12]

* PR [52]

* CCLUGM [53]

* LGM [54]

**Regularization-based methods:**

* GEM [55]

* A-GEM [6]

* GSS [48]

* EWC [27]

* IMM [28]

* SI [56]

* R-EWC [57]

* MAS [13]

* Riemannian Walk [14]

* LwF [58]

* LFL [59]

* EBLL [9]

* DMC [60]

**Parameter isolation methods:**

* PackNet [61]

* PathNet [30]

* Piggyback [62]

* HAT [63]

* PNN [64]

* Expert Gate [5]

* RCL [65]

* DAN [17]

### Key Observations

The diagram provides a structured overview of the landscape of continual learning methods. The categorization into Replay, Regularization, and Parameter Isolation methods offers a clear way to understand the different approaches to addressing the challenges of continual learning. The numerical identifiers associated with each method likely refer to publications or research papers.

### Interpretation

The diagram illustrates the diverse range of techniques developed to tackle the problem of continual learning, where a model learns new tasks without forgetting previously learned ones. The three main categories represent fundamentally different strategies:

* **Replay methods** attempt to mitigate forgetting by replaying examples from previous tasks.

* **Regularization-based methods** aim to constrain the model's updates to preserve knowledge from previous tasks.

* **Parameter isolation methods** seek to allocate specific parameters or modules to different tasks, preventing interference.

The branching structure suggests a hierarchical relationship between these methods, with more specific techniques falling under broader categories. The presence of numerical identifiers indicates that these are established methods with associated research literature. The diagram serves as a valuable resource for researchers and practitioners in the field of continual learning, providing a concise overview of the key approaches and their relationships. The diagram does not provide any quantitative data or performance comparisons, it is purely a categorization scheme.

</details>

for rehearsal, or to constrain optimization of the new task loss to prevent previous task interference.

Rehearsal methods [16], [49], [50], [51] explicitly retrain on a limited subset of stored samples while training on new tasks. The performance of these methods is upper bounded by joint training on previous and current tasks. Most notable is class incremental learner iCaRL [16], storing a subset of exemplars per class, selected to best approximate class means in the learned feature space. At test time, the class means are calculated for nearest-mean classification based on all exemplars. In data incremental learning, Rolnick et al. [49] suggest reservoir sampling to limit the number of stored samples to a fixed budget assuming an i.i.d. data stream. Continual Prototype Evolution (CoPE) [33] combines the nearest-mean classifier approach with an efficient reservoir-based sampling scheme. Additional experiments on rehearsal for class incremental learning are provided in [66].

While rehearsal might be prone to overfitting the subset of stored samples and seems to be bounded by joint training, constrained optimization is an alternative solution leaving more leeway for backward/forward transfer. As proposed in GEM [55] under the task incremental setting, the key idea is to only constrain new task updates to not interfere with previous tasks. This is achieved through projecting the estimated gradient direction on the feasible region outlined by previous task gradients through first order Taylor series approximation. A-GEM [6] relaxes the problem to project on one direction estimated by randomly selected samples from a previous task data buffer. Aljundi et al. [48] extend this solution to a pure online continual learning setting without task boundaries, proposing to select sample subsets that maximally approximate the feasible region of historical data.

In the absence of previous samples, pseudo rehearsal is an alternative strategy used in early works with shallow neural networks. The output of previous model(s) given random inputs are used to approximate previous task samples [39]. With deep networks and large input vectors (e.g. full resolution images), random input cannot cover the input space [52]. Recently, generative models have shown the ability to generate high quality images [67], [68], opening up possibilities to model the data generating distribution and retrain on generated examples [12]. However, this also adds complexity in training generative models continually, with extra care to balance retrieved examples and avoid mode collapse.

The final version of record is available at http://dx.doi.org/10.1109/TPAMI.2021.3057446

## 3.2 Regularization-based methods

## 4 CONTINUAL HYPERPARAMETER FRAMEWORK

This line of works avoids storing raw inputs, prioritizing privacy, and alleviating memory requirements. Instead, an extra regularization term is introduced in the loss function, consolidating previous knowledge when learning on new data. We can further divide these methods into data-focused and prior-focused methods.

## 3.2.1 Data-focused methods

The basic building block in data-focused methods is knowledge distillation from a previous model (trained on a previous task) to the model being trained on the new data. Silver et al. [8] first proposed to use previous task model outputs given new task input images, mainly for improving new task performance. It has been re-introduced by LwF [58] to mitigate forgetting and transfer knowledge, using the previous model output as soft labels for previous tasks. Other works [59], [60] have been introduced with related ideas, however, it has been shown that this strategy is vulnerable to domain shift between tasks [5]. In an attempt to overcome this issue, Triki et al. [9] facilitate incremental integration of shallow autoencoders to constrain task features in their corresponding learned low dimensional space.

## 3.2.2 Prior-focused methods

To mitigate forgetting, prior-focused methods estimate a distribution over the model parameters, used as prior when learning from new data. Typically, importance of all neural network parameters is estimated, with parameters assumed independent to ensure feasibility. During training of later tasks, changes to important parameters are penalized. Elastic weight consolidation (EWC) [27] was the first to establish this approach. Variational Continual Learning (VCL) introduced a variational framework for this family [69], sprouting a body of Bayesian based works [70], [71]. Zenke et al. [56] estimate importance weights online during task training. Aljundi et al. [13] suggest unsupervised importance estimation, allowing increased flexibility and online user adaptation as in [21]. Further work extends this to task free settings [72].

## 3.3 Parameter isolation methods

This family dedicates different model parameters to each task, to prevent any possible forgetting. When no constraints apply to architecture size, one can grow new branches for new tasks, while freezing previous task parameters [64], [65], or dedicate a model copy to each task [5]. Alternatively, the architecture remains static, with fixed parts allocated to each task. Previous task parts are masked out during new task training, either imposed at parameters level [30], [61], or unit level [63]. These works typically require a task oracle, activating corresponding masks or task branch during prediction. Therefore, they are restrained to a multihead setup, incapable to cope with a shared head between tasks. Expert Gate [5] avoids this problem through learning an auto-encoder gate.

Methods tackling the continual learning problem typically involve extra hyperparameters to balance the stabilityplasticity trade-off. These hyperparameters are in many cases found via a grid search, using held-out validation data from all tasks. However, this inherently violates the main assumption in continual learning, namely no access to previous task data. This may lead to overoptimistic results, that cannot be reproduced in a true continual learning setting. As it is of our concern in this survey to provide a comprehensive and fair study on the different continual learning methods, we need to establish a principled framework to set hyperparameters without violating the continual learning setting. Besides a fair comparison over the existing approaches, this general strategy dynamically determines the stability-plasticity trade-off, and therefore extends its value to real-world continual learners.

First, our proposed framework assumes only access to new task data to comply with the continual learning paradigm. Next, the framework aims at the best trade-off in stability and plasticity in a dynamic fashion. For this purpose, the set of hyperparameters H related to forgetting should be identified for the continual learning method, as we do for all compared methods in Section 5. The hyperparameters are initialized to ensure minimal forgetting of previous tasks. Then, if a predefined threshold on performance is not achieved, hyperparameter values are decayed until reaching the desired performance on the new task validation data. Algorithm 1 illustrates the two main phases of our framework, to be repeated for each new task:

Maximal Plasticity Search first finetunes model copy θ t ′ on new task data, given previous model parameters θ t . The learning rate η ∗ is obtained via a coarse grid search, which aims for highest accuracy A ∗ on a held-out validation set from the new task. The accuracy A ∗ represents the best accuracy that can be achieved while disregarding the previous tasks.

Stability Decay. In the second phase we train θ t with the acquired learning rate η ∗ using the considered continual learning method. Method specific hyperparameters are set to their highest values to ensure minimum forgetting. We define threshold p indicating tolerated drop in new task performance compared to finetuning A ∗ . When this threshold is not met, we decrease hyperparameter values in H by scalar multiplication with decay factor α , and subsequently repeat this phase. This corresponds to increasing model plasticity in order to reach the desired performance threshold. To avoid redundant stability decay iterations, decayed hyperparameters are propagated to later tasks.

## 5 COMPARED METHODS

In Section 6, we carry out a comprehensive comparison between representative methods from each of the three families of continual learning approaches introduced in Section 3. For clarity, we first provide a brief description of the selected methods, highlighting their main characteristics.

## 5.1 Replay methods

iCaRL [16] was the first replay method, focused on learning in a class-incremental way. Assuming fixed allocated

| Algorithm 1 Continual Hyperparameter Selection Frame- work | | | |

|-----------------------------------------------------------------|--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------|----|----|

| input H p ∈ [0 , 1] Ψ coarse require θ t require CLM // Maximal | hyperparameter set, α ∈ [0 , 1] decaying factor, accuracy drop margin, D t +1 new task data, learning rate grid previous task model parameters continual learning method Plasticity Search | | |

| 1: | A ∗ = 0 | | |

| 2: | for η ∈ Ψ do | | |

| 3: 4: | A ← Finetune ( D t +1 , η ; θ t ) /triangleright Finetuning accuracy if A > A ∗ then | | |

| 5: A ∗ , η ∗ ← A, η | /triangleright Update best values | | |

| 6: do | | | |

| 7: | A ← CLM D t +1 , η ∗ ; θ t ) if A < (1 - p ) A ∗ then | | |

| 10: | H← α ·H /triangleright Hyperparameter | | |

| | ∗ | | |

| | A < (1 - p ) A | | |

| 9: | | | |

| | decay | | |

| while | | | |

| | | | ( |

| | | 8: | |

memory, it selects and stores samples (exemplars) closest to the feature mean of each class. During training, the estimated loss on new classes is minimized, along with the distillation loss between targets obtained from previous model predictions and current model predictions on the previously learned classes. As the distillation loss strength correlates with preservation of the previous knowledge, this hyperparameter is optimized in our proposed framework. In our study, we consider the task incremental setting, and therefore implement iCarl also in a multi-head fashion to perform a fair comparison with other methods.

GEM [55] exploits exemplars to solve a constrained optimization problem, projecting the current task gradient in a feasible area, outlined by the previous task gradients. The authors observe increased backward transfer by altering the gradient projection with a small constant γ ≥ 0 , constituting H in our framework.

The major drawback of replay methods is limited scalability over the number of classes, requiring additional computation and storage of raw input samples. Although fixing memory limits memory consumption, this also deteriorates the ability of exemplar sets to represent the original distribution. Additionally, storing these raw input samples may also lead to privacy issues.

## 5.2 Regularization-based methods

This survey strongly focuses on regularization-based methods, comparing seven methods in this family. The regularization strength correlates to the amount of knowledge retention, and therefore constitutes H in our hyperparameter framework.

Learning without Forgetting (LwF) [58] retains knowledge of preceding tasks by means of knowledge distillation [73]. Before training the new task, network outputs for the new task data are recorded, and are subsequently used during training to distill prior task knowledge. However, the success of this method depends heavily on the new task data and how strong it is related to prior tasks. Distribution shifts with respect to the previously learned tasks can result in a gradual error build-up to the prior tasks as more dissimilar tasks are added [5], [58]. This error build-up also applies in a class-incremental setup, as shown in [16]. Another drawback resides in the additional overhead to forward all new task data, and storing the outputs. LwF is specifically designed for classification, but has also been applied to other problems, such as object detection [18].

Encoder Based Lifelong Learning (EBLL) [9] extends LwF by preserving important low dimensional feature representations of previous tasks. For each task, an undercomplete autoencoder is optimized end-to-end, projecting features on a lower dimensional manifold. During training, an additional regularization term impedes the current feature projections to deviate from previous task optimal ones. Although required memory grows linearly with the number of tasks, autoencoder size constitutes only a small fraction of the backbone network. The main computational overhead occurs in autoencoder training, and collecting feature projections for the samples in each optimization step.

Elastic Weight Consolidation (EWC) [27] introduces network parameter uncertainty in the Bayesian framework [74]. Following sequential Bayesian estimation, the old posterior of previous tasks t < T constitutes the prior for new task T , founding a mechanism to propagate old task importance weights. The true posterior is intractable, and is therefore estimated using a Laplace approximation with precision determined by the Fisher Information Matrix (FIM). Near a minimum, this FIM shows equivalence to the positive semi-definite second order derivative of the loss [75], and is in practice typically approximated by the empirical FIM to avoid additional backward passes [76]:

<!-- formula-not-decoded -->

with importance weight Ω T k calculated after training task T . Although originally requiring one FIM per task, this can be resolved by propagating a single penalty [77]. Further, the FIM is approximated after optimizing the task, inducing gradients close to zero, and hence very little regularization. This is inherently coped with in our framework, as the regularization strength is initially very high and lowered only to decay stability. Variants of EWC are proposed to address these issues in [78], [57] and [14].

Synaptic Intelligence (SI) [56] breaks the EWC paradigm of determining the new task importance weights Ω T k in a separate phase after training. Instead, they maintain an online estimate ω T during training to eventually attain

<!-- formula-not-decoded -->

with ∆ θ t k = θ t k -θ t -1 k the task-specific parameter distance, and damping parameter ξ avoiding division by zero. Note that the accumulated importance weights Ω T k are still only updated after training task T as in EWC. Further, the knife cuts both ways for efficient online calculation. First, stochastic gradient descent incurs noise in the approximated gradient during training, and therefore the authors state importance weights tend to be overestimated. Second, catastrophic forgetting in a pretrained network becomes

inevitable, as importance weights can't be retrieved. In another work, Riemannian Walk [14] combines the SI path integral with an online version of EWC to measure parameter importance.

Memory Aware Synapses (MAS) [13] redefines the parameter importance measure to an unsupervised setting. Instead of calculating gradients of the loss function L as in (3), the authors obtain gradients of the squared L 2 -norm of the learned network output function f T :

<!-- formula-not-decoded -->

Previously discussed methods require supervised data for the loss-based importance weight estimations, and are therefore confined to available training data. By contrast, MAS enables importance weight estimation on an unsupervised held-out dataset, hence capable of user-specific data adaptation.

Incremental Moment Matching (IMM) [28] estimates Gaussian posteriors for task parameters, in the same vein as EWC, but inherently differs in its use of model merging. In the merging step, the mixture of Gaussian posteriors is approximated by a single Gaussian distribution, i.e. a new set of merged parameters θ 1: T and corresponding covariances Σ 1: T . Although the merging strategy implies a single merged model for deployment, it requires storing models during training for each learned task. In their work, two methods for the merge step are proposed: mean-IMM and mode-IMM . In the former, weights θ k of task-specific networks are averaged following the weighted sum:

<!-- formula-not-decoded -->

with α t k the mixing ratio of task t , subject to ∑ T t α t k = 1 . Alternatively, the second merging method mode-IMM aims for the mode of the Gaussian mixture, with importance weights obtained from (3):

<!-- formula-not-decoded -->

When two models converge to a different local minimum due to independent initialization, simply averaging the models might result in increased loss, as there are no guarantees for a flat or convex loss surface between the two points in parameter space [79]. Therefore, IMM suggests three transfer techniques aiming for an optimal solution in the interpolation of the task-specific models: i) WeightTransfer initializes the new task network with previous task parameters; ii) Drop-Transfer is a variant of dropout [80] with previous task parameters as zero point; iii) L2-transfer is a variant of L2-regularization, again with previous task parameters redefining the zero point. In this study, we compare mean-IMM and mode-IMM with both weight-transfer and L2-transfer. As mean-IMM is consistently outperformed by mode-IMM , we refer for its full results to appendix.

## 5.3 Parameter isolation methods

This family of methods isolates parameters for specific tasks and can guarantee maximal stability by fixing the parameter subsets of previous tasks. Although this refrains stability decay, the capacity used for the new task can be minimized instead to avoid capacity saturation and ensuring stable learning for future tasks.

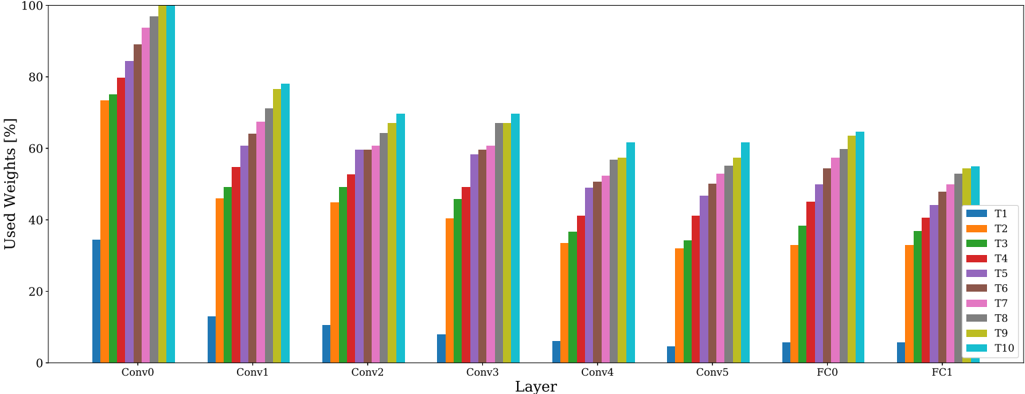

PackNet [61] iteratively assigns parameter subsets to consecutive tasks by constituting binary masks. For this purpose, new tasks establish two training phases. First, the network is trained without altering previous task parameter subsets. Subsequently, a portion of unimportant free parameters are pruned, measured by lowest magnitude. Then, the second training round retrains this remaining subset of important parameters. The pruning mask preserves task performance as it ensures to fix the task parameter subset for future tasks. PackNet allows explicit allocation of network capacity per task, and therefore inherently limits the total number of tasks. H contains the per-layer pruning fraction.

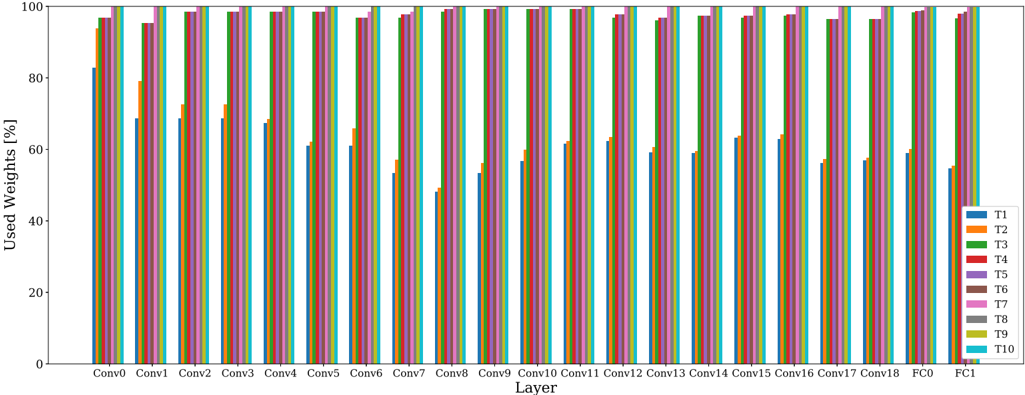

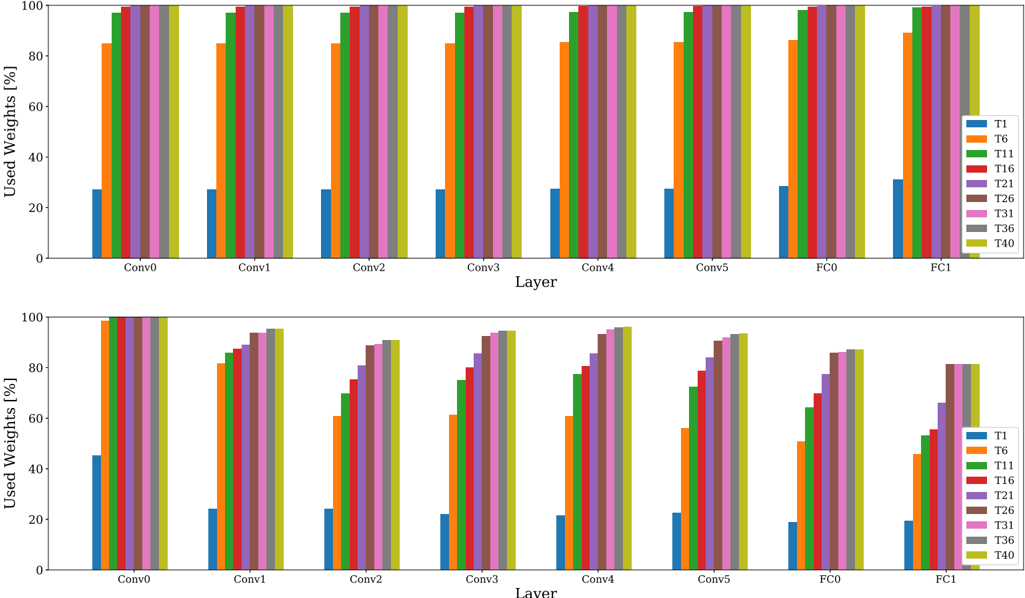

HAT [63] requires only one training phase, incorporating task-specific embeddings for attention masking. The perlayer embeddings are gated through a Sigmoid to attain unit-based attention masks in the forward pass. The Sigmoid slope is rectified through training of each epoch, initially allowing mask modifications and culminating into near-binary masks. To facilitate capacity for further tasks, a regularization term imposes sparsity on the new task attention mask. Core to this method is constraining parameter updates between two units deemed important for previous tasks, based on the attention masks. Both regularization strength and Sigmoid slope are considered in H . We only report results on Tiny Imagenet as difficulties regarding asymmetric capacity and hyperparameter sensitivity prevent using HAT for more difficult setups [81]. We refer to Appendix B.5 and B.6 for a detailed analysis on capacity usage of parameter isolation methods.

## 6 EXPERIMENTS

In this section we first discuss the experimental setup in Section 6.1, followed by a comparison of all the methods on a common BASE model in Section 6.2. The effects of changing the capacity of this model are discussed in Section 6.3. Next, in Section 6.4 we look at the effect of two popular methods for regularization. We continue in Section 6.5, scrutinizing the behaviour of continual learning methods in a realworld setup, abandoning the artificially imposed balance between tasks. In addition, we investigate the effect of the task ordering in both the balanced and unbalanced setup in Section 6.6, and elucidate a qualitative comparison in Table 9 of Section 6.7. Finally, Section 6.8 summarizes our main findings in Table 10.

## 6.1 Experimental Setup

Datasets. We conduct image classification experiments on three datasets, the main characteristics of which are summarized in Table 1. First, we use the Tiny Imagenet dataset [82]. This is a subset of 200 classes from ImageNet [83], rescaled to image size 64 × 64 . Each class contains 500 samples subdivided into training ( 80% ) and validation ( 20% ), and 50 samples for evaluation. In order to construct a balanced dataset, we assign an equal amount of 20 randomly chosen classes to each task in a sequence of 10 consecutive tasks. This task incremental setting allows using an oracle at test

TABLE 1: The balanced Tiny Imagenet, and unbalanced iNaturalist and RecogSeq dataset characteristics.

| | Tiny Imagenet | iNaturalist | RecogSeq |

|-----------------|-----------------|---------------|-------------|

| Tasks | 10 | 10 | 8 |

| Classes/task | 20 | 5 to 314 | 10 to 200 |

| Train data/task | 8k | 0.6k to 66k | 2k to 73k |

| Val. data/task | 1k | 0.1k to 9k | 0.6k to 13k |

| Task selection | random class | supercategory | dataset |

TABLE 2: The RecogSeq dataset sequence details. Note that VOC Actions represents the human action classification subset of the VOC challenge 2012 [85].

| Task | Classes | Samples | Samples | Samples |

|----------------------------------------------------------------------------------------------------------------------------|--------------|------------------------------------|----------------------------------|----------------------------------|

| Oxford Flowers [86] MIT Scenes [87] Caltech-UCSD Birds Stanford Cars [89] FGVC-Aircraft [90] VOC Actions [85] Letters [91] | | Train | Val | Test |

| | 102 67 11 52 | 2040 5360 5994 8144 6666 3102 6850 | 3074 670 2897 4020 1666 1554 580 | 3075 670 2897 4021 1667 1554 570 |

| [88] | 200 | | | |

| | 196 | | | |

| | 100 | | | |

| SVHN [92] | 10 | 73257 | 13016 | 13016 |

time for our evaluation per task, ensuring all tasks are roughly similar in terms of difficulty, size, and distribution, making the interpretation of the results easier.

The second dataset is based on iNaturalist [84], which aims for a more real-world setting with a large number of fine-grained categories and highly imbalanced classes. On top, we impose task imbalance and domain shifts between tasks by assigning 10 super-categories of species as separate tasks. We selected the most balanced 10 super-categories from the total of 14 and only retained categories with at least 100 samples. More details on the statistics for each of these tasks can be found in Table 8. We only utilize the training data, subdivided in training ( 70% ), validation ( 20% ) and evaluation ( 10% ) sets, with all images measuring 800 × 600 .

Thirdly, we adopt a sequence of 8 highly diverse recognition tasks ( RecogSeq ) as in [5] and [13], which also targets distributing an imbalanced number of classes, and reaches beyond object recognition with scene and action recognition. This sequence is composed of 8 consecutive datasets, from fine-grained to coarse classification of objects, actions and scenes, going from flowers, scenes, birds and cars, to aircrafts, actions, letters and digits. Details are provided in Table 2, with validation and evaluation split following [5].

In this survey we scrutinize the effects of different task orderings for both Tiny Imagenet and iNaturalist in Section 6.6. Apart from that section, discussed results on both datasets are performed on a random ordering of tasks, and the fixed dataset ordering for RecogSeq.

Models. We summarize in Table 3 the models used for experiments in this work. Due to the limited size of Tiny Imagenet we can easily run experiments with different models. This allows to analyze the influence of model capacity (Section 6.3) and regularization for each model configuration (Section 6.4). This is important, as the effect of model size and architecture on the performance of different continual learning methods has not received much attention so far. We configure a BASE model, two models with less (SMALL) and more (WIDE) units per layer, and a DEEP model with more layers. The models are based on a VGG configuration [93], but with less parameters due to the small image size. We reduce the feature extractor to comprise 4 max-pooling layers, each preceded by a stack of identical convolutional layers with a consistent 3 × 3 receptive field. The first maxpooling layer is preceded by one conv. layer with 64 filters. Depending on the model, we increase subsequent conv. layer stacks with a multiple of factor 2. The models have a classifier consisting of 2 fully connected layers with each 128 units for the SMALL model and 512 units for the other three models. The multi-head setting imposes a separate fully connected layer with softmax for each task, with the number of outputs defined by the classes in that task. The detailed description can be found in Appendix A.

The size of iNaturalist and RecogSeq impose arduous learning. Therefore, we conduct the experiments solely for AlexNet [94], pretrained on ImageNet.

Evaluation Metrics. To measure performance in the continual learning setup, we evaluate accuracy and forgetting per task, after training each task. We define this measure of forgetting [14] as the difference between the expectation of acquired knowledge of a task, i.e. the accuracy when first learning a task, and the accuracy obtained after training one or more additional tasks. In the figures, we focus on evolution of accuracy for each task as more tasks are added. In the tables, we report average accuracy and average forgetting on the final model, obtained by evaluating each task after learning the entire task sequence.

Baselines. The discussed continual learning methods in Section 5 are compared against several baselines:

- 1) Finetuning starts form the previous task model to optimize current task parameters. This baseline greedily trains each task without considering previous task performance, hence introducing catastrophic forgetting, and representing the minimum desired performance.

- 2) Joint training considers all data in the task sequence simultaneously, hence violating the continual learning setup (indicated with appended ' ∗ ' in reported results). This baseline provides a target reference performance.

For the replay methods we consider two additional finetuning baselines, extending baseline (1) with the benefit of using exemplars:

- 3) Basic rehearsal with Full Memory (R-FM) fully exploits total available exemplar memory R , incrementally dividing equal capacity over all previous tasks. This is a baseline for replay methods defining memory management policies to exploit all memory (e.g. iCaRL).

- 4) Basic rehearsal with Partial Memory (R-PM) preallocates fixed exemplar memory R/ T over all tasks, assuming the amount of tasks T is known beforehand. This is used by methods lacking memory management policies (e.g. GEM).

Replay Buffers. Replay methods (GEM, iCaRL) and corresponding baselines (R-PM, R-FM) can be configured with

TABLE 3: Models used for Tiny Imagenet, iNaturalist and RecogSeq experiments.

| | Model | Feature Extractor | Feature Extractor | Feature Extractor | Classifier (w/o head) | Classifier (w/o head) | Total Parameters | Pretrained | Multi-head |

|-----------------------|---------|---------------------|---------------------|---------------------|-------------------------|-------------------------|--------------------|-------------------|--------------|

| Tiny Imagenet | SMALL | 6 | 4 | 334k | 2 | 279k | 613k | ✗ | /check |

| | BASE | 6 | 4 | 1.15m | 2 | 2.36k | 3.51m | ✗ | /check |

| | WIDE | 6 | 4 | 4.5m | 2 | 4.46m | 8.95m | ✗ | /check |

| | DEEP | 20 | 4 | 4.28m | 2 | 2.36k | 6.64m | ✗ | /check |

| iNaturalist/ RecogSeq | AlexNet | 5 | 3 | 2.47m | 2 (with Dropout) | 54.5m | 57.0m | /check (Imagenet) | /check |

arbitrary replay buffer size. Too large buffers would result in unfair comparison to regularization and parameter isolation methods, with in its limit even holding all data for the previous tasks, as in joint learning, which is not compliant with the continual learning setup. Therefore, we use the memory required to store the BASE model as a basic reference for the amount of exemplars. This corresponds to the additional memory needed to propagate importance weights in the prior-focused methods (EWC, SI, MAS). For Tiny Imagenet, this gives a total replay buffer capacity of 4 . 5 k exemplars. We also experiment with a buffer of 9 k exemplars to examine the influence of increased buffer capacity. Note that the BASE reference implies replay methods to use a significantly different amount of memory than the non-replay based methods; more memory for the SMALL model), and less memory for the WIDE and DEEP models. Comparisons between replay and non-replay based methods should thus only be done for the BASE model. In the following, notation of the methods without replay buffer size refers to the default 4 . 5 k buffer.

Learning details. During training the models are optimized with stochastic gradient descent with a momentum of 0 . 9 . Training lasts for 70 epochs unless preempted by early stopping. Standard AlexNet is configured with dropout for iNaturalist and RecogSeq. For Tiny Imagenet we do not use any form of regularization by default, except for Section 6.4 explicitly scrutinizing regularization influence. The framework we proposed in Section 4 can only be exploited in the case of forgetting-related hyperparameters, in which case we set p = 0 . 2 and α = 0 . 5 . All methods discussed in Section 5 satisfy this requirement, except for IMM with L2 transfer for which we could not identify a specific hyperparameter related to forgetting. For specific implementation details about the continual learning methods we refer to Appendix A.

## 6.2 Comparing Methods on the BASE Network

Tiny Imagenet. We start evaluation of continual learning methods discussed in Section 5 with a comparison using the BASE network, on the Tiny Imagenet dataset, with random ordering of tasks. This provides a balanced setup, making interpretation of results easier.

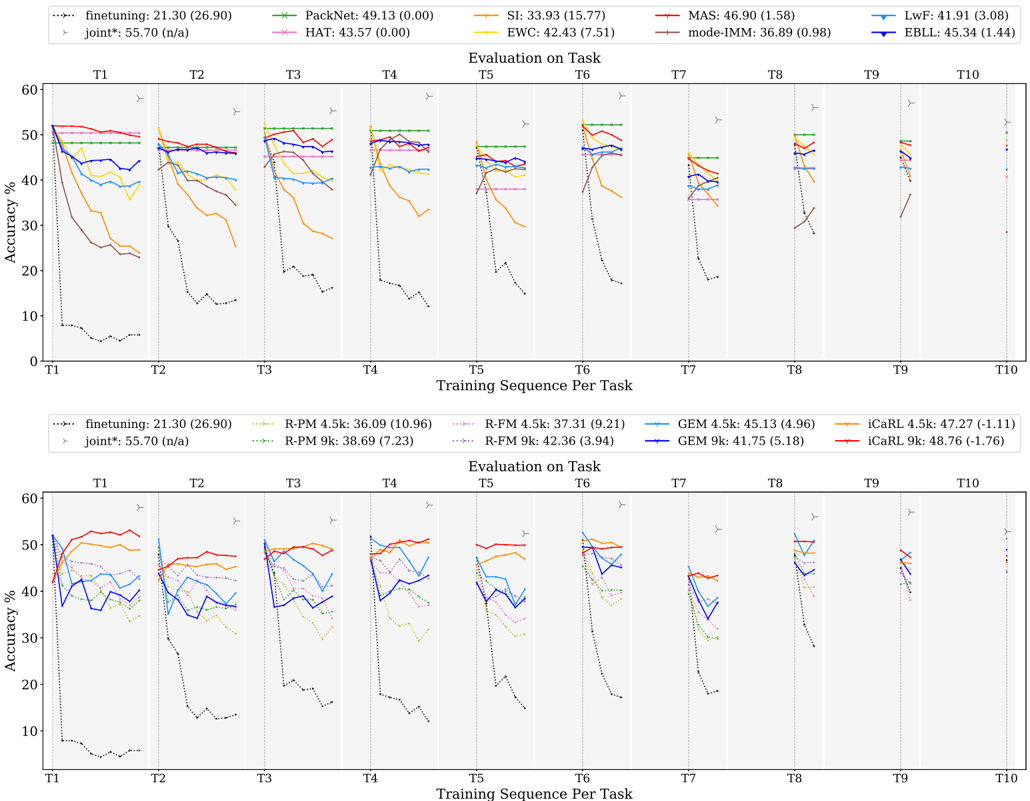

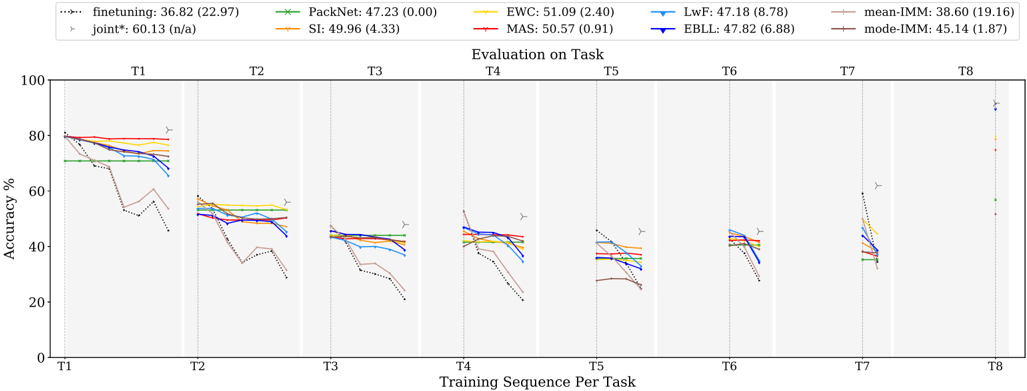

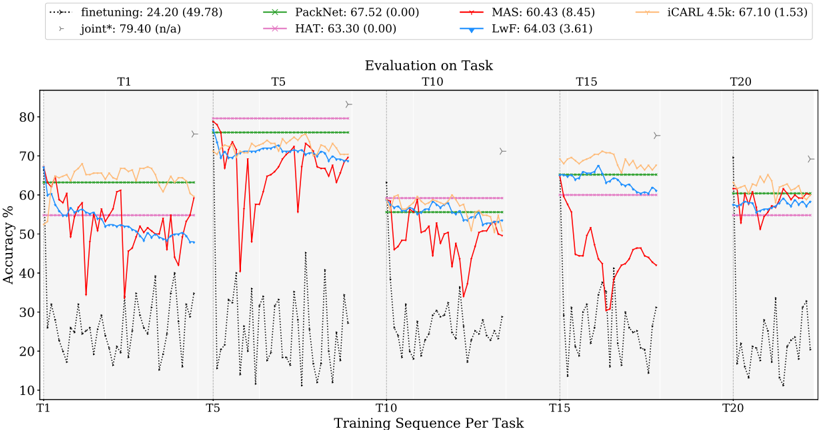

Figure 2 shows results for all three families. Each of these figures consists of 10 subpanels, with each subpanel showing test accuracy evolution for a specific task (e.g. Task 1 for the leftmost panel) as more tasks are added for training. Since the n -th task is added for training only after n steps, curves get shorter as we move to subpanels on the right.

## 6.2.1 Discussion

General observations. As a reference, it is relevant to highlight the soft upper bound obtained when training all tasks jointly. This is indicated by the star symbol in each of the subpanels. For the Tiny Imagenet dataset, all tasks have the same number of classes, same amount of training data and similar level of difficulty, resulting in similar accuracies for all tasks under the joint training scheme. The average accuracy for joint training on this dataset is 55 . 70% , while random guessing would result in 5% .

Further, as reported ample times in literature, the finetuning baseline suffers severely from catastrophic forgetting: initially good results are obtained when learning a task, but as soon as a new task is added, performance drops, resulting in poor average accuracy of only 21 . 30% and 26 . 90% average forgetting.

With 49 . 13% average accuracy, PackNet shows highest overall performance after training all tasks. When learning new tasks, due to compression PackNet only has a fraction of the total model capacity available. Therefore, it typically performs worse on new tasks compared to other methods. However, by fixing task parameters through masking, it allows complete knowledge retention until the end of the task sequence (no forgetting yields flat curves in the figure), resulting in highest accumulation of knowledge. This holds at least when working with long sequences, where forgetting errors gradually build up for other methods.

MAS and iCaRL show competitive results w.r.t. PackNet (resp. 46 . 90% and 47 . 27% ). iCaRL starts with a significantly lower accuracy for new tasks, due to its nearest-neighbour based classification, but improves over time, especially for the first few tasks. Further, doubling replay-buffer size to 9 k enhances iCaRL performance to 48 . 76% .

Regularization-based methods. In prior experiments where we did a grid search over a range of hyperparameters over the whole sequence (rather than using the framework introduced in Section 4), we observed MAS to be remarkably more robust to the choice of hyperparameter values compared to the two related methods EWC and SI. Switching to the continual hyperparameter selection framework described in Section 4, this robustness leads to superior results for MAS with 46 . 90% compared to the other two ( 42 . 43% and 33 . 93% ). Especially improved forgetting stands out ( 1 . 58% vs 7 . 51% and 15 . 77% ). Further, SI underperforms compared to EWC on Tiny Imagenet. We hypothesize this may be due to overfitting we observed when training on the BASE model (see results Appendix B.2). SI inherently constrains parameter importance estimation to the training data only, which is opposed to EWC and MAS able to determine parameter importance both on validation and

training data in a separate phase after task training. Further, mode-IMM catastrophically forgets in evaluation on the first task, but shows transitory recovering through backward transfer in all subsequent tasks. Overall for Tiny Imagenet, IMMdoes not seem competitive with the other strategies for continual learning - especially if one takes into account that they need to store all previous task models, making them much more expensive in terms of storage.

The data-driven methods LwF and EBLL obtain similar results as EWC ( 41 . 91% and 45 . 34% vs 42 . 43% ). EBLL improves over LwF by residing closer to the optimal task representations, lowering average forgetting and improving accuracy. Apart from the first few tasks, curves for these methods are quite flat, indicating low levels of forgetting.

Replay methods. iCaRL starts from the same model as GEM and the regularization-based methods, but uses a feature classifier based on a nearest-neighbor scheme. As indicated earlier, this results in lower accuracies on the first task after training. Remarkably, for about half of the tasks, the iCaRL accuracy increases when learning additional tasks, resulting in a salient negative average forgetting of -1 . 11% . Such level of backward transfer is unusual. After training all ten tasks, a competitive result of 47 . 27% average accuracy can be reported. Comparing iCaRL to its baseline R-FM shows significant improvements over basic rehearsal ( 47 . 27% vs 37 . 31% ). Doubling the size of the replay buffer (iCaRL 9 k) increases performance even more, pushing iCarl closer to PackNet with 48 . 76% average accuracy.

The results of GEM are significantly lower than those of iCaRL ( 45 . 13% vs 47 . 27% ). GEM is originally designed for an online learning setup, while in this comparison each method can exploit multiple epochs for a task. Additional experiments in Appendix B.3 compare GEM sensitivity to the amount of epochs with iCaRL, from which we procure a GEM setup with 5 epochs for all the experiments in this work. Furthermore, the lack of memory management policy in GEM gives iCaRL a compelling advantage w.r.t. the amount of exemplars for the first tasks, e.g. training Task 2 comprises a replay buffer of Task 1 with factor 10 (number of tasks) more exemplars. Surprisingly, GEM 9 k with twice as much exemplar capacity doesn't perform better than GEM 4 . 5 k. This unexpected behavior may be due to the random exemplar selection yielding a less representative subset. Nevertheless, GEM convincingly improves accuracy of the basic replay baseline R-PM with the same partial memory scheme ( 45 . 13% vs 36 . 09% ).

Comparing the two basic rehearsal baselines that use the memory in different ways, we observe the scheme exploiting full memory from the start (R-FM) giving significantly better results than R-PM for tasks 1 to 7, but not for the last three. As more tasks are seen, both baselines converge to the same memory scheme, where for the final task R-FM allocates an equal portion of memory to each task in the sequence, hence equivalent to R-PM.

Parameter isolation methods. Both HAT and PackNet exhibit the virtue of masking, reporting zero forgetting (see the two flat curves). HAT performs better for the first task, but builds up a salient discrepancy with PackNet for later tasks. PackNet rigorously assigns layer-wise portions of network capacity per task, while HAT imposes sparsity by regularization, which does not guarantee uniform capacity over layers and tasks.

## 6.3 Effects of Model Capacity

Tiny Imagenet. Acrucial design choice in continual learning concerns network capacity. Aiming to learn a long task sequence, a high capacity model seems preferable. However, learning the first task using such model, with only data from a single task, holds the risk of overfitting, jeopardizing generalization performance. So far, we compared all methods on the BASE model. Next, we study effects of extending or reducing capacity in this architecture. Details of the models are given in Section 6.1 and Appendix A. In the following, we discuss results of model choice, again using the random ordering of Tiny Imagenet. These results are summarized in the top part of Table 4 for parameter isolation and regularization-based methods and Table 5 for replay methods 2 .

## 6.3.1 Discussion

Overfitting. We observed overfitting for several models and methods, and not only for the WIDE and DEEP models. Comparing different methods, SI seems quite vulnerable to overfitting issues, while PackNet prevents overfitting to some extent, thanks to the network compression phase.

General Observations. Selecting highest accuracy disregarding which model is used, iCaRL 9 k and PackNet remain on the lead with 49 . 94% and 49 . 13% . Further, LwF shows to be competitive with MAS (resp. 46 . 79% and 46 . 90% ).

Baselines. Taking a closer look at the finetuning baseline results (see purple box in Table 4), we observe that it does not reach the same level of accuracy with the SMALL model as with the other models. In particular, the initial accuracies are similar, yet the level of forgetting is much more severe, due to the limited model capacity to learn new tasks.

Joint training results on the DEEP network (blue box) are inferior compared to shallower networks, implying the deep architecture to be less suited to accumulate all knowledge from the task sequence. As performance of joint learning serves as a soft upper bound for the continual learning methods, this already serves as an indication that a deep model may not be optimal.

In accordance, replay baselines R-PM and R-FM show to be quite model agnostic with all but the DEEP model performing very similar. The baselines experience both less forgetting and increased average accuracy when doubling replay buffer size.

SMALL model. Finetuning and SI suffer from severe forgetting ( > 20% ) imposed by the decreased capacity of the network (see red underlining), making these combinations worthless in practice. Other methods experience alleviated forgetting, with EWC most saliently benefitting from a small network (see green underlinings).

WIDE model. Remarkable in WIDE model results is SI consistently outperforming EWC, in contrast with other model

2. Note that, for all of our observations, we also checked the results obtained for the two other task orders (reported in the middle and bottom part of Table 4 and Table 5). The reader can check that the observations are quite consistent.

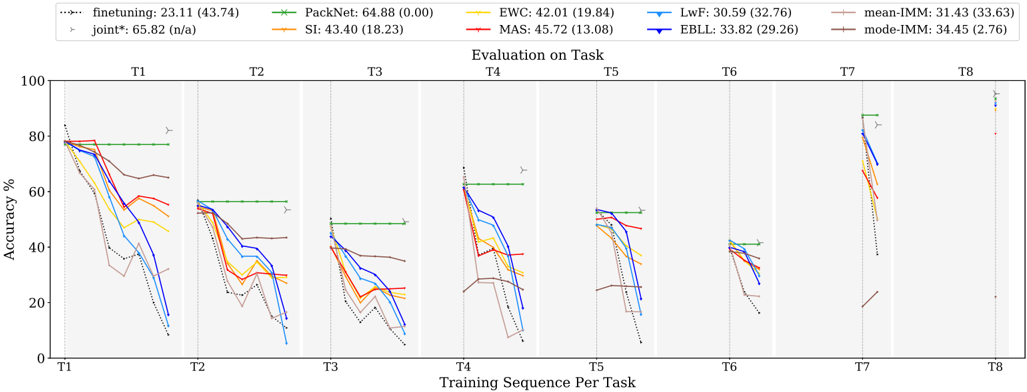

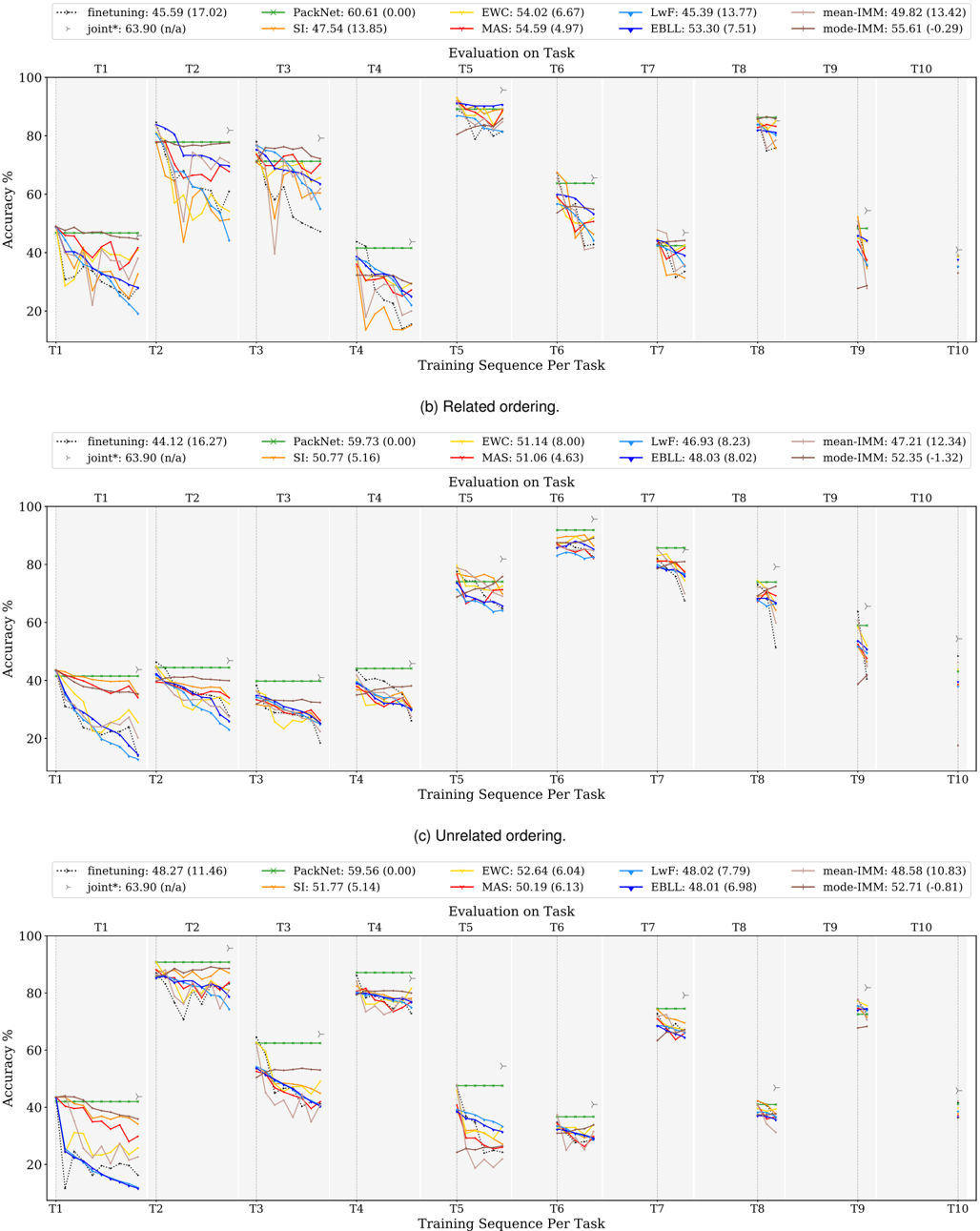

Fig. 2: Parameter isolation and regularization-based methods (top) and replay methods (bottom) on Tiny Imagenet for the BASE model with random ordering, reporting average accuracy (forgetting) in the legend.

<details>

<summary>Image 2 Details</summary>

### Visual Description

\n

## Line Chart: Accuracy vs. Training Sequence Per Task

### Overview

The image presents two line charts displaying accuracy percentages over a training sequence per task. Each chart compares the performance of several different learning algorithms (finetuning, PackNet, SI, EWC, MAS, LwF, mode-IMM, EBLL in the top chart and finetuning, R-PM 4.5k, R-PM 9k, GEM 4.5k, iCaRL 4.5k, GEM 9k, iCaRL 9k in the bottom chart). The x-axis represents the training sequence (T1 to T10), and the y-axis represents accuracy in percentage. Each line represents a different algorithm, and the charts show how accuracy changes as the training sequence progresses. Error bars are present for each data point, indicating the standard deviation.

### Components/Axes

* **X-axis:** Training Sequence Per Task (T1, T2, T3, T4, T5, T6, T7, T8, T9, T10)

* **Y-axis:** Accuracy (%) - Scale ranges from 0 to 60.

* **Top Chart Legend (positioned at the top-center):**

* finetuning: (21.30 (26.90)) - Dotted dark red line

* joint*: (55.70 (n/a)) - Dotted dark blue line

* PackNet: (49.13 (0.00)) - Solid green line

* HAT: (43.57 (0.00)) - Solid red line

* SI: (33.93 (15.77)) - Solid purple line

* EWC: (42.43 (7.51)) - Solid orange line

* MAS: (46.90 (1.58)) - Solid teal line

* LwF: (41.91 (3.44)) - Solid light blue line

* mode-IMM: (36.89 (0.98)) - Solid yellow line

* EBLL: (45.34 (1.08)) - Solid pink line

* **Bottom Chart Legend (positioned at the bottom-center):**

* finetuning: (21.30 (26.90)) - Dotted dark red line

* joint*: (55.70 (n/a)) - Dotted dark blue line

* R-PM 4.5k: (36.09 (10.96)) - Solid green line

* R-PM 9k: (38.69 (7.23)) - Solid red line

* GEM 4.5k: (43.13 (4.96)) - Solid purple line

* GEM 9k: (41.75 (5.18)) - Solid orange line

* iCaRL 4.5k: (47.27 (1.11)) - Solid teal line

* iCaRL 9k: (48.76 (1.76)) - Solid light blue line

* **Title (positioned at the center):** "Evaluation on Task"

### Detailed Analysis or Content Details

**Top Chart:**

* **finetuning (dark red, dotted):** Starts around 20% at T1, fluctuates between 20-30% throughout the training sequence, with a slight upward trend towards T10.

* **joint* (dark blue, dotted):** Starts around 50% at T1, remains relatively stable around 50-60% throughout the training sequence.

* **PackNet (green, solid):** Starts around 40% at T1, increases to approximately 50% by T4, then fluctuates between 45-55% for the remainder of the sequence.

* **HAT (red, solid):** Starts around 35% at T1, increases to approximately 45% by T3, then fluctuates between 40-50% for the remainder of the sequence.

* **SI (purple, solid):** Starts around 25% at T1, increases to approximately 35% by T3, then fluctuates between 30-40% for the remainder of the sequence.

* **EWC (orange, solid):** Starts around 35% at T1, increases to approximately 45% by T4, then fluctuates between 40-50% for the remainder of the sequence.

* **MAS (teal, solid):** Starts around 40% at T1, increases to approximately 50% by T4, then fluctuates between 45-55% for the remainder of the sequence.

* **LwF (light blue, solid):** Starts around 35% at T1, increases to approximately 45% by T4, then fluctuates between 40-50% for the remainder of the sequence.

* **mode-IMM (yellow, solid):** Starts around 30% at T1, increases to approximately 40% by T4, then fluctuates between 35-45% for the remainder of the sequence.

* **EBLL (pink, solid):** Starts around 40% at T1, increases to approximately 50% by T4, then fluctuates between 45-55% for the remainder of the sequence.

**Bottom Chart:**

* **finetuning (dark red, dotted):** Similar to the top chart, starts around 20% at T1, fluctuates between 20-30% throughout the training sequence.

* **joint* (dark blue, dotted):** Similar to the top chart, starts around 50% at T1, remains relatively stable around 50-60% throughout the training sequence.

* **R-PM 4.5k (green, solid):** Starts around 30% at T1, increases to approximately 40% by T4, then fluctuates between 35-45% for the remainder of the sequence.

* **R-PM 9k (red, solid):** Starts around 30% at T1, increases to approximately 40% by T4, then fluctuates between 35-45% for the remainder of the sequence.

* **GEM 4.5k (purple, solid):** Starts around 35% at T1, increases to approximately 45% by T4, then fluctuates between 40-50% for the remainder of the sequence.

* **GEM 9k (orange, solid):** Starts around 35% at T1, increases to approximately 45% by T4, then fluctuates between 40-50% for the remainder of the sequence.

* **iCaRL 4.5k (teal, solid):** Starts around 40% at T1, increases to approximately 50% by T4, then fluctuates between 45-55% for the remainder of the sequence.

* **iCaRL 9k (light blue, solid):** Starts around 40% at T1, increases to approximately 50% by T4, then fluctuates between 45-55% for the remainder of the sequence.

### Key Observations

* The "joint*" method consistently achieves the highest accuracy across both charts, remaining stable around 55-60%.

* "finetuning" consistently shows the lowest accuracy, fluctuating between 20-30%.

* The performance of most algorithms tends to plateau after T4, with fluctuations around a certain accuracy level.

* The error bars indicate variability in performance, but the general trends remain consistent.

* The 9k versions of R-PM, GEM, and iCaRL generally perform slightly better than their 4.5k counterparts.

### Interpretation

The charts demonstrate the performance of various continual learning algorithms on a task. The "joint*" method appears to be the most effective, maintaining high accuracy throughout the training sequence. This suggests that the joint training approach is well-suited for this particular task. "finetuning" consistently underperforms, indicating that it struggles to retain knowledge from previous tasks as new tasks are introduced. The other algorithms show intermediate performance, with varying degrees of success. The slight improvement observed with the 9k versions suggests that increasing the model capacity can lead to better performance, but the gains are not substantial. The error bars highlight the inherent variability in machine learning performance, and it is important to consider these uncertainties when interpreting the results. The plateauing of accuracy after T4 suggests that the algorithms may be reaching their learning capacity or that the task becomes saturated. Overall, the data provides valuable insights into the strengths and weaknesses of different continual learning algorithms and can guide the selection of appropriate methods for specific applications.

</details>

results (see orange box; even more clear in middle and bottom tables in Table 4, which will be discussed later). EWC performs worse for the high capacity WIDE and DEEP models and, as previously discussed, attains best performance for the SMALL model. LwF, EBLL and PackNet mainly reach their top performance when using the WIDE model, with SI performing most stable on both the WIDE and BASE models. IMM also shows increased performance when using the BASE and WIDE model.

DEEP model. Over the whole line of methods (yellow box), extending the BASE model with additional convolutional layers results in lower performance. As we already observed overfitting on the BASE model, additional layers may introduce extra unnecessary layers of abstraction. For the DEEP model iCaRL outperforms all continual learning methods with both memory sizes. HAT deteriorates significantly for the DEEP model, especially in the random ordering, for which we observe issues regarding asymmetric allocation of network capacity. This makes the method sensitive to ordering of tasks, as early fixed parameters determine performance for later tasks at saturated capacity. We discuss this in more detail in Appendix B.5.

Model agnostic. Over all orderings which will be discussed in Section 6.6, some methods don't exhibit a preference for any model, except for the common aversion for the detrimental DEEP model. This is in general most salient for all replay methods in Table 5, but also for PackNet, MAS, LwF and EBLL in Table 4.

Conclusion. We can conclude that (too) deep model architectures do not provide a good match with the continual learning setup. For the same amount of feature extractor parameters, WIDE models obtain significant better results (on average 11% better over all methods in Table 4 and Table 5). Also too small models should be avoided, as the limited available capacity can cause forgetting. On average we observe a modest 0 . 89% more forgetting for the SMALL model compared to the BASE model. At the same time, for some models, poor results may be due to overfitting, which can possibly be overcome using regularization, as we will study next.

TABLE 4: Parameter isolation and regularization-based methods results on Tiny Imagenet for different models with random (top), easy to hard (middle) and hard to easy (bottom) ordering of tasks, reporting average accuracy (forgetting).

| Model | finetuning | joint* | PackNet | HAT | SI | EWC | MAS | LwF | EBLL | mode-IMM |

|---------|---------------|-------------|--------------|---------------|---------------|---------------|---------------|---------------|---------------|---------------|

| SMALL | 16.25 (34.84) | 57.00 (n/a) | 46.68 (0.00) | 44.19 (0.00) | 23.91 (23.26) | 45.13 (0.86) | 40.58 (0.78) | 44.06 (-0.44) | 44.13 (-0.53) | 29.63 (3.06) |

| BASE | 21.30 (26.90) | 55.70 (n/a) | 49.13 (0.00) | 43.57 (0.00) | 33.93 (15.77) | 42.43 (7.51) | 46.90 (1.58) | 41.91 (3.08) | 45.34 (1.44) | 36.89 (0.98) |

| WIDE | 25.28 (24.15) | 57.29 (n/a) | 47.64 (0.00) | 43.78 (0.50) | 33.86 (15.16) | 31.10 (17.07) | 45.08 (2.58) | 46.79 (1.19) | 46.25 (1.72) | 36.42 (1.66) |

| DEEP | 20.82 (20.60) | 51.04 (n/a) | 35.54 (0.00) | 8.06 (3.21) | 24.53 (12.15) | 29.14 (7.92) | 33.58 (0.91) | 32.28 (2.58) | 27.78 (3.14) | 27.51 (0.47) |

| Model | finetuning | joint* | PackNet | HAT | SI | EWC | MAS | LwF | EBLL | mode-IMM |

| SMALL | 16.06 (35.40) | 57.00 (n/a) | 49.21 (0.00) | 43.89 (0.00) | 35.98 (13.02) | 40.18 (7.88) | 44.29 (1.77) | 45.04 (1.89) | 42.07 (1.73) | 26.13 (3.03) |

| BASE | 23.26 (24.85) | 55.70 (n/a) | 50.57 (0.00) | 43.93 (-0.02) | 33.36 (14.28) | 34.09 (13.25) | 44.02 (1.30) | 43.46 (2.53) | 43.60 (1.71) | 36.81 (-1.17) |

| WIDE | 19.67 (29.95) | 57.29 (n/a) | 47.37 (0.00) | 41.63 (0.25) | 34.84 (13.14) | 28.35 (21.16) | 45.58 (1.52) | 42.66 (1.07) | 44.12 (3.46) | 38.68 (-1.09) |

| DEEP | 23.90 (17.91) | 51.04 (n/a) | 34.56 (0.00) | 28.64 (0.87) | 24.56 (14.41) | 24.45 (15.22) | 35.23 (2.37) | 27.61 (3.70) | 29.73 (1.95) | 25.89 (-2.09) |

| Model | finetuning | joint* | PackNet | HAT | SI | EWC | MAS | LwF | EBLL | mode-IMM |

| SMALL | 18.62 (28.68) | 57.00 (n/a) | 44.42 (0.00) | 33.52 (1.55) | 40.39 (4.50) | 41.62 (3.54) | 40.90 (1.35) | 42.36 (0.63) | 43.60 (-0.05) | 24.95 (1.66) |

| BASE | 21.17 (22.73) | 55.70 (n/a) | 43.17 (0.00) | 40.21 (0.18) | 40.79 (2.58) | 41.83 (1.51) | 41.98 (0.14) | 41.58 (1.40) | 41.57 (0.82) | 34.58 (0.23) |

| WIDE | 25.25 (22.69) | 57.29 (n/a) | 45.53 (0.00) | 41.76 (0.07) | 37.91 (8.05) | 29.91 (15.77) | 43.55 (-0.29) | 43.87 (1.26) | 42.42 (0.85) | 35.24 (-0.82) |

| DEEP | 15.33 (20.66) | 51.04 (n/a) | 30.73 (0.00) | 27.21 (3.21) | 22.97 (13.23) | 22.32 (13.86) | 32.99 (2.19) | 30.77 (2.51) | 30.15 (2.67) | 26.38 (1.20) |

TABLE 5: Replay methods results on Tiny Imagenet for different models with random (top), easy to hard (middle) and hard to easy (bottom) ordering of tasks, reporting average accuracy (forgetting).

| Model | finetuning | joint* | R-PM 4.5k | R-PM 9k | R-FM 4.5k | R-FM 9k | GEM 4.5k | GEM 9k | iCaRL 4.5k | iCaRL 9k |

|---------|---------------|-------------|---------------|--------------|--------------|--------------|---------------|--------------|---------------|---------------|

| SMALL | 16.25 (34.84) | 57.00 (n/a) | 36.97 (12.21) | 40.11 (9.23) | 39.60 (9.57) | 41.35 (6.33) | 39.44 (7.38) | 41.59 (4.17) | 43.22 (-1.39) | 46.32 (-1.07) |

| BASE | 21.30 (26.90) | 55.70 (n/a) | 36.09 (10.96) | 38.69 (7.23) | 37.31 (9.21) | 42.36 (3.94) | 45.13 (4.96) | 41.75 (5.18) | 47.27 (-1.11) | 48.76 (-1.76) |

| WIDE | 25.28 (24.15) | 57.29 (n/a) | 36.47 (12.45) | 41.51 (5.15) | 39.25 (9.26) | 41.53 (6.02) | 40.32 (6.97) | 44.23 (3.94) | 44.20 (-1.43) | 49.94 (-2.80) |

| DEEP | 20.82 (20.60) | 51.04 (n/a) | 27.61 (7.14) | 28.99 (6.27) | 32.26 (3.33) | 33.10 (4.70) | 29.66 (6.57) | 23.75 (6.93) | 36.12 (-0.93) | 37.16 (-1.64) |

| Model | finetuning | joint* | R-PM 4.5k | R-PM 9k | R-FM 4.5k | R-FM 9k | GEM 4.5k | GEM 9k | iCaRL 4.5k | iCaRL 9k |

| SMALL | 16.06 (35.40) | 57.00 (n/a) | 37.09 (11.64) | 40.82 (8.08) | 38.47 (9.62) | 40.81 (7.73) | 39.07 (9.45) | 42.16 (6.93) | 48.55 (-1.17) | 46.67 (-2.03) |

| BASE | 23.26 (24.85) | 55.70 (n/a) | 36.70 (8.88) | 39.85 (6.59) | 38.27 (8.35) | 40.95 (6.21) | 30.73 (10.39) | 40.20 (7.41) | 46.30 (-1.53) | 47.47 (-2.22) |

| WIDE | 19.67 (29.95) | 57.29 (n/a) | 39.43 (9.10) | 42.55 (5.32) | 40.67 (7.52) | 43.23 (4.09) | 44.49 (4.66) | 40.78 (8.14) | 48.13 (-2.01) | 44.59 (-2.44) |

| DEEP | 23.90 (17.91) | 51.04 (n/a) | 30.98 (6.32) | 32.06 (4.16) | 29.62 (6.85) | 33.92 (3.56) | 25.29 (12.79) | 29.09 (6.30) | 32.46 (-0.35) | 34.64 (-1.25) |

| Model | finetuning | joint* | R-PM 4.5k | R-PM 9k | R-FM 4.5k | R-FM 9k | GEM 4.5k | GEM 9k | iCaRL 4.5k | iCaRL 9k |

| SMALL | 18.62 (28.68) | 57.00 (n/a) | 33.69 (11.06) | 37.68 (6.34) | 37.18 (7.13) | 38.47 (4.88) | 38.87 (6.78) | 39.28 (7.44) | 46.81 (-0.92) | 46.90 (-1.61) |

| BASE | 21.17 (22.73) | 55.70 (n/a) | 32.90 (8.98) | 34.34 (7.50) | 33.53 (9.08) | 36.83 (5.43) | 35.11 (7.44) | 35.95 (6.68) | 43.29 (-0.47) | 44.52 (-1.71) |

| WIDE | 25.25 (22.69) | 57.29 (n/a) | 34.85 (7.94) | 40.28 (6.67) | 36.70 (6.63) | 37.46 (5.62) | 37.27 (7.86) | 37.91 (6.94) | 41.49 (-1.49) | 49.66 (-2.90) |

| DEEP | 15.33 (20.66) | 51.04 (n/a) | 24.22 (6.67) | 23.42 (5.93) | 25.81 (6.06) | 29.99 (2.91) | 27.08 (6.47) | 31.28 (5.52) | 30.95 (0.85) | 37.93 (-1.35) |

## 6.4 Effects of Regularization

Tiny Imagenet. In the previous subsection we mentioned the problem of overfitting in continual learning. Although an evident solution would be to apply regularization, this might interfere with the continual learning methods. Therefore, we investigate the effects of two popular regularization methods, namely dropout and weight decay, for the parameter isolation and regularization-based methods in Table 6, and for the replay methods in Table 7. For dropout we set the probability of retaining the units to p = 0 . 5 , and weight decay applies a regularization strength of λ = 10 -4 . Any form of regularization is applied in both phases of our framework.

## 6.4.1 Discussion

General observations. In Table 6 and Table 7, negative results (with regularization hurting performance) are underlined in red. This occurs repeatedly, especially in combination with the SMALL model or with weight decay. Over all methods and models dropout mainly shows to be fruitful. This is consistent with earlier observations [31]. There are, however, a few salient exceptions (discussed below). Weight decay over the whole line mainly improves the wide network accuracies. In the following, we will describe the most notable observations and exceptions to the main tendencies.

Finetuning. Goodfellow et al. observe reduced catastrophic forgetting in a transfer learning setup with finetuning when using dropout [31]. Extended to learning a sequence of 10 consecutive tasks in our experiments, finetuning consistently benefits from dropout regularization. This is opposed to weight decay, resulting in increased forgetting and a lower performance on the final model. In spite of good results for dropout, we regularly observe an increase in the level of forgetting, which is compensated for by starting from a better initial model, due to a reduction in overfitting. Dropout leading to more forgetting is something we also observe for many other methods (see blue boxes), and exacerbates as the task sequence grows in length.

Joint training and PackNet. mainly relish higher accuracies with both regularization setups. By construction, they benefit from the regularization without interference issues. PackNet even reaches a top performance of almost 56% , that is 7% higher than closest competitor MAS.

HAT mainly favours weight decay of the model parameters, excluding embedding parameters. The embeddings are already prone to sparsity regularization, for which we found troublesome learning when included for weight decay as well. Dropout results in worse results, probably due to interfering with the unit-based masking. However, difficulties regarding asymmetric capacity in the DEEP model seem to

TABLE 6: Parameter isolation and regularization-based methods: dropout and weight decay for Tiny Imagenet models.

| Model | | finetuning | joint* | PackNet | HAT | SI | EWC | MAS | LwF | EBLL | mode-IMM |

|---------|--------------|---------------|-------------|--------------|--------------|---------------|---------------|---------------|---------------|---------------|---------------|

| SMALL | Plain | 16.25 (34.84) | 57.00 (n/a) | 49.09 (0.00) | 44.19 (0.00) | 23.91 (23.26) | 45.13 (0.86) | 40.58 (0.78) | 44.06 (-0.44) | 44.13 (-0.53) | 29.63 (3.06) |

| SMALL | Dropout | 19.52 (32.62) | 55.97 (n/a) | 50.73 (0.00) | 26.87 (2.46) | 38.34 (9.24) | 40.02 (7.57) | 40.26 (7.63) | 31.56 (18.38) | 34.14 (13.20) | 29.35 (0.90) |

| SMALL | Weight Decay | 15.06 (34.61) | 56.96 (n/a) | 49.90 (0.00) | 42.44 (0.00) | 37.99 (6.43) | 41.22 (1.52) | 37.37 (4.43) | 41.66 (-0.28) | 42.67 (-0.63) | 30.59 (0.93) |

| BASE | Plain | 21.30 (26.90) | 55.70 (n/a) | 47.67 (0.00) | 43.57 (0.00) | 33.93 (15.77) | 42.43 (7.51) | 46.90 (1.58) | 41.91 (3.08) | 45.34 (1.44) | 36.89 (0.98) |

| BASE | Dropout | 29.23 (26.44) | 61.43 (n/a) | 54.28 (0.00) | 38.11 (0.36) | 43.15 (10.83) | 42.09 (12.54) | 48.98 (0.87) | 41.49 (8.72) | 44.66 (7.66) | 34.20 (1.44) |

| BASE | Weight Decay | 19.14 (29.31) | 57.12 (n/a) | 48.28 (0.00) | 44.97 (0.19) | 39.65 (8.11) | 44.35 (3.51) | 44.29 (1.15) | 40.91 (1.29) | 41.26 (0.82) | 37.49 (0.46) |

| WIDE | Plain | 25.28 (24.15) | 57.29 (n/a) | 48.39 (0.00) | 43.78 (0.50) | 33.86 (15.16) | 31.10 (17.07) | 45.08 (2.58) | 46.79 (1.19) | 46.25 (1.72) | 36.42 (1.66) |

| WIDE | Dropout | 30.76 (26.11) | 62.27 (n/a) | 55.96 (0.00) | 34.94 (0.81) | 43.74 (8.80) | 33.94 (19.73) | 47.92 (1.37) | 45.04 (6.85) | 46.19 (5.31) | 42.41 (-0.93) |