## FeCaffe: FPGA-enabled Caffe with OpenCL for Deep Learning Training and Inference on Intel Stratix 10

Ke He, Bo Liu^, Yu Zhang, Andrew Ling* and Dian Gu

IoTG Vision Market Channel PRC, ^Flex Services and *Programmable Solution Group of Intel Corporation {harvey.he | bo.a.liu | richard.yu.zhang | andrew.ling | penny.gu}@intel.com

## ABSTRACT

Deep learning and Convolutional Neural Networks (CNNs) have become increasingly popular and important in both academia and industry in recent years, because they provide better accuracy than traditional approaches in classification, detection and recognition. Many popular frameworks are available for deep learning development, such as Caffe, TensorFlow and PyTorch, and most of them natively support CPUs and treat GPUs as the default accelerator. FPGAs, although viewed as a promising heterogeneous platform, still lack comprehensive support for CNN development in these frameworks, in particular for the training phase. In this paper, we propose FeCaffe, i.e. FPGA-enabled Caffe, a hierarchical software and hardware design methodology based on Caffe that enables FPGAs to support mainstream deep learning development features, e.g. training and inference with Caffe. Furthermore, we benchmark FeCaffe on several classical CNN networks and analyze the kernel execution time in detail. Finally, we propose optimization directions at the FPGA kernel design, system pipeline, network architecture, use-case application and heterogeneous platform levels to improve FeCaffe performance and efficiency. The results demonstrate that FeCaffe supports nearly the full feature set of CNN training and inference, with a high degree of design flexibility, extensibility and reusability for deep learning development. Compared to prior studies, our architecture supports more networks and training settings, and the current configuration achieves 6.4x and 8.4x average execution time improvements for the forward and backward passes, respectively, on LeNet.

## KEYWORDS

FeCaffe, FPGA, Deep Learning, CNN, Training, Inference, Caffe, OpenCL, Heterogeneous Platform, Stratix 10

## 1 Introduction

Deep learning has become increasingly popular and has drawn considerable attention in both academia and industry in recent years. The Convolutional Neural Network (CNN), a subset of deep learning, has demonstrated higher accuracy than traditional computer vision methods in classification, detection and recognition, and has therefore been widely applied in commercial markets, e.g. digital security surveillance, retail and industrial applications.

With the rapid development of deep learning and CNN technology, deep learning frameworks have also attracted substantial attention and investment. Developing a CNN is a sophisticated, systematic process that usually involves dataset preparation, pre/post-processing, training, validation and acceleration on heterogeneous platforms. Frameworks support all of these steps so that algorithm developers can focus on algorithm development with ease.

Currently, most popular deep learning frameworks natively support the Central Processing Unit (CPU) and treat the Graphics Processing Unit (GPU) as the default accelerator. Although the Field Programmable Gate Array (FPGA) is another natural candidate for heterogeneous acceleration, its development approach is still comparatively sophisticated, and it therefore lacks comprehensive support in popular frameworks for CNN development, especially for training.

In this paper, to improve this situation, we propose FeCaffe, i.e. an FPGA-enabled Caffe framework with OpenCL, and make the following contributions:

- We seamlessly integrate FPGAs into the Caffe framework to perform CNN training. To the best of our knowledge, this is the first work that enables FPGAs to train popular networks and that supports the entire training process and various training settings with Caffe.

- We introduce the hierarchical software and hardware architectures in detail. Owing to the portability and generality of OpenCL, the proposed approach has the potential to be extended to other frameworks with an OpenCL backend.

- The proposed FeCaffe offers a high degree of design flexibility for developing and integrating new functions, at the same fine-grained level as on GPUs. Following the proposed approach, users can develop new kernels and integrate them into FeCaffe conveniently. Moreover, various FPGA processing architectures can be integrated to improve computational efficiency as required.

- Compared to prior work [8] [9], the proposed approach supports more CNN network topologies and training-related settings, and provides better extensibility and ease of use. In terms of performance, the current configuration achieves 6.4x and 8.4x average improvements for the forward and backward passes, respectively, on LeNet under the same testing conditions as [8].

- We support, for the first time on FPGA, the SqueezeNet and GoogLeNet training processes with default or customized training settings, including multiple loss function definitions. In addition, we provide the first benchmark of training one epoch on the ImageNet 2012 training and validation datasets for SqueezeNet and GoogLeNet.

The rest of this paper is organized as follows. Section 2 introduces the Caffe framework, FPGA development with OpenCL, and deep learning on FPGAs. Section 3 describes the design methodology, including the hierarchical software and hardware architecture and the memory synchronization mechanism. Section 4 presents the results, and Section 5 provides the analysis and the corresponding optimization directions. Finally, Section 6 concludes the paper.

## 2 Related Work

## 2.1 Caffe Framework

Among the deep learning frameworks on the market, Caffe (Convolutional Architecture for Fast Feature Embedding) is viewed as one of the most popular and important [1]. The original Caffe natively supports operations on CPUs through libraries such as Basic Linear Algebra Subprograms (BLAS) and the Math Kernel Library (MKL), and supports NVIDIA GPUs as the default accelerator through Compute Unified Device Architecture (CUDA) programming or the CUDA Deep Neural Network (cuDNN) library. Several classical, well-known CNN networks, e.g. AlexNet, VGG, GoogLeNet and SqueezeNet, were developed and widely applied in many applications and scenarios using Caffe [4] [5] [6] [7].

## 2.2 OpenCL and FPGA Development

Register Transfer Level (RTL) coding, e.g. Verilog and VHSIC Hardware Description Language (VHDL), has long been the conventional approach to FPGA development. It is hardware-oriented and efficient, but it requires massive engineering effort, a thorough understanding of the underlying FPGA circuitry, and design-flow skills, e.g. synthesis, placement and routing, to achieve good performance and timing. In addition, the conventional FPGA development flow lacks a friendly simulation environment, especially for algorithm development. As design size and complexity grow, in particular for deep learning and CNN applications, these disadvantages of RTL designs become increasingly obvious. To address these pain points, FPGA vendors provide high-level design methodologies and tools for FPGA development, such as High-Level Synthesis (HLS) and OpenCL [22] [24] [25].

OpenCL is an open standard, maintained by the Khronos Group, that provides data- and task-parallel programming models for acceleration on heterogeneous platforms, e.g. GPUs, CPUs and FPGAs. The OpenCL design flow has two parts: host development and kernel development. Host development mainly covers device initialization and setup, memory allocation management, and coordination of kernel behavior. In this work, we refer to the host code as runtime functions and further divide the runtime into two groups: common runtime and kernel-related runtime. The common runtime creates the context, command queue and program and allocates memory, while the kernel-related runtime handles kernel argument configuration, debugging, profiling, launch and release.

Kernel development means developing offloaded functions and tuning their performance on various devices, and it follows one of two approaches: NDRange or single work-item. NDRange is the default OpenCL execution model; it uses built-in functions (e.g. `get_global_id`) to map an algorithm onto a large number of work-items that execute concurrently. Single work-item is a hardware-oriented design philosophy that achieves maximum throughput through more flexible optimization directives, native FPGA components and deeper processing pipelines. Optimizing an NDRange design is largely hardware-agnostic, done by tuning parameters such as the work-group size or the number of compute units, and it is therefore general across devices because the compilers manage resources and adjustments for each device automatically. In contrast, single work-item optimization relies heavily on the compiler tools and specific hardware architectures of each device vendor, as well as on the user's skill set.
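To make the contrast between the two kernel styles concrete, the sketch below emulates both in plain C++ for a simple element-wise scaling operation. It is an illustration only, not OpenCL code: `global_id` stands in for OpenCL's `get_global_id(0)`, and the host loop in `launch_ndrange` plays the role of the runtime dispatching work-items, which a real device would execute concurrently. All function names are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <functional>
#include <vector>

// NDRange style: the kernel body computes exactly one output element,
// selected by its global ID (stands in for OpenCL's get_global_id(0)).
void scale_ndrange_item(std::size_t global_id, const std::vector<float>& in,
                        std::vector<float>& out, float alpha) {
    out[global_id] = alpha * in[global_id];
}

// Emulated NDRange launch over a 1-D index space. A real device runs the
// work-items concurrently; this host loop runs them one after another.
void launch_ndrange(std::size_t global_size,
                    const std::function<void(std::size_t)>& work_item) {
    for (std::size_t gid = 0; gid < global_size; ++gid) {
        work_item(gid);
    }
}

// Single work-item style: one invocation owns the whole loop, which an FPGA
// offline compiler can turn into a deep pipeline.
void scale_single_work_item(const std::vector<float>& in,
                            std::vector<float>& out, float alpha) {
    assert(in.size() == out.size());
    for (std::size_t i = 0; i < in.size(); ++i) {
        out[i] = alpha * in[i];
    }
}
```

Both styles compute the same result; they differ only in how the compiler maps the work onto hardware, which is why NDRange tuning stays generic while single work-item tuning depends on the vendor toolchain.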

## 2.3 CNN Inference with FPGA

Owing to rapid growth in a wide range of application areas, there have been a number of FPGA-based research studies on deep learning and CNN applications [11] [12] [13] [14] [15] [16] [17] [18] [19] [20] [21]. These papers demonstrate that FPGAs can achieve impressive benchmarks for popular CNN networks on Intel and Xilinx devices using HLS, OpenCL and RTL design methodologies. In general, they concentrate on inference efficiency, and therefore define their own processing architectures and pipelines built on dedicated FPGA DSP blocks, distributed RAM and block RAM (BRAM) to perform key CNN operations, e.g. convolution and pooling, in parallel across tiles. In addition, optimization techniques such as fixed-point quantization, low precision, and data transformations, e.g. Winograd and the Fast Fourier Transform (FFT), have been considered prerequisites for achieving such competitive benchmarks on FPGAs compared to GPUs and CPUs [14] [19] [20] [21]. Low-precision data types, e.g. Int8, increase DSP efficiency and reduce both the storage needed for weights, intermediate and final results in the limited on-chip memory of the FPGA and the demand on DDR bandwidth. The core of these processing architectures is a set of cascaded DSP blocks that perform convolution or matrix multiplication in parallel along several dimensions. In general, the data movement paths and processing mechanisms are well optimized and fixed in order to achieve impressive throughput, performance per watt and energy efficiency compared to CPUs and GPUs. There are also a few studies on FPGA CNN inference within the Caffe framework: the authors of [10] and [16] presented both hardware architectures and software approaches for invocation from Caffe, and provided FPGA inference benchmarks with Caffe for AlexNet, VGG, GoogLeNet and YOLO-v2.
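As a worked illustration of the data transformations mentioned above, the sketch below implements the 1-D Winograd minimal filtering algorithm F(2,3), which produces two outputs of a 3-tap filter with 4 multiplications instead of the 6 required by direct computation; the filter-side terms depend only on the weights and can be precomputed once per filter. This is the generic textbook formulation chosen for illustration, not the design of any of the cited works, and the function names are hypothetical.

```cpp
#include <array>
#include <cassert>
#include <cstddef>

// Direct 1-D correlation (the form used by CNN "convolution" layers):
// y[i] = d[i]*g[0] + d[i+1]*g[1] + d[i+2]*g[2] for i = 0, 1. Six multiplies.
std::array<double, 2> direct_f23(const std::array<double, 4>& d,
                                 const std::array<double, 3>& g) {
    std::array<double, 2> y{};
    for (std::size_t i = 0; i < 2; ++i) {
        y[i] = d[i] * g[0] + d[i + 1] * g[1] + d[i + 2] * g[2];
    }
    return y;
}

// Winograd F(2,3): four multiplications between transformed inputs and
// transformed filter terms. gp and gm depend only on the weights.
std::array<double, 2> winograd_f23(const std::array<double, 4>& d,
                                   const std::array<double, 3>& g) {
    const double gp = (g[0] + g[1] + g[2]) / 2.0;  // precomputable per filter
    const double gm = (g[0] - g[1] + g[2]) / 2.0;  // precomputable per filter
    const double m1 = (d[0] - d[2]) * g[0];
    const double m2 = (d[1] + d[2]) * gp;
    const double m3 = (d[2] - d[1]) * gm;
    const double m4 = (d[1] - d[3]) * g[2];
    return {m1 + m2 + m3, m2 - m3 - m4};
}
```

For example, with inputs {1, 2, 3, 4} and weights {0.5, -1, 2}, both functions return {4.5, 6}. Trading multiplications for additions is attractive on FPGAs because multiplications consume DSP blocks, while the additions map to cheaper logic.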

## 2.4 CNN Training with FPGA

Compared to FPGA inference, research on FPGA training is relatively limited, and only two studies provide implementation details and benchmarks. The authors of [8] proposed a pipeline structure for the convolution and pooling layers and benchmarked the training process on LeNet with two FPGA boards. A much more aggressive approach based on FPGA clusters was proposed in [9], where 15 FPGAs, scaling up to at most 83 FPGAs, were used to train AlexNet, VGG-16 and VGG-19. Both works employ multiple FPGA boards for CNN training and create dedicated processing pipelines with a fixed weight update mechanism. In addition, both use a customized runtime with low-level network configuration parameters and hardware constraints as the software control during training, which further limits their use for CNN training.

## 2.5 Motivation

Most previous studies focus only on CNN inference with FPGAs, while deep learning training on FPGAs has gained little attention. Among FPGA-enabled inference designs, the trend is to build the most efficient, dedicated processing architecture, with fine-tuned data buffering and reuse mechanisms (e.g. data sharing, weight sharing or a hybrid of both), for one or a few classical network topologies. This design philosophy maximizes FPGA throughput and efficiency for inference, but suffers from flexibility and adaptability problems in practical CNN scenarios. With such inference structures, it is usually difficult and time-consuming to insert newly developed functions or primitives into the well-optimized pipelines, resulting in slow time to market and substantial development effort for FPGA-based CNN solutions. CNN training on FPGAs is more challenging than inference, not only in hardware design and utilization but also in software development. Because of the high development barrier and the large engineering effort, only a few studies provide FPGA approaches with Caffe, and only for inference; these approaches are incomplete because training is excluded. As a result, gaps remain in providing functionality and flexibility for deep learning development on FPGAs comparable to GPUs. In summary, FPGA-based architectures have obvious limitations in terms of flexibility, customization and convenience for CNN training and inference development.

Considering all the factors discussed above, we propose FeCaffe in this paper and make the contributions listed in Section 1. This study is a more comprehensive approach that extends conventional CNN development, which usually targets GPUs and CPUs, and creates more options for deep learning development on FPGA-based heterogeneous platforms.

## 3 Design Methodology

## 3.1 Caffe Architecture

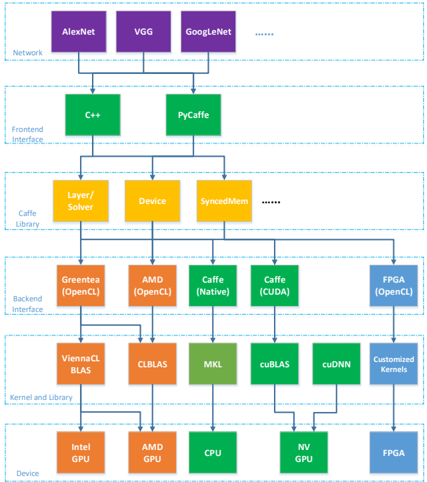

The conventional Caffe framework structure is illustrated in Figure 1, where we divide the whole hierarchy into six layers, from the network application level down to the hardware device level. Note that we describe only the hierarchical functions related to hardware devices and CNN operation layers, because the Caffe framework also contains a large number of components for debugging and logging, database I/O and protobuf parsing, etc. Those components can often be reused with almost no change across Caffe variants.

Figure 1 Hierarchical Architecture of Caffe Framework

(Figure 1 layers, from top to bottom: Network: AlexNet, VGG, GoogLeNet, ...; Frontend Interface: C++, PyCaffe; Caffe Library: Layer/Solver, Device, SyncedMem, ...; Backend Interface: Greentea (OpenCL), AMD (OpenCL), Caffe (Native), Caffe (CUDA), FPGA (OpenCL); Kernel/Build Library: ViennaCL BLAS, CLBLAS, MKL, cuBLAS, cuDNN, Customized Kernels; Device: Intel GPU, AMD GPU, CPU, NV GPU, FPGA.)

(Green: native Caffe provided; Red: GPU-based OpenCL variants provided; Orange: Hardware-related functions; Blue: Our work)

Training and inference for various networks can be performed by calling the C++ or Python interface with native Caffe support. Either interface calls the Caffe libraries, which consist of a large number of defined classes, e.g. layer, device and syncedmem. The CPU path starts from either the C++ or the Python interface and invokes math functions or performs operations defined directly in the C++ layer functions. Those math functions in turn call the MKL or BLAS libraries and finally map to the CPU device. Similarly, the GPU path goes through the CUDA interface and invokes math functions optimized by cuBLAS or cuDNN, as well as CUDA functions for layer operations. Hardware-related classes, e.g. device and syncedmem, are mainly used for GPU device and memory management.
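Native Caffe chooses the execution path at run time: `Layer::Forward` switches on `Caffe::mode()` and calls `Forward_cpu` or `Forward_gpu`, and the default `Forward_gpu` simply falls back to `Forward_cpu`. The simplified, self-contained sketch below mirrors that pattern and adds an FPGA branch. The `Mode` enum, its `kFpga` value and the `Forward_fpga` hook (named after the function shown in Figure 2) are assumptions for illustration, not the exact FeCaffe classes, and the string return values only record which path ran.

```cpp
#include <cassert>
#include <string>

// Hypothetical execution modes; native Caffe only has CPU and GPU.
enum class Mode { kCpu, kGpu, kFpga };

// Simplified stand-in for Caffe's Layer: the public Forward() selects the
// device-specific implementation from the requested mode.
class Layer {
public:
    virtual ~Layer() = default;

    std::string Forward(Mode mode) {
        switch (mode) {
            case Mode::kCpu:  return Forward_cpu();
            case Mode::kGpu:  return Forward_gpu();
            case Mode::kFpga: return Forward_fpga();
        }
        assert(false && "unknown mode");
        return {};
    }

protected:
    virtual std::string Forward_cpu() = 0;
    // As in native Caffe, device paths fall back to the CPU implementation
    // unless a layer overrides them.
    virtual std::string Forward_gpu() { return Forward_cpu(); }
    virtual std::string Forward_fpga() { return Forward_cpu(); }
};

// A layer with a dedicated FPGA path (e.g. pooling backed by an OpenCL kernel).
class PoolingLayer : public Layer {
protected:
    std::string Forward_cpu() override { return "pooling on CPU"; }
    std::string Forward_fpga() override { return "pooling on FPGA"; }
};

// A layer without an FPGA kernel yet: it falls back to the CPU path.
class CustomLayer : public Layer {
protected:
    std::string Forward_cpu() override { return "custom on CPU"; }
};
```

The fallback default is what lets new devices be brought up incrementally: layers without a device kernel still run, just on the CPU.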

Because Caffe is open source and has many community contributions, a number of OpenCL-based variants derived from native Caffe have been proposed and maintained, and they support more heterogeneous platforms, e.g. AMD and Intel integrated GPUs. Two well-known representatives are analyzed in this paper [2] [3]. The authors of [2] proposed an OpenCL-based interface mechanism named Greentea and provided an OpenCL CNN acceleration library that leverages the CLBLAS and ViennaCL BLAS libraries. Thanks to the good compatibility of those libraries, Greentea supports CNN workloads within Caffe on Intel integrated GPUs and AMD GPUs, i.e. the Greentea path in Figure 1. Similarly, another branch maintained by AMD proposed its own backend interface hierarchy and kernel designs to support CNN operations deployed and optimized on AMD GPU devices, i.e. the AMD path in Figure 1. Note that some hardware-sensitive classes or functions, e.g. operation layers, device and syncedmem, highlighted in orange in Figure 1, still require significant modifications to support new devices even when the same OpenCL development flow is followed.

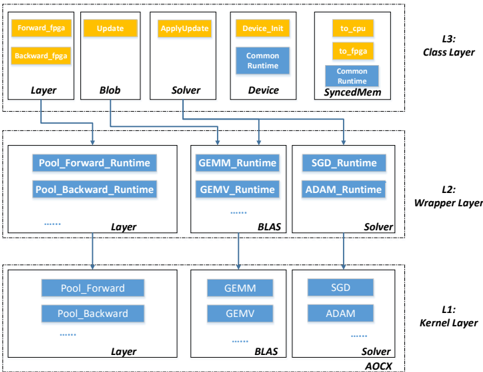

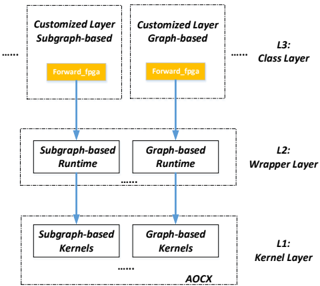

## 3.2 Kernel-related Layers

Following a similar structure, we propose a novel hierarchical backend interface based on the OpenCL flow to support CNN operations on FPGAs, labeled in blue in Figure 1. Figure 2 details our path for kernel development and the backend interface. We divide it into three layers, from FPGA kernel development up to deep learning operations within the Caffe framework. L1, the kernel layer, includes all kernel design files needed for the required operations; all kernel files are compiled by the Intel FPGA SDK for OpenCL into an FPGA configuration file (.aocx). To support deep learning training and inference, we group the required kernels into three types: layer-related, BLAS-related and solver-related. Layer-related kernels implement functions that directly support layer classes, e.g. pooling and activation functions, including both forward and backward operations. The BLAS-related group contains general, common functions from the BLAS library, e.g. General Matrix Multiplication (GEMM) and General Matrix-Vector Multiplication (GEMV). Solver-related kernels update the weights according to various approaches or policies during training iterations, and thus play a significant role in the training process. Common weight update approaches, e.g. Stochastic Gradient Descent (SGD), Adam, AdaDelta and Nesterov, are supported on FPGA. Note that in this study all of these kernel files use the NDRange design style, taken from the open-source OpenCL Caffe versions and the CLBLAS library, for simplicity and to reduce validation effort.
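For the solver-related group, Caffe's default SGD solver with momentum updates each parameter as history = momentum * history + local_rate * gradient, copies the history into the gradient buffer, and then applies weight = weight - gradient in `Blob::Update`. The sketch below expresses that per-element rule as one NDRange-style work-item and emulates the launch on the host. It is a minimal illustration under these assumptions, not the FeCaffe kernel; `sgd_update_item` and `sgd_step` are hypothetical names.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// One work-item of a hypothetical NDRange SGD kernel: updates the momentum
// history h[i] and overwrites the gradient g[i] with the resulting step,
// following Caffe's rule h = momentum * h + local_rate * g; g = h.
void sgd_update_item(std::size_t i, std::vector<float>& g, std::vector<float>& h,
                     float momentum, float local_rate) {
    h[i] = momentum * h[i] + local_rate * g[i];
    g[i] = h[i];
}

// Host-side emulation: "launch" one work-item per parameter, then apply the
// step to the weights (Caffe performs this subtraction in Blob::Update).
void sgd_step(std::vector<float>& w, std::vector<float>& g, std::vector<float>& h,
              float momentum, float local_rate) {
    assert(w.size() == g.size() && g.size() == h.size());
    for (std::size_t i = 0; i < w.size(); ++i) {
        sgd_update_item(i, g, h, momentum, local_rate);
    }
    for (std::size_t i = 0; i < w.size(); ++i) {
        w[i] -= g[i];
    }
}
```

Because every element is independent, the update maps naturally onto the NDRange style, and other solvers (Adam, AdaDelta, Nesterov) follow the same pattern with different per-element formulas.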

The layer above the kernel layer is referred to as the L2 wrapper layer. It consists of runtime functions, one for each kernel in the kernel-layer groups. These are all kernel-related runtimes as described in Section 2.2, handling kernel creation, argument setting, kernel launch, release and debug information. The main purpose of the wrapper layer is to encapsulate the OpenCL kernels so that high-level functions can invoke them easily. In the L3 class layer, the important classes are already defined by native Caffe, but they need to be extended on top of the underlying L1 and L2 layers. Some straightforward layers, e.g. pooling, can call the corresponding runtime directly to realize acceleration on FPGA. Other functions and layers may need a combination of BLAS routines and kernels, and thus use several runtime configurations. Following this architecture and partitioning, the proposed design methodology has the potential to be applied to other deep learning frameworks, e.g. TensorFlow or PyTorch: the L1 and L2 structures can remain the same because of the common OpenCL standard, while only L3 needs to be updated according to the high-level functions defined by each framework.

Figure 2 Hierarchical FeCaffe Structure in Details

(Figure 2 components, from top to bottom: L3 Class Layer: Layer, Blob, Solver, Device and SyncedMem classes, with Forward_fpga, Backward_fpga, Update, ApplyUpdate, Device_Init, to_cpu, to_fpga and Common Runtime; L2 Wrapper Layer: Layer (Pool_Forward_Runtime, Pool_Backward_Runtime), BLAS (GEMM_Runtime, GEMV_Runtime), Solver (SGD_Runtime, ADAM_Runtime); L1 Kernel Layer: Layer (Pool_Forward, Pool_Backward), BLAS (GEMM, GEMV), Solver (SGD, ADAM); AOCX.)
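The sketch below illustrates the L1/L2 split for the pooling example, emulated in plain C++ so that it runs without an FPGA. `max_pool_forward_item` plays the role of an NDRange kernel with one work-item per pooled output element, and `pool_forward_runtime` stands in for a wrapper that derives the launch size from the layer geometry and launches the kernel; an L3 `Forward_fpga` would simply call the wrapper. In real OpenCL host code the wrapper would set arguments with `clSetKernelArg` and enqueue with `clEnqueueNDRangeKernel`. All names are hypothetical, only a single unpadded 2-D channel is handled, and the output size uses floor rounding for simplicity (Caffe itself rounds the pooled size up).

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <limits>
#include <vector>

// Hypothetical argument bundle the wrapper passes to the kernel.
struct PoolArgs {
    std::size_t height, width;       // input feature-map size
    std::size_t kernel, stride;      // square pooling window and stride
    std::size_t pooled_h, pooled_w;  // output size
};

// L1 (kernel layer): one work-item computes one pooled output element.
void max_pool_forward_item(std::size_t index, const std::vector<float>& in,
                           std::vector<float>& out, const PoolArgs& a) {
    const std::size_t ph = index / a.pooled_w;
    const std::size_t pw = index % a.pooled_w;
    const std::size_t h_start = ph * a.stride;
    const std::size_t w_start = pw * a.stride;
    const std::size_t h_end = std::min(h_start + a.kernel, a.height);
    const std::size_t w_end = std::min(w_start + a.kernel, a.width);
    float max_val = -std::numeric_limits<float>::max();
    for (std::size_t h = h_start; h < h_end; ++h) {
        for (std::size_t w = w_start; w < w_end; ++w) {
            max_val = std::max(max_val, in[h * a.width + w]);
        }
    }
    out[index] = max_val;
}

// L2 (wrapper layer): computes the geometry and global size, then "launches"
// the kernel. A real runtime would enqueue an NDRange instead of looping.
std::vector<float> pool_forward_runtime(const std::vector<float>& in,
                                        std::size_t height, std::size_t width,
                                        std::size_t kernel, std::size_t stride) {
    assert(in.size() == height * width && kernel <= height && kernel <= width);
    PoolArgs a{height, width, kernel, stride,
               (height - kernel) / stride + 1, (width - kernel) / stride + 1};
    std::vector<float> out(a.pooled_h * a.pooled_w);
    const std::size_t global_size = out.size();
    for (std::size_t gid = 0; gid < global_size; ++gid) {
        max_pool_forward_item(gid, in, out, a);
    }
    return out;
}
```

For a 4x4 input holding 0 to 15 with a 2x2 window and stride 2, the wrapper returns {5, 7, 13, 15}. Keeping geometry and launch logic in the wrapper leaves the kernel reusable from any framework's class layer.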

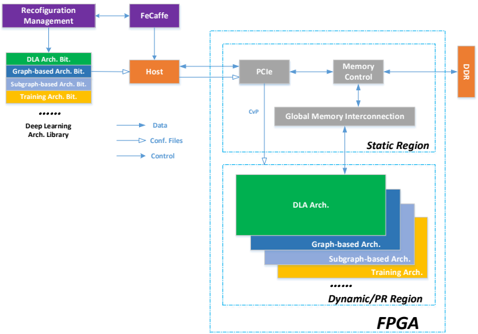

## 3.3 Memory Synchronization and Fallback

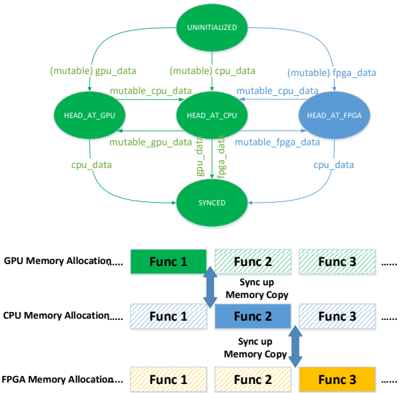

Memory management is a key feature of the Caffe framework: it allocates memory on demand for efficient usage on both the host and the device side, and performs synchronization as required. Following this design idea, FeCaffe extends memory management to the FPGA. The memory state transitions are shown in the top part of Figure 3.
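In native Caffe, `SyncedMemory` tracks which side holds the newest copy of the data, and its `cpu_data`/`mutable_cpu_data` accessors trigger a copy only when the other side was written last. The sketch below adds an FPGA head state in the same spirit. It is a simplified illustration under stated assumptions: the GPU state is omitted, the FPGA buffer is a host `std::vector`, and the class and member names (`SyncedMemSketch`, `fpga_data`, `mutable_fpga_data`, `to_fpga`) are hypothetical, modeled on the native Caffe accessors.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Memory states from Figure 3; the GPU state is omitted in this sketch.
enum class Head { kUninitialized, kHeadAtCpu, kHeadAtFpga, kSynced };

// Hypothetical CPU + FPGA analogue of Caffe's SyncedMemory. The FPGA buffer
// is emulated by a host vector; real code would hold a cl_mem buffer and copy
// with clEnqueueReadBuffer / clEnqueueWriteBuffer.
class SyncedMemSketch {
public:
    explicit SyncedMemSketch(std::size_t count) : count_(count) { assert(count > 0); }

    // Read-only accessors synchronize but do not mark the data as modified.
    const float* cpu_data() { to_cpu(); return cpu_.data(); }
    const float* fpga_data() { to_fpga(); return fpga_.data(); }

    // Mutable accessors mark their side as holding the newest copy.
    float* mutable_cpu_data() { to_cpu(); head_ = Head::kHeadAtCpu; return cpu_.data(); }
    float* mutable_fpga_data() { to_fpga(); head_ = Head::kHeadAtFpga; return fpga_.data(); }

    Head head() const { return head_; }
    int copies() const { return copies_; }  // number of host/device transfers

private:
    void to_cpu() {
        switch (head_) {
            case Head::kUninitialized:
                cpu_.assign(count_, 0.0f);  // allocate and zero-fill
                head_ = Head::kHeadAtCpu;
                break;
            case Head::kHeadAtFpga:
                if (cpu_.empty()) cpu_.resize(count_);
                std::copy(fpga_.begin(), fpga_.end(), cpu_.begin());  // device -> host
                ++copies_;
                head_ = Head::kSynced;
                break;
            case Head::kHeadAtCpu:
            case Head::kSynced:
                break;  // host copy already up to date
        }
    }

    void to_fpga() {
        switch (head_) {
            case Head::kUninitialized:
                fpga_.assign(count_, 0.0f);  // allocate and zero-fill
                head_ = Head::kHeadAtFpga;
                break;
            case Head::kHeadAtCpu:
                if (fpga_.empty()) fpga_.resize(count_);
                std::copy(cpu_.begin(), cpu_.end(), fpga_.begin());  // host -> device
                ++copies_;
                head_ = Head::kSynced;
                break;
            case Head::kHeadAtFpga:
            case Head::kSynced:
                break;  // device copy already up to date
        }
    }

    std::size_t count_;
    std::vector<float> cpu_, fpga_;
    Head head_ = Head::kUninitialized;
    int copies_ = 0;
};
```

Writing on the host and then reading on the FPGA costs one transfer; further reads on either side are free until one side writes again, which is the lazy behavior that keeps host/device traffic low during training.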

Figure 3 Top: Memory Status Topography; Bottom: Workload Partition Configurations

(Figure 3 top: memory states UNINITIALIZED, HEAD_AT_GPU, HEAD_AT_CPU, HEAD_AT_FPGA and SYNCED, with transitions labeled mutable_gpu_data, mutable_cpu_data, mutable_fpga_data, gpu_data, cpu_data and fpga_data. Bottom: GPU, CPU and FPGA Memory Allocation rows, each containing Func 1, Func 2 and Func 3, with "Sync up Memory Copy" transfers between rows.)

Table 1: Performance Benchmark with Native Caffe Time Measurement (Batch Size = 1)

| AlexNet layer | Forward (ms) | Backward (ms) | VGG_16 layer | Forward (ms) | Backward (ms) | SqueezeNet_v1.0 layer | Forward (ms) | Backward (ms) | GoogLeNet_v1 layer | Forward (ms) | Backward (ms) |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Data | 0.001 | 0.001 | data | 0.002 | 0.002 | data | 0.001 | 0.001 | data | 0.635 | 0.003 |
| conv1 | 20.269 | 23.144 | conv1 | 498.268 | 1022.364 | conv1 | 46.025 | 43.506 | conv1 | 43.404 | 43.577 |
| conv2 | 26.661 | 54.883 | conv2 | 304.876 | 659.105 | fire2 | 18.646 | 26.165 | conv2 | 48.861 | 82.239 |
| conv3 | 6.359 | 13.395 | conv3 | 247.751 | 535.662 | fire3 | 18.119 | 26.313 | incep_3a | 34.198 | 53.154 |
| conv4 | 8.420 | 18.624 | conv4 | 132.813 | 281.132 | fire4 | 38.098 | 53.110 | incep_3b | 46.743 | 74.526 |
| conv5 | 8.487 | 19.019 | conv5 | 44.783 | 90.830 | fire5 | 11.464 | 16.732 | incep_4a | 22.026 | 34.657 |
| fc6 | 12.651 | 28.165 | fc6 | 31.676 | 74.651 | fire6 | 14.034 | 20.692 | loss1 | 6.931 | 11.618 |
| fc7 | 6.419 | 13.580 | fc7 | 6.291 | 14.291 | fire7 | 13.851 | 20.989 | incep_4b | 22.307 | 36.201 |
| fc8 | 1.976 | 5.603 | fc8 | 1.906 | 5.601 | fire8 | 20.640 | 30.215 | incep_4c | 23.094 | 36.396 |
| Loss | 1.883 | 0.994 | loss | 1.755 | 0.930 | fire9 | 8.842 | 13.703 | incep_4d | 25.049 | 38.848 |
| | | | | | | conv10 | 8.076 | 10.592 | loss2 | 7.312 | 11.626 |
| | | | | | | loss | 1.448 | 0.785 | incep_4e | 26.757 | 41.106 |
| | | | | | | | | | incep_5a | 15.832 | 23.933 |
| | | | | | | | | | incep_5b | 15.236 | 24.669 |
| | | | | | | | | | loss3 | 2.389 | 3.445 |
| Ave. | 93.230 | 177.527 | Ave. | 1270.420 | 2684.860 | Ave. | 199.525 | 263.047 | Ave. | 341.288 | 516.490 |
| Ave. F->B (total) | 270.79 | | Ave. F->B (total) | 3955.400 | | Ave. F->B (total) | 462.600 | | Ave. F->B (total) | 857.810 | |

The syncedmem class originally defines four states: uninitialized, CPU, GPU and synchronized, highlighted in green. The memory state is switched by invoking the corresponding high-level functions, e.g. to\_cpu/gpu, which perform data copies as required. We add a new state for the FPGA, highlighted in blue, which means that the current copy of the data resides in the FPGA DDR memory. This state is integrated into the original pattern through extended runtime functions, e.g. to\_fpga/cpu, resulting in a larger state topology. With this flexible memory management for heterogeneous platforms, FeCaffe can perform function-level, fine-grained synchronization across platforms easily and safely. The required memory size is calculated first. The data can then be assigned to any device with that memory size through the management flow above, for any given function or operation. In principle, therefore, the proposed architecture supports flexible workload partitioning across platforms, as the bottom part of Figure 3 illustrates. Individual functions can run directly on specific devices, and the workload can also be partitioned across GPU, CPU and FPGA with memory synchronization. For simplicity, we test only the combination of CPU and FPGA, e.g. the fallback mechanism on the CPU. Note that the OpenCL standard uses a master-slave model to synchronize the host with devices and cannot synchronize devices with each other directly. FPGA data must therefore be synchronized twice, through the host, before it can reach the GPU.
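The sketch below models the state logic described above. It is a minimal, hypothetical Python model restricted to the CPU and FPGA states, which is the combination tested here. It follows the lazy-copy pattern of Caffe's SyncedMemory. The class name, the `copies` counter and the use of Python lists in place of host memory and FPGA DDR are illustrative assumptions, not FeCaffe's implementation. A real version would allocate OpenCL buffers and move data with enqueued read/write buffer commands.

```python
from enum import Enum


class Head(Enum):
    """Where the up-to-date copy lives, mirroring Caffe's SyncedHead states."""
    UNINITIALIZED = 0
    HEAD_AT_CPU = 1
    HEAD_AT_FPGA = 2
    SYNCED = 3


class SyncedMemorySketch:
    """Hypothetical CPU/FPGA-only model of an extended syncedmem.

    Python lists stand in for host memory and FPGA DDR (an assumption);
    `copies` counts simulated host<->FPGA transfers.
    """

    def __init__(self, count):
        self.count = count
        self.head = Head.UNINITIALIZED
        self.cpu = None
        self.fpga = None
        self.copies = 0

    def _to_cpu(self):
        if self.head is Head.UNINITIALIZED:
            self.cpu = [0.0] * self.count  # allocate and zero-fill on the host
            self.head = Head.HEAD_AT_CPU
        elif self.head is Head.HEAD_AT_FPGA:
            if self.cpu is None:
                self.cpu = [0.0] * self.count
            self.cpu[:] = self.fpga  # simulated FPGA DDR -> host copy, in place
            self.copies += 1
            self.head = Head.SYNCED
        # HEAD_AT_CPU or SYNCED: the host copy is already current.

    def _to_fpga(self):
        if self.head is Head.UNINITIALIZED:
            self.fpga = [0.0] * self.count  # allocate and zero-fill on the FPGA
            self.head = Head.HEAD_AT_FPGA
        elif self.head is Head.HEAD_AT_CPU:
            if self.fpga is None:
                self.fpga = [0.0] * self.count
            self.fpga[:] = self.cpu  # simulated host -> FPGA DDR copy, in place
            self.copies += 1
            self.head = Head.SYNCED
        # HEAD_AT_FPGA or SYNCED: the FPGA copy is already current.

    # Read-only accessors synchronize and leave both copies valid.
    def cpu_data(self):
        self._to_cpu()
        return self.cpu

    def fpga_data(self):
        self._to_fpga()
        return self.fpga

    # Mutable accessors mark the accessed side as the only current copy.
    def mutable_cpu_data(self):
        self._to_cpu()
        self.head = Head.HEAD_AT_CPU
        return self.cpu

    def mutable_fpga_data(self):
        self._to_fpga()
        self.head = Head.HEAD_AT_FPGA
        return self.fpga
```

Reading through a non-mutable accessor leaves both copies valid (SYNCED). A mutable accessor makes its side the only current copy, so a transfer happens only when the stale side is next read. Under the same model, FPGA data bound for a GPU would need two such transfers: FPGA to host, then host to GPU.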

## 4 Result and Analysis

## 4.1 Performance Benchmark

Table 1 summarizes the results. In this study, we take several classical CNNs, i.e. AlexNet, VGG-16, SqueezeNet and GoogLeNet, as examples. We benchmark the forward, backward and forward-backward passes on an Intel Stratix 10 FPGA development board with Intel OpenCL SDK version 19.2. The host is an Intel Core i7-7700K (4 cores, 8 threads) running Ubuntu 16.04. To preserve accuracy during training, all data are FP32, and native floating-point DSPs implement the multiplication and addition operations on the FPGA. Note that in this test, all processing kernels of the network topologies are implemented on the FPGA, for both training and inference. In Table 1, each convolution entry also includes the associated operations that follow the convolution layer, e.g. pooling, Rectified Linear Unit (ReLU) and Local Response Normalization (LRN). The fire layer consists of the squeeze, expand, ReLU and concat operations defined in SqueezeNet. The inception layer contains 1x1, 3x3 and 5x5 convolution layers, ReLU, pooling and concat operations as defined in GoogLeNet. Due to limited space, we use the convolution, fire and inception entries to represent these groups of layers. For time measurement, we use Caffe's native time function, averaging over 100 iterations with a batch size of 1. The forward and backward flows follow native Caffe: the forward pass computes results from the first layer to the last, and the backward pass computes gradients from the last layer back to the first. We use the train\_val model of each network for the measurements, so every layer must perform its backward calculation. This exercises the FPGA kernels more fully, but the processing path and time are longer than with the deploy model. The results are listed as forward, backward and forward-backward times for each network.
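The layer-wise measurement described above can be sketched as a small timing harness. This is a hypothetical Python stand-in, loosely modeled on the structure of Caffe's `caffe time` tool rather than a reproduction of it. Each layer's forward time is recorded from first layer to last and its backward time in reverse order. Each pass is also timed as a whole, and one timer wraps all iterations. The `layers` tuples are an assumption that replaces a real Caffe Net.

```python
import time


def time_net(layers, iterations=100):
    """Average forward/backward timings in ms, loosely modeled on `caffe time`.

    `layers` is a hypothetical stand-in for a Caffe Net: a list of
    (name, forward_fn, backward_fn) tuples whose functions take no arguments.
    """
    layer_fwd = {name: 0 for name, _, _ in layers}
    layer_bwd = {name: 0 for name, _, _ in layers}
    fwd_ns = bwd_ns = 0
    total_start = time.perf_counter_ns()
    for _ in range(iterations):
        # Forward pass: first layer to last, each layer timed individually.
        pass_start = time.perf_counter_ns()
        for name, forward, _ in layers:
            t0 = time.perf_counter_ns()
            forward()
            layer_fwd[name] += time.perf_counter_ns() - t0
        fwd_ns += time.perf_counter_ns() - pass_start
        # Backward pass: last layer to first.
        pass_start = time.perf_counter_ns()
        for name, _, backward in reversed(layers):
            t0 = time.perf_counter_ns()
            backward()
            layer_bwd[name] += time.perf_counter_ns() - t0
        bwd_ns += time.perf_counter_ns() - pass_start
    total_ns = time.perf_counter_ns() - total_start

    def avg_ms(ns):
        return ns / iterations / 1e6

    return {
        "layer_forward_ms": {n: avg_ms(v) for n, v in layer_fwd.items()},
        "layer_backward_ms": {n: avg_ms(v) for n, v in layer_bwd.items()},
        "forward_ms": avg_ms(fwd_ns),
        "backward_ms": avg_ms(bwd_ns),
        "forward_backward_ms": avg_ms(total_ns),
    }
```

With the timers nested this way, the forward-backward average also absorbs any time spent outside the two timed passes. That is one plausible reason the "Ave. F->B" row in Table 1 is slightly larger than the sum of the forward and backward averages. For AlexNet, 93.230 + 177.527 = 270.757 ms, against 270.79 ms reported.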

## 4.2 Kernel Breakdowns

To further analyze FPGA and host behavior during the forward and backward passes within FeCaffe, we choose the deepest network, GoogLeNet, and use profiling tools to break down the workload in detail, as listed in Table 2. For each required kernel, Table 2 gives the invocation count and total execution time, including memory writes and reads.

Table 2: Kernel Statistics within F->B for GoogLeNet

| Kernels | Instance Count | Total Time (ms) | Efficiency |
|---|---|---|---|
| Ave_pool_B | 3 | 3.184 | 36% (DDR) |
| Ave_pool_F | 3 | 2.902 | 39% (DDR) |
| Col2im | 19 | 31.197 | 54% (DDR) |
| Concat | 72 | 18.015 | 10% (DDR) |
| Bias | 59 | 20.315 | 12% (DDR) |
| Dropout_B | 3 | 0.113 | 10% (DDR) |
| Dropout_F | 3 | 0.104 | 10% (DDR) |
| Gemm | 186 | 58.407 | 77% (DDR) |
| Gemv | 69 | 7.067 | 81% (DDR) |
| Im2col | 98 | 187.418 | 42% (DDR) |
| LRN_Diff | 2 | 18.39 | 43% (DDR) |
| LRN_Output | 2 | 4.699 | 16% (DDR) |
| LRN_Scale | 2 | 4.645 | 34% (DDR) |
| Max_pool_B | 13 | 66.337 | 62% (DDR) |
| Max_pool_F | 13 | 62.989 | 60% (DDR) |
| ReLU_B | 61 | 20.707 | 17% (DDR) |
| ReLU_F | 61 | 21.313 | 10% (DDR) |
| Softmax | 3 | 0.776 | 0% (DDR) |
| SoftmaxLoss_B | 3 | 0.063 | 0% (DDR) |
| SoftmaxLoss_F | 3 | 0.089 | 0% (DDR) |
| Split | 41 | 22.943 | 11% (DDR) |
| Add | 9 | 5.632 | 17% (DDR) |
| Asum | 3 | 0.124 | 0% (DDR) |
| Axpy | 25 | 12.695 | 20% (DDR) |
| Scale | 3 | 0.07 | 11% (DDR) |
| Write_Buffer | 198 | 28.168 | 12% (PCIe) |
| Read_Buffer | 3 | 0.091 | 0% (PCIe) |
| Total | 960 | 598.453 | 70% (F->B) |

The Efficiency column has three meanings in Table 2:

* For FPGA kernels, it is the average FPGA DDR bandwidth efficiency during kernel execution, measured dynamically by the FPGA profiling tool.
* For memory movement between host and FPGA, it is the average PCIe transfer efficiency, measured dynamically by Intel VTune Amplifier.
* For the Total row, it is the ratio of total kernel execution time to total forward/backward time.

With a batch size of 1, 25 kernels are used and there are 960 invocations in total. These include 198 data-buffer writes from host to FPGA and 3 data-buffer reads from FPGA to host. The gemm kernel is the most frequently invoked, with 186 invocations. Its total execution time is 58.407 ms at 77% FPGA DDR efficiency, an average of about 0.31 ms per invocation. Similarly, the gemv kernel is invoked 69 times, with 7.067 ms total execution time and 81% DDR efficiency, i.e. about 0.1 ms per invocation. The gemm and gemv kernels are further optimized with local memory buffers and Single Instruction Multiple Data (SIMD) directives for vectorization. Local memory buffers dramatically reduce the number of DDR accesses required. As the reference for each kernel's DDR efficiency, we use the maximum DDR bandwidth of the S10 board, i.e. 14928 MB/s with the FPGA logic running at 300 MHz. For data transfer, i.e. buffer writes from host to FPGA and buffer reads from FPGA to host, many buffer-write events are triggered by loading convolution and bias weights to the FPGA, since convolution is performed layer by layer. The average PCIe transfer speed is measured at 1.906 GB/s, an efficiency of about 12% relative to the 15.75 GB/s of PCIe Gen3 x16. Finally, summing all kernel and data-transfer times gives 598.453 ms, 70% of the average forward-backward time measured in Table 1. The remaining 30% points to software runtime overhead in the current CPU and FPGA collaboration, which leaves room for further optimization.
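The per-invocation averages and efficiency ratios quoted above are simple ratios of the table values. The short Python check below reproduces them. The constant and function names are illustrative; only the numbers come from Table 1, Table 2 and the text.

```python
# Figures taken from Table 1, Table 2 and the text above.
KERNELS = {  # kernel: (instance count, total time in ms)
    "Gemm": (186, 58.407),
    "Gemv": (69, 7.067),
}
TOTAL_KERNEL_MS = 598.453          # "Total" row of Table 2
AVG_FORWARD_BACKWARD_MS = 857.810  # GoogLeNet "Ave. F->B" in Table 1
DDR_PEAK_MB_S = 14928.0            # S10 board DDR reference bandwidth
PCIE_GEN3_X16_GB_S = 15.75         # PCIe Gen3 x16 reference bandwidth


def avg_ms_per_call(name):
    """Average execution time of one kernel invocation."""
    count, total_ms = KERNELS[name]
    return total_ms / count


def efficiency(measured, peak):
    """Fraction of a reference bandwidth actually achieved."""
    return measured / peak


def implied_ddr_mb_s(ddr_efficiency):
    """Average DDR bandwidth implied by an efficiency ratio."""
    return ddr_efficiency * DDR_PEAK_MB_S


if __name__ == "__main__":
    print(f"Gemm: {avg_ms_per_call('Gemm'):.3f} ms per call")   # 0.314
    print(f"Gemv: {avg_ms_per_call('Gemv'):.3f} ms per call")   # 0.102
    print(f"Gemm DDR at 77%: {implied_ddr_mb_s(0.77):.0f} MB/s")  # 11495
    print(f"PCIe efficiency: {efficiency(1.906, PCIE_GEN3_X16_GB_S):.1%}")  # 12.1%
    print(f"Kernel share of F->B: {TOTAL_KERNEL_MS / AVG_FORWARD_BACKWARD_MS:.1%}")  # 69.8%
```

The PCIe ratio, 1.906 / 15.75 ≈ 12.1%, agrees with the 12% (PCIe) entry for Write_Buffer in Table 2. The kernel share, 598.453 / 857.810 ≈ 69.8%, matches the 70% (F->B) total.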

Table 3 details the hardware utilization and the FPGA frequency after placement for the configuration used in the measurements of Table 1 and Table 2. The current configuration occupies only 47% of the BRAM and 31% of the DSP resources, leaving more than half of these resources available for further optimization. The gemm and gemv kernels are listed separately because they are the most significant kernels. Both are optimized with higher BRAM and DSP utilization so that convolution and fully connected layers run with high efficiency.

Table 3: Hardware Utilization on S10

| Kernel | ALMs | Regs | M20K | DSPs | Fmax |
|---|---|---|---|---|---|
| Gemm | 107K (12%) | 326K | 2338 (20%) | 1037 (18%) | 253 MHz |
| Gemv | 49K (5%) | 116K | 756 (6%) | 130 (2%) | 253 MHz |
| Total | 616K (66%) | 1415K | 5419 (47%) | 1796 (31%) | 253 MHz |
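The gemm kernel uses local memory buffers, which occupy on-chip M20K blocks, to cut DDR traffic. The Python model below counts simulated global-memory loads for a naive matrix multiply and for a tiled one that stages operand strips in a local buffer. It is a hypothetical, idealized model, not FeCaffe's OpenCL kernel. It ignores caching, burst coalescing, SIMD width and tiling along K, and the function name and load-counting convention are assumptions.

```python
def matmul_global_loads(a, b, tile=None):
    """Return (a @ b, simulated global-memory loads) for list-of-lists matrices.

    tile=None models a naive kernel that fetches both operands from DDR for
    every multiply-add. tile=T models a kernel that copies a T x K strip of `a`
    and a K x T strip of `b` into a local buffer once per T x T output tile and
    then reuses them from on-chip memory.
    """
    m, k, n = len(a), len(b), len(b[0])
    c = [[0.0] * n for _ in range(m)]
    loads = 0
    if tile is None:
        for i in range(m):
            for j in range(n):
                for p in range(k):
                    c[i][j] += a[i][p] * b[p][j]
                    loads += 2  # one element of a, one element of b
        return c, loads

    assert m % tile == 0 and n % tile == 0, "tile must divide M and N"
    for i0 in range(0, m, tile):
        for j0 in range(0, n, tile):
            # Stage operand strips in "local memory": one global load per element.
            a_local = [list(row) for row in a[i0:i0 + tile]]
            b_local = [row[j0:j0 + tile] for row in b]
            loads += tile * k + k * tile
            for i in range(tile):
                for j in range(tile):
                    c[i0 + i][j0 + j] = sum(
                        a_local[i][p] * b_local[p][j] for p in range(k)
                    )
    return c, loads
```

For an M x N x K multiply, the naive kernel issues 2MNK loads and T x T tiling issues 2MNK/T. Multiplying a 4 x 3 matrix by a 3 x 4 matrix therefore takes 96 loads naively but 48 with T = 2. Larger tiles cut DDR traffic further at the cost of more on-chip memory. That trade-off plausibly contributes to gemm's M20K usage in Table 3.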

## 4.3 Training Process on FPGA

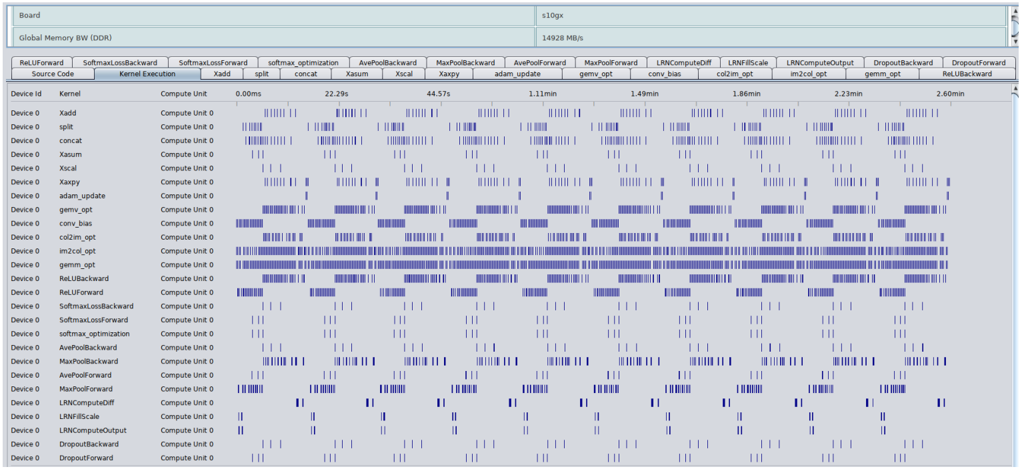

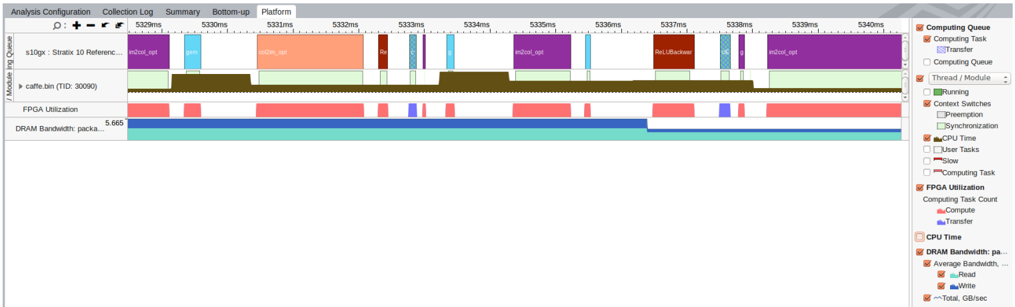

Besides forward and backward processing, training also requires a weight update after each forward and backward pass. In Caffe, the solver class optimizes and updates the weights so that, over the training iterations, they are trained gradually toward the defined loss target. The weight update has three main computation-related phases: normalization, regularization and compute\_update. FeCaffe handles these phases with different approaches. Normalization and regularization are supported through combinations of BLAS-based kernels, while compute\_update is enabled directly by dedicated kernel designs, e.g. for SGD, Adam and other common policies. As a result, most of the computational burden of the weight update is deployed on the FPGA, and FeCaffe provides sufficient features to support CNN training for the target networks. We use the OpenCL native profiling tool and Intel VTune Amplifier to capture the entire training process of GoogLeNet with a batch size of 16 for 10 iterations. The results are shown in Figure 5 and Figure 4, respectively.
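The three weight-update phases can be sketched for momentum SGD with L2 weight decay. The sketch follows the update rule of Caffe's SGD solver:

1. Scale the gradient by 1/iter\_size.
2. Add weight\_decay times the weights to the gradient.
3. Update history ← lr × diff + momentum × history, then subtract history from the weights.

It is written with BLAS-style helpers to echo the BLAS-based kernels mentioned above. This is a host-side Python illustration on plain lists, not FeCaffe's FPGA kernel code. The function names are hypothetical, and per-parameter lr\_mult/decay\_mult and gradient clipping are omitted.

```python
def scal(alpha, x):
    """x <- alpha * x (BLAS scal), in place."""
    for i in range(len(x)):
        x[i] *= alpha


def axpy(alpha, x, y):
    """y <- alpha * x + y (BLAS axpy), in place."""
    for i in range(len(y)):
        y[i] += alpha * x[i]


def axpby(alpha, x, beta, y):
    """y <- alpha * x + beta * y, in place."""
    for i in range(len(y)):
        y[i] = alpha * x[i] + beta * y[i]


def sgd_update(data, diff, history, lr, momentum, weight_decay, iter_size=1):
    """One momentum-SGD step on a single parameter blob (lists updated in place)."""
    # Normalization: average gradients accumulated over iter_size mini-batches.
    if iter_size > 1:
        scal(1.0 / iter_size, diff)
    # Regularization (L2 weight decay): diff <- weight_decay * data + diff.
    axpy(weight_decay, data, diff)
    # compute_update: history <- lr * diff + momentum * history; diff <- history.
    axpby(lr, diff, momentum, history)
    diff[:] = history
    # Apply the update: data <- data - diff.
    axpy(-1.0, diff, data)
```

For example, with weights [1.0, 2.0], gradient [0.5, -0.5], zero history, lr = 0.1, momentum = 0.9 and weight_decay = 0.01, one step yields weights of about [0.949, 2.048]. Each phase is an element-wise pass over the parameter blob, which is why it maps naturally onto simple streaming kernels.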

Figure 5 shows every kernel required and its execution time throughout the training process, captured dynamically by performance registers and counters on the FPGA. Figure 4 shows the VTune system profile, zoomed in on part of the training process. CPU running time is highlighted in green and FPGA activity in pink, and details of individual kernel tasks can be inspected on the task line in different colors. The profile shows the interaction between CPU and FPGA during CNN training, and that CPU usage drops while the FPGA is executing a kernel task. Host memory bandwidth can also be monitored for each kernel invocation. For training with FeCaffe, users can reuse the standard Caffe formats, e.g. solver settings, prototxt files and commands. Moreover, the snapshot function is also supported, and thus the proposed FeCaffe can be viewed as a comprehensive

Figure 5 Kernel Details for GoogLeNet Training Process

[Image: FPGA dynamic profiler view (board global memory bandwidth, DDR: 14928 MB/s) showing the kernel execution timeline of the ReLU, softmax, average/max pooling, LRN and dropout forward/backward kernels over a time axis of roughly 2.5 minutes.]

Figure 4 CPU and FPGA Behaviors during GoogLeNet Training Process (Best Viewed Zoomed in)

<details>

[Image: Intel VTune Amplifier timeline (roughly 5320 ms to 5340 ms) showing CPU thread activity, FPGA utilization (computing tasks and transfers), DRAM read/write bandwidth and CPU time during GoogLeNet training.]

</details>

FPGA-based solution that conveniently supports common deep learning development, particularly training.

## 4.4 Comparison to the State of the Art

This study also compares FeCaffe with prior work on CNN training with FPGAs in terms of functionality and performance, as listed in Table 4. FeCaffe provides higher flexibility in CNN network topologies, solver types, training hyperparameter settings, expansibility and ease of use. Compared with the FCNN solution [8], FeCaffe achieves average execution times of 1102.162 ms and 1710.090 ms for the forward and backward passes, respectively, for LeNet with a batch size of 384 and 150 minibatches after 200 iterations. This corresponds to 6.4x and 8.4x average execution time improvements under the same testing conditions. Note that part of this improvement comes from the difference in FPGA devices, because the S10 device has native floating-point DSP blocks and a more advanced technology node than the device used in [8]. Due to the DDR memory size limitation of the S10 development board, VGG-16 and VGG-19 cannot be trained; we therefore report the training time for one epoch of ImageNet 2012 (1.2 million training and 50 thousand validation images) for AlexNet, SqueezeNet and GoogLeNet, respectively.

Compared with FPDeep [9], the current training performance is much less competitive. FPDeep fits all weights, feature data and gradients within on-chip memory across an FPGA cluster, which fundamentally changes the FPGA pipeline structure and maximizes the use of on-chip memory bandwidth and DSP resources, at the cost of 43,200 DSPs and several hundred Mbits of BRAM in total. Its use of 16-bit fixed-point arithmetic is another key factor behind such strong training results. Our small batch sizes also limit training speed: a larger batch size reduces the total number of iterations, and hence the number of data transfers between the FPGA and host, during both training and inference, which leads to higher FPGA computation efficiency.
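To make the batch-size argument concrete, the sketch below counts how many minibatch iterations one pass over the roughly 1.2 million ImageNet 2012 training images requires. It assumes (our assumption, not a measurement from this work) that each iteration incurs a roughly fixed amount of host-FPGA communication and kernel-launch overhead, so the iteration count serves as a proxy for that overhead. The function name is hypothetical.

```python
import math

# Approximate ImageNet 2012 training-set size quoted in the text.
TRAIN_IMAGES = 1_200_000


def iterations_per_epoch(batch_size: int, num_images: int = TRAIN_IMAGES) -> int:
    """Minibatch iterations needed to visit every training image once."""
    return math.ceil(num_images / batch_size)


if __name__ == "__main__":
    for bs in (16, 32, 64, 128):
        # Assumption: each iteration implies at least one round of host<->FPGA
        # transfers per layer, so halving the batch size doubles these rounds.
        print(f"batch size {bs:>3}: {iterations_per_epoch(bs):>6} iterations per epoch")
```

With the batch sizes listed in Table 4, this gives 37,500 iterations per epoch for AlexNet (BS 32) and 75,000 for SqueezeNet and GoogLeNet (BS 16).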

Table 4: Comparison with FPGA Prior Work

| | Our Work | FCNN [8] | FPDeep [9] |
|---|---|---|---|
| Framework | Caffe | Customized | Customized |
| Development Tool | OpenCL with AOC | MaxCompiler Tool | RTL Generator |
| CNN Feature | Training and Inference | Training and Inference | Training and Inference |
| Network Topologies Supported | AlexNet, VGG, SqueezeNet, GoogLeNet, and Networks with the Same Primitives | LeNet | AlexNet, VGG-16 and VGG-19 |
| Solver Supported | SGD, Adam, RMS_Prop, Nesterov, Ada_Grad and Ada_Delta | SGD Only | SGD Only |
| Training Hyperparameter Settings | Same as GPUs and CPUs, e.g. base_lr, lr_policy, gamma, momentum, weight_decay | Unknown | Unknown |
| FPGA Optimization Mechanism | Gemm: NDRange and 2D Local Memory; Gemv: NDRange and 1D Local Memory | Systolic-like: Customized Processing Pipeline for Convolution and Pooling | All-Layers Processing Pipeline Distributed over FPGA Cluster; All Weights, Features and Gradients Stored in On-chip BRAMs; Forward and Backward Processing Pipelines in Parallel |
| Expansibility | Small Effort to Enable New Functions; No Inter-FPGA Dependency | More Effort (Pipeline Needs Updating for New Functions) | More Effort (Pipeline Needs Updating for New Functions) |
| Ease of Use | Same as Conventional Caffe, e.g. Prototxt, Commands and Snapshot | Customized Network Config. Parameters and HW Constraints | Customized Network Config. Parameters and HW Constraints |
| Device and Board | Stratix 10 Development Kit | Stratix V GSD8 | VC709 Board (V7690T) |
| Number of FPGAs | 1 | 2 | 15 |
| DDR Storage and Bandwidth | 2 GB and 14.578 GB/s | 6 GB and 2 × 9.6 GB/s | On-chip Memory Bandwidth |
| Fmax | 253 MHz | 150 MHz | Unknown |
| Data Type | FP32 | FP32 | Fixed-point 16 |
| Total DSP Utilization | 1796 | Unknown | 15 × 2880 = 43,200 |
| LeNet (L1-L6): Forward / Backward (ms) | | | |
| L1 (Conv) | 524.293 / 514.197 | 590 / 1210 | N/A |
| L2 (Pool) | 22.330 / 23.895 | 530 / 570 | N/A |
| L3 (Conv) | 547.651 / 1156.870 | 4670 / 10320 | N/A |
| L4 (Pool) | 6.539 / 7.010 | 170 / 180 | N/A |
| L5 (FC) | 1.345 / 6.003 | 920 / 1820 | N/A |
| L6 (FC) | 0.004 / 2.115 | 180 / 200 | N/A |
| Total | 1102.162 (6.4x) / 1710.090 (8.4x) | 7060 / 14300 | N/A |
| AlexNet per Epoch | 86.41 Hours (BS: 32, Default Solver) | N/A | 0.17 Hour |
| SqueezeNet v1.0 per Epoch | 159.62 Hours (BS: 16, Default Solver) | N/A | N/A |
| GoogLeNet per Epoch | 291.08 Hours (BS: 16, Default Solver with Adam) | N/A | N/A |
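The LeNet totals and speedups in Table 4 follow directly from the per-layer times. The short script below re-derives them as a consistency check; the dictionaries simply transcribe the table, and all names are illustrative.

```python
# LeNet per-layer execution times in ms, transcribed from Table 4: (forward, backward).
FECAFFE_MS = {
    "L1 (Conv)": (524.293, 514.197),
    "L2 (Pool)": (22.330, 23.895),
    "L3 (Conv)": (547.651, 1156.870),
    "L4 (Pool)": (6.539, 7.010),
    "L5 (FC)": (1.345, 6.003),
    "L6 (FC)": (0.004, 2.115),
}
FCNN_MS = {
    "L1 (Conv)": (590, 1210),
    "L2 (Pool)": (530, 570),
    "L3 (Conv)": (4670, 10320),
    "L4 (Pool)": (170, 180),
    "L5 (FC)": (920, 1820),
    "L6 (FC)": (180, 200),
}


def totals(layer_times):
    """Sum forward and backward times over all layers."""
    fwd = sum(f for f, _ in layer_times.values())
    bwd = sum(b for _, b in layer_times.values())
    return fwd, bwd


def conv_share(layer_times):
    """Fraction of forward and backward time spent in convolution layers."""
    fwd, bwd = totals(layer_times)
    conv = {k: v for k, v in layer_times.items() if "Conv" in k}
    conv_fwd, conv_bwd = totals(conv)
    return conv_fwd / fwd, conv_bwd / bwd


ours_fwd, ours_bwd = totals(FECAFFE_MS)
fcnn_fwd, fcnn_bwd = totals(FCNN_MS)

print(f"FeCaffe total: {ours_fwd:.3f} ms forward, {ours_bwd:.3f} ms backward")
# -> FeCaffe total: 1102.162 ms forward, 1710.090 ms backward
print(f"Speedup over FCNN: {fcnn_fwd / ours_fwd:.1f}x forward, {fcnn_bwd / ours_bwd:.1f}x backward")
# -> Speedup over FCNN: 6.4x forward, 8.4x backward
share_fwd, share_bwd = conv_share(FECAFFE_MS)
print(f"Conv share of FeCaffe time: {share_fwd:.1%} forward, {share_bwd:.1%} backward")
# -> Conv share of FeCaffe time: 97.3% forward, 97.7% backward
```

The last line shows that the two convolution layers account for nearly all of FeCaffe's LeNet time. Kernel-level improvements to the convolution path, which standard Caffe implements as im2col followed by GEMM, would therefore have the largest effect on these totals.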

## 5 Analysis and Optimization

Based on the results and comparison above, FeCaffe uses a fine-grained, kernel-wise FPGA implementation to achieve the same granularity as GPU acceleration, and it provides sufficient and flexible offload functions for deep learning development. Because this is a new path for deep learning, its overall performance is still less competitive than that of mature, well-developed GPU solutions. We therefore introduce several optimization directions, mainly aimed at improving performance, at the FPGA kernel, software runtime and CNN architecture levels, among others:

## 5.1 FPGA-level

In this work, we use the OpenCL flow with NDRange-style kernels to implement the necessary CNN operations. Because the compiler tool adapts well to this style, users can deploy most NDRange kernel files on the FPGA conveniently, with minor or even no modifications. However, this approach can cause performance issues and resource overhead, especially for large-scale and complicated designs. Compiler vendors therefore recommend single work-item designs to achieve the best performance with optimized resource usage. Compared with the NDRange style, the single work-item style is closer to the traditional FPGA design flow and offers more choices and flexibility for designing and optimizing kernels. Users can build more flexible and sophisticated pipeline structures and apply more optimization directives to fully exploit the FPGA's massive on-chip memory storage and bandwidth for higher throughput.
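To illustrate the structural difference between the two kernel styles, the Python sketch below models a matrix-vector product (the Gemv operation listed in Table 4) both ways. It is only a model of the programming styles, not OpenCL code, and it says nothing about hardware timing; all function names are hypothetical. In the NDRange style the kernel body describes one work-item's work and the runtime replicates it over the global range, whereas in the single work-item style one invocation owns the entire loop nest, which is what allows an FPGA compiler to build a deep pipeline across loop iterations.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def gemv_ndrange_kernel(gid: int, a: Matrix, x: Vector, y: Vector) -> None:
    """NDRange style: the body computes only the output element y[gid].
    Parallelism comes from launching many work-items."""
    acc = 0.0
    for k in range(len(x)):
        acc += a[gid][k] * x[k]
    y[gid] = acc


def launch_ndrange(global_size: int, a: Matrix, x: Vector, y: Vector) -> None:
    """Stand-in for an NDRange launch: run the kernel once per global id.
    A real OpenCL runtime would schedule the work-items concurrently."""
    for gid in range(global_size):
        gemv_ndrange_kernel(gid, a, x, y)


def gemv_single_work_item(a: Matrix, x: Vector, y: Vector) -> None:
    """Single work-item style: one invocation owns the whole loop nest.
    On an FPGA, the compiler can pipeline these loops (ideally starting a new
    iteration every clock cycle) and the inner loop can be unrolled."""
    for i in range(len(a)):
        acc = 0.0
        for k in range(len(x)):
            acc += a[i][k] * x[k]
        y[i] = acc


if __name__ == "__main__":
    a = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
    x = [1.0, 1.0]
    y_nd = [0.0] * len(a)
    y_swi = [0.0] * len(a)
    launch_ndrange(len(a), a, x, y_nd)
    gemv_single_work_item(a, x, y_swi)
    print(y_nd, y_swi)  # -> [3.0, 7.0, 11.0] [3.0, 7.0, 11.0]
```

Both versions compute the same result; the difference lies in how much of the loop structure is visible to the compiler in a single kernel body.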

Another optimization approach is to raise the FPGA logic clock frequency. Stratix 10 FPGAs feature Hyperflex technology, which inserts registers into routing resources during placement and routing and can thus dramatically increase the achievable design frequency [26]. The current implementation cannot enable Hyperflex optimization, because in the 19.2 release this feature is only available for single work-item designs that meet stringent conditions. Rewriting the kernels in that style could therefore also increase the clock frequency significantly. Enlarging the DDR storage size and bandwidth of the FPGA board can also improve performance: DDR bandwidth remains a limitation compared with GPUs and CPUs, and multiple DDR banks can mitigate it. Finally, with the development of retraining and quantization approaches, lower bitwidths for training and inference are another important direction for performance optimization. Compared with single-precision floating point, Int8 and even Int4 can significantly improve DSP efficiency, reduce intermediate data storage and DDR bandwidth requirements, and yield a several-fold increase in overall CNN processing capability. This would make FPGA solutions more competitive with GPUs and dedicated ASICs in terms of Int8 and Int4 computation capability.
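As a rough illustration of why lower bitwidths help, the sketch below applies symmetric per-tensor linear quantization (one common scheme among several, not necessarily the one a future FeCaffe version would adopt) and computes the densely packed storage for FP32, Int8 and Int4 values. Assuming values are packed without padding, the storage ratio is also the ratio of DDR traffic needed to move the same tensor. Function names are hypothetical.

```python
import math
from typing import List, Tuple


def quantize_symmetric(weights: List[float], bits: int = 8) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization to signed `bits`-bit integers."""
    qmax = 2 ** (bits - 1) - 1  # 127 for Int8, 7 for Int4
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0  # avoid dividing by zero
    # Round to the nearest integer level and clamp to the symmetric range.
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    """Map integer levels back to approximate real values."""
    return [v * scale for v in q]


def storage_bytes(n: int, bits: int) -> int:
    """Bytes needed to store n values of the given bitwidth, densely packed."""
    return math.ceil(n * bits / 8)


if __name__ == "__main__":
    weights = [0.5, -1.0, 0.25, 0.0]
    for bits in (8, 4):
        q, scale = quantize_symmetric(weights, bits)
        err = max(abs(a - b) for a, b in zip(dequantize(q, scale), weights))
        print(f"Int{bits}: levels={q}, max error={err:.4f}")
    n = 1_000_000
    for bits in (32, 8, 4):
        print(f"{bits:>2}-bit storage for {n} values: {storage_bytes(n, bits)} bytes")
```

Rounding to the nearest level bounds the per-value error by half the scale, while storage shrinks by 4x (Int8) and 8x (Int4) relative to FP32.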

## 5.2 System Pipeline-level

Currently, FeCaffe uses a synchronous interface to manage communication for higher-level function invocations: the CPU launches FPGA kernels in sequence and does not start the next kernel until the current one has completely finished. As a result, data transfers between the CPU and FPGA cannot be overlapped with computation and are counted as kernel overhead in the performance measurements. Meanwhile, the FPGA cannot operate continuously because it has to wait during data transfers, which lowers acceleration efficiency. This discontinuous kernel execution can be observed with Intel VTune Amplifier in Figure 4. One way to address this issue is an asynchronous mechanism between the CPU and FPGA. With an asynchronous interface, the host can place several kernel launches into the invocation queue, so that PCIe data transfers for upcoming kernels are prefetched while the FPGA executes the current kernel, keeping the FPGA continuously busy. The data-transfer overhead can then be overlapped in the frame throughput calculation, and continuous FPGA operation maximizes throughput at the system pipeline level.
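The benefit of the asynchronous interface can be estimated with a simple two-stage timing model: PCIe transfers on one resource and kernel execution on the FPGA on another. The sketch below is a model under stated assumptions, not a measurement: it assumes enough device buffers that transfers can be queued arbitrarily far ahead, ignores result read-back, and uses hypothetical per-kernel times. In OpenCL host code, this kind of overlap is typically obtained by issuing non-blocking `clEnqueueWriteBuffer` calls (`blocking_write` set to `CL_FALSE`) on a command queue separate from the kernel launches, with events expressing the dependency between each transfer and its kernel.

```python
from typing import Sequence


def synchronous_time(transfers: Sequence[float], computes: Sequence[float]) -> float:
    """Blocking flow: transfer data for kernel i, run kernel i, then move on.
    Nothing overlaps, so the total is the sum of all stages."""
    return sum(t + c for t, c in zip(transfers, computes))


def asynchronous_time(transfers: Sequence[float], computes: Sequence[float]) -> float:
    """Overlapped flow: transfers run back to back on the PCIe link while the FPGA
    executes earlier kernels. Kernel i starts once its own input has arrived and
    kernel i-1 has finished (assumes unlimited device buffers)."""
    transfer_done = 0.0  # completion time of the latest queued transfer
    compute_done = 0.0   # completion time of the latest kernel
    for t, c in zip(transfers, computes):
        transfer_done += t
        compute_done = max(compute_done, transfer_done) + c
    return compute_done


if __name__ == "__main__":
    # Hypothetical per-kernel times (ms): 2 ms transfer and 3 ms compute each.
    transfers = [2.0, 2.0, 2.0]
    computes = [3.0, 3.0, 3.0]
    print(synchronous_time(transfers, computes))   # -> 15.0
    print(asynchronous_time(transfers, computes))  # -> 11.0
```

When kernels dominate, the overlapped total approaches the first transfer plus the sum of kernel times; when transfers dominate, it approaches the sum of transfers plus the last kernel. In both cases it never exceeds the synchronous total.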