## Scaling Laws for Neural Language Models

- Jared Kaplan∗ (Johns Hopkins University, OpenAI), jaredk@jhu.edu

- Sam McCandlish∗ (OpenAI), sam@openai.com

- Tom Henighan (OpenAI), henighan@openai.com

- Tom B. Brown (OpenAI), tom@openai.com

- Benjamin Chess (OpenAI), bchess@openai.com

- Rewon Child (OpenAI), rewon@openai.com

- Scott Gray (OpenAI), scott@openai.com

- Alec Radford (OpenAI), alec@openai.com

- Jeffrey Wu (OpenAI), jeffwu@openai.com

- Dario Amodei (OpenAI), damodei@openai.com

## Abstract

We study empirical scaling laws for language model performance on the cross-entropy loss. The loss scales as a power law with model size, dataset size, and the amount of compute used for training, with some trends spanning more than seven orders of magnitude. Other architectural details such as network width or depth have minimal effects within a wide range. Simple equations govern the dependence of overfitting on model/dataset size and the dependence of training speed on model size. These relationships allow us to determine the optimal allocation of a fixed compute budget. Larger models are significantly more sample-efficient, such that optimally compute-efficient training involves training very large models on a relatively modest amount of data and stopping significantly before convergence.

∗ Equal contribution.

Contributions: Jared Kaplan and Sam McCandlish led the research. Tom Henighan contributed the LSTM experiments. Tom Brown, Rewon Child, Scott Gray, and Alec Radford developed the optimized Transformer implementation. Jeff Wu, Benjamin Chess, and Alec Radford developed the text datasets. Dario Amodei provided guidance throughout the project.

## Contents

| Section | Title |
|------------|--------------------------------------------------|
| 1 | Introduction |
| 2 | Background and Methods |
| 3 | Empirical Results and Basic Power Laws |
| 4 | Charting the Infinite Data Limit and Overfitting |
| 5 | Scaling Laws with Model Size and Training Time |
| 6 | Optimal Allocation of the Compute Budget |
| 7 | Related Work |
| 8 | Discussion |
| Appendices | |
| A | Summary of Power Laws |
| B | Empirical Model of Compute-Efficient Frontier |
| C | Caveats |
| D | Supplemental Figures |

## 1 Introduction

Language provides a natural domain for the study of artificial intelligence, as the vast majority of reasoning tasks can be efficiently expressed and evaluated in language, and the world's text provides a wealth of data for unsupervised learning via generative modeling. Deep learning has recently seen rapid progress in language modeling, with state-of-the-art models [RNSS18, DCLT18, YDY+19, LOG+19, RSR+19] approaching human-level performance on many specific tasks [WPN+19], including the composition of coherent multiparagraph prompted text samples [RWC+19].

One might expect language modeling performance to depend on model architecture, the size of neural models, the computing power used to train them, and the data available for this training process. In this work we will empirically investigate the dependence of language modeling loss on all of these factors, focusing on the Transformer architecture [VSP+17, LSP+18]. The high ceiling and low floor for performance on language tasks allow us to study trends over more than seven orders of magnitude in scale.

Throughout we will observe precise power-law scalings for performance as a function of training time, context length, dataset size, model size, and compute budget.

## 1.1 Summary

Our key findings for Transformer language models are as follows:

² Here we display predicted compute when using a sufficiently small batch size. See Figure 13 for comparison to the purely empirical data.

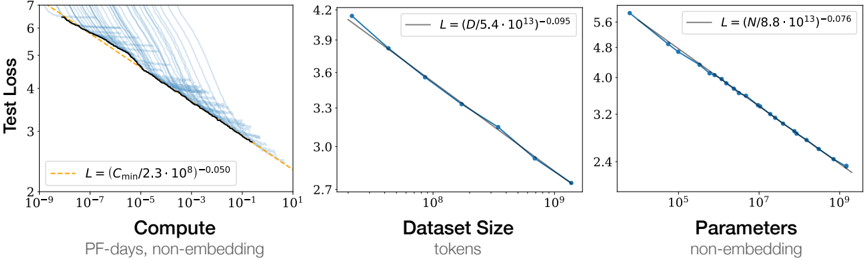

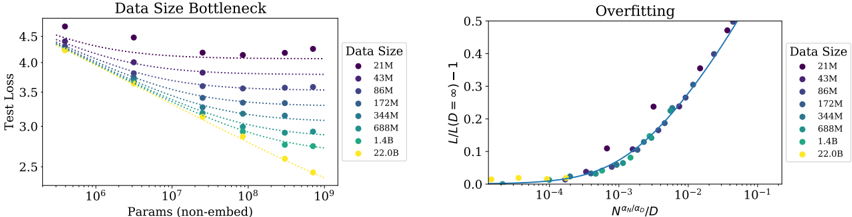

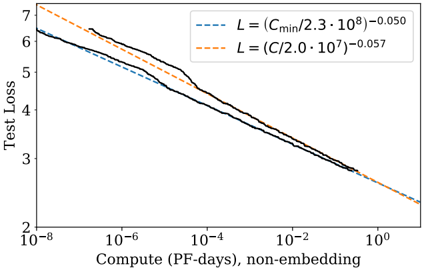

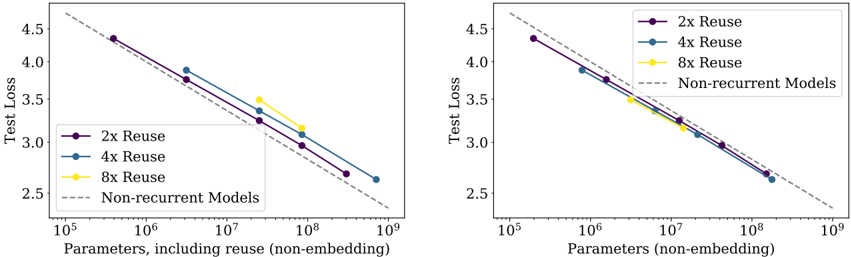

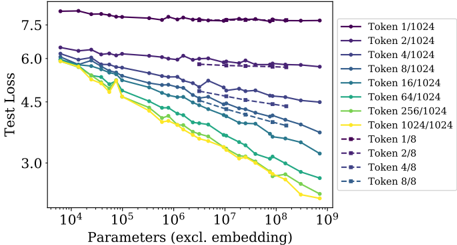

Figure 1 Language modeling performance improves smoothly as we increase the model size, dataset size, and amount of compute² used for training. For optimal performance all three factors must be scaled up in tandem. Empirical performance has a power-law relationship with each individual factor when not bottlenecked by the other two.

<details>

<summary>Image 1 Details</summary>

Three panels plot Test Loss against, from left to right, Compute (PF-days, non-embedding), Dataset Size (tokens), and Parameters (non-embedding), each on a logarithmic x-axis. The left panel shows individual training runs together with a fitted frontier; each panel includes a fitted power law, with exponents -0.050, -0.095, and -0.076 respectively.

</details>

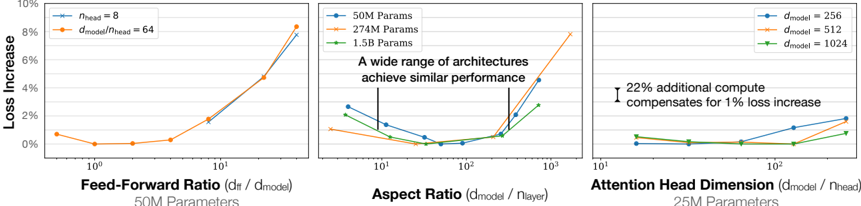

Performance depends strongly on scale, weakly on model shape: Model performance depends most strongly on scale, which consists of three factors: the number of model parameters N (excluding embeddings), the size of the dataset D, and the amount of compute C used for training. Within reasonable limits, performance depends very weakly on other architectural hyperparameters such as depth vs. width. (Section 3)

Smooth power laws: Performance has a power-law relationship with each of the three scale factors N, D, C when not bottlenecked by the other two, with trends spanning more than six orders of magnitude (see Figure 1). We observe no signs of deviation from these trends on the upper end, though performance must flatten out eventually before reaching zero loss. (Section 3)

Universality of overfitting: Performance improves predictably as long as we scale up N and D in tandem, but enters a regime of diminishing returns if either N or D is held fixed while the other increases. The performance penalty depends predictably on the ratio $N^{0.74}/D$, meaning that every time we increase the model size 8x, we only need to increase the data by roughly 5x to avoid a penalty. (Section 4)
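As a quick check on the 8x/5x arithmetic, the short Python sketch below computes how much the dataset must grow to keep $N^{0.74}/D$ fixed when the model grows. It assumes only the 0.74 exponent quoted above; the helper name `required_data_multiplier` is hypothetical and used for illustration.

```python
# Sketch: data growth needed to hold the overfitting penalty fixed.
# Assumption: the penalty depends only on N**0.74 / D, as stated in the text,
# so keeping that ratio constant requires D to grow as N**0.74.
# `required_data_multiplier` is a hypothetical helper name.

DATA_EXPONENT = 0.74  # exponent on N quoted in the text


def required_data_multiplier(model_multiplier: float,
                             exponent: float = DATA_EXPONENT) -> float:
    """Factor by which D must grow when N grows by `model_multiplier`,
    so that N**exponent / D stays constant."""
    return model_multiplier ** exponent


if __name__ == "__main__":
    for k in (2, 8, 100):
        print(f"{k}x larger model -> {required_data_multiplier(k):.2f}x more data")
```

For an 8x larger model, $8^{0.74} \approx 4.66$, which is the "roughly 5x" quoted above.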

Universality of training: Training curves follow predictable power-laws whose parameters are roughly independent of the model size. By extrapolating the early part of a training curve, we can roughly predict the loss that would be achieved if we trained for much longer. (Section 5)

Transfer improves with test performance: When we evaluate models on text with a different distribution than they were trained on, the results are strongly correlated with those on the training validation set, with a roughly constant offset in the loss. In other words, transfer to a different distribution incurs a constant penalty but otherwise improves roughly in line with performance on the training set. (Section 3.2.2)

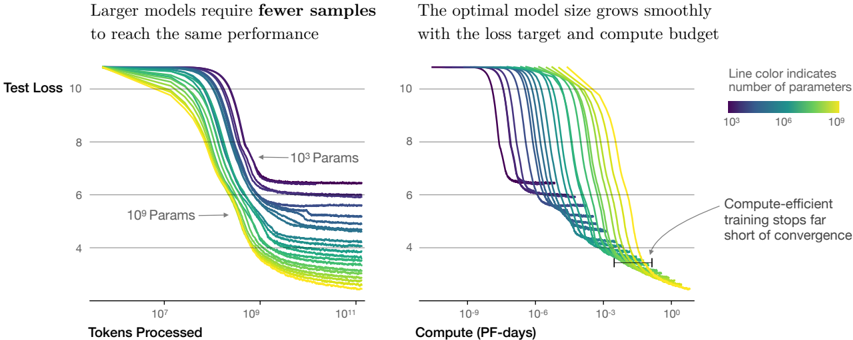

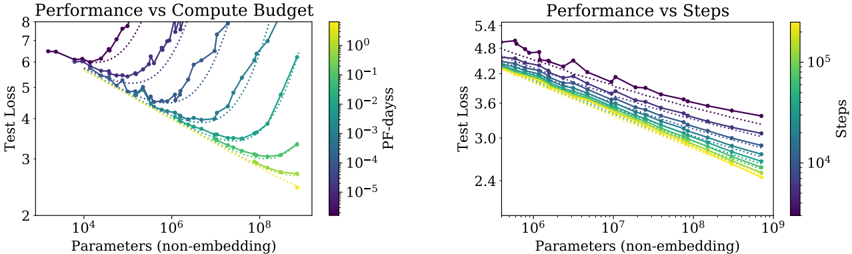

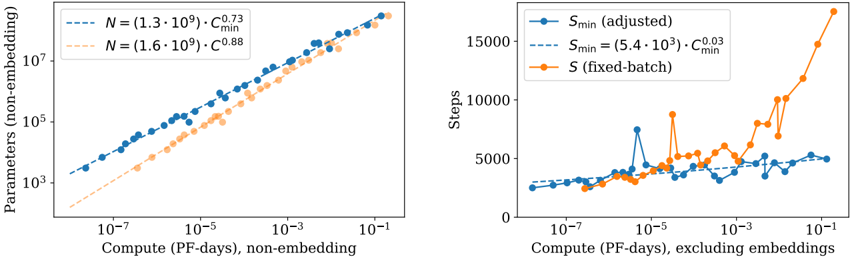

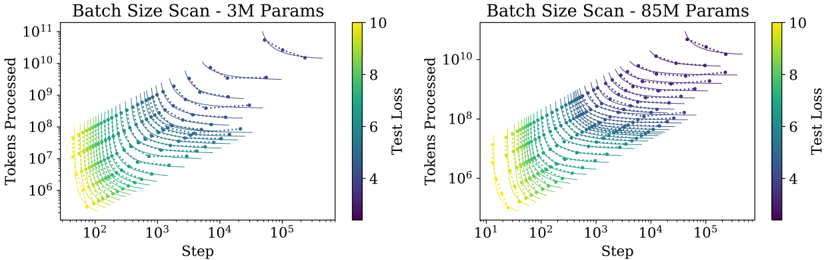

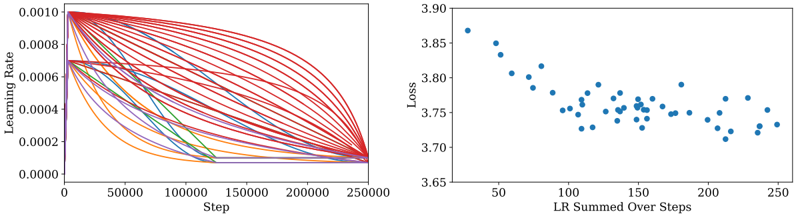

Sample efficiency: Large models are more sample-efficient than small models, reaching the same level of performance with fewer optimization steps (Figure 2) and using fewer data points (Figure 4).

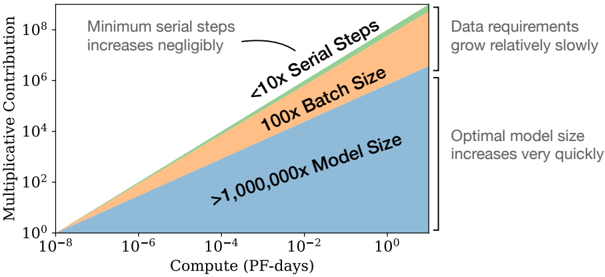

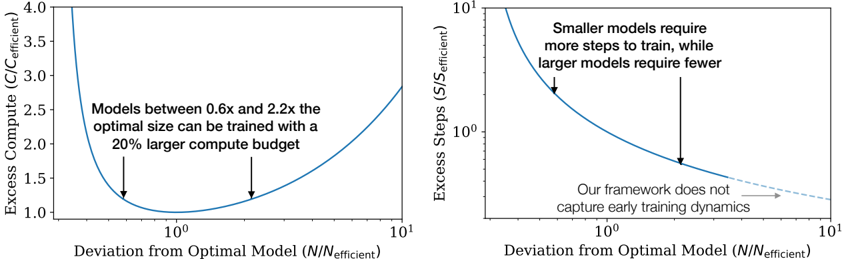

Convergence is inefficient: When working within a fixed compute budget C but without any other restrictions on the model size N or available data D, we attain optimal performance by training very large models and stopping significantly short of convergence (see Figure 3). Maximally compute-efficient training would therefore be far more sample efficient than one might expect based on training small models to convergence, with data requirements growing very slowly as $D \sim C^{0.27}$ with training compute. (Section 6)

Optimal batch size: The ideal batch size for training these models is roughly a power of the loss only, and continues to be determinable by measuring the gradient noise scale [MKAT18]; it is roughly 1-2 million tokens at convergence for the largest models we can train. (Section 5.1)

Taken together, these results show that language modeling performance improves smoothly and predictably as we appropriately scale up model size, data, and compute. We expect that larger language models will perform better and be more sample efficient than current models.

Figure 2 We show a series of language model training runs, with models ranging in size from $10^3$ to $10^9$ parameters (excluding embeddings).

<details>

<summary>Image 2 Details</summary>

Two panels plot Test Loss for training runs whose line color indicates the number of parameters, from $10^3$ to $10^9$. Left panel, "Larger models require fewer samples to reach the same performance": Test Loss versus Tokens Processed (log scale). Right panel, "The optimal model size grows smoothly with the loss target and compute budget": Test Loss versus Compute (PF-days, log scale), annotated "Compute-efficient training stops far short of convergence".

</details>

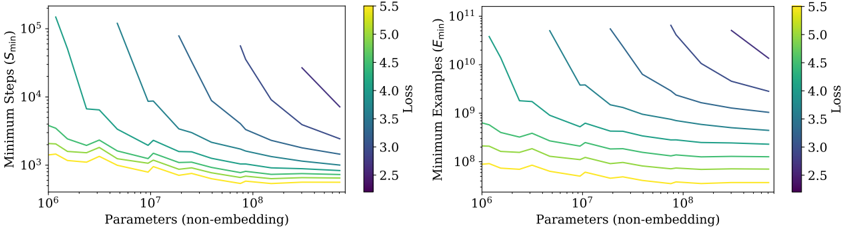

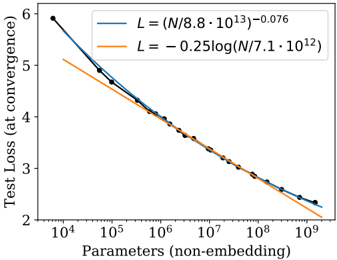

Figure 3 As more compute becomes available, we can choose how much to allocate towards training larger models, using larger batches, and training for more steps. We illustrate this for a billion-fold increase in compute. For optimally compute-efficient training, most of the increase should go towards increased model size. A relatively small increase in data is needed to avoid reuse. Of the increase in data, most can be used to increase parallelism through larger batch sizes, with only a very small increase in serial training time required.

<details>

<summary>Image 3 Details</summary>

Log-log plot of Multiplicative Contribution versus Compute (PF-days), divided into shaded regions for Model Size (>1,000,000x), Batch Size (100x), and Serial Steps (<10x), with the annotations "Optimal model size increases very quickly", "Data requirements grow relatively slowly", and "Minimum serial steps increases negligibly".

</details>

## 1.2 Summary of Scaling Laws

The test loss of a Transformer trained to autoregressively model language can be predicted using a power law when performance is limited by only one of the following: the number of non-embedding parameters N, the dataset size D, or the optimally allocated compute budget $C_{\min}$ (see Figure 1):

1. For models with a limited number of parameters, trained to convergence on sufficiently large datasets:

$$L(N) = \left(N_c/N\right)^{\alpha_N}; \quad \alpha_N \sim 0.076, \quad N_c \sim 8.8 \times 10^{13} \text{ (non-embedding parameters)} \tag{1.1}$$

2. For large models trained with a limited dataset with early stopping:

$$L(D) = \left(D_c/D\right)^{\alpha_D}; \quad \alpha_D \sim 0.095, \quad D_c \sim 5.4 \times 10^{13} \text{ (tokens)} \tag{1.2}$$

3. When training with a limited amount of compute, a sufficiently large dataset, an optimally-sized model, and a sufficiently small batch size (making optimal³ use of compute):

$$L(C_{\min}) = \left(C_c^{\min}/C_{\min}\right)^{\alpha_C^{\min}}; \quad \alpha_C^{\min} \sim 0.050, \quad C_c^{\min} \sim 3.1 \times 10^{8} \text{ (PF-days)} \tag{1.3}$$

³ We also observe an empirical power-law trend with the training compute C (Figure 1) while training at fixed batch size, but it is the trend with $C_{\min}$ that should be used to make predictions. They are related by Equation (5.5).
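The three single-factor laws can be evaluated directly. The following Python sketch is illustrative only: it hard-codes the WebText2-specific constants from Equations (1.1)-(1.3), which depend on vocabulary and tokenization, and the function names are hypothetical. Each function applies only in the regime its equation describes, where the other two factors are not a bottleneck.

```python
# Sketch of Equations (1.1)-(1.3). Constants are the WebText2-specific fits
# quoted in the text; they depend on tokenization, so treat them as illustrative.
# Function names are hypothetical.

ALPHA_N, N_C = 0.076, 8.8e13          # non-embedding parameters
ALPHA_D, D_C = 0.095, 5.4e13          # tokens
ALPHA_C_MIN, C_C_MIN = 0.050, 3.1e8   # PF-days


def loss_from_params(n: float) -> float:
    """Eq. (1.1): loss in nats when only model size N is limiting."""
    return (N_C / n) ** ALPHA_N


def loss_from_data(d: float) -> float:
    """Eq. (1.2): early-stopped loss when only dataset size D is limiting."""
    return (D_C / d) ** ALPHA_D


def loss_from_compute(c_min: float) -> float:
    """Eq. (1.3): loss when only optimally allocated compute C_min is limiting."""
    return (C_C_MIN / c_min) ** ALPHA_C_MIN


if __name__ == "__main__":
    print(f"N = 1e9 parameters -> L ~ {loss_from_params(1e9):.2f} nats")
    print(f"D = 1e10 tokens    -> L ~ {loss_from_data(1e10):.2f} nats")
    print(f"C_min = 1 PF-day   -> L ~ {loss_from_compute(1.0):.2f} nats")
```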

<details>

<summary>Image 4 Details</summary>

Left panel, "Loss vs Model and Dataset Size": Loss versus Tokens in Dataset (log scale), with one curve per model size from 393.2K to 708M parameters. Right panel, "Loss vs Model Size and Training Steps": Loss versus Estimated S_min (log scale), with line color indicating Parameters (non-embedding).

</details>


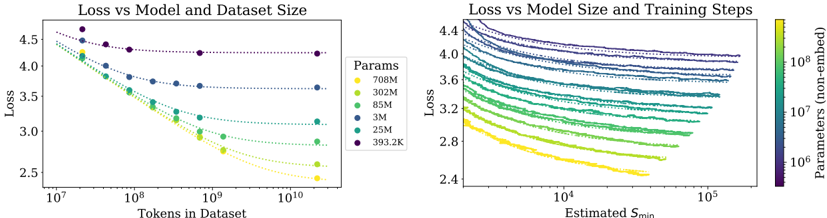

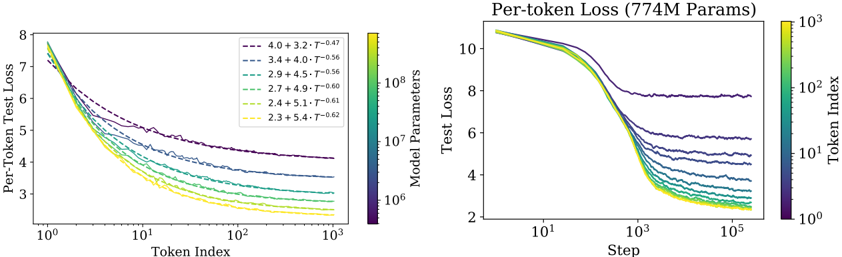

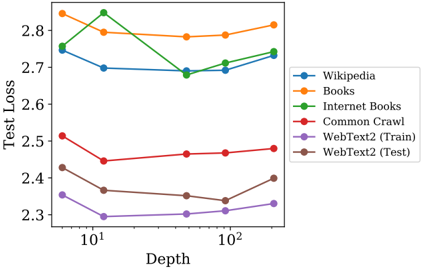

Figure 4 Left: The early-stopped test loss $L(N, D)$ varies predictably with the dataset size D and model size N according to Equation (1.5). Right: After an initial transient period, learning curves for all model sizes N can be fit with Equation (1.6), which is parameterized in terms of $S_{\min}$, the number of steps when training at large batch size (details in Section 5.1).

These relations hold across eight orders of magnitude in $C_{\min}$, six orders of magnitude in N, and over two orders of magnitude in D. They depend very weakly on model shape and other Transformer hyperparameters (depth, width, number of self-attention heads), with specific numerical values associated with the WebText2 training set [RWC+19]. The power-law exponents $\alpha_N$, $\alpha_D$, $\alpha_C^{\min}$ specify the degree of performance improvement expected as we scale up N, D, or $C_{\min}$; for example, doubling the number of parameters yields a loss that is smaller by a factor $2^{-\alpha_N} = 0.95$. The precise numerical values of $N_c$, $C_c^{\min}$, and $D_c$ depend on the vocabulary size and tokenization and hence do not have a fundamental meaning.
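To make the doubling example concrete, this short Python sketch evaluates the loss ratio $2^{-\alpha}$, which follows from $L(X) = (X_c/X)^{\alpha}$, for each exponent quoted in Equations (1.1)-(1.3). The helper `doubling_factor` is a hypothetical name.

```python
# Sketch: loss ratio from doubling one scale factor, L(2X) / L(X) = 2**(-alpha),
# which follows from L(X) = (X_c / X)**alpha. Exponents are those quoted in
# Equations (1.1)-(1.3); `doubling_factor` is a hypothetical helper name.

EXPONENTS = {"N": 0.076, "D": 0.095, "C_min": 0.050}


def doubling_factor(alpha: float) -> float:
    """Multiplicative change in loss when the scale factor is doubled."""
    return 2.0 ** (-alpha)


if __name__ == "__main__":
    for name, alpha in EXPONENTS.items():
        f = doubling_factor(alpha)
        print(f"doubling {name}: loss x {f:.3f} ({(1 - f) * 100:.1f}% lower)")
```

For $\alpha_N = 0.076$ this gives about 0.949, the factor of 0.95 quoted above; note the constants $N_c$, $D_c$, $C_c^{\min}$ cancel in the ratio, so this comparison does not depend on tokenization.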

The critical batch size, which determines the speed/efficiency tradeoff for data parallelism ([MKAT18]), also roughly obeys a power law in L :

<!-- formula-not-decoded -->

Equations (1.1) and (1.2) together suggest that as we increase the model size, we should increase the dataset size sublinearly according to $D \propto N^{\alpha_N/\alpha_D} \sim N^{0.74}$. In fact, we find that a single equation combining (1.1) and (1.2) governs the simultaneous dependence on N and D, and hence the degree of overfitting:

$$L(N, D) = \left[\left(\frac{N_c}{N}\right)^{\frac{\alpha_N}{\alpha_D}} + \frac{D_c}{D}\right]^{\alpha_D} \tag{1.5}$$

with fits pictured on the left in Figure 4. We conjecture that this functional form may also parameterize the trained log-likelihood for other generative modeling tasks.
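A minimal Python sketch of Equation (1.5) follows. The text here does not list separate fitted constants for (1.5), so this sketch assumes the values from (1.1) and (1.2); with that choice the two limits hold by construction: as $D \to \infty$ it reduces to $L(N)$, and as $N \to \infty$ it reduces to $L(D)$. The names `loss_nd` and `overfitting_penalty` are hypothetical.

```python
# Sketch of Equation (1.5), L(N, D). Assumption: the constants from
# Equations (1.1) and (1.2) are reused, which makes both limits exact.
# Function names are hypothetical.

ALPHA_N, N_C = 0.076, 8.8e13
ALPHA_D, D_C = 0.095, 5.4e13


def loss_nd(n: float, d: float) -> float:
    """Early-stopped loss for N non-embedding parameters and D tokens."""
    return ((N_C / n) ** (ALPHA_N / ALPHA_D) + D_C / d) ** ALPHA_D


def overfitting_penalty(n: float, d: float) -> float:
    """Fractional excess of L(N, D) over the infinite-data loss L(N)."""
    return loss_nd(n, d) / (N_C / n) ** ALPHA_N - 1.0


if __name__ == "__main__":
    for d in (1e8, 1e9, 1e10):
        print(f"N = 1e9, D = {d:.0e}: L = {loss_nd(1e9, d):.3f}, "
              f"penalty = {overfitting_penalty(1e9, d):.1%}")
```

The penalty shrinks toward zero as D grows at fixed N, which is the diminishing-returns regime described in the summary.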

When training a given model for a finite number of parameter update steps S in the infinite data limit, after an initial transient period, the learning curves can be accurately fit by (see the right panel of Figure 4)

$$L(N, S) = \left(\frac{N_c}{N}\right)^{\alpha_N} + \left(\frac{S_c}{S_{\min}(S)}\right)^{\alpha_S} \tag{1.6}$$

where $S_c \approx 2.1 \times 10^3$ and $\alpha_S \approx 0.76$, and $S_{\min}(S)$ is the minimum possible number of optimization steps (parameter updates) estimated using Equation (5.4).
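Equation (1.6) can be sketched the same way. This Python example takes $S_{\min}$ as an input, since the estimator of Equation (5.4) is not reproduced here, and it assumes $N_c$ and $\alpha_N$ carry over from Equation (1.1); $S_c$ and $\alpha_S$ are the values quoted above. The name `loss_ns` is hypothetical.

```python
# Sketch of Equation (1.6), L(N, S). S_min is taken as an input because the
# estimator of Equation (5.4) is not reproduced here. Assumption: N_c and
# alpha_N carry over from Equation (1.1). Names are hypothetical.

ALPHA_N, N_C = 0.076, 8.8e13
ALPHA_S, S_C = 0.76, 2.1e3


def loss_ns(n: float, s_min: float) -> float:
    """Loss after S_min (minimum-equivalent) steps in the infinite-data limit."""
    return (N_C / n) ** ALPHA_N + (S_C / s_min) ** ALPHA_S


if __name__ == "__main__":
    n = 1e8
    for s in (1e3, 1e4, 1e5, 1e6):
        print(f"N = {n:.0e}, S_min = {s:.0e}: L = {loss_ns(n, s):.3f} nats")
```

The second term decays toward zero as training continues, so each learning curve approaches the converged value $L(N)$ from Equation (1.1).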

When training within a fixed compute budget C , but with no other constraints, Equation (1.6) leads to the prediction that the optimal model size N , optimal batch size B , optimal number of steps S , and dataset size D should grow as

$$N \propto C^{\alpha_C^{\min}/\alpha_N}, \quad B \propto C^{\alpha_C^{\min}/\alpha_B}, \quad S \propto C^{\alpha_C^{\min}/\alpha_S}, \quad D = B \cdot S \tag{1.7}$$

with

<!-- formula-not-decoded -->

which closely matches the empirically optimal results $N \propto C_{\min}^{0.73}$, $B \propto C_{\min}^{0.24}$, and $S \propto C_{\min}^{0.03}$. As the computational budget C increases, it should be spent primarily on larger models, without dramatic increases in training time or dataset size (see Figure 3). This also implies that as models grow larger, they become increasingly sample efficient. In practice, researchers typically train smaller models for longer than would be maximally compute-efficient because of hardware constraints. Optimal performance depends on total compute as a power law (see Equation (1.3)).
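The empirical exponents above can be turned into growth factors. The Python sketch below applies $N \propto C_{\min}^{0.73}$, $B \propto C_{\min}^{0.24}$, and $S \propto C_{\min}^{0.03}$ to a billion-fold increase in compute, the scenario illustrated in Figure 3, and derives the data growth from $D = B \cdot S$. The proportionality constants are not given here, so only ratios are meaningful; `growth_factors` is a hypothetical helper.

```python
# Sketch: growth factors for a compute-efficient run when C_min grows by a
# given multiplier, using the empirical exponents N ~ C^0.73, B ~ C^0.24,
# S ~ C^0.03 quoted in the text. Only ratios are meaningful because the
# proportionality constants are not given. `growth_factors` is hypothetical.

EXPONENTS = {"N": 0.73, "B": 0.24, "S": 0.03}


def growth_factors(compute_multiplier: float) -> dict:
    factors = {name: compute_multiplier ** e for name, e in EXPONENTS.items()}
    factors["D"] = factors["B"] * factors["S"]  # D = B * S, Equation (1.7)
    return factors


if __name__ == "__main__":
    for name, factor in growth_factors(1e9).items():
        print(f"{name}: x{factor:.3g}")
```

For $10^9$ times more compute this gives roughly 3.7 million times larger models, about 145 times larger batches, fewer than 2 times as many serial steps, and about 270 times more data, in line with Figure 3 and with $D \sim C^{0.27}$ (since $0.24 + 0.03 = 0.27$).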

We provide some basic theoretical motivation for Equation (1.5), an analysis of learning curve fits and their implications for training time, and a breakdown of our results per token. We also make some brief comparisons to LSTMs and recurrent Transformers [DGV+18].

## 1.3 Notation

We use the following notation:

- L - the cross entropy loss in nats. Typically it will be averaged over the tokens in a context, but in some cases we report the loss for specific tokens within the context.

- N - the number of model parameters, excluding all vocabulary and positional embeddings

- C ≈ 6NBS - an estimate of the total non-embedding training compute, where B is the batch size and S is the number of training steps (i.e. parameter updates). We quote numerical values in PF-days, where one PF-day = $10^{15} \times 24 \times 3600 = 8.64 \times 10^{19}$ floating point operations.

- D - the dataset size in tokens

- B crit - the critical batch size [MKAT18], defined and discussed in Section 5.1. Training at the critical batch size provides a roughly optimal compromise between time and compute efficiency.

- C min - an estimate of the minimum amount of non-embedding compute to reach a given value of the loss. This is the training compute that would be used if the model were trained at a batch size much less than the critical batch size.

- S min - an estimate of the minimal number of training steps needed to reach a given value of the loss. This is also the number of training steps that would be used if the model were trained at a batch size much greater than the critical batch size.

- $\alpha_X$ - power-law exponents for the scaling of the loss as $L(X) \propto 1/X^{\alpha_X}$, where X can be any of N, D, C, S, B, $C^{\min}$.
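The compute estimate C ≈ 6NBS and the PF-day unit can be combined in a short worked example. The sketch below assumes B is counted in tokens, so that 6NBS counts floating point operations. It uses the default training setup from Section 2.2 (batches of 512 sequences of 1024 tokens, i.e. $2^{19}$ tokens, for $2.5 \times 10^5$ steps) with a hypothetical $10^8$-parameter model. The function name is illustrative.

```python
# One petaflop/s sustained for one day, in floating point operations.
PF_DAY_FLOPS = 1e15 * 24 * 3600  # = 8.64e19


def training_compute_pf_days(n_params: float, batch_tokens: float, steps: float) -> float:
    """Estimate non-embedding training compute C ≈ 6·N·B·S and convert it to PF-days.

    batch_tokens is the batch size B measured in tokens (an assumption of this sketch).
    """
    flops = 6.0 * n_params * batch_tokens * steps
    return flops / PF_DAY_FLOPS


# Hypothetical 1e8-parameter model with the default batch size and step count.
print(f"{training_compute_pf_days(1e8, 2**19, 2.5e5):.2f} PF-days")
```

For this configuration the estimate comes to roughly 0.91 PF-days.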

## 2 Background and Methods

We train language models on WebText2, an extended version of the WebText [RWC + 19] dataset, tokenized using byte-pair encoding [SHB15] with a vocabulary size n vocab = 50257 . We optimize the autoregressive log-likelihood (i.e. cross-entropy loss) averaged over a 1024-token context, which is also our principal performance metric. We record the loss on the WebText2 test distribution and on a selection of other text distributions. We primarily train decoder-only [LSP + 18, RNSS18] Transformer [VSP + 17] models, though we also train LSTM models and Universal Transformers [DGV + 18] for comparison.

## 2.1 Parameter and Compute Scaling of Transformers

We parameterize the Transformer architecture using hyperparameters n layer (number of layers), d model (dimension of the residual stream), d ff (dimension of the intermediate feed-forward layer), d attn (dimension of the attention output), and n heads (number of attention heads per layer). We include n ctx tokens in the input context, with n ctx = 1024 except where otherwise noted.

We use N to denote the model size, which we define as the number of non-embedding parameters

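$$N \approx 2 d_{model} n_{layer} \left( 2 d_{attn} + d_{ff} \right) = 12 n_{layer} d_{model}^2 \quad \text{with the standard} \quad d_{attn} = d_{ff}/4 = d_{model} \quad (2.1)$$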

where we have excluded biases and other sub-leading terms. Our models also have n vocab d model parameters in an embedding matrix, and use n ctx d model parameters for positional embeddings, but we do not include these when discussing the 'model size' N ; we will see that this produces significantly cleaner scaling laws.

Evaluating a forward pass of the Transformer involves roughly

$$C _ { f o r w a r d } \approx 2 N + 2 n _ { l a y e r } n _ { c t x } d _ { m o d e l } \quad ( 2 . 2 )$$

add-multiply operations, where the factor of two comes from the multiply-accumulate operation used in matrix multiplication. A more detailed per-operation parameter and compute count is included in Table 1.

Table 1 Parameter counts and compute (forward pass) estimates for a Transformer model. Sub-leading terms such as nonlinearities, biases, and layer normalization are omitted.

| Operation | Parameters | FLOPs per Token |
|---|---|---|
| Embed | $(n_{vocab} + n_{ctx}) d_{model}$ | $4 d_{model}$ |
| Attention: QKV | $n_{layer} d_{model} 3 d_{attn}$ | $2 n_{layer} d_{model} 3 d_{attn}$ |
| Attention: Mask | - | $2 n_{layer} n_{ctx} d_{attn}$ |
| Attention: Project | $n_{layer} d_{attn} d_{model}$ | $2 n_{layer} d_{attn} d_{model}$ |
| Feedforward | $n_{layer} 2 d_{model} d_{ff}$ | $2 n_{layer} 2 d_{model} d_{ff}$ |
| De-embed | - | $2 d_{model} n_{vocab}$ |
| Total (Non-Embedding) | $N = 2 d_{model} n_{layer} (2 d_{attn} + d_{ff})$ | $C_{forward} = 2N + 2 n_{layer} n_{ctx} d_{attn}$ |

For contexts and models with $d_{model} > n_{ctx}/12$, the context-dependent computational cost per token is a relatively small fraction of the total compute. Since we primarily study models where $d_{model} \gg n_{ctx}/12$, we do not include context-dependent terms in our training compute estimate. Accounting for the backward pass (approximately twice the compute of the forward pass), we then define the estimated non-embedding compute as C ≈ 6N floating point operations per training token.
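Table 1 and the approximation C ≈ 6N per training token can be checked numerically. The sketch below follows the non-embedding rows of Table 1. The defaults $d_{attn} = d_{model}$ and $d_{ff} = 4 d_{model}$ are assumptions matching the standard shape in Equation (2.1), and the function name is hypothetical. For the (48, 1600) shape used in [RWC + 19], the context-dependent term comes out to about 5% of forward compute, consistent with $d_{model} \gg n_{ctx}/12$.

```python
from typing import Optional


def transformer_counts(n_layer: int, d_model: int, n_ctx: int = 1024,
                       d_attn: Optional[int] = None,
                       d_ff: Optional[int] = None) -> dict:
    """Non-embedding parameters and per-token compute following Table 1.

    Biases, layer norms, nonlinearities and embeddings are ignored, as in Table 1.
    """
    d_attn = d_model if d_attn is None else d_attn   # assumed default
    d_ff = 4 * d_model if d_ff is None else d_ff      # assumed default
    n = 2 * d_model * n_layer * (2 * d_attn + d_ff)   # Equation (2.1)
    context_flops = 2 * n_layer * n_ctx * d_attn      # "Attention: Mask" row
    c_forward = 2 * n + context_flops                 # forward FLOPs per token
    return {
        "N": n,
        "C_forward": c_forward,
        "context_fraction": context_flops / c_forward,
        # Forward + backward (about 3x forward), context term dropped.
        "C_train_per_token": 6 * n,
    }


counts = transformer_counts(n_layer=48, d_model=1600)
print(counts["N"], round(counts["context_fraction"], 3))
```

The ratio of the context term to 2N is $n_{ctx}/(12 d_{model})$, which is why the threshold $d_{model} \gg n_{ctx}/12$ appears in the text.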

## 2.2 Training Procedures

Unless otherwise noted, we train models with the Adam optimizer [KB14] for a fixed $2.5 \times 10^5$ steps with a batch size of 512 sequences of 1024 tokens. Due to memory constraints, our largest models (more than 1B parameters) were trained with Adafactor [SS18]. We experimented with a variety of learning rates and schedules, as discussed in Appendix D.6. We found that results at convergence were largely independent of learning rate schedule. Unless otherwise noted, all training runs included in our data used a learning rate schedule with a 3000 step linear warmup followed by a cosine decay to zero.
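The default schedule (a 3000-step linear warmup followed by a cosine decay to zero) can be sketched as a function of the step index. The peak learning rate `peak_lr` is a hypothetical parameter, since this section does not give its value. The warmup convention (reaching the peak at the end of warmup) and the assumption that the decay spans the full $2.5 \times 10^5$ steps are also choices made for illustration.

```python
import math


def learning_rate(step: int, peak_lr: float, warmup_steps: int = 3000,
                  total_steps: int = 250_000) -> float:
    """Linear warmup to peak_lr, then cosine decay reaching zero at total_steps."""
    if step < warmup_steps:
        # Linear ramp: (step + 1) / warmup_steps reaches 1.0 on the last warmup step.
        return peak_lr * (step + 1) / warmup_steps
    # Fraction of the decay phase completed, clamped to [0, 1].
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    progress = min(progress, 1.0)
    return peak_lr * 0.5 * (1.0 + math.cos(math.pi * progress))


# Hypothetical peak learning rate of 1e-3.
for s in (0, 2999, 3000, 126_500, 250_000):
    print(s, learning_rate(s, 1e-3))
```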

## 2.3 Datasets

We train our models on an extended version of the WebText dataset described in [RWC + 19]. The original WebText dataset was a web scrape of outbound links from Reddit through December 2017 which received at least 3 karma. In the second version, WebText2, we added outbound Reddit links from the period of January to October 2018, also with a minimum of 3 karma. The karma threshold served as a heuristic for whether people found the link interesting or useful. The text of the new links was extracted with the Newspaper3k Python library. In total, the dataset consists of 20.3M documents containing 96 GB of text and $1.62 \times 10^{10}$ words (as defined by `wc`). We then apply the reversible tokenizer described in [RWC + 19], which yields $2.29 \times 10^{10}$ tokens. We reserve $6.6 \times 10^8$ of these tokens for use as a test set, and we also test on similarly-prepared samples of Books Corpus [ZKZ + 15], Common Crawl [Fou], English Wikipedia, and a collection of publicly-available Internet Books.

## 3 Empirical Results and Basic Power Laws

To characterize language model scaling we train a wide variety of models, varying a number of factors including:

- Model size (ranging in size from 768 to 1.5 billion non-embedding parameters)

- Dataset size (ranging from 22 million to 23 billion tokens)

- Shape (including depth, width, attention heads, and feed-forward dimension)

- Context length (1024 for most runs, though we also experiment with shorter contexts)

- Batch size ($2^{19}$ tokens for most runs, but we also vary it to measure the critical batch size)

<details>

<summary>Image 5 Details</summary>

Figure 5 panels (y-axis: Loss Increase, 0% to 10%; x-axes log-scaled): Feed-Forward Ratio ($d_{ff}/d_{model}$); Aspect Ratio ($d_{model}/n_{layer}$), annotated "A wide range of architectures achieve similar performance"; Attention Head Dimension ($d_{model}/n_{head}$), with curves for $d_{model}$ = 256, 512, and 1024, annotated "22% additional compute compensates for 1% loss increase". Panel labels include "50M Parameters" and "25M Parameters".

</details>

Figure 5 Performance depends very mildly on model shape when the total number of non-embedding parameters N is held fixed. The loss varies only a few percent over a wide range of shapes. Small differences in parameter counts are compensated for by using the fit to L(N) as a baseline. Aspect ratio in particular can vary by a factor of 40 while only slightly impacting performance; an $(n_{layer}, d_{model}) = (6, 4288)$ model reaches a loss within 3% of the (48, 1600) model used in [RWC + 19].

<details>

<summary>Image 6 Details</summary>

Figure 6 panels: Test Loss versus Parameters (with embedding) and Test Loss versus Parameters (non-embedding), both with a log-scaled x-axis; curves are grouped by depth (0 layers in the left panel only; 1, 2, 3, 6, and more than 6 layers in both).

</details>

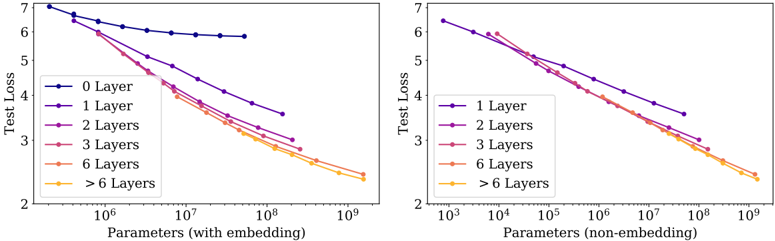

Figure 6 Left: When we include embedding parameters, performance appears to depend strongly on the number of layers in addition to the number of parameters. Right: When we exclude embedding parameters, the performance of models with different depths converges to a single trend. Only models with fewer than 2 layers or with extreme depth-to-width ratios deviate significantly from the trend.

In this section we will display data along with empirically-motivated fits, deferring theoretical analysis to later sections.

## 3.1 Approximate Transformer Shape and Hyperparameter Independence

Transformer performance depends very weakly on the shape parameters $n_{layer}$, $n_{heads}$, and $d_{ff}$ when we hold the total non-embedding parameter count N fixed. To establish these results we trained models with fixed size while varying a single hyperparameter. This was simplest for the case of $n_{heads}$. When varying $n_{layer}$, we simultaneously varied $d_{model}$ while keeping $N \approx 12 n_{layer} d_{model}^2$ fixed. Similarly, to vary $d_{ff}$ at fixed model size we also simultaneously varied the $d_{model}$ parameter, as required by the parameter counts in Table 1. Independence of $n_{layer}$ would follow if deeper Transformers effectively behave as ensembles of shallower models, as has been suggested for ResNets [VWB16]. The results are shown in Figure 5.
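Holding N fixed while changing depth means re-solving for the width. A minimal sketch, assuming the approximation $N \approx 12 n_{layer} d_{model}^2$ (i.e. $d_{attn} = d_{model}$ and $d_{ff} = 4 d_{model}$); the helper names are hypothetical. Applied to the two shapes compared in the Figure 5 caption, it gives about $1.3 \times 10^9$ and $1.5 \times 10^9$ parameters, with aspect ratios $d_{model}/n_{layer}$ of roughly 715 and 33.

```python
import math


def approx_params(n_layer: int, d_model: int) -> int:
    """Non-embedding parameter count under N ≈ 12 * n_layer * d_model**2."""
    return 12 * n_layer * d_model ** 2


def d_model_for_fixed_n(n_params: float, n_layer: int) -> int:
    """Width that keeps N ≈ 12 * n_layer * d_model**2 fixed at a given depth."""
    return round(math.sqrt(n_params / (12 * n_layer)))


# The two shapes compared in the Figure 5 caption.
for n_layer, d_model in [(6, 4288), (48, 1600)]:
    print(n_layer, d_model,
          f"N~{approx_params(n_layer, d_model):.2e}",
          f"aspect={d_model / n_layer:.0f}")
```

A depth sweep at fixed N would call `d_model_for_fixed_n` once per candidate `n_layer`; in practice the width would also be rounded to a hardware-friendly multiple, which this sketch ignores.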

## 3.2 Performance with Non-Embedding Parameter Count N

In Figure 6 we display the performance of a wide variety of models, ranging from small models with shape ( n layer , d model ) = (2 , 128) through billion-parameter models, ranging in shape from (6 , 4288) through (207 , 768) . Here we have trained to near convergence on the full WebText2 dataset and observe no overfitting (except possibly for the very largest models).

As shown in Figure 1, we find a steady trend with non-embedding parameter count N , which can be fit to the first term of Equation (1.5), so that

$$L(N) \approx \left( \frac{N_c}{N} \right)^{\alpha_N} \quad (3.1)$$

Figure 7

<details>

<summary>Image 7 Details</summary>

Figure 7 panels: "Transformers asymptotically outperform LSTMs due to improved use of long contexts" (Test Loss versus Parameters (non-embedding), comparing Transformers with 1-, 2-, and 4-layer LSTMs); "LSTM plateaus after <100 tokens, Transformer improves through the whole context" (Per-token Test Loss versus Token Index in Context, for models of several parameter counts).

</details>

To observe these trends it is crucial to study performance as a function of N ; if we instead use the total parameter count (including the embedding parameters) the trend is somewhat obscured (see Figure 6). This suggests that the embedding matrix can be made smaller without impacting performance, as has been seen in recent work [LCG + 19].

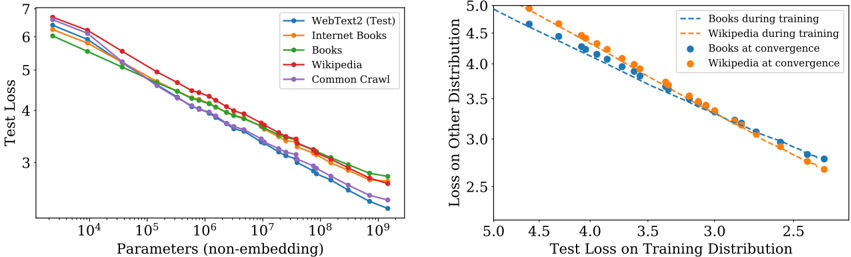

Although these models have been trained on the WebText2 dataset, their test loss on a variety of other datasets is also a power-law in N with nearly identical power, as shown in Figure 8.

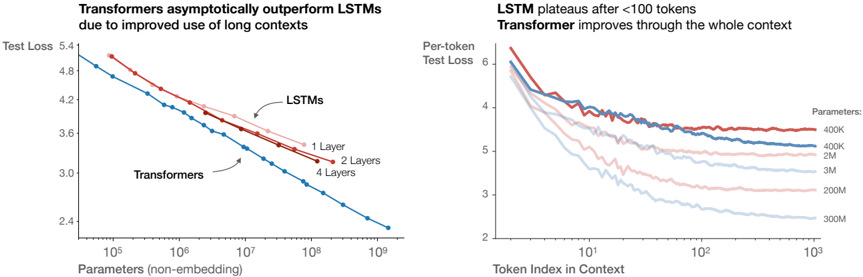

## 3.2.1 Comparing to LSTMs and Universal Transformers

In Figure 7 we compare LSTM and Transformer performance as a function of non-embedding parameter count N. The LSTMs were trained with the same dataset and context length. We see from these figures that the LSTMs perform as well as Transformers for tokens appearing early in the context, but cannot match the Transformer performance for later tokens. We present power-law relationships between performance and context position in Appendix D.5, where increasingly large powers for larger models suggest improved ability to quickly recognize patterns.

We also compare the performance of standard Transformers to recurrent Transformers [DGV + 18] in Figure 17 in the appendix. These models re-use parameters, and so perform slightly better as a function of N , at the cost of additional compute per-parameter.

## 3.2.2 Generalization Among Data Distributions

We have also tested our models on a set of additional text data distributions. The test loss on these datasets as a function of model size is shown in Figure 8; in all cases the models were trained only on the WebText2 dataset. We see that the loss on these other data distributions improves smoothly with model size, in direct parallel with the improvement on WebText2. We find that generalization depends almost exclusively on the in-distribution validation loss, and does not depend on the duration of training or proximity to convergence. We also observe no dependence on model depth (see Appendix D.8).

## 3.3 Performance with Dataset Size and Compute

We display empirical trends for the test loss as a function of dataset size D (in tokens) and training compute C in Figure 1.

For the trend with D we trained a model with $(n_{layer}, n_{embd}) = (36, 1280)$ on fixed subsets of the WebText2 dataset. We stopped training once the test loss ceased to decrease. We see that the resulting test losses can be fit with a simple power law

$$L(D) \approx \left( \frac{D_c}{D} \right)^{\alpha_D} \quad (3.2)$$

in the dataset size. The data and fit appear in Figure 1.

The total amount of non-embedding compute used during training can be estimated as C = 6 NBS , where B is the batch size, S is the number of parameter updates, and the factor of 6 accounts for the forward and backward passes. Thus for a given value of C we can scan over all models with various N to find the model

using three principles:

1. Changes in vocabulary size or tokenization are expected to rescale the loss by an overall factor. The parameterization of L ( N,D ) (and all models of the loss) must naturally allow for such a rescaling.

2. Fixing D and sending N → ∞ , the overall loss should approach L ( D ) . Conversely, fixing N and sending D →∞ the loss must approach L ( N ) .

3. L ( N,D ) should be analytic at D = ∞ , so that it has a series expansion in 1 /D with integer powers. Theoretical support for this principle is significantly weaker than for the first two.

Our choice of L ( N,D ) satisfies the first requirement because we can rescale N c , D c with changes in the vocabulary. This also implies that the values of N c , D c have no fundamental meaning.

<details>

<summary>Image 8 Details</summary>

### Visual Description

\n

## Charts: Training Loss vs. Parameters & Loss Distribution

### Overview

The image presents two charts. The left chart depicts the relationship between the number of parameters (non-embedding) and the test loss for different datasets. The right chart shows the loss on another distribution plotted against the test loss on the training distribution, for Books and Wikipedia datasets during training and at convergence.

### Components/Axes

**Left Chart:**

* **X-axis:** Parameters (non-embedding), logarithmic scale from approximately 10<sup>4</sup> to 10<sup>9</sup>.

* **Y-axis:** Test Loss, linear scale from approximately 2.5 to 7.

* **Data Series:**

* WebText2 (Test) - Blue line

* Internet Books - Orange line

* Books - Green line

* Wikipedia - Yellow line

* Common Crawl - Purple line

**Right Chart:**

* **X-axis:** Test Loss on Training Distribution, linear scale from approximately 2.0 to 5.0.

* **Y-axis:** Loss on Other Distribution, linear scale from approximately 2.5 to 5.0.

* **Data Series:**

* Books during training - Light blue dashed line

* Wikipedia during training - Orange dashed line

* Books at convergence - Blue dots

* Wikipedia at convergence - Orange dots

### Detailed Analysis or Content Details

**Left Chart:**

* **WebText2 (Test):** The blue line starts at approximately 6.2 at 10<sup>4</sup> parameters and decreases steadily to approximately 2.7 at 10<sup>9</sup> parameters.

* **Internet Books:** The orange line starts at approximately 6.0 at 10<sup>4</sup> parameters and decreases to approximately 3.2 at 10<sup>9</sup> parameters.

* **Books:** The green line starts at approximately 6.1 at 10<sup>4</sup> parameters and decreases to approximately 3.0 at 10<sup>9</sup> parameters.

* **Wikipedia:** The yellow line starts at approximately 6.1 at 10<sup>4</sup> parameters and decreases to approximately 3.1 at 10<sup>9</sup> parameters.

* **Common Crawl:** The purple line starts at approximately 6.3 at 10<sup>4</sup> parameters and decreases to approximately 3.3 at 10<sup>9</sup> parameters.

* All lines exhibit a decreasing trend, indicating that increasing the number of parameters generally reduces the test loss. The rate of decrease slows down as the number of parameters increases.

**Right Chart:**

* **Books during training:** The light blue dashed line starts at approximately (4.8, 4.8) and decreases to approximately (2.5, 3.0).

* **Wikipedia during training:** The orange dashed line starts at approximately (4.8, 4.6) and decreases to approximately (2.5, 3.1).

* **Books at convergence:** The blue dots are at approximately (3.0, 3.0), (3.2, 2.8), (3.5, 2.6), (4.0, 2.5), (4.5, 2.4).

* **Wikipedia at convergence:** The orange dots are at approximately (3.0, 3.2), (3.2, 2.9), (3.5, 2.7), (4.0, 2.6), (4.5, 2.5).

* Both datasets show a negative correlation between test loss on the training distribution and loss on the other distribution.

### Key Observations

* In the left chart, WebText2 consistently exhibits the lowest test loss across all parameter ranges.

* The rate of loss reduction diminishes as the number of parameters increases for all datasets.

* In the right chart, the training curves (dashed lines) are relatively linear, while the convergence points (dots) show a slight curvature.

* The convergence points for Books and Wikipedia are close to each other, suggesting similar performance at convergence.

### Interpretation

The left chart demonstrates the scaling behavior of language models with varying dataset sizes. The consistent lower loss of WebText2 suggests that this dataset is more effective for training, potentially due to its quality or diversity. The diminishing returns of increasing parameters indicate a point of saturation where adding more parameters yields less significant improvements in performance.

The right chart illustrates the concept of generalization. The negative correlation between loss on the training distribution and loss on another distribution suggests that models performing well on the training data also tend to generalize better to unseen data. The convergence points indicate that both Books and Wikipedia datasets can achieve comparable performance when trained to convergence. The difference between the training curves and convergence points highlights the impact of training on model generalization. The fact that the convergence points are not perfectly aligned suggests that the datasets have different characteristics that affect their generalization performance.

</details>

∑∐√∐⌉˜√˜√√({{}{∖˜⌉̂˜̂̂]{˜}

}}⌋√(̂√√]{˜(√√∐]{]{˜

∨]⌋]√˜̂]∐(̂√√]{˜(√√∐]{]{˜

∨]⌋]√˜̂]∐(∐√(̂}{√˜√˜˜{̂˜

⋂˜√√(⊕}√√(}{(⋂√∐]{]{˜(〈]√√√]̂√√]}{

Figure 8 Left: Generalization performance to other data distributions improves smoothly with model size, with only a small and very slowly growing offset from the WebText2 training distribution. Right: Generalization performance depends only on training distribution performance, and not on the phase of training. We compare generalization of converged models (points) to that of a single large model (dashed curves) as it trains.

with the best performance on step $S = C/(6NB)$. Note that in these results the batch size $B$ remains fixed for all models, which means that these empirical results are not truly optimal. We will account for this in later sections using an adjusted $C_{\min}$ to produce cleaner trends.

The result appears as the heavy black line on the left-hand plot in Figure 1. It can be fit with

<!-- formula-not-decoded -->

The figure also includes images of individual learning curves to clarify when individual models are optimal. We will study the optimal allocation of compute more closely later on. The data strongly suggest that sample efficiency improves with model size, and we also illustrate this directly in Figure 19 in the appendix.
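The scan over model sizes described above can be sketched in a few lines of Python. This is a minimal sketch, assuming the compute estimate $C \approx 6NBS$ used throughout the paper (non-embedding parameters $N$, batch size $B$ in tokens, $S$ steps), so a model of size $N$ spends a budget $C$ by step $S = C/(6NB)$. The learning curves are hypothetical stand-ins for measured test-loss curves, and the function names are illustrative rather than taken from the paper.

```python
from typing import Callable, Dict

# Hypothetical type: maps a training step S to the measured test loss at that step.
LearningCurve = Callable[[float], float]


def steps_for_budget(compute: float, n_params: float, batch_tokens: float) -> float:
    """Step S at which a model with n_params has used `compute`, assuming C = 6 * N * B * S."""
    return compute / (6.0 * n_params * batch_tokens)


def best_model_at_budget(
    compute: float,
    curves: Dict[float, LearningCurve],
    batch_tokens: float,
) -> float:
    """Return the model size N whose curve is lowest at its own step S = C / (6 N B).

    The batch size is the same for every model, mirroring the caveat in the text
    that results obtained this way are not truly compute-optimal.
    """
    return min(
        curves,
        key=lambda n: curves[n](steps_for_budget(compute, n, batch_tokens)),
    )


if __name__ == "__main__":
    # Toy curves with made-up numbers: the large model is worse early, better late.
    toy_curves = {
        1e6: lambda s: 5.0,
        1e9: lambda s: 10.0 if s < 100 else 3.0,
    }
    batch = 5e5  # tokens per batch, arbitrary for the example
    for budget in (3e16, 3e18):
        print(budget, best_model_at_budget(budget, toy_curves, batch))
```

With the toy curves, the small model wins at the smaller budget and the large model wins once the budget buys it enough steps, which is the crossover pattern the individual learning curves in the figure are meant to expose.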

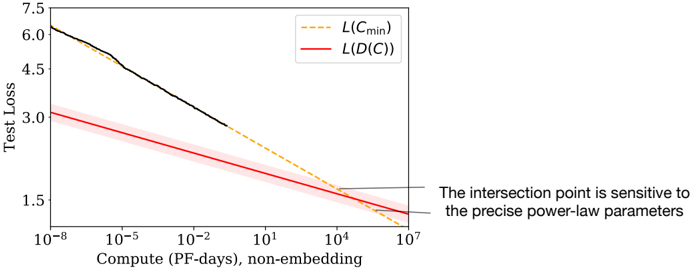

## 4 Charting the Infinite Data Limit and Overfitting

In Section 3 we found a number of basic scaling laws for language modeling performance. Here we will study the performance of a model of size N trained on a dataset with D tokens while varying N and D simultaneously. We will empirically demonstrate that the optimally trained test loss accords with the scaling law of Equation (1.5). This provides guidance on how much data we would need to train models of increasing size while keeping overfitting under control.

## 4.1 Proposed L ( N,D ) Equation

We have chosen the parameterization (1.5) (repeated here for convenience):

$$L(N,D) = \left[\left(\frac{N_c}{N}\right)^{\alpha_N/\alpha_D} + \frac{D_c}{D}\right]^{\alpha_D}$$

Figure 9 The early-stopped test loss $L(N,D)$ depends predictably on the dataset size $D$ and model size $N$ according to Equation (1.5). Left: For large $D$, performance is a straight power law in $N$. For a smaller fixed $D$, performance stops improving as $N$ increases and the model begins to overfit. (The reverse is also true; see Figure 4.) Right: The extent of overfitting depends predominantly on the ratio $N^{\alpha_N/\alpha_D}/D$, as predicted in Equation (4.3). The line is our fit to that equation.

<details>

<summary>Image 9 Details</summary>

### Visual Description

## Charts: Data Size Bottleneck & Overfitting

### Overview

The image presents two charts side-by-side. The left chart, titled "Data Size Bottleneck", depicts the relationship between the number of parameters (non-embedding) and test loss for various data sizes. The right chart, titled "Overfitting", shows the fractional excess loss $L/L(D=\infty) - 1$ as a function of $N^{\alpha_N/\alpha_D}/D$, also for different data sizes. Both charts illustrate how data size affects model performance, specifically through the bottleneck effect and overfitting.

### Components/Axes

**Left Chart (Data Size Bottleneck):**

* **X-axis:** Params (non-embed) - Logarithmic scale, ranging from approximately 10<sup>6</sup> to 10<sup>9</sup>.

* **Y-axis:** Test Loss - Linear scale, ranging from approximately 2.4 to 4.6.

* **Legend:** Data Size, with the following categories:

* 21M (Purple)

* 43M (Dark Blue)

* 86M (Light Blue)

* 172M (Teal)

* 344M (Green)

* 688M (Dark Green)

* 1.4B (Yellow)

* 22.0B (Gold)

**Right Chart (Overfitting):**

* **X-axis:** $N^{\alpha_N/\alpha_D}/D$ - Logarithmic scale, ranging from approximately 10<sup>-5</sup> to 10<sup>-1</sup>.

* **Y-axis:** $L/L(D=\infty) - 1$ - Linear scale, ranging from approximately 0 to 0.5.

* **Legend:** Data Size, with the same categories as the left chart:

* 21M (Purple)

* 43M (Dark Blue)

* 86M (Light Blue)

* 172M (Teal)

* 344M (Green)

* 688M (Dark Green)

* 1.4B (Yellow)

* 22.0B (Gold)

### Detailed Analysis or Content Details

**Left Chart (Data Size Bottleneck):**

* **21M (Purple):** The line starts at approximately 4.5 and decreases slowly, leveling off around 3.8.

* **43M (Dark Blue):** The line starts at approximately 4.3 and decreases more rapidly than the 21M line, reaching around 3.2.

* **86M (Light Blue):** The line starts at approximately 4.1 and decreases rapidly, reaching around 2.8.

* **172M (Teal):** The line starts at approximately 3.9 and decreases rapidly, reaching around 2.7.

* **344M (Green):** The line starts at approximately 3.6 and decreases rapidly, reaching around 2.6.

* **688M (Dark Green):** The line starts at approximately 3.3 and decreases rapidly, reaching around 2.5.

* **1.4B (Yellow):** The line starts at approximately 3.1 and decreases rapidly, reaching around 2.4.

* **22.0B (Gold):** The line starts at approximately 2.9 and decreases very rapidly, reaching around 2.3.

**Right Chart (Overfitting):**

* **21M (Purple):** The line starts at approximately 0 and increases rapidly, reaching 0.45 at approximately 10<sup>-2</sup>.

* **43M (Dark Blue):** The line starts at approximately 0 and increases rapidly, reaching 0.35 at approximately 10<sup>-2</sup>.

* **86M (Light Blue):** The line starts at approximately 0 and increases rapidly, reaching 0.25 at approximately 10<sup>-2</sup>.

* **172M (Teal):** The line starts at approximately 0 and increases rapidly, reaching 0.18 at approximately 10<sup>-2</sup>.

* **344M (Green):** The line starts at approximately 0 and increases rapidly, reaching 0.12 at approximately 10<sup>-2</sup>.

* **688M (Dark Green):** The line starts at approximately 0 and increases rapidly, reaching 0.08 at approximately 10<sup>-2</sup>.

* **1.4B (Yellow):** The line starts at approximately 0 and increases rapidly, reaching 0.05 at approximately 10<sup>-2</sup>.

* **22.0B (Gold):** The line starts at approximately 0 and increases rapidly, reaching 0.03 at approximately 10<sup>-2</sup>.

### Key Observations

* In the "Data Size Bottleneck" chart, increasing the data size consistently lowers the test loss, indicating improved model performance. The rate of decrease slows down as the data size increases, suggesting a diminishing return.

* In the "Overfitting" chart, larger data sizes (smaller values on the x-axis) result in lower values of $L/L(D=\infty) - 1$, indicating less overfitting. The curves rise steeply for smaller data sizes, showing a higher susceptibility to overfitting.

* The curves for the largest data sizes (yellow and gold) stay lowest in the "Overfitting" chart, indicating that they are the least prone to overfitting; in the "Data Size Bottleneck" chart they keep decreasing as parameters increase instead of leveling off.

### Interpretation

These charts demonstrate the crucial role of data size in training language models. The "Data Size Bottleneck" chart shows that increasing data size reduces test loss, with diminishing returns. The "Overfitting" chart shows that larger datasets mitigate overfitting, as evidenced by the lower values of $L/L(D=\infty) - 1$.

The two charts are connected: with a small dataset, overfitting causes the bottleneck (little or no improvement from additional parameters). As the data size increases, the model can use more parameters without overfitting, which leads to better performance.

The gold line (22.0B, the full dataset) performs best in both charts, as expected for the largest dataset, while the purple line (21M) performs worst, highlighting the challenges of training models with limited data. The x-axes use logarithmic scales because the plotted quantities span several orders of magnitude.

</details>

Since we stop training early when the test loss ceases to improve and optimize all models in the same way, we expect that larger models should always perform better than smaller models. But with fixed finite $D$, we also do not expect any model to be capable of approaching the best possible loss (i.e., the entropy of text). Similarly, a model with fixed size will be capacity-limited. These considerations motivate our second principle. Note that knowledge of $L(N)$ at infinite $D$ and $L(D)$ at infinite $N$ fully determines all the parameters in $L(N,D)$.
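As a quick check of the last claim, assume Equation (1.5) takes the form $L(N,D) = \left[(N_c/N)^{\alpha_N/\alpha_D} + D_c/D\right]^{\alpha_D}$. Taking each limit separately isolates a single-variable power law:

```latex
\lim_{D\to\infty} L(N,D)
  = \left[\left(\frac{N_c}{N}\right)^{\alpha_N/\alpha_D}\right]^{\alpha_D}
  = \left(\frac{N_c}{N}\right)^{\alpha_N},
\qquad
\lim_{N\to\infty} L(N,D) = \left(\frac{D_c}{D}\right)^{\alpha_D}.
```

The first limit fixes $N_c$ and $\alpha_N$, and the second fixes $D_c$ and $\alpha_D$, so the two single-variable fits together pin down all four parameters.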

The third principle is more speculative. There is a simple and general reason one might expect overfitting to scale $\propto 1/D$ at very large $D$. Overfitting should be related to the variance or the signal-to-noise ratio of the dataset [AS17], and this scales as $1/D$. This expectation should hold for any smooth loss function, since we expect to be able to expand the loss about the $D \to \infty$ limit. However, this argument assumes that $1/D$ corrections dominate over other sources of variance, such as the finite batch size and other limits on the efficacy of optimization. Without empirical confirmation, we would not be very confident of its applicability.

Our third principle explains the asymmetry between the roles of $N$ and $D$ in Equation (1.5). Very similar symmetric expressions<sup>4</sup> are possible, but they would not have a $1/D$ expansion with integer powers, and would require the introduction of an additional parameter.

In any case, we will see that our equation for $L(N,D)$ fits the data well, which is the most important justification for our $L(N,D)$ ansatz.
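To see the asymmetry concretely, again assume Equation (1.5) has the form $L(N,D) = \left[(N_c/N)^{\alpha_N/\alpha_D} + D_c/D\right]^{\alpha_D}$. Factoring out the $D \to \infty$ limit and expanding for large $D$ gives a leading correction proportional to $1/D$, whereas the symmetric alternative in footnote 4 gives a leading correction proportional to $D^{-\alpha_D}$:

```latex
\begin{aligned}
L(N,D) &= \left(\frac{N_c}{N}\right)^{\alpha_N}
          \left[1 + \left(\frac{N}{N_c}\right)^{\alpha_N/\alpha_D}\frac{D_c}{D}\right]^{\alpha_D} \\
       &= L(N,\infty)\left[1 + \alpha_D\left(\frac{N}{N_c}\right)^{\alpha_N/\alpha_D}\frac{D_c}{D}
          + O\!\left(D^{-2}\right)\right], \\
\left[\left(\frac{N_c}{N}\right)^{\alpha_N} + \left(\frac{D_c}{D}\right)^{\alpha_D}\right]^{\beta}
       &= \left(\frac{N_c}{N}\right)^{\alpha_N\beta}
          \left[1 + \beta\left(\frac{N}{N_c}\right)^{\alpha_N}\left(\frac{D_c}{D}\right)^{\alpha_D} + \dots\right].
\end{aligned}
```

In the first expansion the correction depends on $N$ and $D$ only through $N^{\alpha_N/\alpha_D}/D$, which is the ratio used on the horizontal axis of Figure 9 (right).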

## 4.2 Results

We regularize all our models with 10% dropout and with early stopping: we track the test loss and stop once it is no longer decreasing. The results are displayed in Figure 9, including a fit to the four parameters $\alpha_N$, $\alpha_D$, $N_c$, $D_c$ in Equation (1.5):

Table 2 Fits to $L(N,D)$

| Parameter | $\alpha_N$ | $\alpha_D$ | $N_c$ | $D_c$ |
|-----------|------------|------------|-------|-------|
| Value | 0.076 | 0.103 | $6.4 \times 10^{13}$ | $1.8 \times 10^{13}$ |
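A minimal Python sketch of the fitted law, assuming Equation (1.5) has the form $L(N,D) = \left[(N_c/N)^{\alpha_N/\alpha_D} + D_c/D\right]^{\alpha_D}$ and plugging in the Table 2 values. It also evaluates the overfitting measure of Figure 9 (right), which we assume is the fractional excess loss $L(N,D)/L(N,\infty) - 1$, and inverts it in closed form to estimate how many tokens keep that excess at a chosen target. The function names are illustrative.

```python
# Fitted constants from Table 2.
ALPHA_N = 0.076
ALPHA_D = 0.103
N_C = 6.4e13  # non-embedding parameters
D_C = 1.8e13  # tokens


def loss_nd(n_params: float, n_tokens: float) -> float:
    """Assumed form: L(N, D) = [(N_c/N)^(alpha_N/alpha_D) + D_c/D]^alpha_D."""
    return ((N_C / n_params) ** (ALPHA_N / ALPHA_D) + D_C / n_tokens) ** ALPHA_D


def loss_n_inf(n_params: float) -> float:
    """Infinite-data limit L(N, inf) = (N_c / N) ** alpha_N."""
    return (N_C / n_params) ** ALPHA_N


def overfit_fraction(n_params: float, n_tokens: float) -> float:
    """Assumed overfitting measure: L(N, D) / L(N, inf) - 1."""
    return loss_nd(n_params, n_tokens) / loss_n_inf(n_params) - 1.0


def tokens_for_overfit(n_params: float, target: float) -> float:
    """Dataset size D at which overfit_fraction(N, D) equals `target`.

    Solves (1 + (N/N_c)^(alpha_N/alpha_D) * D_c / D)^alpha_D - 1 = target for D.
    """
    ratio = (n_params / N_C) ** (ALPHA_N / ALPHA_D)
    return D_C * ratio / ((1.0 + target) ** (1.0 / ALPHA_D) - 1.0)


if __name__ == "__main__":
    for n in (1e8, 1e9, 1e10):
        d = tokens_for_overfit(n, 0.02)
        print(f"N={n:.0e}: D for 2% excess loss ~ {d:.2e} tokens, L(N, D)={loss_nd(n, d):.3f}")
```

Because the excess depends on $N$ and $D$ only through $N^{\alpha_N/\alpha_D}/D$, `tokens_for_overfit` grows as $N^{\alpha_N/\alpha_D} \approx N^{0.74}$ at a fixed target.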

We obtain an excellent fit, with the exception of the runs where the dataset has been reduced by a factor of 1024, to about $2 \times 10^7$ tokens. With such a small dataset, an epoch consists of only 40 parameter updates. Perhaps such a tiny dataset represents a different regime for language modeling, as overfitting happens very early in training (see Figure 16). Also note that the parameters differ very slightly from those obtained in Section 3, as here we are fitting the full $L(N,D)$ rather than just $L(N,\infty)$ or $L(\infty,D)$.
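The quoted sizes can be checked with a few lines of arithmetic. This sketch assumes a batch of 512 sequences of 1024 tokens per update; that batch size is not stated in this section, so it is labeled as an assumption in the code.

```python
FULL_WEBTEXT2_TOKENS = 22e9  # full dataset size quoted in the text
REDUCTION_FACTOR = 1024      # reduction factor quoted in the text
# Assumption: 512 sequences x 1024 tokens per update (not stated in this section).
BATCH_TOKENS = 512 * 1024

reduced_tokens = FULL_WEBTEXT2_TOKENS / REDUCTION_FACTOR
updates_per_epoch = reduced_tokens / BATCH_TOKENS

print(f"reduced dataset: {reduced_tokens:.2e} tokens")
print(f"updates per epoch: {updates_per_epoch:.1f}")
```

Under that assumption the reduced dataset holds roughly $2 \times 10^7$ tokens and one epoch takes about 40 updates, consistent with the figures above.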

To chart the borderlands of the infinite data limit, we can directly study the extent of overfitting. For all but the largest models, we see no sign of overfitting when training with the full 22B-token WebText2 dataset, so we can take it as representative of $D = \infty$. Thus we can compare finite $D$ to the infinite data limit by

<sup>4</sup> For example, one might have used $L(N,D) = \left[(N_c/N)^{\alpha_N} + (D_c/D)^{\alpha_D}\right]^{\beta}$, but this does not have a $1/D$ expansion.

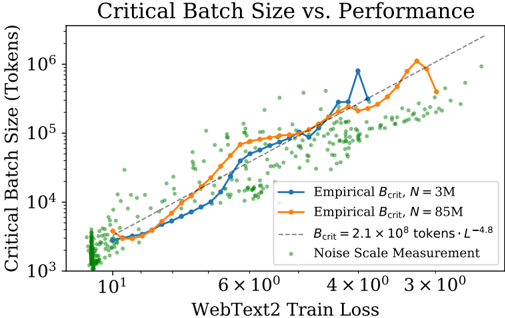

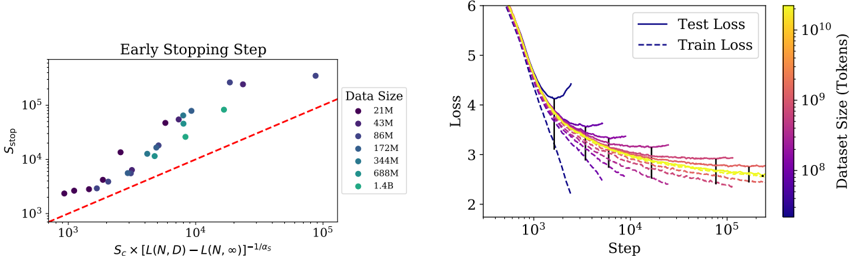

Figure 10 The critical batch size $B_{\rm crit}$ follows a power law in the loss as performance increases, and does not depend directly on the model size. We find that the critical batch size approximately doubles for every 13% decrease in loss. $B_{\rm crit}$ is measured empirically from the data shown in Figure 18, but it is also roughly predicted by the gradient noise scale, as in [MKAT18].
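The "doubles for every 13% decrease" rule follows directly from the power-law fit given in the figure legend, $B_{\rm crit} \approx 2.1 \times 10^{8}\ \text{tokens} \cdot L^{-4.8}$. A short sketch, with constants copied from that legend and an illustrative function name:

```python
B_STAR_TOKENS = 2.1e8  # prefactor of the fit in the Figure 10 legend
LOSS_EXPONENT = 4.8    # B_crit is proportional to L^(-4.8), from the same legend


def critical_batch_tokens(loss: float) -> float:
    """Critical batch size in tokens predicted by the power-law fit at a given train loss."""
    return B_STAR_TOKENS * loss ** (-LOSS_EXPONENT)


# Growth of B_crit after a 13% drop in loss (the ratio does not depend on the starting loss).
growth_after_13pct = critical_batch_tokens(0.87 * 3.0) / critical_batch_tokens(3.0)

# Fractional loss decrease that exactly doubles B_crit: solve (1 - x)^(-4.8) = 2 for x.
loss_drop_for_doubling = 1.0 - 2.0 ** (-1.0 / LOSS_EXPONENT)

print(f"B_crit grows by {growth_after_13pct:.2f}x after a 13% loss decrease")
print(f"B_crit doubles after a {100 * loss_drop_for_doubling:.1f}% loss decrease")
```

Both numbers land close to 2x and 13%, matching the caption; because the fit is a pure power law, the ratio is the same at every loss.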

<details>

<summary>Image 10 Details</summary>

### Visual Description

## Chart: Critical Batch Size vs. Performance

### Overview

The image presents a chart illustrating the relationship between Critical Batch Size (in tokens) and WebText2 Train Loss. The chart displays two empirical curves for different model sizes (N = 3M and N = 85M parameters), a power-law fit, and a scatter plot of noise scale measurements. The chart shows how the critical batch size scales with training loss for both model sizes.

### Components/Axes

* **Title:** "Critical Batch Size vs. Performance" (Top-center)

* **X-axis:** "WebText2 Train Loss" (Bottom-center). Scale is logarithmic, with markers at 10<sup>1</sup>, 6 x 10<sup>0</sup>, 4 x 10<sup>0</sup>, 3 x 10<sup>0</sup>.

* **Y-axis:** "Critical Batch Size (Tokens)" (Left-center). Scale is logarithmic, with markers at 10<sup>3</sup>, 10<sup>4</sup>, 10<sup>5</sup>, 10<sup>6</sup>.

* **Legend:** Located in the top-right corner.

* "Empirical B<sub>crit</sub>, N = 3M" (Solid blue line)

* "Empirical B<sub>crit</sub>, N = 85M" (Solid orange line)

* "B<sub>crit</sub> = 2.1 x 10<sup>8</sup> tokens · L<sup>-4.8</sup>" (Gray dashed line)

* "Noise Scale Measurement" (Green dotted points)

### Detailed Analysis

The chart displays the following data:

* **Empirical B<sub>crit</sub>, N = 3M (Blue Line):** This line shows an upward trend, initially steep, then leveling off.

* At WebText2 Train Loss ≈ 10<sup>1</sup>, Critical Batch Size ≈ 2 x 10<sup>3</sup> tokens.

* At WebText2 Train Loss ≈ 6 x 10<sup>0</sup>, Critical Batch Size ≈ 1 x 10<sup>4</sup> tokens.

* At WebText2 Train Loss ≈ 4 x 10<sup>0</sup>, Critical Batch Size ≈ 3 x 10<sup>4</sup> tokens.

* At WebText2 Train Loss ≈ 3 x 10<sup>0</sup>, Critical Batch Size ≈ 5 x 10<sup>4</sup> tokens.

* There is a peak around WebText2 Train Loss ≈ 2 x 10<sup>0</sup>, with Critical Batch Size ≈ 8 x 10<sup>4</sup> tokens.

* **Empirical B<sub>crit</sub>, N = 85M (Orange Line):** This line also shows an upward trend, but it is generally higher than the blue line.

* At WebText2 Train Loss ≈ 10<sup>1</sup>, Critical Batch Size ≈ 5 x 10<sup>3</sup> tokens.

* At WebText2 Train Loss ≈ 6 x 10<sup>0</sup>, Critical Batch Size ≈ 2 x 10<sup>4</sup> tokens.