## Memristors - from In-memory computing, Deep Learning Acceleration, Spiking Neural Networks, to the Future of Neuromorphic and Bio-inspired Computing

Adnan Mehonic*, Abu Sebastian, Bipin Rajendran, Osvaldo Simeone, Eleni Vasilaki, Anthony J. Kenyon

Dr. Adnan Mehonic, Prof Anthony J. Kenyon

Department of Electronic & Electrical Engineering, UCL, Torrington Place, London WC1E 7JE, United Kingdom

E-mail: adnan.mehonic.09@ucl.ac.uk

Dr. Abu Sebastian

IBM Research - Zurich, 8803 Rüschlikon, Switzerland

Dr. Bipin Rajendran, Prof Osvaldo Simeone

Centre for Telecommunications Research, Department of Engineering, King's College London, WC2R 2LS, United Kingdom

Prof. Eleni Vasilaki

Department of Computer Science, University of Sheffield, Sheffield, South Yorkshire, United Kingdom

Keywords: memristor, neuromorphic, AI, deep learning, spiking neural networks, in-memory computing

## Abstract

Machine learning, particularly in the form of deep learning, has driven most of the recent fundamental developments in artificial intelligence. Deep learning is based on computational models that are, to a certain extent, bio-inspired, as they rely on networks of connected simple computing units operating in parallel. Deep learning has been successfully applied in areas such as object/pattern recognition, speech and natural language processing, self-driving vehicles, intelligent self-diagnostic tools, autonomous robots, knowledgeable personal assistants, and monitoring. These successes have been supported mainly by three factors: the availability of vast amounts of data, continuous growth in computing power, and algorithmic innovations. The approaching demise of Moore's law, and the consequent expectation of only modest improvements in computing power from scaling, raise the question of whether this progress will be slowed or halted by hardware limitations. This paper reviews the case for a novel beyond-CMOS hardware technology, memristors, as a potential solution for implementing power-efficient in-memory computing, deep learning accelerators, and spiking neural networks. Central themes are the reliance on non-von Neumann computing architectures and the need to develop tailored learning and inference algorithms. To argue that lessons from biology can provide useful directions for further progress in artificial intelligence, we briefly discuss an example based on reservoir computing. We conclude the review by speculating on the 'big picture' view of future neuromorphic and brain-inspired computing systems.

## 1. Introduction

Three factors are currently driving the main developments in artificial intelligence (AI): the availability of vast amounts of data, continuous growth in computing power, and algorithmic innovations. Graphics processing units (GPUs) have proven to be effective coprocessors for machine learning (ML) algorithms based on deep learning (DL). Solutions based on DL and GPU implementations have led to massive improvements in many AI tasks, but have also caused an exponential increase in the demand for computing power. Recent analyses show that this demand has increased by a factor of 300,000 since 2012 and estimate that it doubles every 3.4 months, a much faster rate than the improvements historically delivered by Moore's scaling (a 7-fold improvement over the same period) [1]. At the same time, Moore's law has slowed down significantly over the last few years [2], and there are strong indications that we will not be able to continue scaling down CMOS transistors. This calls for the exploration of alternative technology roadmaps for the development of scalable and efficient AI solutions.
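
As a rough consistency check on these growth figures, the arithmetic below assumes (as a simplification not stated in the analyses) that the 3.4-month doubling time held constant over the whole period, and takes a two-year doubling period as a stand-in for Moore's scaling. It recovers an elapsed time of roughly five years and a Moore's-law gain of about 6-fold, of the same order as the 7-fold figure quoted above; the small gap reflects the idealised, constant doubling times.

$$
\begin{aligned}
n &= \log_2\!\left(3\times 10^{5}\right) \approx 18.2 \ \text{doublings},\\
T &\approx n \times 3.4\ \text{months} \approx 62\ \text{months} \approx 5.2\ \text{years},\\
\text{gain from Moore's scaling over } T &\approx 2^{T/(24\ \text{months})} = 2^{62/24} \approx 2^{2.6} \approx 6 .
\end{aligned}
$$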

Transistor scaling is not the only way to improve computing performance. Architectural innovations such as GPUs, field-programmable gate arrays (FPGAs), and application-specific integrated circuits (ASICs) have all significantly advanced the ML field [3]. A common aspect of modern computing architectures for ML is a move away from the classical von Neumann architecture, which physically separates memory and computing. This separation creates a performance bottleneck: the costly movement of data between memory and processor is often the main cause of both the energy and the speed inefficiency of ML implementations on conventional hardware platforms. However, architectural developments alone are unlikely to be sufficient. In fact, standard digital CMOS components are inherently ill-suited to implementing the massive number of continuous weights/synapses in artificial neural networks (ANNs).

1.1. The promise of memristors. There is a strong case to be made for the exploration of alternative technologies. Although memristor technology is still in development, it is a strong candidate for future non-CMOS and beyond-von Neumann computing solutions [4]. Since its early development in 2008 [5], or even earlier under different names [6], memristor technology has expanded remarkably to include many different material solutions, physical mechanisms, and novel computing approaches [4]. A single progress report cannot cover all the different approaches and fast-growing developments in the field; an evaluation of the state of the art in memristor-based electronics can be found elsewhere [7]. Instead, in this paper, we present and discuss a few representative case studies showcasing the potential role of memristors in the expanding field of AI hardware. We present examples of how memristors are used for in-memory computing systems, deep learning accelerators, and spike-based computing. Finally, we discuss and speculate on the future of neuromorphic and bio-inspired computing paradigms, using reservoir computing as an example.

For the last 15 years, memristors have been a focal point for many different research communities: mathematicians, solid-state physicists, experimental materials scientists, electrical engineers and, more recently, computer scientists and computational neuroscientists. The concept of the memristor was introduced almost 50 years ago, in 1971 [8], and was then nearly forgotten for almost four decades. It is now experiencing a rebirth, with a vibrant and very active research community. There are many different flavours of memristive technologies, but in their most popular implementation memristors are simple two-terminal devices with the extraordinary property that their resistance depends on the history of the electrical stimuli applied to them. In other words, memristors are resistors with memory. They promise high levels of integration, stable non-volatile resistance states, fast resistance switching, and excellent energy efficiency, all very desirable properties for the next generation of memory technologies.

The physical implementations of memristors are diverse and arguably include many different technologies, such as redox-based resistive random-access memory (ReRAM), phase-change memory (PCM), and magnetoresistive random-access memory (MRAM). Further distinctions within these larger classes can be made depending on the physical mechanisms that govern the resistance change. Many excellent reviews cover the principles and switching mechanisms of memristive devices. Here, we briefly describe two extensively studied types of memristive devices, namely ReRAM and PCM.

Resistance switching is one of the most explored properties of memristive devices: under the application of an external electrical stimulus, a thin insulating film reversibly changes its electrical resistance between an insulating state and a conducting state. For binary memory devices, two stable states are sought, typically called the high-resistance state (HRS) and the low-resistance state (LRS). The transition from the HRS to the LRS is called the SET process, while the RESET process describes the transition from the LRS to the HRS.

In their most straightforward implementation, basic memory cells of both types have three layers: two conductive electrodes and a thin switching layer sandwiched in between. Local redox processes govern resistance switching in ReRAM devices. A broad classification can be made between switching that results from the intrinsic properties of the switching material (typically an oxide) and switching that results from the in-diffusion of metal ions (typically from one of the metallic electrodes). The former is called intrinsic switching and the latter extrinsic switching [9]. Alternatively, a classification can be made according to the main driving force for the redox process (thermal or electrical) or the type of ions that move. The three main classes are electrochemical metallization (or conductive-bridge) ReRAMs (ECM), valence change ReRAMs (VCM), and thermochemical ReRAMs (TCM) [4].

Many ReRAM devices require an electroforming step prior to resistance switching, which can be considered a soft breakdown of the insulating material: the applied electrical bias produces a conductive filament inside the insulating film. Modification of the conductive filament, driven by a local redox process, then changes the resistance. The diameter of the conductive filament is typically of the order of a few nanometers to a few tens of nanometers, and it does not depend on the size of the electrodes. Another, less common type is interface-type switching, which does not rely on the creation and modification of conductive filaments but can instead be driven by the formation of a tunnel or Schottky barrier across the whole interface between the electrode and the switching layer.

In the case of PCM, the change in resistance is due to the crystallisation and amorphisation of phase-change materials. The amplitude and duration of the applied voltage pulses control the phase transitions: the SET process changes the amorphous phase to a crystalline phase (HRS to LRS transition), and the RESET process changes the crystalline phase to an amorphous phase (LRS to HRS transition).

For many computing tasks, more than two states are required, and for most memristive devices, including ReRAM and PCM, many resistance states can be achieved. However, benchmarking memristive devices for applications beyond pure digital memory can be challenging, as it depends on many parameters besides the number of distinct resistance states. We will discuss the main device properties in the context of different applications.
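
To illustrate why the number of distinguishable resistance states matters, the sketch below maps a normalised weight in [0, 1] onto the nearest of N equally spaced conductance levels and reports the worst-case programming error as a fraction of the conductance range. The linear level spacing, the conductance window (1 µS to 100 µS) and all function names are hypothetical illustrations, not device data; the model ignores noise, drift and nonlinearity, which in practice also limit precision.

```python
def conductance_levels(g_min, g_max, n_levels):
    """Return n_levels equally spaced conductances in [g_min, g_max] (siemens)."""
    if n_levels < 2:
        raise ValueError("need at least two levels")
    step = (g_max - g_min) / (n_levels - 1)
    return [g_min + i * step for i in range(n_levels)]


def quantize(weight, levels):
    """Map a weight in [0, 1] to the nearest available conductance level."""
    g_min, g_max = levels[0], levels[-1]
    target = g_min + weight * (g_max - g_min)   # ideal, continuous conductance
    return min(levels, key=lambda g: abs(g - target))


def worst_case_error(n_levels, g_min=1e-6, g_max=1e-4, samples=1001):
    """Largest |ideal - programmed| conductance over sampled weights, as a fraction of the range."""
    levels = conductance_levels(g_min, g_max, n_levels)
    worst = 0.0
    for k in range(samples):
        w = k / (samples - 1)
        ideal = g_min + w * (g_max - g_min)
        worst = max(worst, abs(ideal - quantize(w, levels)))
    return worst / (g_max - g_min)


if __name__ == "__main__":
    for n in (2, 4, 16, 64):
        print(f"{n:3d} levels -> worst-case error {worst_case_error(n):.4f} of range")
```

With ideal nearest-level rounding the worst-case error is half a level spacing, 0.5/(N - 1) of the range, so going from binary to 16 levels reduces it from 50% to about 3%.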

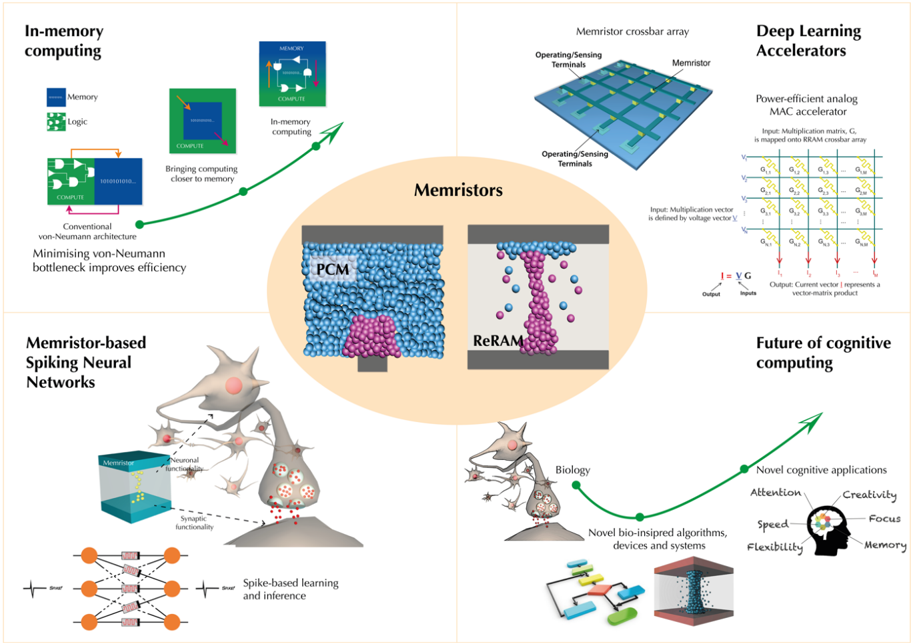

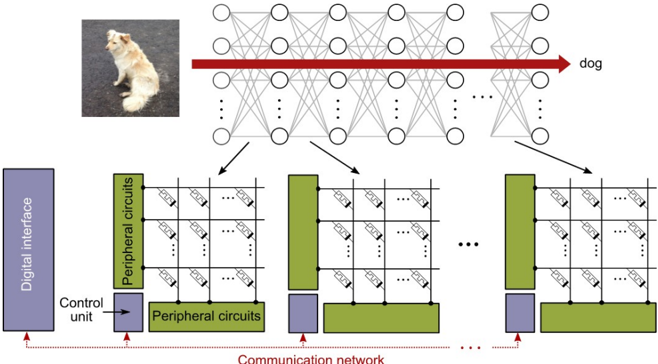

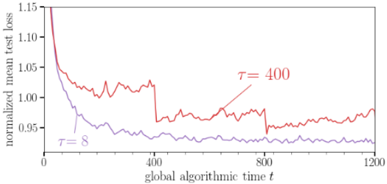

1.2. The landscape of different approaches and applications. In the context of this paper, we are concerned with the use of memristors in applications beyond simple memory devices [10]. A 'big picture' landscape of memristor-based approaches for AI is shown in Figure 1. There is more than one way in which memristors can perform computing. A unique feature of memristive devices is the ability to co-locate memory and computing and to break the von Neumann bottleneck at the lowest, nanometre-scale level. One such approach is in-memory computing, which uses memory not only to store data but also to perform computation at the same physical location. Furthermore, memristors have long been considered for deep learning acceleration. Specifically, memristive crossbar arrays physically represent the weights of artificial neural networks as conductances at each crosspoint. When voltages are applied at one side of the crossbar and currents are sensed on the orthogonal terminals, the array performs a vector-matrix multiplication in a single, constant time step: by Ohm's law each device contributes a current equal to its conductance times the applied voltage, and by Kirchhoff's current law these currents sum along each output line. Vector-matrix multiplications dominate most DL algorithms; hundreds of thousands are often needed during training and inference. When weights are implemented as memristor conductances, there is no need for the extensive, power-hungry data movement required by conventional digital systems based on the von Neumann architecture.
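
The crossbar operation described above can be sketched numerically. This is a minimal sketch assuming ideal, linear devices and ignoring wire resistance, sneak paths, read noise and converter circuitry. Ohm's law gives the current through crosspoint (i, j) as G_ij V_i, and Kirchhoff's current law sums these along column j, so the sensed currents are I_j = Σ_i V_i G_ij, i.e. the vector-matrix product I = V G. Because physical conductances are non-negative, the sketch also stores signed weights as the difference of two devices (G+ and G-), a commonly used mapping that is assumed here rather than taken from this paper. All function names and numerical values are hypothetical.

```python
def crossbar_column_currents(voltages, conductances):
    """Ideal crossbar read: rows are driven with voltages, columns are sensed as currents.

    voltages:     row voltages V_i (volts), one per row
    conductances: matrix G[i][j] of crosspoint conductances (siemens)
    Returns the column currents I_j = sum_i V_i * G[i][j] (amperes).
    """
    n_rows = len(conductances)
    n_cols = len(conductances[0])
    if len(voltages) != n_rows:
        raise ValueError("one voltage per row is required")
    currents = [0.0] * n_cols
    # In hardware every term below is produced simultaneously; the loops only simulate it.
    for i in range(n_rows):
        for j in range(n_cols):
            # Ohm's law at crosspoint (i, j): I = G * V.
            # Kirchhoff's current law: currents add up on column wire j.
            currents[j] += voltages[i] * conductances[i][j]
    return currents


def signed_weights_to_pair(weights, g_max):
    """Map signed weights in [-1, 1] onto two non-negative conductance arrays.

    Each weight w is stored as G+ - G-, with G+ = max(w, 0) * g_max
    and G- = max(-w, 0) * g_max.
    """
    g_pos = [[max(w, 0.0) * g_max for w in row] for row in weights]
    g_neg = [[max(-w, 0.0) * g_max for w in row] for row in weights]
    return g_pos, g_neg


if __name__ == "__main__":
    weights = [[0.5, -1.0], [-0.25, 0.75]]   # hypothetical 2x2 weight matrix
    g_pos, g_neg = signed_weights_to_pair(weights, g_max=1e-4)
    v_in = [0.2, 0.1]                         # hypothetical read voltages (V)
    i_pos = crossbar_column_currents(v_in, g_pos)
    i_neg = crossbar_column_currents(v_in, g_neg)
    # Subtracting the paired column currents recovers the signed product, scaled by g_max.
    print([p - n for p, n in zip(i_pos, i_neg)])
```

For the example values the differential currents come out at approximately 7.5 µA and -12.5 µA, which equals g_max times the signed product v_in · weights.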

Other, more bio-realistic concepts are also being explored, including schemes that rely on spike-based communication. The central premise of this approach can be summarised with the motto 'computing with time, not in time'. It has been shown that memristors can directly implement some functions of biological neurons and synapses, most importantly synapse-like plasticity and neuron-like integration and spiking. In these solutions, information is encoded and transferred in the form of voltage or current spikes, and memristor resistances are used as proxies for synaptic strengths. More importantly, the resistances are adjusted according to local learning rules. One popular local learning rule is spike-timing-dependent plasticity (STDP), which dynamically adjusts a local state variable, such as conductance, based on the relative timing of spikes. In a simple example, the conductance of a memristive 'synapse' can be increased or decreased depending on the degree of overlap between pre- and post-synaptic voltage pulses. There are also implementations that do not require overlapping pulses and instead exploit the volatile internal dynamics of memristive devices. Spike-based computing promises further improvements in power efficiency, taking inspiration from the remarkable efficiency of the human brain.
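
As a behavioural illustration of STDP, the sketch below uses the widely used pair-based exponential window: a presynaptic spike shortly before a postsynaptic spike potentiates the synapse, the reverse order depresses it, and the effect decays with the time difference. This is an abstract rule applied directly to a conductance, not a model of the pulse-overlap or volatile-dynamics mechanisms described above; the amplitudes, time constants, conductance window and function names are hypothetical.

```python
import math


def stdp_delta(delta_t, a_plus=0.01, a_minus=0.012, tau_plus=20e-3, tau_minus=20e-3):
    """Pair-based exponential STDP window (hypothetical parameter values).

    delta_t = t_post - t_pre in seconds. Pre-before-post (delta_t > 0) potentiates,
    post-before-pre (delta_t < 0) depresses, and the effect decays with |delta_t|.
    Returns the change as a fraction of the device's conductance range.
    """
    if delta_t > 0:
        return a_plus * math.exp(-delta_t / tau_plus)
    if delta_t < 0:
        return -a_minus * math.exp(delta_t / tau_minus)
    return 0.0


def update_conductance(g, t_pre, t_post, g_min=1e-6, g_max=1e-4):
    """Apply one STDP update to a memristive 'synapse' and clip to [g_min, g_max]."""
    g_new = g + stdp_delta(t_post - t_pre) * (g_max - g_min)
    return min(max(g_new, g_min), g_max)


if __name__ == "__main__":
    g = 5e-5  # hypothetical starting conductance (S)
    for dt in (0.005, 0.020, -0.005, -0.020):
        g_after = update_conductance(g, 0.0, dt)
        print(f"t_post - t_pre = {dt * 1e3:+.0f} ms -> G = {g_after:.3e} S")
```

Clipping to a finite conductance window reflects the fact that a physical device has bounded minimum and maximum conductances, so repeated potentiation saturates rather than growing without limit.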

Finally, we speculate that new knowledge and computational models from the field of computational neuroscience could play a crucial role in future developments in AI. Virtually all recent developments in ML and DL have been driven by the field of computer science, while the algorithmic inspiration from neuroscience is mostly based on old models established as early as the 1950s. Although we are still in the infancy of understanding the full working principles of the biological brain, novel brain-inspired architectural principles, beyond simple probabilistic deep learning approaches, could lead to higher-level cognitive functionalities. One such example is reservoir computing, which we discuss briefly in this paper. It is unlikely that current digital CMOS transistor technology can be optimized to implement much more dynamic and adaptive systems efficiently. In contrast, memristor-based systems, with their rich switching dynamics and many state variables, may provide an ideal substrate on which to build a new class of intelligent and efficient neuromorphic systems.

Figure 1. The landscape of memristor-based systems for artificial intelligence. In-memory computing aims to eliminate the von Neumann bottleneck by implementing computation directly within the memory. Deep learning accelerators based on memristive crossbars implement vector-matrix multiplication directly using Ohm's and Kirchhoff's laws. Spiking neural networks, a type of artificial neural network, are biologically more plausible; rather than operating with continuous signals, they use spikes to process and transfer data. Memristive systems could provide a hardware platform for spike-based learning and inference. More complex (neuromorphic) functionalities, beyond the simple digital switching paradigm of CMOS, implemented directly in memristive hardware primitives might fuel the next wave of higher cognitive systems.

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Diagram: Memristor-Based Computing Architectures and Applications

### Overview

The image presents a conceptual framework for memristor-based computing technologies, divided into four quadrants surrounding a central oval labeled "Memristors." Each quadrant explores a distinct application or architectural approach, emphasizing efficiency improvements, neural network implementations, and future cognitive computing paradigms.

---

### Components/Axes

1. **Central Oval (Memristors)**

- Contains two material diagrams:

- **PCM (Phase-Change Memory)**: Blue spheres with a pink core.

- **ReRAM (Resistive RAM)**: Pink spheres with a blue core.

- Text: "Memristors" (bold black font).

2. **Quadrant Labels**

- **Top-Left**: "In-memory computing"

- **Top-Right**: "Memristor crossbar array" and "Deep Learning Accelerators"

- **Bottom-Left**: "Memristor-based Spiking Neural Networks"

- **Bottom-Right**: "Future of cognitive computing"

3. **Diagram Elements**

- **Top-Left**:

- Conventional von-Neumann architecture (green box with "COMPUTE" and "MEMORY" labels).

- Arrows indicate data flow: "Bringing computing closer to memory."

- Text: "Minimising von-Neumann bottleneck improves efficiency."

- **Top-Right**:

- Memristor crossbar array (grid of green lines with yellow nodes labeled "Memristor").

- "Operating/Sensing Terminals" labeled on grid edges.

- Equations for analog MAC accelerator:

- Input: Multiplication matrix **G** mapped to RRAM crossbar.

- Output: Current vector **I** = **Y·G** (vector-matrix product).

- **Bottom-Left**:

- Neuron-like structure with dendritic spines (pink/red dots) and synaptic terminals.

- Text: "Spike-based learning and inference."

- **Bottom-Right**:

- Brain silhouette with labeled cognitive traits: "Attention," "Creativity," "Speed," "Focus," "Flexibility," "Memory."

- Green arrow pointing to "Novel bio-inspired algorithms, devices and systems."

---

### Detailed Analysis

1. **Top-Left (In-memory computing)**

- Conventional architecture shows separation between memory (blue) and logic (green).

- Memristor integration reduces data movement, improving efficiency.

2. **Top-Right (Memristor crossbar array)**

- Grid structure represents crossbar arrays with memristors at intersections.

- Analog MAC accelerator equations suggest parallel computation capabilities.

3. **Bottom-Left (Spiking Neural Networks)**

- Neuron model includes dendritic spines (pink/red) and synaptic terminals.

- Memristor array acts as synaptic weights for spike-based learning.

4. **Bottom-Right (Future cognitive computing)**

- Brain silhouette emphasizes human-like cognitive traits.

- Green arrow links memristors to bio-inspired systems.

---

### Key Observations

- **Color Coding**:

- Blue (PCM), Pink (ReRAM), Green (crossbar arrays), Orange (neurons).

- **Efficiency Focus**: All quadrants emphasize reducing energy consumption (e.g., "power-efficient analog MAC accelerator").

- **Biological Inspiration**: Spiking neural networks and brain-like cognitive traits suggest neuromorphic computing goals.

---

### Interpretation

The diagram illustrates memristors as a foundational technology for next-generation computing systems. By integrating memory and logic (top-left), enabling analog crossbar arrays (top-right), and mimicking biological neural processes (bottom-left), memristors address von-Neumann bottlenecks and enable energy-efficient AI. The bottom-right quadrant ties these advancements to broader cognitive applications, suggesting memristors could underpin systems with human-like adaptability and creativity. The emphasis on "novel bio-inspired algorithms" implies a shift toward neuromorphic computing paradigms that prioritize parallelism and low-power operation.

</details>

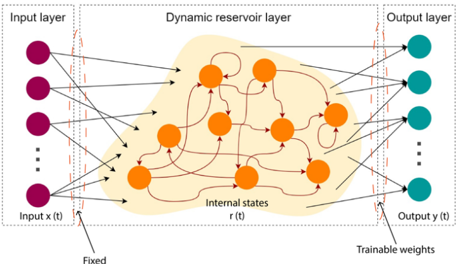

## 2. In-memory computing

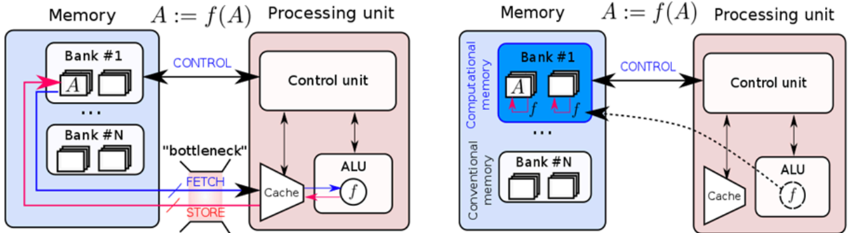

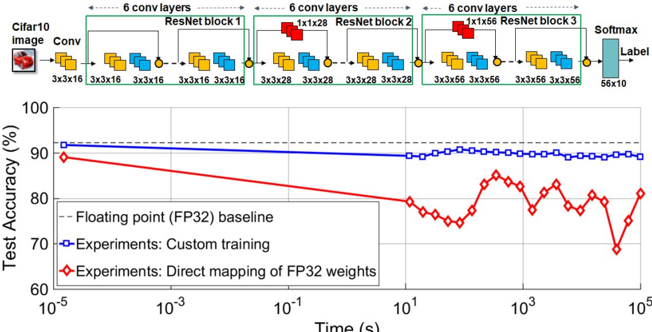

In the von Neumann architecture, which dates back to the 1940s, memory and processing units are physically separated, and large amounts of data need to be shuttled back and forth between them during the execution of various computational tasks. The latency and energy associated with accessing data from the memory units are key performance bottlenecks for a range of applications, in particular for increasingly prominent AI workloads [11]. The energy cost of moving data is a key challenge both for severely energy-constrained mobile and edge computing and for high-performance computing in the cloud, where cooling is a constraint. Current approaches, such as using hundreds of processors in parallel [12] or application-specific processors [13], are unlikely to fully overcome the challenge of data movement. It is becoming increasingly clear that novel architectures need to be explored in which memory and processing are better co-located. In-memory computing is one such non-von Neumann approach, in which certain computational tasks are performed in place in the memory itself, organized as a computational memory unit [14, 15, 16, 17]. As schematically illustrated in Figure 2, in-memory computing obviates the need to move data into a processing unit. Computing is performed by exploiting the physical attributes of the memory devices, their array-level organization, the peripheral circuitry, and the control logic. In this paradigm, the memory is an active participant in the computational task. Besides reducing the latency and energy cost associated with data movement, in-memory computing also has the potential to reduce the computational time complexity of certain tasks, owing to the massive parallelism afforded by a dense array of millions of nanoscale memory devices serving as compute units. By introducing physical coupling between the memory devices, there is also potential for a further reduction in computational time complexity [18, 19]. Memristive devices such as PCM, ReRAM and MRAM [20, 21] are particularly well suited for in-memory computing.

Figure 2 panels: (left) processing unit and conventional memory; (right) processing unit and computational memory.

Figure 2. In-memory computing. In a conventional computing system, when an operation f is performed on data D, D has to be moved into a processing unit. This incurs significant latency and energy cost and creates the well-known von Neumann bottleneck. With in-memory computing, f(D) is performed within a computational memory unit by exploiting the physical attributes of the memory devices. This obviates the need to move D to the processing unit. (Adapted and reproduced with permission [14]. Copyright 2017, Nature Research)

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Diagram: Memory and Processing Unit Architectures Comparison

### Overview

The image presents two side-by-side diagrams comparing memory and processing unit architectures. The left diagram illustrates a conventional architecture with a clear separation between memory banks and processing units, while the right diagram shows a computational memory architecture with integrated processing capabilities. Both diagrams include control units, ALUs (Arithmetic Logic Units), and memory banks, but differ in their data flow and computational capabilities.

### Components/Axes

**Left Diagram (Conventional Architecture):**

- **Memory Section**:

- Contains multiple "Bank #1" to "Bank #N" blocks, each with two square elements.

- Labels: "Memory", "Bank #1", "Bank #N".

- **Processing Unit Section**:

- Contains "Control unit" and "ALU" blocks.

- Labels: "Processing unit", "Control unit", "ALU".

- **Data Flow**:

- Arrows labeled "CONTROL" (blue) connect memory banks to the control unit.

- Arrows labeled "FETCH" (blue) and "STORE" (red) connect the cache to the ALU.

- A "bottleneck" label (red) highlights the fetch/store pathway.

- **Function Notation**: "A := f(A)" appears above the processing unit.

**Right Diagram (Computational Memory Architecture):**

- **Memory Section**:

- Contains "Computational memory" with "Bank #1" to "Bank #N" blocks.

- Labels: "Computational memory", "Bank #1", "Bank #N".

- **Processing Unit Section**:

- Contains "Control unit" and "ALU" blocks.

- Labels: "Processing unit", "Control unit", "ALU".

- **Data Flow**:

- Arrows labeled "CONTROL" (blue) connect computational memory to the control unit.

- Dashed arrows labeled "f" (function application) connect memory banks directly to the ALU.

- No explicit "bottleneck" label.

- **Function Notation**: "A := f(A)" appears above the processing unit.

**Shared Elements**:

- Both diagrams use color-coded arrows:

- Blue: "CONTROL" signals.

- Red: "STORE" operations (left diagram only).

- Pink: "bottleneck" highlight (left diagram only).

- Both include "Cache" blocks connected to the ALU.

### Detailed Analysis

**Left Diagram**:

- Memory banks are isolated from processing units.

- Data must pass through the control unit before reaching the ALU.

- Fetch/store operations create a bottleneck, indicated by the red label and thicker arrow.

- Function application (f(A)) occurs after data retrieval from memory.

**Right Diagram**:

- Memory banks are labeled "Computational memory," implying integrated computation.

- Dashed arrows labeled "f" suggest in-memory computation (A := f(A)).

- No explicit bottleneck, as computation occurs closer to memory.

- Direct data flow from memory to ALU via function application.

### Key Observations

1. **Bottleneck Elimination**: The right diagram removes the fetch/store bottleneck present in the left diagram.

2. **In-Memory Computation**: The right diagram introduces function application (f) directly in memory banks, enabling computation without data movement.

3. **Architectural Integration**: Computational memory in the right diagram blurs the line between storage and processing.

4. **Control Unit Role**: Both architectures retain a central control unit, but its role differs:

- Left: Manages data flow between isolated components.

- Right: Coordinates integrated memory-processing operations.

### Interpretation

The diagrams contrast traditional von Neumann architectures (left) with emerging computational memory designs (right). The left diagram's bottleneck highlights the performance limitations of separating memory and processing. The right diagram's integration of computation into memory banks suggests:

- Reduced latency through in-memory operations.

- Lower energy consumption by minimizing data movement.

- Potential for parallel computation across memory banks.

This architectural shift aligns with trends in neuromorphic and in-memory computing, where processing units are embedded within memory hierarchies to address the "memory wall" problem. The function notation (f(A)) implies support for complex operations directly in memory, which could enable applications like AI/ML workloads with reduced data transfer overhead.

</details>

Figure 3. The key physical attributes of memristive devices that facilitate in-memory computing. a) Binary storage capability, whereby the devices can be switched between high and low resistance values in a repeatable manner (Adapted and reproduced with permission [22]. Copyright 2019, IOP Publishing). b) Multi-level storage capability, whereby the devices can be programmed to a continuum of resistance values by the application of appropriate programming pulses (Adapted and reproduced with permission [23]. Copyright 2018, American Institute of Physics). c) Accumulative behavior, whereby the resistance of a device can be progressively decreased by the successive application of identical programming pulses (Adapted and reproduced with permission [23]. Copyright 2018, American Institute of Physics).

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Line Graphs: Resistance vs. Cycles, Programming Current, and Pulses

### Overview

The image contains three line graphs (a, b, c) depicting resistance (Ω) as a function of different operational parameters: (a) number of cycles, (b) programming current (μA), and (c) number of pulses. Each graph includes insets illustrating a device structure with layered components (electrode, active layer, electrode) and red dots representing localized features. The graphs use logarithmic scales for resistance and linear scales for operational parameters.

---

### Components/Axes

#### Graph a: Resistance vs. Number of Cycles

- **X-axis**: Number of cycles (logarithmic scale: 10⁰ to 10¹⁰)

- **Y-axis**: Resistance (Ω) (logarithmic scale: 10⁴ to 10⁷)

- **Legend**:

- Black squares: "SET"

- Red dots: "RESET"

- **Insets**: Two device diagrams showing layered structures with red dots in the active layer.

#### Graph b: Resistance vs. Programming Current

- **X-axis**: Programming current (μA) (linear scale: 100 to 800)

- **Y-axis**: Resistance (Ω) (logarithmic scale: 10⁴ to 10⁷)

- **Legend**:

- Purple circles: "SET"

- Green squares: "RESET"

- Blue triangles: "RESET+SET"

- Red diamonds: "RESET+SET+RESET"

- **Insets**: Three device diagrams with red dots in the active layer.

#### Graph c: Resistance vs. Number of Pulses

- **X-axis**: Number of pulses (linear scale: 0 to 30)

- **Y-axis**: Resistance (Ω) (logarithmic scale: 10⁴ to 10⁷)

- **Legend**:

- Purple circles: "SET"

- Green squares: "RESET"

- Blue triangles: "RESET+SET"

- Red diamonds: "RESET+SET+RESET"

- **Insets**: Three device diagrams with red dots in the active layer.

---

### Detailed Analysis

#### Graph a: Resistance vs. Number of Cycles

- **SET (black squares)**: Resistance decreases from ~10⁶ Ω to ~10⁴ Ω over 10⁸ cycles. The trend is monotonic and gradual.

- **RESET (red dots)**: Resistance remains constant at ~10⁷ Ω across all cycles. No variation observed.

- **Key Data Points**:

- At 10² cycles: SET ≈ 10⁶ Ω, RESET ≈ 10⁷ Ω.

- At 10⁸ cycles: SET ≈ 10⁴ Ω, RESET ≈ 10⁷ Ω.

#### Graph b: Resistance vs. Programming Current

- **SET (purple circles)**: Resistance increases from ~10⁵ Ω to ~10⁶ Ω as current rises from 100 to 800 μA.

- **RESET (green squares)**: Resistance remains flat at ~10⁵ Ω regardless of current.

- **RESET+SET (blue triangles)**: Resistance increases from ~10⁵ Ω to ~10⁶ Ω, similar to SET but with a steeper slope.

- **RESET+SET+RESET (red diamonds)**: Resistance increases from ~10⁵ Ω to ~10⁶ Ω, with a plateau at higher currents.

- **Key Data Points**:

- At 100 μA: SET ≈ 10⁵ Ω, RESET ≈ 10⁵ Ω, RESET+SET ≈ 10⁵ Ω, RESET+SET+RESET ≈ 10⁵ Ω.

- At 800 μA: SET ≈ 10⁶ Ω, RESET ≈ 10⁵ Ω, RESET+SET ≈ 10⁶ Ω, RESET+SET+RESET ≈ 10⁶ Ω.

#### Graph c: Resistance vs. Number of Pulses

- **SET (purple circles)**: Resistance decreases from ~10⁶ Ω to ~10⁵ Ω over 30 pulses.

- **RESET (green squares)**: Resistance remains flat at ~10⁵ Ω.

- **RESET+SET (blue triangles)**: Resistance decreases from ~10⁶ Ω to ~10⁵ Ω, with a slower rate than SET.

- **RESET+SET+RESET (red diamonds)**: Resistance decreases from ~10⁶ Ω to ~10⁵ Ω, with a gradual decline.

- **Key Data Points**:

- At 0 pulses: SET ≈ 10⁶ Ω, RESET ≈ 10⁵ Ω, RESET+SET ≈ 10⁶ Ω, RESET+SET+RESET ≈ 10⁶ Ω.

- At 30 pulses: SET ≈ 10⁵ Ω, RESET ≈ 10⁵ Ω, RESET+SET ≈ 10⁵ Ω, RESET+SET+RESET ≈ 10⁵ Ω.

---

### Key Observations

1. **SET Operation**: Resistance decreases with cycles (graph a) and increases with programming current (graph b). In graph c, resistance decreases with pulses.

2. **RESET Operation**: Resistance remains constant across all parameters (graphs a, b, c).

3. **Combined Sequences**:

- "RESET+SET" and "RESET+SET+RESET" show intermediate resistance values between SET and RESET.

- Resistance trends for combined sequences are less pronounced than for individual operations.

4. **Device Structure**: Insets in all graphs show a layered device with red dots in the active layer, likely representing defects or active regions critical for resistance modulation.

---

### Interpretation

- **SET/RESET Dynamics**: The device exhibits non-volatile switching behavior, where resistance changes persist across cycles (graph a) and pulses (graph c). The SET operation reduces resistance, while RESET maintains it.

- **Current Dependence**: Resistance modulation is current-dependent (graph b), with higher currents inducing larger resistance changes. This suggests a threshold effect for SET/RESET operations.

- **Pulse Effects**: Repeated pulses (graph c) reduce resistance, indicating potential fatigue or stabilization of the active layer. The gradual decline suggests a memory effect or degradation mechanism.

- **Device Mechanism**: The red dots in the active layer (insets) may represent localized defects or phase-separated regions that govern resistance switching. Their distribution could influence the device's response to electrical stimuli.

Together, the data demonstrate three properties of a phase-change memory (PCM) device that enable in-memory computing: binary storage, analog storage, and accumulative behavior. All three are controlled through the amplitude and sequence of electrical programming pulses.

</details>

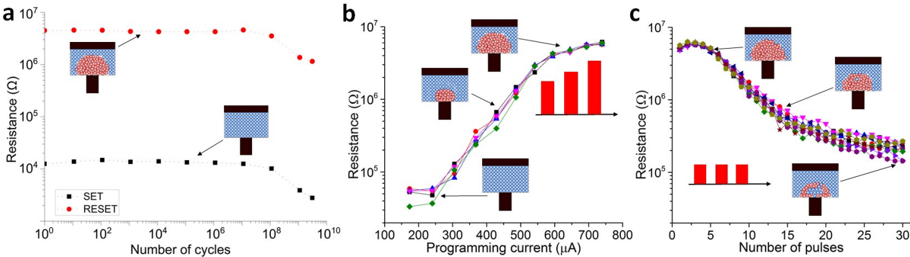

Several key physical attributes enable in-memory computing with memristive devices. First, a device can store two levels of resistance/conductance in a nonvolatile manner and switch reversibly from one level to the other (binary storage capability), and this can be exploited for computing. Figure 3a shows the resistance values achieved upon repeated switching of a representative PCM device between low-resistance SET states and high-resistance RESET states. Because the device can be held in either state, resistance can serve as an additional logic state variable.

In conventional CMOS, voltage is the only logic state variable: inputs are processed as voltage signals, and outputs are produced as voltage signals. Combining CMOS circuitry with memristive devices makes it possible to exploit resistance as an additional state variable. For example, the RESET state could denote logic '0' and the SET state logic '1'. Logical operations can then rely on the interaction between the voltage and resistance state variables, which could enable seamless integration of processing and storage. This is the essential idea behind memristive logic, an active area of research [24, 25, 26]. Memristive logic has the potential to impact application areas such as image processing [27], encryption and database query [28]. Brain-inspired hyperdimensional computing, which involves the manipulation of large binary vectors, has recently emerged as another promising application area for in-memory logic [29, 30].

Going beyond binary storage, certain memristive devices can also be programmed to a continuum of resistance or conductance values (analog storage capability). For example, Figure 3b shows a continuum of resistance levels in a PCM device, obtained by applying programming pulses of varying amplitude. The device is first programmed to the fully crystalline state. RESET pulses of progressively increasing amplitude are then applied, and the device resistance is measured after each pulse. This property makes it possible to program a memristive device to a desired resistance value through iterative programming, applying several pulses in a closed-loop manner [31].

Yet another physical attribute that enables in-memory computing is the accumulative behavior exhibited by certain memristive devices. In these devices, the resistance can be progressively reduced by successive SET pulses of the same amplitude. In certain cases, it can also be progressively increased by successive RESET pulses. Figure 3c shows an experimental measurement of this accumulative behavior in a PCM device. Accumulative behavior is central to applications such as training deep neural networks, which is described later. The intrinsic stochasticity of the switching behavior in memristive devices can also be exploited for in-memory computing [32]. Applications include stochastic computing [33] and physically unclonable functions [34].
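The closed-loop (program-and-verify) scheme can be sketched in Python. This is a toy under stated assumptions, not the procedure of [31]. The device is a hypothetical model with the following behavior:

- Each SET pulse raises the conductance by a fixed fraction of the remaining headroom.
- Each partial RESET pulse lowers the conductance by a fraction of its distance to the minimum.
- Every pulse has random pulse-to-pulse variability.

The class `ToyDevice`, the function `program_and_verify`, and all parameter values are illustrative.

```python
import numpy as np


class ToyDevice:
    """Hypothetical memristive device with an accumulative, noisy pulse response.

    Conductance is in microsiemens. Each SET pulse raises the conductance by a
    fraction of the remaining headroom; each partial RESET pulse lowers it by a
    fraction of its distance to g_min. All parameter values are illustrative.
    """

    def __init__(self, g_min=1.0, g_max=25.0, step=0.02, noise=0.3, seed=0):
        self.g_min, self.g_max = g_min, g_max
        self.step = step        # nominal fractional change per pulse (assumed)
        self.noise = noise      # relative pulse-to-pulse variability (assumed)
        self.rng = np.random.default_rng(seed)
        self.g = g_min          # start in the high-resistance (RESET) state

    def _jitter(self):
        # Random, non-negative scaling of the nominal step for one pulse.
        return max(0.0, 1.0 + self.noise * self.rng.standard_normal())

    def set_pulse(self):
        self.g += self.step * self._jitter() * (self.g_max - self.g)

    def reset_pulse(self):
        self.g -= self.step * self._jitter() * (self.g - self.g_min)

    def read(self):
        return self.g


def program_and_verify(device, g_target, tol=0.02, max_pulses=200):
    """Closed-loop programming: read, compare with the target, pulse, repeat.

    Returns the number of pulses applied before the read value fell within
    the relative tolerance (or max_pulses if it never did).
    """
    for n_pulses in range(max_pulses):
        error = (device.read() - g_target) / g_target
        if abs(error) <= tol:
            return n_pulses
        if error < 0:
            device.set_pulse()    # too resistive: increase conductance
        else:
            device.reset_pulse()  # too conductive: decrease conductance
    return max_pulses


dev = ToyDevice()
n = program_and_verify(dev, g_target=10.0)
print(f"{n} pulses, final conductance {dev.read():.2f} uS")
```

With `noise=0.0`, repeated calls to `set_pulse` show the accumulative behavior directly: the conductance rises monotonically, with ever smaller steps. The feedback loop is what absorbs the pulse-to-pulse variability, because each pulse is chosen only after the previous result has been read.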

Figure 4. a) Compressed sensing involves one matrix-vector multiplication. Data recovery is performed via an iterative scheme, using several matrix-vector multiplications on the very same measurement matrix and its transpose. b) An experimental illustration of compressed sensing recovery in the context of image compression, showing 50% compression of a 128×128-pixel image. The normalized mean square error (NMSE) associated with the reconstructed signal is plotted against the number of iterations. Adapted and reproduced with permission [35], Copyright 2018, IEEE.

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Diagram: AMP Algorithm and NMSE Performance Analysis

### Overview

The image contains three components:

1. **Diagram (a)**: Illustrates the approximate message passing (AMP) algorithm for iterative image reconstruction.

2. **Graph (b)**: Compares the normalized mean squared error (NMSE) performance of different computational methods over iterations.

3. **Images**: Side-by-side comparison of an original grayscale image and its reconstructed version.

---

### Components/Axes

#### Diagram (a)

- **Measurement Section (Blue Box)**:

- Variables: `x₁, x₂, ..., xₙ` (input signals) and `y₁, y₂, ..., yₘ` (measurements).

- Arrows: Green diagonal arrows indicate measurement mapping `y = Mx`.

- **Iterative Reconstruction Section (Red Box)**:

- Variables:

- `q₁(k), q₂(k), ..., qₘ(k)` (forward projection of the current estimate).

- `u₁(k), u₂(k), ..., uₙ(k)` (back-projected residual).

- `z₁(k), z₂(k), ..., zₘ(k)` (residuals).

- Equations:

- `q(k) = Mx̂(k)` (forward model).

- `u(k) = Mᵀz(k)` (backprojection).

- Arrows: Red arrows indicate iterative updates between variables.

#### Graph (b)

- **X-axis**: Iterations `k` (0 to 30).

- **Y-axis**: NMSE (log scale, 10⁻³ to 10⁰).

- **Legend**:

- Red solid line: PCM chip.

- Blue dashed line: 4x4-bit Fixed-point.

- Green dash-dot line: Floating-point.

#### Images

- **Original Image**: Grayscale photo of a house with a chimney.

- **Reconstructed Image**: Slightly blurred version of the original, showing reconstruction fidelity.

---

### Detailed Analysis

#### Diagram (a)

- **Measurement Mapping**:

- Input signals `x₁–xₙ` are transformed into measurements `y₁–yₘ` via matrix `M`.

- Green arrows show the forward process `y = Mx`.

- **Iterative Reconstruction**:

- Forward model: `q(k) = Mx̂(k)` (blue arrows).

- Backprojection: `u(k) = Mᵀz(k)` (red arrows).

- Each iteration uses `q(k)` to update the residual `z(k)` and `u(k)` to update the signal estimate `x̂(k)`, which is the reconstruction.

#### Graph (b)

- **NMSE Trends**:

- **PCM chip (red)**: Converges to ~0.05 NMSE by iteration 10, stabilizes.

- **4x4-bit Fixed-point (blue)**: Converges to ~0.1 NMSE.

- **Floating-point (green)**: Converges to ~0.01 NMSE, the lowest error floor.

- **Key Values**:

- At iteration 30:

- PCM: 0.05 ± 0.01.

- Fixed-point: 0.1 ± 0.02.

- Floating-point: 0.01 ± 0.005.

#### Images

- **Original vs. Reconstructed**:

- Original: Sharp edges, clear chimney details.

- Reconstructed: Slight blurring, reduced contrast in chimney and roof edges.

---

### Key Observations

1. **Algorithm Flow**:

- Measurement → Forward model → Backprojection → Iterative refinement.

2. **NMSE Performance**:

- Floating-point achieves the lowest NMSE, outperforming fixed-point and PCM.

- PCM and fixed-point show similar convergence rates but higher error floors.

3. **Image Quality**:

- Reconstructed image retains structural details but lacks fine texture.

---

### Interpretation

1. **AMP Algorithm**:

- Demonstrates a two-stage process: measurement acquisition followed by iterative reconstruction using forward/backprojection steps.

- The red/blue/green color coding distinguishes measurement (blue) from reconstruction (red) phases.

2. **Computational Trade-offs**:

- Floating-point precision yields the best NMSE but may require higher computational resources.

- Fixed-point and PCM offer lower precision but are more hardware-friendly.

3. **Image Reconstruction**:

- The reconstructed image’s NMSE (~0.01 for floating-point) aligns with the visual quality, suggesting the algorithm effectively balances accuracy and efficiency.

4. **Outliers/Anomalies**:

- No significant outliers in NMSE trends. All methods converge monotonically.

The data suggests that AMP’s iterative reconstruction improves with computational precision, with floating-point methods providing the most accurate results. The visual comparison confirms that reconstruction fidelity degrades with lower precision methods.

</details>

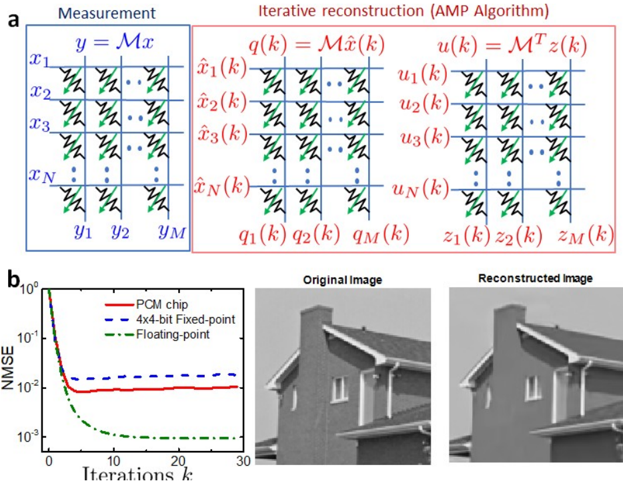

Matrix-vector multiplication (MVM) is a very useful in-memory computing primitive enabled by the binary and analog nonvolatile storage capability [36, 37]. The operation exploits two physical laws: Ohm's law and Kirchhoff's current law. For example, to perform the operation $Ax = b$, the elements of $A$ are mapped linearly to the conductance values of memristive devices organized in a crossbar configuration. The elements of $x$ are mapped linearly to the amplitudes of read voltages applied to the crossbar along the rows. The result of the computation, $b$, is proportional to the currents measured along the columns of the array.

Compressed sensing and recovery is one application that could benefit from an in-memory computing unit that performs matrix-vector multiplications. Compressed sensing aims to acquire a large signal at a sub-Nyquist sampling rate and subsequently reconstruct it accurately. Unlike most other compression schemes, it performs sampling and compression simultaneously: the signal is compressed as it is sampled. Such techniques have widespread applications in medical imaging, security systems, and camera sensors. The compressed measurements can be thought of as a mapping of a signal $x$ of length $N$ to a measurement vector $y$ of length $M < N$. If this process is linear, it can be modeled by an $M \times N$ measurement matrix $\mathbf{M}$. The idea is to store this measurement matrix in the in-memory computing unit, with memristive devices organized in a crossbar configuration (see Figure 4(a)). In this manner, the compression operation can be performed with $O(1)$ time complexity.
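The crossbar MVM can be sketched in NumPy by emulating the two physical laws explicitly. This is an idealized sketch under stated assumptions:

- Devices are ideal, with no wire resistance, read noise, or ADC quantization.
- Signed matrix entries are represented by a differential pair of non-negative conductances.
- The values of `g_max` and `v_read` are illustrative.
- The Gaussian measurement matrix `Phi` is hypothetical, and the helper names are not from the cited works.

```python
import numpy as np


def to_differential_conductances(A, g_max=25e-6):
    """Map a signed matrix onto two non-negative conductance arrays.

    The crossbar has one row per input element and one column per output
    element, so device (i, j) encodes A[j, i]. g_max (siemens) is assumed.
    """
    scale = g_max / np.max(np.abs(A))           # linear weight-to-conductance map
    G_plus = np.clip(A.T, 0.0, None) * scale    # positive entries
    G_minus = np.clip(-A.T, 0.0, None) * scale  # magnitudes of negative entries
    return G_plus, G_minus, scale


def crossbar_mvm(G_plus, G_minus, v_rows):
    """Ideal crossbar read: Ohm's law per device, Kirchhoff's current law per column."""
    I_plus = (G_plus * v_rows[:, None]).sum(axis=0)   # I_j = sum_i G_ij * V_i
    I_minus = (G_minus * v_rows[:, None]).sum(axis=0)
    return I_plus - I_minus                           # differential column current


rng = np.random.default_rng(0)
N, M = 64, 32                                  # signal length N, measurements M < N
Phi = rng.normal(size=(M, N)) / np.sqrt(M)     # hypothetical measurement matrix
x = rng.normal(size=N)
v_read = 0.2                                   # assumed volts per unit of x

G_plus, G_minus, g_scale = to_differential_conductances(Phi)
currents = crossbar_mvm(G_plus, G_minus, v_read * x)
y = currents / (g_scale * v_read)              # undo the two linear mappings
```

The Python sums run serially and only emulate the physics. In a real array, all column currents develop simultaneously during a single read, which is where the $O(1)$ time complexity comes from.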

The approximate message passing (AMP) algorithm can be used to recover the original signal from the compressed measurements. This iterative algorithm performs several matrix-vector multiplications on the very same measurement matrix and its transpose. The matrix encoded in the in-memory computing unit can therefore also be used for reconstruction, reducing the reconstruction complexity from $O(MN)$ to $O(N)$. Figure 4(b) shows an experimental illustration of compressed sensing recovery in the context of image compression. A 128×128-pixel image was compressed by 50% and recovered using the measurement matrix elements encoded in a PCM array. The normalized mean square error of the recovered signal is plotted as a function of the number of iterations. A remarkable property of AMP is that its convergence rate is independent of the precision of the matrix-vector multiplications. Lower precision only results in a higher error floor, which may be acceptable for many applications. Note that the measurement matrix remains fixed in this application, so the property of PCM being exploited is its multi-level storage capability.
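The AMP recovery loop can be sketched in Python. The sketch is the textbook AMP iteration of Donoho, Maleki and Montanari with a soft-thresholding denoiser. It is not necessarily the exact variant used in [35]. The following are all assumptions chosen for illustration:

- the threshold rule (α times the residual RMS, with α = 1.5);
- the problem sizes and the sparse test signal;
- the Gaussian measurement matrix;
- the 2% per-read conductance noise that emulates an imprecise crossbar.

The callables `matvec` and `rmatvec` stand in for the forward and transpose reads of the same array.

```python
import numpy as np


def soft_threshold(v, theta):
    return np.sign(v) * np.maximum(np.abs(v) - theta, 0.0)


def amp(y, matvec, rmatvec, n, iters=30, alpha=1.5):
    """AMP with soft thresholding; matvec(v) ~ Phi @ v, rmatvec(v) ~ Phi.T @ v."""
    m = y.shape[0]
    x = np.zeros(n)
    z = y.copy()
    estimates = []
    for _ in range(iters):
        theta = alpha * np.linalg.norm(z) / np.sqrt(m)  # threshold tracks residual RMS
        x = soft_threshold(x + rmatvec(z), theta)        # uses the transpose read
        # Residual update with the Onsager correction (nonzeros in x / m) * old z.
        z = y - matvec(x) + (np.count_nonzero(x) / m) * z
        estimates.append(x.copy())
    return estimates


def nmse(x_hat, x):
    return np.sum((x_hat - x) ** 2) / np.sum(x ** 2)


rng = np.random.default_rng(0)
n, m, k = 400, 200, 20                      # 50% compression, 20-sparse signal
Phi = rng.normal(0.0, 1.0 / np.sqrt(m), size=(m, n))
x_true = np.zeros(n)
x_true[rng.choice(n, size=k, replace=False)] = rng.normal(size=k)
y = Phi @ x_true                            # compression: a single MVM


def noisy(mat):
    # Assumed imprecision: fresh 2% multiplicative conductance noise on every read.
    return mat * (1.0 + 0.02 * rng.standard_normal(mat.shape))


exact = amp(y, lambda v: Phi @ v, lambda v: Phi.T @ v, n)
approx = amp(y, lambda v: noisy(Phi) @ v, lambda v: noisy(Phi).T @ v, n)
exact_curve = [nmse(x, x_true) for x in exact]
approx_curve = [nmse(x, x_true) for x in approx]
```

Plotting `exact_curve` against `approx_curve` on a log scale should show the imprecise reads settling at a higher NMSE floor, which is the qualitative behavior described above. This toy noise model makes no claim to reproduce the measured curves in Figure 4(b).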

## 3. Deep learning accelerators

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Diagram: Neural Network Processing Pipeline for Image Classification

### Overview

The diagram illustrates a technical system for image classification using a neural network architecture. It shows the flow of data from a digital interface through peripheral circuits, a communication network, and finally into a multi-layered neural network that outputs a classification label ("dog"). The system emphasizes modular processing stages with distinct color-coded components.

### Components/Axes

1. **Digital Interface** (Purple block on the far left)

- Represents the input source for raw image data

2. **Control Unit** (Small purple block below digital interface)

- Coordinates processing between components

3. **Peripheral Circuits** (Green grid structures)

- Multiple identical modules arranged in rows

- Labeled with "Peripheral circuits" text

4. **Communication Network** (Dashed red lines connecting components)

- Connects peripheral circuits to neural network

5. **Neural Network** (Central interconnected node structure)

- Three distinct layers:

- Input layer (leftmost nodes)

- Hidden layers (middle interconnected nodes)

- Output layer (rightmost nodes)

- Final output labeled "dog" with red arrow

6. **Color Coding**

- Purple: Digital interface/control unit

- Green: Peripheral circuits

- Blue: Communication network elements

- Red: Final classification output

### Detailed Analysis

- **Input Processing**: Digital interface → Control unit → Peripheral circuits (grid structure suggests parallel processing)

- **Data Flow**:

- Peripheral circuits connect via communication network (dashed red lines) to neural network

- Neural network shows progressive complexity from input to output layers

- **Output**: Final node cluster outputs "dog" with directional arrow

- **Modular Design**: Repeating peripheral circuit patterns suggest scalable architecture

### Key Observations

1. The system employs a hierarchical processing approach with three distinct stages

2. Each crossbar array, together with its peripheral circuits, holds the synaptic weights of one network layer (per the figure caption)

3. Communication network uses dashed lines, implying non-direct data transfer

4. Neural network visualization uses standard node-connection architecture

5. Color coding provides clear component differentiation without explicit legend

### Interpretation

This diagram represents a distributed computing architecture for image classification:

- **Crossbar arrays with peripheral circuits** implement the layers of the neural network, with the synaptic weights stored as device conductances (per the figure caption)

- **Communication network** sends data from one array to another

- **Neural network** performs final pattern recognition and classification

- The red arrow emphasizing "dog" output highlights the system's purpose: transforming raw image data into semantic labels

- Modular design suggests potential for adding more peripheral circuits to handle larger datasets or more complex image types

- The absence of explicit timing indicators implies focus on architectural relationships rather than performance metrics

</details>


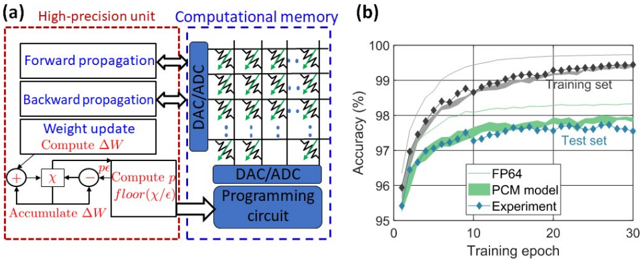

Figure 5. Deep learning based on in-memory computing. The various layers of a neural network are mapped to a computational memory unit where memristive devices are organized in a crossbar configuration. The synaptic weights are stored in the conductance state of the memristive devices. A global communication network is used to send data from one array to another. Adapted and reproduced with permission [17], Copyright 2020, Nature Research.

Deep neural networks (DNNs), loosely inspired by biological neural networks, consist of parallel processing units called neurons interconnected by plastic synapses. By tuning the weights of these interconnections using millions of labelled examples, these networks can perform certain supervised learning tasks remarkably well. They are typically trained with a learning algorithm based on gradient descent. During the training phase, the input data is forward-propagated through the neuron layers, with the synaptic networks performing multiply-accumulate operations. The final-layer responses are compared with the input data labels, and the errors are back-propagated. Both steps involve sequences of matrix-vector multiplications. The synaptic weights are then updated to reduce the error. With this optimization approach, training a state-of-the-art network on conventional computers can take days or weeks.

Hence, there is a significant effort towards designing custom ASICs based on reduced-precision arithmetic and highly optimized dataflow [13, 38]. However, the need to shuttle millions of synaptic weight values between the memory and processing units remains a key performance bottleneck. In-memory computing is therefore being explored as an alternative approach for both inference and training of DNNs [39, 40]. The essential idea is to map the various layers of a neural network to an in-memory computing unit where memristive devices are organized in a crossbar configuration (see Figure 5). The synaptic weights are stored in the conductance states of the memristive devices. Data propagates through each layer in a single step: the data is applied to the crossbar rows, and the results are read out at the columns.
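The layer-by-layer mapping can be sketched in NumPy. This is an idealized sketch under stated assumptions:

- Each layer gets its own crossbar, and signed weights are represented by a differential pair of conductances.
- Inputs are encoded as row voltages.
- The current-to-value conversion and the ReLU nonlinearity are assumed to happen in the peripheral circuits.
- There is no device noise or ADC quantization.

The class `CrossbarLayer` and the constants `G_MAX` and `V_READ` are hypothetical.

```python
import numpy as np

G_MAX = 25e-6   # assumed maximum device conductance (siemens)
V_READ = 0.2    # assumed read-voltage scale (volts per unit activation)


class CrossbarLayer:
    """One DNN layer stored as a differential pair of conductance arrays."""

    def __init__(self, W):
        # W has shape (outputs, inputs); crossbar rows carry the inputs.
        self.scale = G_MAX / np.max(np.abs(W))
        self.G_plus = np.clip(W.T, 0.0, None) * self.scale
        self.G_minus = np.clip(-W.T, 0.0, None) * self.scale

    def forward(self, x):
        v = V_READ * x                                  # activations -> row voltages
        i_cols = v @ self.G_plus - v @ self.G_minus     # column currents (Ohm + Kirchhoff)
        return i_cols / (self.scale * V_READ)           # periphery: current -> value


def relu(a):
    return np.maximum(a, 0.0)


rng = np.random.default_rng(1)
W1 = rng.normal(size=(16, 8))       # hypothetical trained weights, layer 1
W2 = rng.normal(size=(4, 16))       # hypothetical trained weights, layer 2
layers = [CrossbarLayer(W1), CrossbarLayer(W2)]

x = rng.normal(size=8)
h = relu(layers[0].forward(x))      # one crossbar read, then digital nonlinearity
out = layers[1].forward(h)          # second crossbar read
```

With ideal devices, the output matches `W2 @ relu(W1 @ x)` exactly. The inference discussion below is about what breaks that equality when real devices are used.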

<details>

<summary>Image 6 Details</summary>

### Visual Description

## Line Graph: Test Accuracy vs. Time for Neural Network Inference

### Overview

The image contains two primary components:

1. A **neural network architecture diagram** (top) depicting a ResNet-based model for CIFAR-10 image classification.

2. A **line graph** (bottom) comparing test accuracy over time after programming for three approaches: FP32 baseline, custom training, and direct mapping of FP32 weights.

---

### Components/Axes

#### Neural Network Diagram

- **Input**: CIFAR-10 image (3x32x32)

- **Layers**:

- 6 convolutional layers (Conv) with 3x3x16 filters

- 3 ResNet blocks (each with 6 convolutional layers):

- Block 1: 3x16x16 → 3x28x28

- Block 2: 3x28x28 → 3x56x56

- Block 3: 3x56x56 → 3x56x56

- Output: Softmax layer (56x10) for label prediction

- **Color Coding**:

- Yellow: Convolutional layers

- Blue: ResNet blocks

- Red: ResNet blocks (highlighted in diagram)

- Gray: Softmax layer

#### Line Graph

- **X-axis**: Time (s) on logarithmic scale (10⁻⁵ to 10⁵)

- **Y-axis**: Test Accuracy (%) from 60% to 100%

- **Legend**:

- Dashed gray: FP32 baseline (90% accuracy)

- Solid blue: Custom training experiments (90% accuracy)

- Red diamonds: Direct mapping of FP32 weights (80–85% accuracy)

---

### Detailed Analysis

#### Neural Network Diagram

- **Flow**:

- Input → 6 Conv layers → ResNet Block 1 → ResNet Block 2 → ResNet Block 3 → Softmax → Label

- **Key Details**:

- ResNet blocks use residual connections (indicated by red arrows in diagram).

- Output dimensions grow from 3x16x16 to 3x56x56 across blocks.

#### Line Graph

1. **FP32 Baseline (Gray Dashed Line)**:

- Constant at ~90% accuracy across all time scales.

2. **Custom Training (Blue Line)**:

- Stable at ~90% accuracy, matching the FP32 baseline.

3. **Direct Mapping of FP32 Weights (Red Diamonds)**:

- Starts at ~85% accuracy, dips below 80% at ~10¹ seconds, then recovers to ~80% by 10³ seconds.

- Exhibits significant volatility compared to other methods.

---

### Key Observations

1. **Accuracy Stability**:

- Custom training and FP32 baseline maintain near-identical accuracy (~90%), suggesting robust performance.

2. **Direct Mapping Limitations**:

- Red line shows a 10% accuracy drop relative to the baseline, with erratic fluctuations.

3. **Time Correlation**:

- Direct mapping’s performance degradation occurs at intermediate time scales (~10¹–10³ seconds).

---

### Interpretation

1. **Model Architecture**:

- The ResNet-based design (with residual connections) likely enables efficient feature extraction, contributing to high accuracy.

2. **Training Method Impact**:

- Direct mapping of FP32 weights yields lower accuracy because weights trained in high precision are not robust to device-level nonidealities such as programming inaccuracy, conductance noise, and drift.

- Custom training, which is aware of these nonidealities, approaches the FP32 baseline.

3. **Practical Implications**:

- Direct mapping may be unsuitable for production without additional optimization (e.g., fine-tuning).

- The FP32 baseline serves as a critical reference for evaluating quantization trade-offs.

---

### Notable Anomalies

- **Red Line Dip**: The accuracy drop at ~10¹ seconds occurs during inference, after the weights have been programmed, not during training. Conductance drift and noise in the PCM devices are the likely causes.

- **Apparent Recovery at 10³ Seconds**: No training takes place after programming, so the partial recovery more plausibly reflects measurement variability than any adaptation.

</details>


Figure 6. Deep learning inference. Experimental results on ResNet-32 using the CIFAR-10 dataset. The classification accuracies obtained via the direct mapping and custom training approaches are compared with the floating-point baseline. Adapted and reproduced with permission [40], Copyright 2019, IEEE.

Deep learning inference refers to the forward propagation alone in a DNN once the weights have been learned. Both the binary and the analogue storage capability of memristive devices can be exploited for the MVM operations in inference. The key challenges are the inaccuracies in programming the devices to a specified synaptic weight, together with drift and noise in the conductance values [41]. For these reasons, synaptic weights obtained by training in high-precision arithmetic (e.g., 32-bit floating point) cannot be mapped directly to computational memory. However, customizing the training procedure to make it aware of these device-level nonidealities yields synaptic weights that are suitable for mapping to an in-memory computing unit [42, 40]. A more recent approach uses a committee of multiple smaller neural networks. This committee-machine approach shows promise for increasing inference accuracy without increasing the number of devices [43].

Figure 6 shows mixed hardware/software experimental results obtained with a prototype multi-level PCM chip. The synaptic weights are mapped to PCM devices in a 2-PCM differential configuration (723,444 PCM devices in total). The custom training scheme approaches the floating-point baseline, whereas the direct mapping approach fails to deliver sufficient accuracy. The slight decline in accuracy over time is attributed to the conductance drift of PCM devices [44]. In spite of the drift, a classification accuracy close to 90% is maintained over a significant duration.
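The effect of programming inaccuracy and drift on directly mapped weights can be sketched in NumPy:

- The drift model is the power law G(t) = G(t0)·(t/t0)^(−ν), a widely used empirical description of PCM drift.
- The drift-exponent distribution, the programming-noise level, and the conductance range are assumptions chosen for illustration.
- The weights are random stand-ins for weights trained in high precision.
- The sketch does not model the custom training of [42, 40] and is not meant to reproduce Figure 6.

```python
import numpy as np

rng = np.random.default_rng(2)
W = rng.normal(size=(32, 64))      # hypothetical weights trained in high precision
x = rng.normal(size=64)
ideal = W @ x

g_max = 25.0                                   # microsiemens (assumed)
scale = g_max / np.abs(W).max()                # weight-to-conductance map
G_plus = np.clip(W, 0.0, None) * scale         # 2-device differential mapping
G_minus = np.clip(-W, 0.0, None) * scale


def program(G, sigma=0.5):
    """Programming inaccuracy: additive Gaussian error (uS, assumed), clipped at 0."""
    return np.clip(G + sigma * rng.standard_normal(G.shape), 0.0, None)


Gp, Gm = program(G_plus), program(G_minus)
# Drift exponent drawn once per device (values assumed for illustration).
nu_p = 0.05 + 0.01 * rng.standard_normal(Gp.shape)
nu_m = 0.05 + 0.01 * rng.standard_normal(Gm.shape)


def read_at(t, t0=1.0):
    """Apply power-law drift G(t) = G(t0) * (t / t0) ** (-nu), then an ideal MVM."""
    G_eff = Gp * (t / t0) ** (-nu_p) - Gm * (t / t0) ** (-nu_m)
    return G_eff @ x / scale


errors = []
for t in [1.0, 1e2, 1e4, 1e6]:                 # seconds after programming
    err = np.linalg.norm(read_at(t) - ideal) / np.linalg.norm(ideal)
    errors.append(err)
    print(f"t = {t:7.0e} s   relative MVM error = {err:.3f}")
```

The error at t = t0 comes from programming inaccuracy alone. Its growth at later times comes from drift, which shrinks all conductances together and spreads them through the per-device exponents. Both effects act on every layer of a network, which is why weights trained without awareness of them lose accuracy when mapped directly.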

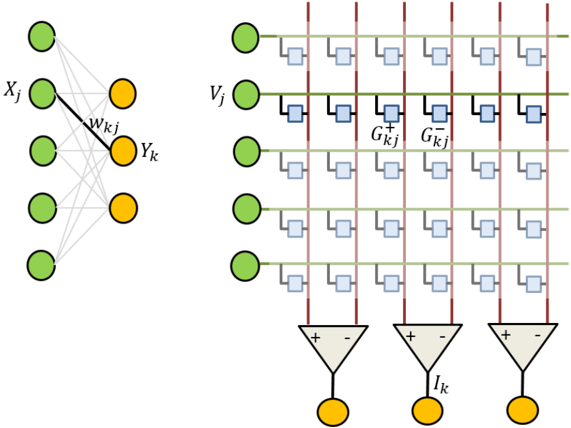

Figure 7. Deep learning training. a) Schematic illustration of the mixed-precision architecture for training DNNs. b) The synaptic weight distributions and classification accuracies obtained in the experiments are compared with the floating-point baseline [45].

<details>

<summary>Image 7 Details</summary>

### Visual Description

## Diagram and Chart: High-Precision Unit and Training Accuracy Analysis

### Overview

The image contains two components:

1. **Diagram (a)**: A technical flowchart depicting a high-precision unit and computational memory system.

2. **Graph (b)**: A line chart showing training accuracy over epochs for three data series (FP64, PCM model, Experiment).

---

### Components/Axes

#### Diagram (a)

- **Labels**:

- **High-precision unit** (red dashed box):

- Forward propagation

- Backward propagation

- Weight update

- Compute ΔW

- Accumulate ΔW

- Compute p

- floor(χ/ε)

- **Computational memory** (blue dashed box):

- DAC/ADC (Digital-to-Analog/Analog-to-Digital converters)

- Programming circuit

- **Flow**:

- Forward/backward propagation → Weight update → Compute ΔW → Accumulate ΔW → Compute p → floor(χ/ε) → DAC/ADC → Programming circuit.

#### Graph (b)

- **Axes**:

- **X-axis**: Training epoch (0–30, linear scale).

- **Y-axis**: Accuracy (%) (95–100, linear scale).

- **Legend**:

- **FP64** (gray line)

- **PCM model** (green line)

- **Experiment** (blue diamonds)

---

### Detailed Analysis

#### Diagram (a)

- **Textual elements**:

- "Forward propagation" and "Backward propagation" are labeled with bidirectional arrows.

- "Weight update" includes a summation symbol (+) and a subtraction symbol (−).

- "Compute ΔW" and "Accumulate ΔW" are connected via a feedback loop.

- "Compute p" and "floor(χ/ε)" are linked to the DAC/ADC block.

- **Spatial grounding**:

- The high-precision unit is on the left, computational memory in the center, and programming circuit on the right.

#### Graph (b)

- **Data series**:

- **FP64** (gray line): Starts at ~95% accuracy, rises to ~99.5% by epoch 30.

- **PCM model** (green line): Starts at ~95%, increases to ~98% by epoch 30.

- **Experiment** (blue diamonds): Starts at ~95%, fluctuates between ~96–97.5%, converging to ~97.5% by epoch 30.

- **Trends**:

- FP64 shows the steepest upward slope.

- PCM model and Experiment exhibit slower, more gradual improvements.

- All series converge toward higher accuracy as epochs increase.

---

### Key Observations

1. **FP64 dominates**: The gray line (FP64) consistently outperforms other series, reaching near-100% accuracy.

2. **PCM model vs. Experiment**: The green line (PCM model) and blue diamonds (Experiment) show similar trends but with lower final accuracy (~98% vs. ~97.5%).

3. **Convergence**: All series plateau near 97–99.5% accuracy by epoch 30, suggesting diminishing returns after initial training.

---

### Interpretation

- **Technical implications**:

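- The high-precision unit (diagram a) is part of the mixed-precision architecture used for the experiment. It accumulates weight updates (χ) in high precision and converts them into a number of programming pulses, p = floor(χ/ε), for the computational memory. FP64 is the software baseline.

- The PCM model curve likely corresponds to a simulation of the same scheme with a PCM device model, and the Experiment curve to the hardware measurement. Both remain slightly below FP64.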

- **Training dynamics**:

- The graph highlights the importance of epoch count for model convergence. FP64 achieves near-optimal accuracy faster than other methods.

- **Anomalies**:

- The Experiment's fluctuating accuracy (blue diamonds) suggests potential instability or noise in the training process compared to the smoother PCM model.

This analysis underscores the role of precision in computational memory systems and their impact on machine learning training efficiency.

</details>

In-memory computing can also be used for supervised training of DNNs with backpropagation. When training a DNN encoded in crossbar arrays, forward propagation is performed in the same way as the inference described above. Backward propagation is then performed by applying the error gradient from the subsequent layer to the columns of the current layer and reading the result from the rows. Subsequently, the error gradient is computed. Finally, the weight update is performed based on the outer product of the activations and error gradients of each layer. This weight update relies on the accumulative behavior of memristive devices.

Recent deep learning research shows that, when training DNNs, the forward and backward propagations can be performed rather imprecisely, whereas the gradients must be accumulated in high precision [46]. This observation makes deep learning training amenable to the recently proposed mixed-precision in-memory computing approach [47]. The in-memory computing unit stores the synaptic weights and performs the forward and backward passes, while the weight changes are accumulated in high precision (Figure 7(a)) [48, 49]. When the accumulated weight change exceeds a certain threshold, pulses are applied to the corresponding memory devices to alter the synaptic weights. This approach was tested on handwritten digit classification using the MNIST data set. A two-layer neural network was employed, with 2-PCM devices in differential configuration (approx. 400,000 devices) representing the synaptic weights. The resulting test accuracy after 20 epochs of training was approx. 98% (Figure 7(b)). After training, inference on this network was performed for over a year with only a marginal reduction in test accuracy.

The crossbar topology also facilitates estimating the gradient and updating the resulting synaptic weight in place, all with $O(1)$ time complexity [50, 39]. By obviating the need to accumulate gradients externally, this approach could outperform the mixed-precision approach. However, applying it to a wide range of DNNs requires significant improvements to memristive technology, in particular to the accumulative behavior [51, 52].
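The mixed-precision weight update can be sketched in NumPy, following the labels of Figure 7(a). Weight updates ΔW are accumulated in a high-precision variable χ, and p = floor(χ/ε) pulses are sent to the device, where ε is the assumed conductance change per pulse. The following are assumptions of this sketch, not details of [47, 48, 49]:

- Negative χ is handled by truncation toward zero, giving a signed pulse count.
- Each pulse has optional multiplicative noise.
- The class name and parameter values are hypothetical.

```python
import numpy as np


class MixedPrecisionSynapses:
    """Sketch of mixed-precision weight updates.

    chi: high-precision accumulator of weight updates (digital unit).
    w:   weights held as device conductances (computational memory); they change
         only in increments of roughly eps, the assumed single-pulse step.
    """

    def __init__(self, shape, eps=0.01, pulse_noise=0.0, seed=0):
        self.w = np.zeros(shape)
        self.chi = np.zeros(shape)
        self.eps = eps
        self.pulse_noise = pulse_noise
        self.rng = np.random.default_rng(seed)

    def apply_update(self, delta_w):
        self.chi += delta_w                       # accumulate in high precision
        p = np.trunc(self.chi / self.eps)         # signed pulse count, |p| = floor(|chi|/eps)
        # Each pulse nominally moves the weight by eps; optional multiplicative noise.
        noise = 1.0 + self.pulse_noise * self.rng.standard_normal(p.shape)
        self.w += p * self.eps * noise
        self.chi -= p * self.eps                  # keep only the untransferred residue
        return int(np.abs(p).sum())               # number of programming pulses issued


rng = np.random.default_rng(3)
syn = MixedPrecisionSynapses((4, 3), eps=0.01)
lr = 0.05
total = np.zeros((4, 3))
pulses = 0
for _ in range(100):
    x = rng.normal(size=3)          # layer input activations
    delta = rng.normal(size=4)      # back-propagated error at the layer output
    dW = -lr * np.outer(delta, x)   # gradient-descent update from the outer product
    total += dW
    pulses += syn.apply_update(dW)
```

With noise-free pulses, `syn.w + syn.chi` always equals the sum of all requested updates. Updates smaller than ε are not lost: they wait in χ until they add up to a full pulse, which is how the scheme tolerates a coarse device update step.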

Compared with the charge-based memory devices also used for in-memory computing [53, 54, 55], memristive devices have a key advantage: their potential to be scaled to dimensions of a few nanometers [56, 57, 58, 59, 60]. Most memristive devices are also suitable for back-end-of-line integration, enabling integration with a wide range of front-end CMOS technologies. Another key advantage is their non-volatility, which would obviate the need for computing systems to be constantly connected to a power supply.

However, there are also challenges to overcome. The significant inter-device and intra-device variability of the SET and RESET states is a key challenge for applications that use memristive devices for logical operations. For applications that rely on analogue storage capability, a significant challenge is programming variability: the inaccuracy in programming an array of devices to desired conductance values. In ReRAM, this variability is attributed mostly to the stochastic nature of filamentary switching. One prominent approach to counter it is to establish preferential paths for conductive filament (CF) formation [61, 62]. Representing single computational elements with multiple memory devices is another promising approach [63]. Yet another challenge is the temporal and temperature-induced variation of the programmed conductance values. An example is the resistance 'drift' in PCM devices, which is attributed to the intrinsic structural relaxation of the amorphous phase. The concept of projected phase-change memory is a promising approach to tackling 'drift' [64, 65].

The requirements that memristive devices must fulfil when employed for computational memory are heavily application-dependent. For memristive logic, high cycling endurance ($>10^{12}$ cycles) and low device-to-device variability of the SET/RESET resistance values are critical. For computational tasks involving read-only operations, such as matrix-vector multiplication, the conductance states must remain relatively unchanged during execution. A gradual, analogue-type switching characteristic is also desirable, so that a continuum of resistance values can be programmed in a single device. Applications where the device conductance must be incrementally updated, such as deep learning training, also require linear and symmetric accumulative behaviour [66]. For stochastic computing applications, random device variability is not problematic, but graceful device degradation is highly desirable, as described in [67].
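A toy comparison shows why linear and symmetric accumulative behaviour matters. In gradient descent, equal numbers of positive and negative updates should cancel. The sketch below applies ten potentiating pulses followed by ten depressing pulses to two hypothetical devices:

- an ideal device with a fixed step per pulse;
- a nonlinear device whose step shrinks as its conductance approaches the bound it is moving toward.

This "soft-bounds" rule is a common phenomenological assumption, not a model taken from [66]. All values are illustrative, with conductance normalized to [0, 1].

```python
import numpy as np


def linear_step(g, up, dg=0.01, g_min=0.0, g_max=1.0):
    """Ideal device: fixed conductance increment per pulse, clipped to the range."""
    return float(np.clip(g + (dg if up else -dg), g_min, g_max))


def soft_bound_step(g, up, a_up=0.05, a_down=0.05, g_min=0.0, g_max=1.0):
    """Assumed nonlinear device: step shrinks near the bound it moves toward."""
    if up:
        return g + a_up * (g_max - g)
    return g - a_down * (g - g_min)


def up_then_down(step, g0, n):
    """Apply n potentiating pulses, then n depressing pulses."""
    g = g0
    for _ in range(n):
        g = step(g, True)
    for _ in range(n):
        g = step(g, False)
    return g


print(up_then_down(linear_step, 0.2, 10))      # back to 0.2 (up to rounding)
print(up_then_down(soft_bound_step, 0.2, 10))  # about 0.312: a net drift upward
```

The nonlinear device ends about 0.11 above its starting point even though the requested updates cancel. Over many training steps, such asymmetric errors pull the weights toward a device-dependent value instead of the one the gradient prescribes.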

## 4. Spiking Neural Networks and Memristors

Unlike the deep learning networks discussed above, spiking neural networks (SNNs) can more naturally incorporate the notion of time in signal encoding and processing. SNNs are typically modelled on the integrate-and-fire behaviour of neurons in the brain. In this framework, neurons communicate with each other using binary signals, or spikes. A spike arriving at a synapse triggers a current flow into the downstream neuron, with the magnitude of the current weighted by the effective conductance of the synapse. The neuron integrates the incoming currents to determine its membrane potential and issues a spike when the potential exceeds a threshold. This spiking behaviour can be triggered deterministically or probabilistically. Once a spike is issued, the membrane potential is reset to a resting potential or decreased according to some predetermined rule. Either the integration is limited to a specific time window, or a leak factor is incorporated into it. Both endow the neuron model with a finite memory of past spiking events.
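The integrate-and-fire behaviour can be sketched as a minimal discrete-time leaky integrate-and-fire (LIF) neuron in NumPy. The following are assumptions of this sketch:

- the leak factor `beta`;
- the threshold;
- reset to zero after a spike;
- deterministic firing;
- one time step per minimum inter-spike interval.

Because the inputs are binary, `input_spikes[t] @ weights` simply sums the weights of the synapses that received a spike at that step, so only additions are needed.

```python
import numpy as np


def lif_simulate(input_spikes, weights, beta=0.9, threshold=1.0, v_reset=0.0):
    """Discrete-time leaky integrate-and-fire neuron.

    input_spikes: (T, n_inputs) binary array; weights: (n_inputs,) synaptic weights.
    Returns the output spike train (T,) and the membrane-potential trace (T,).
    """
    T = input_spikes.shape[0]
    v = v_reset
    out = np.zeros(T, dtype=int)
    trace = np.zeros(T)
    for t in range(T):
        # Leak (beta < 1 gives a finite memory), then add the weighted input current.
        v = beta * v + input_spikes[t] @ weights
        if v >= threshold:        # deterministic firing rule
            out[t] = 1
            v = v_reset           # reset the membrane potential after a spike
        trace[t] = v
    return out, trace


rng = np.random.default_rng(4)
spikes_in = (rng.random((100, 10)) < 0.1).astype(int)   # sparse binary inputs
w = np.full(10, 0.3)                                     # illustrative weights
out, v = lif_simulate(spikes_in, w)
print("output spikes:", out.sum())
```

Replacing the deterministic threshold test with a random draw whose probability grows with `v` would give the probabilistic variant mentioned above.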

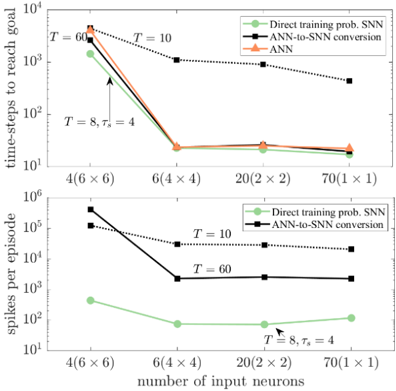

Compared with second-generation deep neural networks (the DNNs discussed in the previous section), SNNs can potentially offer significant improvements in efficiency. The first reason lies in the underlying signal encoding mechanism. Calculating the output of a neuron involves computing the weighted sum of the real-valued outputs of the neurons in the previous layer, with the synaptic weights as coefficients. For a fully connected second-generation DNN with $N$ neurons in each layer, this requires $N^2$ multiplications of real-valued numbers, typically stored in low-precision representations. In contrast, forward propagation in an SNN requires only additions, as the input neuronal signals are binary spikes. To elaborate, assume that the input signal is encoded as a spike train of duration $T$, with a minimum inter-spike interval of $\Delta t$. If the probability of a spike at any instant is $p$, then on average $NpT/\Delta t$ spikes must be propagated through the synapses. Each spike fans out to $N$ synapses, so this requires $N^2 pT/\Delta t$ additions. In most modern processors, the cost of a multiplication, $C_m$, is 3-4 times higher than that of an addition, $C_a$. Suppose the neuronal and synaptic variables required for computation are available in the processor. Comparing the total costs, $N^2 C_m$ for the DNN and $N^2 C_a pT/\Delta t$ for the SNN, shows that SNNs offer a path to more efficient computation if the inequality

$$C _ { a } p \left ( \frac { T } { \Delta t } \right ) < C _ { m }$$

holds. Hence, it is important to develop algorithms for SNNs that minimize $p$ and $T/\Delta t$ to improve computational efficiency. This requires sparse binary signal-encoding schemes that go beyond the rate coding typically used in SNNs today. The following section discusses strategies for developing general-purpose learning rules for SNNs that satisfy such constraints.
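The inequality can be checked numerically. The sketch below compares the per-layer compute cost of a DNN and an SNN using the operation counts derived above. It also returns the break-even spike probability. It assumes costs in arbitrary units, with $C_a = 1$ and $C_m = 3.5$ (within the 3-4 range quoted above). Like the inequality itself, it ignores memory-access and communication costs. All function names are hypothetical.

```python
def dnn_cost(n, c_mult):
    """Compute cost of one fully connected DNN layer: N^2 multiplications."""
    return n * n * c_mult


def snn_cost(n, p, t_over_dt, c_add):
    """Expected compute cost of the same layer in an SNN: N^2 * p * (T/dt) additions."""
    return n * n * p * t_over_dt * c_add


def snn_is_cheaper(p, t_over_dt, c_add, c_mult):
    """Inequality C_a * p * (T/dt) < C_m; the N^2 factor cancels on both sides."""
    return c_add * p * t_over_dt < c_mult


def break_even_probability(t_over_dt, c_add, c_mult):
    """Spike probability below which the SNN is cheaper than the DNN."""
    return c_mult / (c_add * t_over_dt)


# Illustrative (assumed) costs: addition normalized to 1, multiplication 3.5x dearer
C_A, C_M = 1.0, 3.5
print(break_even_probability(20, C_A, C_M))  # 0.175
print(snn_is_cheaper(0.05, 20, C_A, C_M))    # True
print(snn_is_cheaper(0.2, 20, C_A, C_M))     # False
```

With $T/\Delta t = 20$, the spike probability must stay below $3.5/20 = 0.175$. This illustrates why both sparse firing and short spike trains matter.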

The second potential source of efficiency improvement for SNNs over second-generation networks arises from novel memory-processor architectures based on memristive devices. While SNNs can be implemented using Si CMOS SRAM or DRAM technologies, the advent of novel nanoscale memristive devices provides opportunities for significant improvements in overall computational efficiency.

Figure 8. A crossbar-array-based representation of an SNN. Each synaptic weight is represented by the differential conductance of two nanoscale devices in the crossbar.

Figure 8 panels: (left) a network with input neurons $X_j$, output neurons $Y_k$ and a highlighted synaptic weight $W_{kj}$; (right) the corresponding crossbar, with input voltages $V_j$, differential device pairs $G_{kj}^{+}$ and $G_{kj}^{-}$, and output currents $I_k$.

Memristive devices can be integrated at the junctions of crossbar arrays to represent synaptic weights, and CMOS circuits at the periphery can implement the neuronal integration and learning logic. As mentioned above, this architecture computes the spike propagation operation efficiently. Ohm's law performs the multiplications and Kirchhoff's current law performs the summation:

$$I _ { k } = \sum _ { j } \left ( G _ { k j } ^ { + } - G _ { k j } ^ { - } \right ) V _ { j }$$

In this formula, $V_j$ denotes the voltage pulse applied to the line connected to the $j$th input neuron when that neuron spikes. $G_{kj}^{+}$ and $G_{kj}^{-}$ are the conductances of the two devices arranged in a differential configuration to represent the synaptic weight, and $I_k$ is the total current flowing into the $k$th output neuron. The small form factor of the devices, coupled with the scalability of operating voltages and currents beyond what is possible with conventional CMOS, suggests that these architectures could achieve several orders of magnitude improvement in efficiency over silicon-based implementations [68,69].
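The differential read-out in the equation above can be sketched in a few lines of code. The example below maps signed weights onto a pair of conductances, placing the positive part on $G_{kj}^{+}$ and the negative part on $G_{kj}^{-}$. This is one simple mapping chosen for illustration. It then evaluates $I_k$ for a set of input voltage pulses. The 100 µS maximum conductance and the 0.2 V read pulse are hypothetical values. Device non-idealities such as wire resistance and sneak paths are ignored.

```python
def weights_to_conductances(w, g_max):
    """Map signed weights in [-1, 1] to a differential pair (illustrative mapping).

    Positive parts go to G+, negative parts to G-, so w_kj = (G+_kj - G-_kj) / g_max.
    """
    g_plus = [[max(x, 0.0) * g_max for x in row] for row in w]
    g_minus = [[max(-x, 0.0) * g_max for x in row] for row in w]
    return g_plus, g_minus


def crossbar_currents(v, g_plus, g_minus):
    """Ideal crossbar read: I_k = sum_j (G+_kj - G-_kj) * V_j for each output k.

    Each product is Ohm's law at one junction; the sum over j is the current
    collected on the output line (Kirchhoff's current law). Wire resistance and
    sneak paths are ignored.
    """
    return [
        sum((gp - gm) * vj for gp, gm, vj in zip(gp_row, gm_row, v))
        for gp_row, gm_row in zip(g_plus, g_minus)
    ]


# Hypothetical example: 2 inputs, 2 outputs, g_max = 100 uS, 0.2 V read pulse
w = [[0.5, -0.25], [-1.0, 0.75]]           # rows: output neurons k, columns: inputs j
g_plus, g_minus = weights_to_conductances(w, g_max=1e-4)
spikes = [1, 0]                            # only input neuron 0 fires
v = [0.2 * s for s in spikes]              # pulse applied only on lines of spiking inputs
currents = crossbar_currents(v, g_plus, g_minus)  # approx [1e-05, -2e-05] A
```

Because only spiking inputs receive a pulse, each output current equals $g_{max}$ times the sum of the weights of the active synapses. In the example above, this gives about 10 µA and -20 µA.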

However, apart from the non-idealities of memristive devices already mentioned, crossbar arrays with more than 2048 × 2048 devices cannot be fabricated and operated reliably. This is due to the voltage (IR) drop along the wires and to sneak paths that corrupt the measurement and programming of synaptic states. One approach to mitigating these issues is to design neurosynaptic cores with smaller crossbars and associated neuron circuits, tile these cores in a 2D array, and provide communication fabrics between the cores [70]. Such tiled, neurosynaptic-core-based designs are particularly amenable to realizing SNNs. Only the binary spikes of intermittently active neurons need to be transported between cores. In deep learning networks, by contrast, the real-valued variables of all the neurons in a core are active and must be transmitted. This is the second inherent advantage that SNNs have over DNNs for improving computational efficiency.
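The tiling argument can be illustrated with a rough count. The sketch below first estimates how many fixed-size crossbar cores a layer needs. It then compares the inter-core traffic per time step under two schemes. In the first, spikes are sent as neuron addresses; this address-event-style encoding is an assumption, since the text does not specify the protocol. In the second, every neuron's real-valued output is sent at a fixed bit width. The 256 × 256 core size and 8-bit values are hypothetical choices.

```python
import math


def num_cores(n_in, n_out, core_size=256):
    """Number of core_size x core_size crossbar tiles needed for an n_in x n_out layer."""
    return math.ceil(n_in / core_size) * math.ceil(n_out / core_size)


def spike_traffic_bits(n_active, n_neurons):
    """Bits per time step if each spike is sent as a neuron address (address-event style)."""
    address_bits = math.ceil(math.log2(n_neurons))
    return n_active * address_bits


def dense_traffic_bits(n_neurons, bits_per_value=8):
    """Bits per layer evaluation if every neuron's real-valued output is sent."""
    return n_neurons * bits_per_value


print(num_cores(4096, 4096))          # 256
print(spike_traffic_bits(200, 4096))  # 2400
print(dense_traffic_bits(4096))       # 32768
```

The SNN incurs its traffic at each of the $T/\Delta t$ time steps. Its advantage therefore depends on spikes remaining sparse in time as well as across neurons.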

Overcoming the reliability challenges mentioned above is essential. It will require the co-optimization of algorithms and architectures designed to mitigate, or even leverage, these non-ideal behaviours for computation. Two kinds of systems can be envisaged, depending on the application mode. Inference engines, which do not support on-chip learning, can be built from memristive devices integrated in crossbars. In these systems, the devices are programmed to the conductance states corresponding to weights obtained from software training. However, because memristive devices support incremental conductance changes through the application of suitable electrical programming pulses, it is also possible to design learning systems in which network weight updates are implemented on-chip in an event-driven manner [82]. There are also many recent examples in which these devices have been engineered to mimic the integrate-and-fire characteristics of biological neurons [71,72,73], potentially enabling all-memristor implementations of spiking neural networks [74]. The field is still in its infancy and has so far witnessed only small proof-of-concept demonstrations. We now discuss some of the approaches that have been explored towards realizing memristor-based SNNs, both for inference only and with on-chip learning.
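Event-driven on-chip learning requires translating a desired weight change into a number of programming pulses. The sketch below assumes a simple, hypothetical saturating device model in which each potentiating pulse raises the conductance by a fixed fraction `alpha` of the remaining range. It applies positive updates to $G^{+}$ and negative updates to $G^{-}$ of a differential pair. The pulses-per-unit-weight scale and `alpha` are illustrative assumptions, not parameters of any device discussed in this review.

```python
def apply_pulses(g, n_pulses, g_max, alpha=0.1):
    """Apply n_pulses identical potentiating pulses to one device.

    Hypothetical saturating model: each pulse adds alpha * (g_max - g), so the
    conductance change per pulse shrinks as g approaches g_max.
    """
    for _ in range(n_pulses):
        g += alpha * (g_max - g)
    return g


def update_synapse(g_plus, g_minus, delta_w, g_max, pulses_per_unit=10, alpha=0.1):
    """Event-driven update of a differential synapse, w proportional to g_plus - g_minus.

    A positive update potentiates g_plus and a negative one potentiates g_minus,
    so only potentiating pulses are ever needed. The pulse count is the desired
    weight change scaled by the (assumed) number of pulses per unit weight.
    """
    n_pulses = round(abs(delta_w) * pulses_per_unit)
    if delta_w > 0:
        g_plus = apply_pulses(g_plus, n_pulses, g_max, alpha)
    elif delta_w < 0:
        g_minus = apply_pulses(g_minus, n_pulses, g_max, alpha)
    return g_plus, g_minus


# Conductances normalized to g_max = 1; two pulses from zero give 0.1 + 0.09 = 0.19
g_plus, g_minus = update_synapse(0.0, 0.0, delta_w=0.2, g_max=1.0)
```

Because increments shrink as a device approaches `g_max`, the same number of pulses produces different conductance changes depending on the device's current state. This is one of the non-linearities that learning algorithms for these devices must tolerate. In this scheme, both conductances only ever increase, so a practical design would also need to occasionally reset and reprogram the pair.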

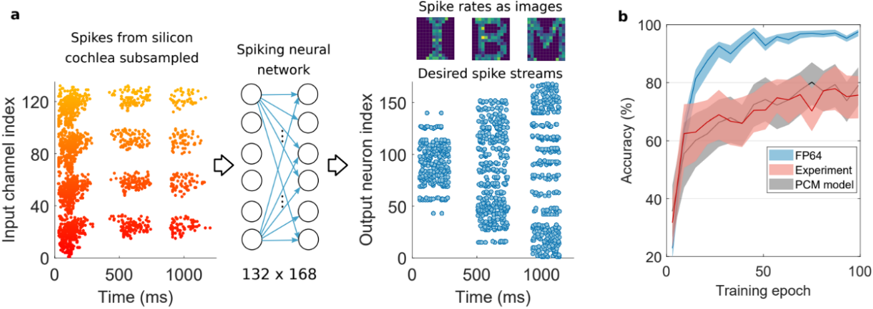

4.1. Memristive SNNs for inference. A common approach to developing SNNs is to start with a second-generation ANN trained using traditional backpropagation-based methods, and then convert the resulting network to a spiking network in software. These solutions rely on weight-normalization schemes that make the spike rates of the neurons in the SNN proportional to the activations of the corresponding neurons in the ANN [75,76]. While this should, in principle, yield SNNs with accuracies comparable to those of their second-generation counterparts, some device-aware re-training would typically be necessary when the network is implemented in hardware. This is due to the non-linearity and limited dynamic range of nanoscale devices.
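The weight-normalization idea can be sketched as follows. This example implements one such scheme, often called data-based normalization. In it, each layer's weights are rescaled by the ratio of the maximum ReLU activations of the previous and current layers, measured on training data. The example also includes a non-leaky integrate-and-fire neuron with reset by subtraction, whose spike rate approximates its input clipped to [0, 1]. Biases are ignored, and the function names and parameters are hypothetical. The schemes in [75,76] may differ in detail.

```python
def normalize_weights(layer_weights, max_activations):
    """Data-based weight normalization for ANN-to-SNN conversion (one possible scheme).

    layer_weights: list of weight matrices W_l (lists of rows) of a ReLU ANN
    max_activations: maximum ReLU output lambda_l of each layer on training data
    Each W_l is scaled by lambda_{l-1} / lambda_l, with lambda_0 = 1 for inputs
    already in [0, 1], so that normalized activations stay at or below 1.
    """
    scaled, prev = [], 1.0
    for w, lam in zip(layer_weights, max_activations):
        factor = prev / lam
        scaled.append([[x * factor for x in row] for row in w])
        prev = lam
    return scaled


def if_rate(drive, steps=1000, threshold=1.0):
    """Spike rate of a non-leaky integrate-and-fire neuron with constant input drive.

    Reset by subtraction keeps the residual charge after each spike, so the rate
    approximates drive / threshold, clipped to at most one spike per time step.
    """
    v, n_spikes = 0.0, 0
    for _ in range(steps):
        v += drive
        if v >= threshold:
            n_spikes += 1
            v -= threshold
    return n_spikes / steps


scaled = normalize_weights([[[2.0]], [[0.5]]], max_activations=[4.0, 2.0])
print(scaled)        # [[[0.5]], [[1.0]]]
print(if_rate(1.5))  # 1.0
```

A drive of 0.3 yields a rate close to 0.3, whereas any drive above threshold saturates at one spike per time step. This saturation is why normalization aims to keep activations at or below the threshold.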

One of the differentiating features of inference engines is that the nanoscale devices storing state variables are programmed only rarely compared to the number of times they are read (potentially at every inference cycle). Since higher-energy programming cycles degrade device lifetime more than lower-energy read cycles, this mode of operation can offer better overall system reliability than learning systems.

In a preliminary hardware demonstration of this approach, R. Midya et al. used memristors based on SiOxNy:Ag to implement compact oscillatory neurons whose output voltage oscillation frequency is proportional to the input current [77]. In this proof-of-concept demonstration of a 3-layer network, ANN-to-SNN conversion was limited to the last layer, but the approach could be extended to the hidden layers as well.

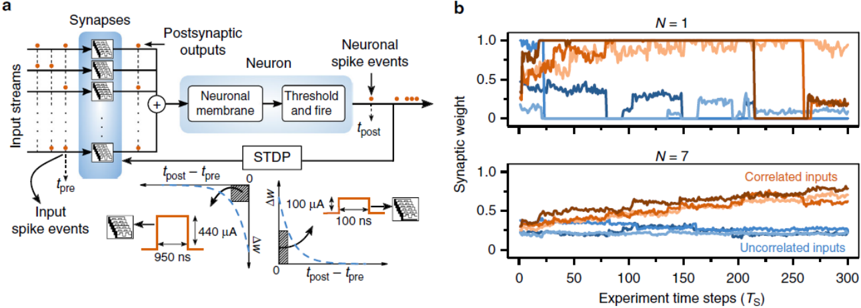

4.2. Memristive SNNs for unsupervised learning and adaptation. Most hardware demonstrations of SNNs using memristive devices have focused on the unsupervised learning paradigm. In this paradigm, synaptic weights are modified according to the biologically inspired spike-timing-dependent plasticity (STDP) rule [78]. The rule captures the experimental observation that when a synapse experiences repeated pre-before-post spike pairings, its effective strength increases. Conversely, repeated post-before-pre pairings decrease its effective conductance.
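A common way to express the STDP rule quantitatively is the pair-based exponential window, sketched below. The amplitudes `a_plus` and `a_minus`, the time constants (in milliseconds) and the all-to-all pairing of spikes are assumptions chosen for illustration. Memristive implementations reproduce STDP-like behaviour only approximately, through the shape of the applied waveforms.

```python
import math


def stdp_dw(t_pre, t_post, a_plus=0.01, a_minus=0.012, tau_plus=20.0, tau_minus=20.0):
    """Pair-based exponential STDP window (parameters hypothetical, times in ms).

    Pre-before-post (t_post > t_pre) potentiates, post-before-pre depresses, and
    the magnitude decays exponentially with the spike-time difference.
    """
    dt = t_post - t_pre
    if dt > 0:
        return a_plus * math.exp(-dt / tau_plus)
    if dt < 0:
        return -a_minus * math.exp(dt / tau_minus)
    return 0.0


def total_update(pre_times, post_times, **params):
    """Sum the STDP contributions of all pre/post spike pairs (all-to-all pairing)."""
    return sum(stdp_dw(tp, tq, **params) for tp in pre_times for tq in post_times)


# Pre spikes each followed 5 ms later by a post spike: net potentiation
print(total_update([0, 20, 40], [5, 25, 45]) > 0)  # True
# Roles swapped (post before pre): net depression
print(total_update([5, 25, 45], [0, 20, 40]) < 0)  # True
```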

It should be noted that other biological mechanisms, observed experimentally [79,80], may also play a key role in learning and memory formation in the brain. Nevertheless, STDP is a simple local learning rule that is especially straightforward to implement in hardware. While timing-dependent plasticity rules can be implemented using many-transistor CMOS circuits [81], it was demonstrated experimentally early on that memristive devices can exhibit STDP-like weight adaptation when suitable waveforms are applied [82,83,84]. Going beyond individual device demonstrations, IBM has also demonstrated an integrated neuromorphic core with 256 × 256 phase change memory synapses, fabricated together with Si CMOS neuron circuits. This core is capable of on-chip learning based on a simplified model of STDP for auto-associative pattern learning tasks [85].