## A NOVEL MULTI-STEP FINITE-STATE AUTOMATON FOR ARBITRARILY DETERMINISTIC TSETLIN MACHINE LEARNING

## A PREPRINT

## K. Darshana Abeyrathna

Centre for Artificial Intelligence Research University of Agder Grimstad, Norway darshana.abeyrathna@uia.no

## Rishad Shafik

Microsystems Research Group School of Engineering Newcastle University, UK rishad.shafik@newcastle.ac.uk

## Adrian Wheeldon

## Ole-Christoffer Granmo

Centre for Artificial Intelligence Research University of Agder Grimstad, Norway ole.granmo@uia.no

## Alex Yakovlev

Microsystems Research Group School of Engineering Newcastle University, UK alex.yakovlev@newcastle.ac.uk

Jie Lei

Microsystems Research Group School of Engineering Newcastle University, UK adrian.wheeldon@newcastle.ac.uk

## Morten Goodwin

Centre for Artificial Intelligence Research University of Agder Grimstad, Norway morten.goodwin@uia.no

July 7, 2020

## ABSTRACT

Due to the high energy consumption and scalability challenges of deep learning, there is a critical need to shift research focus towards dealing with energy consumption constraints. Tsetlin Machines (TMs) are a recent approach to machine learning that has demonstrated significantly reduced energy usage compared to neural networks alike, while performing competitively accuracy-wise on several benchmarks. However, TMs rely heavily on energy-costly random number generation to stochastically guide a team of Tsetlin Automata to a Nash Equilibrium of the TM game. In this paper, we propose a novel finite-state learning automaton that can replace the Tsetlin Automata in TM learning, for increased determinism. The new automaton uses multi-step deterministic state jumps to reinforce sub-patterns. Simultaneously, flipping a coin to skip every d 'th state update ensures diversification by randomization. The d -parameter thus allows the degree of randomization to be finely controlled. E.g., d = 1 makes every update random and d = ∞ makes the automaton completely deterministic. Our empirical results show that, overall, only substantial degrees of determinism reduces accuracy. Energy-wise, random number generation constitutes switching energy consumption of the TM, saving up to 11 mW power for larger datasets with high d values. We can thus use the new d -parameter to trade off accuracy against energy consumption, to facilitate low-energy machine learning.

Microsystems Research Group School of Engineering Newcastle University, UK jie.lei@newcastle.ac.uk

## 1 Introduction

State-of-the-art deep learning (DL) requires massive computational resources, resulting in high energy consumption [1] and scalability challenges [2]. There is thus a critical need to shift research focus towards dealing with energy consumption constraints [3]. Tsetlin Machines [4] (TMs) are a recent approach to machine learning (ML) that has demonstrated significantly reduced energy usage compared to neural networks alike [5]. Using a linear combination of conjunctive clauses in propositional logic, the TM has obtained competitive performance in terms of accuracy [6, 7, 8], memory footprint [5, 8], energy [5], and learning speed [5, 8] on diverse benchmarks (image classification, regression and natural language understanding). Furthermore, the rules that TMs build seem to be interpretable, similar to the branches in a decision tree (e.g., in the form if X satisfies condition A and not condition B then Y = 1) [6]. The reported small memory footprint and low energy consumption make the TM particularly attractive for addressing the scalability and energy challenge in ML.

Recent progress on TMs. Recent research reports several distinct TM properties. The TM can be used in convolution, providing competitive performance on MNIST, Fashion-MNIST, and Kuzushiji-MNIST, in comparison with CNNs, K-Nearest Neighbor, Support Vector Machines, Random Forests, Gradient Boosting, BinaryConnect, Logistic Circuits and ResNet [8]. The TM has also achieved promising results in text classification by using the conjunctive clauses to capture textual patterns [6]. By introducing clause weights, it has been demonstrated that the number of clauses can be reduced by up to 50 × , without loss of accuracy [9]. Further, hyper-parameter search can be simplified with multi-granular clauses, eliminating the pattern specificity parameter [10]. By indexing the clauses on the features that falsify them, up to an order of magnitude faster inference and learning has been reported [11]. Additionally, regression TMs compare favorably with Regression Trees, Random Forest Regression, and Support Vector Regression [7]. In [12], the TM is equipped with integer weighted clauses, learnt by a stochastic searching on the line (SSL) automaton. The integer weighted TM outperforms simple Multi-Layered Artificial Neural Networks, Decision Trees, Support Vector Machines, K-Nearest Neighbor, Random Forest, Gradient Boosted Trees (XGBoost), Explainable Boosting Machines (EBMs), as well as the standard TM. For continuous features, a scheme for adaptive threshold-based binarization using SSLs was proposed in [13]. Instead of using TAs to represent all unique thresholds, as in [14], merely two SSLs per feature adaptively adjust the thresholds. Finally, TMs have recently been shown to be fault-tolerant, completely masking stuck-at faults [15].

Paper Contributions. TMs rely heavily on energy-costly random number generation to stochastically guide a team of TAs to a Nash Equilibrium of the TM game. In this paper, we propose a novel finite state learning automaton that can replace the TAs of the TM, for increased determinism. The new automaton uses multi-step deterministic state jumps to reinforce sub-patterns. Simultaneously, flipping a coin to skip every d 'th state update ensures diversification by randomization. The d -parameter thus allows the degree of randomization to be finely controlled. We further evaluate the scheme empirically on five datasets, demonstrating that the new d -parameter can be used to trade off accuracy against energy consumption.

## 2 A Multi-Step Finite-State Learning Automaton

The origins of Learning Automata (LA) [16] can be traced back to the work of M. L. Tsetlin in the early 1960s [17]. The objective of an LA is to learn the optimal action through trial and error in a stochastic environment. Various types of LAs are available depending on the nature of the application [18]. Due to their computational simplicity, we here focus on two-action finite-state LA, which we extend by introducing a novel periodically changing structure (variable structure).

In general, the action that an LA performs next is decided by the present state of the LA (the memory). An LA interacts with its environment iteratively. In each iteration, the environment randomly produces a reward or a penalty, responding to the action selected by the LA according to an unknown probability distribution. If the LA receives a reward, it reinforces the action performed by moving to a 'deeper' state. If the action results in a penalty, the LA state moves towards the middle state, to weaken the performed action, ultimately jumping to the other action. In this manner, with a sufficient number of states, a LA converges to the action with the highest probability of producing rewards - the optimal action - with probability arbitrarily close to 1 . 0 [16].

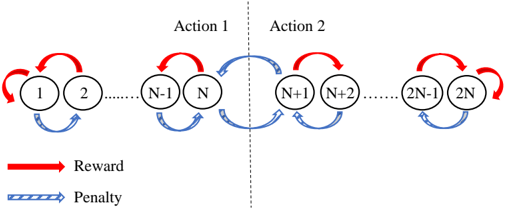

The transitions between states can be be deterministic or stochastic. Deterministic transitions occur with probability 1 . 0 , while stochastic transitions are randomly performed based on a preset probability. If the transition probabilities are changing, we have a variable structure automaton, otherwise, we have one with fixed structure. The pioneering Tsetlin Automaton (TA), depicted in Figure 1, is a deterministic fixed-structure finite-state automaton [17]. The state transition graph in the figure depicts a TA with 2 N memory states. States 1 to N maps to Action 1 and states N +1 to 2 N maps to Action 2.

Action 1 Action 2

1 2 3 ....… N-3 N-2 N-1 N N+1 N+2 N+3 N+4 ……. 2N-2 2N-1 2N

Figure 1: Transition graph of a two-action Tsetlin Automaton with 2N memory states.

<details>

<summary>Image 1 Details</summary>

### Visual Description

\n

## Diagram: State Transition with Reward/Penalty

### Overview

The image depicts a diagram illustrating a sequence of states numbered from 1 to 2N, connected by transitions representing actions. Each transition is associated with either a reward (red arrow) or a penalty (blue dashed arrow). A vertical dashed line separates the sequence into two sections labeled "Action 1" and "Action 2". The diagram appears to model a sequential decision-making process.

### Components/Axes

* **States:** Represented by circles numbered 1 through 2N.

* **Transitions:** Represented by arrows connecting adjacent states.

* **Reward:** Indicated by solid red arrows.

* **Penalty:** Indicated by dashed blue arrows.

* **Action 1:** Label above states N-1, N, N+1.

* **Action 2:** Label above states N+1, N+2, 2N-1.

* **Legend:** Located at the bottom-left corner, defining the meaning of the arrow styles.

### Detailed Analysis or Content Details

The diagram shows a linear sequence of 2N states. The transitions between states alternate between reward and penalty.

* **States 1-N:** Transitions from state *i* to *i+1* are associated with a reward (red arrow). Transitions from state *i+1* to *i* are associated with a penalty (blue dashed arrow).

* **States N+1-2N:** Transitions from state *i* to *i+1* are associated with a penalty (blue dashed arrow). Transitions from state *i+1* to *i* are associated with a reward (red arrow).

* The vertical dashed line separates the sequence at state N.

* The diagram shows a cyclical nature within each state pair (e.g., 1 <-> 2, N-1 <-> N, N+1 <-> N+2, etc.).

### Key Observations

* The reward/penalty structure is inverted between the first N states and the last N states.

* The diagram suggests a system where moving forward in the first half of the sequence is rewarded, while moving backward is penalized. The opposite is true for the second half of the sequence.

* The actions "Action 1" and "Action 2" seem to define the context for the reward/penalty structure.

### Interpretation

This diagram likely represents a reinforcement learning or Markov Decision Process (MDP) scenario. The states represent different conditions or positions within a system. The actions (Action 1 and Action 2) determine the reward/penalty structure for transitions between states. The inversion of the reward/penalty structure suggests a change in the optimal policy or goal after state N.

The diagram could model a scenario where an agent initially benefits from progressing through a sequence of states (Action 1), but then the optimal strategy changes, and the agent is rewarded for reversing course (Action 2). This could represent a task with a changing objective or a system with different constraints in different phases.

The cyclical nature of the transitions suggests that the agent can repeatedly move between adjacent states, accumulating rewards or penalties based on the current action context. The diagram does not provide specific numerical values for the rewards or penalties, but it clearly illustrates the qualitative relationship between states, actions, and outcomes.

</details>

Figure 2: Transition graph of the Multi-Step Variable Structure Finite-State Learning Automaton.

<details>

<summary>Image 2 Details</summary>

### Visual Description

\n

## Diagram: State Transition with Rewards and Penalties

### Overview

The image depicts a diagram illustrating a state transition process with two possible actions and associated rewards and penalties. The diagram shows a sequence of states numbered from 1 to 2N, with transitions between states governed by actions and probabilistic rewards/penalties. The diagram is divided into three sections, showing the initial states, a central section highlighting actions, and the final states.

### Components/Axes

The diagram consists of:

* **States:** Represented by numbered squares (1, 2, 3, ..., N-1, N, N+1, N+2, ..., 2N-2, 2N-1, 2N).

* **Actions:** Labeled "Action 1" and "Action 2" positioned above the central states.

* **Transitions:** Represented by arrows indicating possible state transitions.

* **Reward/Penalty Indicators:** Different colored and styled arrows represent different types of rewards and penalties.

* **Legend:** Located at the bottom of the diagram, explaining the meaning of the arrow styles and colors.

The legend defines the following:

* **Blue dashed arrow:** Weak penalty (takes place with probability 1 or 0.5)

* **Red solid arrow:** Weak reward (takes place with probability 1 or 0.5)

* **Blue wide dashed arrow:** Strong penalty (s=3 and takes place with probability 1 or 0.5)

* **Red wide solid arrow:** Strong reward (s=3 and takes place with probability 1 or 0.5)

### Detailed Analysis / Content Details

The diagram shows a series of states transitioning based on actions and probabilistic rewards/penalties.

* **Initial States (1-3):** State 1 transitions to states 2 and 3. State 2 transitions to states 1 and 3. State 3 transitions to itself, and to states 1 and 2. The transitions are a mix of weak rewards (red arrows) and weak penalties (blue dashed arrows).

* **Central States (N-1, N, N+1, N+2):** State N-1 transitions to states N, N+1, and potentially others. State N transitions to states N-1, N+1, and potentially others. State N+1 transitions to states N, N+2, and potentially others. State N+2 transitions to states N+1 and potentially others. This section highlights the application of "Action 1" and "Action 2". Transitions include both weak and strong rewards/penalties.

* **Final States (2N-2, 2N-1, 2N):** State 2N-2 transitions to states 2N-1 and 2N. State 2N-1 transitions to states 2N-2 and 2N. State 2N transitions to states 2N-1 and 2N-2. The transitions are a mix of weak rewards (red arrows) and weak penalties (blue dashed arrows).

Specifically:

* From state 1, there is a red arrow to state 2 (weak reward) and a blue dashed arrow to state 3 (weak penalty).

* From state 2, there is a red arrow to state 1 (weak reward) and a blue dashed arrow to state 3 (weak penalty).

* From state 3, there is a red arrow to state 1 (weak reward), a blue dashed arrow to state 2 (weak penalty), and a loop back to itself.

* The central section shows transitions between N-1, N, N+1, and N+2 with a mix of all four reward/penalty types.

* The final section mirrors the initial section in terms of transition patterns.

### Key Observations

* The diagram illustrates a Markov Decision Process (MDP) or a similar state-transition model.

* The probabilities of rewards and penalties are either 1 or 0.5, indicating a stochastic environment.

* The "strong" rewards/penalties (s=3) are visually distinguished by thicker lines.

* The diagram suggests a cyclical nature to the state transitions, particularly in the initial and final states.

* The actions "Action 1" and "Action 2" influence the transitions between states N-1, N, N+1, and N+2.

### Interpretation

The diagram represents a system where an agent navigates through a series of states, taking actions that lead to probabilistic rewards or penalties. The presence of both weak and strong rewards/penalties suggests a complex reward structure. The cyclical nature of the transitions in the initial and final states implies that the agent can revisit previously visited states. The actions "Action 1" and "Action 2" represent the agent's choices, and their impact on the state transitions is central to the system's dynamics. The parameter 's=3' associated with strong rewards/penalties likely represents a state or condition that triggers these more significant outcomes.

The diagram is a visual representation of a reinforcement learning problem, where the agent learns to maximize its cumulative reward by choosing optimal actions in each state. The probabilistic nature of the rewards and penalties introduces uncertainty, requiring the agent to learn a policy that is robust to this uncertainty. The diagram could be used to model a variety of real-world systems, such as game playing, robotics, or financial markets. The diagram is a conceptual model and does not provide specific numerical data about the probabilities or reward values.

</details>

While the TA changes state in single steps, the deterministic Krinsky Automaton introduces multi-step state transitions [16]. The purpose is to reinforce an action more strongly when it is rewarded, and more weakly when penalized. The Krinsky Automaton behaves as a TA when the response from the environment is a penalty. However, when it is a reward, any state from 2 to N transitions to state 1 , and any state from N +1 to 2 N -1 transitions to state 2 N . In effect, N consecutive penalties are needed to offset a single reward.

Another variant of LA is the Krylov Automaton. A Krylov Automaton makes both deterministic and stochastic single-step transitions [16]. The state transitions of the Krylov Automaton is identical to those of a TA for rewards. However, when it receives a penalty, it performs the corresponding TA state change randomly, with probability 0 . 5 .

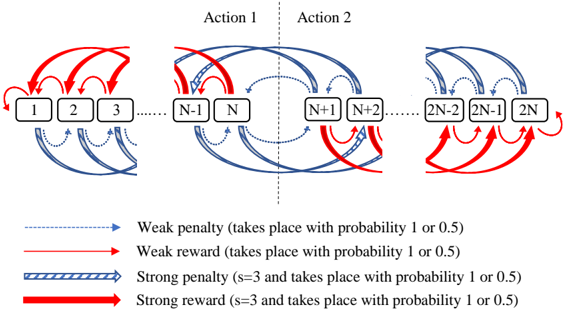

We now introduce our new type of LA, the multi-step variable-structure finite-state LA (MVF-LA), shown in Figure 2. The MVF-LA has two kinds of feedback, strong and weak. As covered in the next section, strong feedback is required by the TM to strongly reinforce frequent sub-patterns, while weak feedback is required to make the TM forget infrequent ones. To achieve this, weak feedback only triggers one-step transitions. Strong feedback, on the other hand, triggers s -step transitions. Thus, a single strong feedback is offset by s instances of weak feedback. Further, MVF-LA has a variable structure that changes periodically . That is, the MVF-LA switches between two different transition graph structures, one deterministic and one stochastic. The deterministic structure is as shown in the figure, while the stochastic structure introduces a transition probability 0 . 5 , for every transition. The switch between structure is performed so that every d 'th transition is stochastic, while the remaining transitions are deterministic.

## 3 The Arbitrarily Deterministic TM (ADTM)

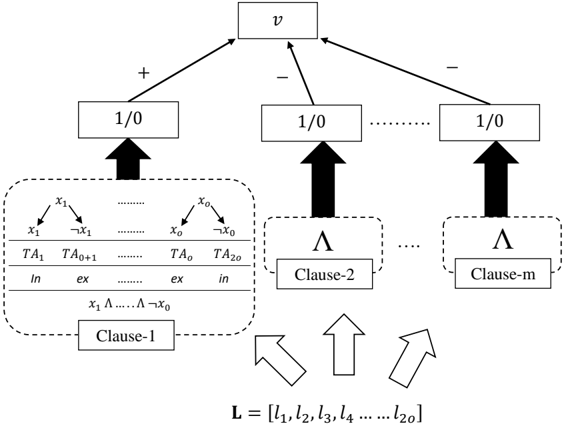

In this section, we introduce the details of the ADTM, shown in Figure 3, where the TA is replaced with the new MVF-LA. The purpose of the ADTM is to control stochasticity, thus allowing management of energy consumption.

## 3.1 ADTMInference

Input Features. Like the TM, an ADTM takes a feature vector of o propositional variables as input, X = [ x 1 , x 2 , x 3 , . . . , x o ] , to be classified into one of two classes, y = 0 or y = 1 . These features are extended with their negation, to produce a set of literals: L = [ x 1 , x 2 , . . . , x o , ¬ x 1 , ¬ x 2 , . . . , ¬ x o ] =[ l 1 , l 2 , . . . , l 2 o ] .

Figure 3: The ADTM structure.

<details>

<summary>Image 3 Details</summary>

### Visual Description

\n

## Diagram: Logical Clause Decomposition

### Overview

The image depicts a diagram illustrating the decomposition of a logical clause, likely within a SAT solver or similar logical reasoning system. It shows a tree-like structure where a variable 'ν' branches into positive and negative literals, which are further broken down into clauses containing literals and their negations. The bottom of the diagram shows an input list 'L'.

### Components/Axes

The diagram consists of the following components:

* **ν:** A rectangular box at the top, representing a variable.

* **1/0 Boxes:** Rectangular boxes connected to 'ν' via '+' and '-' signs, representing literals (variable assignments).

* **Clause Boxes:** Dotted rectangular boxes containing logical conjunctions (∧) of literals. These represent clauses.

* **Literal Nodes:** Within the clause boxes, nodes representing literals (x₁, x₀, ¬x₁, ¬x₀) and their truth assignments (TA₁, TA₀, TA₁+1, TA₂+0).

* **Input List L:** A list represented as `L = [l₁, l₂, l₃, l₄ … l₂₀]`.

* **Arrows:** Arrows indicating the flow of information or dependency.

* **Labels:** Labels such as "Clause-1", "Clause-2", "Clause-m", "in", "ex".

### Detailed Analysis or Content Details

The diagram can be broken down as follows:

1. **Top Level:** The variable 'ν' branches into positive and negative literals. The positive branch is labeled '+', and the negative branches are labeled '-'.

2. **Literal Representation:** Each branch leads to a box labeled "1/0", representing a literal. This suggests a binary assignment (true/false or 1/0).

3. **Clause Decomposition:** Each literal then feeds into a clause box.

* **Clause-1:** Contains literals x₁ and x₀, along with their truth assignments TA₁ and TA₀. The literals are connected via nodes representing their negations (¬x₁, ¬x₀). The clause is represented as `x₁ ∧ ¬x₀`. Below this clause are labels "in" and "ex".

* **Clause-2 to Clause-m:** Represented by a series of dotted boxes, each containing a logical conjunction (∧). The specific literals within these clauses are not fully detailed, but they follow a similar structure to Clause-1.

4. **Input List:** At the bottom of the diagram, there is an input list 'L' defined as `L = [l₁, l₂, l₃, l₄ … l₂₀]`. Arrows point upwards from this list towards the clause boxes, suggesting that the list provides input for the clause construction or evaluation.

5. **Truth Assignments:** The labels TA₁, TA₀, TA₁+1, TA₂+0 suggest truth assignments or values associated with the literals.

### Key Observations

* The diagram illustrates a hierarchical decomposition of a logical problem.

* The input list 'L' appears to be a source of information for constructing or evaluating the clauses.

* The use of 'in' and 'ex' suggests internal and external factors or states.

* The diagram does not provide specific numerical data, but rather a structural representation of a logical process.

### Interpretation

The diagram likely represents a simplified model of a SAT (Satisfiability) solver or a similar logical reasoning system. The variable 'ν' could represent a decision variable, and the branching represents exploring different assignments (true/false). The clauses represent constraints that must be satisfied. The input list 'L' could represent a set of initial facts or assumptions.

The decomposition into clauses and literals suggests a process of breaking down a complex logical problem into smaller, more manageable subproblems. The truth assignments (TA) indicate the values assigned to the literals during the solving process. The 'in' and 'ex' labels might denote internal state and external input, respectively.

The diagram is abstract and does not provide specific details about the solving algorithm or the nature of the logical problem being solved. However, it provides a clear visual representation of the key components and relationships involved in a logical reasoning process. The ellipsis (...) indicates that the diagram is not exhaustive and that there may be more clauses or literals involved in the complete problem.

</details>

Clauses. Patterns are represented by m conjunctive clauses. As shown for Clause-1 in the figure, a clause in the TM comprises of 2 o TAs, each controlling the inclusion of a specific literal. Let the set I j , I j ⊆ { 1 , . . . , 2 o } denote the indexes of the literals that are included in clause j . When evaluating clause j on input literals L , the literals included in the clause are ANDed: c j = ∧ k ∈ I j l k , j = 1 , . . . , m . Note that the output of an empty clause, I j = ∅ , is 1 during learning and 0 during inference.

Classification. In order to identify the sub-patterns associated with both of the classes of a two-class ADTM, the clauses are grouped in two. The number of clauses employed is a user set parameter m . Half of the clauses are assigned positive polarity ( c + j ). The other half is assigned negative polarity ( c -j ). The clause outputs, in turn, are combined into a classification decision through summation and thresholding using the unit step function u ( v ) = 1 if v ≥ 0 else 0 :

<!-- formula-not-decoded -->

That is, classification is based on a majority vote, with the positive clauses voting for y = 0 and the negative for y = 1 .

## 3.2 The MVF-LA Game and Orchestration Scheme

The MVF-LAs in ADTM are updated by so-called Type I and Type II feedback. Depending on the class of the current training sample ( X,y ) and the polarity of the clause (positive or negative), the type of feedback is decided. Clauses with positive polarity receive Type I feedback when the target output is y = 1 , and Type II feedback when the target output is y = 0 . For clauses with negative polarity, Type I feedback replaces Type II, and vice versa. In the following, we focus only on clauses with positive polarity.

Type I feedback: The number of clauses which receive Type I feedback is controlled by selecting them stochastically according to Eqn. 2:

<!-- formula-not-decoded -->

Above, v = ∑ m/ 2 j =1 c + j ( X ) -∑ m/ 2 j =1 c -j ( X ) is the aggregated clause output and T is a user set parameter that decides how many clauses should be involved in learning a particular sub-pattern. Further, Type I feedback consists of two kinds of sub-feedback: Type Ia and Type Ib. Type Ia feedback stimulates recognition of patterns by reinforcing the include action of MVF-LAs whose corresponding literal value is 1 , however, only when the clause output also is 1 . Note that an action is reinforced either by rewarding the action itself, or by penalizing the other action. Type Ia feedback is strong , with step size s (cf. Figure 2). Type Ib feedback combats over-fitting by reinforcing the exclude actions of MVF-LAs when the corresponding literal is 0 or when the clause output is 0 . Type Ib feedback is weak to facilitate learning of frequent patterns (cf. Figure 2).

Table 1: Performance of TM and ADTM with different d on Bankruptcy dataset.

| | TM | ADTM | ADTM | ADTM | ADTM | ADTM | ADTM |

|------|-------|--------|--------|--------|--------|--------|--------|

| | TM | d=1 | d=10 | d=100 | d=500 | d=1000 | d=5000 |

| F1 | 0.998 | 1.000 | 1.000 | 1.000 | 0.999 | 0.999 | 0.988 |

| Acc. | 0.998 | 1.000 | 1.000 | 1.000 | 0.999 | 0.999 | 0.987 |

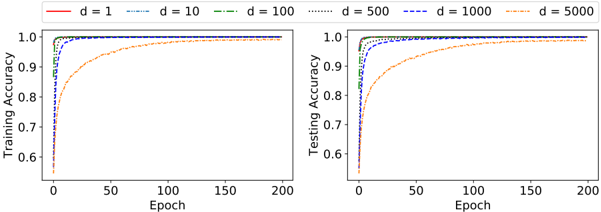

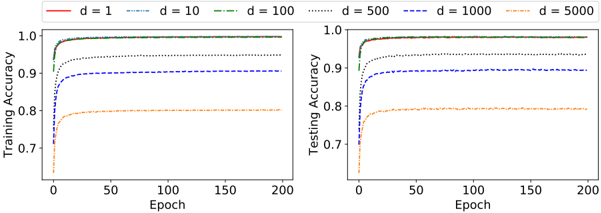

Figure 4: Training and testing accuracy per epoch on the Bankruptcy dataset.

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Line Chart: Training and Testing Accuracy vs. Epoch

### Overview

The image presents two line charts displayed side-by-side. Both charts depict accuracy (Training Accuracy on the left, Testing Accuracy on the right) as a function of Epoch, with different lines representing different values of 'd'. The charts visually compare the learning curves for various 'd' values.

### Components/Axes

* **X-axis (Both Charts):** Epoch, ranging from 0 to approximately 200.

* **Y-axis (Left Chart):** Training Accuracy, ranging from approximately 0.6 to 1.0.

* **Y-axis (Right Chart):** Testing Accuracy, ranging from approximately 0.6 to 1.0.

* **Legend (Top-Center):** Labels for each line, corresponding to different values of 'd':

* d = 1 (Red solid line)

* d = 10 (Blue dashed line)

* d = 100 (Green dotted line)

* d = 500 (Orange dash-dot line)

* d = 1000 (Black dotted line)

* d = 5000 (Purple dash-dot-dot line)

### Detailed Analysis or Content Details

**Left Chart (Training Accuracy):**

* **d = 1 (Red):** The line starts at approximately 0.65 at Epoch 0, rapidly increases to approximately 0.95 by Epoch 20, and plateaus around 0.98-1.0 for Epochs 20-200.

* **d = 10 (Blue):** Starts at approximately 0.65 at Epoch 0, increases more slowly than d=1, reaching approximately 0.9 by Epoch 50, and plateaus around 0.95-0.98 for Epochs 50-200.

* **d = 100 (Green):** Starts at approximately 0.65 at Epoch 0, increases at a moderate pace, reaching approximately 0.9 by Epoch 40, and plateaus around 0.97-0.99 for Epochs 40-200.

* **d = 500 (Orange):** Starts at approximately 0.65 at Epoch 0, increases slowly, reaching approximately 0.85 by Epoch 50, and plateaus around 0.95-0.97 for Epochs 50-200.

* **d = 1000 (Black):** Starts at approximately 0.65 at Epoch 0, increases very slowly, reaching approximately 0.8 by Epoch 50, and plateaus around 0.93-0.95 for Epochs 50-200.

* **d = 5000 (Purple):** Starts at approximately 0.65 at Epoch 0, increases extremely slowly, reaching approximately 0.75 by Epoch 50, and plateaus around 0.9-0.93 for Epochs 50-200.

**Right Chart (Testing Accuracy):**

* **d = 1 (Red):** Starts at approximately 0.65 at Epoch 0, rapidly increases to approximately 0.95 by Epoch 20, and plateaus around 0.98-1.0 for Epochs 20-200.

* **d = 10 (Blue):** Starts at approximately 0.65 at Epoch 0, increases more slowly than d=1, reaching approximately 0.9 by Epoch 50, and plateaus around 0.95-0.98 for Epochs 50-200.

* **d = 100 (Green):** Starts at approximately 0.65 at Epoch 0, increases at a moderate pace, reaching approximately 0.9 by Epoch 40, and plateaus around 0.97-0.99 for Epochs 40-200.

* **d = 500 (Orange):** Starts at approximately 0.65 at Epoch 0, increases slowly, reaching approximately 0.85 by Epoch 50, and plateaus around 0.95-0.97 for Epochs 50-200.

* **d = 1000 (Black):** Starts at approximately 0.65 at Epoch 0, increases very slowly, reaching approximately 0.8 by Epoch 50, and plateaus around 0.93-0.95 for Epochs 50-200.

* **d = 5000 (Purple):** Starts at approximately 0.65 at Epoch 0, increases extremely slowly, reaching approximately 0.75 by Epoch 50, and plateaus around 0.9-0.93 for Epochs 50-200.

### Key Observations

* The training accuracy generally increases with the number of epochs for all values of 'd'.

* The testing accuracy also generally increases with the number of epochs for all values of 'd'.

* Smaller values of 'd' (1, 10, 100) achieve higher accuracy faster than larger values of 'd' (500, 1000, 5000).

* The gap between training and testing accuracy appears to be relatively small for all values of 'd', suggesting minimal overfitting.

* The lines for d=1, d=10, and d=100 are very close to each other in both charts.

* The lines for d=500, d=1000, and d=5000 are very close to each other in both charts, and significantly lower than the other lines.

### Interpretation

The charts demonstrate the impact of the parameter 'd' on the training and testing accuracy of a model over time (epochs). The parameter 'd' likely represents a dimensionality or complexity factor within the model.

The data suggests that lower values of 'd' lead to faster learning and higher accuracy, at least within the observed range of epochs. As 'd' increases, the learning rate decreases, and the final accuracy plateaus at a lower level. This could indicate that increasing 'd' beyond a certain point introduces unnecessary complexity or noise, hindering the model's ability to generalize effectively.

The close proximity of the training and testing accuracy curves for all 'd' values suggests that the model is not significantly overfitting to the training data. The consistent trend across both charts (training and testing) reinforces the reliability of the observed relationship between 'd' and accuracy. The fact that d=500, d=1000, and d=5000 perform similarly suggests that there may be a diminishing return on increasing 'd' beyond a certain threshold. Further investigation would be needed to determine the optimal value of 'd' for maximizing performance.

</details>

Type II feedback: Clauses are also selected stochastically for receiving Type II feedback:

<!-- formula-not-decoded -->

Type II feedback combats false positive clause output by seeking to alter clauses that output 1 so that they instead output 0 . This is achieved simply by reinforcing inclusion of literals of value 0 . Thus, when the clause output is 1 and the corresponding literal value of an MVF-LA is 0 , the include action of the MVF-LA is reinforced. Type II feedback is strong , with step size s . Recall that in all of the above MVF-LA update steps, the parameter d decides the determinism of the updates.

## 4 Empirical Evaluation

We now study the performance of ADTM empirically using five real-world datasets. As baselines, ADTM is compared against regular TMs and seven other state-of-the-are machine learning approaches: Artificial Neural Networks (ANNs), Support Vector Machines (SVMs), Decision Trees (DTs), K-Nearest Neighbor (KNN), Random Forest (RF), Gradient Boosted Trees (XGBoost) [19], and Explainable Boosting Machines (EBMs) [20]. For comprehensiveness, three ANN architectures are used: ANN-1 - with one hidden layer of 5 neurons; ANN-2 - with two hidden layers of 20 and 50 neurons each, and ANN-3 - with three hidden layers and 20, 150, and 100 neurons. Performance of these predictive models are summarized in Table 6. We compute both F1-score (F1) and accuracy (Acc.) as performance measures. However, due to the class imbalance, we emphasize F1-score when comparing the performance of the different predictive models.

## 4.1 Bankruptcy

The Bankruptcy dataset contains historical records of 250 companies 1 . The outcome, Bankruptcy or Non-bankruptcy, is characterized by six categorical features. We thus binarize the features using thresholding [14] before we feed them into the ADTM. We first tune the hyper-parameters of the TM and the best performance is reported in Table 1, for m = 100 (number of clauses), s = 3 (step size for MVF-LA), and T = 10 (summation target). Each MVF-LA contains 100 states per action. The impact of determinism is reported in Table 1, for varying levels of determinism. As seen, performance is indistinguishable for d -values 1 , 10 , and 100 , and the ADTM achieves its highest classification accuracy. However, notice the slight decrease of F1-score and accuracy when determinism is further increased to 500 , 1000 , and 5000 .

Figure 4 shows how training and testing accuracy evolve over the training epochs. Only high determinism seems to influence learning speed and accuracy significantly. The performance of the other considered machine learning models

1 Available from https://archive.ics.uci.edu/ml/datasets/qualitative\_bankruptcy.

Table 2: Performance of TM and ADTM with different d on Balance Scale dataset.

| | TM | ADTM | ADTM | ADTM | ADTM | ADTM | ADTM |

|------|-------|--------|--------|--------|--------|--------|--------|

| | TM | d=1 | d=10 | d=100 | d=500 | d=1000 | d=5000 |

| F1 | 0.945 | 0.982 | 0.983 | 0.982 | 0.968 | 0.951 | 0.911 |

| Acc. | 0.948 | 0.980 | 0.981 | 0.980 | 0.935 | 0.894 | 0.793 |

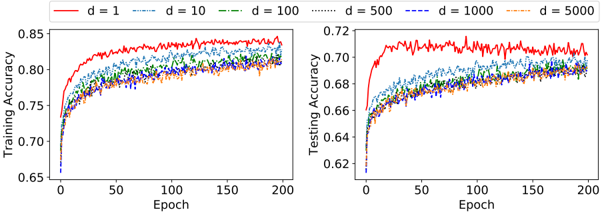

Figure 5: Training and testing accuracy per epoch on the Balance Scale dataset.

<details>

<summary>Image 5 Details</summary>

### Visual Description

\n

## Line Chart: Training and Testing Accuracy vs. Epoch for Different 'd' Values

### Overview

The image presents two line charts displayed side-by-side. The left chart shows Training Accuracy versus Epoch, while the right chart shows Testing Accuracy versus Epoch. Both charts depict the performance of a model trained over 200 epochs for different values of a parameter 'd' (1, 10, 100, 500, 1000, and 5000). The lines represent the accuracy achieved for each 'd' value.

### Components/Axes

* **X-axis (Both Charts):** Epoch, ranging from 0 to 200.

* **Y-axis (Left Chart):** Training Accuracy, ranging from approximately 0.7 to 1.1.

* **Y-axis (Right Chart):** Testing Accuracy, ranging from approximately 0.7 to 1.1.

* **Legend (Top-Center):** Indicates the different values of 'd' and their corresponding line styles and colors:

* d = 1 (Solid Red Line)

* d = 10 (Dashed Blue Line)

* d = 100 (Dotted Gray Line)

* d = 500 (Dash-Dot Green Line)

* d = 1000 (Dashed Green Line)

* d = 5000 (Dotted Green Line)

### Detailed Analysis or Content Details

**Left Chart (Training Accuracy):**

* **d = 1 (Red):** The line starts at approximately 0.78 and rapidly increases to around 0.98 by epoch 50, then plateaus around 1.0.

* **d = 10 (Blue):** The line starts at approximately 0.85 and increases more slowly than d=1, reaching around 0.95 by epoch 50, and then plateaus around 0.97.

* **d = 100 (Gray):** The line starts at approximately 0.88 and increases steadily, reaching around 0.97 by epoch 50, and then plateaus around 1.0.

* **d = 500 (Green):** The line starts at approximately 0.89 and increases rapidly, reaching around 0.99 by epoch 50, and then plateaus around 1.0.

* **d = 1000 (Green):** The line starts at approximately 0.89 and increases rapidly, reaching around 0.99 by epoch 50, and then plateaus around 1.0.

* **d = 5000 (Green):** The line starts at approximately 0.89 and increases rapidly, reaching around 0.99 by epoch 50, and then plateaus around 1.0.

**Right Chart (Testing Accuracy):**

* **d = 1 (Red):** The line starts at approximately 0.78 and rapidly increases to around 0.95 by epoch 50, then plateaus around 1.0.

* **d = 10 (Blue):** The line starts at approximately 0.85 and increases more slowly than d=1, reaching around 0.92 by epoch 50, and then plateaus around 0.94.

* **d = 100 (Gray):** The line starts at approximately 0.88 and increases steadily, reaching around 0.95 by epoch 50, and then plateaus around 0.97.

* **d = 500 (Green):** The line starts at approximately 0.89 and increases rapidly, reaching around 0.98 by epoch 50, and then plateaus around 1.0.

* **d = 1000 (Green):** The line starts at approximately 0.89 and increases rapidly, reaching around 0.98 by epoch 50, and then plateaus around 1.0.

* **d = 5000 (Green):** The line starts at approximately 0.89 and increases rapidly, reaching around 0.98 by epoch 50, and then plateaus around 1.0.

### Key Observations

* For both training and testing accuracy, higher values of 'd' (500, 1000, 5000) generally lead to faster convergence and higher accuracy.

* The lines for d=500, d=1000, and d=5000 are nearly indistinguishable in both charts, suggesting that increasing 'd' beyond 500 provides diminishing returns.

* There is a noticeable gap between the training and testing accuracy, indicating some degree of overfitting, especially for lower values of 'd'.

* The model with d=1 exhibits the slowest learning rate and the lowest final accuracy.

### Interpretation

The data suggests that the parameter 'd' plays a crucial role in the model's performance. Increasing 'd' initially improves both training and testing accuracy, likely by increasing the model's capacity to learn complex patterns. However, beyond a certain point (around d=500), further increases in 'd' do not significantly improve performance, and may even lead to overfitting.

The difference between training and testing accuracy indicates that the model is learning the training data too well, and is not generalizing well to unseen data. This is a common problem in machine learning, and can be addressed by techniques such as regularization or early stopping.

The charts provide valuable insights into the relationship between the parameter 'd' and the model's performance, and can be used to guide the selection of an appropriate value for 'd' during model training. The fact that d=500, d=1000, and d=5000 perform similarly suggests that computational cost should be considered when choosing a value for 'd'.

</details>

is compiled in Table 6. The best performance in terms of F1-score for the other models is obtained by ANN-3. However, ANN-3 is outperformed by the ADTM for all d -values except when d = 5000 .

## 4.2 Balance Scale

The Balance Scale dataset 2 contains three classes: balance scale tip to the right, tip to the left, or in balance. The class is decided by the size of the weight on both sides of the scale and the distance to each weight from the center. Hence the classes are characterized by four features. However, to make the output binary, we remove the 'balanced" class ending up with 576 data samples. The ADTM is equipped with 100 clauses. Each MVF-LA is given 100 states per action. The remaining two parameters, i.e., s value and T are fixed at 3 and 10 , respectively. Table 2 contains the results of TM and ADTM obtained on the Balance Scale dataset. Even though ADTM uses the same number of clauses as the TM, the performance with regards to F1-score and accuracy is better with ADTM when all the MVF-LAs updates are stochastic ( d = 1 ). The performance of the ADTM remains the same until the determinism-parameter surpasses 100 . After that, performance degrades gradually.

Progress of training and testing accuracy per epoch can be found in Figure 5. Each ADTM setup reaches its peak training and testing accuracy and becomes stable within a fewer number of training epochs. As can be seen, accuracy is maintained up to d = 100 , thus reducing random number generation to 1% without accuracy loss. From the results listed in Table 6 for the other machine learning approaches, EBM achieves the highest F1-score and accuracy.

## 4.3 Breast Cancer

The Breast Cancer dataset 3 contains 286 patients records related to the recurrence of breast cancer (201 with nonrecurrence and 85 with recurrence). The recurrence of breast cancer has to be estimated using nine features: Age, Menopause, Tumor Size, Inv Nodes, Node Caps, Deg Malig, Side (left or right), the Position of the Breast, and whether Irradiated or not. However, some of the patient samples miss some of the feature values. These samples are removed from the dataset in the present experiment.

The ADTM is arranged with the following parameter setup: m = 100 , s = 5 , T = 10 , and the number of states in MVF-LA per action is 100 . The classification accuracy of the TM and ADTM are summarized in Table 3. In contrast to the previous two datasets, the performance of both TM and ADTM is considerably lower, and further decreases with increasing determinism. However, the F1 measures obtained by all the other considered machine learning models are also low, i.e., less than 0 . 500 . The highest F1-score is obtained by ANN-1 and KNN, which both are lower than what is achieved with ADTM for d -values up to 100 .

2 Available from http://archive.ics.uci.edu/ml/datasets/balance+scale.

3 Available from https://archive.ics.uci.edu/ml/datasets/Breast+Cancer

Table 3: Performance of TM and ADTM with different d on Breast Cancer dataset.

| | TM | ADTM | ADTM | ADTM | ADTM | ADTM | ADTM |

|------|-------|--------|--------|--------|--------|--------|--------|

| | TM | d=1 | d=10 | d=100 | d=500 | d=1000 | d=5000 |

| F1 | 0.531 | 0.568 | 0.531 | 0.501 | 0.490 | 0.501 | 0.488 |

| Acc. | 0.703 | 0.702 | 0.698 | 0.691 | 0.690 | 0.690 | 0.693 |

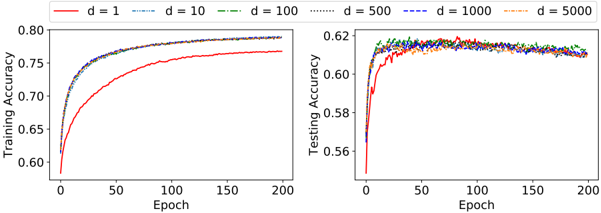

Figure 6: Training and testing accuracy per epoch on the Breast Cancer dataset.

<details>

<summary>Image 6 Details</summary>

### Visual Description

\n

## Line Chart: Training and Testing Accuracy vs. Epoch for Different 'd' Values

### Overview

The image presents two line charts side-by-side. The left chart displays Training Accuracy against Epoch, while the right chart shows Testing Accuracy against Epoch. Both charts compare the performance of models with different values of 'd' (1, 10, 100, 500, 1000, and 5000) during the training process.

### Components/Axes

* **X-axis (Both Charts):** Epoch, ranging from 0 to 200.

* **Y-axis (Left Chart):** Training Accuracy, ranging from 0.65 to 0.85.

* **Y-axis (Right Chart):** Testing Accuracy, ranging from 0.62 to 0.72.

* **Legend (Top-Center):** Indicates the different values of 'd' and their corresponding line styles and colors:

* d = 1 (Solid Red Line)

* d = 10 (Dashed Blue Line)

* d = 100 (Dashed Green Line)

* d = 500 (Dashed Cyan Line)

* d = 1000 (Dashed Magenta Line)

* d = 5000 (Dashed Yellow Line)

### Detailed Analysis or Content Details

**Left Chart (Training Accuracy):**

* **d = 1 (Red):** The line starts at approximately 0.72 at Epoch 0 and steadily increases, reaching a plateau around 0.83-0.85 between Epochs 100 and 200. There are minor fluctuations.

* **d = 10 (Blue):** Starts at approximately 0.71 at Epoch 0. The line increases more slowly than the red line, reaching around 0.79-0.81 by Epoch 200.

* **d = 100 (Green):** Starts at approximately 0.70 at Epoch 0. The line increases at a moderate pace, reaching around 0.80-0.82 by Epoch 200.

* **d = 500 (Cyan):** Starts at approximately 0.70 at Epoch 0. The line increases at a similar pace to the green line, reaching around 0.81-0.82 by Epoch 200.

* **d = 1000 (Magenta):** Starts at approximately 0.69 at Epoch 0. The line increases at a slower pace, reaching around 0.79-0.80 by Epoch 200.

* **d = 5000 (Yellow):** Starts at approximately 0.69 at Epoch 0. The line increases at a similar pace to the magenta line, reaching around 0.79-0.80 by Epoch 200.

**Right Chart (Testing Accuracy):**

* **d = 1 (Red):** The line starts at approximately 0.64 at Epoch 0 and increases to around 0.71-0.72 by Epoch 200, with some fluctuations.

* **d = 10 (Blue):** Starts at approximately 0.63 at Epoch 0. The line increases more slowly than the red line, reaching around 0.68-0.69 by Epoch 200.

* **d = 100 (Green):** Starts at approximately 0.63 at Epoch 0. The line increases at a moderate pace, reaching around 0.69-0.70 by Epoch 200.

* **d = 500 (Cyan):** Starts at approximately 0.63 at Epoch 0. The line increases at a similar pace to the green line, reaching around 0.69-0.70 by Epoch 200.

* **d = 1000 (Magenta):** Starts at approximately 0.62 at Epoch 0. The line increases at a slower pace, reaching around 0.68-0.69 by Epoch 200.

* **d = 5000 (Yellow):** Starts at approximately 0.62 at Epoch 0. The line increases at a similar pace to the magenta line, reaching around 0.68-0.69 by Epoch 200.

### Key Observations

* The model with d = 1 consistently achieves the highest training and testing accuracy across all epochs.

* As 'd' increases, the training and testing accuracy generally decrease.

* The gap between training and testing accuracy is relatively small for all values of 'd', suggesting limited overfitting.

* The lines for d = 100, 500, 1000, and 5000 are relatively close to each other in both charts, indicating similar performance for these values of 'd'.

### Interpretation

The data suggests that the parameter 'd' significantly impacts the performance of the model. A smaller value of 'd' (specifically, d = 1) leads to the best training and testing accuracy. Increasing 'd' appears to reduce the model's ability to learn effectively, potentially due to increased complexity or a higher risk of getting stuck in local optima during optimization.

The close proximity of the lines for d = 100, 500, 1000, and 5000 suggests that there might be a diminishing return in performance as 'd' increases beyond a certain point. The relatively small gap between training and testing accuracy indicates that the model is generalizing well to unseen data, even with larger values of 'd'.

The charts provide a clear visualization of the trade-off between model complexity (represented by 'd') and performance. The optimal value of 'd' appears to be 1, based on the observed accuracy levels. Further investigation might be needed to understand the underlying reasons for this relationship and to explore other parameter settings that could potentially improve performance.

</details>

The training and testing accuracy progress per epoch is reported in Figure 6, showing a clear degradation of performance with increasing determinism.

Table 4: Performance of TM and ADTM with different d on Liver Disorders dataset.

| | TM | ADTM | ADTM | ADTM | ADTM | ADTM | ADTM |

|------|-------|--------|--------|--------|--------|--------|--------|

| | TM | d=1 | d=10 | d=100 | d=500 | d=1000 | d=5000 |

| F1 | 0.648 | 0.705 | 0.694 | 0.692 | 0.692 | 0.689 | 0.692 |

| Acc. | 0.533 | 0.610 | 0.610 | 0.612 | 0.612 | 0.610 | 0.611 |

Figure 7: Training and testing accuracy per epoch on the Liver Disorders dataset.

<details>

<summary>Image 7 Details</summary>

### Visual Description

## Line Chart: Training and Testing Accuracy vs. Epoch

### Overview

The image presents two line charts side-by-side. The left chart displays Training Accuracy against Epoch, while the right chart shows Testing Accuracy against Epoch. Both charts compare the performance of models with different values of 'd' (1, 10, 100, 500, 1000, and 5000). The charts aim to illustrate how the accuracy of these models evolves over training epochs.

### Components/Axes

* **X-axis (Both Charts):** Epoch, ranging from 0 to 200.

* **Y-axis (Left Chart):** Training Accuracy, ranging from 0.60 to 0.80.

* **Y-axis (Right Chart):** Testing Accuracy, ranging from 0.55 to 0.63.

* **Legend (Top-Center):**

* `d = 1` (Solid Red Line)

* `d = 10` (Dashed Red Line)

* `d = 100` (Dashed-Dotted Red Line)

* `d = 500` (Solid Blue Line)

* `d = 1000` (Dashed Blue Line)

* `d = 5000` (Dashed-Dotted Blue Line)

### Detailed Analysis or Content Details

**Left Chart (Training Accuracy):**

* **d = 1 (Solid Red):** Starts at approximately 0.60 at Epoch 0, increases rapidly to around 0.75 by Epoch 50, and plateaus around 0.77-0.78 from Epoch 100 onwards.

* **d = 10 (Dashed Red):** Starts at approximately 0.60 at Epoch 0, increases rapidly to around 0.75 by Epoch 50, and plateaus around 0.77-0.78 from Epoch 100 onwards.

* **d = 100 (Dashed-Dotted Red):** Starts at approximately 0.60 at Epoch 0, increases rapidly to around 0.75 by Epoch 50, and plateaus around 0.77-0.78 from Epoch 100 onwards.

* **d = 500 (Solid Blue):** Starts at approximately 0.60 at Epoch 0, increases rapidly to around 0.77 by Epoch 50, and plateaus around 0.78-0.79 from Epoch 100 onwards.

* **d = 1000 (Dashed Blue):** Starts at approximately 0.60 at Epoch 0, increases rapidly to around 0.77 by Epoch 50, and plateaus around 0.78-0.79 from Epoch 100 onwards.

* **d = 5000 (Dashed-Dotted Blue):** Starts at approximately 0.60 at Epoch 0, increases rapidly to around 0.77 by Epoch 50, and plateaus around 0.78-0.79 from Epoch 100 onwards.

**Right Chart (Testing Accuracy):**

* **d = 1 (Solid Red):** Starts at approximately 0.56 at Epoch 0, increases rapidly to around 0.61 by Epoch 20, and plateaus around 0.61-0.62 from Epoch 50 onwards.

* **d = 10 (Dashed Red):** Starts at approximately 0.56 at Epoch 0, increases rapidly to around 0.61 by Epoch 20, and plateaus around 0.61-0.62 from Epoch 50 onwards.

* **d = 100 (Dashed-Dotted Red):** Starts at approximately 0.56 at Epoch 0, increases rapidly to around 0.61 by Epoch 20, and plateaus around 0.61-0.62 from Epoch 50 onwards.

* **d = 500 (Solid Blue):** Starts at approximately 0.56 at Epoch 0, increases rapidly to around 0.62 by Epoch 20, and plateaus around 0.62-0.63 from Epoch 50 onwards.

* **d = 1000 (Dashed Blue):** Starts at approximately 0.56 at Epoch 0, increases rapidly to around 0.62 by Epoch 20, and plateaus around 0.62-0.63 from Epoch 50 onwards.

* **d = 5000 (Dashed-Dotted Blue):** Starts at approximately 0.56 at Epoch 0, increases rapidly to around 0.62 by Epoch 20, and plateaus around 0.62-0.63 from Epoch 50 onwards.

### Key Observations

* In the Training Accuracy chart, all models converge to a similar accuracy level (around 0.77-0.79) regardless of the 'd' value.

* In the Testing Accuracy chart, models with `d = 500`, `d = 1000`, and `d = 5000` achieve slightly higher testing accuracy (around 0.62-0.63) compared to those with `d = 1`, `d = 10`, and `d = 100` (around 0.61-0.62).

* Both charts show rapid initial learning (steep slope) followed by a plateau, indicating diminishing returns from further training.

* The difference in performance between different 'd' values is more pronounced in the Testing Accuracy chart than in the Training Accuracy chart.

### Interpretation

The data suggests that increasing the value of 'd' (up to 500 or 1000) improves the generalization performance of the models, as evidenced by the higher testing accuracy. However, beyond a certain point (around d=500), further increases in 'd' do not lead to significant improvements in either training or testing accuracy. This could indicate that the models are reaching a point of diminishing returns or that other factors are becoming more limiting.

The gap between training and testing accuracy suggests some degree of overfitting, particularly for the models with lower 'd' values. The models are learning the training data well, but their ability to generalize to unseen data is limited. The plateau in both charts indicates that the models have converged and further training is unlikely to yield significant improvements. The consistent performance of d=500, d=1000, and d=5000 suggests that these values represent a good balance between model complexity and generalization ability.

</details>

## 4.4 Liver Disorders

The Liver Disorders dataset 4 was created by BUPA Medical Research and Development Ltd. (hereafter 'BMRDL') during the 1980s as part of a larger health-screening database. The dataset consists of 7 attributes. However, McDermott and Forsyth [21] claim that many researchers have used the dataset incorrectly, considering the Selector attribute as the class label. Based on the recommendation of McDermott and Forsythof, we here instead use the Number of Half-Pint Equivalents of Alcoholic Beverages as the dependent variable, binarized using the threshold ≥ 3 . The Selector attribute is discarded. The remaining attributes represent the results of various blood tests, and we use them as features.

Here, ADTM is given 10 clauses per class, with s = 3 and T = 10 . Each MVF-LA action possesses 100 states. The performance of ADTM for different levels of determinism is summarized in Table 4. For d = 1 , the F1-score of

4 Available from https://archive.ics.uci.edu/ml/datasets/Liver+Disorders.

Table 5: Performance of TM and ADTM with different d on Heart Disease dataset.

| | TM | ADTM | ADTM | ADTM | ADTM | ADTM | ADTM |

|------|-------|--------|--------|--------|--------|--------|--------|

| | TM | d=1 | d=10 | d=100 | d=500 | d=1000 | d=5000 |

| F1 | 0.687 | 0.759 | 0.766 | 0.767 | 0.760 | 0.762 | 0.605 |

| Acc. | 0.672 | 0.778 | 0.780 | 0.783 | 0.773 | 0.781 | 0.633 |

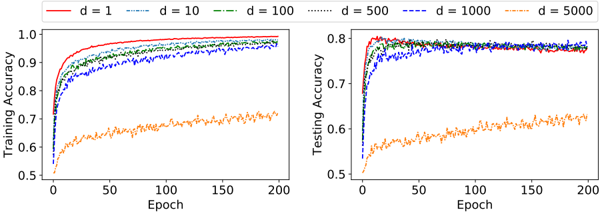

Figure 8: Training and testing accuracy per epoch on the Heart Disease dataset.

<details>

<summary>Image 8 Details</summary>

### Visual Description

## Line Chart: Training and Testing Accuracy vs. Epoch for Different 'd' Values

### Overview

The image presents two line charts side-by-side. The left chart displays Training Accuracy against Epoch, while the right chart shows Testing Accuracy against Epoch. Both charts compare the performance of models with different values of 'd' (1, 10, 100, 500, 1000, and 5000).

### Components/Axes

* **X-axis (Both Charts):** Epoch, ranging from 0 to 200.

* **Y-axis (Left Chart):** Training Accuracy, ranging from 0.5 to 1.0.

* **Y-axis (Right Chart):** Testing Accuracy, ranging from 0.5 to 0.8.

* **Legend (Top-Left):** Labels for each line representing different 'd' values:

* d = 1 (Red solid line)

* d = 10 (Blue dashed line)

* d = 100 (Green dash-dot line)

* d = 500 (Orange dotted line)

* d = 1000 (Purple dotted line)

* d = 5000 (Black solid line)

### Detailed Analysis or Content Details

**Left Chart (Training Accuracy):**

* **d = 1 (Red):** Starts at approximately 0.55, rapidly increases to around 0.95 by Epoch 50, and plateaus around 0.97-0.99 for the remainder of the epochs.

* **d = 10 (Blue):** Starts at approximately 0.55, increases more slowly than d=1, reaching around 0.85 by Epoch 50, and continues to increase, reaching approximately 0.95 by Epoch 200.

* **d = 100 (Green):** Starts at approximately 0.55, increases at a similar rate to d=10, reaching around 0.85 by Epoch 50, and continues to increase, reaching approximately 0.95 by Epoch 200.

* **d = 500 (Orange):** Starts at approximately 0.6, increases slowly, reaching around 0.7 by Epoch 50, and plateaus around 0.72-0.75 for the remainder of the epochs.

* **d = 1000 (Purple):** Starts at approximately 0.6, increases slowly, reaching around 0.7 by Epoch 50, and plateaus around 0.72-0.75 for the remainder of the epochs.

* **d = 5000 (Black):** Starts at approximately 0.55, increases slowly, reaching around 0.7 by Epoch 50, and plateaus around 0.72-0.75 for the remainder of the epochs.

**Right Chart (Testing Accuracy):**

* **d = 1 (Red):** Starts at approximately 0.55, rapidly increases to around 0.9 by Epoch 50, and plateaus around 0.92-0.95 for the remainder of the epochs.

* **d = 10 (Blue):** Starts at approximately 0.55, increases more slowly than d=1, reaching around 0.8 by Epoch 50, and continues to increase, reaching approximately 0.9 by Epoch 200.

* **d = 100 (Green):** Starts at approximately 0.55, increases at a similar rate to d=10, reaching around 0.8 by Epoch 50, and continues to increase, reaching approximately 0.9 by Epoch 200.

* **d = 500 (Orange):** Starts at approximately 0.6, increases slowly, reaching around 0.65 by Epoch 50, and plateaus around 0.67-0.7 for the remainder of the epochs.

* **d = 1000 (Purple):** Starts at approximately 0.6, increases slowly, reaching around 0.65 by Epoch 50, and plateaus around 0.67-0.7 for the remainder of the epochs.

* **d = 5000 (Black):** Starts at approximately 0.6, increases slowly, reaching around 0.65 by Epoch 50, and plateaus around 0.67-0.7 for the remainder of the epochs.

### Key Observations

* Lower values of 'd' (1, 10, 100) achieve significantly higher training and testing accuracy compared to higher values (500, 1000, 5000).

* The lines for d=500, d=1000, and d=5000 are very close to each other in both charts, indicating similar performance.

* There is a noticeable gap between training and testing accuracy for all 'd' values, suggesting some degree of overfitting.

* The training accuracy plateaus much earlier than the testing accuracy for d=1, d=10, and d=100.

### Interpretation

The data suggests that the parameter 'd' plays a crucial role in the performance of the model. Lower values of 'd' lead to better accuracy, both in training and testing. However, the performance plateaus for higher values of 'd', indicating that increasing 'd' beyond a certain point does not improve the model's ability to learn.

The gap between training and testing accuracy suggests that the model is overfitting, especially for lower values of 'd'. This means the model is learning the training data too well and is not generalizing well to unseen data.

The consistent performance of d=500, d=1000, and d=5000 suggests that there might be a limit to the benefit of increasing 'd', and that other factors might be more important for improving performance beyond a certain point. The choice of 'd' represents a trade-off between model complexity and generalization ability. A smaller 'd' may lead to underfitting, while a larger 'd' may lead to overfitting.

</details>

ADTM is better than what is achieved with the standard TM. In contrast to the performance on previous datasets, the performance of ADTM on Liver Disorders dataset with respect to F1-score does not decrease significantly with d . Instead, it fluctuates around 0 . 690 . Unlike the other datasets, the ADTM with d = 1 actually requires more training rounds than for larger d -values, before it learns the final MVF-LA actions. The ADTM with d = 1 is also unable to reach a similar level of training accuracy, compared to higher d -values. Despite the diverse learning speed, testing accuracy becomes similar after roughly 50 training rounds. The other considered machine learning models obtain somewhat similar F1-scores on the same dataset, with DT, RF, and EBM peaking with scores higher than 0 . 700 .

## 4.5 Heart Disease

The Heart Disease dataset 5 concerns prediction of heart disease. To this end, 13 features are available, selected among 75. Out of the 13 features, 6 are real-valued, 3 are binary, 3 are nominal, and one is ordered. In this case, the ADTM is built on 100 clauses. The number of state transitions when the feedback is strong, s , is equal to 3 while the target, T , is equal to 10 . The number of states per MVF-LA action in the ADTM is 100 . As one can see in Table 5, the ADTM provides better performance than TM in terms of F1-score and accuracy when d = 1 . The F1-measure increases with the d -value and peaks at d = 100 . Then it fluctuates and reaches its lowest value of 0 . 605 when d = 5000 .

Figure 8 shows that the training and testing accuracy for d = 5000 is consistently low over the training epochs, compared to the training and testing accuracy of other d -values. The other d -values, after initial variations, perform similarly roughly from the 75 th epoch and onward. Out of other machine learning algorithms, EBM provides the best F1-score. Even though ANN-1, ANN-2, DT, RF, and XGBoost obtain better F1-scores than TM, the F1 scores of ADTM when d equals to 1 , 10 , 100 , 500 , and 1000 are the highest ones.

## 5 Effects of Determinism on Energy Consumption

In the hardware implementation of TM, power is consumed by the pseudorandom number generators (PRNGs) when generating a new random number [5]. This is referred to as switching power . In the TM, every TA update is randomized, and switching power is consumed by the PRNGs on every cycle. Additionally, power is also consumed by the PRNGs whilst idle. We term this leakage power . Leakage power is always consumed by the PRNGs whilst they are powered up, even when not generating new numbers.

In the ADTM with hybrid TA where the determinism parameter d is introduced, d = 1 would be equivalent to a TM where every TA update is randomized. d = ∞ means the ADTM is fully deterministic, and no random numbers are required from the PRNG. If a TA update is randomized only on the d th cycle, the PRNGs need only be actively switched (and therefore consume switching power ) for 1 d portion of the entire training procedure. The switching power consumed by the PRNGs accounts for 7% of the total system power when using a traditional TA (equivalent to d = 1 ). With

5 Available from https://archive.ics.uci.edu/ml/datasets/Statlog+%28Heart%29.

Table 6: Classification accuracy of state-of-the-art machine learning models on all the datasets.

| | Bankruptcy | Bankruptcy | Balance Scale | Balance Scale | Breast Cancer | Breast Cancer | Liver Disorder | Liver Disorder | Heart Disease | Heart Disease |

|---------|--------------|--------------|-----------------|-----------------|-----------------|-----------------|------------------|------------------|-----------------|-----------------|

| | F1 | Acc. | F1 | Acc. | F1 | Acc. | F1 | Acc. | F1 | Acc. |

| ANN-1 | 0.995 | 0.994 | 0.990 | 0.990 | 0.458 | 0.719 | 0.671 | 0.612 | 0.738 | 0.772 |

| ANN-2 | 0.996 | 0.995 | 0.995 | 0.995 | 0.403 | 0.683 | 0.652 | 0.594 | 0.742 | 0.769 |

| ANN-3 | 0.997 | 0.997 | 0.995 | 0.995 | 0.422 | 0.685 | 0.656 | 0.602 | 0.650 | 0.734 |

| DT | 0.993 | 0.993 | 0.986 | 0.986 | 0.276 | 0.706 | 0.728 | 0.596 | 0.729 | 0.781 |

| SVM | 0.994 | 0.994 | 0.887 | 0.887 | 0.384 | 0.678 | 0.622 | 0.571 | 0.679 | 0.710 |

| KNN | 0.995 | 0.994 | 0.953 | 0.953 | 0.458 | 0.755 | 0.638 | 0.566 | 0.641 | 0.714 |

| RF | 0.949 | 0.942 | 0.859 | 0.860 | 0.370 | 0.747 | 0.729 | 0.607 | 0.713 | 0.774 |

| XGBoost | 0.983 | 0.983 | 0.931 | 0.931 | 0.367 | 0.719 | 0.656 | 0.635 | 0.701 | 0.788 |

| EBM | 0.993 | 0.992 | 1.000 | 1.000 | 0.389 | 0.745 | 0.710 | 0.629 | 0.783 | 0.824 |

Table 7: Comparative power per datapoint with two different d values.

| | Bankruptcy | Breast Cancer | Balance Scale | Liver Disorder | Heart Disease |

|----------------|--------------|-----------------|-----------------|------------------|-----------------|

| Power (d=1) | 6.94 mW | 15.8 mW | 7.7 mW | 12.6 mW | 148 mW |

| Power (d=5000) | 6.45 mW | 14.7 mW | 7.2 mW | 11.8 mW | 137.6 mW |

d = 100 this is reduced to 0.07% of the system power, and with d = 5000 this is reduced further to 0.001% of the same. It can be seen that as d increases in the ADTM, the switching power consumed by the PRNGs tends to zero.

In the special case of d = ∞ the PRNGs are no longer required for TA updates since the TAs are fully deterministic we can omit these PRNGs from the design and prevent their leakage power from being consumed. The leakage power of the PRNGs accounts for 32% of the total system power. On top of the switching power savings this equates to 39% of system power, meaning large power and therefore energy savings can be made in the ADTM.

Table 7 shows comparative training power consumption per datapoint (i.e. all TAs being updated concurrently) for two different d values: d =1 and d =5000. Typically, the overall power is higher for bigger datasets as they require increased number of concurrent TAs as well as PRNGs. As can be seen, the increase in d value reduces the power consumption by 11 mW in the case of Heart Disease dataset. This saving is made by reducing the switching activity in the PRNGs as explained above. More savings are made by larger d values as the PRNG concurrent switching activities are reduced.

## 6 Conclusion

In this paper, we proposed a novel finite-state learning automaton (MFV-LA) that can replace the Tsetlin Automaton in TM learning, for increased determinism, and thus reduced energy usage. The new automaton uses multi-step deterministic state jumps to reinforce sub-patterns. Simultaneously, flipping a coin to skip every d 'th state update ensures diversification by randomization. The new d -parameter thus allows the degree of randomization to be finely controlled. E.g., d = 1 makes every update random and d = ∞ makes the automaton fully deterministic. Our empirical results show that, overall, only substantial degrees of determinism reduces accuracy. Energy-wise, the pseudorandom number generator contributes to switching energy consumption within the TM, which can be completely eliminated with d = ∞ . We can thus use the new d -parameter to trade off accuracy against energy consumption, to facilitate low-energy machine learning.

## Acknowledgement

The authors gratefully acknowledge the contributions from Jonathan Edwards at Temporal Computing on strategies for deterministic Tsetlin Machine learning.

## References

- [1] Emma Strubell, Ananya Ganesh, and Andrew McCallum. Energy and Policy Considerations for Deep Learning in NLP. In ACL , 2019.

- [2] J. Chen and X. Ran. Deep Learning With Edge Computing: A Review. Proc. of the IEEE , 107(8):1655-1674, 2019.

- [3] Eva García-Martín, Crefeda Faviola Rodrigues, Graham Riley, and Håkan Grahn. Estimation of energy consumption in machine learning. Journal of Parallel and Distributed Computing , 134:75 - 88, 2019.

- [4] Ole-Christoffer Granmo and B John Oommen. Solving Stochastic Nonlinear Resource Allocation Problems Using a Hierarchy of Twofold Resource Allocation Automata. IEEE Transaction on Computers , 2010.

- [5] Adrian Wheeldon, Rishad Shafik, Tousif Rahman, Jie Lei, Alex Yakovlev, and Ole-Christoffer Granmo. Learning Automata based Energy-efficient AI Hardware Design for IoT. Philosophical Transactions of the Royal Society A , 2020.

- [6] Geir Thore Berge, Ole-Christoffer Granmo, Tor Oddbjørn Tveit, Morten Goodwin, Lei Jiao, and Bernt Viggo Matheussen. Using the Tsetlin Machine to Learn Human-Interpretable Rules for High-Accuracy Text Categorization with Medical Applications. IEEE Access , 7:115134-115146, 2019.

- [7] K. Darshana Abeyrathna, Ole-Christoffer Granmo, Xuan Zhang, Lei Jiao, and Morten Goodwin. The Regression Tsetlin Machine - A Novel Approach to Interpretable Non-Linear Regression. Philosophical Transactions of the Royal Society A , 378, 2019.

- [8] Ole-Christoffer Granmo, Sondre Glimsdal, Lei Jiao, Morten Goodwin, Christian W Omlin, and Geir Thore Berge. The Convolutional Tsetlin Machine. arXiv preprint arXiv:1905.09688 , 2019.

- [9] Adrian Phoulady, Ole-Christoffer Granmo, Saeed Rahimi Gorji, and Hady Ahmady Phoulady. The Weighted Tsetlin Machine: Compressed Representations with Clause Weighting. In Ninth International Workshop on Statistical Relational AI (StarAI 2020) , 2020.

- [10] Saeed Rahimi Gorji, Ole-Christoffer Granmo, Adrian Phoulady, and Morten Goodwin. A Tsetlin Machine with Multigranular Clauses. In Lecture Notes in Computer Science: Proceedings of the Thirty-ninth International Conference on Innovative Techniques and Applications of Artificial Intelligence (SGAI-2019) , volume 11927. Springer, 2019.

- [11] Saeed Gorji et al. Increasing the Inference and Learning Speed of Tsetlin Machines with Clause Indexing. In International Conference on Industrial, Engineering and Other Applications of Applied Intelligent Systems . Springer, 2020.

- [12] K Darshana Abeyrathna, Ole-Christoffer Granmo, and Morten Goodwin. Extending the tsetlin machine with integer-weighted clauses for increased interpretability. arXiv preprint arXiv:2005.05131 , 2020.

- [13] K Darshana Abeyrathna, Ole-Christoffer Granmo, and Morten Goodwin. Adaptive Sparse Representation of Continuous Input for Tsetlin Machines Based on Stochastic Searching on the Line. In Preparation , 2020.

- [14] Kuruge Darshana Abeyrathna, Ole-Christoffer Granmo, Xuan Zhang, and Morten Goodwin. A scheme for continuous input to the Tsetlin Machine with applications to forecasting disease outbreaks. In International Conference on Industrial, Engineering and Other Applications of Applied Intelligent Systems , pages 564-578. Springer, 2019.

- [15] Rishad Shafik, Adrian Wheeldon, and Alex Yakovlev. Explainability and Dependability Analysis of Learning Automata based AI Hardware. In IEEE 26th International Symposium on On-Line Testing and Robust System Design (IOLTS) . IEEE, 2020.

- [16] Kumpati S Narendra and Mandayam AL Thathachar. Learning Automata: An Introduction . Courier Corporation, 2012.

- [17] Michael Lvovitch Tsetlin. On behaviour of finite automata in random medium. Avtomat. i Telemekh , 22(10):13451354, 1961.

- [18] MALThathachar and P S Sastry. Networks of Learning Automata: Techniques for Online Stochastic Optimization . Kluwer Academic Publishers, 2004.

- [19] Tianqi Chen and Carlos Guestrin. Xgboost: A scalable tree boosting system. In Proceedings of the 22nd acm sigkdd international conference on knowledge discovery and data mining , pages 785-794, 2016.

- [20] Harsha Nori, Samuel Jenkins, Paul Koch, and Rich Caruana. Interpretml: A unified framework for machine learning interpretability. arXiv preprint arXiv:1909.09223 , 2019.

- [21] James McDermott and Richard S Forsyth. Diagnosing a disorder in a classification benchmark. Pattern Recognition Letters , 73:41-43, 2016.