## CryptoEmu: An Instruction Set Emulator for Computation Over Ciphers

Xiaoyang Gong xgong35@wisc.edu Dan Negrut negrut@wisc.edu

## Abstract

Fully homomorphic encryption (FHE) allows computations over encrypted data. This technique makes privacy-preserving cloud computing a reality. Users can send their encrypted sensitive data to a cloud server, get encrypted results returned and decrypt them, without worrying about data breaches.

This project report presents a homomorphic instruction set emulator, CryptoEmu, that enables fully homomorphic computation over encrypted data. The software-based instruction set emulator is built upon an open-source, state-of-the-art homomorphic encryption library that supports gate-level homomorphic evaluation. The instruction set architecture supports multiple instructions that belong to a subset of the ARMv8 instruction set architecture. The instruction set emulator utilizes parallel computing techniques to emulate every functional unit for minimum latency. This project report includes details on design considerations, instruction set emulator architecture, and datapath and control unit implementation. We evaluated and demonstrated the instruction set emulator's performance and scalability on a 48-core workstation. CryptoEmu has shown a significant speedup in homomorphic computation performance when compared with HELib, a state-of-the-art homomorphic encryption library.

## Index Terms

Fully Homomorphic Encryption, Parallel Computing, Homomorphic Instruction Set, Homomorphic Processor, Computer Architecture.

## I. INTRODUCTION

In the conventional cloud service model, users share data with their service provider (cloud) to outsource computations. The cloud receives encrypted data and decrypts it with the cloud's private key or the private key shared between the user and the cloud. Thus, the service provider has access to user data, which might contain sensitive information like health records, bank statements, or trade secrets. Privacy concerns have been raised along with the wide adoption of cloud services. In 2019, over 164.68 million sensitive records were exposed in the United States [1].

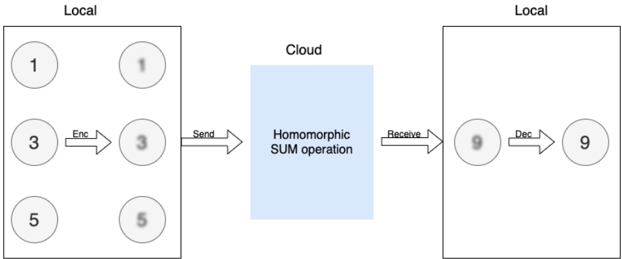

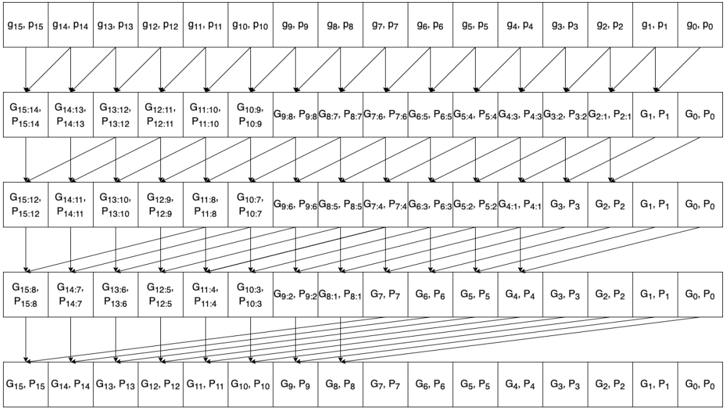

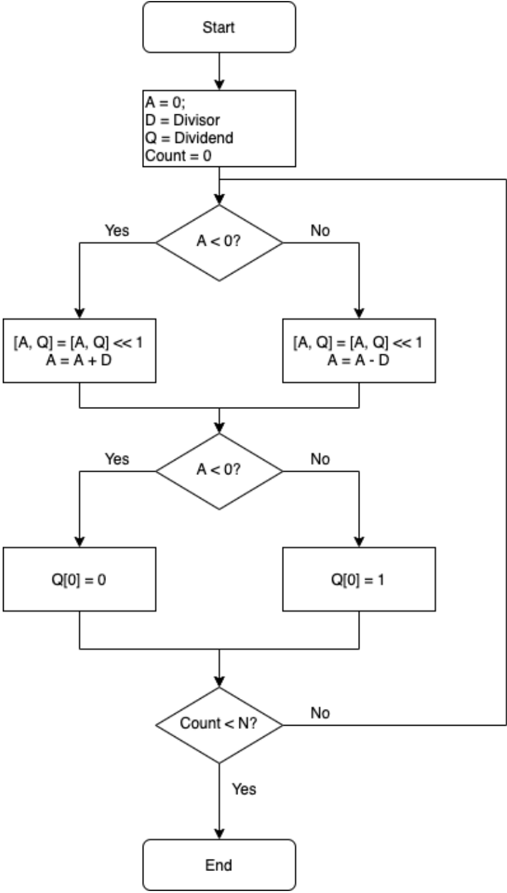

In the worst-case scenario, the cloud service provider cannot be trusted. User data is inherently unsafe if it is in plain text. Even if the service provider is honest, a cloud service is prone to fall victim to cybercrime. Security loopholes or sophisticated social engineering attacks expose user privacy on the cloud, and a successful attack usually results in a massive user data leak. One way to eliminate this type of risk is to allow the cloud to operate on the encrypted user data without decrypting it. Fully Homomorphic Encryption (FHE) is a special encryption scheme that allows arbitrary computation over encrypted data without knowing the private key. An FHE-enabled cloud service model is shown in Fig. 1. In this example, the user wants to compute the sum of 1, 3, and 5 in the cloud. The user first encrypts the data with FHE, then sends the ciphers (shown in Fig. 1 as bubbles with blurry text) to the cloud. When the cloud receives the encrypted data, it homomorphically adds all encrypted values together to form an encrypted sum and returns the encrypted sum to the user. The user decrypts the encrypted sum with a secret key, and the result in cleartext is 9, the sum of 1, 3, and 5. In the entire process, the cloud has no knowledge of the user's input and output data. Therefore, user data is safe from an insecure cloud or any attack targeted at the cloud service provider.

Fig. 1: Homomorphic Encryption

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Diagram: Homomorphic Encryption Process

### Overview

The image illustrates a homomorphic encryption process involving local computations, encryption, sending data to the cloud for a SUM operation, receiving the result, and decryption back to a local environment.

### Components/Axes

* **Local (Left)**: Represents a local computing environment. Contains three numerical values (1, 3, 5) represented in circles. These values are then encrypted, resulting in blurred versions of the same numbers. An arrow labeled "Enc" indicates the encryption process. An arrow labeled "Send" indicates the encrypted data is sent to the cloud.

* **Cloud (Center)**: Represents a cloud computing environment. A blue rectangle contains the text "Homomorphic SUM operation," indicating the type of computation performed in the cloud.

* **Local (Right)**: Represents another local computing environment. Receives the result from the cloud via an arrow labeled "Receive". The received value (9) is initially blurred, then decrypted via an arrow labeled "Dec", resulting in a clear numerical value (9).

### Detailed Analysis

* **Local (Left)**:

* Three numerical values are present: 1 (top-left), 3 (middle-left), and 5 (bottom-left).

* These values are encrypted, resulting in blurred versions: 1 (top-right), 3 (middle-right), and 5 (bottom-right).

* The encryption process is indicated by an arrow labeled "Enc" pointing from the original values to their encrypted counterparts.

* The encrypted values are sent to the cloud, indicated by an arrow labeled "Send".

* **Cloud (Center)**:

* The cloud performs a "Homomorphic SUM operation" on the received encrypted data.

* **Local (Right)**:

* The result of the SUM operation (9) is received from the cloud.

* The received value is initially blurred, indicating it is still encrypted.

* The value is decrypted, indicated by an arrow labeled "Dec", resulting in a clear numerical value (9).

### Key Observations

* The diagram demonstrates a process where data is encrypted locally, sent to the cloud for computation, and then decrypted locally.

* The "Homomorphic SUM operation" suggests that the cloud is performing a summation of the encrypted values.

* The initial values (1, 3, 5) sum up to 9, which is the final decrypted value, confirming the SUM operation.

### Interpretation

The diagram illustrates the concept of homomorphic encryption, where computations can be performed on encrypted data without decrypting it first. This allows for secure data processing in untrusted environments like the cloud. The diagram specifically shows a SUM operation, but homomorphic encryption can support other types of computations as well. The process ensures that the data remains confidential throughout the entire process, as it is only decrypted in the trusted local environment.

</details>

Blurry text in the figure denotes encrypted data.

Over the years, the research community has developed various encryption schemes that enable computation over ciphers. TFHE [2] is an open-source FHE library that allows fully homomorphic evaluation on arbitrary Boolean circuits. The TFHE library supports FHE operations on an unlimited number of logic gates. Using FHE logic gates provided by TFHE, users can build an application-specific FHE circuit to perform arbitrary computations over encrypted data. While the TFHE library performs well in gate-by-gate FHE evaluation speed and memory usage [3], a rigid logic circuit has reusability and scalability issues for general-purpose computing. Also, evaluating a logic circuit in software is slow. Because bitwise FHE operations on ciphers are about one billion times slower than the same operations on plain text, computation time ramps up as the circuit becomes complex.

Herein, we propose a different approach that draws on a homomorphic instruction set emulator called CryptoEmu. CryptoEmu supports multiple FHE instructions (ADD, SUB, DIV, etc.). When CryptoEmu decodes an instruction, it invokes a pre-built function, referred to as a functional unit, to perform an FHE operation on the input ciphertext. All functional units are built upon FHE gates from the TFHE library, and they are accelerated using parallel computing techniques. During execution, the functional units fully utilize a multi-core processor to achieve an optimal speedup. A user would simply reprogram the FHE assembly code for various applications, while relying on the optimized functional units.

This report is organized as follows. Section 2 provides a primer on homomorphic encryption and summarizes related work. Section 3 introduces TFHE, an open-source library for fully homomorphic encryption. TFHE provides the building blocks for CryptoEmu. Section 4 describes CryptoEmu's general architecture. Sections 5 and 6 provide detailed instruction set emulator implementations and give benchmark results on Euler, a CPU/GPU supercomputer. Section 7 analyzes CryptoEmu's scalability and vulnerability, and compares CryptoEmu with a popular FHE software library, HELib [4]. Conclusions and future directions of investigation/development are provided in Section 8.

## II. BACKGROUND

Homomorphic Encryption. Homomorphic encryption (HE) is an encryption scheme that supports computation on encrypted data and generates an encrypted output. When the encrypted output is decrypted, its value is equal to the result when applying equivalent computation on unencrypted data. HE is formally defined as follows: let Enc () be an HE encryption function, Dec () be an HE decryption function, f () be a function, g () be a homomorphic equivalent of f () , and a and b be input data in plaintext. The following equation holds:

$$f(a, b) = \mathrm{Dec}\left( g(\mathrm{Enc}(a), \mathrm{Enc}(b)) \right).$$
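As a concrete illustration of this identity, the sketch below uses a deliberately insecure toy scheme in which f is addition modulo 2^32. It is not TFHE and not a real cryptosystem; the names `ToyCipher`, `toy_enc`, `toy_add`, `toy_dec`, and `fig1_sum` are hypothetical and exist only to show that g operates on ciphertexts without ever seeing the key.

```cpp
#include <cassert>
#include <cstdint>

// Toy ciphertext: a masked value plus a public count of the masks it carries.
struct ToyCipher {
    std::uint32_t value;
    std::uint32_t masks;
};

// Enc(a): hide a behind one copy of the secret key (arithmetic is mod 2^32).
ToyCipher toy_enc(std::uint32_t a, std::uint32_t key) {
    return ToyCipher{a + key, 1u};
}

// g(x, y): homomorphic counterpart of f(a, b) = a + b. It never uses the key.
ToyCipher toy_add(const ToyCipher& x, const ToyCipher& y) {
    return ToyCipher{x.value + y.value, x.masks + y.masks};
}

// Dec(c): remove every accumulated mask with the secret key.
std::uint32_t toy_dec(const ToyCipher& c, std::uint32_t key) {
    return c.value - c.masks * key;
}

// Mirrors the example of Fig. 1: the "cloud" sums Enc(1), Enc(3), and Enc(5).
std::uint32_t fig1_sum(std::uint32_t key) {
    ToyCipher acc = toy_add(toy_enc(1u, key), toy_enc(3u, key));
    acc = toy_add(acc, toy_enc(5u, key));
    assert(acc.masks == 3u);  // three ciphertexts were combined
    return toy_dec(acc, key);
}
```

Calling `fig1_sum` with any 32-bit key returns 9, because unsigned arithmetic wraps modulo 2^32 and the masks cancel exactly at decryption.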

An HE scheme is a partially homomorphic encryption (PHE) scheme if g () supports only either addition or multiplication. An HE scheme is a somewhat homomorphic encryption (SWHE) scheme if a limited number of g () is allowed to be applied to encrypted data. An HE scheme is a fully homomorphic encryption (FHE) scheme if any g () can be applied for an unlimited number of times over encrypted data [5].

The first FHE scheme was proposed by Gentry [6]. In HE schemes, the plaintext is encrypted with Gaussian noise. The noise grows after every homomorphic evaluation until the noise becomes too large for the encryption scheme to work. This is the reason that SWHE only allows a limited number of homomorphic evaluations. Gentry introduced a novel technique called 'bootstrapping' such that a ciphertext can be homomorphically decrypted and homomorphically encrypted with the secret key to reduce Gaussian noise [6], [7]. Building on [6], [8] improved bootstrapping to speed up homomorphic evaluations. The TFHE library, based on [3] and [9], implements one of the FHE schemes with a fast bootstrapping procedure.

Related work. This project proposes a software-based, multiple-instruction ISA emulator that supports fully homomorphic, general-purpose computation. Several general-purpose HE computer architecture implementations exist in both software and hardware. HELib [10] is an FHE software library that implements the Brakerski-Gentry-Vaikuntanathan (BGV) homomorphic encryption scheme [11]. HELib supports HE arithmetic such as addition, subtraction, multiplication, and data movement operations. HELib can be treated as an assembly language for general-purpose HE computing. Cryptoleq [12] is a software-based one-instruction set computer (OISC) emulator for general-purpose HE computing. Cryptoleq uses the Paillier partially homomorphic scheme [13] and supports the Turing-complete SUBLEQ instruction. HEROIC [14] is another OISC architecture implemented on FPGA, based on the Paillier partially homomorphic scheme. Cryptoblaze [15] is a multiple-instruction computer based on non-deterministic Paillier encryption that supports partially homomorphic computation. Cryptoblaze is implemented on an FPGA.

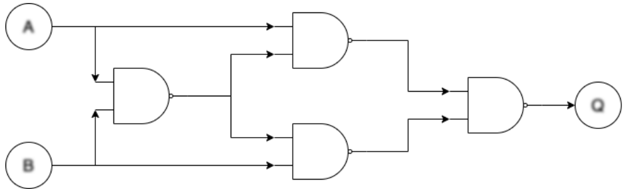

## III. TFHE LIBRARY

TFHE [2] is an FHE C/C++ software library used to implement fast gate-by-gate bootstrapping. The idea of TFHE is straightforward: if one can homomorphically evaluate a universal logic gate and homomorphically evaluate the next universal logic gate that uses the previous logic gate's output as its input, one can homomorphically evaluate arbitrary Boolean functions, essentially allowing arbitrary FHE computations on encrypted binary data. Figure 2 demonstrates a minimum FHE gate-level library: NAND gate. Bootstrapped NAND gates are used to construct an FHE XOR gate. Similarly, any FHE logic circuit can be constructed with a combination of bootstrapped NAND gates.
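The composition idea can be checked on plaintext bits. The sketch below builds XOR from four NAND evaluations using the usual four-gate arrangement; `Bit`, `nand_gate`, and `xor_from_nand` are hypothetical names, and plain `bool` values stand in for ciphertexts, so no TFHE call is made here.

```cpp
#include <cassert>

// Plaintext stand-in for an encrypted bit; with TFHE this would be a ciphertext.
using Bit = bool;

// Stand-in for one bootstrapped NAND evaluation.
Bit nand_gate(Bit a, Bit b) {
    return !(a && b);
}

// XOR assembled from four NAND gates.
Bit xor_from_nand(Bit a, Bit b) {
    const Bit n1 = nand_gate(a, b);   // shared first gate
    const Bit n2 = nand_gate(a, n1);  // depends only on a and n1
    const Bit n3 = nand_gate(b, n1);  // depends only on b and n1
    return nand_gate(n2, n3);         // output gate
}
```

The two middle gates depend only on the inputs and the first gate's output, so they could be evaluated concurrently; the critical path is then three gate evaluations rather than four.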

Fig. 2: Use of bootstrapped NAND gate to form arbitrary FHE logic circuit. Blurry text in the figure denotes encrypted data.

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Logic Diagram: XOR Built from NAND Gates

### Overview

The image depicts a logic circuit diagram composed of NAND gates, as stated in the figure caption. The circuit takes two inputs, A and B, and produces a single output, Q. The diagram shows the arrangement of the NAND gates and the flow of signals through the circuit.

### Components/Axes

* **Inputs:**

* A (top-left)

* B (bottom-left)

* **Output:**

* Q (right)

* **Logic Gates:** The diagram contains four NAND gates.

* **Flow Direction:** The arrows indicate the direction of signal flow from left to right.

### Detailed Analysis

1. **Input A:** The input A is fed directly into the top NAND gate in the middle of the diagram. It is also fed into the NAND gate on the left side of the diagram.

2. **Input B:** The input B is fed directly into the bottom NAND gate in the middle of the diagram. It is also fed into the NAND gate on the left side of the diagram.

3. **Left NAND Gate:** The inputs A and B are fed into the left NAND gate. The output of this gate is then fed into both the top and bottom NAND gates.

4. **Top NAND Gate:** This gate takes input A and the output of the left NAND gate.

5. **Bottom NAND Gate:** This gate takes input B and the output of the left NAND gate.

6. **Output NAND Gate:** The outputs of the top and bottom NAND gates are fed into the final NAND gate, which produces the output Q.

### Key Observations

* The circuit uses only NAND gates to realize a different logical function.

* The inputs A and B are used directly and also combined through an initial NAND gate.

* The output Q is the result of combining the direct inputs with the result of the initial NAND operation.

### Interpretation

The diagram shows how a universal gate can be composed into another gate. With N = NAND(A, B), the output is Q = NAND(NAND(A, N), NAND(B, N)), which is true exactly when A and B differ, i.e., Q = A XOR B. This matches the caption: bootstrapped NAND gates are combined to form an FHE XOR gate, and any other FHE logic circuit can be assembled in the same way.

</details>

TFHE API. The TFHE library contains a comprehensive gate bootstrapping API for the FHE scheme [2], including secret-keyset and cloud-keyset generation; encryption/decryption with the secret-keyset; and FHE evaluation on a binary gate netlist with the cloud-keyset. The TFHE API's performance is evaluated on a single core of an Intel Xeon CPU E5-2650 v3 @ 2.30GHz, running CentOS Linux release 8.2.2004 with 128 GB memory. Table I shows the benchmark results of the TFHE APIs that are critical to CryptoEmu's performance. TFHE gate bootstrapping parameter setup, secret-keyset generation, and cloud-keyset generation are not included in the table.

TABLE I: TFHE API Benchmark

| API             | Category               | Bootstrapped? | Latency (ms) |
|-----------------|------------------------|---------------|--------------|
| Encrypt         | Encrypt/decrypt        | N/A           | 0.0343745    |
| Decrypt         | Encrypt/decrypt        | N/A           | 0.000319556  |
| CONSTANT        | Homomorphic operations | No            | 0.00433995   |
| NOT             | Homomorphic operations | No            | 0.000679717  |
| COPY            | Homomorphic operations | No            | 0.000624117  |
| NAND            | Homomorphic operations | Yes           | 25.5738      |
| OR              | Homomorphic operations | Yes           | 25.618       |
| AND             | Homomorphic operations | Yes           | 25.6176      |
| XOR             | Homomorphic operations | Yes           | 25.6526      |
| XNOR            | Homomorphic operations | Yes           | 25.795       |
| NOR             | Homomorphic operations | Yes           | 25.6265      |
| ANDNY           | Homomorphic operations | Yes           | 25.6982      |
| ANDYN           | Homomorphic operations | Yes           | 25.684       |
| ORNY            | Homomorphic operations | Yes           | 25.7787      |
| ORYN            | Homomorphic operations | Yes           | 25.6957      |
| MUX             | Homomorphic operations | Yes           | 49.2645      |
| CreateBitCipher | Ciphertexts            | N/A           | 0.001725     |
| DeleteBitCipher | Ciphertexts            | N/A           | 0.002228     |
| ReadBitFromFile | Ciphertexts            | N/A           | 0.0175304    |
| WriteBitToFile  | Ciphertexts            | N/A           | 0.00960664   |

In Table I, all operations outside the 'Homomorphic operations' category are relatively fast. Within that category, the latency is generally around 25 ms, with the exceptions of MUX, which takes around 50 ms, and CONSTANT, NOT, and COPY, which are several orders of magnitude faster. The difference in speed comes from gate bootstrapping. Unary gates like CONSTANT, NOT, and COPY do not need to be bootstrapped. Binary gates need to be bootstrapped once. MUX needs to be bootstrapped twice. The bootstrapping procedure is manifestly the most computationally expensive operation in TFHE. This overhead is alleviated in CryptoEmu via parallel computing, as detailed below.

## IV. CRYPTOEMU ARCHITECTURE OVERVIEW

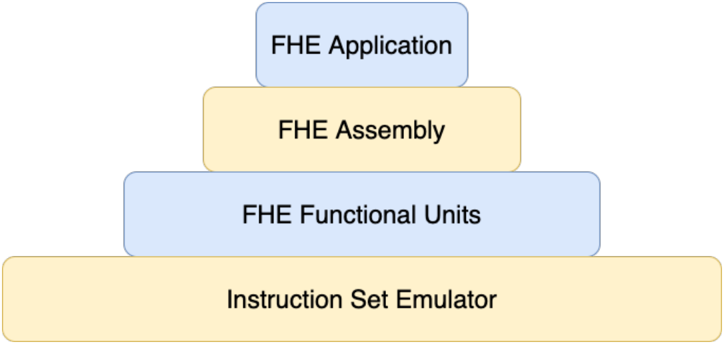

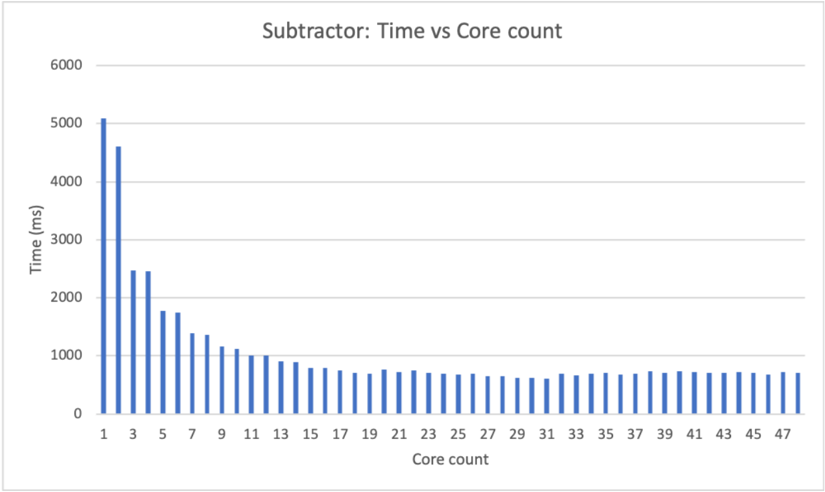

CryptoEmu is a C/C++ utility that emulates the behavior of Fully Homomorphic Encryption (FHE) instructions. The instruction set that CryptoEmu supports is a subset of ARMv8 A32 instructions for fully homomorphic computation over encrypted data. Figure 3 shows the abstraction layers for an FHE application. An FHE application that performs computation over encrypted data is compiled into FHE assembly that the instruction set emulator supports. The instruction set emulator coordinates control units and functional units to decode and execute the FHE assembly and returns the final results. The design and implementation of CryptoEmu are anchored by two assumptions:

Assumption 1. The instruction set emulator runs on a high-performance multi-core machine.

Assumption 2. The cloud service provider is honest. However, the cloud is subject to cyber-attacks on the user's data.

In §VII-C we will discuss modification on CryptoEmu's implementation when Assumption 2 does not hold.

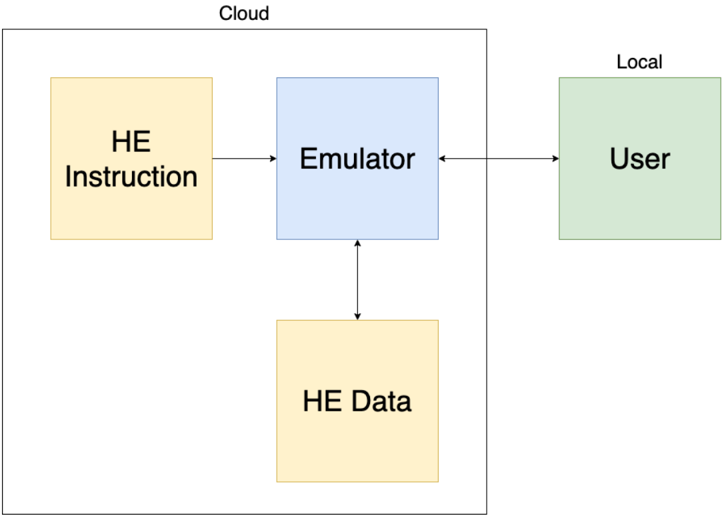

Cloud service model. Figure 4 shows the cloud service model. The instruction set emulator does what an actual hardware asset for encrypted execution would do: it reads from an unencrypted memory space an HE instruction; i.e., it fetches

Fig. 3: Abstract Layers

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Diagram: FHE Abstraction Layers

### Overview

The image is a diagram illustrating the abstraction layers of a Fully Homomorphic Encryption (FHE) system. It presents a layered structure, resembling a pyramid, with each layer representing a different level of abstraction in the FHE stack.

### Components/Axes

The diagram consists of four rectangular blocks stacked vertically, each representing a different layer. The layers are:

1. **FHE Application** (Top, light blue)

2. **FHE Assembly** (Second from top, light yellow)

3. **FHE Functional Units** (Third from top, light blue)

4. **Instruction Set Emulator** (Bottom, light yellow)

### Detailed Analysis

The diagram shows a hierarchical structure, with the "Instruction Set Emulator" at the base and the "FHE Application" at the top. The blocks are arranged in descending order of size from bottom to top.

* **FHE Application**: The topmost layer, representing the application level that utilizes FHE.

* **FHE Assembly**: The layer below the application, likely representing an assembly-level interface for FHE operations.

* **FHE Functional Units**: The layer below the assembly, representing the fundamental building blocks for FHE computations.

* **Instruction Set Emulator**: The bottom layer, representing the underlying hardware or software that emulates the instruction set required for FHE.

### Key Observations

The diagram visually emphasizes the layered architecture of an FHE system, highlighting the different levels of abstraction involved. The size of each block could be interpreted as representing the complexity or scope of each layer, with the Instruction Set Emulator being the most fundamental and the FHE Application being the most abstract.

### Interpretation

The diagram illustrates the layered approach to building and using FHE systems. It suggests that FHE applications are built on top of lower-level components, such as FHE assembly and functional units, which in turn rely on an instruction set emulator. This layered architecture allows developers to work at different levels of abstraction, depending on their needs and expertise. The diagram implies that the complexity of FHE is managed through this layered approach, making it more accessible to application developers.

</details>

instruction that needs to be executed. The instruction set emulator also reads and writes HE data from an encrypted memory space, to process the user's data and return encrypted results to the encrypted memory space. The user, or any device that owns the user's secret key, will communicate with the cloud through an encrypted channel. The user provides all encrypted data to the cloud. The user can send unencrypted HE instructions to the cloud through a secure channel. The user is also responsible for resolving branch directions for the cloud, based on the encrypted branch taken/not-taken result provided by the cloud.

Fig. 4: Cloud service model

<details>

<summary>Image 4 Details</summary>

### Visual Description

## System Diagram: HE Instruction and Data Flow

### Overview

The image is a system diagram illustrating the flow of Homomorphic Encryption (HE) instructions and data between a user, an emulator, and cloud storage. The diagram highlights the interaction between local and cloud-based components.

### Components/Axes

* **Cloud:** A rectangular box encompassing "HE Instruction", "Emulator", and "HE Data".

* **Local:** A label indicating the location of the "User" component.

* **HE Instruction:** A yellow rectangle labeled "HE Instruction".

* **Emulator:** A blue rectangle labeled "Emulator".

* **HE Data:** A yellow rectangle labeled "HE Data".

* **User:** A green rectangle labeled "User".

* **Arrows:** Indicate the direction of data flow between components.

### Detailed Analysis

* **HE Instruction:** Located within the "Cloud" boundary, on the left side. An arrow points from "HE Instruction" to "Emulator".

* **Emulator:** Located within the "Cloud" boundary, in the center. Arrows point to and from "HE Instruction", "HE Data", and "User".

* **HE Data:** Located within the "Cloud" boundary, below the "Emulator". An arrow points from "Emulator" to "HE Data".

* **User:** Located outside the "Cloud" boundary, on the right side, labeled as "Local". An arrow points from "User" to "Emulator" and from "Emulator" to "User".

### Key Observations

* The "Emulator" acts as a central hub, interacting with all other components.

* "HE Instruction" and "HE Data" are both located within the "Cloud".

* The "User" is located "Local" and interacts with the "Emulator" in the "Cloud".

### Interpretation

The diagram depicts a system where a user interacts with an emulator hosted in the cloud. The emulator processes homomorphic encryption instructions and data, likely for secure computation or storage. The user's interaction with the emulator suggests a scenario where data is processed in the cloud without being decrypted, maintaining privacy. The cloud contains the HE Instruction and HE Data, while the user is local.

</details>

## A. Data Processing

In actuality, the HE instructions and HE data can be text files or arrays of data bits stored in buffers, if sufficient memory is available. CryptoEmu employs a load-store architecture. All computations occur on virtual registers (vReg), where a vReg is an array of 32 encrypted data bits. The total number of vRegs is configurable, depending on the memory available on the machine. However, it is the compiler's responsibility to recycle vRegs and properly generate read/write addresses. A snippet of possible machine instructions is as follows:

```
LOAD R1 READ_ADDR1
LOAD R2 READ_ADDR2
ADD R0 R1, R2
STORE R0 WRITE_ADDR
```

Above, to perform a homomorphic addition, CryptoEmu fetches the LOAD instruction from the instruction memory. Because the instruction itself is in cleartext, CryptoEmu decodes the instruction, loads a piece of encrypted data from the HE data memory indexed by READ_ADDR1, and copies the encrypted data into vReg R1. Then, CryptoEmu increments its program counter by 4 bytes, reads the next LOAD instruction, and loads encrypted data from the HE data memory into vReg R2. After the two operands are ready, CryptoEmu invokes a 32-bit adder and passes R1 and R2 to it. The adder returns encrypted data in R0. Finally, CryptoEmu invokes the STORE operation and writes the R0 data into the HE data memory location pointed to by WRITE_ADDR. Under Assumption 2, the honest cloud could infer some user information from program execution because HE instructions are in cleartext. However, all user data stays encrypted and protected from malicious attackers. Vulnerabilities are discussed in §VII-C.
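The fetch, decode, and execute flow described above can be sketched on plaintext values. This is a minimal model under stated assumptions, not CryptoEmu's implementation: `Op`, `Instr`, `Emulator`, and `run_add_snippet` are hypothetical names, a `std::uint32_t` stands in for a vReg of 32 encrypted bits, and plain integer addition stands in for the homomorphic adder.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <utility>
#include <vector>

enum class Op { LOAD, STORE, ADD };

// Hypothetical decoded instruction: rd/rn/rm index vRegs, addr indexes data memory.
struct Instr {
    Op op;
    std::size_t rd;
    std::size_t rn;
    std::size_t rm;
    std::size_t addr;
};

struct Emulator {
    std::vector<std::uint32_t> vreg;  // plaintext stand-in for encrypted vRegs
    std::vector<std::uint32_t> mem;   // plaintext stand-in for HE data memory
    std::uint32_t pc = 0;             // byte address of the next instruction

    Emulator(std::size_t num_vregs, std::vector<std::uint32_t> data)
        : vreg(num_vregs, 0u), mem(std::move(data)) {}

    void run(const std::vector<Instr>& program) {
        while (pc / 4 < program.size()) {
            const Instr& in = program[pc / 4];  // fetch and decode (cleartext)
            switch (in.op) {
                case Op::LOAD:
                    assert(in.addr < mem.size());
                    vreg[in.rd] = mem[in.addr];
                    break;
                case Op::STORE:
                    assert(in.addr < mem.size());
                    mem[in.addr] = vreg[in.rd];
                    break;
                case Op::ADD:  // stand-in for the homomorphic 32-bit adder
                    vreg[in.rd] = vreg[in.rn] + vreg[in.rm];
                    break;
            }
            pc += 4;  // each instruction occupies 4 bytes
        }
    }
};

// Runs the four-instruction snippet on inputs a and b and returns the stored sum.
std::uint32_t run_add_snippet(std::uint32_t a, std::uint32_t b) {
    Emulator emu(4, std::vector<std::uint32_t>{a, b, 0u});
    const std::vector<Instr> program = {
        {Op::LOAD, 1, 0, 0, 0},   // LOAD  R1 READ_ADDR1
        {Op::LOAD, 2, 0, 0, 1},   // LOAD  R2 READ_ADDR2
        {Op::ADD, 0, 1, 2, 0},    // ADD   R0 R1, R2
        {Op::STORE, 0, 0, 0, 2},  // STORE R0 WRITE_ADDR
    };
    emu.run(program);
    return emu.mem[2];
}
```

Only the register and memory contents would be ciphertexts in the real emulator; the opcode, register indices, and addresses stay in cleartext, which is why decoding needs no key.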

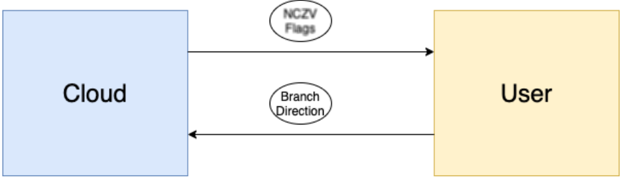

## B. Branch and Control Flow

CryptoEmu can perform a homomorphic comparison and generate the N (negative), Z (zero), C (unsigned overflow), and V (signed overflow) conditional flags. Based on the conditional flags, the branch instruction changes the value of the program counter and therefore changes the program flow. Because branches are homomorphically evaluated on the encrypted conditional flags, the branch direction is also encrypted. To solve this problem, CryptoEmu employs a client-server communication model from CryptoBlaze [15]. Through a secure communication channel, the cloud server sends an encrypted branch decision to a machine (client) that owns the user's private key. The client deciphers the encrypted branch decision and sends the branch decision, encrypted with the server's public key, back to the server. The cloud server finally decrypts the branch decision, and CryptoEmu moves forward with the branch direction. Under Assumption 2, the honest cloud will not use branch decision queries and binary search to crack the user's encrypted data, nor will the honest cloud infer user information from the user. The scenario in which Assumption 2 does not hold is discussed in §VII-C.
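For reference, the four flags can be written down for a plaintext 32-bit comparison that computes a - b. The sketch follows the ARM convention that C is set when the subtraction needs no borrow. `Flags`, `compare_flags`, and the three predicates are hypothetical names, and CryptoEmu would evaluate the equivalent logic gate by gate on encrypted bits, so the resulting flags stay encrypted.

```cpp
#include <cassert>
#include <cstdint>

struct Flags {
    bool n;  // negative: bit 31 of the result
    bool z;  // zero: the result is 0
    bool c;  // carry: no borrow occurred (ARM convention for subtraction)
    bool v;  // signed overflow
};

// Plaintext reference for the flags produced by comparing a with b (a - b).
Flags compare_flags(std::uint32_t a, std::uint32_t b) {
    const std::uint32_t r = a - b;  // wraps modulo 2^32
    Flags f;
    f.n = ((r >> 31) & 1u) != 0;
    f.z = (r == 0u);
    f.c = (a >= b);
    // Overflow: the operands differ in sign and the result's sign differs from a's.
    f.v = ((((a ^ b) & (a ^ r)) >> 31) & 1u) != 0;
    return f;
}

// Branch predicates derived from the flags.
bool is_equal(const Flags& f) { return f.z; }
bool unsigned_lower(const Flags& f) { return !f.c; }
bool signed_less_than(const Flags& f) { return f.n != f.v; }
```

A branch such as "branch if signed less than" then reduces to a single bit, and that bit is the encrypted branch decision the client is asked to decrypt.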

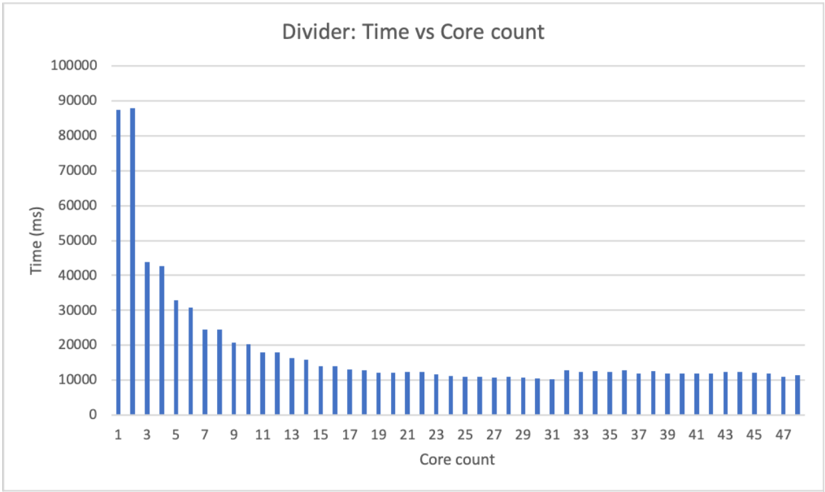

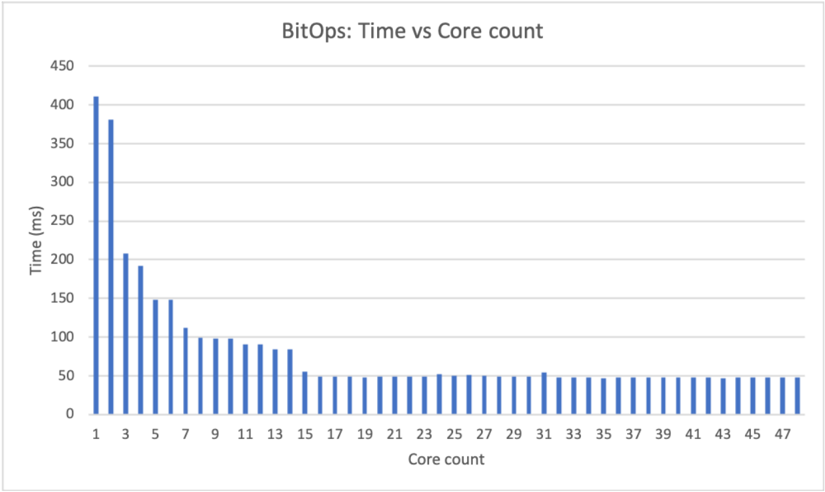

## V. DATA PROCESSING UNITS

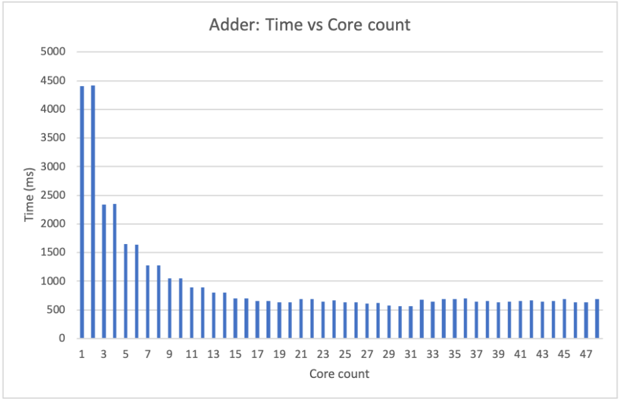

Data processing units are subroutines that manipulate encrypted data, including homomorphic binary arithmetic, homomorphic bitwise operations, and data movement. Under Assumption 1, data processing units are implemented with OpenMP [16] and are designed for parallel computing. If the data processing units exhaust all available cores, the rest of the operations will be serialized. We benchmarked the performance of the data processing units with 16-bit and 32-bit vReg sizes. Benchmarks are based on a computing node on Euler. The computing node has 2 NUMA nodes. Each NUMA node has two sockets, and each socket has a 12-core Intel Xeon CPU E5-2650 v3 @ 2.30GHz. The 48-core computing node runs CentOS Linux release 8.2.2004 with 128 GB memory.

## A. Load/Store Unit

CryptoEmu employs a load/store architecture. A LOAD instruction reads data from data memory; a STORE instruction writes data to data memory. The TFHE library [2] provides the API for load and store operations on FHE data. If data memory is presented as a file, CryptoEmu invokes the specific LD/ST subroutine, moves the file pointer to the right LD/ST address, and calls the appropriate file IO API, i.e.,

```

import_gate_bootstrapping_ciphertext_fromFile()

or

export_gate_bootstrapping_ciphertext_toFile()

```

Preferably, if the machine has available memory, the entire data file is loaded into a buffer, as this approach significantly improves the performance of LD/ST instructions. Table II shows LD/ST latencies for 16-bit and 32-bit vReg sizes. LD/ST on a buffer is significantly faster than LD/ST on a file. The speedup is even greater when the data file is large, because LD/ST on a file needs to use the fseek() function to access data at the address specified by HE instructions.

TABLE II: LD/ST latencies.

| Operation      | 16-bit (ms) | 32-bit (ms) |
|----------------|-------------|-------------|
| Load (file)    | 0.027029    | 0.0554521   |
| Store (file)   | 0.0127804   | 0.0276899   |
| Load (buffer)  | 0.0043463   | 0.00778488  |
| Store (buffer) | 0.0043381   | 0.0077692   |

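```
#pragma omp parallel sections num_threads(2)
{
    // Generate bit: g = a AND b (one bootstrapped gate).
    #pragma omp section
    {
        bootsAND(&g_o[0], &a_i[0], &b_i[0], bk);
    }
    // Propagate bit: p = a XOR b, evaluated concurrently on the second thread.
    #pragma omp section
    {
        bootsXOR(&p_o[0], &a_i[0], &b_i[0], bk);
    }
}
```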

Fig. 5: Parallel optimization for bitwise (g,p) calculation, get_gp()

## B. Adder

CryptoEmu supports a configurable adder unit of variable width. As for the ISA that CryptoEmu supports, adders are either 16-bit or 32-bit. Operating under an assumption that CryptoEmu runs on a host that supports multi-threading, the adder unit is implemented as a parallel prefix adder [17]. The parallel prefix adder has a three-stage structure: pre-calculation of generate and propagate bit; carry propagation; and sum computation. Each stage can be divided into sub-stages and can leverage a multi-core processor. Herein, we use the OpenMP [16] library to leverage parallel computing.

Stage 1: Propagate and generate calculation. Let $a$ and $b$ be the operands to the adder, and let $a[i]$ and $b[i]$ be the $i$-th bits of $a$ and $b$. In carry-lookahead logic, $a[i]$ and $b[i]$ generate a carry if $a[i]$ AND $b[i]$ is 1 and propagate a carry if $a[i]$ XOR $b[i]$ is 1. This calculation requires an FHE AND gate and an FHE XOR gate; see §III and Fig. 2 for gate bootstrapping. An OpenMP parallel region is created to handle two parallel sections. As shown in Fig. 5, CryptoEmu spawns two threads to execute the two OpenMP sections in parallel.

For a 16-bit adder, the get_gp() calculation is applied to every bit. This process is parallelizable: as shown in Fig. 6, CryptoEmu spawns 16 parallel sections [16], one per bit. Inside each parallel section, the code starts another parallel region that uses two threads. Because of nested parallelism, 32 threads in total are required to calculate every generate and propagate bit concurrently. If there is an insufficient number of cores, parallel sections will be serialized, which affects only the efficiency of the emulator.

Stage 2: Carry propagation. Let $G_i$ be the carry signal at the $i$-th bit, $P_i$ be the accumulated propagation bit at the $i$-th bit, and $g_i$ and $p_i$ be the outputs of the propagate and generate calculation. We define the operator $\odot$ such that

$$(g_x, p_x) \odot (g_y, p_y) = (g_x + p_x \cdot g_y,\; p_x \cdot p_y).$$

The carry signal $G_i$ and the accumulated propagation $P_i$ can be recursively defined as

$$(G_i, P_i) = (g_i, p_i) \odot (G_{i-1}, P_{i-1}), \quad \text{where } (G_0, P_0) = (g_0, p_0).$$

The above recursive formula is equivalent to

$$(G_{i:j}, P_{i:j}) = (G_{i:n}, P_{i:n}) \odot (G_{m:j}, P_{m:j}), \quad \text{where } i \geq j \text{ and } m \geq n.$$

Therefore, carry propagation can be reduced to a parallel scan problem. In CryptoEmu, we define a routine, get_carry(), to perform the $\odot$ operation. As shown in Fig. 7, the $\odot$ operation requires two FHE AND gates and one FHE OR gate. CryptoEmu spawns two threads to perform the operation in parallel.

For a 16-bit adder, we need 4 levels to compute the carry out from the most significant bit. As shown in Fig. 8, every two arrows that share an arrowhead represent one $\odot$ operation. The $\odot$ operations at the same level can be executed in parallel. In the case of a 16-bit adder, the maximum number of concurrent $\odot$ operations is 15, at level 1. Because of nested parallelism within the $\odot$ operation, the maximum number of threads required is 30. With a sufficient number of cores, the parallel scan reduces the carry propagation time from 16 times the $\odot$ operation latency to 4 times the $\odot$ operation latency.
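The level structure can be reproduced with a plaintext scan. The sketch below is a Kogge-Stone style inclusive scan over (g, p) pairs, assuming that this is the scan pattern drawn in Fig. 8. `GP`, `combine`, `make_gp`, `carry_scan`, and `carry_mask` are hypothetical names, and `std::uint8_t` values stand in for encrypted bits.

```cpp
#include <array>
#include <cassert>
#include <cstdint>
#include <vector>

constexpr int kWidth = 16;

// Plaintext stand-ins for one encrypted generate bit and one encrypted propagate bit.
struct GP {
    std::uint8_t g;
    std::uint8_t p;
};

// The carry operator: (g_x, p_x) o (g_y, p_y) = (g_x OR (p_x AND g_y), p_x AND p_y).
// On encrypted bits this costs two AND gates and one OR gate.
GP combine(GP x, GP y) {
    GP out;
    out.g = static_cast<std::uint8_t>(x.g | (x.p & y.g));
    out.p = static_cast<std::uint8_t>(x.p & y.p);
    return out;
}

// Stage 1 stand-in: g[i] = a[i] AND b[i], p[i] = a[i] XOR b[i].
std::array<GP, kWidth> make_gp(std::uint16_t a, std::uint16_t b) {
    std::array<GP, kWidth> gp{};
    for (int i = 0; i < kWidth; ++i) {
        const unsigned ai = (a >> i) & 1u;
        const unsigned bi = (b >> i) & 1u;
        gp[i].g = static_cast<std::uint8_t>(ai & bi);
        gp[i].p = static_cast<std::uint8_t>(ai ^ bi);
    }
    return gp;
}

// Inclusive scan with strides 1, 2, 4, 8. After the last level, gp[i].g is the
// carry out of bit i. ops_per_level records the operator evaluations per level.
std::array<GP, kWidth> carry_scan(std::array<GP, kWidth> gp,
                                  std::vector<int>& ops_per_level) {
    for (int stride = 1; stride < kWidth; stride *= 2) {
        std::array<GP, kWidth> next = gp;
        // Iterations are independent: each reads gp and writes a distinct next[i].
        #pragma omp parallel for
        for (int i = stride; i < kWidth; ++i) {
            next[i] = combine(gp[i], gp[i - stride]);
        }
        ops_per_level.push_back(kWidth - stride);
        gp = next;
    }
    return gp;
}

// Packs the carry-out bits of a + b into a mask (bit i = carry out of bit i).
std::uint16_t carry_mask(std::uint16_t a, std::uint16_t b) {
    std::vector<int> ops;
    const std::array<GP, kWidth> scanned = carry_scan(make_gp(a, b), ops);
    assert(ops.size() == 4);  // log2(16) levels
    std::uint16_t mask = 0;
    for (int i = 0; i < kWidth; ++i) {
        mask = static_cast<std::uint16_t>(mask | (scanned[i].g << i));
    }
    return mask;
}
```

The recorded operation counts are 15, 14, 12, and 8 for levels 1 through 4. At two threads per operation this gives 30, 28, 24, and 16 threads, consistent with the thread counts reported in the adder benchmark.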

Stage 3: Sum calculation. The last stage of the parallel prefix adder is sum calculation. Let $s_i$ be the sum bit at the $i$-th bit, $p_i$ be the propagation bit at the $i$-th bit, and $G_i$ be the carry signal at the $i$-th bit. Then

$$s_{i} = p_{i} \ \text{XOR} \ G_{i} \, .$$

One FHE XOR gate is needed to calculate a 1-bit sum, so a 16-bit adder needs 16 FHE XOR gates. All FHE XOR evaluations are independent and can therefore be executed in parallel. In total, 16 threads are required for the best parallel optimization of the sum calculation stage.
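The three stages can be put together in a plaintext sketch. It is illustrative only: `prefix_add` is a hypothetical name, `bool` stands in for ciphertext bits, and all loops are sequential. One assumption matters for indexing: the code takes the scan output at bit $i$ to be the carry out of that bit, so the carry entering bit $i$ in the sum stage is the scan output of bit $i-1$ (and 0 for bit 0).

```cpp
#include <array>
#include <cstdint>

constexpr int N = 16;

std::uint16_t prefix_add(std::uint16_t a, std::uint16_t b) {
    std::array<bool, N> g{}, p{};
    // Stage 1: per-bit generate (AND) and propagate (XOR).
    for (int i = 0; i < N; ++i) {
        const bool ai = (a >> i) & 1, bi = (b >> i) & 1;
        g[i] = ai && bi;
        p[i] = ai != bi;
    }
    // Stage 2: parallel-scan pattern over (G, P), log2(N) levels.
    std::array<bool, N> G = g, P = p;
    for (int d = 1; d < N; d *= 2) {
        std::array<bool, N> Gn = G, Pn = P;
        for (int i = d; i < N; ++i) {
            Gn[i] = G[i] || (P[i] && G[i - d]);
            Pn[i] = P[i] && P[i - d];
        }
        G = Gn;
        P = Pn;
    }
    // Stage 3: one XOR per sum bit, using the carry entering that bit.
    std::uint16_t s = 0;
    for (int i = 0; i < N; ++i) {
        const bool carry_in = (i == 0) ? false : G[i - 1];
        if (p[i] != carry_in) s |= static_cast<std::uint16_t>(1u << i);
    }
    return s;
}
```

For example, `prefix_add(1234, 4321)` yields 5555, and `prefix_add(0xFFFF, 1)` wraps around to 0 because the final carry out is discarded.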

```
// One section per bit; each get_gp() call opens its own two-thread region.
#pragma omp parallel sections num_threads(N)
{
    #pragma omp section
    {
        get_gp(&g[0], &p[0], &a[0], &b[0], bk);
    }
    #pragma omp section
    {
        get_gp(&g[1], &p[1], &a[1], &b[1], bk);
    }
    ...
    #pragma omp section
    {
        get_gp(&g[14], &p[14], &a[14], &b[14], bk);
    }
    #pragma omp section
    {
        get_gp(&g[15], &p[15], &a[15], &b[15], bk);
    }
}
```

Fig. 7: Parallel optimization for bitwise carry calculation, get_carry()

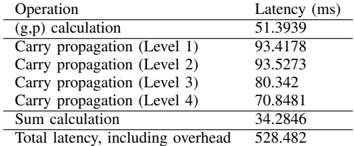

a) Benchmark: the 16-bit adder. Table III shows benchmarking results for a 16-bit adder unit executed on the target machine described earlier in the document. When parallelized, the 1-bit get_gp() shown in Fig. 5 has a latency of one FHE gate, around 25ms, as shown in Table I. Ideally, if sufficient cores are available and parallelization adds no overhead, the (g,p) calculation runs 16 get_gp() calls concurrently, and the total latency is 25ms. In reality, the 16-bit (g,p) calculation uses 32 threads and takes 51.39ms to complete due to parallel computing overhead.

For carry propagation calculation, the 1-bit get_carry() shown in Fig. 7 has a latency of two FHE gates, around 50ms, when parallelized. In an ideal scenario, each level of carry propagation runs all of its get_carry() calls in parallel, so the latency of each level should be around 50ms. In reality, the 16-bit carry propagation calculation uses 30 threads on level 1 and takes 93.42ms. Level 2 uses 28 threads and takes 93.53ms. Level 3 uses 24 threads and takes 80.34ms. Finally, level 4 uses 16 threads and takes 70.85ms.

Fig. 8: Parallel scan for carry signals

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Carry Lookahead Adder Diagram

### Overview

The image is a schematic diagram illustrating the structure of a carry-lookahead adder. It shows the propagation and generation of carry signals across multiple stages of addition. The diagram uses blocks to represent the generation and propagation of carry signals, and arrows to indicate the flow of these signals.

### Components/Axes

The diagram consists of several rows of blocks, each representing a stage in the carry-lookahead adder. Each block contains two variables, G and P, representing the generate and propagate signals, respectively. The subscripts indicate the bit positions involved in the calculation. Arrows connect the blocks, showing the flow of carry signals.

* **Blocks:** Each block contains "G" and "P" values, representing carry generate and propagate signals. The subscripts indicate the bit positions involved.

* **Arrows:** Arrows indicate the flow of carry signals between blocks.

* **Rows:** The diagram has 5 rows, each representing a stage of carry generation and propagation.

### Detailed Analysis or ### Content Details

**Row 1:**

* The first row contains blocks labeled as "G15, P15" to "G0, P0".

* The blocks are arranged horizontally from left to right.

* The subscripts decrease from 15 to 0.

**Row 2:**

* The second row contains blocks labeled as "G15:14, P15:14" to "G0, P0".

* The blocks are arranged horizontally from left to right.

* The subscripts decrease from 15:14 to 0.

* Arrows connect each block in the first row to a block in the second row. The arrows point diagonally downwards and to the right.

**Row 3:**

* The third row contains blocks labeled as "G15:12, P15:12" to "G0, P0".

* The blocks are arranged horizontally from left to right.

* The subscripts decrease from 15:12 to 0.

* Arrows connect each block in the second row to a block in the third row. The arrows point diagonally downwards and to the right.

**Row 4:**

* The fourth row contains blocks labeled as "G15:8, P15:8" to "G0, P0".

* The blocks are arranged horizontally from left to right.

* The subscripts decrease from 15:8 to 0.

* Arrows connect each block in the third row to a block in the fourth row. The arrows point diagonally downwards and to the right.

**Row 5:**

* The fifth row contains blocks labeled as "G15, P15" to "G0, P0".

* The blocks are arranged horizontally from left to right.

* The subscripts decrease from 15 to 0.

* Arrows connect each block in the fourth row to a block in the fifth row. The arrows point downwards.

**Specific Block Labels:**

* Row 1: G15, P15; G14, P14; G13, P13; G12, P12; G11, P11; G10, P10; G9, P9; G8, P8; G7, P7; G6, P6; G5, P5; G4, P4; G3, P3; G2, P2; G1, P1; G0, P0

* Row 2: G15:14, P15:14; G14:13, P14:13; G13:12, P13:12; G12:11, P12:11; G11:10, P11:10; G10:9, P10:9; G9:8, P9:8; G8:7, P8:7; G7:6, P7:6; G6:5, P6:5; G5:4, P5:4; G4:3, P4:3; G3:2, P3:2; G2:1, P2:1; G1, P1; G0, P0

* Row 3: G15:12, P15:12; G14:11, P14:11; G13:10, P13:10; G12:9, P12:9; G11:8, P11:8; G10:7, P10:7; G9:6, P9:6; G8:5, P8:5; G7:4, P7:4; G6:3, P6:3; G5:2, P5:2; G4:1, P4:1; G3, P3; G2, P2; G1, P1; G0, P0

* Row 4: G15:8, P15:8; G14:7, P14:7; G13:6, P13:6; G12:5, P12:5; G11:4, P11:4; G10:3, P10:3; G9:2, P9:2; G8:1, P8:1; G7, P7; G6, P6; G5, P5; G4, P4; G3, P3; G2, P2; G1, P1; G0, P0

* Row 5: G15, P15; G14, P14; G13, P13; G12, P12; G11, P11; G10, P10; G9, P9; G8, P8; G7, P7; G6, P6; G5, P5; G4, P4; G3, P3; G2, P2; G1, P1; G0, P0

### Key Observations

* The diagram illustrates a hierarchical structure for carry generation and propagation.

* The carry signals are combined across multiple bit positions in each stage.

* The final row represents the carry signals for each bit position.

### Interpretation

The diagram shows the architecture of a carry-lookahead adder, which is a type of adder used in digital circuits to improve speed by reducing the delay associated with carry propagation. The diagram illustrates how carry signals are generated and propagated across multiple stages, allowing for faster addition compared to ripple-carry adders. The hierarchical structure allows for parallel computation of carry signals, which significantly reduces the overall addition time.

</details>

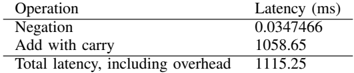

TABLE III: 16-bit adder latency


| Operation | Latency (ms) |
|-----------------------------------|----------------|
| (g,p) calculation | 51.3939 |
| Carry propagation (Level 1) | 93.4178 |
| Carry propagation (Level 2) | 93.5273 |
| Carry propagation (Level 3) | 80.342 |
| Carry propagation (Level 4) | 70.8481 |
| Sum calculation | 34.2846 |
| Total latency, including overhead | 528.482 |

For sum calculation, 1-bit sum calculation uses one FHE XOR gate, with latency around 25ms. Ideally, if CryptoEmu runs all 16 XOR gates in parallel without parallel computing overhead, the latency for 16-bit sum calculation should be around 25ms. In reality, due to OpenMP overhead, the 16-bit sum calculation uses 16 threads and takes 34.28ms to complete.

In total, a 16-bit adder's latency is 486.66ms. This result includes latency for all stages, plus overheads like variable declaration, memory allocation, and temporary variable manipulation.
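As a rough cross-check, using only the approximate latencies quoted in this section (about 25ms for one bootstrapped gate and about 50ms for one $\odot$ operation), the overhead-free critical path of the 16-bit adder consists of one (g,p) stage, four scan levels, and one sum stage:

$$T_{\text{ideal}} \approx 25 + 4 \times 50 + 25 = 250\ \text{ms} ,$$

while the six measured stage latencies in Table III add up to

$$51.39 + 93.42 + 93.53 + 80.34 + 70.85 + 34.28 = 423.81\ \text{ms} .$$

The difference between these two figures is the per-stage parallelization overhead discussed above.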

b) Benchmark: the 32-bit adder. Table IV shows benchmarking results for a 32-bit adder unit executed on the target machine described earlier in the document. Note that get_gp() and get_carry() have the same performance as in the 16-bit adder. If sufficient cores are available, in the absence of OpenMP overhead, the (g,p) calculation should run 32 get_gp() calls concurrently for the 32-bit adder, at a total latency of 25ms. In reality, the 32-bit (g,p) calculation uses 32 threads and takes 94.92ms to complete.

TABLE IV: 32-bit adder latency

| Operation | Latency (ms) |
|-----------------------------------|----------------|
| (g,p) calculation | 94.9246 |
| Carry propagation (Level 1) | 147.451 |
| Carry propagation (Level 2) | 133.389 |
| Carry propagation (Level 3) | 127.331 |
| Carry propagation (Level 4) | 112.268 |
| Carry propagation (Level 5) | 91.5781 |
| Sum calculation | 49.0098 |
| Total latency, including overhead | 941.12 |

For carry propagation calculation, if sufficient cores are available, each level of carry propagation should run all of its get_carry() calls in parallel. Without parallel computing overhead, the latency of each level should be around 50ms. Level 1 of the 32-bit carry propagation calculation uses 62 threads. Because the platform on which CryptoEmu is tested has only 48 cores, the level 1 calculation is serialized and takes 147.45ms to complete. Level 2 uses 60 threads and, like level 1, is serialized; it takes 133.39ms. Level 3 uses 56 threads, is also serialized, and takes 127.33ms to complete. Level 4 uses 48 threads, so it is possible to run every get_carry() in parallel on our 48-core workstation; it takes 112.27ms. Level 5 uses 32 threads and is able to execute all get_carry() calls concurrently; it takes 91.58ms to complete.
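The thread counts in this paragraph are consistent with a scan in which level $k$ combines entries that are $2^{k-1}$ positions apart, with two threads per $\odot$ operation. This is an inference from the reported numbers and from Fig. 8, not a statement about the CryptoEmu source. Numbering levels from 1 as in Table IV, for an $N$-bit adder:

$$\text{threads}(k) = 2 \, ( N - 2^{k-1} ) , \qquad k = 1, \dots, \log_2 N .$$

For $N = 32$ this gives 62, 60, 56, 48, and 32 threads, so only the first three levels exceed the 48 cores of the test machine. For $N = 16$ it gives 30, 28, 24, and 16.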

For sum calculation, the 32-bit adder spawns 32 threads in parallel to perform FHE XOR operation if sufficient cores are available. The latency for the 32-bit sum calculation should be around 25ms. In reality, the 32-bit sum calculation uses 32 threads and takes 49ms to complete.

In total, the 32-bit adder's latency is 941.12ms. This result includes latency for all stages, plus overheads like variable declaration, memory allocation, and temporary variable manipulation.

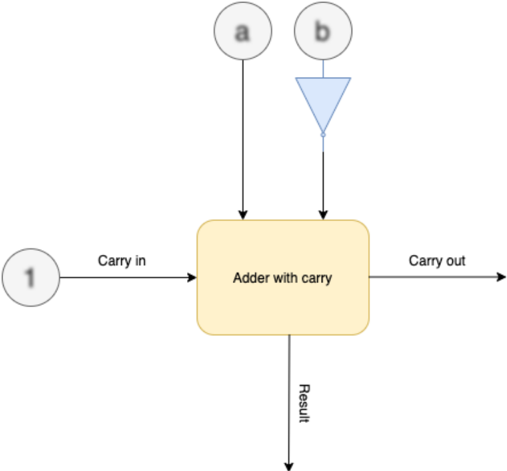

## C. Subtractor

The subtractor unit supports variable ALU size. CryptoEmu supports subtractors with 16-bit operands or 32-bit operands. Let a be the minuend, b be the subtrahend, and diff be the difference. The formula for two's complement subtraction is:

$$a + \text{NOT}(b) + 1 = \text{diff} \, .$$

As shown in Fig. 9, CryptoEmu reuses the adder units in §V-B to perform homomorphic subtractions. On the critical path, extra homomorphic NOT gates are used to create the subtrahend's complement. For a subtractor with N-bit operands, N homomorphic NOT operations need to be applied to the subtrahend. While all FHE NOT gates could be evaluated in parallel, we showed in §III that FHE NOT gates do not need bootstrapping and are relatively fast (around 0.0007ms latency per gate) compared to bootstrapped FHE gates. Therefore, parallel execution is not necessary. Instead, the homomorphic NOT operation is implemented in an iterative for-loop, as shown below:

    for (int i = 0; i < N; ++i)
        bootsNOT(&b_neg[i], &b[i], bk);

In addition to the homomorphic NOT operation on the subtrahend, the carry out from bit 0 needs to be evaluated with an OR gate because the carry in is 1. Therefore, the adder in §V-B is extended to take a carry-in bit. When sufficient cores are available on the machine, a subtractor unit adds negation and carry bit calculation to the adder unit's critical path.
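A plaintext sketch of this scheme follows. It is an illustration under assumptions: `prefix_sub` is a hypothetical name, `bool` stands in for ciphertext bits, and the carry scan is the same pattern assumed for the adder. With the carry in fixed to 1, the carry out of bit 0 is $g_0 + p_0 \cdot 1$, which is the OR gate mentioned above.

```cpp
#include <array>
#include <cstdint>

constexpr int N = 16;

std::uint16_t prefix_sub(std::uint16_t a, std::uint16_t b) {
    std::array<bool, N> nb{}, g{}, p{};
    // Negation: a plain sequential loop, since NOT is cheap.
    for (int i = 0; i < N; ++i) nb[i] = !((b >> i) & 1);
    for (int i = 0; i < N; ++i) {
        const bool ai = (a >> i) & 1;
        g[i] = ai && nb[i];
        p[i] = ai != nb[i];
    }
    std::array<bool, N> G = g, P = p;
    G[0] = g[0] || p[0];  // carry in is 1, so carry out of bit 0 is g0 OR p0
    for (int d = 1; d < N; d *= 2) {
        std::array<bool, N> Gn = G, Pn = P;
        for (int i = d; i < N; ++i) {
            Gn[i] = G[i] || (P[i] && G[i - d]);
            Pn[i] = P[i] && P[i - d];
        }
        G = Gn;
        P = Pn;
    }
    std::uint16_t diff = 0;
    for (int i = 0; i < N; ++i) {
        const bool carry_in = (i == 0) ? true : G[i - 1];
        if (p[i] != carry_in) diff |= static_cast<std::uint16_t>(1u << i);
    }
    return diff;
}
```

For example, `prefix_sub(5555, 1234)` yields 4321, and `prefix_sub(0, 1)` yields 0xFFFF, the 16-bit two's complement encoding of -1.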

Fig. 9: Subtractor architecture. Blurry text in the figure denotes encrypted data.

<details>

<summary>Image 7 Details</summary>

### Visual Description

## Diagram: Adder with Carry

### Overview

The image is a diagram of an adder with carry, illustrating the inputs and outputs of the adder. The diagram shows the flow of data into and out of the adder, including carry-in, carry-out, and the result.

### Components/Axes

* **Inputs:**

* 'a' - Input 'a' to the adder. Represented by a circle with the letter 'a' inside.

* 'b' - Input 'b' to the adder. Represented by a circle with the letter 'b' inside, connected to a blue triangle pointing downwards.

* 'Carry in' - Carry-in input to the adder. Represented by a circle with the number '1' inside.

* **Process:**

* 'Adder with carry' - The main processing block, represented by a yellow rounded rectangle.

* **Outputs:**

* 'Carry out' - Carry-out output from the adder.

* 'Result' - The result of the addition.

### Detailed Analysis

* The diagram shows three inputs to the "Adder with carry" block: 'a', 'b', and 'Carry in'.

* The 'a' input comes directly from a source labeled 'a'.

* The 'b' input comes from a source labeled 'b', which is connected to a blue triangle pointing downwards.

* The 'Carry in' input comes from a source labeled '1'.

* The "Adder with carry" block has two outputs: 'Carry out' and 'Result'.

* The 'Carry out' output goes to the right.

* The 'Result' output goes downwards.

### Key Observations

* The diagram illustrates the basic components and flow of data in an adder with carry.

* The blue triangle pointing downwards connected to 'b' is likely an inverter.

### Interpretation

The diagram represents a full adder circuit. The 'a' and 'b' inputs are the two numbers being added. The 'Carry in' input is the carry from the previous stage of addition. The 'Adder with carry' block performs the addition and generates two outputs: the 'Result' (sum) and the 'Carry out' (carry to the next stage). The blue triangle pointing downwards connected to 'b' likely represents an inverter, suggesting that the 'b' input might be inverted before being fed into the adder.

</details>

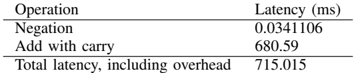

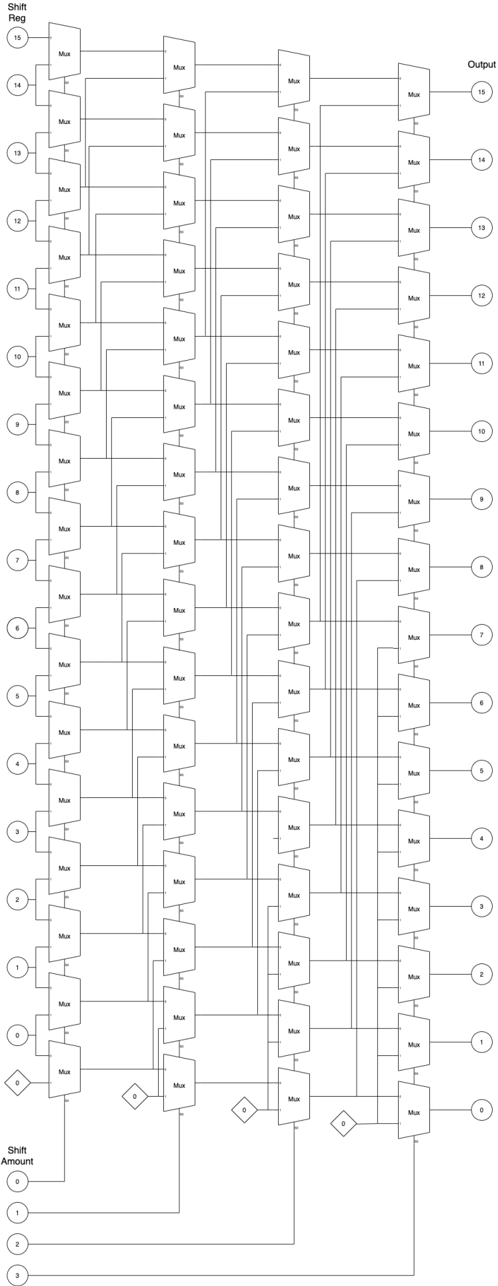

a) Benchmark. Tables V and VI report benchmark results for the 16-bit and 32-bit subtractors on the target machine. Negation of the subtrahend takes a trivial amount of time to complete. The homomorphic addition is the most time-consuming operation in the subtractor unit. It is a little slower than the homomorphic additions in §V-B because the adder needs extra bootstrapped FHE gates to process the carry in and calculate the carry out from sum bit 0.

TABLE V: 16-bit subtractor latency


| Operation | Latency (ms) |
|-----------------------------------|----------------|
| Negation | 0.0341106 |
| Add with carry | 680.59 |
| Total latency, including overhead | 715.015 |

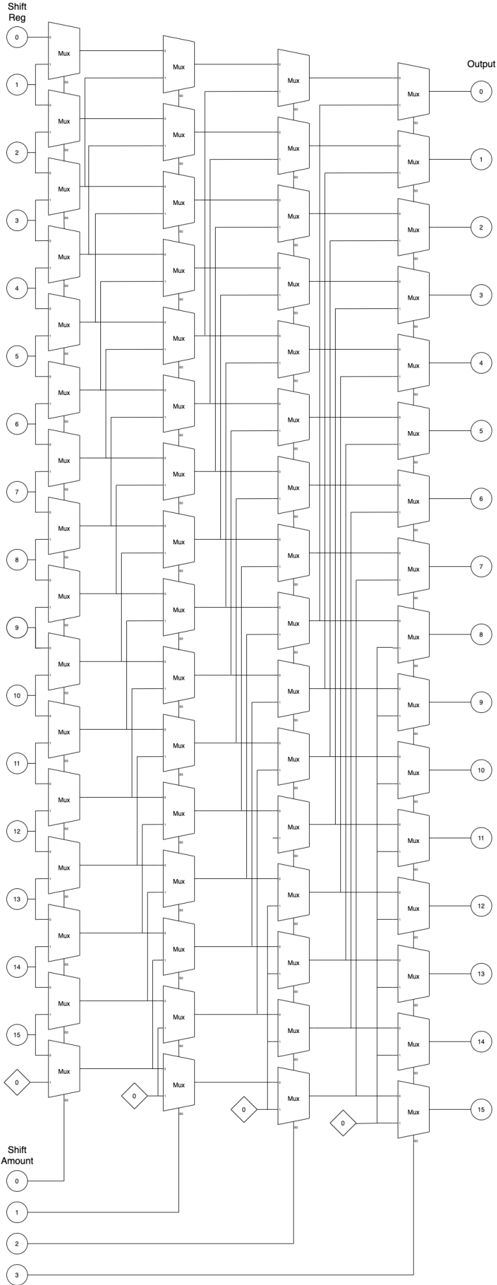

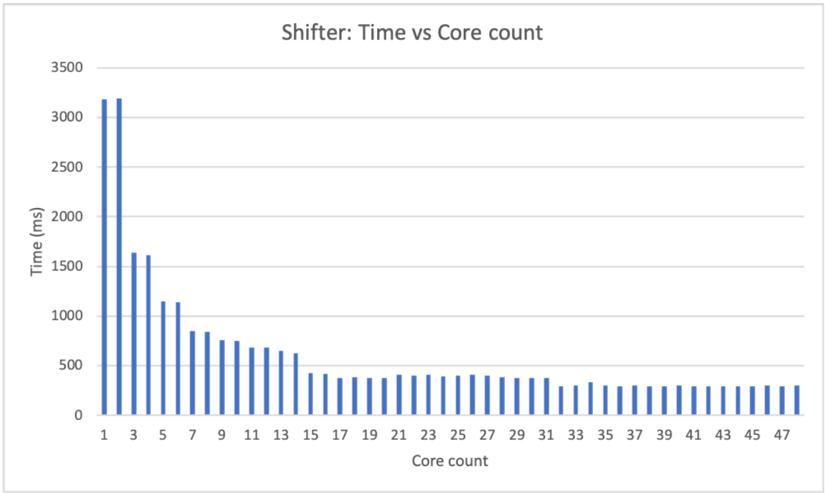

## D. Shifter

CryptoEmu supports three types of shifters: logic left shift (LLS), logic right shift (LRS), and arithmetic right shift (ARS). Each shifter type has two modes: immediate mode and register mode. In immediate mode, the shift amount is in cleartext. For example, the following instruction shifts the encrypted data in R0 left by 1 bit and assigns the shifted value to R0.

<details>

<summary>Image 9 Details</summary>

### Visual Description

Icon/Small Image (238x17)

</details>

This instruction is usually issued by the cloud to process user data. The shift immediate implementation is trivial. The shifter calls the bootsCOPY() API to move every bit in the shift direction by the specified amount. The vacated LSBs or MSBs are assigned an encrypted constant using the bootsCONSTANT() API call. Because neither bootsCOPY() nor bootsCONSTANT() needs to be bootstrapped, they are fast operations; see Table I. Therefore, an iterative loop is used for shifting, and parallel optimization is unnecessary.
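A plaintext sketch of the immediate-mode left shift is shown below. It is illustrative only: `Reg` and `lls_imm` are hypothetical names, and `bool` values stand in for ciphertext bits. The copy branch corresponds to the copy call described above, and the constant branch to the encrypted-constant call.

```cpp
#include <array>
#include <cassert>
#include <cstddef>

constexpr std::size_t N = 16;
using Reg = std::array<bool, N>;  // index 0 is the LSB

// Logic left shift by a cleartext amount, one bit per loop iteration.
Reg lls_imm(const Reg& in, std::size_t amt) {
    assert(amt <= N);
    Reg out{};
    for (std::size_t i = 0; i < N; ++i) {
        if (i >= amt)
            out[i] = in[i - amt];  // copy an existing bit
        else
            out[i] = false;        // fill vacated low bits with constant 0
    }
    return out;
}
```

Because the shift amount is known in the clear, the loop needs no data-dependent selection, which is why no bootstrapped gate is involved.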

In register mode, the shift amount is encrypted data stored in an input register. For example, the following instruction shifts the encrypted data in R0 left by the value stored in R1 and assigns the shifted value to R0.

```
LLS R0 R0 R1
```

Because the shifting amount stored in R1 is encrypted, the shifter can't simply move all encrypted bits left/right by a certain amount. The shifter is implemented as a barrel shifter, with parallel computing enabled.

a) Logic left shift. Figure 10 shows the architecture for the 16-bit LLS. In the figure, numbers in the bubbles denote encrypted data in the shift register and the shift amount register. Numbers in the diamonds denote an encrypted constant value generated by the bootsCONSTANT() API. The 16-bit LLS has four stages. In each stage, based on the encrypted shift amount, the FHE MUX homomorphically selects an encrypted bit from the shift register. In the end, the LLS outputs the encrypted shifted data. FHE MUX is an elementary logic unit provided by the TFHE library [2]. FHE MUX needs to be bootstrapped twice, and its latency is around 50ms. Therefore, it is reasonable to spawn multiple threads to execute all MUX selects of a stage in parallel. For the 16-bit LLS, each stage needs 16 threads to perform a homomorphic MUX select, as shown in the following code:

```
#pragma omp parallel sections num_threads(16)
{
    #pragma omp section
    {
        bootsMUX(&out[0], &amt[0], &zero[0], &in[0], bk);
    }
    #pragma omp section
    {
        bootsMUX(&out[1], &amt[0], &in[0], &in[1], bk);
    }
    ...
    #pragma omp section
    {
        bootsMUX(&out[14], &amt[0], &in[13], &in[14], bk);
    }
    #pragma omp section
    {
        bootsMUX(&out[15], &amt[0], &in[14], &in[15], bk);
    }
}
```

TABLE VI: 32-bit subtractor latency


| Operation | Latency (ms) |
|-----------------------------------|----------------|
| Negation | 0.0347466 |
| Add with carry | 1058.65 |
| Total latency, including overhead | 1115.25 |

Fig. 10: LLS architecture

<details>

<summary>Image 11 Details</summary>

### Visual Description

## Diagram: Barrel Shifter Architecture

### Overview

The image depicts a barrel shifter architecture, a digital circuit that can shift a data word by a specified number of bits without using any sequential logic, only combinational logic. The diagram shows a 16-bit barrel shifter implemented using multiple stages of multiplexers (Mux). The shift amount is controlled by the input at the bottom, and the output is the shifted data at the top.

### Components/Axes

* **Shift Reg:** (Shift Register) - Labeled on the top-left. Represents the input data to be shifted. Values range from 0 to 15, arranged vertically.

* **Output:** Labeled on the top-right. Represents the output data after shifting. Values range from 0 to 15, arranged vertically.

* **Shift Amount:** Labeled on the bottom-left. Represents the amount by which the input data is shifted. Values range from 0 to 3, arranged vertically.

* **Mux:** (Multiplexer) - Labeled on each multiplexer component.

* **Diamonds with "0":** These represent a constant input of 0 to the multiplexers.

### Detailed Analysis

The diagram consists of several columns of multiplexers. Each multiplexer selects between two inputs based on the shift amount. The shift amount inputs (0-3) control the selection of the multiplexers in each stage.

* **Input Stage (Shift Reg):** The input data is labeled from 0 to 15, arranged vertically on the left side. Each input is connected to the first column of multiplexers.

* **Multiplexer Stages:** There are multiple columns of multiplexers. Each multiplexer has two inputs: one from the previous stage and one from a shifted position. The shift amount determines which input is selected.

* **Shift Amount Control:** The shift amount inputs (0, 1, 2, 3) at the bottom control the selection of the multiplexers in each stage. The diamond shapes with "0" inside represent a constant input of 0 to the multiplexers.

* **Output Stage:** The output data is labeled from 0 to 15, arranged vertically on the right side. Each output is connected to the last column of multiplexers.

**Detailed Connections:**

* **Shift Amount 0:** When the shift amount is 0, the multiplexers select the input directly from the previous stage, resulting in no shift.

* **Shift Amount 1:** When the shift amount is 1, the multiplexers select the input shifted by one position.

* **Shift Amount 2:** When the shift amount is 2, the multiplexers select the input shifted by two positions.

* **Shift Amount 3:** The diagram does not explicitly show the connections for shift amount 3, but it can be inferred that the multiplexers would select the input shifted by three positions.

### Key Observations

* The diagram illustrates a 16-bit barrel shifter architecture.

* The shift amount is controlled by the input at the bottom.

* The output is the shifted data at the top.

* The multiplexers select between two inputs based on the shift amount.

* The diamond shapes with "0" inside represent a constant input of 0 to the multiplexers.

### Interpretation

The barrel shifter architecture allows for efficient shifting of data by a specified number of bits. The multiplexers select between different shifted positions based on the shift amount. This architecture is commonly used in digital circuits for various applications, such as data processing, signal processing, and cryptography. The use of multiplexers allows for a fast and efficient implementation of the shift operation. The diagram provides a clear representation of the barrel shifter architecture and its components.

</details>

In a parallel implementation with zero overhead, each stage should have one FHE MUX latency of around 50ms. Therefore, in an ideal scenario, four stages would have a latency of around 200ms.
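The four-stage structure can be sketched on plaintext bits as follows. This is an illustration, not the CryptoEmu source: `Reg`, `mux`, and `lls_reg` are hypothetical names, `bool` stands in for ciphertext bits, and the per-bit loop is sequential even though its iterations are independent. The `mux` helper uses the same selector-first argument order as the bootsMUX calls in this section.

```cpp
#include <array>
#include <cstddef>

constexpr std::size_t N = 16;
constexpr std::size_t STAGES = 4;  // log2(N)
using Reg = std::array<bool, N>;   // index 0 is the LSB

// Returns a when sel is set, otherwise b.
bool mux(bool sel, bool a, bool b) { return sel ? a : b; }

// Barrel shifter: stage s shifts by 2^s when bit s of the amount is set.
Reg lls_reg(Reg in, const std::array<bool, STAGES>& amt) {
    for (std::size_t s = 0; s < STAGES; ++s) {
        const std::size_t d = std::size_t{1} << s;
        Reg out{};
        for (std::size_t i = 0; i < N; ++i) {
            const bool shifted = (i >= d) ? in[i - d] : false;  // 0 shifts in
            out[i] = mux(amt[s], shifted, in[i]);
        }
        in = out;
    }
    return in;
}
```

Every output bit goes through exactly one MUX per stage regardless of the shift amount, so the work done does not depend on the encrypted value being shifted by.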

b) Benchmark: Logic left shift. Table VII shows the benchmark results for the 16-bit LLS on the target platform. Each stage spawns 16 threads to run all FHE MUX evaluations in parallel, with a latency of 50-90ms. Because of parallel computing overhead, this is between one and two FHE MUX latencies. In total, it takes around 290ms to carry out a homomorphic LLS operation on 16-bit encrypted data.

TABLE VII: 16-bit LLS latency

| Operation | Latency (ms) |
|-----------------------------------|----------------|
| Mux select (Stage 1) | 87.3295 |
| Mux select (Stage 2) | 82.2656 |
| Mux select (Stage 3) | 76.6871 |
| Mux select (Stage 4) | 55.5396 |
| Total latency, including overhead | 287.92 |

Table VIII shows the benchmark result for the 32-bit LLS. Each stage spawns 32 threads and takes around 90-100ms to complete. In total, it takes around 450-500ms for a homomorphic LLS operation on 32-bit encrypted data.

TABLE VIII: 32-bit LLS latency

| Operation | Latency (ms) |
|-----------------------------------|----------------|
| Mux select (Stage 1) | 101.92 |
| Mux select (Stage 2) | 89.7695 |
| Mux select (Stage 3) | 98.8634 |
| Mux select (Stage 4) | 91.3939 |
| Mux select (Stage 5) | 91.8491 |
| Total latency, including overhead | 474.739 |

c) Logic right shift/Arithmetic right shift. LRS has an architecture that is similar to that of LLS. Figure 11 shows the architecture for the 16-bit LRS. Compared to Fig. 10, the only difference between LLS and LRS is the bit order of the input register and output register. To reuse the LRS architecture for ARS, one simply passes the MSB of the shift register as the shift-in value, shown as the numbers in the diamonds in Fig. 11. The LRS and ARS shifter implementation is similar to that of LLS. For the 16-bit LRS/ARS, CryptoEmu spawns 16 parallel threads to perform a homomorphic MUX select at each stage.
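The relationship between LRS and ARS can be sketched on plaintext bits. Again this is only an illustration: `Reg`, `mux`, and `rs_reg` are hypothetical names, and `bool` stands in for ciphertext bits. The only difference between the two modes is the shift-in value: a constant 0 for LRS, and the original MSB for ARS.

```cpp
#include <array>
#include <cstddef>

constexpr std::size_t N = 16;
constexpr std::size_t STAGES = 4;  // log2(N)
using Reg = std::array<bool, N>;   // index 0 is the LSB

// Returns a when sel is set, otherwise b.
bool mux(bool sel, bool a, bool b) { return sel ? a : b; }

// Right barrel shifter; arithmetic == true replicates the sign bit.
Reg rs_reg(Reg in, const std::array<bool, STAGES>& amt, bool arithmetic) {
    const bool fill = arithmetic ? in[N - 1] : false;  // shift-in value
    for (std::size_t s = 0; s < STAGES; ++s) {
        const std::size_t d = std::size_t{1} << s;
        Reg out{};
        for (std::size_t i = 0; i < N; ++i) {
            const bool shifted = (i + d < N) ? in[i + d] : fill;
            out[i] = mux(amt[s], shifted, in[i]);
        }
        in = out;
    }
    return in;
}
```

Shifting a register with bits 15 and 2 set right by 2 leaves bits 13 and 0 set in logic mode, and bits 15, 14, 13, and 0 set in arithmetic mode.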

Table IX shows the benchmark result for the 16-bit LRS and ARS. LRS and ARS have similar performance. At each stage, LRS/ARS utilizes 16 threads, and each stage takes 50-90ms to complete. Because of parallel computing overhead, single-stage latency is between one and two FHE MUX latencies. In total, the 16-bit LRS/ARS latency is around 290-300ms.

TABLE IX: 16-bit LRS, ARS latency

| Operation | LRS Latency (ms) | ARS Latency (ms) |
|-----------------------------------|--------------------|--------------------|
| Mux select (Stage 1) | 88.1408 | 87.7689 |
| Mux select (Stage 2) | 85.5154 | 79.6416 |
| Mux select (Stage 3) | 75.0295 | 75.2938 |
| Mux select (Stage 4) | 55.9639 | 54.9246 |
| Total latency, including overhead | 290.517 | 296.124 |

Table X shows the benchmark result for the 32-bit LRS and ARS. The 32-bit LRS/ARS has five stages. Each stage creates 32 parallel threads to evaluate FHE MUX and takes roughly 90-106ms to complete. Single-stage latency is around two FHE MUX latencies. In total, the 32-bit LRS/ARS takes around 470-500ms to complete a homomorphic LRS/ARS operation on 32-bit encrypted data.

TABLE X: 32-bit LRS, ARS latency

| Operation | LRS Latency (ms) | ARS Latency (ms) |
|-----------------------------------|--------------------|--------------------|
| Mux select (Level 1) | 104.33 | 106.132 |
| Mux select (Level 2) | 104.75 | 95.3209 |
| Mux select (Level 3) | 90.2377 | 90.1364 |
| Mux select (Level 4) | 89.7183 | 90.5383 |
| Mux select (Level 5) | 90.6315 | 94.4654 |
| Total latency, including overhead | 472.896 | 491.787 |

Fig. 11: LRS architecture

<details>

<summary>Image 12 Details</summary>

### Visual Description

## Diagram: Barrel Shifter

### Overview

The image is a schematic diagram of a 16-bit barrel shifter implemented using multiplexers (Mux). It shows how the input bits are shifted based on the shift amount. The diagram consists of multiple stages of multiplexers arranged in columns, with connections indicating the data flow for different shift amounts.

### Components/Axes

* **Shift Reg:** (Top-left) Input register with 16 bits, labeled 0 to 15.

* **Output:** (Top-right) Output register with 16 bits, labeled 0 to 15.

* **Mux:** Multiplexer component, repeated throughout the diagram.

* **Shift Amount:** (Bottom-left) Shift amount input, labeled 0 to 3.

* **Diamonds:** Control signals for the multiplexers, labeled "0".

### Detailed Analysis

The diagram shows a multi-stage arrangement of multiplexers. Each stage corresponds to a different power of 2 shift (1, 2, 4, 8).

* **Input (Shift Reg):** The input is a 16-bit register, with each bit labeled from 0 to 15, positioned vertically on the left side of the diagram. Each bit is connected to the first column of multiplexers.

* **Multiplexers (Mux):** The diagram uses multiple columns of multiplexers. Each multiplexer has two inputs and one output. The control signal for each multiplexer determines which input is passed to the output. The multiplexers are arranged in a grid-like structure.

* **Shift Amount:** The shift amount is controlled by the input at the bottom of the diagram, labeled 0 to 3. These inputs control the selection lines of the multiplexers.

* **Control Signals:** The diamond shapes labeled "0" represent control signals that determine the selection of the multiplexers.

* **Output:** The output is a 16-bit register, with each bit labeled from 0 to 15, positioned vertically on the right side of the diagram. Each bit is the output of the last column of multiplexers.

**Data Flow:**

The data flows from left to right. The input bits from the "Shift Reg" are fed into the first column of multiplexers. Based on the "Shift Amount" and the control signals, the multiplexers select the appropriate input and pass it to the next stage. This process continues through each column of multiplexers until the final shifted output is obtained at the "Output" register.

**Specific Connections:**

* The first column of multiplexers takes the input from the "Shift Reg".

* The second column of multiplexers takes the output from the first column.

* The third column of multiplexers takes the output from the second column.

* The fourth column of multiplexers takes the output from the third column.

* The output of the fourth column is the final shifted output.

### Key Observations

* The diagram illustrates a 16-bit barrel shifter.

* The shifter is implemented using multiplexers.

* The shift amount is controlled by the input at the bottom of the diagram.

* The control signals determine the selection of the multiplexers.

* The data flows from left to right through the multiplexer stages.

### Interpretation

The diagram demonstrates how a barrel shifter can be implemented using multiplexers. A barrel shifter is a digital circuit that can shift a data word by a specified number of bits without using any sequential logic, making it faster than shift registers for multi-bit shifts. The arrangement of multiplexers allows for efficient shifting of the input bits based on the shift amount. The control signals determine the specific connections and data flow required to achieve the desired shift. The diagram provides a clear visual representation of the internal workings of a barrel shifter, highlighting the role of multiplexers in achieving the shifting functionality.

</details>

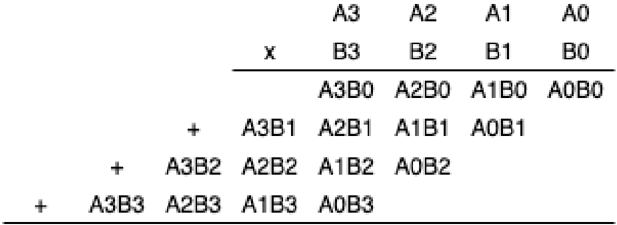

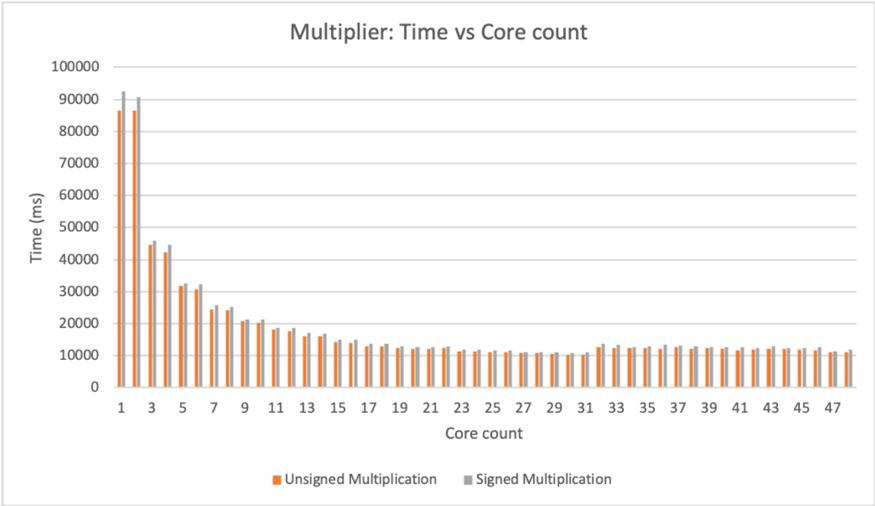

## E. Multiplier

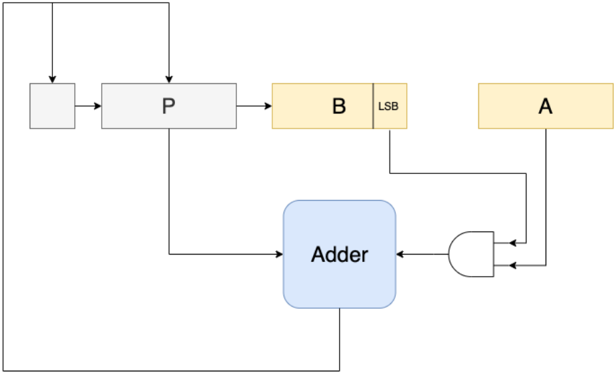

Design consideration. Binary multiplication can be treated as a summation of partial products [18]; see Fig. 12. The adder units described in §V-B can therefore be reused to sum the partial products. Because summing all partial products is a sum reduction, it can be parallelized.

Fig. 12: Binary multiplication

<details>

<summary>Image 13 Details</summary>

### Visual Description

## Multiplication Diagram: Binary Multiplication

### Overview

The image depicts the multiplication of two 4-bit binary numbers, A and B, using the standard multiplication algorithm. It shows the partial products generated by multiplying each bit of B with the entire number A, and then summing these partial products to obtain the final result.

### Components/Axes

* **Top Row:** A3, A2, A1, A0 (representing the bits of number A, from most significant to least significant)

* **Second Row:** x, B3, B2, B1, B0 (representing the bits of number B, from most significant to least significant, with 'x' indicating multiplication)

* **Partial Products:**

* A3B0, A2B0, A1B0, A0B0 (A multiplied by B0)

* A3B1, A2B1, A1B1, A0B1 (A multiplied by B1)

* A3B2, A2B2, A1B2, A0B2 (A multiplied by B2)

* A3B3, A2B3, A1B3, A0B3 (A multiplied by B3)

* **'+' signs:** Indicate the addition of the partial products.

### Detailed Analysis

The diagram illustrates the multiplication process as follows:

1. **First Partial Product:** A is multiplied by B0, resulting in A3B0, A2B0, A1B0, A0B0.

2. **Second Partial Product:** A is multiplied by B1, resulting in A3B1, A2B1, A1B1, A0B1. This partial product is shifted one position to the left.

3. **Third Partial Product:** A is multiplied by B2, resulting in A3B2, A2B2, A1B2, A0B2. This partial product is shifted two positions to the left.

4. **Fourth Partial Product:** A is multiplied by B3, resulting in A3B3, A2B3, A1B3, A0B3. This partial product is shifted three positions to the left.

The '+' signs indicate that these partial products are then added together to obtain the final product.

### Key Observations

* The diagram clearly shows the bitwise multiplication and the necessary left shifts for each partial product.

* The representation uses symbolic notation (A3B0, etc.) to represent the AND operation between the corresponding bits of A and B.

### Interpretation

The diagram demonstrates the standard algorithm for binary multiplication. Each partial product is generated by performing a bitwise AND operation between the bits of the multiplicand (A) and a single bit of the multiplier (B). The partial products are then shifted left by an amount corresponding to the bit position of the multiplier and added together. This process is fundamental to digital arithmetic and is implemented in hardware multipliers within computer systems. The diagram provides a visual representation of this process, making it easier to understand the underlying logic.

</details>
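As a plaintext illustration of the partial-product view in Fig. 12, a minimal Python sketch is given here. It is not CryptoEmu's code: the function names `partial_products` and `tree_reduce_sum` are hypothetical, ordinary integers stand in for ciphertexts, and `+` stands in for the homomorphic adder of §V-B. The sketch builds the shifted partial products and sums them pairwise, so that all additions within one level are independent and could, in principle, run in parallel.

```python
def partial_products(a: int, b: int, n: int) -> list:
    """Return the n shifted partial products of a * b (plaintext model).

    Row i is (a << i) when bit i of b is 1, and 0 otherwise, which is the
    bitwise AND of a with b[i] followed by a left shift by i positions.
    """
    return [(a << i) if (b >> i) & 1 else 0 for i in range(n)]


def tree_reduce_sum(values: list) -> tuple:
    """Sum values pairwise; return (total, number of reduction levels).

    The additions inside one level do not depend on each other, so they
    are the candidates for parallel execution.
    """
    levels = 0
    while len(values) > 1:
        paired = [values[i] + values[i + 1] for i in range(0, len(values) - 1, 2)]
        if len(values) % 2:          # carry an unpaired value to the next level
            paired.append(values[-1])
        values = paired
        levels += 1
    return values[0], levels


total, levels = tree_reduce_sum(partial_products(40000, 50000, 16))
print(total, levels)
```

For 16-bit operands the first level contains eight independent additions, and the reduction finishes in four levels.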

However, the best parallel optimization cannot be achieved on our 48-core computing node. For a 16-bit wide multiplier, the product is a 32-bit encrypted value, so a 32-bit adder is required to carry out the homomorphic addition. Each 32-bit adder has a peak usage of 64 threads: 31 threads with nested parallel (g,p) calculation that uses two threads. Thus, on the server used (with 48 cores), even a single 32-bit adder has to be partially serialized. In the 16-bit multiplier's parallel sum reduction, at most eight summations occur in parallel and each summation uses a 32-bit adder, so the peak usage is 8 × 64 = 512 threads. For a 32-bit multiplier, the peak usage is 2048 threads. Homomorphic multiplication is therefore computationally demanding, and the server does not have sufficient resources to perform all operations in parallel. As a result, homomorphic multiplication shows suboptimal performance on the server used in this project.
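The thread counts quoted above follow from simple arithmetic, which the Python sketch below reproduces. It rests on two simplifying assumptions that are not taken from the implementation: an adder's peak usage is modeled as two threads per result bit (which matches the 64-thread figure for a 32-bit adder), and the first level of the reduction is taken to run N/2 additions at once. The function names are hypothetical.

```python
CORES = 48  # core count of the workstation described in the text


def adder_peak_threads(width: int, threads_per_bit: int = 2) -> int:
    """Assumed peak thread usage of one adder of the given bit width."""
    return width * threads_per_bit


def multiplier_peak_threads(n: int) -> int:
    """Assumed peak thread usage of a fully parallel N-bit multiplier.

    The product is 2N bits wide, so each summation uses a 2N-bit adder,
    and the widest reduction level runs N/2 summations at once.
    """
    parallel_sums = n // 2
    return parallel_sums * adder_peak_threads(2 * n)


for n in (16, 32):
    peak = multiplier_peak_threads(n)
    print(f"{n}-bit multiplier: {peak} threads, {peak / CORES:.1f}x the core count")
```

Under these assumptions the model gives 512 threads for the 16-bit multiplier and 2048 threads for the 32-bit multiplier, both far above the 48 available cores.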

Based on the design consideration above, CryptoEmu implements a carry-save multiplier [19] that supports variable ALU width. The carry-save multiplier uses the adder described in §V-B to sum the partial products in series.

- 1) Unsigned multiplication: Figure 13 shows the multiplier's architecture. For a 16-bit multiplier, A and B are 16-bit operands stored in vRegs. P is an intermediate 16-bit vReg that stores partial products. The adder is a 16-bit adder with a carry-in bit; see §V-B.

On startup, vReg P is initialized to an encrypted 0 using the TFHE library's bootsCONSTANT() API. Next, we enter an iterative loop. In each iteration, all bits in vReg A are homomorphically ANDed with the LSB of vReg B, and the result is used as one operand of the 16-bit adder. The data stored in vReg P is passed to the adder as the second operand. The adder performs the homomorphic addition, writes the sum back to vReg P, and outputs an encrypted carry-out bit. We then right-shift the carry-out bit, vReg P, and vReg B by one position as a single concatenated value, which ends the iteration. The loop runs 16 times, and the final product is a 32-bit result stored in vReg P and vReg B. The pseudo-code below shows the 16-bit binary multiplication algorithm.

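```
P = 0;                                   // encrypted zero
for 16 times:
    // add A to P only when the LSB of B is 1
    (P, carry_out) = P + (B[0] ? A : 0);
    // shift the concatenated value right by one bit
    [carry_out, P, B] = [carry_out, P, B] >> 1;
return [P, B];                           // 32-bit product
```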
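The pseudo-code above can be checked on unencrypted integers. The following Python sketch is a plaintext model only, written under the assumption that ordinary integer operations stand in for the homomorphic gates and the §V-B adder; the name `shift_add_multiply` is hypothetical and does not come from CryptoEmu.

```python
def shift_add_multiply(a: int, b: int, n: int = 16) -> int:
    """Plaintext model of the n-bit shift-and-add loop; returns a 2n-bit product."""
    mask = (1 << n) - 1
    a &= mask
    b &= mask
    p = 0                                    # models vReg P, initialized to 0
    for _ in range(n):
        addend = a if (b & 1) else 0         # A AND (LSB of B), bit by bit
        total = p + addend                   # n-bit addition, n+1 bits of result
        carry_out, p = total >> n, total & mask
        # Right-shift the concatenation [carry_out, P, B] by one bit:
        # the LSB of P moves into the MSB of B, carry_out into the MSB of P.
        b = ((p & 1) << (n - 1)) | (b >> 1)
        p = (carry_out << (n - 1)) | (p >> 1)
    return (p << n) | b                      # high half in P, low half in B


print(shift_add_multiply(3, 5, n=4))         # 15
print(shift_add_multiply(65535, 65535))      # 4294836225
```

Note that B is consumed as the loop proceeds: its multiplier bits are shifted out on the right while the low half of the product is shifted in on the left, which is why the result ends up in the pair [P, B].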

In the implementation, an N-bit multiplier uses N threads to evaluate all the FHE AND gates concurrently. The adder is already optimized for parallel execution. The rest of the multiplication subroutine executes sequentially, which makes the multiplier a computationally expensive unit in CryptoEmu.
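The one-thread-per-gate structure described above can be sketched as follows. This is an illustration under stated assumptions, not the emulator's code: `and_gate` is a plaintext stand-in for a single homomorphic AND gate, `masked_operand` is a hypothetical name, and Python's thread pool merely stands in for whatever threading mechanism the emulator uses.

```python
from concurrent.futures import ThreadPoolExecutor


def and_gate(x: int, y: int) -> int:
    """Plaintext stand-in for one homomorphic AND gate on single bits."""
    return x & y


def masked_operand(a_bits: list, b_lsb: int) -> list:
    """AND every bit of A with the LSB of B, one worker thread per bit.

    The N gate evaluations are independent, so they can be issued
    concurrently; pool.map returns the results in input order.
    """
    with ThreadPoolExecutor(max_workers=len(a_bits)) as pool:
        return list(pool.map(lambda bit: and_gate(bit, b_lsb), a_bits))


a_bits = [1, 0, 1, 1]                 # bits of A, in any fixed order
print(masked_operand(a_bits, 1))      # [1, 0, 1, 1]
print(masked_operand(a_bits, 0))      # [0, 0, 0, 0]
```

The sketch shows only the structure of the parallel step. With real ciphertexts each gate is expensive enough that dedicating one thread per gate is worthwhile, whereas the plaintext AND here is trivial.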

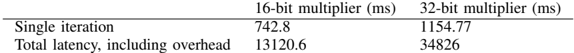

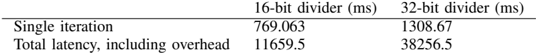

- a) Benchmark: Unsigned multiplication: Table XI shows benchmarks for 16-bit and 32-bit unsigned multiplication. A single pass of partial product summation takes around 716 ms and 927 ms, respectively. The total latency is roughly N times the latency of a single iteration because the summation operations run in sequence.

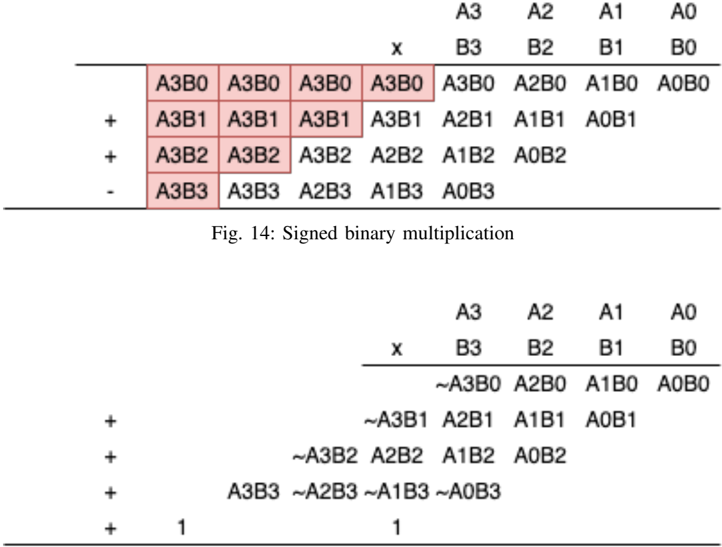

- 2) Signed multiplication: Signed multiplication is implemented using the carry-save multiplier in Figure 13, with slight modifications. For N-bit signed multiplication, partial products need to be sign-extended to 2N bits. Figure 14 shows the partial product summation for 4-bit signed multiplication. This algorithm requires a 2N-bit adder and a 2N-bit subtractor for

TABLE XI: Unsigned multiplication latency

| | 16-bit multiplier (ms) | 32-bit multiplier (ms) |
|-----------------------------------|--------------------------|--------------------------|
| Single iteration | 715.812 | 926.79 |
| Total latency, including overhead | 11316.8 | 36929.2 |
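The N-times-single-iteration estimate can be compared against the totals reported in Table XI with a few lines of Python. The numbers are copied from the table; the function name `estimate_total_ms` is hypothetical, and the sketch makes no claim about where the remaining time is spent.

```python
# (single-iteration latency in ms, reported total latency in ms), from Table XI
reported = {16: (715.812, 11316.8), 32: (926.79, 36929.2)}


def estimate_total_ms(n_bits: int, single_iteration_ms: float) -> float:
    """Estimate total latency as N sequential iterations."""
    return n_bits * single_iteration_ms


for n_bits, (single_ms, total_ms) in reported.items():
    estimate = estimate_total_ms(n_bits, single_ms)
    print(f"{n_bits}-bit: estimate {estimate:.1f} ms, "
          f"reported {total_ms:.1f} ms, ratio {total_ms / estimate:.2f}")
```

The estimate lands close to the reported total for the 16-bit multiplier and below it for the 32-bit multiplier, whose reported total includes overhead.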

Fig. 13: Carry save multiplier

<details>

<summary>Image 14 Details</summary>

### Visual Description

## Diagram: Data Flow Diagram

### Overview

The image is a data flow diagram illustrating a computational process. It shows several components (registers, adder, AND gate) and the flow of data between them.

### Components/Axes

* **Registers:**

* A (yellow)

* B (yellow)

* P (gray)

* Unnamed register (gray)

* **Adder:** (blue)

* **AND Gate:** (white)

* **LSB:** Label indicating the Least Significant Bit of register B.

### Detailed Analysis

1. **Data Flow:**

* Data flows from the unnamed register to register P.

* Data flows from register P to register B.

* Data flows from the LSB of register B to the AND gate.

* Data flows from register A to the AND gate.

* The output of the AND gate flows to the Adder.

* Data flows from register P to the Adder.

* The output of the Adder flows back to the unnamed register and to register P.

* Data flows from register B to register A.

### Key Observations

* The diagram shows a feedback loop involving the Adder, the unnamed register, and register P.

* The LSB of register B and register A are inputs to an AND gate, whose output is fed into the Adder.

### Interpretation

The diagram represents a computational process, possibly an arithmetic operation or a state machine. The registers A, B, and P likely hold data, while the Adder performs an addition operation. The AND gate seems to control the input to the Adder based on the LSB of register B and the value in register A. The feedback loop suggests an iterative process where the output of the Adder is used in subsequent calculations. The data flow from register B to register A suggests a data transfer or assignment operation.

</details>

N-bit signed multiplication. The algorithm is further simplified with a 'magic number' [19]. Figure 15 shows the simplified signed multiplication. Based on this algorithm, the unsigned carry-save multiplier is modified to support signed multiplication.
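The sign-extended summation for signed multiplication can be checked on plaintext integers. The Python sketch below is an illustration under stated assumptions, not the emulator's code: `signed_multiply` is a hypothetical name, integers stand in for encrypted vRegs, and it covers only the scheme of sign-extending each partial product to 2N bits, adding the rows, and subtracting the row for the most significant bit of B. It does not model the 'magic number' simplification.

```python
def signed_multiply(a: int, b: int, n: int = 4) -> int:
    """Multiply two n-bit two's-complement integers via 2n-bit partial products."""
    width = 2 * n
    mask = (1 << width) - 1
    a_ext = a & mask                 # A sign-extended to 2n bits (two's complement)
    acc = 0
    for i in range(n):
        b_i = (b >> i) & 1           # bit i of B in two's complement
        row = ((a_ext << i) & mask) if b_i else 0
        if i == n - 1:
            # The MSB of B carries weight -2^(n-1), so its row is subtracted.
            acc = (acc - row) & mask
        else:
            acc = (acc + row) & mask
    # Reinterpret the 2n-bit pattern as a signed integer.
    return acc - (1 << width) if acc >> (width - 1) else acc


print(signed_multiply(-3, -2))       # 6
print(signed_multiply(-8, 7))        # -56
```

All arithmetic is kept to 2N bits, which is why the scheme needs both a 2N-bit adder and a 2N-bit subtractor.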

Fig. 14: Signed binary multiplication

| | | | | | A3 | A2 | A1 | A0 |
|---|------|------|------|------|------|------|------|------|
| × | | | | | B3 | B2 | B1 | B0 |
| | A3B0 | A3B0 | A3B0 | A3B0 | A3B0 | A2B0 | A1B0 | A0B0 |
| + | A3B1 | A3B1 | A3B1 | A3B1 | A2B1 | A1B1 | A0B1 | |
| + | A3B2 | A3B2 | A3B2 | A2B2 | A1B2 | A0B2 | | |
| - | A3B3 | A3B3 | A2B3 | A1B3 | A0B3 | | | |

Fig. 15: Simplified signed binary multiplication

<details>

<summary>Image 15 Details</summary>

### Visual Description

## Diagram: Signed Binary Multiplication

### Overview

The image presents two diagrams illustrating signed binary multiplication. The first diagram shows a multiplication process with terms like A3B0, A3B1, etc., some of which are highlighted in red. The second diagram shows a similar multiplication process, but with some terms complemented (indicated by the tilde symbol ~).

### Components/Axes

**First Diagram:**

* **Top Row:** A3, A2, A1, A0 (likely representing bits of one number)

* **Second Row:** x, B3, B2, B1, B0 (likely representing bits of the other number)

* **Subsequent Rows:** Products of the bits, with offsets similar to manual multiplication.

* **Operators:** +, - (indicating addition and subtraction)

* **Highlighted Cells:** A3B0, A3B1, A3B2, A3B3 (in red)

**Second Diagram:**

* **Top Row:** A3, A2, A1, A0

* **Second Row:** x, B3, B2, B1, B0

* **Subsequent Rows:** Products of the bits, with offsets. Some terms are complemented.

* **Operators:** +

* **Constants:** 1

**Caption:**

* Fig. 14: Signed binary multiplication

### Detailed Analysis

**First Diagram:**

* The first row of products is: A3B0, A2B0, A1B0, A0B0

* The second row of products is: A3B1, A2B1, A1B1, A0B1

* The third row of products is: A3B2, A2B2, A1B2, A0B2

* The fourth row of products is: A3B3, A2B3, A1B3, A0B3

* The operators are +, +, - for the second, third, and fourth rows respectively.

* The terms A3B0, A3B1, A3B2, and A3B3 in the leftmost columns are highlighted in red.

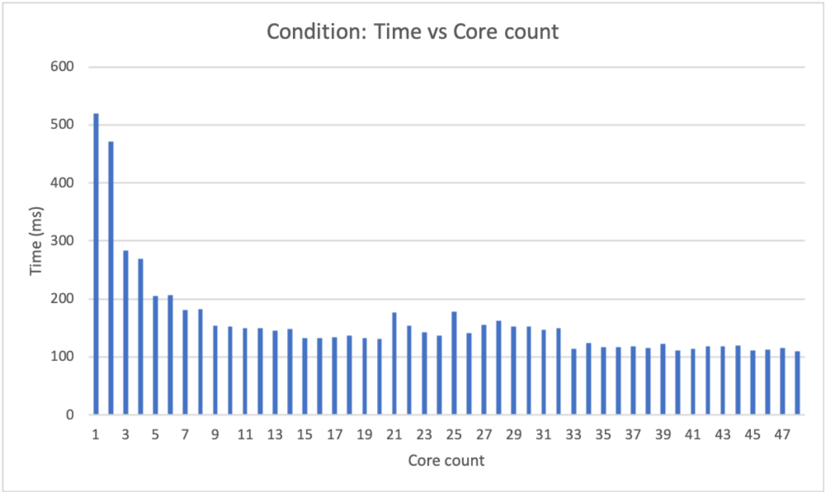

**Second Diagram:**