# Deep Sound Field Reconstruction in Real Rooms: Introducing the ISOBEL Sound Field Dataset

**Authors**: Miklas Strøm Kristoffersen, Martin Bo Møller, Pablo Martínez-Nuevo, Jan Østergaard


Miklas Strøm Kristoffersen,¹,² Martin Bo Møller,¹ Pablo Martínez-Nuevo,¹ and Jan Østergaard²

¹ Research Department, Bang & Olufsen a/s, Struer, Denmark

² AI and Sound Section, Department of Electronic Systems, Aalborg University, Aalborg, Denmark

Knowledge of loudspeaker responses is useful in a number of applications where a sound system is located inside a room that alters the listening experience depending on the position within the room. Acquisition of sound fields for sound sources located in reverberant rooms can be achieved through labor-intensive measurements of impulse response functions covering the room, or alternatively by means of reconstruction methods, which can potentially require significantly fewer measurements. This paper extends evaluations of sound field reconstruction at low frequencies by introducing a dataset with measurements from four real rooms. The ISOBEL Sound Field dataset is publicly available and aims to bridge the gap between synthetic and real-world sound fields in rectangular rooms. Moreover, the paper builds on a recent deep learning-based method for sound field reconstruction using a very low number of microphones, and proposes an approach for modeling both magnitude and phase response in a U-Net-like neural network architecture. The complex-valued sound field reconstruction demonstrates that the estimated room transfer functions are accurate enough to allow for personalized sound zones with contrast ratios comparable to those obtained with ideal room transfer functions, using 15 microphones below 150 Hz.

The following article has been submitted to the Journal of the Acoustical Society of America. After it is published, it will be found at http://asa.scitation.org/journal/jas.

## I. INTRODUCTION

The response of a sound system in a room primarily varies with the room itself, the position of the loudspeakers, and the listening position. In order to deliver the intended sound system behavior to listeners, it is necessary to know about and compensate for this effect. Applications include, among others, room equalization (Cecchi et al., 2018; Karjalainen et al., 2001; Radlovic et al., 2000), virtual reality sound field navigation (Tylka and Choueiri, 2015), source localization (Nowakowski et al., 2017), and spatial sound field reproduction over predefined or dynamic regions of space, also referred to as sound zones (Betlehem et al., 2015; Møller and Østergaard, 2020). One approach is to measure the loudspeaker response at the desired listening locations and adjust the sound system accordingly. However, measuring impulse responses on a sufficiently fine-grained grid across an entire room quickly becomes time-consuming and requires extensive manual labor. Instead, methods have been developed to estimate impulse responses in a room from a limited number of actual measurements. These methods are also referred to as sound field reconstruction and virtual microphones. Reconstructing room impulse responses at positions that have not been measured directly is an active research field that has been explored in several studies (Ajdler et al., 2006; Antonello et al., 2017; Fernandez-Grande, 2019; Mignot et al., 2014; Verburg and Fernandez-Grande, 2018; Vu and Lissek, 2020).

Machine learning, and in particular deep learning, is currently receiving widespread attention across scientific domains. As an example within room acoustics, it has been used to estimate acoustical parameters of rooms (Genovese et al., 2019; Yu and Kleijn, 2021). In recent work, deep learning-based methods were introduced to sound field reconstruction in reverberant rectangular rooms (Lluís et al., 2020). This data-driven approach is able to learn sound field magnitude characteristics from large volumes of simulated data without prior information about room characteristics, such as room dimensions and reverberation time. The method is computationally efficient and works with irregularly and arbitrarily distributed microphones, for which there is no requirement of knowing absolute locations in Euclidean space, in contrast to previous solutions. Furthermore, the reconstruction works with a very low number of microphones, making real-world implementation feasible. To assess real-world sound field reconstruction, the method is evaluated using measurements in a single room (Lluís et al., 2020). However, it is still unknown how much knowledge is transferred from the simulated to the real environment, as well as how well the model generalizes to different real rooms. This is a general problem in deep learning applications that rely on labor-intensive data collection, which is our motivation for publishing an open access dataset of real-world sound fields in a diverse set of rooms.

This paper studies sound field reconstruction at low frequencies in rectangular rooms with a low number of microphones. The main contributions are:

- This paper introduces a sound field dataset, which is publicly available for development and evaluation of sound field reconstruction methods in four real rooms. It is our hope that the ISOBEL Sound Field dataset will help the community in benchmarking and comparing state-of-the-art results.

- We assess the real-world performance of deep learning-based sound field magnitude reconstruction trained on simulated sound fields. For this purpose, we consider low frequencies, since low-frequency room modes can significantly alter the listening experience. Furthermore, we are interested in using a very low number of microphones.

- Moreover, we extend the deep learning-based sound field reconstruction to cover complex-valued inputs, i.e. both the magnitude and the phase of a sound field. Evaluation is performed in both simulated and real rooms, where a performance gap is observed. We argue why complex sound field reconstruction may have more difficulties in transferring useful knowledge from synthetic to real data.

- Lastly, we demonstrate the application of complex-valued sound field reconstruction within the field of sound zone control. Specifically, it is shown that sound fields reconstructed from as few as five microphones serve as valuable inputs to acoustic contrast control.

The paper is organized as follows: Section II introduces the concept of sound field reconstruction. Details of measurements from real rooms are presented in Section III. In Section IV, we focus on the problem of reconstructing the magnitude of sound fields, while Section V extends the model to complex-valued sound fields. Finally, Section VI investigates the application of sound zones through sound field reconstruction.

## II. SOUND FIELD RECONSTRUCTION

Our approach to the sound field reconstruction problem is based on the observation that the acoustic pressure in a room can be described on a three-dimensional regular grid of points, defining a three-dimensional discrete function. The approach was introduced in (Lluís et al., 2020) specifically for the purpose of magnitude reconstruction. First, let $\mathcal{R} = [0, l_x] \times [0, l_y] \times [0, l_z]$ denote a rectangular room, where $l_x, l_y, l_z > 0$ are the length, width, and height of the room, respectively. Given such a room, we define the grid as a discrete set of coordinates $\mathcal{D}_o$. However, for the sake of simplicity, we reduce the three-dimensional problem to a two-dimensional reconstruction on horizontal planes. The two-dimensional grid with a constant height $z_o$ is defined as

$$\mathcal{D}_o = \left\{ \left( i \frac{l_x}{I-1},\; j \frac{l_y}{J-1},\; z_o \right) \right\}_{i,j}$$

for $z_o \in [0, l_z]$, $i = 0, \ldots, I-1$, $j = 0, \ldots, J-1$, and integers $I, J \geq 2$. Note, though, that the dataset collected for this study, which we will introduce in Section III, does in fact contain multiple horizontal planes at different heights. We leave the investigation of three-dimensional reconstruction for future work, and frame the core challenge of this paper as estimation of sound pressure in two-dimensional horizontal planes.

The function that we seek to reconstruct on this grid is the Fourier transform of the sound field in a frequency band that covers the low frequencies. The complex-valued frequency-domain sound field calculated using the Fourier transform is given by

$$s(\mathbf{r}, \omega) = \int_{\mathbb{R}} p(\mathbf{r}, t)\, e^{-j\omega t}\, dt,$$

where $\omega \in \mathbb{R}$ is a given excitation frequency, and $p(\mathbf{r}, t)$ denotes the spatio-temporal sound field with $\mathbf{r} \in \mathcal{R}$. We refer to the real and imaginary parts of the sound field as $s_{\text{Re}}(\mathbf{r}, \omega)$ and $s_{\text{Im}}(\mathbf{r}, \omega)$, respectively. Note that $s$ is defined as the magnitude of the Fourier transform in (Lluís et al., 2020). Instead, for magnitude reconstruction, we introduce the magnitude of the sound field

$$s_{\text{mag}}(\mathbf{r}, \omega) = |s(\mathbf{r}, \omega)|$$

for $\omega \in \mathbb{R}$ and $\mathbf{r} \in \mathcal{R}$.
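As an illustration of the frequency-domain sound field and its magnitude, the following minimal sketch evaluates the Fourier integral of a sampled pressure signal at a few chosen frequencies for a single position. It assumes the sign convention $e^{-j\omega t}$ and approximates the integral by a Riemann sum; the function name `sound_field_at` and the test signal are hypothetical and not taken from the paper.

```python
import numpy as np

def sound_field_at(p, fs, freqs_hz):
    """Approximate s(r, w) = integral of p(r, t) exp(-j w t) dt at one position r.

    p: sampled pressure signal (e.g. an impulse response), fs: sampling rate in Hz.
    Returns one complex value per requested frequency.
    """
    t = np.arange(len(p)) / fs
    omega = 2.0 * np.pi * np.asarray(freqs_hz, dtype=float)
    kernel = np.exp(-1j * omega[:, None] * t[None, :])
    return (kernel * p[None, :]).sum(axis=1) / fs  # Riemann sum with dt = 1 / fs

# Hypothetical test signal: one second of a 100 Hz cosine.
fs = 1000
p = np.cos(2.0 * np.pi * 100.0 * np.arange(fs) / fs)
s = sound_field_at(p, fs, [50.0, 100.0])
s_mag = np.abs(s)  # magnitude, as used for magnitude reconstruction
```

For measured impulse responses an FFT would normally be cheaper; the direct sum is used here only because it mirrors the integral term by term.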

The procedure for reconstructing $s(\mathbf{r}, \omega)$ on $\mathcal{D}_o$ starts from actual observations of the sound field at selected positions of the grid. We refer to the set of these available sample points as $\mathcal{S}_o$, which we define to be a subset of the full grid, that is, $\mathcal{S}_o \subseteq \mathcal{D}_o$. The cardinality $|\mathcal{S}_o|$ is the number of available sample points, which we will also refer to as the number of microphones $n_{\text{mic}}$ in later experiments. We define the samples available to the reconstruction algorithm as

$$\{ s(\mathbf{r}, \omega) \}_{\mathbf{r} \in \mathcal{S}_o}.$$

An important aspect of these definitions is that the grid is unitless and positions can be defined in relative terms. That is, when sampling a point in the grid, only the relative position within the grid, and hence the room, needs to be known. This allows us to relax the data collection compared to alternative methods that require absolute locations. Another important element to consider is that the sampling pattern of $\mathcal{S}_o$ can form any arrangement within $\mathcal{D}_o$ as long as $1 \leq |\mathcal{S}_o| \leq |\mathcal{D}_o|$. As an example, this means that sampled points can be irregularly distributed spatially in a room.

Situations may arise where the sound field resolution, as defined by $l_x$, $I$, $l_y$, and $J$, is too coarse. As an example, consider rooms that are either very long, wide, or in general large. Another example includes applications where fine-grained variations within a sound field are of importance. To compensate for this, we allow the reconstruction to base its output on a grid other than $\mathcal{D}_o$. Such a grid will typically be an upsampling of the original grid, but it can also be defined with other transformations, e.g. downsampling. Specifically, we define the grid as

$$\mathcal{D}_o^{L,P} = \left\{ \left( i \frac{l_x}{IL-1},\; j \frac{l_y}{JP-1},\; z_o \right) \right\}_{i,j}$$

where $i = 0, \ldots, IL-1$, $j = 0, \ldots, JP-1$, and $L, P$ must be chosen such that $IL, JP \in \mathbb{Z}^+$. Note that a value larger than one for either $L$ or $P$ results in an upsampling in the respective dimension.
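The grid construction can be sketched in a few lines of NumPy. This is a minimal illustration that assumes the grid points are spaced uniformly from wall to wall, i.e. with spacing $l_x/(IL-1)$ and $l_y/(JP-1)$; the function name `make_grid` is hypothetical, and the example room dimensions are those of Room B in Table I.

```python
import numpy as np

def make_grid(l_x, l_y, z_o, I, J, L=1, P=1):
    """Return an array of shape (I*L, J*P, 3) holding (x, y, z) coordinates in meters.

    L = P = 1 gives the base grid; other values give the resampled grid.
    """
    n_x, n_y = int(round(I * L)), int(round(J * P))
    x = np.linspace(0.0, l_x, n_x)  # i * l_x / (I*L - 1) for i = 0, ..., I*L - 1
    y = np.linspace(0.0, l_y, n_y)  # j * l_y / (J*P - 1) for j = 0, ..., J*P - 1
    xx, yy = np.meshgrid(x, y, indexing="ij")
    zz = np.full_like(xx, z_o)      # constant height of the horizontal plane
    return np.stack([xx, yy, zz], axis=-1)

coarse = make_grid(4.16, 6.46, 1.0, I=8, J=8)            # 8 x 8 grid
fine = make_grid(4.16, 6.46, 1.0, I=8, J=8, L=4, P=4)    # 32 x 32 grid
```

Because only relative positions matter to the reconstruction, the coordinates in meters are needed for simulation and plotting rather than as input to the estimator.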

The task of the sound field reconstruction is then to estimate the sound field on the grid $\mathcal{D}_o^{L,P}$ based on the sampled points $\mathcal{S}_o$. In particular, the objective of the reconstruction algorithm is to learn parameters $w$ given

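$$g_w\left( \{ s(\mathbf{r}, \omega_k) \}_{\mathbf{r} \in \mathcal{S}_o,\, \omega_k \in \Omega} \right) = \{ \hat{s}(\mathbf{r}, \omega_k) \}_{\mathbf{r} \in \mathcal{D}_o^{L,P},\, \omega_k \in \Omega},$$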

where $g_w$ is an estimator and $\Omega = \{\omega_k\}_{k=1}^{K}$ is the set of frequencies at which the sound field will be reconstructed. The remainder of the paper describes the procedure for learning parameters $w$ using deep learning-based methods.

## A. Evaluation Metrics

The performance of the estimator is quantified using the normalized mean square error (NMSE) at each frequency point in $\{\omega_k\}_{k=1}^{K}$

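$$\mathrm{NMSE}_k = \frac{\sum_{\mathbf{r} \in \mathcal{D}_o^{L,P}} |\hat{s}(\mathbf{r}, \omega_k) - s(\mathbf{r}, \omega_k)|^2}{\sum_{\mathbf{r} \in \mathcal{D}_o^{L,P}} |s(\mathbf{r}, \omega_k)|^2}.$$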

The NMSE provides an average error over all positions in the grid between reconstructed and original sound fields for a single room at a single frequency. We also introduce an average NMSE, which is the NMSE performance averaged over all frequencies of interest as well as over all realizations from M trials, e.g. multiple rooms

$$\mathrm{MNMSE} = \frac{1}{MK} \sum_{m=1}^{M} \sum_{k=1}^{K} \mathrm{NMSE}_k^{(m)}.$$

This measure serves as an overall indication of the accuracy of a model, whereas $\mathrm{NMSE}_k$ allows deeper insight into model behavior at different frequencies. Note that the $M$ trials are specific to each experiment and will be described accordingly.
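The two metrics can be computed as in the following sketch. It assumes that the sound fields are stored as arrays of shape (X, Y, K), that the error is normalized by the energy of the reference field at each frequency, and that averaging is done on the linear scale before conversion to dB; the paper does not state the order of averaging and dB conversion here, and the function names are hypothetical.

```python
import numpy as np

def nmse_per_frequency(s_hat, s):
    """NMSE at each of the K frequencies for fields of shape (X, Y, K), linear scale."""
    err = np.sum(np.abs(s_hat - s) ** 2, axis=(0, 1))
    ref = np.sum(np.abs(s) ** 2, axis=(0, 1))
    return err / ref

def mnmse_db(s_hats, ss):
    """Mean NMSE over M trials and K frequencies, reported in dB.

    Assumption: the mean is taken on the linear scale before the dB conversion.
    """
    per_trial = np.stack([nmse_per_frequency(a, b) for a, b in zip(s_hats, ss)])
    return 10.0 * np.log10(per_trial.mean())

# Toy check: a reconstruction that is 10 % too large everywhere has NMSE = 0.01.
s = np.ones((4, 4, 3))
nmse = nmse_per_frequency(1.1 * s, s)
score = mnmse_db([1.1 * s, 1.1 * s], [s, s])
```

The same functions work unchanged for complex-valued fields, since the error is taken as a squared absolute value.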

## III. THE ISOBEL SOUND FIELD DATASET

A major contribution of this paper is the ISOBEL Sound Field dataset, which is released as open access alongside the manuscript.¹ The intended purpose is to use the measurements from real rooms for evaluation of sound field reconstruction in a diverse set of rooms. Note that the room-wide measurements of room impulse responses have several other use cases that will not be further investigated in this paper, but we encourage their use outside sound field reconstruction as well. This section details the dataset and the measurement procedure.

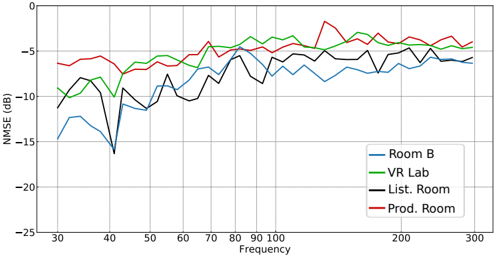

The dataset consists of measurements from four different rooms as specified in Table I and depicted in Fig. 1. The data collection is an extension of the real room measured in (Lluís et al., 2020), which is included in the ISOBEL Sound Field dataset as Room B for simple access to all measured rooms. The rooms are located at Aalborg University, Aalborg, Denmark, and Bang & Olufsen a/s, Struer, Denmark. The rooms have significantly different acoustic properties and also vary in size. Two types of measurements are conducted in each room: 1) Reverberation time; 2) Sound field. However, only the sound field measurements are released as part of the dataset.

The reverberation times are measured in conformity with ISO 3382-2 (ISO 3382-2:2008, 2008) and calculated from the resulting impulse responses using backward integration and least-squares best-fit evaluation of the decay curves.² The reverberation times reported in the table are the arithmetic averages of 1/3-octave $T_{20}$ estimates in the frequency range 50-316 Hz.
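The backward integration and least-squares fit can be sketched as follows. This is a simplified broadband illustration rather than the authors' procedure: it omits the 1/3-octave band filtering, assumes the standard $T_{20}$ evaluation range of -5 dB to -25 dB on the decay curve, and uses a synthetic exponential decay as input. The function name `t20_from_impulse_response` is hypothetical.

```python
import numpy as np

def t20_from_impulse_response(h, fs):
    """Estimate T20 (extrapolated to a 60 dB decay) from an impulse response."""
    energy = np.asarray(h, dtype=float) ** 2
    edc = np.cumsum(energy[::-1])[::-1]        # Schroeder backward integration
    edc_db = 10.0 * np.log10(edc / edc[0])     # decay curve normalized to 0 dB
    t = np.arange(len(energy)) / fs
    fit = (edc_db <= -5.0) & (edc_db >= -25.0) # T20 evaluation range
    slope, _ = np.polyfit(t[fit], edc_db[fit], 1)  # least-squares line, dB per second
    return -60.0 / slope

# Synthetic decay whose level drops by 60 dB in 0.5 s.
fs = 48000
t = np.arange(2 * fs) / fs
h = 10.0 ** (-3.0 * t / 0.5)
t20 = t20_from_impulse_response(h, fs)
```

With real measurements, the impulse response would first be filtered into 1/3-octave bands and the noise floor handled before the fit.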

The sound field measurements are performed on a 32 by 32 grid with sample points distributed uniformly along the length and width of each room. That is, a total of 1024 positions are measured in each room if possible, but in some cases it is not feasible to measure all positions due to e.g. obstacles.³ The horizontal grids are measured at four different heights: 1, 1.3, 1.6, and 1.9 meters above the floor.⁴ This is achieved using the microphone rig depicted in Fig. 1. Two 10-inch loudspeakers are used to acquire sound fields from two different source positions in each room. Both loudspeakers are placed on the floor, one in a corner and one in an arbitrary position. The sound sources are kept in the same position, while the microphones are moved around the room to record impulse responses. For each microphone position in the grid, the two sources play logarithmic sine sweeps in the frequency range 0.1-24,000 Hz followed by a quiet tail (Farina, 2000). We use a sampling frequency of 48,000 Hz. The equipment includes, among others, four G.R.A.S. 40AZ prepolarized free-field microphones connected to four G.R.A.S. 26CC CCP standard preamplifiers and an RME Fireface UFX+ sound card. The four microphones are level calibrated at 1,000 Hz using a Brüel & Kjær sound calibrator type 4231 prior to the measurements.

TABLE I. Room characteristics in the ISOBEL Sound Field dataset. The reverberation times are the arithmetic averages of 1/3-octave $T_{20}$ estimates in the frequency range 50-316 Hz.

| Room | Dim. [m] | Size [m²/m³] | $T_{20}$ [s] |
|------------|---------------------|--------------|------|
| Room B | 4.16 x 6.46 x 2.30 | 27/62 | 0.39 |
| VR Lab | 6.98 x 8.12 x 3.03 | 57/172 | 0.37 |
| List. Room | 4.14 x 7.80 x 2.78 | 32/90 | 0.8 |
| Prod. Room | 9.13 x 12.03 x 2.60 | 110/286 | 0.77 |

FIG. 1. Left: Rig with four microphones. Rooms from top left to bottom right: Room B, VR Lab, Listening Room, and Product Room.

<details>

<summary>Image 1 Details</summary>

### Visual Description


## Photographs: Room Setup with Coordinate Systems

### Overview

The image presents a collage of five photographs: one of a microphone stand with height markings and four interior views of rooms used for acoustic measurements. Several of the room views include a 3D coordinate system (x, y, z) overlaid on the scene.

### Components/Axes

The coordinate systems consistently use the following:

* **x-axis:** Represented by a red line, generally oriented along the longer dimension of the room.

* **y-axis:** Represented by a red line, generally oriented along the shorter dimension of the room.

* **z-axis:** Represented by a red line, oriented vertically, indicating height.

The microphone stand image displays height markings in meters:

* 1.0 m

* 1.3 m

* 1.6 m

* 1.9 m

### Detailed Analysis or Content Details

**Image 1 (Top-Left):** Microphone stand. The stand is black and positioned on a blue carpet. The height markings are clearly visible along the vertical pole.

**Image 2 (Top-Center):** Room view with a television and sound absorption panels. The room has wooden flooring and black sound absorption panels on the walls. A television is mounted on the wall above a low cabinet.

**Image 3 (Top-Right):** Room view with a window and coordinate system. The room has a window covered with white curtains. The coordinate system is overlaid, with the x-axis extending along the length of the room, the y-axis along the width, and the z-axis vertically. A small device is placed on the floor near the origin of the coordinate system.

**Image 4 (Bottom-Left):** Room view with coordinate system and wall-mounted objects. The room has a light-colored wooden floor and light-colored walls. Several small, square objects are mounted on the wall. The coordinate system is overlaid, similar to Image 3. A small device is placed on the floor near the origin of the coordinate system.

**Image 5 (Bottom-Right):** Room view with a window, coordinate system, and furniture. The room has a window covered with a roller blind. A dark-colored cabinet is visible in the background. The coordinate system is overlaid, similar to Images 3 and 4.

### Key Observations

* The coordinate systems are consistently oriented, suggesting a standardized measurement approach within the room.

* The presence of sound absorption panels in Image 2 indicates the room is designed for acoustic measurements or controlled sound environments.

* The small devices placed near the origin of the coordinate systems in Images 3 and 4 likely represent measurement points or the location of a sound source.

* The varying room configurations suggest multiple testing setups or different acoustic conditions.

* The microphone stand in Image 1 indicates sound recording or analysis is performed.

### Interpretation

The images document a controlled environment designed for acoustic testing or research. The consistent use of a 3D coordinate system suggests precise spatial measurements are being taken. The presence of sound absorption materials and a microphone indicates the room is used for analyzing sound properties, potentially including sound pressure levels, reverberation time, or sound localization. The different room configurations suggest the ability to vary the acoustic environment for different experiments. The height markings on the microphone stand provide a reference for vertical positioning of the microphone during measurements. The images collectively demonstrate a systematic approach to acoustic analysis within a controlled setting. The images do not provide any quantitative data beyond the height markings on the microphone stand.

</details>

## IV. SOUND FIELD MAGNITUDE RECONSTRUCTION

In the previous sections, we introduced the problem of reconstructing sound fields on two-dimensional grids in rectangular rooms, as well as a real-world dataset specifically for evaluation of estimators solving this problem. In recent work, (Lluís et al., 2020) showed that the problem fits within the context of deep learning-based methods for image reconstruction, specifically the tasks of inpainting (Bertalmio et al., 2000; Liu et al., 2018) and super-resolution (Dong et al., 2016; Ledig et al., 2017). These tasks parallel filling in the grid points that are not measured in the sound fields, $\mathcal{D}_o^{L,P} \setminus \mathcal{S}_o$, and upsampling the grid resolution to capture fine-grained variations in sound fields. One caveat is that these methods are designed to work with real-valued images. To accommodate this, (Lluís et al., 2020) propose to reconstruct only the magnitude of the sound field, i.e. $|s(\mathbf{r}, \omega)|$, using a U-Net-like architecture (Ronneberger et al., 2015).

To this end, the sampled grids are defined as tensors together with masks specifying which positions are measured (Lluís et al., 2020). As an example, $\{ |s(\mathbf{r}, \omega_k)| \}_{\mathbf{r} \in \mathcal{D}_o^{L,P},\, k}$ can be constructed as a tensor of the form $\mathbf{S}_{\text{mag}} \in \mathbb{R}^{IL \times JP \times K}$. The network is trained using a large number of simulated realizations of rooms, as will be described in the following section. For the experiments, we are interested in assessing the ability of the model to generalize to a wide range of real rooms.
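A minimal sketch of how a magnitude tensor and its mask might be prepared for a given number of microphones is shown below. It assumes that microphone positions are drawn uniformly at random without replacement from the $IL \times JP$ grid, that the same binary mask is repeated across all $K$ frequency slices, and that unobserved entries are set to zero; these choices and the function name `sample_microphones` are illustrative assumptions, and the random tensor stands in for a real or simulated sound field.

```python
import numpy as np

def sample_microphones(s_mag, n_mic, rng):
    """Keep n_mic random grid positions of a (X, Y, K) tensor and zero out the rest.

    Returns the masked tensor and a binary mask of the same shape.
    """
    X, Y, K = s_mag.shape
    chosen = rng.choice(X * Y, size=n_mic, replace=False)  # distinct grid positions
    mask2d = np.zeros(X * Y)
    mask2d[chosen] = 1.0
    mask = np.repeat(mask2d.reshape(X, Y, 1), K, axis=2)   # same mask at every frequency
    return s_mag * mask, mask

rng = np.random.default_rng(0)
s_mag = rng.random((32, 32, 40))   # placeholder for a magnitude sound field tensor
observed, mask = sample_microphones(s_mag, n_mic=15, rng=rng)
```

Drawing a new set of positions per call gives one realization of the sample set; repeating the call yields the multiple random realizations used when averaging results.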

## A. Simulation of Sound Fields for Training Data

The Green's function can be used to approximate sound fields in lightly damped rectangular rooms (Jacobsen and Juhl, 2013). It provides a solution as an infinite summation of room modes in the three dimensions of a room, $x$, $y$, and $z$, and is defined as

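$$G(\mathbf{r}, \mathbf{r}_0, \omega) \approx -\frac{1}{V} \sum_{N} \frac{\psi_N(\mathbf{r})\, \psi_N(\mathbf{r}_0)}{(\omega/c)^2 - (\omega_N/c)^2 - j\omega/(\tau_N c^2)},$$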

where $\sum_N = \sum_{n_x=0}^{\infty} \sum_{n_y=0}^{\infty} \sum_{n_z=0}^{\infty}$ denotes, for compactness, summation across modal orders in the three dimensions of the room, and similarly the triplet of integers $(n_x, n_y, n_z)$ is represented by $N$. Furthermore, $V$ denotes the volume of the room, $\omega_N$ represents the angular resonance frequency of the mode associated with a specific $N$, the shape of the mode is denoted $\psi_N(\cdot)$, $\tau_N$ is the time constant of the mode, and $c$ is the speed of sound. Assuming rigid boundaries, the mode shape is given by (Jacobsen and Juhl, 2013)

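$$\psi_N(\mathbf{r}) = \Lambda_N \cos\left(\frac{n_x \pi x}{l_x}\right) \cos\left(\frac{n_y \pi y}{l_y}\right) \cos\left(\frac{n_z \pi z}{l_z}\right).$$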

Here, $\Lambda_N = \sqrt{\epsilon_{n_x} \epsilon_{n_y} \epsilon_{n_z}}$ are normalization constants with $\epsilon_0 = 1$ and $\epsilon_1 = \epsilon_2 = \cdots = 2$. The absorption coefficient is calculated using Sabine's equation and used to determine the time constant of each mode. This is done by assuming that the surfaces of a room have a uniform distribution of absorption.
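The modal summation can be sketched numerically as follows. This is an illustration under several stated assumptions, not the authors' simulation code: it uses the textbook modal-sum form with denominator $(\omega/c)^2 - (\omega_N/c)^2 - j\omega/(\tau_N c^2)$, truncates the sum at modes below a cutoff frequency, and replaces the Sabine-based per-mode time constants with a single value $\tau = T_{60}/(6 \ln 10)$ for all modes. The function name `greens_function` and the example positions are hypothetical.

```python
import numpy as np

def greens_function(r, r0, dims, freqs_hz, t60, c=343.0, f_max=400.0):
    """Truncated modal sum for a rigid-walled rectangular room.

    r, r0: receiver and source positions (x, y, z) in meters.
    dims: (l_x, l_y, l_z) in meters. Returns one complex value per frequency.
    """
    l = np.asarray(dims, dtype=float)
    r, r0 = np.asarray(r, dtype=float), np.asarray(r0, dtype=float)
    V = np.prod(l)
    tau = t60 / (6.0 * np.log(10.0))   # assumption: one time constant for all modes
    omega = 2.0 * np.pi * np.asarray(freqs_hz, dtype=float)
    n_max = np.floor(2.0 * f_max * l / c).astype(int)  # highest order per axis
    total = np.zeros(omega.shape, dtype=complex)
    for nx in range(n_max[0] + 1):
        for ny in range(n_max[1] + 1):
            for nz in range(n_max[2] + 1):
                n = np.array([nx, ny, nz])
                omega_n = c * np.pi * np.sqrt(np.sum((n / l) ** 2))
                if omega_n > 2.0 * np.pi * f_max:
                    continue  # keep only modes resonating below the cutoff
                lam = np.sqrt(np.prod(np.where(n == 0, 1.0, 2.0)))
                psi_r = lam * np.prod(np.cos(n * np.pi * r / l))
                psi_r0 = lam * np.prod(np.cos(n * np.pi * r0 / l))
                denom = (omega / c) ** 2 - (omega_n / c) ** 2 - 1j * omega / (tau * c ** 2)
                total += psi_r * psi_r0 / denom
    return -total / V

dims = (4.16, 6.46, 2.30)
freqs = [30.0, 100.0, 300.0]
g = greens_function((1.0, 2.0, 1.0), (0.0, 0.0, 0.0), dims, freqs, t60=0.6)
g_swapped = greens_function((0.0, 0.0, 0.0), (1.0, 2.0, 1.0), dims, freqs, t60=0.6)
```

Swapping source and receiver leaves the result unchanged, which is the reciprocity expected from the symmetric product of mode shapes.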

In the following experiments, two sets of training data are used. The first dataset is introduced in (Lluís et al., 2020) and consists of 5,000 rectangular rooms. The room dimensions are sampled randomly in accordance with the recommendations for listening rooms in ITU-R BS.1116-3 (ITU-R BS.1116-3, 2015). The dataset uses a

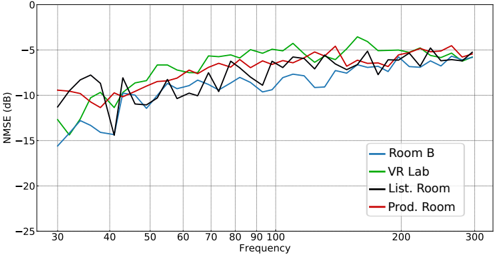

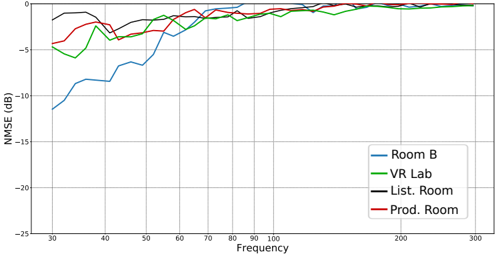

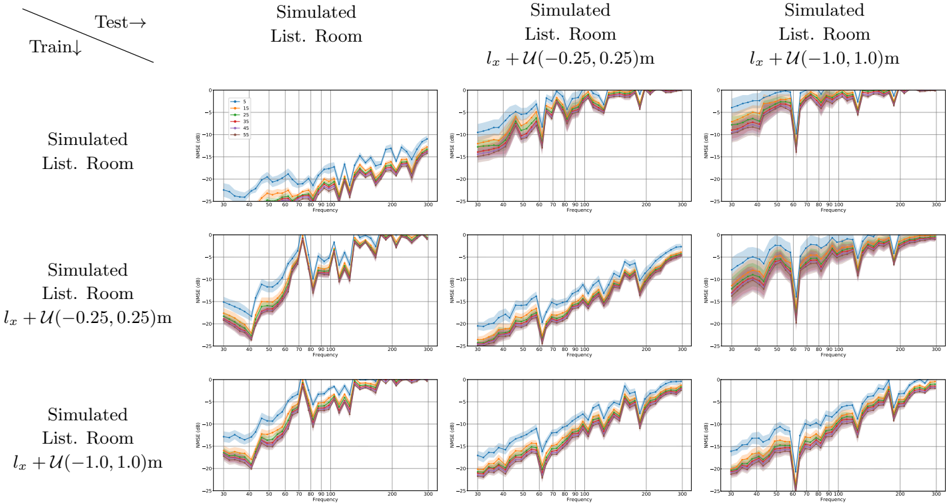

FIG. 2. NMSE in dB of U-Net-based magnitude reconstruction in the four measured rooms with $n_{\text{mic}} = 15$ using the original pretrained model presented in (Lluís et al., 2020).

<details>

<summary>Image 2 Details</summary>

### Visual Description


## Line Chart: NMSE vs. Frequency for Different Rooms

### Overview

The image presents a line chart comparing the Normalized Mean Squared Error (NMSE) in decibels (dB) across different rooms (Room B, VR Lab, List. Room, and Prod. Room) as a function of frequency, ranging from 30 Hz to 300 Hz. The chart aims to visualize the performance of a system or measurement in each room across the specified frequency spectrum.

### Components/Axes

* **X-axis:** Frequency (Hz), ranging from 30 to 300 Hz, with tick marks every 10 Hz.

* **Y-axis:** NMSE (dB), ranging from -25 dB to 0 dB, with tick marks every 5 dB.

* **Data Series:**

* Room B (Blue Line)

* VR Lab (Green Line)

* List. Room (Black Line)

* Prod. Room (Red Line)

* **Legend:** Located in the top-right corner of the chart, associating each color with a specific room.

### Detailed Analysis

Here's a breakdown of each data series, describing the trend and then extracting approximate data points.

* **Room B (Blue Line):** The line generally fluctuates between approximately -5 dB and -15 dB. It starts at around -16 dB at 30 Hz, dips to approximately -18 dB at 40 Hz, rises to around -8 dB at 60 Hz, then fluctuates between -10 dB and -14 dB until 300 Hz, ending at approximately -12 dB.

* **VR Lab (Green Line):** This line exhibits a more consistent trend, generally staying between -5 dB and -12 dB. It begins at approximately -10 dB at 30 Hz, rises to around -5 dB at 50 Hz, then fluctuates between -7 dB and -12 dB until 300 Hz, ending at approximately -8 dB.

* **List. Room (Black Line):** The black line shows a more erratic pattern, with larger fluctuations. It starts at approximately -6 dB at 30 Hz, dips to around -16 dB at 40 Hz, rises to approximately -4 dB at 80 Hz, then fluctuates between -6 dB and -15 dB until 300 Hz, ending at approximately -5 dB.

* **Prod. Room (Red Line):** This line generally stays between -5 dB and 0 dB. It starts at approximately -3 dB at 30 Hz, dips to around -6 dB at 40 Hz, rises to approximately 0 dB at 100 Hz, then fluctuates between -5 dB and -2 dB until 300 Hz, ending at approximately -3 dB.

### Key Observations

* **Highest NMSE:** The Prod. Room exhibits the highest NMSE values across most of the frequency range, staying between approximately -5 dB and 0 dB, which indicates the poorest reconstruction performance.

* **Lowest NMSE:** Room B generally shows the lowest NMSE values, indicating the best reconstruction performance.

* **Frequency Dependence:** All rooms show some degree of frequency dependence, with NMSE values changing as frequency increases.

* **Peak at 100 Hz:** The Prod. Room shows a distinct peak in NMSE around 100 Hz, reaching 0 dB.

* **Initial Dip:** All lines show a dip in NMSE between 30 Hz and 40 Hz.

### Interpretation

The chart suggests that the reconstruction is most accurate in Room B and least accurate in the Prod. Room, where the NMSE stays between approximately -5 dB and 0 dB. The VR Lab and List. Room show intermediate performance. The frequency dependence observed in all rooms indicates that the reconstruction error varies across the spectrum. The peak in NMSE near 100 Hz for the Prod. Room marks the frequency at which the reconstruction in that room is poorest.

</details>

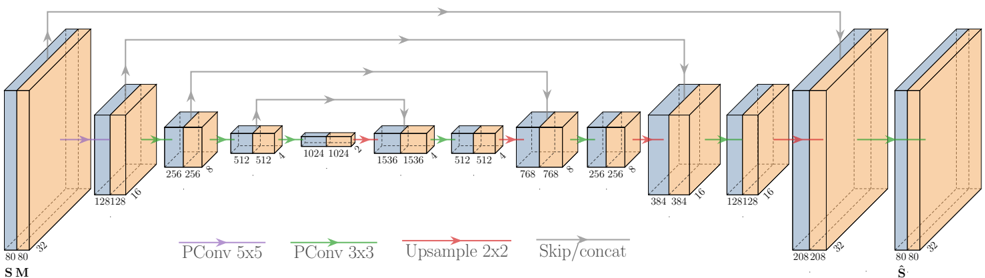

FIG. 3. NMSE in dB of U-Net-based magnitude reconstruction in the four measured rooms with $n_{\text{mic}} = 15$ using the model presented in (Lluís et al., 2020) trained using the extended dataset.

<details>

<summary>Image 3 Details</summary>

### Visual Description


## Line Chart: NMSE vs. Frequency for Different Rooms

### Overview

The image presents a line chart comparing the Normalized Mean Squared Error (NMSE) in decibels (dB) across different rooms (Room B, VR Lab, List. Room, and Prod. Room) as a function of frequency, ranging from approximately 30 Hz to 300 Hz. The chart aims to visualize the performance of a system or measurement in each room based on frequency response.

### Components/Axes

* **X-axis:** Frequency (Hz), ranging from 30 to 300 Hz, with tick marks at 30, 40, 50, 60, 70, 80, 90, 100, 200, and 300 Hz.

* **Y-axis:** NMSE (dB), ranging from -25 dB to 0 dB, with tick marks at -25, -20, -15, -10, -5, and 0 dB.

* **Legend:** Located in the bottom-right corner, identifying the four data series:

* Room B (Blue line)

* VR Lab (Green line)

* List. Room (Black line)

* Prod. Room (Red line)

* **Grid:** A light gray grid is present, aiding in the readability of the chart.

### Detailed Analysis

The chart displays four distinct lines, each representing a room.

* **Room B (Blue):** Starts at approximately -16 dB at 30 Hz, dips to around -18 dB at 40 Hz, then fluctuates between -10 dB and -14 dB until approximately 150 Hz. After 150 Hz, it stabilizes around -8 dB, with minor fluctuations up to 300 Hz.

* **VR Lab (Green):** Begins at approximately -11 dB at 30 Hz, rises to a peak of around -4 dB at 60 Hz, then declines to approximately -8 dB at 100 Hz. It then fluctuates between -6 dB and -10 dB, reaching a peak of approximately -4 dB at 240 Hz before settling around -6 dB at 300 Hz.

* **List. Room (Black):** Starts at approximately -8 dB at 30 Hz, dips to around -12 dB at 40 Hz, then rises to approximately -6 dB at 80 Hz. It then fluctuates between -6 dB and -10 dB, with a slight upward trend towards -5 dB at 300 Hz.

* **Prod. Room (Red):** Begins at approximately -10 dB at 30 Hz, rises to around -5 dB at 60 Hz, then declines to approximately -8 dB at 100 Hz. It then fluctuates between -6 dB and -9 dB, settling around -6 dB at 300 Hz.

### Key Observations

* The VR Lab and Prod. Room exhibit similar trends, with a peak around 60 Hz and then a gradual decline and stabilization.

* Room B shows the lowest NMSE values (best performance) over most of the frequency range, in particular below approximately 150 Hz.

* The List. Room, VR Lab, and Prod. Room lie in a similar range, roughly -5 dB to -10 dB, above 60 Hz.

* NMSE tends to increase (performance degrades) toward higher frequencies, although with fluctuations.

* The VR Lab shows local NMSE peaks of approximately -4 dB near 60 Hz and 240 Hz.

### Interpretation

The chart suggests that reconstruction is most accurate in Room B, particularly below about 150 Hz, while the VR Lab, List. Room, and Prod. Room reach similar NMSE levels of roughly -5 dB to -10 dB over most of the range. The fluctuations in NMSE across frequencies indicate that performance is frequency-dependent and varies with each room's acoustic characteristics.

</details>

constant reverberation time $T_{60}$ of 0.6 s and only includes room modes in the $x$ and $y$ dimensions, i.e. $n_z = 0$.

The second dataset consists of 20,000 rectangular rooms. Room dimensions are uniformly sampled with $V \sim \mathcal{U}(50, 300)\,\text{m}^3$, $l_x \sim \mathcal{U}(3.5, 10)\,\text{m}$, $l_z \sim \mathcal{U}(1.5, 3.5)\,\text{m}$, and $l_y = V / (l_x l_z)$. Compared to the first dataset, the room dimensions span a larger range and allow us to represent e.g. the Product Room, which is not included in the original training data. The dataset uses reverberation times $T_{60}$ sampled from $\mathcal{U}(0.2, 1.0)\,\text{s}$ and includes room modes in all three dimensions, $x$, $y$, and $z$.
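The sampling of room parameters for the extended dataset can be sketched as below. The ranges follow the text, while the function name `sample_room`, the dictionary layout, and the seed are hypothetical; the sketch applies no rejection step, so very elongated rooms can occur.

```python
import numpy as np

def sample_room(rng):
    """Draw one rectangular room following the ranges of the extended dataset."""
    volume = rng.uniform(50.0, 300.0)   # V in cubic meters
    l_x = rng.uniform(3.5, 10.0)        # length in meters
    l_z = rng.uniform(1.5, 3.5)         # height in meters
    l_y = volume / (l_x * l_z)          # width follows from the volume
    t60 = rng.uniform(0.2, 1.0)         # reverberation time in seconds
    return {"dims": (l_x, l_y, l_z), "volume": volume, "t60": t60}

rng = np.random.default_rng(0)
rooms = [sample_room(rng) for _ in range(100)]
```

Because $l_y$ is derived rather than sampled, its range is determined by the other three draws.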

For both datasets, a grid $\mathcal{D}_o^{L,P}$ is defined with $I = J = 8$ and $L = P = 4$, which effectively divides a sound field into 32 × 32 uniformly spaced microphone positions. Using this grid, the magnitude of the sound field is reconstructed at 1/12-octave center-frequency resolution in the range [30, 300] Hz, which gives a total of $K = 40$ frequency slices. Simulations are specified to include all room modes with a resonance frequency below 400 Hz.
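The count of $K = 40$ frequency slices can be checked with a short calculation. The sketch assumes that the 1/12-octave series starts exactly at 30 Hz and steps by a factor of $2^{1/12}$; the exact center frequencies used by the authors may be aligned differently.

```python
import numpy as np

f_low, f_high = 30.0, 300.0
# Number of 1/12-octave steps that fit between the band edges, plus the first point.
n_steps = int(np.floor(12.0 * np.log2(f_high / f_low)))
k = np.arange(n_steps + 1)
freqs = f_low * 2.0 ** (k / 12.0)   # assumed center frequencies in Hz
K = len(freqs)
```

Under this assumption the highest frequency is about 285 Hz, and one further step would exceed 300 Hz.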

## B. Experiments on the ISOBEL Sound Field Dataset

The U-Net-like architecture has shown promising results on simulated data and on measurements from a single real room (Lluís et al., 2020). In the following experiments, we expose the model to the ISOBEL Sound Field dataset. We include results from the original model, as well as a model built around a similar architecture but using the extended training data with a larger range of room dimensions and reverberation characteristics. We investigate the performance of the model trained with the two different simulated datasets in the four rooms included in the real-world dataset. Special attention is paid to the number of available samples, i.e. the number of microphones $n_{\text{mic}}$. We are mainly interested in settings with a very low number of microphones. In particular, we show results for 5, 15, and 25 microphones in the rooms with a total of 32 × 32 = 1024 available positions. In each room, a total of 40 different and randomly sampled realizations of microphone positions $\mathcal{S}_o$ are used for each value of $n_{\text{mic}}$. We report the average performance across the 40 realizations, and use the source located in one of the corners of each room.

Fig. 2 and Fig. 3 show NMSE k results for 15 microphones for the models trained with the original and the extended datasets, respectively. It is clear that the model trained with the original dataset does not generalize well to all the rooms. This behavior is expected, since the training data are not designed to represent rooms that fall outside the recommendations for listening room dimensions. In contrast, the extended training data are designed to encompass a wider selection of rooms, which also shows in the results for e.g. the Product Room. One important observation in this regard is that including more diverse simulated rooms does not decrease performance in rooms that are already represented in the simulated data, as can be seen e.g. from the performance in Room B. This result indicates that the capacity of the model is sufficient for generalizing to a wide range of diverse rooms and room

TABLE II. MNMSE in dB with M = 40 different and randomly sampled realizations of S o for each room in the ISOBEL SF dataset. A lower score is better.

| Room       | Model | n mic = 5 | n mic = 15 | n mic = 25 |
|------------|-------|-----------|------------|------------|
| Room B     | Orig. | -6.33     | -8.71      | -9.62      |
| Room B     | Ext.  | -6.27     | -8.84      | -10.25     |
| VR Lab     | Orig. | -4.01     | -5.08      | -5.63      |
| VR Lab     | Ext.  | -4.12     | -6.78      | -8.05      |
| List. Room | Orig. | -4.38     | -6.92      | -7.94      |
| List. Room | Ext.  | -5        | -7.61      | -8.44      |
| Prod. Room | Orig. | -3.89     | -4.91      | -5.55      |
| Prod. Room | Ext.  | -5.18     | -6.67      | -7.73      |

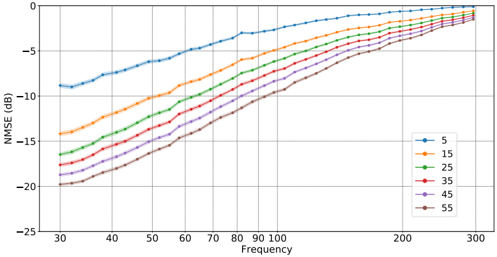

FIG. 4. Architecture of the U-Net-like convolutional neural network proposed for complex sound field reconstruction. S is the tensor with real and imaginary sound fields concatenated along the frequency-dimension, M is the mask tensor, and ˆ S is the reconstructed sound field tensor.

<details>

<summary>Image 4 Details</summary>

Diagram of a U-Net-like encoder-decoder network with skip connections. The input (labeled S and M) enters on the left and the reconstructed tensor ˆ S leaves on the right. The legend distinguishes four operations: PConv 5x5, PConv 3x3, Upsample 2x2, and Skip/concat.

</details>

acoustic characteristics, given that the model is provided with ample training samples.

Table II details MNMSE results, which are the NMSE results averaged across the K = 40 frequencies and the M = 40 realizations of S o . The MNMSE results for n mic = 15 condense the NMSE k results shown in Figs. 2 and 3. The scores in the table reiterate the observations from the figures: performance improves with the extended training data for some rooms in particular, while it is maintained in the other rooms. Interestingly, the improvements tend to be more pronounced with a larger number of microphones. We attribute this effect to similar observations within classical methods: as the number of microphones increases, the relative improvement in reconstruction is higher at low frequencies than in the high-frequency range (Ajdler et al., 2006; Lluís et al., 2020).
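To make the averaging concrete, the sketch below computes a per-frequency NMSE in dB and its mean over frequencies and realizations. It assumes that the NMSE is the ratio of squared error energy to reference energy summed over grid positions, and that the averaging is done on the dB values; the paper's exact definition is given elsewhere and may differ in these details. Function names are hypothetical.

```python
import numpy as np

def nmse_db(s_true, s_est):
    """Per-realization, per-frequency NMSE in dB.

    Inputs have shape (M, X, Y, K): M realizations, X x Y grid, K frequencies.
    """
    err = np.sum(np.abs(s_est - s_true) ** 2, axis=(1, 2))   # shape (M, K)
    ref = np.sum(np.abs(s_true) ** 2, axis=(1, 2))           # shape (M, K)
    return 10.0 * np.log10(err / ref)

def mnmse_db(s_true, s_est):
    """Mean of the dB values over realizations and frequencies (assumption)."""
    return float(np.mean(nmse_db(s_true, s_est)))

# Toy check: a uniform 10 % amplitude error corresponds to -20 dB.
rng = np.random.default_rng(0)
s = rng.uniform(0.5, 1.5, size=(3, 4, 4, 5))
toy_score = mnmse_db(s, 1.1 * s)
```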

In summary, the deep learning-based model is confirmed to possess the ability to generalize to a diverse set of real rooms for sound field magnitude reconstruction. These promising results, obtained by training solely on simulated data, motivate further investigations, e.g. of reconstructing the complex-valued sound fields.

## V. COMPLEX SOUND FIELD RECONSTRUCTION

We propose to extend the U-Net-based model to work with complex-valued room transfer functions (RTFs). Reconstruction of both magnitude and phase of sound fields enables new opportunities, such as the application of sound zones, a topic which we investigate in Section VI.

The proposed model is based on the model designed to work with the magnitude of sound fields. Note that deep learning-based models that work directly on complex-valued inputs have been introduced, e.g. within Transformers (Kim et al., 2020; Yang et al., 2020), but in this paper we instead choose to process the sound fields such that the U-Net-based model receives real-valued inputs. Specifically, we present the real and imaginary parts of the sound fields to the model separately. That is, where the magnitude-based model receives as input {| s ( r , ω k ) |} r ∈D L,P o ,k in the tensor form S mag ∈ R IL × JP × K , the complex-based model instead receives a concatenation of the real and imaginary sound fields. Using the real sound field { s Re ( r , ω k ) } r ∈D L,P o ,k with the tensor form S Re ∈ R IL × JP × K , and similarly the imaginary sound field tensor S Im ∈ R IL × JP × K , we define the concatenated input:

$$\mathbf{S} = \left[\, \mathbf{S}_{\mathrm{Re}},\ \mathbf{S}_{\mathrm{Im}} \,\right]$$

where S ∈ R IL × JP × 2 K is the resulting tensor with real and imaginary sound fields concatenated along the frequency-dimension. Note that the complex-valued sound field is easily recovered from this tensor form. In addition, we define a mask tensor M ∈ R IL × JP × 2 K computed from S o and D L,P o .
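The tensor construction can be sketched as follows. This is an illustration only: it assumes that the real part occupies the first K channels and the imaginary part the last K, and that the mask simply repeats the 2D microphone mask along the channel axis. The helper names are hypothetical.

```python
import numpy as np

def to_real_tensor(s_complex):
    """Map a complex field of shape (X, Y, K) to a real tensor (X, Y, 2K)."""
    return np.concatenate([s_complex.real, s_complex.imag], axis=-1)

def to_complex_field(s_real):
    """Recover the complex field (X, Y, K) from the real tensor (X, Y, 2K)."""
    k = s_real.shape[-1] // 2
    return s_real[..., :k] + 1j * s_real[..., k:]

def make_mask(mic_mask_2d, n_channels):
    """Repeat a 2D binary microphone mask along the channel axis."""
    return np.repeat(mic_mask_2d[..., None].astype(float), n_channels, axis=-1)

rng = np.random.default_rng(0)
field = rng.standard_normal((32, 32, 40)) + 1j * rng.standard_normal((32, 32, 40))
S = to_real_tensor(field)                        # shape (32, 32, 80)

mic_mask = np.zeros(32 * 32, dtype=bool)
mic_mask[rng.choice(32 * 32, size=15, replace=False)] = True
M = make_mask(mic_mask.reshape(32, 32), S.shape[-1])
```

The round trip through `to_complex_field` returns the original field, which is what makes the real-valued representation lossless.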

We follow the pre- and postprocessing steps as described in (Lluís et al., 2020), which entails completion, scaling, upsampling, mask generation, and rescaling based on linear regression. These steps are, however, adjusted such that they operate on a tensor that has doubled in size from K to 2 K in the third dimension. Furthermore, we have observed significant improvements by changing the min-max scaling of the input to a max scaling that takes into account both real and imaginary parts for each frequency slice. Specifically:

<!-- formula-not-decoded -->

<!-- formula-not-decoded -->

for each ω k . Note that this alters the scaling operation from working in the range [0, 1] to working in [-1, 1]. The motivation for doing so is that the real and imaginary parts can be negative, in contrast to the non-negative magnitude values. By using max scaling, we ensure that zero does not shift between realizations.
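A possible reading of the max scaling is sketched below. The assumption, which is not confirmed by the text, is that for each frequency the scale is the largest absolute value over both the real and imaginary observed samples, and that both parts are divided by this shared value. The name `max_scale` and the `eps` guard are hypothetical.

```python
import numpy as np

def max_scale(s_real, mask, eps=1e-12):
    """Scale observed samples to [-1, 1] with one scale per frequency.

    s_real: (X, Y, 2K) tensor, real part in the first K channels and
            imaginary part in the last K channels (assumed layout).
    mask:   same shape, 1 at observed positions and 0 elsewhere.
    """
    k = s_real.shape[-1] // 2
    observed = s_real * mask
    magnitude = np.abs(observed)
    # Shared peak over real and imaginary parts for each frequency slice.
    peak = np.maximum(magnitude[..., :k].max(axis=(0, 1)),
                      magnitude[..., k:].max(axis=(0, 1)))
    scale = np.concatenate([peak, peak]) + eps
    return observed / scale, scale

rng = np.random.default_rng(0)
s = rng.standard_normal((32, 32, 80))
mask_2d = np.zeros(32 * 32)
mask_2d[rng.choice(32 * 32, size=15, replace=False)] = 1.0
mask = np.repeat(mask_2d.reshape(32, 32)[..., None], 80, axis=-1)
scaled, scale = max_scale(s, mask)
```

Because the scaling is a pure division, a value of zero stays at zero regardless of the realization, in contrast to min-max scaling, which also shifts the data.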

The architecture of the proposed neural network, as illustrated in Fig. 4, is based on a U-Net (Ronneberger et al., 2015). We employ partial convolutions (PConv) as proposed for image inpainting in (Liu et al., 2018). In the encoding part of the U-Net, we use a stride of two in the partial convolutions in order to halve the feature maps, while doubling the number of kernels in each layer. The decoder part does the opposite, upsampling feature maps and reducing the number of kernels to reach an output tensor ˆ S with dimensions matching the input tensor S . We use ReLU as activation function in the encoding part, and leaky ReLU with a slope coefficient of -0.2 in the decoder. We initialize the weights using the uniform Xavier method (Glorot and Bengio, 2010), initialize the biases as zero, and use the Adam optimizer (Kingma and Ba, 2014) with early stopping when performance on a validation set stops improving. Due to the increased input and output sizes, we double the number of kernels in all layers compared to the U-Net for magnitude reconstruction. We also omit the 1x1 convolution with sigmoid activation in the last layer, since the range of our output is not constrained to [0, 1] but instead to [-1, 1]. We have not experienced any decreases in performance from not including this layer.
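For readers unfamiliar with partial convolutions, the following single-channel NumPy sketch illustrates the operation described by Liu et al. (2018): the kernel is applied only to valid (unmasked) samples, the result is renormalized by the fraction of valid samples in the window, and the mask is updated. It is a simplified illustration with zero padding, not the multi-channel layer used in the network; the function name is hypothetical.

```python
import numpy as np

def partial_conv2d(x, mask, kernel, bias=0.0, stride=1):
    """Single-channel partial convolution for an odd-sized kernel.

    x, mask: 2D arrays of equal shape; mask is 1 where x is observed.
    Returns the output feature map and the updated mask.
    """
    kh, kw = kernel.shape
    ph, pw = kh // 2, kw // 2
    xp = np.pad(x * mask, ((ph, ph), (pw, pw)))   # masked input, zero-padded
    mp = np.pad(mask, ((ph, ph), (pw, pw)))       # padded mask
    out_h = (x.shape[0] - 1) // stride + 1
    out_w = (x.shape[1] - 1) // stride + 1
    out = np.zeros((out_h, out_w))
    new_mask = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            r, c = i * stride, j * stride
            valid = mp[r:r + kh, c:c + kw].sum()
            if valid > 0:
                window = xp[r:r + kh, c:c + kw]
                # Renormalize by (window size / number of valid samples).
                out[i, j] = np.sum(kernel * window) * (kh * kw / valid) + bias
                new_mask[i, j] = 1.0
    return out, new_mask

# A stride of two halves the 8 x 8 feature map to 4 x 4.
x = np.ones((8, 8))
mask = np.zeros((8, 8))
mask[0, 0] = 1.0
out, new_mask = partial_conv2d(x, mask, np.ones((3, 3)), stride=2)
```

With each layer, the updated mask grows, so positions far from any microphone are filled in progressively deeper in the encoder.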

## A. Experiments

In this section, we assess the complex-valued sound field reconstruction. The simulated extended dataset introduced in Section IV A is used to train the model. It is important to note that NMSE scores are not directly comparable between magnitude and complex reconstruction, for which reason it is not possible to scrutinize differences between the two types of models. That is, the results presented in the following experiments will stand on their own, and only indicative parallels can be drawn to the results from magnitude reconstruction.

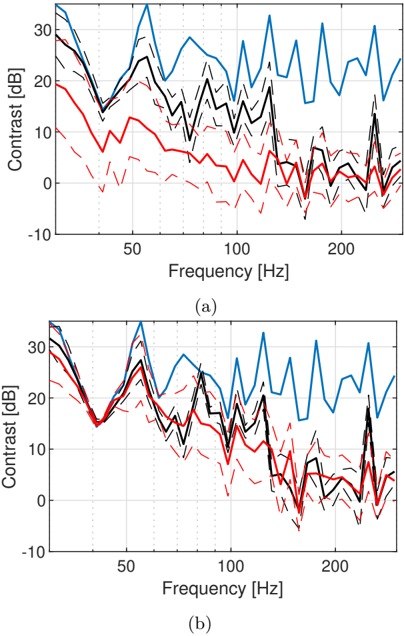

First, we test how the model performs on the simulated data associated with the training data, but held out specifically for evaluation. This test set consists of 190 simulated rooms, the validation set contains approximately 1,000 rooms, and the training set holds the remaining rooms from the 20,000 available rooms. In each room, three different realizations of S o are used for each value of n mic . Results in terms of NMSE are shown in Fig. 5. Some tendencies are similar to those observed for magnitude reconstruction, such as improvements in performance with an increasing number of available microphones. At the same time, as frequency increases, performance degrades.

FIG. 5. NMSE in dB for complex reconstruction of simulated sound fields in the test set with 190 different rooms and three realizations of S o in each room ( M = 570 for each value of n mic ). The solid lines indicate average NMSE k shown with 95% confidence intervals. Colors indicate different values of n mic in the range [5, 55].

<details>

<summary>Image 5 Details</summary>

Line chart of NMSE (dB), from about -25 to 0 dB, versus frequency from about 30 to 300 Hz, with one curve for each of the legend values 5, 15, 25, 35, 45, and 55. All curves rise with frequency, and curves for larger legend values lie lower over the whole range. Approximate endpoints read from the plot (30 Hz to 300 Hz) are -11 dB to -0.5 dB for 5, -16 dB to -3 dB for 15, -18 dB to -5 dB for 25, -19 dB to -7 dB for 35, -20 dB to -8 dB for 45, and -21 dB to -9 dB for 55.

</details>

Next, we evaluate the complex reconstruction model on the ISOBEL Sound Field dataset. The approach is similar to the experiment in Section IV B, except for the use of the complex-valued sound fields instead of the magnitude. As can be seen from the results in Fig. 6, performances in the real rooms are not comparable to those from simulated data. Moreover, although a direct comparison is not possible, performance seems worse than what is achieved with the magnitude-based reconstruction in the same rooms, see Fig. 3. That is, the complex reconstruction model does not transfer knowledge from the simulation-based training to the real world as successfully. Given that the network is able to reconstruct the simulated sound fields, it appears that the complex simulation model is a worse match for the real rooms than the magnitude simulation model. Two apparent differences are the identical boundary conditions at all surfaces and the perfectly rectangular geometry assumed in the simulations, neither of which holds in the real rooms. To provide insight into how the network behaves in rooms that do not match the training dataset, we now present the following simulations.

FIG. 6. Average NMSE k in dB of complex reconstruction in the four measured rooms with n mic = 15.

<details>

<summary>Image 6 Details</summary>

Line chart of NMSE (dB), from about -25 to 0 dB, versus frequency from 30 to 300 Hz, with four curves: Room B (blue), VR Lab (green), List. Room (black), and Prod. Room (red). Approximate values read from the plot: Room B rises from about -22 dB at 30 Hz to about -2 dB at 300 Hz; VR Lab starts near -4 dB at 30 Hz, dips to about -10 dB at 40 Hz, and reaches about 0 dB from 200 Hz; Prod. Room starts near -2 dB at 30 Hz, dips to about -6 dB at 50 Hz, and reaches about 0 dB from 200 Hz; List. Room stays close to 0 dB over the whole range. Since a lower NMSE is better, Room B is reconstructed best at low frequencies, and all four curves approach 0 dB towards 300 Hz.

</details>

FIG. 7. NMSE in dB for complex reconstruction of simulated sound fields in rooms with no or small variations in the room dimensions. Rows: Training data. Columns: Test data. Four random realizations of S o are used in each of the 11 test rooms ( M = 44). The solid lines indicate average NMSE k shown with 95% confidence intervals. Colors indicate different n mic values, i.e., n mic = 5 (blue), n mic = 15 (orange), n mic = 25 (green), n mic = 35 (red), n mic = 45 (purple), and n mic = 55 (brown).


## B. Discussion of Experiments

Several optimizations and fine-tuning approaches have been investigated for the complex reconstruction in real rooms without achieving notable improvements. Instead, we take another approach and show what happens to the model when it is exposed to data that are not represented in the training data. To this end, we are interested in assessing the performance of room-specialized models. That is, if room dimensions and reverberation time are known, how well will a model trained specifically for that room perform? For this, we introduce new datasets, each with 824 realizations for training, 165 for validation, and 11 for testing. Each simulated realization has a randomly positioned source. In total, three such datasets are generated according to the procedure described in Section IV A. The first dataset assumes that room characteristics are known perfectly, using the parameters of the Listening Room. The second and third datasets introduce uncertainty in the room dimensions. In particular, we alter the length and width of rooms, while keeping the aspect ratio ( l x /l y ) of the room constant. We accomplish this by uniformly sampling an error, which is added to the length of a room, and correcting the width to achieve the original aspect ratio. The two datasets sample errors in the range [-0.25, 0.25] m and [-1, 1] m, respectively. The results for the three models evaluated on each of the test sets are shown in Fig. 7. The first column shows how the three models perform on the dataset with no added uncertainties. Even with small variations of the 0.25 m scale, performance rapidly degrades with increasing frequency. On the diagonal, training data match test data, and once again high frequencies see a significant performance decrease with increasing uncertainty. In general, the models do not perform well on datasets with more variation than what is included in their own training data, which can be seen in the three upper right panels.
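The dimension perturbation can be sketched as below. The sketch assumes that l x plays the role of the room length, and the nominal dimensions in the example are placeholders rather than the actual Listening Room parameters. The function name is hypothetical.

```python
import numpy as np

def perturb_room(l_x, l_y, max_err, rng):
    """Add a uniform error to the length and rescale the width.

    The width is corrected so that the aspect ratio l_x / l_y is unchanged.
    """
    err = rng.uniform(-max_err, max_err)
    new_l_x = l_x + err
    new_l_y = new_l_x * (l_y / l_x)
    return new_l_x, new_l_y

rng = np.random.default_rng(0)
nominal_l_x, nominal_l_y = 6.0, 4.0          # placeholder dimensions in metres
small = [perturb_room(nominal_l_x, nominal_l_y, 0.25, rng) for _ in range(1000)]
large = [perturb_room(nominal_l_x, nominal_l_y, 1.0, rng) for _ in range(1000)]
```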

Further experiments showed that the three models do not generalize to the real-world measurements of the Listening Room. This result indicates that the simplifications imposed during the simulations of rooms cause the simulated sound fields to not represent the exact real rooms we intend them to. That is, a model trained with simulated data generated using exact parameters of a real room will not be able to reconstruct the sound field accurately in the real room. As suggested by our results, neither will a model trained with ± 1 m uncertainty. This calls for inclusion of diverse room parameters when training a model with simulated data if the intended purpose is to use the reconstruction in real rooms.

We showed in Section IV how magnitude reconstruction recovered performance in some of the real rooms by using an extended training dataset with more diverse simulated rooms. The same effect is not observed for complex reconstruction. We believe two factors are the main reasons: 1) the boundary conditions in the simulations assume nearly rigid walls and do not include e.g. phase shifts of real wall reflections; 2) the simulations assume perfectly rectangular rooms with a uniform distribution of absorption. Thus, we hypothesize that the model does not see representative data during training, analogous to not having the correct room dimensions represented in the training data.

## VI. THE SOUND ZONES APPLICATION

One potential application for the sound field reconstruction presented in this paper is in the process of setting up sound zones. Sound zones generally refer to the scenario where multiple loudspeakers are used to reproduce individual audio signals to individual people within a room (Betlehem et al., 2015). To control the sound field at the location of the listeners in the room, it is necessary to know the RTFs between each loudspeaker and locations sampling the listening regions. If the desired locations of the sound zones change over time, it becomes labor intensive to measure all the RTFs in situ. As an alternative, a small set of RTFs could be measured and used to extrapolate the RTFs at the positions of interest.

## 1. Setup

For this example, we will explore the scenario where sound is reproduced in one zone (the bright zone) and suppressed in another zone (the dark zone). 5

The question posed in a sound zones scenario is how the output of the available loudspeakers should be adjusted to achieve the desired scenario. A simple formulation of this problem in the frequency domain is typically denoted acoustic contrast control and relies on maximizing the ratio of mean square pressure in the bright zone relative to the dark zone (Choi and Kim, 2002). This ratio is termed the acoustic contrast and can be expressed as

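$$\mathrm{Contrast}(\omega) = \frac{\mathbf{q}^H(\omega)\,\mathbf{H}_B^H(\omega)\,\mathbf{H}_B(\omega)\,\mathbf{q}(\omega)}{\mathbf{q}^H(\omega)\,\mathbf{H}_D^H(\omega)\,\mathbf{H}_D(\omega)\,\mathbf{q}(\omega)}$$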

where $\mathbf{H}_B(\omega) \in \mathbb{C}^{M \times L}$ is a matrix of RTFs from $L$ loudspeakers to $M$ microphone positions in the bright zone and $\mathbf{H}_D(\omega) \in \mathbb{C}^{M \times L}$ are the RTFs from the loudspeakers to points in the dark zone. The adjustment of the loudspeaker responses $\mathbf{q}(\omega) \in \mathbb{C}^{L}$ can be determined as the eigenvector of $(\mathbf{H}_D^H(\omega)\mathbf{H}_D(\omega) + \lambda_D \mathbf{I})^{-1}\mathbf{H}_B^H(\omega)\mathbf{H}_B(\omega)$ which corresponds to the maximal eigenvalue (Elliott et al., 2012), where $(\cdot)^H$ denotes the Hermitian transpose. In this investigation, the regularization parameter is chosen as

<!-- formula-not-decoded -->

This choice is made to scale the regularization relative to the maximal singular value of $\mathbf{H}_D^H(\omega)\mathbf{H}_D(\omega)$, thereby controlling the condition number of the inverted matrix.
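A minimal NumPy sketch of acoustic contrast control at a single frequency is given below. The regularization is scaled by the largest eigenvalue of the dark-zone correlation matrix, but the factor `reg_scale` is a hypothetical placeholder and not the value used in this investigation. Function names are likewise hypothetical.

```python
import numpy as np

def acc_weights(H_B, H_D, reg_scale=1e-3):
    """Loudspeaker weights maximizing the regularized acoustic contrast.

    H_B, H_D: (M, L) complex RTF matrices for the bright and dark zones.
    reg_scale: hypothetical factor scaling lambda_D relative to the
               largest eigenvalue of H_D^H H_D.
    """
    R_B = H_B.conj().T @ H_B
    R_D = H_D.conj().T @ H_D
    lam = reg_scale * np.linalg.eigvalsh(R_D).max()
    A = np.linalg.solve(R_D + lam * np.eye(R_D.shape[0]), R_B)
    eigvals, eigvecs = np.linalg.eig(A)
    q = eigvecs[:, np.argmax(eigvals.real)]      # eigenvector of largest eigenvalue
    return q / np.linalg.norm(q)

def contrast_db(q, H_B, H_D):
    """Ratio of mean square pressure in the bright and dark zones, in dB."""
    bright = np.mean(np.abs(H_B @ q) ** 2)
    dark = np.mean(np.abs(H_D @ q) ** 2)
    return 10.0 * np.log10(bright / dark)

# Toy example with eight loudspeakers and 16 points per zone.
rng = np.random.default_rng(1)
H_B = rng.standard_normal((16, 8)) + 1j * rng.standard_normal((16, 8))
H_D = rng.standard_normal((16, 8)) + 1j * rng.standard_normal((16, 8))
q = acc_weights(H_B, H_D)
achieved = contrast_db(q, H_B, H_D)
```

In the application considered here, the weights would be computed from estimated RTFs while `contrast_db` is evaluated with the true RTFs, which is what exposes the effect of reconstruction errors.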

## 2. Sparse Reconstruction method

An alternative method for estimating the RTFs at positions of interest can be obtained by solving a sparse reconstruction problem inspired by (Fernandez-Grande, 2019).

Here, the sound pressure observed at the physical microphone locations is modeled as a combination of impinging plane waves

$$s(\mathbf{r}_m, \omega) = \sum_{n=1}^{N} b_n(\omega)\,\varphi_n(\mathbf{r}_m)$$

where $s(\cdot, \cdot)$ is defined in (2), $\varphi_n(\mathbf{r}_m) = e^{j \mathbf{k}_n^T \mathbf{r}_m}$ is the candidate plane wave, propagating with wave number $\mathbf{k}_n \in \mathbb{R}^3$, to observation point $\mathbf{r}_m \in \mathbb{R}^3$, and $b_n(\omega) \in \mathbb{C}$ is the complex weight of the $n$th candidate plane wave. The candidate plane waves can be obtained by sampling the wave number domain in a cubic grid. Note that the eigenfunctions of the room used in Green's function can be expanded into a number of plane waves whose wave numbers match the characteristic frequency of the eigenfunction, i.e. $\|\mathbf{k}_n\|_2^2 = (\omega/c)^2$. This fact was used in (Fernandez-Grande, 2019) to regularize the sparse reconstruction problem as

<!-- formula-not-decoded -->

where $\lambda \in \mathbb{R}_+$ and $\mathbf{L}(\omega) \in \mathbb{R}^{N \times N}$ is a diagonal matrix whose diagonal elements express the distance between the characteristic frequency associated with the $n$th candidate plane wave and the angular excitation frequency $\omega$ as $\left|\, \|\mathbf{k}_n\|_2^2 - (\omega/c)^2 \,\right|$.
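The ingredients of the sparse model can be sketched as follows: a dictionary of candidate plane waves sampled on a cubic wave number grid, and the diagonal weights that penalize candidates far from the excitation frequency. The grid extent, grid density, and the speed of sound c = 343 m/s are assumptions of this sketch, and the solver for the regularized problem itself is not shown.

```python
import numpy as np

def plane_wave_dictionary(mic_pos, k_max, n_per_axis):
    """Build plane waves exp(j k_n^T r_m) on a cubic wave number grid.

    mic_pos: (M, 3) microphone positions in metres.
    Returns the (M, N) dictionary and the (N, 3) wave vectors.
    """
    axis = np.linspace(-k_max, k_max, n_per_axis)
    kx, ky, kz = np.meshgrid(axis, axis, axis, indexing="ij")
    k_vecs = np.stack([kx.ravel(), ky.ravel(), kz.ravel()], axis=1)
    Phi = np.exp(1j * mic_pos @ k_vecs.T)
    return Phi, k_vecs

def regularization_weights(k_vecs, omega, c=343.0):
    """Diagonal of L(omega): | ||k_n||_2^2 - (omega / c)^2 |."""
    return np.abs(np.sum(k_vecs ** 2, axis=1) - (omega / c) ** 2)

rng = np.random.default_rng(0)
mic_pos = rng.uniform(0.0, 4.0, size=(15, 3))     # 15 hypothetical positions
omega = 2.0 * np.pi * 100.0                       # 100 Hz excitation
Phi, k_vecs = plane_wave_dictionary(mic_pos, k_max=2.0 * omega / 343.0, n_per_axis=9)
weights = regularization_weights(k_vecs, omega)
```

Candidates whose wave vector lies on the sphere of radius ω/c receive zero weight and are therefore not penalized by the regularization term.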

Note that the sparse reconstruction model is not directly comparable to the proposed sound field reconstruction. This is due to the sparse reconstruction relying on knowledge of the absolute locations of the microphone observations. The proposed algorithm, on the other hand, only requires the relative microphone locations on a unitless observation grid.

## 3. Experiments

For the experiments, we use the simulated Listening Room from the previous section, with eight loudspeakers placed at the corners of the floor and halfway between the corners. We have two predefined zones in the middle of the room, which serve as the bright and dark zones, respectively. We then sample random positions in the 32 × 32 grid in the x-y plane 1 m above the floor and use those observations to estimate the RTFs within the zones.

We compare the sparse reconstruction method to the deep learning-based model trained in the previous section. Specifically, the room-specialized models are used.

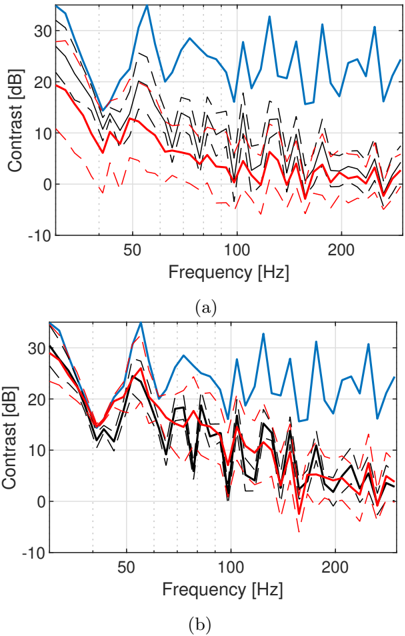

The resulting performance is evaluated in terms of the acoustic contrast over 50 random microphone samplings for each number of microphones. In Fig. 8 the results are based on evaluations using the true RTFs when the loudspeaker weights are determined using either the true RTFs, estimated RTFs based on the model trained with simulated room with no added uncertainties, or estimates based on the sparse reconstruction. It

FIG. 8. Contrast results for the dataset with no added uncertainty to the simulated Listening Room (50 different observation masks). (blue): Perfectly known TFs. (black): Deep learning model. (red): Sparse reconstruction. (dashed): ± 1 standard deviation.

<details>

<summary>Image 8 Details</summary>

Two line charts, labeled (a) and (b), show contrast [dB] (axis from about -10 to 30 dB) as a function of frequency [Hz] (axis from about 0 to 250 Hz). Each panel contains three curves in blue, black, and red. In both panels the blue curve has the highest contrast (roughly 18 to 30 dB), the black curve lies in between (roughly 10 to 22 dB), and the red curve has the lowest contrast (roughly -2 to 14 dB).

</details>

is observed that the deep learning-based model performs better than the sparse reconstruction below 150 Hz for 5 and 15 microphones. Above 150 Hz, both models struggle to provide sufficiently accurate RTFs to create sound zones.

In Fig. 9, the model specialized for the Listening Room with $l_x + \mathcal{U}(-1.0, 1.0)$ m is compared to the sparse reconstruction. As expected, the performance of the model is reduced. However, it is observed that there is still a benefit when using 5 microphones. At 15 microphones, on the other hand, the performance is comparable for both methods.

These results indicate that sound zones could be created based on sound fields extrapolated from very few microphone positions. At this stage, however, this requires models that are specialized to the particular room or to a narrow range of rooms. Alternatively, the number of microphones would have to be increased to improve the accuracy of the estimated RTFs.
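The evaluation protocol used in this section (loudspeaker weights computed from estimated RTFs, contrast measured with the true RTFs) can be illustrated with a minimal sketch. It assumes acoustic contrast control solved as a regularized generalized eigenvalue problem at a single frequency, which may differ from the exact formulation used for the figures. The RTFs are random complex matrices and the estimation error is additive Gaussian noise; both are placeholders, not the simulated Listening Room or either reconstruction method. The function names and the parameters `reg` and `noise_std` are hypothetical.

```python
import numpy as np


def acc_weights(H_bright, H_dark, reg=1e-6):
    """Weights maximizing bright-to-dark energy ratio (assumed ACC formulation).

    H_bright, H_dark: (n_points, n_loudspeakers) complex RTF matrices.
    reg: assumed regularization, relative to the mean dark-zone energy.
    """
    n_spk = H_bright.shape[1]
    R_b = H_bright.conj().T @ H_bright / H_bright.shape[0]
    R_d = H_dark.conj().T @ H_dark / H_dark.shape[0]
    delta = reg * np.trace(R_d).real / n_spk
    # Principal eigenvector of (R_d + delta*I)^{-1} R_b
    A = np.linalg.solve(R_d + delta * np.eye(n_spk), R_b)
    eigvals, eigvecs = np.linalg.eig(A)
    return eigvecs[:, np.argmax(eigvals.real)]


def contrast_db(w, H_bright, H_dark):
    """Acoustic contrast in dB: mean bright-zone energy over mean dark-zone energy."""
    e_bright = np.mean(np.abs(H_bright @ w) ** 2)
    e_dark = np.mean(np.abs(H_dark @ w) ** 2)
    return 10.0 * np.log10(e_bright / e_dark)


def demo(n_trials=50, n_spk=8, n_pts=16, noise_std=0.3, seed=0):
    """Compare contrast from true-RTF weights and from noisy-RTF weights."""
    rng = np.random.default_rng(seed)

    def crandn(*shape):
        # Unit-variance circular complex Gaussian samples
        real = rng.standard_normal(shape)
        imag = rng.standard_normal(shape)
        return (real + 1j * imag) / np.sqrt(2)

    # Placeholder "true" RTFs for one frequency (not a room simulation)
    H_b, H_d = crandn(n_pts, n_spk), crandn(n_pts, n_spk)
    c_true = contrast_db(acc_weights(H_b, H_d), H_b, H_d)

    c_est = []
    for _ in range(n_trials):
        # Placeholder estimation error standing in for reconstruction error
        H_b_hat = H_b + noise_std * crandn(n_pts, n_spk)
        H_d_hat = H_d + noise_std * crandn(n_pts, n_spk)
        w = acc_weights(H_b_hat, H_d_hat)
        # Weights come from the estimates; contrast uses the true RTFs
        c_est.append(contrast_db(w, H_b, H_d))
    c_est = np.array(c_est)
    return c_true, c_est.mean(), c_est.std()


if __name__ == "__main__":
    c_true, c_mean, c_std = demo()
    print(f"true RTFs: {c_true:.1f} dB, estimated RTFs: {c_mean:.1f} +/- {c_std:.1f} dB")
```

Repeating `demo` per frequency bin and per number of microphones would give curves of the same kind as the mean and ± 1 standard deviation traces in the figures, with the 50 trials playing the role of the 50 observation masks.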

FIG. 9. Contrast results for the simulated Listening Room with $l_x + \mathcal{U}(-1.0, 1.0)$ m (50 different observation masks). (blue): Perfectly known TFs. (black): Deep learning model. (red): Sparse reconstruction. (dashed): ± 1 standard deviation.

[Figure 9: two panels, (a) and (b), each plotting contrast in dB against frequency in Hz.]

## VII. CONCLUSION

In this paper, deep learning-based sound field reconstruction is evaluated using a new set of extensive measurements from real rooms, which are released alongside the paper. The focus of the work is threefold: examine performance of simulation-based learning of magnitude reconstruction in real rooms, extend reconstruction to complex-valued sound fields, and show a sound zone application taking advantage of the reconstructed sound fields. Experiments for each of the three directions indicate promising aspects of data-driven sound field reconstruction, even with a low number of arbitrarily placed microphones.

In the future, it would be of interest to investigate whether transfer learning can help bridge the discrepancies between simulated and real data. With the addition of more rooms, some could be used in the training phase. Furthermore, three-dimensional reconstruction can be achieved using available convolutional models designed specifically to solve three-dimensional problems.

## ACKNOWLEDGMENTS

This work is part of the ISOBEL Grand Solutions project, and is supported in part by the Innovation Fund Denmark (IFD) under File No. 9069-00038A.

- 1 The data are collected under the Interactive Sound Zones for Better Living (ISOBEL) project, which aims to develop interactive sound zone systems, responding to the need for sound exposure control in dynamic real-world contexts, adapted to and tested in healthcare and homes. The ISOBEL Sound Field dataset can be accessed at https://doi.org/10.5281/zenodo.4501339.

- 2 Further details of the experimental setup and protocol, e.g. equipment, are available in the measurement reports included with the dataset.

- 3 See footnote 2.

- 4 Room B has measurements at a single height: 1 meter above the floor.

- 5 The use case with multiple individual audio signals can be realized using superposition of this solution and one where the roles of the bright and dark zones are reversed.

- Ajdler, T., Sbaiz, L., and Vetterli, M. (2006). "The Plenacoustic Function and Its Sampling," IEEE Transactions on Signal Processing 54(10), 3790-3804, doi: 10.1109/TSP.2006.879280.

- Antonello, N., Sena, E. D., Moonen, M., Naylor, P. A., and van Waterschoot, T. (2017). "Room Impulse Response Interpolation Using a Sparse Spatio-Temporal Representation of the Sound Field," IEEE/ACM Transactions on Audio, Speech, and Language Processing 25(10), 1929-1941, doi: 10.1109/TASLP.2017.2730284.

- Bertalmio, M., Sapiro, G., Caselles, V., and Ballester, C. (2000). "Image inpainting," in Proceedings of the 27th Annual Conference on Computer Graphics and Interactive Techniques, SIGGRAPH '00, ACM Press/Addison-Wesley Publishing Co., USA, pp. 417-424, doi: 10.1145/344779.344972.

- Betlehem, T., Zhang, W., Poletti, M. A., and Abhayapala, T. D. (2015). "Personal Sound Zones: Delivering interface-free audio to multiple listeners," IEEE Signal Processing Magazine 32(2), 81-91, doi: 10.1109/MSP.2014.2360707.

- Cecchi, S., Carini, A., and Spors, S. (2018). "Room Response Equalization - A Review," Applied Sciences 8(1), 16, doi: 10.3390/app8010016.

- Choi, J., and Kim, Y. (2002). "Generation of an acoustically bright zone with an illuminated region using multiple sources," Journal of the Acoustical Society of America 111(4), 1695-1700.

- Dong, C., Loy, C. C., He, K., and Tang, X. (2016). "Image Super-Resolution Using Deep Convolutional Networks," IEEE Transactions on Pattern Analysis and Machine Intelligence 38(2), 295-307, doi: 10.1109/TPAMI.2015.2439281.

- Elliott, S. J., Cheer, J., Choi, J., and Kim, Y. (2012). "Robustness and regularization of personal audio systems," IEEE Transactions on Audio, Speech, and Language Processing 20(7), 2123-2133.

- Farina, A. (2000). "Simultaneous Measurement of Impulse Response and Distortion with a Swept-Sine Technique," in Proceedings of the Audio Engineering Society Convention 108.

- Fernandez-Grande, E. (2019). "Sound field reconstruction in a room from spatially distributed measurements," in 23rd International Congress on Acoustics, pp. 4961-68.

- Genovese, A. F., Gamper, H., Pulkki, V., Raghuvanshi, N., and Tashev, I. J. (2019). "Blind Room Volume Estimation from Single-channel Noisy Speech," in ICASSP 2019 - 2019 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 231-235, doi: 10.1109/ICASSP.2019.8682951.

- Glorot, X., and Bengio, Y. (2010). "Understanding the difficulty of training deep feedforward neural networks," in Proceedings of the Thirteenth International Conference on Artificial Intelligence and Statistics, pp. 249-256.

- ISO 3382-2:2008 (2008). "Acoustics - Measurement of room acoustic parameters - Part 2: Reverberation time in ordinary rooms," Standard.

- ITU-R BS.1116-3 (2015). "Methods for the subjective assessment of small impairments in audio systems," Standard.

- Jacobsen, F., and Juhl, P. M. (2013). Fundamentals of General Linear Acoustics (John Wiley & Sons).

- Karjalainen, M., Makivirta, A., Antsalo, P., and Valimaki, V. (2001). "Low-frequency modal equalization of loudspeaker-room responses," in Audio Engineering Society Convention 111.