# Towards a generalized monaural and binaural auditory model for psychoacoustics and speech intelligibility

**Authors**: Thomas Biberger, Stephan D. Ewert

This work has been submitted to Acta Acustica for possible publication. Copyright may be transferred without notice, after which this version may no longer be accessible.


Thomas Biberger a) and Stephan D. Ewert, Medizinische Physik and Cluster of Excellence Hearing4all, Universität Oldenburg, 26111 Oldenburg, Germany.

a) Electronic mail: thomas.biberger@uni-oldenburg.de

Running title: Modeling masking and speech intelligibility

## ABSTRACT

Auditory perception involves cues in the monaural auditory pathways as well as binaural cues based on differences between the ears. So far, auditory models have often focused on either monaural or binaural experiments in isolation. Although binaural models typically build upon stages of (existing) monaural models, only a few attempts have been made to extend a monaural model by a binaural stage using a unified decision stage for monaural and binaural cues. In such approaches, a typical prototype of binaural processing has been the classical equalization-cancelation mechanism, which either involves signal-adaptive delays and provides a single channel output or can be implemented with tapped delays providing a high-dimensional multichannel output. This contribution extends the (monaural) generalized envelope power spectrum model by a non-adaptive binaural stage with only a few, fixed output channels. The binaural stage resembles features of physiologically motivated hemispheric binaural processing, as simplified signal processing stages, yielding a 5-channel monaural and binaural matrix feature 'decoder' (BMFD). The back end of the existing monaural model is applied to the 5-channel BMFD output and calculates short-time envelope power and power features. The model is evaluated and discussed for a baseline database of monaural and binaural psychoacoustic experiments from the literature.

## I. INTRODUCTION

Auditory perception is typically binaural, involving signals at both ears. Besides enabling localization based on interaural time and intensity differences, interaural disparities can also be exploited to better detect a target stimulus in spatially separated or spatially differently distributed maskers (spatial release from masking, SRM; e.g., [1, 2]) or an antiphasic tone in diotic noise (binaural masking level difference, BMLD; e.g., [3, 4]). Auditory models have been used to explain and analyze monaural and binaural psychoacoustic phenomena (e.g., [5-9]), and as supportive tools offering instrumental assessment of, e.g., speech intelligibility (SI) and audio quality, applicable for development and control of signal processing (e.g., [10-16]). In such applications, monaural phenomena and perceptual cues involved in, e.g., spectral and temporal masking [17, 18], as well as binaural cues involved in, e.g., sound source location and apparent source width [15], typically occur in combination [19, 20]. Auditory models as well as psychoacoustic experiments have often focused on either monaural or binaural aspects of perception in isolation, which has led to a variety of monaural models (e.g., [5, 6, 9, 21, 22, 23, 24, 25, 26]) and binaural models (e.g., [8, 12, 27, 28, 29, 31, 32, 33, 34]). The binaural models typically share 'common ground' assumptions of essential monaural preprocessing steps followed by a binaural interaction (BI) stage. In many of these binaural models, the prototype binaural interaction is based on the equalization-cancelation mechanism (EC; [28]) providing a 'monaural', single channel output signal after a signal-adaptive binaural noise cancelation.
This single channel output either uses the optimal internal delay to compensate for external interaural delays in connection with an optimal level compensation (equalization) to cancel undesired noise, comparable to an adaptive binaural (or bilateral) beamformer (for an overview, see [35]), or simply selects the better ear (referred to as 'better-ear glimpsing' if applied in time-frequency frames, see [1]). Thus, the EC mechanism can be easily applied as binaural front end to an existing monaural model (for speech intelligibility see, e.g., [12, 13, 14, 36, 37]). Providing a monaural or diotic input reverts such models to monaural ones, although they are typically applied to binaural (dichotic) stimuli. Focusing on a large variety of basic binaural psychoacoustic experiments, Breebaart et al. [8, 38, 39] combined a number of internal delays and interaural gains in a matrix of (excitatory-inhibitory) cancelation elements. By this, a signal-adaptive mechanism to equalize prior to cancelation as in the EC approach is avoided; however, a signal-adaptive template mechanism is required to 'select' optimal matrix elements by applying weights in the form of a template for a given psychoacoustic experiment. Both the monaural front end and the template-matching procedure used in the Breebaart model have been taken from the (monaural) perception model of Dau et al. [5, 6].

The above-mentioned models show successful concepts for combining monaural and binaural model stages in a combined model; however, they have been either explicitly applied to binaural psychoacoustics or speech intelligibility, whereas their front and back ends without binaural stage have been explicitly applied to the respective monaural experiments. Moreover, the models require a signal-adaptive mechanism in the EC stage and a selection from 3 output channels (EC approach: left, EC output, right) or a signal-adaptive template to extract information from the high-dimensional matrix of delay-gain elements.

The question arises whether a simpler, non-adaptive approach is sufficient to model binaural interaction. For speech intelligibility in symmetrically placed interferers, e.g., [2] found that a simple addition of the left and right input channel can explain a large part of the observed spatial release from masking (SRM). Such a simplistic binaural interaction has also been suggested by [40] as midline spatial channel in the human auditory cortex. Additionally, the existence of delay lines as utilized in the EC and Breebaart approach has been questioned in mammals (for a review see [41]), and physiologic studies (e.g., [42, 43]) suggest a simpler hemispheric model without delay lines to account for binaural interaction, involving fixed phase delays and excitation as well as inhibition from the contralateral ear. Regarding the development of effective auditory signal processing models, such a fixed binaural interaction could be beneficial for applications where computational efficiency is important. Moreover, it appears desirable to evaluate the same model both in monaural and binaural experiments as well as in basic psychoacoustic tasks and speech intelligibility. The advantage of such a unified modelling approach (see, e.g., [9, 26] for monaural models) is the applicability of the model to a wide variety of stimuli as well as the potential of the model to directly link performance and cues in basic psychoacoustic tasks, such as detection and discrimination thresholds, to higher level processes involved in speech intelligibility. In the long run, such a link might help to understand and disentangle peripheral and central deficits in hearing impaired and elderly persons (e.g., [44-48]) and in the context of model-driven stimulus design for psychoacoustics and physiology (e.g., [49]).

Here we suggest and examine a combined monaural and binaural model in a variety of 'benchmark' psychoacoustic and speech intelligibility experiments. The combined approach uses the monaural front end and back end of the generalized power spectrum model (GPSM; [26]), which has been successfully applied to monaural psychoacoustics, speech intelligibility and audio quality ([9, 16, 19, 20, 26]). A binaural processing stage with five fixed (non-adaptive) output channels is suggested prior to the model back end, referred to as binaural matrix feature decoder (BMFD). The output comprises the left (L) and right (R) channels, the L+R channel and the L-R and R-L channels, incorporating a fixed phase delay and gain. L and R enable better-ear glimpsing in connection with a selection of time-frequency frames across the BMFD output channels in the back end (better-ear channels). The three other channels realize a binaural interaction: L+R represents a midline channel, enhancing coherent (frontal) signals at both ears. The L-R and R-L channels effectively mimic the outputs expected in hemispheric models of binaural interaction in a highly simplified manner. These channels are comparable to two elements in the delay-gain matrix of the Breebaart model, or to two corresponding parameter choices in the EC approach. The ability of the suggested model to account for the monaural and binaural data and the relevance of the five BMFD output channels are assessed in the following.

## II. Model description

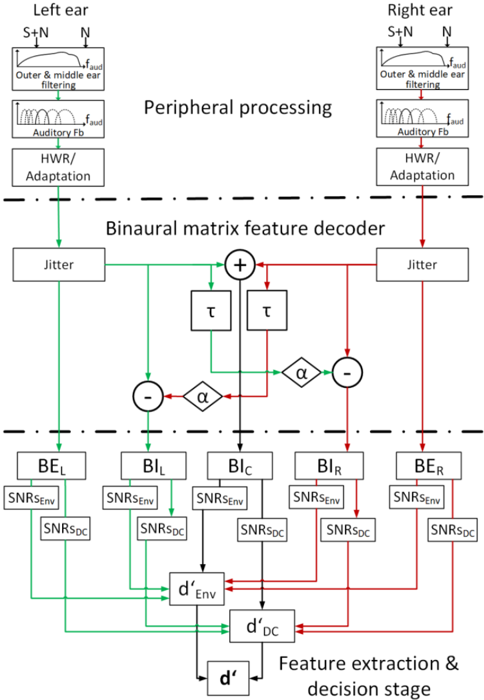

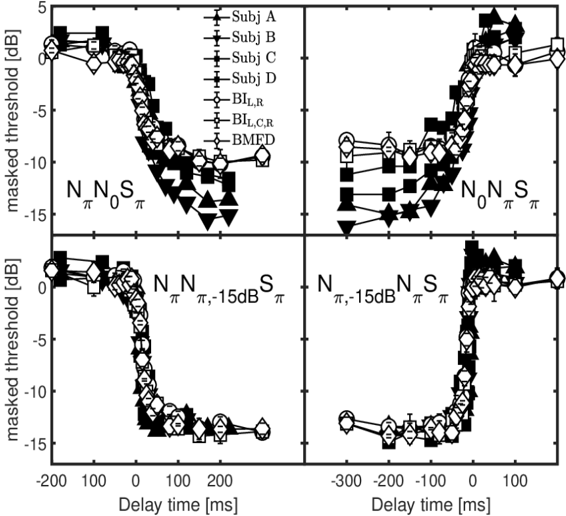

The front end of the proposed GPSM with BMFD extension calculates short-time power and envelope power features for each of two better-ear (BE) channels (L: BEL, R: BER) and the three binaural interaction (BI) channels (L-R: BIL, L+R: BIC, R-L: BIR), comprising the binaural matrix feature decoder. Signal-to-noise ratios based on these features are assessed by a task-dependent decision stage (psychoacoustics or speech intelligibility) in the model back end. The model processes two input stimuli, the target-plus-masker (signal) and masker alone (noise).

## A. Monaural processing stages

The peripheral processing, feature extraction and decision stage of the GPSM with BMFD extension, illustrated in Figure 1, are similar to those of the monaural mr-GPSM proposed in [26]. The processing stages related to the envelope power pathway are therefore only roughly described here; for a more comprehensive description the reader is referred to [9, 26].

Figure 1: Block diagram of the GPSM with BMFD extension. After peripheral processing, the left and right ear signals are binaurally processed by the BMFD, which provides two better-ear channels BEL and BER and three binaural interaction channels BIL, BIC, BIR. For each of the five BMFD outputs, envelope power and power SNRs are calculated in short-time frames and then combined across the five channels of the BMFD and across auditory and modulation channels, resulting in a sensitivity index $d'_{env}$ based on envelope power SNRs and $d'_{DC}$ based on power SNRs. The final combined $d'$ is then compared to a threshold criterion that assumes that a signal is detected if $d' > (0.5)^{1/2}$.

<details>

<summary>Image 1 Details</summary>

### Visual Description

Block diagram of the GPSM with BMFD extension, organized in three sections from top to bottom.

1. **Peripheral processing (top section)**: identical left-ear and right-ear pathways. Each pathway receives the target-plus-masker (S+N) and the masker-alone (N) stimulus and applies outer and middle ear filtering, the auditory filterbank (Auditory FB), and half-wave rectification with adaptation (HWR/Adaptation).

2. **Binaural matrix feature decoder (middle section)**: jitter blocks, delay (τ) blocks and gain (α) elements that form the binaural interaction channels, followed by the calculation of envelope power SNRs (SNR_env) and power SNRs (SNR_DC).

3. **Decision stage (bottom section)**: the sensitivity index based on envelope power SNRs (d'_env) and the sensitivity index based on power SNRs (d'_DC) are combined into the final d'.

</details>

The initial outer and middle ear filtering stage (see Figure 1) weights the input signal with the hearing threshold in quiet [50]. It is followed by the auditory filterbank (Auditory FB in Figure 1), which reflects basilar membrane filtering by applying a fourth-order Gammatone filterbank with bandwidths equal to the equivalent rectangular bandwidth of the auditory filter (ERBN; [51]) and third-octave spacing from 63 to 12500 Hz. In contrast to the Hilbert envelope extraction in [26], each auditory channel is half-wave rectified to simulate that inner hair cells primarily respond to only one direction of deflection. The half-wave rectified signals are divided by the output of an integrator with a time constant of 2 ms, realized as a first-order low-pass filter with a cut-off frequency of 500 Hz, to simulate effects of neural adaptation of the auditory system in a simple feed-forward manner.
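The rectification and feed-forward adaptation step can be illustrated with a minimal sketch. It assumes that the integrator is a one-pole low-pass filter applied to the rectified signal itself and that a small floor prevents division by zero; the function names, the `floor` value and the one-pole realization are illustrative assumptions and are not taken from the model description.

```python
import numpy as np

def one_pole_lowpass(x, cutoff_hz, fs):
    """First-order low-pass filter: y[n] = (1 - a) * x[n] + a * y[n-1]."""
    a = np.exp(-2.0 * np.pi * cutoff_hz / fs)
    y = np.empty(len(x), dtype=float)
    state = 0.0
    for n, sample in enumerate(x):
        state = (1.0 - a) * sample + a * state
        y[n] = state
    return y

def hwr_with_adaptation(band_signal, fs, cutoff_hz=500.0, floor=1e-5):
    """Half-wave rectify one auditory channel and divide it by its
    low-pass filtered version (feed-forward adaptation).

    `floor` is a hypothetical safeguard against division by zero.
    """
    rectified = np.maximum(np.asarray(band_signal, dtype=float), 0.0)
    integrator = one_pole_lowpass(rectified, cutoff_hz, fs)
    return rectified / np.maximum(integrator, floor)

# Example: a 50-ms, 1-kHz tone standing in for one Gammatone filter output.
fs = 44100
t = np.arange(int(0.05 * fs)) / fs
tone = np.sin(2.0 * np.pi * 1000.0 * t)
adapted = hwr_with_adaptation(tone, fs)
```

Because the signal is divided by a smoothed copy of itself, a stationary input is largely normalized in level, while fast fluctuations relative to the integrator pass through.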

## B. Binaural processing stages

The adapted signals from the monaural processing of the left and right ear serve as input for the binaural processor. First, amplitude and time jitter are applied independently for each auditory channel to the input signals to limit the performance of the BI. Amplitude and time jitters are generated as zero-mean Gaussian processes with standard deviations of $\sigma_\epsilon = 0.25$ and $\sigma_\delta = 105$ µs, respectively, as suggested by [28] and also applied by [36] and [37]. Based on the jittered signals, the three BI channels BIL, BIC, and BIR are calculated according to Eqs. 1-3:

$$BI_L(p,t) = L(p,t) - \alpha \cdot R(p, t - \tau(p)) \tag{1}$$

$$BI_C(p,t) = \sqrt{L(p,t) \cdot R(p,t)} \tag{2}$$

$$BI_R(p,t) = R(p,t) - \alpha \cdot L(p, t - \tau(p)) \tag{3}$$

BIL results from subtracting the time-delayed and amplified right ear channel $\alpha \cdot R(p, t - \tau(p))$ from the left ear channel $L(p,t)$ in each auditory channel $p$. BIR is calculated vice versa to BIL. Based on physiologic findings and preliminary tests, a frequency-dependent delay $\tau$ equal to a phase shift of π/4 was chosen, resulting in longer delays for lower frequencies. The amplification factor α equals 3 (see discussion for further details). BIC accounts for the effect of adding the left and right ear signals prior to auditory processing. Taking the half-wave rectified signal representation into account, this is achieved by the square root of the product of $L(p,t)$ and $R(p,t)$, making BIC a midline channel most sensitive to sound images placed in the median plane. In addition to the three BI channels, the (monaural) left and right channels $L(p,t)$ and $R(p,t)$ are passed unaltered as outputs of the five-channel BMFD stage. They can be used for better-ear glimpsing in the following feature extraction stage (referred to as BEL, BER).
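The five BMFD outputs described above can be sketched as follows. This is a minimal illustration under several assumptions that are not stated in the text: the π/4 phase shift is evaluated at the center frequency of each auditory channel, i.e. τ(p) = 1/(8·fc(p)); the delay is rounded to whole samples; jitter is omitted; and no further processing is applied to the difference channels. The names `bmfd_channels` and `delay_samples` are hypothetical.

```python
import numpy as np

def delay_samples(x, n):
    """Delay a 1-D signal by n >= 0 samples, zero-padding the start."""
    if n == 0:
        return x.copy()
    out = np.zeros_like(x)
    out[n:] = x[:-n]
    return out

def bmfd_channels(left, right, center_freqs, fs, alpha=3.0):
    """Compute the five BMFD outputs.

    left, right: non-negative arrays of shape (channels, samples), e.g. the
    half-wave rectified and adapted auditory channel signals.
    """
    left = np.asarray(left, dtype=float)
    right = np.asarray(right, dtype=float)
    bil = np.empty_like(left)
    bic = np.empty_like(left)
    bir = np.empty_like(left)
    for p, fc in enumerate(center_freqs):
        tau = 1.0 / (8.0 * fc)        # 2*pi*fc*tau = pi/4 (assumed at fc)
        n = int(round(tau * fs))      # integer-sample approximation
        bil[p] = left[p] - alpha * delay_samples(right[p], n)
        bir[p] = right[p] - alpha * delay_samples(left[p], n)
        bic[p] = np.sqrt(left[p] * right[p])
    return {"BEL": left.copy(), "BER": right.copy(),
            "BIL": bil, "BIC": bic, "BIR": bir}

# Monaural example: right ear silent, two auditory channels.
fs = 44100
center_freqs = [500.0, 1000.0]
rng = np.random.default_rng(0)
left = rng.random((2, 1000))
right = np.zeros_like(left)
ch = bmfd_channels(left, right, center_freqs, fs)
```

With a silent right ear, `ch["BIL"]` equals `ch["BEL"]` and `ch["BIC"]` is zero, so in this sketch the left difference channel carries the same information as the left better-ear channel for monaural input.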

## C. Power and envelope power feature extraction stage

A first-order low-pass filter with cut-off frequency of 150 Hz [7, 52] is applied to the five output channels of the BMFD. The consecutive processing stages in each of the five BMFD channels are separated into two independent pathways where envelope power SNRs (EPSM; left-hand side of Figure 1), and power SNRs (PSM; right-hand side of Figure 1) are calculated. Indices for the BMFD channels are omitted for clarity in the following equations.

In the PSM path, the intensity (DC-power) features $P_{DC,j}(p)$ are calculated in short-time windows $j$ by taking the squared mean of the Hilbert envelope within each auditory channel $p$:

<!-- formula-not-decoded -->

The duration of the windows depends on the center frequency of the auditory channel: the lowest center frequency of 63 Hz corresponds to a window length of 45 ms and the highest center frequency to a window length of 8 ms. As proposed by Rhebergen and Versfeld [11], values for the window duration were taken from [53] and multiplied by 2.5. Intensities $P_{DC,j}(p)$ falling below the hearing threshold are set to 1e-10. Then $SNR_{DC,j}(p)$ is calculated between the target-plus-masker intensities $P_{DC,targ+mask,j}(p)$ and the masker intensities $P_{DC,mask,j}(p)$ according to

<!-- formula-not-decoded -->

For speech intelligibility predictions, a band importance function (BIF), as used in the ESII, is optionally applied as a multiplicative weight to the intensity $SNR_{DC}(p)$. Note that the BIF applied here is normalized by its highest value; thus the $SNR_{DC}$ in the auditory channel with the highest band importance remains unaffected by the (normalized) BIF, while all other channels are attenuated.

In the EPSM path, the envelopes are initially processed by a modulation filterbank consisting of bandpass filters ranging from 2 to 256 Hz with a Q-value of 1 and a third-order low-pass filter with a cut-off frequency of 1 Hz. Based on [54], only modulation filter center frequencies up to one fourth of the corresponding auditory channel center frequency are considered. Then the AC-coupled envelope power $P_{env,i}(p,n)$ is calculated for each auditory channel $p$, modulation channel $n$, and time window $i$, as proposed in [25], applying a lower limit of -27 dB for the envelope power, which reflects the limitation in human sensitivity to amplitude modulation (AM) [22, 52]. The envelope-power-based signal-to-noise ratio $SNR_{env,i}(p,n)$ between the target-plus-masker and masker envelope power is calculated according to [25]. A logarithmic weighting of the envelope power SNRs is then applied for auditory channels in which the intensity level of the target-plus-masker stimulus is below 35 dB, while envelope power SNRs in channels above that level are unaffected by the weighting.
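Two of the rules in the EPSM path, the selection of modulation filters relative to the auditory channel center frequency and the lower limit of the envelope power, can be sketched as follows. The octave spacing of the bandpass center frequencies (2, 4, ..., 256 Hz) is an assumption made for illustration, as the text only gives the range; the function names are hypothetical, and the 1-Hz low-pass filter is not covered.

```python
import numpy as np

# Assumed octave-spaced bandpass center frequencies from 2 to 256 Hz.
MOD_CENTER_FREQS = 2.0 ** np.arange(1, 9)

def usable_modulation_filters(auditory_fc_hz):
    """Keep modulation center frequencies up to one fourth of the
    auditory channel center frequency."""
    return MOD_CENTER_FREQS[MOD_CENTER_FREQS <= auditory_fc_hz / 4.0]

def apply_envelope_power_floor(p_env, floor_db=-27.0):
    """Limit the envelope power from below at floor_db."""
    return np.maximum(p_env, 10.0 ** (floor_db / 10.0))

low_channel = usable_modulation_filters(63.0)      # limit: 15.75 Hz
mid_channel = usable_modulation_filters(1000.0)    # limit: 250 Hz
floored = apply_envelope_power_floor(np.array([1e-4, 0.5]))
```

Under these assumptions, the 63-Hz auditory channel retains only the 2-, 4- and 8-Hz modulation filters, whereas the 1-kHz channel retains all bandpass filters up to 128 Hz.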

Taken together, the output of the model front end consists of intensity-weighted envelope power SNRs, $SNR_{envW,i}(p,n)$, and power SNRs, $SNR_{DC,j}(p)$, for each of the five BMFD output channels.

## D. Decision stage

The envelope power and power based SNRs are subjected to a task-specific decision stage for predicting psychoacoustic detection or discrimination thresholds and SI data.

## 1. Psychoacoustics

In the first step, the $SNR_{envW,i}(p,n)$ of the five front end output channels are combined by taking the largest value for each time frame within each auditory and modulation channel, resulting in $SNR_{envWC,i}(p,n)$. $SNR_{envWC,i}(p,n)$ is then averaged across temporal segments $i$ per modulation filter, resulting in a two-dimensional representation of the envelope power SNR, $SNR_{env}(p,n)$. The same procedure is applied to combine $SNR_{DC,j}(p)$ across the five channels, resulting in $SNR_{DCW,j}(p)$, which is then averaged across temporal segments $j$, resulting in a one-dimensional representation of power SNRs over auditory channels denoted as $SNR_{DC}(p)$.
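The channel selection and temporal averaging described above can be sketched as follows. For simplicity, the sketch assumes that all auditory and modulation channels share the same number of time frames, so that the SNRs fit into a rectangular array; in the model, the window duration depends on the channel. The array layout and function names are illustrative assumptions.

```python
import numpy as np

def combine_snr_env(snr_env_w):
    """snr_env_w: array of shape (5, frames, auditory, modulation).

    Take the largest value across the five BMFD channels in each
    time-frequency-modulation frame, then average across time frames.
    Returns an array of shape (auditory, modulation).
    """
    combined = np.max(snr_env_w, axis=0)
    return combined.mean(axis=0)

def combine_snr_dc(snr_dc):
    """snr_dc: array of shape (5, frames, auditory) -> shape (auditory,)."""
    return np.max(snr_dc, axis=0).mean(axis=0)

# Toy example: one auditory channel, one modulation channel, two frames.
snr = np.zeros((5, 2, 1, 1))
snr[2, 0, 0, 0] = 4.0   # third BMFD channel is best in the first frame
snr[0, 1, 0, 0] = 2.0   # first BMFD channel is best in the second frame
snr_env = combine_snr_env(snr)
snr_dc = combine_snr_dc(snr[..., 0])
```

In the toy example, different BMFD channels win in different frames, which is the frame-wise "glimpsing" across channels that the maximum operation implements.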

Finally, the envelope power and power SNRs [$SNR_{env}(p,n)$, $SNR_{DC}(p)$] are combined in the same manner as proposed in [26]:

<!-- formula-not-decoded -->

First, envelope power and power SNRs are combined across auditory and modulation channels (in the case of envelope power) and across auditory channels (in the case of power) [inner brackets in Eq. 6] and then multiplied with the empirically determined correction factors β = 0.21 and γ = 0.45. Both correction factors are identical to those proposed in [9, 26] and are needed because the assumption of independent observations in the auditory and modulation channels is violated by the use of overlapping bandpass filters. Finally, the domain (envelope power or power) providing the highest SNR value is chosen.

As in [9, 26], the decision criterion used in this study is based on [7], assuming that a signal is detected if the SNR > -6 dB (equivalent to a power ratio of 0.25), which can, according to [55], also be expressed as the sensitivity index $d' = (2 \cdot SNR)^{1/2} \approx (0.5)^{1/2}$.
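The relation between the SNR criterion and the sensitivity index can be checked numerically with a short sketch; the function names are hypothetical and the code only restates the arithmetic given above.

```python
import math

def dprime_from_snr_db(snr_db):
    """Convert an SNR in dB to d' = sqrt(2 * SNR), with SNR as a power ratio."""
    snr_linear = 10.0 ** (snr_db / 10.0)
    return math.sqrt(2.0 * snr_linear)

D_PRIME_CRITERION = math.sqrt(0.5)

def is_detected(snr_db):
    """Apply the detection criterion d' > (0.5)^(1/2)."""
    return dprime_from_snr_db(snr_db) > D_PRIME_CRITERION

d_at_criterion = dprime_from_snr_db(-6.0)
```

An SNR of -6 dB corresponds to a power ratio of about 0.251, so the resulting d' is only approximately equal to (0.5)^(1/2), which matches the approximation sign in the text.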

## 2. Speech intelligibility

The overall SNR is obtained by applying the same procedure as described for psychoacoustic predictions. The overall SNR is converted to the sensitivity index $d'$ by using equation (6) from [25] and finally transformed into percent correct responses.

## E. Model configurations

All model versions with binaural extension tested in this study had the same settings as the monaural GPSM versions in [9, 26]: For psychoacoustic experiments, auditory filters had a third-octave spacing ranging from 63 to 12500 Hz, while auditory filters ranged from 63 to 8000 Hz for SI experiments. For SI predictions, the band-importance weighting given in Table 3 of [56] was applied exclusively to the power SNRs. Each of the models used exactly the same set of parameters for all experiments.

## III. Psychoacoustic evaluation

## A. Monaural experiments

In this study, the same set of headphone-based monaural psychoacoustic experiments was used for model evaluation as in [9, 26]. Thus, these experiments are only briefly explained in the following. For more detailed information the reader is referred to [9] or the respective original publications.

Experiment 1 (Intensity discrimination and hearing thresholds). Just noticeable intensity level differences (JNDs) as a function of the reference level (20, 30, 40, 50, 60, 70 dB) were measured for a 1-kHz pure tone (in quiet) and a broadband noise ranging from 0.1 to 8 kHz [57]. The target interval contained an increased level $L_t = L_0 + \Delta L$, where $L_0$ corresponds to the reference level and $\Delta L$ corresponds to the JND, which can be rewritten in terms of intensities as $\Delta L = 10\log_{10}(I_t/I_0) = 10\log_{10}((\Delta I + I_0)/I_0)$. Hearing thresholds for frequencies from 50 Hz to 10 kHz were taken from [50].
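The relation between the level JND and the relative intensity increment can be illustrated with a short sketch; the function names are hypothetical.

```python
import math

def level_jnd_db(relative_increment):
    """Delta L in dB for a relative intensity increment Delta I / I0."""
    return 10.0 * math.log10(1.0 + relative_increment)

def relative_increment(delta_l_db):
    """Delta I / I0 for a level difference Delta L in dB."""
    return 10.0 ** (delta_l_db / 10.0) - 1.0

doubling_db = level_jnd_db(1.0)        # Delta I = I0, i.e. doubled intensity
one_db_step = relative_increment(1.0)  # increment for a 1-dB level JND
```

For example, a 1-dB level difference corresponds to an intensity increment of roughly 26% of the reference intensity.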

In Experiment 2 (Spectral masking with narrow-band and pure-tone maskers), the masking patterns for four different signal-masker combinations of noise-in-tone (NT), noise-in-noise (NN), tone-in-tone (TT) and tone-in-noise (TN) were taken from [58]. The noise was a Gaussian noise with a bandwidth of 80 Hz, while the tone was a sinusoidal stimulus. The masker had a fixed center frequency of 1 kHz, while the signal had frequencies of 0.25, 0.5, 0.75, 0.9, 1.0, 1.1, 1.25, 1.5, 2, 3, and 4 kHz. All signal-masker combinations had random phases, with the exception of the TT condition, where each stimulus had a fixed phase of 90°. Data for the masker levels of 45 and 85 dB are considered here.

Experiment 3 (Tone in noise masker) was taken from [24] and reflects detection thresholds of a 2-kHz pure tone signal in the presence of a band-limited (0.02 to 5 kHz) Gaussian noise masker for signal durations from 5 to 200 ms. The masker had a duration of 500 ms and the signal was temporally centered in the masker. The presentation level of the masker was 65 dB SPL.

Experiment 4 (AM-depth discrimination) is based on the study of [59], where AM-depth discrimination functions for a 16-Hz sinusoidal AM with respect to fixed reference AM depths were measured for sinusoidally modulated broadband noise (1.952-4 kHz) and pure-tone carriers (4 kHz) at an overall presentation level of 65 dB SPL. The AM depth of the (standard) reference signal, $m_s$, ranged from -28 to -3 dB in 5-dB steps. The increased AM depth of the target signal is given by $m_c = m_s\sqrt{1 + m_{inc}}$. In the measurement, the fractional increment $m_{inc} = (m_c^2 - m_s^2)/m_s^2$ was varied in dB ($10\log_{10} m_{inc}$).
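The mapping between the reference depth, the fractional increment and the target depth can be illustrated with a short sketch. It assumes the common convention that AM depth in dB is 20·log10(m), which the text does not state explicitly; the function names are hypothetical.

```python
import math

def target_depth_db(ms_db, minc_db):
    """Target AM depth in dB for a reference depth ms_db (20*log10(m), assumed)
    and a fractional increment minc_db (10*log10(m_inc))."""
    ms = 10.0 ** (ms_db / 20.0)
    minc = 10.0 ** (minc_db / 10.0)
    mc = ms * math.sqrt(1.0 + minc)
    return 20.0 * math.log10(mc)

def fractional_increment_db(ms_db, mc_db):
    """Inverse mapping: 10*log10((mc^2 - ms^2) / ms^2)."""
    ms = 10.0 ** (ms_db / 20.0)
    mc = 10.0 ** (mc_db / 20.0)
    return 10.0 * math.log10((mc ** 2 - ms ** 2) / ms ** 2)

mc_db = target_depth_db(-18.0, 0.0)   # m_inc = 1 doubles the squared depth
```

A fractional increment of 0 dB (m_inc = 1) doubles the squared modulation depth and therefore raises the depth by about 3 dB relative to the reference.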

In Experiment 5 (AM detection), temporal modulation transfer functions (TMTFs) for three narrow-band noise carriers with bandwidths of 3, 31, and 314 Hz [5] and for broadband noise carriers [22] were considered. The narrow-band noise carriers were centered at 5 kHz and sinusoidal AM with modulation frequencies of 3, 5, 10, 20, 30, 50, and 100 Hz was used. The narrow-band carrier level was 65 dB SPL and the stimuli were adjusted to have equal power after AM. The broadband noise carriers ranged from 0.001 to 6 kHz and sinusoidal AM with modulation frequencies of 4, 8, 16, 32, 64, 128, 256, 512, and 1024 Hz was applied. The level of the broadband carriers was 77 dB SPL.

Experiment 7 (Amplitude modulation masking) was taken from [9] and measured AM masking and detection thresholds for a target sinusoidal amplitude modulation (SAM) in the presence of a sinusoidal or square-wave masker modulation. The effects of varying the carrier type (broadband and pure-tone carriers), masker waveform (sinusoidal or square-wave), and modulation rate of the target (4 and 16 Hz) and masker (16 and 64 Hz) were examined in four different stimulus configurations, which are listed in Table 1 of [9].

## B. Binaural experiments

Six binaural headphone experiments from the literature were used for the model evaluation. The maskers used in the binaural experiments had a duration of 400 ms unless otherwise stated. In several binaural experiments, target and masker signals comprise interaural manipulations indicated by subscripts: The subscript 0 indicates no interaural phase shift (in phase), the subscript π indicates an interaural phase shift of π (out of phase), and the subscript m indicates that the corresponding signal was presented monaurally. Accordingly, an N0Sπ stimulus indicates that the noise signal N0 is interaurally in phase, while the target signal Sπ is interaurally out of phase. The experiments are only briefly described in the following and the reader is referred to [38, 39] for Experiments 1-5 or to the original literature for further details.

Experiment 1 (ITD discrimination) is based on the ITD experiments from [60, 61], where discrimination thresholds for ITDs were measured for pure tone stimuli at various frequencies. The reference stimuli were presented diotically at a level of 65 dB SPL, while the target stimuli were presented at the same level but had an ITD. The tested frequencies ranged from 90 to 1500 Hz.

Experiment 2 (IID discrimination) is based on the IID experiments from [62, 63], where thresholds for IID were measured for pure tones at various frequencies ranging from 62.5 to 4000 Hz. The reference stimuli were presented diotically at a level of 65 dB SPL. The target stimuli had an IID, resulting in an overall level of (65+IID/2) dB SPL for the left channel and (65 - IID/2) dB SPL for the right channel.

Experiment 3 (Frequency and interaural phase relationships in wideband conditions) is based on experiments of [3, 4, 64, 65], where thresholds of the four binaural conditions N0Sπ, NπS0, N0Sm, and NπSm, were measured as a function of the frequency of the pure tone signal (125, 250, 500, 1000, 2000, and 4000 Hz). The masker was a low-pass noise with a cutoff frequency of 8 kHz and a spectral level of 40 dB/Hz.

Experiment 4 (N0Sπ depending on signal duration) is based on experiments of [66-69], where N0Sπ detection thresholds were measured as a function of the target signal (Sπ) duration. The masker signal (N0) was a 500-ms wideband noise with a spectral density of 36.2 dB/Hz. The target signal was a pure tone of either 500 Hz or 4 kHz with signal durations ranging from 2 to 256 ms.

Experiment 5 (Temporal phase transition) is based on the experiments of Kollmeier and Gilkey [70], where N0NπSπ, NπN0Sπ, NπNπ,-15dBSπ, and Nπ,-15dBNπSπ thresholds were measured as a function of the temporal position of the target signal (Sπ) relative to the masker-phase transition (NπN0 or N0Nπ) to estimate the temporal resolution of the binaural auditory system. The broadband noise maskers with a duration of 750 ms were bandpass filtered from 100 to 2000 Hz and had a spectral level of 40 dB/Hz. The N0Nπ masker started with an interaural phase of N0 that switched to Nπ after 375 ms. Accordingly, NπN0 started with a 375-ms interaurally out-of-phase segment followed by a 375-ms in-phase segment. The interaurally out-of-phase masker NπNπ,-15dB was attenuated by 15 dB 375 ms after its onset. The interaurally out-of-phase masker Nπ,-15dBNπ was amplified by 15 dB 375 ms after its onset. Sπ was an interaurally out-of-phase pure tone of 500 Hz with a duration of 20 ms. The masked threshold was measured as a function of the delay time between the transition of the noise segments and the signal offset.

Experiment 6 (Time-intensity-trading) is based on experiments of Hafter and Carrier [71], where $d'$ was measured for several combinations of fixed ITDs (0, +10, +20, +30, and +40 µs; positive sign indicates left ear leading) and varying IIDs (ranging from 0 to -3 dB; negative sign indicates right ear more intense) to examine to which extent time differences can be traded against level differences. The reference signal was a diotic pure tone of 500 Hz (centered sound image). The test signal had an ITD promoting lateralization to the left side and an IID promoting lateralization to the right side. The lowest $d'$ measured for a certain IID at a fixed ITD indicates that the test signal was most similar to a centered image.

## C. Results and discussion

Predictions from three model versions were compared to disentangle the contribution of the binaural interaction (BIL, BIC, BIR) and better-ear (BEL, BER) BMFD channels. Model predictions based on all five channels are abbreviated as BMFD and represented by open circles. Model predictions based on the three binaural interaction channels are abbreviated as BIL,C,R (open squares), while predictions based on only the left and right BI channels are abbreviated as BIL,R (open diamonds).

## 1. Monaural Experiments

The upper part of Table 1 reports root-mean-square errors (RMSEs) and the coefficient of determination (R²) between experimental data and predictions based on BMFD, BIL,R, and the monaural mr-GPSM [26]. For the monaural experiments, stimuli were only provided to the left-ear input channel of the BMFD and the right-ear input channel was set to zero. As is evident from the RMSE and R² values, BMFD predictions largely agree with those from the monaural mr-GPSM. Given the similarity of both models for the monaural data, detailed figures comparing the subjective and predicted data are not shown here. The similarity is expected, as the BMFD involves only a few modifications that potentially influence monaural prediction performance. As shown in Table 1, prediction performance was not degraded when only BIL and BIR (BIL,R) were used instead of all five BMFD outputs. This result was also expected, because when the right input channel is set to zero, BIL only depends on the left ear channel, and in such monaural conditions BIL is equal to BEL. Accordingly, a reduced number of BMFD output channels would be sufficient to capture important monaural psychoacoustic effects, but may not be sufficient to account for all the binaural aspects assumed to be important to explain a variety of data from binaural psychoacoustic and SI experiments.

To summarize, for monaural experiments tested in this study the GPSM with binaural BMFD extension largely maintains the prediction performance of the monaural mr-GPSM.

## 2. Binaural Experiments

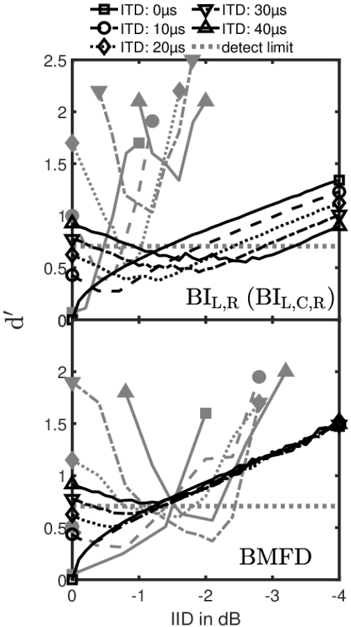

In Figures 2 - 6, subjective and predicted data for the binaural experiments are represented by closed and open symbols, respectively. The lower part of Table 1 reports root-mean square errors (RMSE) and the coefficient of determination (R²) between experimental data and predictions based on BMFD, BIL,C,R, and BIL,R.

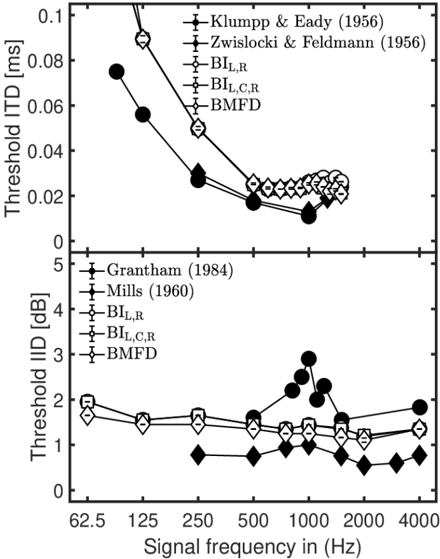

As illustrated in the upper panel of Figure 2, the data of [60, 61] show that ITD thresholds decrease with increasing target tone frequency, with the smallest ITD threshold of about 0.012 ms found at 1 kHz. These decreasing threshold ITDs represent a more or less constant IPD of about 0.05 rad (~ 3°). For frequencies above 1 kHz, measured ITD thresholds increase, which is due to the reduced phase-locking ability of the IHCs at higher frequencies. For all three model versions, predicted ITD thresholds are higher than observed in the data, particularly at low frequencies. Here a nearly constant IPD of about 0.07 - 0.08 rad (~ 4°-5°) was predicted, which is higher than the nearly constant IPD of about 3° in the data. In agreement with the data, predicted ITD thresholds decrease with increasing frequency, reaching a plateau at 500 Hz and above. At about 700 Hz, all three models predicted the lowest ITD threshold of about 0.023 ms. For frequencies above 900 Hz, BIL,R predictions showed increased ITD thresholds, while predictions based on BIL,C,R and BMFD showed increased thresholds up to about 1200 Hz followed by slightly decreased thresholds up to 1500 Hz. For all three model versions, ITD thresholds slightly decrease for frequencies above 1.5 kHz.
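The link between a constant threshold IPD and an ITD threshold that falls with frequency follows from the pure-tone relation IPD = 2π · f · ITD. The sketch below illustrates this relation; the function names are hypothetical, and the listed frequencies are chosen for illustration rather than taken from the experiments.

```python
import math


def itd_to_ipd(itd_s, freq_hz):
    """Interaural phase difference (rad) of a pure tone for a given ITD (s)."""
    return 2.0 * math.pi * freq_hz * itd_s


def ipd_to_itd(ipd_rad, freq_hz):
    """ITD (s) that corresponds to a given IPD (rad) at a pure-tone frequency."""
    return ipd_rad / (2.0 * math.pi * freq_hz)


# A constant IPD of 0.05 rad implies an ITD that halves with each octave.
for freq_hz in (125, 250, 500, 1000):
    itd_us = ipd_to_itd(0.05, freq_hz) * 1e6
    print(f"{freq_hz:5d} Hz: ITD = {itd_us:.1f} us")
```

Under this relation, a constant IPD of 0.05 rad corresponds to an ITD of roughly 16 µs at 500 Hz, and a larger constant IPD shifts the whole ITD curve upwards by the same factor at every frequency.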

Figure 2: Empirical data (filled symbols) and model predictions (open symbols) for ITD thresholds in ms (upper panel) and IID thresholds in dB (lower panel).

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Line Graph: Threshold ITD and IID Across Signal Frequencies

### Overview

The image contains two vertically stacked line graphs comparing threshold values for Interaural Time Difference (ITD) and Interaural Intensity Difference (IID) across a range of signal frequencies (62.5 Hz to 4000 Hz). The top panel shows threshold ITD in milliseconds (ms), while the bottom panel shows threshold IID in decibels (dB). Multiple data series are plotted, each representing different studies or models (e.g., Klumpp & Eady, 1956; Zwislocki & Feldmann, 1956; BI_L,R; BI_L,C,R; BMFD).

### Components/Axes

- **X-axis**: Signal frequency in Hz (62.5, 125, 250, 500, 1000, 2000, 4000).

- **Y-axis (Top Panel)**: Threshold ITD in ms (0 to 0.1).

- **Y-axis (Bottom Panel)**: Threshold IID in dB (0 to 5).

- **Legends**:

- **Top Panel**:

- Klumpp & Eady (1956): Black circles with error bars.

- Zwislocki & Feldmann (1956): Black diamonds with error bars.

- BI_L,R: Open circles.

- BI_L,C,R: Open squares.

- BMFD: Open diamonds.

- **Bottom Panel**:

- Grantham (1984): Black circles with error bars.

- Mills (1960): Black diamonds with error bars.

- BI_L,R: Open circles.

- BI_L,C,R: Open squares.

- BMFD: Open diamonds.

### Detailed Analysis

#### Top Panel (Threshold ITD)

- **Klumpp & Eady (1956)**: Starts at ~0.08 ms at 62.5 Hz, decreases sharply to ~0.02 ms at 4000 Hz.

- **Zwislocki & Feldmann (1956)**: Begins at ~0.06 ms at 62.5 Hz, declines to ~0.02 ms at 4000 Hz.

- **BI_L,R**: Starts at ~0.07 ms, drops to ~0.02 ms.

- **BI_L,C,R**: Similar trend to BI_L,R but slightly higher at lower frequencies.

- **BMFD**: Starts at ~0.06 ms, converges with other series at higher frequencies.

#### Bottom Panel (Threshold IID)

- **Grantham (1984)**: Starts at ~2 dB, remains relatively flat (~1.5–2 dB) across frequencies.

- **Mills (1960)**: Peaks at ~3 dB at 62.5 Hz, dips to ~1 dB at 4000 Hz.

- **BI_L,R**: Starts at ~2 dB, decreases to ~1 dB.

- **BI_L,C,R**: Similar to BI_L,R but slightly higher at lower frequencies.

- **BMFD**: Starts at ~1.5 dB, remains stable (~1–1.5 dB).

### Key Observations

1. **Frequency Dependence**: Both ITD and IID thresholds generally decrease with increasing frequency, suggesting better auditory localization at higher frequencies.

2. **Convergence**: Most data series converge at higher frequencies (2000–4000 Hz), indicating agreement across studies/models at these ranges.

3. **Anomalies**:

- Mills (1960) shows a peak in IID at 62.5 Hz, diverging from the general trend.

- BI_L,C,R consistently exhibits slightly higher thresholds than BI_L,R in both panels.

4. **Model Variations**: BMFD and BI_L,R show the smallest thresholds, while older studies (e.g., Klumpp & Eady) have higher values.

### Interpretation

The data demonstrates a clear trend where auditory localization thresholds (ITD and IID) improve with higher signal frequencies, likely due to enhanced temporal and spectral resolution in the auditory system. The convergence of modern models (BI_L,R, BI_L,C,R, BMFD) at higher frequencies suggests these frameworks better capture the physiological mechanisms of sound localization. Older studies (e.g., Klumpp & Eady, Mills) exhibit higher thresholds, possibly reflecting limitations in experimental design or theoretical assumptions. The anomaly in Mills (1960) for IID at low frequencies may indicate unique experimental conditions or species-specific differences. Overall, the graphs highlight the importance of frequency in auditory processing and the evolution of modeling approaches over time.

</details>

The lower panel of Figure 2 shows measured IID thresholds adopted from the studies of [62, 63]. Across frequencies ranging from 250 Hz to 4 kHz, Mills [62] measured rather similar IID thresholds (average threshold of about 0.8 dB), where the maximum of about 1 dB was reached at 1 kHz. Grantham [63] observed IID thresholds that were overall about 1.3 dB higher, with substantially increased thresholds around 1 kHz. Predicted IID thresholds for the three model versions slightly decreased from about 2 dB at 62.5 Hz to about 1.1 dB at 2 kHz, and increased again for higher frequencies. The predicted IID pattern agrees well with the average of both data sets. Between 62.5 Hz and 2 kHz, predicted thresholds for BIL,R and BIL,C,R are on average 0.2 dB higher than those for BMFD.

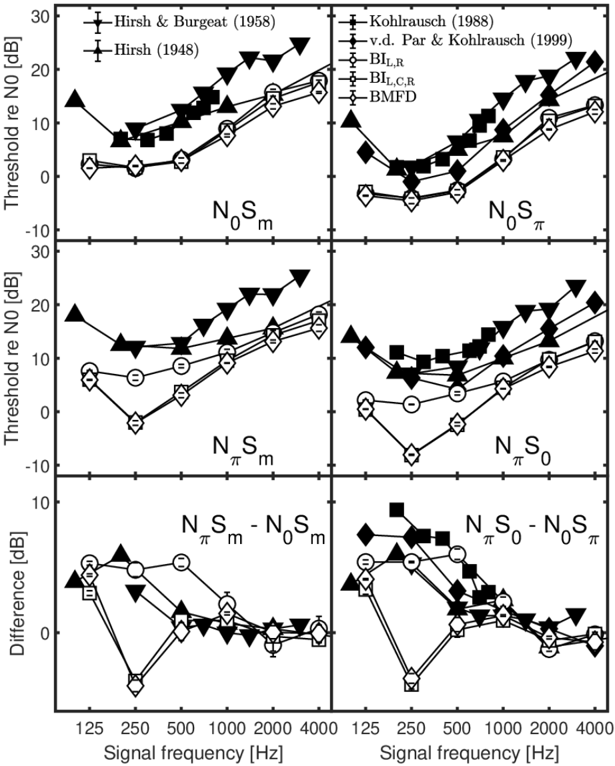

The upper four panels of Figure 3 show measured N0Sm, NπSm, N0Sπ, and NπS0 thresholds adopted from the studies of [3, 4, 64, 65]. All threshold patterns show a V shape with a minimum at 250 Hz. For the monaural target (Sm), thresholds are lower for N0Sm than for NπSm, while for the binaural target (Sπ or S0), thresholds are lower for N0Sπ than for NπS0. The resulting threshold differences of NπSm-N0Sm and NπS0-N0Sπ are shown in both lower panels of Figure 3. The largest differences, up to about 9.5 dB, occur for signal frequencies below 500 Hz. BIL,R predictions (open circles) show a similar overall pattern to the data, and accordingly the predicted NπSm-N0Sm and NπS0-N0Sπ patterns largely agree with the data. For NπSm and NπS0, both middle panels in Figure 3 show larger deviations between the data and the BIL,C,R and BMFD predictions at 250 Hz and 500 Hz. This deviation arises from the contribution of the BIC channel, which overestimates human performance for the NπSm and NπS0 conditions. Accordingly, large deviations between data and predictions are observed in the difference patterns in the lower two panels for BIL,C,R and BMFD at 250 Hz.

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Multi-Panel Graph: Threshold and Difference Plots Across Signal Frequencies

### Overview

The image contains six subplots arranged in a 2x3 grid, comparing threshold values and differences across signal frequencies (125–4000 Hz). Each plot uses distinct symbols and lines to represent data from historical and modern studies (e.g., Hirsch & Burgeat 1958, Kohlrausch 1988). The graphs focus on relationships between noise thresholds (N₀), signal components (Sₘ, S₀, S_π), and their differences.

---

### Components/Axes

1. **X-Axes**:

- All plots share the same x-axis: **Signal frequency [Hz]** (125–4000 Hz).

- Subplots are labeled with terms like **N₀Sₘ**, **N₀S_π**, **N_πSₘ**, etc., indicating specific noise-signal relationships.

2. **Y-Axes**:

- **Top Row**: **Threshold re N₀ [dB]** (range: -30 to 30 dB).

- **Bottom Row**: **Difference [dB]** (range: -10 to 10 dB).

3. **Legends**:

- Positioned in the **top-right** of each plot.

- Symbols include:

- **Triangles** (▲): Hirsch & Burgeat (1958)

- **Squares** (■): Hirsch (1948)

- **Circles** (○): Kohlrausch (1988)

- **Diamonds** (◆): v.d. Par & Kohlrausch (1999)

- **Other symbols**: BI_L,R, BI_L,C,R, BMFDI (exact labels unclear due to resolution).

---

### Detailed Analysis

#### Top Row: Threshold Plots

1. **N₀Sₘ (Top-Left)**:

- **Trend**: All lines show an **upward trend** with increasing frequency.

- **Key Data**:

- Hirsch & Burgeat (1958): Peaks at ~2000 Hz (~25 dB).

- Hirsch (1948): Lower amplitude, peaks at ~1000 Hz (~15 dB).

- Kohlrausch (1988): Smooth rise, ~10 dB at 4000 Hz.

2. **N₀S_π (Top-Right)**:

- **Trend**: **Downward trend** at lower frequencies, then stabilizes.

- **Key Data**:

- v.d. Par & Kohlrausch (1999): Sharp drop to ~-10 dB at 500 Hz.

- BMFDI: Minimal variation, ~0 dB across frequencies.

#### Middle Row: Threshold Plots

3. **N_πSₘ (Middle-Left)**:

- **Trend**: **V-shaped** pattern (dips at ~1000 Hz).

- **Key Data**:

- BI_L,R: Lowest dip (~-5 dB at 1000 Hz).

- BI_L,C,R: Higher amplitude, ~5 dB at 2000 Hz.

4. **N_πS₀ (Middle-Right)**:

- **Trend**: **Upward trend** with frequency.

- **Key Data**:

- BMFDI: Steep rise, ~20 dB at 4000 Hz.

- Kohlrausch (1988): Gradual increase, ~15 dB at 2000 Hz.

#### Bottom Row: Difference Plots

5. **N_πSₘ - N₀Sₘ (Bottom-Left)**:

- **Trend**: **U-shaped** with minima at ~500 Hz.

- **Key Data**:

- Hirsch (1948): Sharp dip to ~-8 dB at 500 Hz.

- v.d. Par & Kohlrausch (1999): Shallow curve, ~-2 dB at 1000 Hz.

6. **N_πS₀ - N₀S_π (Bottom-Right)**:

- **Trend**: **Downward trend** with frequency.

- **Key Data**:

- BMFDI: Steep decline, ~-10 dB at 4000 Hz.

- Hirsch & Burgeat (1958): Moderate drop, ~-5 dB at 2000 Hz.

---

### Key Observations

1. **Historical vs. Modern Data**:

- Older studies (Hirsch 1948, Hirsch & Burgeat 1958) show sharper peaks and higher variability.

- Modern models (Kohlrausch 1988, v.d. Par & Kohlrausch 1999) exhibit smoother trends.

2. **Signal Interactions**:

- Differences (bottom row) highlight discrepancies between noise-signal relationships (e.g., N_πSₘ - N₀Sₘ vs. N_πS₀ - N₀S_π).

3. **Anomalies**:

- BMFDI data in the bottom-right plot shows the steepest decline, suggesting unique assumptions in their model.

- BI_L,R in the middle-left plot has the lowest threshold, indicating potential sensitivity to specific noise components.

---

### Interpretation

1. **Model Consistency**:

- Modern studies (Kohlrausch, v.d. Par) align more closely with each other, suggesting refined methodologies.

- Older studies diverge significantly, possibly due to measurement limitations or theoretical assumptions.

2. **Frequency Dependence**:

- Thresholds and differences are strongly frequency-dependent, with critical regions at 500–2000 Hz.

- The U-shaped and V-shaped trends imply non-linear interactions between noise and signal components.

3. **Practical Implications**:

- The differences (bottom row) could guide noise reduction strategies by identifying frequency ranges where noise-signal mismatches are most pronounced.

- Discrepancies between models (e.g., BMFDI vs. Kohlrausch) highlight the need for validation across experimental setups.

---

### Spatial Grounding & Cross-Reference

- **Legend Consistency**: All symbols in each plot match their legend entries (e.g., triangles always represent Hirsch & Burgeat).

- **Positioning**: Legends are consistently placed in the top-right, ensuring clarity without obscuring data.

---

### Conclusion

This graph demonstrates evolving understanding of noise-signal interactions over time, with modern models showing greater consistency and precision. The differences between plots underscore the importance of frequency-specific analysis in noise management.

</details>

Figure 3: Empirical data (filled symbols) and model predictions (open symbols) for masked thresholds for wideband N0Sm (upper-left panel), N0Sπ (upper-right panel), NπSm (middle-left panel), and NπS0 (middle-right panel) conditions as a function of the frequency of the signal. Differences in thresholds between the NπSm and N0Sm are shown in the lower-left panel, while the lower-right panel represents differences in threshold between NπS0 and N0Sπ.

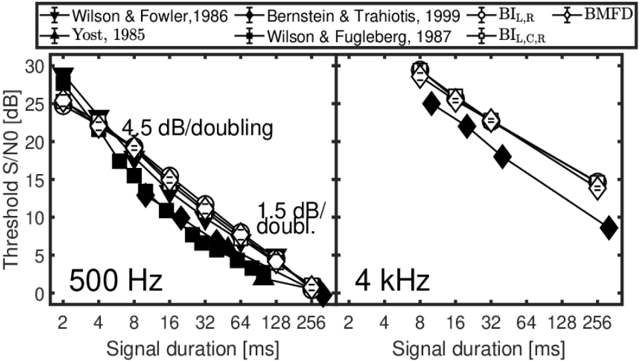

Measured N0Sπ thresholds as a function of signal duration, adopted from [66-69], are shown in Figure 4. For the 500-Hz target signal, thresholds decrease with a slope of about 4.5 dB per duration doubling for short signal durations, while for longer signal durations a slope of about 1.5 dB per duration doubling is observed. For the 4 kHz target signal, the data show a slope of about 3 dB per duration doubling. All three model versions produced nearly identical thresholds, which were on average higher than those observed in the data. For both signal frequencies, predicted thresholds decreased by about 3 dB per doubling of the signal duration, as the signal's energy increases by 3 dB per duration doubling. Such an increase in signal duration means that more short-time frames of the model provide an SNR advantage, which effectively lowers the threshold.
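The 3 dB figure follows from the fact that, at constant signal power, doubling the duration doubles the energy, and 10·log10(2) ≈ 3.01 dB. The short sketch below illustrates this and shows one way to express a threshold change as a slope in dB per duration doubling. The function names and the example thresholds are hypothetical and are not values read from Figure 4.

```python
import math


def energy_gain_db(duration_ratio):
    """Energy change in dB when the duration is scaled at constant power."""
    return 10.0 * math.log10(duration_ratio)


def slope_db_per_doubling(dur1_ms, thr1_db, dur2_ms, thr2_db):
    """Threshold decrease per duration doubling between two measurement points."""
    doublings = math.log2(dur2_ms / dur1_ms)
    return (thr1_db - thr2_db) / doublings


print(energy_gain_db(2.0))  # about 3.01 dB per doubling
# Hypothetical thresholds: a 6 dB drop from 10 ms to 40 ms is two doublings.
print(slope_db_per_doubling(10.0, 20.0, 40.0, 14.0))
```

A slope steeper than about 3 dB per doubling, as in the 500-Hz data at short durations, therefore cannot be explained by energy integration alone.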

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Line Graph: Threshold S/N0 vs. Signal Duration at 500 Hz and 4 kHz

### Overview

The image contains two side-by-side line graphs comparing threshold signal-to-noise ratio (S/N0) in decibels (dB) against signal duration in milliseconds (ms) for two frequencies: 500 Hz (left) and 4 kHz (right). Multiple data series are plotted, each representing different studies or methods, with distinct markers and line styles. The graphs show exponential decay trends, with annotations indicating dB/doubling rates.

---

### Components/Axes

- **X-axis**: "Signal duration [ms]" (logarithmic scale: 2, 4, 8, 16, 32, 64, 128, 256 ms)

- **Y-axis**: "Threshold S/N0 [dB]" (linear scale: 0–30 dB)

- **Legends**:

- **Left Plot (500 Hz)**:

- Wilson & Fowler, 1986 (black squares)

- Bernstein & Trahiotis, 1999 (black circles)

- Yost, 1985 (black diamonds)

- Wilson & Fugleberg, 1987 (black triangles)

- BI_L,R (white circles)

- BMFD (white diamonds)

- **Right Plot (4 kHz)**:

- Same legend entries as left plot, but only BI_L,R and BMFD are visible.

- **Annotations**:

- "4.5 dB/doubling" (left plot)

- "1.5 dB/doubling" (right plot)

---

### Detailed Analysis

#### Left Plot (500 Hz)

- **Trend**: Steeper exponential decay. All data series converge near 0 dB at 256 ms.

- **Key Data Points**:

- **Wilson & Fowler, 1986**: Starts at ~28 dB (2 ms), ends at ~1 dB (256 ms).

- **Bernstein & Trahiotis, 1999**: Starts at ~26 dB (2 ms), ends at ~0.5 dB (256 ms).

- **Yost, 1985**: Starts at ~24 dB (2 ms), ends at ~0 dB (256 ms).

- **Wilson & Fugleberg, 1987**: Starts at ~22 dB (2 ms), ends at ~0.5 dB (256 ms).

- **BI_L,R**: Starts at ~20 dB (2 ms), ends at ~0 dB (256 ms).

- **BMFD**: Starts at ~18 dB (2 ms), ends at ~0 dB (256 ms).

#### Right Plot (4 kHz)

- **Trend**: Slower exponential decay. Lines diverge slightly at longer durations.

- **Key Data Points**:

- **BI_L,R**: Starts at ~15 dB (2 ms), ends at ~5 dB (256 ms).

- **BMFD**: Starts at ~12 dB (2 ms), ends at ~3 dB (256 ms).

---

### Key Observations

1. **Frequency Dependence**: The 500 Hz plot shows a steeper decline (4.5 dB/doubling) compared to 4 kHz (1.5 dB/doubling), suggesting frequency-dependent sensitivity to signal duration.

2. **Convergence**: At 500 Hz, all methods converge near 0 dB at 256 ms, indicating diminishing returns for longer signals. At 4 kHz, divergence persists, implying sustained sensitivity.

3. **Method Variability**: At 500 Hz, Wilson & Fowler (1986) consistently reports the highest thresholds, while BI_L,R and BMFD show the lowest. At 4 kHz, BI_L,R and BMFD diverge, with BI_L,R maintaining higher thresholds.

---

### Interpretation

The data demonstrates that **longer signal durations improve threshold S/N0**, but the rate of improvement depends on frequency. At 500 Hz, all methods achieve near-optimal thresholds by 256 ms, suggesting saturation. At 4 kHz, thresholds remain elevated even at longer durations, indicating that higher frequencies may require additional signal processing or longer durations for optimal detection. The divergence between BI_L,R and BMFD at 4 kHz highlights methodological differences in handling higher-frequency signals. These findings could inform auditory system modeling or signal design for hearing aids.

</details>

Figure 4: Empirical data (filled symbols) and model predictions (open symbols) for N0Sπ thresholds as a function of the signal duration. Data and predictions are shown for signal frequencies of 500 Hz (left panel) and 4 kHz (right panel).

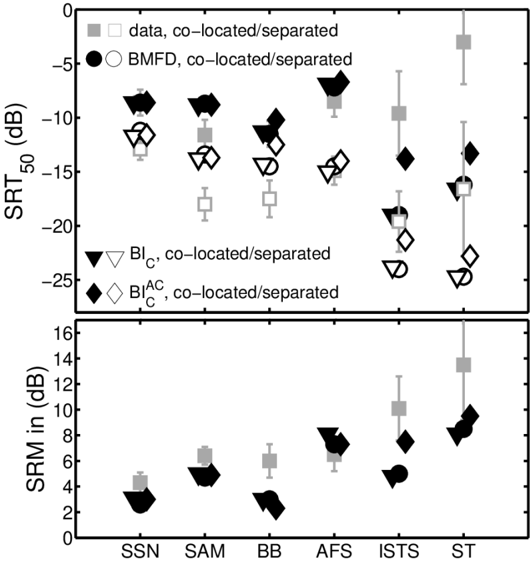

In Figure 5, masked thresholds from four subjects measured by Kollmeier and Gilkey [70] are shown. In the N0NπSπ and NπN0Sπ conditions, lower thresholds (large BMLD) were measured for target signals (Sπ) in the interaurally in-phase masker segments (N0) than for Sπ in the interaurally out-of-phase masker segments (Nπ). Similarly, for the corresponding 'monaural' NπNπ,-15dBSπ and Nπ,-15dBNπSπ conditions, Sπ in attenuated Nπ segments resulted in lower thresholds than Sπ in unattenuated Nπ segments. While a gradual release from masking was observed when shifting Sπ from the Nπ segment into the N0 segment (upper-left panel), a very steep release from masking was observed for the corresponding 'monaural' NπNπ,-15dBSπ condition (lower-left panel). A similar behavior was found for the N0NπSπ and the Nπ,-15dBNπSπ conditions. The three model versions predict similar masked thresholds, and the predicted steepness of the transition is the same for all four conditions. The predicted BMLD in NπN0Sπ (upper-left panel) and the predicted masking effect in N0NπSπ (upper-right panel) are somewhat smaller than observed in the data. Overall, the predictions largely agree with the experimental data, which is also indicated by reasonable RMSE and R² values of about 2.7 dB and 0.8, respectively.

Figure 5: Empirical data (filled symbols) and model predictions (open symbols) for NπN0Sπ (upper-left panel) and N0NπSπ (upper-right panel) thresholds as a function of the temporal position of the signal center relative to the masker-phase transition. Monaural thresholds for NπNπ,-15dBSπ and Nπ,-15dBNπSπ are shown in the lower-left and lower-right panels. Filled symbols represent four subjects measured by Kollmeier and Gilkey [70].

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Chart/Diagram Type: Masked Threshold vs. Delay Time for Different Configurations

### Overview

The image contains four quadrants of graphs, each representing masked threshold (dB) as a function of delay time (ms) for distinct experimental configurations. Each quadrant has a unique combination of "N" and "S" parameters (e.g., NNS, N-15dB S), with data points plotted using symbols corresponding to subjects (Subj A-D), baselines (BL_R, BL_CR), and BMFD. The graphs show sharp transitions in masked threshold around specific delay times, suggesting dynamic responses to experimental conditions.

### Components/Axes

- **X-axis (Delay Time, ms)**:

- Top-left and top-right graphs: -200 to 200 ms.

- Bottom-left and bottom-right graphs: -300 to 100 ms.

- **Y-axis (Masked Threshold, dB)**:

- All graphs: -15 to 5 dB.

- **Legends**:

- **Top-left corner**:

- Symbols:

- Circles: Subj A

- Triangles: Subj B

- Squares: Subj C

- Diamonds: Subj D

- Black crosses: BL_R

- White crosses: BL_CR

- Black triangles: BMFD

- **Axis labels**:

- Top-left graph: "NNS" (top-left), "NNS" (bottom-left).

- Top-right graph: "N0ππ" (top-left), "N0ππ" (bottom-left).

- Bottom-left graph: "Nππ-15dB" (top-left), "Nππ-15dB" (bottom-left).

- Bottom-right graph: "Nπ-15dB ππ" (top-left), "Nπ-15dB ππ" (bottom-left).

### Detailed Analysis

#### Top-Left Graph (NNS)

- **Trend**:

- Masked threshold starts at ~0 dB, drops sharply to ~-15 dB around -100 ms, then rises back to ~0 dB at 200 ms.

- **Data Points**:

- Subj A (circles): Peaks at ~0 dB at -200 ms, drops to ~-15 dB at -100 ms, rises to ~0 dB at 200 ms.

- Subj B (triangles): Similar trend but with slightly less depth in the trough.

- BL_R (black crosses): Steeper decline, reaching ~-15 dB at -100 ms.

- BMFD (black triangles): Gradual decline to ~-10 dB at 200 ms.

#### Top-Right Graph (N0ππ)

- **Trend**:

- Masked threshold starts at ~0 dB, declines gradually to ~-5 dB at 200 ms.

- **Data Points**:

- Subj C (squares): Steady decline from ~0 dB to ~-5 dB.

- BL_CR (white crosses): Slightly less steep decline, ending at ~-3 dB.

- BMFD (black triangles): Minimal change, remaining near 0 dB.

#### Bottom-Left Graph (Nππ-15dB)

- **Trend**:

- Masked threshold starts at ~0 dB, drops sharply to ~-15 dB at -150 ms, then rises to ~-5 dB at 100 ms.

- **Data Points**:

- Subj D (diamonds): Sharpest drop, reaching ~-15 dB at -150 ms.

- BL_R (black crosses): Similar sharp decline but recovers faster.

- BMFD (black triangles): Gradual decline to ~-10 dB.

#### Bottom-Right Graph (Nπ-15dB ππ)

- **Trend**:

- Masked threshold starts at ~0 dB, declines gradually to ~-5 dB at 100 ms.

- **Data Points**:

- Subj A (circles): Steady decline to ~-5 dB.

- BL_CR (white crosses): Slightly less steep, ending at ~-3 dB.

- BMFD (black triangles): Minimal change, remaining near 0 dB.

### Key Observations

1. **Configuration-Specific Responses**:

- NNS and Nππ-15dB configurations show the most pronounced threshold changes, suggesting sensitivity to "N" and "S" parameters.

- N0ππ and Nπ-15dB ππ configurations exhibit milder responses, indicating reduced sensitivity.

2. **Baseline Effects**:

- BL_R (black crosses) consistently shows sharper threshold drops compared to BL_CR (white crosses), implying different baseline conditions.

- BMFD (black triangles) remains relatively stable across configurations, possibly serving as a control.

3. **Delay Time Sensitivity**:

- Sharp transitions around -100 ms (NNS) and -150 ms (Nππ-15dB) suggest critical delay thresholds for the observed effects.

### Interpretation

The data demonstrates that experimental configurations (N/S parameters) and baseline conditions (BL_R/BL_CR) significantly influence masked threshold dynamics. The sharp declines in NNS and Nππ-15dB configurations may reflect specific neural or sensory processing events triggered by delay time. BMFD’s stability across configurations suggests it represents a baseline or reference condition. The differing responses of BL_R and BL_CR highlight the importance of baseline selection in interpreting results. These findings could inform the design of experiments targeting threshold modulation in sensory or cognitive tasks.

</details>

The upper and lower panels of Figure 6 show measured d' values from the time-intensity-trading experiment for subjects S1 and S4 from Hafter and Carrier [71], respectively (see their Figure 1). For clarity, only these two subjects, who showed the largest difference in performance, are shown, each in a separate panel. Likewise, the model predictions for the BI channels and for all five channels are split across the two panels for better visibility. Both subjects show that with increasing ITD (0, 10, 20, 30, and 40 µs), a larger opposing IID was required for 'trading', i.e., for yielding the lowest sensitivity index d' for discrimination of the trading stimulus from the diotic reference signal. The model based on only the BI channels (upper panel of Figure 6) mimics only the general pattern, with large differences in the sensitivity and in the IID required for trading as a function of ITD. Moreover, the model with all five BMFD output channels (lower panel of Figure 6) shows even larger deviations from the data and fails to predict a clear dependency of IID on ITD. Overall, the model is closer to the performance of subject S4 than to that of S1.

Figure 6: Empirical data (grey lines, closed symbols) and model predictions (black lines, open symbols) for the time-intensity trading experiment of Hafter and Carrier [71] with different ITDs of 0, 10, 20, 30, and 40 µs. The ordinate represents d', while the abscissa represents the IID in dB. Since BIL,R and BIL,C,R predict nearly identical d', only BIL,R predictions are shown in the upper panel for improved clarity. The lower panel represents predictions from BMFD. The dashed horizontal lines indicate the decision criterion of the models, i.e., differences between test and reference signals resulting in d' values below the criterion are not assumed to be detectable.

<details>

<summary>Image 6 Details</summary>

### Visual Description

## Line Graph: Detection Performance vs. Interaural Intensity Difference (IID)

### Overview

The image contains two subplots comparing detection performance (d/d') across varying interaural intensity differences (IID in dB) for different interaural time differences (ITD). The top subplot focuses on "BI_L,R (BI_L,C,R)" and the bottom on "BMFD". Data points are marked with symbols corresponding to ITD values (0µs, 10µs, 20µs, 30µs, 40µs), and a dashed line indicates the "detect limit" at d/d' = 1.

---

### Components/Axes

- **X-axis (Horizontal)**:

- Label: "IID in dB"

- Range: -4 to 0 (in increments of 1 dB)

- Position: Bottom of both subplots

- **Y-axis (Vertical)**:

- Label: "d/d'"

- Range: 0 to 2.5 (in increments of 0.5)

- Position: Left of both subplots

- **Legend**:

- Located in the top-left corner of the image.

- Symbols:

- **Squares**: ITD = 0µs

- **Circles**: ITD = 10µs

- **Triangles**: ITD = 30µs

- **Diamonds**: ITD = 20µs

- **Dashed lines**: Detect limit (d/d' = 1)

- **Subplots**:

- **Top**: Labeled "BI_L,R (BI_L,C,R)" in the bottom-right corner.

- **Bottom**: Labeled "BMFD" in the bottom-right corner.

---

### Detailed Analysis

#### Top Subplot (BI_L,R / BI_L,C,R):

1. **ITD = 0µs (Squares)**:

- Starts at (IID = -4 dB, d/d' ≈ 0.2) and rises sharply to (IID = 0 dB, d/d' ≈ 1.5).

- Crosses the detect limit (d/d' = 1) at IID ≈ -2 dB.

2. **ITD = 10µs (Circles)**:

- Begins at (IID = -4 dB, d/d' ≈ 0.1) and increases gradually.

- Crosses the detect limit at IID ≈ -1.5 dB.

3. **ITD = 20µs (Diamonds)**:

- Starts at (IID = -4 dB, d/d' ≈ 0.3) and rises steeply.

- Crosses the detect limit at IID ≈ -1.8 dB.

4. **ITD = 30µs (Triangles)**:

- Begins at (IID = -4 dB, d/d' ≈ 0.4) and increases sharply.

- Crosses the detect limit at IID ≈ -1.2 dB.

5. **ITD = 40µs (Diamonds)**:

- Starts at (IID = -4 dB, d/d' ≈ 0.5) and rises steeply.

- Crosses the detect limit at IID ≈ -0.8 dB.

#### Bottom Subplot (BMFD):

1. **ITD = 0µs (Squares)**:

- Starts at (IID = -4 dB, d/d' ≈ 0.1) and rises gradually.

- Crosses the detect limit at IID ≈ -2.5 dB.

2. **ITD = 10µs (Circles)**:

- Begins at (IID = -4 dB, d/d' ≈ 0.2) and increases slowly.

- Crosses the detect limit at IID ≈ -2 dB.

3. **ITD = 20µs (Diamonds)**:

- Starts at (IID = -4 dB, d/d' ≈ 0.3) and rises steeply.

- Crosses the detect limit at IID ≈ -1.5 dB.

4. **ITD = 30µs (Triangles)**:

- Begins at (IID = -4 dB, d/d' ≈ 0.4) and increases sharply.

- Crosses the detect limit at IID ≈ -1 dB.

5. **ITD = 40µs (Diamonds)**:

- Starts at (IID = -4 dB, d/d' ≈ 0.5) and rises steeply.

- Crosses the detect limit at IID ≈ -0.5 dB.

---

### Key Observations

1. **Detect Limit Threshold**:

- All ITD values eventually cross the detect limit (d/d' = 1), but performance improves with higher ITDs.

- For example, ITD = 40µs crosses the threshold at IID = -0.8 dB (top subplot), while ITD = 0µs requires IID = -2 dB.

2. **Performance Trends**:

- Higher ITDs (e.g., 30µs, 40µs) achieve higher d/d' values at the same IID compared to lower ITDs.

- The BMFD subplot shows consistently lower d/d' values than BI_L,R across all ITDs.

3. **Anomalies**:

- In the top subplot, the ITD = 20µs (diamonds) line dips below the detect limit at IID = -3 dB, suggesting reduced performance at extreme IID values.

---

### Interpretation

- **Detection Capability**:

- The graph demonstrates that detection performance (d/d') improves with larger ITDs, particularly at lower IID values. This suggests that temporal cues (ITD) enhance localization accuracy in noisy environments.

- **Model Comparison**:

- The BI_L,R (BI_L,C,R) model outperforms BMFD across all ITDs, indicating that the former may better capture interaural cues for sound localization.

- **Practical Implications**:

- Systems relying on ITD cues (e.g., hearing aids, binaural audio processing) could prioritize higher ITD values to improve detection thresholds in challenging acoustic conditions.

- **Uncertainty**:

- Exact d/d' values are approximate due to the lack of gridlines. For example, the ITD = 40µs line in the top subplot crosses the detect limit at IID ≈ -0.8 dB, but the precise value may vary slightly.

</details>

The lower part of Table 1 summarizes RMSE and R² between experimental data and predictions for the three model versions. It is observed that for most binaural experiments the three model versions BMFD, BIL,C,R, and BIL,R achieve comparable prediction performance. Only in experiment 3 (Frequency and interaural phase relationships in wideband conditions) did BIL,R achieve substantially better performance than the other two versions. Therefore, it can be stated that BIL and BIR are sufficient to explain most of the data of the binaural psychoacoustic experiments used in this study.

Overall, Table 1 shows that the GPSM with the binaural BMFD extension accounts for several monaural and binaural psychoacoustic experiments.

Table 1 about here

## IV. Speech intelligibility evaluation

The binaural model extension was also tested for the headphone-based binaural (dichotic) speech intelligibility experiments of Ewert et al. [2], where SRTs were measured for frontal target speech [German Oldenburger Satztest (OLSA), [72]] in the presence of two co-located or spatially separated maskers with different spectro-temporal characteristics, but identical long-term spectrum.

Four maskers based on stationary speech-shaped noise (SSN), namely SSN, SAM, BB, and AFS, with different spectro-temporal stimulus properties, and two speech maskers were used in [2]. The SAM masker was obtained by applying an 8-Hz sinusoidal amplitude modulation with 100% modulation depth to the SSN masker, yielding regular temporal modulations coherent across all auditory channels (co-modulation). For the BB masker, the SSN was multiplied with the Hilbert envelope of a broadband speech signal (ten randomly selected OLSA sentences), introducing temporal gaps that reflect the modulations of intact speech. Temporal irregularities of the speech envelope are coherent across all auditory channels. For the across-frequency shifted (AFS) masker, the speech envelope was randomly shifted in eight groups (each consisting of four adjacent auditory frequency channels), resulting in incoherent AMs across auditory channels. As speech maskers, a male version of the International Speech Test Signal (ISTS; [73]), which is composed of intact continuous speech uttered by six different female talkers in different languages, was used as 'nonsense' speech. A single talker (ST) masker used randomly cut parts of ten concatenated OLSA sentences spoken by a different male speaker than in the target OLSA material.
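As a rough illustration of the SAM masker construction, the sketch below applies an 8-Hz sinusoidal amplitude modulation with 100% modulation depth to a noise carrier. White Gaussian noise stands in for the SSN, and the sampling rate, modulation starting phase, and absence of level normalization are assumptions made for this example; the function name is hypothetical and the original stimulus generation in [2] may differ in these details.

```python
import math
import random


def sam_modulate(carrier, fs_hz, fmod_hz=8.0, depth=1.0):
    """Apply sinusoidal amplitude modulation to a list of carrier samples.

    depth=1.0 corresponds to 100% modulation depth, so the envelope
    1 + depth * sin(2*pi*fmod*t) ranges from 0 to 2.
    """
    return [
        x * (1.0 + depth * math.sin(2.0 * math.pi * fmod_hz * n / fs_hz))
        for n, x in enumerate(carrier)
    ]


random.seed(0)
fs_hz = 16000  # assumed sampling rate
# One second of white Gaussian noise as a stand-in for the SSN carrier.
noise = [random.gauss(0.0, 1.0) for _ in range(fs_hz)]
sam_masker = sam_modulate(noise, fs_hz)
```

Because the same envelope multiplies the broadband carrier, the modulation is identical in every auditory channel, which is the co-modulation property described above.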

Two spatial target-masker configurations were measured for each masker. In the co-located configuration, target and masker sources were placed in front of the receiver (0°). In the spatially separated configuration, the maskers were moved to both sides at ±60° relative to the frontal direction. Speech intelligibility improvements depending on the spatial separation between target and masker are expressed as SRM. A single masker had a level of 65 dB SPL, and accordingly the presentation of two statistically independent masker sequences resulted in an overall masker level of 68 dB SPL. A detailed description of the experiment can be found in [2].
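The overall masker level follows from adding the powers of the two statistically independent maskers. A short worked computation, with the hypothetical helper name `combined_level_db`, illustrates the step:

```python
import math


def combined_level_db(levels_db):
    """Overall level of statistically independent sources (powers add)."""
    total_power = sum(10.0 ** (level / 10.0) for level in levels_db)
    return 10.0 * math.log10(total_power)


# Two independent maskers at 65 dB SPL each.
print(round(combined_level_db([65.0, 65.0]), 2))  # 68.01
```

Doubling the power adds 10·log10(2) ≈ 3 dB, which gives the 68 dB SPL stated in the text.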

## A. Results and discussion

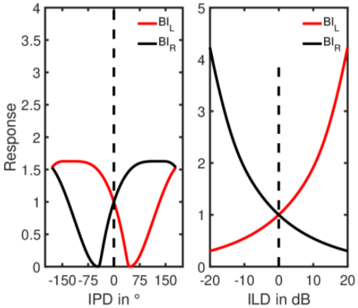

In Figure 7, measured and predicted SRTs are represented by gray and black symbols, respectively. Co-located maskers are indicated by closed symbols and separated maskers by open symbols. Predicted SRTs shown in Figure 7 are averaged over 5 repeated simulations, each based on 20 OLSA sentences. Each model version was calibrated to the speech material as proposed in [25] by setting the parameters k, q, m, and 𝜎𝑠 to match the SSN data; the resulting parameter values are shown in Table 2.

Table 2 about here

Figure 7: The upper panel shows SRT50 results, while the lower panel shows the respective SRM. Data are represented by squares, while predictions of BMFD, BIC, and BIC^AC are given by circles, triangles, and diamonds, respectively. The spatially co-located (front) and separated masker conditions are indicated by closed and open symbols, respectively.

<details>

<summary>Image 7 Details</summary>

Two-panel plot with the six masker types (SSN, SAM, BB, AFS, ISTS, ST) on the x-axis. Top panel: SRT50 in dB. Bottom panel: SRM in dB. Legend entries: data (gray squares), BMFD (black circles), BI_C (black triangles), and BI_AC (black diamonds), each for co-located and separated maskers. Error bars are shown.

</details>

For noise maskers (SSN, SAM, AFS, and BB) presented co-located with the target speech, the highest SRT50s were measured for the stationary SSN and the fluctuating AFS maskers; listeners benefited from listening in the dips only when speech was presented in the fluctuating SAM and BB maskers. The highest SRT50 overall was measured when speech was masked by the single talker (ST), resulting in thresholds about 5.5 dB higher than for the SSN masker. Spatial separation of target speech and maskers resulted in SRM values ranging between 4.3 and 13.5 dB. The smallest SRM of about 4.3 dB was observed for the SSN masker, while the largest SRM values of 10.1 and 13.5 dB were observed for the ISTS and ST maskers, respectively.
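For reference, SRM is computed here in the conventional way, as the SRT in the co-located configuration minus the SRT in the spatially separated configuration. The sketch below uses a hypothetical function name and hypothetical SRT50 values chosen only for illustration; they are not read from Figure 7 or taken from [2].

```python
def spatial_release_from_masking(srt_colocated_db, srt_separated_db):
    """SRM in dB; positive values mean lower (better) SRTs after separation."""
    return srt_colocated_db - srt_separated_db


# Hypothetical SRT50 values in dB SNR: (co-located, separated).
example_srts = {"masker A": (-8.0, -12.5), "masker B": (-15.0, -21.0)}
for name, (colocated, separated) in example_srts.items():
    print(name, spatial_release_from_masking(colocated, separated))
```

Because SRM is a difference of two thresholds, a prediction error in the co-located SRT carries over directly into the predicted SRM.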

All model versions were calibrated to account for the co-located SSN masker, and the same parameters were used for all other thresholds. Co-located predictions based on the BMFD (closed circles in the upper panel of Figure 7) largely agree with the data for the fluctuating noise maskers BB and AFS, while the predicted SRT50 for the SAM masker is about 3 dB higher than the measured SRT50. For BMFD, the largest differences between predicted and measured SRTs of up to 13 dB can be observed for the co-located ISTS and ST maskers. The ST masker in particular is very similar to the target sentences, which makes it difficult for the listener to separate the target from the interfering speech (informational masking, e.g. [74]) and results in high SRTs and high variability across listeners. In contrast to human listeners, the current model, like other intrusive SI models, has a-priori knowledge about the target speech and the masker signals and is only limited by aspects of amplitude modulation and energetic masking (and not informational masking), leading to substantially lower thresholds for the speech-like maskers. For the spatially separated conditions (open circles in the upper panel of Figure 7), BMFD predictions fit well for SSN and AFS, while the model overestimates the thresholds for SAM and BB and again underestimates the thresholds for the speech-like maskers ISTS and ST, as can be expected (see above). Regarding the SRM (lower panel of Figure 7), BMFD predictions show good agreement with the data for SSN, SAM (about 2 dB reduced SRM), and AFS. For BB, the predicted SRM is about 3 dB lower, and for ISTS and ST up to 5 dB lower, than the measured SRM. For ISTS and ST, these differences are partly caused by larger discrepancies between predicted and measured SRTs in co-located conditions.

In a further step, each of the five BMFD outputs was analyzed to identify the channel that contributes most. The center binaural interaction channel BIC, which has the highest sensitivity to the hemispheric midline, contributed most to the SI predictions, as shown by the very similar predictions of BMFD and BIC in Figure 7. This agrees well with the findings of Ewert et al. [2], where a simple binaural summation of the left and right ear signals (prior to the model) showed similar results for predictions using the binaural speech intelligibility model (BSIM; [12]). For this summed diotic input, BSIM effectively reduces to processing similar to that suggested in the monaural ESII [11] model, using a short-time assessment of power-based SNRs. In contrast, the current BIC predictions are based on both short-time envelope power and power SNRs. It should be noted that although the predictions of both the power pathway of BMFD and BSIM are based on power SNRs, substantial differences exist, such as the SNR combination across time frames and auditory channels, which could influence the predicted SRTs.

Analyzing the contribution of envelope power and power SNRs revealed that AM cues are mostly dominant. Predictions based only on envelope power SNRs provided by the center binaural interaction channel are denoted as BIC^AC and shown as diamonds in Figure 7. With the exception of the BB masker condition, BIC^AC-based predictions already explain most of the SRM observed in the data.

Although BIC does not play an important role for the binaural psychoacoustic experiments in this study, it can successfully account for a large part of the SRM in the speech intelligibility experiments.

## VI. General discussion

The suggested model explores the ability of a strongly simplified, fixed (non-adaptive) binaural interaction stage to account for key aspects of binaural psychoacoustics and speech intelligibility with spatially separated interferers. The investigated 5-channel BMFD stage was incorporated in an existing monaural model using power and envelope power SNR cues. It was demonstrated that the suggested model maintains the ability of the former monaural approach to account for key monaural psychoacoustic phenomena. Binaural psychoacoustics was well covered, except for larger discrepancies for time-intensity trading. For speech intelligibility, the key aspects were also predicted, with larger discrepancies for speech-like interferers. Here, aspects of informational masking, which are generally not covered by signal-processing models, play a role, as has been previously shown for other speech intelligibility models.

It is conceivable that the current simplified approach might not reach the performance of other 'specialist', dedicated monaural and binaural models for psychoacoustics and speech intelligibility for each of the experiments considered here. The value of the current approach is threefold: i) Based on former work [9, 16, 19, 20, 26], the suggested model can be assumed to generalize well to other, unknown data. This also makes the model interesting in the context of instrumental (spatial) audio quality predictions. ii) The simple processing in the BMFD stage is generally advantageous for real-time applications, e.g., for the control of signal processing algorithms in hearing-supportive devices or as a hearing aid processing stage itself. iii) The current approach demonstrates that the physiologically motivated hemispheric interaural interaction in mammals (e.g., [42, 43]), as realized here in the two binaural interaction channels BIL and BIR, is suited to explain a broad variety of perception experiments.

## A. Contribution of binaural interaction and better ear channels

For the binaural psychoacoustic experiments used in this study, the two channels BIL and BIR appear sufficient to account for the data. BIC has only a negligible effect on the predicted data, as also indicated by the very similar RMSE and R² values shown in Table 1 for the model versions including BIC (BIL,C,R) and excluding BIC (BIL,R), except for the binaural experiment 3 on interaural phase effects in wideband conditions. Here, predicted thresholds based on BIC are significantly better than human performance in NπSm and NπS0 conditions (see middle panels in Figure 6), and accordingly the predicted difference patterns for NπSm-N0Sm and NπS0-N0Sπ show a large deviation of up to 10 dB at 250 Hz from the measured data. In general, both better ear channels BEL and BER did not make any substantial contribution in the binaural psychoacoustic experiments.

For speech intelligibility, the importance of the five BMFD channels is different and BIC has been shown to account for a large part of the data (see Figure 7). In the current SI conditions, a frontal target was presented in either co-located or spatially separated maskers. In view of the psychoacoustic conditions, the co-located condition can be regarded as N0S0, while the separated condition can be considered as S0 plus noise with frequency-dependent interaural phase difference. In the separated conditions, the BIC channel amplifies the coherent frontal target speaker (S0), while spatially separated maskers with IPDs ≠ 0 are incoherently added or might be partially cancelled.
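The benefit of adding the two ear signals in the separated condition can be illustrated with a deliberately simplified sketch. It is not the BMFD stage itself: it assumes a plain sum of the left and right ear signals, a target that is identical at both ears (S0), and, as a limiting case, masker signals that are completely uncorrelated between the ears. Real maskers at ±60° are only partially decorrelated across the ears, so the actual gain will differ. All names in the block are hypothetical.

```python
import math
import random


def power_db(signal):
    """Mean power of a signal in dB (arbitrary reference)."""
    return 10.0 * math.log10(sum(x * x for x in signal) / len(signal))


def summation_snr_gain_db(n_samples=50000, seed=0):
    """SNR gain of the summed (left + right) signal over a single ear."""
    rng = random.Random(seed)
    target = [rng.gauss(0.0, 1.0) for _ in range(n_samples)]    # same at both ears
    masker_l = [rng.gauss(0.0, 1.0) for _ in range(n_samples)]  # left ear
    masker_r = [rng.gauss(0.0, 1.0) for _ in range(n_samples)]  # right ear, independent

    snr_single_ear = power_db(target) - power_db(masker_l)

    target_sum = [2.0 * t for t in target]                      # coherent: amplitudes add
    masker_sum = [l + r for l, r in zip(masker_l, masker_r)]    # incoherent: powers add
    snr_summed = power_db(target_sum) - power_db(masker_sum)

    return snr_summed - snr_single_ear


print(round(summation_snr_gain_db(), 1))
```

Under these assumptions, the coherent target gains about 6 dB and the incoherent masker about 3 dB, so the SNR after summation improves by roughly 3 dB. In the co-located (N0S0-like) condition both target and masker are coherent, and the same summation yields no SNR change.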