## InfoGram and Admissible Machine Learning

## Deep Mukhopadhyay

deep@unitedstatalgo.com

## Abstract

We have entered a new era of machine learning (ML), where the most accurate algorithm with superior predictive power may not even be deployable, unless it is admissible under the regulatory constraints. This has led to great interest in developing fair, transparent and trustworthy ML methods. The purpose of this article is to introduce a new information-theoretic learning framework (admissible machine learning) and algorithmic risk-management tools (InfoGram, L-features, ALFA-testing) that can guide an analyst to redesign off-the-shelf ML methods to be regulatory compliant, while maintaining good prediction accuracy. We have illustrated our approach using several real-data examples from financial sectors, biomedical research, marketing campaigns, and the criminal justice system.

Keywords: Admissible machine learning; InfoGram; L-Features; Information theory; ALFA-testing; Algorithmic risk management; Fairness; Interpretability; COREml; FINEml.

- 1 Introduction
- 2 Information-Theoretic Principles and Methods
  - 2.1 Notation
  - 2.2 Conditional Mutual Information
  - 2.3 Net-Predictive Information
  - 2.4 Nonparametric Estimation Algorithm
  - 2.5 Model-based Bootstrap
  - 2.6 A Few Examples
- 3 Elements of Admissible Machine Learning
  - 3.1 COREml: Algorithmic Interpretability
    - 3.1.1 From Predictive Features to Core Features
    - 3.1.2 InfoGram and L-Features
    - 3.1.3 COREtree: High-dimensional Microarray Data Analysis
    - 3.1.4 COREglm: Breast Cancer Wisconsin Data
  - 3.2 FINEml: Algorithmic Fairness
    - 3.2.1 FINE-ML: Approaches and Limitations
    - 3.2.2 InfoGram and Admissible Feature Selection
    - 3.2.3 FINEtree and ALFA-Test: Financial Industry Applications
    - 3.2.4 Admissible Criminal Justice Risk Assessment
    - 3.2.5 FINEglm and Application to Marketing Campaign
- 4 Conclusion
- 5 Appendix
  - A.1 Proof of Theorem 1
  - Revisiting COMPAS Data
  - Two Cultures of Machine Learning
  - COREtree: Iris Data
  - The Algorithmic Accountability Act
  - Fair Housing Act's Disparate Impact Standard
  - Beware of The 'Spurious Bias' Problem
  - EU's Artificial Intelligence Act

## Category: Fairness, Explainability, and Algorithm Bias

Machine learning (ML) methods are rapidly becoming an essential part of automated decision-making systems that directly affect human lives. While substantial progress has been made toward developing more powerful computational algorithms, the widespread adoption of these technologies still faces several barriers, the biggest being adherence to regulatory requirements without compromising too much accuracy. Naturally, the question arises: how can one systematically build such regulatory-compliant, fair, and trustworthy algorithms? This paper offers new statistical principles and information-theoretic graphical exploratory tools that engineers can use to 'detect, mitigate, and remediate' off-the-shelf ML algorithms, thereby making them admissible under appropriate laws and regulatory scrutiny.

## 1 Introduction

First-generation 'prediction-only' machine learning technology has served the tech and eCommerce industry well. However, ML is now rapidly expanding beyond its traditional domains into highly regulated or safety-critical areas (such as healthcare, criminal justice systems, transportation, financial markets, and national security), where achieving high predictive accuracy is often as important as ensuring the regulatory compliance and transparency needed for trustworthiness. We thus focus on developing admissible machine learning technology that can balance fairness, interpretability, and accuracy in the best manner possible. How can one systematically build such algorithms in a fast and scalable manner? This article introduces some new statistical learning theory and information-theoretic graphical exploratory tools to address this question.

Going Beyond 'Pure' Prediction Algorithms. Predictive accuracy is not the be-all and end-all for judging the 'quality' of a machine learning model. Here is a striking example: researchers at the Icahn School of Medicine at Mount Sinai in New York City found (Zech et al., 2018, Reardon, 2019) that a deep-learning algorithm, which showed more than 90% accuracy on the x-rays produced at Mount Sinai, performed poorly when tested on data from other institutions. Later it was found that 'the algorithm was also factoring in the odds of a positive finding based on how common pneumonia was at each institution-not something they expected or wanted.' This sort of unreliable and inconsistent performance can be clearly dangerous. As a result of these safety concerns, despite lots of hype and hysteria around AI in imaging, only about 30% of radiologists are currently using machine learning (ML) in their everyday clinical practice (Allen et al., 2021). To apply machine learning appropriately and safely, especially when human life is at stake, we have to think beyond predictive accuracy. The deployed algorithm needs to be comprehensible (by end users like doctors, judges, regulators, researchers, etc.) in order to make sure it has learned relevant and admissible features from the data, features that are meaningful in light of investigators' domain knowledge. The fact of the matter is, an algorithm that is solely focused on what is learned, without reasoning about how it learned what it has learned, is not intelligent enough. We next expand on this issue using two real data applications.

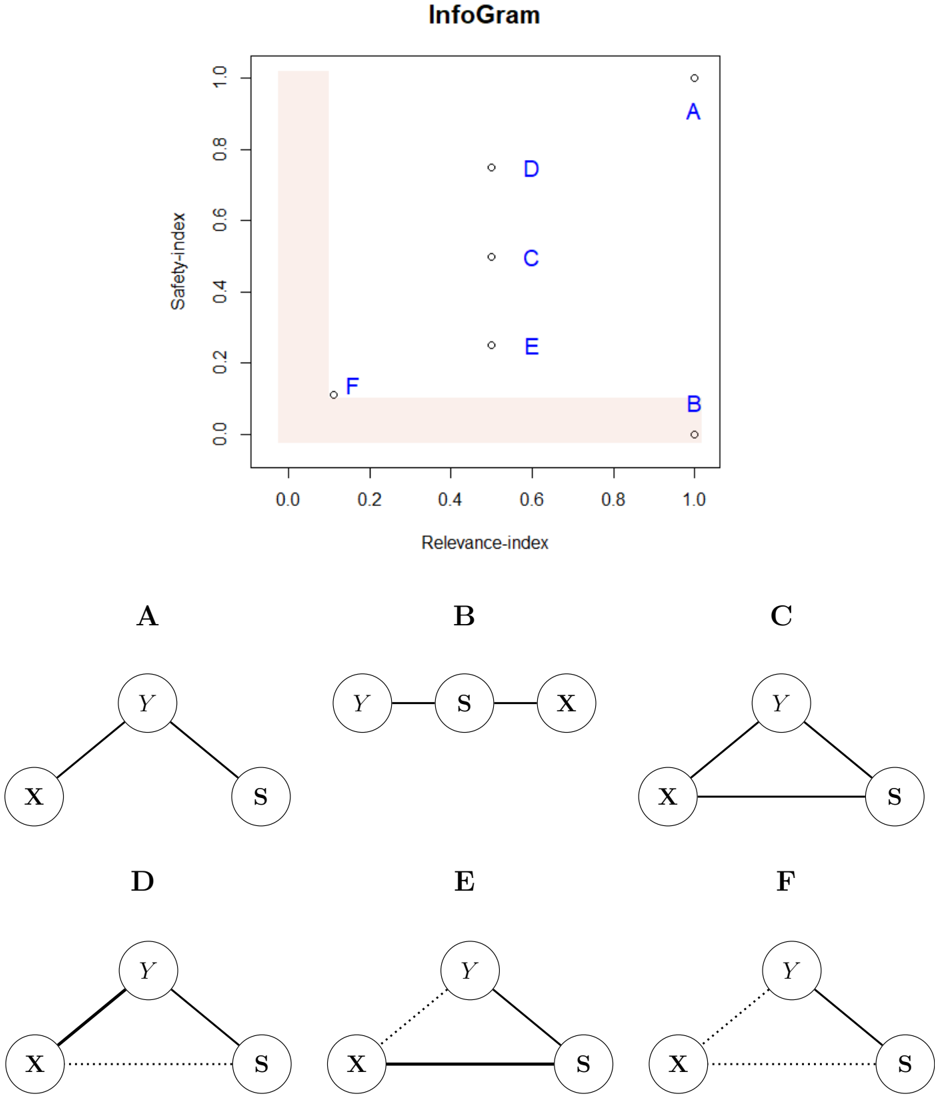

Admissible ML for Industry. Consider the UCI Credit Card data (discussed in more detail in Sec. 3.2.3), collected in October 2005 from an important Taiwan-based bank. We have records of $n = 30{,}000$ cardholders. The data consist of a response variable $Y$ denoting default payment status (Yes = 1, No = 0), along with $p = 23$ predictor variables (e.g., gender, education, age, history of past payment, etc.). The goal is to accurately predict the probability of default given the profile of a particular customer.

On the surface, this seems to be a straightforward classification problem for which we have a large inventory of powerful algorithms. Yeh and Lien (2009) performed an exhaustive comparison between six machine learning methods (logistic regression, K-nearest neighbor, neural net, etc.) and finally selected the neural network model, which attained 83% accuracy on an 80-20 train-test split of the data. However, traditionally built ML models are not deployable unless they are admissible under the financial regulatory constraints$^{1}$ (Wall, 2018), which demand that (i) the method should not discriminate against people on the basis of protected features$^{2}$, here gender and age; and (ii) the method should be simple to interpret and transparent (compared to big neural nets or ensemble models like random forest and gradient boosting).

To improve fairness, one may remove the sensitive variables and go back to business as usual by fitting the model on the rest of the features, an approach known as 'fairness through unawareness.' This does not work, because there will be some proxy attributes (e.g., zip code or profession) that share some degree of correlation (information-sharing) with race,

1 The Equal Credit Opportunity Act (ECOA) is a major federal financial regulation law enacted in 1974.

2 https://en.wikipedia.org/wiki/Protected_group

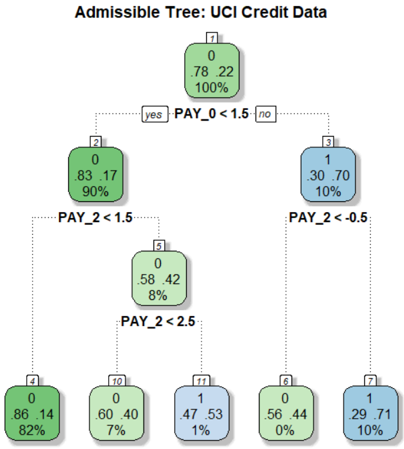

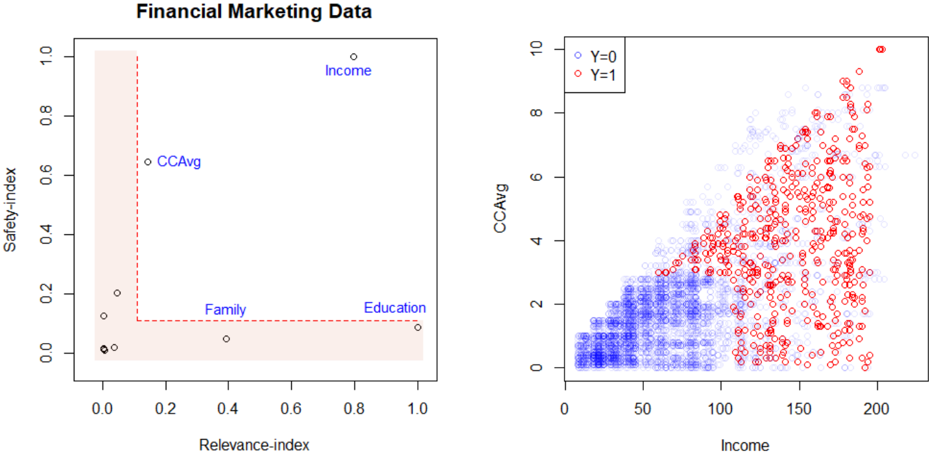

Figure 1: A shallow admissible tree classifier for the UCI credit card data with four decision nodes, which is as accurate as the most complex state-of-the-art ML model.

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Decision Tree: UCI Credit Data Classification

### Overview

The image depicts a decision tree for classifying UCI Credit Data, with nodes representing splits based on payment history variables (PAY_0, PAY_2) and their outcomes (class 0 or 1). The tree uses color coding (green for class 0, blue for class 1) and includes numerical counts and percentages for each node.

### Components/Axes

- **Nodes**: Labeled with counts (e.g., "0", "1") and percentages (e.g., "82%", "10%").

- **Branches**: Split conditions (e.g., "PAY_0 < 1.5", "PAY_2 < 1.5").

- **Colors**:

- Green: Class 0 (non-default)

- Blue: Class 1 (default)

- **Root Node**: Labeled "7" with values "0.78", "22", and "100%".

### Detailed Analysis

1. **Root Node (7)**:

- Values: "0.78", "22", "100%" (likely representing Gini impurity, node size, and total data proportion).

- Splits into:

- **Left Branch (yes)**: PAY_0 < 1.5 → Node 2 (green, 90% class 0).

- **Right Branch (no)**: PAY_0 ≥ 1.5 → Node 3 (blue, 10% class 1).

2. **Node 2 (PAY_0 < 1.5)**:

- Splits into:

- **Left Branch (PAY_2 < 1.5)**: Node 5 (green, 8% class 0).

- **Right Branch (PAY_2 ≥ 1.5)**: Node 4 (green, 82% class 0).

3. **Node 5 (PAY_2 < 1.5)**:

- Splits into:

- **Left Branch (PAY_2 < 2.5)**: Node 10 (green, 7% class 0).

- **Right Branch (PAY_2 ≥ 2.5)**: Node 17 (blue, 1% class 0).

4. **Node 3 (PAY_0 ≥ 1.5)**:

- Splits into:

- **Left Branch (PAY_2 < -0.5)**: Node 8 (green, 0% class 0).

- **Right Branch (PAY_2 ≥ -0.5)**: Node 7 (blue, 10% class 1).

5. **Leaf Nodes**:

- **Node 4**: 86.14 instances, 82% class 0.

- **Node 8**: 56.44 instances, 0% class 0 (100% class 1).

- **Node 10**: 60.40 instances, 7% class 0.

- **Node 17**: 47.53 instances, 1% class 0.

### Key Observations

- **Class Distribution**:

- Class 0 dominates in most nodes (e.g., 82% in Node 4, 7% in Node 10).

- Class 1 is rare except in Node 8 (100% class 1) and Node 3 (10% class 1).

- **Splits**:

- PAY_0 < 1.5 leads to higher class 0 prevalence.

- PAY_2 < -0.5 results in 100% class 1 (high-risk).

- **Anomalies**:

- Node 7 appears twice (root and rightmost leaf), potentially indicating a data labeling error.

### Interpretation

The tree models credit risk by splitting on payment history variables. Nodes with higher class 1 percentages (blue) indicate higher default risk. For example:

- **PAY_2 < -0.5** (Node 8) is a strong predictor of default (100% class 1).

- **PAY_0 < 1.5** (Node 2) and **PAY_2 < 1.5** (Node 5) correlate with lower default risk (82% and 7% class 0, respectively).

- The repeated "7" label suggests a potential inconsistency in the tree structure, which may require validation.

This tree highlights how payment history variables (PAY_0, PAY_2) influence credit risk classification, with specific thresholds (e.g., PAY_2 < -0.5) serving as critical decision points.

</details>

gender, or age. These proxy variables can then lead to the same unfair results. It is not clear how to define and detect those proxy variables to mitigate hidden biases in the data. In fact, in a recent review of algorithmic fairness, Chouldechova and Roth (2020) forthrightly stated

' But despite the volume and velocity of published work, our understanding of the fundamental questions related to fairness and machine learning remain in its infancy. '

Currently, there exists no systematic method to directly construct an admissible algorithm that can mitigate bias. To quote a practitioner from a reputed AI company: 'I ran 40,000 different random forest models with different features and hyper-parameters to search a fair model.' This ad hoc and inefficient strategy could be a significant barrier to efficient large-scale implementation of admissible AI technologies. Fig. 1 shows a fair and shallow tree classifier with four decision nodes, which attains 82.65% accuracy; this was built in a completely automated manner without any hand-crafted manual tuning. Section 2 introduces the required theory and methods behind our procedure. For now, note that the simple and transparent anatomy of the final model makes it easy to convey its key drivers: variables PAY_0 and PAY_2$^{3}$ are the most important indicators of default. These variables have two key characteristics: they are highly predictive and at the same time safe to use, in the sense that they share very little predictive information with the sensitive attributes age and gender; for that reason, we call them admissible features. The model also conveys how the key variables impact credit risk: the simple decision tree shown in Fig. 1 is fairly self-explanatory, and its clarity facilitates an easy explanation of the predictions.

3 PAY_0 and PAY_2 denote the repayment status of the last two months (-1 = pay duly, 1 = payment delay for one month, 2 = payment delay for two months, and so on).

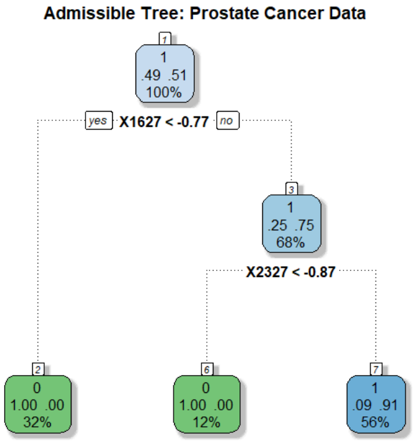

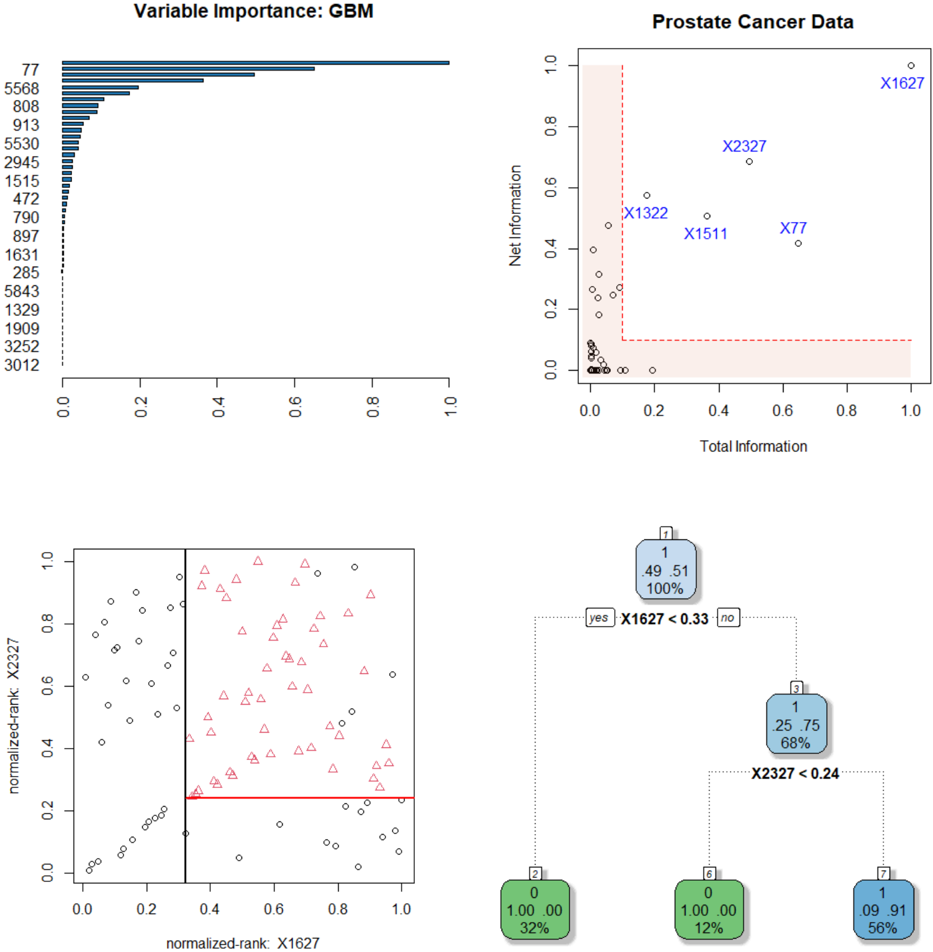

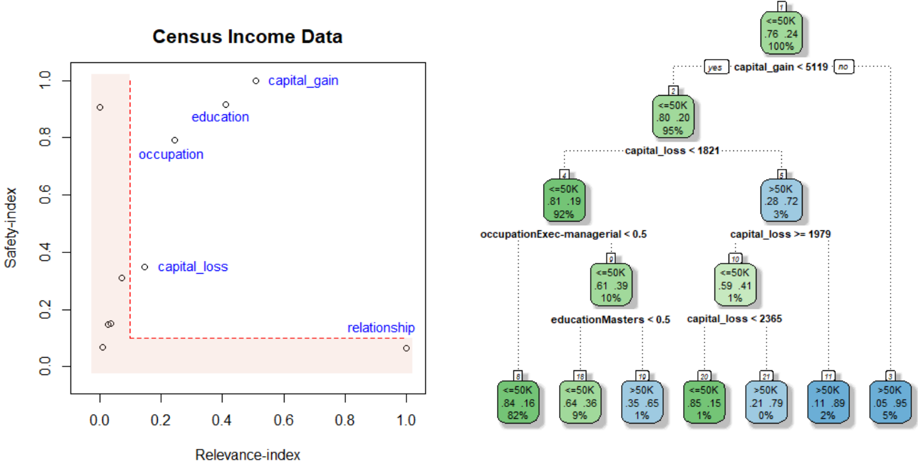

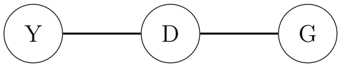

Admissible ML for Science. Legal requirements are not the only reason why we want to build admissible ML. In scientific investigations, it is important to know whether the deployed algorithm helps researchers better understand the phenomena by refining their 'mental model.' Consider, for example, the prostate cancer data, where we have $p = 6033$ gene expression measurements from 52 tumor and 50 normal specimens. Fig. 2 shows a 95% accurate classification model for the prostate data with only two 'core' driver genes! This compact model is admissible in the sense that it confers the following benefits: (i) It identifies a two-gene signature (composed of gene-1627 and gene-2327) as the top factor associated with prostate cancer. The two genes are jointly overexpressed in the tumor samples but, interestingly, they have very little marginal information (they are not individually differentially expressed, as shown in Fig. 6). Accordingly, traditional linear-model-based analysis will fail to detect this gene pair as a key biomarker. (ii) The simple decision tree model in Fig. 2 provides a mechanistic understanding and justification as to why the algorithm thinks a patient has prostate cancer or not. (iii) Finally, it provides the needed guidance on what to do next by giving control over the system. In particular, a cancer biologist can choose between different diagnosis and treatment plans with the goal of regulating those two oncogenes.

Goals and Organization. The primary goal of this paper is to introduce some new fundamental concepts and tools that lay the foundation for admissible machine learning methods that are efficient (enjoy good predictive accuracy), fair (prevent discrimination against minority groups), and interpretable (provide mechanistic understanding) to the best possible extent.

Our statistical learning framework is grounded in the foundational concepts of information theory. The required statistical formalism (nonparametric estimation and inference methods) and information-theoretic principles (entropy, conditional entropy, relative entropy, and conditional mutual information) are introduced in Section 2. A new nonparametric estimation technique for conditional mutual information (CMI) is proposed that scales to large

Figure 2: A two-gene admissible tree classifier for prostate cancer data with $p = 6033$ gene expression measurements on 50 control and 52 cancer patients.

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Decision Tree: Prostate Cancer Data Classification

### Overview

The image depicts a binary decision tree for classifying prostate cancer data. The tree uses feature thresholds (X1627 and X2327) to split data into nodes representing classifications (green for benign, blue for malignant). Percentages indicate class distributions, while numerical values likely represent feature averages or risk scores.

### Components/Axes

- **Root Node (1)**:

- Value: `1.49 .51`

- Percentage: `100%`

- Split Condition: `X1627 < -0.77` (yes/no branches)

- **Left Branch (X1627 < -0.77)**:

- Node 2:

- Value: `0.00 .00`

- Percentage: `32%` (green, benign)

- **Right Branch (X1627 ≥ -0.77)**:

- Node 3:

- Value: `1.25 .75`

- Percentage: `68%` (blue, malignant)

- Split Condition: `X2327 < -0.87` (yes/no branches)

- Node 6 (X2327 < -0.87):

- Value: `0.00 .00`

- Percentage: `12%` (green, benign)

- Node 7 (X2327 ≥ -0.87):

- Value: `0.91 .91`

- Percentage: `56%` (blue, malignant)

### Detailed Analysis

- **Root Node**: Represents the entire dataset (100%). The split on `X1627 < -0.77` divides data into 32% (left) and 68% (right).

- **Left Subtree (Node 2)**: All 32% of data in this branch are classified as benign (green) with a feature average of `0.00`.

- **Right Subtree (Node 3)**: 68% of data split further by `X2327 < -0.87`:

- **Node 6**: 12% of total data (12% of 68%) classified as benign (green) with `0.00` average.

- **Node 7**: 56% of total data (56% of 68%) classified as malignant (blue) with `0.91` average.

### Key Observations

1. **Feature Thresholds**:

- `X1627 < -0.77` separates 32% benign cases from 68% malignant.

- `X2327 < -0.87` further refines malignant cases into 12% benign and 56% malignant.

2. **Class Distributions**:

- Benign cases (green) occupy 44% of total data (32% + 12%).

- Malignant cases (blue) dominate at 56% of total data.

3. **Numerical Values**:

- Malignant nodes (blue) have higher averages (`0.91` in Node 7 vs. `0.25` in Node 3), suggesting these values correlate with disease severity.

### Interpretation

The tree demonstrates a hierarchical classification strategy:

- **Primary Split**: `X1627` acts as the strongest discriminator, separating 32% benign from 68% malignant cases.

- **Secondary Refinement**: For malignant cases (`X1627 ≥ -0.77`), `X2327` further distinguishes 12% benign from 56% malignant.

- **Outcome Correlation**: Higher numerical values (e.g., `0.91` in Node 7) align with malignant classifications, implying these features may represent risk scores or biomarker levels.

### Spatial Grounding

- **Root Node**: Top-center position, largest text size.

- **Branches**: Dotted lines connect nodes; left branches labeled "yes," right "no."

- **Color Coding**: Green (benign) and blue (malignant) nodes match class distributions.

### Trend Verification

- **Root to Node 2**: Sharp drop in value (`1.49 → 0.00`) with 32% benign classification.

- **Root to Node 3**: Moderate value (`1.49 → 1.25`) with 68% malignant classification.

- **Node 3 to Node 7**: Further value increase (`1.25 → 0.91`) with 56% malignant, confirming feature thresholds refine malignancy detection.

### Conclusion

This decision tree prioritizes `X1627` and `X2327` as key biomarkers for prostate cancer classification. The model achieves 56% malignant classification accuracy in the final node, with feature thresholds acting as interpretable decision rules. The numerical values likely represent average risk scores, with higher values correlating with malignancy.

</details>

datasets by leveraging the power of machine learning. For statistical inference, we have devised a new model-based bootstrap strategy. The method is applied to the problem of conditional independence testing and to integrative genomics (breast cancer multi-omics data from the Cancer Genome Atlas). Based on this theoretical foundation, Section 3 lays out the basic elements of admissible machine learning. Section 3.1 focuses on algorithmic interpretability: how can we efficiently search for and design self-explanatory algorithmic models by balancing accuracy and robustness to the best possible extent? Can we do it in a completely model-agnostic manner? The key concepts and tools introduced in this section are: core features, InfoGram, L-features, net-predictive information, and COREml. The procedure is applied to several real datasets, including high-dimensional microarray gene expression datasets (prostate cancer and SRBCT data), MONK's problems, and Wisconsin breast cancer data. Section 3.2 focuses on algorithmic fairness, which tackles the challenging problem of designing admissible ML algorithms that are simultaneously efficient, interpretable, and equitable. Several key techniques are introduced in this section: admissible feature selection, ALFA-testing, a graphical risk assessment tool, and FINEml. We illustrate the proposed methods using examples from the criminal justice system (ProPublica's COMPAS recidivism data), the financial service industry (Adult income data, Taiwan credit card data), and a marketing ad campaign. We conclude the paper in Section 4 by reviewing the challenges and opportunities of next-generation admissible ML technologies.

## 2 Information-Theoretic Principles and Methods

The foundation of admissible machine learning relies on information-theoretic principles and nonparametric methods. The key theoretical ideas and results are presented in this section to develop a deeper understanding of the conceptual basis of our new framework.

## 2.1 Notation

Let $Y$ be the response variable taking values in $\{1, \ldots, k\}$, let $X = (X_1, \ldots, X_p)$ denote a $p$-dimensional feature matrix, and let $S = (S_1, \ldots, S_q)$ be an additional set of $q$ covariates (e.g., a collection of sensitive attributes like race, gender, age, etc.). A variable is called mixed when it can take discrete, continuous, or even categorical values, i.e., its data type is completely unrestricted. Throughout, we allow both $X$ and $S$ to be mixed. We write $Y \perp\!\!\!\perp X$ to denote the independence of $Y$ and $X$, while the conditional independence of $Y$ and $X$ given $S$ is denoted by $Y \perp\!\!\!\perp X \mid S$. For a continuous random variable, $f$ and $F$ denote the probability density and distribution function, respectively. For a discrete random variable, the probability mass function is denoted by $p$ with a proper subscript.

## 2.2 Conditional Mutual Information

Our theory starts with an information-theoretic view of conditional dependence. Under conditional independence:

$$Y \perp\!\!\!\perp X \mid S$$

the following decomposition holds for all y, x , s

$$f _ { Y , X | S } ( y , x | s ) \, = \, f _ { Y | S } ( y | s ) f _ { X | S } ( x | s ) .$$

More than testing independence, often the real interest lies in quantifying the conditional dependence: the average deviation of the ratio

$$\frac{f_{Y,X|S}(y, x \mid s)}{f_{Y|S}(y \mid s)\, f_{X|S}(x \mid s)}, \quad (2.1)$$

which can be measured by conditional mutual information (Wyner, 1978).

Definition 1. The conditional mutual information (CMI) between $Y$ and $X$ given $S$ is defined as

$$\mathrm{MI}(Y, X \mid S) = \iiint_{y,x,s} \log\left(\frac{f_{Y,X|S}(y, x \mid s)}{f_{Y|S}(y \mid s)\, f_{X|S}(x \mid s)}\right) f_{Y,X,S}(y, x, s)\, dy\, dx\, ds. \quad (2.2)$$

Two Important Properties. (P1) One of the striking features of CMI is that it captures multivariate non-linear conditional dependencies between the variables in a completely nonparametric manner. (P2) CMI provides a necessary and sufficient condition for conditional independence, in the sense that

$$\mathrm{MI}(Y, X \mid S) = 0 \ \text{ if and only if } \ Y \perp\!\!\!\perp X \mid S.$$

A conditional independence relation can be described using a graphical model (also known as a Markov network), as shown in the figure below:

Figure 3: Representing conditional independence graphically, where each node is a random variable (or random vector). The edge between $Y$ and $X$ passes through $S$.

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Diagram: Sequential Process Flow (Y → S → X)

### Overview

The image depicts a linear sequence diagram with three interconnected nodes labeled **Y**, **S**, and **X**. The nodes are arranged horizontally, connected by straight lines, suggesting a directional flow from left to right. No numerical data, scales, or additional annotations are present.

### Components/Axes

- **Nodes**:

- **Y**: Positioned on the far left.

- **S**: Centered between Y and X.

- **X**: Positioned on the far right.

- **Connectors**:

- A single horizontal line connects **Y** to **S**.

- A single horizontal line connects **S** to **X**.

- **Labels**:

- All nodes are explicitly labeled with uppercase letters (**Y**, **S**, **X**).

- No axis titles, legends, or numerical markers are visible.

### Detailed Analysis

- **Node Placement**:

- **Y** is spatially isolated on the left, **S** is centrally aligned, and **X** is isolated on the right.

- Lines are equidistant and parallel, emphasizing a linear progression.

- **Textual Elements**:

- No embedded text, legends, or color-coded data series.

- Labels are minimalistic, with no additional context (e.g., units, descriptions).

### Key Observations

1. The diagram lacks numerical or categorical data, focusing solely on structural relationships.

2. The absence of branching or feedback loops suggests a strictly sequential process.

3. The central node **S** acts as an intermediary between **Y** and **X**, potentially indicating dependency or mediation.

### Interpretation

This diagram likely represents a simplified workflow, causal chain, or dependency structure. The linear flow from **Y** to **S** to **X** implies that **Y** initiates a process, **S** modifies or mediates it, and **X** is the final outcome. Without additional context, the labels could represent variables, stages, or entities in a system. The minimalist design prioritizes clarity of sequence over quantitative analysis.

**Note**: No factual or numerical data is present in the image. The interpretation is based solely on the structural relationships depicted.

</details>

## 2.3 Net-Predictive Information

One of the major reasons CMI matters as a measure of conditional dependence is its interpretation in terms of the additional 'information gain' on $Y$ learned through $X$ when we already know $S$. In other words, CMI measures the Net-Predictive Information (NPI) of $X$: the exclusive information content of $X$ for $Y$ beyond what is already subsumed by $S$. To formally arrive at this interpretation, we have to look at CMI from a different angle, by expressing it in terms of conditional entropy. Entropy is a fundamental information-theoretic uncertainty measure. For a random variable $Z$, the entropy $H(Z)$ is defined as $-E_Z[\log f_Z]$.

Definition 2. The conditional entropy $H(Y \mid S)$ is defined as the expected entropy of $Y \mid S = s$:

$$H ( Y | S ) = \int _ { s } H ( Y | S = s ) d F _ { s } ,$$

which measures how much uncertainty remains in Y after knowing S , on average.

Theorem 1. For $Y$ discrete and $(X, S)$ mixed multidimensional random vectors, $\mathrm{MI}(Y, X \mid S)$ can be expressed as the difference between two conditional-entropy statistics:

$$\mathrm{MI}(Y, X \mid S) = H(Y \mid S) - H(Y \mid S, X). \quad (2.5)$$

The proof involves some standard algebraic manipulations, and is given in Appendix A.1.

Remark 1 (Uncertainty Reduction). The alternative way of defining CMI through eq. (2.5) allows us to interpret it from a new angle: conditional mutual information $\mathrm{MI}(Y, X \mid S)$ measures the net impact of $X$ in reducing the uncertainty of $Y$, given $S$. This new perspective will prove to be vital for our subsequent discussions. Note that if $H(Y \mid S, X) = H(Y \mid S)$, then $X$ carries no net-predictive information about $Y$.
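The identity in Theorem 1 can be checked numerically on a small discrete example. The sketch below is only an illustration: the joint distribution of three binary variables is hypothetical (its numbers are made up for this check), and the integrals in the definition of CMI are replaced by sums.

```python
import math
from itertools import product

def joint(y, x, s):
    """Hypothetical joint pmf p(y, x, s) for binary Y, X, S (illustrative numbers)."""
    prob_s = 0.5                          # S ~ Bernoulli(0.5)
    prob_x1 = 0.3 + 0.4 * s               # Pr(X = 1 | S = s)
    prob_y1 = 0.2 + 0.5 * x + 0.2 * s     # Pr(Y = 1 | X = x, S = s)
    px = prob_x1 if x == 1 else 1 - prob_x1
    py = prob_y1 if y == 1 else 1 - prob_y1
    return prob_s * px * py

pmf = {(y, x, s): joint(y, x, s) for y, x, s in product((0, 1), repeat=3)}

def marginal(keep):
    """Marginal pmf over the coordinates in `keep` (indices into (y, x, s))."""
    out = {}
    for key, p in pmf.items():
        sub = tuple(key[i] for i in keep)
        out[sub] = out.get(sub, 0.0) + p
    return out

p_s = marginal((2,))
p_ys = marginal((0, 2))
p_xs = marginal((1, 2))

# CMI from the definition (discrete analogue of (2.2)), in bits.
cmi_def = sum(
    p * math.log2((p / p_s[(s,)]) / ((p_ys[(y, s)] / p_s[(s,)]) * (p_xs[(x, s)] / p_s[(s,)])))
    for (y, x, s), p in pmf.items()
)

# Conditional entropies H(Y|S) and H(Y|S,X), in bits.
h_y_given_s = -sum(p * math.log2(p / p_s[(s,)]) for (y, s), p in p_ys.items())
h_y_given_sx = -sum(p * math.log2(p / p_xs[(x, s)]) for (y, x, s), p in pmf.items())

cmi_entropy = h_y_given_s - h_y_given_sx
print(round(cmi_def, 6), round(cmi_entropy, 6))
```

Both routes give the same value up to floating-point error, and the value is strictly positive here because $Y$ depends on $X$ even after conditioning on $S$.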

## 2.4 Nonparametric Estimation Algorithm

The basic formula (2.2) of conditional mutual information (CMI) presented in the earlier section is, unfortunately, not readily applicable, for two reasons. First, the practical side: in its current form, (2.2) requires estimation of $f_{Y,X|S}$ and $f_{X|S}$, which could be a herculean task, especially when $X = (X_1, \ldots, X_p)$ and $S = (S_1, \ldots, S_q)$ are high-dimensional. Second, the theoretical side: since the triplet $(Y, X, S)$ is mixed (not all discrete or all continuous random vectors), the expression (2.2) is not even a valid representation. The necessary reformulation is given in the next theorem.

Theorem 2. Let $Y$ be a discrete random variable taking values $1, \ldots, k$, and let $(X, S)$ be a mixed pair of random vectors. Then the conditional mutual information can be rewritten as

$$\mathrm{MI}(Y, X \mid S) = E_{X,S}\left[ \mathrm{KL}\left( p_{Y|X,S} \,\|\, p_{Y|S} \right) \right], \quad (2.6)$$

where the Kullback-Leibler (KL) divergence from $p_{Y|X=x,S=s}$ to $p_{Y|S=s}$ is defined as

$$\mathrm{KL}\left( p_{Y|x,s} \,\|\, p_{Y|s} \right) = \sum_{y} p_{Y|x,s}(y \mid x, s) \log\left( \frac{p_{Y|x,s}(y \mid x, s)}{p_{Y|s}(y \mid s)} \right). \quad (2.7)$$

To prove it, first rewrite the dependence ratio (2.1) solely in terms of the conditional distribution of $Y$ as follows:

$$\frac{\Pr(Y = y \mid X = x, S = s)}{\Pr(Y = y \mid S = s)} = \frac{p_{Y|X,S}(y \mid x, s)}{p_{Y|S}(y \mid s)}.$$

Next, substitute this into (2.2) and express it as

$$\mathrm{MI}(Y, X \mid S) = \iint_{x,s} \left[ \sum_{y} p_{Y|X,S}(y \mid x, s) \log\left( \frac{p_{Y|X,S}(y \mid x, s)}{p_{Y|S}(y \mid s)} \right) \right] dF_{x,s}.$$

Replace the part inside the square brackets by (2.7) to finish the proof.
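The first step of the proof can be spelled out with the product rule. The short derivation below is a sketch that treats $f_{X|S}$ as the conditional density (or mass function) of $X$ given $S$ with respect to a suitable dominating measure; that reading is an assumption made here to cover the mixed case. Factoring the joint conditional as $f_{Y,X|S}(y, x \mid s) = \Pr(Y = y \mid X = x, S = s)\, f_{X|S}(x \mid s)$, the ratio (2.1) becomes

$$\frac{f_{Y,X|S}(y, x \mid s)}{f_{Y|S}(y \mid s)\, f_{X|S}(x \mid s)} = \frac{\Pr(Y = y \mid X = x, S = s)\, f_{X|S}(x \mid s)}{\Pr(Y = y \mid S = s)\, f_{X|S}(x \mid s)} = \frac{\Pr(Y = y \mid X = x, S = s)}{\Pr(Y = y \mid S = s)},$$

so the hard-to-estimate factor $f_{X|S}$ cancels and only conditional probabilities of the discrete response remain.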

Remark 2. CMI measures how much information is shared only between X and Y that is not contained in S . Theorem 2 makes this interpretation explicit.

Estimator. The goal is to develop a practical nonparametric algorithm for estimating CMI from $n$ i.i.d. samples $\{x_i, y_i, s_i\}_{i=1}^{n}$ that works for large-$(n, p, q)$ settings. Theorem 2 immediately leads to the following estimator of (2.6):

$$\widehat{\mathrm{MI}}(Y, X \mid S) = \frac{1}{n} \sum_{i=1}^{n} \log \frac{\widehat{\Pr}(Y = y_i \mid x_i, s_i)}{\widehat{\Pr}(Y = y_i \mid s_i)}. \quad (2.8)$$

Algorithm 1. Conditional mutual information estimation: the proposed ML-powered nonparametric estimation method consists of four simple steps:

Step 1. Choose a machine learning classifier (e.g., support vector machines, random forest, gradient boosted trees, deep neural network, etc.), and call it $\mathrm{ML}_0$.

Step 2. Train the following two models:

$$\begin{array}{rcl} \text{ML.train}_{y|x,s} & \leftarrow & \mathrm{ML}_0\left( Y \sim [X, S] \right) \\ \text{ML.train}_{y|s} & \leftarrow & \mathrm{ML}_0\left( Y \sim S \right) \end{array}$$

Step 3. Extract the conditional probability estimates $\widehat{\Pr}(Y = y_i \mid x_i, s_i)$ from $\text{ML.train}_{y|x,s}$, and $\widehat{\Pr}(Y = y_i \mid s_i)$ from $\text{ML.train}_{y|s}$, for $i = 1, \ldots, n$.

Step 4. Return $\widehat{\mathrm{MI}}(Y, X \mid S)$ by applying formula (2.8).
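The four steps of Algorithm 1 can be sketched in a few lines of Python. This is a minimal illustration, not the implementation used in the paper: a simple frequency-table classifier for discrete features stands in for $\mathrm{ML}_0$ (the paper's examples use gradient boosting), probabilities are evaluated in-sample, and the simulated data and all function names are hypothetical.

```python
import math
import random
from collections import Counter

def fit_table_classifier(features, labels):
    """Stand-in for ML_0 (an assumption of this sketch): a frequency-table
    classifier for discrete features. Returns a function (feature, y) -> Pr-hat."""
    joint = Counter(zip(features, labels))
    total = Counter(features)
    return lambda f, y: joint[(f, y)] / total[f]

def cmi_estimate(y, x, s):
    """Plug-in estimate (2.8) of MI(Y, X | S), in bits."""
    xs = list(zip(x, s))
    prob_y_given_xs = fit_table_classifier(xs, y)  # Step 2: model for Y ~ [X, S]
    prob_y_given_s = fit_table_classifier(s, y)    # Step 2: model for Y ~ S
    # Steps 3-4: average the log-ratio of fitted probabilities at the observed labels.
    return sum(
        math.log2(prob_y_given_xs(f, yi) / prob_y_given_s(si, yi))
        for f, yi, si in zip(xs, y, s)
    ) / len(y)

# Simulated data from a hypothetical data-generating process.
rng = random.Random(0)
n = 5000
s = [rng.randint(0, 1) for _ in range(n)]
x = [rng.randint(0, 1) for _ in range(n)]
# Y depends on both X and S, so the CMI should be clearly positive.
y_dep = [int(rng.random() < 0.2 + 0.5 * xi + 0.2 * si) for xi, si in zip(x, s)]
# Y depends on S only, so the CMI should be close to zero.
y_ind = [int(rng.random() < 0.3 + 0.4 * si) for si in s]

cmi_dep = cmi_estimate(y_dep, x, s)
cmi_ind = cmi_estimate(y_ind, x, s)
print(f"dependent: {cmi_dep:.4f} bits, conditionally independent: {cmi_ind:.4f} bits")
```

With a flexible classifier in place of the frequency table, the same two-model structure applies to mixed and high-dimensional $X$ and $S$; only the probability extraction in Step 3 changes.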

Remark 3. We will be using the gradient boosting machine ( gbm ) of Friedman (2001) in our numerical examples (obviously, one can use other methods), whose convergence behavior is well-studied in literature (Breiman et al., 2004, Zhang, 2004), where it was definitively shown that under some very general conditions, the empirical risk (probability of misclassification) of the gbm classifier approaches the optimal Bayes risk. This Bayes risk consistency property surely carries over to our conditional probability estimates in (2.8), which justifies the good empirical performance of our method in real datasets.

Remark 4. Taking the base of the log in (2.8) to be 2, we get the measure in units of bits . If the natural log (base $e$) is used instead, it is in units of nats . We will use $\log_2$ in all our computations.

The proposed style of nonparametric estimation provides some important practical benefits:

- Flexibility: Unlike traditional conditional independence testing procedures (Candes et al., 2018, Berrett et al., 2019), our approach requires neither the knowledge of the exact parametric form of the high-dimensional $F_{X_1, \ldots, X_p}$ nor the knowledge of the conditional distribution of $X \mid S$, which are generally unknown in practice.

- Applicability: (i) Data-type: The method can be safely used for mixed $X$ and $S$ (any combination of discrete, continuous, or even categorical variables). (ii) Data-dimension: The method is applicable to high-dimensional $X = (X_1, \ldots, X_p)$ and $S = (S_1, \ldots, S_q)$.

- Scalability: Unlike traditional nonparametric methods (such as kernel density or $k$-nearest neighbor-based methods), our procedure is scalable for big datasets with large $(n, p, q)$.

## 2.5 Model-based Bootstrap

One can even perform statistical inference for our ML-powered conditional-mutual-information statistic. In order to test $H_0: Y \perp\!\!\!\perp X \mid S$, obtain a bootstrap-based p-value by noting that under the null $\Pr(Y = y \mid X = x, S = s)$ reduces to $\Pr(Y = y \mid S = s)$.

Algorithm 2 . Model-based Bootstrap : The inference scheme proceeds as follows:

Step 1. Let

$$\widehat{p}_{i|s} = \Pr(Y_i = 1 \mid S = s_i), \quad \text{for } i = 1, \dots, n$$

as extracted from the (already estimated) model $\text{ML.train}_{y|s}$ (Step 2 of Algorithm 1).

Step 2. Generate the null $Y^*_{n \times 1} = (Y_1^*, \ldots, Y_n^*)$ by

$$Y_i^* \leftarrow \text{Bernoulli}(\widehat{p}_{i|s}), \quad \text{for } i = 1, \dots, n$$

Step 3. Compute $\widehat{MI}(Y^*, X \mid S)$ using Algorithm 1.

Step 4. Repeat the process $B$ times (say, $B = 500$); compute the bootstrap null distribution, and return the p-value.
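A minimal sketch of this resampling loop is given here for binary $Y$. Several things are assumptions rather than the paper's implementation: the helper names `conditional_freq`, `cmi_estimate` and `bootstrap_pvalue` are hypothetical, a frequency table again stands in for the fitted classifier, and the p-value is computed as $(1 + \#\{\text{null} \ge \text{observed}\})/(B + 1)$, a common convention that the text does not spell out.

```python
import math
import random
from collections import Counter, defaultdict


def conditional_freq(features, labels):
    """Stand-in classifier (an assumption): empirical Pr(Y = y | features) from counts."""
    counts = defaultdict(Counter)
    for f, y in zip(features, labels):
        counts[f][y] += 1
    return lambda f, y: counts[f][y] / sum(counts[f].values())


def cmi_estimate(y, x, s):
    """Plug-in MI(Y, X | S) in bits (Algorithm 1 with the stand-in classifier)."""
    xs = list(zip(x, s))
    p_xs, p_s = conditional_freq(xs, y), conditional_freq(s, y)
    return sum(math.log2(p_xs(f, yi) / p_s(si, yi))
               for yi, f, si in zip(y, xs, s)) / len(y)


def bootstrap_pvalue(y, x, s, B=500, seed=0):
    """Model-based bootstrap test of H0: Y independent of X given S (binary Y)."""
    rng = random.Random(seed)
    observed = cmi_estimate(y, x, s)
    p_s = conditional_freq(s, y)
    p_hat = [p_s(si, 1) for si in s]                            # Step 1
    exceed = 0
    for _ in range(B):                                           # Step 4
        y_star = [1 if rng.random() < p else 0 for p in p_hat]  # Step 2
        if cmi_estimate(y_star, x, s) >= observed:               # Step 3
            exceed += 1
    # Assumed p-value convention: add-one correction on both counts.
    return observed, (1 + exceed) / (B + 1)


# Toy data with strong conditional dependence: Y = X xor S.
rng = random.Random(0)
n = 500
x = [rng.randint(0, 1) for _ in range(n)]
s = [rng.randint(0, 1) for _ in range(n)]
y = [xi ^ si for xi, si in zip(x, s)]
observed, p_value = bootstrap_pvalue(y, x, s, B=200)
print(round(observed, 3), round(p_value, 4))
```

Only $Y$ is resampled; $X$ and $S$ are held fixed, so the null distribution reflects the dependence between $X$ and $S$ that is present in the data.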

Remark 5. A parametric version of this inference was proposed by Rosenbaum (1984) in the context of observational causal studies. His scheme resamples $Y$ by estimating $\Pr(Y = 1 \mid S)$ using a logistic regression model. The procedure was called the conditional permutation test.

## 2.6 A Few Examples

Example 1. Model: $X \sim \text{Bernoulli}(0.5)$; $S \sim \text{Bernoulli}(0.5)$; $Y = X$ when $S = 0$ and $Y = 1 - X$ when $S = 1$. In this case, it is easy to see that the true $MI(Y, X \mid S) = 1$. We simulated $n = 500$ i.i.d. $(x_i, y_i, s_i)$ from this model and computed our estimate using (2.8). We repeated the process 50 times to assess the variability of the estimate. Our estimate is:

$$\widehat{MI}(Y, X \mid S) = 0.994 \pm 0.00234,$$

with the (avg.) p-value being almost zero. We repeated the same experiment by making $Y \sim \text{Bernoulli}(0.5)$ (i.e., now the true $MI(Y, X \mid S) = 0$), which yields

$$\widehat{MI}(Y, X \mid S) = 0.0022 \pm 0.0017,$$

with the (avg.) p-value being 0.820.
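For readers who want the "easy to see" step spelled out, the two true values follow from the standard entropy decomposition of conditional mutual information. The symbol $H$ (Shannon entropy, in bits) is introduced here only for this derivation. In the first model, $Y$ is a deterministic function of $(X, S)$, while given $S$ alone it is a fair coin:

$$\begin{aligned} MI(Y, X \mid S) &= H(Y \mid S) - H(Y \mid X, S) \\ &= 1 - 0 = 1 \text{ bit}. \end{aligned}$$

In the second model, $Y \sim \text{Bernoulli}(0.5)$ is independent of $(X, S)$, so both conditional entropies equal 1 bit and the difference is 0.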

Example 2. Integrative Genomics . The wide availability of multi-omics data has revolutionized the field of biology. There is a general consensus among practitioners that combining individual omics data sets (mRNA, microRNA, CNV, DNA methylation, etc.) leads to improved prediction. However, before undertaking such an analysis, it is worthwhile to check how much additional information we gain from a combined analysis compared to a single-platform one. To illustrate this point, we use a breast cancer multi-omics dataset that is part of The Cancer Genome Atlas (TCGA, http://cancergenome.nih.gov/). It contains the expression of three kinds of omics data (miRNA, mRNA, and proteomics) from three kinds of breast cancer samples ($n = 150$): Basal, Her2, and LumA. $X_1$ is a $150 \times 184$ matrix of miRNA, $X_2$ is a $150 \times 200$ matrix of mRNA, and $X_3$ is a $150 \times 142$ matrix of proteomics.

$$\begin{aligned} \text{MI}(Y, X_2 \mid X_1) &= 0.013; & p\text{-value} &= 0.356 \\ \text{MI}(Y, X_3 \mid X_1) &= 0.0186; & p\text{-value} &= 0.235 \\ \text{MI}\left(Y, \{X_2, X_3\} \mid X_1\right) &= 0.0192; & p\text{-value} &= 0.501. \end{aligned}$$

It shows that neither mRNA nor proteomics adds any substantial information beyond what is already captured by miRNAs.

## 3 Elements of Admissible Machine Learning

How to design admissible machine learning algorithms with enhanced efficiency, interpretability, and equity?$^{4}$ A systematic pipeline for developing such admissible ML models is laid out in this section, which is grounded in the earlier information-theoretic concepts and nonparametric modeling ideas.

## 3.1 COREml: Algorithmic Interpretability

## 3.1.1 From Predictive Features to Core Features

One of the first tasks of any predictive modeling is to identify the key drivers that are affecting the response Y . Here we will discuss a new information-theoretic graphical tool to quickly spot the 'core' decision-making variables, which are going to be vital in building interpretable models. One of the advantages of this method is that it works even in the presence of correlated features, as the following example illustrates; also see Appendix A.7.

Example 3. Correlated features. $Y \sim \text{Bernoulli}(\pi(x))$ where $\pi(x) = 1/(1 + e^{-\mathcal{M}(x)})$ and

$$\mathcal{M}(x) = 3 \sin(X_1) - 2 X_2.$$

4 However, the general premise of admissible ML is extremely broad and flexible, and will continue to evolve with the regulatory requirements to ensure rapid development of trustworthy algorithmic methods.

Let $X_1, \ldots, X_{p-1}$ be i.i.d. $\mathcal{N}(0, 1)$ random variables, and

$$X_p = 2 X_1 - X_2 + \epsilon, \quad \text{where } \epsilon \sim \mathcal{N}(0, 2),$$

which means $X_p$ has no additional predictive value beyond what is already captured by the core variables $X_1$ and $X_2$. Another way of saying this is that $X_p$ is redundant: the conditional mutual information between $Y$ and $X_p$ given $\{X_1, X_2\}$ is zero:

$$M I \left ( Y , X _ { p } | \{ X _ { 1 } , X _ { 2 } \} \right ) = 0 .$$
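The data-generating mechanism of Example 3 is simple enough to simulate directly. The sketch below is a hedged illustration: the function names are hypothetical, the defaults $n = 500$ and $p = 50$ follow the simulation settings used in this example, and $\mathcal{N}(0, 2)$ is read as variance 2 (if the authors meant standard deviation 2, replace `math.sqrt(2.0)` by `2.0`).

```python
import math
import random


def simulate_example3(n=500, p=50, seed=0):
    """Draw (X, Y) with core variables X_1, X_2 and an imitator X_p."""
    rng = random.Random(seed)
    X, Y = [], []
    for _ in range(n):
        row = [rng.gauss(0.0, 1.0) for _ in range(p - 1)]  # X_1, ..., X_{p-1}
        eps = rng.gauss(0.0, math.sqrt(2.0))               # assumes N(0, 2) means variance 2
        row.append(2.0 * row[0] - row[1] + eps)            # imitator X_p
        m = 3.0 * math.sin(row[0]) - 2.0 * row[1]          # M(x) uses only X_1 and X_2
        prob = 1.0 / (1.0 + math.exp(-m))
        Y.append(1 if rng.random() < prob else 0)
        X.append(row)
    return X, Y


def pearson(a, b):
    """Sample Pearson correlation between two equal-length lists."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((u - ma) * (v - mb) for u, v in zip(a, b))
    va = sum((u - ma) ** 2 for u in a)
    vb = sum((v - mb) ** 2 for v in b)
    return cov / math.sqrt(va * vb)


X, Y = simulate_example3()
core_signal = [2.0 * row[0] - row[1] for row in X]
imitator = [row[-1] for row in X]
# The imitator tracks the core variables closely even though Y never depends on it directly.
print(round(pearson(imitator, core_signal), 2))
```

Under the variance-2 reading, the population correlation between $X_p$ and $2X_1 - X_2$ is $\sqrt{5/7} \approx 0.85$, which is why the imitator looks highly predictive to any marginal importance measure.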

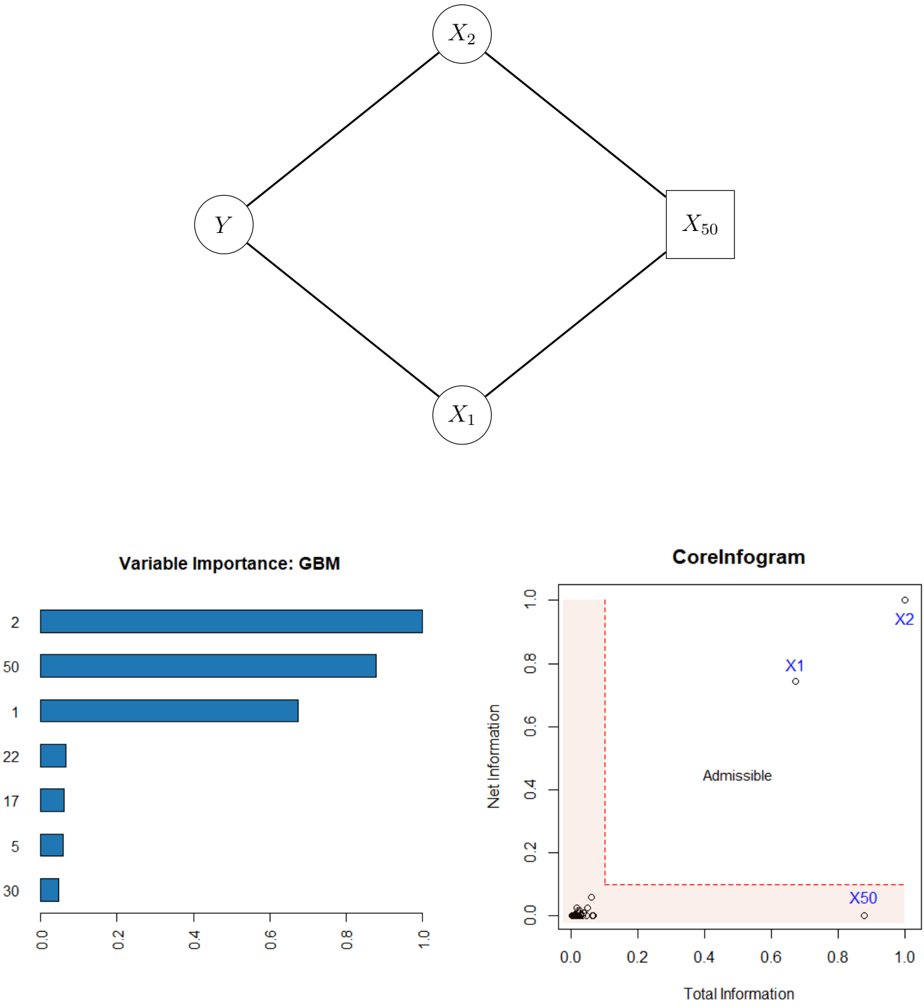

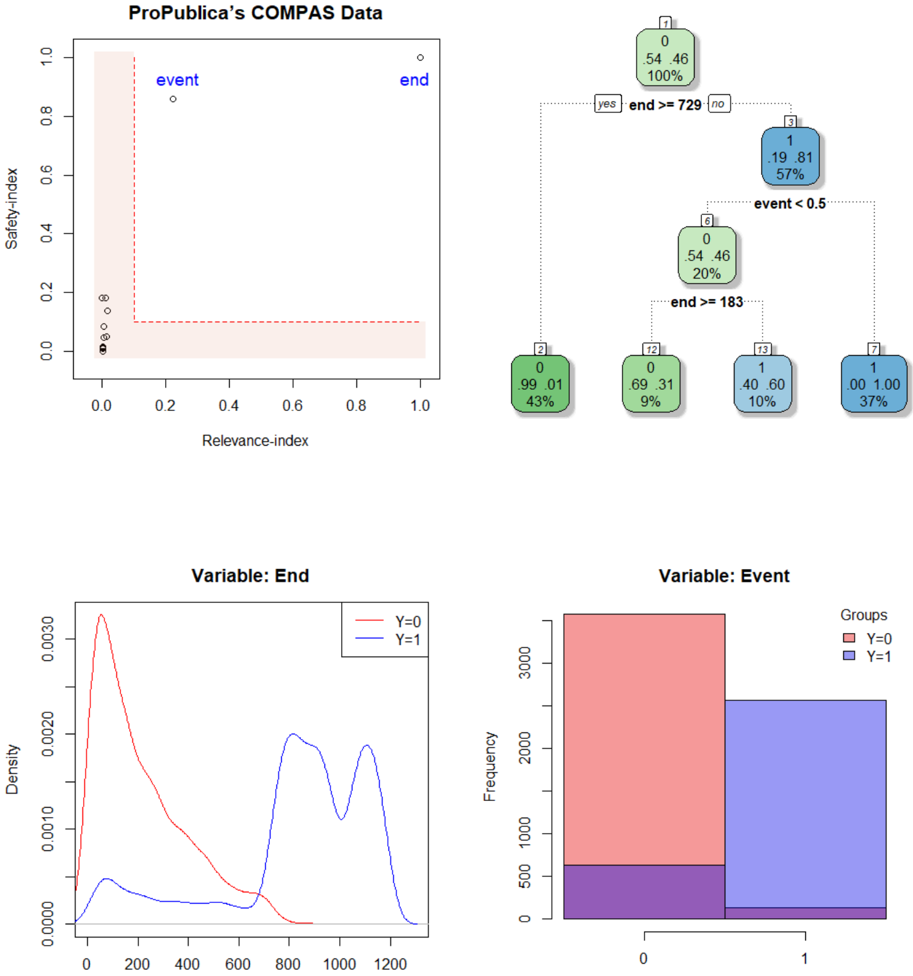

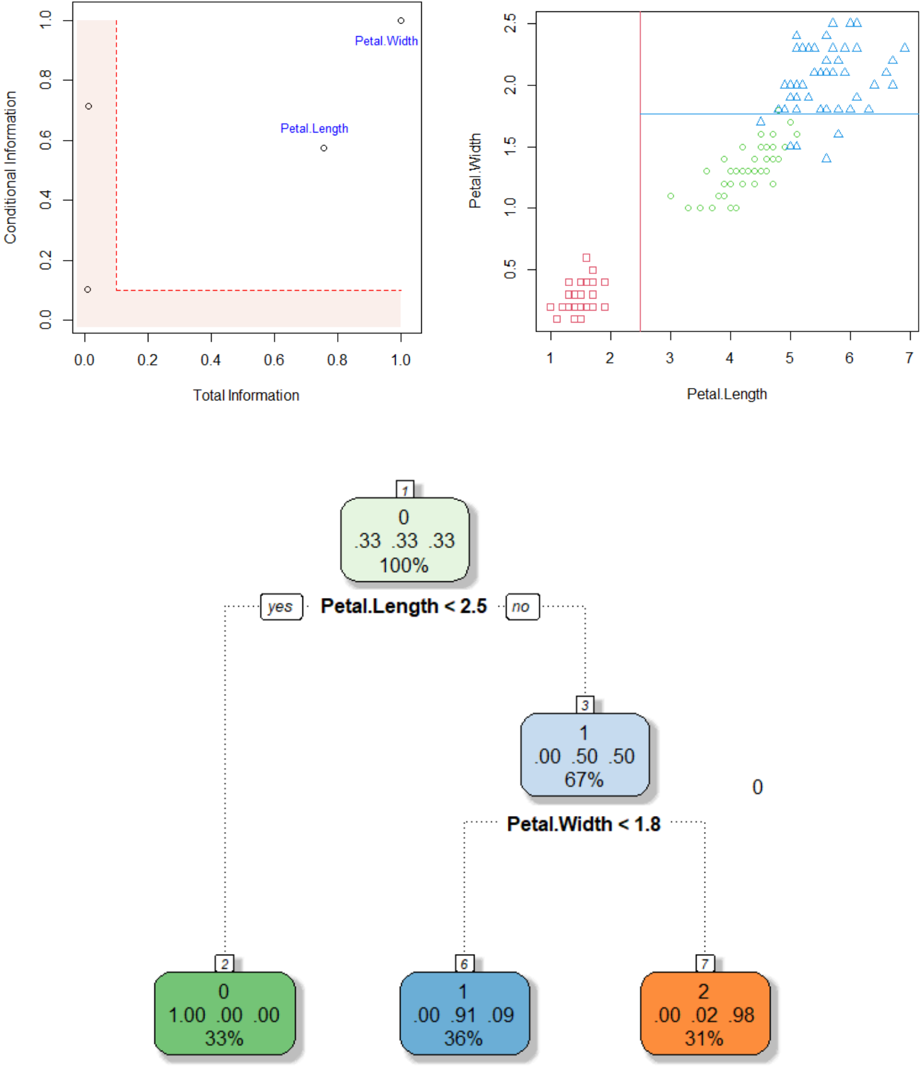

The top of Fig. 4 graphically depicts this. The following nomenclature will be useful for discussing our method:

$$\begin{array}{rcl} \text{CoreSet} & = & \{X_1, X_2\} \\ \text{Imitator} & = & \{X_p\} \\ \text{Probes} & = & \{X_3, \dots, X_{p-1}\}. \end{array}$$

Note that the imitator $X_p$ is highly predictive for $Y$ due to its association with the core variables. We have simulated $n = 500$ samples with $p = 50$. For each feature, we compute

$$R_j = \text{overall relevance score of the } j\text{th predictor}, \quad j = 1, \dots, p.$$

The bottom-left corner of Fig. 4 shows the relative importance scores (scaled between 0 and 1) for the top seven features using the gbm algorithm,$^{5}$ which correctly finds $\{X_1, X_2, X_{50}\}$ as the important predictors. However, it is important to recognise that this modus operandi, irrespective of the ML algorithm, cannot distinguish the 'fake imitator' $X_{50}$ from the real ones $X_1$ and $X_2$. To enable a refined characterization of the variables, we have to 'add more dimension' to the classical machine learning feature importance tools.

## 3.1.2 InfoGram and L-Features

We introduce a tool for identification of core admissible features based on the concept of net-predictive information (NPI) of a feature X j .

Definition 3. The net-predictive (conditional) information of $X_j$ given all the rest of the variables $X_{-j} = \{X_1, \ldots, X_p\} \setminus \{X_j\}$ is defined in terms of conditional mutual information:

$$C_j = MI(Y, X_j \mid X_{-j}), \quad \text{for } j = 1, \dots, p.$$

5 Based on whether a particular variable was selected to split on while learning a tree, and how much it improves the Gini impurity or information gain.

Figure 4: Top: The graphical representation of Example 3. Bottom-left: The gbm feature importance scores for the top seven features; the rest are almost zero and thus not shown. Bottom-right: The infogram distinguishes the core variables $\{X_1, X_2\}$ from the imitator $X_{50}$. The L-shaped area with width 0.1 is highlighted in red; it contains inadmissible variables with either low relevance or high redundancy.

<details>

<summary>Image 4 Details</summary>

Three panels. Top: a dependency diagram with nodes Y, X₁, X₂, and X₅₀. Bottom-left: horizontal bar chart titled "Variable Importance: GBM" (importance scale 0 to 1) for features 2, 50, 1, 22, 17, 5, and 30. Bottom-right: scatter plot titled "CoreInfogram" with x-axis "Total Information" and y-axis "Net Information" (both 0 to 1), points labeled X₁, X₂, and X₅₀, and a red dashed L-shaped boundary.

</details>

For easy interpretation, we standardize $C_j$ by $C_j / \max_j C_j$, so that it lies between 0 and 1. The infogram, an abbreviation of information diagram, is a scatter plot of $\{(R_j, C_j)\}_{j=1}^{p}$ over the unit square $[0, 1]^2$; see the bottom-right corner of Fig. 4.

L-Features . The highlighted L-shaped area contains features that are either irrelevant or redundant. For example, notice the position of $X_{50}$ in the plot, indicating that it is highly predictive but contains no new complementary information for the response. Clearly, there could be an opposite scenario: a variable carries valuable net individual information for $Y$, despite being only moderately relevant (not ranked among the top few); see Sec. 3.1.4.
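Once the relevance scores $R_j$ and net-information scores $C_j$ are available, building the infogram and reading off the L-features is a small bookkeeping step. The sketch below assumes a few things not fixed by the text: the function name `infogram` is hypothetical, $R_j$ is rescaled by its maximum in the same way as $C_j$, negative estimates are clipped at zero, and the L-zone width of 0.1 is taken from the caption of Fig. 4. The raw scores are invented for illustration and are not results from the paper.

```python
def infogram(relevance, net_info, width=0.1):
    """Return scaled (R_j, C_j) points and the features lying outside the L-shaped zone."""
    r_max = max(relevance.values())
    c_max = max(net_info.values())
    if r_max <= 0 or c_max <= 0:
        raise ValueError("need at least one positive relevance and net-information score")
    # Clip negative estimates at zero (an assumption), then scale each axis to [0, 1].
    points = {
        name: (max(relevance[name], 0.0) / r_max, max(net_info[name], 0.0) / c_max)
        for name in relevance
    }
    # A feature is kept as core only if it clears the L-zone on both axes.
    core = [name for name, (r, c) in points.items() if r > width and c > width]
    return points, core


# Hypothetical raw scores, invented purely for illustration.
relevance = {"X1": 0.30, "X2": 0.45, "X50": 0.40, "X7": 0.02}
net_info = {"X1": 0.20, "X2": 0.25, "X50": 0.01, "X7": 0.005}
points, core = infogram(relevance, net_info)
print(core)  # prints ['X1', 'X2']
```

In this toy input, `X50` is dropped for high redundancy (large $R_j$, tiny $C_j$) and `X7` for low relevance, which mirrors the two arms of the L.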

Remark 6 (Predictive Features vs. CoreSet) . Recall that in Example 3, the irrelevant feature $X_{50}$ is strongly correlated with the relevant ones $X_1$ and $X_2$ through (3.2), thus violating the so-called 'irrepresentable condition'; for more details, see the bibliographic notes section of Hastie et al. (2015, p. 311). In this scenario (which may easily arise in practice), it is hard to recover the 'important' variables using traditional variable selection methods. The bottom line is: identifying the CoreSet is a much more difficult undertaking than merely selecting the most predictive features. The goal of the infogram is to facilitate this process of discovering the key variables that are driving the outcome.

Remark 7 (CoreML) . Two additional comments before diving into real-data examples. First, machine learning models based on 'core' features ( CoreML ) show improved stability, especially when there exists considerable correlation among the features.$^{6}$ This will be demonstrated in the next two sections. Second, our approach is not tied to any particular machine learning method; it is completely model-agnostic and can be integrated with any arbitrary algorithm: choose a specific classifier $\text{ML}_0$ and compute (3.3) and (3.4) to generate the associated infogram.

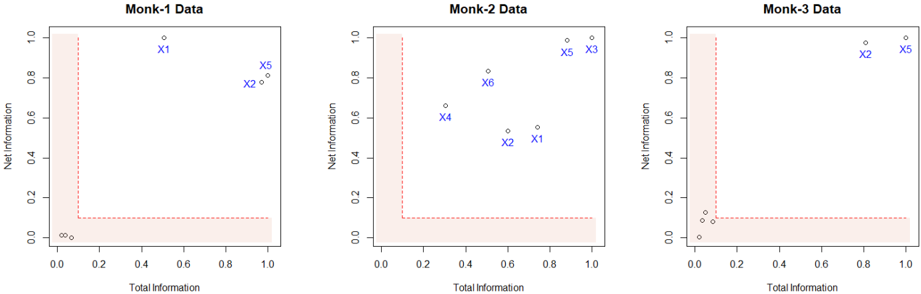

Example 4. MONK's problems (Thrun et al., 1991). This is a collection of three artificial binary classification problems (MONK-1, MONK-2 and MONK-3) with $p = 6$ attributes, available in the UCI Machine Learning Repository. As shown in Fig. 5, the infogram selects $\{X_1, X_2, X_5\}$ for the MONK-1 data, and $\{X_2, X_5\}$ for the MONK-3 data as the core features. MONK-2 is an idiosyncratic case, where all six features turned out to be core! This indicates the possibly complex nature of the classification rule for the MONK-2 problem.

## 3.1.3 COREtree: High-dimensional Microarray Data Analysis

How does one distill a compact (parsimonious) ML model by balancing accuracy, robustness, and interpretability to the best extent? To answer that, we introduce COREtree , whose

6 Numerous studies have found that many current methods like partial dependence plots, LIME, and SHAP could be highly misleading, particularly when there is strong dependence among features.

Figure 5: Infograms of Monk's problems. CoreSets are denoted in blue.

<details>

<summary>Image 5 Details</summary>

Three scatter plots titled "Monk-1 Data", "Monk-2 Data", and "Monk-3 Data". Each has x-axis "Total Information" and y-axis "Net Information" (both 0 to 1), points labeled X1 to X6, and a red dashed L-shaped boundary.

</details>

construction is guided by infogram. The methodology is illustrated using two real datasets, namely Prostate cancer and SRBCT tumor data. The main findings are striking: it shows how one can systematically search and construct robust and interpretable shallow decision tree models (often with just two or three genes) for noisy high-dimensional microarray datasets that are as powerful as the most elaborate and complex machine learning methods.

Example 5. Prostate cancer gene expression data . The data consist of $p = 6033$ gene expression measurements on 50 control and 52 prostate cancer patients. It is available at https://web.stanford.edu/~hastie/CASI_files/DATA/prostate.html . Our analysis is summarized below.

Step 1. Identifying CoreGenes . The top 50 GBM-selected genes are shown in Fig. 6. We generate the infogram$^{7}$ of these 50 variables (displayed in the top-right corner), which identifies five core genes $\{1627, 2327, 77, 1511, 1322\}$.

Step 2. Rank-transform: Robustness and Interpretability . Instead of directly operating on the gene expression values, we transform them into their ranks. Let $\{x_{j1}, \ldots, x_{jn}\}$ be the measurements on the $j$th gene with empirical cdf $\widetilde{F}_j$. Convert the raw $x_{ji}$ to $u_{ji}$ by

$$u_{ji} = \widetilde{F}_j(x_{ji}), \quad i = 1, \dots, n$$

and work on the resulting $U_{n \times p}$ matrix instead of the original $X_{n \times p}$. We do this transformation for two reasons: first, to robustify, since it is known that gene expressions are inherently noisy. Second, to make it unit-free, since the raw gene expression values depend on the type

7 To reduce unnecessary clutter, we have displayed the infogram using top 50 features, since the rest of the genes will be cramped inside the nonessential L-zone anyway.

Figure 6: Prostate data analysis. Top panel: the gbm feature importance graph, along with the infogram for the top 50 genes. Bottom-left: the scatter plot of Gene 1627 vs. 2327. For clarity, we have plotted them in the quantile domain $(u_i, v_i)$, where $u = \text{rank}(X[\,, 1627])/n$ and $v = \text{rank}(X[\,, 2327])/n$. The black dots denote control samples ($y = 0$ class) and the red triangles are prostate cancer samples ($y = 1$ class). Bottom-right: the estimated CoreTree with just two decision nodes, which is good enough to be 95% accurate.

<details>

<summary>Image 6 Details</summary>

Four panels. Top-left: horizontal bar chart titled "Variable Importance: GBM" (importance scale 0 to 1) with gene indices on the y-axis. Top-right: infogram titled "Prostate Cancer Data" with x-axis "Total Information" and y-axis "Net Information" (both 0 to 1), labeled points X1627, X2327, X77, X1511, and X1322, and a red shaded L-zone. Bottom-left: scatter plot of the normalized rank of X1627 (x-axis) against the normalized rank of X2327 (y-axis), with black circles and red triangles marking the two classes. Bottom-right: a binary decision tree that splits on X1627 and then on X2327.

</details>

of preprocessing, and thus carry much less scientific meaning. Percentiles, on the other hand, are much easier to interpret as a way to convey 'how overexpressed a gene is.'
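The rank transform of Step 2 amounts to evaluating the empirical cdf of each gene at its own observations. A minimal sketch for a single gene follows; the function name `rank_transform` is hypothetical, and ties are resolved by the "less than or equal" convention of the empirical cdf, which is an assumption since the text does not discuss ties.

```python
from bisect import bisect_right


def rank_transform(values):
    """Map each x_i to u_i = #{k : x_k <= x_i} / n, the empirical cdf evaluated at x_i."""
    sorted_vals = sorted(values)
    n = len(values)
    return [bisect_right(sorted_vals, v) / n for v in values]


# Toy expression values for one gene (invented for illustration).
print(rank_transform([3.2, 1.5, 2.7, 9.9]))  # prints [0.75, 0.25, 0.5, 1.0]
```

Because only the ordering of the values matters, any strictly increasing preprocessing of the raw expressions (a log transform, for instance) yields the same $u_{ji}$, which is the unit-free property the text refers to.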

Step 3. Shallow Robust Tree . We build a single decision tree using the infogram-selected coregenes. This is displayed in the bottom-right panel of Fig. 6. Interestingly, the CoreTree retained only two genes $\{1627, 2327\}$, whose scatter plot (in the rank-transform domain) is shown in the bottom-left corner of Fig. 6. A simple eyeball estimate of the discrimination surfaces is shown in bold (black and red) lines, which closely matches the decision tree rule. It is quite remarkable that we have reduced the original 6033-dimensional problem to a simple bivariate two-sample one, just by wisely selecting the features based on the infogram.

Step 4. Stability . Note that the tree we build is based only on the infogram-selected core features. These features have low redundancy and high relevance, which provides extraordinary stability (over different runs on the same dataset) to the decision tree, a highly desirable characteristic.

Step 5. Accuracy . The accuracy of our single decision tree (on a randomly selected 20% test set, averaged over 100 repetitions) is more than 95%. On the other hand, the full-data gbm (with $p = 6033$ genes) is only 75% accurate. A huge simplification of the model architecture with a significant gain in predictive performance!

Step 6. Gene Hunting: Beyond Marginal Screening . We compute the two-sample $t$-test statistic for all $p = 6033$ genes and rank them according to their absolute values (the gene with the largest absolute $t$-statistic gets rank 1, the most differentially expressed gene). The $t$-scores for the coregenes along with their p-values and ranks are:

$$\begin{aligned} |t_{1627}| &= 0.15; \quad p\text{-value} = 0.88; \quad \text{rank} = 5383, \\ |t_{2327}| &= 1.40; \quad p\text{-value} = 0.17; \quad \text{rank} = 1228. \end{aligned}$$

Thus, it is hopeless to find the coregenes by any marginal-screening method: they are too weak marginally (in isolation), but jointly they form an extremely strong predictor. The good news is that our approach can find those multivariate hidden gems in a completely nonparametric fashion.
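The marginal screening that Step 6 argues against can be sketched as follows, together with a toy case showing why it misses jointly informative features. The names `welch_t` and `marginal_ranks` are hypothetical, the Welch (unequal-variance) form of the statistic is an assumption since the text only says "two-sample $t$-test", and the data are synthetic, not the prostate data.

```python
import math
import random
from statistics import mean, variance


def welch_t(a, b):
    """Two-sample t-statistic with unequal variances (Welch form, an assumption)."""
    return (mean(a) - mean(b)) / math.sqrt(variance(a) / len(a) + variance(b) / len(b))


def marginal_ranks(columns, labels):
    """Score each feature by |t| between the two classes; rank 1 is the largest |t|."""
    scores = []
    for col in columns:
        g0 = [v for v, y in zip(col, labels) if y == 0]
        g1 = [v for v, y in zip(col, labels) if y == 1]
        scores.append(abs(welch_t(g1, g0)))
    order = sorted(range(len(scores)), key=lambda j: -scores[j])
    ranks = [0] * len(scores)
    for position, j in enumerate(order, start=1):
        ranks[j] = position
    return scores, ranks


# Synthetic illustration: features a and b determine y jointly (an XOR-type rule),
# while feature c is shifted by the class label and is therefore marginally strong.
rng = random.Random(0)
a = [rng.random() for _ in range(200)]
b = [rng.random() for _ in range(200)]
y = [int((ai > 0.5) != (bi > 0.5)) for ai, bi in zip(a, b)]
c = [3.0 * yi + rng.gauss(0.0, 1.0) for yi in y]
scores, ranks = marginal_ranks([a, b, c], y)
print([round(t, 2) for t in scores], ranks)
```

In this toy setup the pair `(a, b)` predicts `y` perfectly, yet each receives a small marginal $|t|$, whereas `c` is ranked first; a net-information score conditions on the remaining features and is not limited in this way.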

Step 7. Lasso Analysis and Results . We have used the glmnet R package. Lasso with $\lambda_{\min}$ (minimum cross-validation error) selects 70 genes, whereas $\lambda_{1se}$ (the largest $\lambda$ such that the error is within one standard error of the minimum) selects 60 genes. The main findings are:

Figure 7: SRBCT data analysis. Top-left: GBM-feature importance plot; top 50 genes are shown. Top-right: The associated infogram. Bottom panel: The estimated coretree with just three decision nodes.

Figure 7 panels: "Variable Importance: GBM" (bar chart); "SRBCT Cancer Data" infogram (x-axis: Total Information; y-axis: Net Information); coretree decision diagram.

(i) The coregenes $\{1627, 2327\}$ were never selected, probably because they are marginally very weak, and the significant interaction between them is not detectable by the standard lasso.

(ii) The accuracy of the lasso with $\lambda_{\min}$ is around 78% (each time, we randomly selected 85% of the data for training, computed $\lambda_{\rm cv}$ for making predictions, and averaged over 100 runs).
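The evaluation protocol in (ii), repeated random 85%/15% splits with the test accuracy averaged over the runs, can be sketched as follows. This is only an illustration in Python (the paper's analyses use R): the function name `repeated_holdout_accuracy`, the toy midpoint classifier, and the toy data are all assumptions introduced here, and the lasso itself is not reproduced.

```python
import random
from statistics import mean


def repeated_holdout_accuracy(X, y, fit, predict, train_frac=0.85, n_runs=100, seed=0):
    """Average test accuracy over repeated random train/test splits."""
    rng = random.Random(seed)
    n = len(y)
    n_train = int(round(train_frac * n))
    accuracies = []
    for _ in range(n_runs):
        idx = list(range(n))
        rng.shuffle(idx)                      # new random split for each run
        train, test = idx[:n_train], idx[n_train:]
        model = fit([X[i] for i in train], [y[i] for i in train])
        hits = sum(predict(model, X[i]) == y[i] for i in test)
        accuracies.append(hits / len(test))
    return mean(accuracies)


# Hypothetical stand-in classifier: threshold at the midpoint of the two class means.
def fit_midpoint(X_train, y_train):
    m0 = mean([x[0] for x, label in zip(X_train, y_train) if label == 0])
    m1 = mean([x[0] for x, label in zip(X_train, y_train) if label == 1])
    return (m0 + m1) / 2.0


def predict_midpoint(threshold, x):
    return int(x[0] > threshold)


# Toy, perfectly separable data (not the prostate data).
X_toy = [[float(v)] for v in range(20)] + [[float(v)] for v in range(30, 50)]
y_toy = [0] * 20 + [1] * 20

avg_acc = repeated_holdout_accuracy(X_toy, y_toy, fit_midpoint, predict_midpoint)
print(f"average test accuracy over 100 runs: {avg_acc:.3f}")
```

Any classifier can be plugged in through the `fit` and `predict` arguments; fixing `seed` makes the sequence of splits reproducible.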

Step 8. Explainability. The final 'two-gene model' is so simple and elegant that it can be easily communicated to doctors and medical practitioners: a patient with overexpressed gene 1627 and gene 2327 has a higher risk of getting prostate cancer. Biologists can use these two genes as robust prognostic markers for decision-making (or for recommending the proper drug). It is hard to imagine a more accurate algorithm that is at least as compact as the 'two-gene model.' We should not forget that the success behind this dramatic model reduction hinges on discovering multivariate coregenes, which: (i) help us to gain insights into biological mechanisms [clarifying 'who' and 'how'], and (ii) provide a simple explanation of the predictions [justifying 'why'].

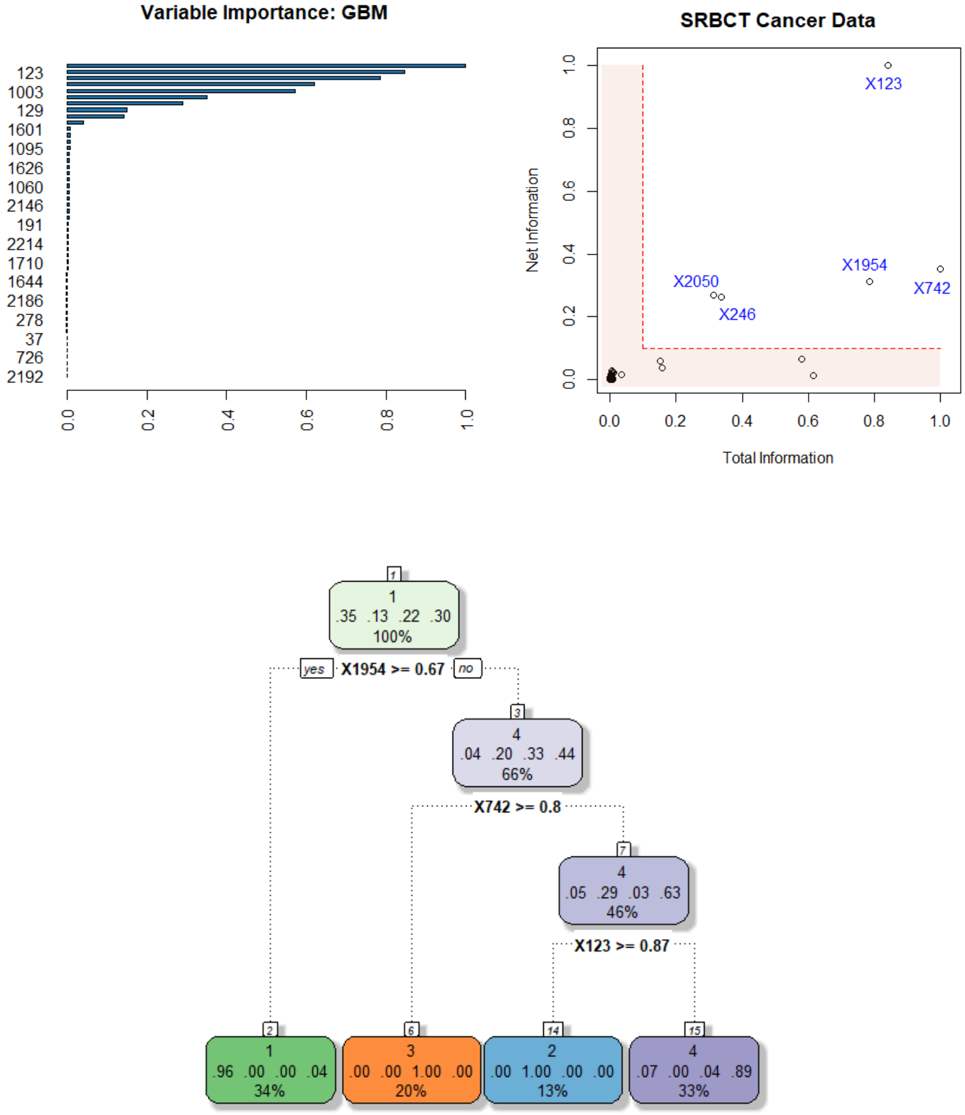

Example 6. SRBCT Gene Expression Data. This is a microarray experiment on Small Round Blue Cell Tumors (SRBCT) taken from a childhood cancer study. It contains information on $p = 2{,}308$ genes for 63 training samples and 25 test samples. Among the $n = 63$ training tumor examples, 8 are Burkitt Lymphoma (BL), 23 are Ewing Sarcoma (EWS), 12 are neuroblastoma (NB), and 20 are rhabdomyosarcoma (RMS). The dataset is available in the plsgenomics R package. The top-right panel of Fig. 7 shows the infogram, which identifies five core genes $\{123, 742, 1954, 246, 2050\}$. The associated coretree with only three decision nodes is shown in the bottom panel; it accurately classifies 95% of the test cases. In addition, it enjoys all the advantages that were ascribed to the model for the prostate data; we do not repeat them here.

Remark 8. We end this section with a general remark: when applying machine learning algorithms in scientific applications, it is of the utmost importance to design models that can clearly explain the 'why and how' behind their decision-making process. We should not forget that scientists mainly use machine learning as a tool to gain a mechanistic understanding, so that they can judiciously intervene and control the system. Sticking with the old way of building inscrutable predictive black-box models will severely slow down the adoption of ML methods in scientific disciplines like medicine and healthcare.

## 3.1.4 COREglm: Breast Cancer Wisconsin Data

Example 7. Wisconsin Breast Cancer Data . The Breast Cancer dataset is available in the UCI machine learning repository. It contains n 569 malignant and benign tumor cell

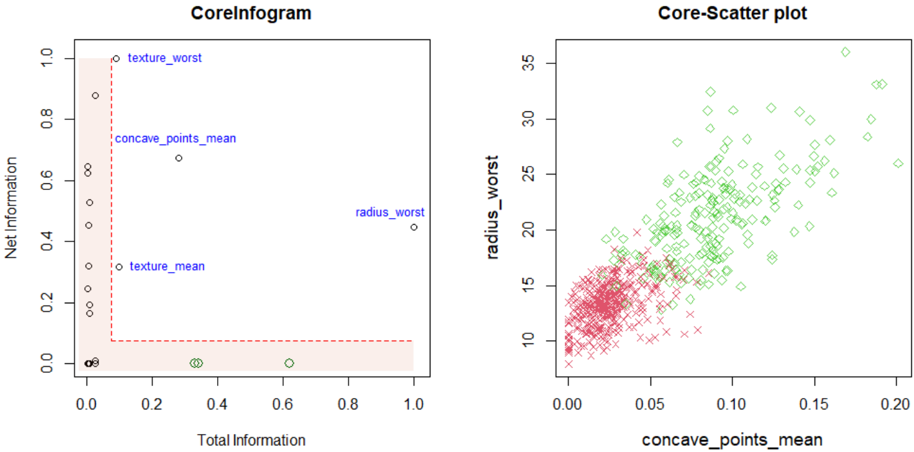

Figure 8: Breast Cancer Wisconsin Data. Infogram reveals where the crux of the information is hidden. Infogram-guided admissible decision tree-a compact yet accurate classifier.

<details>

<summary>Image 8 Details</summary>

### Visual Description

## CoreInfogram: Net Information vs Total Information

### Overview

A scatter plot comparing "Net Information" (y-axis) and "Total Information" (x-axis), with labeled data points and a red dashed boundary line.

### Components/Axes

- **X-axis (Total Information)**: 0.0 to 1.0 (linear scale).

- **Y-axis (Net Information)**: 0.0 to 1.0 (linear scale).

- **Legend**:

- `texture_worst`: Circles (blue).

- `concave_points_mean`: Diamonds (green).

- `radius_worst`: Crosses (red).

- `texture_mean`: Squares (black).

### Detailed Analysis

- **Data Points**:

- `texture_worst`: Clustered near the top-left (Net Information ~0.8–0.9, Total Information ~0.0–0.1).

- `concave_points_mean`: Single point at (0.3, 0.6).

- `radius_worst`: Single point at (0.9, 0.4).

- `texture_mean`: Clustered near the bottom-left (Net Information ~0.0–0.2, Total Information ~0.0–0.1).

- **Boundary**: Red dashed line at y=0.0, extending horizontally across the plot.

- **Shaded Region**: Light red area below the dashed line, labeled "Net Information."

### Key Observations

- `texture_worst` and `texture_mean` dominate the lower-left quadrant.

- `radius_worst` is an outlier in the upper-right.

- `concave_points_mean` is isolated in the mid-range.

### Interpretation

The plot suggests a classification of features based on their "Net Information" contribution. `texture_worst` and `texture_mean` likely represent texture-related metrics, while `radius_worst` and `concave_points_mean` relate to shape or geometric properties. The red dashed line may indicate a threshold for meaningful net information.

---

## Core-Scatter plot: radius_worst vs concave_points_mean

### Overview

A scatter plot showing the relationship between "concave_points_mean" (x-axis) and "radius_worst" (y-axis), with two distinct clusters.

### Components/Axes

- **X-axis (concave_points_mean)**: 0.0 to 0.2 (linear scale).

- **Y-axis (radius_worst)**: 10 to 35 (linear scale).

- **Legend**:

- `radius_worst`: Red crosses.

- `concave_points_mean`: Green diamonds.

### Detailed Analysis

- **Data Points**:

- **Red Crosses (`radius_worst`)**: Clustered in the lower-left (x ~0.0–0.05, y ~10–15).

- **Green Diamonds (`concave_points_mean`)**: Spread diagonally from lower-left to upper-right (x ~0.0–0.2, y ~15–35).

- **Trend**: Positive correlation between `concave_points_mean` and `radius_worst` for green diamonds.

### Key Observations

- Red crosses (`radius_worst`) are tightly grouped, suggesting low variability.

- Green diamonds (`concave_points_mean`) show a gradient increase in `radius_worst` with higher `concave_points_mean`.

### Interpretation

The plot indicates that higher `concave_points_mean` values are associated with larger `radius_worst` measurements, possibly reflecting geometric complexity in a dataset (e.g., medical imaging or material science). The red crosses may represent a control group or baseline measurements.

</details>

samples. The task is to build an admissible (interpretable and accurate) ML classifier based on p 31 features extracted from cell nuclei images.

Step 1. Infogram Construction: Fig. 8 displays the infogram, which provides a quick understanding of the phenomenon by revealing its 'core.' Noteworthy points: (i) There are three highly predictive inadmissible features (green bubbles in the plot: `perimeter_worst`, `area_worst`, and `concave_points_worst`), which have large overall predictive importance but almost zero net individual contributions. We called these variables 'Imitators' in Sec. 3.1.1. (ii) Three of the four 'core' admissible features (`texture_worst`, `concave_points_mean`, and `texture_mean`) are not among the top features based on the usual predictive information, yet they contain a considerable amount of new exclusive information (net-predictive information) that is useful for separating malignant and benign tumor cells. In simple terms, the infogram helps us to track down where the 'core' discriminatory information is hidden.
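To make the reading of the infogram concrete, the following Python sketch sorts features into 'core' features (appreciable net information) and 'imitators' (large total but negligible net information). It is a minimal illustration only: the function `partition_infogram`, the 0.1 cutoffs, the extra 'weak' group, and every (total, net) coordinate are assumptions made up for this example; they are not values read from Fig. 8 and not the paper's estimation procedure.

```python
def partition_infogram(points, total_cut=0.1, net_cut=0.1):
    """Group features by their (total, net) information coordinates.

    points: dict mapping feature name -> (total_information, net_information),
            both assumed to be scaled to [0, 1].
    The cutoffs are hypothetical defaults chosen for illustration.
    """
    groups = {"core": [], "imitator": [], "weak": []}
    for name, (total, net) in points.items():
        if net >= net_cut:
            groups["core"].append(name)       # carries new, exclusive information
        elif total >= total_cut:
            groups["imitator"].append(name)   # predictive, but adds almost nothing new
        else:
            groups["weak"].append(name)       # neither predictive nor novel
    return groups


# Made-up coordinates that only mimic the qualitative pattern described in Step 1.
illustrative_points = {
    "perimeter_worst": (0.95, 0.02),
    "area_worst": (0.90, 0.01),
    "concave_points_worst": (1.00, 0.03),
    "radius_worst": (0.90, 0.50),
    "texture_worst": (0.30, 0.80),
    "concave_points_mean": (0.40, 0.60),
    "texture_mean": (0.15, 0.30),
    "hypothetical_noise_feature": (0.03, 0.01),
}

groups = partition_infogram(illustrative_points)
for label, names in groups.items():
    print(label, names)
```

With these placeholder inputs, the three 'worst' size features fall in the imitator group and the four features named in the text fall in the core group, which is the qualitative pattern Step 1 describes.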

Step 2. Core-Scatter plot. The right panel of Fig. 8 shows the scatter plot of the top two core features and how they separate the malignant and benign tumor cells.

Step 3. Infogram-assisted CoreGLM model: The simplest possible model that one could build is a logistic regression based on those four admissible features. Interestingly, model selection based on the Akaike information criterion (AIC) further drops the variable `texture_mean`, which is hardly surprising considering that it has the least net and total information among the four admissible core features. The final logistic regression model with three core variables is displayed below (output of the glm R function):

```
# COREglm Model: UCI breast cancer data
Coefficients:
                     Estimate Std. Error z value Pr(>|z|)
(Intercept)         -29.42361    3.85131  -7.640 2.17e-14 ***
concave_points_mean  96.48880   16.11261   5.988 2.12e-09 ***
radius_worst          0.99767    0.16792   5.941 2.83e-09 ***
texture_worst         0.30451    0.05302   5.744 9.27e-09 ***
```

This simple parametric model achieves a competitive accuracy of 96.50% (on a 15% test set; averaged over 50 trials). Compare this with full-fledged big ML models (like gbm, random forest, etc.), which attain accuracy in the range of 95% to 97%. This example again shows how the infogram can guide the design of a highly transparent and interpretable CoreGLM model with a handful of variables, which is as powerful as complex black-box ML methods.
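Part of the appeal of the CoreGLM model is that a prediction can be computed by hand from the three reported coefficients. The Python sketch below applies the standard logistic link to the estimates from the glm output above. Two things are assumptions rather than facts from the text: that the response is coded so that larger fitted probabilities mean 'malignant', and the feature values of the example tumor, which are hypothetical.

```python
import math

# Estimates copied from the COREglm output above.
COEF = {
    "intercept": -29.42361,
    "concave_points_mean": 96.48880,
    "radius_worst": 0.99767,
    "texture_worst": 0.30451,
}


def linear_predictor(concave_points_mean, radius_worst, texture_worst):
    """Log-odds from the fitted three-variable logistic regression."""
    return (
        COEF["intercept"]
        + COEF["concave_points_mean"] * concave_points_mean
        + COEF["radius_worst"] * radius_worst
        + COEF["texture_worst"] * texture_worst
    )


def predicted_probability(concave_points_mean, radius_worst, texture_worst):
    """Inverse-logit of the linear predictor.

    Assumption: the positive class (probability near 1) is 'malignant'.
    """
    eta = linear_predictor(concave_points_mean, radius_worst, texture_worst)
    return 1.0 / (1.0 + math.exp(-eta))


# Hypothetical tumor; these feature values are chosen only for illustration.
eta = linear_predictor(0.05, 16.0, 25.0)
prob = predicted_probability(0.05, 16.0, 25.0)
print(f"log-odds = {eta:.4f}, fitted probability = {prob:.3f}")
```

Because all three coefficients are positive, increasing any one of the features while holding the others fixed raises the fitted probability.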

Remark 9 (Integrated statistical modeling culture). One should bear in mind that the process by which we arrived at simple admissible models actually utilizes the power of modern machine learning, which is needed to estimate the formula (3.4) of Definition 3, as described by the theory laid out in Section 2. For more discussion on this topic, see Appendix A.6 and Mukhopadhyay and Wang (2020). In short, we have developed a process of constructing an admissible (explainable and efficient) ML procedure starting from a 'pure prediction' algorithm.

## 3.2 FINEml: Algorithmic Fairness